Data-Driven Model Predictive Control with Stability and Robustness Guarantees

We propose a robust data-driven model predictive control (MPC) scheme to control linear time-invariant (LTI) systems. The scheme uses an implicit model description based on behavioral systems theory and past measured trajectories. In particular, it d…
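The "implicit model description based on behavioral systems theory and past measured trajectories" can be illustrated with the standard Hankel-matrix result usually called Willems' fundamental lemma. The sketch below assumes this is the representation the abstract refers to. The symbols $u^d$, $y^d$ (measured input-output data of length $N$), $m$ (input dimension), $n$ (system order), $L$ (trajectory length), and $\alpha$ are notation introduced here for illustration and do not come from the text above.

```latex
% Hankel matrix of depth L built from a measured input sequence u^d_0, ..., u^d_{N-1}
H_L(u^d) =
\begin{bmatrix}
u^d_0     & u^d_1 & \cdots & u^d_{N-L}   \\
u^d_1     & u^d_2 & \cdots & u^d_{N-L+1} \\
\vdots    & \vdots &       & \vdots      \\
u^d_{L-1} & u^d_L & \cdots & u^d_{N-1}
\end{bmatrix}

% Persistency of excitation of order L + n (assumed condition on the data)
\operatorname{rank}\bigl(H_{L+n}(u^d)\bigr) = m\,(L+n)

% For a controllable LTI system: (u, y) of length L is a system trajectory
% if and only if some vector \alpha reproduces it from the data
\begin{bmatrix} H_L(u^d) \\ H_L(y^d) \end{bmatrix} \alpha
=
\begin{bmatrix} u \\ y \end{bmatrix},
\qquad \alpha \in \mathbb{R}^{N-L+1}
```

Under these assumptions, a single sufficiently rich measured trajectory replaces an explicit state-space model: a predictive controller can optimize over $\alpha$ instead of over model-based predictions.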

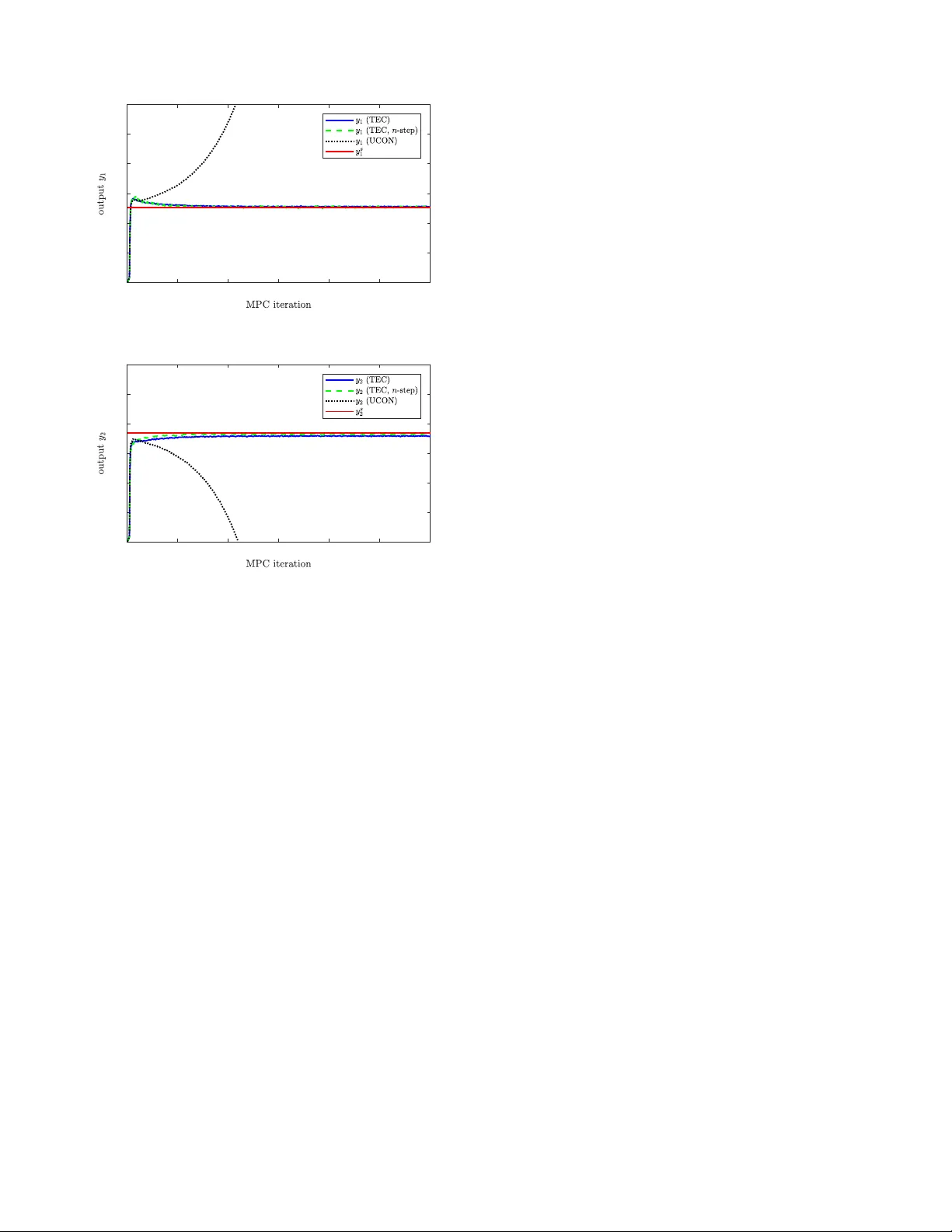

Authors: Julian Berberich, Johannes Köhler, Matthias A. Müller