Policy Iterations for Reinforcement Learning Problems in Continuous Time and Space -- Fundamental Theory and Methods

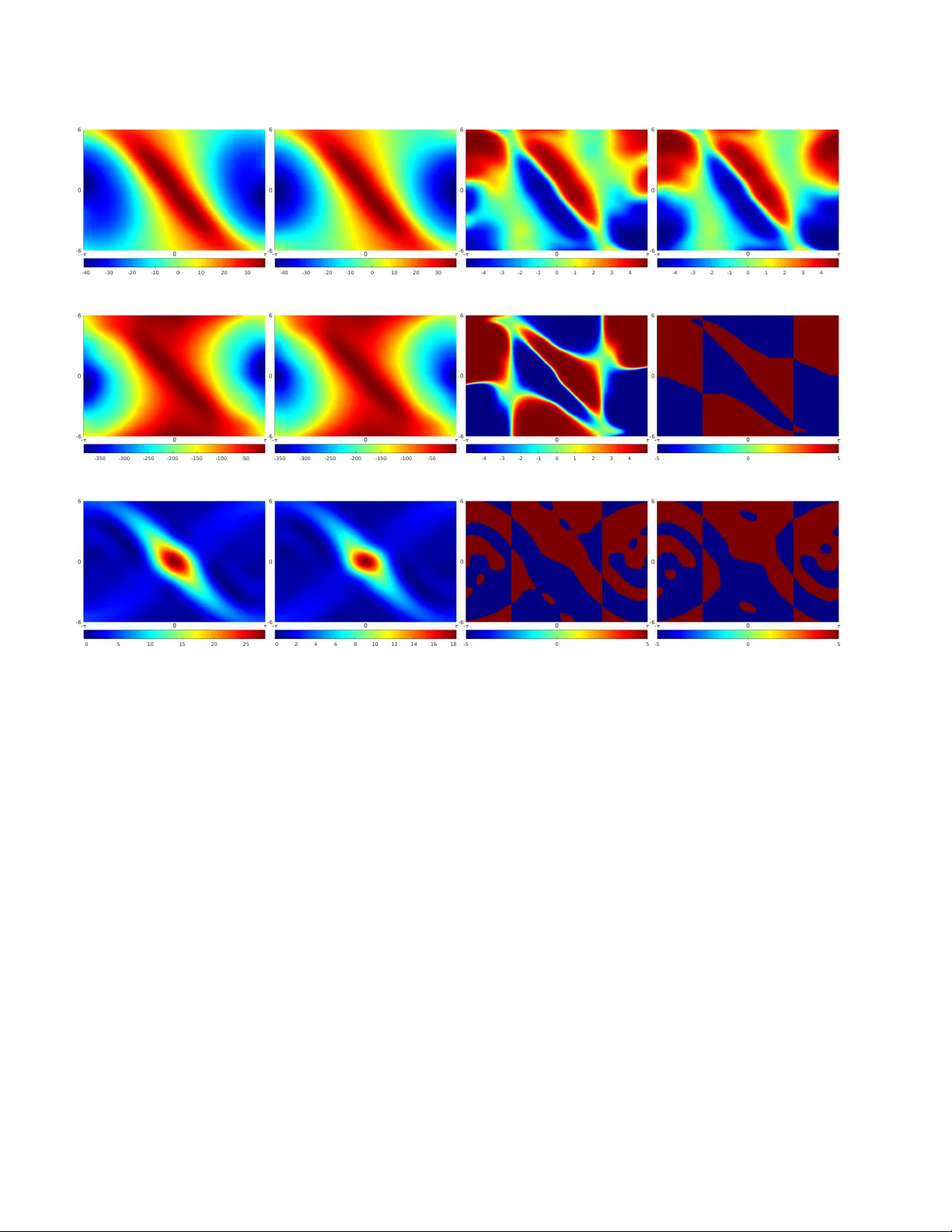

Policy iteration (PI) is a recursive process of policy evaluation and policy improvement for solving an optimal decision-making/control problem, or, in other words, a reinforcement learning (RL) problem. PI has also served as the foundation for developing R…
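The abstract describes PI as a loop that alternates policy evaluation with policy improvement. For orientation only, here is a minimal sketch of the classic discrete-time, finite-state version of that loop. It is not the continuous-time, continuous-space method developed in the paper. The two-state MDP, the transition table format `P[s][a] = [(prob, next_state, reward), ...]`, the discount `gamma`, and the function names are all hypothetical and chosen for illustration.

```python
from typing import List, Tuple

# Hypothetical transition format: P[s][a] is a list of (prob, next_state, reward).
MDP = List[List[List[Tuple[float, int, float]]]]


def evaluate_policy(P: MDP, policy: List[int], gamma: float = 0.9,
                    tol: float = 1e-10) -> List[float]:
    """Policy evaluation: iterate the Bellman expectation backup until V stops changing."""
    V = [0.0] * len(P)
    while True:
        delta = 0.0
        for s in range(len(P)):
            v_new = sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][policy[s]])
            delta = max(delta, abs(v_new - V[s]))
            V[s] = v_new  # in-place (Gauss-Seidel style) update
        if delta < tol:
            return V


def improve_policy(P: MDP, V: List[float], gamma: float = 0.9) -> List[int]:
    """Policy improvement: act greedily with respect to the current value estimate."""
    policy = []
    for s in range(len(P)):
        q = [sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])
             for a in range(len(P[s]))]
        # max() returns the first maximizer, giving a consistent tie-break.
        policy.append(max(range(len(q)), key=q.__getitem__))
    return policy


def policy_iteration(P: MDP, gamma: float = 0.9) -> Tuple[List[int], List[float]]:
    """Alternate evaluation and improvement until the policy stops changing."""
    policy = [0] * len(P)
    while True:
        V = evaluate_policy(P, policy, gamma)
        new_policy = improve_policy(P, V, gamma)
        if new_policy == policy:
            return policy, V
        policy = new_policy


# Toy MDP: in state 0, action 1 moves to state 1; in state 1, action 0 stays and earns reward 1.
P: MDP = [
    [[(1.0, 0, 0.0)], [(1.0, 1, 0.0)]],
    [[(1.0, 1, 1.0)], [(1.0, 0, 0.0)]],
]
policy, V = policy_iteration(P)
print(policy, [round(v, 6) for v in V])
```

On this toy problem, the loop goes from the initial policy `[0, 0]` to `[1, 0]` (move to state 1, then stay), with values close to `[9, 10]` for `gamma = 0.9`. The exact-policy-change stopping test works here because the improvement step breaks ties consistently.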

Authors: Jaeyoung Lee, Richard S. Sutton