An efficient bounded-variable nonlinear least-squares algorithm for embedded MPC

This paper presents a new approach to solve linear and nonlinear model predictive control (MPC) problems that requires small memory footprint and throughput and is particularly suitable when the model and/or controller parameters change at runtime. T…

Authors: Nilay Saraf, Alberto Bemporad

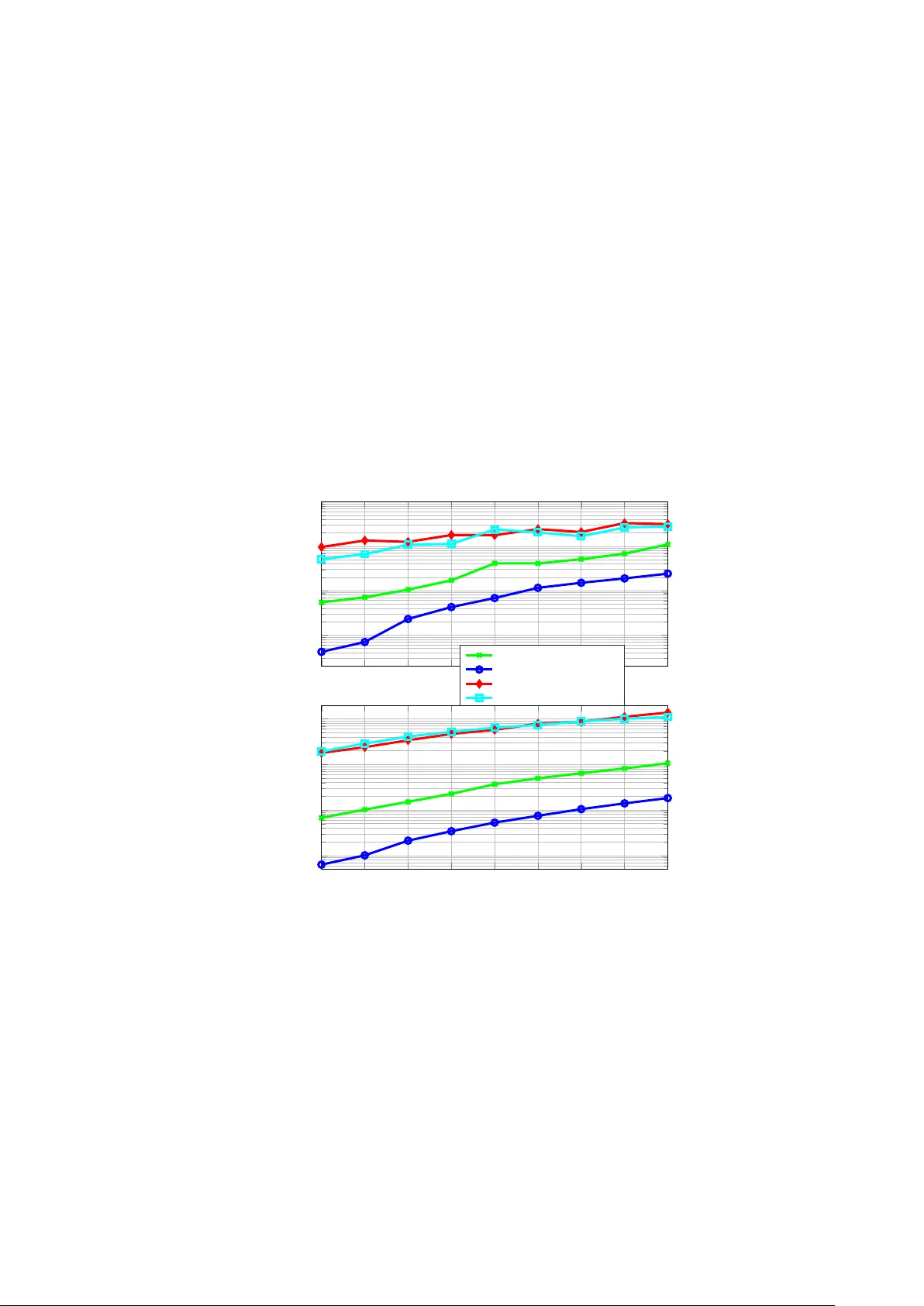

An efficien t b ounded-v ariable nonlinear least-squares algorithm for em b edded MPC Nila y Saraf and Alb erto Bemporad Marc h 25, 2021 Abstract This pap er presen ts a new approach to solv e linear and nonlinear model predictiv e con trol (MPC) problems that requires small memory fo otprin t and throughput and is particularly suitable when the mo del and/or con- troller parameters change at runtime. T ypically MPC requires t wo phases: 1) construct an optimization problem based on the giv en MPC parameters (prediction mo del, tuning w eights, prediction horizon, and constraints), whic h results in a quadratic or nonlinear programming problem, and then 2) call an optimization algorithm to solv e the resulting problem. In the prop osed approac h the problem construction step is systematically elim- inated, as in the optimization algorithm problem matrices are expressed in terms of abstract functions of the MPC parameters. W e present a uni- fying algorithmic framework based on active-set metho ds with b ounded v ariables that can cop e with linear, nonlinear, and adaptive MPC v ari- an ts based on a broad class of prediction models and a sum-of-squares cost function. The theoretical and numerical results demonstrate the p oten- tial, applicabilit y , and efficiency of the prop osed framework for practical real-time embedded MPC. Keyw ords: Mo del predictiv e con trol, activ e-set metho ds, nonlinear parameter-v arying con trol, adaptive control, nonlinear programming, sparse recursiv e QR factorization. 1 In tro duction Mo del predictiv e con trol has ev olved ov er the y ears from a metho d developed for con trolling slo w processes [18, 26] to an adv anced multiv ariable con trol metho d that is applicable ev en to fast-sampling applications, such as in the automotiv e and aerospace domains [3, 11]. This evolution has b een p ossible because of the significant amount of research on computationally efficient real-time MPC algorithms. F or an incomplete list of such efforts and tools the reader is referred to [7, 13, 14, 17, 20, 24, 32, 36]. Despite the success of MPC, demand for faster This pro ject had received funding from the Europ ean Union’s Horizon 2020 F ramew ork Programme for Research and Innov ation under gran t agreement No. 674875 (oCPS). N. Saraf (e-mail: nilay .saraf@alumni.im tlucca.it) and A. Bemp orad (e-mail: alberto.b emp orad@im tlucca.it) are with the IMT School for Ad- v anced Studies Lucca, Piazza San F rancesco 19, Lucca, 55100 LU Italy . 1 n umerical algorithms for a wider scop e of applications has b een rep orted for instance in [11]. A common approac h to reduce computational load is to solve the MPC problem sub optimally , see for instance [13, 36]. Ho w ever, even such MPC approaches hav e limitations that could b e prohibitive in some resource- constrained applications, especially in the case of (parameter-v arying) nonlinear MPC (NMPC). This denotes that there is still a large scop e of improv ement. A usual practice in MPC is to first formulate an optimization problem based on the prediction model and MPC tuning parameters, b efore passing it in a standard form to an optimization solver. Such a problem construction step can b e performed offline when the prediction model is time-inv arian t, such as linear time-in v ariant (L TI) mo del of the system, whereas it needs to b e rep eated at eac h instance in case of parameter-v arying mo dels, such as nonlinear mo dels linearized at the current op erating p oint, or changes of MPC tuning parame- ters (such as prediction horizon, con trol horizon, tuning w eights, or sampling time). Often, constructing the optimization problem requires a computation ef- fort comparable to that required for solving the optimization problem itself. The same o ccurs in the recently proposed data-driven MPC sc heme [25] where due to p oten tially time-v arying mo del and/or tuning parameters, re-constructing the MPC optimization problem on line b ecomes necessary , which significantly increases the computational load. Notwithstanding these limitations of MPC, scarcely any effort has b een made till date to design a real-time MPC approach whic h do es not need (re-)construction of the optimization problem with v ary- ing mo del and/or MPC tuning parameters. Approaches whic h partially address this aspect for a limited class of linear parameter-v arying (LPV) mo dels with a fixed MPC problem structure include [8, 36]. The metho ds prop osed in this paper aim at reducing the computational com- plexit y of MPC while eliminating the optimization problem construction step ev en for the general case of nonlinear parameter-v arying systems, through al- gorithms that can adapt to c hanges in the mo del and/or tuning parameters at run time. The main ideas employ ed for this purp ose are: 1) a structured and sparse form ulation of the MPC problem through a quadratic p enalt y function in order to exploit simple and fast solution metho ds, 2) replacing matrix instances via abstract operators that map the model and tuning parameters to the result of the required matrix op erations in the optimization algorithm. Besides this, the contributions of this pap er include: 1) an ov erview on the relation b etw een quadratic p enalt y and augmented Lagrangian metho ds w.r.t. their application for equalit y constrain t elimination in the considered (nonlinear) MPC problems, 2) a discussion on alternative metho ds to implemen t MPC with resulting so- lution algorithms having negligible increase in memory requirement w.r.t. the n umber of decision v ariables, 3) metho ds to exploit problem sparsity and ef- ficien tly implement the active-set metho d prop osed in [30] for MPC based on b o x-constrained (nonlinear) least-squares. Regarding the last contribution, we note that each iteration of a primal activ e-set metho d [23] inv olv es the solution of a linear system, which in the case of the algorithm in [30] is a sparse unconstrained linear least-squares (LS) problem. These LS problems b et ween successive iterations are related by a rank-one up date. In [30], it has b een sho wn for the numerically dense case that, as compared to solving an LS problem from scratch, emplo ying a recursive QR factorization scheme that exploits the relation betw een successiv e LS problems can significan tly increase computational sp eed without compromising numerical 2 robustness, ev en without using adv anced linear algebra libraries. In the sp arse case, even though very efficien t approaches exist for solving a single LS problem using direct [10] or iterative [27] metho ds with sparse linear algebra libraries, to the b est of the authors’ knowledge no metho ds hav e b een rep orted for recursively up dating the sparse QR factorization of a matrix. A recursive approach for sparse LU factorization has b een describ ed in [15]; ho wev er, such an approach not only needs the storage of the matrix and its sparsity pattern, which requires constructing the MPC problem and forming the normal equations that could b e n umerically susceptible to ill-conditioning, but it also relies on linear algebra pac k ages that could b e cumbersome to co de, esp ecially in an embedded control platform. In this paper, we present nov el metho ds for numerically stable sparse recursiv e QR factorization based on Gram-Schmidt orthogonalization, which are easy to implement and are v ery efficient ev en for small-size problems, therefore extending the dense approach of [30]. Although the prop osed metho ds are designed for the sp ecific MPC application, i.e., to solv e the sparse LS problems ha ving a sp ecific parameter-dep endent structure without forming the matrix that is factorized, they ma y b e applicable for other LS problems with block- sparse matrices ha ving similar sp ecial structures. The pap er is organized as follows. Section 2 describ es the considered general class of discrete-time mo dels and MPC problem formulation. The prop osed non- condensed form ulation based on eliminating the equality constraints due to the mo del equations is motiv ated and describ ed in detail in Section 3. In Section 4 w e describ e a solution algorithm for b ound-constrained nonlinear least-squares optimization with a theoretical analysis on its global con vergence. A param- eterized implementation of this algorithm for solving MPC problems without the construction phase and relying on the abstraction of matrix instances is describ ed in Section 5. Metho ds for sparse recursiv e thin QR factorization are describ ed in Section 6. Section 7 briefly rep orts numerical results based on a nonlinear MPC (NMPC) b enc hmark example that clearly demonstrate the v ery go o d computational p erformance of the prop osed metho ds against other metho ds. Finally , Section 8 concludes the pap er. Excerpts of Sections 2-3, and Section 4.1 are based on the authors’ conference pap ers [29, 31]. These pap ers in tro duced the idea to form ulate the (N)MPC problem using a quadratic p enalty function for a fast solution using b ounded- v ariable (nonlinear) least squares with numerically dense computations. All the other ideas that we hav e introduced ab o ve are prop osed in this pap er and are original. In addition, we include corrections and extensions of the conten ts in common with [29, 31]. Basic notation W e denote the set of real vectors of dimension n as R n ; a real matrix with m ro ws and n columns as A ∈ R m × n ; its transp ose as A > , its inv erse as A − 1 , and its pseudo-inv erse as A † . F or a vector a ∈ R m , its p -norm is k a k p , its j th elemen t is a ( j ), and k a k 2 2 = a > a . A v ector or matrix with all zero elemen ts is represen ted b y 0 . If F denotes a set of indices, A F denotes a matrix formed from columns of A corresp onding to the indices in F . Given N square matrices A 1 , . . . , A N , of p ossible different orders, blockdiag( A 1 , . . . , A N ) is the blo c k diagonal matrix whose diagonal blocks are A 1 , . . . , A N . F or scalars a and b , min( a, b ) and max( a, b ) denote, resp ectively , the mini- 3 m um and maximum of the tw o v alues. Dep ending on the con text, ( a, b ] or [ b, a ) represen t either the set of real num b ers or in tegers b et ween a and b , excluding a and including b , or vice-v ersa. The gradien t of a function f : R n → R at a point ¯ x ∈ R n is either denoted b y ∇ x f ( x ) | ¯ x or ∇ x f ( ¯ x ), the Hessian matrix by ∇ 2 x f ( ¯ x ); the Jacobian of a v ector function g : R n → R m b y J x g ( x ) | ¯ x or J g ( ¯ x ). Finite sets of elements are represented b y curly braces containing the ele- men ts; ∅ denotes the empty set. If a set A is a subset of set B (i.e., if B is the sup erset of A ), it is written as A ⊆ B (or alternatively B ⊇ A ). The symbols ∪ , ∩ , and \ b et ween tw o sets denote, resp ectiv ely , set union, intersection, and difference. The summation notation for sets is denoted by S . The cardinality (n umber of elements) of a finite set A is denoted b y |A| . 2 Preliminaries F or maximum generality , the prediction mo del we use in MPC is describ ed by the following discrete-time multiv ariable nonlinear parameter-v arying dynamical mo del equation M ( Y k , U k , S k ) = 0 , (1) where U k = ( u k − n b , . . . , u k − 1 ) with u k ∈ R n u the input vector at sampled time step k , and Y k = ( y k − n a , . . . , y k ) with y k ∈ R n y the output vector at k . V ector S k = ( s k − n c , . . . , s k − 1 ), where s k ∈ R n s , n s ≥ 0, contains p ossible exogenous signals, such as measured disturbances. W e assume that function M : R n a n y × R n b n u × R n c n s → R n y is differen tiable, where n a , n b and n c denote the mo del order. Sp ecial cases include deterministic nonlinear parameter-v arying auto-regressive exogenous (NLPV-ARX) models, state-space mo dels ( y = state v ector, n a = n b = n c = 1), neural netw orks with a smo oth activ ation function, discretized first-principles mo dels and differential algebraic equations. Designing the MPC controller based on the input-output (I/O) difference equation (1) has several b enefits such as: 1) data-based black- b o x mo dels which are often identified in I/O form do not need a state-space realization for control, 2) a state estimator is not required when all output and exogenous v ariables are measured, 3) the n umber of decision v ariables to b e optimized do es not scale linearly with the num b er of system states but outputs, whic h could b e few er in num ber, 4) input delays can easily b e incorp orated in the mo del by simply shifting the sequence in U k bac kwards in time. Linearizing (1) w.r.t. a sequence of inputs ˆ U (that is, U k = ˆ U + ∆ U ) and outputs ˆ Y ( Y k = ˆ Y + ∆ Y ) gives M ( ˆ Y , ˆ U , S k ) + J Y k M ( Y k , U k , S k ) ˆ Y , ˆ U ∆ Y + J U k M ( Y k , U k , S k ) ˆ Y , ˆ U ∆ U = 0 , whic h is equiv alen tly written as the following affine parameter-v arying I/O mo del, i.e., − A ( S k ) 0 ∆ y k = n a X j =1 A ( S k ) j ∆ y k − j + n b X j =1 B ( S k ) j ∆ u k − j + M ( ˆ Y , ˆ U , S k ) , (2) where the Jacobian matrices A ( S k ) j = J y k − j M ( Y k , U k , S k ) ˆ Y , ˆ U ∈ R n y × n y , ∀ j ∈ [0 , n a ] , B ( S k ) j = J u k − j M ( Y k , U k , S k ) ˆ Y , ˆ U ∈ R n y × n u , ∀ j ∈ [1 , n b ] . 4 Note that for the sp ecial case of L TI mo dels in ARX form, in (2) A 0 w ould b e an identit y matrix whereas S k w ould b e absen t and M ( ˆ Y , ˆ U ) = 0 , ˆ Y = 0 , ˆ U = 0 . W e consider the following p erformance index (P) which is commonly em- plo yed for reference trac king in MPC: P k = N p X j =1 1 2 k W y k + j ( y k + j − ¯ y k + j ) k 2 2 + N u − 2 X j =0 1 2 k W u k + j ( u k + j − ¯ u k + j ) k 2 2 + 1 2 ( N p − N u + 1) · k W u k + j ( u k + N u − 1 − ¯ u k + N u − 1 ) k 2 2 , (3) where N p and N u denote the prediction and con trol horizon respectively . Matri- ces W y ( · ) ∈ R n y × n y , W u ( · ) ∈ R n u × n u denote tuning w eights, vectors ¯ y , ¯ u denote output and input references, resp ectively . The methods described later in this paper can straigh tforw ardly b e extended to any p erformance index whic h is a sum of squares of linear or differentiable nonlinear functions. The MPC optimization problem is form ulated based on the cost function (3) sub ject to constraints on the vector w k = ( u k , . . . , u k + N u − 1 , y k +1 , . . . , y k + N p ) of input and output v ariables. In this pap er w e will consider equality constraints that arise from the prediction mo del (1), and restrict inequality constrain ts to only simple b ounds on input and output v ariables. General ( soft ) inequalit y constrain ts (4) can nevertheless b e included as equalities b y introducing non- negativ e slac k v ariables ν ∈ R n i suc h that g ( w k ) ≤ 0 becomes , (4) g ( w k ) + ν k = 0 and ν k ≥ 0 , where z k = ( w k , ν k ) and g : R ( n z − n i ) → R n i is assumed to be differen tiable, while n z and n i denote the n umber of decision v ariables and general inequality constrain ts resp ectiv ely . In summary , the MPC optimization problem to b e solv ed at each step k is min z k 1 2 k W k ( z k − ¯ z k ) k 2 2 (5a) s.t. h k ( z k , φ k ) = 0 , (5b) p k ≤ z k ≤ q k , (5c) where p k , q k are v ectors defining b ounds on the input and output v ariables, and p ossible non-negativity constraint on slack v ariables. Some comp onen ts of z k ma y be unbounded, in that case those b ounds are passed to the solv er w e prop ose later as the largest negativ e or p ositiv e floating-p oin t num ber in the computing platform, so that we can assume p k , q k ∈ R n z . V ector φ k = ( u k − n b +1 , . . . , u k − 1 , y k , . . . , y k − n a +1 ) denotes the initial condition whereas ¯ z con- tains references on the decision v ariables. The blo c k-sparse m atrix W k is con- structed from the tuning weigh ts ( W u ( · ) , W y ( · ) ) defined in (3). 3 Eliminating equalit y constrain ts Handling equality constraints via p enalt y functions, or an augmented Lagrangian metho d, has pro ven to b e effective for efficiently solving constrained optimiza- tion problems [4, 23], [21, Chapter 22]. This section shows how similar metho ds 5 can b e applied to efficiently solv e MPC problems of the form (5). In order to employ fast solution metho ds, the general constrained problem (5) can b e simplified as a box-constrained nonlinear least-squares (NLLS-b ox) problem by using a quadratic p enalt y function and consequen tly eliminating the equality constrain ts (5b) such that (5) becomes min p k ≤ z k ≤ q k 1 2 1 √ ρ W k ( z k − ¯ z k ) h k ( z k , φ k ) 2 2 ≡ min p k ≤ z k ≤ q k 1 2 k r k ( z k ) k 2 2 , (6) where the p enalt y parameter ρ is a p ositiv e scalar and r : R n z → R n r denotes the vector of residuals. W e prop ose the reform ulation (6) of problem (5) for the follo wing reasons: 1. Penalizing the violation of equalit y constraints mak es problem (6) alwa ys feasible; 2. No additional slac k v ariables are needed for softening output constraints, whic h would result in inequalities of general type instead of b o x con- strain ts; 3. While solving (6), since w e do not include additional slack v ariables to soften constraints, the function h k do es not need to b e analytic b ey ond b ounds, whic h is discussed in further detail in Section 4 (cf. Remark 2); 4. No dual v ariables need to be optimized to handle equality constrain ts; 5. Problem (6) is simpler to solve as compared to (5), for instance, when using SQP algorithms (cf. Section 4), initializing a feasible guess is straightfor- w ard, the subproblems are feasible even with inconsisten t linearizations of h k , and conv ergence impe ding phenomena suc h as the Maratos effect [23] are implicitly a voided. Relaxing the equality constraints as ab o ve also has an engineering justifica- tion [29]: As the prediction mo del (1) is only an approximation of the true system dynamics, (opp ortunistic) violations of the dynamic mo del equations will only affect the quality of predictions, dep ending on the magnitude of the violation. Instead of using the iterative quadratic p enalt y metho d (QPM) [23, F ramework 17.1] with increasing v alues of ρ in each iteration, w e prop ose to use a single iteration with a large v alue of ρ for solving (5), owing to the fact that a go od initial guess is often av ailable in MPC. It has been prov en in [29, Theorem 1] that for a quadratic cost (5a) sub ject to only consistent linear equality con- strain ts, a single QPM iteration with sufficiently large p enalt y ρ may result in negligible solution inaccuracy . This has been clearly demonstrated by numerical examples in [29, 31] for the general case. A practical upp er b ound on ρ dep ends on the computing precision and numerical robustness of the optimization solver suc h that the Jacobian of the vector of residuals in (6) is numerically full-rank. The parameter ρ is tuned (cf. [28, Section 3.5.3]) based on the fact that a higher v alue results in a low er solution inaccuracy at the cost of problem scaling whic h ma y affect the conv ergence rate of the adopted solution metho ds. A theoretical lo wer b ound on ρ exists and has b een deriv ed in [29] for the case of L TI systems based on closed-loop stability conditions. The extension of such a result to the general case is not immediate and thereb y p oses a risk giv en that the b ound is 6 not deterministic. How ev er, in practice, based on the arguments and details in the references mentioned in this section, we exp ect a sufficien tly low v alue of the equality constrain t violation. An alternativ e approach to solv e the optimization problem (5) without the equalit y constraints (5b) is the b ound-constrained Lagrangian metho d (BLM) [23, Algorithm 17.4], which can efficien tly be solv ed by iterativ ely using the nonlin- ear gradient pro jection algorithm [23]. A t each iteration ( i ) of the BLM, one solv es z ( i +1) k = arg min p k ≤ z k ≤ q k 1 2 W k ( z k − ¯ z k ) p ρ ( i ) h k ( z k , φ k ) 2 2 + Λ > k ( i ) h k ( z k , φ k ) (7) where Λ denotes the v ector of Lagrange multipliers corresp onding to the equality constrain ts, and updates the estimates Λ ( i ) and ρ ( i ) , until conv ergence (cf. [23, Algorithm 17.4]). Prop osition 1 The optimization pr oblem (7) is e quivalent to the NLLS-b ox pr oblem z ( i +1) k = arg min p k ≤ z k ≤ q k 1 2 1 √ ρ ( i ) W k ( z k − ¯ z k ) h k ( z k , φ k ) + Λ ( i ) k ρ ( i ) 2 2 (8) Pro of: W e hav e that problem arg min p k ≤ z k ≤ q k 1 2 W k ( z k − ¯ z k ) p ρ ( i ) h k ( z k , φ k ) 2 2 +Λ > k ( i ) h k ( z k , φ k ) and arg min p k ≤ z k ≤ q k 1 2 k W k ( z k − ¯ z k ) k 2 2 + H ( z k ) , where H ( z k ) = ρ ( i ) 2 k h k ( z k , φ k ) k 2 2 + Λ > k ( i ) h k ( z k , φ k ) + Λ ( i ) k 2 2 2 ρ ( i ) = ρ ( i ) 2 k h k ( z k , φ k ) k 2 2 + 2Λ > k ( i ) h k ( z k , φ k ) ρ ( i ) + Λ ( i ) k ρ ( i ) 2 2 = ρ ( i ) 2 h k ( z k , φ k ) + Λ ( i ) k ρ ( i ) ! > h k ( z k , φ k ) + Λ ( i ) k ρ ( i ) ! = ρ ( i ) 2 h k ( z k , φ k ) + Λ ( i ) k ρ ( i ) 2 2 , are equiv alent. Scaling by the constant 1 /ρ ( i ) yields the result. Remark 1 Pr op osition 1 holds for any sum-of-squar es c ost function with (5a) as the sp e cial c ase, for instanc e kS ( z k ) k 2 2 , wher e S is any ve ctor-value d function. 7 Prop osition 1 sho ws that w e can employ the same NLLS-b o x solv ers to solve (7), whic h ma y b e more efficien t and n umerically robust (cf. Section 4) as compared to the use of other NLP solvers. When using BLM, sequences of z ( i ) k and Λ ( i ) k resp ectiv ely conv erge to their optimal v alues z ? k and Λ ? k whereas h k ( z ? k , φ k ) ≈ 0 , n umerically . Then via Prop osition 1, we note that for a fixed v alue of ρ k Λ ? k k ∞ in the equalit y-constrained case, we obtain h k z ( i +1) k , φ k ≈ Λ ( i +1) k /ρ ≈ 0 [23, Chapter 17], which is simply the solution obtained using a single iteration of QPM for the same ρ and is consistent with the sp ecial case describ ed by [29, Theorem 1]. Although with BLM it is p ossible to initialize ρ to aribtrarily lo w v alues and solve numerically easier problems, which is its main adv an tage ov er QPM, the final v alue of ρ is not guaranteed to remain low. A main disadv antage of BLM ov er QPM is that it needs problem (5) to b e feasible, otherwise the problem must b e formulated with soft c onstr aints on output v ariables [19], whic h t ypically results in the use of penalty functions with large v alues of the penalty parameter and non-b o x inequality constraints, making the problems relatively more difficult to solve. Moreo ver, ev en if the feasibility of (5) is given, it may tak e significantly longer to solve multiple instances of (7) as compared to a single iteration of QPM with a large p enalt y , which is more suitable for MPC problems where slight suboptimality may be preferable to a longer computation time. How ev er, in the presence of har d general (nonlinear) inequality constraints where QPM might not b e applicable, using BLM for feasible problems with the proposed solver and sparsity exploiting methods describ ed in the following sections may b e an efficien t alternative. BLM is not discussed further as the scop e of this pap er is limited to MPC problems with b o x constrain ts on decision v ariables. 4 Optimization algorithm 4.1 Bounded-v ariable nonlinear least squares In order to efficiently solve the MPC problem (6), it is desirable to hav e a solu- tion metho d that benefits from warm-starting information, is robust to problem scaling, and exploits the structure of the problem. The b ounded-v ariable nonlin- ear least-squares (BVNLLS) metho d we propose in Algorithm 1 addresses suc h features. It can be seen as either an ad ho c primal-feasible line-searc h SQP algo- rithm [23] or an extension of the Gauss-Newton metho d [5, Section 9.2] to handle b o x-constrain ts. The Gauss-Newton approximation of the Hessian is effective for nonlinear least-squares cost functions and it only needs first-order informa- tion of the residual. Although the global conv ergence prop ert y of Algorithm 1 follo ws that of line-search methods for problems with simple b ounds [4], we pro- vide b elo w an alternative pro of sp ecific to BVNLLS for an insightful o verview whic h also justifies the backtrac king rule (Steps 6-10 of Algorithm 1), that is analogous to the Armijo c ondition [23] for the c hoice of the step-size α . 8 Algorithm 1 Bounded-V ariable Nonlinear Least Squares (BVNLLS) solver Inputs: Bounds p, q ∈ R n z , feasible initial guess z , b = r ( z ), optimality toler- ance γ ≥ 0, c ∈ (0 , 0 . 5), τ ∈ (0 , 1). 1: J ← J z r (Linearization); 2: L ← { j | z ( j ) ≤ p ( j ) } ; U ← { j | z ( j ) ≥ q ( j ) } ; 3: d ← J > b (Compute gradien t of the cost function); λ p ( j ) ← d ( j ) , ∀ j ∈ L ; λ q ( j ) ← − d ( j ) , ∀ j ∈ U ; 4: if λ p ( j ) ≥ − γ , ∀ j ∈ L and λ q ( j ) ≥ − γ , ∀ j ∈ U and | d ( j ) | ≤ γ , ∀ j / ∈ L ∪ U then go to Step 12 (Stop if conv erged to a first-order optimal p oin t); 5: ∆ z ← arg min p − z ≤ ∆ ˆ z ≤ q − z k J ∆ ˆ z + b k 2 2 (searc h direction); 6: α = 1; θ ← cαd > ∆ z ; ψ ← b > b ; b ← r ( z + ∆ z ); Φ ← b > b ; 7: while Φ > ψ + θ do (Backtrac king line search) 8: α ← τ α ; θ ← αθ ; 9: b ← r ( z + α ∆ z ); Φ ← b > b ; 10: end while 11: z ← z + α ∆ z ; go to Step 1 (Update the iterate); 12: z ? ← z ; λ p ( j ) ← 0 , ∀ j / ∈ L ; λ q ( j ) ← 0 , ∀ j / ∈ U ; 13: end. Outputs: Lo cal minim um z ? of (5), ob jective function v alue Φ at z ? , and Lagrange m ultiplier vectors λ p and λ q corresp onding to low er and upper b ounds, respectively . 4.2 Global con vergence A t the i th iteration of Algorithm 1, the search direction ∆ z ( i ) is computed at Step 5 as ∆ z ( i ) = arg min ¯ p ≤ ∆ ˆ z ≤ ¯ q k J ∆ ˆ z + b k 2 2 , (9) where the Jacobian matrix J = J z r z ( i − 1) is full rank, b = r z ( i − 1) , ¯ p = p − z ( i − 1) and ¯ q = q − z ( i − 1) , and p, q are the bounds on z . Lemma 1 (Primal feasibility) Consider that z ( i ) = z ( i − 1) + α ∆ z ( i ) as in Step 11 at the i th iter ation of Algorithm 1 with any α ∈ (0 , 1] . If p ≤ z (0) ≤ q and ¯ p ≤ ∆ z ( i ) ≤ ¯ q , then p ≤ z ( i ) ≤ q at al l iter ations i . Pro of: W e prov e the lemma by induction. The lemma clearly holds for i = 0, as by assumption the initial guess z 0 is feasible, p ≤ z (0) ≤ q . Consider the i th iteration of Algorithm 1. F rom Step 5 we hav e that p − z ( i − 1) ≤ ∆ z ( i ) ≤ q − z ( i − 1) , which m ultiplied b y α , α > 0, gives αp − αz ( i − 1) ≤ α ∆ z ( i ) ≤ αq − αz ( i − 1) . (10) By adding z ( i − 1) to each side of the inequalities in (10) we get αp + (1 − α ) z ( i − 1) ≤ z ( i ) ≤ αq + (1 − α ) z ( i − 1) . (11) By induction, let us assume that p ≤ z ( i − 1) ≤ q . Since α ≤ 1, we get the further inequalities p + (1 − α ) p ≤ z ( i ) ≤ αq + (1 − α ) q 9 or p ≤ z ( i ) ≤ q . Lemma 2 The se ar ch dir e ction ∆ z ( i ) given by (9) is a desc ent dir e ction for the c ost function f ( z ) = 1 2 k r ( z ) k 2 2 in (6) . Pro of: If D ( f ( z ) , ∆ z ) denotes the directional deriv ative of f ( z ) in the direction ∆ z , then ∆ z ( i ) is a descen t direction if D f z ( i − 1) , ∆ z ( i ) < 0. By definition of directional deriv ativ e [23, Appendix A], D f z ( i − 1) , ∆ z ( i ) = ∇ z f z ( i − 1) > ∆ z ( i ) . (12) By substituting ∇ z f z ( i − 1) = J z r z ( i − 1) > r z ( i − 1) = J > b (13) in (12) w e get D f z ( i − 1) , ∆ z ( i ) = b > J ∆ z ( i ) . (14) As ∆ z ( i ) solv es the con vex subproblem (9), the following Karush-Kuhn-T uck er (KKT) conditions [6] hold: J > J ∆ z ( i ) + b + Λ ¯ q − Λ ¯ p = 0 (15a) ∆ z ( i ) ≥ ¯ p (15b) ∆ z ( i ) ≤ ¯ q (15c) Λ ¯ q , Λ ¯ p ≥ 0 (15d) Λ ¯ q ( j ) ∆ z ( i ) ( j ) − ¯ q ( j ) = 0 ∀ j (15e) Λ ¯ p ( j ) ¯ p ( j ) − ∆ z ( i ) ( j ) = 0 ∀ j, (15f ) where Λ ¯ q and Λ ¯ p denote the optimal Lagrange multipliers of subproblem (9). F rom (15a) we hav e, b > J ∆ z ( i ) = (Λ ¯ p − Λ ¯ q ) > ∆ z ( i ) − ∆ z ( i ) > J > J ∆ z ( i ) . (16) By substituting ¯ p = p − z ( i − 1) and ¯ q = q − z ( i − 1) in the complementarit y conditions (15e)-(15f), w e can write Λ > ¯ q ∆ z ( i ) − q + z ( i − 1) + Λ > ¯ p p − z ( i − 1) − ∆ z ( i ) = 0 , i.e., (Λ ¯ q − Λ ¯ p ) > ∆ z ( i ) = Λ > ¯ q ( q − z ( i − 1) ) + Λ > ¯ p ( z ( i − 1) − p ) . F rom (15b)-(15d) we hav e Λ ¯ q , Λ ¯ p ≥ 0 , and by Lemma 1 q − z ( i − 1) ≥ 0 as w ell as z ( i − 1) − p ≥ 0 , which implies that (Λ ¯ q − Λ ¯ p ) > ∆ z ( i ) ≥ 0 , i.e., (Λ ¯ p − Λ ¯ q ) > ∆ z ( i ) ≤ 0 . (17) 10 Since J is full rank, J > J > 0. Using this fact and Lemma 4 along with (17) in (16) giv es b > J ∆ z ( i ) < 0 . (18) Considering (18) and (14), we ha ve that the directional deriv ative for the con- sidered search direction is negativ e, whic h prov es the lemma. Remark 2 We infer fr om L emma 1 and (15b) - (15c) that BVNLLS is a primal- fe asible metho d, which is an imp ortant pr op erty when the function r ( z ) is not analytic b eyond b ounds [2]. Lemma 3 If the solution of (9) is ∆ z ( i ) = 0 , z ( i − 1) is a stationary p oint satisfying the first-or der optimality c onditions of pr oblem (6) . Pro of: Giv en ∆ z ( i ) = 0 , w e need to prov e that z ( i − 1) satisfies the following first-order optimality conditions for problem (6): J z r ( z ) > r ( z ) + λ q − λ p = 0 (19a) p ≤ z ≤ q (19b) λ q , λ p ≥ 0 (19c) λ q ( j )( z ( j ) − q ( j )) = λ p ( j )( p ( j ) − z ( j )) = 0 , ∀ j, (19d) where the optimal Lagrange multipliers are denoted by λ p and λ q for the low er and upp er b ounds, resp ectiv ely . By substituting ∆ z ( i ) = 0 in (15), and recalling ¯ q = q − z ( i − 1) and ¯ p = p − z ( i − 1) , we obtain J > b + Λ ¯ q − Λ ¯ p = 0 , (20a) p ≤ z ( i − 1) ≤ q , (20b) Λ ¯ q ( j )( z ( i − 1) ( j ) − q ( j )) = 0 ∀ j, (20c) Λ ¯ p ( j )( p ( j ) − z ( i − 1) ( j )) = 0 ∀ j. (20d) Clearly , considering (15d) along with the definitions of J , b , and (20), we con- clude that z ( i − 1) , Λ ¯ q and Λ ¯ p solv e the KKT system (19). Lemma 4 In Algorithm 1, ∆ z ( i ) 6 = 0 at any iter ation. Pro of: W e pro ve this lemma by contradiction. Assume that Algorithm 1 reac hes an iteration i where Step 5 is executed and returns ∆ z ( i ) = 0 . This implies that z ( i − 1) is a stationary p oin t satisfying the first-order optimality conditions of nonlinear problem (6), as shown in Lemma 3. Then, the termina- tion criterion in Step 4 would end the algorithm without further computations, so that iteration i is never reached, a contradiction. Note that in particular, if the initial guess z (0) is optimal, ∆ z ( i ) is never computed. 11 Theorem 1 (Global conv ergence of BVNLLS) Consider the optimization pr oblem (6) and define the sc alar c ost function f ( z ) = 1 2 k r ( z ) k 2 2 . At e ach iter a- tion i of Algorithm 1, ther e exists a sc alar α ∈ (0 , 1] such that f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) < cα ∇ f z ( i − 1) > ∆ z ( i ) (21) with 0 < α ≤ 1 and 0 < c < 1 , wher e z ( i ) = z ( i − 1) + α ∆ z ( i ) . Pro of: Consider the T aylor series expansion of f z ( i ) f z ( i − 1) + α ∆ z ( i ) = f z ( i − 1) + α ∇ z f z ( i − 1) > ∆ z ( i ) + α 2 2 ∆ z ( i ) > ∇ 2 z f z ( i − 1) ∆ z ( i ) + E ( k α ∆ z ( i ) k 3 ) , (22) where the term E k ( · ) k 3 represen ts the third order error. Also, ∇ 2 z f z ( i − 1) = n r X j =1 r j z ( i − 1) ∇ 2 z r j z ( i − 1) + J z r z ( i − 1) > J z r z ( i − 1) = H + J > J, (23) where r j denotes the j th element of the residual vector. By substituting the relations (13) and (23) in (22) we get f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) = αb > J ∆ z ( i ) + α 2 2 ∆ z ( i ) > H + J > J ∆ z ( i ) + E α ∆ z ( i ) 3 . (24) Using (16), Equation (24) can b e simplified as f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) = − α (2 − α ) 2 ∆ z ( i ) > J > J ∆ z ( i ) + α (Λ ¯ p − Λ ¯ q ) > ∆ z ( i ) + α 2 2 ∆ z ( i ) > H ∆ z ( i ) + E α ∆ z ( i ) 3 . (25) Referring (13) and (16), on subtracting cα ∇ f ( z ( i − 1) ) > ∆ z from b oth sides of (25) w e get f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) − cα ∇ f z ( i − 1) > ∆ z ( i ) = − α (2 − 2 c − α ) 2 ∆ z ( i ) > J > J ∆ z ( i ) + α (1 − c )(Λ ¯ p − Λ ¯ q ) > ∆ z ( i ) + α 2 2 ∆ z ( i ) > H ∆ z ( i ) + E α ∆ z ( i ) 3 . (26) 12 J h k ( z ) = ∇ z h k ( z k , φ k ) > = B (1) 1 A (1) 0 0 0 · · · · · · 0 B (2) 2 A (2) 1 B (2) 1 A (2) 0 0 · · · · · · 0 . . . . . . . . . . . . B ( N u ) N u A ( N u ) N u − 1 · · · B ( N u ) 1 A ( N u ) 0 0 · · · 0 B ( N u +1) N u +1 A ( N u +1) N u · · · B ( N u +1) 3 A ( N u +1) 2 2 P i =1 B ( N u +1) i A ( N u +1) 1 A ( N u +1) 0 0 · · · 0 B ( N u +2) N u +2 A ( N u +2) N u +1 . . . · · · B ( N u +2) 4 A ( N u +2) 3 3 P i =1 B ( N u +2) i A ( N u +2) 2 A ( N u +2) 1 A ( N u +2) 0 0 · · · 0 . . . . . . . . . . . . . . . . . . 0 B ( N p ) N p A ( N p ) N p − 1 · · · B ( N p ) N p − N u +2 A ( N p ) N p − N u +1 N p − N u +1 P i =1 B ( N p ) i A ( N p ) N p − N u A ( N p ) N p − N u − 1 · · · A ( N p ) 1 A ( N p ) 0 (29) Let ¯ N = − (2 − 2 c − α ) 2 ∆ z ( i ) > J > J ∆ z ( i ) + (1 − c )(Λ ¯ p − Λ ¯ q ) > ∆ z ( i ) . F rom (17), Le mma 4, and from the facts that α ∈ (0 , 1], c ∈ (0 , 1), and that matrix J has full rank ( J > J > 0), we infer that ¯ N must b e negative for sufficien tly small α . Let ¯ M = 1 2 ∆ z ( i ) > H ∆ z ( i ) + E α ∆ z ( i ) 3 Then (26) can b e written as f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) − cα ∇ f z ( i − 1) > ∆ z ( i ) = α ¯ N + α 2 ¯ M . (27) Let α ¯ N + α 2 ¯ M + = 0, or = α − α ¯ M − ¯ N . Clearly , since ¯ N < 0, there exists a v alue of α > 0 such that > 0. This prov es that there exists a p ositiv e v alue of α suc h that α ¯ N + α 2 ¯ M < 0. Hence from (27), f z ( i − 1) + α ∆ z ( i ) − f z ( i − 1) − cα ∇ f z ( i − 1) > ∆ z ( i ) < 0, for a sufficien tly small p ositiv e v alue of α . Remark 3 In the c ase of line ar MPC i.e., when h k ( z k , φ k ) is line ar in (6) , the b ounde d-variable le ast-squar es (BVLS) pr oblem (9) is solve d only onc e as the KKT c onditions (19) c oincide with (15) . Mor e over, the b acktr acking steps ar e not r e quir e d as the higher or der terms in (22) ar e zer o and The or em 1 holds with α = 1 for any c ∈ (0 , 1) . Remark 4 R eferring to (26) , the value of c is pr actic al ly kept b elow 0 . 5 in A lgorithm 1 in or der to enfor c e fast c onver genc e with ful l steps and is typic al ly chosen to b e as smal l as 10 − 4 [23]. As se en in (26) , sinc e we only ne e d the matrix J to b e ful l r ank for c onver genc e of BVNLLS, the matrix W k in (6) may b e r ank-deficient as long as J is ful l r ank. 13 Remark 5 Sub optimality in solving the BVLS subpr oblems may r esult in a smal ler de cr e ase in the c ost b etwe en BVNLLS iter ations than the the or etic al de cr e ase indic ate d by The or em 1. Henc e, it is essential to have an ac cur ate BVLS solver in or der to have fast c onver genc e. F or this r e ason, we advo c ate the use of active-set metho ds to solve BVLS pr oblems. Eac h iteration of BVNLLS corresp onds to solving a linear MPC problem, a sp ecial case of (6). This allows to hav e a common framework for linear and nonlinear MPC in our approac h. The BVLS problem (9) can b e solved effi- cien tly and accurately by using a primal activ e-set algorithm as shown in [30], whic h use s numerically robust recursive QR factorization routines to solv e the LS subproblems. Unlike most of the QP solvers, in which the Hessian J > J w ould b e constructed via a matrix m ultiplication and then factorized, the BVLS solv er [30] only factorizes column subsets of J , whose condition n umber is square-ro ot as compared to that of J > J , whic h makes it numerically preferable. In applications with very restrictive memory requirements, using the metho ds describ ed in Section 5 with the gradien t-pro jection algorithm [22] on the primal problem (9), one may employ a matrix-free solver similar to [12] and its refer- ences. How ev er, when using the gradien t-pro jection algorithm, its low memory usage may come at the cost of slo w conv ergence due to their sensitivit y to prob- lem scaling. The follo wing sections show how the Jacobian matrix J can b e replaced by using parameterized operators for saving memory and how its spar- sit y can b e exploited for faster execution of the prop osed BVLS solv er of [30]. 5 Abstracting matrix instances 5.1 Problem structure The sparse structure of matrices W k and ∇ z h k ( z k , φ k ) > , whic h form the Jaco- bian J of the residual in (6), completely dep ends on the MPC tuning parameters, mo del order, and the ordering of the decision v ariables. Let us assume that there are no slack v ariables due to non-b o x inequality constrain ts (4). In case of slack v ariables, the sparsity pattern will dep end on the structure of Jacobian of the inequality constrain ts, whic h is not discussed in further detail here for conciseness. By ordering the decision v ariables in vector z k as follows z k = u > k y > k +1 u > k +1 y > k +2 . . . u > k + N u − 1 y > k + N u y > k + N u +1 . . . y > k + N p − 1 y > k + N p i > (28) w e get the matrix structure describ ed in (29), where the superscript of matrices in parentheses denote the output prediction step the matrices refer to. Note that we dropp ed the parentheses ( S k ) in (29) to simplify the notation and, as defined in (2), A ( S k ) j = 0 , ∀ j > n a , and B ( S k ) j = 0 , ∀ j > n b . Clearly , the Jacobian matrix J h k of equalit y constraints only consists of en tries from the sequence of linear mo dels of the form (2) linearized around the initial guess tra jectory . Considering the mo del parameters n a , n b to b e smaller than N p in (29), as illustrated in Figure 1, we observe that the top-left part of J h k is blo c k sparse, the b ottom-right part has a block-banded structure, the b ottom- left part has dense columns corresponding to u k + N u − 1 , whereas the top-righ t 14 0 5 10 15 20 25 0 5 10 15 20 Figure 1: Sparsity pattern of J h k for a random mo del with N p = 10, N u = 4, n a = 2, n b = 4, n u = 2 and n y = 2. part is a zero matrix with n y N u ro ws and n y · ( N p − N u ) columns. If n a , n b are greater than N p , then J h k w ould instead hav e its b ottom-left part to b e dense with block low er-triangular structure in its top-left and b ottom-right parts. All in all, the sparsity pattern of J h k is completely defined by the mo del parame- ters n u , n y , n a , n b , and MPC horizons N u , N p . Clearly , ev aluating J h k only requires the sequence of linear mo dels and the sparsity pattern information. Note that in case the linear mo dels are computed by a linearization function, a memory/throughput tradeoff can b e c hosen here, as they can b e either com- puted once and stored (lo west throughput), or ev aluated by the linearization eac h time they are required (low est memory allo cation). Finally , recalling (6), w e obtain the full Jacobian matrix J = W k J h k required in Algorithm 1, where W k is the block diagonal matrix W k = blo c kdiag( W u k , W y k +1 , W u k +1 , W y k +2 , . . . , W u k + N u − 1 , W y k + N u , W y k + N u +1 , . . . , W y k + N p ) In the sequel w e assume for simplicity that all matrices W u ( · ) , W y ( · ) are diagonal, so that W k is actually a diagonal matrix. 5.2 Abstract op erators All matrix-vector op erations inv olving J in Algorithm 1 and in the BVLS solv er [30], including the matrix factorization routines that will b e described 15 Algorithm 2 Op erator Jix Inputs: Output memory v = 0 ∈ R n z + N p n y ; v ector w storing diagonal elements of W k ; scalar x ; column num ber i ; parameters n a , n b , n u , n y , N u and N p . 1: v ( i ) ← w ( i ) · x ; 2: Find integers β ∈ [0 , N p ) and η ∈ [1 , n u + n y ] such that i = β n y + n u · min( β , N u − 1) + η ; 3: ¯ n ← N u n u + ( N p + β ) n y ; m ← N u n u + 2 N p n y ; j ← 0; 4: if β 6 = N u − 1 or η > n u then 5: if η > n u , ¯ m ← ¯ n + n a n y + n y else ¯ m ← ¯ n + n b n y ; 6: for j 0 ∈ { ¯ n, ¯ n + n y , · · · , min( ¯ m, m ) − n y } do 7: if η > n u then ∀ j 00 ∈ { 1 , · · · , n y } , 8: v ( j 0 + j 00 ) ← x · A ( β + j +1) j ( j 00 , η − n u ); 9: else 10: v ( j 0 + j 00 ) ← x · B ( β + j +1) j +1 ( j 00 , η ); 11: end if 12: j ← j + 1; 13: end for 14: else 15: for j 0 ∈ { ¯ n, ¯ n + n y , · · · , m − n y } do 16: j ← j + 1; 17: ¯ B ( j 00 ) ← min( j, n b ) P i 0 =1 B β + j i 0 ( j 00 , η ), ∀ j 00 ∈ [1 , n y ]; 18: v ( j 0 + j 00 ) ← x · ¯ B ( j 00 ), ∀ j 00 ∈ [1 , n y ]; 19: end for 20: end if 21: end. Output: V ector v = i th column of J in (9) scaled b y x . in Section 6, only need the product of a column subset of J or a row-subset of J > with a vector. Hence, rather than explicitly forming and storing J , all the op erations in volving J can b e represented by t wo op erators Jix ( i th column of J times a scalar x ) and JtiX ( i th column of J times a vector X ) defined by Algorithms 2 and 3, resp ectively . The basic principle of b oth Algorithms 2 and 3 is to extract nonzero entries indexed in J from the corresponding model coefficients based on the given mo del and MPC tuning parameters. Since the top part W k of J is a diagonal matrix, the first nonzero en try in any column of J is obtained from the v ector of w eights (cf. Step 1 of Jix and JtiX ). The remaining steps only concern ev aluating J h k as in (29), in whic h the coefficients in eac h column matc h the corresp onding elemen t in z k as in (28). Referring to the sparsity pattern of J h k in (29), eac h of its columns only con tains either mo del co efficien ts related to the input or to the output, and in the columns corresp onding to the inputs u k + N u − 1 some of the input co efficien ts are summed due to the finite control horizon N u < N p . The lo cation of the first nonzero term in each column of J h k dep ends on the corresp onding stage of the input or output v ariable in prediction, whereas the 16 Algorithm 3 Op erator JtiX Inputs: V ector w storing diagonal elements of W k ; vector X ; column num b er i ; parameters n a , n b , n u , n y , N u and N p . 1: v 0 ← w ( i ) · X ( i ); 2: Steps 2-3 of Algorithm 2; 3: if β 6 = N u − 1 or η > n u then 4: if η > n u , ¯ m ← ¯ n + n a n y + n y else ¯ m ← ¯ n + n b n y ; 5: for j 0 ∈ { ¯ n, ¯ n + n y , · · · , min( ¯ m, m ) − n y } do 6: if η > n u then ∀ j 00 ∈ { 1 , · · · , n y } , 7: v 0 ← v 0 + X ( j 0 + j 00 ) · A ( β + j +1) j ( j 00 , η − n u ); 8: else 9: v 0 ← v 0 + X ( j 0 + j 00 ) · B ( β + j +1) j +1 ( j 00 , η ); 10: end if 11: j ← j + 1; 12: end for 13: else 14: for j 0 ∈ { ¯ n, ¯ n + n y , · · · , m − n y } do 15: Steps 16-17 of Algorithm 2; 16: v 0 ← v 0 + X ( j 0 + j 00 ) · ¯ B ( j 00 ), ∀ j 00 ∈ [1 , n y ]; 17: end for 18: end if 19: end. Output: v 0 = inner product of i th ro w of J > in (9) and X . last entry dep ends on n a or n b and N p . Hence, in Step 2 of Algorithm 2, the integer β is computed such that β n y + 1 is the index of the first nonzero en try in J h k ( z ) (cf. Steps 3, 6 and 15). The in teger η computed in the same step denotes the input or output channel to which the column corresp onds, in order to accordingly index and extract the co efficien ts to b e scaled as sho wn in Steps 8, 10 and 17 of Algorithm 2. Dep ending on the column index i of J , computing β and η only needs a trivial n umber of integer op erations including at most one in teger division, for instance, if i ≤ N u ( n u + n y ), β is obtained b y an in teger division of i b y ( n u + n y ) and η = i − β ( n u + n y ). The same computation is straightforw ard for the only other p ossible case in whic h i > N u ( n u + n y ). Clearly , since the rows of J > are the columns of J , Algorithm 3 differs from Algorithm 2 only in Steps 7, 9 and 16 in which the scaled co efficient is accumulated to the resulting inner pro duct instead of a plain assignment op eration. It is p ossible to easily extend Algorithm 3 for the sp ecial case in whic h X in JtiX is the i th column of J i.e., to efficiently compute the 2-norm of the i th column of J , which may b e required in the linear algebra routines. Replacing the instances of J b y Jix and JtiX in the BVNLLS and in the inner BVLS solver has the follo wing adv an tages: 1) The problem construction step in MPC is eliminated, as matrix J is neither formed nor stored. 2) The co de of the tw o op erators do es not change with any change in the re- quired data or dimensions as all the indexing steps are parameterized in terms 17 of MPC tuning parameters, i.e., known data. Hence, the resulting optimization solv er does not need to b e co de-generated with a change in problem dimensions or data. The same fact also allows real-time changes in the MPC problem data and tuning parameters without any change in the solver. A structural c hange in the BVNLLS optimization problem formulation, such as the type of p erfor- mance index, is already decided in the MPC design phase and can b e simply accommo dated b y only mo difying Algorithms 2 and 3. 3) Unlike basic sparse-matrix storage sc hemes [27] which w ould store the nonze- ros of J along with indexing information, we only store the sequence of linear mo dels at most, resulting in a significantly low er memory requirement. Alter- nativ ely , as men tioned earlier, ev en the co efficients A ( ∗ ) ∗ , B ( ∗ ) ∗ can b e generated during the execution of Algorithms 2- 3 using linearization functions applied on the current tra jectory . 4) The num b er of floating-point op erations ( flops ) in volving instances of J , both in the BVNLLS and the BVLS solvers, is minimal and is reduced essentially to what sparse linear algebra routines can achiev e. 5) A matrix-free implementation can b e ac hieved when using the gradient- pro jection algorithm [22] to solve (9) in BVNLLS, as the op erators Jix and JtiX can b e used for computing the gradient. In addition, considering that ev en the mo del co efficien ts are optional to store, the resulting NMPC algo- rithm will hav e negligible increase in memory requiremen t w.r.t. the prediction horizon. 6 Recursiv e thin QR factorization The primal active-set metho d for solving BVLS problems describ ed in [30] ef- ficien tly solves a sequence of related LS problems using recursive thin QR fac- torization. The reader is referred to [9, 16, 30] for an ov erview on thin QR factorization and the rec ursiv e up date routines in the context of the BVLS solv er. This se ction shows how the sparsit y of matrix J can b e exploited for significan tly reducing the computations in volv ed in the recursive up dates of its QR factors, without the use of sparse-matrix storage or conv entional sparse lin- ear algebra routines. The main idea is to hav e the lo cation of nonzeros in the matrix factors expressed in terms of mo del and MPC tuning parameters, as de- scrib ed abov e. W e first analyze how the sparse structure of column subsets of J is reflected in their thin QR factors based on Gram-Sc hmidt orthogonalization, then characterize the recursive up date routines. 6.1 Gram-Sc hmidt orthogonalization Recall that J ∈ R m × n , where n = N u n u + N p n y and m = n + N p n y , i.e., m > n (see (6), (9), (28) and (29)). Let J F denote the matrix formed from those columns of J with indices in the set F . Then there exists a unique thin QR factorization [16, Theorem 5.2.3] of J F whic h ma y b e expressed via the 18 Gram-Sc hmidt orthonormalization pro cedure ∀ i ∈ [1 , |F | ] as Q 0 i = J F i − i − 1 X j =1 Q j Q > j J F i , (30a) Q i = Q 0 i / Q 0 i 2 , (30b) R ( j, i ) = Q > j J F i , ∀ j ∈ [1 , i − 1] , (30c) R ( i, i ) = Q 0 i 2 , (30d) where Q ∈ R m ×|F | : = [ Q 1 , Q 2 , · · · , Q |F | ] has orthonormal columns, R ∈ R |F |×|F | is upp er triangular and J F = QR . In (30), with a slight abuse of notation, the subscripts denote column n umber, i.e., Q i denotes the i th column of Q , whereas F i denotes the i th index in F . As sho wn in (30a), starting from the first column of J F , the procedure constructs an orthogonal basis by sequentially orthogonal- izing the subsequent columns w.r.t. the basis. The orthogonalization pro cedure sho wn in (30a) is referred to as the classical Gram-Schmidt (CGS) method [16, Section 5.2.7]. Since the CGS method is practically prone to n umerical can- cellation due to finite-precision arithmetic, w e use the modified Gram-Schmidt (MGS) metho d [16, Section 5.2.8] in which the orthogonalization is p erformed using the working v alue of Q 0 i instead of J F i in each iteration of the pro ce- dure. When applying MGS to solve the linear system b efore recursive up dates, w e also orthogonalize the right hand side (RHS) of the equations, i.e., we use an augmen ted system of equations in order to comp ensate the orthogonaliza- tion error (cf. [33, Chapter 19]). Moreo ver, for numerical robustness in limited precision, in the prop osed MGS pro cedure a reorthogonalization step is auto- matically p erformed which iteratively refines the QR factors for reducing the orthogonalization error in case it exceeds a given threshold (cf. [30, Algorithm 2], [9]). 6.2 Sparsit y analysis In order to av oid redundant flops due to multiplying zero en tries while solving the LS problems without sparse storage sc hemes, we first determine the sparsity pattern of Q and R approximately , based on the relations described in (30). While doing so, the following notions will b e used. Definition 1 (Nonzero structure) We define the nonzer o structur e of a ve c- tor x to b e the set of indic es S ( x ) such that x ( i ) 6 = 0 , ∀ i ∈ S ( x ) , and x ( j ) = 0 , ∀ j / ∈ S ( x ) . Definition 2 (Predicted nonzero structure) If ˆ S ( x ) denotes the pr e dicte d nonzer o structur e of a ve ctor x , then x ( j ) = 0 ∀ j / ∈ ˆ S ( x ) i.e., ˆ S ( x ) ⊇ S ( x ) . Based on the Definition 1, x = x 0 implies S ( x ) = S ( x 0 ) . (31) S ( x 0 + x 00 ) ⊆ {S ( x 0 ) ∪ S ( x 00 ) } , (32) 19 whic h holds with equality , i.e., S ( x 0 + x 00 ) = {S ( x 0 ) ∪ S ( x 00 ) } , if and only if the set { i | x 0 ( i ) + x 00 ( i ) = 0 , x 0 ( i ) 6 = 0 , x 00 ( i ) 6 = 0 } = ∅ . Lik ewise, S ( κx ) ⊆ S ( x ) , κ ∈ R , b ecause S ( κx ) = ∅ for κ = 0 whereas, S ( κx ) = S ( x ) , ∀ κ ∈ R \ { 0 } . (33) Theorem 2 Consider an arbitr ary sp arse matrix M ∈ R n 1 × n 2 of ful l r ank such that n 1 ≥ n 2 and let ˜ Q denote the Q-factor fr om its thin QR factorization i.e., M = ˜ Q ˜ R . The nonzer o structur e of e ach c olumn ˜ Q i of ˜ Q satisfies S ˜ Q i ⊆ i [ j =1 S ( M j ) , ∀ i ∈ [1 , n 2 ] , (34a) and S ˜ Q 1 = S ( M 1 ) . (34b) Pro of: W e consider the Gram-Schmidt orthogonalization pro cedure describ ed in (30) applied to M with F = [1 , n 2 ] (this simplifies the notation, i.e., F i = i ). Referring to (30b), since ˜ Q 0 represen ts an orthogonal basis of the full rank matrix M with real n umbers, 1 / ˜ Q 0 i 6 = 0 ∀ i , and hence from (33), S ˜ Q i = S ˜ Q 0 i , ∀ i. (35) F rom (30a), ˜ Q 0 1 = M 1 . (36) Th us, considering (36) with (31) and (35) prov es (34b). Again, considering (30a) with (35) and (31), S ˜ Q i = S M i − i − 1 X j =1 ˜ Q j ˜ Q > j M i = S M i + i − 1 X j =1 ˜ Q j κ j (37) where κ j = − ˜ Q > j M i ∈ R , ∀ j ∈ [1 , i − 1], as κ j represen ts the result of an inner pro duct of tw o real v ectors. F rom (32) and (37), S ˜ Q i ⊆ S ( M i ) ∪ i − 1 [ j =1 S ˜ Q j . (38) Applying (38) recursively , S ˜ Q i ⊆ i [ j =2 S ( M j ) ∪ S ˜ Q 1 . (39) Th us, substituting (34b) in (39) completes the proof. 20 Corollary 1 Given i ∈ [1 , n 2 ] and j 0 ∈ [1 , n 2 ] , if j 0 [ j =1 S ( M j ) ∩ S ( M i ) = ∅ , then ˜ R ( j, i ) = 0 ∀ j ∈ [1 , j 0 ] . (40) Pro of: Based on result (34a) of Theorem 2, we can say that i S j =1 S ( M j ) is a predicted nonzero structure of ˜ Q i i.e., i [ j =1 S ( M j ) = ˆ S ˜ Q i , (41) and hence ˆ S ˜ Q i = S ( M i ) ∪ ˆ S ˜ Q i − 1 , ∀ i ∈ [1 , n 2 ] . (42) If S ˜ Q j ∩ S ( M i ) = ∅ , then ˜ Q j and M i ha ve disjoin t nonzero structures and hence, referring to (30c), S ˜ Q j ∩ S ( M i ) = ∅ = ⇒ R ( j, i ) = ˜ Q > j M i = 0 . (43) F rom (42) we hav e that ˆ S ˜ Q i ⊇ ˆ S ˜ Q i 0 , ∀ i 0 < i. (44) F rom (41), (44) and Definition 2, i.e., ˆ S ˜ Q i ⊇ S ˜ Q i , it follo ws that ( j 0 S j =1 S ( M j ) ) ∩ S ( M i ) = ∅ implies ˆ S ˜ Q j ∩ S ( M i ) = ∅ , ∀ j < j 0 . The corollary result is then immediate given (43). Theorem 2 and Corollary 1 establish rigorous upp er b ounds on the nonzero structure of the QR factors based on the nonzero structure of the factorized matrix. Since the nonzero structure of J F is completely determined in terms of model and tuning parameters as sho wn in Section 5.2, the predicted nonzero structure of its QR factors consequently dep ends only on them, as will b e shown in the remaining part of this section. Corollary 2 Consider the matrix J ∈ R m × n whose first n r ows form a diagonal matrix and the last m − n r ows c ontain J h k ( z ) as shown in (29) . L et J F denote the matrix forme d fr om the c olumns of J indexe d in the index set F such that F i +1 > F i , ∀ i ∈ [1 , |F | ] . If Q ∈ R m ×|F | denotes the Q-factor fr om the thin QR factorization of J F , then, ∀ i ∈ [2 , |F | ] , ( i S j =1 {F j } ) ∪ ( ¯ n F 1 , max ( B i − 1 , min ( ¯ m F i , m ))] = ˆ S ( Q i ) , wher e the p ositive inte gers ¯ n j 0 , ¯ m j 0 r esp e ctively denote the values of ¯ n , ¯ m c ompute d in Steps 2-5 of Algorithm 2 for j 0 th c olumn of J , and B is an index set such that its i th element stor es the lar gest index of ˆ S ( Q i ) . 21 Pro of: Considering the structure of matrix J , Definition 1 and the fact that min ( ¯ m j , m ) > ¯ n j ≥ n ≥ |F | , ∀ j by construction, we ha ve that S ( J F i ) = {F i } ∪ ( ¯ n F i , min ( ¯ m F i , m )] . (45) F rom (41) we note that i S j =1 S J F j = ˆ S ( Q i ), and using (45) w e can rewrite ˆ S ( Q i ) = i [ j =1 S J F j = i [ j =1 {F j } ∪ i [ j =1 ¯ n F j , min ¯ m F j , m , = i [ j =1 {F j } ∪ ( ¯ n F 1 , B i ] , (46) b ecause observing (29), F j +1 > F j implies ¯ n F j ≤ ¯ n F j +1 . F rom result (42), (45) and definition of set B , B i = max ( B i − 1 , min ( ¯ m F i , m )) , (47) whic h on substitution in (46) completes the pro of. Note that from (45) and result (34b), S ( Q 1 ) = S ( J F 1 ) = {F 1 , ( ¯ n F 1 , min ( ¯ m F 1 , m )] } . (48) By definition of set B w e hav e B 1 = min ( ¯ m F 1 , m ) from (48), and hence B i can b e determined ∀ i by using (47). Corollary 3 Q ( i, j ) = 0 ∀ i ∈ [1 , n ] \ F , ∀ j ∈ [1 , |F | ] . Also, ∀ j 0 ∈ [1 , |F | ] , Q ( i, j ) = 0 ∀ j ∈ [1 , j 0 ) such that i = F j 0 . Pro of: Let Q 00 denote the submatrix formed from the first n rows of Q . Since ¯ n F 1 > n , from Corollary 2 we can write i S j =1 {F j } = ˆ S ( Q 00 i ). Th us, referring this relation and Definition 2, if an index is not in the set F , the corresp onding row of Q 00 and hence Q has no nonzero element. The latter part is prov ed by (42) considering the facts that J is diagonal and F i +1 > F i . F rom Corollaries 2 and 3 we infer that the nonzero structure of all the |F | columns of Q can b e stored using a scalar for ¯ n F 1 and tw o integer vectors of dimension |F | con taining the index sets F and B , where B i = max (min ( ¯ m i , m ) , ¯ m i − 1 ). In order to only compute the nonzeros of R , while constructing each of its column, w e need to find and store a scalar j 0 as sho wn in Corollary 1. This is done b y using the relations describ ed in Theorem 2, Corollary 1 and (46). Sp ecifically , when computing the i th column of R ( i > 1), j 0 is found by coun ting the num b er of times B j < ¯ n F i for increasing v alues of j ∈ ˆ j , i un til the condition is not satisfied, where ˆ j denotes the v alue of j 0 for the ( i − 1)th column of R . 22 6.3 Recursiv e up dates In the primal active-set metho d, a change in the active-set corresp onds to an index inserted in or deleted from the set F . W e exploit the uniqueness of thin QR factorization in order to up date the structure indicating sets F and B . When an index t is inserted in the activ e-set of b ounds, the last column of Q and the i th column of R are deleted suc h that t = F i , and the QR factorization is up dated b y applying Given’s rotations that triangularize R . In this case F is simply updated to F 0 = F \ { t } and B is updated such that (47) is satisfied after remo ving its i th index. Morov er, using Corollary 3, the Given’s rotations are not applied on the t th row of Q whic h is simply zeroed. On the other hand, when an index t is remov ed from the active-set of b ounds, F is updated to F ∪ { t } suc h that F j +1 > F j , ∀ j . If t is inserted in F in the j th p osition, an index is inserted in the j th p osition of B using (47) and the elements with p osition greater than j are updated to satisfy (47). Since the sparse structure of the updated QR factors is known during recursiv e up dates, using F , B and Corollary 3, the flops for applying Given’s rotations on rows of Q and matrix-vector multiplications in the Gram-Schmidt (re)orthogonalization pro cedure are p erformed only on nonzero elements. This makes the QR update routines significantly faster as is reflected in the numerical results describ ed in Section 7. 6.4 Adv antages and limitations The predicted nonzero structure of the Q-factor via (42) is exact if and only if the set relation (34a) holds with equality . F or (34a) to hold with equality for Q , Q > j J F i m ust b e nonzero for all pairs of indices i and j referring the CGS orthogonalization in (30a) and moreo ver the summation of nonzeros in the RHS of (30a) must result in a nonzero . Ev en though theoretically this ma y not b e the case for the matrices that we consider, due to finite precision computations which disallow p erfect orthogonality , and the use of MGS with p oten tially multiple orthogonalizations to compute columns of Q , the predicted nonzero structure of columns of Q via Corollary 2 rarely contains indices of zero elements, i.e., numerically it is an accurate estimate and often the exact nonzero structure. Referring to Corollary 1 and [30, Algorithm 2], the same fact leads to the conclusion that if multiple orthogonalizations (for numerical robustness) are p erformed, in the w orst case, the upp er-triangular part of the R factor may ha ve no zero elements. Nevertheless, the initial sparsit y in R b efore reorthogonalization is still exploited in its construction but the w orst- case fill-in makes it necessary to use R as a dense upp er-triangular matrix when solving the triangular system by back-substitution to compute the solution of the underlying LS problem. F rom Theorem 2, w e observ e that the predicted nonzero structure of columns Q j , ∀ j ≥ i , would contain at least the indices of nonzero elements in the i th col- umn of J F . Hence, in case N u < N p , referring the analysis in Section 6.2, the fill-in of Q can b e reduced b y a re-ordering of the decision v ariable vec- tor in (28) such that the columns of J corresp onding to the v ariables u k + N u − 1 are mov ed to b ecome its last columns. Note that even though this re-ordering do es not optimize the fill-in of Q , for whic h dedicated routines exist in literature (cf. [10]), it still allo ws a relativ ely simple and a computationally effectiv e imple- men tation of recursive thin QR factorization for the matrix of interest through 23 a straightforw ard extension of the methods describ ed in Section 6.3. In order to b enefit computationally from the recursive up dates, a full stor- age of the thin QR factors is required. This causes greater memory requirement b ey ond a certain large problem size where a sparse-storage sc heme would need smaller memory considering that with con ven tional sparse linear algebra, one w ould only compute and store the R factor while alwa ys solving the LS problem from scratc h instead. Ho wev er, the latter approac h could turn out to be compu- tationally m uch more exp ensiv e. Using the techniques discussed in Sections 6.2 and 6.3 with a sparse-storage scheme could address this limitation sp ecific to large-scale problems for memory-constrained applications but it needs a muc h more intricate implementation with cumbersome indexing, that is b ey ond the scop e of this pap er. 7 Numerical results 7.1 Soft w are framework In order to implement the (nonlinear) MPC con troller based on form ulation (6) or (8), one only needs the co de for Algorithm 1. The inner BVLS solver of [30] could b e replaced by another algorithm that exploits sparsity via the abstract op erators, suc h as the gradien t-pro jection algorithm of [22] we mentioned earlier. Besides, routines that ev aluate the mo del (1) and the Jacobian matrices, i.e., the mo del co efficients in (2), are required from the user in order to ev aluate the residual and p erform the linearization step (or alternatively finite-differences) in BVNLLS. Note that an optimized self-contained co de for these routines can easily b e generated or derived by using sym b olic to ols suc h as those of MA TLAB or the excellent op en-source softw are CasADi [1]. This signifies that except for the user-defined mo del and tuning parameters, the softw are do es not need an y co de-generation, as for a given class of p erformance indices the code for Algorithms 1-3 does not change with the application. The user is only required to provide the MPC tuning parameters and a sym- b olic expression for the mo del (1), which eases the deplo yment of the prop osed MPC solution algorithm in embedded control hardware. 7.2 Computational p erformance The results presen ted in this section are based on a library-free C implementa- tion of BVNLLS based on Algorithms 2 and 3, and the BVLS solver based on the recursive thin QR factorization routines discussed in Section 6. The reader is referred to [31, Section 5] for details on simulation settings, tuning parame- ters, constraints, and b enchmark solvers related to the following discussion on the example problem, which consists of NMPC applied to a CSTR (contin uous stirred tank reactor). All the optimization problems in the simulations referred b elo w were solved un til conv ergence 1 , on a Macb o ok Pro 2013 equipp ed with 8GB RAM and 2.6 GHz Intel Core i5 processor. Figure 2 illustrates the sp ecific sim ulation scenario for whic h the execution times of the solvers w ere compared 1 the optimality and feasibility tolerances for all solvers were tuned to 10 − 6 and 10 − 8 respectively , in order to achiev e the same quality of solution at conv ergence for a fair compar- ison. 24 250 300 350 400 T emp erature (K) Reactor Reference F eed Co olan t 0 20 40 60 80 100 120 140 0 5 10 Time (min) Concen tration (kgmol/m 3 ) Reactor F eed Reference Figure 2: Closed-lo op NMPC sim ulation tra jectories of CSTR v ariables with N p = N u = 160 (16 min utes). for increasing v alues of the prediction horizon. Figure 3 shows that the equality constrain ts were satisfied with sufficient accuracy by BVNLLS. Hence, as also demonstrated in [31, Section 5, Figure 2], all the solvers including the prop osed one yield the same control p erformance. Figure 4 shows that the prop osed metho ds ( custom-sp arse ) allow BVNLLS to outp erform its dense linear algebra based v ariant even on small-sized test problems by almost an order of magnitude on av erage. As compared to other 0 20 40 60 80 100 120 140 0 1 2 3 · 10 − 5 Time (min) 1 2 k h ( z , φ ) k 2 2 Figure 3: W orst-case equalit y constraint residuals ( N p = N u = 160) for BVN- LLS with √ ρ = 10 4 during the sim ulation described b y Figure 2. 25 solv ers which are instead applied to the b enc hmark form ulation (5), i.e., the SQP solv er ( fmincon ) of MA TLAB and the interior-point solver (IPOPT) of [35], a reduction in computational time by around t wo orders of magnitude is observed for the small-sized test problems. This reduction can be credited to the fact that IPOPT, which is based on sparse linear algebra routines, is more effective for large-sized problems, and that BVNLLS exploits warmstarts based on the pre- viously computed solution which is pro vided from the second instance onw ards. Note that the solvers fmincon/IPOPT w ere used b ecause they are widely used as benchmarks (see for instance for IPOPT the recent pap er [34] and its refer- ences), and are av ailable for repro ducing the results, which also allows one to compare with another approach through the time ratios observ ed in the referred figures. Figure 5 suggests that despite b eing based on an active-set algorithm, the prop osed sparsity-exploiting metho ds emp o w er BVNLLS to significantly out- p erform the b enc hmarks even for large problems. 10 0 10 1 10 2 10 3 W orst-case CPU time (ms) dense BVNLLS custom-sparse BVNLLS fminconSQP IPOPT 30 45 60 75 90 105 120 135 150 10 − 1 10 0 10 1 10 2 Num b er of decision v ariables n Mean CPU time (ms) Figure 4: Computational time sp en t b y each solv er during NMPC simulation of CSTR [31] for increasing v alues of N p = N u = n/ 3, n set of b o x-constraints and 2 N p equalit y constrain ts. 8 Conclusions This pap er has presen ted a new approach to solving constrained linear and non- linear MPC problems that, by relaxing the equality constraints generated b y the prediction mo del in to quadratic penalties, allows the use of a very efficient 26 10 1 10 2 10 3 10 4 W orst-case CPU time (ms) custom-sparse BVNLLS fminconSQP IPOPT 150 210 255 300 345 390 435 480 10 1 10 2 10 3 10 4 Num b er of decision v ariables n Mean CPU time (ms) Figure 5: Computational time sp en t b y each solv er during NMPC simulation of CSTR for large v alues of N p = N u = n/ 3 with n set of b o x-constraints and 2 N p equalit y constrain ts. b ounded-v ariable nonlinear least squares solver. The linear algebra b ehind the latter has b een sp ecialized in detail to take into account the particular struc- ture of the MPC problem, so that the resulting required memory fo otprin t and throughput are minimized for efficien t real-time implementation, without the need of an y external adv anced linear algebra library . Ac kno wledgmen ts The authors thank Laura F errarotti and Mario Zanon (IMT Lucca) and Stefa- nia Bellavia (Univ ersity of Florence) for stim ulating discussions concerning the con vergence of BVNLLS. References [1] J. A. E. Andersson, J. Gillis, G. Horn, J. B. Rawlings, and M. Diehl. CasADi – A softw are framew ork for nonlinear optimization and optimal con trol. Mathematic al Pr o gr amming Computation , 11(1):1–36, 2019. [2] S. Bellavia, M. Macconi, and B. Morini. An affine scaling trust-region ap- proac h to b ound-constrained nonlinear systems. Applie d Numeric al Math- ematics , 44(3):257 – 280, 2003. 27 [3] A. Bemp orad, D. Bernardini, R. Long, and J. V erdejo. Mo del predictiv e con trol of turb o c harged gasoline engines for mass pro duction. In WCX TM : SAE World Congr ess Exp erienc e , Detroit, MI, USA, April 2018. [4] D. Bertsek as. Constr aine d Optimization and Lagr ange Multiplier Metho ds . A thena Scien tific, 1996. [5] ˚ A. Bj¨ orc k. Numeric al Metho ds for Le ast Squar es Pr oblems . So ciet y for Industrial and Applied Mathematics, Philadelphia, P A, 1996. [6] F. Borrelli, A. Bemporad, and M. Morari. Pr e dictive contr ol for line ar and hybrid systems . Cambridge Univ ersity Press, 2017. [7] M. Cannon. Efficien t nonlinear mo del predictive control algorithms. A nnual R eviews in Contr ol , 28(2):229–237, 2004. [8] L. Ca v anini, G. Cimini, and G. Ipp oliti. Computationally efficient mo del predictiv e con trol for a class of linear parameter-v arying systems. IET Contr ol The ory & Applic ations , 12(10):1384–1392, 2018. [9] J. W. Daniel, W. B. Gragg, L. Kaufman, and G. W. Stew art. Reorthogo- nalization and stable algorithms for up dating the Gram-Schmidt QR fac- torization. Mathematics of Computation , 30(136):772–795, 1976. [10] T.A. Davis. Dir e ct Metho ds for Sp arse Line ar Systems . So ciety for Indus- trial and Applied Mathematics, Philadelphia, P A, 2006. [11] S. Di Cairano and I. V. Kolmanovsky . Real-time optimization and mo del predictiv e control for aerospace and automotiv e applications. In Pr o c. An- nual A meric an Contr ol Confer enc e (A CC) , pages 2392–2 409, Milwauk ee, WI, 2018. [12] S. Diamond and S. Bo yd. Matrix-F r e e Convex Optimization Mo deling , pages 221–264. In: Goldegorin B. (eds) Optimization and Its Applications in Control and Data Sciences. Springer Optimization and Its Applications, v ol 115. Springer, Cham, 2016. [13] M. Diehl, H.G. Bo c k, and J.P . Schl¨ oder. A Real-Time Iteration Sc heme for Nonlinear Optimization in Optimal Feedback Control. SIAM Journal on Contr ol and Optimization , 43(5):1714–1736, 2005. [14] M. Diehl, H.J. F erreau, and N. Hav erb ek e. Efficient Numerical Metho ds for Nonlinear MPC and Mo ving Horizon Estimation. In L. Magni, D.M. Raimondo, and F. Allg¨ ow er, editors, Nonline ar Mo del Pr e dictive Contr ol. L e ctur e Notes in Contr ol and Information Scienc es , v olume 384, pages 56– 98. Springer, Berlin, Heidelb erg, 2009. [15] J. Dongarra, V. Eijkhout, and P . Luszczek. Recursive approach in sparse matrix LU factorization. Sci. Pr o gr am. , 9(1):51–60, 2001. [16] G. H. Golub and C. F. V an Loan. Matrix Computations . 4th ed. The John Hopkins Universit y Press, Baltimore, MD, 2013. 28 [17] J. L. Jerez, P . J. Goulart, S. Ric hter, G. A. Constan tinides, E. C. Kerrigan, and M. Morari. Embedded online optimization for mo del predictiv e con trol at megahertz rates. IEEE T r ansactions on Automatic Contr ol , 59:3238– 3251, 2014. [18] S. Jo e Qin and T. A. Badgwell. A survey of industrial mo del predictive con trol tec hnology . Contr ol Engine ering Pr actic e , 11(7):733 – 764, 2003. [19] E.C. Kerrigan and J.M. Maciejo wski. Soft constraints and exact p enalty functions in mo del predictiv e control. In Pr o c. UKA CC International Con- fer enc e (Contr ol) , Cam bridge, UK, 2000. [20] D. Kouzoupis, G. F rison, A. Zanelli, and M. Diehl. Recent adv ances in quadratic programming algorithms for nonlinear mo del predictive control. Vietnam Journal of Mathematics , Sept. 2018. [21] C. L. La wson and R. J. Hanson. Solving Le ast Squar es Pr oblems . Classics in Applied Mathematics. So ciet y for Industrial and Applied Mathematics, Philadelphia, P A, 1995. [22] Y. Nesterov. Intr o ductory L e ctur es on Convex Optimization: A Basic Course . Klu wer Academic, Dordrech t, The Netherlands, 2004. [23] J. Nocedal and S. W right. Numeric al Optimization . 2nd ed. Springer, 2006. [24] T. Oh tsuk a. A con tinuation/GMRES metho d for fast computation of non- linear receding horizon control. Automatic a , 40(4):563–574, 2004. [25] D. Piga, M. F orgione, S. F ormentin, and A. Bemp orad. Performance- orien ted mo del learning for data-driven MPC design. IEEE c ontr ol systems letters , 3(3):577–582, 2019. [26] M.D. Rafal and W.F. Stevens. Discrete dynamic optimization applied to on-line optimal con trol. AiChE Journal , 14(1):85–91, 1968. [27] Y. Saad. Iter ative Metho ds for Sp arse Line ar Systems . 2nd ed. Society for Industrial and Applied Mathematics, 2003. [28] N. Saraf. Bounde d-variable le ast-squar es metho ds for line ar and nonline ar mo del pr e dictive c ontr ol . Ph.D. dissertation, IMT School for Adv anced Studies Lucca, Italy , 2019. [29] N. Saraf and A. Bemp orad. F ast mo del predictive control based on linear input/output mo dels and b ounded-v ariable least squares. In Pr o c. 56th IEEE Confer enc e on De cision and Contr ol , pages 1919–1924, Melb ourne, Australia, 2017. [30] N. Saraf and A. Bemp orad. A b ounded-v ariable least-squares solver based on stable QR up dates. IEEE T r ansactions on Automatic Contr ol , 2019. [31] N. Saraf, M. Zanon, and A. Bemp orad. A fast NMPC approach based on b ounded-v ariable nonlinear least squares. In Pr o c. 6th IF AC Confer enc e on Nonline ar Mo del Pr e dictive Contr ol , pages 337–342, Madison, WI, August 2018. 29 [32] L. Stella, A. Themelis, P . Sopasakis, and P . Patrinos. A Simple and Ef- ficien t Algorithm for Nonlinear Mo del Predictive Control. In Pr o c. 56th IEEE Confer enc e on De cision and Contr ol , pages 1939–1944, Melb ourne, Australia, 2017. [33] L. N. T refethen and D. Bau. Numeric al Line ar Algebr a . So ciet y for Indus- trial and Applied Mathematics, Philadelphia, P A, 1997. [34] R. V ersc h ueren, G. F rison, D. Kouzoupis, N. v an Duijkeren, A. Zanelli, B. Nov oselnik, J. F rey , T. Albin, R. Quirynen, and M. Diehl. acados: a mo dular op en-source framew ork for fast em b edded optimal con trol. arXiv pr eprint , 2019. [35] A. W¨ ach ter and L.T. Biegler. On the Implementation of a Primal-Dual In terior Poin t Filter Line Search Algorithm for Large-Scale Nonlinear Pro- gramming. Mathematic al Pr o gr amming , 106(1):25–57, 2006. [36] Y. W ang and S. Boyd. F ast mo del predictive control using online opti- mization. IEEE T r ansactions on Contr ol Systems T e chnolo gy , 18:267–278, 2010. 30

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment