HYDRA: Hybrid Deep Magnetic Resonance Fingerprinting

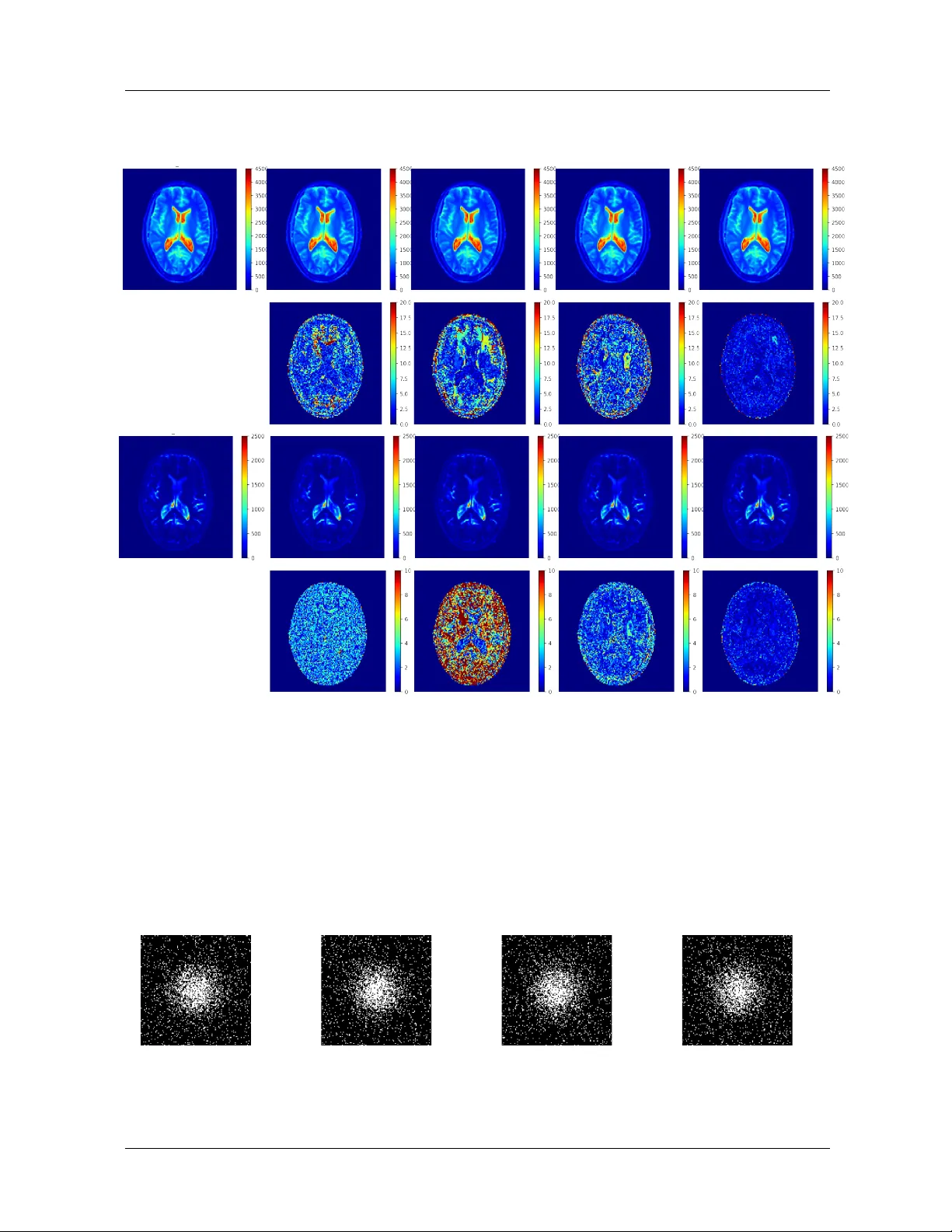

Purpose: Magnetic resonance fingerprinting (MRF) methods typically rely on dictionary matching to map the temporal MRF signals to quantitative tissue parameters. Such approaches suffer from inherent discretization errors, as well as high computation…
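
To make the dictionary matching step and its discretization error concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the method of the paper: the mono-exponential `toy_signal`, the 20 ms T2 grid, and all function names are hypothetical stand-ins for a real Bloch-simulated MRF dictionary.

```python
import math


def toy_signal(t2_ms, n_points=50, dt_ms=10.0):
    """Hypothetical stand-in for a simulated fingerprint: a mono-exponential decay.

    A real MRF dictionary would be generated by simulating the acquisition
    sequence; this toy model only keeps the example self-contained.
    """
    return [math.exp(-(k * dt_ms) / t2_ms) for k in range(n_points)]


def normalize(signal):
    """Scale a signal to unit Euclidean norm."""
    norm = math.sqrt(sum(x * x for x in signal))
    return [x / norm for x in signal]


def build_dictionary(t2_grid):
    """Precompute one normalized atom per grid value of the tissue parameter."""
    return [(t2, normalize(toy_signal(t2))) for t2 in t2_grid]


def match(signal, dictionary):
    """Return the grid parameter whose atom has the largest absolute inner product."""
    s = normalize(signal)
    best_t2, best_score = None, -1.0
    for t2, atom in dictionary:
        score = abs(sum(a * b for a, b in zip(s, atom)))
        if score > best_score:
            best_t2, best_score = t2, score
    return best_t2


# Assumed grid: T2 from 20 ms to 300 ms in 20 ms steps.
t2_grid = [20.0 * k for k in range(1, 16)]
dictionary = build_dictionary(t2_grid)

# A true value that falls between grid points cannot be recovered exactly.
true_t2 = 95.0
estimated_t2 = match(toy_signal(true_t2), dictionary)
discretization_error = abs(estimated_t2 - true_t2)
```

Because the estimate can only take values on the grid, `discretization_error` is nonzero whenever the true parameter lies off the grid. Refining the grid reduces the error but enlarges the dictionary, and the exhaustive search in `match` grows with the number of atoms.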

Authors: Pingfan Song, Yonina C. Eldar, Gal Mazor