Variation-aware Binarized Memristive Networks

The quantization of weights to binary states in Deep Neural Networks (DNNs) can replace resource-hungry multiply accumulate operations with simple accumulations. Such Binarized Neural Networks (BNNs) exhibit greatly reduced resource and power require…

Authors: Corey Lammie, Olga Krestinskaya, Alex James

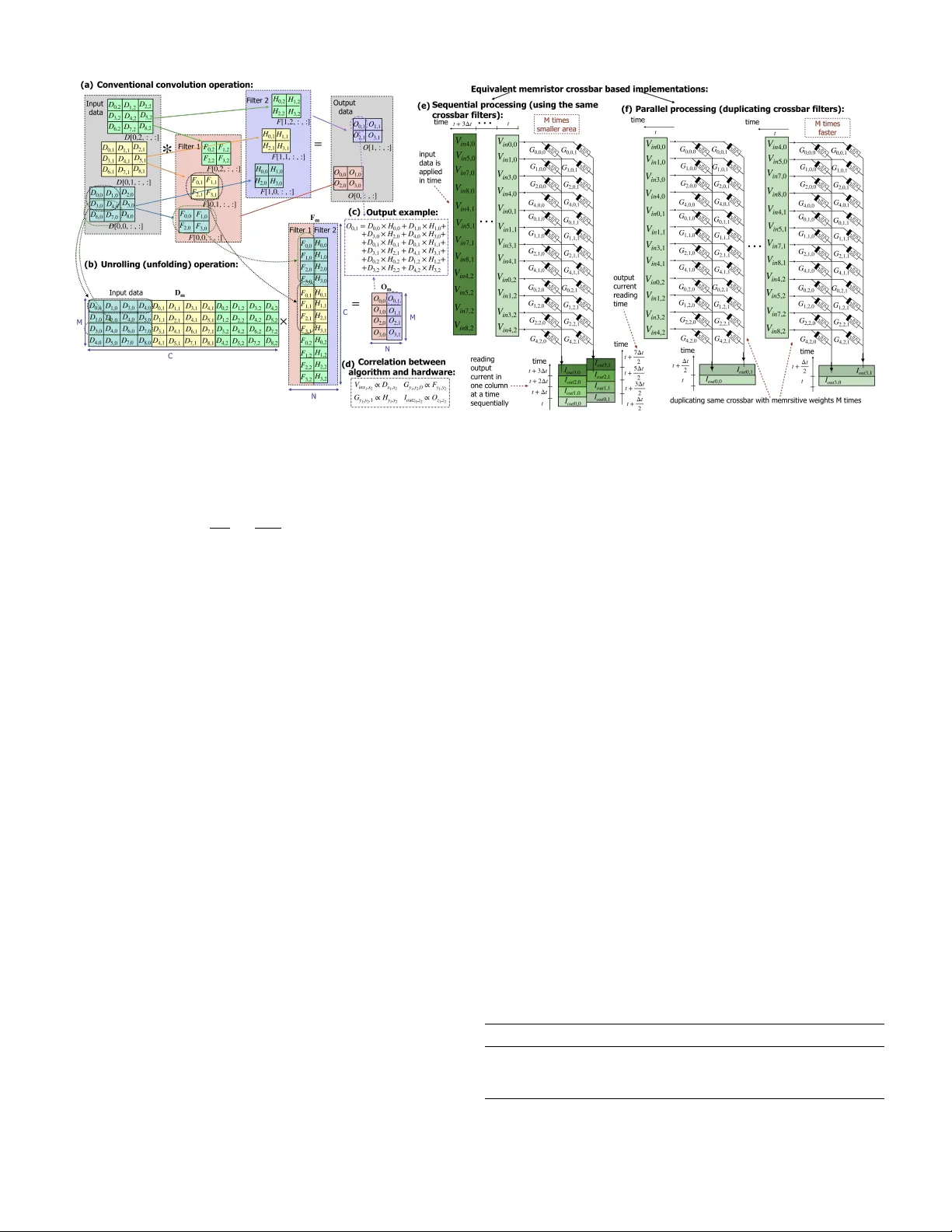

978-1-7281-0397-6/19/$31.00 2019 IEEE V ariation-a ware Binarized Memristi v e Networks Corey Lammie 1 , Olga Krestinskaya 2 , Alex James 3 , and Mostafa Rahimi Azghadi 1 1 College of Science and Engineering, James Cook Univ ersity , Queensland 4814, Australia Email: { corey .lammie, mostafa.rahimiazghadi } @jcu.edu.au 2 School of Engineering, Nazarbayev Univ ersity , Kazakhstan, Email: okrestinskaya@nu.edu.kz 3 AI division, Clootrack Pvt Ltd, Bangalore, India, Email: apj@ieee.org Abstract —The quantization of weights to binary states in Deep Neural Networks (DNNs) can replace resource-hungry multiply accumulate operations with simple accumulations. Such Binarized Neural Networks (BNNs) exhibit gr eatly reduced resour ce and power r equirements. In addition, memristors have been shown as pr omising synaptic weight elements in DNNs. In this paper , we propose and simulate novel Binarized Mem- ristive Con volutional Neural Netw ork (BMCNN) architectur es employing hybrid weight and parameter r epresentations. W e train the proposed architectur es offline and then map the trained parameters to our binarized memristive devices for inference. T o take into account the variations in memristive devices, and to study their effect on the performance, we introduce variations in R ON and R OFF . Moreo ver , we intr oduce means to mitigate the adverse effect of memristive variations in our proposed networks. Finally , we benchmark our BMCNNs and variation- aware BMCNNs using the MNIST dataset. I . I N T R O D U C T I O N R E S I S T I V E Random Access Memory (ReRAM) is a class of memristors, that when arranged in a crossbar config- uration, can be used to implement multiply and accumulate (MA C) or dot-product multiplications consuming low energy and area on chip. ReRAM devices, in such configurations, can be used to reduce the time complexity of 2D matrix-v ector multiplications, used extensi vely during forward and backward propagation cycles in DNNs, from O ( n 2 ) to O ( n ) , and in extreme cases to O (1) . Howe ver , current ReRAM crossbars face concerns of aging, non-idealities and endurance [1], that limit the accuracy of their conducti ve states, affecting the reliability and robustness of memristiv e DNNs. Memristiv e DNNs can either employ ReRAM crossbars with multiple distincti ve conducti ve states, to represent analog weight representations, or with two distinctiv e conductiv e states, to represent binary weight states. Given the aging and state variability issues of ReRAM, binary weight represen- tations, adopted in BNNs, are currently more practical for hardware realization. Binarized Neural Networks (BNNs) [2], which perform binary MA C computations during forward and backward propagations, hav e demonstrated comparable performance to con ventional DNNs, while significantly reducing resource and power utilizations [3]. On account of endurance concerns, ReRAM devices are ill-suited for implementing backward propagations, required during the training routine of BNNs where a large number of programming cycles are required. Howe ver , they are well-suited [4] for implementing forward propagations, required during inference, as only a limited number of programming cycles and two conductiv e states are required. In this paper , we propose and simulate novel BMCNNs and variation-aw are BMCNNs using a customized simulation framew ork for memristiv e crossbars, which integrates directly with the P y T or ch Machine Learning (ML) library . Our de- veloped networks employ of fline training routines adopting hybrid fixed-point and floating-point representations, and bina- rized memristiv e weights. Furthermore, to reduce the effect of memristor variability on the performance of our architectures after crossbar programming, we propose a tuning method. The specific contributions of this work are as follows: • W e propose and simulate novel BMCNNs and variation- aware BMCNNs, adopting hybrid fixed-point, floating- point, and binarized parameter representations, simulat- ing memristi ve devices, and benchmark them using the MNIST dataset. • W e in vestigate the performance degradation observed when the variance of R ON and R OFF are increased within memristiv e crossbars that compute matrix multiplication operations for con volutional layers during inference. • W e propose a tuning method to reduce the effects of memristor variability without reprogramming memristi ve devices. I I . P R E L I M I N A R I E S This section briefly revie ws and presents the algorithms and methods used in our de veloped architectures. A. Binary W eight Re gularization Binary weight regularization [2], constrains netw ork weights to binary states of { +1, -1 } during forward and backward propagations. The binarization operation transforms full-precision weights into binary values using the signum function, described in Eq. (1). w b = sign ( w ) = − 1 if w ≤ 0 +1 otherwise , (1) where w b is the binarized weight and w is the full-precision weight. During backward propagations, lar ge weights are clipped using t clip , described in Eq. (2), where c denotes the objectiv e function. Fig. 1. Depiction of (a) the reduction of a conv entional con volutional layer to (b) an unrolled matrix multiplication, (c) an output e xample, (d) the correlation between the algorithm and hardware, and corresponding hardware implementation of con volutional layers for (e) sequential and (f) parallel processing. ∂ c ∂ w = ∂ c ∂ w b 1 | w |≤ t clip (2) B. Con volutional Oper ation as a Matrix Multiplication Con volutional operations in BMCNNs can be performed using unrolling techniques, which reduce con ventional con- volution operations to matrix multiplications. Fig. 1 (a) and (b) depict the computation of the con volution of two filters ( f = 2) using conv entional and unrolling techniques. In Fig. 1 (b), both con volutional filters, F and H , are reshaped to form F m of size ( C × N ) . The input, D , is reshaped to form D m of size ( M × C ) . The con volution result, O , is determined by reshaping the result of F m × D m , O m , from ( M × N ) to ( f × o 1 × o 2 ) , where o 1 = o 2 = ([ i 2 − k 2 + 2 × P ] /S ) + 1 , which in this instance is 2. Fig. 1 (e) and (f) depict the mapping of the matrix multi- plication operation to memristiv e crossbars using sequential and parallel processing approaches. Elements of D m are represented using equiv alent voltages, V in . In the first column of the crossbar , memristors are programmed to G y 1 ,y 2 , 0 ∝ F y 1 ,y 2 . In the second column of the crossbar , memristors are programmed to G y 1 ,y 2 , 1 ∝ H y 1 ,y 2 (see Fig. 1 (d)). The total output current from the crossbar , I out , is lineary proportional to the con volution result, i.e. O ∝ K · I out , and can either be read sequentially column-by-column, or in parallel, to reduce the output current error due to leakage. Con volutional layers can be processed sequentially , using the same crossbar representativ e of F m to process M input rows of D m one by one, or in parallel with M crossbars, using the same memristi ve filter F m duplicated M times (see Fig. 1 (e)). Despite being much faster , this parallel approach increases the on-chip area by a factor of M . I I I . N E T W O R K A R C H I T E C T U R E The network architecture adopted by all of our memristiv e BNNs, originally proposed in [2], is depicted in Fig. 2 (b), and summarized in T able I. All conv olutional layers are followed by batch-normalization, max-pooling ( k 2 = k 3 = S = 2) , and har dtanh activ ation operations. Binary weight representations are used for all con volution layers. W e implemented four network architectures, each denoted by a name which includes two parts. The first part denotes the number representation method used for weights during the parameter-update stage, and for the last fully connected layer , while the second part denotes the binary weight representation. For instance, FR BNN describes an architecture that uses (FR) Full-Resolution 32-bit floating point numbers, and (BNN) binarized weights. The other architectures are as follows: 8-bit Fixed-point and Binary (FP-8 BNN), and 8-bit Fixed-point and Memristive Bi- nary (FP-8 MBNN, and TFP-8 MBNN). FP-8 MBNN is used to denote BMCNNs with fixed crossbar current amplification parameters, whereas TFP-8 MBNN is used to denote variation- aware BMCNNs with tuned crossbar current amplification parameters. Our proposed hardware implementation consists of an of- fline training module, which can be based on either a FPGA or co-processor, a programming and crossbar control circuit, T ABLE I M E MR I S T IV E B N N A R C HI T E C TU R E . Layer Binarized Memristive Con volutional Layer 1 . f = 16, k 2 = k 3 =2, P=2 3 3 Con volutional Layer 1 . f = 32, k 2 = k 3 =2, P=2 3 3 Fully Connected Layer 1 . N = 1568 1 No biases are used. Fig. 2. (a) The single-column memristor crossbar array architecture used in all of our FP-8 MBNN and TFP-8 MBNN networks. (b) Overall architecture of our binarized CNNs. and forward propagation circuits. These forward propagation circuits utilize several ReRAM crossbars, depicted in Fig. 2 (a), in which each memristors state is confined to [ R ON , R OFF ] , to represent [ − 1 , +1] , respecti vely [5]. The multiplication of D m × F m = O m , where D m contains full resolution elements, and F m contains binary elements ∈ [ − 1 , +1] (see Fig. 1(d)), can be performed as described in Eq. (3). O m [ j, k ] = K C − 1 X 0 V i,k ( G i,j − G c ) (3) Each element in a single row of matrix O m is equiv alent to the scaled output current from a single crossbar column. Each row of O m is computed by applying V in to each crossbar row in time. T o represent both positiv e and negati ve binary weights, we introduce a crossbar column with fixed resistors G c = [ G ON + G OFF ] / 2 , whose current, − I c , is duplicated to all the crossbar columns with memristors using current mirrors [5]. The output current from each column is computed as I out j,k = P C − 1 0 V i,k ( G i,j − G c ) , where V ini,k is an input to row i at time k and I out j,k is an output of the column j at time k , for i = 1 to C , j = 1 to N and k = 1 to M . If the utilized memristiv e devices are considered ideal, we can pick a current amplification parameter K=4000, which maps devices perfectly to their desired R ON , R OFF states. T o develop a framework for realistic memristors, we perform tuning for our proposed variation-a ware BMCNNs to alleviate performance degradation due to memristor variabilities. This tuning process uses Bayesian optimization to determine and set each crossbars adaptable current amplification parameter , K , for each layer ∈ [3000 : 5000] with 15 Bayesian trials. I V . I M P L E M E N T AT I O N R E S U L T S In order to in vestigate the performance of our networks the MNIST dataset was used. During backward propagations Eq. (2) was used to binarize weights, and after the parameter update procedure, their full-precision representations were clipped using t clip . T ABLE II T U NE D N E TW O R K H Y P ER PA RA M E TE R S . Optimizer = t clip η V alidation Set Accuracy FR-32 BNN AdaGrad 64 0.64 1.60E-03 97.51 Adam 128 0.91 3.78E-03 96.45 SGD m =0 119 0.56 3.79E-03 96.71 SGD m =0 . 8 120 0.87 1.00E-03 97.97 FP-8 BNN AdaGrad 64 1.00 4.53E-03 94.31 Adam 128 0.68 1.00E-03 98.35 SGD m =0 128 0.50 4.61E-03 95.97 SGD m =0 . 8 119 0.89 3.27E-03 96.64 A. Hyperparameter Optimization Prior to training networks using the MNIST training set hyperparameter optimization was performed by constructing modified training and validation sets, using 80% (48,000) and 20% (12,000) of training samples, respecti vely . W e performed Bayesian optimization using Ax for a batch size = ∈ [64 : 128] , t clip ∈ [0 . 5 : 1 . 0] , and a learning rate, η ∈ [1 e − 3 : 1 e − 2 ] , for AdaGrad, Adam, SGD m =0 (SGD with m=0), and SGD m =0 . 8 . The best validation set accuracy for each network during 20 training epochs for 15 Bayesian trials is presented in T able II. W e observed no notable drop in the validation set accuracy between our optimized FR-32 BNN and FP-8 BNN implementations. Hence, herein, all memristiv e BNNs adopt hybrid 8-bit fixed-point and memristiv e binary weight representations. B. Memristor Cr ossbar Pr ogramming and T uning After each crossbar was programmed using programming and control circuitry , all trained binarized network weights were programmed to the crossbars and then discarded, requir- ing no further storage. Tuning was performed for all variation- aware BMCNNs. W e note that after training, for our proposed Fig. 3. T est set classification accuracy for all FP-8 MBNN and TFP-8 MBNN networks. hardware implementation, the offline training module can be freely disconnected. C. P erformance In vestigation T o in vestigate the performance of our networks, we di- rectly compared the test set classification accuracy of all our BNNs. FP-8 MBNN networks adopted fixed crossbar current amplification parameters, K = 4000 for each layer, while TFP-8 MBNN networks adopted tunable crossbar current amplification parameters. Simulations of memristiv e BNNs were performed using a modified Generalized Boundary Con- dition Memristor T iO 2 model [6] with R ON = 1000Ω and R OFF = 2000Ω . All results are presented in T able III. D. P erformance De gradation Due to De vice V ariability T o determine the ef fect of memristor variability on the performance of each network, and how the tuning of K improv es their accuracy , resistive states for each memristor were sampled from a Gaussian distribution with a standard deviation of σ from the trained state of that memristor . As the variability of the R OFF state can be higher than R ON , we used a lar ger σ v alue when sampling R OFF , i.e. σ R ON = σ and σ R OFF = 2 σ . The performance of all memristiv e BNNs under such conditions are presented in Fig. 3. For all networks, a test classification accuracy of > 90% was obtained when σ ≤ 40 and the distributions of R ON and R OFF weights did not correlate. Ev en small correlations of R ON and R OFF states caused a substantial drop in accuracy . Fig. 3 demonstrates that the proposed tuning method can increase the test set classification accuracy , when σ ≥ 100 . V . C O N C L U S I O N W e proposed nov el memristiv e BNNs with tunable crossbar output current amplification factors. W e benchmarked the per - formance of our no vel architectures and compared them to the T ABLE III C O MPA R IS O N O F T E S T S E T C L A SS I FI C A T I O N A CC U R AC Y ( % ) F OR M E MR I S T IV E B N N A N D DI G I T A L I MP L E ME N TA T I O N O F B NN W I T H D I FFE R E N T W E IG H T R ES O L U TI O N S A N D O PT I M IZ ATI O N S . Optimizer AdaGrad Adam SGD m =0 SGD m =0 . 8 FP-8 BNN 93.68% 93.42% 93.99% 94.31% FR-32 BNN 93.93% 92.21% 94.17% 94.11% FP-8 MBNN 93.56% 93.43% 93.90% 94.50% TFP-8 MBNN 86.94% 93.33% 93.95% 94.41% digital implementations of Binarized CNNs. W e demonstrated that memristor v ariabilities can degrade performance, and proposed an alleviating tuning method. W e leave the devel- opment of full circuit lev el implementations of the proposed architecture and specific device and technology in vestigations to future works. R E F E R E N C E S [1] O. Krestinskaya, A. Irmanov a, and A. P . James, “Memristiv e non- idealities: Is there any practical implications for designing neural network chips?” in 2019 IEEE International Symposium on Cir cuits and Systems (ISCAS) , May 2019, pp. 1–5. [2] I. Hubara, M. Courbariaux, D. Soudry , R. El-Y aniv , and Y . Bengio, “Bi- narized neural networks, ” in Advances in neural information processing systems , 2016, pp. 4107–4115. [3] C. Lammie, W . Xiang, and M. R. Azghadi, “Accelerating Deterministic and Stochastic Binarized Neural Networks on FPGAs Using OpenCL, ” CoRR , vol. abs/1905.06105, 2019. [Online]. A vailable: http://arxiv .org/ abs/1905.06105 [4] O. Krestinskaya and A. P . James, “Binary weighted memristive analog deep neural network for near -sensor edge processing, ” in 2018 IEEE 18th International Conference on Nanotechnology (IEEE-NANO) , July 2018, pp. 1–4. [5] K. V an Pham, T . V an Nguyen, S. B. Tran, H. Nam, M. J. Lee, B. J. Choi, S. N. T ruong, and K. Min, “Memristor binarized neural networks, ” J. Semicond. T echnol. Sci , vol. 18, pp. 568–577, 2018. [6] V . Mladenov and S. Kirilov , “ A memristor model with a modified window function and activ ation thresholds, ” in 2018 IEEE International Symposium on Circuits and Systems (ISCAS) , May 2018, pp. 1–5.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment