Haptic communication optimises joint decisions and affords implicit confidence sharing

Group decisions can outperform the choices of the best individual group members. Previous research suggested that optimal group decisions require individuals to communicate explicitly (e.g., verbally) their confidence levels. Our study addresses the …

Authors: Giovanni Pezzulo, Lucas Roche, Ludovic Saint-Bauzel

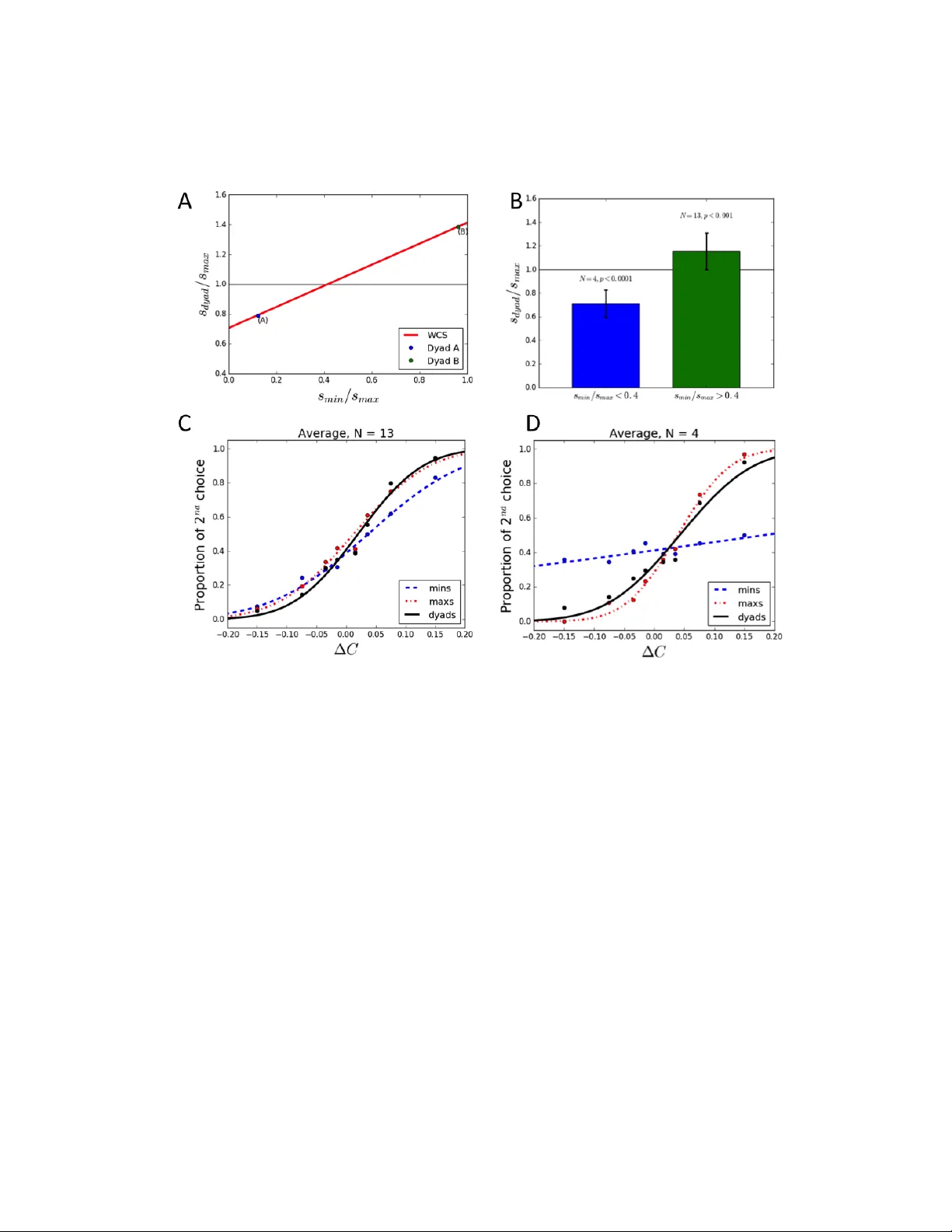

Giovanni Pezzulo 1,*, Lucas Roche 2 & Ludovic Saint-Bauzel 2

1 Institute of Cognitive Sciences and Technologies, National Research Council, Via S. Martino della Battaglia 44, 00185 Rome, Italy
2 Institut des Systemes Intelligents et de Robotique, Université Pierre et Marie Curie, 75005 Paris, France
* Corresponding author (giovanni.pezzulo@istc.cnr.it)

Group decisions can outperform the choices of the best individual group members. Previous research suggested that optimal group decisions require individuals to communicate explicitly (e.g., verbally) their confidence levels. Our study addresses the untested hypothesis that implicit communication using a sensorimotor channel – haptic coupling – may afford optimal group decisions, too. We report that haptically coupled dyads solve a perceptual discrimination task more accurately than their best individual members; and five times faster than dyads using explicit communication. Furthermore, our computational analyses indicate that the haptic channel affords implicit confidence sharing. We found that dyads take leadership over the choice and communicate their confidence in it by modulating both the timing and the force of their movements. Our findings may pave the way to negotiation technologies using fast sensorimotor communication to solve problems in groups.

We often make important decisions in groups, such as when we decide a travel destination with a group of friends or peer-review papers. Group decisions can sometimes outperform the choices of the best individual group members; for example, during logical problems [1], numerical [2] or perceptual tasks [3]. There is strong consensus that effective group decisions require group members to share their degree of confidence in their individual choices [3–8].
This allows weighting individual choices according to their relative confidence levels, following principles of optimal (Bayesian) multisensory integration [9–11]. It has been assumed so far that explicit communicative channels, such as verbal communication [3] or visual confidence reports [4], are required to share confidence levels – and more broadly, negotiate optimal decisions [12]. This idea is in keeping with a long tradition in communication theory and cognitive psychology that emphasises the importance of communicating intentions and metacognitive confidence levels explicitly (e.g., verbally) [13, 14].

However, during ecologically realistic interactions, dyads also use implicit, sensorimotor communication channels to improve coordination and achieve joint goals [15, 16]. For example, during joint grasping or joint pressing tasks, dyads modulate (e.g., amplify) the kinematics of their finger and arm movements to make the trajectory of their movements less variable and hence more predictable [17], to make their intentions (e.g., what object they intend to grasp and when) easier to infer by coactors [18–23], or to dynamically negotiate leader-follower roles [24–26]. Moreover, in the same tasks, participants tend to implicitly imitate each other's actions [27, 28] – a mechanism suggested to promote group affiliation [29]. During joint pulling tasks, haptically coupled dyads amplify their force to improve their coordination [30]. As a result, sensorimotor communication during jointly executed tasks can improve performance and the quality of execution [31–39]. This suggests the untested hypothesis that implicit communication based on sensorimotor (e.g., haptic) channels – which is faster and cheaper in cognitive load than explicit communication – may be sufficient to optimize group decisions and share confidence levels.

To test this hypothesis, we adapted a previous task designed to study optimal group decisions using verbal communication [3] – but we allowed participants to communicate only via a sensorimotor (haptic) channel. In our study, dyads (couples of individuals) make a series of individual decisions and then – if they disagree – group (consensus) decisions, about which of two sequentially presented stimuli contains an oddball target. During the group decisions, the dyads control coupled haptic devices with one degree-of-freedom, to jointly move the end effectors towards one of two (left or right) extreme positions, corresponding to their two choices.

We report three main findings. First, we show that haptic communication allows dyads to optimize group decisions and outperform the accuracy of individual participants (having similar sensitivity levels), akin to consensus reached using verbal communication [3] – but five times faster. Second, our computational analysis indicates that the haptic channel affords sharing confidence levels during the group choice – albeit in an implicit form. Indeed, the same computational (Bayesian) scheme explains group choices using both explicit (verbal) and implicit (haptic) communication, in terms of (weighted) confidence sharing. Third, our analyses indicate that dyads take leadership over the choice and communicate their confidence in it by manipulating both the timing and force of their movements – hence exploiting the haptic channel in full to optimise their joint performance.

Results

Couples of participants (dyads) are presented with a series of two stimuli, one of which contains an oddball target. Each participant sees the (identical) stimuli on a different computer screen (Figure 1). During the first, individual decision phase, each participant indicates which of the stimuli (first or second) contains the oddball target, by moving a cursor to the left or right response area.
Each participant controls his or her cursor independently, using a haptic device. In this phase, the devices of the two participants are not coupled and participants cannot communicate. If the individual decisions are identical, the trial ends. If they differ, a second consensus decision phase begins, in which participants make the same decision as above, but jointly control the cursor trajectory (i.e., the cursor trajectory is an average of the individual trajectories). Unlike in the first phase, participants see the joint (not the individual) cursor trajectory on their screens. Furthermore, the participants' haptic devices are coupled and permit sensing the amount of force the co-actor applies to his or her device.

Haptic communication optimizes group decisions like explicit communication – but is much faster

We fitted the response data using the weighted confidence sharing (WCS) model, which successfully explained group decisions using verbal communication [3]. The WCS model assumes that group decisions weight individual choices according to their relative confidence levels. It makes the theoretical prediction that when the ratio of sensitivities of dyad members is greater than 0.4 (i.e., participants have similar sensitivities), then the dyad will outperform each individual (Figure 2A). The opposite happens if the ratio is lower than 0.4 (i.e., participants have different sensitivities). This prediction was confirmed, with dyads whose members had similar sensitivities ($s_{\min}/s_{\max} > 0.4$) performing significantly better than their best members (t(13) = 3.94, p < 0.001) and dyads whose members had different sensitivities ($s_{\min}/s_{\max} < 0.4$) performing significantly worse than their best members (t(4) = -9.89, p < 0.0001) (Figure 2B-D). Furthermore, the slopes of the dyads' psychometric functions and those predicted by the WCS were not significantly different (t(17) = 0.51, p = 0.62).
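The WCS prediction discussed above can be checked numerically. The following is a minimal sketch, not the authors' analysis code: it assumes the relations given in the Methods (slope $s = 1/\sqrt{2\pi\sigma^2}$, dyad slope $(s_1 + s_2)/\sqrt{2}$), and all function names are hypothetical. Under these relations the dyad outperforms its best member exactly when $s_{\min}/s_{\max} > \sqrt{2} - 1 \approx 0.41$, which is the "0.4" threshold quoted in the text.

```python
import math

SQRT2 = math.sqrt(2.0)

# Ratio s_min / s_max above which the WCS dyad beats its best member.
# Obtained by setting (1 + r) / sqrt(2) = 1 and solving for r.
WCS_THRESHOLD = SQRT2 - 1.0


def slope_from_sigma(sigma):
    """Maximum slope of a cumulative Gaussian with standard deviation sigma."""
    return 1.0 / (math.sqrt(2.0 * math.pi) * sigma)


def wcs_dyad_sigma(sigma1, sigma2):
    """Standard deviation of the dyad's psychometric function under WCS."""
    return SQRT2 * sigma1 * sigma2 / (sigma1 + sigma2)


def wcs_dyad_slope(s1, s2):
    """Slope (sensitivity) of the dyad predicted by WCS from member slopes."""
    return (s1 + s2) / SQRT2


def wcs_gain(s_min, s_max):
    """Predicted ratio s_dyad / s_max; values above 1 mean a group benefit.

    The gain is linear in s_min / s_max, with slope and intercept both
    equal to 1 / sqrt(2) (about 0.71).
    """
    return (1.0 + s_min / s_max) / SQRT2


if __name__ == "__main__":
    # Illustrative (made-up) member slopes, not data from the study.
    for ratio in (0.2, WCS_THRESHOLD, 0.8):
        print(f"s_min/s_max = {ratio:.3f} -> s_dyad/s_max = {wcs_gain(ratio, 1.0):.3f}")
```

Expressing the prediction as a gain relative to the best member makes it directly comparable with the regression of dyad improvement on relative sensitivity reported in the Results.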
As predicted by the WCS, the sensitivity of the dyads whose members had similar (dissimilar) sensitivity levels was significantly higher (lower) than the relative sensitivity of dyad members (Figure 3). We found a significant linear correlation between the improvement of the dyads and the relative sensitivities of their members ($R^2 = 0.62$, F(1, 17) = 24.8, p = 0.0002), with slope (0.64 ± 0.13) and intercept (0.66 ± 0.09) close to those predicted by the WCS model (0.71 for slope and intercept).

The results of our study align very well with those of Bahrami et al. [3], who used explicit verbal communication – and the same WCS model applies equally well to both studies. However, group decision time was significantly faster in our study (N=850, mean=2856ms, std=2022ms) than in Bahrami et al. [3] (N=5 groups, mean=13860ms, std=3720ms; Dan Bang, personal communication).

Dyad members implicitly communicate and share their confidence by manipulating both the speed and the force of their movements

The WCS model requires individual confidence levels to be shared (because they need to be integrated). This raises the question of how, in our study, participants (implicitly) communicate and negotiate through the haptic channel. Previous studies showed that dyadic sensorimotor tasks, such as tapping in synchrony or lifting objects together, promote the emergence of Leader and Follower roles – with the Leader determining (for example) the pace of joint tapping [15]. Furthermore, Leaders often modify their action kinematics in communicative ways, to signal their roles and convey relevant task information to Followers [18, 20, 21]. In keeping with this body of evidence, we asked whether participants exploit their kinematic and kinetic movement parameters (e.g., the speed and force of their movements) to become "Leaders" of the group decision and to implicitly communicate their leadership and confidence.

We considered various kinematic and kinetic movement parameters as predictors of group choices. First, we considered individuals' initial decision time for each trial as a predictor of leadership in the same trial. The correlation between being the individual who moves first and being the Leader (i.e., determining the group choice) is 66.5% overall (67.7% and 63% for dyads having similar and different sensitivities, respectively).

As a further proxy for initiative and early commitment to the group decision, we designed a First Crossing (1C) predictor: the side (left or right) at which any of the participants' handles first exits a "small zone" centred on the start position [40]. We parametrised the "small zone" around the start position $X_0$ as $X_0 \pm X_{\mathrm{thresh}}$ using different thresholds (see Table 1). We found that the side selected by the (first) participant who moves less than 10% of the total distance to the target (i.e., threshold $X_{\mathrm{thresh}} = 0.05$) already predicts 88.5% of the group choices.

Finally, we considered two kinetic parameters available through the haptic device – peak force (i.e., the highest force applied by a subject on the interface) and mechanical work (i.e., $W_i = \frac{1}{N} \sum_{k=1}^{n} F_i \, (X_{i,k} - X_{i,k-1})$, $i \in \{0, 1\}$, where $F_i$ is the force applied on interface $i$ and $X_{i,k}$ is the position of interface $i$ at time step $k$) – and found that both are good predictors of group choices (71.7% and 69% accuracy, respectively). Leaders were more active than Followers during the interaction, with both significantly higher peak forces applied (Leader: 0.75N vs Follower: 0.43N; t(676)=9.71, p < 0.0001) and significantly higher mechanical work provided (Leader: 0.30J vs Follower: -0.08J; t(676)=15.7, p < 0.0001). Rather, Followers tended to apply negative mechanical work, effectively exerting some (small) resistance to the Leader's motion – see [41, 42] for similar results on dyadic co-manipulation.
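To make the two predictors above concrete, here is a minimal sketch of how the First Crossing (1C) side and the mechanical work could be computed from sampled handle data. It is an illustration under stated assumptions rather than the authors' implementation: positions are assumed to be normalised so that the start is 0, left is -1 and right is +1; the force is assumed to be sampled at every time step; N is taken to be the number of time steps; and the function names and the tie-breaking rule (participant "a" is checked first) are hypothetical.

```python
def first_crossing(positions_a, positions_b, x_thresh=0.05):
    """Return (side, who) for the first handle leaving [-x_thresh, x_thresh].

    positions_a / positions_b: normalised handle positions of the two
    participants, sampled at the same time steps (0 = start, -1 = left,
    +1 = right). Returns None if neither handle leaves the start zone.
    Assumption: if both handles exit at the same sample, "a" is reported.
    """
    for xa, xb in zip(positions_a, positions_b):
        for who, x in (("a", xa), ("b", xb)):
            if abs(x) > x_thresh:
                return ("left" if x < 0 else "right", who)
    return None


def mechanical_work(forces, positions):
    """Mean work per time step: (1/N) * sum_k F_k * (X_k - X_{k-1}).

    forces[k] is the force applied at step k and positions[k] the handle
    position at step k. A negative result means the participant mostly
    pushed against the direction of motion (resisting the partner).
    """
    n_steps = len(positions) - 1
    if n_steps < 1 or len(forces) != len(positions):
        raise ValueError("need at least two samples and equal-length inputs")
    total = sum(
        forces[k] * (positions[k] - positions[k - 1])
        for k in range(1, n_steps + 1)
    )
    return total / n_steps


if __name__ == "__main__":
    # Made-up traces for illustration only.
    pos_a = [0.0, 0.02, 0.06, 0.20]
    pos_b = [0.0, -0.01, -0.03, -0.04]
    print(first_crossing(pos_a, pos_b))
    print(mechanical_work([0.0, 1.0, 1.0, 1.0], [0.0, 0.1, 0.2, 0.3]))
```

In this sketch a Follower who resists the motion shows up as negative work, which matches the sign convention of the Leader and Follower values reported above.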

Leaders impose their pace to the group decision movements

Previous studies of sensorimotor communication reported that Followers tend to align to Leaders' movements during joint tasks (see [15] for a review). In keeping with this, we asked whether Leaders imposed their pace to the group decision movements (and Followers adapted to it). We considered the ratio between the mean velocity of Leaders (VeloL) and Followers (VeloF) during the first part of the dyad movement (i.e., before $X_{\mathrm{thresh}}$ is crossed for the first time), which is arguably more important to reach consensus; and the mean velocity of the dyad (VeloD) during the second part of the dyad movement (i.e., after $X_{\mathrm{thresh}}$ is crossed for the first time), which is necessary to complete the trial. We found VeloL/VeloD (1.0788) to be significantly smaller than VeloF/VeloD (1.1115) (N = 1866, p-value: 0.00348, t-value: -2.92344, d-value: -0.05613). The fact that the former ratio (VeloL/VeloD) is closer to 1 than the latter ratio (VeloF/VeloD) indicates that the Leader is more able to impose his pace on the group decision movements.

Control analyses

To rule out the possibility that group decisions were made without negotiation, by simply following "who moves first (or pulls harder)", we performed two control analyses, which compare individual and group decisions. We found group decision time (N=850, mean=2856ms, std=2022ms) to be significantly longer than the individual decision time of group members (N=4352, mean=881ms, std=788ms): t(850, 4352)=-23.84, p < 0.0001. This result holds true both for dyads of similar (t(626, 3328) = -23.42, p < 0.0001) and different (t(224, 1024) = -10.15, p < 0.0001) sensitivities.
Furthermore, we found group initiation time, as indexed by the time the group reaches the 1C parameter (i.e., the time at which a handle first exits the starting zone $X_0 \pm X_{\mathrm{thresh}}$), to be significantly slower than the initiation time of group members, but only for $X_{\mathrm{thresh}} = 0.05$ and for dyads having similar sensitivities (t(626, 3328) = 4.51, p < 0.0001). Rather, we found group initiation time to be significantly faster than the initiation time of group members (t(224, 1024) = -4.78, p < 0.0001) for dyads having different sensitivities. These two control analyses reassuringly suggest that group decisions involve time-consuming negotiation; and the slower initiation time of groups whose members have similar sensitivities may be conducive to better choices.

Discussion

Group decision making is an active area of research across behavioral sciences, psychology and neuroscience [43], ecology [44] and collective (or swarm) robotics [45]; but its dynamics and optimality principles are still incompletely known.

We show that sensorimotor (haptic) communication can optimise group decisions. Haptically coupled dyads perform significantly better than the best individual of the dyad, when the two individual members have similar visual sensitivities; but the opposite is true when the dyad members have different sensitivity levels. This group advantage was previously shown in tasks using explicit, verbal communication [3]. Here we demonstrate that implicit (haptic) channels can achieve the same results, at least in the joint decision investigated here.

In general, verbal communication can be much richer than implicit communication. However, in the context of this task, the specific information to be conveyed concerns (one's belief about) the correct target. Both verbal and haptic channels can convey this information; but it is plausible that haptic information can convey it more precisely, i.e., with a better information/noise ratio.
This speaks to the fact that explicit and implicit communication channels may have complementary benefits. Tasks that require sophisticated debate and diplomacy and have open-ended response options, such as legal proceedings or diplomatic negotiations, may be more difficult to address using implicit compared to explicit, verbal communication. On the other hand, for simpler tasks having clear response options that can be mapped to spatial locations, implicit communication may afford a faster but equally accurate consensus compared to explicit communication.

Indeed, in our study, consensus was reached (in most cases) in less than 3 seconds, whereas with verbal communication it required about 14 seconds [3]. Note that neither in our study nor in those using explicit communication was there any time pressure. Clearly, the comparison may seem unfair, as (compared to haptics) language is a much richer communication channel; and hence consensus and conventions may take time to arise. What is most interesting in this comparison is that implicit channels afford very fast group consensus – which is relevant when it is necessary to trade off richness and speed of communication, such as during situated group decisions and team sports.

Our results can be explained within an optimal multisensory integration framework [9–11], which combines multiple sources of evidence and weights them in proportion to their confidence levels. Importantly, the same WCS model that incorporates the above Bayesian assumptions explains group decisions that use both explicit [3] and implicit communication (this study), suggesting that the differences between the two may be less prominent than currently believed – at least for the choices considered here. The computational model further indicates confidence sharing as a key ingredient to optimize group decisions. This raises the question of how exactly dyads communicate their confidence levels through the haptic channel.

Our results show that co-actors share their confidence levels and optimise group decisions by synergistically manipulating the movement parameters that are available via the haptic interface, such as speed and force. We found initiative (as indexed by 1C) to be the most effective group choice predictor; indeed, the participant who takes the initiative and commits early to a decision often acquires leadership and determines the final choice. However, the fact that higher levels of activity and force afford accurate predictions of group choice indicates that both kinematic and kinetic parameters may be used in combination. Supposedly, an effective sensorimotor communication strategy consists in taking initiative and then applying some force to maintain – and communicate – commitment to the choice and to impose a pace to the decision.

The success of such a sensorimotor communication strategy may be due to the fact that both speed and force of movement reliably signal confidence in addition to advancing the decision. Indeed, given that response time and confidence are inversely correlated [46, 47], making an early commitment is a reliable signal that one is confident about the decision. Exerting force can reliably signal one's confidence, too. The parallel between force and confidence is made apparent by the recent finding that participants showing (sub-threshold) motor activation in their response effectors have significantly higher confidence (but not necessarily accuracy) in their choices [48]. These findings suggest that haptic interfaces provide efficient channels, such as speed and force, for implicit confidence sharing, which is key to optimal group decisions.

Our results have deep theoretical and technological implications.
From a theoretical perspective, our findings run against the hypothesis that explicit communication is necessary to achieve optimal decisions or to communicate confidence; suggesting that classical theories of communication should be expanded to consider more fully sensorimotor exchanges [13, 14, 49–54]. From a technological perspective, our results can pave the way to the development of novel negotiation and decision support tools that exploit fast sensorimotor channels to facilitate and improve group decisions.

While we focused on a visuo-haptic interface, similar results may be obtained using other (e.g., auditory) channels, to the extent that they afford rich sensorimotor communication. Our study reveals that crucial information for accurate group decisions – one's own confidence about the decision – can be conveyed by modulating speed and force of movement. It remains to be studied whether using different sensorimotor channels or interfaces offers the same or different ways to communicate confidence and other important information. Another challenge for the future consists in expanding the scope of sensorimotor technologies, to afford more complex negotiation dynamics that may be required to reach consensus beyond simple tasks.

Methods

Participants

Thirty-six participants (11 women) were recruited for this experiment amongst interns, master's degree students, PhD students, post-docs and engineers of the Sorbonne Université and paired in dyads (8 M-M, 9 M-F, 1 F-F). Dyads were formed by pairing participants who were not friends or collaborators, to avoid possible influences of previous interactions on task performance and leadership. Participants were free of any known psychiatric or neurological symptoms, non-corrected visual or auditory deficits and recent use of any substance that could impede concentration. They were all right handed. Their mean age was 26.3 (SD = 5.25).
This research was reviewed and approved by the High Council for Research and Higher Education (HCERES) institutional ethics committee. The research was performed in accordance with the relevant guidelines and regulations. Informed consent was obtained from each participant. One dyad had to be excluded because one of the members systematically defaulted to her partner's choice in the second phase. The analysis was thus conducted on 34 participants.

Procedure

Dyad members are in the same testing room, seated side by side, and each has a computer screen. An opaque curtain is positioned between them in order to prevent them from seeing each other. Participants are instructed to refrain from trying to communicate orally with their partners for the duration of the experiment. Headphones playing pink noise are used to prevent the subjects from hearing each other or potential audio clues in the testing room. Visual feedback is provided to the participants through individual displays. Each subject controls a custom, one degree-of-freedom haptic interface, which uses two MAXON DC Motors (RE65-250W), connected to an 80mm handle for actuation and a magnetic encoder (CUI INC AMT11) [55]. The full design of the haptic interface is open source, available on GitHub at: github.com/LudovicSaintBauzel/teleop-controller-bbb-xeno.git.

Each experiment includes 8 blocks of 16 trials each. Subjects switch their positions after half the trials. Each trial proceeds as follows. First, the haptic interfaces are automatically centred and a warning message is displayed (1000ms). Second, a black central fixation cross is displayed on each subject's screen for a random duration (500-1000ms).

The third phase is the individual decision phase. Two visual stimuli (6 Gabor patches displayed in a circle) are sequentially presented to both subjects, for 85ms. A 1000ms pause (grey screen, black fixation cross) is observed between the two stimuli.
In either the first or second stimulus, one of the 6 patches has a slightly higher contrast (oddball target). The task objective is to determine whether the oddball target is in the first or second stimulus. Note that the oddball targets can have 4 different levels of contrast compared to the baseline. The oddball target timing (first or second wave), position (one of the six patches) and contrast (one of the four levels, 11.5%, 13.5%, 17% and 25%; baseline is 10%) are randomized for each trial. The oddball timing and contrast levels were used as independent variables and the number of occurrences of each of their combinations was balanced over each block (each of the 8 combinations appears twice per block, for a total of 16 trials per block).

After the presentation of the stimuli, both subjects must indicate their individual answer, by moving the handle of the haptic interface towards the left (to select the first stimulus) or right (to select the second stimulus). In this phase, the positions of the haptic interfaces are independent, and each subject answers individually. After both subjects have answered, both answers are displayed for each subject. If they agree, feedback about the correct answer is given with both a color code (green for a correct answer, red for an incorrect one) and a symbol (green check mark for a correct answer, red cross for an incorrect one); and the trial ends. If they disagree, only their individual choices are provided and participants enter the group decision phase.

The fourth phase is the group decision phase. In this phase, haptic feedback is added to the interfaces: the teleoperation controller constrains the motions of the interfaces so that they are identical at all times. In this configuration, the interfaces' positions are the same and the subjects have equal control over them. Furthermore, they can feel the force applied to the interfaces by each other.
The subjects must jointly move the interfaces in order to indicate their final choice (left for first stimulus, right for second). The interfaces must remain at a stop for one second in order to validate the common answer. During the group decision, participants can sense the force applied by their co-actors via the haptic interface. To avoid conflicts being resolved by brute force, participants are instructed to keep their force below the maximum.

Finally, after the group decision, feedback about the individual choices and the common decision is given to the subjects (CORRECT/WRONG). Feedback is color-coded: yellow for subject 1 on the left, blue for subject 2 on the right. Note that each feedback phase lasts a maximum of 10 seconds; but after 3 seconds, participants can skip it by placing their fingers on the interface. At the end of the feedback, the graphical interface goes back to step 1, and the trials continue until the end of the experimental block.

Psychometric functions

Individual and dyadic psychometric functions are constructed by plotting the proportion of trials in which the oddball target was seen in the second wave of stimuli against the contrast difference at the oddball location (contrast in the second wave minus contrast in the first); see also [3]. Examples of psychometric functions are shown in the main article. The dots correspond to the average proportion of 2nd stimuli chosen as answer, for each contrast difference (±1.5%, ±3.5%, ±7%, ±15%). Lines are the fitted cumulative Gaussian functions for each individual and dyad.

The psychometric curves are fit to a cumulative Gaussian function whose parameters are bias ($b$) and variance ($\sigma^2$). Estimation of these parameters is done through curve fitting regression (Python SciPy curve_fit() function). A participant with bias $b$ and variance $\sigma^2$ would have a psychometric curve given by:

$$P(\Delta C) = H\left(\frac{\Delta C + b}{\sigma}\right), \tag{1}$$

with $\Delta C$ the contrast difference between second and first stimuli, and $H(z)$ the cumulative normal function.

The psychometric curve, $P(\Delta C)$, corresponds to the probability of reporting that the second stimulus had the higher contrast. Thus, a positive bias indicates an increased probability of saying that the second stimulus had higher contrast (and thus corresponds to a negative mean for the underlying Gaussian distribution). Given the above definitions for $P(\Delta C)$, the variance is related to the maximum slope of the psychometric curve, denoted $s$, via:

$$s = \frac{1}{\sqrt{2\pi\sigma^2}}. \tag{2}$$

A large slope indicates small variance and thus highly sensitive performance.

Weighted Confidence Sharing (WCS) Model

We used the Weighted Confidence Sharing (WCS) model [3] to fit our behavioral data. The WCS assumes that participants share their confidence and make a Bayes-optimal decision based on the ratio of the individual $\Delta C/\sigma$ values. This permits inferring the dyad psychometric function from the psychometric functions of the dyad members, as follows:

$$P^{WCS}_{\mathrm{dyad}}(\Delta C) = H\left(\frac{\Delta C + b^{WCS}_{\mathrm{dyad}}}{\sigma^{WCS}_{\mathrm{dyad}}}\right), \tag{3}$$

with

$$b^{WCS}_{\mathrm{dyad}} = \frac{\sigma_2 b_1 + \sigma_1 b_2}{\sigma_1 + \sigma_2} \tag{4}$$

and

$$\sigma^{WCS}_{\mathrm{dyad}} = \frac{\sqrt{2}\,\sigma_1 \sigma_2}{\sigma_1 + \sigma_2}. \tag{5}$$

Consequently, the slope of the dyad's psychometric function can be calculated as:

$$s^{WCS}_{\mathrm{dyad}} = \frac{s_1 + s_2}{\sqrt{2}}. \tag{6}$$

The WCS model predicts that the performance (sensitivity) of the dyad is superior to that of the best member if the sensitivities of the participants are similar. This can be appreciated by noting that if $s_{\max}$ is the slope of the psychometric function of the best performing member of the dyad, and $s_{\min}$ the slope of his/her partner's psychometric function, we have:

$$s_{\mathrm{dyad}} = \frac{s_{\min} + s_{\max}}{\sqrt{2}} = \frac{1 + \frac{s_{\min}}{s_{\max}}}{\sqrt{2}}\, s_{\max}. \tag{7}$$

If we compare the performances of the dyad and of the best performing member we have:

$$\frac{s_{\mathrm{dyad}}}{s_{\max}} = \frac{1 + \frac{s_{\min}}{s_{\max}}}{\sqrt{2}} = \frac{\sqrt{2}}{2} + \frac{\sqrt{2}}{2}\,\frac{s_{\min}}{s_{\max}}. \tag{8}$$

The WCS model makes the theoretical prediction that if the ratio of sensitivities of the dyad's members is greater than 0.4 (i.e., participants have similar sensitivities), then the dyad will outperform each individual. The opposite happens if the ratio is lower than 0.4 (i.e., participants have different sensitivities). More formally, setting the right-hand side of Equation 8 greater than 1 gives the following property: $s_{\mathrm{dyad}} > s_{\max}$ if $s_{\min}/s_{\max} > \left(1 - \frac{\sqrt{2}}{2}\right) / \frac{\sqrt{2}}{2} = \sqrt{2} - 1 \simeq 0.4$.

Our findings reported in the main article confirm the theoretical predictions of the WCS model and allow us to rule out alternative models considered in [3]: the Coin Flip (CF) model, which considers that conflicts are decided by chance; the Behaviour and Feedback (BF) model, which considers that participants learn who is the most accurate group member and rely on his or her choice during group decisions; and the Direct Signal Sharing (DSS) model, which considers that dyads communicate the mean and standard deviation of each member's sensory response. None of these alternative models would predict the pattern of responses that we observe in our data.

Note that, as reported in the main article, we found the slope of the linear regression fit of participants' performance to be slightly lower than that predicted by the WCS model (although the difference does not reach significance). This finding can be explained with a small modification of the WCS model: by adding a small bias towards the most skilled member of the dyad. Indeed, according to the WCS model, the dyad sensitivity improvement can be calculated as $\frac{s_{\mathrm{dyad}}}{s_{\max}} = \frac{1}{\sqrt{2}}\left(1 + \frac{s_{\min}}{s_{\max}}\right) = \frac{\sqrt{2}}{2} + \frac{\sqrt{2}}{2}\,\frac{s_{\min}}{s_{\max}}$ (Equation 8). If the dyad slightly over-weights the decision of the most skilled member, the resulting sensitivity will be shifted towards $s_{\max}$:

$$\frac{s_{\mathrm{dyad}}}{s_{\max}} = \frac{\sqrt{2}}{2} + \frac{\sqrt{2}}{2}\,\frac{\alpha\, s_{\min}}{\beta\, s_{\max}}, \tag{9}$$

with $\beta > \alpha > 0$ the relative weights.
This model would lead to a similar intercept than the WCS model, with a lower slope, which would e xplain the pattern of results we obtain. First Crossing (1C) parameter The 1C parameter is defined as the side on which the indi vidual position of one of the two subjects exits the interv al [ − X thresh ; X thresh ] . The position data from the group decision phase are extracted and normalised so that the middle starting position corresponds to X pos = 0 , and the left and right sides corresponds to X pos = − 1 and X pos = 1 respectively . The v alue of X thresh for the 1C calculations is then chosen as a percentage of X pos . Mechanical work parameter The mechanical work of a subject is calculated as W i = 1 N n P k =1 F i ( X i,k − X i,k − 1 ) , i= { 0,1 } , where F i is the force applied on the interface i and X i,k is the position of the in- terface i at time step k . 1. Moshman, D. & Geil, M. Collaborati ve reasoning: Evidence for collectiv e rational- ity . Thinking & Reasoning 4 , 231–248 (1998). URL https://doi.org/10.1080/ 135467898394148 . https://doi.org/10.1080/135467898394148 . 2. Bang, D. & Frith, C. D. Making better decisions in groups. Royal Society Open Sci- ence 4 (2017). URL http://rsos.royalsocietypublishing.org/content/ 19 4/8/170193 . http://rsos.royalsocietypublishing.org/content/4/8/ 170193.full.pdf . 3. Bahrami, B. et al. Optimally interacting minds. Science 329 , 1081–1085 (2010). URL http: //science.sciencemag.org/content/329/5995/1081 . http://science. sciencemag.org/content/329/5995/1081.full.pdf . 4. Bahrami, B. et al. What failure in collecti ve decision-making tells us about metacognition. Phil. T rans. R. Soc. B 367 , 1350–1365 (2012). 5. Fusaroli, R. et al. Coming to terms: quantifying the benefits of linguistic coordination. Psy- cholo gical science 23 , 931–939 (2012). 6. Haller , S. P ., Bang, D., Bahrami, B. & Lau, J. Y . Group decision-making is optimal in adoles- cence. Scientific r eports 8 , 15565 (2018). 7. K oriat, A. 
When are two heads better than one and why? Science 336, 360–362 (2012). URL http://science.sciencemag.org/content/336/6079/360.
8. Sorkin, R. D., Hays, C. J. & West, R. Signal-detection analysis of group decision making. Psychological Review 108, 183 (2001).
9. Ernst, M. O. & Banks, M. S. Humans integrate visual and haptic information in a statistically optimal fashion. Nature 415 (2002).
10. Kording, K. & Wolpert, D. Bayesian decision theory in sensorimotor control. Trends Cogn. Sci. 10, 319–326 (2006).
11. Doya, K., Ishii, S., Pouget, A. & Rao, R. P. N. (eds.) Bayesian Brain: Probabilistic Approaches to Neural Coding (The MIT Press, 2007), 1 edn.
12. Navajas, J., Niella, T., Garbulsky, G., Bahrami, B. & Sigman, M. Aggregated knowledge from a small number of debates outperforms the wisdom of large crowds. Nature Human Behaviour 2, 126 (2018).
13. Metcalfe, J. Metacognitive processes. In Memory, 381–407 (Elsevier, 1996).
14. Sperber, D. & Wilson, D. Relevance: Communication and cognition (Wiley-Blackwell, 1995).
15. Pezzulo, G. et al. The body talks: Sensorimotor communication and its brain and kinematic signatures. Physics of Life Reviews (2018).
16. Sebanz, N., Bekkering, H. & Knoblich, G. Joint action: bodies and minds moving together. Trends in Cognitive Sciences 10(2) (2006).
17. Vesper, C., van der Wel, R. P. R. D., Knoblich, G. & Sebanz, N. Making oneself predictable: reduced temporal variability facilitates joint action coordination. Exp Brain Res 211, 517–530 (2011). URL http://dx.doi.org/10.1007/s00221-011-2706-z.
18. Candidi, M., Curioni, A., Donnarumma, F., Sacheli, L. M. & Pezzulo, G. Interactional leader-follower sensorimotor communication strategies during repetitive joint actions. Journal of the Royal Society Interface 12, 20150644 (2015).
19. Curioni, A., Minio-Paluello, I., Sacheli, L.
M., Candidi, M. & Aglioti, S. M. Autistic traits affect interpersonal motor coordination by modulating strategic use of role-based behavior. Molecular Autism 8, 1–13 (2017).
20. Pezzulo, G., Donnarumma, F. & Dindo, H. Human sensorimotor communication: A theory of signaling in online social interactions. PLOS ONE 8, 1–11 (2013). URL https://doi.org/10.1371/journal.pone.0079876.
21. Sacheli, L. M., Tidoni, E., Pavone, E., Aglioti, S. & Candidi, M. Kinematics fingerprints of leader and follower role-taking during cooperative joint actions. Experimental Brain Research 226 (2013).
22. Sartori, L., Becchio, C., Bara, B. G. & Castiello, U. Does the intention to communicate affect action kinematics? Conscious Cogn 18, 766–772 (2009). URL http://dx.doi.org/10.1016/j.concog.2009.06.004.
23. Vesper, C. & Richardson, M. J. Strategic communication and behavioral coupling in asymmetric joint action. Exp Brain Res (2014). URL http://dx.doi.org/10.1007/s00221-014-3982-1.
24. Konvalinka, I., Vuust, P., Roepstorff, A. & Frith, C. D. Follow you, follow me: continuous mutual prediction and adaptation in joint tapping. Q J Exp Psychol (Colchester) 63, 2220–2230 (2010). URL http://dx.doi.org/10.1080/17470218.2010.497843.
25. Noy, L., Dekel, E. & Alon, U. The mirror game as a paradigm for studying the dynamics of two people improvising motion together. Proceedings of the National Academy of Sciences 108, 20947–20952 (2011). URL http://www.pnas.org/content/108/52/20947.
26. Skewes, J. C., Skewes, L., Michael, J. & Konvalinka, I. Synchronised and complementary coordination mechanisms in an asymmetric joint aiming task. Experimental Brain Research 233, 551–565 (2015).
27. Era, V., Aglioti, S. M., Mancusi, C. & Candidi, M. Visuo-motor interference with a virtual partner is equally present in cooperative and competitive interactions. Psychological Research 84, 810–822 (2020).
28.
Gandolfo, M., Era, V., Tieri, G., Sacheli, L. M. & Candidi, M. Interactor's body shape does not affect visuo-motor interference effects during motor coordination. Acta Psychologica 196, 42–50 (2019).
29. Salazar Kämpf, M. et al. Disentangling the sources of mimicry: Social relations analyses of the link between mimicry and liking. Psychological Science 29, 131–138 (2018).
30. van der Wel, R., Knoblich, G. & Sebanz, N. Let the force be with us: Dyads exploit haptic coupling for coordination. Journal of Experimental Psychology: Human Perception and Performance (2010).
31. D'Ausilio, A. et al. Leadership in orchestra emerges from the causal relationships of movement kinematics. PLoS ONE 7, e35757 (2012). URL http://dx.doi.org/10.1371/journal.pone.0035757.
32. Ganesh, G., Takagi, A., Yoshioka, T., Kawato, M. & Burdet, E. Two is better than one: Physical interactions improve motor performance in humans. Scientific Reports 4, 3824 (2014).
33. Groten, R., Feth, D., Peer, A. & Buss, M. Shared decision making in a collaborative task with reciprocal haptic feedback-an efficiency-analysis. In 2010 IEEE International Conference on Robotics and Automation, 1834–1839 (IEEE, 2010).
34. Malysz, P. & Sirouspour, S. Task performance evaluation of asymmetric semiautonomous teleoperation of mobile twin-arm robotic manipulators. IEEE Transactions on Haptics 6, 484–495 (2013).
35. Masumoto, J. & Inui, N. Motor control hierarchy in joint action that involves bimanual force production. Journal of Neurophysiology 113, 3736–3743 (2015).
36. Pezzulo, G., Iodice, P., Donnarumma, F., Dindo, H. & Knoblich, G. Avoiding accidents at the champagne reception: A study of joint lifting and balancing. Psychological Science 28, 338–345 (2017).
37. Reed, K. B. & Peshkin, M. A. Physical collaboration of human-human and human-robot teams. IEEE Transactions on Haptics 1(2) (2008).
38.
Takagi, A., Usai, F., Ganesh, G., Sanguineti, V. & Burdet, E. Haptic communication between humans is tuned by the hard or soft mechanics of interaction. PLoS Computational Biology 14, e1005971 (2018).
39. Takagi, A., Hirashima, M., Nozaki, D. & Burdet, E. Individuals physically interacting in a group rapidly coordinate their movement by estimating the collective goal. eLife 8, e41328 (2019).
40. Roche, L. & Saint-Bauzel, L. Implementation of haptic communication in comanipulative tasks: A statistical state machine model. In 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2670–2675 (2016).
41. Melendez-Calderon, A. Classification of strategies for disturbance attenuation in human-human collaborative tasks. In 33rd Annual International Conference of the IEEE EMBS (2011).
42. Reed, K. B. et al. Haptic cooperation between people, and between people and machines. In 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2109–2114 (2006).
43. Toyokawa, W., Kim, H.-r. & Kameda, T. Human collective intelligence under dual exploration-exploitation dilemmas. PLoS ONE 9, e95789 (2014).
44. Marshall, J. A., Brown, G. & Radford, A. N. Individual confidence-weighting and group decision-making. Trends in Ecology & Evolution 32, 636–645 (2017).
45. Rosenberg, L. Artificial swarm intelligence vs human experts. In Neural Networks (IJCNN), 2016 International Joint Conference on, 2547–2551 (IEEE, 2016).
46. Pleskac, T. J. & Busemeyer, J. R. Two-stage dynamic signal detection: a theory of choice, decision time, and confidence. Psychological Review 117, 864 (2010).
47. Vickers, D. & Packer, J. Effects of alternating set for speed or accuracy on response time, accuracy and confidence in a unidimensional discrimination task. Acta Psychologica 50, 179–197 (1982).
48. Gajdos, T., Fleming, S. M., Saez Garcia, M., Weindel, G. & Davranche, K.
Revealing subthreshold motor contributions to perceptual confidence. Neuroscience of Consciousness (2019).
49. Donnarumma, F., Dindo, H. & Pezzulo, G. You cannot speak and listen at the same time: a probabilistic model of turn-taking. Biological Cybernetics 111, 156–183 (2017).
50. Donnarumma, F., Dindo, H. & Pezzulo, G. Sensorimotor coarticulation in the execution and recognition of intentional actions. Frontiers in Psychology 8, 237 (2017).
51. Donnarumma, F., Dindo, H. & Pezzulo, G. Sensorimotor communication for humans and robots: improving interactive skills by sending coordination signals. IEEE Transactions on Cognitive and Developmental Systems (2017).
52. Pezzulo, G. & Dindo, H. What should I do next? Using shared representations to solve interaction problems. Experimental Brain Research 211, 613–30 (2011).
53. Pezzulo, G. The interaction engine: a common pragmatic competence across linguistic and non-linguistic interactions. IEEE Transactions on Autonomous Mental Development 4, 105–123 (2012).
54. Pezzulo, G. Studying mirror mechanisms within generative and predictive architectures for joint action. Cortex 49, 2968–2969 (2013).
55. Roche, L. & Saint-Bauzel, L. The semaphoro haptic interface: a real-time low-cost open-source implementation for dyadic teleoperation. In ERTS (2018).

Acknowledgements
Funding: This work has received funding from a grant to G.P. from the European Research Council (Grant Agreement No. 820213, ThinkAhead) and a grant to G.P. from ONR (grant number N62909-19-1-2017). We thank Bahador Bahrami and Dan Bang for sharing data on their group decision tasks.

Competing Interests
The authors declare that they have no competing financial interests.

Correspondence
Correspondence and requests for materials should be addressed to Giovanni Pezzulo (email: giovanni.pezzulo@istc.cnr.it).
Author contributions
GP, LR and LSB designed the research; LR conducted the research under the supervision of GP and LSB; GP, LR and LSB wrote the article.

| $X_{thresh}$ | 0.05 | 0.08 | 0.10 | 0.15 | 0.20 | 0.25 | 0.30 |
|---|---|---|---|---|---|---|---|
| % of correct predictions | 88.5 | 90.0 | 91.9 | 92.9 | 93.7 | 94.6 | 95.7 |

Table 1: Proportion of group choices correctly predicted by the 1C predictor.

Figure 1: Experimental setup. (A) Example experimental stimuli, without (left) or with (right) an oddball target. (B) Graphical illustration of the (left-right) decisions using the haptic interface. Participants are presented with a choice between the first and second stimulus (center panel) and have to move the haptic interface to the left (left panel) or right (right panel). The setup is the same for both individual and group decisions; however, the haptic interfaces of the two participants are not connected during individual decisions, whereas they are connected during group decisions. See the main text for details.

Figure 2: Experimental results. (A) Theoretical prediction of the WCS model: dyads outperform their best individual members ($s_{dyad}/s_{max} > 1$) if members have similar sensitivities ($s_{min}/s_{max} > 0.4$). (B) Performance of dyads whose members have similar (green) or different (blue) sensitivities. (C,D) Average psychometric functions of the worst (blue) and best (red) individual members, compared to the dyad (black). Dots are the average percentage of 2nd stimuli chosen as answer, for each contrast difference in the experiment. Lines are fitted cumulative Gaussian functions. Steeper slopes correspond to higher sensitivities.

Figure 3: Computational analyses. (A) Correlation between dyads' sensitivities observed in the experiment and those predicted by the WCS model. Blue dots: data points (mean data of each dyad over the 8 blocks); red line: theoretical prediction of the WCS model. (B) Correlation between dyads' sensitivity, relative to the best member of the dyad.
Green and purple lines: linear regression model fitted on the data points and 95% confidence interval, respectively.
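The First Crossing (1C) and mechanical work parameters defined in the methods can be illustrated with a short Python sketch. It makes several assumptions that are not stated in the text: trajectories are already normalised to $[-1, 1]$ and sampled at common time steps, subject A wins ties when both subjects cross at the same sample, the force is sampled at every time step, and the normalising constant $N$ is taken to be the number of position increments. All function names are hypothetical.

```python
def first_crossing(x_a, x_b, x_thresh):
    """Side of the first exit from [-x_thresh, x_thresh] by either subject.

    x_a, x_b: normalised positions of the two subjects (0 = start,
    -1 = left, +1 = right), sampled at the same time steps.
    Returns -1 (left), +1 (right), or None if neither trajectory exits.
    Assumption: subject A is checked first when both exit simultaneously.
    """
    for pos_a, pos_b in zip(x_a, x_b):
        for pos in (pos_a, pos_b):
            if abs(pos) > x_thresh:
                return 1 if pos > 0 else -1
    return None


def mechanical_work(forces, positions):
    """Mean of force times displacement over successive time steps.

    Assumptions: forces[k] is the force at time step k, and the sum is
    divided by the number of increments (len(positions) - 1).
    """
    if len(forces) != len(positions):
        raise ValueError("forces and positions must have the same length")
    n = len(positions) - 1
    if n < 1:
        raise ValueError("need at least two samples")
    total = sum(forces[k] * (positions[k] - positions[k - 1])
                for k in range(1, n + 1))
    return total / n


if __name__ == "__main__":
    # Made-up trajectories for illustration only.
    x_a = [0.0, 0.02, 0.06, 0.20]
    x_b = [0.0, -0.01, -0.03, -0.08]
    print(first_crossing(x_a, x_b, x_thresh=0.05))
    print(mechanical_work([0.0, 2.0, 2.0, 2.0], [0.0, 0.1, 0.3, 0.6]))
```

Sweeping `x_thresh` over values such as those in Table 1 and comparing the returned side with the final group choice would give a prediction rate of the kind reported there, under the assumptions listed above.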
