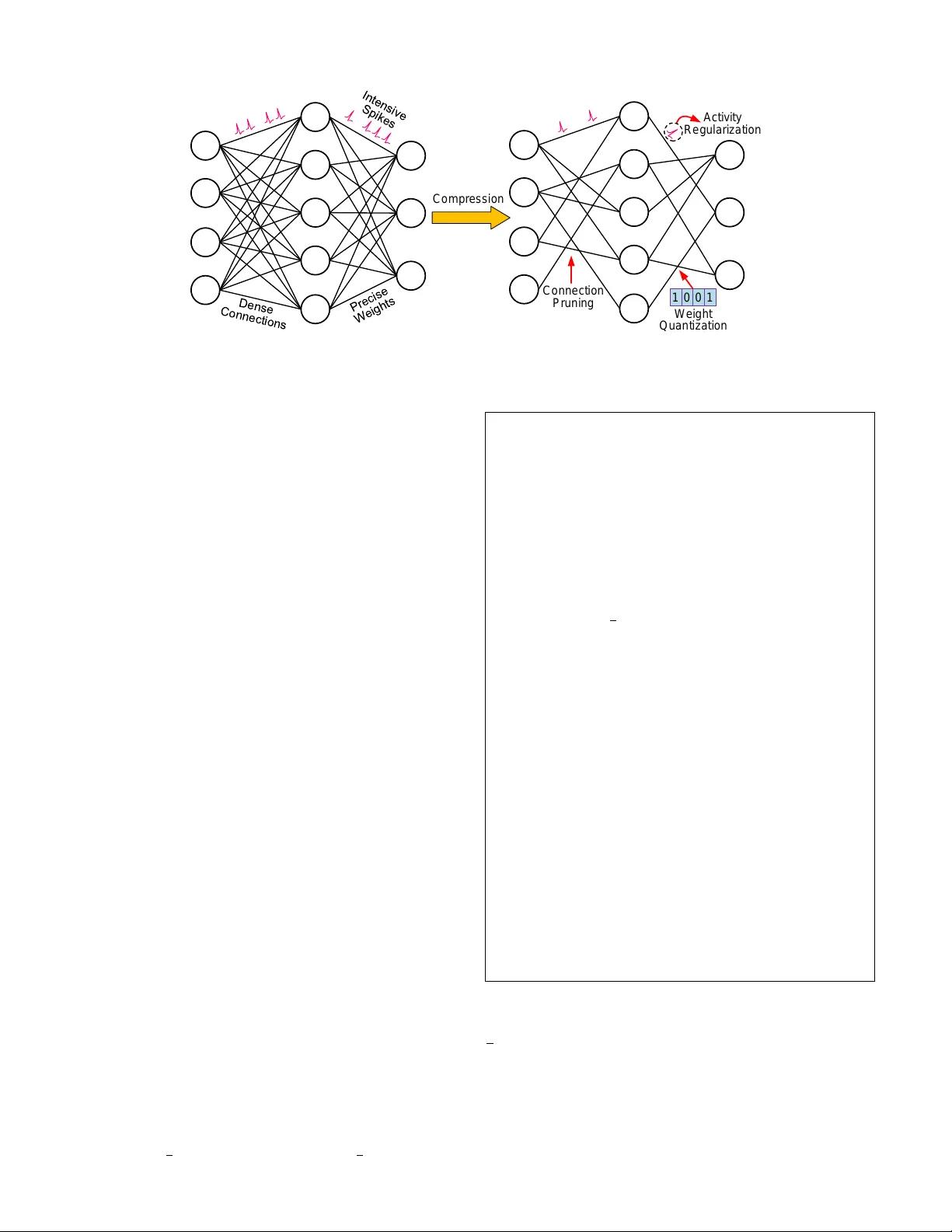

Comprehensive SNN Compression Using ADMM Optimization and Activity Regularization

As well known, the huge memory and compute costs of both artificial neural networks (ANNs) and spiking neural networks (SNNs) greatly hinder their deployment on edge devices with high efficiency. Model compression has been proposed as a promising tec…

Authors: Lei Deng, Yujie Wu, Yifan Hu