Computationally Efficient Distributed Multi-sensor Fusion with Multi-Bernoulli Filter

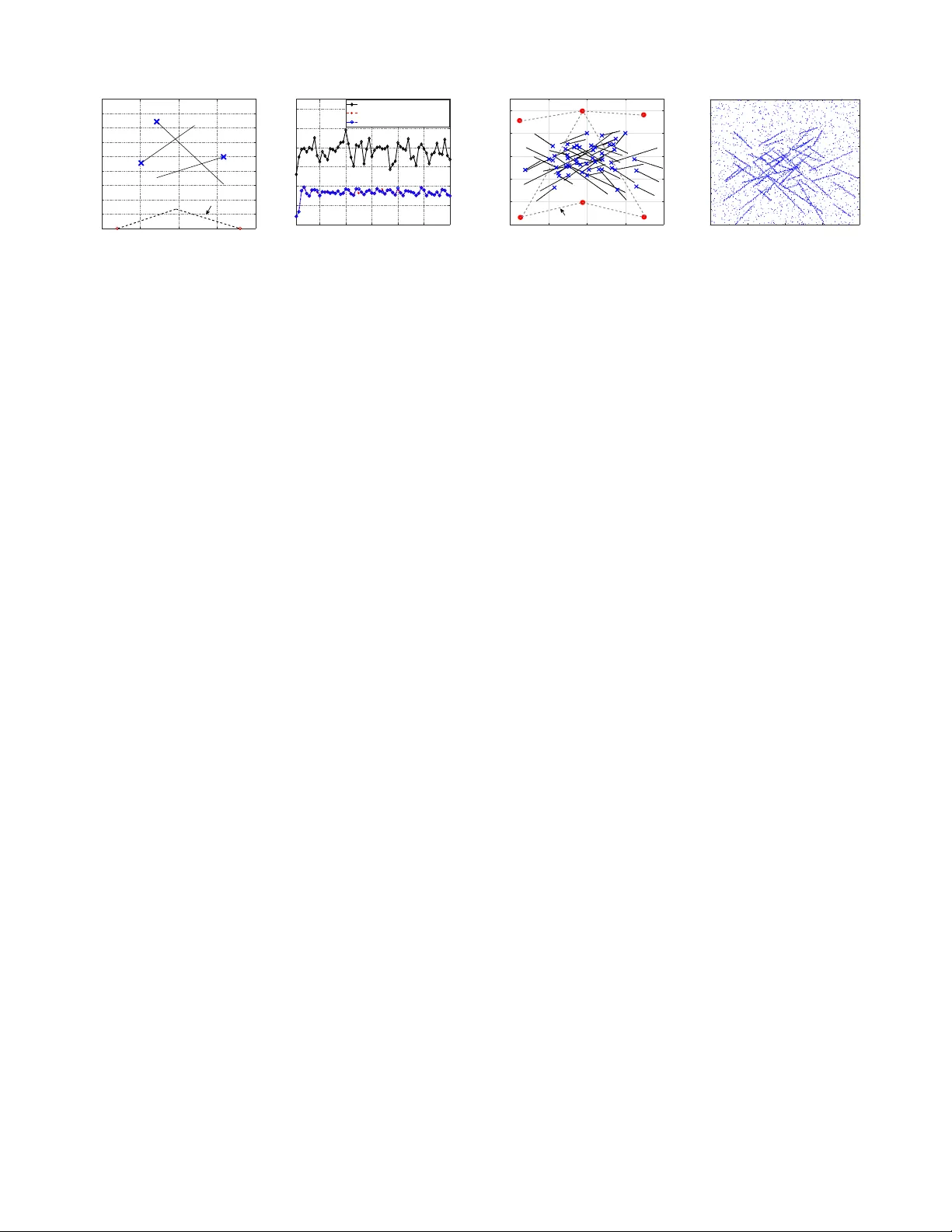

This paper proposes a computationally efficient algorithm for distributed fusion in a sensor network in which a multi-Bernoulli (MB) filter runs locally at every sensor node for multi-target tracking. The generalized Covariance Intersection (GC…

Authors: Wei Yi, Suqi Li, Bailu Wang