Classification accuracy as a proxy for two-sample testing

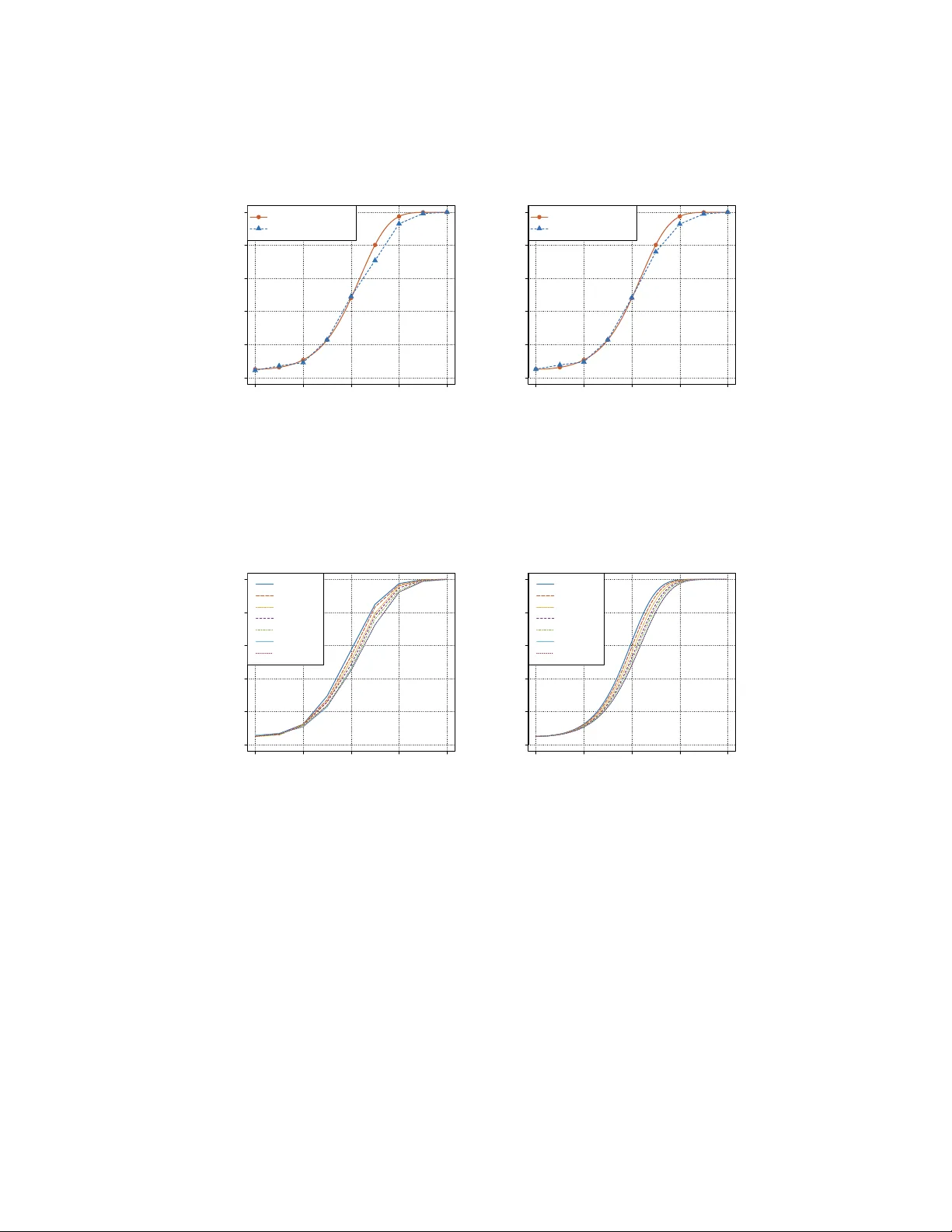

When data analysts train a classifier and check if its accuracy is significantly different from chance, they are implicitly performing a two-sample test. We investigate the statistical properties of this flexible approach in the high-dimensional sett…
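
The correspondence described in the first sentence can be illustrated with a minimal sketch. This is an illustration under stated assumptions, not the authors' procedure: it assumes a nearest-centroid classifier, a 50/50 train/test split, equal-sized held-out samples, and a one-sided binomial test of held-out accuracy against chance level 1/2, which is itself a simplification. All function names and the synthetic Gaussian data are hypothetical.

```python
import math
import random


def binomial_upper_tail(k, n, p=0.5):
    """Return P(X >= k) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def centroid(points):
    """Coordinate-wise mean of a list of equal-length vectors."""
    dim = len(points[0])
    return [sum(pt[j] for pt in points) / len(points) for j in range(dim)]


def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((u - v) ** 2 for u, v in zip(a, b))


def classifier_two_sample_test(xs, ys, train_frac=0.5, seed=0):
    """Train on one part of each sample, test accuracy on the rest.

    Returns (held-out accuracy, one-sided p-value against chance = 1/2).
    Assumption: the held-out parts of xs and ys have equal size, so that
    chance-level accuracy is 1/2.
    """
    rng = random.Random(seed)
    xs, ys = list(xs), list(ys)
    rng.shuffle(xs)
    rng.shuffle(ys)
    cut_x, cut_y = int(len(xs) * train_frac), int(len(ys) * train_frac)

    # "Training": estimate one centroid per sample (hypothetical classifier choice).
    mu_x, mu_y = centroid(xs[:cut_x]), centroid(ys[:cut_y])

    def predicts_x(pt):
        return sq_dist(pt, mu_x) <= sq_dist(pt, mu_y)

    # "Testing": count correct labels on held-out points only.
    held_x, held_y = xs[cut_x:], ys[cut_y:]
    correct = sum(predicts_x(pt) for pt in held_x) + sum(not predicts_x(pt) for pt in held_y)
    n_test = len(held_x) + len(held_y)
    return correct / n_test, binomial_upper_tail(correct, n_test)


# Synthetic example: two 5-dimensional Gaussians whose means differ by 1 per coordinate.
rng = random.Random(1)
dim, n = 5, 100
sample_p = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]
sample_q = [[rng.gauss(1.0, 1.0) for _ in range(dim)] for _ in range(n)]
accuracy, p_value = classifier_two_sample_test(sample_p, sample_q)
print(f"held-out accuracy = {accuracy:.2f}, p-value = {p_value:.3g}")
```

A small p-value here plays the role of rejecting the null hypothesis that both samples come from the same distribution. Evaluating accuracy on held-out points rather than training points is what keeps the chance-level comparison meaningful.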

Authors: Ilmun Kim, Aaditya Ramdas, Aarti Singh