PANTHER: A Programmable Architecture for Neural Network Training Harnessing Energy-efficient ReRAM

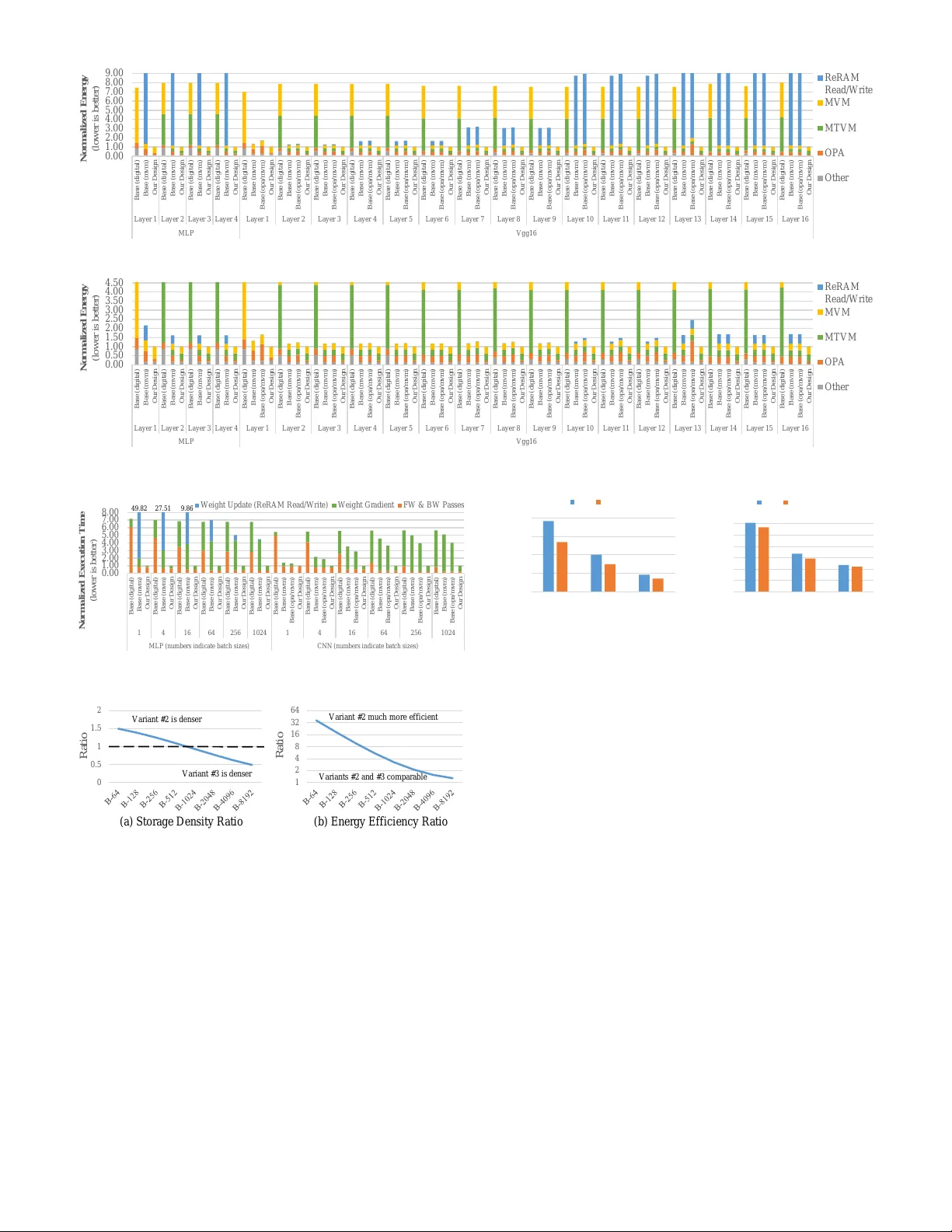

The wide adoption of deep neural networks has been accompanied by ever-increasing energy and performance demands due to the expensive nature of training them. Numerous special-purpose architectures have been proposed to accelerate training: both digi…

Authors: Aayush Ankit, Izzat El Hajj, Sai Rahul Chalamalasetti