Accelerating Least Squares Imaging Using Deep Learning Techniques

Wave equation techniques have been an integral part of geophysical imaging workflows to investigate the Earth's subsurface. Least-squares reverse time migration (LSRTM) is a linearized inversion problem that iteratively minimizes a misfit functional …

Authors: Janaki Vamaraju, Jeremy Vila, Mauricio Araya-Polo

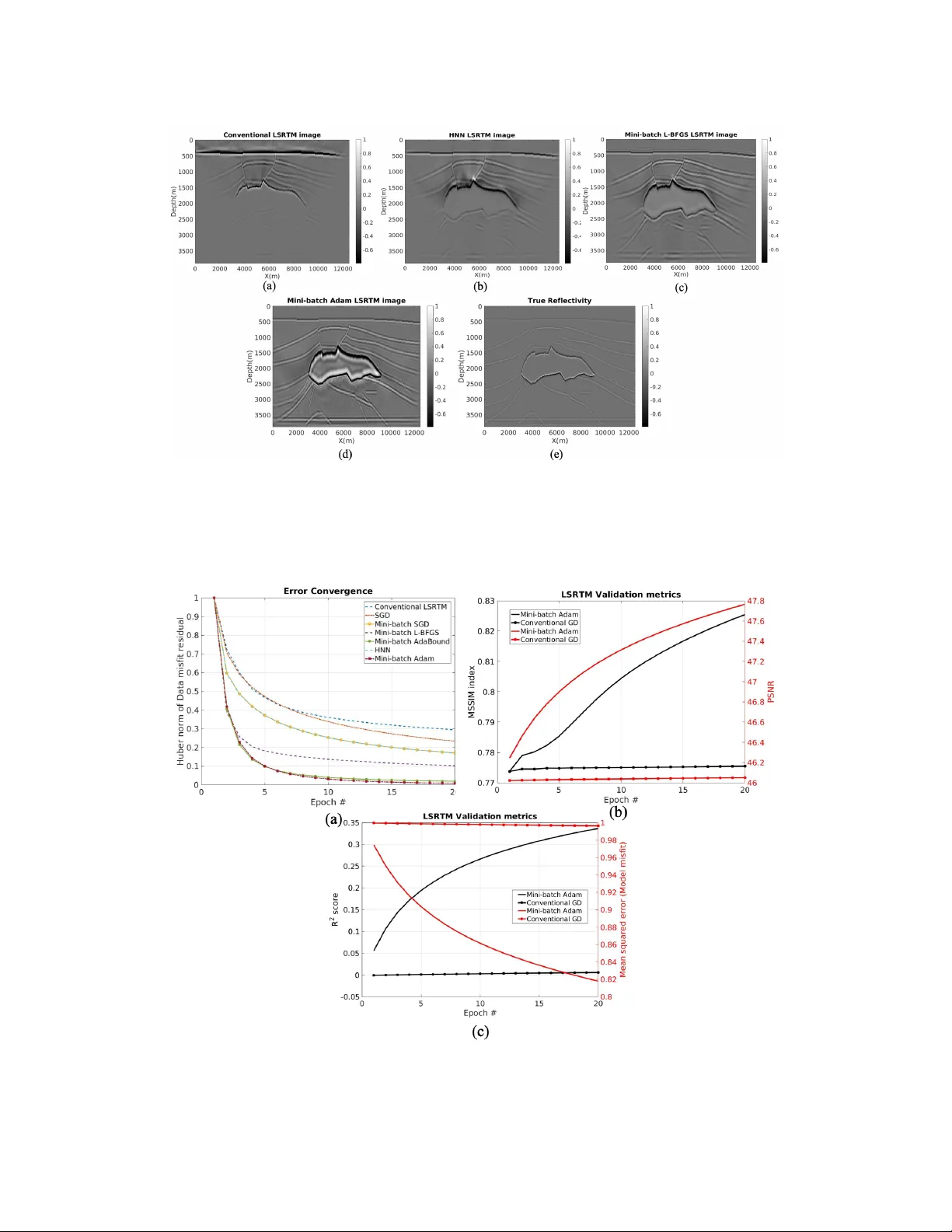

Accelerating Least Squar es Imaging Using Deep Lear ning T echniques Janaki V amaraju John A. and Katherine G. Jackson School of Geosciences, The Univ ersity of T exas at Austin Jer emy Vila Shell International Exploration and Production Inc. Mauricio Araya-Polo ∗ Shell Global Solutions US Inc. Debanjan Datta Shell International Exploration and Production Inc. Mrinal K. Sen John A. and Katherine G. Jackson School of Geosciences, The Univ ersity of T exas at Austin Abstract W ave equation techniques ha ve been an integral part of geophysical imaging work- flows to in vestigate the Earth’ s subsurface. Least-squares re verse time migration (LSR TM) is a linearized inv ersion problem that iterativ ely minimizes a misfit func- tional as a function of the model perturbation. The success of the inv ersion largely depends on our ability to handle large systems of equations giv en the massiv e computation costs. The size of the system almost exponentially increases with the demand for higher resolution images in complicated subsurface media. W e propose an unsupervised deep learning approach that lev erages the existing physics-based models and machine learning optimizers to achie ve more accurate and cheaper solu- tions. W e compare dif ferent optimizers and demonstrate their efficac y in mitigating imaging artifacts. Further , minimizing the Huber loss with mini-batch gradients and Adam optimizer is not only less memory-intensiv e b ut is also more rob ust. Our empirical results on synthetic, densely sampled datasets suggest faster con vergence to an accurate LSR TM result than a traditional approach. 1 Introduction Imaging techniques are used in geophysics to produce images of the Earth’ s subsurface at di verse length scales. These techniques are recently being adopted for monitoring subsurface geological formations that are used for carbon capture and storage (Figure 1). C O 2 sequestration is one of the solutions to the increasing greenhouse gas emissions that cause global warming and climate change. In this context, w av e equation based migration algorithms [ 1 , 2 ] hav e been an integral part of ∗ Mauricio Araya-Polo is now at T otal. All contributions to this work were made while at Shell Global Solutions US Inc. Second W orkshop on Machine Learning and the Physical Sciences (NeurIPS 2019), V ancouver , Canada. imaging workflo ws to in vert for subsurface properties at greater depths. Among them, least-squares rev erse time migration (LSR TM) [ 3 , 4 ] is the most popular migration method due to its ability to image complex subsurface areas with large computational resources such as graphic processing units (GPUs). LSR TM can be seen as a linear in verse problem based on the acoustic w av e equation. The goal is to in vert for an earth model that represents rock properties to fit the recorded surface data. The imaging condition or the gradient calculation in LSR TM is to take the zero-lag of the cross-correlation between the re verse-time-e xtrapolated receiv er wa vefield and the forw ard-time-extrapolated source wa vefield [ 5 ]. When the data is subjected to aliasing, truncation or noise, the adjoint operator can degrade the resolution of the final migrated image. LSR TM obtains an approximate image of the model perturbation by iterati vely minimizing the cost function. The LSR TM, howe ver , is an expensi ve replacement and its ability to migrate surface data depends on the accuracy of the velocity model and adequate preconditioning [ 6 , 7 ], and needs additional re gularization terms for successful damping of artifacts [ 8 , 9 ]. At each iteration of LSR TM, Born forw ard modeling and adjoint operators are applied, which makes the computational cost extremely high. Under the paradigm of theory guided data science, man y researchers in the geophysics community are looking at ways of combining physics and machine learning [ 10 – 17 ]. These works, howe ver were not extended to least-squares migrations. In this paper , we implement LSR TM using a deep learning approach and adopt strate gies from data science to reduce computational costs and accelerate con vergence.The feasibility of LSR TM is examined in combination with mini-batch gradients and deep learning optimizers such as the Hopfield neural networks (HNN), adaptiv e moment estimation (Adam) and Limited memory BFGS (L-BFGS). Mini-batch gradients help in reduction of cross-talk [ 18 – 20 ] and the deep learning optimizers can help mitigate acquisition footprints that are caused by the lack of shot data. This not only achiev es faster con vergence through iterations b ut also generates geologically consistent models. The computation cost is further reduced by using a subset of total shot data for each iteration. Implementing LSR TM in a deep learning framework (Pytorch or T ensorflow) enables us to e xperiment with machine learning loss functions and re gularizations. The automatic differentiation capability of the softw are can be used to calculate the gradient of the cost function. W e further minimize the Huber loss function to improve the ef ficiency of LSR TM. W e apply the techniques to a 2D synthetic model and sho w improvement ov er conv entional LSR TM baselines. The proposed methodology achiev es higher spatial resolution according to quantitative e valuation metrics. 2 Methodology The goal of least-squares migration is to in vert for the earth’ s surface reflectivity model (model perturbation indicating rock properties) m to fit the recorded data, d 0 : C ( m ) = 1 2 k d 0 − Gm k 2 , (1) where C is the cost/loss function to be minimized and G is the linearized Born modeling (scattering) operator that requires a background velocity model. This background velocity model is known a-priori from other velocity analysis methods. If G T G is in vertible, the least-squares solution for equation (1) can be written as: m = ( G T G ) − 1 G T d 0 , (2) where G T is the migration operator and G T G is the Hessian matrix H . The key to LSR TM is to obtain the inv erse of H ; ho wev er the computational cost and storage of H are not feasible for realistic problems. Alternati vely , different approximations, such as gradient based iterativ e approaches [21, 3, 22] are pursued. In this paper , we use PyT orch [23] for the above implementation. The Born modeling based wave equations can be implemented as a recurrent neural network (RNN) which is similar to the workflo w proposed by [ 17 ]. The same operations are applied in each cell of an RNN, but the data that the operations act upon changes. Each cell applies the finite-difference con volution operation to propagate forward one time step, by taking the state from the pre vious cell (the displacement w av efield at adjacent time steps and auxiliary wav efield) and the source amplitude as inputs, and producing the updated state vectors and the current wavefield as outputs. Although, automatic dif ferentiation can be used to calculate the gradient of the cost function, we 2 use the traditional adjoint state method to sa ve GPU memory costs. Easy chaining of operations in PyT orch, enables users to create customized loss functions or apply data normalizing operations. W e generate observed data with the full finite dif ference wav e propagation operator . LSR TM intrinsically is an under -determined in verse problem. There are alw ays a limited number of receivers that can cov er the subsurface and the source function is a band limiting signal. This can lead to incomplete data in terms of spatial co verage and frequenc y content. Here, we use the Huber loss function [ 24 , 25 ] to improv e the resolution and robustness of LSR TM: C ( m ) = 1 N N X i C i ( m ) where C i ( m ) = ( 1 2 k d 0 − Gm k 2 if | d 0 − Gm | ≤ ε ε | d 0 − Gm | − ε/ 2 otherwise , (3) where i is the corresponding shot record for i -th source and N is the total number of shots. The parameter ε defines a threshold based on the distance between target and prediction. For the example in this paper , we use a default value of 1 for ε . Con ventionally , the LSR TM cost function is ev aluated at each iteration using all the shot data that is av ailable. The gradient calculation can be very expensi ve when the number of shots are large (especially in 3D surv eys). T o reduce the computation costs and to reap the benefits of stochastic optimization, mini-batch gradient methods take a subset of entire shots to construct the objectiv e function and update the model: C ( m ) ≈ C B k ( m ) = 1 | B | X i ∈ B C i ( m ) , (4) where B is a subset of total shots, and | B | is its size. This performs frequent updates with reasonable variance and is faster to con verge. The fluctuation of the objective function is not sev ere. W e first divide all the shot data into sequential mini-batches and then randomly shuffle them. Although mini-batches of fer a more scalable solution, the y can introduce migration artifacts if the data is not sampled effecti vely . Therefore, we need more efficient optimization algorithms to produce migrated images with higher resolution. First-order gradient based optimization algorithms can be v ery slo w to con verge to high accuracy solutions, specially in noisy lar ge-scale datasets. W e combine mini-batch gradients with various optimizers such as the Hopfield neural networks (HNN), adaptiv e moment estimation (Adam) and Limited memory BFGS (L-BFGS). These techniques not only reduces the number of forward solves but also enhances the accuracy of in verted results when compared to traditional LSR TM algorithms (using gradient descent (GD)). W e provide qualitati ve and quantitativ e comparisons of the above presented methods against con ventional LSR TM baseline migrated images. Note that, no preconditioning w as used for either conv entional LSR TM or for the proposed mini-batch approach. 3 Results and Discussion The synthetic example is based on a 2 D slice from the SEG/EA GE 3 D salt model [ 26 ]. This model is 4 km in depth and 12.5 km wide. W e set 160 shots and 160 receivers per shot both deployed on the surface. The peak frequenc y of the source Ricker wa velet is 6 Hz. Figures 2(a), 2(b) and 2(c) show the true velocity model, the background smooth v elocity model and the true reflectivity respecti vely . Figure 3(a) sho ws a con ventional LSR TM image using GD after 20 epochs. The reflectivity is still far from the true reflectivity and the bottom of the salt body is not very clear . Figure 3(b) shows the migrated image from HNN which is slightly impro ved with a mean structural similarity index (MSSIM) of 0.79. Figures 3(c) and 3(d) show the migrated images from L-BFGS and Adam optimizers respecti vely . When combined with mini-batch training, not only the true amplitudes are better reco vered but also the salt bottom is no w clearly resolved. The reflectors at the bottom of the image are enhanced and there is no loss of continuity . The number of shots in each mini-batch and the step-size for model updates are the two important hyperparameters. W e randomly reserve twenty-fiv e percent of all the shots that e venly co ver the entire surv ey . This de velopment dataset is used to ev aluate the cost function for 25 combinations of batch-sizes and learning rates (Grid-search optimization). The batch size and learning rate that correspond to lo west cost function v alue is chosen for each optimizer . For 40 shots and Adam optimizer , the best learning rate and batch-size is 1 e − 7 and 4 respectiv ely . The migrated images hav e an MSSIM index greater than 0.8 and the migration 3 Figure 1: Geophysical imaging of geological formations for enhanced carbon capture and storage Figure 2: SEG/EA GE salt model (a) True velocity (b) Smooth migration velocity model (c) T rue reflectivity artifacts are removed. Figure 4(a) shows the error con ver gence or the cost function calculated for each optimizer . Adam and AdaBound con verge within 6 epochs and the cost function becomes flat beyond 10 epochs. Howe ver , the image from Adam (MSSIM=0.8255) has a higher MSSIM than AdaBound (MSSIM = 0.8157). The performance of L-BFGS is comparable but con ver ges in slightly more number of epochs than Adam. Figures 4(b) and 4(c) plot the other v alidation metrics for a quantitative ev aluation. Using the Adam optimizer , at the end of 20 epochs, MSSIM inde x reaches a value of 0.8255 from 0.77. Compared to Adam, conv entional LSR TM shows almost no improv ement from the initial image. Additional preconditioning and filtering is usually needed to greatly improv e the image. The final R 2 score of the Adam migrated image is raised to 0.34 from an initial 0.06. The mean squared error (MSE) value is reduced to 0.825 and the peak signal-to-noise ratio (PSNR) is improv ed to 47.78 o ver 20 epochs. Note that the PSNR v alue is measured in log scale. The performance trend is ov erall positiv e. 4 Conclusions W e present a deep learning approach for LSR TM by adopting strategies from data-science to accelerate con vergence. In a time-domain formulation, mini-batch gradients can reduce the computation cost by using a subset of total shots for each epoch. The Adam optimizer , combined with the Huber loss and mini-batch gradients resulted in significantly faster con ver gence than when using con ventional gradient descent optimizer with the cost and gradient calculate d using the entire dataset. W e apply the techniques to the SEG/EA GE 3D salt model and show impro vements o ver con ventional LSR TM baseline. The described methods mitigate artif acts that arise from limited aperture, lo w subsurface illumination, and cross-correlation noise. Ef fects that irregular sampling has on the proposed mini- batch approach remains future work. 4 Figure 3: SEG/EA GE salt model migrated images (a) Conv entional LSR TM image (MSSIM=0.7755) (b) HNN LSR TM image (MSSIM=0.79) (c) Mini-batch L-BFGS LSR TM image (MSSIM=0.8026) (d) Mini-batch Adam LSR TM image (MSSIM=0.8255) (e) T rue reflecti vity Figure 4: Comparison of (a) Error con vergence (b) MSSIM and PSNR (c) R 2 and MSE metrics for con ventional LSR TM and mini-batch Adam LSR TM images 5 References [1] Edip Baysal, Dan D Koslof f, and John WC Sherwood. Reverse time migration. Geophysics , 48(11):1514–1524, 1983. [2] George A McMechan. Migration by extrapolation of time-dependent boundary v alues. Geo- physical Pr ospecting , 31(3):413–420, 1983. [3] T amas Nemeth, Chengjun W u, and Gerard T Schuster . Least-squares migration of incomplete reflection data. Geophysics , 64(1):208–221, 1999. [4] Henning Kühl and Mauricio D Sacchi. Least-squares wa ve-equation migration for avp/av a in version. Geophysics , 68(1):262–273, 2003. [5] Jon F Claerbout. T oward a unified theory of reflector mapping. Geophysics , 36(3):467–481, 1971. [6] James E Rickett. Illumination-based normalization for wav e-equation depth migration. Geo- physics , 68(4):1371–1379, 2003. [7] Antoine Guitton. Amplitude and kinematic corrections of migrated images for nonunitary imaging operators. Geophysics , 69(4):1017–1024, 2004. [8] Zhiguang Xue, Y angkang Chen, Ser gey Fomel, and Junzhe Sun. Seismic imaging of incomplete data and simultaneous-source data using least-squares rev erse time migration with shaping regularization. Geophysics , 81(1):S11–S20, 2015. [9] Di W u, Gang Y ao, Jingjie Cao, and Y anghua W ang. Least-squares rtm with l1 norm regularisa- tion. Journal of Geophysics and Engineering , 13(5):666–673, 2016. [10] Carlos Calderón-Macías, Mrinal K Sen, and Paul L Stoff a. Hopfield neural networks, and mean field annealing for seismic decon volution and multiple attenuation. Geophysics , 62(3):992–1002, 1997. [11] Janaki V amaraju and Mrinal K Sen. Unsupervised physics based neural netw orks for seismic migration. Interpretation , 7(3):1–51, 2019. [12] Mauricio Araya-Polo, T aylor Dahlke, Charlie Frogner , Chiyuan Zhang, T omaso Poggio, and Detlef Hohl. Automated fault detection without seismic processing. The Leading Edge , 36(3):208–214, 2017. [13] Thomas Mejer Hansen and Knud Skou Cordua. Efficient monte carlo sampling of in verse problems using a neural network-based forward—applied to gpr crosshole traveltime in version. Geophysical Journal International , 211(3):1524–1533, 2017. [14] Mauricio Araya-Polo, Joseph Jennings, Amir Adler , and T aylor Dahlke. Deep-learning tomog- raphy . The Leading Edge , 37(1):58–66, 2018. [15] Amir Adler , Mauricio Araya-Polo, and T omaso Poggio. Deep recurrent architectures for seismic tomography . In 81st EAGE Confer ence and Exhibition 2019 , 2019. [16] Reetam Biswas, Mrinal K Sen, V ishal Das, and T apan Mukerji. Pre-stack and post-stack in version using a physics-guided conv olutional neural network. Interpr etation , 7(3):1–76, 2019. [17] Alan Richardson. Seismic full-wav eform inv ersion using deep learning tools and techniques. arXiv pr eprint arXiv:1801.07232 , 2018. [18] Michael P Friedlander and Mark Schmidt. Hybrid deterministic-stochastic methods for data fitting. SIAM Journal on Scientific Computing , 34(3):A1380–A1405, 2012. [19] T ristan v an Leeuwen and Felix J Herrmann. Fast wav eform inv ersion without source-encoding. Geophysical Pr ospecting , 61:10–19, 2013. [20] Hui Y ang, Junxiong Jia, Bangyu W u, and Jinghuai Gao. Mini-batch optimized full wav eform in version with geological constrained gradient filtering. Journal of Applied Geophysics , 152:9– 16, 2018. 6 [21] Gerard T Schuster . Least-squares cross-well migration. In SEG T echnical Pr ogram Expanded Abstracts 1993 , pages 110–113. Society of Exploration Geophysicists, 1993. [22] Y axun T ang. T arget-oriented wav e-equation least-squares migration/in version with phase- encoded hessian. Geophysics , 74(6):WCA95–WCA107, 2009. [23] Adam Paszke, Sam Gross, Soumith Chintala, Gre gory Chanan, Edward Y ang, Zachary DeV ito, Zeming Lin, Alban Desmaison, Luca Antiga, and Adam Lerer . Automatic differentiation in pytorch. 2017. [24] Peter J Huber . Robust estimation of a location parameter . In Breakthr oughs in statistics , pages 492–518. Springer , 1992. [25] Antoine Guitton and William W Symes. Robust in version of seismic data using the huber norm. Geophysics , 68(4):1310–1319, 2003. [26] Fred Aminzadeh, N Burkhard, J Long, T im Kunz, and P Duclos. Three dimensional seg/eaeg models—an update. The Leading Edge , 15(2):131–134, 1996. 7

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment