Generalized Methods and Solvers for Noise Removal from Piecewise Constant Signals

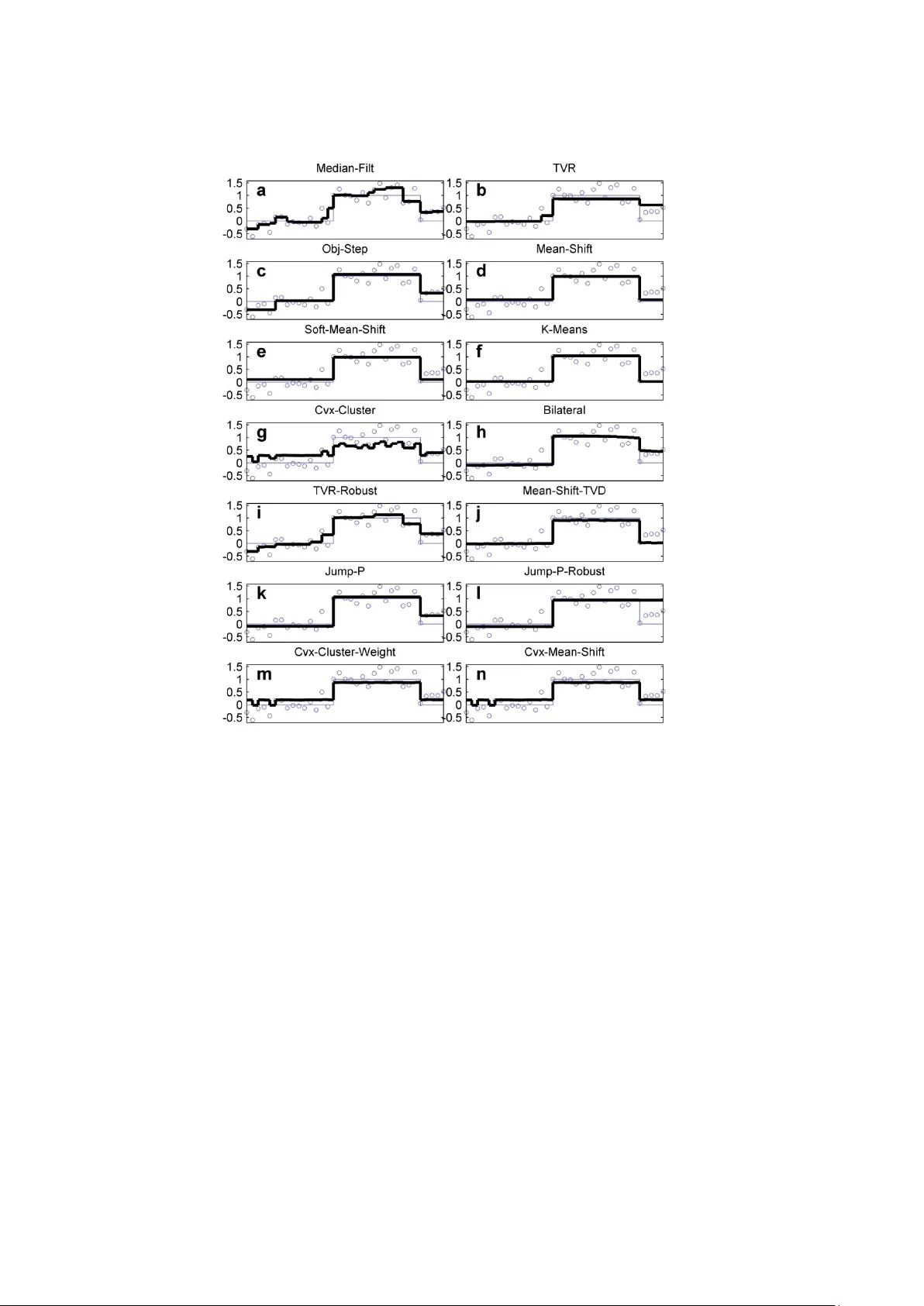

Removing noise from piecewise constant (PWC) signals, is a challenging signal processing problem arising in many practical contexts. For example, in exploration geosciences, noisy drill hole records need separating into stratigraphic zones, and in bi…

Authors: Max A. Little, Nick S. Jones