Port-Hamiltonian Approach to Neural Network Training

Neural networks are discrete entities: subdivided into discrete layers and parametrized by weights which are iteratively optimized via difference equations. Recent work proposes networks with layer outputs which are no longer quantized but are soluti…

Authors: Stefano Massaroli, Michael Poli, Federico Califano

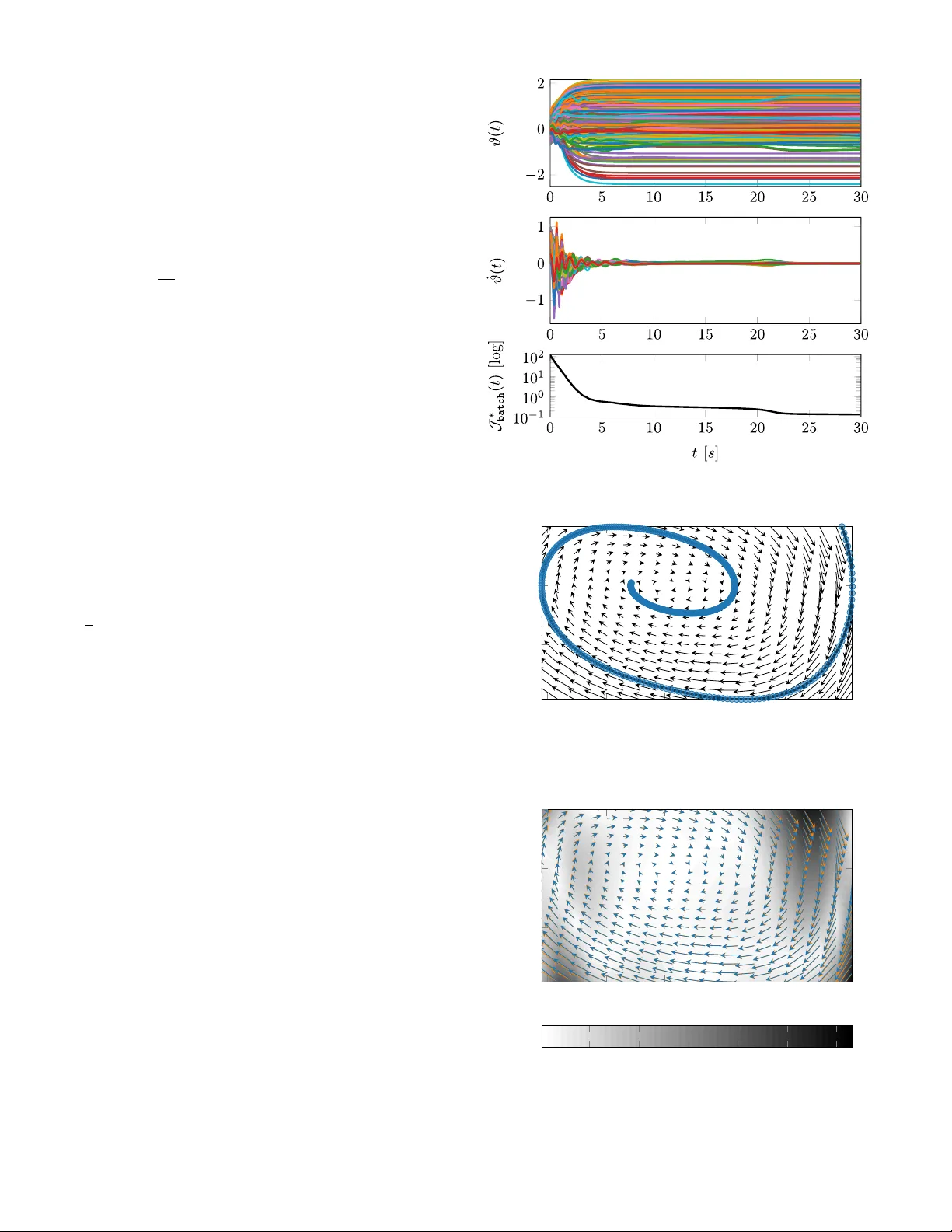

P ort–Hamiltonian A ppr oach to Neural Network T raining Stefano Massaroli 1 , † ,? and Michael Poli 2 ,? , Federico Califano 3 , Angela Faragasso 1 , † , Jinkyoo Park 2 , Atsushi Y amashita 1 , † and Hajime Asama 1 , ‡ Abstract — Neural netw orks ar e discr ete entities: subdivided into discrete layers and parametrized by weights which are iteratively optimized via diff erence equations. Recent work proposes networks with layer outputs which are no longer quantized but ar e solutions of an ordinary differential equation (ODE); however , these netw orks are still optimized via discrete methods (e.g. gradient descent). In this paper , we explor e a different direction: namely , we propose a novel framework for learning in which the parameters themselves are solutions of ODEs. By viewing the optimization process as the e volution of a port-Hamiltonian system, we can ensure conver gence to a minimum of the objectiv e function. Numerical experiments hav e been performed to show the validity and effectiveness of the proposed methods. I . I N T R O D U C T I O N Neural networks are universal function approximators [1]. Giv en enough capacity , which can arbitrarily be increased by adding more parameters to the model, they can approximate any Borel–measurable function mapping finite–dimensional spaces. Each layer of a neural network performs an af fine transformation to its input and generates an output which is then fed into the next layer . Backpropagation [2] is at the core of modern deep learning, and most state-of-the- art architectures for tasks such as image segmentation [3], generativ e tasks [4], image classification [5] and machine translation [6] rely on the effecti v e combination of uni versal approximators and line search optimization methods: most notably stochastic gradient descent (SGD), Adam [7] RM- SProp [8] and recently RAdam [9]. T raining neural networks is a non–con ve x optimization problem which aims to obtain globally or locally optimal values for its parameters by minimizing an objectiv e function 1 Stefano Massaroli, Angela Faragasso, Atsushi Y amashita and Hajime Asama are with the Department of Precision Engineering, The Univ ersity of T ok yo, 7-1-3 Hongo, Bunkyo, T okyo, Japan { massaroli,faragasso,yamashita,asama } @robot.t.u-tokyo.ac.jp 2 Michael Poli and Jinkyoo Park are with the Department of Industrial and Systems Engineering, Korea Advanced Institute of Science and T echnology (KAIST), 291 Daehak-ro, Eoeun-dong, Y useong-gu, Daejeon, South Korea { poli m,jinkyoo.park } @kaist.ac.kr 3 Federico Califano is with the Faculty of Electrical Engineering, Math- ematics & Computer Science (EWI), Robotics and Mechatronics (RAM), Univ ersity of T wente, Hallenweg 23 7522NH, Enschede, The Netherlands f.califano@utwente.nl † IEEE Member , ‡ IEEE F ellow ? These authors contributed equally to the work. © 2019 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/r epublishing this material f or advertising or promotional purposes, creating new collective works, f or resale or redistrib ution to servers or lists, or reuse of any copyrighted component of this work in other works. ϑ (1) ϑ (2) Gradient Descent ϑ (1) ϑ (2) Port–Hamiltonian Optimizer Fig. 1. Comparison between the discrete optimizer gradient descent and our continuous port-Hamiltonian approach. that is usually designed ad–hoc for the application at hand. The landscape of such objective functions is often highly non–con ve x and finding global optima is in general an NP–complete problem [10], [11]. Optimality guarantees for algorithms such as gradient descent do not hold in this setting; moreover , the discrete nature of neural networks adds complications to the de velopment of a proper theo- retical understanding with sufficient conv ergence conditions. Despite the empirical successes of deep learning, these reasons alone lead many to question whether or not relying on these standard methods could be a limitation to the advancement of deep learning research. In this w ork, we of fer a new perspectiv e on the optimization of neural networks, where parameters are no longer iterati vely updated via dif- ference equations, but are instead solutions of ODEs. This is achie ved by equipping the parameters with autonomous port-Hamiltonian dynamics. Port-Hamiltonian (PH) systems [12], [13], [14] have been introduced to model dynamical systems coming from different physical domains in a unified manner . This frame work turned out to be fruitful in dealing with passivity based control (PBC) [15], [16], [17] since dissipativity information is e xplicitly encoded in PH systems, i.e. under mild assumptions those systems are passi ve. The aim of this work is to take advantage of such a structure and build a proper PH system associated to a neural network, in which the parameters of the latter are the states of the PH system. Within this framew ork, the weights ev olve in time on a continuous trajectory along strictly decreasing lev el sets of the energy function, i.e. the objectiv e function of the optimization problem, ev entually landing in one of its minima. In this way , local optimality is intrinsically guaranteed by the PH dynamics. This paper is structured as follows: Section II discusses previous works on continuous–time and energy–based ap- proaches for neural networks. In Section III, a formal in- troduction to neural networks to resolve some notational conflicts between control and learning theory . Section IV introduces port–Hamiltonian systems and their application to the training of neural networks. Next, in Section V, the performance of the proposed method is e valuated on a series of tasks and the results are discussed. Finally , in Section VI conclusions are drawn and future work is discussed. I I . R E L AT E D W O R K Recent works [18] have sho wn that it is possible to model residual layers as continuous blocks. This allows for a smooth transition between input and output: the input is inte grated for a fixed time, which can be seen as the continuous analog of the number of network layers in the discrete case. By using the adjoint integration method Neural Ordinary Dif ferential Equation Networks (ODE-Nets) offer improved memory ef ficiency and their performance is comparable to regular neural networks. ODE-Nets, howe ver , are still optimized via discrete gradient descent methods. A similar idea was previously proposed in [19], which introduces Hamiltonian dynamics as a means of modeling network activ ations. [20] introduces the Hamiltonian function as a useful physics-driv en prior for learning conservati ve dynamics. [21] explores a connection between non-con vex optimization and viscous Hamilton–Jacobi partial differential equations by introducing a modified version of stochastic gradient descent, Entropy–SGD. Entropy–SGD is applied to a function that is more con vex in its input than the original loss function and yields faster con ver gence times. Similarly , a connection between Hamiltonian dynamics and learning was proposed in [22] and [23]. Energy-based models ha ve been explored in the past [24] [25]. Hopfield neural networks are designed to learn binary patters by iterativ ely reducing their ener gy until con ver gence to an attractor . The energy is defined as a L yapunov function of the weights of the network such that con vergence to a local mininum is guaranteed. The binary-valued units i of a Hopfield network are updated via a discrete procedure which checks if the weighted sum of the neighbouring units v alues does not reach a threshold, in which case the value of i is flipped. I I I . P R O B L E M S E T T I N G A. Notation The set R ( R + ) is the the set of real (non ne gativ e real) numbers. The set of squared–inte grable functions z : R → R m is L m 2 while the set of d –times continuously differentiable functions is C d . Let h· , ·i : R m × R m → R denote the inner product on R m and k v k 2 , p h v , v i its induced norm. The origin of R n is 0 n . Let H : R n → R be C 1 and let ∂ H ∈ R n be its transposed gradient, i.e. ∂ H , ( ∇ H ) > ∈ R n . In ambiguous cases, the v ariable with respect to which H is differentiated may appear as subscript, e.g. ∂ x H . Indexes of v ectors are indicated in superscripts, e.g. if v is a vector v ( i ) indicates the i - th entry . Given two vectors u, v ∈ R n , let ( u, v ) , [ u > , v > ] > . qqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqqq B. Intr oduction to neural networks In order to provide a definition of neural networks suitable for the scope of this paper , we must clarify the class of mathematical objects that they can handle. In particular, only networks whose input and outputs are vectors are treated. Indeed, the concepts presented hereafter can be naturally extended to more complex networks 1 . Definition 3.1 (Neural Network): A neural network is a map f : U × K → Y , being U ⊂ R n u the input space , Y ⊂ R n y the output space and K ⊆ R p the manifold where the parameters characteriz- ing the neural network li ve. The parameters, collected in a vector ϑ , are assumed time dependent. Hence, y = f ( u, ϑ ( t )) u ∈ U , y ∈ Y , ϑ ∈ K . (1) If samples ˆ u i , ˆ y i ( i = 1 , . . . , s ) of the input and output spaces are provided, the parameters ϑ may be tuned in order to minimize an arbitrary cost function, e.g. the squared–norm of the output reconstruction error k e i k 2 2 , k ˆ y i − f ( ˆ u i , ϑ ) k 2 2 ∀ i = 1 , . . . , s. (2) Example 3.2 (Fully Connected Network): In a fully– connected neural network, the j –th element y ( j ) i of the output of the i –th layer is y ( j ) i = σ h i − 1 X k =1 y ( j ) i − 1 w i,j,k + b i,j ∀ j = 1 , . . . , h i , (3) where h i is the number of neurons in the i –th layer, w i,j,k , b i,j ∈ R , σ i : R → R is called activation function 2 and y 0 , u . Indeed, y i can be symbolically rewritten in vector form as y i = σ i ( W i y i − 1 + b i ) , where W i = w i, 1 , 1 w i, 1 , 2 · · · w i, 1 ,h i − 1 w i, 2 , 1 w i, 2 , 2 · · · w i, 2 ,h i − 1 . . . . . . . . . . . . w i,h i , 1 w i,h i , 2 · · · w i,h i ,h i − 1 , b i = b i, 1 b i, 2 . . . b i,h i and σ i is thought to be acting component–wise. Thus, for the i –th layer a vector ϑ i containing all the weights and biases can be defined as ϑ i = [ w i, 1 , 1 , . . . , w i,h i ,h i − 1 , b i, 1 , . . . , b i,h i ] > ∈ R h i (1+ h i − 1 ) . (4) Therefore, the overall vector containing all the parameters of a fully connected neural network with l layers is ϑ = ( ϑ 1 , ϑ 2 , . . . , ϑ l ) ∈ R p , (5) 1 Aforementioned networks often deal with multi–dimensional arrays ( holors [26]) which are referred as tensors by the artificial intelligence community . 2 Usually σ i is a nonlinear function. where p = l X i =1 h i (1 + h i − 1 ) . C. T raining of a Neural Network Let U s , Y s be finite and ordered subsets of the input and output spaces, i.e. U s = { ˆ u 1 , ˆ u 2 , . . . , ˆ u i , . . . , ˆ u s } ⊂ U , Y s = { ˆ y 1 , ˆ y 2 , . . . , ˆ y i , . . . , ˆ y s } ⊂ Y , such that ∃ Ψ : U → Y : ˆ y i = Ψ( ˆ u i ) ∀ i = 1 , . . . , s . The aim of the tr aining process of a neural network is to find a value of the parameters ϑ ∈ K such that the elements of U s are mapped by f defined in (1) minimizing a gi ven objectiv e function dependent on the output samples, e.g. (2). Let J : U × Y × K → R be the objective ( loss ) function; then, the solution of the training problem is ϑ ∗ = arg min ϑ J ( ˆ u i , ˆ y i , ϑ ) ∀ ˆ u i ∈ U s , ˆ y i ∈ Y s . (6) Consider a function Γ : U × Y × K → K . Traditionally , a locally optimal solution of (6) can be obtained by iterating a difference equation of the form: ϑ t +1 = ϑ t + Γ( ˆ u t +1 , ˆ y t +1 , ϑ t ) t = 1 , . . . , s , (7) for a sufficient 3 number of steps, where the specific choice of Γ determines the dif ference between training algorithms. In contrast to such state–of–the–art methods, our approach is to equip the weights with continuous–time dynamics. In particular , we model the behavior of the parameters with port-Hamiltonian systems. Due to the unique structure of this class of dynamical systems, asymptotic conv ergence toward an optimal solution will be automatically guaranteed. I V . T R A I N I N G O F N E U R A L N E T W O R K S : T H E P O RT – H A M I L T O N I A N A P P R OA C H A. Intr oduction to port–Hamiltonian systems A port–Hamiltonian (PH) system has an input–state– output representation ˙ ξ = [ J ( ξ ) − R ( ξ )] ∂ H ( ξ ) + g ( ξ ) v z = g > ( ξ ) ∂ H ( ξ ) , (8) with state ξ ∈ X ⊂ R n , input v ∈ V ⊂ R m and output z ∈ Z ⊂ R m . The function H : X → R is called Hamiltonian function and has the role of a generalized energy while J ( ξ ) = − J > ( ξ ) ∈ R n × n represents power preserving– interconnections, R ( ξ ) = R ( ξ ) ≥ 0 models dissipativ e effects and g ( ξ ) ∈ R n × m describes the way in which e xternal power is distributed into the system. In general, X is an n – dimensional manifold, V is a m –dimensional vector space and Z = V ∗ is its dual space. Consequently the natural pairing h v , z i , z > v can be defined, which carries the unit measure of po wer (when modeling physical systems). F or 3 Here sufficient is intended in a statistical learning theory sense compactness, from now on let us define F ( ξ ) , J ( ξ ) − R ( ξ ) and omit the dependence on ξ of H and F . Assumption 4.1: Assumptions for PH systems 1. F , g , H are assumed smooth enough such that solutions are forward–complete for all initial conditions ξ 0 ∈ X , v ∈ L m 2 ; 2. H is lower –bounded in X , i.e. ∃ ζ ∈ R : ∀ x ∈ X H ( ξ ) > ζ . From these assumptions it follo ws that PH systems are passiv e (see [15], [14] ), i.e. ˙ H ≤ z > v . As a consequence, in the autonomous case ( v = 0 ) the Hamiltonian function is always non–increasing along trajec- tories. In particular , ˙ H = h ∂ H , ˙ ξ i = − ( ∂ H ) > R∂ H ≤ 0 ∀ t ≥ 0 . Thus, any strict minimum of H is a L yapunov stable equi- librium point of the system. Furthermore, the control law v = − k z ( k > 0) , usually referred as damping injection , asymptotically stabilizes the equilibria [15]. Therefore, depending on the initial condition and the basins of attraction of the minima of H , the state will ev entually land in one minimum point of the Hamiltonian function. This latter property is the key that allo ws the use of PH systems for the training of neural networks. B. Equip the network with port–Hamiltonian dynamics The proposed approach consists in describing the dynam- ics of the neural network’ s parameters using an autonomous PH system. In fact, if the Hamiltonian function coincides with the loss function of the learning problem, i.e. H , J , we guarantee asymptotic con vergence to a minimum of J , i.e. solution of the problem (6) and hence successful training of the neural network. Generally , a desirable property of the parameter dynamics is to reach a minimum of J with null velocity ˙ ϑ . In the port–Hamiltonian frame work, this can be achie ved with mechanical–like equations. Let ω , M ( ϑ ) ˙ ϑ be a vector of fictitious generalized momenta where M = M > > 0 is the generalized inertia matrix. The role of M is to giv e dif ferent weight to the parameters and model specific couplings between their dynamics. Then, let the state of the PH system be ξ , ( ϑ, ω ) ∈ R 2 p . Hence, the loss function might be redefined adding a term J kin ( ϑ, ω ) equiv alent to a pseudo kinetic energy: J ∗ ( ˆ u, ˆ y , ξ ) , J ( ˆ u, ˆ y , ϑ ) + ω > M − 1 ( ϑ ) ω | {z } J kin ( ϑ,ω ) . Note that J ( ˆ u, ˆ y , ϑ ) represents the potential energy of the fictitious mechanical system. As for general p degrees–of–freedom mechanical system in PH form, the choice of J and R is: J , O p I p − I p O p ∈ R 2 p × 2 p , R , O p O p O p B ∈ R 2 p × 2 p , where O p , I p are respectively the p –dimensional zero and identity matrices while B = B > > 0 , B ∈ R p × p . Therefore, the autonomous PH model of the parameters dynamics obtained by setting H = J ∗ is: ˙ ϑ ˙ ω = ( J − R ) ∂ ϑ J ∗ ∂ ω J ∗ ⇔ ˙ ξ = 0 I n − I n − B | {z } F ∂ J ∗ . (9) Hence, trajectories of ϑ, ω will unfold on continuously decreasing lev el sets of J ∗ which plays the role of a generalized energy . Example 4.2: Suppose M ( ϑ ) = I p ⇒ ω = ˙ ϑ and let J be the mean–squared–error loss. Therefore, a possible choice of the loss function J ∗ is J ∗ ( ˆ u, ˆ y , ξ ) = 1 2 h α k ˆ y − f ( ˆ u, ϑ ( t )) k 2 2 + β ϑ > ϑ + ˙ ϑ > ˙ ϑ i , (10) with α, β ∈ R + . Indeed, ev ery minima of J ∗ is placed in ˙ ϑ = 0 p . The gradient of J ∗ is ∂ J ∗ = α ∂ f ∂ ϑ [ ˆ y − f ( ˆ u, ϑ )] + β ϑ, ˙ ϑ . W ith this choice of J ∗ , the dynamics of the parameters become a (nonlinear) second–order ordinary differential equation: ¨ ϑ + α ∂ f ∂ ϑ [ ˆ y − f ( ˆ u, ϑ )] + β ϑ + B ˙ ϑ = 0 p . Remark 4.3: The term β ϑ > ϑ in (10) is introduced as a r egularization tool. Regularization is a fundamental tech- nique in machine learning that is widely used in order to find solutions with smaller norm (e.g. weight decay ) or to enforce sparsity in the parameters (e.g. L1–re gularization ). Note that this is a particular case of the T ikhonov regularization term k Λ ϑ k 2 2 with Λ = β I p [27], also known as weight decay [28]. Remark 4.4: In the context of mechanical–like PH sys- tem, a consistent choice of the po wer port is g , O p O p O p I p , selecting as input the fictitious generalized forces and as output the velocities z = ˙ ϑ . Hence, during the training of the neural network, a control input v = − k ( t ) ˙ ϑ ( k ( t ) ≥ 0 ∀ t ) might be applied to dynamically modify the rate with which the parameters of the network are optimized. This opens different scenarios for designing a k ( t ) which increases the probability of reaching the global minimum of J ∗ . In fact, the choice of k determines the shape of the basins of attraction of the minima of J ∗ [29]. This open problem is left for future work. Definition 4.5 (P ort–Hamiltonian neur al network): W e define a port–Hamiltonian neur al network (PHNN) as a neural network whose parameters ϑ ha ve continuous–time dynamics (9): ˙ ξ = F ∂ J ∗ y = f ( u, ξ ) . (11) Note that a PHNN is uniquely defined by the triplet ( f , F , J ∗ ). Example 4.6 (Linear Classifier): Consider a fully con- nected network (see Example 3.2) with a single layer , h neurons 4 and l classes, i.e. u ∈ U ⊂ R h , y ∈ Y ⊂ R l . Therefore, y = f ( u, ϑ ) , w 1 , 1 w 1 , 2 · · · w 1 ,h b 1 w 2 , 1 w 2 , 2 · · · w 2 ,h b 2 . . . . . . . . . . . . . . . w l, 1 w l, 2 · · · w l,h b l u 1 . (12) Let ϑ and the loss function J ∗ be defined as in (4) and (10) respectiv ely . Hence, ξ = ( ϑ, ˙ ϑ ) ∈ R 2 l ( h +1) . (13) Then, ∂ J ∗ = ∂ ϑ J ∗ ∂ ˙ ϑ J ∗ = α h ( ˆ u, 1) , ˆ y − f ( ˆ u, ϑ ) i + β ϑ ˙ ϑ , ˙ ξ = F ∂ J ∗ = ˙ ϑ − α h ( ˆ u, 1) , ˆ y − f ( ˆ u, ϑ ) i − β ϑ − B ˙ ϑ . C. T raining of PHNNs Let us assume that a dataset of inputs U s and outputs ( la- bels ) Y s is av ailable. In this section two training techniques will be introduced. 1) Sequential data training: As already pointed out, giv en an initial condition ξ 0 , the system will con verge to a minimum ϑ ∗ of J , i.e. to a minimum ( ϑ ∗ , 0 p ) of J ∗ . Howe ver , the location of the minima strictly depends on the training data. The sequential training approach relies on iterativ ely feeding one tuple ˆ u i , ˆ y i to the PHNN integrating the differential equation for a time t ∗ in each iteration. This process can be carried out from scratch se veral times ( epochs ). Let τ be a timer , i.e. ˙ τ = 1 and ζ a cycle counter , both initialized to 0. After the initialization step, a first tuple ˆ u 1 , ˆ y 1 is fed to the PHNN and integration starts from τ = 0 . When τ = t ∗ a new tuple is fetched, τ is reset, ζ is increased by 1 and the state ξ is carried ov er . The process is repeated until ζ = s and the first epoch is complete, at which point the first tuple will be fetched once again. This technique is reminiscent of the way in which SGD updates are performed in practice. The PHNN with the update and conver ge training can be represented by means of an hybrid dynamical system (see [30]) whose graphical representation is shown in Fig. 2. 2) Batch training: In the batch method the neural network is trained using the entire dataset simultaneously . T o do this, 4 In this case, the number of neurons equals the dimension of the input space. ˙ ξ ˙ τ ˙ ζ ˙ ˆ u ˙ ˆ y = F ∂ J ∗ ( ˆ u, ˆ y , ξ ) 1 0 0 n u 0 n y y = f ( ˆ u, ξ ) τ = t ∗ ∧ ζ 6 = s τ = t ∗ ∧ ζ = s ξ + τ + ζ + ˆ u + ˆ y + = ξ 0 ζ + 1 ˆ u ζ +1 ˆ y ζ +1 ξ + τ + ζ + ˆ u + ˆ y + = ξ 0 0 ˆ u ζ +1 ˆ y ζ +1 Fig. 2. Hybrid automata: Conceptual representation of the hybrid system modeling the sequential training of the neural network. the loss function is redefined as the average of the losses of each sample, i.e. J ∗ batch ( U s , Y s , ξ ) , 1 s s X i =1 J ∗ ( ˆ u i , ˆ y i , ξ ) , s X i =1 J ∗ i . Thanks to the linearity of differentiation, it is also possible to compute the gradient as the av erage of the gradients of the single losses: ∂ J ∗ batch = 1 s s X i =1 ∂ J ∗ i . Then, the training is simply achieved by integrating ˙ ξ = F ∂ J ∗ batch . Remark 4.7: Note that the sequential training will stop at one of the minima of J ∗ ( ˆ u ζ , ˆ y ζ , ξ ) (depending of the time at which the procedure is stopped), which might not necessarily coincide with a minimum of J ∗ batch ( U s , Y s , ξ ) . D. Computational comple xity Theoretical space and time complexity of the proposed method are comparable to standard gradient descent and depend on the specific ODE solving algorithm employed. Let p be the number of parameters of the neural network to optimize. Re gular gradient descent has space complexity linear in p (i.e O ( p ) ), whereas PHNNs require an additional state per parameter , the momentum, thus also yielding linear space complexity . Similarly , time complexity is linear in , the number of gradient descent steps necessary for con ver- gence to a neighbourhood of a minimum. V . N U M E R I C A L E X P E R I M E N T The effecti veness of PHNNs has been ev aluated on the following two classes of numerical experiments. As an initial test, the PHNN has been tasked with learning a linear boundary between two classes of points by using a sequential training approach. The second experiment, on the other hand, deals with non-linear vector field approximation via the use of the batch training method. All the experiments have been implemented in Python 5 5 The code is available at: https://github.com/Zymrael/ PortHamiltonianNN . − 0 . 4 − 0 . 2 0 0 . 2 0 . 4 0 . 6 0 . 8 1 1 . 2 0 0 . 5 1 u (1) u (2) C 1 C 2 Fig. 3. Dataset used to train the neural netw ork in the numerical experiment A. T ask 1: Learning a linear boundary Consider the PHNN of Example 4.6 in the case h = 2 , l = 2 , i.e., u , [ u (1) , u (2) ] > ∈ R 2 , y , [ y (1) , y (2) ] > ∈ R 2 . The aim of the numerical experiment is to learn a linear boundary separating two classes of points sampled from two biv ariate Gaussian distributions N 1 , N 2 . W e will refer to the two classes as C 1 and C 2 . The neural network model is y (1) y (2) = w 1 , 1 u (1) + w 1 , 2 u (2) + b 1 w 2 , 1 u (1) + w 2 , 2 u (2) + b 2 and the corresponding parameter vector is ϑ , [ w 1 , 1 , w 1 , 2 , w 2 , 1 , w 2 , 2 , b 1 , b 2 ] > ∈ R 6 ⇒ ξ ∈ R 12 . The dataset has been built sampling a total of 1000 points from each distribution and has been collected in U s in a shuf- fled order . The result is shown in Fig. 3. The corresponding reference outputs ha ve been computed and stored in Y s . In particular , ˆ y = Ψ( ˆ u ) , [1 , 0] > ˆ u ∈ C 1 [0 , 1] > ˆ u ∈ C 2 ∀ ˆ u ∈ U s . (14) Then, the training procedure has been performed on a single sample ( ˆ u, ˆ y ) = ([0 . 6 , 0 . 6] > , [1 , 0] > ) . The weights and their velocities have been initialized as ξ 0 = [0 . 6 , − 2 . 3 , − 0 . 1 , − 1 . 1 , − 1 . 2 , 0 . 3 , − 1 . 2 , 0 . 3 , 0 . 2 , 1 . 6 , − 0 . 4 , 1 . 6] > . The system parameters hav e been chosen as, B = I 6 , α = 1 and β = 0 . The resulting ODE has been numerically integrated for a time t f = 5 s . The resulting weight trajectories are reported in Fig. 4. Black is used to highlight parameters that are used to compute the first output element y (1) whereas blue is similarly used for parameters of y (2) . Furthermore, the time ev olution of the output of the neural netw ork and the one of the loss function are shown in Fig. 5. In order to show the effect of the re gularization term β ϑ > ϑ we performed the same experiment multiple times v arying β in the interv al [0 , 3] . At each iteration, the relati ve output tracking squared error e r , k ˆ y − y ( t f ) k 2 2 k ˆ y k 2 2 and the norm k ϑ k 2 of the parameters vector , have been computed. This shows that the effect of β is comparable to the ef fect of weight decay in neural networks optimized 0 1 2 3 4 5 − 2 − 1 0 1 ϑ ( t ) 0 1 2 3 4 5 − 1 0 1 t [ s ] ˙ ϑ ( t ) Fig. 4. T ime ev olution of the parameters ϑ and their velocities ω . Black indicates parameters of y (1) while blue parameters of y (2) . 0 1 2 3 4 5 − 1 0 1 y ( t ) 0 1 2 3 4 5 0 5 10 t [ s ] J ∗ ( t ) Fig. 5. [Abo ve] Time evolution of the estimated output y . y (1) , y (2) are indicated with black and blue lines respectively . [Below] Decay in time of the loss function J ∗ . via discrete methods, namely a reduction of the parameter norm. The results are sho wn in Fig. 6. Subsequently , the dataset U s , Y s has been split in a training set and a test set with a ratio of 3 : 1 . Then, the optimization of the network’ s parameters has been performed with the sequential method by using exclusi vely training set data while classification accuracy of the trained network has been ev aluated on the test set. The chosen v alues of the parameters are the following: B = 100 I 6 , α = 1 , β = 0 . 001 , t ∗ = 0 . 1 and ξ 0 has been initialized as before. The training procedure has been carried out for 100 epochs. The time ev olution of the parameters and the loss function (one value per epoch) is sho wn in Fig. 7. As the loss is non-increasing with the number of epochs, the parameters conv erge to constant values. Furthermore, a decision boundary has been plotted in Fig. 8 which sho ws how the the linear boundary learned by the network during training correctly separates 0 0 . 5 1 1 . 5 2 2 . 5 3 0 0 . 2 0 . 4 0 0 . 5 1 1 . 5 2 2 . 5 3 1 2 β e r k θ k 2 Fig. 6. Effect of the regularization term on the output reconstruction error and on the parameters vector norm. 0 20 40 60 80 100 − 2 − 1 0 1 ϑ ( t ) 0 20 40 60 80 100 − 1 0 1 ˙ ϑ ( t ) 0 20 40 60 80 100 10 − 1 10 0 10 1 t [ s ] J ∗ ( t ) [log ] Fig. 7. Time ev olution of the parameters during the sequential training on the linear boundary problem. Black indicates parameters of y (1) while blue parameters of y (2) . the two classes, correctly classify all the points of the test set. B. T ask 2: Learning a vector field T o further test the performance of the proposed training approach in a more complex scenario, the problem of ap- proximating a vector field has been addressed. Consider a nonlinear ODE du dx = Φ( u ) u ∈ R n , Φ : R n → R n , x ∈ R . (15) The learning task consisted in training a fully–connected neural network to approximate the v ector field Φ by using only some samples of the state u . Thus, input data hav e been generated collecting state observ ations along a trajectory in s + 1 points x i ˆ u i , u ( x i ) . The corresponding labels have been computed approximating the state deri vati ve via forward difference, i.e. ˆ y i = u ( x i +1 ) − u ( x i ) x i +1 − x i ≈ Φ( u ( x i )) ∀ i = 1 , . . . , s . Hence, the input and output datasets U s , Y s hav e been built and, then, the neural network has been trained with the PHNN method. The objecti ve was to obtain a network able to infer the knowledge of the v ector field, learned on a single − 0 . 2 0 0 . 2 0 . 4 0 . 6 0 . 8 1 0 0 . 5 u (1) u (2) Fig. 8. Decision boundary plot and test set. trajectory , to a wider region of the state space. Thus, the metric chosen to ev aluate the training performance has been the absolute approximation error in a domain D : E ( u ) , k Φ( u ) − f ( u, ϑ ∗ ) k 2 u ∈ D , where ϑ ∗ is the optimized vector of parameters. Notice that the accuracy of the results is increased by the choice of nonlinear activ ation function σ . The chosen ODE model has been a Duffing oscillator [31] du dx = " u (2) − u (1) − u (2) − 0 . 5 u (1) 3 # . Giv en the initial condition u 0 = [1 . 5 , 1] > , a trajectory u ( t ) was numerically integrated in x ∈ [0 , 8] via the odeint solver of Scipy library and 400 evenly distrib uted measure- ments have been collected (i.e., δ x = x i +1 − x i = 0 . 2 ∀ i = 1 , . . . , s ). The vector field and the computed trajectory are shown in Fig. 10. A three layers neural network has been selected with the two hidden layers having a width h 1 = h 2 = 16 . The total number of netw ork parameters is p = 354 . The design of a network with two hidden layers instead of a single, larger hidden layer or additional, narrower layers is motiv ated by [32] and [33]. While traditionally depth has been regarded as the more important attrib ute, recent de velopments ha ve shown that a correct balance of depth and width can be beneficial for neural network performance. The activ ation function has been selected as σ ( · ) , 1 γ ln(1 + e − γ ( · )) ( γ = 10 ). This function, referred as softplus , is the smooth counterpart of the more popular ReLu activ ation. ReLu of fers fast conv ergence to a minimum due to its linear region but is not differentiable in 0 . While in practice this drawback rarely causes problem due to numerical approximation, the choice of softplus was made to not violate the smoothness assumption of J ∗ . The PH model of the parameters and the objective function hav e been defined as in Example 4.2 with α = 1 , β = 0 and B = 0 . 5 · I p . ϑ and ˙ ϑ has been initialized sampling a Gaussian distribution with unitary variance and a uniform distrib ution ov er [0 , 1) respectiv ely . The training has been performed with batch method by numerically integrating the PH model for 100s. The training outcomes of first 30 s the are shown in Fig. 9. It can be noticed that after 30 s most of the parameters ha ve con verged, thus reaching a minimum of J ∗ batch . Around the 20 s point some of the velocities show a ripple, follo wed by a variation of the corresponding parameters. The loss decay is simultaneously accelerated during this ev ent due to the dissipation term B ˙ ϑ . This behavior is most likely due to the state passing through a saddle point of the J ∗ batch . The error E ( u ) has then been computed for u ∈ D , [ − 1 , 1 . 5] × [ − 1 . 9 , 1] . Figure 11 shows that the reconstruction error is highest in the state-space regions from which the neural network recei ved no training information. The neural network has been able to infer the shape of the vector field elsewhere, especially in regions with a higher training data density . Fig. 9. Results of the batch training of the neural network for the vector field reconstruction. [Abov e] T rajectory of the 354 parameters ϑ ( t ) and their velocities ˙ ϑ . [Below] Decay of the loss function over time. − 1 − 0 . 5 0 0 . 5 1 1 . 5 − 1 0 1 u (1) u (2) Fig. 10. Qui ver plot of the vector field of the Duffing ODE described in. The blue points are sampled from a single continuous trajectory and used for the batch training procedure. − 1 − 0 . 5 0 0 . 5 1 1 . 5 − 1 0 1 u (1) u (2) 0 . 1 0 . 2 0 . 3 0 . 4 0 . 5 0 . 6 E ( u ) Fig. 11. Learned vector field (blue arrows) versus true vector field (orange arrows) and absolute reconstruction error . V I . C O N C L U S I O N S In this work we provide a new perspectiv e on the process of neural network optimization. Inspired by their modular nature, we design objectiv e function and parameter training dynamics in such a w ay that the neural network itself beha ves as an autonomous Port-Hamiltonian system. A result is the implicit guarantee on con ver gence to a minimum of the loss function due to PH passi vity . In the context of training neural networks, escaping from saddle points has been a challenge due to the non–conv exity and high–dimensionality of the optimization problem. The proposed frame work is promising since it it circumvents the problem of getting stuck at saddle points by guaranteeing con vergence to a minimum of the loss function. In juxtaposition with the discrete nature of many other popular neural network optimization schemes currently used in state-of-the-art deep learning models, our framew ork fea- tures a continuous e volution of the parameters. Future work will be carried out in order to exploit this property to shed more light on some of the underlying characteristics of neural networks, especially those with a high number of layers, the behavior of which is proving to be quite challenging to model. Additionally , this framew ork enables a treatment of neural networks based on physical systems and PH control which can increase the performance of the learning procedure and the probability of finding the global minimum of the objectiv e function. Here, we performed experiments on clas- sification and vector field approximation and determined that the proposed method scales up to neural networks of non- trivial size. R E F E R E N C E S [1] Kurt Hornik, Maxwell Stinchcombe, and Halbert White. Multilayer feedforward networks are uni versal approximators. Neural networks , 2(5):359–366, 1989. [2] David E Rumelhart, Geoffrey E Hinton, and Ronald J W illiams. Learning internal representations by error propagation. T echnical report, California Univ San Diego La Jolla Inst for Cognitive Science, 1985. [3] Kaiming He, Georgia Gkioxari, Piotr Doll ´ ar , and Ross Girshick. Mask r-cnn. In Proceedings of the IEEE international confer ence on computer vision , pages 2961–2969, 2017. [4] Andrew Brock, Jeff Donahue, and Karen Simonyan. Large scale gan training for high fidelity natural image synthesis. arXiv pr eprint arXiv:1809.11096 , 2018. [5] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proceedings of the IEEE confer ence on computer vision and pattern r ecognition , pages 770– 778, 2016. [6] Jacob Devlin, Ming-W ei Chang, Kenton Lee, and Kristina T outanova. Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint , 2018. [7] Diederik P . Kingma and Jimmy Ba. Adam: A method for stochastic optimization. In 3rd International Confer ence on Learning Repr esen- tations, ICLR 2015 , 2015. [8] Tijmen Tieleman and Geoffrey Hinton. Lecture 6.5-rmsprop: Divide the gradient by a running average of its recent magnitude. COURS- ERA: Neural networks for machine learning , 4(2):26–31, 2012. [9] Liyuan Liu, Haoming Jiang, Pengcheng He, W eizhu Chen, Xiaodong Liu, Jianfeng Gao, and Jiawei Han. On the v ariance of the adaptiv e learning rate and beyond. arXiv preprint , 2019. [10] Hao Li, Zheng Xu, Gavin T aylor, Christoph Studer, and T om Gold- stein. Visualizing the loss landscape of neural nets. In Advances in Neural Information Processing Systems , pages 6391–6401, 2018. [11] A vrim Blum and Ronald L Rivest. Training a 3-node neural network is np-complete. In Advances in neural information processing systems , pages 494–501, 1989. [12] Bernhard M Maschke and Arjan J v an der Schaft. Port-controlled hamiltonian systems: modelling origins and systemtheoretic properties. IF A C Proceedings V olumes , 25(13):359–365, 1992. [13] V incent Duindam, Alessandro Macchelli, Stefano Stramigioli, and Herman Bruyninckx. Modeling and contr ol of complex physical systems: the port-Hamiltonian approac h . Springer Science & Business Media, 2009. [14] Arjan van der Schaft, Dimitri Jeltsema, et al. Port-hamiltonian systems theory: An introductory overvie w . F oundations and Tr ends® in Systems and Contr ol , 1(2-3):173–378, 2014. [15] Romeo Ortega, Arjan J V an Der Schaft, Iven Mareels, and Bernhard Maschke. Putting energy back in control. IEEE Contr ol Systems Magazine , 21(2):18–33, 2001. [16] Romeo Ortega, Arjan V an Der Schaft, Bernhard Maschke, and Ger- ardo Escobar . Interconnection and damping assignment passi vity- based control of port-controlled hamiltonian systems. Automatica , 38(4):585–596, 2002. [17] Romeo Ortega, Arjan V an Der Schaft, Fernando Castanos, and Alessandro Astolfi. Control by interconnection and standard passivity- based control of port-hamiltonian systems. IEEE T ransactions on Automatic contr ol , 53(11):2527–2542, 2008. [18] Tian Qi Chen, Y ulia Rubanova, Jesse Bettencourt, and David K Duvenaud. Neural ordinary differential equations. In Advances in Neural Information Processing Systems , pages 6572–6583, 2018. [19] Lars Ruthotto and Eldad Haber . Deep neural networks motiv ated by partial differential equations. arXiv preprint , 2018. [20] Sam Greydanus, Misko Dzamba, and Jason Y osinski. Hamiltonian neural networks. arXiv preprint , 2019. [21] Pratik Chaudhari, Adam Oberman, Stanle y Osher, Stefano Soatto, and Guillaume Carlier . Deep relaxation: partial differential equations for optimizing deep neural networks. Research in the Mathematical Sciences , 5(3):30, 2018. [22] James W Howse, Chaouki T Abdallah, and Gregory L Heileman. Gradient and hamiltonian dynamics applied to learning in neural networks. In Advances in Neural Information Pr ocessing Systems , pages 274–280, 1996. [23] Wiesla w Sienko, W ieslaw Citko, and Dariusz Jak ´ obczak. Learning and system modeling via hamiltonian neural networks. In International Confer ence on Artificial Intelligence and Soft Computing , pages 266– 271. Springer, 2004. [24] David H Ackley , Geoffre y E Hinton, and T errence J Sejnowski. A learning algorithm for boltzmann machines. Cognitive science , 9(1):147–169, 1985. [25] John J Hopfield. Neural netw orks and physical systems with emer- gent collecti ve computational abilities. Proceedings of the national academy of sciences , 79(8):2554–2558, 1982. [26] Parry Hiram Moon and Domina Eberle Spencer . Theory of holors: A generalization of tensors . Cambridge University Press, 2005. [27] Gene H Golub, Per Christian Hansen, and Dianne P O’Leary . T ikhonov regularization and total least squares. SIAM J ournal on Matrix Analysis and Applications , 21(1):185–194, 1999. [28] Anders Krogh and John A Hertz. A simple weight decay can improve generalization. In Advances in neural information processing systems , pages 950–957, 1992. [29] Stefano Massaroli, Federico Califano, Angela F aragasso, Atsushi Y amashita, and Hajime Asama. Multistable energy shaping of linear time–in variant systems with hybrid mode selector . In Submitted to 11th IF A C Symposium on Nonlinear Contr ol Systems (NOLCOS 2019) , 2019. [30] Arjan J V an Der Schaft and Johannes Maria Schumacher . An intr oduction to hybrid dynamical systems , volume 251. Springer London, 2000. [31] Ivana Ko vacic and Michael J Brennan. The Duffing equation: nonlin- ear oscillators and their behaviour . John Wile y & Sons, 2011. [32] Zhou Lu, Hongming Pu, Feicheng W ang, Zhiqiang Hu, and Liwei W ang. The expressiv e power of neural networks: A vie w from the width. In Advances in Neural Information Pr ocessing Systems , pages 6231–6239, 2017. [33] Ronen Eldan and Ohad Shamir . The power of depth for feedforward neural networks. In Confer ence on learning theory , pages 907–940, 2016.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment