Evaluating Conformance Measures in Process Mining using Conformance Propositions (Extended version)

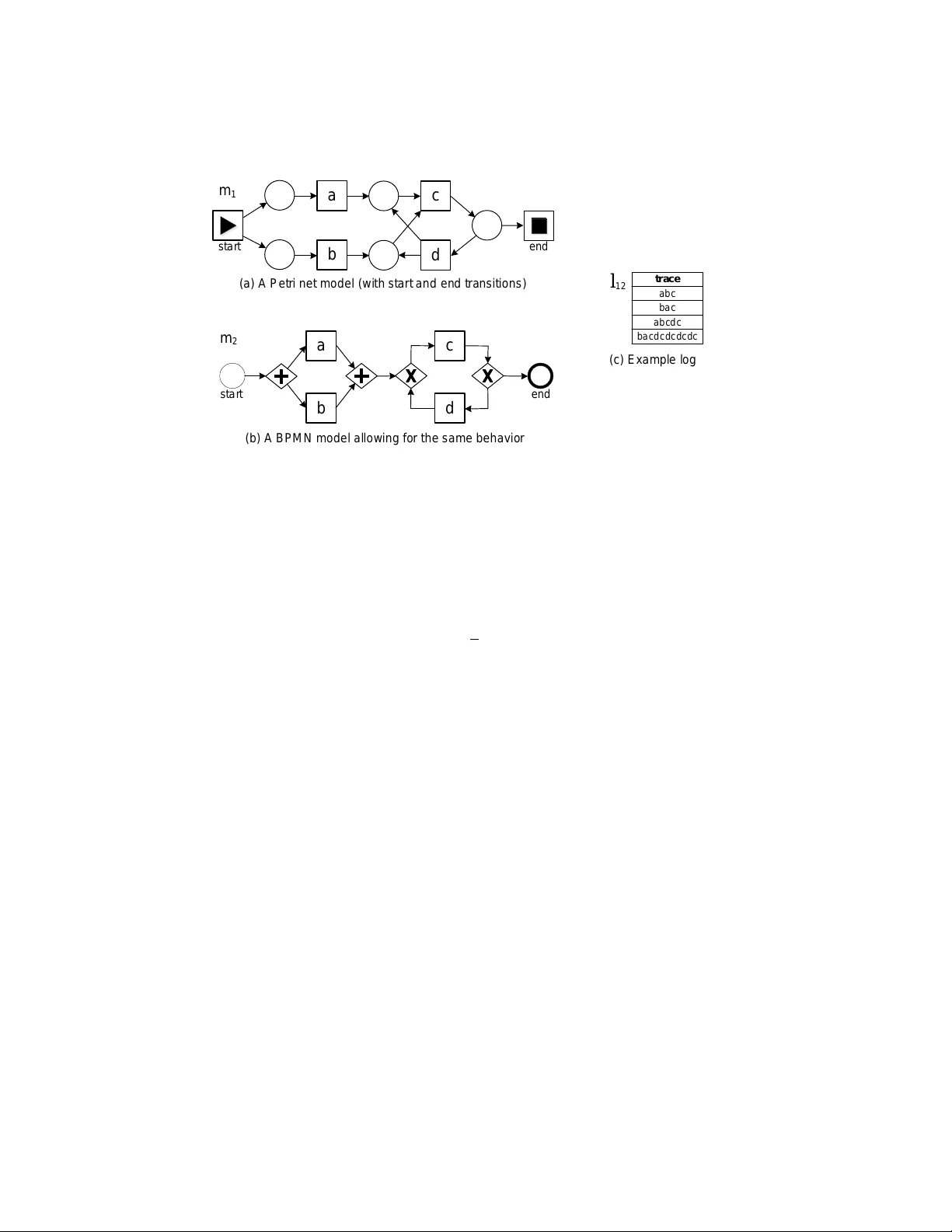

Process mining sheds new light on the relationship between process models and real-life processes. Process discovery can be used to learn process models from event logs. Conformance checking is concerned with quantifying the quality of a business pro…

Authors: Anja F. Syring, Niek Tax, Wil M.P. van der Aalst