On the Contributions of Visual and Textual Supervision in Low-Resource Semantic Speech Retrieval

Recent work has shown that speech paired with images can be used to learn semantically meaningful speech representations even without any textual supervision. In real-world low-resource settings, however, we often have access to some transcribed spee…

Authors: Ankita Pasad, Bowen Shi, Herman Kamper, Karen Livescu

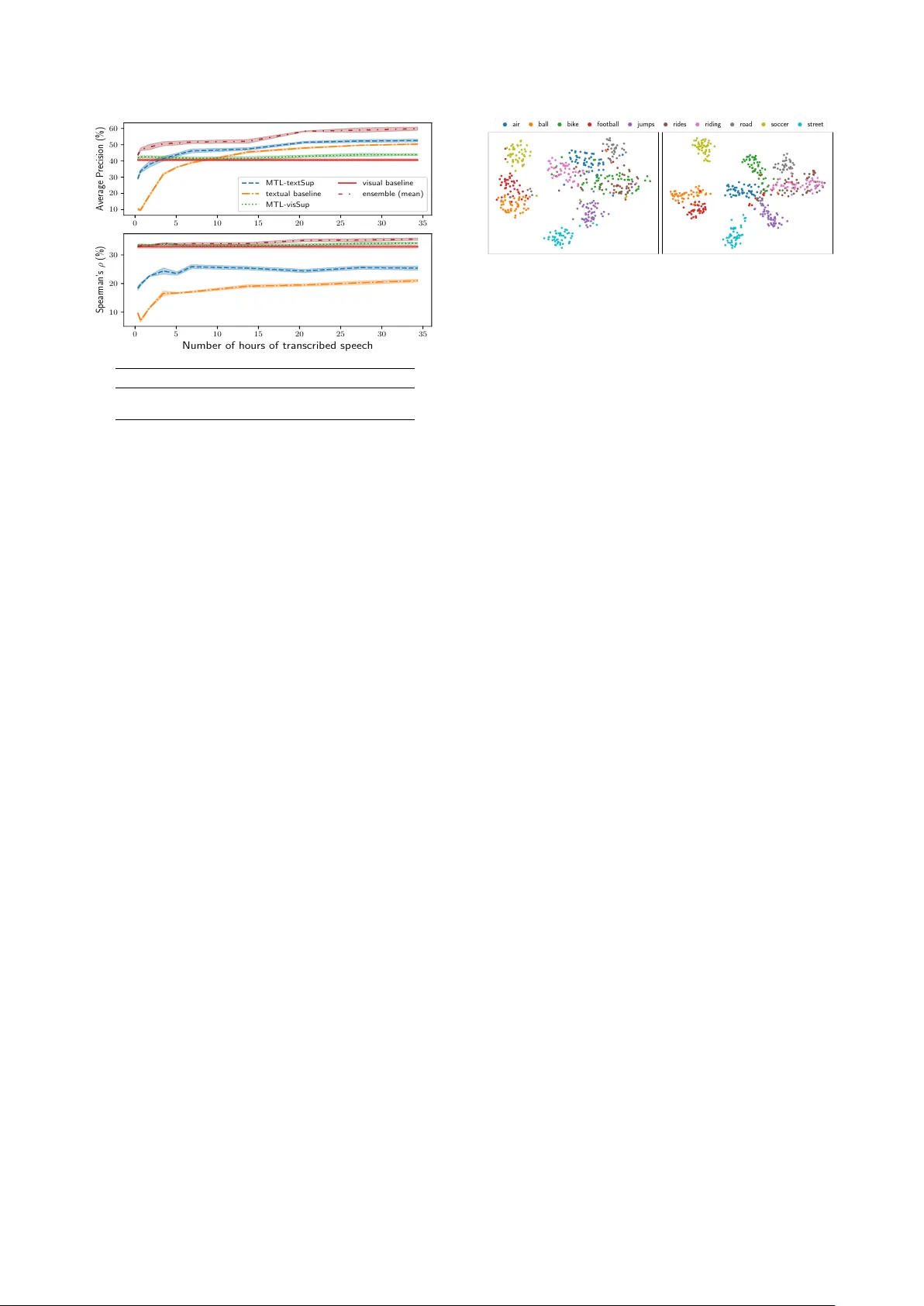

Ankita Pasad¹, Bowen Shi¹, Herman Kamper², Karen Livescu¹
¹ Toyota Technological Institute at Chicago, USA
² Dept. E&E Engineering, Stellenbosch University, South Africa
{ankitap,bshi,klivescu}@ttic.edu, kamperh@sun.ac.za

Abstract

Recent work has shown that speech paired with images can be used to learn semantically meaningful speech representations even without any textual supervision. In real-world low-resource settings, however, we often have access to some transcribed speech. We study whether and how visual grounding is useful in the presence of varying amounts of textual supervision. In particular, we consider the task of semantic speech retrieval in a low-resource setting. We use a previously studied data set and task, where models are trained on images with spoken captions and evaluated on human judgments of semantic relevance. We propose a multitask learning approach to leverage both visual and textual modalities, with visual supervision in the form of keyword probabilities from an external tagger. We find that visual grounding is helpful even in the presence of textual supervision, and we analyze this effect over a range of sizes of transcribed data sets. With ∼5 hours of transcribed speech, we obtain 23% higher average precision when also using visual supervision.

Index Terms: speech search, multi-modal modelling, visual grounding, semantic retrieval, multitask learning

1. Introduction

In many languages and domains, there can be insufficient data to train modern large-scale models for common speech processing tasks. Recent work has begun exploring alternative sources of weak supervision, such as visual grounding from images [1–7].
Such work has developed approaches for training models for tasks such as cross-modal retrieval [2–4]; unsupervised learning of word-like and phone-like units [8–11]; and retrieval tasks like keyword search, query-by-example search, and semantic search [7, 12, 13]. Much of this recent work is in the context of zero textual supervision, that is, using no transcribed speech, and the results show that visual grounding alone provides a strong supervisory signal. However, in many low-resource settings we also have access to a small amount of textual supervision. This is the setting that we address here.

In particular, we consider the task of semantic speech retrieval using models trained on a data set of images paired with spoken and written captions. In semantic speech retrieval, the input is a textual query, a written word in our setting, and the task is to find utterances in a speech corpus that are semantically relevant to the query [14, 15]. The query word need not exactly occur in the retrieved utterances; for example, the query "beach" should retrieve the exactly matched utterance "a dog retrieves a branch from a beach" as well as the semantically matched utterance "people at an oceanside resort."

One common approach is to cascade an automatic speech recognition (ASR) system with a text-based information retrieval method [16]. High-quality ASR, however, requires significant amounts of transcribed speech audio and text for language modelling. Kamper et al. [7, 12] proposed learning a semantic speech retrieval model solely from images of natural scenes paired with unlabelled spoken captions. This approach uses an external visual tagger to automatically obtain text labels from the training images, and these are used as targets for training a speech-to-keyword network. No textual supervision is thus used. For analysis, the approach was compared to a supervised model trained only with text supervision.
The visually supervised model was shown to have an edge over the textually trained model in retrieving non-exact keyword matches. In retrieving the exact matches, the text-based model proved superior.

Based on these observations, we hypothesize that visual- and text-based supervision can be complementary for semantic speech retrieval in low-resource settings where both are available. Visual supervision could, e.g., provide a signal to distinguish acoustically similar but semantically unrelated words. Here we consider a regime where the amount of transcribed speech audio is not enough to train a full ASR system, but is enough to provide a useful additional supervision signal.

Using a corpus of images with spoken captions, we propose a multitask learning (MTL) approach where visual supervision is obtained from an external image tagger and text supervision is obtained from (a limited amount of) transcribed spoken captions. Each type of target is given as a bag of words. Additionally, we include a representation learning loss to encourage similarity between the visual and spoken representations. We consider different amounts of transcribed speech audio to investigate the effect of MTL in different low-resource settings and to understand the trade-off between visual and textual supervision.

Experiments using human semantic relevance judgments show that our new MTL approach incorporating both modalities performs better than using either one in isolation. We achieve consistent improvements over the entire range of transcribed data set sizes considered. This demonstrates that the benefit of visual grounding remains even when textual supervision is available.

2. Approach

As a starting point, we use the model of [7], which addresses the semantic retrieval task using a training set of images and their spoken captions with no textual supervision at all.
In this approach, a vision model (an image tagger trained on an external data set of tagged images) is used to produce a bag of semantic keywords for each image, along with their posterior probabilities. These posteriors serve as supervision for a speech model that takes as input a spoken utterance and outputs a bag of keywords describing the utterance. At test time, these predicted keywords are used for semantic speech retrieval, by retrieving those utterances for which the model predicts a probability higher than a given detection threshold for an input query word.

We make two key changes to the model of [7], as illustrated in Figure 1. First, we consider the case where we have both the visual supervision (the vector of keyword probabilities) and textual supervision, obtained from transcriptions of the training utterances. Specifically, for the textual supervision, we convert each transcription into a bag of ground-truth content words occurring in the utterance. The textual and visual supervision are used together in a multitask learning (MTL) approach, where our model consists of two branches, one that produces the "visual keywords" and one that produces "textual keywords".

[Figure 1: The multitask speech model with visual supervision (left) and textual supervision (right). Either of the textually supervised and visually supervised branches (or both, ensembled) can be used during inference. Diagram labels: speech input (MFCCs); shared CNN layers; FC layers for the MTL-visSup and MTL-textSup branches; image passed through ResNet-152 (fixed) and FC layers of the external image tagger; text (e.g. "A group of young boys playing soccer") converted to a bag of words; losses l_vis, l_bow, and l_rep; representations s and v.]

The second change we make is to add a representation loss term. The purpose of this is to encourage intermediate representations learned by the speech model to match those in the external visual tagger.
This loss is similar to ones used in recent work on unsupervised joint learning of visual and speech representations, e.g. for cross-modal retrieval [2]. In our case the representation loss can be viewed as a regularizer or as a third task in the MTL framework. This loss helps us take advantage of the fact that the visual tagger is trained on much more data than the speech model, so we expect its internal representations themselves to be useful during training of the speech network.

As a final, minor change, we use a stronger pre-trained visual network (ResNet-152) in the visual tagger relative to [7] (more details about the vision model are given in Section 3).

We note that other concurrent work also investigates MTL for speech with visual and textual supervision (applied to different tasks) [4]. A key distinction is that we focus on the specific question of how useful visual grounding is in the presence of varying amounts of textual supervision.

2.1. Model Details

Each training utterance $U = u_1, u_2, \ldots, u_T$ is paired with an image $I$. Each frame $u_t$ is an acoustic feature vector, MFCCs in our case.¹ The vision model provides weak labels, $y_{\text{vis}} \in [0, 1]^{N_{\text{vis}}}$, where $N_{\text{vis}}$ is the number of keywords in the visual tag set. This serves as the ground truth for the visually supervised branch output, $f_{\text{vis}}(U) = \hat{y}_{\text{vis}}$, of the speech model.

¹ Earlier work on this data set compared MFCCs to filterbank features, and found that MFCCs worked similarly or better [12].

Each training utterance $U$ is also optionally paired with a multi-hot bag-of-words vector $y_{\text{bow}} \in \{0, 1\}^{N_{\text{bow}}}$, obtained from the transcriptions of the spoken captions, where $N_{\text{bow}}$ is the number of unique keywords in the text labels and each dimension indicates the presence or absence of a particular word. This vector serves as a ground truth for the output of the textually supervised branch, $f_{\text{bow}}(U) = \hat{y}_{\text{bow}}$, of the speech model.
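As an illustration of the textual target described above, the following minimal sketch converts a transcription into a multi-hot bag-of-words vector. The helper names (`build_vocab`, `multi_hot`), the whitespace tokenization, and the tiny stop-word list are assumptions made for this example; the paper does not specify how content words are selected or tokenized.

```python
from collections import Counter

def build_vocab(captions, n_bow, stop_words):
    """Map the n_bow most frequent content words to indices (hypothetical helper)."""
    counts = Counter(
        w for c in captions for w in c.lower().split() if w not in stop_words
    )
    # Sort by descending count, then alphabetically so ties are deterministic.
    words = sorted(counts, key=lambda w: (-counts[w], w))[:n_bow]
    return {w: i for i, w in enumerate(words)}

def multi_hot(transcript, vocab):
    """Return y_bow: 1 where a vocabulary word occurs in the transcript, else 0."""
    y = [0] * len(vocab)
    for w in transcript.lower().split():
        if w in vocab:
            y[vocab[w]] = 1
    return y

# Assumed stop-word list, for illustration only.
STOP_WORDS = {"a", "of", "the", "an"}
captions = ["a group of young boys playing soccer", "boys playing football"]
vocab = build_vocab(captions, n_bow=3, stop_words=STOP_WORDS)
y_bow = multi_hot("A group of young boys playing soccer", vocab)
```

With this toy input the vocabulary keeps "boys", "playing", and "football", so the example caption maps to a vector with the first two entries set.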
These task-specific supervised losses are optimized jointly with an unsupervised multi-view representation loss. In particular, we use a margin-based contrastive loss [17] between the speech representation $s$ and visual representation $v$ at an intermediate layer within each model. The total loss is a weighted sum of these three losses:

$$\ell = \alpha_{\text{vis}}\,\ell_{\text{vis}} + \alpha_{\text{bow}}\,\ell_{\text{bow}} + (1 - \alpha_{\text{bow}} - \alpha_{\text{vis}})\,\ell_{\text{rep}},$$

where each of the loss terms is a function of a training utterance $U$ and either (1) a corresponding image representation $v$, (2) a visual target $y_{\text{vis}}$, or (3) a textual target $y_{\text{bow}}$ (if available).

Both of the supervised task losses, $\ell_{\text{vis}}$ and $\ell_{\text{bow}}$, are summed cross-entropy losses between the predicted and ground-truth vectors, as follows (where $\text{sup} \in \{\text{vis}, \text{bow}\}$):

$$\ell_{\text{sup}} = -\sum_{w=1}^{N_{\text{sup}}} \left\{ y_{\text{sup},w} \log \hat{y}_{\text{sup},w} + (1 - y_{\text{sup},w}) \log\left[1 - \hat{y}_{\text{sup},w}\right] \right\}$$

where $\hat{y}_{\text{sup}}$ is a function of the speech input and $y_{\text{sup}}$ is a function of the image/transcription.

The representation loss is a contrastive loss, similar to ones used in prior work on multi-view representation learning [2, 17, 18], computed by sampling a fixed number of negative examples within a mini-batch (size $B$) corresponding to both images and utterances:

$$\ell_{\text{rep}} = \frac{1}{|V|} \sum_{v' \in V} \max\left[0,\; m + d_{\cos}(v, s) - d_{\cos}(v', s)\right] + \frac{1}{|S|} \sum_{s' \in S} \max\left[0,\; m + d_{\cos}(v, s) - d_{\cos}(v, s')\right]$$

where $\{v, s\}$ are the representations of the correct vision-speech pair; $\{v', s\}$ and $\{v, s'\}$ are negative (non-matching) pairs; $d_{\cos}(v, s)$ is the cosine distance between the representations; $n_{\text{neg}} = |V| = |S|$ is the number of negative pairs; and $m$ is a margin indicating the minimum desired difference between the positive-pair distances and negative-pair distances.

3. Experimental Setup

3.1. Data

We use three training sets and one evaluation data set, identical to those used in prior work [7], allowing for direct comparison.
(A) Image-text pairs: The union of MSCOCO [19] and Flickr30k [20], with ∼149k images (∼107k training, ∼42k dev) paired with 5 written captions each. This is an external data set used to train the image tagger before our MTL approach is applied. We are taking advantage of the fact that, in contrast to speech, labelled resources for images are more plentiful (in fact, we are implicitly also using the even larger training set of the pre-trained ResNet). The availability of such large visual data sets allows us to train a strong external image tagger.

(B) Image-speech pairs: The Flickr8k Audio Captions Corpus [21], consisting of ∼8k images paired with 5 spoken captions each, amounting to a total of ∼46 hours of speech data (∼34 hours training, ∼6 hours dev, and ∼6 hours test speech data). The images in this set are disjoint from those in set A.

(C) Speech-text pairs: The spoken captions in the Flickr8k Audio Captions Corpus have written transcripts as well. We use subsets of these transcripts with varying sizes: from just ∼21 minutes to the complete ∼34 hours of labelled speech.

(D) Human semantic relevance judgments: For semantic speech retrieval evaluation, we use the human relevance judgments from [7]. 1000 utterances from the Flickr8k Audio Captions Corpus were manually annotated via Amazon Mechanical Turk with their semantic relevance for each of 67 query words. Each (utterance, keyword) pair was labeled by 5 annotators. We use both the majority vote of the annotators (as "hard labels") and the actual number of votes ("soft labels") for evaluation.

3.2. Implementation Details

The vision model (Figure 1, left) is an ImageNet pre-trained ResNet-152 [22] (which is kept fixed), with a set of four 2048-unit fully connected layers added at the top, followed by a final softmax layer that produces posteriors for the $N_{\text{vis}}$ tags. This image tagger is used to provide the visual supervision $y_{\text{vis}}$.
The fully connected layers in the vision model are trained once on set A and kept fixed throughout the experiments.

The speech model (Figure 1, middle) consists of two branches, one with textual supervision and the other with visual supervision as targets. Except for the output layer, the architecture for these two networks is identical and exactly the same as the model in [7]. Each network consists of three convolutional layers, with the output max-pooled over time to get a 1024-dimensional embedding, followed by feedforward layers, with a final sigmoid layer producing either $N_{\text{vis}}$ or $N_{\text{bow}}$ scores in $[0, 1]$. The parameters for convolutional layers in these two branches are shared while the upper layer parameters are task-specific. Hereafter, the visually supervised branch and the textually supervised branch of this model are referred to as MTL-visSup and MTL-textSup respectively. The input speech is represented as MFCCs, zero-padded (or truncated²) to 8 seconds (800 frames).

² 99.5% of the utterances are 8 s or shorter.

For representation learning we need the dimensions for the learned speech and vision feature vectors to match. A two-layer ReLU feedforward network is used to transform the intermediate 2048-dimensional vision feature vectors to 1024 dimensions. The output of the shared branch of the speech model is used as the speech representation. Parameters of the additional layers are learned jointly with the speech model.

For MTL, each mini-batch is sampled from either set B or set C with probability proportional to the number of data points in each set, as in [23]. The size of set C varies, as described in Section 3.1. $N_{\text{vis}}$ is kept fixed at 1000 and $N_{\text{bow}}$ is one of 1k, 2k, 5k and 10k (each time keeping the most common content words in set A) and optimized along with other hyperparameters. Hyperparameters include $\alpha_{\text{vis}}$, $\alpha_{\text{bow}}$, $m$, $n_{\text{neg}}$, $N_{\text{bow}}$, batch size, and learning rate. Different settings are found to be optimal as the set C is varied.
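To make the roles of the hyperparameters $\alpha_{\text{vis}}$, $\alpha_{\text{bow}}$, and $m$ concrete, the training objective of Section 2.1 can be sketched for a single example in plain Python. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, the clipping constant `eps` is an assumption added for numerical safety, and negative sampling within the mini-batch is left to the caller.

```python
import math

def summed_bce(y_true, y_pred, eps=1e-7):
    """Summed binary cross entropy over keywords (l_vis or l_bow)."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # assumed clipping to avoid log(0)
        total -= y * math.log(p) + (1.0 - y) * math.log(1.0 - p)
    return total

def cos_dist(a, b):
    """Cosine distance, taken here as 1 - cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return 1.0 - dot / (norm_a * norm_b)

def rep_loss(v, s, neg_v, neg_s, m):
    """Margin-based contrastive loss l_rep for one matching (v, s) pair.

    neg_v: non-matching vision representations (the set V)
    neg_s: non-matching speech representations (the set S)
    """
    d_pos = cos_dist(v, s)
    loss_v = sum(max(0.0, m + d_pos - cos_dist(v2, s)) for v2 in neg_v) / len(neg_v)
    loss_s = sum(max(0.0, m + d_pos - cos_dist(v, s2)) for s2 in neg_s) / len(neg_s)
    return loss_v + loss_s

def total_loss(l_vis, l_bow, l_rep, alpha_vis, alpha_bow):
    """Weighted sum of the two supervised losses and the representation loss."""
    return alpha_vis * l_vis + alpha_bow * l_bow + (1.0 - alpha_bow - alpha_vis) * l_rep
```

For utterances without a transcription, one plausible treatment (an assumption, since the paper only says the textual target is used "if available") is to drop the `l_bow` term for that example.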
Adam optimization [24] is used for both branches with early stopping. For early stopping, we use F-score on the Flickr8k Audio Captions validation set with a detection threshold of 0.3. Since no development set is available for semantic retrieval, the F-score is evaluated for the task of exact retrieval (i.e., keyword spotting) using the 67 keywords from set D.

3.3. Baselines

We compare our proposed models to two baselines. The visual baseline has access to visual supervision alone. This baseline replicates the vision-speech model from [7], but is improved here by using a pre-trained ResNet-152 (rather than VGG-16) for fair comparison with our models. The visual baseline is equivalent to MTL-visSup, when no textual supervision is used. The textual baseline has access to just the textual supervision, and is equivalent to MTL-textSup when using no visual supervision. Different baseline scores are obtained as the set C changes.

3.4. Evaluation Metrics

At test time we have access to just the spoken utterances. For evaluation we use the output probability vectors of both MTL-visSup and MTL-textSup. We evaluate semantic retrieval performance on set D (67 query words, 1000 spoken utterance search set). We measure performance using the following evaluation metrics, commonly used for retrieval tasks [25, 26]. Precision at 10 (P@10) and precision at N (P@N) measure the precision of the top 10 and top N retrievals, respectively, where N is the number of ground-truth matches. Average precision is the area under the precision-recall curve as the detection threshold is varied. Spearman's rank correlation coefficient (Spearman's ρ) measures the correlation between the utterance ranking induced by the predicted probability vectors and the ground-truth "probability" vectors. The latter is approximated using the number of votes each query word gets for a given utterance.
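The metrics above can be sketched for a single query word as follows. This is an illustrative stdlib-only version under stated assumptions: average precision is computed with the common ranked-list estimate (mean of the precision at each relevant hit) rather than by explicit integration of the precision-recall curve, ties in Spearman's ρ receive average ranks, and all function names are hypothetical.

```python
import math

def ranked_labels(scores, labels):
    """Labels reordered by descending predicted score."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    return [labels[i] for i in order]

def precision_at_k(scores, labels, k):
    """Fraction of the top-k retrieved utterances that are relevant."""
    return sum(ranked_labels(scores, labels)[:k]) / k

def average_precision(scores, labels):
    """Mean of precision values at the rank of each relevant utterance."""
    hits, total = 0, 0.0
    for rank, rel in enumerate(ranked_labels(scores, labels), start=1):
        if rel:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0

def average_ranks(x):
    """1-based ranks of x, with tied values sharing their average rank."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    ranks = [0.0] * len(x)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and x[order[j + 1]] == x[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman_rho(a, b):
    """Pearson correlation of the rank-transformed inputs."""
    ra, rb = average_ranks(a), average_ranks(b)
    ma, mb = sum(ra) / len(ra), sum(rb) / len(rb)
    cov = sum((x - ma) * (y - mb) for x, y in zip(ra, rb))
    var_a = sum((x - ma) ** 2 for x in ra)
    var_b = sum((y - mb) ** 2 for y in rb)
    return cov / math.sqrt(var_a * var_b)

# Toy example: four utterances, one query word.
scores = [0.9, 0.8, 0.3, 0.1]   # predicted probabilities
hard = [1, 0, 1, 0]             # majority-vote ("hard") labels
votes = [5, 0, 3, 0]            # annotator vote counts ("soft" labels)
p_at_n = precision_at_k(scores, hard, k=sum(hard))
ap = average_precision(scores, hard)
rho = spearman_rho(scores, votes)
```

In the paper's setup the hard-label metrics would use the majority-vote labels from set D and Spearman's ρ the vote counts; how scores are aggregated over the 67 query words is not shown here.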
All of these metrics except for Spearman's ρ are used for the hyperparameter tuning on exact keyword retrieval performance.

4. Results and Discussion

Figure 2 presents the semantic retrieval performance of the MTL model as the amount of text supervision is varied. For our MTL models, we can use either output (MTL-visSup or MTL-textSup) or the average of the two (ensemble). Note that the visual baseline result is a horizontal line in each plot, as this baseline does not use any textual supervision. We present the results for Spearman's ρ, which evaluates with respect to the "soft" human labels, and average precision, which uses the hard majority decisions. The other hard-label metrics (P@10, P@N) follow the same trend as average precision.

[Figure 2: Top: Semantic retrieval performance, in terms of average precision (AP, %) and Spearman's ρ (%), plotted against the number of hours of transcribed speech for MTL-textSup, the textual baseline, MTL-visSup, the visual baseline, and the ensemble (mean). Shading around the curves in the plots indicates standard deviation over multiple runs with different random initializations. Bottom: Average precision (%) for a low-resource and higher-resource setting.]

| Hours | Textual baseline | Visual baseline | MTL ensemble |
|-------|------------------|-----------------|--------------|
| 1.7   | 18.6             | 40.6            | 48.6         |
| 34.4  | 50.4             | 40.6            | 59.9         |

The first clear conclusion from these results is that visual grounding is still helpful even when some speech transcripts are available. This is, to our knowledge, the first time that this has been demonstrated for visually grounded models of speech semantics. In terms of average precision, the multitask model with both visual and textual supervision is better than the baseline text-supervised model even when using all of the transcribed speech. Ensembling the outputs gives a large performance boost over using only MTL-textSup: ∼28% when using 1.7 hours (5%) of transcribed speech and ∼14% when using 34.4 hours (100%). Even individually, the best performance at any level of supervision is obtained with one of the MTL models (MTL-textSup or MTL-visSup).

In terms of Spearman's ρ, the trends are somewhat different from average precision. Here, MTL-visSup outperforms MTL-textSup at all levels of supervision, and the visual baseline alone is almost as good as MTL-visSup. This finding is in line with earlier work [7], which found that the visual baseline does particularly well in terms of Spearman's ρ, which is more permissive of non-exact semantic matches. In some sense, Spearman's ρ uses a more "complete" measure of the ground truth, since it considers the full range of human judgments rather than just the majority opinion.

Effect of representation loss. The results in Figure 2 use the supervised losses as well as the representation loss. Removing the latter reduces average precision by roughly 2-4% for most points on the curve, while for Spearman's ρ it makes little difference. However, when tuning for exact retrieval on the development set, we find much larger gains from the representation loss, roughly 7-15% in average precision. This difference could be an artifact of tuning and testing on different tasks.

Effect of varying $N_{\text{bow}}$. We observe that a higher $N_{\text{bow}}$ of 10k words is preferred at lower supervision, while at higher supervision having just 1k words in the output is best. Our interpretation is that having more words in the output than we will be evaluating on is equivalent to training the model on additional tasks which we do not care about at test time. This additional MTL-like setting has a regularization effect which is helpful in lower-resource settings, but as we have access to more and more labelled data, we no longer benefit from regularization.
Other modeling alternatives. We also considered other ways to combine the multiple sources of supervision: (1) by splitting the convolutional layers, (2) by pre-training on the visual task and fine-tuning on the textual task, or (3) by using a hierarchical multitask approach, inspired by prior related work [27–29], where the (more semantic) visual supervision is at a higher level and the (exact) textual supervision is at a lower level. Our MTL model (Figure 1) outperforms these other approaches.

[Figure 3: t-SNE visualization of the representations learned with ∼1.7 hours of transcribed speech, using the textual baseline (left) and MTL-textSup (right). Plotted words: air, ball, bike, football, jumps, rides, riding, road, soccer, street.]

Qualitative analysis. We visualize embeddings from the penultimate layer of the model by passing a set of isolated word segments through the model. Figure 3 shows 2D t-SNE [30] visualizations of these embeddings from both the textual baseline model and MTL-textSup at low supervision. Qualitatively, the representation learned by the MTL model results in more distinct clusters than the baseline. We have also examined the retrieved utterances for these models and found that, at lower supervision, MTL-textSup produces more false positives than MTL-visSup due to acoustically similar words. For instance, for the query "tree", MTL-textSup retrieves utterances containing the acoustically similar word "street". This effect diminishes as we train MTL-textSup on larger amounts of text data.

5. Conclusion

Visual grounding has become a commonly used source of weak supervision for speech in the absence of textual supervision. Our motivation here was to, first, examine the contribution of visual grounding when some transcribed speech is also available and, second, explore how best to combine both visual and textual supervision for a semantic speech retrieval task.
We proposed a multitask speech-to-keyword model that has both visually supervised and textually supervised branches, as well as an additional explicit speech-vision representation loss as a regularizer. We explored the performance of this model in a low-resource setting with various amounts of textual supervision, from none at all to 34 hours of transcribed speech. Our main finding is that visual grounding is indeed helpful even in the presence of textual supervision. Experiments over a range of levels of supervision show that joint training with both visual and textual supervision results in consistently improved retrieval.

A limitation of the current work is that the set of queries and human judgments is small. A natural next step is to collect more human evaluation data and to consider a wider variety of queries, including multi-word queries. Another next step is to widen the types of speech domains that we consider, for example to explore whether visually grounded training can learn retrieval models that are applicable also to speech that is not describing a visual scene. On a technical level, there is room for more exploration of different types of multi-view representation losses (such as ones based on canonical correlation analysis [31, 32]), as well as more structured speech models that can localize the relevant words/phrases for a given query.

6. Acknowledgements

This material is based upon work supported by the Air Force Office of Scientific Research under award number FA9550-18-1-0166, by NSF award number 1816627, and by a Google Faculty Award to Herman Kamper.

7. References

[1] G. Synnaeve, M. Versteegh, and E. Dupoux, "Learning words from images and speech," in NIPS Workshop Learn. Semantics, 2014.
[2] D. Harwath, A. Torralba, and J. R. Glass, "Unsupervised learning of spoken language with visual context," in Proc. NIPS, 2016.
[3] G. Chrupała, L. Gelderloos, and A. Alishahi, "Representations of language in a model of visually grounded speech signal," in Proc. ACL, 2017.
[4] G. Chrupała, "Symbolic inductive bias for visually grounded learning of spoken language," in Proc. ACL, 2019.
[5] O. Scharenborg et al., "Linguistic unit discovery from multi-modal inputs in unwritten languages: Summary of the "Speaking Rosetta" JSALT 2017 Workshop," in Proc. ICASSP, 2018.
[6] H. Kamper and M. Roth, "Visually grounded cross-lingual keyword spotting in speech," in Proc. SLTU, 2018.
[7] H. Kamper, G. Shakhnarovich, and K. Livescu, "Semantic speech retrieval with a visually grounded model of untranscribed speech," IEEE Trans. Audio, Speech, Language Process., vol. 27, no. 1, pp. 89–98, 2019.
[8] L. Gelderloos and G. Chrupała, "From phonemes to images: Levels of representation in a recurrent neural model of visually-grounded language learning," in Proc. COLING, 2016.
[9] D. Harwath and J. R. Glass, "Learning word-like units from joint audio-visual analysis," in Proc. ACL, 2017.
[10] D. Harwath, A. Recasens, D. Surís, G. Chuang, A. Torralba, and J. Glass, "Jointly discovering visual objects and spoken words from raw sensory input," in Proc. ECCV, 2018.
[11] D. Harwath and J. Glass, "Towards visually grounded sub-word speech unit discovery," in Proc. ICASSP, 2019.
[12] H. Kamper, S. Settle, G. Shakhnarovich, and K. Livescu, "Visually grounded learning of keyword prediction from untranscribed speech," in Proc. Interspeech, 2017.
[13] H. Kamper, A. Anastassiou, and K. Livescu, "Semantic query-by-example speech search using visual grounding," in Proc. ICASSP, 2019.
[14] J. S. Garofolo, C. G. Auzanne, and E. M. Voorhees, "The TREC spoken document retrieval track: A success story," in Proc. CBMIA, 2000.
[15] L.-s. Lee, J. R. Glass, H.-y. Lee, and C.-a. Chan, "Spoken content retrieval—beyond cascading speech recognition with text retrieval," IEEE Trans. Audio, Speech, Language Process., vol. 23, no. 9, pp. 1389–1420, 2015.
[16] C. Chelba, T. J. Hazen, and M. Saraclar, "Retrieval and browsing of spoken content," IEEE Signal Proc. Mag., vol. 25, no. 3, 2008.
[17] K. M. Hermann and P. Blunsom, "Multilingual distributed representations without word alignment," in Proc. ICLR, 2014.
[18] W. He, W. Wang, and K. Livescu, "Multi-view recurrent neural acoustic word embeddings," in Proc. ICLR, 2017.
[19] T.-Y. Lin, M. Maire, S. Belongie, J. Hays, P. Perona, D. Ramanan, P. Dollár, and C. L. Zitnick, "Microsoft COCO: Common objects in context," in Proc. ECCV, 2014.
[20] P. Young, A. Lai, M. Hodosh, and J. Hockenmaier, "From image descriptions to visual denotations: New similarity metrics for semantic inference over event descriptions," Trans. ACL, vol. 2, pp. 67–78, 2014.
[21] D. Harwath and J. R. Glass, "Deep multimodal semantic embeddings for speech and images," in Proc. ASRU, 2015.
[22] K. He, X. Zhang, S. Ren, and J. Sun, "Deep residual learning for image recognition," in Proc. CVPR, 2016.
[23] V. Sanh, T. Wolf, and S. Ruder, "A hierarchical multi-task approach for learning embeddings from semantic tasks," in Proc. AAAI, 2019.
[24] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," in Proc. ICLR, 2015.
[25] T. J. Hazen, W. Shen, and C. White, "Query-by-example spoken term detection using phonetic posteriorgram templates," in Proc. ASRU, 2009.
[26] Y. Zhang and J. R. Glass, "Unsupervised spoken keyword spotting via segmental DTW on Gaussian posteriorgrams," in Proc. ASRU, 2009.
[27] A. Søgaard and Y. Goldberg, "Deep multi-task learning with low level tasks supervised at lower layers," in Proc. ACL, 2016.
[28] S. Toshniwal, H. Tang, L. Lu, and K. Livescu, "Multitask learning with low-level auxiliary tasks for encoder-decoder based speech recognition," in Proc. Interspeech, 2017.
[29] R. Sanabria and F. Metze, "Hierarchical multi task learning with CTC," in Proc. SLT, 2018.
[30] L. v. d. Maaten and G. Hinton, "Visualizing data using t-SNE," Journal of Machine Learning Research, vol. 9, no. Nov, pp. 2579–2605, 2008.
[31] W. Wang, R. Arora, K. Livescu, and J. Bilmes, "On deep multi-view representation learning," in Proc. ICML, 2015.
[32] Y. Gong, Q. Ke, M. Isard, and S. Lazebnik, "A multi-view embedding space for modeling internet images, tags, and their semantics," International Journal of Computer Vision, vol. 106, no. 2, pp. 210–233, 2014.
