Training Multi-layer Spiking Neural Networks using NormAD based Spatio-Temporal Error Backpropagation

Spiking neural networks (SNNs) have garnered a great amount of interest for supervised and unsupervised learning applications. This paper deals with the problem of training multi-layer feedforward SNNs. The non-linear integrate-and-fire dynamics empl…

Authors: Navin Anwani, Bipin Rajendran

A PREPRINT

Navin Anwani*
Department of Electrical Engineering, Indian Institute of Technology Bombay, Mumbai, 400076, India
anwaninavin@gmail.com

Bipin Rajendran
Department of Electrical and Computer Engineering, New Jersey Institute of Technology, NJ, 07102 USA
bipin@njit.edu

July 30, 2019

ABSTRACT

Spiking neural networks (SNNs) have garnered a great amount of interest for supervised and unsupervised learning applications. This paper deals with the problem of training multi-layer feedforward SNNs. The non-linear integrate-and-fire dynamics employed by spiking neurons make it difficult to train SNNs to generate desired spike trains in response to a given input. To tackle this, the problem of training a multi-layer SNN is first formulated as an optimization problem whose objective function is based on the deviation in membrane potential rather than on the spike arrival instants. Then, an optimization method named Normalized Approximate Descent (NormAD), hand-crafted for such non-convex optimization problems, is employed to derive the iterative synaptic weight update rule. Next, the rule is reformulated to train multi-layer SNNs efficiently, and is shown to effectively perform spatio-temporal error backpropagation. The learning rule is validated by training 2-layer SNNs to solve a spike-based formulation of the XOR problem, as well as by training 3-layer SNNs for generic spike-based training problems. Thus, the new algorithm is a key step towards building deep spiking neural networks capable of efficient event-triggered learning.
Keywords: supervised learning · spiking neuron · normalized approximate descent · leaky integrate-and-fire · multilayer SNN · spatio-temporal error backpropagation · NormAD · XOR problem

1 Introduction

The human brain assimilates multi-modal sensory data and uses it to learn and perform complex cognitive tasks such as pattern detection, recognition and completion. This ability is attributed to the dynamics of approximately $10^{11}$ neurons interconnected through a network of $10^{15}$ synapses. This has motivated the study of neural networks in the brain and attempts to mimic their learning and information processing capabilities to create smart learning machines. Neurons, the fundamental information processing units in the brain, communicate with each other by transmitting action potentials, or spikes, through their synapses. Learning in the brain emerges from synaptic plasticity, viz., the modification of synaptic strengths triggered by the spiking activity of the corresponding neurons.

Spiking neurons are the third generation of artificial neuron models and closely mimic the dynamics of biological neurons. Unlike in previous generations, both the inputs and the output of a spiking neuron are signals in time. Specifically, these signals are point processes of spikes in the membrane potential of the neuron, also called spike trains. Spiking neural networks (SNNs) are computationally more powerful than previous generations of artificial neural networks because they add a temporal dimension to the information representation and processing capabilities of neural networks [1, 2, 3]. Owing to this temporal dimension, SNNs naturally lend themselves to processing signals in time, such as audio, video and speech. Information can be encoded in spike trains using temporal codes, rate codes or population codes [4, 5, 6].

* Corresponding author
Temporal encoding uses exact spike arrival times for information representation and has far more representational capacity than rate or population codes [7]. However, one of the major hurdles in developing temporal-encoding-based applications of SNNs is the lack of efficient learning algorithms to train them with the desired accuracy. In recent years, there has been significant progress in the development of neuromorphic computing chips, which are specialized hardware implementations that emulate SNN dynamics, inspired by the parallel, event-driven operation of the brain. Notable examples are the TrueNorth chip from IBM [8], the Zeroth processor from Qualcomm [9] and the Loihi chip from Intel [10]. Hence, a breakthrough in learning algorithms for SNNs is apt and timely, to complement the progress of neuromorphic computing hardware.

The present success of deep learning based methods can be traced back to breakthroughs in learning algorithms for second generation artificial neural networks (ANNs) [11]. As we discuss in section 2, there has been work on learning algorithms for SNNs in the recent past, but those methods have not found wide acceptance because they suffer from computational inefficiencies and/or a lack of reliable and fast convergence. One of the main reasons for the unsatisfactory performance of the algorithms developed so far is that those efforts have centered on adapting high-level concepts from learning algorithms for ANNs, or from neuroscience, and porting them to SNNs. In this work, we utilize properties specific to spiking neurons to develop a supervised learning algorithm for temporal encoding applications with spike-induced weight updates.

A supervised learning algorithm named NormAD, for single layer SNNs, was proposed in [12]. For a spike domain training problem, it was demonstrated to converge at least an order of magnitude faster than the previous state of the art.
Recognizing the importance of multi-layer SNNs for supervised learning, in this paper we extend the idea to derive a NormAD based supervised learning rule for multi-layer feedforward spiking neural networks. It is a spike-domain analogue of the error backpropagation rule commonly used for ANNs and can be interpreted as a realization of spatio-temporal error backpropagation. The derivation first formulates the training problem for a multi-layer feedforward SNN as a non-convex optimization problem. Next, the Normalized Approximate Descent based optimization, introduced in [12], is employed to obtain an iterative weight adaptation rule. The new learning rule is successfully validated by employing it to train 2-layer feedforward SNNs for a spike domain formulation of the XOR problem and 3-layer feedforward SNNs for general spike domain training problems.

This paper is organized as follows. We begin with a summary of learning methods for SNNs documented in the literature in section 2. Section 3 provides a brief introduction to spiking neurons and the mathematical model of the Leaky Integrate-and-Fire (LIF) neuron, also setting the notation used later in the paper. The supervised learning problem for feedforward spiking neural networks is discussed in section 4, starting with the description of a generic training problem for SNNs. Next, we present a brief mathematical description of a feedforward SNN with one hidden layer and formulate the corresponding training problem as an optimization problem. Normalized Approximate Descent based optimization is then employed to derive the spatio-temporal error backpropagation rule in section 5. Simulation experiments demonstrating the performance of the new learning rule on some exemplary supervised training problems are discussed in section 6.
Section 7 concludes the development with a discussion of directions for future research that can leverage the algorithm developed here towards the goal of realizing event-triggered deep spiking neural networks.

2 Related Work

One of the earliest attempts to demonstrate supervised learning with spiking neurons is the SpikeProp algorithm [13]. However, it is restricted to single-spike learning, which limits its information representation capacity. SpikeProp was then extended in [14] to neurons firing multiple spikes. In these studies, the training problem was formulated as an optimization problem with an objective function expressed in terms of the difference between desired and observed spike arrival instants, and gradient descent was used to adjust the weights. However, since spike arrival time is a discontinuous function of the synaptic strengths, the optimization problem is non-convex and gradient descent is prone to local minima.

The biologically observed spike-timing-dependent plasticity (STDP) has been used to derive weight update rules for SNNs in [15, 16, 17]. ReSuMe and DL-ReSuMe took cues from both STDP and the Widrow-Hoff rule to formulate supervised learning algorithms [15, 16]. Though these algorithms are biologically inspired, the training time necessary to converge is a concern, especially for real-world applications in large networks. The ReSuMe algorithm has been extended to multi-layer feedforward SNNs using backpropagation in [18]. Another notable spike-domain learning rule is PBSNLR [19], an offline learning rule for the spiking perceptron neuron (SPN) model that uses the perceptron learning rule. The PSD algorithm [20] uses the Widrow-Hoff rule to empirically determine an equivalent learning rule for spiking neurons. The SPAN rule [21] converts input and output spike signals into analog signals and then applies the Widrow-Hoff rule to derive a learning algorithm.
Further, it is applicable only to the training of single-layer SNNs. The SWAT algorithm [22] uses STDP and the BCM rule to derive a weight adaptation strategy for SNNs. The Normalized Spiking Error Back-Propagation (NSEBP) method proposed in [23] is based on approximations of the simplified Spike Response Model of the neuron. The multi-STIP algorithm proposed in [24] defines an inner product for spike trains to approximate a learning cost function. As opposed to the above approaches, which attempt to develop weight update rules for fixed network topologies, there have also been efforts to develop feedforward networks based on evolutionary algorithms, where new neuronal connections are progressively added and their weights and firing thresholds updated for every class label in the database [25, 26].

Recently, an algorithm to learn precisely timed spikes using a leaky integrate-and-fire neuron was presented in [27]. The algorithm converges only when a synaptic weight configuration solving the given training problem exists, and cannot provide a close approximation if an exact solution does not exist. To overcome this limitation, another algorithm to learn spike sequences with finite precision is also presented in the same paper. It allows a tolerance window around the desired spike instant within which the output spike may arrive, and performs training only on the first deviation from such desired behavior. While this mitigates the non-linear accumulation of error due to interaction between output spikes, it also restricts training to just one discrepancy per iteration. Backpropagation for training deep networks of LIF neurons has been presented in [28], derived assuming an impulse-shaped post-synaptic current kernel and treating the discontinuities at spike events as noise.
It presents remarkable results on the MNIST and N-MNIST benchmarks using rate-coded outputs, whereas in the present work we are interested in training multi-layer SNNs with temporally encoded outputs, i.e., representing information in the timing of spikes.

Many previous attempts to formulate supervised learning as an optimization problem employ an objective function formulated in terms of the difference between desired and observed spike arrival times [13, 14, 29, 30]. We will see in section 3 that a leaky integrate-and-fire (LIF) neuron can be described as a non-linear spatio-temporal filter: spatial filtering is the weighted summation of the synaptic inputs to obtain the total incoming synaptic current, and temporal filtering is the leaky integration of the synaptic current to obtain the membrane potential. It can therefore be argued that training multi-layer SNNs requires backpropagating error in space as well as in time, and, as we will see in section 5, this is indeed the case for the proposed algorithm. Note that while the membrane potential directly controls the output spike timings, it is also more tractable than spike timing as a function of the synaptic inputs and weights. This observation is leveraged to derive a spatio-temporal error backpropagation algorithm by treating supervised learning as an optimization problem with an objective function formulated in terms of the membrane potential.

3 Spiking Neurons

Spiking neurons are simplified models of biological neurons, whose detailed behavior is described by, e.g., the Hodgkin-Huxley equations relating the membrane potential of a neuron to its membrane current and the conductivity of its ion channels [31]. A spiking neuron is modeled as a multi-input system that receives inputs in the form of sequences of spikes, which are then transformed into analog current signals at its input synapses.
The synaptic currents are superposed inside the neuron, and the result is transformed by its non-linear integrate-and-fire dynamics into a membrane potential signal containing a sequence of stereotyped events, called action potentials or spikes. Despite the continuous-time variations in its membrane potential, a neuron communicates with other neurons through synaptic connections by chemically inducing a particular current signal in the post-synaptic neuron each time it spikes. Hence, the output of a neuron can be completely described by the time sequence of spikes it issues. This is called spike based information representation and is illustrated in Fig. 1. The output, also called a spike train, is modeled as a point process of spike events. Though the internal dynamics of an individual neuron are straightforward, a network of neurons can exhibit complex dynamical behaviors. The processing power of neural networks is attributed to the massively parallel synaptic connections among neurons.

3.1 Synapse

The communication between any two neurons is spike induced and is accomplished through a directed connection between them known as a synapse. In the cortex, each neuron can receive spike-based inputs from thousands of other neurons. If we model an incoming spike at a synapse as a unit impulse, then the behavior of the synapse in translating it into an analog current signal in the post-synaptic neuron can be modeled by a linear time-invariant system with impulse response $w\,\alpha(t)$. Thus, if a pre-synaptic neuron issues a spike at time $t^f$, the post-synaptic neuron receives a current $i(t) = w\,\alpha(t - t^f)$. Here the waveform $\alpha(t)$ is known as the post-synaptic current kernel and the scaling factor $w$ is called the weight of the synapse.
The weight varies from synapse to synapse and is representative of its conductance, whereas $\alpha(t)$ is independent of the synapse and is commonly modeled as

$$\alpha(t) = \left[\exp(-t/\tau_1) - \exp(-t/\tau_2)\right] u(t), \tag{1}$$

where $u(t)$ is the Heaviside step function and $\tau_1 > \tau_2$. Note that the synaptic weight $w$ can be positive or negative, in which case the synapse is said to be excitatory or inhibitory, respectively. Further, we assume that the synaptic currents do not depend on the membrane potential or reversal potential of the post-synaptic neuron.

Figure 1: Illustration of spike based information representation: a spiking neuron assimilates multiple input spike trains to generate an output spike train. Figure adapted from [12].

Let us assume that a neuron receives inputs from $n$ synapses and that spikes arrive at the $i$th synapse at instants $t^i_1, t^i_2, \ldots$. Then, the input signal at the $i$th synapse (before scaling by the synaptic weight $w_i$) is given by

$$c_i(t) = \sum_f \alpha(t - t^i_f). \tag{2}$$

The synaptic weights of all input synapses to a neuron are usually represented compactly as a weight vector $w = [w_1\ w_2\ \cdots\ w_n]^T$, where $w_i$ is the weight of the $i$th synapse. The synaptic weights perform spatial filtering over the input signals, resulting in an aggregate synaptic current received by the neuron:

$$I(t) = w^T c(t), \tag{3}$$

where $c(t) = [c_1(t)\ c_2(t)\ \cdots\ c_n(t)]^T$. A simplified illustration of the role of synaptic transmission in spike based information processing by a neuron is shown in Fig. 2, where an incoming spike train at a synaptic input is translated into an analog current whose amplitude depends on the weight of the synapse. The resultant current at the neuron from all its upstream synapses is transformed non-linearly to generate its membrane potential, with instances of spikes, viz., a sudden surge in membrane potential followed by an immediate drop.
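To make Eqs. (1) to (3) concrete, the following minimal NumPy sketch samples the post-synaptic current kernel on a uniform time grid, builds the per-synapse signals $c_i(t)$ and forms the aggregate current $I(t) = w^T c(t)$. The time constants, time step, spike times and weights are illustrative assumptions rather than values from the paper, and the helper names (`alpha`, `synaptic_input`, `aggregate_current`) are hypothetical.

```python
import numpy as np

# Illustrative constants (assumed for this sketch, not taken from the paper).
TAU1, TAU2 = 5e-3, 1.25e-3   # kernel time constants in seconds, tau1 > tau2
DT = 1e-4                    # sampling step of the time grid (s)

def alpha(t):
    """Post-synaptic current kernel of Eq. (1), zero for t < 0."""
    t = np.asarray(t, dtype=float)
    tc = np.maximum(t, 0.0)                      # avoid overflow for t < 0
    return np.where(t >= 0, np.exp(-tc / TAU1) - np.exp(-tc / TAU2), 0.0)

def synaptic_input(spike_times, t_grid):
    """Signal at one synapse before weighting, Eq. (2): sum_f alpha(t - t_f)."""
    c = np.zeros_like(t_grid)
    for t_f in spike_times:
        c += alpha(t_grid - t_f)
    return c

def aggregate_current(w, input_spikes, t_grid):
    """Spatial filtering, Eq. (3): I(t) = w^T c(t).

    Returns I(t) and the matrix c(t) with one row per synapse."""
    c = np.stack([synaptic_input(s, t_grid) for s in input_spikes])
    return w @ c, c

t = np.arange(0.0, 0.05, DT)                     # 50 ms epoch
spikes = [[0.005, 0.020], [0.010]]               # spike times at two synapses
w = np.array([1.0, -0.5])                        # excitatory and inhibitory weight
I, c = aggregate_current(w, spikes, t)
```

Because $\tau_1 > \tau_2$, the sampled kernel is non-negative, so the sign of each synapse's contribution to $I(t)$ is set by its weight alone.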
Synaptic Plasticity

The response of a neuron to stimuli greatly depends on the conductance of its input synapses. The conductance of a synapse (the synaptic weight) changes based on the spiking activity of the corresponding pre- and post-synaptic neurons. A neural network's ability to learn is attributed to this activity-dependent synaptic plasticity. Taking cues from biology, we also constrain the learning algorithm we develop to have spike-induced synaptic weight updates.

3.2 Leaky Integrate-and-Fire (LIF) Neuron

In the leaky integrate-and-fire (LIF) model of spiking neurons, the transformation from the aggregate input synaptic current $I(t)$ to the resultant membrane potential $V(t)$ is governed by the following differential equation and reset condition [32]:

$$C_m \frac{dV(t)}{dt} = -g_L\left(V(t) - E_L\right) + I(t), \qquad V(t) \rightarrow E_L \text{ when } V(t) \ge V_T. \tag{4}$$

Here, $C_m$ is the membrane capacitance, $E_L$ is the leak reversal potential, and $g_L$ is the leak conductance. If $V(t)$ exceeds the threshold potential $V_T$, a spike is said to have been issued at time $t$. The expression $V(t) \rightarrow E_L$ when $V(t) \ge V_T$ denotes that $V(t)$ is reset to $E_L$ when it exceeds the threshold $V_T$.

Figure 2: Illustration of a simplified synaptic transmission and neuronal integration model: (a) exemplary spikes (stimulus) arriving at a synapse, (b) the resultant current being fed to the neuron through the synapse and (c) the resultant membrane potential of the post-synaptic neuron.

Assuming that the neuron issued its latest spike at time $t^l$, Eq. (4) can be solved for any time instant $t > t^l$, until the next spike is issued, with the initial condition $V(t^l) = E_L$, as

$$V(t) = E_L + \left(I(t)\, u(t - t^l)\right) * h(t), \qquad V(t) \rightarrow E_L \text{ when } V(t) \ge V_T, \tag{5}$$

where $*$ denotes linear convolution and

$$h(t) = \frac{1}{C_m} \exp(-t/\tau_L)\, u(t), \tag{6}$$

with $\tau_L = C_m / g_L$ being the neuron's leakage time constant. Note from Eq.
(5) that the aggregate synaptic current $I(t)$, obtained by spatial filtering of all the input signals, is first gated with a unit step located at $t = t^l$ and then fed to a leaky integrator with impulse response $h(t)$, which performs temporal filtering. The LIF neuron thus acts as a non-linear spatio-temporal filter, the non-linearity being a result of the reset at every spike. Using Eqs. (3) and (5), the membrane potential can be represented compactly as

$$V(t) = E_L + w^T d(t), \tag{7}$$

where $d(t) = [d_1(t)\ d_2(t)\ \cdots\ d_n(t)]^T$ and

$$d_i(t) = \left(c_i(t)\, u(t - t^l)\right) * h(t). \tag{8}$$

From Eq. (7), it is evident that $d(t)$ carries all the information about the input necessary to determine the membrane potential. It should be noted that $d(t)$ depends on the weight vector $w$, since $d_i(t)$ for each $i$ depends on the last spiking instant $t^l$, which in turn depends on $w$. The neuron is said to have spiked only when the membrane potential $V(t)$ reaches the threshold $V_T$. Hence, minor changes in the weight vector $w$ may eliminate an already existing spike or introduce new spikes. Thus, the spike arrival time $t^l$ is a discontinuous function of $w$, and Eq. (7) therefore implies that $V(t)$ is also discontinuous in weight space.

The supervised learning problem for SNNs is generally framed as an optimization problem with a cost function described in terms of the spike arrival times or the membrane potential. However, the discontinuity of the spike arrival times, as well as of $V(t)$, in weight space renders the cost function discontinuous and hence the optimization problem non-convex. Commonly used steepest descent methods cannot be applied to solve such non-convex optimization problems. In this paper, we extend the optimization method named Normalized Approximate Descent, introduced in [12] for single layer SNNs, to multi-layer SNNs.
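The reset non-linearity described above can be seen in a direct simulation of Eq. (4). The sketch below is a minimal illustration, assuming an exponential-Euler step (exact when $I(t)$ is held constant over each step) and an optional absolute refractory clamp as described in Section 3.3. The parameter values and the helper name `simulate_lif` are assumptions for illustration, not the paper's code or settings.

```python
import numpy as np

# Assumed LIF parameters for illustration (not necessarily the paper's values).
C_M = 300e-12   # membrane capacitance C_m (F)
G_L = 30e-9     # leak conductance g_L (S), so tau_L = C_m / g_L = 10 ms
E_L = -70e-3    # leak reversal potential E_L (V)
V_T = -50e-3    # threshold potential V_T (V)
DT = 1e-4       # time step (s)

def simulate_lif(I, dt=DT, t_ref=0.0):
    """Integrate Eq. (4) with the reset V -> E_L whenever V >= V_T.

    I     : sampled aggregate synaptic current I(t) in amperes.
    t_ref : optional absolute refractory period (Section 3.3), in seconds.
    Returns the sampled membrane potential and the spike times.
    """
    decay = np.exp(-dt * G_L / C_M)            # exp(-dt / tau_L)
    V = np.empty(len(I))
    v, hold_until, spikes = E_L, -np.inf, []
    for k, i_k in enumerate(I):
        t = k * dt
        if t < hold_until:
            v = E_L                            # clamped while refractory
        else:
            # Exact one-step solution of Eq. (4) for a current held at i_k.
            v = E_L + (v - E_L) * decay + (i_k / G_L) * (1.0 - decay)
            if v >= V_T:
                spikes.append(t)
                v = E_L                        # reset: the LIF non-linearity
                hold_until = t + t_ref
        V[k] = v
    return V, np.array(spikes)

t = np.arange(0.0, 0.1, DT)                    # 100 ms epoch
V, spk = simulate_lif(np.full(len(t), 1e-9))   # constant 1 nA drive
```

With these assumed values the steady-state potential for a 1 nA drive, $E_L + I/g_L \approx -36.7$ mV, lies above $V_T$, so the neuron fires periodically; a positive `t_ref` lengthens each inter-spike interval and reduces the spike count.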
3.3 Refractory Period

After issuing a spike, biological neurons cannot immediately issue another spike for a short period of time. This short duration of inactivity is called the absolute refractory period ($\Delta_{abs}$). This aspect of spiking neurons has been omitted in the above discussion for simplicity, but it can easily be incorporated into our model by replacing $t^l$ with $(t^l + \Delta_{abs})$ in the equations above. Armed with the compact representation of the membrane potential in Eq. (7), we are now set to derive a synaptic weight update rule to accomplish supervised learning with spiking neurons.

4 Supervised Learning using Feedforward SNNs

Supervised learning is the process of obtaining an approximate model of an unknown system based on available training data, where the training data consist of a set of inputs to the system and the corresponding outputs. The learned model should not only fit the training data well but should also generalize well to unseen samples from the same input distribution. The first requirement, viz., obtaining a model that best fits the given training data, is called the training problem. Next we discuss the training problem in the spike domain; solving it is a stepping stone towards solving the more constrained supervised learning problem.

Figure 3: Spike domain training problem: Given a set of $n$ input spike trains fed to the SNN through its $n$ inputs, determine the weights of the synaptic connections constituting the SNN so that the observed output spike train is as close as possible to the given desired spike train.

4.1 Training Problem

A canonical training problem for a spiking neural network is illustrated in Fig. 3. There are $n$ inputs to the network such that $s_{in,i}(t)$ is the spike train fed at the $i$th input. Let the desired output spike train corresponding to this set of input spike trains be given in the form of an impulse train as

$$s_d(t) = \sum_{i=1}^{f} \delta(t - t_d^i). \tag{9}$$

Here, $\delta(t)$ is the Dirac delta function and $t_d^1, t_d^2, \ldots, t_d^f$ are the desired spike arrival instants over a duration $T$, also called an epoch. The aim is to determine the weights of the synaptic connections constituting the SNN so that its output $s_o(t)$ in response to the given input is as close as possible to the desired spike train $s_d(t)$.

A NormAD based iterative synaptic weight adaptation rule was proposed in [12] for training single layer feedforward SNNs. However, there are many systems which cannot be modeled by any possible configuration of a single layer SNN and necessarily require a multi-layer SNN. Hence, we now aim to obtain a supervised learning rule for multi-layer spiking neural networks. The change in weights in a particular iteration of training can be based on the given set of input spike trains, the desired output spike train and the corresponding observed output spike train. Also, the weight adaptation rule should be constrained to have spike-induced weight updates for computational efficiency. For simplicity, we first derive the weight adaptation rule for training a feedforward SNN with one hidden layer and then state the general weight adaptation rule for a feedforward SNN with an arbitrary number of layers.

Performance Metric

Training performance can be assessed by the correlation between desired and observed outputs. It can be quantified in terms of the cross-correlation between low-pass filtered versions of the two spike trains. The correlation metric, introduced in [33] and commonly used in characterizing spike based learning efficiency [12, 34], is defined as

$$\mathcal{C} = \frac{\langle L(s_d(t)),\, L(s_o(t)) \rangle}{\lVert L(s_d(t)) \rVert \cdot \lVert L(s_o(t)) \rVert}. \tag{10}$$

Here, $L(s(t))$ is the low-pass filtered spike train $s(t)$, obtained by convolving it with a one-sided falling exponential, i.e., $L(s(t)) = s(t) * \left(\exp(-t/\tau_{LP})\, u(t)\right)$, with $\tau_{LP} = 5$ ms.

Figure 4: Feedforward SNN with one hidden layer ($n \rightarrow m \rightarrow 1$), also known as a 2-layer feedforward SNN.

4.2 Feedforward SNN with One Hidden Layer

A fully connected feedforward SNN with one hidden layer is shown in Fig. 4. It has $n$ neurons in the input layer, $m$ neurons in the hidden layer and 1 in the output layer. It is also called a 2-layer feedforward SNN, since the neurons in the input layer provide spike based encoding of sensory inputs and do not actually implement the neuronal dynamics. We denote this network as an $n \rightarrow m \rightarrow 1$ feedforward SNN. This basic framework can be extended to the case where there are multiple neurons in the output layer or multiple hidden layers.

The weight of the synapse from the $j$th neuron in the input layer to the $i$th neuron in the hidden layer is denoted by $w_{h,ij}$, and that of the synapse from the $i$th neuron in the hidden layer to the neuron in the output layer is denoted by $w_{o,i}$. All input synapses to the $i$th neuron in the hidden layer can be represented compactly as an $n$-dimensional vector $w_{h,i} = [w_{h,i1}\ w_{h,i2}\ \cdots\ w_{h,in}]^T$. Similarly, the input synapses to the output neuron are represented as an $m$-dimensional vector $w_o = [w_{o,1}\ w_{o,2}\ \cdots\ w_{o,m}]^T$.

Let $s_{in,j}(t)$ denote the spike train fed by the $j$th neuron in the input layer to the neurons in the hidden layer. Hence, from Eq. (2), the signal $c_{h,j}(t)$ fed to the neurons in the hidden layer from the $j$th input (before scaling by the synaptic weight) is given as

$$c_{h,j}(t) = s_{in,j}(t) * \alpha(t). \tag{11}$$

Denoting by $t^{last}_{h,i}$ the latest spiking instant of the $i$th neuron in the hidden layer, define $d_{h,i}(t)$ as

$$d_{h,i}(t) = \left(c_h(t)\, u\!\left(t - t^{last}_{h,i}\right)\right) * h(t), \tag{12}$$

where $c_h(t) = [c_{h,1}(t)\ c_{h,2}(t)\ \cdots\ c_{h,n}(t)]^T$. From Eq. (7), the membrane potential of the $i$th neuron in the hidden layer is given as

$$V_{h,i}(t) = E_L + w_{h,i}^T d_{h,i}(t). \tag{13}$$

Accordingly, let $s_{h,i}(t)$ be the spike train produced at the $i$th neuron in the hidden layer. The corresponding signal fed to the output neuron is given as

$$c_{o,i}(t) = s_{h,i}(t) * \alpha(t). \tag{14}$$

Defining $c_o(t) = [c_{o,1}(t)\ c_{o,2}(t)\ \cdots\ c_{o,m}(t)]^T$ and denoting the latest spiking instant of the output neuron by $t^{last}_o$, we can define

$$d_o(t) = \left(c_o(t)\, u\!\left(t - t^{last}_o\right)\right) * h(t). \tag{15}$$

Hence, from Eq. (7), the membrane potential of the output neuron is given as

$$V_o(t) = E_L + w_o^T d_o(t), \tag{16}$$

and the corresponding output spike train is denoted $s_o(t)$.

4.3 Mathematical Formulation of the Training Problem

To solve the training problem employing an $n \rightarrow m \rightarrow 1$ feedforward SNN, we effectively need to determine the synaptic weights $W_h = [w_{h,1}\ w_{h,2}\ \cdots\ w_{h,m}]^T$ and $w_o$ constituting its synaptic connections, so that the output spike train $s_o(t)$ is as close as possible to the desired spike train $s_d(t)$ when the SNN is excited with the given set of input spike trains $s_{in,i}(t)$, $i \in \{1, 2, \ldots, n\}$. Let $V_d(t)$ be the corresponding ideally desired membrane potential of the output neuron, such that the respective output spike train is $s_d(t)$. Also, for a particular configuration $W_h$ and $w_o$ of the synaptic weights of the SNN, let $V_o(t)$ be the observed membrane potential of the output neuron in response to the given input and $s_o(t)$ be the respective output spike train.
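Putting Eqs. (11) to (16) together, a forward pass through an $n \rightarrow m \rightarrow 1$ network, together with the correlation metric of Eq. (10), can be sketched as below. This is a minimal sketch under stated assumptions: spike trains are sampled impulse trains (height $1/\Delta t$) on a uniform grid, the LIF dynamics use an exponential-Euler step whose reset stands in for the explicit gating by $u(t - t^{last})$, refractoriness is ignored, the low-pass kernel is truncated at $10\,\tau_{LP}$, and all constants, weights and helper names (`lif_layer`, `forward`, `correlation_metric`) are hypothetical.

```python
import numpy as np

# Illustrative constants (assumptions for this sketch, not the paper's settings).
DT = 1e-4                                      # time step (s)
TAU1, TAU2 = 5e-3, 1.25e-3                     # alpha kernel, Eq. (1)
C_M, G_L, E_L, V_T = 300e-12, 30e-9, -70e-3, -50e-3
TAU_LP = 5e-3                                  # low-pass constant in Eq. (10)

def alpha(t):
    tc = np.maximum(t, 0.0)
    return np.where(t >= 0, np.exp(-tc / TAU1) - np.exp(-tc / TAU2), 0.0)

def causal_filter(x, k):
    """Discrete approximation of x(t) * k(t), truncated to the epoch."""
    return np.convolve(x, k)[: len(x)] * DT

def lif_layer(W, c):
    """LIF neurons driven by I = W c (one row of W per neuron).

    Spikes are returned as sampled impulse trains (1/DT in the spike bin), so
    causal_filter(s, alpha) reproduces sum_f alpha(t - t_f). Resetting v after
    each spike plays the role of the gate u(t - t_last) in Eqs. (12), (15)."""
    I = W @ c
    decay = np.exp(-DT * G_L / C_M)
    s = np.zeros_like(I)
    v = np.full(I.shape[0], E_L)
    for k in range(I.shape[1]):
        v = E_L + (v - E_L) * decay + (I[:, k] / G_L) * (1.0 - decay)
        fired = v >= V_T
        s[fired, k] = 1.0 / DT
        v[fired] = E_L
    return s

def forward(W_h, w_o, s_in):
    """n -> m -> 1 feedforward SNN following Eqs. (11) to (16)."""
    a = alpha(np.arange(s_in.shape[1]) * DT)
    c_h = np.stack([causal_filter(s, a) for s in s_in])    # Eq. (11)
    s_h = lif_layer(W_h, c_h)                              # hidden spike trains
    c_o = np.stack([causal_filter(s, a) for s in s_h])     # Eq. (14)
    s_o = lif_layer(w_o[None, :], c_o)[0]                  # output spike train
    return s_h, s_o

def correlation_metric(s_d, s_o):
    """Eq. (10): normalized inner product of low-pass filtered spike trains."""
    lp = np.exp(-np.arange(10 * round(TAU_LP / DT)) * DT / TAU_LP)
    a, b = causal_filter(s_d, lp), causal_filter(s_o, lp)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom > 0 else 0.0

rng = np.random.default_rng(0)
T, n, m = 1000, 4, 3
s_in = (rng.random((n, T)) < 0.01) / DT        # sparse random input spike trains
W_h = rng.uniform(0.0, 3e-9, size=(m, n))      # hypothetical weights (A)
w_o = rng.uniform(0.0, 3e-9, size=m)
s_h, s_o = forward(W_h, w_o, s_in)
```

The metric equals 1 only when the filtered trains are proportional, and it is 0 when the filtered trains do not overlap in time, which is why it is convenient for tracking training progress.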
We define the cost function for training as

$$J(W_h, w_o) = \frac{1}{2} \int_0^T \left(\Delta V_{d,o}(t)\right)^2 |e(t)|\, dt, \tag{17}$$

where

$$\Delta V_{d,o}(t) = V_d(t) - V_o(t) \tag{18}$$

and

$$e(t) = s_d(t) - s_o(t). \tag{19}$$

That is, the cost function is determined by the difference $\Delta V_{d,o}(t)$ only at the time instants where there is a discrepancy between the desired and observed spike trains of the output neuron. Thus, the training problem can be expressed as the following optimization problem:

$$\min\; J(W_h, w_o) \quad \text{s.t.} \quad W_h \in \mathbb{R}^{m \times n},\; w_o \in \mathbb{R}^m. \tag{20}$$

Note that the optimization with respect to $w_o$ is the same as training a single layer SNN, provided the spike trains from the neurons in the hidden layer are known. In addition, we need to derive the weight adaptation rule for the synapses feeding the hidden layer, viz., the weight matrix $W_h$, such that the spikes in the hidden layer are most suitable to generate the desired spikes at the output. The cost function depends on the membrane potential $V_o(t)$, which is discontinuous with respect to $w_o$ as well as $W_h$. Hence, the optimization problem (20) is non-convex and susceptible to local minima when solved with a steepest descent algorithm.

5 NormAD based Spatio-Temporal Error Backpropagation

In this section we apply Normalized Approximate Descent to the optimization problem (20) to derive a spike domain analogue of error backpropagation. First we derive the training algorithm for SNNs with a single hidden layer, and then we provide its generalized form to train feedforward SNNs with an arbitrary number of hidden layers.

5.1 NormAD – Normalized Approximate Descent

Following the approach introduced in [12], we use three steps, viz., (i) Stochastic Gradient Descent, (ii) Normalization and (iii) Gradient Approximation, as elaborated below, to solve the optimization problem (20).
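As a small numerical illustration of Eq. (17), the sketch below evaluates the cost on a sampled time grid. Because $e(t)$ is a difference of impulse trains, weighting by $|e(t)|$ turns the integral into a sum of $\tfrac{1}{2}(\Delta V_{d,o})^2$ over the instants where the desired and observed spike trains disagree; spikes that coincide cancel in $e(t)$. In practice $V_d(t)$ is unknown (the motivation for the normalization step of Section 5.1.2), so the sketch simply assumes a sampled $V_d$ is supplied. The function name `membrane_cost` and the bin-index representation of spike times are hypothetical.

```python
import numpy as np

def membrane_cost(V_d, V_o, desired_bins, observed_bins):
    """Discrete version of Eq. (17) on a uniform time grid.

    V_d, V_o      : sampled desired / observed output membrane potentials.
    desired_bins  : time-bin indices of the desired output spikes.
    observed_bins : time-bin indices of the observed output spikes.
    Each spike is an impulse of unit area, so the integral against |e(t)|
    collapses to a sum of (Delta V)^2 / 2 over mismatched spike instants.
    """
    e = np.zeros(len(V_o))
    np.add.at(e, np.asarray(desired_bins, dtype=int), 1.0)    # s_d(t)
    np.add.at(e, np.asarray(observed_bins, dtype=int), -1.0)  # - s_o(t), Eq. (19)
    dV = np.asarray(V_d) - np.asarray(V_o)                     # Eq. (18)
    return 0.5 * float(np.sum(dV ** 2 * np.abs(e)))

V_o = np.full(100, -0.06)       # hypothetical observed potential (V)
V_d = np.full(100, -0.05)       # hypothetical desired potential (V)
J = membrane_cost(V_d, V_o, [10, 40], [10, 70])   # bin 10 matches, 40 and 70 do not
```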
5.1.1 Stochastic Gradient Descent

Instead of trying to minimize the aggregate cost over the epoch, we try to minimize the instantaneous contribution to the cost at each instant $t$ for which $e(t) \neq 0$, independently of that at any other instant, and expect that this minimizes the total cost $J(W_h, w_o)$. The instantaneous contribution to the cost at time $t$ is denoted $J(W_h, w_o, t)$ and is obtained by restricting the limits of the integral in Eq. (17) to an infinitesimally small interval around time $t$:

$$J(W_h, w_o, t) = \begin{cases} \frac{1}{2}\left(\Delta V_{d,o}(t)\right)^2 & e(t) \neq 0 \\ 0 & \text{otherwise.} \end{cases} \tag{21}$$

Thus, using stochastic gradient descent, the prescribed change in any weight vector $w$ at time $t$ is given as

$$\Delta w(t) = \begin{cases} -k(t) \cdot \nabla_w J(W_h, w_o, t) & e(t) \neq 0 \\ 0 & \text{otherwise.} \end{cases}$$

Here $k(t)$ is a time dependent learning rate. The change aggregated over the epoch is, therefore,

$$\Delta w = \int_{0}^{T} -k(t) \cdot \nabla_w J(W_h, w_o, t) \cdot |e(t)|\, dt = \int_{0}^{T} k(t) \cdot \Delta V_{d,o}(t) \cdot \nabla_w V_o(t) \cdot |e(t)|\, dt, \tag{22}$$

where the second equality uses $\nabla_w J(W_h, w_o, t) = -\Delta V_{d,o}(t)\, \nabla_w V_o(t)$, which follows from Eqs. (18) and (21) since $V_d(t)$ does not depend on the weights. Minimizing the instantaneous cost only for time instants when $e(t) \neq 0$ also renders the weight updates spike-induced, i.e., they are non-zero only when there is either an observed or a desired spike at the output neuron.

5.1.2 Normalization

Observe that in Eq. (22), the gradient of the membrane potential $\nabla_w V_o(t)$ is scaled by the error term $\Delta V_{d,o}(t)$, which serves two purposes. First, it determines the sign of the weight update at time $t$, and second, it gives more importance to weight updates corresponding to instants with a higher magnitude of error. But $V_d(t)$, and hence the error $\Delta V_{d,o}(t)$, is not known. Also, the dependence of the error on $w_{h,i}$ is non-linear, so we eliminate the error term $\Delta V_{d,o}(t)$ for neurons in the hidden layer by choosing $k(t)$ such that

$$\left|k(t) \cdot \Delta V_{d,o}(t)\right| = r_h, \tag{23}$$

where $r_h$ is a constant. From Eq.
(22), we obtain the weight update for the $i$th neuron in the hidden layer as
$$\Delta w_{h,i} = r_h \int_{t=0}^{T} \nabla_{w_{h,i}} V_o(t)\, e(t)\, dt, \tag{24}$$
since $\operatorname{sgn}(\Delta V_{d,o}(t)) = \operatorname{sgn}(e(t))$. For the output neuron, we eliminate the error term by choosing $k(t)$ such that $\|k(t) \cdot \Delta V_{d,o}(t) \cdot \nabla_{w_o} V_o(t)\| = r_o$, where $r_o$ is a constant. From Eq. (22), we get the weight update for the output neuron as
$$\Delta w_o = r_o \int_{t=0}^{T} \frac{\nabla_{w_o} V_o(t)}{\|\nabla_{w_o} V_o(t)\|}\, e(t)\, dt. \tag{25}$$
Now we proceed to determine the gradients $\nabla_{w_{h,i}} V_o(t)$ and $\nabla_{w_o} V_o(t)$.

5.1.3 Gradient Approximation

We use an approximation of $V_o(t)$ that is affine in $w_o$, given as
$$\hat{d}_o(t) = c_o(t) * \hat{h}(t) \tag{26}$$
$$\Rightarrow V_o(t) \approx \hat{V}_o(t) = E_L + w_o^T \hat{d}_o(t), \tag{27}$$
where $\hat{h}(t) = (1/C_m) \exp(-t/\tau'_L)\, u(t)$ with $\tau'_L \leq \tau_L$. Here $\tau'_L$ is a hyper-parameter of the learning rule that needs to be determined empirically. Similarly, $V_{h,i}(t)$ can be approximated as
$$\hat{d}_h(t) = c_h(t) * \hat{h}(t) \tag{28}$$
$$\Rightarrow V_{h,i}(t) \approx \hat{V}_{h,i}(t) = E_L + w_{h,i}^T \hat{d}_h(t). \tag{29}$$
Note that $\hat{V}_{h,i}(t)$ and $\hat{V}_o(t)$ are affine in the weight vectors $w_{h,i}$ and $w_o$, respectively, of the corresponding input synapses. From Eq. (27), we approximate $\nabla_{w_o} V_o(t)$ as
$$\nabla_{w_o} V_o(t) \approx \nabla_{w_o} \hat{V}_o(t) = \hat{d}_o(t). \tag{30}$$
Similarly, $\nabla_{w_{h,i}} V_o(t)$ can be approximated as
$$\nabla_{w_{h,i}} V_o(t) \approx \nabla_{w_{h,i}} \hat{V}_o(t) = w_{o,i}\, \nabla_{w_{h,i}} \hat{d}_{o,i}(t), \tag{31}$$
since only $\hat{d}_{o,i}(t)$ depends on $w_{h,i}$. Thus, from Eq. (26), we get
$$\nabla_{w_{h,i}} V_o(t) \approx w_{o,i} \left(\nabla_{w_{h,i}} c_{o,i}(t)\right) * \hat{h}(t). \tag{32}$$
We know that $c_{o,i}(t) = \sum_s \alpha(t - t^s_{h,i})$, where $t^s_{h,i}$ denotes the $s$th spiking instant of the $i$th neuron in the hidden layer. Using the chain rule of differentiation, we get
$$\nabla_{w_{h,i}} c_{o,i}(t) \approx \left(\sum_s \delta(t - t^s_{h,i}) \frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}\right) * \alpha'(t). \tag{33}$$
Refer to Appendix A for a detailed derivation of Eq. (33). Using Eq.
(32) and (33), we obtain an approximation to $\nabla_{w_{h,i}} V_o(t)$ as
$$\nabla_{w_{h,i}} V_o(t) \approx w_{o,i} \cdot \left(\sum_s \delta(t - t^s_{h,i}) \frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}\right) * \alpha'(t) * \hat{h}(t). \tag{34}$$
Note that the key enabling idea in the derivation of the above learning rule is the use of the inverse of the time rate of change of the neuronal membrane potential to capture the dependence of its spike time on its membrane potential, as shown in detail in Appendix A.

5.2 Spatio-Temporal Error Backpropagation

Incorporating the approximation from Eq. (30) into Eq. (25), we get the weight adaptation rule for $w_o$ as
$$\Delta w_o = r_o \int_0^T \frac{\hat{d}_o(t)}{\|\hat{d}_o(t)\|}\, e(t)\, dt. \tag{35}$$
Similarly, incorporating the approximation made in Eq. (34) into Eq. (24), we obtain the weight adaptation rule for $w_{h,i}$ as
$$\Delta w_{h,i} = r_h \cdot w_{o,i} \cdot \int_{t=0}^{T} \left[\left(\sum_s \delta(t - t^s_{h,i}) \frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}\right) * \alpha'(t) * \hat{h}(t)\right] e(t)\, dt. \tag{36}$$
Thus the adaptation rule for the weight matrix $W_h$ is given as
$$\Delta W_h = r_h \cdot \int_{t=0}^{T} \left[\left(U_h(t)\, w_o\, \hat{d}_h^T(t)\right) * \alpha'(t) * \hat{h}(t)\right] e(t)\, dt, \tag{37}$$
where $U_h(t)$ is an $m \times m$ diagonal matrix whose $i$th diagonal entry is given as
$$u_{h,ii}(t) = \sum_s \frac{\delta(t - t^s_{h,i})}{V'_{h,i}(t)}. \tag{38}$$
Note that Eq. (37) requires $O(mn)$ convolutions to compute $\Delta W_h$. Using the identity (derived in Appendix B)
$$\int_t \left(x(t) * y(t)\right) z(t)\, dt = \int_t \left(z(t) * y(-t)\right) x(t)\, dt, \tag{39}$$
Eq. (37) can be equivalently written in the following form, which lends itself to a more efficient implementation involving only $O(1)$ convolutions:
$$\Delta W_h = r_h \cdot \int_{t=0}^{T} \left[e(t) * \alpha'(-t) * \hat{h}(-t)\right] U_h(t)\, w_o\, \hat{d}_h^T(t)\, dt. \tag{40}$$
Rearranging the terms as follows brings forth the inherent process of spatio-temporal backpropagation of error that takes place during NormAD based training:
$$\Delta W_h = r_h \cdot \int_{t=0}^{T} U_h(t) \left[\left(w_o\, e(t)\right) * \alpha'(-t) * \hat{h}(-t)\right] \hat{d}_h^T(t)\, dt. \tag{41}$$
Here spatial backpropagation is done through the weight vector $w_o$ as
$$e^{spat}_h(t) = w_o\, e(t) \tag{42}$$
and is followed by temporal backpropagation, i.e., convolution with the time-reversed versions of the kernels $\alpha'(t)$ and $\hat{h}(t)$ and sampling with $U_h(t)$, as
$$e^{temp}_h(t) = U_h(t) \left(e^{spat}_h(t) * \alpha'(-t) * \hat{h}(-t)\right). \tag{43}$$
This will become more evident when we generalize the rule to SNNs with arbitrarily many hidden layers. From Eq. (36), note that the weight update for the synapses of a hidden-layer neuron depends on that neuron's own spiking activity, which reflects the spike-induced nature of the weight update. However, if all the spikes of the hidden layer vanish in a particular training iteration, there will be no spiking activity in the output layer, and as per Eq. (36) the weight update $\Delta w_{h,i} = 0$ for all subsequent iterations. To avoid this, regularization techniques such as constraining the average spike rate of the hidden-layer neurons to a certain range can be used, though this has not been done in the present work.

Figure 5: Fully connected feedforward SNN with $L$ layers ($N_0 \rightarrow N_1 \rightarrow N_2 \cdots N_{L-1} \rightarrow 1$).

5.2.1 Generalization to Deep SNNs

For feedforward SNNs with two or more hidden layers, the weight update rule for the output layer remains the same as in Eq. (35). Here, we provide the general weight update rule for any hidden layer of an arbitrary fully connected feedforward SNN $N_0 \rightarrow N_1 \rightarrow N_2 \cdots N_{L-1} \rightarrow 1$ with $L$ layers, as shown in Fig. 5. It can be obtained by a straightforward extension of the derivation for the single-hidden-layer case discussed above. For this discussion, the subscript $h$ or $o$ used previously to indicate the layer of a neuron is replaced by the layer index, to accommodate an arbitrary number of layers.
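The saving from $O(mn)$ to $O(1)$ convolutions rests on the identity in Eq. (39): instead of convolving each of the $mn$ entries of $U_h(t)\, w_o\, \hat{d}_h^T(t)$ with $\alpha'(t) * \hat{h}(t)$, the scalar error $e(t)$ is filtered backward in time once. Below is a minimal discrete-time sketch of that identity, assuming signals sampled on a uniform grid over the epoch, with `x` and `z` sharing that grid; the function names and the sample values are hypothetical.

```python
def conv(x, y):
    """Full discrete linear convolution: (x * y)[n] = sum_k x[k] y[n - k]."""
    out = [0.0] * (len(x) + len(y) - 1)
    for i, xi in enumerate(x):
        for j, yj in enumerate(y):
            out[i + j] += xi * yj
    return out


def backward_filter(z, y):
    """(z(t) * y(-t)) on z's grid: out[n] = sum_k y[k] z[n + k] (anti-causal)."""
    return [sum(y[k] * z[n + k] for k in range(len(y)) if n + k < len(z))
            for n in range(len(z))]


def lhs(x, y, z):
    """Left side of Eq. (39): sum_n (x * y)[n] z[n] over the epoch."""
    xy = conv(x, y)
    return sum(xy[n] * z[n] for n in range(min(len(xy), len(z))))


def rhs(x, y, z):
    """Right side of Eq. (39): sum_n (z * y(-t))[n] x[n] over the epoch."""
    zy = backward_filter(z, y)
    return sum(zy[n] * x[n] for n in range(min(len(zy), len(x))))


x = [0.3, -1.2, 0.7, 0.0, 2.0, -0.5]  # one entry of U_h w_o d_h^T over time (made up)
y = [1.0, -0.5, 0.25]                 # samples of alpha' * h_hat (made up)
z = [0.0, 0.0, 1.0, 0.0, -1.0, 0.0]   # e(t): desired spike at step 2, spurious at step 4
print(abs(lhs(x, y, z) - rhs(x, y, z)) < 1e-12)   # True
print(backward_filter([0, 0, 0, 1, 0], [1, 2, 3]))  # [0, 3, 2, 1, 0]
```

The last line shows the "temporal" part of the backpropagation: an error at step 3 is spread to earlier steps through the reversed kernel. Since `backward_filter(z, y)` does not depend on `x`, it is computed once and reused for every synapse, which is the point of Eq. (40).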
The iterative weight update rule for the synapses connecting neurons in layer $l-1$ to neurons in layer $l$, viz., $W_l$ $(0 < l < L)$, is given as follows:
$$\Delta W_l = r_h \int_{t=0}^{T} e^{temp}_l(t)\, \hat{d}_l^T(t)\, dt \quad \text{for } 0 < l < L, \tag{44}$$
where
$$e^{temp}_l(t) = \begin{cases} U_l(t)\left(e^{spat}_l(t) * \alpha'(-t) * \hat{h}(-t)\right) & 0 < l < L \\ e(t) & l = L \end{cases} \tag{45}$$
performs temporal backpropagation following the spatial backpropagation
$$e^{spat}_l(t) = W_{l+1}^T\, e^{temp}_{l+1}(t) \quad \text{for } 0 < l < L. \tag{46}$$
Here $U_l(t)$ is an $N_l \times N_l$ diagonal matrix whose $n$th diagonal entry is given as
$$u_{l,nn}(t) = \sum_s \frac{\delta(t - t^s_{l,n})}{V'_{l,n}(t)}, \tag{47}$$
where $V_{l,n}(t)$ is the membrane potential of the $n$th neuron in layer $l$ and $t^s_{l,n}$ is the time of its $s$th spike. From Eq. (45), note that temporal backpropagation through layer $l$ requires $O(N_l)$ convolutions.

6 Numerical validation

In this section we validate the applicability of NormAD based spatio-temporal error backpropagation to the training of multi-layer SNNs. The algorithm comprises Eqs. (44)–(47).

6.1 XOR Problem

The XOR problem is a prominent example of a non-linear classification problem that cannot be solved using a single-layer neural network architecture and hence necessarily requires a multi-layer network. Here, we present how the proposed NormAD based training was employed to solve a spike-domain formulation of the XOR problem with a multi-layer SNN. The XOR problem is similar to the one used in [13] and is represented by Table 1. There are 3 input neurons and 4 different input spike patterns, given in the 4 rows of the table, where temporal encoding is used to represent logical 0 and 1. The numbers in the table represent the arrival times of spikes at the corresponding neurons. The bias input neuron always spikes at t = 0 ms. The other two inputs can have two types of spiking activity, viz., presence or absence of a spike at t = 6 ms, representing logical 1 and 0, respectively.
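The layer-wise recursion of Eqs. (44)–(47) can be sketched in discrete time as below; for a single hidden layer it reduces to Eqs. (41)–(43). This is a minimal illustration, not the authors' implementation. Assumptions (all hypothetical): time is a uniform grid of `T` steps; `e_out[t]` is +1 at a desired spike, -1 at an observed spike and 0 elsewhere (the integral of a delta over one step); `kernel` holds samples of $\alpha'(t) * \hat{h}(t)$ with the step size already absorbed; and $U_l(t)$ is stored per neuron as a dict mapping each spike step to $1/V'_{l,n}(t^s_{l,n})$.

```python
def backward_filter(z, kernel):
    """Anti-causal filtering z(t) * k(-t) on a grid: out[n] = sum_j kernel[j] z[n + j]."""
    n_len = len(z)
    return [sum(kernel[j] * z[n + j] for j in range(len(kernel)) if n + j < n_len)
            for n in range(n_len)]


def backprop_errors(weights, inv_slopes, e_out, kernel):
    """Eqs. (45)-(47): e_temp for layers L, L-1, ..., 1 (dict keyed by layer index).

    weights[l]    : W_l as N_l rows of N_{l-1} entries, l = 1..L (weights[0] unused)
    inv_slopes[l] : N_l dicts {spike step: 1 / V'_{l,n}(t_s)}, i.e. the diagonal of U_l(t)
    """
    L = len(weights) - 1
    T = len(e_out)
    e_temp = {L: [list(e_out)]}  # l = L: single output neuron, e_temp_L(t) = e(t)
    for l in range(L - 1, 0, -1):
        W_next = weights[l + 1]  # N_{l+1} x N_l
        layer = []
        for n in range(len(W_next[0])):
            # Eq. (46): spatial backpropagation, e_spat_l = W_{l+1}^T e_temp_{l+1}
            e_spat = [sum(W_next[k][n] * e_temp[l + 1][k][t] for k in range(len(W_next)))
                      for t in range(T)]
            # Eq. (45): time-reversed filtering, then sampling by U_l(t) at the neuron's spikes
            filtered = backward_filter(e_spat, kernel)
            layer.append([filtered[t] * inv_slopes[l][n].get(t, 0.0) for t in range(T)])
        e_temp[l] = layer
    return e_temp


def delta_W(e_temp_l, d_hat_l, r_h):
    """Eq. (44): Delta W_l = r_h * sum_t e_temp_l(t) d_hat_l(t)^T, an N_l x N_{l-1} matrix."""
    T, n_in = len(d_hat_l), len(d_hat_l[0])
    return [[r_h * sum(row[t] * d_hat_l[t][j] for t in range(T)) for j in range(n_in)]
            for row in e_temp_l]


# Toy 1 -> 2 -> 1 network over 5 time steps (all numbers made up).
weights = [None, [[0.8], [-0.3]], [[0.5, -1.0]]]  # W_1 (2x1), W_2 = w_o^T (1x2)
inv_slopes = [None, [{2: 2.0}, {3: 1.0}]]         # hidden neuron 0 spikes at step 2, neuron 1 at 3
e_out = [0, 0, 0, 1, 0]                           # one missing desired output spike at step 3
kernel = [1.0, 0.5]
e_temp = backprop_errors(weights, inv_slopes, e_out, kernel)
d_hat_1 = [[0.0], [1.0], [2.0], [3.0], [4.0]]     # filtered input trace feeding layer 1
print(delta_W(e_temp[1], d_hat_1, r_h=1.0))       # [[1.0], [-3.0]]
```

Each hidden layer costs one backward filtering per neuron, in line with the $O(N_l)$ count noted after Eq. (47); a practical implementation would vectorize these loops.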
The desired output is coded such that an early spike (at t = 10 ms) represents logical 1 and a late spike (at t = 16 ms) represents logical 0.

Bias input (ms)   Input 1 (ms)   Input 2 (ms)   Output (ms)
0                 -              -              16
0                 -              6              10
0                 6              -              10
0                 6              6              16

Table 1: XOR problem set-up from [13], which uses the arrival time of a spike to encode logical 0 and 1 ("-" denotes no spike).

In the network reported in [13], the three input neurons were connected through 16 synapses with axonal delays of 0, 1, 2, ..., 15 ms. Instead of having multiple synapses, we use a set of 18 different input neurons for each of the three inputs, such that when the first neuron of a set spikes, the second one spikes 1 ms later, the third one after another 1 ms, and so on. Thus, there are 54 input neurons, comprising three sets of 18 neurons each, and a 54 → 54 → 1 feedforward SNN is trained to perform the XOR operation in our implementation. Input spike rasters corresponding to the 4 input patterns are shown in Fig. 6 (left). Weights of synapses from the input layer to the hidden layer were initialized randomly using a Gaussian distribution, with 80% of the synapses having a positive mean weight (excitatory) and the remaining 20% having a negative mean weight (inhibitory). The network was trained using NormAD based spatio-temporal error backpropagation. Figure 6 plots the output spike raster (right) corresponding to each of the four input patterns (left), for an exemplary initialization of the weights from the input to the hidden layer. As can be seen, convergence was achieved in fewer than 120 training iterations in this experiment.

Figure 6: XOR problem: input spike raster (left) and corresponding output spike raster (right, blue dots) obtained during NormAD based training of a 54 → 54 → 1 SNN, with vertical red lines marking the positions of the desired spikes. For clarity, the output spike raster is plotted for one in every 5 training iterations.
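The expansion of the three inputs of Table 1 into 54 delayed input neurons can be sketched as follows. The ordering of neurons (bias set first, then input 1, then input 2) and the names `XOR_TABLE` and `expand` are assumptions made for illustration; the text only states that there are three sets of 18 neurons spiking 1 ms apart.

```python
# Table 1 rows: (bias, input 1, input 2) spike times in ms, None = no spike;
# second element is the desired output spike time (10 ms = logical 1, 16 ms = logical 0).
XOR_TABLE = [
    ((0.0, None, None), 16.0),
    ((0.0, None, 6.0), 10.0),
    ((0.0, 6.0, None), 10.0),
    ((0.0, 6.0, 6.0), 16.0),
]
SET_SIZE = 18  # input neurons per original input


def expand(base_times, set_size=SET_SIZE, step_ms=1.0):
    """Map the 3 inputs of Table 1 onto 3 * set_size input neurons.

    The k-th neuron of a set spikes k * step_ms after the set's base spike time;
    a set whose base input is silent stays silent.
    """
    spikes = []
    for t0 in base_times:
        for k in range(set_size):
            spikes.append([] if t0 is None else [t0 + k * step_ms])
    return spikes


for inputs, out in XOR_TABLE:
    rasters = expand(inputs)
    n_active = sum(1 for s in rasters if s)
    print(len(rasters), n_active, out)
```

Each pattern yields 54 input spike trains; the bias set is always active, so 18, 36 or 54 neurons fire depending on the pattern.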
The necessity of a multi-layer SNN for solving the XOR problem is well known, but to demonstrate the effectiveness of NormAD based training when it is applied to the hidden layer as well, we conducted two experiments. For 100 independent random initializations of the synaptic weights to the hidden layer, the SNN was trained with (i) a non-plastic hidden layer and (ii) a plastic hidden layer. The output layer was trained using Eq. (35) in both experiments. Figures 7a and 7b show the mean and standard deviation, respectively, of the spike correlation against the training iteration number for the two experiments. With the non-plastic hidden layer, the mean correlation reached close to 1, but the non-zero standard deviation indicates a sizable number of runs that did not converge even after 800 training iterations. When the synapses in the hidden layer were also trained, convergence was obtained for all 100 initializations within 400 training iterations. The convergence criterion used in these experiments was reaching the perfect spike correlation metric of 1.0.

6.2 Training SNNs with 2 Hidden Layers

Next, to demonstrate spatio-temporal error backpropagation through multiple hidden layers, we applied the algorithm to train 100 → 50 → 25 → 1 feedforward SNNs for general spike-based training problems. The weights of synapses feeding the output layer were initialized to 0, while the synapses feeding the hidden layers were initialized using a uniform random distribution, with 80% of them excitatory and the remaining 20% inhibitory. Each training problem comprised n = 100 input spike trains and one desired output spike train, all generated to have Poisson-distributed spikes with an arrival rate of 20 s⁻¹ for the inputs and 10 s⁻¹ for the output, over an epoch duration T = 500 ms.
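One way to generate such a training problem is sketched below, assuming homogeneous Poisson processes simulated through exponentially distributed inter-spike intervals (Python's `random.Random.expovariate`). The paper does not state how its spike trains were sampled (e.g., it may have used per-time-bin Bernoulli draws), and `poisson_train` and `make_problem` are hypothetical names.

```python
import random


def poisson_train(rate_hz, duration_ms, rng):
    """Homogeneous Poisson spike train on [0, duration_ms) via exponential ISIs."""
    rate_per_ms = rate_hz / 1000.0
    spikes, t = [], 0.0
    while True:
        t += rng.expovariate(rate_per_ms)  # mean inter-spike interval = 1 / rate
        if t >= duration_ms:
            return spikes
        spikes.append(t)


def make_problem(n_inputs=100, in_rate=20.0, out_rate=10.0, duration_ms=500.0, seed=0):
    """One training problem: n_inputs input trains plus one desired output train."""
    rng = random.Random(seed)
    inputs = [poisson_train(in_rate, duration_ms, rng) for _ in range(n_inputs)]
    desired = poisson_train(out_rate, duration_ms, rng)
    return inputs, desired


inputs, desired = make_problem(seed=0)
print(sum(len(tr) for tr in inputs), len(desired))  # about 1000 and about 5 on average
```

With these rates, each input train carries about 10 spikes and the desired output about 5 over the 500 ms epoch; a seeded generator makes each of the 100 problems reproducible. Note that a Poisson draw can occasionally give an empty desired train, which a real experiment might want to reject.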
Figure 8 shows the progress of training for an exemplary training problem by plotting the output spike rasters for various training iterations, overlaid on vertical red lines denoting the positions of the desired spikes.

Figure 7: Plots of (a) mean and (b) standard deviation of the spike correlation metric over 100 different initializations of a 54 → 54 → 1 SNN trained for the XOR problem with a non-plastic hidden layer (red asterisks) and a plastic hidden layer (blue circles). Panels: (a) Mean spike correlation; (b) Standard deviation of spike correlation.

Figure 8: Illustrating NormAD based training of an exemplary problem for a 3-layer 100 → 50 → 25 → 1 SNN. For clarity, the output spike rasters (blue dots) are shown for one in every 20 training iterations, overlaid on vertical red lines marking the positions of the desired spikes.

To assess the gain from training the hidden layers using NormAD based spatio-temporal error backpropagation, we ran a set of 3 experiments. For 100 different training problems with the same SNN architecture as described above, we studied the effect of (i) training only the output-layer weights, (ii) training only the outer 2 layers and (iii) training all 3 layers. Figure 9 plots the cumulative number of SNNs trained against the number of training iterations for the 3 cases, where the criterion for completion of training is reaching a correlation metric of 0.98 or above.

Figure 9: Cumulative number of training problems for which convergence was achieved, out of a total of 100 different training problems, for 3-layer 100 → 50 → 25 → 1 SNNs.

Figures 10a and 10b show the mean and standard deviation, respectively, of the spike correlation against the training iteration number for the 3 experiments. As can be seen, in the third experiment, when all 3 layers were trained, all 100 training problems converged within 6000 training iterations.
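The quantity plotted in Fig. 9 can be obtained from per-problem correlation traces as sketched below: each problem counts as trained from the first iteration at which its correlation reaches 0.98. The function names and the three short traces are made up for illustration and are not data from the experiments.

```python
def first_converged(trace, threshold=0.98):
    """Index of the first training iteration whose correlation reaches threshold, else None."""
    for it, c in enumerate(trace):
        if c >= threshold:
            return it
    return None


def cumulative_converged(traces, n_iters, threshold=0.98):
    """Number of problems that have converged at or before each iteration."""
    firsts = [first_converged(tr, threshold) for tr in traces]
    return [sum(1 for f in firsts if f is not None and f <= it) for it in range(n_iters)]


# Made-up correlation traces for three problems over four iterations.
traces = [
    [0.50, 0.90, 0.99, 1.00],
    [0.20, 0.97, 0.98, 0.95],  # counted from iteration 2, even though it later dips
    [0.10, 0.30, 0.40, 0.60],  # never converges within the budget
]
print(cumulative_converged(traces, 4))  # [0, 0, 2, 2]
```

Counting from the first crossing is one reading of "reaching" the criterion; requiring the correlation to stay above 0.98 would be a stricter alternative.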
In contrast, the first 2 experiments still have a non-zero standard deviation at 10000 training iterations, indicating non-convergence in some cases. In the first experiment, where only the synapses feeding the output layer were trained, convergence was achieved for only 71 out of 100 training problems after 10000 iterations. However, when the synapses feeding the top two layers or all three layers were trained, the number of cases reaching convergence rose to 98 and 100, respectively, demonstrating the effectiveness of the proposed NormAD based training method for multi-layer SNNs.

7 Conclusion

We developed NormAD based spatio-temporal error backpropagation to train multi-layer feedforward spiking neural networks. It is the spike-domain analogue of the error backpropagation algorithm used in second-generation neural networks. The derivation was accomplished by first formulating the corresponding training problem as a non-convex optimization problem and then employing Normalized Approximate Descent based optimization to obtain the weight adaptation rule for the SNN. The learning rule was validated by applying it to train 2- and 3-layer feedforward SNNs for a spike-domain formulation of the XOR problem and for general spike-domain training problems, respectively.

The main contribution of this work is hence the development of a learning rule for spiking neural networks with an arbitrary number of hidden layers. One of the major hurdles in achieving this has been the problem of backpropagating errors through the non-linear leaky integrate-and-fire dynamics of a spiking neuron. We have tackled this by introducing temporal error backpropagation and by quantifying the dependence of the time of a spike on the corresponding membrane potential through the inverse of the temporal rate of change of the membrane potential. This, together with the spatial backpropagation of errors, constitutes NormAD based training of multi-layer SNNs.
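The central approximation, $\partial t^s / \partial w_j \approx -\hat{d}_j(t^s) / V'(t^s)$ (Eq. (A.4) in Appendix A), can be checked numerically on a toy neuron. The sketch assumes a potential that is exactly affine in the weights, as in Eq. (29), with $E_L = 0$, a normalized threshold $V_T = 1$, a difference-of-exponentials kernel standing in for $\hat{d}_j(t)$, and no reset; all constants and names are hypothetical. For such a model the formula is the exact first-order sensitivity (implicit function theorem), so it should agree with a finite-difference estimate; in a real LIF network it remains an approximation.

```python
import math

TAU1, TAU2 = 10.0, 2.5  # hypothetical kernel time constants (ms)
V_T = 1.0               # threshold in normalized units, with E_L = 0


def psp(t):
    """Difference-of-exponentials kernel standing in for one entry of d_hat(t)."""
    return math.exp(-t / TAU1) - math.exp(-t / TAU2) if t > 0 else 0.0


def dpsp(t):
    """Time derivative of psp(t)."""
    return -math.exp(-t / TAU1) / TAU1 + math.exp(-t / TAU2) / TAU2 if t > 0 else 0.0


def potential(t, w, t_in):
    """Affine model V(t) = sum_j w_j d_j(t), one input spike per synapse at t_in[j]."""
    return sum(wj * psp(t - tj) for wj, tj in zip(w, t_in))


def slope(t, w, t_in):
    """V'(t) of the affine model."""
    return sum(wj * dpsp(t - tj) for wj, tj in zip(w, t_in))


def first_spike(w, t_in, t_max=50.0, dt=0.01):
    """First upward threshold crossing, located by a grid scan plus bisection."""
    t = 0.0
    while t < t_max:
        if potential(t + dt, w, t_in) >= V_T > potential(t, w, t_in):
            lo, hi = t, t + dt
            for _ in range(60):
                mid = 0.5 * (lo + hi)
                if potential(mid, w, t_in) >= V_T:
                    hi = mid
                else:
                    lo = mid
            return hi
        t += dt
    return None


w, t_in = [2.0, 1.5], [0.0, 3.0]
t_s = first_spike(w, t_in)
j, eps = 1, 1e-6
w_plus, w_minus = list(w), list(w)
w_plus[j] += eps
w_minus[j] -= eps
numeric = (first_spike(w_plus, t_in) - first_spike(w_minus, t_in)) / (2 * eps)
predicted = -psp(t_s - t_in[j]) / slope(t_s, w, t_in)  # Eq. (A.4)
print(t_s, numeric, predicted)
```

Both estimates come out negative: strengthening synapse `j` makes the neuron cross threshold earlier, which is the dependence that temporal error backpropagation exploits.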
The problem of local convergence while training second-generation deep neural networks is tackled by unsupervised pretraining prior to the application of error backpropagation [11, 35]. Development of such unsupervised pretraining techniques for deep SNNs is a topic of future research, as NormAD could in principle be applied to develop SNN-based autoencoders.

Figure 10: Plots of (a) mean and (b) standard deviation of the spike correlation metric while partially or completely training 3-layer 100 → 50 → 25 → 1 SNNs for 100 different training problems. Panels: (a) Mean spike correlation; (b) Standard deviation of spike correlation.

Appendices

A Gradient Approximation

The derivation of Eq. (33) is presented below:
$$\nabla_{w_{h,i}} c_{o,i}(t) = \sum_s \frac{\partial \alpha(t - t^s_{h,i})}{\partial t^s_{h,i}} \cdot \nabla_{w_{h,i}} t^s_{h,i} \quad (\text{from Eq. (14)}) = -\sum_s \alpha'(t - t^s_{h,i}) \cdot \nabla_{w_{h,i}} t^s_{h,i}. \tag{A.1}$$
To compute $\nabla_{w_{h,i}} t^s_{h,i}$, let us assume that a small change $\delta w_{h,ij}$ in $w_{h,ij}$ leads to changes $\delta V_{h,i}(t)$ and $\delta t^s_{h,i}$ in $V_{h,i}(t)$ and $t^s_{h,i}$, respectively, i.e.,
$$V_{h,i}(t^s_{h,i} + \delta t^s_{h,i}) + \delta V_{h,i}(t^s_{h,i} + \delta t^s_{h,i}) = V_T. \tag{A.2}$$
From Eq. (29), $\delta V_{h,i}(t)$ can be approximated as
$$\delta V_{h,i}(t) \approx \delta w_{h,ij} \cdot \hat{d}_{h,j}(t), \tag{A.3}$$
hence, from Eq. (A.2),
$$V_{h,i}(t^s_{h,i}) + \delta t^s_{h,i}\, V'_{h,i}(t^s_{h,i}) + \delta w_{h,ij} \cdot \hat{d}_{h,j}(t^s_{h,i} + \delta t^s_{h,i}) \approx V_T$$
$$\Rightarrow \frac{\delta t^s_{h,i}}{\delta w_{h,ij}} \approx -\frac{\hat{d}_{h,j}(t^s_{h,i} + \delta t^s_{h,i})}{V'_{h,i}(t^s_{h,i})} \quad (\text{since } V_{h,i}(t^s_{h,i}) = V_T)$$
$$\Rightarrow \frac{\partial t^s_{h,i}}{\partial w_{h,ij}} \approx -\frac{\hat{d}_{h,j}(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}$$
$$\Rightarrow \nabla_{w_{h,i}} t^s_{h,i} \approx -\frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}. \tag{A.4}$$
Thus, using Eq. (A.4) in Eq. (A.1), we get
$$\nabla_{w_{h,i}} c_{o,i}(t) \approx \sum_s \alpha'(t - t^s_{h,i}) \frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})} = \left(\sum_s \delta(t - t^s_{h,i}) \frac{\hat{d}_h(t^s_{h,i})}{V'_{h,i}(t^s_{h,i})}\right) * \alpha'(t). \tag{A.5}$$
Note that the approximation in Eq. (A.4) is an important step towards obtaining the weight adaptation rule for hidden layers, as it allows us to approximately model the dependence of the spiking instant of a neuron on its inputs using the inverse of the time derivative of its membrane potential.

B

Lemma 1. Given three functions $x(t)$, $y(t)$ and $z(t)$,
$$\int_t \left(x(t) * y(t)\right) z(t)\, dt = \int_t \left(z(t) * y(-t)\right) x(t)\, dt.$$
Proof. By the definition of linear convolution,
$$\int_t \left(x(t) * y(t)\right) z(t)\, dt = \int_t \left(\int_u x(u)\, y(t - u)\, du\right) z(t)\, dt.$$
Changing the order of integration, we get
$$\int_t \left(x(t) * y(t)\right) z(t)\, dt = \int_u x(u) \left(\int_t y(t - u)\, z(t)\, dt\right) du = \int_u x(u) \left(y(-u) * z(u)\right) du = \int_t \left(z(t) * y(-t)\right) x(t)\, dt.$$

Acknowledgment

This research was supported in part by the U.S. National Science Foundation through grant 1710009. The authors acknowledge the invaluable insights gained during their stay at the Indian Institute of Technology Bombay, where the initial part of this work was conceived and conducted as part of a master's thesis project. We also acknowledge the reviewer comments, which helped us expand the scope of this work and bring it to its present form.

References

[1] Wolfgang Maass. Networks of spiking neurons: the third generation of neural network models. Neural Networks, 10(9):1659–1671, 1997.
[2] Sander M. Bohte. The evidence for neural information processing with precise spike-times: A survey. 3(2):195–206, May 2004.
[3] Patrick Crotty and William B. Levy. Energy-efficient interspike interval codes. Neurocomputing, 65:371–378, 2005. Computational Neuroscience: Trends in Research 2005.
[4] William Bialek, David Warland, and Rob de Ruyter van Steveninck. Spikes: Exploring the Neural Code. MIT Press, Cambridge, Massachusetts, 1996.
[5] Wulfram Gerstner, Andreas K. Kreiter, Henry Markram, and Andreas V. M. Herz. Neural codes: Firing rates and beyond. Proceedings of the National Academy of Sciences, 94(24):12740–12741, 1997.
[6] Steven A. Prescott and Terrence J. Sejnowski. Spike-rate coding and spike-time coding are affected oppositely by different adaptation mechanisms. Journal of Neuroscience, 28(50):13649–13661, 2008.
[7] Simon Thorpe, Arnaud Delorme, and Rufin Van Rullen. Spike-based strategies for rapid processing. Neural Networks, 14(6):715–725, 2001.
[8] Paul A. Merolla, John V. Arthur, Rodrigo Alvarez-Icaza, Andrew S. Cassidy, Jun Sawada, Filipp Akopyan, Bryan L. Jackson, Nabil Imam, Chen Guo, Yutaka Nakamura, et al. A million spiking-neuron integrated circuit with a scalable communication network and interface. Science, 345(6197):668–673, 2014.
[9] Jeff Gehlhaar. Neuromorphic processing: A new frontier in scaling computer architecture. In Proceedings of the 19th International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS), pages 317–318, 2014.
[10] Michael Mayberry. Intel's new self-learning chip promises to accelerate artificial intelligence, Sept 2017.
[11] Geoffrey E. Hinton, Simon Osindero, and Yee-Whye Teh. A fast learning algorithm for deep belief nets. Neural Computation, 18(7):1527–1554, 2006.
[12] Navin Anwani and Bipin Rajendran. NormAD - Normalized approximate descent based supervised learning rule for spiking neurons. In 2015 International Joint Conference on Neural Networks (IJCNN), pages 1–8. IEEE, 2015.
[13] Sander M. Bohte, Joost N. Kok, and Han La Poutré. Error-backpropagation in temporally encoded networks of spiking neurons. Neurocomputing, 48(1):17–37, 2002.
[14] Olaf Booij and Hieu tat Nguyen. A gradient descent rule for spiking neurons emitting multiple spikes. Information Processing Letters, 95(6):552–558, 2005.
[15] Filip Ponulak and Andrzej Kasinski. Supervised learning in spiking neural networks with ReSuMe: sequence learning, classification, and spike shifting. Neural Computation, 22(2):467–510, 2010.
[16] A. Taherkhani, A. Belatreche, Y. Li, and L. P. Maguire. DL-ReSuMe: A delay learning-based remote supervised method for spiking neurons. IEEE Transactions on Neural Networks and Learning Systems, 26(12):3137–3149, Dec 2015.
[17] Hélène Paugam-Moisy, Régis Martinez, and Samy Bengio. A supervised learning approach based on STDP and polychronization in spiking neuron networks. In ESANN, pages 427–432, 2007.
[18] I. Sporea and A. Grüning. Supervised learning in multilayer spiking neural networks. Neural Computation, 25(2):473–509, Feb 2013.
[19] Yan Xu, Xiaoqin Zeng, and Shuiming Zhong. A new supervised learning algorithm for spiking neurons. Neural Computation, 25(6):1472–1511, 2013.
[20] Qiang Yu, Huajin Tang, Kay Chen Tan, and Haizhou Li. Precise-spike-driven synaptic plasticity: Learning hetero-association of spatiotemporal spike patterns. PLoS ONE, 8(11):e78318, 2013.
[21] Ammar Mohemmed, Stefan Schliebs, Satoshi Matsuda, and Nikola Kasabov. SPAN: Spike pattern association neuron for learning spatio-temporal spike patterns. International Journal of Neural Systems, 22(04), 2012.
[22] J. J. Wade, L. J. McDaid, J. A. Santos, and H. M. Sayers. SWAT: A spiking neural network training algorithm for classification problems. IEEE Transactions on Neural Networks, 21(11):1817–1830, Nov 2010.
[23] Xiurui Xie, Hong Qu, Guisong Liu, Malu Zhang, and Jürgen Kurths. An efficient supervised training algorithm for multilayer spiking neural networks. PLoS ONE, 11(4):1–29, April 2016.
[24] Xianghong Lin, Xiangwen Wang, and Zhanjun Hao. Supervised learning in multilayer spiking neural networks with inner products of spike trains. Neurocomputing, 2016.
[25] Stefan Schliebs and Nikola Kasabov. Evolving spiking neural network – a survey. Evolving Systems, 4(2):87–98, Jun 2013.
[26] Snjezana Soltic and Nikola Kasabov. Knowledge extraction from evolving spiking neural networks with rank order population coding. International Journal of Neural Systems, 20(06):437–445, 2010. PMID: 21117268.
[27] Raoul-Martin Memmesheimer, Ran Rubin, Bence P. Ölveczky, and Haim Sompolinsky. Learning precisely timed spikes. Neuron, 82(4):925–938, 2014.
[28] Jun Haeng Lee, Tobi Delbruck, and Michael Pfeiffer. Training deep spiking neural networks using backpropagation. Frontiers in Neuroscience, 10:508, 2016.
[29] Yan Xu, Xiaoqin Zeng, Lixin Han, and Jing Yang. A supervised multi-spike learning algorithm based on gradient descent for spiking neural networks. Neural Networks, 43:99–113, 2013.
[30] Răzvan V. Florian. The chronotron: a neuron that learns to fire temporally precise spike patterns. PLoS ONE, 7(8):e40233, 2012.
[31] Alan L. Hodgkin and Andrew F. Huxley. A quantitative description of membrane current and its application to conduction and excitation in nerve. The Journal of Physiology, 117(4):500, 1952.
[32] Richard B. Stein. Some models of neuronal variability. Biophysical Journal, 7(1):37, 1967.
[33] M. C. W. van Rossum. A novel spike distance. Neural Computation, 13(4):751–763, 2001.
[34] Filip Ponulak and Andrzej Kasiński. Supervised learning in spiking neural networks with ReSuMe: Sequence learning, classification, and spike shifting. Neural Computation, 22(2):467–510, February 2010.
[35] Dumitru Erhan, Yoshua Bengio, Aaron Courville, Pierre-Antoine Manzagol, Pascal Vincent, and Samy Bengio. Why does unsupervised pre-training help deep learning? Journal of Machine Learning Research, 11:625–660, March 2010.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

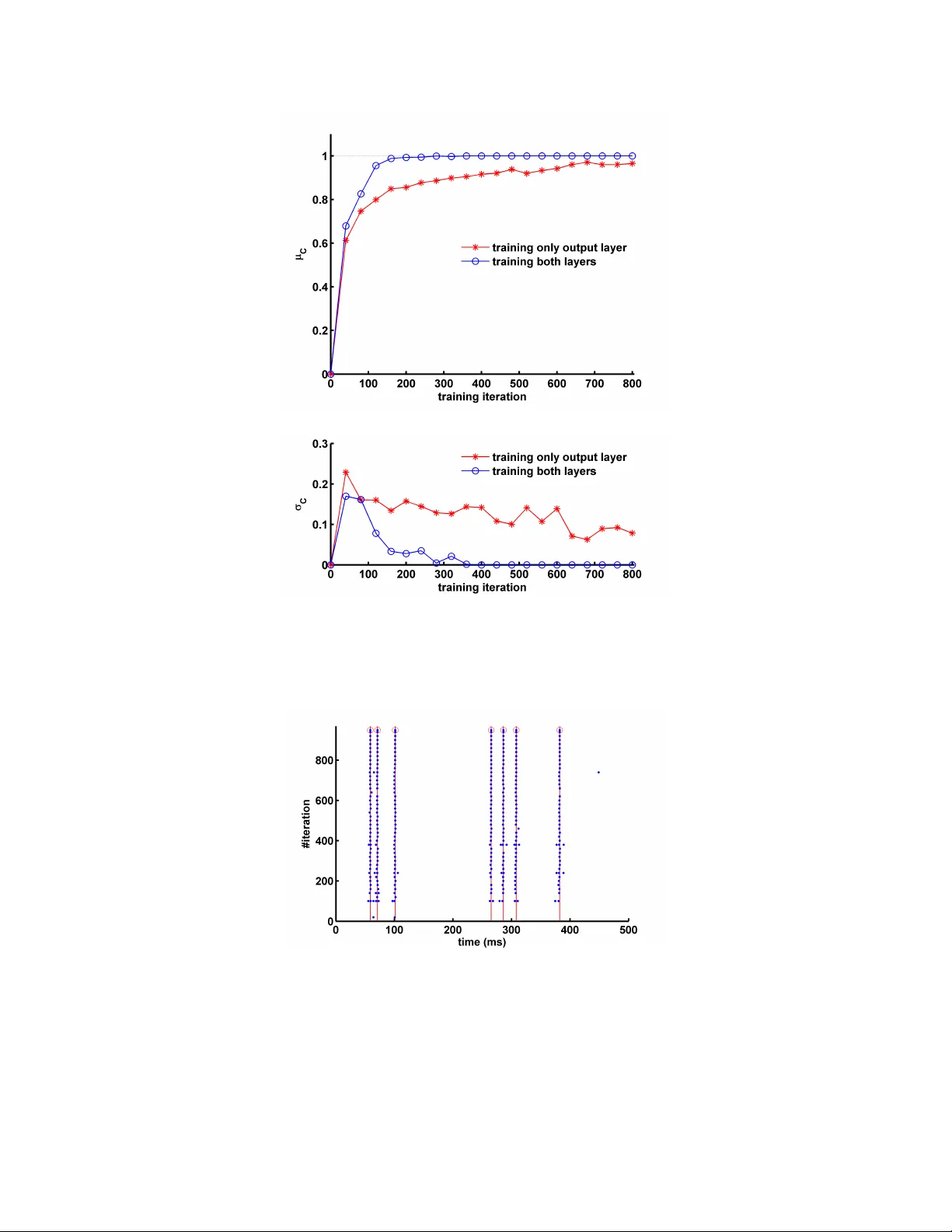

Leave a Comment