Adaptive Navigation Scheme for Optimal Deep-Sea Localization Using Multimodal Perception Cues

Underwater robot interventions require a high level of safety and reliability. A major challenge to address is a robust and accurate acquisition of localization estimates, as it is a prerequisite to enable more complex tasks, e.g. floating manipulati…

Authors: Arturo Gomez Chavez, Qingwen Xu, Christian A. Mueller

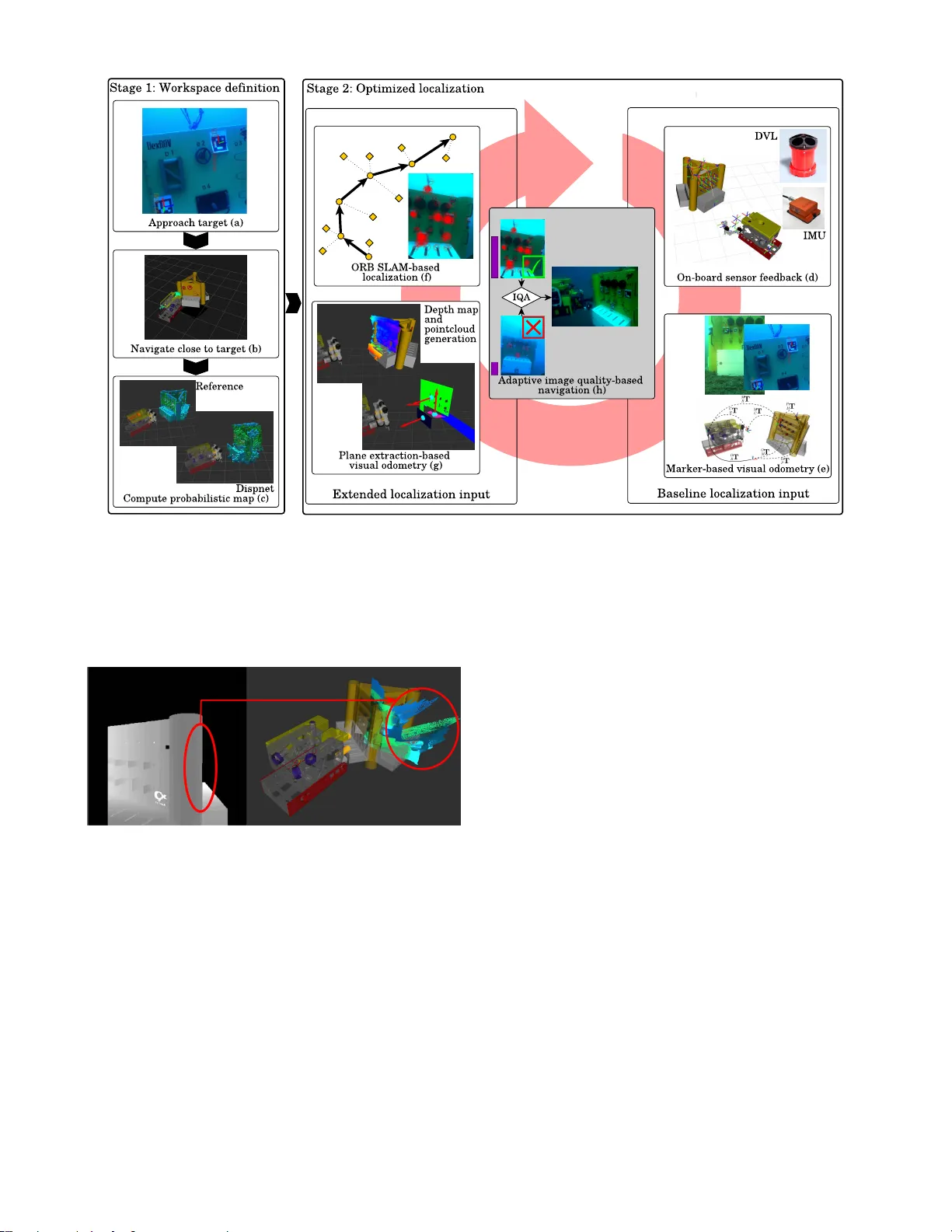

Adaptiv e Na vigation Scheme f or Optimal Deep-Sea Localization Using Multimodal P er ception Cues Arturo Gomez Chav ez 1 , Qingwen Xu 2 , Christian A. Mueller 1 , S ¨ oren Schwertfeger 2 and Andreas Birk 1 Abstract — Underwater robot inter ventions requir e a high level of safety and reliability . A major challenge to address is a rob ust and accurate acquisition of localization estimates, as it is a prer equisite to enable more complex tasks, e.g. floating manipulation and mapping. State-of-the-art na vigation in commer cial operations, such as oil&gas production (OGP), rely on costly instrumentation. These can be partially replaced or assisted by visual navigation methods, especially in deep- sea scenarios where equipment deployment has high costs and risks. Our work presents a multimodal approach that adapts state-of-the-art methods from on-land robotics, i.e., dense point cloud generation in combination with plane representation and registration, to boost underwater localization performance. A two-stage na vigation scheme is proposed that initially generates a coarse probabilistic map of the workspace, which is used to filter noise from computed point clouds and planes in the second stage. Furthermore, an adaptive decision-making approach is introduced that determines which per ception cues to incorporate into the localization filter to optimize accuracy and computation performance. Our approach is inv estigated first in simulation and then validated with data from field trials in OGP monitoring and maintenance scenarios. I . I N T R O D U C T IO N The marine en vironment is challenging for automation technologies. Y et, oceans are one of the main forces dri ving commerce, employment, economic rev enue and natural re- sources exploitation, which in turn triggers profound interest in the development of ne w technologies to facilitate inter- vention tasks, e.g., in oil&gas production (OGP). Remote Operated V ehicles (R O Vs) are the current work-horse used for these tasks, which include inspection of ships, submerged structures and valv es manipulation. In particular , manipulation tasks are e xtremely challenging without stable and accurate robot localization. In general, a global positioning based navigation is desirable to correct measurements from inertial na vigation systems (INS) in a tightly-coupled approach [1]. Howe ver , such data has to be transmitted acoustically through ultra-short/long baseline (USBL/LBL) beacons that ha ve low bandwidth [2], signal delays and deployment constraints. Additional sensors, i.e., Doppler velocity logs (D VLs) and digital compasses, can improv e the localization accuracy but still not at the required standards to perform floating-base manipulation. 1 Authors are with the Robotics Group, Computer Science & Electrical Engineering, Jacobs Uni versity Bremen, German y . { a.gomezchavez, chr.mueller, a.birk } @jacobs-university.de 2 Authors are with the School of Information Sci- ence T echnology of ShanghaiT ech Univ ersity @shanghaitech.edu.cn * This research recei ved funding from the European Union’s Horizon 2020 Frame work Programme, project ref. 635491: “Effecti ve dexterous RO V operations in presence of communication latencies (DexRO V)”. (a) (b) (c) Fig. 1: (a) RO V performing oil&gas valv e manipulation tasks. (b) R O V stereo camera system and manipulation arms. (c) RO V first person view while approaching oil&gas panel. W e present a na vigation scheme that uses visual odometry (V O) methods based on stereo camera imagery and an initial probabilistic map of the working space to boost localization accuracy in challenging conditions. The application scenario is the monitoring and de xterous manipulation of a OGP panel (Fig. 1) within the EU-project DexR O V [3], [4] T o address the challenges of underwater vision, we com- bine plane registration and feature tracking methods. 3D planes are extracted from dense point cloud (DPC) gener- ators, which produce complete disparity maps at the cost of depth accuracy; density being the key factor to find reliable 3D planes. This is particularly useful in structured or man-made en vironments, which predominantly contain planar surfaces, like the installations used in OGP . Further - more, a decision-making strate gy based on image quality is introduced: it allows to select the visual odometry method to be used in order to obtain reliable measurements and impro ve computation times. In summary our contributions are: • Dev elopment of dif ferent visual odometry (V O) modal- ities based on: knowledge-enabled landmarks, 3D plane registration and feature tracking. • Integration of the multimodal VO into an underwater localization filter that adapts its inputs based on image quality assessments. • A two-step navig ation scheme for structured environ- ments. Initial suboptimal localization measurements are used to compute a coarse probabilistic 3D map of the workspace. In turn, this map is used to filter noise and optimize the integration of further measurements. • V alidation of the presented scheme in realistic field conditions with data from sea trails of f-shore the coast of Marseille, France. I I . R E L A T E D W O R K A great number of theoretical approaches on localization filters for marine robotics have been proposed in the litera- ture. In recent years, this also includes increasing efforts to address practical issues such as multi-rate sampling, sensor glitches and dynamic en vironmental conditions. In [5], a revie w of the state-of-the-art in underwater localization is presented and classified into three main classes: inertial/dead reckoning, acoustic and geophysical. The surve yed methods show a clear shift from technolo- gies like USBL/LBL positioning systems [6] tow ards two research areas. First, dynamic multiagent systems which include a surface vehicle that complements the underwater vehicles position with GPS data [7]; and secondly , the inte- gration of visual navigation techniques, i.e., visual odome- try [8] and SLAM [9], into marine systems. W e also inte grate inertial data from D VL and IMU with vision-based tech- niques using standard 2D features and in addition 3D plane registration. The work in [10] sho ws that the combination of standard visual features with geometric visual primiti ves increases odometry robustness in low texture regions, highly frequent in underwater scenes. Three methods are commonly used for plane primiti ve extraction: RANSA C, Hough transform and Region Growing (RG). State of the art methods [11], [12], [13] often use RG because it exploits the connectivity information of the 3D points and, thus, have more consistent results in the presence of noise. These are better suited for our application since the input point cloud for the plane extraction algorithm is not directly generated from an RGB-D camera b ut from a stereo image processing pipeline. W e compare some of these stereo pipelines to in vestigate their impact on the overall localization accuracy (see Sec. IV -A). Finally , to test the complete framework, we used the con- tinuous system inte gration and validation (CSI) architecture proposed in our previous work [14]. W ith this architecture, parts of the dev elopmental stack can be synchronized with real-world data from field trials to close the discrepanc y be- tween simulation and the real world; this process is inspired by the simulation in the loop (SIL) methodology [3]. Based on this, we first compute the accuracy of our approach in an optimized simulation en vironment reflecting similar light conditions as observed in underwater trials. Then, its ef fectiveness is v alidated on field trial data featuring real-world en vironmental conditions. I I I . M E T H O D O L O G Y Figure 2 sho ws the proposed two-stage na vigation scheme: First stage - W orkspace definition with loose localization 1.1. Approach the target (oil&gas panel) until its global 3D pose its confidently determined based on a priori knowledge; see Sec. III-A, Fig. 2(a). 1.2. Na vigate close to the tar get using odometry from navigation sensors and visual landmarks ( baseline localization ); see Sec. III-A.2 and Fig. 2(a)(b). 1.3. Compute a probabilistic map from stereo input of the target object/area while navigating based on the odometry uncertainty; see examples in Fig. 2(c). Second stage - Optimized localization 2.1 Evaluate the reliability of the visual input, i.e., stereo image quality (Sec. III-D, Fig. 2(h)), and determine which of the next VO modalities to use: 2.2.a Extract planes (Sec. III-B.2) from dense point- clouds (Sec. III-B.1), filtered using the probabilistic map computed in the first stage to pre vent large drifts and noise artifacts. See Fig. 2(g)and Fig. 3. 2.2.b Extract and track rob ust 2D features from imagery; see Sec. III-C.2, Fig. 2(f). 2.3. Compute visual odometry either from plane reg- istration or feature tracking (Sec. III-C.1, III-C.2) depending on the image quality assessment (IQA) and integrate the results into the localization filter . The objecti ve of the first stage is to compute a probabilistic map (octomap [15]) of the expected R O V workspace area. A coarse 3D representation of the scene can be obtained giv en fe w samples. Figure 2(c) illustrates this by comparing the octomap generated with a simulated RGB-D camera (reference) and the one generated by pointclouds from stereo imagery . High precision is not crucial here since mapping is not the final goal, b ut to filter spurious 3D pointclouds, e.g., from dynamic objects like fish or from processing artifacts, as sho wn in Fig. 3. W e will now describe in detail each component from the second stage, optimized localization . A. Knowledge-enabled localization Underwater missions are cost-intensive and high-risk, thus prior kno wledge about the mission reduces the risk of f ailures and increases safety . Especially in visual inspection or ma- nipulation tasks of man-made structures, the incorporation of prior knowledge can be exploited. Therefore, we built a knowledge base that contains properties of task-related objects. Along with offline information, like CAD models and kinematic descriptions of the robot and OGP panel, the knowledge base is updated based on current information gathered ov er the execution of a task, e.g. panel v alve poses. 1) P anel P ose Estimation: The panel pose estimation is the basis for projecting the panel model and its kinematic properties into the world-model. This further enables reliable task benchmarking in simulation and real operations, i.e., manipulation of valv es and handles. Our approach incorpo- rates offline knowledge such as the panel CAD model and Fig. 2: Proposed two-stage navigation scheme. First stage – W orkspace definition : (a) recognize the target and compute its pose based on visual markers, (b) navigate close to the tar get based on na vigational sensors and visual markers, (c) generate probabilistic map with stereo imagery and Dispnet; RGB-D camera based probabilistic map displayed for reference. Second stage – Optimized localization : (f)-(g) multimodal localization inputs which are incorporated to a final Kalman filter -based localization estimate. An image quality assessment (IQA) is introduced (h) to v alidate reliability of the extended localization inputs to boost the accuracy of the estimates given by the baseline inputs (see Sec. III-D). Fig. 3: Comet-like artifacts (right) produced in 3D point- clouds by noisy depth maps (left). These are further filtered by the probabilistic map generated in stage 1 (Fig. 2(c)) to later extract planes and obtain visual odometry (Fig. 2(g)). visual markers placed at predefined locations, see Fig. 2(a). Based on this augmentation of the panel with markers, we exploit the panel as a fixed landmark and infer the robot pose whenev er a visual marker is in the camera view as described in the next Sec. III-A.2. The panel pose in odometry frame T O P can be reliably estimated using the detected marker pose w .r .t. the camera frame T C M , the camera pose on the robot frame T R C , the panel pose in marker frame T M P , and the current robot pose in odometry frame T O R , see Fig. 2(e): T O P = T O R T R C T C M T M P (1) When n dif ferent markers are constantly detected during k image frames I , n pose estimates T O P are extracted. These are used to compute the mean position and orientation, determined by spherical linear interpolation (Slerp) . 2) V isual Landmark-Based Odometry: Once the panel pose has been estimated and fixed, the robot pose can be inferred e very time there is a visual marker observ ation and used as an Extended Kalman Filter input modality . Fig. 2(e) sho ws a sample pose estimate of a visual marker; note that the panel is partially observ ed b ut the marker is used to infer the panel pose through the space transformations. Since the panel pose is fix ed, the robot pose T O R can be estimated as follows: T O R = T O P T P M T M C T C R (2) where T O P is the panel pose in odometry frame, T P M is one marker pose in panel frame, T M C is the camera pose w .r .t. the marker and T C R is the robot fixed pose w .r .t. the camera. Further on, the means of robot position ¯ p O R and orientation ¯ q O R w .r .t. the odometry frame are estimated from multiple marker detections using Slerp . In addition, a cov ariance matrix C O R for the robot pose is computed: C O R = diag( σ 2 p x , σ 2 p y , σ 2 p z , σ 2 q φ , σ 2 q θ , σ 2 q ψ ) . (3) The full robot pose estimate T O R = h ¯ p O R , ¯ q O R i along with the respecti ve cov ariance matrix C O R is then tak en as an input for the localization filter in the final setup. Alternatively , it can be used as a ground truth value to optimize parameters, i.e., sensor biases and associated cov ariances, since it is difficult to acquire absolute global ground truth underwater . For more details refer to our work in [3]. B. Dense depth mapping and plane e xtraction 1) Depth map computation: This is a preprocessing step for the plane-based odometry computation (Sec. III-C.1). W e consider two state-of-the-art real-time dense point cloud (DPC) generators: Ef ficient Large-Scale Stereo Matching (ELAS) [16] and Dispnet [17]. ELAS is geometry based and relies on a probabilistic model built from sparse ke ypoints matches and a triangulated mesh, which is used to com- pute remaining ambiguous disparities. Since the probabilistic prior is piece wise linear , the method is rob ust against uniform textured areas. Dispnet is a data dri ven approach that uses a con volutional neural network for disparity regression. The reason to compare these methods is due to their underlying distinct approaches, which in turn offer dif ferent advantages and disadv antages. For example, ELAS has faster processing times in CPU and outputs more precise but incomplete maps when specular reflections or very large textureless areas occur . Dispnet produces a complete map ev en in the presence of image distortions, but smooths the disparity changes around object boundaries. On top of the depth estimation, it is important to in- clude techniques that reduce noise-induced artif acts com- monly present when performing outdoor stereo reconstruc- tion. Fig. 3 sho ws an example where object borders produce comet-like streaks fading away from the camera position. W e also encountered this artifact when running the system with Dispnet during sea field trials. It was observed that when the GPU RAM memory gets overloaded some layers of the Dispnet neural network produce spurious results. In order to mitigate these artifacts, the incoming point cloud is filtered by rejecting points which do not intersect with the octomap [15] representing the workspace obtained from the first navigation stage ( loose localization ). For an efficient nearest-neighbor search between point cloud and oc- tomap voxels, a kd -tree is applied to represent the workspace octomap. Consequently , a substantial amount of points is neglected (Fig. 2(g) – depth map point cloud generation) and also reduces computation cost and memory . 2) Plane extr action: Due to the noisy nature of stereo generated pointclouds, we used a region growing based technique for plane e xtraction [11]. It also outputs a co vari- ance matrix that describes the planarity of the found planes, which can then be integrated for a better estimation of the localization uncertainty (Fig. 2(g) – plane extraction-based visual odometry). Moreov er , it ef ficiently represents not only planes but found holes as polygons, which allows to reason about the quality of the data. In summary , point clouds are segmented into sev eral planes Π = { π i | i = 1 , ..., N } . Initially , an arbitrary point p 0 and its nearest neighbor p 1 form an initial set P i representing the plane π i . Then the set P i grows by finding its nearest neighbors p j and adding p j to P i if p j belongs to π i (plane test), and stops when no more points can be added. Afterwards, the process continues until all the points are distributed into a plane set P or considered as noise. C. V isual odometry The visual markers attached to the panel (Sec. III-A.2) are not alw ays observable. Therefore, further methods are beneficial to aid na vigation. Here we adapt plane-based and featured-based odometry methods to our scenario to exploit structures and features found in the environment. 1) Odometry fr om plane r e gistration: After plane segmen- tation (Sec. III-B.2), the plane normal equals to the eigen vector with smallest eigen value of Matrix A : A = Γ n ( x, x ) Γ n ( x, y ) Γ n ( x, z ) Γ n ( y , x ) Γ n ( y , y ) Γ n ( y , z ) Γ n ( z , x ) Γ n ( z , y ) Γ n ( z , z ) (4) where Γ n ( α, β ) = P n j ( α j − m α )( β j − m β ) , α, β ∈ { x, y , z } and m is the mass center of the points in plane set P i . T o update the matrix A and hence the planes normal representation as fast as possible when new points are considered, the sum of orthogonal distances Γ l ( α, β ) is updated with a new point p l +1 as follows: Γ l +1 ( α, β ) =Γ l ( α, β ) + α l +1 β l +1 − m α ( l + 1)( l +1 X j =1 p j + m α ( l + 1)) + m α ( l )( l X j =1 p j + m α ( l )) (5) Then the relative pose C R T rel in camera frame at time t and t + 1 can be calculated by the extracted planes. Here we exploit the plane registration method in [18] to estimate rotation only . As shown in Sec. IV -A experiment, in our deep-sea scenario we commonly encountered between 3 to 5 planes per frame, and at least 4 plane correspondences are necessary to estimate translation. Suppose planes extracted at time t and t + 1 be Π 1 = { 1 π i | i = 1 , ..., M } and Π 2 = { 2 π j | j = 1 , ..., N } respec- tiv ely , then the M × N candidate pairs ( 1 π i , 2 π j ) are filtered and registered by the following tests from [18], which are adapted to our deep-sea setup: • Size-Similarity T est: The Hessian matrix H of the plane parameters deriv ed from plane extraction is proportional to the number of points in the plane π . Thus, the Hessian of two matched planes should be similar , i.e., | log | 1 H i | + − l og | 2 H i | + | < ¯ L det (6) where | H i | + is the product of the singular values of H i and ¯ L det is the similarity threshold. • Cross-Angle T est: The angle between two planes ( 1 π i 1 , 1 π i 2 ) in frame 1 F should be approximately the same as the angle to the correspondent two planes ( 2 π j 1 , 2 π j 2 ) in frame 2 F , described as 1 ˆ n > i 1 1 ˆ n i 2 ≈ 2 ˆ n > j 1 2 ˆ n j 2 (7) where ˆ n k is the normal to the plane π k . • Parallel Consistency T est: T wo plane pairs ( 1 π i 1 , 2 π j 1 ) from the frames 1 F and 2 F are defined as parallel if their normals meet 1 ˆ n > i 1 1 ˆ n i 2 ≈ 1 and 2 ˆ n > j 1 2 ˆ n j 2 ≈ 1 , or 1 ˆ n > i 1 1 ˆ n i 2 ≈ − 1 and 2 ˆ n > j 1 2 ˆ n j 2 ≈ − 1 . If only one plane is extracted from the current frame, it is tested only by the Size-Similarity test because others require at least two plane correspondences. Then the filtered plane pairs are used to calculate the rotation 1 2 R between frame 1 F and 2 F by the equation: max 1 2 R ζ r = constant + S X i =1 ω i 1 ˆ n i · ( 1 2 R 2 ˆ n i ) (8) which can be solved by Dav enport’ s q-method and where w i are weights in versely proportional to the rotational un- certainty . If the rotation 1 2 R is represented as quaternion 1 2 ˆ q , Eq. 8 can be written as: max 1 2 ˆ q ζ r = S X i =1 ω i 1 2 ˆ q K 1 2 ˆ q (9) Then the cov ariance 1 2 C ˆ q ˆ q of quaternion 1 2 ˆ q is 1 2 H ˆ q ˆ q = 2( K − µ max ( K ) I ) (10a) 1 2 C ˆ q ˆ q = − 1 2 H ˆ q ˆ q + (10b) where µ max ( K ) is the maximum eigen value of K , deriv ed from Dav enport’ s q-method. The cov ariance 1 2 C ˆ q ˆ q and rotation 1 2 ˆ q are used as input for the our navigation filter . 2) F eatur e-based tracking: Whenev er there are distinctiv e and suf ficient 2D texture features in the en vironment, related methods provide a reliable and fast way to compute odom- etry . Here, ORB-SLAM2 [19] is used. It consists of three main threads: tracking, local mapping, and loop closing. Considering our application, we briefly describe the tracking process. When a ne w stereo image is input, the initial guess of the pose C R T 0 is estimated through the tracked features from the last recei ved image. Afterw ards, the pose C R T can be improv ed by conducting bundle adjustment on a memorized local map M i . Moreov er, the tracking thread also decides whether the stereo image should be an image keyframe or not. When tracking f ails, new images are matched with stored keyframes to re-localize the robot. ORB-SLAM2 was chosen because direct V O methods (DSO,LSD-SLAM) assume brightness constanc y throughout image regions [20], which seldom happens in underw ater due to light backscatter . Likewsie, visual-inertial SLAM meth- ods (VINS,OKVIS) require precise synchronization between camera and IMU [21], but by hardware design all sensors are loose-coupled in our application. D. Adaptive image quality based navigation At the end of the localization pipeline the EKF can inte- grate all the inputs based on their measurement confidence, i.e., co variance matrix. For efficienc y , it is preferable to filter out low confidence odometry values before using them for the EKF . This could be done by examining the cov ariance matrix after the vision processing. But computation time is an important factor in our real-time application. W e hence use decision criteria on the sensor (image) quality to omit visual odometry computations, which are likely to generate low confidence results. W e introduced multiple visual odometry modalities in Sec. III-C; see Fig. 2(e)(f)(g). The visual marker-based odometry , as part of the baseline inputs, is not filtered out due to its high reliability and precision. Feature tracking ORB- SLAM localization is highly dependent on image quality; textureless regions and low-contrast significantly reduce its accuracy . In contrast, plane-based odometry copes well with textureless en vironments given that there is an underlying structure. But it is very computationally demanding due to dense depth estimation and plane extraction (Sec. III-B). Based on this, we propose an image quality assessment (IQA) to reason about which visual cues to use in the localization pipeline. W e aggreg ate a non-reference image quality measure based on Minko wski Distance (MDM) [22] and the number of tracked ORB features between consecuti ve frames. The MDM provides three values in the [0 , 1] range describing the contrast distortion in the image; thus, the num- ber of ORB features is normalized based on the predefined maximum number of features to track. If each of these IQA values is defined as m I ( t ) , the final measurement for each timestamp t is their av erage m I ( t ) . Experiments in Sec. IV -B show the performance of these IQA measurements. I V . E X P E R I M E N T A L R E S U L T S The first two experiments are performed in simulation to analyze their algorithmic beha vior . The simulator ambient lighting parameters are adjusted to match conditions from the sea trials. The simulated RO V na vigates around while keeping ≈ 1 . 5 m from the OGP panel since it was found to be an optimal distance for our stereo camera baseline of 30 cm ; also a constant z -axis v alue (depth) is kept. First, we ev aluate the impact of the dense pointcloud generators, ELAS and Dispnet, on the plane extraction, registration and orientation computation (Sec. III-B.2, III- C.1). Furthermore, we study ho w the filter based on the probabilistic map generated from the first stage of our navigation scheme improves performance. The second ex- periment assesses the accuracy of the VO approaches, i.e., our plane registration and feature tracking (ORB-SLAM2), with different types of imagery . The last experiment tests our complete pipeline with real-world data from DexR O V field trials in the sea of Marseille, France (see Fig. 1). The cameras used are Point Grey Grasshoppers2 which hav e a resolution of 688 × 516 pixels and 10 Hz rate; both are in underwater housings with flat-panel that allows for image rectification using the PinAx model [23]. A. Plane se gmentation fr om dense depth maps In this first experiment, we in vestigate how the plane extraction and re gistration algorithms perform with different dense point cloud generators. T able I sho ws the experiment results. W e use as a baseline the simulated RGB-D camera av ailable in the Gazebo simulation engine, which provides ground truth depth/disparity maps. T o measure the accuracy of the stereo disparities (second column) the same principle as the 2015 KITTI stereo benchmark w as follo wed [24], all disparity dif ferences greater than 3 pixels or 5% from the ground truth are considered erroneous. The coverage score (third column) counts how man y image pixels have a valid associated disparity value; te xtureless regions reduce this value. Furthermore, we also count the number of extracted planes and holes within them (fourth and fifth column) using the method from Sec. III-B.2. Finally , the last column of T able I sho ws the orientation error computed from the plane registration, Sec. III-C.1. It is important to note that the tests are performed one more time using the probabilistic map generated from the first stage of our methodology (Fig. 2(c)) as a filter . W e draw the next conclusions from this experiment: 1) ELAS depth maps have more accurate 3D information at the cost of incomplete maps, which produce higher number of planes and holes due to the inability to connect regions corresponding to the same planes. 2) Since these redundant planes are still accurate space representations, they produce better orientation estima- tions than the complete but inaccurate Dispnet maps. 3) Filtering point clouds with the probabilistic map boosts accuracy and reduces the coverage of both methods, which validates the efficienc y of our two-stage navi- gation scheme. 4) Dispnet + Filter orientation accuracy is even greater than the one based on the simulated RGB-D camera. From our observations, Dispnet + Filter generates less planes than the RGB-D depth maps; RGB-D very high accuracy produces planes for small objects such as the panel’ s valv es which add ambiguity . 5) Thus, highly accurate point clouds (overfitting) neg a- tiv ely affects plane registration, i.e., the likelihood of incorrectly registering nearby small planes increases. 6) Based on the 367 analyzed image frames, the mean number of planes generated with Dispet+Filter per frame is 3 or 4 . B. Image quality based navigation performance Based on the pre vious experiment, we choose Dispnet as dense point cloud generator . T o analyze the strengths and weaknesses of the V O methods, the R O V circles the panel while acquiring very diverse stereo imagery . The panel has three sides with distinct purposes: valve manipulation (side 1), dextereous grasping (side 2) and te xtureless side (side T ABLE I: Dense map, plane extraction and orientation measures on simulated stereo sequences Method Accuracy Co verage Planes Holes Error [ ◦ ] RGB-D 1.0 1.0 1620 456 11 . 7 ± 3 . 3 ELAS 0.781 0.579 2708 713 16 . 2 ± 7 . 3 Dispnet 0.713 0.943 1987 123 19 . 4 ± 8 . 5 ELAS+Filter 0.854 0.468 2061 204 12 . 1 ± 6 . 4 Dispnet+Filter 0.798 0.833 1254 18 09 . 3 ± 2 . 1 3), see Fig. 4. This last panel side helps e valuating ho w the methods w ork with scarce image features, and how our image quality measure m I ( t ) from Sec. III-D ev aluates this. The expected error pose is defined as the difference between the ground-truth robot pose in simulation T O R S and the robot pose determined from visual odometry T O R V O : m ( E ) = d T O R S , T O R V O = h d ¯ p O R S , ¯ p O R V O , d ¯ q O R S , ¯ q O R V O i (11) where d ¯ p O P S , ¯ p O P V O is the Euclidean distance be- tween positions and d ¯ q O P S , ¯ q O P V O is the minimal geodesic distance between orientations. For our experi- ments, we also compute the lag-one autocorr elation m A = P t T O R F ( t ) T O R F ( t − 1) on the EKF filter predicted poses T O R F ; m A is a measure of trajectory smoothness, important to prev ent the robot from performing sudden jumps. 1) V isual odometry accuracy: First, we ev aluate the ac- curacy of the proposed V O methods. Fig. 5(top) sho ws the results, the time axis has been normalized and the error is logarithmically scaled for better readability . The orange horizontal lines indicate the time when no visual marker is detected, and the vertical red lines show when the R O V is transitioning into another side of the panel. As expected, the orientation deriv ed from the markers is the most accurate but it also presents the outliers with the largest errors, e.g., close to 0 . 1 s and 0 . 7 s in Fig. 5. The feature tracking ORB-SLAM2 method presents the greatest error but with the least v ariance. When there are very scarce features to track, such as in panel’ s side 3, the error abruptly increases until a memory-sav ed keyframe from the panel’ s side 1 is seen again; close to time 1 . 0 s . The plane registration method has similar accuracy as the visual marker -based odometry and with scarce outliers only when the R O V transitions between panel sides. During these periods the corner of the panel is seen, which is not a planar but cylindrical surface. Then, depending on the vie wpoint it will be represented with different v arious planes. These results are complemented by T able II(a), which shows the higher computational costs of the plane-based V O. During field trials, the o verall R O V perception + manipulation control system and its graphical interface hav e reported peaks of 92% GPU RAM usage; hence, using Dispnet can lead to GPU overuse and spurious 3D maps. Like wise, the slow update times of plane-based V O might limit the R O V (a) Side 1 (b) Side 2 (c) Side 3 Fig. 4: Oil&gas panel sides for (a) valve manipulation, (b) dexterous grasping, (c) and textureless re gion visualization. velocity . For these reasons, V O based on feature tracking is giv en preference when the image quality is good. 2) Image quality assessment: In this experiment, we val- idate that the proposed image quality measure m I ( t ) detects when an image has low contrast and/or lar ge uniform texture regions. This is shown in Fig. 5(bottom); m I ( t ) is the lowest when panel side 3 is in vie w and when the RO V navigates around the corners. As it can be seen, m I ( t ) mostly shows an in verse behavior than the V O accuracy with ORB-SLAM2. Based on this simulations, we set a threshold ( ≈ 0 . 45) for m I ( t ) to only trigger the computationally expensi ve plane- based VO when the image quality is poor . When using the IQA to decide which VO inputs to integrate into the localization filter ( EKF-adaptive ), we reduce the pose error and increase the smoothness of the followed trajectory , see T able II(b). Simply integrating all odometry inputs ( EKF- all ) does not boost performance as the Kalman filter does not reason about the quality of the sensor data except for examining the inputs cov ariance matrix. 10 − 1 10 0 10 1 10 2 Visual Markers ORB-SLAM2 Plane registration 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 time 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 IQA m I ( t ) Panel side 1 Panel side 2 Panel side 3 IQA m I ( t ) orientation error [deg] Fig. 5: (T op) Orientation error for different visual odometry methods. No markers detected for sampling times marked orange , and changes of panel side with a red line. (Bottom) Image quality measurement m I ( t ) per stereo pair . T ABLE II: Image quality based navigation performance V O- ORB V O- planes CPU [%] 3.2 6.8 GPU [%] 0.1 17.6 T ime [ s ] 0.145 3.151 EKF-all EKF- adpativ e ¯ m M ,F ( h ¯ p i ) [m] 0.73 ± 0.38 0.61 ± 0.14 ¯ m M ,F ( h ¯ q i ) [deg] 8.93 ± 4.22 3.02 ± 1.06 m A 0.92 0.95 (a) Computation performance (b) Pose error and traj. autocorrelation C. F ield trials localization In the following experiments, we use the visual landmarks (markers) pose estimates T O R M as ground truth since they are quite accurate and the robot global pose can be obtained from them (Sec. III-A.2). W e perform three different tests T Li explained in T able III; the corresponding results are shown in T able IV and Fig. 6. In these tests we compute the measure m M ,F ( T Li ) = d T O R M , T O R F as defined in equation 11, plus the lag-one autocorr elation m A ( T Li ) . The use of visual landmarks has shown to substantially improv e the localization filter accuracy [3] compared to using only na vigation sensors. W ith data from DexR O V sea trials, we first ev aluate this method in T L 1 and use it as reference. Fig. 6(a) shows that the majority of the largest errors occur when the robot is closer to the panel’ s corners because markers are observed from highly ske wed perspectives. Or when markers are not in view for a long period of time, e.g., in refer ence point 1 in Fig. 6(a). Of course, there can be other sources of error lik e spurious DVL measurements that affect the o verall accurac y . In test T L 2 , we use our navigation scheme based on IQA. T able IV and Fig. 6(b) sho w great reduction in the pose/orientation error ( ≈ %50) and an increase in the au- tocorrelation measure, i.e., smoother trajectories. Moreover , errors at the panel’ s corners decrease, e.g. refer ence point 1 . Howe ver , the largest errors still happen at these locations; after all, less features are observable and cylindrical corners (see Fig. 1(a)) are imperfectly modeled by planes. Finally in test T L 3 , we analyze the performance of our method without the use of visual landmarks. The objectiv e is to strive towards a more general localization filter that can function without fiducial landmarks. T able IV sho ws that although the position and orientation error increase, they are not far from T L 1 results. Furthermore, the error v ariance is significantly less; in Fig 6(c) the circles representing the pose errors ha ve a more uniform size. This is more suitable for control algorithms, i.e., w aypoint na vigation and manipulation, which need a certain response time to con ver ge into desired states. Highly v ariable measures may cause T ABLE III: Description of localization tests T Li T est Description T L 1 EKF with real-world data, using navigation sensors and visual markers. T L 2 T L 1 plus visual odometry from plane registration (Sec. III-C.1) and ORB-SLAM2 feature tracking (Sec. III-C.2); selectively used based on IQA (Sec. III-D). T L 3 T L 2 minus odometry from visual markers i.e., navigation sen- sors and image quality based VO inputs. T ABLE IV: T ests T Li measure results for position/orientation error and trajectory autocorrelation T L 1 T L 2 T L 3 ¯ m M ,F ( T Li h ¯ p i )[m] 0.65 ± 0.58 0.31 ± 0.11 0.85 ± 0.22 ¯ m M ,F ( T Li h ¯ q i )[deg] 14.65 ± 8.42 7.21 ± 2.10 11.89 ± 4.55 m A ( T Li ) 0.88 0.94 0.91 − 4 − 2 0 2 4 6 odometry x [m] − 4 − 3 − 2 − 1 0 1 2 3 4 odometry y [m] start end ref. point 1 10 − 2 10 − 1 10 0 position/orientation error (a) T L 1 - Localization using navigation sensors and visual landmarks − 4 − 2 0 2 4 6 odometry x [m] − 4 − 3 − 2 − 1 0 1 2 3 4 odometry y [m] start end 10 − 2 10 − 1 10 0 position/orientation error (b) T L 2 - Localization using navigation sensors, visual landmarks and visual odometry − 4 − 2 0 2 4 6 odometry x [m] − 4 − 3 − 2 − 1 0 1 2 3 4 odometry y [m] start end 10 − 2 10 − 1 10 0 position/orientation error (c) T L 3 - Localization using navigation sensors and visual odometry Fig. 6: Robot poses (triangles) with orientation error d ¯ q O R S , ¯ q O R F (triangle color) and position error d ¯ p O R S , ¯ p O R F (circle color and log-scaled circle radius) as the RO V circles the oil & gas panel. Only the instances where poses from visual markers T O R M can be computed are shown since these are used as ground truth. controllers to not con verge. The same adv antage can be said about the high autocorrelation values from T L 2 and T L 3 . In contrast, T L 1 variances are more than 50% of the mean error . V . C O N C L U S I O N Underwater operations are harsh due to the dynamic en vi- ronment and the limited access to the system. Howe ver , the commercial demand to dev elop these technologies increases ev ery year . One of the many challenges to tackle, and com- monly the first in the work pipeline, is the achiev ement of robust, reliable and precise localization. For this reason, we in vestigate the use of visual odometry in underwater struc- tured scenarios, especially a plane-based method adapted for underwater use with stereo processing and a standard feature based method. Furthermore, an image quality assessment is introduced that allo ws decision making to e xclude computa- tionally expensi ve visual processing, which is likely to lead to results with high uncertainty . The approach is validated in simulation and especially also in challenging field trials. R E F E R E N C E S [1] A. T al, I. Klein, and R. Katz, “Inertial navig ation system/doppler velocity log (ins/dvl) fusion with partial dvl measurements, ” Sensors , vol. 17, 2017. [2] L. Stutters, H. Liu, C. Tiltman, and D. Brown, “Navigation tech- nologies for autonomous underwater vehicles, ” Systems, Man, and Cybernetics, P art C: Applications and Reviews, IEEE T ransactions on , vol. 38, Aug 2008. [3] C. A. Mueller , T . Doernbach, A. Gomez Chavez, D. K oehntopp, and A. Birk, “Robust Continuous System Integration for Critical Deep-Sea Robot Operations Using Knowledge-Enabled Simulation in the Loop, ” in International Confer ence on Intelligent Robots and Systems , 2018. [4] A. Birk, et al. , “Dexterous underwater manipulation from onshore locations: Streamlining ef ficiencies for remotely operated underwater vehicles, ” IEEE Robotics Automation Magazine , vol. 25, Dec 2018. [5] L. Paull, S. Saeedi, M. Seto, and H. Li, “A UV navig ation and localization: A re view , ” IEEE J ournal of Oceanic Engineering , vol. 39, Jan 2014. [6] M. Morgado, P . Oliveira, and C. Silvestre, “Tightly coupled ultrashort baseline and inertial navigation system for underwater v ehicles: An experimental validation, ” Journal of F ield Robotics , vol. 30, 2013. [7] R. Campos, N. Gracias, and P . Ridao, “Underwater multi-vehicle tra- jectory alignment and mapping using acoustic and optical constraints, ” Sensors (Basel) , vol. 16, p. 387, Mar 2016. [8] K. Sukvichai, K. W ongsuwan, N. Kaewnark, and P . Wisanuvej, “Implementation of visual odometry estimation for underwater robot on ros by using raspberrypi 2, ” in 2016 International Conference on Electr onics, Information, and Communications (ICEIC) , Jan 2016. [9] M. F . Fallon, J. F olkesson, H. McClelland, and J. J. Leonard, “Re- locating underw ater features autonomously using sonar-based slam, ” IEEE Journal of Oceanic Engineering , vol. 38, July 2013. [10] P . F . Proenca and Y . Gao, “Probabilistic RGB-D Odometry based on Points, Lines and Planes Under Depth Uncertainty, ” ArXiv e-prints , June 2017. [11] J. Poppinga, N. V askevicius, A. Birk, and K. Pathak, “Fast plane detection and polygonalization in noisy 3d range images, ” in Intelligent Robots and Systems (IR OS) , 2008. [12] C. Feng, Y . T aguchi, and V . R. Kamat, “Fast plane extraction in organized point clouds using agglomerativ e hierarchical clustering, ” in 2014 IEEE International Conference on Robotics and A utomation (ICRA) , May 2014. [13] P . F . Proenc ¸ a and Y . Gao, “Fast Cylinder and Plane Extraction from Depth Cameras for V isual Odometry, ” ArXiv e-prints , Mar . 2018. [14] T . Fromm, C. A. Mueller, M. Pfingsthorn, A. Birk, and P . Di Lillo, “Efficient Continuous System Integration and V alidation for Deep-Sea Robotics Applications, ” in Oceans (Aber deen) , 2017. [15] A. Hornung, K. M. Wurm, M. Bennewitz, C. Stachniss, and W . Bur- gard, “OctoMap: An ef ficient probabilistic 3D mapping framew ork based on octrees, ” Autonomous Robots , 2013. [16] A. Geiger, M. Roser, and R. Urtasun, “Efficient large-scale stereo matching, ” in Computer V ision – A CCV 2010 , 2011. [17] N. Mayer , et al. , “ A large dataset to train con volutional networks for disparity , optical flow , and scene flow estimation, ” in IEEE Confer ence on Computer V ision and P attern Recognition (CVPR) , June 2016. [18] K. P athak, A. Birk, N. V askevicius, and J. Poppinga, “Fast registra- tion based on noisy planes with unknown correspondences for 3-d mapping, ” IEEE T ransactions on Robotics , vol. 26, 2010. [19] R. Mur-Artal and J. D. T ard ´ os, “Orb-slam2: An open-source slam system for monocular, stereo, and rgb-d cameras, ” IEEE T ransactions on Robotics , vol. 33, 2017. [20] S. Park, T . Sch ¨ ops, and M. Pollefeys, “Illumination change robustness in direct visual slam, ” in 2017 IEEE International Confer ence on Robotics and Automation (ICRA) , 2017. [21] E. M. Alexander Buyv al, Ilya Afanasyev , “Comparative analysis of ros-based monocular slam methods for indoor navigation, ” vol. 10341, 2017. [22] H. Z. Nafchi and M. Cheriet, “Efficient no-reference quality assess- ment and classification model for contrast distorted images, ” IEEE T ransactions on Br oadcasting , v ol. 64, June 2018. [23] T . Luczynski, M. Pfingsthorn, and A. Birk, “The Pinax-model for accurate and efficient refraction correction of underwater cameras in flat-pane housings, ” Ocean Engineering , v ol. 133, 2017. [24] M. Menze and A. Geiger, “Object scene flow for autonomous v ehi- cles, ” in IEEE Conference on Computer V ision and P attern Recogni- tion (CVPR) , June 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment