Characterizing Audio Adversarial Examples Using Temporal Dependency

Recent studies have highlighted adversarial examples as a ubiquitous threat to different neural network models and many downstream applications. Nonetheless, as unique data properties have inspired distinct and powerful learning principles, this pape…

Authors: Zhuolin Yang, Bo Li, Pin-Yu Chen, Dawn Song

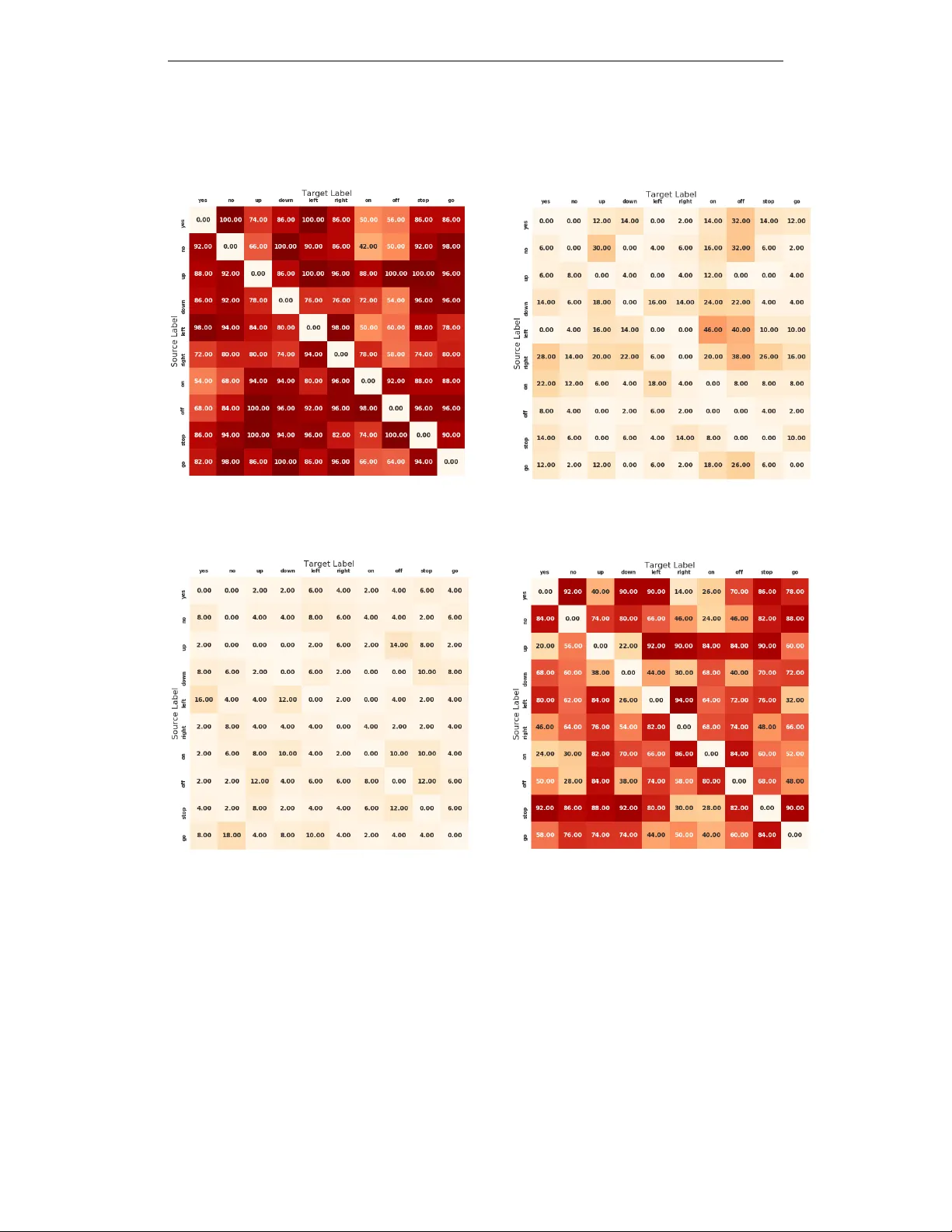

Zhuolin Yang (Shanghai Jiao Tong University), Bo Li (University of Illinois at Urbana–Champaign), Pin-Yu Chen (IBM Research), Dawn Song (UC Berkeley)

ABSTRACT

Recent studies have highlighted adversarial examples as a ubiquitous threat to different neural network models and many downstream applications. Nonetheless, as unique data properties have inspired distinct and powerful learning principles, this paper aims to explore their potential for mitigating adversarial inputs. In particular, our results reveal the importance of using the temporal dependency in audio data to gain discriminative power against adversarial examples. Tested on automatic speech recognition (ASR) tasks and three recent audio adversarial attacks, we find that (i) input transformations developed for image adversarial defense provide limited robustness improvement and are vulnerable to advanced attacks; (ii) temporal dependency can be exploited to gain discriminative power against audio adversarial examples and is resistant to the adaptive attacks considered in our experiments. Our results not only show promising means of improving the robustness of ASR systems, but also offer novel insights into exploiting domain-specific data properties to mitigate the negative effects of adversarial examples.

1 INTRODUCTION

Deep Neural Networks (DNNs) have been widely adopted in a variety of machine learning applications (Krizhevsky et al., 2012; Hinton et al., 2012; Levine et al., 2016). However, recent work has demonstrated that DNNs are vulnerable to adversarial perturbations (Szegedy et al., 2014; Goodfellow et al., 2015).
An adversary can add negligible perturbations to inputs and generate adversarial examples to mislead DNNs, a phenomenon first found in image-based machine learning tasks (Goodfellow et al., 2015; Carlini & Wagner, 2017a; Liu et al., 2017; Chen et al., 2017b;a; Su et al., 2018). Beyond images, given the wide application of DNN-based audio recognition systems such as Google Home and Amazon Alexa, audio adversarial examples have also been studied recently (Carlini & Wagner, 2018; Alzantot et al., 2018; Cisse et al., 2017; Kreuk et al., 2018). Comparing image and audio learning tasks, although their state-of-the-art DNN architectures are quite different (i.e., convolutional vs. recurrent neural networks), the attack methodology for generating adversarial examples is fundamentally the same: finding adversarial perturbations by maximizing the training loss or optimizing some designed attack objective. For example, the same attack loss function proposed in (Cisse et al., 2017) is used to generate adversarial examples in both visual and speech recognition models. Nonetheless, different types of data usually possess unique or domain-specific properties that can potentially be used to gain discriminative power against adversarial inputs. In particular, the temporal dependency in audio data is an innate characteristic that has already been widely adopted in machine learning models. However, beyond improving learning performance on natural audio examples, it is still an open question whether the temporal dependency can be exploited to help mitigate the negative effects of adversarial examples.

The focus of this paper is twofold. First, we investigate the robustness of automatic speech recognition (ASR) models under input transformation, a commonly used technique in the image domain to mitigate adversarial inputs.
Our experimental results show that four transformation techniques implemented on audio inputs, namely waveform quantization, temporal smoothing, down-sampling and autoencoder reformation, provide limited robustness improvement against the recent attack method proposed in (Athalye et al., 2018), which aims to circumvent the gradient obfuscation issue incurred by input transformations. Second, we demonstrate that temporal dependency can be used to gain discriminative power against adversarial examples in ASR. We evaluate the proposed temporal dependency method on both the LIBRIS (Graetz et al., 1986) and Mozilla Common Voice datasets against three state-of-the-art attack methods (Carlini & Wagner, 2018; Alzantot et al., 2018; Yuan et al., 2018) and show that such an approach achieves promising identification of non-adaptive and adaptive attacks. Moreover, we verify that the proposed method can resist the strong adaptive attacks we propose, in which the defense implementation is known to the attacker. Finally, we note that although this paper focuses on audio adversarial examples, the methodology of leveraging unique data properties to improve model robustness could be naturally extended to other domains. The promising results also shed new light on designing adversarial defenses against attacks on various types of data.

Related work

An adversarial example for a neural network is an input $x_{adv}$ that is similar to a natural input $x$ but yields a different output after passing through the neural network. Currently, there are two different types of attacks for generating audio adversarial examples: the Speech-to-Label attack and the Speech-to-Text attack. The Speech-to-Label attack aims to find an adversarial example $x_{adv}$ that is close to the original audio $x$ but yields a different (wrong) label. To do so, Alzantot et al. proposed a genetic algorithm (Alzantot et al., 2018), and Cisse et al. proposed a probabilistic loss function (Cisse et al., 2017). The Speech-to-Text attack requires the transcribed output of the adversarial audio to be the same as the desired output, which has been made possible by Carlini and Wagner (Carlini & Wagner, 2018) using optimization-based techniques operating on the raw waveforms. Iter et al. leveraged extracted audio features called Mel Frequency Cepstral Coefficients (MFCCs) (Iter et al., 2017). Yuan et al. demonstrated practical "wav-to-API" audio adversarial attacks (Yuan et al., 2018). Another line of research focuses on adversarial training or data augmentation to improve model robustness (Serdyuk et al., 2016; Michelsanti & Tan, 2017; Sriram et al., 2017; Sun et al., 2018), which is beyond our scope. Our proposed approach focuses on gaining discriminative power against adversarial examples through embedded temporal dependency, which is compatible with any ASR model and does not require adversarial training or data augmentation.

2 DO LESSONS FROM IMAGE ADVERSARIAL EXAMPLES TRANSFER TO AUDIO DOMAIN?

Although in recent years both image and audio learning tasks have witnessed significant breakthroughs accomplished by advanced neural networks, these two types of data have unique properties that lead to distinct learning principles. In images, the pixels entail spatial correlations corresponding to hierarchical object associations and color descriptions, which are leveraged by convolutional neural networks (CNNs) for feature extraction. In audio, the waveforms possess apparent temporal dependency, which is widely exploited by recurrent neural networks (RNNs). For the segmentation task in the image domain, spatial consistency has played an important role in improving model robustness (Lowe, 1999).
However, it remains unknown whether temporal dependency can have a similar effect of improving model robustness against audio adversarial examples. In this paper, we aim to address the following fundamental questions: (a) do lessons learned from image adversarial examples transfer to the audio domain? and (b) can temporal dependency be used to discriminate audio adversarial examples? Moreover, studying the discriminative power of temporal dependency in audio not only highlights the importance of using unique data properties for building robust machine learning models, but also aids in devising principles for investigating more complex data such as videos (spatial + temporal properties) or multimodal cases (e.g., images + texts). Here we summarize two primary findings drawn from our experimental results in Section 4.

Audio input transformation is not effective against adversarial attacks. Input transformation is a widely adopted defense technique in the image domain, owing to its low operation cost and easy integration with the existing network architecture (Luo et al., 2015; Wang et al., 2016; Dziugaite et al., 2016). Generally speaking, input transformation applies a feature transformation to the raw image in order to disrupt the adversarial perturbation before the image is passed to a neural network. Popular approaches include bit quantization, image filtering, image reprocessing, and autoencoder reformation (Xu et al., 2017; Guo et al., 2017; Meng & Chen, 2017). However, many existing methods have been shown to be bypassed by subsequent or adaptive adversarial attacks (Carlini & Wagner, 2017b; He et al., 2017; Carlini & Wagner, 2017c; Lu et al., 2018). Moreover, Athalye et al. (Athalye et al., 2018) have pointed out that input transformation may cause obfuscated gradients when generating adversarial examples and thus gives a false sense of robustness.
They also demonstrated that in many cases this gradient obfuscation issue can be circumvented, leaving input transformation still vulnerable to adversarial examples. Similarly, in our experiments we find that audio input transformations based on waveform quantization, temporal filtering, signal down-sampling or autoencoder reformation suffer from a similar weakness: the tested model with input transformation becomes fragile to adversarial examples when one adopts an attack that accounts for gradient obfuscation, as in (Athalye et al., 2018).

Temporal dependency possesses strong discriminative power against adversarial examples in automatic speech recognition. Instead of input transformation, in this paper we propose to exploit the inherent temporal dependency in audio data to discriminate adversarial examples. Tested on automatic speech recognition (ASR) tasks, we find that the proposed methodology can effectively detect audio adversarial examples while minimally affecting the recognition performance on normal examples. In addition, experimental results show that a considered adaptive adversarial attack, even when knowing every detail of the deployed temporal dependency method, cannot generate adversarial examples that bypass the proposed temporal dependency based approach.

Combining these two primary findings, we conclude that the weakness of defense techniques identified in the image case is very likely to transfer to the audio domain. On the other hand, exploiting unique data properties to develop defense methods, such as using temporal dependency in ASR, can lead to promising defense approaches that resist adaptive adversarial attacks.

3 INPUT TRANSFORMATION AND TEMPORAL DEPENDENCY IN AUDIO DATA

In this section, we introduce the effect of basic input transformations on audio adversarial examples and analyze temporal dependency in audio data.
We will also show that such temporal dependency can potentially be leveraged to discriminate audio adversarial examples.

3.1 AUDIO ADVERSARIAL EXAMPLES UNDER SIMPLE INPUT TRANSFORMATIONS

Inspired by image input transformation methods, and as a first attempt, we applied some primitive signal processing transformations to audio inputs. These transformations are useful, easy to implement and fast to operate, and they have delivered several interesting findings.

Quantization: By rounding the amplitude of each audio sample to the nearest integer multiple of $q$, the adversarial perturbation could be disrupted, since its amplitude is usually small in the input space. We choose $q = 128, 256, 512, 1024$ as our parameters.

Local smoothing: We use a sliding window of fixed length for local smoothing to reduce the adversarial perturbation. For an audio sample $x_i$, we consider the $K - 1$ samples before and after it, denoted by $[x_{i-K+1}, \ldots, x_i, \ldots, x_{i+K-1}]$, as a local reference sequence and replace $x_i$ by the smoothed value (average, median, etc.) of its reference sequence.

Down sampling: Based on sampling theory, it is possible to down-sample a band-limited audio file without sacrificing the quality of the recovered signal, while mitigating the adversarial perturbation in the reconstruction phase. In our experiments, we down-sample the original 16kHz audio data to 8kHz and then perform signal recovery.

Autoencoder: In the field of adversarial image defense, the MagNet defensive method (Meng & Chen, 2017) is an effective way to remove adversarial noise: an autoencoder is used to project the adversarial input distribution space onto the benign distribution.
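The three signal-processing transformations above (quantization, local smoothing, down sampling) can be sketched in a few lines of NumPy. This is only an illustrative sketch under stated assumptions, not the authors' implementation: the function names are hypothetical, the edge padding used at the boundaries of the smoothing window is an assumption, and the down-sampling step uses plain decimation followed by linear interpolation as a stand-in for whichever signal recovery procedure was actually used.

```python
import numpy as np


def quantize(x, q=256):
    """Round each sample to the nearest integer multiple of q (ties round up)."""
    x = np.asarray(x, dtype=np.float64)
    return np.floor(x / q + 0.5) * q


def local_smooth(x, K=4, reducer=np.median):
    """Replace x[i] by reducer([x[i-K+1], ..., x[i+K-1]]).

    Boundaries are handled by repeating the edge samples (an assumption).
    Pass reducer=np.mean for average smoothing.
    """
    x = np.asarray(x, dtype=np.float64)
    padded = np.pad(x, K - 1, mode="edge")
    windows = np.lib.stride_tricks.sliding_window_view(padded, 2 * K - 1)
    return reducer(windows, axis=-1)


def down_up_sample(x):
    """Keep every second sample (e.g. 16 kHz -> 8 kHz), then interpolate back.

    No anti-aliasing filter is applied; linear interpolation is a simple
    stand-in for the recovery step (an assumption).
    """
    x = np.asarray(x, dtype=np.float64)
    idx = np.arange(len(x))
    return np.interp(idx, idx[::2], x[::2])
```

Each function returns an array of the same length as its input, so the transformed waveform can be fed to the same recognizer as the original one.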
In our experiments, we implement a sequence-to-sequence autoencoder: the audio is cut into frame-level pieces, each piece is passed through the autoencoder, and the outputs are concatenated at the final stage. Passing the whole audio through the autoencoder directly proved ineffective and made it hard to exploit the underlying information.

Figure 1: Pipeline and example of the proposed temporal dependency (TD) based method for discriminating audio adversarial examples.

3.2 TEMPORAL DEPENDENCY BASED METHOD (TD)

Because an audio sequence has explicit temporal dependency (e.g., correlations in consecutive waveform segments), here we aim to explore whether such temporal dependency is affected by adversarial perturbations. The pipeline of the temporal dependency based method is shown in Figure 1. Given an audio sequence, we propose to select its first $k$ portion (i.e., the prefix of length $k$) as input for the ASR to obtain the transcribed result $S_k$. We also feed the whole sequence into the ASR and select the prefix of length $k$ of the transcribed result as $S_{\{whole,k\}}$, which has the same length as $S_k$. We then compare the consistency between $S_k$ and $S_{\{whole,k\}}$ in terms of a temporal dependency distance. Here we adopt the word error rate (WER) as the distance metric (Levenshtein, 1966). For a normal/benign audio instance, $S_k$ and $S_{\{whole,k\}}$ should be similar, since the ASR model is consistent across different sections of a given sequence due to its temporal dependency. However, for audio adversarial examples, since the added perturbation aims to alter the ASR output toward the targeted transcription, it may fail to preserve the temporal information of the original sequence. Therefore, due to the loss of temporal dependency, $S_k$ and $S_{\{whole,k\}}$ in this case will not produce consistent results.
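The consistency check described above can be sketched as follows. This is a minimal illustration under assumptions rather than the authors' code: `transcribe` is a hypothetical callable standing in for any ASR system, $k$ is treated as a fraction of the waveform length, $S_{\{whole,k\}}$ is taken as the first `len(S_k)` characters of the full transcription, and $S_{\{whole,k\}}$ is used as the WER reference (the text does not say which side is the reference).

```python
from typing import Callable, List, Sequence


def edit_distance(ref: List[str], hyp: List[str]) -> int:
    """Levenshtein distance between two token lists (row-by-row DP)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cur.append(min(prev[j] + 1,              # deletion
                           cur[j - 1] + 1,           # insertion
                           prev[j - 1] + (r != h)))  # substitution or match
        prev = cur
    return prev[-1]


def wer(ref: str, hyp: str) -> float:
    """Word error rate of hyp against ref."""
    ref_words, hyp_words = ref.split(), hyp.split()
    return edit_distance(ref_words, hyp_words) / max(len(ref_words), 1)


def td_score(audio: Sequence, transcribe: Callable[[Sequence], str], k: float = 0.5) -> float:
    """Temporal dependency distance between S_k and S_{whole,k}."""
    cut = int(len(audio) * k)
    s_k = transcribe(audio[:cut])        # transcription of the audio prefix
    s_whole = transcribe(audio)          # transcription of the whole audio
    s_whole_k = s_whole[:len(s_k)]       # prefix with the same length as S_k
    return wer(s_whole_k, s_k)
```

A larger score means the two transcriptions disagree more; an input would be flagged as adversarial when the score exceeds a threshold chosen on held-out data.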
Based on this hypothesis, we compare the length-$k$ prefix of the whole transcription with the transcription of the first $k$ portion of the audio to recognize potential adversarial inputs.

4 EXPERIMENTAL RESULTS

The presentation of the experimental results is organized as follows. We first introduce the datasets, target learning models, attack methods, and evaluation metrics for the different defense/detection methods that we focus on. We then discuss the defense/detection effectiveness of the different methods against each attack. Finally, we evaluate strong adaptive attacks against these defense/detection methods. We show that, due to different data properties, the autoencoder based defense cannot effectively recover the ground truth for adversarial audio and may also have negative effects on benign instances. Input transformation is less effective in defending against adversarial audio than against adversarial images. In addition, even when some input transformation is effective for recovering some adversarial audio data, we find that it is easy to perform adaptive attacks against it. The proposed TD method can effectively detect adversarial audio generated by different attacks targeting various learning tasks (classification and speech-to-text translation). In particular, we propose different types of strong adaptive attacks against the TD detection method. We show that these strong adaptive attacks are not able to generate effective adversarial audio against TD, and we provide some case studies to further understand the performance of TD.

4.1 EXPERIMENTAL SETUP

In our experiments, we measure effectiveness against several adversarial audio generation methods. For the audio classification attack, we use the Speech Commands dataset. For the speech-to-text attack, we benchmark each method on both the LibriSpeech and Mozilla Common Voice datasets.
In particular, for the Commander Song attack (Yuan et al., 2018), we measure on the generated adversarial audio provided by the authors.

Dataset

LibriSpeech dataset: LibriSpeech (Panayotov et al., 2015) is a corpus of approximately 1000 hours of 16kHz English speech derived from audiobooks from the LibriVox project. We used samples from the test-clean dataset on its website; the average duration is 4.294s. We generated adversarial examples using the attack method in (Carlini & Wagner, 2018).

Mozilla Common Voice dataset: Common Voice is a large audio dataset provided by Mozilla. This dataset is public and contains samples of human speech. We used the 16kHz-sampled data released in (Carlini & Wagner, 2018), whose average duration is 3.998s. The first 100 samples from its test dataset are used to mount attacks, which is the same attack experimental setup as in (Carlini & Wagner, 2018).

Speech Commands dataset: The Speech Commands dataset (Warden, 2018) is an audio dataset containing 65,000 audio files. Each audio file is a single command lasting one second. The commands are "yes", "no", "up", "down", "left", "right", "on", "off", "stop", and "go".

Model and learning tasks

For the speech-to-text task, we use the DeepSpeech speech-to-text transcription network, which is a biRNN based model with beam search to decode text. For the audio classification task, we use a convolutional speech commands classification model. For the Commander Song attack, we evaluate the performance on the Kaldi speech recognition platform.

Attack Methods

Genetic algorithm based attack against audio classification (GA): For the audio classification task, we consider the state-of-the-art attack proposed in (Alzantot et al., 2018). Here an audio classification model is attacked, and the audio classes include "yes", "no", "up", "down", etc. The attack aims to make the network misclassify an adversarial instance in either a targeted or an untargeted setting.
Commander Song attack against speech-to-text translation (Commander): Commander Song (Yuan et al., 2018) is a targeted speech-to-text attack that embeds the attack in audio extracted from a popular song. The adversarial audio can even be played over the air while retaining its adversarial characteristics. Since the Commander Song code is not available, we measure the effectiveness on the generated adversarial audio provided by the authors.

Optimization based attack against speech-to-text translation (Opt): We consider the targeted speech-to-text attack proposed by (Carlini & Wagner, 2018), which uses the CTC-loss of a speech recognition system as an objective function and solves the adversarial attack task as an optimization problem.

Evaluation Metrics

For defense methods such as input transformation, which aim to recover the ground truth (original instances) from adversarial instances, we use the word error rate (WER) and character error rate (CER) (Levenshtein, 1966) as evaluation metrics to measure the recovery efficiency. WER and CER are commonly used metrics that measure the error between the recovered text and the ground truth at the word level or character level. Generally speaking, the error rate (ER) is defined by $ER = \frac{S + D + I}{N}$, where $S$, $D$, $I$ are the numbers of substitutions, deletions and insertions calculated by dynamic string alignment, and $N$ is the total number of words / characters in the ground truth text. To fairly evaluate the effectiveness of these transformations against the speech-to-text attack, we also report the ratio of the translation distance between an instance and its corresponding ground truth before and after transformation.
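As a small worked example of the error rate (the sentences are made up for illustration and do not come from the datasets), take the ground truth "the cat sat on the mat" ($N = 6$ words) and the recovered text "the cat sit on mat". The minimum-cost word alignment substitutes "sat" with "sit" and deletes the second "the", with no insertions:

$$
S = 1,\quad D = 1,\quad I = 0,\quad N = 6
\qquad\Longrightarrow\qquad
\mathrm{WER} = \frac{S + D + I}{N} = \frac{1 + 1 + 0}{6} \approx 0.33 .
$$

CER is computed in the same way with characters in place of words.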
For instance, as a controlled experiment, given an audio instance $x$ (the adversarial instance is denoted as $x_{adv}$), its corresponding ground truth $y$, and the ASR function $g(\cdot)$, we calculate the effectiveness ratio for benign instances as $R_{benign} = \frac{D(g(T(x)), y)}{D(g(x), y)}$, where $T(\cdot)$ denotes the result of the transformation and $D(\cdot, \cdot)$ is the distance function (WER and CER in our case). For adversarial audio, we calculate the analogous effectiveness ratio $R_{adv} = \frac{D(g(T(x_{adv})), y)}{D(g(x_{adv}), y)}$.

For detection methods, the standard evaluation metric is the area under curve (AUC) score, which evaluates the detection efficiency. The proposed TD method is the first data-specific metric to detect adversarial audio; it focuses on how many adversarial instances are captured (true positives) without affecting benign instances (false positives). Therefore, we follow the standard criteria and report AUC for TD. For the proposed TD method, we compare the temporal dependency based on WER, CER, as well as the longest common prefix (LCP). LCP is a commonly used metric to evaluate the similarity between two strings. Given strings $b_1$ and $b_2$, the corresponding LCP is defined as $\max_{b_1[:k] = b_2[:k]} k$, where $[:k]$ represents the prefix of length $k$ of a translated sentence.

Table 1: List of adversarial audio based attacks and corresponding evaluation results for defense and detection methods

| | Classification | Speech-to-Text | Speech-to-Text | Speech-to-Text |
|---|---|---|---|---|
| Attack method | Genetic Algorithm (GA) | CommanderSong (Commander) | Opt. attack (Opt) | Opt. attack (Opt) |
| Dataset | SpeechCommand | Some popular songs | LibriSpeech | CommonVoice |
| Evaluation metric of defense | Average attack success rate | Target command recognition rate | Effectiveness ratio $R_{benign}$ / $R_{adv}$ | Effectiveness ratio $R_{benign}$ / $R_{adv}$ |
| Without defense | 84% | 100% | 1.0 / 1.0 | 1.0 / 1.0 |
| Input trans.: Down Samp. | 3.2% | 8% | 3.87 / 0.40 | 1.13 / 0.73 |
| Input trans.: Quan-256 | 2.1% | 4% | 1.13 / 0.28 | 1.56 / 0.74 |
| Input trans.: Median-4 | 2.7% | 4% | 1.18 / 0.34 | 0.98 / 0.77 |
| Autoencoder | 8.2% | - | 9.84 / 0.97 | 2.09 / 0.80 |
| Evaluation metric for detection | - | Detection rate | AUC score | AUC score |
| Detection results of TD method | - | 1.00 | 0.930 | 0.936 |

4.2 EVALUATION OF DEFENSE METHODS AGAINST ADVERSARIAL AUDIO

In this section we evaluate the autoencoder based defense and the input transformation defenses against the classification attack (GA) and the speech-to-text attacks (Commander and Opt). We summarize our work in Table 1 and list some basic results. For Commander, due to unreleased training data, we are not able to train an autoencoder. For GA and Opt we have sufficient data to train an autoencoder.

Input Transformation as Defense

Here we apply the primitive input transformations to targeted audio classification attacks and evaluate the corresponding effects. Due to space limitations, we defer the results of untargeted attacks to the supplemental materials.

GA: We first evaluate our input transformations against the audio classification attack (GA) in (Alzantot et al., 2018). We implemented their attack with 500 iterations, limited the magnitude of the adversarial perturbation to within 5 (smaller than the quantization step we used in the transformation), and generated 50 adversarial examples per attack task (more targets are shown in the supplementary material). The attack success rate is 84% on average. For ease of illustration, we use Quantization-256 as our input transformation. As observed in Figures 2 and 3, the attack success rate decreased to only 2.1%, and 63.8% of the adversarial instances were converted back to their original (true) label. We also measure the possible effects of our transformation methods on original audio: the original audio classification accuracy without our transformation is 89.2%, and it decreased slightly to 89.0% after our transformation, which means the effects of input transformation on benign instances are negligible. In addition, this shows that for classification tasks such input transformation is more effective in mitigating the negative effects of adversarial perturbation. A potential reason is that classification tasks do not rely on audio temporal dependency but focus on local features, while the speech-to-text task is harder to defend with the tested input transformations.

Figure 2: Attack success rates (%)

Figure 3: Attack success (%) after transformation

Commander: We also evaluate our input transformation method against the Commander Song attack (Yuan et al., 2018), which implemented an Air-to-API adversarial attack. In the paper, the authors reported a 91% attack detection rate using their defense method. We measured our Quan-256 input transformation on 25 adversarial examples obtained via personal communications. Based on the same detection evaluation metric as in (Yuan et al., 2018) [footnote 1], Quan-256 attains a 100% detection rate, characterizing all the adversarial examples.

Opt: Here we consider the state-of-the-art audio attack proposed in (Carlini & Wagner, 2018). We separately choose 50 audio files from each of two audio datasets (Common Voice, LIBRIS) and generate attacks based on the CTC-loss. We evaluate several primitive signal processing methods as input transformations under the WER and CER metrics in Tables A1 and A2. We then also evaluate the WER and CER based effectiveness ratio mentioned before to quantify the effectiveness of each transformation. $R_{benign}$ is shown in brackets in the first two columns of Tables A1 and A2, while $R_{adv}$ is shown in brackets in the last two columns of those tables. We compute our results using both the ground truth and the adversarial target "This is an adversarial example" as references.
Here an $R_{benign}$ close to 1 indicates that the transformation has little effect on benign instances, and a small $R_{adv}$ indicates that the transformation is effective at recovering adversarial audio back to benign. Tables A1 and A2 show that most of the input transformations (e.g., Median-4, Downsampling and Quan-256) effectively reduce the adversarial perturbation without affecting the original audio too much. Although these input transformations show a certain effectiveness in defending against adversarial audio, we show in Section 4.4 that it is still possible to generate adversarial audio with an adaptive attack.

Autoencoder as Defense

For defending against (non-adaptive) adversarial images, MagNet (Meng & Chen, 2017) has achieved promising performance by using an autoencoder to mitigate the adversarial perturbation. Inspired by it, here we apply a similar autoencoder structure to audio and test whether such an input transformation can be used to defend against adversarial audio. We apply a MagNet-like method to the feature-extracted audio spectrum map: we build an encoder to compress the information of the original audio features into a latent vector $z$, then use $z$ for reconstruction by passing it through a decoder network at the frame level, and combine the frames to obtain the transformed audio (Hsu et al., 2017). Here we analyze the performance of the autoencoder transformation against both the GA and Opt attacks. We find that MagNet, which achieved great effectiveness in defending against adversarial images in the oblivious attack setting (Carlini & Wagner, 2017c; Lu et al., 2018), has limited effect on audio defense.

GA: As shown in Table 1, against the classification attack the autoencoder did not perform well: it only reduced the attack success rate to 8.2%, which is worse than the other input transformation methods.
Since the attack success rate can be reduced to 10% simply by destroying the original audio data and reducing the classifier to random guessing, it is hard to say that the autoencoder method performs well.

Opt: We find that the autoencoder does not work well for transforming benign instances (57.6 WER on Common Voice compared to 27.5 WER without transformation, and 30.0 WER on LIBRIS compared to 12.4 WER without transformation) and also fails to recover adversarial audio (76.5 WER on Common Voice and 99.4 WER on LIBRIS). This shows that the non-adaptive additive adversarial perturbation can bypass the MagNet-like autoencoder on audio, which implies different robustness implications for image and audio data.

4.3 EVALUATION OF TD DETECTION METHOD AGAINST ADVERSARIAL AUDIO

In this section, we evaluate the proposed TD detection method on different attacks. We first report the AUC for detecting different attacks with TD to demonstrate its effectiveness, and then provide additional analysis and examples to help better understand TD. We only evaluate our TD method on the speech-to-text attacks (Commander and Opt), because each audio file in the Speech Commands dataset used for the classification attack is a single command lasting one second, and thus its temporal dependency is not obvious.

Commander: For the Commander Song attack, we directly examine whether the generated adversarial audio is consistent with its prefix of length $k$ or not. Using the TD method with $k = 1/2$, all the generated adversarial samples showed inconsistency and thus were successfully detected.

Footnote 1: The authors set the detection threshold to be 0 and we used the same setting here.

Opt: Here we show the empirical performance of distinguishing adversarial audio by leveraging the temporal dependency of audio data. In the experiments, we use three metrics, WER, CER and LCP, to measure the inconsistency between $S_k$ and $S_{\{whole,k\}}$.
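Of the three metrics named above, LCP is the simplest to state in code. The sketch below is illustrative only: the function name is hypothetical, and the comparison is done at the character level, which is an assumption (the definition would apply equally to word tokens).

```python
def longest_common_prefix(b1: str, b2: str) -> int:
    """Largest k such that b1[:k] == b2[:k] (character level)."""
    k = 0
    for c1, c2 in zip(b1, b2):
        if c1 != c2:
            break
        k += 1
    return k
```

For a benign input the two transcriptions share a long prefix, while for an adversarial input they typically diverge within the first few characters, so a small LCP relative to the transcription length suggests an inconsistency.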
As a baseline, we also directly train a one-layer LSTM with 64 hidden feature dimensions for classification on the collected adversarial and benign audio instances. Some examples of translated results for benign and adversarial audio are shown in Table 2. Here we consider three types of adversarial targets: short (hey google), medium (this is an adversarial example), and long (hey google please cancel my medical appointment). We report the AUC scores of these detection results for $k = 1/2$ in Table 3.

Table 2: Examples of the temporal dependency based detection method

| Type | Transcribed results |
|---|---|
| Original | then good bye said the rats and they went home |
| The first half of Original | then good bye said the raps |
| Adversarial (short) | hey google |
| First half of Adversarial | he is |
| Adversarial (medium) | this is an adversarial example |
| First half of Adversarial | thes on adequate |
| Adversarial (long) | hey google please cancel my medical appointment |
| First half of Adversarial | he goes cancer |

We can see that, using WER as the detection metric, the temporal dependency based method achieves an AUC as high as 0.936 on Common Voice and 0.930 on LIBRIS. We also explore different values of $k$ and observe that the results do not vary much (detailed results can be found in Table A6 in the Appendix). When $k = 4/5$, the AUC score based on CER reaches 0.969, which shows that the temporal dependency based method is indeed promising for distinguishing adversarial instances. Interestingly, these results suggest that the temporal dependency based method is an easy-to-implement but effective way of characterizing adversarial audio attacks.

4.4 ADAPTIVE ATTACKS AGAINST DEFENSE AND DETECTION METHODS

In this section we evaluate several adaptive attacks against the defense and detection methods.
Since the autoencoder based defense almost entirely fails to defend against the different attacks, we focus here on the input transformation based defenses and TD detection. Given that Opt is the strongest attack considered, we mainly use Opt to perform adaptive attacks against the speech-to-text translation task. The structure of our experiments is listed in Table 4. For full results, please refer to the Appendix.

Adaptive Attacks Against Input Transformations. Here we apply adaptive attacks against the preceding input transformations and thereby evaluate their robustness as defenses. We implemented adaptive attacks against three input transformation methods: quantization, local smoothing, and downsampling. For these transformations, we leverage a gradient-masking aware approach to generate adaptive attacks.

In the optimization based attack (Carlini & Wagner, 2018), the attack is achieved by solving the optimization problem $\min_{\delta} \|\delta\|_2^2 + c \cdot l(x + \delta, t)$, where $\delta$ is the perturbation, $x$ the benign audio, $t$ the target phrase, and $l(\cdot)$ the CTC loss. The parameter $c$ is iterated to trade off the importance of being adversarial against remaining close to the original instance. For the quantization transformation, we assume the adversary knows the quantization parameter $q$. We then change the targeted attack objective to $\min_{\delta} \|q\delta\|_2^2 + c \cdot l(x + q\delta, t)$. With this change, all the adversarial audio is resistant to the quantization transformation, at the cost of only a small increase in perturbation magnitude, which human ears can ignore. When $q$ is large enough, the distortion increases, but the transformation itself also becomes ineffective because too much information is lost.

For the downsampling transformation, the adaptive attack is conducted by performing the attack on the sampled elements of the original audio sequence.
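To illustrate why attacking only the sampled elements defeats downsampling, the NumPy sketch below places a perturbation exclusively on the samples that survive the transformation and checks that it passes through untouched. It is a toy illustration under loud assumptions: downsampling is modeled as naive decimation by a factor of 2 without any anti-aliasing filter, the perturbation is random rather than optimized against a CTC loss, and the helper name `downsample` is hypothetical.

```python
import numpy as np


def downsample(x, factor=2):
    """Naive decimation: keep every `factor`-th sample (no anti-alias filter)."""
    return x[::factor]


rng = np.random.default_rng(0)
x = rng.integers(-2000, 2000, size=16).astype(np.float64)  # toy benign audio

# Stand-in for a perturbation optimized only on the samples that are kept.
delta_kept = rng.normal(scale=5.0, size=downsample(x).shape)
delta = np.zeros_like(x)
delta[::2] = delta_kept  # discarded samples are left unperturbed

adv = x + delta
survived = downsample(adv) - downsample(x)
print(np.allclose(survived, delta_kept))  # does the perturbation survive?
```

Because decimation is a linear selection of samples, the transformed adversarial audio equals the transformed benign audio plus the full perturbation. A resampler that low-pass filters first would still be linear and differentiable, but the perturbation would then be attenuated rather than preserved exactly.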
Since the whole process is differentiable, we can perform the adaptive attack directly through the gradient, and all the resulting adversarial audio attacks successfully.

For the local smoothing transformation, average smoothing is also differentiable, so the gradient can be passed through effectively. To attack the median smoothing transformation, we route the gradient back to the median element and update its value, which is similar to back-propagation through a max-pooling layer. Under the adaptive attack, all the smoothing transformations are shown to be ineffective.

Table 3: AUC results of the proposed temporal dependency method

| Dataset | LSTM | TD (WER) | TD (CER) | TD (LCP ratio) |
|---|---|---|---|---|
| Common Voice | 0.712 | 0.936 | 0.916 | 0.859 |
| LIBRIS | 0.645 | 0.930 | 0.933 | 0.806 |

Table 4: Evaluation of adaptive attacks. Attack method: optimization based attack (Opt).

| | LibriSpeech | Common Voice |
|---|---|---|
| Adaptive attack against input transformation defenses (attack success rate) | | |
| Down Samp. | 92% | 90% |
| Quan-256 | 98% | 100% |
| Median-4 | 98% | 96% |
| Adaptive attack against TD detection (AUC score unless noted) | | |
| Detection results of TD method | 0.930 | 0.936 |
| Segment attack | 2% success rate | 2% success rate |
| Concatenation attack | Failed | Failed |
| Combination attack under both random $k_A$ and $k_D$ | 0.873 | 0.877 |

We randomly chose 50 audio samples each from the LIBRIS and Common Voice datasets. We ran our adaptive attack on these samples and passed the results through the corresponding input transformation. As input transformations, we use downsampling from 16 kHz to 8 kHz, median/average smoothing with one-sided sequence length $K = 4$, and quantization with $q = 256$. In (Carlini & Wagner, 2018), decibels (a logarithmic scale that measures the relative loudness of an audio sample) are used to measure the magnitude of the perturbation: $dB(x) = \max_i 20 \cdot \log_{10}(x_i)$, where $x$ is the sampled sequence of the adversarial audio.
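The decibel measure above can be sketched as follows, together with the relative distortion of a perturbation with respect to the audio, which Carlini & Wagner (2018) define as the difference of the two levels. This is a minimal sketch rather than the reference implementation: it assumes the absolute value of each sample is taken before the logarithm (the formula as printed omits it, but raw audio samples can be negative) and adds a small floor `eps` to avoid taking the logarithm of zero. The function names `db` and `relative_db` are hypothetical.

```python
import numpy as np


def db(x, eps=1e-12):
    """Peak level in decibels: max_i 20 * log10(|x_i|) (absolute value assumed)."""
    peak = np.max(np.abs(np.asarray(x, dtype=np.float64)))
    return 20.0 * np.log10(max(peak, eps))


def relative_db(delta, x):
    """Distortion of perturbation `delta` relative to audio `x`, in dB."""
    return db(delta) - db(x)


# Toy example: audio peaking at 10000 with a perturbation peaking at 100.
x = np.array([1200, -10000, 5300, 40])
delta = np.array([-100, 35, 60, -20])
print(relative_db(delta, x))
```

More negative values indicate a quieter perturbation relative to the original audio.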
The relative perturbation is calculated as $dB_x(\delta) = dB(\delta) - dB(x)$, where $\delta$ is the crafted adversarial noise. We measure our adaptive attacks by the same criterion. All the adaptive attacks become effective with reasonable perturbation, as shown in Table 6. As suggested in (Carlini & Wagner, 2018), almost all the adversarial audio has distortion $dB_x(\delta)$ between -15 dB and -45 dB, which is tolerable to human ears. In Table 6, the added perturbations are mostly within this range.

Adaptive Attacks Against Temporal Dependency Based Method. To thoroughly evaluate the robustness of the temporal dependency based method, we also perform strong adaptive attacks against it. Notably, even if the adversary knows k, the adaptive attack is hard to conduct because the process is non-differentiable. We therefore propose three types of strong adaptive attacks to explore the robustness of the temporal dependency based method.

Segment attack: Given knowledge of k, we first split the audio into two parts: the prefix of length k, $S_k$, and the rest, $S_{k^-}$. We then apply a similar attack that adds perturbation only to $S_k$. The hope is that the audio can be attacked successfully without changing $S_{k^-}$, since the second part receives no gradient updates. In that case, when performing the temporal dependency based consistency check, $T(S_k)$ would be transcribed consistently with $T(S_{\{whole,k\}})$.

Concatenation attack: To maximally leverage the information of k, we propose two ways to attack $S_k$ and $S_{k^-}$ individually and then concatenate them.
1. The target of $S_k$ is the first k-portion of the adversarial target, and $S_{k^-}$ is attacked toward the rest.
2. The target of $S_k$ is the whole adversarial target, while $S_{k^-}$ is attacked to be silence, meaning that $S_{k^-}$ transcribes to nothing.
This differs from the segment attack, where $S_{k^-}$ is not modified at all.
Combination attack: To balance the attack success rate for both the section and the whole sentence against TD, we use the attack objective $\min_{\delta} \|\delta\|_2^2 + c \cdot \big(l(x + \delta, t) + l((x + \delta)_k, t_k)\big)$, where $x$ refers to the whole sentence.

For the segment attack, we found that in most cases the attack cannot succeed: the attack success rate remains at 2% for 50 samples in both the LIBRIS and Common Voice datasets. Some examples are shown in the Appendix. We conjecture the reasons are: 1. $S_k$ alone is not enough to be attacked into the adversarial target due to the temporal dependency; 2. the speech recognition results on $S_{k^-}$ cannot be applied to the whole recognition process and therefore break the recognition process for $S_k$.

Table 5: AUC of detecting Combination Attack based on TD method

| Combination attack parameter $k_A$ | Detection parameter $k_D$ | WER | CER | LCP |
|---|---|---|---|---|
| $\{1/2\}$ | 1/2 | 0.607 | 0.518 | 0.643 |
| $\{1/2\}$ | 2/3 | 0.957 | 0.965 | 0.881 |
| $\{1/2\}$ | Rand(0.2, 0.8) | 0.889 | 0.882 | 0.776 |
| $\{1/2, 2/3, 3/4\}$ | 1/2 | 0.665 | 0.682 | 0.604 |
| $\{1/2, 2/3, 3/4\}$ | 2/3 | 0.653 | 0.664 | 0.564 |
| $\{1/2, 2/3, 3/4\}$ | 3/4 | 0.633 | 0.653 | 0.601 |
| $\{1/2, 2/3, 3/4\}$ | Rand(0.2, 0.8) | 0.785 | 0.832 | 0.642 |

Table 6: The $dB_x(\delta)$ evaluation of adaptive attacks

| Dataset | Non-adaptive | Downsample | Quantization-256 | Median-4 | Average-4 |
|---|---|---|---|---|---|
| LIBRIS | -36.06 | -21.42 | -11.02 | -23.58 | -25.64 |
| Common Voice | -35.65 | -20.91 | -9.48 | -23.42 | -25.12 |

For the concatenation attack, we also found that the attack itself fails. That is, the transcribed result of $adv(S_k) + adv(S_{k^-})$ differs from the transcription of $S_k + S_{k^-}$. Some examples are shown in the Appendix.

The failure of the concatenation adaptive attack shows more explicitly that temporal dependency plays an important role in audio. Even if the separate parts are successfully attacked into the target, concatenating them breaks the perturbation and therefore renders the adaptive attack ineffective.
In contrast, such concatenation has a negligible effect on benign audio instances, which provides a promising direction for detecting adversarial audio.

For the combination attack, we vary the section portion $k_D$ used by TD and evaluate the cases where the adaptive attacker uses the same or a different section $k_A$. We define Rand(a, b) as uniform sampling from [a, b]. We also consider a stronger attacker, for whom $k_A$ can be a set containing random sections. The detection results for the different settings are shown in Table 5. From the results we can see that when $|k_A| = 1$, if the attacker uses the same $k_A$ as $k_D$, the attack achieves relatively good performance, whereas if the attacker uses a different $k_A$, the attack fails, with AUC above 0.85. We also evaluate the case where the defender randomly samples $k_D$ during detection and find that it is very hard for the adaptive attacker to succeed, which can improve model robustness in practice. For $|k_A| > 1$, the attacker achieves some success when the set contains $k_D$, but as $|k_A|$ increases, the attacker's performance becomes worse. The complete results are given in the Appendix. Notably, the random-sample based TD appears to be robust in all cases.

5 CONCLUSION

This paper proposes to exploit the temporal dependency property of audio data to characterize audio adversarial examples. Our experimental results show that while four primitive input transformations on audio fail to withstand adaptive adversarial attacks, temporal dependency is resistant to these attacks. We also demonstrate the power of temporal dependency for characterizing adversarial examples generated by three state-of-the-art audio adversarial attacks. The proposed method is easy to operate and does not require model retraining. We believe our results shed new light on exploiting unique data properties toward adversarial robustness.
REFERENCES

Moustafa Alzantot, Bharathan Balaji, and Mani Srivastava. Did you hear that? Adversarial examples against automatic speech recognition. arXiv preprint arXiv:1801.00554, 2018.

Anish Athalye, Nicholas Carlini, and David Wagner. Obfuscated gradients give a false sense of security: Circumventing defenses to adversarial examples. arXiv preprint, 2018.

Nicholas Carlini and David Wagner. Towards evaluating the robustness of neural networks. In IEEE Symposium on Security and Privacy, 2017a.

Nicholas Carlini and David Wagner. Adversarial examples are not easily detected: Bypassing ten detection methods. In ACM Workshop on Artificial Intelligence and Security, pp. 3–14, 2017b.

Nicholas Carlini and David Wagner. MagNet and "Efficient defenses against adversarial attacks" are not robust to adversarial examples. arXiv preprint, 2017c.

Nicholas Carlini and David Wagner. Audio adversarial examples: Targeted attacks on speech-to-text. arXiv preprint, 2018.

Hongge Chen, Huan Zhang, Pin-Yu Chen, Jinfeng Yi, and Cho-Jui Hsieh. Show-and-fool: Crafting adversarial examples for neural image captioning. arXiv preprint arXiv:1712.02051, 2017a.

Pin-Yu Chen, Yash Sharma, Huan Zhang, Jinfeng Yi, and Cho-Jui Hsieh. EAD: Elastic-net attacks to deep neural networks via adversarial examples. arXiv preprint arXiv:1709.04114, 2017b.

Moustapha Cisse, Yossi Adi, Natalia Neverova, and Joseph Keshet. Houdini: Fooling deep structured prediction models. arXiv preprint arXiv:1707.05373, 2017.

Gintare Karolina Dziugaite, Zoubin Ghahramani, and Daniel M Roy. A study of the effect of JPG compression on adversarial images. arXiv preprint, 2016.

Ian Goodfellow, Jonathon Shlens, and Christian Szegedy. Explaining and harnessing adversarial examples. In ICLR, 2015.

RD Graetz, Roger P Pech, MR Gentle, and JF O'Callaghan. The application of Landsat image data to rangeland assessment and monitoring: the development and demonstration of a land image-based resource information system (LIBRIS). Rangeland Journal, 1986.

Chuan Guo, Mayank Rana, Moustapha Cissé, and Laurens van der Maaten. Countering adversarial images using input transformations. arXiv preprint arXiv:1711.00117, 2017.

Warren He, James Wei, Xinyun Chen, Nicholas Carlini, and Dawn Song. Adversarial example defenses: Ensembles of weak defenses are not strong. arXiv preprint, 2017.

Geoffrey Hinton, Li Deng, Dong Yu, George E Dahl, Abdel-rahman Mohamed, Navdeep Jaitly, Andrew Senior, Vincent Vanhoucke, Patrick Nguyen, Tara N Sainath, et al. Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Processing Magazine, 29(6):82–97, 2012.

Wei Ning Hsu, Yu Zhang, and James Glass. Unsupervised domain adaptation for robust speech recognition via variational autoencoder-based data augmentation. arXiv preprint arXiv:1707.06265, 2017.

Dan Iter, Jade Huang, and Mike Jermann. Generating adversarial examples for speech recognition. Technical Report, 2017.

Felix Kreuk, Yossi Adi, Moustapha Cisse, and Joseph Keshet. Fooling end-to-end speaker verification by adversarial examples. arXiv preprint arXiv:1801.03339, 2018.

Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. ImageNet classification with deep convolutional neural networks. In NIPS, pp. 1097–1105, 2012.

V. I. Levenshtein. Binary codes capable of correcting deletions, insertions and reversals. Soviet Physics Doklady, 10(1):845–848, 1966.

Sergey Levine, Chelsea Finn, Trevor Darrell, and Pieter Abbeel. End-to-end training of deep visuomotor policies. JMLR, 17(39):1–40, 2016.

Yanpei Liu, Xinyun Chen, Chang Liu, and Dawn Song. Delving into transferable adversarial examples and black-box attacks. In ICLR, 2017.

David G Lowe. Object recognition from local scale-invariant features. In Computer Vision, 1999. The Proceedings of the Seventh IEEE International Conference on, volume 2, pp. 1150–1157. IEEE, 1999.

Pei-Hsuan Lu, Pin-Yu Chen, Kang-Cheng Chen, and Chia-Mu Yu. On the limitation of MagNet defense against $L_1$-based adversarial examples. arXiv preprint arXiv:1805.00310, 2018.

Yan Luo, Xavier Boix, Gemma Roig, Tomaso Poggio, and Qi Zhao. Foveation-based mechanisms alleviate adversarial examples. arXiv preprint, 2015.

Dongyu Meng and Hao Chen. MagNet: a two-pronged defense against adversarial examples. In Proceedings of the 2017 ACM SIGSAC Conference on Computer and Communications Security, pp. 135–147. ACM, 2017.

Daniel Michelsanti and Zheng-Hua Tan. Conditional generative adversarial networks for speech enhancement and noise-robust speaker verification. arXiv preprint, 2017.

V. Panayotov, G. Chen, D. Povey, and S. Khudanpur. Librispeech: An ASR corpus based on public domain audio books. In 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5206–5210, 2015.

Dmitriy Serdyuk, Kartik Audhkhasi, Philémon Brakel, Bhuvana Ramabhadran, Samuel Thomas, and Yoshua Bengio. Invariant representations for noisy speech recognition. arXiv preprint arXiv:1612.01928, 2016.

Anuroop Sriram, Heewoo Jun, Yashesh Gaur, and Sanjeev Satheesh. Robust speech recognition using generative adversarial networks. arXiv preprint arXiv:1711.01567, 2017.

Dong Su, Huan Zhang, Hongge Chen, Jinfeng Yi, Pin-Yu Chen, and Yupeng Gao. Is robustness the cost of accuracy? A comprehensive study on the robustness of 18 deep image classification models. arXiv preprint arXiv:1808.01688, 2018.

Sining Sun, Ching-Feng Yeh, Mari Ostendorf, Mei-Yuh Hwang, and Lei Xie. Data augmentation with adversarial examples for robust speech recognition. ResearchGate, 2018.
Christian Szegedy, Wojciech Zaremba, Ilya Sutskever, Joan Bruna, Dumitru Erhan, Ian Goodfellow, and Rob Fergus. Intriguing properties of neural networks. In ICLR, 2014.

Qinglong Wang, Wenbo Guo, II Ororbia, G Alexander, Xinyu Xing, Lin Lin, C Lee Giles, Xue Liu, Peng Liu, and Gang Xiong. Using non-invertible data transformations to build adversarial-robust neural networks. arXiv preprint, 2016.

Pete Warden. Speech commands: A dataset for limited-vocabulary speech recognition. arXiv preprint arXiv:1804.03209, 2018.

Weilin Xu, David Evans, and Yanjun Qi. Feature squeezing: Detecting adversarial examples in deep neural networks. arXiv preprint, 2017.

Xuejing Yuan, Yuxuan Chen, Yue Zhao, Yunhui Long, Xiaokang Liu, Kai Chen, Shengzhi Zhang, Heqing Huang, Xiaofeng Wang, and Carl A Gunter. CommanderSong: A systematic approach for practical adversarial voice recognition. arXiv preprint arXiv:1801.08535, 2018.

APPENDIX

5.1 RESULTS ON "AUTOENCODER TRANSFORMATION METHOD FOR SPEECH-TO-TEXT ATTACK" AND "PRIMITIVE TRANSFORMATION FOR SPEECH-TO-TEXT ATTACK"

Table A1: Evaluation on Common Voice with language model

| Transformation methods | OriginWER(%) | OriginCER(%) | AdvWER(%) | AdvCER(%) |
|---|---|---|---|---|
| Without transformations | 27.5 | 14.3 | 95.9 | 80.1 |
| Autoencoder | 57.6 (2.09) | 34.1 (2.38) | 76.5 (0.80) | 49.8 (0.62) |
| Median-4 | 27.0 (0.98) | 14.6 (1.02) | 73.6 (0.77) | 42.4 (0.53) |
| Downsample | 31.2 (1.13) | 17.6 (1.23) | 69.6 (0.73) | 41.2 (0.51) |
| Quan-128 | 34.4 (1.25) | 21.3 (1.49) | 75.9 (0.79) | 45.3 (0.57) |
| Quan-256 | 42.9 (1.56) | 26.7 (1.87) | 70.7 (0.74) | 41.8 (0.52) |
| Quan-512 | 52.4 (1.90) | 37.1 (2.59) | 68.5 (0.71) | 45.0 (0.56) |
| Quan-1024 | 62.4 (2.27) | 47.2 (3.3) | 70 (0.73) | 51.2 (0.64) |

Table A2: Evaluation on LIBRIS with language model

| Transformation methods | OriginWER(%) | OriginCER(%) | AdvWER(%) | AdvCER(%) |
|---|---|---|---|---|
| Without transformations | 3.05 | 1.46 | 102.8 | 86.5 |
| Autoencoder | 30.0 (9.84) | 15.1 (10.34) | 99.4 (0.97) | 58.1 (0.67) |
| Median-4 | 3.6 (1.18) | 1.7 (1.16) | 35.1 (0.34) | 19.0 (0.22) |
| Downsample | 11.8 (3.87) | 5.7 (3.90) | 41.2 (0.40) | 21.8 (0.25) |
| Quan-128 | 3.2 (1.04) | 1.5 (1.03) | 49.7 (0.48) | 28.2 (0.33) |
| Quan-256 | 3.5 (1.13) | 1.7 (1.16) | 29.1 (0.28) | 15.4 (0.18) |
| Quan-512 | 12.0 (3.93) | 6.6 (4.52) | 25.1 (0.24) | 13.3 (0.15) |
| Quan-1024 | 30.7 (10.06) | 20.3 (13.90) | 36.6 (0.36) | 24.1 (0.28) |

Table A3: Evaluation on Common Voice without passing through language model

| Transformation methods | OriginWER(%) | OriginCER(%) | AdvWER(%) | AdvCER(%) |
|---|---|---|---|---|
| Without transformations | 37.7 | 18.5 | 95.8 | 83.0 |
| Median-4 | 43.4 (1.15) | 20.4 (1.10) | 83.0 (0.87) | 46.5 (0.56) |
| Down sampling | 47.2 (1.25) | 23.3 (1.26) | 77.6 (0.81) | 43.9 (0.53) |
| Quantization-128 | 47.3 (1.25) | 25.7 (1.39) | 80.7 (0.84) | 49.0 (0.59) |
| Quantization-256 | 52.5 (1.39) | 29.2 (1.58) | 73.4 (0.77) | 43.6 (0.53) |
| Quantization-512 | 64.1 (1.70) | 37.5 (2.03) | 73.7 (0.77) | 44.2 (0.53) |
| Quantization-1024 | 72.1 (1.91) | 50.4 (2.72) | 76.9 (0.80) | 53.0 (0.64) |

Table A4: Evaluation on LIBRIS without passing through language model

| Transformation methods | OriginWER(%) | OriginCER(%) | AdvWER(%) | AdvCER(%) |
|---|---|---|---|---|
| Without transformations | 12.4 | 7.05 | 105.3 | 91.7 |
| Median-4 | 16.4 (1.32) | 8.0 (1.13) | 57.9 (0.55) | 27.5 (0.30) |
| Downsample | 24.2 (1.95) | 13.0 (1.84) | 60.9 (0.58) | 31.2 (0.34) |
| Quantization-128 | 13.4 (1.08) | 7.6 (1.08) | 66.1 (0.63) | 37.1 (0.40) |
| Quantization-256 | 16.3 (1.31) | 8.9 (1.26) | 48.6 (0.46) | 24.0 (0.26) |
| Quantization-512 | 27.5 (2.21) | 13.8 (1.96) | 47.0 (0.45) | 23.0 (0.25) |
| Quantization-1024 | 46.8 (3.77) | 25.4 (3.60) | 52.3 (0.50) | 30.0 (0.33) |

5.2 MORE RESULTS ON PRIMITIVE TRANSFORMATION METHOD FOR AUDIO CLASSIFICATION ATTACK

Figure A1: Successful attack rates

Figure A2: Unchanged label rates

Figure A3: Successful attack rates after transformation

Figure A4: Unchanged label rates after transformation

5.3 MORE RESULTS ON ADAPTIVE ATTACKS AGAINST TEMPORAL DEPENDENCY BASED METHOD

Table A5: Examples of Segment Attack and Concatenation Attack

| Type | Transcribed results |
|---|---|
| Original | and he leaned against the wa lost in reverie y |
| The first half of Original | and he leaned against the wa |
| Adaptive attack target | this is an adversarial example |
| Adaptive attack result | this is an adversarial losin ver |
| The first half of Adv. | this is a agamsa |
| Adaptive attack target | okay google please cancel my medical appointment |
| Adaptive attack result | okay google please cancel my medcalosin ver |
| The first half of Adv. | okay go please |
| Original | why one morning there came a quantity of people and set to work in the loft |
| Attack target | this is an adversarial example |
| $S_k$ | this is an |
| $S_{k^-}$ | adversarial example |
| $S_k + S_{k^-}$ | this is a quantity of people and set to work in a lift |
| $S_k$ | this is an adversarial example |
| $S_{k^-}$ | sil |
| $S_k + S_{k^-}$ | this is an adernari eanquatete of pepl and sat to work in the loft |

Table A6: AUC scores of different k

| k | WER | CER | LCP |
|---|---|---|---|
| 1/2 | 0.930 | 0.933 | 0.806 |
| 2/3 | 0.930 | 0.948 | 0.826 |
| 3/4 | 0.933 | 0.938 | 0.839 |
| 4/5 | 0.955 | 0.969 | 0.880 |
| 5/6 | 0.941 | 0.962 | 0.858 |

Table A7: AUC of detecting Combination Attack based on TD method

| Combination attack parameter $k_A$ | Detection parameter $k_D$ | WER | CER | LCP |
|---|---|---|---|---|
| $\{1/2\}$ | 1/2 | 0.607 | 0.518 | 0.643 |
| $\{1/2\}$ | 2/3 | 0.957 | 0.965 | 0.881 |
| $\{1/2\}$ | 3/4 | 0.943 | 0.951 | 0.875 |
| $\{1/2\}$ | Rand(0.2, 0.8) | 0.889 | 0.882 | 0.776 |
| $\{2/3\}$ | 1/2 | 0.932 | 0.912 | 0.860 |
| $\{2/3\}$ | 2/3 | 0.611 | 0.543 | 0.604 |
| $\{2/3\}$ | 3/4 | 0.956 | 0.944 | 0.872 |
| $\{2/3\}$ | Rand(0.2, 0.8) | 0.879 | 0.890 | 0.762 |
| $\{1/2, 2/3\}$ | 1/2 | 0.633 | 0.690 | 0.552 |
| $\{1/2, 2/3\}$ | 2/3 | 0.536 | 0.615 | 0.524 |
| $\{1/2, 2/3\}$ | 3/4 | 0.942 | 0.974 | 0.934 |
| $\{1/2, 2/3\}$ | Rand(0.2, 0.8) | 0.801 | 0.880 | 0.664 |
| $\{1/2, 2/3, 3/4\}$ | 1/2 | 0.665 | 0.682 | 0.604 |
| $\{1/2, 2/3, 3/4\}$ | 2/3 | 0.653 | 0.664 | 0.564 |
| $\{1/2, 2/3, 3/4\}$ | 3/4 | 0.633 | 0.653 | 0.601 |
| $\{1/2, 2/3, 3/4\}$ | Rand(0.2, 0.8) | 0.785 | 0.832 | 0.642 |
| $\{1/2, 2/3, 3/4, 4/5\}$ | 1/2 | 0.701 | 0.712 | 0.615 |
| $\{1/2, 2/3, 3/4, 4/5\}$ | 2/3 | 0.684 | 0.701 | 0.583 |
| $\{1/2, 2/3, 3/4, 4/5\}$ | 3/4 | 0.681 | 0.693 | 0.613 |
| $\{1/2, 2/3, 3/4, 4/5\}$ | Rand(0.2, 0.8) | 0.742 | 0.811 | 0.623 |
| $\{1/2, 2/3, 3/4, 4/5, 5/6\}$ | 1/2 | 0.736 | 0.784 | 0.601 |
| $\{1/2, 2/3, 3/4, 4/5, 5/6\}$ | 2/3 | 0.723 | 0.763 | 0.612 |
| $\{1/2, 2/3, 3/4, 4/5, 5/6\}$ | 3/4 | 0.715 | 0.755 | 0.584 |
| $\{1/2, 2/3, 3/4, 4/5, 5/6\}$ | Rand(0.2, 0.8) | 0.734 | 0.801 | 0.620 |
| Rand(0.2, 0.8) | 1/2 | 0.880 | 0.881 | 0.824 |
| Rand(0.2, 0.8) | 2/3 | 0.922 | 0.972 | 0.831 |
| Rand(0.2, 0.8) | 3/4 | 0.952 | 0.968 | 0.894 |
| Rand(0.2, 0.8) | Rand(0.2, 0.8) | 0.873 | 0.875 | 0.799 |
