Achieving Super-Resolution with Redundant Sensing

Analog-to-digital (quantization) and digital-to-analog (de-quantization) conversion are fundamental operations of many information processing systems. In practice, the precision of these operations is always bounded, first by the random mismatch erro…

Authors: Diu Khue Luu, Anh Tuan Nguyen, Zhi Yang

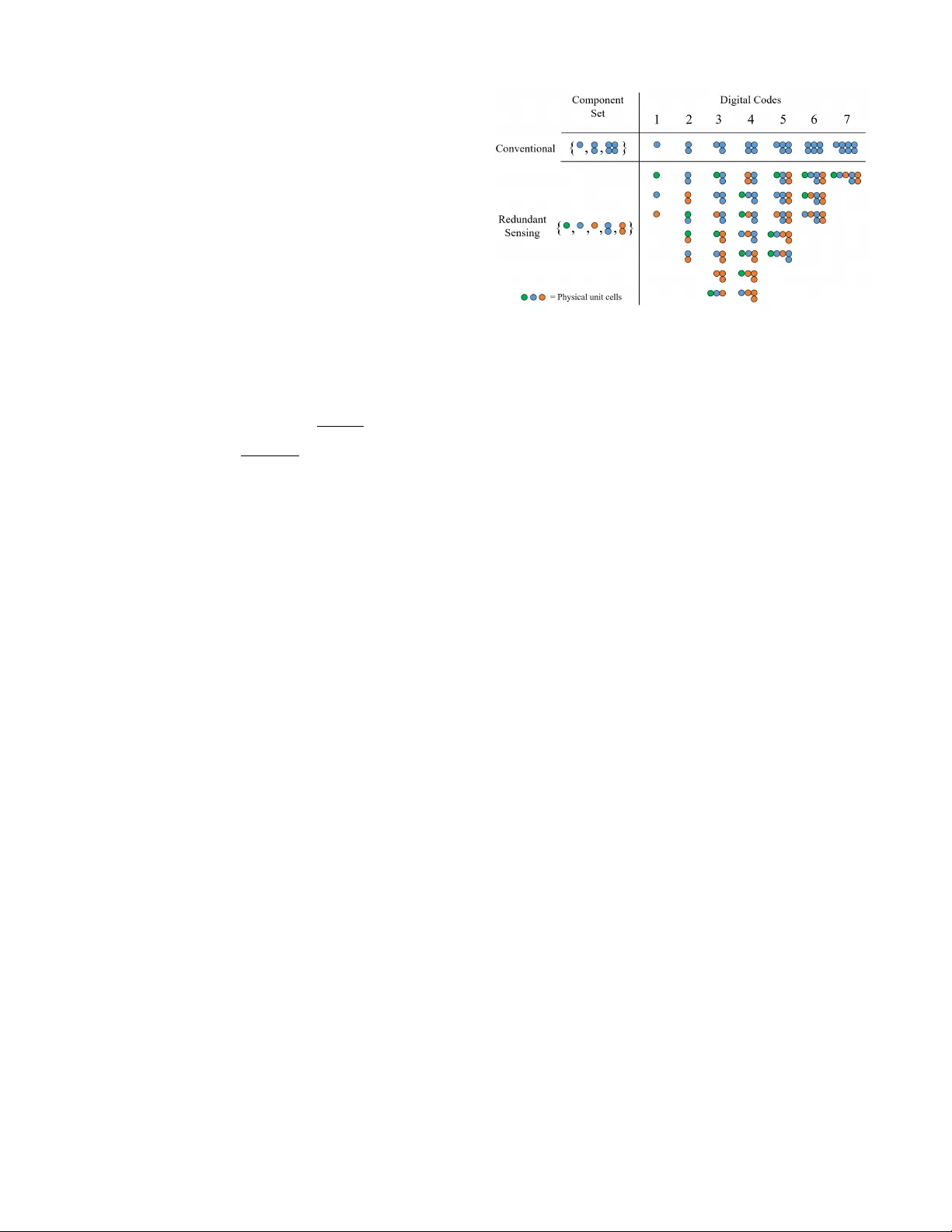

Diu Khue Luu∗, Anh Tuan Nguyen∗, Student Member, IEEE, and Zhi Yang¹, Member, IEEE

Abstract: Analog-to-digital (quantization) and digital-to-analog (de-quantization) conversion are fundamental operations of many information processing systems. In practice, the precision of these operations is always bounded, first by the random mismatch error (ME) that occurs during system implementation, and subsequently by the intrinsic quantization error (QE) determined by the system architecture itself. In this manuscript, we present a new mathematical interpretation of the previously proposed redundant sensing (RS) architecture that not only suppresses ME but also allows achieving an effective resolution exceeding the system's intrinsic resolution, i.e., super-resolution (SR). SR is enabled by an endogenous property of redundant structures, referred to as "code diffusion", whereby the references' values spread into the neighboring sample space as a result of ME. The proposed concept opens the possibility for a wide range of applications in low-power, fully integrated sensors and devices where the cost-accuracy trade-off is inevitable.

Index Terms: Super-resolution, redundant sensing, analog-to-digital converter, quantization, mismatch error.

I. INTRODUCTION

The process of quantization, i.e., analog-to-digital conversion (ADC), and the reverse operation of de-quantization, i.e., digital-to-analog conversion (DAC), are the basis of all modern sensory data acquisition systems. They allow "digital" artificial systems to sense and interact with the "analog" physical world. Quantization is essentially a lossy data compression process in which information from a higher-resolution space is represented in a lower-resolution counterpart. In practical implementations, the precision of this process is always bounded by system resource constraints such as size, power, bandwidth, and memory.
For example, in many integrated-circuit ADC and DAC designs, one additional bit of resolution (2x precision) often requires a 4x increase in chip area and power consumption [10], [18], [20]. While ultra-high-resolution ADCs/DACs of up to 32 bits are possible, their large size and power consumption limit their use in many practical applications. Similarly, a higher-resolution image sensor requires a larger pixel count and more buffer memory, and thus also results in a larger device and higher power consumption. While it is possible to increase the pixel density, a smaller pixel size is associated with increased noise, which limits the sensor's dynamic range [9], [17].

Super-resolution (SR) refers to techniques that aim at achieving an effective resolution exceeding the precision that the system's resource constraints commonly permit. They have wide applications in various fields of engineering and science concerning imaging and instrumentation, where higher-resolution data acquisition is always desired [3], [15]. Previous SR techniques focus on recovering fine details of the object of interest by integrating the information obtained from coarse observations. These techniques can be generally divided into two primary classes, modeling-based and oversampling-based, which are also known as single-frame and multi-frame in image processing. Modeling-based (single-frame) techniques such as [2], [4], [5], [11], [14] model the input sources from the available data points and reconstruct the missing information by means of approximation. On the other hand, oversampling-based (multi-frame) techniques such as [6], [12], [13], [19] acquire and combine multiple samples of the input obtained at various spatial or temporal instants to extract sub-least-significant-change information.

∗ Co-first authors. ¹ E-mail: yang5029@umn.edu. Department of Biomedical Engineering, University of Minnesota, Minneapolis, MN 55455, USA.
In this manuscript, we present a new approach based on redundant sensing (RS) [21], [23], [24] that requires neither modeling nor oversampling of the input signal. An RS structure is essentially a redundant system of information representation in which each outcome in the sample space can be generated by multiple distinct system configurations. In practice, these configurations are always affected by random mismatch error (ME), which is conventionally considered a "problem" that causes conversion error and degrades the system's overall precision. Here, however, we show that ME allows the actual values of the system's redundant configurations to "diffuse" into the neighboring sample space such that, given a sufficient level of ME, an RS structure has the information capacity to quantize data at an effective resolution beyond the conventional resource constraints.

Our SR technique is fundamentally different from previous approaches because it does not involve reconstructing missing information. Instead, the mechanism directly samples higher-precision data points, provided the quantizer's endogenous structure is correctly optimized; the fine-detailed information content of the input signal is thus never lost during quantization. This is achieved not only by the RS architecture itself but also by deliberately exploiting ME, which is an undesirable precision-limiting factor in conventional designs.

In the following sections, we explain the background and mechanisms that facilitate SR in an RS architecture. We use Monte Carlo simulations to demonstrate that an extra 8-9 bits of resolution, i.e., 256-512x precision, can be accomplished on top of a 10-bit quantizer over 95% of the sample space. Lastly, we discuss potential applications and practical considerations of the proposed technique in fully integrated, miniaturized biomedical devices, where the structure's complexity can be mitigated by approximation or conveniently circumvented.

II. SUPER-RESOLUTION

A. Quantization & Mismatch Error

Quantization¹ is a process of mapping a continuous set (analog) to a finite set of discrete values (digital). Without loss of generality, we can assume an $N_0$-bit quantizer divides the continuous interval $[0, 1)$ into $2^{N_0}$ partitions defined by a set of references $\theta_0 \le \theta_1 \le \dots \le \theta_{2^{N_0}}$, where each partition is mapped onto a digital code $d$ ranging from $0$ to $2^{N_0} - 1$:

$$x_A \in [\theta_d, \theta_{d+1}) \rightarrow x_D = d, \quad \forall d = 0, 1, \dots, 2^{N_0} - 1 \tag{1}$$

where $x_A$ is the analog input and $x_D$ is the digital output. The quantizer's effective resolution can be quantified by the Shannon entropy $H_{N_0}$ as follows:

$$M_{N_0} = \sum_{d=0}^{2^{N_0}-1} \int_{\theta_d}^{\theta_{d+1}} \left( x_A - \frac{d + 0.5}{2^{N_0}} \right)^2 dx_A, \qquad H_{N_0} = -\log_2 \sqrt{12 \cdot M_{N_0}} \tag{2}$$

where $M_{N_0}$ is the normalized total mean-square error integrated over each digital code. It can be shown that $H_{N_0} \le N_0$ for all values of the references $\theta_d$. Equality occurs only when the $2^{N_0}$ references are equally spaced, i.e., $\forall i, j: \theta_{i+1} - \theta_i = \theta_{j+1} - \theta_j$. This fundamental maximum value of entropy is referred to as the Shannon limit, where the device's resolution is bounded only by its intrinsic quantization error (QE).

In practice, the quantizer's precision is also affected by randomly occurring ME, which causes the references to deviate undesirably and degrades the entropy. For example, integrated-circuit ADC or DAC chips such as [21] generate their references from arrays of identical elementary components, referred to simply as unit cells. An $N_0$-bit device generally has $2^{N_0} - 1$ unit cells, which could be miniature capacitors, resistors, or transistors. The random mismatch of individual unit cells due to fabrication process variations and other non-ideal factors is one of the primary sources of ME and can significantly deteriorate the device's precision [1], [8], [16]. To control the unit cells effectively, they are always grouped into bundles, referred to simply as components.
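As a rough sketch of how Eq. (2) can be evaluated, assuming the outer boundaries are pinned at $\theta_0 = 0$ and $\theta_{2^{N_0}} = 1$ (our reading of the interval $[0, 1)$): the integral of a squared error over an interval has a closed form, so no numerical quadrature is needed. The function name `effective_resolution` and the 0.3-LSB perturbation are illustrative choices, not taken from the paper.

```python
import numpy as np


def effective_resolution(theta, n_bits):
    """Shannon entropy H_N of Eq. (2) for boundaries theta_0 .. theta_{2^N}.

    Code d covers [theta[d], theta[d+1]) and is ideally centred at (d + 0.5) / 2^N.
    """
    theta = np.asarray(theta, dtype=float)
    codes = 2 ** n_bits
    if theta.shape != (codes + 1,):
        raise ValueError("expected 2**n_bits + 1 boundaries")
    centre = (np.arange(codes) + 0.5) / codes
    lo = theta[:-1] - centre
    hi = theta[1:] - centre
    # Closed form: integral of (x - c)^2 from a to b = ((b - c)^3 - (a - c)^3) / 3
    m = np.sum((hi ** 3 - lo ** 3) / 3.0)
    return -np.log2(np.sqrt(12.0 * m))


N0 = 10
ideal = np.arange(2 ** N0 + 1) / 2 ** N0  # equally spaced references

# Hypothetical mismatch: jitter the inner boundaries, keep the end points at 0 and 1
rng = np.random.default_rng(0)
perturbed = ideal.copy()
perturbed[1:-1] += rng.normal(0.0, 0.3 / 2 ** N0, 2 ** N0 - 1)
perturbed = np.sort(perturbed)

print(effective_resolution(ideal, N0))      # close to 10.0, the Shannon limit
print(effective_resolution(perturbed, N0))  # strictly below 10
```

Each inner boundary enters $M_{N_0}$ through two adjacent integrals that balance exactly at the midpoint between neighbouring code centres, so moving any boundary off the uniform grid increases $M_{N_0}$; this is consistent with the statement that equality holds only for equally spaced references.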
Grouping significantly reduces the number of control signals required. For example, with the conventional binary-weighted method, $2^{N_0} - 1$ unit cells are arranged into $N_0$ components with nominal weights $\{2^0, 2^1, \dots, 2^{N_0-1}\}$. Such a system is orthogonal because, with $N_0$ binary control signals (i.e., 0/1 bits), the $2^{N_0}$ references corresponding to each digital code in $[0, 2^{N_0} - 1]$ can be uniquely created by selecting and assembling the components according to the binary numeral system.

B. Redundant Sensing

RS is a design framework that aims at engineering redundancy to enhance the system's performance in terms of accuracy and precision, rather than reliability and fault tolerance as in conventional designs [23]. A practical RS implementation must satisfy two criteria, namely representational redundancy (RPR) and entangled redundancy (ETR) [24].

¹ De-quantization is defined similarly and shares the same characteristics.

Fig. 1. Illustration of a simple 3-bit redundant sensing (RS) structure where representational redundancy (RPR) and entangled redundancy (ETR) can be achieved by utilizing a non-orthogonal grouping method without the need for replication. While using the same amount of physical resources (i.e., 7 unit cells), in the RS structure each digital code can be created by multiple distinct assemblies of components, each expressing a different, partially correlated distribution with respect to random ME.

RPR refers to a non-orthogonal scheme of information representation in which every outcome in the sample space is encoded by numerous distinct system configurations. Each configuration responds differently to ME, such that in any given instance there almost always exist one or more configurations with smaller errors than the conventional representation.

ETR refers to implementing the RS structure such that the statistical distributions of different system configurations are partially correlated (i.e., entangled), allowing a large degree of redundancy without incurring excessive resource overhead. ETR should be distinguished from the conventional replication-based method of realizing redundancy, where the degree of redundancy is linearly proportional to resource utilization.

Fig. 1 illustrates a simple example in which a 3-bit RS structure with both RPR and ETR properties is accomplished by utilizing a non-orthogonal grouping method without the need for replication. While using the same amount of physical resources (i.e., 7 unit cells), in the RS structure each digital code can be created by multiple distinct assemblies of components, each expressing a different, partially correlated distribution with respect to random ME. This redundant system of information representation has been shown to suppress ME by allowing a search for the optimal component assembly with the least error for each and every digital code [23]. In this work, we show that such a redundant mechanism can be exploited to realize an effective resolution beyond the conventional limit of $N_0$ bounded by QE.

C. Code Diffusion

Fig. 2. (a-c) Estimated probability density function (PDF) of all the references $\{\theta_0, \theta_1, \dots\}$ that can be generated by an example RS structure at various mismatch ratios $\sigma_m$ (0-10%) and (d-f) their respective zoomed-in views, which show the PDF's segments centered at each element of $\Theta_{N_0}$. With a sufficient mismatch ratio, the references "diffuse" evenly across the sample space, which allows approximating elements of the higher-resolution sets $\Theta_{N_1}, \Theta_{N_2}, \dots$ and facilitates SR.

Fig. 2 shows the estimated probability density function (PDF) of all the references $\{\theta_0, \theta_1, \dots\}$ that can be generated by an example RS structure at various mismatch ratios. The mismatch ratio $\sigma_m$ is defined as the standard deviation of each unit cell, which is assumed to follow a Gaussian distribution with unity mean.
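The mismatch model can be sketched as follows. This is a minimal illustration that assumes the unit cells inside a component are mutually independent, so a component made of $\bar{c}_i$ unit cells has mean $\bar{c}_i$ and standard deviation $\sigma_m \sqrt{\bar{c}_i}$; the text only specifies the per-unit-cell distribution, so the independence and the helper name `sample_component_weights` are our assumptions.

```python
import numpy as np


def sample_component_weights(nominal, sigma_m, rng):
    """Draw actual component weights c_i for nominal weights c̄_i.

    Each component is a bundle of c̄_i independent unit cells, each drawn from
    Normal(mean=1, std=sigma_m); the component weight is their sum.
    """
    return np.array([rng.normal(1.0, sigma_m, size=int(k)).sum() for k in nominal])


rng = np.random.default_rng(1)
bw = [1, 2, 4, 8, 16, 32, 64, 128, 256, 512]  # binary-weighted array, N0 = 10

print(sample_component_weights(bw, 0.0, rng))   # exactly the nominal weights
print(sample_component_weights(bw, 0.10, rng))  # sigma_m = 10%

# Empirical spread of the largest component (512 unit cells) vs. sigma_m * sqrt(512)
draws = [sample_component_weights([512], 0.10, rng)[0] for _ in range(2000)]
print(np.std(draws), 0.10 * np.sqrt(512))  # both roughly 2.26
```

Summing the unit cells explicitly keeps the sketch close to the physical picture; drawing each component directly from $\mathcal{N}(\bar{c}_i, \bar{c}_i \sigma_m^2)$ is equivalent under the independence assumption and much cheaper for large arrays.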
Monte Carlo simulations ($n = 1000$) are performed on a redundant structure similar to the one implemented in [21] with $N_0 = 10$. Clearly, in the absence of ME ($\sigma_m = 0$; Fig. 2a, d), regardless of how the unit cells are grouped and assembled, an array of $2^{N_0} - 1$ identical units can only generate a finite number of references belonging to the following discrete set of values:

$$\Theta_{N_0} = \left\{ \frac{0}{2^{N_0}}, \frac{1}{2^{N_0}}, \dots, \frac{2^{N_0} - 1}{2^{N_0}}, \frac{2^{N_0}}{2^{N_0}} \right\} \tag{3}$$

$\Theta_{N_0}$ is referred to as the intrinsic reference set (IRS) corresponding to an effective resolution $N_0$. The elements of $\Theta_{N_0}$ are marked by Dirac delta functions in Fig. 2a, d. As $\sigma_m$ assumes non-zero values (Fig. 2b, e), the PDF's segment centered at each element of $\Theta_{N_0}$ widens as the actual values generated by different component assemblies begin "diffusing" into the neighboring sample space. This property is unique to an RS structure because (i) there are numerous different component assemblies that can generate references with the same nominal value, i.e., RPR, and (ii) the distributions of these assemblies are partially independent with respect to random ME, i.e., ETR. Consequently, the spreading of the PDF occurs in every single trial of ME and is not merely an artifact of Monte Carlo sampling.

In an ordinary quantizer, code diffusion is undesirable because it makes the references deviate from $\Theta_{N_0}$, thus degrading the Shannon entropy given by Equation (2). In fact, our previous system in [23] was designed to reverse the diffusing process by searching for the assemblies that are closest to each element of $\Theta_{N_0}$. From another perspective, however, code diffusion implies that the same system could generate references within regions of the sample space that belong to the IRS of a higher resolution $N_k = N_0 + k$:

$$\Theta_{N_k} = \left\{ \frac{0}{2^{N_k}}, \frac{1}{2^{N_k}}, \dots, \frac{2^{N_k} - 1}{2^{N_k}}, \frac{2^{N_k}}{2^{N_k}} \right\} \tag{4}$$

where $\Theta_{N_0} \subset \Theta_{N_1} \subset \dots \subset \Theta_{N_k}$ $(k = 1, 2, \dots)$, as marked on the x-axis of Fig. 2d, e, f.
With a sufficient mismatch ratio (Fig. 2c, f), the references' PDF covers almost the entire sample space with relatively even density. Consequently, there is an adequate chance of finding a set of assemblies that closely approximates $\Theta_{N_k}$, which would allow sampling at an effective resolution $N_k$ beyond the system's intrinsic resolution $N_0$. Interestingly, ME, which is conventionally regarded as an undesirable non-ideal factor, is the crucial element that enables SR. Maximal effectiveness of SR is obtained only when the mismatch ratio reaches a certain level ($\sim 10\%$), which is considered excessively large in many ordinary applications.

Such a mechanism is only possible because, owing to redundancy, the number of distinct references that can be generated by an RS structure is significantly larger than the cardinality of both $\Theta_{N_0}$ and $\Theta_{N_k}$. In an orthogonal structure such as the binary system, the number of distinct references is strictly $2^{N_0}$, one per partition defined by $\Theta_{N_0}$, which is smaller than $|\Theta_{N_k}|$ for all $k \ge 1$. Furthermore, not only the number of different component assemblies but also the mutual correlation between them plays an important role. Ideally, we want the assemblies to spread evenly across the entire sample space to have the maximum chance of approximating $\Theta_{N_k}$. This characteristic is determined by the device's internal architecture, i.e., how the components are designed.

D. Grouping Method

The grouping method (GM) is the way unit cells are arranged into components. Almost all conventional designs can be categorized as binary-weighted (BW) structures, in which the quantization partitions are uniquely encoded according to the binary numeral system. In contrast, the proposed RS architecture employs a different strategy to realize redundancy with both RPR and ETR properties. There is no restriction on how the unit cells are grouped.
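The contrast drawn above between orthogonal and redundant structures can be checked by brute force on a small example. The sketch below assumes $N_0 = 6$, compares the binary-weighted array with the HS array of Eq. (7), and normalizes each subset sum by one plus the total weight as in Eq. (5); helper names, seed, and sizes are illustrative.

```python
import numpy as np


def all_references(weights):
    """All 2^n references sum(d_i * c_i) / (1 + sum(c_i)), one per subset."""
    c = np.asarray(weights, dtype=float)
    n = c.size
    bits = (np.arange(2 ** n)[:, None] >> np.arange(n)) & 1  # row k: bits of subset k
    return bits @ c / (1.0 + c.sum())


def perturb(nominal, sigma_m, rng):
    """Actual weights: a bundle of k unit cells ~ N(1, sigma_m^2) is N(k, k * sigma_m^2)."""
    nominal = np.asarray(nominal, dtype=float)
    return nominal + sigma_m * np.sqrt(nominal) * rng.standard_normal(nominal.size)


N0 = 6
bw = [2 ** j for j in range(N0)]                # orthogonal: 6 components
hs = [2 ** j for j in range(N0 - 1)] * 2 + [1]  # HS array of Eq. (7): 11 components
rng = np.random.default_rng(2)

counts = {}
for name, nominal in (("BW", bw), ("HS", hs)):
    for sigma_m in (0.0, 0.10):
        refs = all_references(perturb(nominal, sigma_m, rng))
        counts[name, sigma_m] = np.unique(refs).size
        print(name, sigma_m, counts[name, sigma_m])
```

Without mismatch both arrays are confined to the 64 values $d / 2^{N_0}$, $d = 0, \dots, 63$. With $\sigma_m = 10\%$ the binary-weighted array still produces exactly 64 references, while the HS array's $2^{11}$ subsets generally all land on distinct values, which is the raw material for code diffusion.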
While the GM does not alter the number of unit cells and thus has little effect on the resource constraints, it determines the system's endogenous architecture and greatly affects the references' number and distribution. The design of the GM differentiates one redundant structure from another. Assume a given GM assembles $2^{N_0} - 1$ unit cells into $n$ components with nominal weights $\bar{C} = \{\bar{c}_1, \bar{c}_2, \dots, \bar{c}_n\}$ and actual weights $C = \{c_1, c_2, \dots, c_n\}$ subject to random ME. Each subset of $C$, encoded by the binary string $\mathbf{d} = d_1 d_2 \dots d_n$ ($d_i \in \{0, 1\}$), generates a normalized reference $\theta_{\mathbf{d}}$ as follows:

$$\theta_{\mathbf{d}} = \frac{\sum_{i=1}^{n} d_i c_i}{1 + \sum_{i=1}^{n} c_i} \tag{5}$$

Let $\Phi$ be the set of all references that can be generated by the system. Achieving an effective resolution $N_k$ essentially amounts to searching for a subset $\hat{\Theta}_{N_k} \subset \Phi$ that closely approximates $\Theta_{N_k}$. Clearly, SR can only be accomplished in a redundant structure, where $|\Phi| > |\Theta_{N_k}|$, or $n > N_k$.

Fig. 3. A comparison of the reference distribution between the HS and UN grouping methods. The UN method yields a more uniform distribution across different regions of the sample space, especially at the two ends, which would translate to better SR potential.

The previously proposed RS architecture employed a class of GMs inspired by the binocular structure of the human visual system. They yield the nominal weights $\bar{C}_{RS} = \bar{C}_{RS,0} \cup \bar{C}_{RS,1}$ according to the following formula, with parameters $(s, N_0')$ satisfying $1 \le N_0' < N_0$ and $1 \le s \le N_0 - N_0'$:

$$\bar{C}_{RS,1} = \left\{ \bar{c}_{1,i} \mid \bar{c}_{1,i} = 2^{N_0 - N_0' + i - s} \right\}, \qquad \bar{C}_{RS,0} = \left\{ \bar{c}_{0,j} \mid \bar{c}_{0,j} = \begin{cases} 2^j, & \text{if } j < N_0 - N_0' \\ 2^j - \bar{c}_{1,\, j - N_0 + N_0'}, & \text{otherwise} \end{cases} \right\} \tag{6}$$

where $i \in [0, N_0' - 1]$ and $j \in [0, N_0 - 1]$. The special case of $\bar{C}_{RS}$ with $N_0' = N_0 - 1$ and $s = 1$, called the "half-split" (HS) array, has been demonstrated in [21].
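Eq. (6) can be turned into a small generator. In this sketch both the sub-array length and the exponent offset are taken as $N_0'$, the reading under which the HS special case ($N_0' = N_0 - 1$, $s = 1$) reproduces Eq. (7) and the 19-element $\bar{C}_{HS}$ listed for $N_0 = 10$ in Eq. (9). The function names are hypothetical.

```python
def rs_nominal_weights(n0, n0_prime, s):
    """Nominal weights C̄_RS = C̄_RS,0 ∪ C̄_RS,1 of Eq. (6), sorted ascending."""
    if not (1 <= n0_prime < n0 and 1 <= s <= n0 - n0_prime):
        raise ValueError("need 1 <= N0' < N0 and 1 <= s <= N0 - N0'")
    # Secondary sub-array C̄_RS,1: N0' power-of-two components
    c1 = [2 ** (n0 - n0_prime + i - s) for i in range(n0_prime)]
    # Base array C̄_RS,0: binary weights, with the upper ones reduced by their C̄_RS,1 partner
    c0 = [2 ** j if j < n0 - n0_prime else 2 ** j - c1[j - n0 + n0_prime]
          for j in range(n0)]
    return sorted(c0 + c1)


def hs_nominal_weights(n0):
    """Half-split array: the special case N0' = N0 - 1, s = 1."""
    return rs_nominal_weights(n0, n0 - 1, 1)


hs10 = hs_nominal_weights(10)
print(len(hs10), sum(hs10))         # 19 components, 1023 = 2^10 - 1 unit cells
print(hs10)
print(rs_nominal_weights(10, 5, 5))  # another member of the same family
```

Every member of the family uses exactly $2^{N_0} - 1$ unit cells, because each component subtracted from the base array reappears in $\bar{C}_{RS,1}$; only the grouping changes.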
It has the following nominal weights $\bar{C}_{HS} = \bar{C}_{HS,0} \cup \bar{C}_{HS,1}$, where:

$$\bar{C}_{HS,0} = \{2^0, 2^1, \dots, 2^{N_0-2}\} \cup \{2^0\}, \qquad \bar{C}_{HS,1} = \{2^0, 2^1, \dots, 2^{N_0-2}\} \tag{7}$$

Among the RS structures, the HS design has the largest number of components and thus the greatest degree of redundancy, while keeping the number of components at a reasonable $2N_0 - 1$. Also, the simplicity of the design allows it to be implemented in hardware with minimal complexity, as presented in [21].

The distribution of $\Phi_{HS}$ is shown in Fig. 3. While the HS method has a high level of redundancy, its distribution is not necessarily optimal for achieving SR. The references mostly concentrate in the middle region of the sample space, leaving the two ends inadequately covered and vulnerable to errors.

In this manuscript, we propose an enhanced GM that is specifically designed to support SR. It has a more uniform distribution of references to maximize coverage of the sample space. The "UNiform" (UN) method yields the following nominal weights $\bar{C}_{UN} = \bar{C}_{UN,0} \cup \bar{C}_{UN,1} \cup \dots \cup \bar{C}_{UN,\lfloor \log_2 N_0 \rfloor}$, where:

$$\bar{C}_{UN,i} = \left\{ \bar{c}_{i,j} \mid \bar{c}_{i,j} = 2^j \right\}, \qquad \bar{C}_{UN,0} = \left\{ \bar{c}_{0,l} \mid \bar{c}_{0,l} = \begin{cases} 2^l, & \text{if } l < N_0 - N_1 \\ 2^l - \sum_{m=1}^{\lfloor \log_2 N_0 \rfloor} 2^{\,l - N_0 + N_m}, & \text{otherwise} \end{cases} \right\} \tag{8}$$

where $N_i = \lceil N_{i-1}/2 \rceil$, $\forall i \in [1, \lfloor \log_2 N_0 \rfloor]$, $j \in [0, N_i - 1]$, and $l \in [0, N_0 - 1]$. The intuition behind the UN design is to divide the components of a binary-weighted array into numerous sub-arrays with different resolutions $N_1, N_2, \dots$ that decrease on a log scale. This maximizes the distribution of small and large components over the digital codes while keeping the total number of components at a reasonable value of $2N_0$, similar to the HS structure. All the remaining components form the base array $\bar{C}_{UN,0}$. For comparison, with $N_0 = 10$, the BW, HS, and UN methods yield the following nominal component sets:

$$\begin{aligned} \bar{C}_{BW} &= \{1, 2, 4, 8, 16, 32, 64, 128, 256, 512\} && (10 \text{ elements}) \\ \bar{C}_{HS} &= \{1, 1, 1, 2, 2, 4, 4, 8, 8, 16, 16, 32, 32, 64, 64, 128, 128, 256, 256\} && (19 \text{ elements}) \\ \bar{C}_{UN} &= \{1, 1, 1, 1, 2, 2, 2, 2, 4, 4, 4, 8, 8, 16, 16, 31, 62, 123, 245, 490\} && (20 \text{ elements}) \end{aligned} \tag{9}$$

Fig. 4. Achievable SR in HS and UN redundant structures: (a, b) mean ($\mu[H_{N_k}]$) and (c, d) standard deviation ($\sigma[H_{N_k}]$) of the entropy of an $N_0 = 10$-bit device at various targeted resolutions $N_k = N_0 + k$ and mismatch ratios $\sigma_m$. With a sufficient mismatch ratio, a 3-4-bit increase in effective resolution, or 8x-16x enhancement of precision, is obtained.

As shown in Fig. 3, the UN method gives a significantly "flatter" distribution of references, which would translate to more even code diffusion over different regions of the sample space. In the following sections, we show that this property helps suppress errors near the two ends of the sample space and results in more SR potential in general.

E. Beyond the Shannon Limit

SR in the context of this work should be understood as a resource-constraint problem. The precision of a sensor consisting of $2^{N_0} - 1$ unit cells was previously thought to be bounded by the Shannon limit of $N_0$ determined by QE. By arranging the unit cells in a specific manner to realize a redundant structure and exploiting the statistical properties of random ME, we aim to achieve an effective resolution beyond this conventional "limit".

The Shannon limit exists because the ordinary expression of entropy in Equation (2) is computed against a reference set of only $2^{N_0} + 1$ values $\{\theta_0, \dots, \theta_{2^{N_0}}\}$, which is the maximum number of distinct references a conventional binary-weighted array can generate. This limitation does not apply to a redundant architecture. An HS or UN structure has a reference set $\Phi_{HS}$ / $\Phi_{UN}$ with as many as $\sim 2^{2N_0}$ distinct elements.
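The UN rule of Eq. (8) and the resulting degree of representational redundancy can both be sketched in a few lines. Taken literally, Eq. (8) would subtract fractional terms $2^{l - N_0 + N_m}$ with negative exponents; the sketch assumes only terms with non-negative exponents are subtracted, which is the reading that reproduces the $\bar{C}_{UN}$ listed in Eq. (9). Counting, for each nominal code, how many component subsets sum to it (a standard subset-sum dynamic program) then shows the redundancy directly. The function names are hypothetical.

```python
import math


def un_nominal_weights(n0):
    """Nominal weights C̄_UN of Eq. (8), sorted ascending (requires n0 >= 2)."""
    levels = math.floor(math.log2(n0))
    sizes = [n0]                                # N_0, N_1, ..., N_levels
    for _ in range(levels):
        sizes.append(math.ceil(sizes[-1] / 2))  # N_i = ceil(N_{i-1} / 2)
    subarrays = [[2 ** j for j in range(sizes[i])] for i in range(1, levels + 1)]
    base = []
    for l in range(n0):
        if l < n0 - sizes[1]:
            base.append(2 ** l)
        else:
            # Assumption: sub-array m is subtracted only once its exponent is >= 0
            base.append(2 ** l - sum(2 ** (l - n0 + sizes[m])
                                     for m in range(1, levels + 1)
                                     if l - n0 + sizes[m] >= 0))
    return sorted(base + [c for sub in subarrays for c in sub])


def configurations_per_code(weights):
    """Number of component subsets whose nominal weights sum to each code."""
    counts = [1] + [0] * sum(weights)
    for w in weights:  # 0/1 subset-sum counting, iterating totals downwards
        for total in range(len(counts) - 1, w - 1, -1):
            counts[total] += counts[total - w]
    return counts


un10 = un_nominal_weights(10)
print(un10)  # compare with C̄_UN in Eq. (9)

bw10 = [2 ** j for j in range(10)]
print(max(configurations_per_code(bw10)))  # 1: each code has a unique configuration

per_code = configurations_per_code(un10)
print(min(per_code), max(per_code), sum(per_code))  # sum is 2^20 subsets
```

For the binary-weighted array every code has exactly one configuration, whereas the UN array spreads its $2^{20} = 2^{2N_0}$ subsets over the same 1024 nominal codes; these are the redundant configurations that code diffusion separates once mismatch is present.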
The key to achieving SR is to find a subset $\hat{\Theta}_{N_k}$ of $\Phi_{HS}$ / $\Phi_{UN}$ such that $\hat{\Theta}_{N_k}$ closely approximates the IRS $\Theta_{N_k}$ at resolution $N_k$. This can only be accomplished when random ME allows the elements of $\Phi_{HS}$ / $\Phi_{UN}$ to diffuse across the sample space. Hence, the concept of SR does not contradict the conventional Shannon limit; rather, it is a new interpretation of Shannon theory, beyond its ordinary understanding, that applies only to a practical redundant architecture.

Fig. 5. Root mean square error (RMSE) computed over the sample space ($N_0 = 10$, $\sigma_m = 10\%$). Note that the x-axis only shows the first and last 5% of the sample space. At high resolution, errors mostly occur at the two ends, where the level of redundancy is lower. This can result in significant degradation of the overall entropy. The UN method is designed to have a flatter code distribution, which helps shape the error toward the extreme ends.

The Shannon entropy in Equation (2) can be conveniently modified to represent the effective resolution at a targeted resolution $N_k$ by replacing $N_0 \leftarrow N_k$ and extending the scope of $\theta_d$ to include all the values in $\hat{\Theta}_{N_k}$. Fig. 4 shows the mean and standard deviation (STD) of the estimated entropy of an $N_0 = 10$-bit device, obtained from Monte Carlo simulations ($n = 1000$) at various targeted resolutions and mismatch ratios. The optimal set $\hat{\Theta}_{N_k}$ is found using exhaustive search.

As our analysis of code diffusion suggested, the best SR performance is obtained with a mismatch ratio above $\sim 10\%$. Both the HS and UN grouping methods offer a 3-4-bit increase in effective resolution, or 8x-16x enhancement of precision. The entropy's STD is less than 0.2 bit within the 10-50% mismatch ratio range, where the UN method has a marginally better outcome. These results suggest that the SR solution is consistent, which in practical applications will translate into good device yield under random error.
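A scaled-down version of this Monte Carlo estimate can be sketched as below. It assumes an HS array with $N_0 = 6$ (instead of 10, so that all $2^{11}$ references can be enumerated cheaply), a single target resolution $N_k = 8$, a nearest-reference lookup in the sorted set $\Phi$ in place of the exhaustive search, and the top boundary pinned at $\theta_{2^{N_k}} = 1$ because no reference reaches full scale. The trial count and seed are arbitrary, and the printed values are illustrative only; they are not the paper's results.

```python
import numpy as np


def all_references(c):
    """All 2^n normalised references sum(d_i * c_i) / (1 + sum(c_i)), sorted."""
    n = c.size
    bits = (np.arange(2 ** n)[:, None] >> np.arange(n)) & 1
    return np.sort(bits @ c / (1.0 + c.sum()))


def nearest(refs, targets):
    """For each target, the closest element of the sorted array refs."""
    idx = np.clip(np.searchsorted(refs, targets), 1, refs.size - 1)
    left, right = refs[idx - 1], refs[idx]
    return np.where(targets - left <= right - targets, left, right)


def entropy(theta, n_bits):
    """Eq. (2) with N0 <- N_k, for boundaries theta_0 .. theta_{2^N_k}."""
    codes = 2 ** n_bits
    centre = (np.arange(codes) + 0.5) / codes
    lo, hi = theta[:-1] - centre, theta[1:] - centre
    return -np.log2(np.sqrt(12.0 * np.sum((hi ** 3 - lo ** 3) / 3.0)))


def sr_entropy(nominal, sigma_m, n_k, rng):
    """One Monte Carlo trial: draw mismatch, pick Θ̂_{N_k} from Φ, evaluate H_{N_k}."""
    c = np.asarray(nominal, dtype=float)
    c = c + sigma_m * np.sqrt(c) * rng.standard_normal(c.size)   # unit-cell mismatch
    targets = np.arange(2 ** n_k) / 2 ** n_k                     # Θ_{N_k} without its end point
    theta = np.append(nearest(all_references(c), targets), 1.0)  # pin the top boundary at 1
    return entropy(theta, n_k)


N0, NK = 6, 8
hs = np.array([2 ** j for j in range(N0 - 1)] * 2 + [1])  # HS array of Eq. (7)
rng = np.random.default_rng(3)

h_ideal = sr_entropy(hs, 0.0, NK, rng)
h_mismatch = np.mean([sr_entropy(hs, 0.10, NK, rng) for _ in range(20)])
print(h_ideal, h_mismatch)
```

Without mismatch the references stay on the coarse $\Theta_{N_0}$ grid, so the estimate at $N_k = 8$ falls below $N_0$; with $\sigma_m = 10\%$ the diffused references fill in much of the finer grid and the estimate rises.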
Furthermore, the consistency of the mechanism implies that ME may not need to be truly "random". In certain applications, a 10% random deviation may seem unrealistic. Instead, the deviation can be intentionally added to the structure during the design process. Even if these artificial pseudo-random deviations carry a certain level of error, the consistency of the SR mechanism guarantees that a solution can always be found.

F. Reduced-Range Sampling

Fig. 5 shows the distribution of the root mean square error (RMSE) over the sample space, i.e., the value of $\sqrt{M_{N_k}(d)}$ at each digital code $d$ in Equation (2) before the summation. At high resolution, errors mostly occur at the two ends of the sample space. These are regions with a lower level of redundancy, as implied by the code distribution presented in Fig. 3. The UN technique is designed to spread the codes better than the HS design, thus helping mitigate part of the error by shaping it toward the extreme ends. However, because of the nature of the grouping, it is mathematically impossible to cover the entire sample space equally.

Nevertheless, we argue that many applications do not actually utilize the entire sample space equally, for numerous practical reasons. The majority of sensors are calibrated such that the signals to be captured fall within the middle of the sample space. Because most signals are not distributed uniformly across the sample space, "centering" the data minimizes the chance of the signal going beyond the sampling range and causing distortion and loss of information. If we simply ignore the two extreme ends, the proposed technique allows realizing a continuous sampling range centered at the middle of the sample space, where the overall effective resolution can be significantly enhanced. Let $\delta \in [0, 1]$ be the length of a continuous region centered at the middle of the sample space where data are captured.
This effectively reduces the full range and dynamic range of the device, which results in a lower Shannon limit:

$$\max(H_{N_k,\delta}) = \log_2(\delta \cdot 2^{N_k}) = N_k + \log_2 \delta \tag{10}$$

The normalized total mean-square error and entropy are now integrated only over a smaller range of digital codes:

$$M_{N_k,\delta} = \sum_{d = \lfloor \frac{1-\delta}{2} \cdot 2^{N_k} \rfloor}^{\lfloor (1 - \frac{1-\delta}{2}) \cdot 2^{N_k} \rfloor} \int_{\theta_d}^{\theta_{d+1}} \left( x_A - \frac{d + 0.5}{2^{N_k}} \right)^2 dx_A, \qquad H_{N_k,\delta} = -\log_2 \sqrt{12 \cdot M_{N_k,\delta}} \tag{11}$$

Fig. 6 shows the estimated entropy of the same system as in Fig. 4, but over $\delta = 95\%$ of the sample space. The UN method excels over the HS structure because it is specifically designed to minimize errors at the two ends. By sacrificing 5% of the sample space, a reasonable engineering trade-off, an increase of 8-9 bits of effective resolution, or 256x-512x enhancement of precision, is feasible with the UN structure.

Fig. 6. Achievable SR of the same HS and UN redundant structures as in Fig. 4 ($N_0 = 10$, $N_k = N_0 + k$) but over $\delta = 95\%$ of the sample space. By sacrificing 5% of the sample space, a reasonable engineering trade-off, an increase of 8-9 bits of effective resolution, or 256x-512x enhancement of precision, is feasible with the UN structure.

III. PRACTICAL CONSIDERATIONS & APPLICATIONS

In practice, the greatest challenge in utilizing the proposed SR, as with any RS architecture, is to determine the correct configuration of the system among numerous redundant possibilities. In the context of this work, achieving SR at $N_k$ requires solving the following optimization problem:

Problem: For all $\theta_i \in \Theta_{N_k}$, find a subset of $C = \{c_1, c_2, \dots, c_n\}$ that generates a reference $\hat{\theta}_i$ minimizing the error $|\theta_i - \hat{\theta}_i|$.

This is essentially a version of the 0-1 knapsack problem, which has been shown to be NP-hard [7]. Because $\Theta_{N_0} \subset \Theta_{N_1} \subset \dots \subset \Theta_{N_k}$, achieving SR at any targeted resolution $N_k$ is as hard as the non-SR case of $N_0$, given that the actual weights of all the components are known.
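For small structures, the Problem can simply be solved by enumerating all $2^n$ subsets, which also makes the exponential cost visible. The sketch below assumes a mismatched HS array with $N_0 = 6$ and $N_k = 8$, returns the chosen subset for every code as an $n$-bit integer, and compares the worst-case error over the full range with that over a central region of width $\delta = 0.95$ bounded as in Eq. (11). The function name and parameter values are hypothetical.

```python
import numpy as np


def solve_by_enumeration(c, n_k):
    """Brute-force answer to the Problem for every target i / 2^n_k.

    Returns the chosen subset per code (bit i selects component i) and the
    absolute error in units of the N_k-bit LSB. Cost grows as 2^n.
    """
    n = c.size
    subsets = np.arange(2 ** n)
    bits = (subsets[:, None] >> np.arange(n)) & 1
    refs = bits @ c / (1.0 + c.sum())
    order = np.argsort(refs)
    refs, subsets = refs[order], subsets[order]
    targets = np.arange(2 ** n_k) / 2 ** n_k
    idx = np.clip(np.searchsorted(refs, targets), 1, refs.size - 1)
    pick = np.where(targets - refs[idx - 1] <= refs[idx] - targets, idx - 1, idx)
    return subsets[pick], np.abs(refs[pick] - targets) * 2 ** n_k


N0, NK, DELTA = 6, 8, 0.95
hs = np.array([2 ** j for j in range(N0 - 1)] * 2 + [1], dtype=float)  # Eq. (7)
rng = np.random.default_rng(4)
c = hs + 0.10 * np.sqrt(hs) * rng.standard_normal(hs.size)  # sigma_m = 10%

config, err = solve_by_enumeration(c, NK)
lo = int((1 - DELTA) / 2 * 2 ** NK)       # first code kept, as in Eq. (11)
hi = int((1 - (1 - DELTA) / 2) * 2 ** NK)  # last code kept
print("worst error, full range (LSB):", err.max())
print("worst error, central delta (LSB):", err[lo:hi + 1].max())
print("configuration for code 128:", format(int(config[128]), f"0{hs.size}b"))
```

Bit $i$ of each configuration word selects component $i$, so the printed string reads from the last component (left) to the first (right). Enumeration is only a reference point: at $n = 20$ it already means about a million candidate references per calibration.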
However, this does not necessarily negate the practicality of the proposed technique. The practical solution to this seemingly intractable problem may be specific to each application. In [21], we have shown an implementation of a high-precision ADC in integrated circuits in which an RS structure was utilized. The optimization problem was successfully overcome by employing a heuristic-based approximation algorithm, derived to compute the near-optimal system configuration on the fly given the input signal and the estimated weights of all components. The proposed algorithm was sufficiently simple to be implemented on-chip with adequate accuracy and a time complexity of merely $O(1)$. This example suggests that approximation would be a viable approach for implementing SR in practice. Realizing SR for ADCs would enable an enormous boost in performance in various high-precision imaging and instrumentation systems, as the ADC is one of the core components of many sensors and devices.

SR would also find many uses in DAC devices. For example, an implantable neurostimulator such as [22] requires a DAC to generate its internal reference current. A higher-resolution DAC is always desirable, as it gives more precise control of the stimulation current, which could imply better modulation of neural circuits. Fig. 7a shows the functional blocks of the neurostimulator and the schematic of its current DAC, where each unit cell is a MOS transistor. Although mostly time-invariant, transistor mismatch is particularly complex because it depends not only on the device's physical size ($W/L$) but also on operating conditions such as biasing voltage, loading current, and parasitics.

Fig. 7. (a) A neurostimulator such as [22] requires a high-resolution DAC to generate its internal reference current. (b) Monte Carlo simulations at the schematic level using the transistor's statistical model (both process and variation) show that an average of 12-bit effective resolution, or a gain of 4 bits of extra precision ($N_0 = 8$ bits, $\delta = 95\%$), can be achieved by solely exploiting the natural mismatch of the transistors.

As a proof-of-concept demonstration, we design and simulate an SR DAC in the GlobalFoundries BCDLite 0.18 µm process using 30 V transistors with minimum feature size ($W/L = 4.0/0.5$ µm). The DAC architecture employs the UN grouping and has an intrinsic resolution of $N_0 = 8$ bits. Monte Carlo simulations ($n = 16$) at the schematic level are performed using the transistor's statistical model (both process and variation) provided by the foundry, without any added pseudo-random mismatch. The model should account for the majority of the mismatch, except for the parasitic resistance of metal connections in the layout. Fig. 7b shows the simulation results, where an average of 12-bit effective resolution, or a gain of 4 bits of extra precision at $\delta = 95\%$, can be achieved by solely exploiting the natural mismatch of the transistors. The results provide a concrete example in which the proposed SR mechanism can be utilized to greatly enhance the performance of a high-precision device.

Moreover, unlike the ADC example, the neurostimulator's operations are always governed by an external controller during normal operation. The controller regularly communicates with the neurostimulator to update its parameters and trigger its function when needed. Consequently, the optimal system setting for every DAC output can simply be determined upfront via foreground calibration and saved in an external memory, which the controller can access at any instant. This effectively circumvents the computationally hard problem by converting it into a memory-hard problem, which could be more easily handled in certain circumstances.
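The foreground-calibration route can be sketched as a lookup table that is built once from measured component weights and then only read at run time. This sketch assumes the measured weights are available (here they are simulated), uses the HS weights of Eq. (7) with $N_0 = 8$ for simplicity (the DAC described above uses UN grouping), targets $N_k = 12$, and stores one $n$-bit configuration word per output code; all names are hypothetical.

```python
import numpy as np


def build_calibration_lut(c_measured, n_k):
    """Foreground calibration: one n-bit configuration word per output code.

    Each entry is the component subset whose reference
    sum(d_i * c_i) / (1 + sum(c_i)) is closest to code / 2^n_k.
    """
    n = c_measured.size
    subsets = np.arange(2 ** n)
    bits = (subsets[:, None] >> np.arange(n)) & 1
    refs = bits @ c_measured / (1.0 + c_measured.sum())
    order = np.argsort(refs)
    refs, subsets = refs[order], subsets[order]
    targets = np.arange(2 ** n_k) / 2 ** n_k
    idx = np.clip(np.searchsorted(refs, targets), 1, refs.size - 1)
    pick = np.where(targets - refs[idx - 1] <= refs[idx] - targets, idx - 1, idx)
    return subsets[pick]


def lut_size_bits(n_k, n_components):
    """Storage for one configuration word per code: 2^n_k * n bits."""
    return 2 ** n_k * n_components


N0, NK = 8, 12
hs = np.array([2 ** j for j in range(N0 - 1)] * 2 + [1], dtype=float)  # Eq. (7): 15 components
rng = np.random.default_rng(5)
c_measured = hs + 0.10 * np.sqrt(hs) * rng.standard_normal(hs.size)  # simulated calibration data
lut = build_calibration_lut(c_measured, NK)                          # done once, stored externally


def dac_output(code):
    """Run time: read the stored word and sum the selected components (no search)."""
    word = int(lut[code])
    selected = sum(c_measured[i] for i in range(c_measured.size) if (word >> i) & 1)
    return selected / (1.0 + c_measured.sum())


print(len(lut), lut_size_bits(NK, hs.size))  # 4096 entries, 61440 bits
print(dac_output(2048))                      # close to 0.5
print(lut_size_bits(16, 20) / 8, "bytes")    # 16-bit target with 20 components
```

Moving the search into calibration leaves only a memory read per output code at run time, which is the computation-for-memory trade described above.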
To put the memory cost in perspective, if a target SR of 16 bits is to be achieved with 20 components, storing all the optimal configurations would require 2^16 × 20 = 1,310,720 ≈ 1.3 · 10^6 bits, or roughly 163 KB of memory per DAC, a trivial amount for an external flash memory.

IV. CONCLUSION

This work presents a new interpretation of the RS architecture that allows quantization and de-quantization processes to achieve an effective resolution many times beyond what their resource constraints commonly permit. Using Monte Carlo simulations, we show that SR is feasible by exploiting a statistical property called "code diffusion" that is unique to redundant structures in the presence of random ME. Applying the proposed technique to a 10-bit device, we demonstrate a theoretical increase of 8-9 bits in effective resolution, i.e., a 256-512× enhancement in precision, at δ = 95% of the sample space. We also point out the challenges of utilizing the proposed mechanism in practice, but argue that they can be overcome by means of approximation or avoided under certain conditions. We envision that the proposed technique will find wide application in various fields of imaging and data acquisition instrumentation, especially fully-integrated sensors and devices where higher resolution is always desired.

ACKNOWLEDGMENTS

This work was sponsored by the Defense Advanced Research Projects Agency (DARPA), Biological Technologies Office (BTO), under contract No. HR0011-17-2-0060, as well as by internal funding from the University of Minnesota.
