Fast, Robust and Non-convex Subspace Recovery

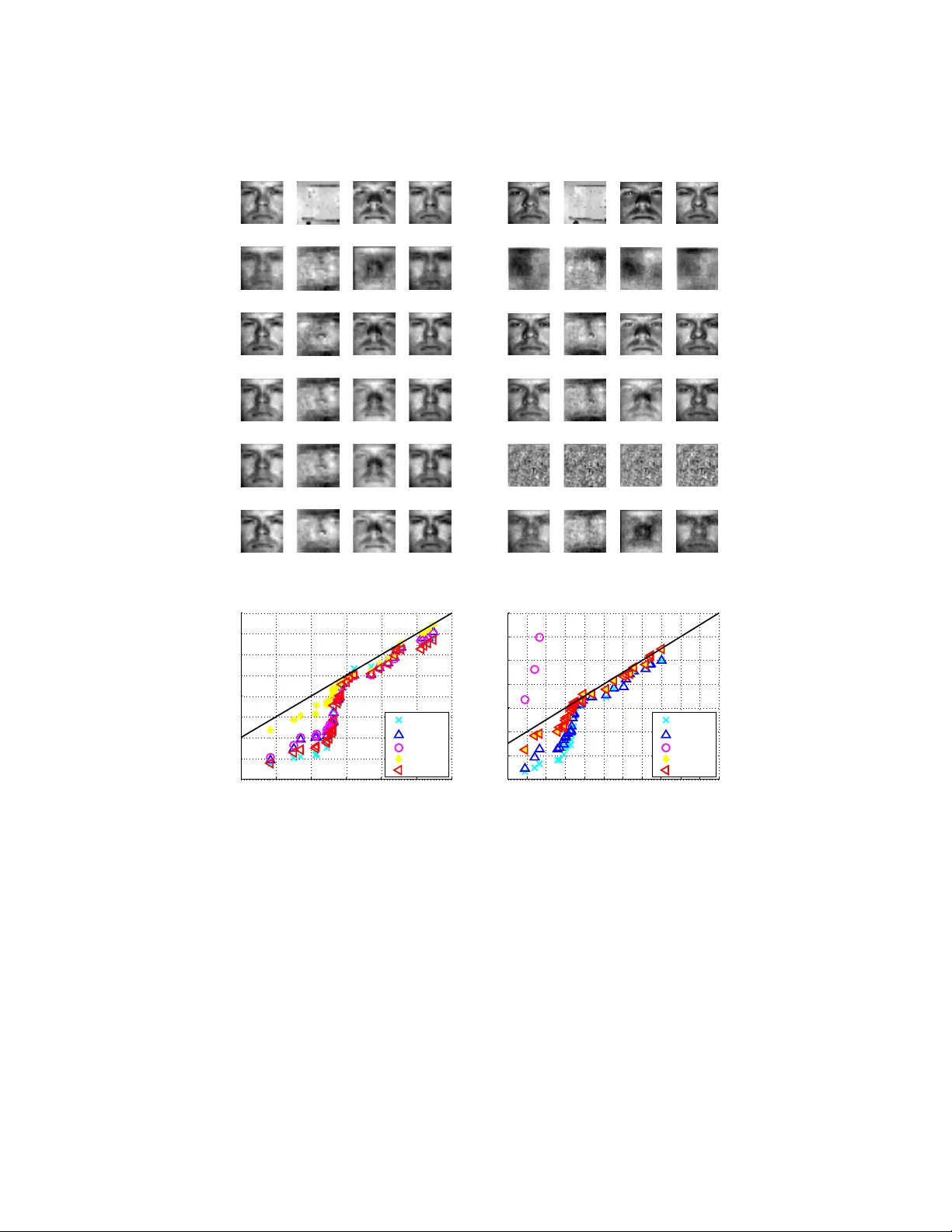

This work presents a fast, non-convex algorithm for robust subspace recovery. The data sets considered include inliers drawn around a low-dimensional subspace of a higher-dimensional ambient space, and a possibly large portion of outliers that do …

Authors: Gilad Lerman, Tyler Maunu