End-to-end Models with auditory attention in Multi-channel Keyword Spotting

In this paper, we propose an attention-based end-to-end model for multi-channel keyword spotting (KWS), which is trained to optimize the KWS result directly. As a result, our model outperforms the baseline model with signal pre-processing techniques …

Authors: Haitong Zhang, Junbo Zhang, Yujun Wang

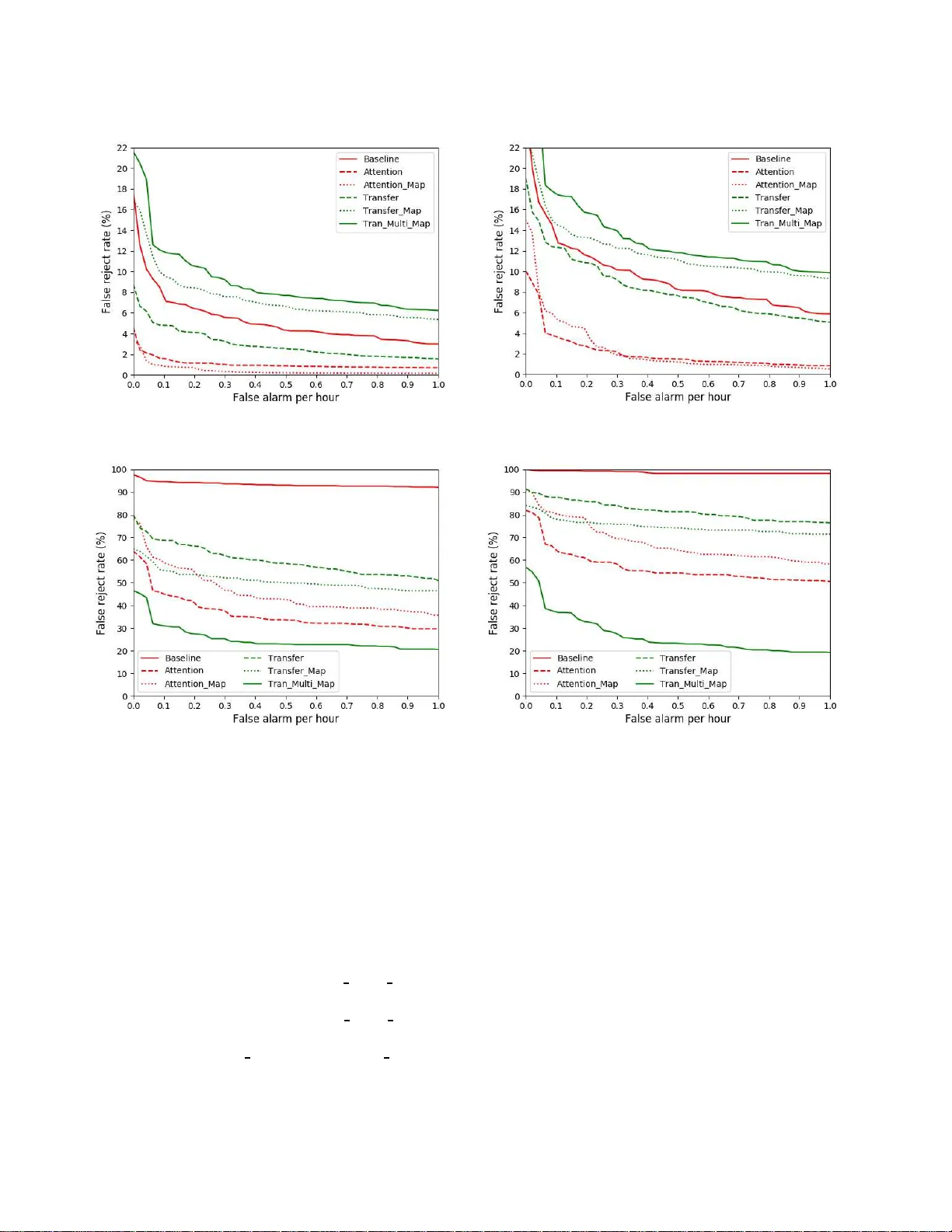

Haitong Zhang, Junbo Zhang, Yujun Wang
Xiaomi Inc., Beijing, China
{zhanghaitong, zhangjunbo, wangyujun}@xiaomi.com

ABSTRACT

In this paper, we propose an attention-based end-to-end model for multi-channel keyword spotting (KWS), which is trained to optimize the KWS result directly. As a result, our model outperforms the baseline model with signal pre-processing techniques on both the clean and noisy testing data. We also found that multi-task learning results in better performance when the training and testing data are similar. Transfer learning and multi-target spectral mapping can dramatically enhance the robustness to the noisy environment. At 0.1 false alarm (FA) per hour, the model with transfer learning and multi-target mapping gains an absolute 30% improvement in the wake-up rate on the noisy data with SNR about -20.

Index Terms: attention-based, end-to-end, multi-channel keyword spotting, single/multi-target spectral mapping, transfer learning

1. INTRODUCTION

Keyword spotting is the task of detecting a pre-defined keyword in a continuous stream of speech. KWS has recently drawn increasing attention since it is used to detect the wake-up word on mobile devices. In this case, the KWS model should satisfy the requirements of high accuracy, low latency, and small footprint.

Methods based on large vocabulary continuous speech recognition (LVCSR) systems are used to process the audio offline [1]. They generate rich lattices and search for the keyword. Due to their high latency, these methods are not suitable for mobile devices. Another competitive technique for KWS is the keyword/filler Hidden Markov Model (HMM) [2]. HMMs are trained separately for the keyword and non-keyword segments. At runtime, a Viterbi search is needed to find the keyword, which can be computationally expensive due to the HMM topology.
With the success of neural networks (NNs) in automatic speech recognition (ASR), both keyword and non-keyword audio segments are used to train a single NN-based acoustic model. For example, in the Deep KWS model proposed in [3], a single DNN outputs the posterior probabilities of the sub-segments of the keyword, and the KWS decision is made based on a confidence score obtained by posterior smoothing. To improve the performance, more powerful neural networks such as convolutional neural networks (CNNs) [4] and recurrent neural networks (RNNs) [5] are used in place of the DNN. Inspired by these models, some end-to-end models have been proposed that directly output the probability of the whole keyword instead of sub-words, without any search method or posterior handling [6–8].

Although tremendous improvement has been made, previous models mainly focus on single-channel KWS. In industry, microphone arrays are commonly used for more complicated situations. Thus some signal processing techniques must be applied to convert the multi-channel signal into a single channel. However, these pre-processing techniques are sub-optimal because they are not optimized towards the final goal of interest [9].

There is extensive literature on learning useful representations for multi-channel input in speech recognition. For example, [10] concatenates the multi-channel signals into the network input. In [11], a CNN is used to implicitly explore the spatial relationship between multiple channels. Attention-based methods have also been proposed to model auditory attention in multi-channel speech recognition [12].

Inspired by the application of the attention mechanism in ASR [12], we propose an attention-based end-to-end model for multi-channel KWS. Compared with [12], our attention mechanism is computationally cheaper, which makes it more suitable for the KWS task.
Transfer learning and multi-target spectral mapping are incorporated in the model to achieve a better result on the noisy evaluation data.

We describe the proposed model in Section 2. The experimental data, setup, and results follow in Section 3. Section 4 closes with the conclusion.

Fig. 1: The proposed model architecture. The multi-channel inputs x_1, ..., x_T pass through the attention mechanism to give x'_1, ..., x'_T; the encoder maps these to h_1, ..., h_T, from which the outputs y_1, ..., y_T are produced.

2. THE PROPOSED MODEL

As illustrated in Fig. 1, the model mainly consists of three components: (i) the attention mechanism, (ii) the sequence-to-sequence training, and (iii) the decoding smoothing.

2.1. Attention Mechanism

The attention mechanism we use is soft attention, as proposed in [13]. For each time step, we compute a 6-dimensional attention weight vector $A_t^{ch}$ as follows:

$$A_t^{ch} = \mathrm{softmax}\left(V^{T} \tanh\left(W x_t^{ch} + b\right)\right) \tag{1}$$

$$x'_t = \sum_{c=1}^{ch} A_t^{c}\, x_t^{c} \tag{2}$$

where $x_t^{ch}$ is a 6 × 40 input feature matrix, $W$ is a 40 × 128 weight matrix, $b$ is a 128-dimensional bias vector, and $V$ is a 128-dimensional vector. A softmax function is applied for normalization. $x'_t$ is the weighted sum of the multi-channel inputs $x_t^{ch}$, with $A_t^{c}$ the weight of channel $c$.

2.2. Sequence to sequence training

The training framework we use is sequence-to-sequence. The encoder learns the higher-level representations h for the enhanced speech features x'. With a linear transformation and a softmax function, the probability of the whole keyword can be predicted at each frame.

Fig. 2: Two situations for recording the noisy testing data in a 4 m × 4 m × 3.5 m laboratory, where N denotes noise and R denotes the recording device. (a) The first situation for recording. (b) The second situation for recording.

Multi-task Learning. To improve the performance, we take spectral mapping as an auxiliary task for our KWS model.
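The soft attention of Section 2.1 (Eqs. 1 and 2) can be sketched in plain Python as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: the function name `soft_channel_attention`, the randomly drawn parameters, and the reading of W as a 40 × 128 matrix applied to each channel's 40-dimensional feature vector are all assumptions made for the example.

```python
import math
import random


def soft_channel_attention(x, W, b, v):
    """Collapse one frame of multi-channel features into a single vector.

    x: list of C channel vectors, each of length D   (C=6, D=40 in the paper)
    W: D x H weight matrix as a list of rows          (H=128 in the paper)
    b: bias vector of length H
    v: scoring vector of length H
    Returns (weights, x_prime).
    """
    hidden_size = len(b)
    scores = []
    for channel in x:
        # Hidden projection tanh(W x + b) for this channel.
        hidden = [
            math.tanh(sum(channel[d] * W[d][h] for d in range(len(channel))) + b[h])
            for h in range(hidden_size)
        ]
        # Scalar score for the channel: inner product with v.
        scores.append(sum(v[h] * hidden[h] for h in range(hidden_size)))

    # Eq. (1): softmax over channels (max subtracted for numerical stability).
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Eq. (2): weighted sum of the channel feature vectors.
    dim = len(x[0])
    x_prime = [sum(weights[c] * x[c][d] for c in range(len(x))) for d in range(dim)]
    return weights, x_prime


# Random parameters stand in for trained ones (assumption, illustration only).
rng = random.Random(0)
C, D, H = 6, 40, 128
x_t = [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(C)]
W = [[rng.gauss(0.0, 0.1) for _ in range(H)] for _ in range(D)]
b = [0.0] * H
v = [rng.gauss(0.0, 0.1) for _ in range(H)]

weights, x_prime = soft_channel_attention(x_t, W, b, v)
print(len(weights), len(x_prime))
```

Because the weights come from a softmax over the six channels, they are positive and sum to one, so each enhanced frame is a convex combination of the channel features. Only one small projection and one dot product per channel are needed per frame.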
The model learns the nonlinear mapping between the multi-channel speech features and the single-channel speech features, which is inspired by [14]. The target speech features come from traditional signal processing techniques. Although we argue against separating the front-end signal processing from the acoustic model, we conjecture that the multi-task framework can improve the performance. The loss function is:

$$\mathrm{Loss}_{total} = \alpha \cdot \mathrm{Loss}_{KWS} + (1 - \alpha)\, \mathrm{Loss}_{Map\_clean} \tag{3}$$

Transfer Learning. We also adopt transfer learning to improve the performance in the noisy environment. Transfer learning [15] refers to initializing the model parameters with the corresponding parameters of a trained model. Here we initialize the network using the proposed multi-channel KWS model trained with the relatively clean data, and fine-tune the model with only noisy data.

Multi-target Mapping. Since it is difficult to train the model with only noisy data, we propose multi-target spectral mapping. We conjecture that with more mapping targets, the spectral mapping can converge better than when it learns only the nonlinear relationship between the noisiest input and the cleanest output. Compared with the spectral mapping mentioned above, two extra mapping targets are involved in training (detailed in sub-section 3.1). The loss function is as follows:

$$\mathrm{Loss}_{total} = \alpha \cdot \mathrm{Loss}_{KWS} + \beta \cdot \mathrm{Loss}_{Map\_clean} + \theta \cdot \mathrm{Loss}_{Map\_noise1} + \delta \cdot \mathrm{Loss}_{Map\_noise2} \tag{4}$$

with the constraint that $\alpha + \beta + \theta + \delta = 1$.

2.3. Decoding

When decoding, our model takes a 6 × 40 feature matrix as input and outputs the keyword spotting probability at each frame. We adopt a posterior probability smoothing method, and the final decision is made based on the average probability over n frames.

3. EXPERIMENTS

3.1. Datasets

The training data consists of 240k utterances of the keyword (120k with echo and 120k without echo) and 200 hours of negative examples, with 10% of them used for validation. The evaluation data includes 50 hours of filler data and 48k keyword utterances (50% echo keywords and 50% non-echo ones). We also recorded 1k noisy keywords as Fig. 2 illustrates. They consist of two equal parts, recorded in two situations. The first half (referred to as hard-noisy data, with an average SNR of about -20) is recorded as shown in Fig. 2a, where the recording device is close to the music noise and the speaker is 3 meters away from the device. The other half (referred to as easy-noisy data, with an average SNR of about -18) is recorded as Fig. 2b shows. The distance between the device and the speaker remains unchanged, but the distance between the device and the music source is 1 meter.

Besides that, we also recorded 50 hours of music for the multi-target mapping experiment. In the experiment, we randomly add music to the 120k non-echo keywords and 200 hours of negative examples to generate the noisy training data. Algorithm 1 shows the procedure for creating multiple mapping targets.

3.2. Experiment setups

In the baseline single-channel KWS model, the front-end component mainly includes beamforming and acoustic echo cancellation (AEC). These two blocks are constructed as proposed in [16] and [17], respectively.

The input feature in all the experiments is the trainable PCEN [18]. The 40-dimensional filter-bank features are extracted using a window of 25 ms with a shift of 10 ms. The encoder in the experiments consists of two GRU (Gated Recurrent Unit) layers [19] and one fully-connected (FC) layer. Both the GRU and FC layers have 128 units, with a 0.9 dropout rate. In the multi-task models, the two tasks use two separate FC layers.
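The noisy training data of Section 3.1 is made by adding music to speech at a chosen SNR, which Algorithm 1 does at about -10, +5, and +10. A minimal sketch of that mixing step is given here, assuming the SNR values are in dB (the text gives no unit) and working on single-channel signals for brevity. The helper names `power`, `mix_at_snr`, and `measured_snr_db` and the synthetic signals are hypothetical and do not come from the paper.

```python
import math
import random


def power(signal):
    """Mean squared amplitude of a signal."""
    return sum(s * s for s in signal) / len(signal)


def mix_at_snr(speech, noise, snr_db):
    """Add `noise` to `speech`, scaled so the mixture has the requested SNR in dB."""
    # Required noise power: P_speech / 10^(SNR / 10).
    target_noise_power = power(speech) / (10.0 ** (snr_db / 10.0))
    gain = math.sqrt(target_noise_power / power(noise))
    return [s + gain * n for s, n in zip(speech, noise)]


def measured_snr_db(speech, mixture):
    """SNR in dB of a mixture, given the clean speech it was built from."""
    residual = [m - s for s, m in zip(speech, mixture)]
    return 10.0 * math.log10(power(speech) / power(residual))


# Synthetic stand-ins for one keyword recording and one music clip (assumption).
rng = random.Random(0)
speech = [math.sin(0.05 * i) for i in range(16000)]
music = [rng.uniform(-1.0, 1.0) for _ in range(16000)]

# One mixture per SNR level named in Algorithm 1.
mixtures = {snr: mix_at_snr(speech, music, snr) for snr in (-10, 5, 10)}
for snr, mixture in mixtures.items():
    print(snr, round(measured_snr_db(speech, mixture), 3))
```

Scaling the noise rather than the speech keeps the keyword at its recorded level, so the same clean signal can serve as the cleanest mapping target while the noisier mixtures supply the other targets.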
The Adam optimizer [20] is used to update the training parameters, with a batch size of 64 and an initial learning rate of 0.001. The values of α, β, θ, and δ in the spectral mapping are 0.5, 0.2, 0.2, and 0.1, respectively, since they perform reasonably well on the development data.

Algorithm 1 Procedure for creating noisy training data in the multi-target spectral mapping
1: for each multi-channel wav a in all wavs do
2:   select one music clip b randomly
3:   add b into a, with SNR about -10 ⊲ Input
4:   convert a into single-channel c ⊲ Target 1
5:   convert b into single-channel d
6:   add d into c, with SNR about +5 ⊲ Target 2
7:   add d into c, with SNR about +10 ⊲ Target 3
8:   return Input, Target 1, Target 2, Target 3

3.3. Impact of Attention mechanism

We first evaluate the performance of the baseline model and the proposed multi-channel models. The baseline model uses the signal processing techniques in sub-section 3.2. The input of the signal processing techniques is seven channels, while the input of the proposed model (i.e. Attention) is only six channels, without the reference signal for AEC.

Attention outperforms the baseline model on all the evaluation data sets. At 0.5 false alarm (FA) per hour, Attention gains an absolute improvement of 4% and 7% on the non-echo and echo data, respectively (Fig. 3a and Fig. 3b). The difference in performance becomes larger on the noisy data (Fig. 3c and Fig. 3d). The performance improvements are 40% and 60% on the hard-noisy and easy-noisy data, respectively. This large difference may be largely attributed to the fact that the signal processing techniques are not robust to the noisy environment, especially when the noise is close to the wake-up device.

3.4. Impact of multi-task learning

As indicated in Fig. 3a and Fig. 3b, the proposed model with spectral mapping (i.e. Mapping) outperforms Attention slightly, which to some degree confirms our conjecture. However, the result gets worse on the noisy data (Fig. 3c and Fig. 3d). This discrepancy lies in the difference between the training data and the noisy testing data.

3.5. Impact of transfer learning and multi-target mapping

To increase the noise robustness of the model, we initialize the model with the parameters of Attention and fine-tune the model with the artificial noisy training data (detailed in sub-section 3.1). As shown in Fig. 3a and Fig. 3b, all the models with transfer learning (i.e. Transfer, Transfer map, and Tran Multi Map) perform worse than Attention on both the non-echo and echo testing data. The reason lies in the difference between the training data and the testing data. However, the main target of transfer learning and multi-target mapping is the noisy data. As illustrated in Fig. 3c and Fig. 3d, transfer learning and single-target spectral mapping do not yield a better result than Attention, which confirms the difficulty of training the model with only noisy data. However, the model with transfer learning and multi-target mapping (i.e. Tran Multi Map) outperforms all the other models by a large margin on the noisy data. At 0.5 false alarm per hour, Tran Multi Map gains an absolute improvement of 30% and 10% over Attention on the hard-noisy and easy-noisy data, respectively.

Fig. 3: The results of the baseline model and the proposed models, with the smoothing frame n = 12. (a) ROC for the Non-echo data. (b) ROC for the Echo data. (c) ROC for the Easy-noisy data. (d) ROC for the Hard-noisy data.

4. CONCLUSIONS

Without the reference signal for AEC, the proposed attention-based model for multi-channel KWS outperforms the baseline model on all the testing data. With spectral mapping, the performance gains a slight improvement when the training data and testing data are similar.
In addition, transfer learning and multi-target spectral mapping can enhance the model's robustness to the noisy environment, which sheds light on NN-based speech enhancement in ASR.

5. ACKNOWLEDGEMENT

The authors would like to thank the colleagues from the acoustic group for useful discussions.

6. REFERENCES

[1] David RH Miller, Michael Kleber, Chia-Lin Kao, Owen Kimball, Thomas Colthurst, Stephen A Lowe, Richard M Schwartz, and Herbert Gish. Rapid and accurate spoken term detection. In Eighth Annual Conference of the International Speech Communication Association, 2007.
[2] Richard C Rose and Douglas B Paul. A hidden markov model based keyword recognition system. In Acoustics, Speech, and Signal Processing, 1990. ICASSP-90., 1990 International Conference on, pages 129–132. IEEE, 1990.
[3] Guoguo Chen, Carolina Parada, and Georg Heigold. Small-footprint keyword spotting using deep neural networks. In Acoustics, Speech and Signal Processing (ICASSP), 2014 IEEE International Conference on, pages 4087–4091. IEEE, 2014.
[4] Tara N Sainath and Carolina Parada. Convolutional neural networks for small-footprint keyword spotting. In Sixteenth Annual Conference of the International Speech Communication Association, 2015.
[5] Ming Sun, Anirudh Raju, George Tucker, Sankaran Panchapagesan, Gengshen Fu, Arindam Mandal, Spyros Matsoukas, Nikko Strom, and Shiv Vitaladevuni. Max-pooling loss training of long short-term memory networks for small-footprint keyword spotting. In Spoken Language Technology Workshop (SLT), 2016 IEEE, pages 474–480. IEEE, 2016.
[6] Ye Bai, Jiangyan Yi, Hao Ni, Zhengqi Wen, Bin Liu, Ya Li, and Jianhua Tao. End-to-end keywords spotting based on connectionist temporal classification for mandarin. In Chinese Spoken Language Processing (ISCSLP), 2016 10th International Symposium on, pages 1–5. IEEE, 2016.
[7] Sercan O Arik, Markus Kliegl, Rewon Child, Joel Hestness, Andrew Gibiansky, Chris Fougner, Ryan Prenger, and Adam Coates. Convolutional recurrent neural networks for small-footprint keyword spotting. arXiv preprint arXiv:1703.05390, 2017.
[8] Changhao Shan, Junbo Zhang, Yujun Wang, and Lei Xie. Attention-based end-to-end models for small-footprint keyword spotting. arXiv preprint arXiv:1803.10916, 2018.
[9] Michael L Seltzer. Bridging the gap: Towards a unified framework for hands-free speech recognition using microphone arrays. In Hands-Free Speech Communication and Microphone Arrays, 2008. HSCMA 2008, pages 104–107. IEEE, 2008.
[10] Yulan Liu, Pengyuan Zhang, and Thomas Hain. Using neural network front-ends on far field multiple microphones based speech recognition. In Acoustics, Speech and Signal Processing (ICASSP), 2014 IEEE International Conference on, pages 5542–5546. IEEE, 2014.
[11] Steve Renals and Pawel Swietojanski. Neural networks for distant speech recognition. In Hands-free Speech Communication and Microphone Arrays (HSCMA), 2014 4th Joint Workshop on, pages 172–176. IEEE, 2014.
[12] Suyoun Kim and Ian Lane. Recurrent models for auditory attention in multi-microphone distance speech recognition. arXiv preprint arXiv:1511.06407, 2015.
[13] FA Chowdhury, Quan Wang, Ignacio Lopez Moreno, and Li Wan. Attention-based models for text-dependent speaker verification. arXiv preprint arXiv:1710.10470, 2017.
[14] Zhuo Chen, Shinji Watanabe, Hakan Erdogan, and John R Hershey. Speech enhancement and recognition using multi-task learning of long short-term memory recurrent neural networks. In Sixteenth Annual Conference of the International Speech Communication Association, 2015.
[15] Sinno Jialin Pan, Qiang Yang, et al. A survey on transfer learning. IEEE Transactions on knowledge and data engineering, 22(10):1345–1359, 2010.
[16] François Grondin, Dominic Létourneau, François Ferland, Vincent Rousseau, and François Michaud. The manyears open framework. Autonomous Robots, 34(3):217–232, 2013.
[17] Jean-Marc Valin. On adjusting the learning rate in frequency domain echo cancellation with double-talk. IEEE Transactions on Audio, Speech, and Language Processing, 15(3):1030–1034, 2007.
[18] Yuxuan Wang, Pascal Getreuer, Thad Hughes, Richard F Lyon, and Rif A Saurous. Trainable frontend for robust and far-field keyword spotting. In 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 5670–5674. IEEE, 2017.
[19] Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, and Yoshua Bengio. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv preprint arXiv:1412.3555, 2014.
[20] Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.
