Intrinsic Isometric Manifold Learning with Application to Localization

Data living on manifolds commonly appear in many applications. Often this results from an inherently latent low-dimensional system being observed through higher dimensional measurements. We show that under certain conditions, it is possible to constr…

Authors: Ariel Schwartz, Ronen Talmon

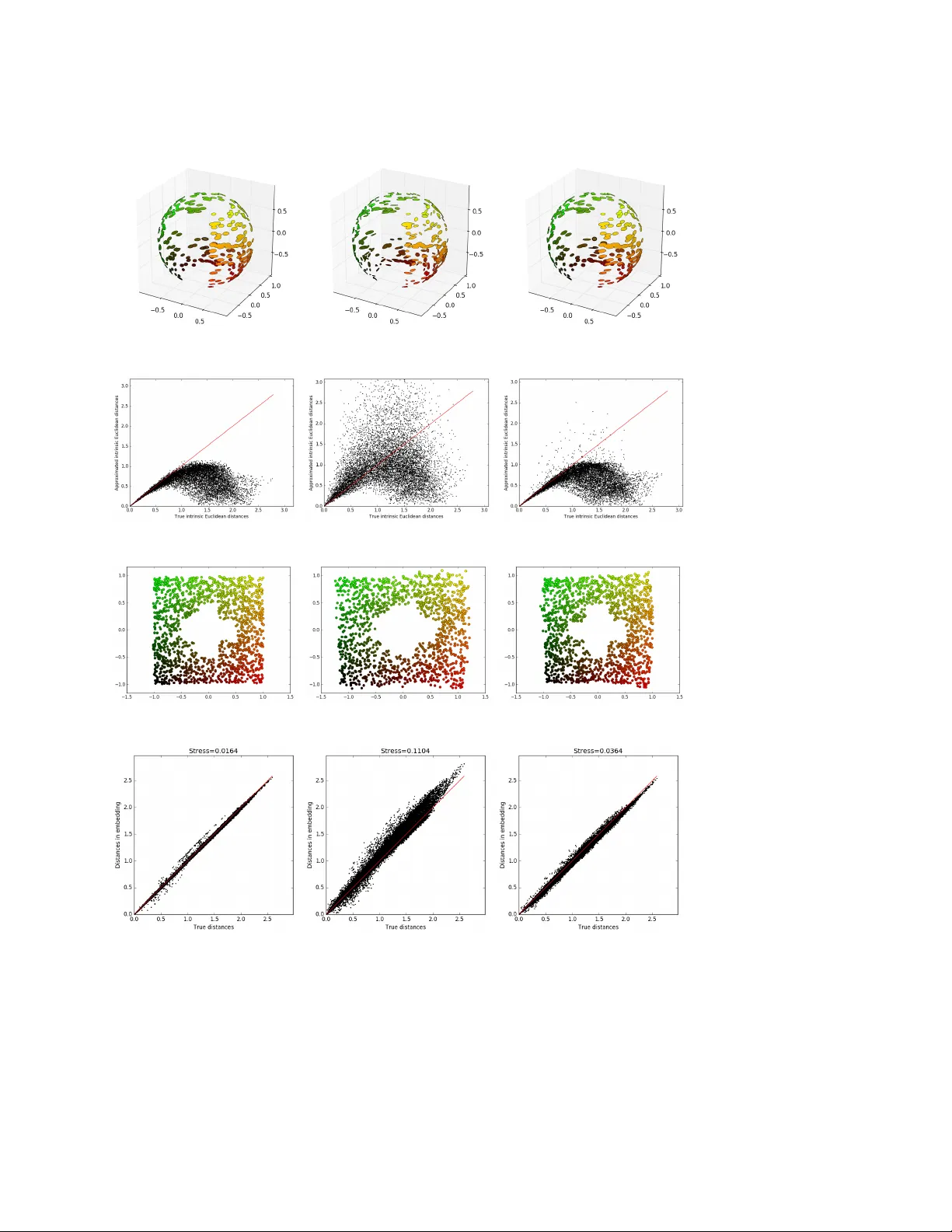

INTRINSIC ISOMETRIC MANIFOLD LEARNING WITH APPLICATION TO LOCALIZATION∗

ARIEL SCHWARTZ† AND RONEN TALMON‡

Abstract. Data living on manifolds commonly appear in many applications. Often this results from an inherently latent low-dimensional system being observed through higher dimensional measurements. We show that under certain conditions, it is possible to construct an intrinsic and isometric data representation, which respects an underlying latent intrinsic geometry. Namely, we view the observed data only as a proxy and learn the structure of a latent unobserved intrinsic manifold, whereas common practice is to learn the manifold of the observed data. For this purpose, we build a new metric and propose a method for its robust estimation by assuming mild statistical priors and by using artificial neural networks as a mechanism for metric regularization and parametrization. We show successful application to unsupervised indoor localization in ad-hoc sensor networks. Specifically, we show that our proposed method facilitates accurate localization of a moving agent from imaging data it collects. Importantly, our method is applied in the same way to two different imaging modalities, thereby demonstrating its intrinsic and modality-invariant capabilities.

Key words. manifold learning, intrinsic, isometric, metric estimation, inverse problem, sensor invariance, positioning

AMS subject classifications. 57R40, 57M50, 65J22, 57R50

1. Introduction. Making measurements is an integral part of everyday life, and particularly, exploration and learning. However, we are usually not interested in the values of the acquired measurements themselves, but rather in understanding the latent system which drives these measurements and might not be directly observable.
For example, when considering a radar system, we are not interested in the pattern of the electromagnetic wave received and measured by the antenna, but rather in the location, size and velocity of the object captured by the wave reflection pattern. This example highlights the difference between “observed” properties, which can be directly measured, and “intrinsic” properties corresponding to the latent driving system. The importance of this distinction becomes central when the same latent system is observed and represented in multiple forms; the different measurements and observation modalities may have different observed properties, yet they share certain common intrinsic properties and structures, since they are all generated by the same driving system.

Different observation modalities are not all equally suitable for examining the underlying driving system. It is often the case that systems which are governed by a small number of variables (and are hence inherently low-dimensional in nature) are observed via redundant, complex, high-dimensional measurements, obscuring the true low-dimensional and much simpler nature of the latent system of interest. As a result, when trying to tackle signal processing and machine learning tasks, a considerable amount of effort is initially put into choosing or building an appropriate representation of the observed data.

Much research in the fields of machine learning and data mining has been devoted to methods for learning ways to represent information in an unsupervised fashion, directly from observed data, aiming to lower the dimensionality of the observations, simplify them and uncover properties and structures of their latent driving system [4]. This field of research has recently gained considerable attention due to the ability to acquire, store and share large amounts of information, leading to the availability of large scale data sets. Such large data sets are often generated by systems which are not well understood and for which tailoring data representations based on prior knowledge is impossible.

∗ Submitted to the editors DATE.
† Viterbi Faculty of Electrical Engineering, Technion – Israel Institute of Technology, Israel (ariels@technion.campus.ac.il)
‡ Viterbi Faculty of Electrical Engineering, Technion – Israel Institute of Technology, Israel (ronen@ee.technion.ac.il)

A particular subclass of representation learning methods is manifold learning [4, 42, 12, 3, 35, 48, 31]. In manifold learning it is assumed that observed data lie near a low-dimensional manifold embedded in a higher-dimensional ambient observation space. Typically the goal then is to embed the data in a low-dimensional Euclidean space while preserving some of their properties. Most manifold learning methods operate directly on the observed data and rely on their geometric properties [42, 4, 12, 15, 23, 31, 32, 37, 44]. This observed geometry, however, can be significantly influenced by the observation modality, which is often arbitrary and without a clear known connection to the latent system of interest. Consequently, preserving observed properties, which might be irrelevant, is suboptimal; instead, one should seek to rely on intrinsic properties of the data, which are inherent to the latent system of interest and invariant to the observation modality.

Intrinsic geometry can be especially beneficial when it adheres to some useful structure, such as that of a vector space. Indeed, there exist many systems whose dynamics and state space geometry can be simply described within an ambient Euclidean space. Unfortunately, when such systems are observed via an unknown non-linear observation function, this structure is typically lost.
In such cases, it is advantageous to not only circumvent the influence of the observation function but also to explicitly preserve the global intrinsic geometry of the latent system.

We claim that in many situations, methods which are both intrinsic and globally isometric are better suited for manifold learning. In this paper, we propose a dimensionality reduction method which robustly estimates the push-forward metric of an unknown observation function using a parametric estimation implemented by an Artificial Neural Network (ANN). The estimated metric is then used to calculate intrinsic-isometric geometric properties of the latent system state space directly from observed data. Our method uses these geometric properties as constraints for embedding the observed data into a low-dimensional Euclidean space. The proposed method is thus able to uncover the underlying geometric structure of a latent system from its observations without explicit knowledge of the observation model.

The structure of this paper is as follows. In section 2 we present the general problem of localization based on measurements acquired using an unknown observation model. This problem provides motivation for developing an intrinsic-isometric manifold learning method. In section 3 we formulate the problem mathematically. In section 4 we present our dimensionality reduction method, which makes use of the push-forward metric as an intrinsic metric. In section 5, we propose a robust method for estimating this intrinsic metric directly from the observed data using an ANN. In section 6 we present the results of our proposed algorithm on synthetic data sets.
In addition, we revisit the localization problem described in section 2 in the specific case of image data and show that the proposed intrinsic-isometric manifold learning approach indeed allows for sensor-invariant positioning and mapping in realistic conditions using highly non-linear observation modalities. In section 7 we conclude the work, discuss some key issues and outline possible future research directions.

2. The localization problem and motivation. Our motivating example is that of mapping a 2-dimensional region and positioning an agent within that region based on measurements it acquires. We denote the set of points belonging to the region by $\mathcal{X} \subseteq \mathbb{R}^2$ and the location of the agent by $x \in \mathcal{X}$ (as illustrated in Figure 1). At each position the agent makes measurements $y = f(x)$, which are functions of its location. The values of the measurements are observable to us; however, we cannot directly observe the shape of $\mathcal{X}$ or the location of the agent $x$, and therefore they represent a latent system of interest.

Fig. 1: Agent in a 2-dimensional space

Fig. 2: Creation of the observed manifold

We simultaneously consider two distinct possible measurement models as described in Figure 3. The first measurement modality, described in Figure 3a, is conceptually similar to measuring Received Signal Strength (RSS) values at $x$ from antennas transmitting from different locations. Such measurements typically decay as the agent moves further away from the signal origin. The second possible measurement model, visualized in Figure 3b, consists of measurements which are more complex and do not have an obvious interpretable connection to the location of the agent.
Although both of these measurement modalities are 3-dimensional, the set of observable measurements resides on a 2-dimensional manifold embedded in the 3-dimensional ambient observation space, as visualized in Figure 2. This is due to the inherent 2-dimensional nature of the latent system. The respective observation functions, $f$ and $\tilde{f}$, of these measurement modalities are both injective on $\mathcal{X}$, i.e., no two locations within $\mathcal{X}$ correspond to the same set of measurement values. Therefore, one can ask whether the location of the agent $x$ can be inferred given the corresponding measurement values. If the measurement model represented by the observation function is known, this becomes a standard problem of model-based localization, which is a specific case of non-linear inverse problems [20].

Fig. 3: Two different observation function modalities. (a) First measurement modality: a “free-space” signal strength decay model. The antenna symbol represents the location from which the signal originates. (b) Second measurement modality, of arbitrary and unknown nature. In both cases the variation in color represents different measurement values.

However, if the observation function is unknown or depends on many unknown parameters, recovering the location from the measurements becomes much more challenging. The latter case represents many real-life scenarios. For example, acoustic and electromagnetic wave propagation models are complex and depend on many a priori unknown factors such as room geometry, locations of walls, and the reflection, transmission and absorption properties of materials. Image data can also serve as a possible indirect measurement of position. We note that although the image acquisition model is well known, the actual image acquired also depends on the geometry and look of the surrounding environment.
If the surrounding environment is not known a priori, then this observation modality also falls into the category of observation via an unknown model. In this work, we will consider the case of observation via an unknown model, and address the following question: is it possible to retrieve $x$ from $f(x)$ without knowing $f$?

Given that the described problem involves the recovery of a low-dimensional structure from an observable manifold embedded in a higher-dimensional ambient space, we are inclined to use a manifold learning approach. However, standard manifold learning algorithms do not yield satisfactory results, as can be seen in Figure 4. While some of the methods provide an interpretable low-dimensional parametrization of the latent space by preserving the basic topology of the manifold, none of the tested methods recover the true location of the agent or the structure of the region it moves in.

Fig. 4: Manifold learning for positioning and mapping based on observed measurements. The first row corresponds to the application of manifold learning methods to the manifold created via the observation function visualized in Figure 3a. The second row corresponds to the application of manifold learning methods to the manifold created via the observation function visualized in Figure 3b. The bottom row represents the application of manifold learning methods directly to the latent manifold.

This problem demonstrates the inadequacy of existing manifold learning algorithms for uncovering the intrinsic geometric structure of a latent system of interest from its observations. We attribute this inadequacy to two main factors: the lack of intrinsicness and the lack of geometry preservation or isometry. Existing manifold learning algorithms generate new representations of data, while preserving certain observed properties.
When data originates from a latent system measured via an observation function, the observed measurements are affected by the specific (and often arbitrary) observation function, which in turn affects the learned low-dimensional representation. As a consequence, the same latent system observed via different observation modalities is represented differently when manifold learning methods are applied (as can be seen in Figure 4). This fails to capture the intrinsic characteristics of the different observed manifolds, which originate from the same latent low-dimensional system. Settings involving different or unknown measurement modalities necessitate manifold learning algorithms which are intrinsic, i.e., whose learned representation is independent of the observation function and invariant to the way in which the latent system is measured.

Intrinsicness by itself is not enough in order to retrieve the latent geometric structure of the data. As can be seen in Figure 4, even when the same manifold learning methods are applied directly to the low-dimensional latent space, thus avoiding any observation function, preservation of the geometric structure of the latent low-dimensional space is not guaranteed. In order to explicitly preserve the geometric structure of the latent manifold, the learned representation needs to also be isometric, i.e., distance preserving.

In this paper, we present a manifold learning method which is isometric with respect to the latent intrinsic geometric structure. We will show that, under certain assumptions, our method allows for the retrieval of $x$ and $\mathcal{X}$ from observed data without requiring explicit knowledge of the specific observation model, thus solving a “blind inverse problem”.
In the context of the motivating positioning problem, we achieve sensor-invariant localization, enabling the same positioning algorithm to be applied to problems from a broad range of signal domains with multiple types of sensors.

3. Problem formulation. Let $\mathcal{X}$ be a path-connected $n$-dimensional manifold embedded in $\mathbb{R}^n$. We refer to $\mathcal{X}$ as the intrinsic manifold; it represents the set of all the possible latent states of a low-dimensional system. These states are only indirectly observable via an observation function $f : \mathcal{X} \to \mathbb{R}^m$, which is a continuously differentiable injective function that maps the latent manifold into the observation space $\mathbb{R}^m$. The image of $f$ is denoted by $\mathcal{Y} = f(\mathcal{X})$ and is referred to as the observed manifold. Since $f$ is injective we have that $n \le m$.

Here, we focus on a discrete setting, and define two finite sets of sample points from $\mathcal{X}$ and $\mathcal{Y}$. Let $\mathcal{X}_s = \{x_i\}_{i=1}^{N}$ be the set of $N$ intrinsic points sampled from $\mathcal{X}$, and let $\mathcal{Y}_s = f(\mathcal{X}_s)$ be the corresponding set of observations of $\mathcal{X}_s$.

Under the above setting and given access only to $\mathcal{Y}_s$, we wish to generate a new embedding $\widetilde{\mathcal{X}}_s = \{\tilde{x}_i\}_{i=1}^{N}$ of the observed points $\mathcal{Y}_s = \{y_i\}_{i=1}^{N}$ into $n$-dimensional Euclidean space while respecting the geometric structure of the latent sample set $\mathcal{X}_s$.

In order to provide a quantitative measure for “intrinsic structure preservation”, we utilize the stress function commonly used for Multidimensional Scaling (MDS) [8, 14, 11]. This function penalizes the discrepancies between the pairwise distances in the constructed embedding and an “ideal” target distance or dissimilarity. In our case the target distances are the true pairwise distances in the intrinsic space. This results in the following cost function:

(1) $\sigma(\widetilde{\mathcal{X}}_s) = \sum_{i \ldots}$ …
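
The stress-based cost described above can be illustrated with a short sketch. Equation (1) is cut off in this text, so the code below assumes the standard raw MDS stress, $\sum_{i<j} \left( \|\tilde{x}_i - \tilde{x}_j\| - \|x_i - x_j\| \right)^2$, which matches the verbal description but may differ from the authors' exact form (for example in weighting or normalization). The function name `pairwise_stress`, the helper `rigid_motion`, and the toy point sets are hypothetical and serve only as an illustration. In the actual problem the latent points are not available; they are used here only to show what the cost measures.

```python
import math
from itertools import combinations


def pairwise_stress(embedded, intrinsic):
    """Raw MDS-style stress (assumed form, not taken from the paper):
    sum over i<j of (||e_i - e_j|| - ||x_i - x_j||)^2."""
    if len(embedded) != len(intrinsic):
        raise ValueError("both point sets must have the same number of points")
    total = 0.0
    for i, j in combinations(range(len(intrinsic)), 2):
        d_embedded = math.dist(embedded[i], embedded[j])
        d_intrinsic = math.dist(intrinsic[i], intrinsic[j])
        total += (d_embedded - d_intrinsic) ** 2
    return total


def rigid_motion(point, angle=0.7, shift=(3.0, -1.0)):
    """Rotate a 2-D point by `angle` radians, then translate it by `shift`."""
    c, s = math.cos(angle), math.sin(angle)
    return (c * point[0] - s * point[1] + shift[0],
            s * point[0] + c * point[1] + shift[1])


# Hypothetical latent agent positions in a 2-dimensional region.
latent = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 2.0)]

# An embedding that differs from the latent points only by a rigid motion
# preserves all pairwise distances, so its stress is zero up to rounding.
rotated = [rigid_motion(p) for p in latent]

# An embedding that stretches one axis distorts distances and is penalized.
stretched = [(2.0 * x, y) for (x, y) in latent]

stress_rigid = pairwise_stress(rotated, latent)
stress_stretched = pairwise_stress(stretched, latent)
print(f"rigid motion: {stress_rigid:.3e}, stretched: {stress_stretched:.3f}")
```

Because the stress depends only on pairwise distances, an embedding can match the latent geometry at best up to a rigid transformation (rotation, reflection and translation), which is why the rotated copy above incurs essentially no cost.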
