Symbol Error Rate Performance of Box-relaxation Decoders in Massive MIMO

The maximum-likelihood (ML) decoder for symbol detection in large multiple-input multiple-output wireless communication systems is typically computationally prohibitive. In this paper, we study a popular and practical alternative, namely the Box-rela…

Authors: Christos Thrampoulidis, Weiyu Xu, Babak Hassibi

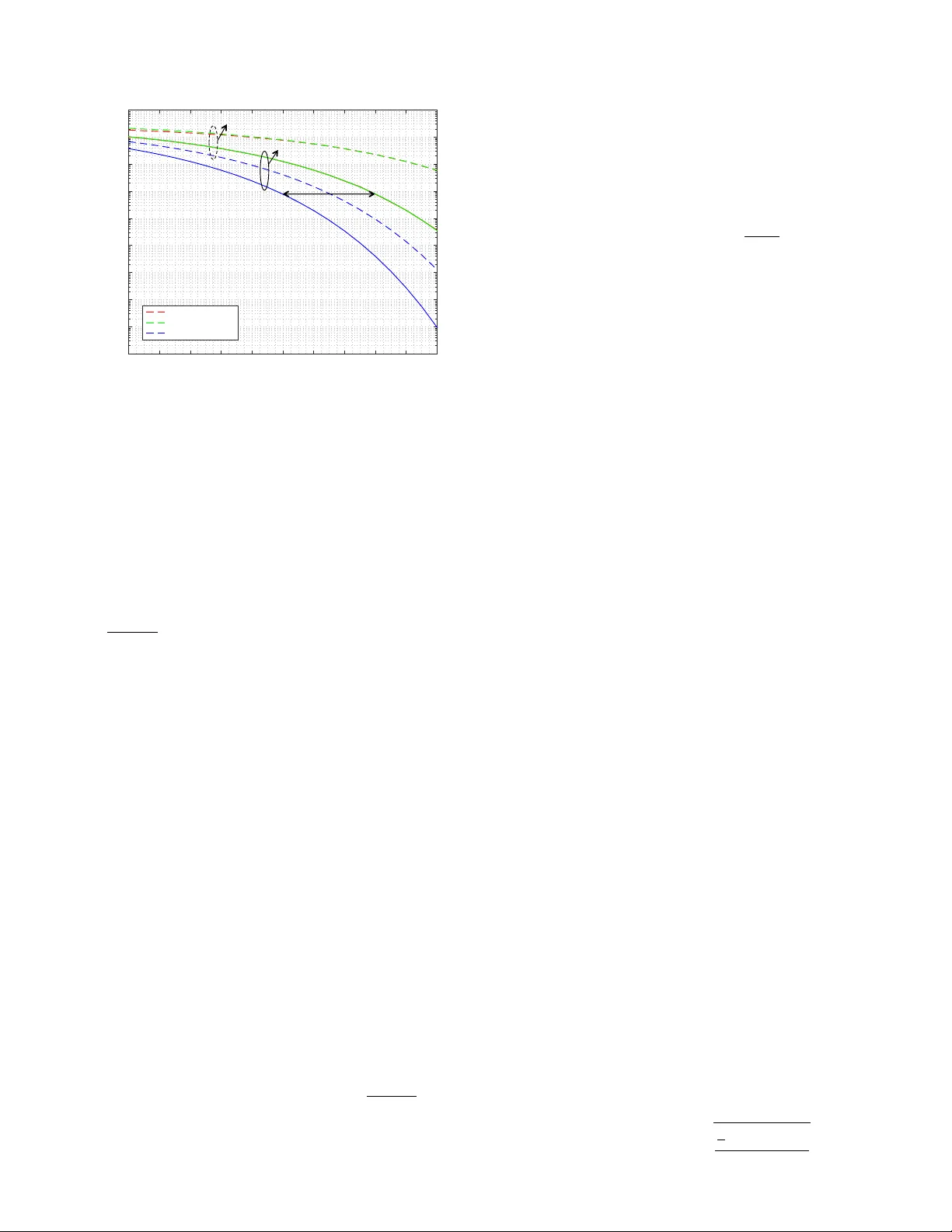

Christos Thrampoulidis*, Weiyu Xu†, Babak Hassibi‡

Abstract: The maximum-likelihood (ML) decoder for symbol detection in large multiple-input multiple-output wireless communication systems is typically computationally prohibitive. In this paper, we study a popular and practical alternative, namely the box-relaxation optimization (BRO) decoder, which is a natural convex relaxation of the ML. For iid real Gaussian channels with additive Gaussian noise, we obtain exact asymptotic expressions for the symbol error rate (SER) of the BRO. The formulas are particularly simple, they yield useful insights, and they allow accurate comparisons to the matched-filter bound (MFB) and to the zero-forcing decoder. For BPSK signals the SER performance of the BRO is within 3 dB of the MFB for square systems, and it approaches the MFB as the number of receive antennas grows large compared to the number of transmit antennas. Our analysis further characterizes the empirical density function of the solution of the BRO, and shows that error events for any fixed number of symbols are asymptotically independent. The fundamental tool behind the analysis is the convex Gaussian min-max theorem.

I. INTRODUCTION

The problem of recovering an unknown vector of symbols that belong to a finite constellation from a set of noise-corrupted, linearly related measurements arises in numerous applications, and in particular in multiple-input multiple-output (MIMO) wireless communication systems [1, 2, 3, 4]. As a result, a large host of exact and heuristic optimization algorithms have been proposed over the years. Exact algorithms, such as sphere decoding and its variants, become computationally prohibitive as the problem dimension grows, a scenario that is typical in modern massive MIMO systems, e.g., [2].
Heuristic algorithms such as zero-forcing, MMSE, decision-feedback, etc. [5, 6, 7, 8] have inferior performance that is often difficult to characterize precisely. One popular heuristic is the so-called box-relaxation optimization decoder, which is a natural convex relaxation of the maximum-likelihood (ML) decoder, and which allows one to recover the signal via efficient convex optimization followed by hard thresholding, e.g., [9, 10, 11]. Despite its popularity, very little is known analytically about the decoding performance of this method. In this paper, we close this gap by deriving exact asymptotic error-rate characterizations under the assumption of a real Gaussian wireless channel and additive Gaussian noise.

* Research Laboratory of Electronics, MIT, Cambridge, USA; † Department of ECE, University of Iowa, Iowa City, USA; ‡ Department of Electrical Engineering, Caltech, Pasadena, USA.

A. Problem formulation

We consider the problem of recovering an unknown vector $x_0$ of $n$ transmitted symbols, each belonging to a finite constellation, from the noisy multiple-input multiple-output relation $y = A x_0 + z \in \mathbb{R}^m$, where $A \in \mathbb{R}^{m \times n}$ is the MIMO channel matrix (assumed to be known) and $z \in \mathbb{R}^m$ is the noise vector. We assume an iid real Gaussian channel with additive Gaussian noise. In particular, $A$ has entries iid $\mathcal{N}(0, 1/n)$ and $z$ has entries iid $\mathcal{N}(0, \sigma^2)$. The normalization is such that the signal-to-noise ratio (SNR) is inversely proportional to the noise variance $\sigma^2$. We are interested in the large-system limit, where both the number $n$ of transmit antennas and the number $m$ of receive antennas are large. For simplicity of exposition we assume, for most of the paper, that $x_0$ is an $n$-dimensional BPSK vector, i.e., $x_0 \in \{\pm 1\}^n$. Extensions to M-ary constellations are also provided.

Maximum-likelihood decoder.
The ML decoder for BPSK signal recovery, which minimizes the block error probability (assuming the $x_{0,i}$ are equally likely), is given by $\min_{x \in \{\pm 1\}^n} \|y - Ax\|_2$. Solving for the exact ML solution is often computationally intractable, especially when $n$ is large, and therefore a variety of heuristics have been proposed (zero-forcing, MMSE, decision-feedback, etc.) [12, 8].

Box-relaxation optimization decoder. The heuristic we consider in this paper is the box-relaxation optimization (BRO) decoder [9, 10, 11]. It consists of two steps. The first one involves solving a convex relaxation of the ML algorithm, where $x \in \{\pm 1\}^n$ is relaxed to $x \in [-1, 1]^n$. The output of the optimization is hard-thresholded in the second step to produce the final binary estimate. Formally, the algorithm outputs an estimate $x^*$ of $x_0$ given as
$$\hat{x} = \arg\min_{-1 \le x_i \le 1} \|y - Ax\|_2, \tag{1a}$$
$$x^* = \mathrm{sign}(\hat{x}), \tag{1b}$$
where the $\mathrm{sign}(\cdot)$ function returns the sign of its input and acts element-wise on input vectors. The BRO decoder naturally extends to the case of recovering signals from higher-order constellations; see Section III.

Symbol error rate. We evaluate the performance of the decoder by the symbol error rate (SER), defined as
$$\mathrm{SER} := \frac{1}{n} \sum_{i=1}^{n} \mathbb{1}_{\{x^*_i \neq x_{0,i}\}}, \tag{2}$$
with $\mathbb{1}_{\{\cdot\}}$ used to denote the indicator function. A closely related quantity that is also of interest is the symbol-error probability $P_e$, which is defined as the expectation of the SER averaged over the noise, over the channel, and over the constellation. Formally,
$$P_e := \mathbb{E}[\mathrm{SER}] = \frac{1}{n} \sum_{i=1}^{n} \Pr\left(x^*_i \neq x_{0,i}\right). \tag{3}$$

B. Contribution and related work

In this paper, we derive the first rigorous precise characterization of the SER for the BRO decoder in the large-system limit, where the numbers $m$ and $n$ of receive and transmit antennas grow proportionally large at a fixed rate $\delta = m/n$.
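To make the two-step decoder of (1a)-(1b) and the SER of (2) concrete, the following is a minimal sketch in pure Python. It is an illustration under stated assumptions, not the authors' implementation: the paper does not prescribe a solver for (1a), and cyclic coordinate descent is used here only because each one-dimensional update has a closed form under box constraints. The function names `bro_decode` and `simulate_ser`, and all parameter values, are hypothetical.

```python
import math
import random


def bro_decode(A, y, sweeps=200):
    """Box-relaxation decoder (1a)-(1b) for BPSK.

    A is a list of m rows (each a list of n floats); y is a list of m floats.
    Solver choice (cyclic coordinate descent) is an assumption of this sketch.
    """
    m, n = len(A), len(A[0])
    cols = [[A[r][j] for r in range(m)] for j in range(n)]  # column access
    col_sq = [sum(v * v for v in c) for c in cols]          # squared column norms
    x = [0.0] * n
    resid = list(y)                                         # y - A x, with x = 0
    for _ in range(sweeps):
        for j in range(n):
            if col_sq[j] == 0.0:
                continue
            # Exact minimizer over coordinate j, then clipping to [-1, 1].
            corr = sum(cols[j][r] * resid[r] for r in range(m))
            new = max(-1.0, min(1.0, x[j] + corr / col_sq[j]))
            step = new - x[j]
            if step != 0.0:
                for r in range(m):
                    resid[r] -= step * cols[j][r]
                x[j] = new
    x_star = [1 if v >= 0 else -1 for v in x]               # hard threshold (1b)
    return x, x_star


def simulate_ser(n=20, delta=2.0, sigma=0.05, seed=0):
    """Draw one (A, z, x0) instance and return (SER, relaxed solution)."""
    rng = random.Random(seed)
    m = int(round(delta * n))
    x0 = [rng.choice((-1, 1)) for _ in range(n)]
    # Channel entries iid N(0, 1/n); noise entries iid N(0, sigma^2).
    A = [[rng.gauss(0.0, 1.0 / math.sqrt(n)) for _ in range(n)] for _ in range(m)]
    y = [sum(A[r][j] * x0[j] for j in range(n)) + rng.gauss(0.0, sigma)
         for r in range(m)]
    x_hat, x_star = bro_decode(A, y)
    ser = sum(1 for a, b in zip(x_star, x0) if a != b) / n  # definition (2)
    return ser, x_hat
```

Averaging `simulate_ser` over many seeds gives a Monte Carlo estimate of $P_e$ in (3); for problem sizes in the hundreds, a vectorized solver would be a more practical choice than this pure-Python loop.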
We complement the precise error formulas with closed-form, tight upper and lower bounds that are simple functions of the SNR and of $\delta$. These bounds give useful insight into the decoding performance of the BRO, and they allow a quantitative comparison to the matched-filter bound (MFB) and to the zero-forcing (ZF) decoder. As a concrete example, for BPSK signals the SER of the BRO at high SNR is $Q\big(\sqrt{(\delta - 1/2)\,\mathrm{SNR}}\big)$, where the $Q$-function is the tail probability of the standard normal distribution. This value is within 3 dB of the MFB for square systems, and it approaches the MFB as $m$ grows large relative to $n$. Finally, we evaluate the large-system empirical distribution of the output of the BRO, and we show that error events for any fixed number of symbols are asymptotically independent.

To the best of our knowledge, a precise formula for the SER was unknown for the BRO. We remark that the replica method developed in statistical mechanics can be used to give formulas for the SER of various detectors in multiuser detection for code-division multiple access (CDMA) or massive MIMO systems. However, the replica method involves a set of conjectured assumptions that remain mostly unverified by rigorous means; see [13, 8, 14] and references therein. In contrast, our analysis is rigorous, and the techniques used are fundamentally different. They are based on recent advances in comparison inequalities for Gaussian processes; in particular, the convex Gaussian min-max theorem [15, 16].

The present paper is a significantly extended version of our conference paper [17].¹ In a related recent line of work [20, 21, 22], the authors have proposed and investigated the performance of a new class of iterative decoding methods for signal detection in large MIMO systems, which rely on approximate message passing (AMP) [23].
The decoding methods that these papers discuss are different from the BRO decoder, and the analysis tools used are also different from the ones presented here. Interestingly, after our paper [17] appeared, the authors of [22] used our results to show that their proposed algorithm achieves the same error-rate performance as the BRO decoder.

¹ The analysis framework that we present here is general and can be used to analyze the performance of other decoders as well. For example, see our recent papers with co-authors [18, 19], which build upon the framework of this work.

C. Paper organization

In Section II we analyze the performance of the BRO for BPSK signals. The main theorem of this section, namely Theorem II.1, characterizes the SER and leads to an accurate comparison of the BRO to the MFB and to the ZF decoder. We extend the results to M-PAM constellations in Section III. Section IV includes the main technical result of the paper, namely Theorem IV.1, as well as its detailed proof. The paper concludes in Section V with a discussion of future research directions. Finally, some technical proofs are deferred to the Appendix.

II. THE BRO DECODER FOR BPSK SIGNALS

We precisely analyze the error-rate performance of the BRO decoder for BPSK signals. Our main Theorem II.1 in Section II-A evaluates its symbol error rate, and simple, closed-form (upper and lower) bounds are computed in Section II-B. In Sections II-C and II-D we use these bounds to compare the BRO decoder to the matched-filter bound and to the zero-forcing decoder, respectively.

A. Precise SER performance

Our main result explicitly characterizes the limiting behavior of the SER of the BRO in (1), under a large-system limit in which $m, n \to +\infty$ at a proportional (constant) rate $\delta > 0$. The SNR is assumed constant; in particular, it does not scale with $n$. Note that for $x_0 \in \{\pm 1\}^n$, $\mathrm{SNR} = 1/\sigma^2$.
We use the standard notation $\operatorname{plim}_{n \to \infty} X_n = X$ to denote that a sequence of random variables $X_n$ converges in probability towards a constant $X$. All limits will be taken in the regime $m, n \to +\infty$, $m/n = \delta$; to keep notation short we simply write $n \to \infty$. Finally, we use $Q(\cdot)$ to denote the $Q$-function associated with the standard normal density $p(h) = \frac{1}{\sqrt{2\pi}} e^{-h^2/2}$.

Theorem II.1 (SER for BPSK signals). Let SER denote the symbol error rate of the box-relaxation optimization decoder in (1), for some fixed but unknown BPSK signal $x_0 \in \{\pm 1\}^n$. Fix a constant SNR and a constant $\delta \in (\tfrac{1}{2}, +\infty)$. Then, in the limit of $m, n \to +\infty$, $m/n = \delta$, it holds:
$$\operatorname*{plim}_{n \to \infty} \mathrm{SER} = Q\!\left(\frac{1}{\tau_*}\right),$$
where $\tau_*$ is the unique positive minimizer of the strictly convex function $F : (0, +\infty) \to \mathbb{R}$ defined as:
$$F(\tau) := \tau\left(\delta - \frac{1}{2}\right) + \frac{1/\mathrm{SNR}}{\tau} + \left(\tau + \frac{4}{\tau}\right) Q\!\left(\frac{2}{\tau}\right) - \sqrt{\frac{2}{\pi}}\, e^{-\frac{2}{\tau^2}}. \tag{4}$$

The theorem explicitly characterizes the high-probability limit of the SER over the randomness of the channel matrix $A$ and of the noise vector $z$. The function $F(\tau)$ in (4) is deterministic, strictly convex, and is parametrized by the value of the SNR and by the proportionality factor $\delta$. The proof of the theorem uses the convex Gaussian min-max theorem (CGMT) [15, 16], which has thus far found major use in precisely quantifying the squared-error performance of regularized M-estimators in high dimensions, such as the LASSO [16]. In this paper we extend the applicability of the CGMT to the characterization of the SER performance, to arrive at Theorem II.1. More than that, along the way we prove a number of even stronger statements regarding the error performance of the BRO. We:
(i) establish the large-system error performance of the BRO for a wide class of performance metrics; this class includes the squared error and the SER as special cases;
(ii) explicitly characterize the limiting empirical distribution of the output $\hat{x}$ of (1a);
(iii) show that error events for any fixed number of bits are asymptotically independent.
Please refer to Theorem IV.1 and to Corollary IV.1 for the formal statements of these results. The detailed proof of Theorem II.1 is also deferred to Section IV. Some further remarks on Theorem II.1 are given below.

[Figure 1: $P_e$ versus $10 \log_{10}(\mathrm{SNR})$; simulation and theory curves for $\delta = 1$ and $\delta = 0.7$.]
Fig. 1: Symbol-error probability of the BRO as a function of SNR for different values of the ratio $\delta$ of receive to transmit antennas. The theoretical prediction follows from Theorem II.1. For the simulations, we used $n = 512$. The data are sample averages of the SER over 20 independent realizations of the channel matrix and of the noise vector for each value of the SNR.

1) On $\delta > \tfrac{1}{2}$: The theorem holds as long as the ratio of proportionality $\delta$ is (strictly) greater than 1/2. To begin with, note that this allows for the number of receive antennas to be less than the number of transmit antennas, and as low as (almost) half of them. When $\delta < 1$ the system of linear equations $y = Ax$ is underdetermined; hence, recovering the true solution is generally ill-posed even in the absence of noise. However, in the problem of interest it is known a priori that the true solution $x_0$ only takes values in $\{\pm 1\}^n$. The BRO decoder uses that information by enforcing an $\ell_\infty$-norm constraint in (1a). Of course, this idea of using convex optimization with (typically non-smooth) constraints that promote the particular structure of the unknown signal $x_0$ to solve underdetermined systems of equations is one of the core ideas that emerged from the compressed sensing literature (e.g., [24]). In fact, it is by now well understood that in the noiseless case the program in (1a) successfully recovers the true $x_0 \in \{\pm 1\}^n$ with high probability over the randomness of $A$ if and only if $\delta > 1/2$ ([24, 25]).
The same necessary condition naturally arises out of our proof of Theorem II.1.

2) Probability of error: Recall from (3) that the symbol-error probability is given as $P_e = \mathbb{E}[\mathrm{SER}]$. Also, the SER is bounded between 0 and 1. Thus, using Theorem II.1 we show in Appendix A1 that $P_e$ converges (deterministically) to the same value $Q(1/\tau_*)$.

Corollary II.1 ($P_e$). Under the setting of Theorem II.1, let $P_e$ denote the symbol-error probability of the BRO and $\tau_*$ be the minimizer of (4). Then, $\lim_{n \to \infty} P_e = Q(1/\tau_*)$.

3) Solving for $\tau_*$: In order to evaluate the large-system limit of the SER, one needs to compute the unique positive minimizer of $F(\tau)$ in (4). The function $F$ is strictly convex, hence this can be done numerically in an efficient way. Due to convexity, $\tau_*$ can also be described as the unique solution to the first-order optimality conditions of the minimization program (see Lemma A.2). By further analyzing the properties of $\tau_*$, we derive in Section II-B simple closed-form (upper and lower) bounds on the quantity of interest, namely $Q(1/\tau_*)$.

4) Numerical illustration: Figure 1 illustrates the accuracy of the prediction of Theorem II.1. Note that although the theorem requires $n \to \infty$, the prediction is already accurate for $n$ on the scale of a few hundred.

B. Simple bounds and high-SNR regime

We derive simple, closed-form upper and lower bounds on $Q(1/\tau_*)$, the limiting value of the SER. We further show that these bounds are tight. The proof is deferred to Appendix A2.

Theorem II.2 (Closed-form bounds). Let $\tau_*$ be the unique minimizer of (4). Then, for all values of $\delta > 1/2$ and all values of $\mathrm{SNR} > 0$, it holds,
$$Q\big(\sqrt{\delta \cdot \mathrm{SNR}}\big) < Q(1/\tau_*) \le Q\big(\sqrt{(\delta - 1/2) \cdot \mathrm{SNR}}\big). \tag{5}$$
Furthermore, the upper bound becomes tight as $\mathrm{SNR} \to +\infty$.

In view of Theorem II.1, the statement in (5) directly establishes upper and lower bounds on the (asymptotic) SER performance of the BRO.
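As a hedged illustration of remark 3 and of the sandwich in (5), the sketch below minimizes $F$ numerically and evaluates $Q(1/\tau_*)$. It assumes that (4) reads $F(\tau) = \tau(\delta - 1/2) + (1/\mathrm{SNR})/\tau + (\tau + 4/\tau)\,Q(2/\tau) - \sqrt{2/\pi}\, e^{-2/\tau^2}$, with SNR on a linear scale. Golden-section search is our choice of one-dimensional solver, not something the paper specifies, and the bracketing interval is taken from Theorem II.2; the names `q_func`, `F`, and `tau_star` are hypothetical.

```python
import math


def q_func(x):
    """Tail probability of the standard normal distribution."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def F(tau, delta, snr):
    """Objective of (4) as read above; snr is linear (not in dB)."""
    return (tau * (delta - 0.5)
            + 1.0 / (snr * tau)
            + (tau + 4.0 / tau) * q_func(2.0 / tau)
            - math.sqrt(2.0 / math.pi) * math.exp(-2.0 / tau ** 2))


def tau_star(delta, snr, iters=200):
    """Minimize F over tau > 0 by golden-section search.

    By (5), 1/sqrt(delta*snr) < tau_* <= 1/sqrt((delta-1/2)*snr), so a
    slightly enlarged version of that interval brackets the minimizer.
    """
    if delta <= 0.5 or snr <= 0.0:
        raise ValueError("need delta > 1/2 and snr > 0")
    lo = 0.5 / math.sqrt(delta * snr)
    hi = 2.0 / math.sqrt((delta - 0.5) * snr)
    ratio = (math.sqrt(5.0) - 1.0) / 2.0
    for _ in range(iters):
        a = hi - ratio * (hi - lo)
        b = lo + ratio * (hi - lo)
        if F(a, delta, snr) < F(b, delta, snr):
            hi = b          # minimizer lies in [lo, b] by convexity
        else:
            lo = a          # minimizer lies in [a, hi] by convexity
    return 0.5 * (lo + hi)


def predicted_ser(delta, snr):
    """Asymptotic SER Q(1/tau_*) of Theorem II.1."""
    return q_func(1.0 / tau_star(delta, snr))
```

For example, `predicted_ser(1.0, 10 ** (snr_db / 10))` evaluates the prediction for a square system at a given SNR in dB, and comparing it against `q_func(math.sqrt(0.5 * snr))` shows how quickly the upper bound in (5) becomes tight.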
These bounds are given in closed form and are simple functions of $\delta$ and of SNR. As stated in the theorem, the upper bound in (5) becomes tight in the high-SNR regime. Hence, for $\mathrm{SNR} \gg 1$, in the limit of $n \to \infty$,
$$\mathrm{SER} \approx Q\big(\sqrt{(\delta - 1/2) \cdot \mathrm{SNR}}\big). \tag{6}$$
A formal statement of this result is given in Theorem A.1 in Appendix A2. The fact that $\tau_* \approx 1/\sqrt{(\delta - 1/2)\,\mathrm{SNR}}$ when $\mathrm{SNR} \gg 1$ can be intuitively understood as follows: at high SNR we expect $\tau_*$ to be going to zero (correspondingly, SER or $Q(1/\tau_*)$ to be small). When this is the case, the last two summands in (4) are negligible; then, $\tau_*$ is the solution to
$$\min_{\tau > 0}\ \tau\left(\delta - \frac{1}{2}\right) + \frac{1/\mathrm{SNR}}{\tau},$$
which gives the desired result.

For illustration, in Figure 2 we have plotted the high-SNR expression for the SER in (6) versus its exact value as predicted by Theorem II.1. It is seen that, as already discussed, the high-SNR expression is an upper bound, and in fact a good proxy, for the true probability of error at all values of SNR. The approximation becomes better with increasing $\delta$. Finally, in Section II-C, we show that the lower bound $Q(\sqrt{\delta \cdot \mathrm{SNR}})$ has an operational meaning: it is equal to the bit error probability of an isolated bit transmission over the channel, which is also known as the matched filter bound in digital communications.

[Figure 2: $P_e$ versus $10 \log_{10}(\mathrm{SNR})$; curves for BRO (Thm. II.1), BRO (high-SNR), and MFB, for $\delta = 1$ and $\delta = 0.7$; a 3 dB gap is marked.]
Fig. 2: Symbol-error probability of the BRO (in red, see Theorem II.1) in comparison to the high-SNR approximation (in green, see (6)) and to the matched filter bound (in blue, see (7)), for $\delta = 0.7$ (dashed lines) and $\delta = 1$ (solid lines). Theorem II.2 successfully predicts that the red curves are sandwiched between the corresponding green (upper bound) and blue (lower bound) ones.

C. Comparison to the matched filter bound

Here, we compare the performance of the BRO to an idealistic case, where all but one of the $n$ bits of $x_0$ are known to us. As is customary in the field, we refer to the symbol error probability of this case as the matched filter bound (MFB) and denote it by $P_e^{\mathrm{MFB}}$. The MFB corresponds to the probability of error in detecting (say) $x_{0,n} \in \{\pm 1\}$ from $\tilde{y} = x_{0,n} a_n + z$, where $\tilde{y} = y - \sum_{i=1}^{n-1} x_{0,i} a_i$ is assumed known, and $a_i$ denotes the $i$th column of $A$. (This can be equivalently thought of as the error probability of an isolated transmission of only the last bit over the channel.) The ML estimate is equal to the sign of the projection of the vector $\tilde{y}$ onto the direction of $a_n$. Without loss of generality we assume that $x_{0,n} = +1$. Then, the output of the matched filter becomes $\mathrm{sign}(\tilde{X})$, where $\tilde{X} = \|a_n\|_2 + \sigma \tilde{z}_n$ and $\tilde{z}_n \sim \mathcal{N}(0, 1)$. Recall that the entries of the $m$-dimensional vector $a_n$ are iid $\mathcal{N}(0, 1/n)$, so it holds $\operatorname{plim}_{n \to \infty} \|a_n\|_2 = \sqrt{\delta}$. Hence,
$$\lim_{n \to \infty} P_e^{\mathrm{MFB}} = \lim_{n \to \infty} \Pr(\tilde{X} < 0) = Q\big(\sqrt{\delta \cdot \mathrm{SNR}}\big). \tag{7}$$

First, observe that this formula coincides with the lower bound on the probability of error of the BRO derived in Theorem II.2. Combined, they establish formally that the MFB is (strictly) better than the BRO. Of course, this is naturally expected since the former is an idealistic scheme. Next, when compared to the upper bound on the probability of error of the BRO derived in Theorem II.2, the formula in (7) leads to the following conclusion:

The BRO achieves a desired symbol-error probability at a higher SNR value by at most $10 \log_{10} \frac{\delta}{\delta - 1/2}$ dB than that predicted by the MFB.

In particular, in the square case ($\delta = 1$), where the number of receive and transmit antennas are the same, the BRO is 3 dB off the MFB (cf. Figure 2).
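The dB gap quoted above follows from equating the arguments of the two $Q$-functions, $(\delta - 1/2)\,\mathrm{SNR}_{\mathrm{BRO}} = \delta\,\mathrm{SNR}_{\mathrm{MFB}}$. A small sketch of that arithmetic follows; the function name `bro_mfb_gap_db` is hypothetical and the snippet only evaluates the closed-form expression, it does not simulate anything.

```python
import math


def bro_mfb_gap_db(delta):
    """Worst-case SNR penalty (in dB) of the BRO relative to the MFB.

    Evaluates 10*log10(delta / (delta - 1/2)), which requires delta > 1/2.
    """
    if delta <= 0.5:
        raise ValueError("the bound requires delta > 1/2")
    return 10.0 * math.log10(delta / (delta - 0.5))


# Square system: delta = 1 gives 10*log10(2), i.e. about 3 dB.
square_gap = bro_mfb_gap_db(1.0)
# Many more receive than transmit antennas: the gap shrinks toward 0 dB.
tall_gap = bro_mfb_gap_db(10.0)
```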
When the number of receive antennas is much larger, i.e., when $\delta \gg 1$, the performance of the BRO approaches the MFB.

D. Box-relaxation vs. zero-forcing

In this section, we use Theorem II.1 to compare the performance of the BRO to another widely used decoder, namely the zero-forcing (ZF) decoder. The ZF decoder obtains an estimate $x^*_{\mathrm{ZF}}$ of $x_0$ as follows:
$$\hat{x}_{\mathrm{ZF}} = \arg\min_{x \in \mathbb{R}^n} \|y - Ax\|_2, \tag{8a}$$
$$x^*_{\mathrm{ZF}} = \mathrm{sign}(\hat{x}_{\mathrm{ZF}}). \tag{8b}$$
Observe that this is very similar to the BRO, only that in (8a) the minimization is unconstrained. Therefore, in contrast to the BRO, for the ZF decoder we require $\delta > 1$, i.e., that the number of receive antennas be larger than the number of transmit antennas. When this is the case, $A$ has full column rank with probability one, and (8a) has a unique closed-form solution:
$$\hat{x}_{\mathrm{ZF}} = (A^T A)^{-1} A^T y. \tag{9}$$
It is well known in the literature how to use standard tools from random matrix theory to derive the symbol-error probability of the ZF decoder (e.g., [7]). For the convenience of the reader, we briefly summarize the main idea here. Without loss of generality, consider the last bit $x_n$ of $x$. Further let $A = QR$ be the QR decomposition of $A$, such that $Q \in \mathbb{R}^{m \times n}$ is a matrix with orthonormal columns and $R \in \mathbb{R}^{n \times n}$ is upper triangular. Define $\tilde{y} := Q^T y$ and $\tilde{z} := Q^T z$ and note that $\tilde{y}_n = R_{nn} x_n + \tilde{z}_n$, where $R_{nn}$ is the $n$th diagonal element of $R$. From the rotational invariance of the Gaussian distribution, it holds $\tilde{z}_n \sim \mathcal{N}(0, \sigma^2)$. Next, we use the following well-known facts, e.g., [7, Lem. 1]: (i) $Q$ and $R$ are independent matrices; hence, $\tilde{z}_n$ is independent of $R_{nn}$; (ii) $R_{nn}$ is such that $n R_{nn}^2$ is a $\chi^2$ random variable with $(m - n + 1)$ degrees of freedom.
Thus, by the corresponding formula for the BPSK single-input single-output (SISO) Gaussian channel, the symbol-error probability of the zero-forcing decoder is
$$P_e^{\mathrm{ZF}} = \mathbb{E}_{\gamma_1, \ldots, \gamma_{m-n+1}}\left[ Q\!\left( \sqrt{ \frac{\frac{1}{n} \sum_{i=1}^{m-n+1} \gamma_i^2}{\sigma^2} } \right) \right],$$
where the $\gamma_i$ are iid standard Gaussians $\mathcal{N}(0, 1)$. But $\operatorname{plim}_{n \to \infty} \frac{1}{n} \sum_{i=1}^{m-n+1} \gamma_i^2 = \delta - 1$, giving
$$\lim_{n \to \infty} P_e^{\mathrm{ZF}} = Q\big(\sqrt{(\delta - 1) \cdot \mathrm{SNR}}\big). \tag{10}$$

Comparing this formula to the upper bound on the probability of error of the BRO derived in Theorem II.2, we formally quantify the superiority of the BRO over the ZF decoder:

The BRO achieves the same performance as the ZF decoder at a lower SNR value by at least $10 \log_{10} \frac{\delta - 1/2}{\delta - 1}$ dB.

This holds for $\delta > 1$. However, Theorem II.1 further shows that the BRO can successfully decode even when $\delta < 1$, and in particular for $\delta$ as low as $1/2$.

Above, we derived formula (10) using tools from random matrix theory. Alternatively, we can obtain the same result using the CGMT, and the proof technique is very similar to that of Theorem II.1. The use of random-matrix-theory tools for the analysis of the ZF decoder is possible in large part because the minimizer $\hat{x}_{\mathrm{ZF}}$ of (8a) can be expressed in closed form as a function of $A$ and $z$ (see (9)). On the contrary, this is not the case with the BRO decoder, and the use of the CGMT is critical for establishing Theorem II.1.

III. EXTENSION TO M-PAM CONSTELLATIONS

A. Setting

Each transmit antenna sends a symbol $x_{0,i}$ that takes values $x_{0,i} \in \mathcal{C} := \{\pm 1, \pm 3, \ldots, \pm(M - 1)\}$, for some $M = 2^b$ and $b$ a positive integer. When each antenna transmits a single bit, i.e., $b = 1$, then $x_0 \in \{\pm 1\}^n$ and the setting is the same as in Section II. As always, we assume additive Gaussian noise of variance $\sigma^2$. The ML decoder is given by $\min_{x \in \mathcal{C}^n} \|y - Ax\|_2$, but it is often computationally intractable for a large number of receive/transmit antennas.
We consider the natural extension of the box-relaxation decoder for BPSK in (1). Specifically, for M-PAM symbol transmission, the BRO outputs an estimate $x^*$ of $x_0$ as follows:
$$\hat{x} = \arg\min_{-(M-1) \le x_i \le (M-1)} \|y - Ax\|_2, \tag{11a}$$
$$x^*_i = \arg\min_{c \in \mathcal{C}} |\hat{x}_i - c|. \tag{11b}$$
The optimization in (11a) is convex, and (11b) simply selects the symbol value $c$ that is closest to the solution $\hat{x}_i$ among a total of $M$ choices: $\{\pm 1, \pm 3, \ldots, \pm(M - 1)\}$. Therefore, the proposed decoder is computationally efficient. In the next section, we evaluate its error-rate performance.

B. SER performance

Theorem III.1 below precisely characterizes the large-system limit of the SER of the BRO in (11) under an M-PAM transmission. We assume that a typical sequence of symbols is sent over the channel, i.e., each transmitted symbol $x_{0,i}$ takes values in $\{\pm 1, \pm 3, \ldots, \pm(M - 1)\}$ with equal probability $1/M$. The result extends to other distributions over the constellation, but for simplicity we focus on this typical case. For a typical sequence, the average power of the transmitted vector $x_0$ is
$$\mathbb{E}[x_{0,i}^2] = \frac{2}{M} \sum_{j = 1, 3, \ldots, M-1} j^2 = \frac{M^2 - 1}{3}.$$
Therefore, the SNR of the system becomes
$$\mathrm{SNR} = \frac{(M^2 - 1)/3}{\sigma^2}. \tag{12}$$

[Figure 3: symbol error probability versus SNR; simulation and theory curves for BPSK, 4-PAM, and 8-PAM.]
Fig. 3: Symbol error probability of the box-relaxation optimization (BRO) decoder in (11) as a function of the SNR for BPSK, 4-PAM, and 8-PAM signals. The theoretical prediction follows from Theorem III.1. For the simulations, we used $n = 512$ and $\delta = 1.2$. The data are averages over 20 independent realizations of the channel matrix and of the noise vector for each value of the SNR.

Theorem III.1 (SER for M-PAM). Let SER denote the symbol error rate of the detection scheme in (11), for a typical transmitted signal $x_0$ such that each symbol $x_{0,i}$ takes values in $\{\pm 1, \pm 3, \ldots, \pm(M - 1)\}$ with equal probability $1/M$.
Fix a constant noise variance $\sigma^2$ (equivalently, a constant SNR as in (12)) and a constant $\delta \in (1 - \tfrac{1}{M}, +\infty)$. Then, in the limit of $m, n \to \infty$, $m/n = \delta$, it holds:
$$\operatorname*{plim}_{n \to \infty} \mathrm{SER} = 2\left(1 - \frac{1}{M}\right) Q\!\left(\frac{1}{\tau_*}\right),$$
where $\tau_*$ is the unique positive minimizer of the strictly convex function $F_M : (0, +\infty) \to \mathbb{R}$ defined as:
$$F_M(\tau) := \frac{\tau}{2}\left(\delta - \frac{M - 1}{M}\right) + \frac{\sigma^2}{2\tau} + \frac{1}{M} \sum_{k = 2, 4, \ldots, 2(M-1)} S(\tau; k), \tag{13}$$
with
$$S(\tau; k) := \left(\tau + \frac{k^2}{\tau}\right) Q\!\left(\frac{k}{\tau}\right) - \frac{k}{\sqrt{2\pi}}\, e^{-\frac{k^2}{2\tau^2}}. \tag{14}$$

Theorem III.1 generalizes Theorem II.1, and the former reproduces the latter for $M = 2$ (in that case $\sigma^2 = 1/\mathrm{SNR}$ and $F_2 = F/2$, so the two functions share the same minimizer). Figure 3 illustrates the accuracy of the prediction. The proof of the theorem is deferred to Appendix C.

Most of the remarks that followed the statement of Theorem II.1 in Section II are readily extended to general M-PAM constellations. The guarantees of Theorem III.1 hold as long as the ratio $\delta$ of receive to transmit antennas is larger than $1 - 1/M$. Thus, successful transmission is possible with fewer receive than transmit antennas. The minimum allowed ratio increases for higher-order constellations. Similar to Theorem II.2, we can show the following simple upper bound on the probability of error $P_e$ for all values of SNR:
$$\lim_{n \to \infty} P_e \le 2\left(1 - \frac{1}{M}\right) Q\!\left( \sqrt{ \left(\delta - 1 + \frac{1}{M}\right) \frac{3}{M^2 - 1}\, \mathrm{SNR} } \right). \tag{15}$$
Moreover, the bound is tight at high SNR. Of course, for $M = 2$, this coincides with the upper bound in (5).

IV. PROOF OF MAIN RESULT

This section includes the proof of Theorem II.1. In fact, towards proving the theorem, we obtain a more general result, which is stated as Theorem IV.1 below. For simplicity, we make use of the following notation onwards. We say that an event $\mathcal{E}$ holds with probability approaching 1 (w.p.a.1) if $\lim_{n \to \infty} \Pr(\mathcal{E}) = 1$. Also, we use the following shorthands: $X_n \xrightarrow{P} X$ to denote convergence in probability; $X \overset{d}{=} Y$ to denote that the random variables $X$ and $Y$ have the same distribution; and $\|\cdot\|$ to denote the $n$-dimensional Euclidean norm.

A. Main technical result

As far as the performance is concerned, we can assume without loss of generality that $x_0 = +\mathbf{1}_n = (1, 1, \ldots, 1)$. Also, it is convenient to rewrite (1a) by changing the variable to the error vector $w := x - x_0 = x - \mathbf{1}$:
$$\hat{w} := \arg\min_{-2 \le w_i \le 0} \|z - Aw\|. \tag{16}$$
Then, observe that the SER defined in (2) is written in terms of the error vector $w$ as:
$$\mathrm{SER} = \frac{1}{n} \sum_{i=1}^{n} \mathbb{1}_{\{\hat{w}_i \le -1\}}. \tag{17}$$
The following theorem characterizes the limit of the empirical distribution of the optimal solution $\hat{w}$ in (16), and yields Theorem II.1 as a corollary.

Theorem IV.1 (Lipschitz metrics and empirical distribution). Recall the definition of $\tau_*$ in Theorem II.1, and assume, without loss of generality, that $x_0 = +\mathbf{1}$. Let $\hat{w}$ be as in (16) and consider its (normalized) empirical density function
$$\mu_{\hat{w}} := n^{-1} \sum_{i=1}^{n} \delta_{\hat{w}_i}.$$
Further, consider the function $\theta : \mathbb{R} \to [-2, 0]$:
$$\theta(\gamma) := \begin{cases} 0, & \text{if } \gamma \ge 0, \\ \tau_* \gamma, & \text{if } -\frac{2}{\tau_*} \le \gamma < 0, \\ -2, & \text{if } \gamma < -\frac{2}{\tau_*}, \end{cases}$$
and let $\mu_W$ be the density measure of a random variable $W$ with
$$W \overset{d}{=} \theta(\mathcal{N}(0, 1)). \tag{18}$$
The following are true:
(a) $\mu_{\hat{w}}$ converges weakly in probability to $\mu_W$.²
(b) For all Lipschitz functions $\psi : \mathbb{R} \to \mathbb{R}$ with Lipschitz constant $L$ (independent of $n$), it holds
$$\frac{1}{n} \sum_{i=1}^{n} \psi(\hat{w}_i) \xrightarrow{P} \mathbb{E}_W[\psi(W)].$$

² Note that $\mu_{\hat{w}}$ defines a (sequence of) random probability measure(s); on the other hand, $\mu_W$ is a deterministic measure. We use terminology that is standard in the theory of random matrices and say that a sequence of random measures $\mu_n$ converges weakly to a deterministic measure $\mu$ if for every continuous compactly supported $\psi$: $\int \psi \, d\mu_n \xrightarrow{P} \int \psi \, d\mu$ (see for example [26, pg. 160]).

[Figure 4: four histogram panels of the error-vector entries over the interval $[-2, 0]$.]
Fig. 4: Empirical distribution of the error vector $w := \hat{x} - x_0$ (conditioned on $x_0 = +\mathbf{1}$) for the solution $\hat{x}$ of the BRO. The empirical histograms shown are averages over 200 realizations of the channel matrix and of the noise vector for $n = 256$ transmit antennas. They are compared to the asymptotic limiting distribution predicted by Theorem IV.1, see (18). The limiting density is supported in the interval $[-2, 0]$ and has point masses both at $-2$ and $0$. Different values of $\delta$ and of SNR are shown.

Theorem IV.1 is the main technical result of this paper. In Section IV-B we show how it can be used to prove Theorem II.1. Next, in Section IV-C we rely again on Theorem IV.1 to prove that error events for any fixed number of bits are asymptotically independent. The rest of Section IV is devoted to the proof of Theorem IV.1.

B. Proof of Theorem II.1

On the one hand, by (17), it suffices to prove that $\frac{1}{n} \sum_{i=1}^{n} \mathbb{1}_{\{\hat{w}_i \le -1\}} \xrightarrow{P} Q(1/\tau_*)$. On the other hand, it is easily checked that $\mathbb{E}_W[\mathbb{1}_{\{W \le -1\}}] = \mathbb{E}_{\gamma \sim \mathcal{N}(0,1)}[\mathbb{1}_{\{\gamma \le -1/\tau_*\}}] = Q(1/\tau_*)$. Note that the indicator function $\mathbb{1}_{\{W \le -1\}}$ is not Lipschitz, so we cannot directly apply Theorem IV.1(b). However, since the discontinuity point (i.e., $-1$) of the indicator function has $\mu_W$-measure zero (the only point masses of $W$ are at $-2$ and $0$), one can appropriately approximate the indicator with Lipschitz functions and reach the desired conclusion based on Theorem IV.1(b). This is a somewhat standard argument, but we reproduce a detailed proof of the claim in Lemma A.3 in Appendix B for completeness.

C. Independence of error events

Here, we obtain as a corollary of Theorem IV.1 that error events for any fixed number of bits are asymptotically independent. We defer the proof of the corollary to Appendix B2.

Corollary IV.1 (Independence of error events). Under the notation and definitions of Theorem IV.1, let $\psi_i : \mathbb{R} \to \mathbb{R}$, $i = 1, \ldots, k$, be bounded Lipschitz functions for fixed $k \ge 2$. Then, it holds
$$n^{-k} \sum_{1 \le i_1, \ldots, i_k \le n} \psi_1(\hat{w}_{i_1}) \cdots \psi_k(\hat{w}_{i_k}) \xrightarrow{P} \prod_{\ell=1}^{k} \mathbb{E}[\psi_\ell(W_\ell)],$$
where the expectations on the right-hand side are with respect to $W_1, \ldots, W_k$ that are iid random variables distributed as $\theta(\mathcal{N}(0, 1))$. Moreover, it holds
$$n^{-k} \sum_{1 \le i_1, \ldots, i_k \le n} \mathbb{1}_{\{\hat{w}_{i_1} \le -1, \ldots, \hat{w}_{i_k} \le -1\}} \xrightarrow{P} \big(Q(1/\tau_*)\big)^k.$$

D. The convex Gaussian min-max theorem

The fundamental tool behind our analysis is the convex Gaussian min-max theorem (CGMT) [16, 15]. The CGMT associates with a primary optimization (PO) problem a simplified auxiliary optimization (AO) problem from which we can tightly infer properties of the original (PO), such as the optimal cost, the optimal solution, etc. In particular, the (PO) and (AO) problems are defined respectively as follows:
$$\Phi(G) := \min_{w \in \mathcal{S}_w} \max_{u \in \mathcal{S}_u} u^T G w + \psi(w, u), \tag{19a}$$
$$\phi(g, h) := \min_{w \in \mathcal{S}_w} \max_{u \in \mathcal{S}_u} \|w\| g^T u - \|u\| h^T w + \psi(w, u), \tag{19b}$$
where $G \in \mathbb{R}^{m \times n}$, $g \in \mathbb{R}^m$, $h \in \mathbb{R}^n$, $\mathcal{S}_w \subset \mathbb{R}^n$, $\mathcal{S}_u \subset \mathbb{R}^m$ and $\psi : \mathbb{R}^n \times \mathbb{R}^m \to \mathbb{R}$. Denote by $w_\Phi := w_\Phi(G)$ and $w_\phi := w_\phi(g, h)$ any optimal minimizers in (19a) and (19b), respectively. Further let $\mathcal{S}_w$, $\mathcal{S}_u$ be convex and compact sets, $\psi$ be continuous and convex-concave on $\mathcal{S}_w \times \mathcal{S}_u$, and $G$, $g$ and $h$ all have entries iid standard normal.

Theorem IV.2 (CGMT, [16]). Let $\mathcal{S}$ be an arbitrary open subset of $\mathcal{S}_w$ and $\mathcal{S}^c = \mathcal{S}_w \setminus \mathcal{S}$. Denote by $\phi_{\mathcal{S}^c}(g, h)$ the optimal cost of the optimization in (19b), when the minimization over $w$ is now constrained over $w \in \mathcal{S}^c$. Suppose there exist constants $\bar{\phi}$ and $\eta > 0$ such that in the limit of $n \to +\infty$ it holds w.p.a.1: (i) $\phi(g, h) \le \bar{\phi} + \eta$, and (ii) $\phi_{\mathcal{S}^c}(g, h) \ge \bar{\phi} + 2\eta$. Then, $\lim_{n \to \infty} \Pr(w_\Phi \in \mathcal{S}) = 1$.

It is not hard to argue that the conditions (i) and (ii) regarding the optimal cost of the (AO) imply the following for its solution: $w_\phi \in \mathcal{S}$ w.p.a.1.
The non-trivial and powerful part of the theorem is that the same conclusion is true for the optimal solution $\mathbf{w}_\Phi$ of the (PO) as well.

The CGMT builds upon a classical result due to Gordon [27]. Gordon's original result is classically used to establish non-asymptotic probabilistic lower bounds on the minimum singular value of Gaussian matrices [28], and has a number of other applications in high-dimensional convex geometry [29, 30]. The idea of combining the GMT with convexity is attributed to Stojnic [31]. Thrampoulidis et al. built on and significantly extended this idea, arriving at the CGMT as it appears in [16, Thm. 6.1].

E. Proof of Theorem IV.1

1) Strategy: We will first prove Theorem IV.1(b); Part (a) will then follow by standard arguments from the theory of weak convergence. As mentioned, the proof is based on the use of the CGMT. The first step is to identify the (PO) and the (AO), such that $\hat{\mathbf{w}}$ is optimal for the (PO). Then, our goal is to apply Theorem IV.2 to the following set:
$$\mathcal{S}_\epsilon:=\Big\{\mathbf{v}:\ \Big|n^{-1}\sum_{i=1}^{n}\psi(v_i)-\mathbb{E}_W[\psi(W)]\Big|<\epsilon\Big\},\tag{20}$$
where $\epsilon>0$ is arbitrary. To see that this is desired, note that if for all $\epsilon>0$ it holds $\hat{\mathbf{w}}\in\mathcal{S}_\epsilon$ w.p.a.1, then $n^{-1}\sum_{i=1}^{n}\psi(\hat w_i)\xrightarrow{P}\mathbb{E}_W[\psi(W)]$. Thus, the bulk of the proof amounts to checking that the conditions of Theorem IV.2 are satisfied for $\mathcal{S}_\epsilon$ in (20). For the rest of the proof, we fix $\epsilon>0$ and denote $\mathcal{S}:=\mathcal{S}_\epsilon$, for convenience.

2) Identifying the (PO) and the (AO): Using the CGMT for the analysis of the SER requires, as a first step, expressing the optimization in (1a) in the form of a (PO) as it appears in (19a). It is easy to see that (16) is equivalent to
$$\frac{1}{\sqrt{n}}\min_{-2\le w_i\le 0}\ \max_{\|\mathbf{u}\|\le 1}\ \mathbf{u}^T\mathbf{A}\mathbf{w}-\mathbf{u}^T\mathbf{z}.\tag{21}$$
Observe that the constraint sets above are both convex and compact; also, the objective function is convex in $\mathbf{w}$ and concave in $\mathbf{u}$. These are consistent with the requirements of the CGMT.
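To make the (PO) in (21) concrete, the following minimal sketch solves the equivalent box-constrained problem $\min_{-2\le w_i\le 0}\|\mathbf{A}\mathbf{w}-\mathbf{z}\|$ by projected gradient descent on a small random instance and reports the fraction of entries with $\hat w_i\le -1$. The solver choice, the helper names (`objective`, `solve_box_po`), the step size and the instance sizes are illustrative assumptions and are not taken from the paper; after a fixed number of iterations the iterate is only an approximate minimizer.

```python
import math
import random

def objective(A, z, w):
    """Return ||A w - z||_2, i.e. the cost in (21) after maximizing over ||u|| <= 1."""
    return math.sqrt(sum(
        (sum(a * wj for a, wj in zip(row, w)) - zi) ** 2
        for row, zi in zip(A, z)))

def solve_box_po(A, z, iters=300):
    """Projected gradient descent on 0.5 * ||A w - z||^2 over the box [-2, 0]^n."""
    m = len(A)
    n = len(A[0])
    # 1 / ||A||_F^2 is a conservative step size (Frobenius norm >= spectral norm),
    # so the cost cannot increase from one iterate to the next.
    step = 1.0 / sum(a * a for row in A for a in row)
    w = [0.0] * n
    for _ in range(iters):
        r = [sum(a * wj for a, wj in zip(row, w)) - zi for row, zi in zip(A, z)]
        grad = [sum(A[i][j] * r[i] for i in range(m)) for j in range(n)]
        # Gradient step followed by projection onto the box.
        w = [min(0.0, max(-2.0, wj - step * gj)) for wj, gj in zip(w, grad)]
    return w

random.seed(0)
n, m, sigma = 30, 45, 0.5  # hypothetical sizes, delta = m / n = 1.5
A = [[random.gauss(0.0, 1.0 / math.sqrt(n)) for _ in range(n)] for _ in range(m)]
z = [random.gauss(0.0, sigma) for _ in range(m)]
w_hat = solve_box_po(A, z)
ser_estimate = sum(wj <= -1.0 for wj in w_hat) / n  # fraction of entries counted as errors
print(ser_estimate, objective(A, z, w_hat))
```

At these toy dimensions the printed fraction fluctuates from instance to instance; the asymptotic statements of this section concern the limit $m,n\to\infty$ with $m/n=\delta$.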
The corresponding (AO) problem becomes:
$$\phi(\mathbf{g},\mathbf{h}):=\frac{1}{n}\min_{-2\le w_i\le 0}\ \max_{\|\mathbf{u}\|\le 1}\ \big(\|\mathbf{w}\|\mathbf{g}-\sqrt{n}\,\mathbf{z}\big)^T\mathbf{u}-\|\mathbf{u}\|\,\mathbf{h}^T\mathbf{w}.\tag{22}$$
Note the normalization to account for the variance of the entries of $\mathbf{A}$. Onwards, we refer to the optimization in (22) as the (AO) problem.

3) Simplifying the (AO): We begin by simplifying the (AO) problem as it appears in (22). First, since both $\mathbf{g}$ and $\mathbf{z}$ have iid Gaussian entries, the vector $\|\mathbf{w}\|\mathbf{g}-\sqrt{n}\,\mathbf{z}$ has iid zero-mean Gaussian entries with variance $\|\mathbf{w}\|^2+n\sigma^2$. Hence, for our purposes, and with some abuse of notation so that $\mathbf{g}$ continues to denote a vector with iid standard normal entries, the first term in (22) can be treated as $\sqrt{\|\mathbf{w}\|^2+n\sigma^2}\,\mathbf{g}^T\mathbf{u}$ instead. As a next step, fix the norm of $\mathbf{u}$ to, say, $\|\mathbf{u}\|=\beta$. Optimizing over its direction is now straightforward, and gives
$$\min_{-2\le w_i\le 0}\ \max_{0\le\beta\le 1}\ \frac{\beta}{n}\Big(\sqrt{\|\mathbf{w}\|^2+n\sigma^2}\,\|\mathbf{g}\|-\mathbf{h}^T\mathbf{w}\Big).$$
In fact, it is now easy to further optimize over $\beta$ as well: its optimizing value is 1 if the term in the parentheses is non-negative, and 0 otherwise. With this, the (AO) simplifies to the following:
$$\phi(\mathbf{g},\mathbf{h})=\min_{-2\le w_i\le 0}\ \Big(\sqrt{\frac{\|\mathbf{w}\|^2}{n}+\sigma^2}\,\frac{\|\mathbf{g}\|}{\sqrt{n}}-\frac{1}{n}\mathbf{h}^T\mathbf{w}\Big)_+,\tag{23}$$
where we used the notation $(\cdot)_+:=\max\{\cdot,0\}$.

In order to perform the optimization over $\mathbf{w}$, we will express the "square-root term" $\chi:=\chi(\mathbf{w}):=\sqrt{\|\mathbf{w}\|^2/n+\sigma^2}$ in a variational form. First, observe that all $\mathbf{w}\in[-2,0]^n$ satisfy $\sigma\le\chi\le\sqrt{4+\sigma^2}=:T$. Hence, we can write
$$\chi=\min_{0\le\tau\le T}\Big\{\frac{\tau}{2}+\frac{\chi^2}{2\tau}\Big\}.$$
With this trick, the minimization over the entries of $\mathbf{w}$ becomes separable as follows:
$$\min_{0\le\tau\le T}\ \frac{\tau\|\mathbf{g}\|}{2\sqrt{n}}+\frac{\sigma^2\|\mathbf{g}\|}{2\tau\sqrt{n}}+\frac{1}{n}\sum_{i=1}^{n}\min_{-2\le w_i\le 0}\Big\{\frac{\|\mathbf{g}\|}{2\tau\sqrt{n}}w_i^2-h_iw_i\Big\}.\tag{24}$$
In particular, the optimal $\tilde w_i:=\tilde w_i(\mathbf{g},\mathbf{h})$ of (22) satisfies
$$\tilde w_i=\begin{cases}0, & \text{if } h_i\ge 0,\\[2pt] \dfrac{\tilde\tau\sqrt{n}}{\|\mathbf{g}\|}h_i, & \text{if } -\dfrac{2\|\mathbf{g}\|}{\tilde\tau\sqrt{n}}\le h_i<0,\\[6pt] -2, & \text{if } h_i<-\dfrac{2\|\mathbf{g}\|}{\tilde\tau\sqrt{n}},\end{cases}\tag{25}$$
where $\tilde\tau:=\tilde\tau(\mathbf{g},\mathbf{h})$ is the solution to the following:
$$\phi(\mathbf{g},\mathbf{h})=\min_{0\le\tau\le T}\ \Big(\frac{\tau\|\mathbf{g}\|}{2\sqrt{n}}+\frac{\sigma^2\|\mathbf{g}\|}{2\tau\sqrt{n}}+\frac{1}{n}\sum_{i=1}^{n}\upsilon_n\Big(\frac{\tau\sqrt{n}}{\|\mathbf{g}\|};h_i\Big)\Big)_+,\tag{26}$$
with
$$\upsilon_n(\alpha;h):=\begin{cases}0, & \text{if } h\ge 0,\\[2pt] -\dfrac{\alpha}{2}h^2, & \text{if } -\dfrac{2}{\alpha}\le h<0,\\[6pt] \dfrac{2}{\alpha}+2h, & \text{if } h\le-\dfrac{2}{\alpha},\end{cases}$$
for all $\alpha>0$ and $h\in\mathbb{R}$. We remark that the minimization in (26) is convex. (The easiest way to see this is noting that the objective function in (24) is jointly convex in $\mathbf{w}$ and $\tau$.)

4) Convergence properties of the (AO): Now that the (AO) is simplified as in (26), we study here its behavior in the limit of $m,n\to\infty$ with $m/n=\delta$. First, we compute the point-wise (in $\tau$) limit of the objective function in (26). Clearly,
$$\|\mathbf{g}\|/\sqrt{n}\xrightarrow{P}\sqrt{\delta}.\tag{27}$$
Also, conditioned on the value of $n^{-1/2}\|\mathbf{g}\|$, the random variable $\sum_{i=1}^{n}\upsilon_n(\tau\sqrt{n}/\|\mathbf{g}\|;h_i)$ is a sum of absolutely integrable iid random variables. Hence, combining the WLLN with (27), it follows that, for all $\tau>0$,
$$\frac{1}{n}\sum_{i=1}^{n}\upsilon_n\Big(\frac{\tau\sqrt{n}}{\|\mathbf{g}\|};h_i\Big)\xrightarrow{P}Y\Big(\frac{\tau}{\sqrt{\delta}}\Big),$$
where, with $p(\cdot)$ denoting the standard normal density,
$$Y(\alpha):=-\frac{\alpha}{2}\int_{0}^{2/\alpha}h^2p(h)\,\mathrm{d}h+\frac{2}{\alpha}Q\Big(\frac{2}{\alpha}\Big)-2\int_{2/\alpha}^{\infty}hp(h)\,\mathrm{d}h=-\frac{\alpha}{4}+\frac{\alpha}{2}\int_{2/\alpha}^{\infty}\Big(h-\frac{2}{\alpha}\Big)^2p(h)\,\mathrm{d}h.\tag{28}$$
Next, the point-wise convergence implies uniform convergence, thanks to convexity. This follows from [32, Cor. II.1], which is also known in the literature of estimation theory as the convexity lemma: point-wise convergence of convex functions implies uniform convergence on compact subsets (see also [33, Lem. 7.75]). Hence, the random optimization in (26) converges to the following deterministic optimization (for convenience we rescale the optimization variable as $\tau:=\tau/\sqrt{\delta}$):
$$\bar\phi:=\min_{0\le\tau\le T/\sqrt{\delta}}\ \frac{\tau\delta}{2}+\frac{\sigma^2}{2\tau}+Y(\tau).\tag{29}$$
Expanding the square in the second summand in (28) and applying integration by parts, it can be checked that the objective function in (29) is exactly $\tfrac{1}{2}F(\tau)$, where $F(\tau)$ is defined in (4).
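The deterministic problem (29) is one-dimensional and convex, so it is easy to evaluate numerically. The sketch below is illustrative only: it uses the closed form of the integral in (28) (the function $S(\alpha;\ell)$ of Lemma A.1 with $\ell=2$), a hand-written golden-section search, and the assumption $\sigma^2=1/\mathrm{SNR}$; the function names and the parameter values $\delta=1$, $\mathrm{SNR}=2$ are hypothetical choices, not values from the paper.

```python
import math

def Q(x):
    """Gaussian tail probability Q(x) = P(N(0,1) > x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def S(alpha, ell):
    """Closed form of alpha * int_{ell/alpha}^inf (h - ell/alpha)^2 p(h) dh."""
    c = ell / alpha
    return (alpha + ell * ell / alpha) * Q(c) - ell * math.exp(-0.5 * c * c) / math.sqrt(2.0 * math.pi)

def Y(alpha):
    """Y(alpha) from (28): -alpha/4 + (alpha/2) * int_{2/alpha}^inf (h - 2/alpha)^2 p(h) dh."""
    return -alpha / 4.0 + 0.5 * S(alpha, 2.0)

def ao_limit(tau, delta, sigma2):
    """Objective of the deterministic optimization (29)."""
    return tau * delta / 2.0 + sigma2 / (2.0 * tau) + Y(tau)

def golden_min(f, lo, hi, tol=1e-10):
    """Golden-section search for the minimizer of a unimodal function on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc < fd:
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = f(c)
        else:
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = f(d)
    return 0.5 * (a + b)

delta, snr = 1.0, 2.0            # hypothetical example values
sigma2 = 1.0 / snr               # assumption: sigma^2 = 1 / SNR
T = math.sqrt(4.0 + sigma2)      # upper limit of tau before rescaling
tau_star = golden_min(lambda t: ao_limit(t, delta, sigma2), 1e-6, T / math.sqrt(delta))
print(tau_star, Q(1.0 / tau_star))  # minimizer and the corresponding value of Q(1/tau_*)
```

Since the search only needs function values, it does not rely on the first-order condition; solving that condition instead is an equally valid route.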
When $\delta>1/2$, all summands in the objective function in (29) are non-negative for all $\tau>0$. Thus, $\bar\phi\ge 0$, and consequently (recall (26)),
$$\phi(\mathbf{g},\mathbf{h})\xrightarrow{P}\bar\phi.\tag{30}$$
We remark that the objective function in (29) is strictly convex in the optimization variable $\tau$. (Its convexity follows directly, as it is the point-wise limit of the objective functions in (26), which are known to be convex. Alternatively, and to further check strict convexity, it can be shown that the second derivative is positive.) Hence, there is a unique minimizer; call it $\tau_*$. With these, it only takes a standard argument (e.g., see [34, Thm. 2.1]) to further conclude that the minimizer $\tilde\tau(\mathbf{g},\mathbf{h})$ of (26) converges in probability to $\tau_*\sqrt{\delta}$, i.e.,
$$\delta^{-1/2}\,\tilde\tau(\mathbf{g},\mathbf{h})\xrightarrow{P}\tau_*.\tag{31}$$

5) The optimal solution of the (AO): We now have all the tools necessary to study the properties of the optimal solution $\tilde{\mathbf{w}}$ of the (AO). The lemma below establishes that for Lipschitz functions, $\tilde{\mathbf{w}}\in\mathcal{S}$ (recall the definition of $\mathcal{S}$ in (20)).

Lemma IV.1 (Lipschitz convergence of the (AO)). Let $\psi:\mathbb{R}\to\mathbb{R}$ be $L$-Lipschitz, $\tilde{\mathbf{w}}=\tilde{\mathbf{w}}(\mathbf{g},\mathbf{h})$ as in (25), and random variable $W$ as in the statement of Theorem IV.1. It holds that $\frac{1}{n}\sum_{i=1}^{n}\psi(\tilde w_i)\xrightarrow{P}\mathbb{E}_W[\psi(W)]$.

Proof. For $i=1,\ldots,n$, define $v_i:=\theta(h_i)$ (recall the definition of $\theta$ in the statement of Theorem IV.1). The WLLN gives
$$n^{-1}\sum_{i=1}^{n}\psi(v_i)\xrightarrow{P}\mathbb{E}_{\gamma\sim\mathcal{N}(0,1)}[\psi(\theta(\gamma))]=\mathbb{E}_W[\psi(W)],\tag{32}$$
where we also used the Gaussianity of $h_i$ and (18). Hence, it will suffice for the proof to show that $\big|n^{-1}\sum_{i=1}^{n}(\psi(\tilde w_i)-\psi(v_i))\big|\xrightarrow{P}0$. We show this using the Lipschitz assumption and (31). First, by the Lipschitz property:
$$|\psi(\tilde w_i)-\psi(v_i)|\le L\,|\tilde w_i-v_i|.\tag{33}$$
Next, the expression of $\tilde{\mathbf{w}}$ in (25), along with (27) and (31), can be used to show that the RHS in (33) is appropriately small.
Formally, writing $\xi:=\xi(\mathbf{g},\mathbf{h})=\tilde\tau\sqrt{n}/\|\mathbf{g}\|$ for simplicity, it follows from the continuous mapping theorem that for some $\eta>0$ (the value of which is to be chosen later) we have w.p.a.1: $|\xi-\tau_*|\le\eta$ and $|2/\xi-2/\tau_*|\le\eta$. Hence, w.p.a.1:
$$|\tilde w_i-v_i|\le\max\Big\{|\tau_*-\xi|\,|h_i|\,\mathbf{1}_{\{h_i\ge\max\{-2/\tau_*,\,-2/\xi\}\}},\ |\tau_*h_i+2|\,\mathbf{1}_{\{-2/\tau_*\le h_i\le-2/\xi\}},\ |\xi h_i+2|\,\mathbf{1}_{\{-2/\xi\le h_i\le-2/\tau_*\}}\Big\}\le\eta(\eta+2/\tau_*)+\eta+\eta(\eta+\tau_*).$$
For any $\zeta>0$, choose $\eta=\min\Big\{\frac{\sqrt{\zeta}}{2},\ \frac{\zeta}{4\left(\frac{1}{\tau_*}+\frac{1+\tau_*}{2}\right)}\Big\}$, such that in view of (33) $|\psi(\tilde w_i)-\psi(v_i)|\le L\zeta$, which completes the proof.

6) Satisfying the conditions of the CGMT: The following result uses Lemma IV.1 and strong convexity of the (AO) to show that the optimal cost of the (AO) strictly increases when the optimization is constrained outside the set $\mathcal{S}$ defined in (20). The proof is deferred to Appendix B3.

Lemma IV.2 (Strong convexity of the (AO)). Let $\psi:\mathbb{R}\to\mathbb{R}$ be $L$-Lipschitz, $W$ a random variable as in the statement of Theorem IV.1, and $\mathcal{S}:=\mathcal{S}_\epsilon$ the set defined in (20). Finally, denote by $f(\mathbf{w}):=f(\mathbf{w};\mathbf{g},\mathbf{h})$ the objective function in (23). There exists a constant $C>0$ such that the following statement holds w.p.a.1:
$$\min_{\substack{\mathbf{w}\in[-2,0]^n\\ \mathbf{w}\in\mathcal{S}^c}}f(\mathbf{w};\mathbf{g},\mathbf{h})\ \ge\ \phi(\mathbf{g},\mathbf{h})+C\,\frac{\epsilon^2}{L^2}.$$

The lemma above essentially verifies conditions (i) and (ii) of the CGMT (Theorem IV.2). To be specific, let $C$ be as in the statement of Lemma IV.2, $\bar\phi$ as in (29), and choose $\eta:=\frac{C\epsilon^2}{3L^2}$. From (30) it holds w.p.a.1: $|\phi(\mathbf{g},\mathbf{h})-\bar\phi|\le\eta$. Combine this with Lemma IV.2 to conclude that $\phi_{\mathcal{S}^c}(\mathbf{g},\mathbf{h})\ge\bar\phi+2\eta$ w.p.a.1, as desired.

7) Completing the proof: At the end of the last section we showed that the conditions of the CGMT (Theorem IV.2) are satisfied. Hence, its application yields that any minimizer $\hat{\mathbf{w}}$ of the (PO) in (16) satisfies $\hat{\mathbf{w}}\in\mathcal{S}$ w.p.a.1. This proves part (b) of Theorem IV.1. It remains to prove Part (a).
Recall the note in Footnote 2: it suffices to prove that
$$\frac{1}{n}\sum_{i=1}^{n}\psi(\hat w_i)\xrightarrow{P}\mathbb{E}_W[\psi(W)],\tag{34}$$
for all continuous functions with compact support. Of course, the statement in (34) is true for Lipschitz continuous functions from part (b) of the theorem. But continuous compactly supported functions are also bounded. The implication from Lipschitz bounded functions to continuous bounded functions is standard and is part of what is known in the literature as the Portmanteau Theorem; see for example [35, Thm. 13.16].

V. DISCUSSION AND FUTURE WORK

In this paper we have used the recently developed CGMT framework in [15, 16] to precisely compute the large-system error-rate performance of the popular box-relaxation optimization method for recovering signals from M-ary constellations, when the channel matrix and additive noise are both iid real Gaussians. The derived formulas were previously unknown. Also, the CGMT was previously only used to analyze squared-error performance; here, we illustrate for the first time its use to analyze the error-rate performance of convex optimization-based massive MIMO decoders.

In future work, we seek to extend the analysis to complex Gaussian channels with symbols originating from complex-valued constellations. At its core, this task requires extending the CGMT to complex-valued Gaussian matrices, an extension that is currently unavailable; thus, it poses a challenging, yet practically important, research direction. What appears more accessible is establishing the universality of our results for iid channels beyond Gaussians. We believe that this is possible by combining the ideas of our paper for extended use of the CGMT with the techniques in [36], where the universality property has been proven for the squared-error (rather than for the symbol-error-rate).
For BPSK signal recovery using the BRO, we proved in Corollary IV.1 that error events for any fixed number of bits in the solution of the BRO are asymptotically iid. This fact has potentially significant consequences to be explored. For example, it implies that, when a block of data is in error, only a few of its bits are. This means that the output of the BRO can be used by various local methods to further reduce the SER. We are planning to explore such implications further in future work.

REFERENCES

[1] H. Q. Ngo, E. G. Larsson, and T. L. Marzetta, "Energy and spectral efficiency of very large multiuser MIMO systems," Communications, IEEE Transactions on, vol. 61, no. 4, pp. 1436–1449, 2013.
[2] F. Rusek, D. Persson, B. K. Lau, E. G. Larsson, T. L. Marzetta, O. Edfors, and F. Tufvesson, "Scaling up MIMO: Opportunities and challenges with very large arrays," IEEE Signal Processing Magazine, vol. 30, no. 1, pp. 40–60, 2013.
[3] C.-K. Wen, J.-C. Chen, K.-K. Wong, and P. Ting, "Message passing algorithm for distributed downlink regularized zero-forcing beamforming with cooperative base stations," Wireless Communications, IEEE Transactions on, vol. 13, no. 5, pp. 2920–2930, 2014.
[4] T. L. Narasimhan and A. Chockalingam, "Channel hardening-exploiting message passing (CHEMP) receiver in large-scale MIMO systems," Selected Topics in Signal Processing, IEEE Journal of, vol. 8, no. 5, pp. 847–860, 2014.
[5] M. Grötschel, L. Lovász, and A. Schrijver, Geometric algorithms and combinatorial optimization. Springer Science & Business Media, 2012, vol. 2.
[6] G. J. Foschini, "Layered space-time architecture for wireless communication in a fading environment when using multi-element antennas," Bell Labs Technical Journal, vol. 1, no. 2, pp. 41–59, 1996.
[7] B. Hassibi and H. Vikalo, "On the sphere-decoding algorithm I. Expected complexity," Signal Processing, IEEE Transactions on, vol. 53, no. 8, pp. 2806–2818, 2005.
[8] D. Guo and S. Verdú, "Multiuser detection and statistical mechanics," Kluwer International Series in Engineering and Computer Science, pp. 229–278, 2003.
[9] P. H. Tan, L. K. Rasmussen, and T. J. Lim, "Constrained maximum-likelihood detection in CDMA," Communications, IEEE Transactions on, vol. 49, no. 1, pp. 142–153, 2001.
[10] A. Yener, R. D. Yates, and S. Ulukus, "CDMA multiuser detection: A nonlinear programming approach," Communications, IEEE Transactions on, vol. 50, no. 6, pp. 1016–1024, 2002.
[11] W.-K. Ma, T. N. Davidson, K. M. Wong, Z.-Q. Luo, and P.-C. Ching, "Quasi-maximum-likelihood multiuser detection using semi-definite relaxation with application to synchronous CDMA," Signal Processing, IEEE Transactions on, vol. 50, no. 4, pp. 912–922, 2002.
[12] S. Verdú, Multiuser detection. Cambridge University Press, 1998.
[13] T. Tanaka, "A statistical-mechanics approach to large-system analysis of CDMA multiuser detectors," IEEE Transactions on Information Theory, vol. 48, no. 11, pp. 2888–2910, Nov. 2002.
[14] D. Guo and S. Verdú, "Randomly spread CDMA: Asymptotics via statistical physics," IEEE Transactions on Information Theory, vol. 51, no. 6, pp. 1983–2010, 2005.
[15] C. Thrampoulidis, S. Oymak, and B. Hassibi, "Regularized linear regression: A precise analysis of the estimation error," in Proceedings of The 28th Conference on Learning Theory, 2015.
[16] C. Thrampoulidis, E. Abbasi, and B. Hassibi, "Precise error analysis of regularized M-estimators in high-dimensions," arXiv preprint, 2016.
[17] C. Thrampoulidis, E. Abbasi, W. Xu, and B. Hassibi, "BER analysis of the box relaxation for BPSK signal recovery," in Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Conference on. IEEE, 2016, pp. 3776–3780.
[18] I. B. Atitallah, C. Thrampoulidis, A. Kammoun, T. Al-Naffouri, B. Hassibi, and M.-S. Alouini, "BER analysis of regularized least squares for BPSK recovery," in 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2017.
[19] I. B. Atitallah, C. Thrampoulidis, A. Kammoun, T. Y. Al-Naffouri, M.-S. Alouini, and B. Hassibi, "The box-lasso with application to GSSK modulation in massive MIMO systems," in Information Theory (ISIT), 2017 IEEE International Symposium on. IEEE, 2017, pp. 1082–1086.
[20] C. Jeon, R. Ghods, A. Maleki, and C. Studer, "Optimality of large MIMO detection via approximate message passing," in Information Theory (ISIT), 2015 IEEE International Symposium on. IEEE, 2015, pp. 1227–1231.
[21] R. Ghods, C. Jeon, A. Maleki, and C. Studer, "Optimal large-MIMO data detection with transmit impairments," in Communication, Control, and Computing (Allerton), 2015 53rd Annual Allerton Conference on. IEEE, 2015, pp. 1211–1218.
[22] C. Jeon, A. Maleki, and C. Studer, "On the performance of mismatched data detection in large MIMO systems," in Information Theory (ISIT), 2016 IEEE International Symposium on. IEEE, 2016, pp. 180–184.
[23] D. L. Donoho, A. Maleki, and A. Montanari, "Message-passing algorithms for compressed sensing," Proceedings of the National Academy of Sciences, vol. 106, no. 45, pp. 18914–18919, 2009.
[24] V. Chandrasekaran, B. Recht, P. A. Parrilo, and A. S. Willsky, "The convex geometry of linear inverse problems," Foundations of Computational Mathematics, vol. 12, no. 6, pp. 805–849, 2012.
[25] D. Amelunxen, M. Lotz, M. B. McCoy, and J. A. Tropp, "Living on the edge: Phase transitions in convex programs with random data," Information and Inference, vol. 3, no. 3, pp. 224–294, Sep. 2014.
[26] T. Tao, Topics in random matrix theory. American Mathematical Society, 2012, vol. 132, Graduate Studies in Mathematics.
[27] Y. Gordon, "Some inequalities for Gaussian processes and applications," Israel Journal of Mathematics, vol. 50, no. 4, pp. 265–289, 1985.
[28] R. Vershynin, "Introduction to the non-asymptotic analysis of random matrices," arXiv preprint, 2010.
[29] Y. Gordon, "On Milman's inequality and random subspaces which escape through a mesh in $\mathbb{R}^n$," 1988.
[30] M. Ledoux and M. Talagrand, Probability in Banach Spaces: isoperimetry and processes. Springer, 1991, vol. 23.
[31] M. Stojnic, "A framework to characterize performance of lasso algorithms," arXiv preprint, 2013.
[32] P. K. Andersen and R. D. Gill, "Cox's regression model for counting processes: a large sample study," The Annals of Statistics, pp. 1100–1120, 1982.
[33] K.-J. Miescke and F. Liese, Statistical Decision Theory: Estimation, Testing, and Selection. Springer New York, 2008.
[34] W. K. Newey and D. McFadden, "Large sample estimation and hypothesis testing," Handbook of Econometrics, vol. 4, pp. 2111–2245, 1994.
[35] A. Klenke, Probability theory: a comprehensive course. Springer Science & Business Media, 2013.
[36] S. Oymak and J. A. Tropp, "Universality laws for randomized dimension reduction, with applications," arXiv preprint arXiv:1511.09433, 2015.

APPENDIX

A. Supplementary proofs for Section II

1) Corollary II.1: The corollary follows from Theorem II.1 when combined with the following statement, which we prove here: "If $\mathrm{SER}(\mathbf{A},\mathbf{z})\xrightarrow{P}c$, for some deterministic constant $c$, then $P_e\to c$." For convenience, let us define the random variable $X:=X(\mathbf{A},\mathbf{z}):=\mathrm{SER}(\mathbf{A},\mathbf{z})$. With this notation, $P_e=\mathbb{E}_{\mathbf{A},\mathbf{z}}[X]$. Thus, for any $\epsilon>0$,
$$P_e\le\mathbb{E}\big[X\,\big|\,|X-c|\le\epsilon\big]+\mathbb{E}\big[X\,\big|\,|X-c|>\epsilon\big]\cdot\mathbb{P}(|X-c|>\epsilon)\le(c+\epsilon)+\mathbb{P}(|X-c|>\epsilon),$$
where in the second inequality we used the fact that $X\le 1$. Notice that $(c+\epsilon)+\mathbb{P}(|X-c|>\epsilon)\to(c+\epsilon)$ as $n\to\infty$, since $X\xrightarrow{P}c$, by assumption.
In a similar vein,
$$P_e\ge\mathbb{E}\big[X\,\big|\,|X-c|\le\epsilon\big]\cdot\mathbb{P}(|X-c|\le\epsilon)\ge(c-\epsilon)\cdot\mathbb{P}(|X-c|\le\epsilon),$$
where, again, $(c-\epsilon)\,\mathbb{P}(|X-c|\le\epsilon)\to(c-\epsilon)$ as $n\to\infty$, since $X\xrightarrow{P}c$. Since the above hold for all $\epsilon$, we have shown that $P_e\to c$, as desired.

2) Proof of Theorem II.2: Here, we prove the first part of the theorem, namely the lower and upper bounds on $Q(1/\tau_*)$. The tightness of the upper bound at high SNR is shown later in Section A3. Due to the decreasing nature of the function $Q$, it suffices to prove that
$$\sqrt{(\delta-1/2)\cdot\mathrm{SNR}}<\tau_*^{-1}<\sqrt{\delta\cdot\mathrm{SNR}}.\tag{35}$$
This is shown in Lemma A.2(b) below. The proof of the lemma builds on understanding the behavior of the function $F(\tau)$ in (4). The function $F$ is composed of 4 additive terms. The first is linear in $\tau$ and the second is simply $1/\tau$. We view the remaining terms as a single function of $\tau$, namely $S_2(\tau):=\big(\tau+\frac{4}{\tau}\big)Q\big(\frac{2}{\tau}\big)-\sqrt{\frac{2}{\pi}}\,e^{-\frac{2}{\tau^2}}$, and we gather its properties in Lemma A.1 below.

Lemma A.1. Fix a positive integer $\ell>0$ and consider the function $S_\ell:(0,\infty)\to\mathbb{R}$ defined as follows:
$$S_\ell(\alpha):=S(\alpha;\ell):=\Big(\alpha+\frac{\ell^2}{\alpha}\Big)Q\Big(\frac{\ell}{\alpha}\Big)-\frac{1}{\sqrt{2\pi}}\,\ell\,e^{-\frac{\ell^2}{2\alpha^2}}.\tag{36}$$
The following statements are true.
(a) The first two derivatives $S_\ell'(\alpha)$ and $S_\ell''(\alpha)$ are given as follows:
$$S_\ell'(\alpha)=\frac{\ell}{\alpha\sqrt{2\pi}}\,e^{-\frac{\ell^2}{2\alpha^2}}+\Big(1-\frac{\ell^2}{\alpha^2}\Big)Q\Big(\frac{\ell}{\alpha}\Big),\tag{37}$$
$$S_\ell''(\alpha)=\frac{2\ell^2}{\alpha^3}\,Q\Big(\frac{\ell}{\alpha}\Big).$$
(b) The function $S_\ell(\alpha)$ is strictly convex.
(c) The derivative $S_\ell'(\alpha)$ is strictly increasing. Moreover,
$$\lim_{\alpha\to 0^+}S_\ell'(\alpha)=0<S_\ell'(\alpha)<\frac{1}{2}=\lim_{\alpha\to+\infty}S_\ell'(\alpha).$$

Proof. Statement (a) follows easily by direct calculations. It can be readily observed that $S_\ell''(\alpha)$ is strictly greater than 0 for all $\alpha>0$. This proves statement (b). For the last statement, we argue as follows: $S_\ell'(\alpha)$ is strictly increasing by strict convexity of $S_\ell(\alpha)$. Thus, it suffices to compute the limits of $S_\ell'(\alpha)$ at $0$ and $+\infty$. Easily, $\lim_{\alpha\to+\infty}S_\ell'(\alpha)=\lim_{\alpha\to+\infty}Q(\ell/\alpha)=1/2$.
For the limit $\alpha\to 0^+$, use the following facts: (i) in the limit of $x\to+\infty$: $Q(x)\sim p(x)/x$, and (ii) $\lim_{x\to+\infty}xe^{-x^2/2}=0$, to conclude the desired result.

Observe that $F(\tau)$ in (4) can be written as
$$F(\tau)=\tau\Big(\delta-\frac{1}{2}\Big)+\frac{1/\mathrm{SNR}}{\tau}+S(\tau;2).\tag{38}$$
We are now ready to state and prove Lemma A.2.

Lemma A.2 (Properties of $\tau_*$). Let $\tau_*$ be defined as in Theorem II.1, i.e., the (unique) positive minimizer of the function $F(\tau)$ in (4). The following hold.
(a) $\tau_*$ is the unique positive solution of the equation
$$\delta-\frac{1}{2}-\frac{1/\mathrm{SNR}}{\tau^2}+G(\tau^{-1})=0,\tag{39}$$
where
$$G(u):=\sqrt{2/\pi}\,u\,e^{-2u^2}+(1-4u^2)\cdot Q(2u).\tag{40}$$
(b) $\tau_*$ satisfies (35).

Proof. Recall from Theorem II.1 that the function $F(\tau)$ in (4) is strictly convex. Hence, $\tau_*$ is the unique positive solution to the first-order optimality condition $F'(\tau):=\frac{\mathrm{d}}{\mathrm{d}\tau}F(\tau)=0$. It is convenient for the rest of the proof to define a function $H:(0,\infty)\to\mathbb{R}$ as follows: $H(u):=F'(u^{-1})$. Also, note from (37) that $G$ in (40) satisfies
$$G(u)=S_2'(u^{-1}).\tag{41}$$
In particular, properties of $G$ to be used later in the proof follow from Lemma A.1. Starting with (38) and using Lemma A.1(a) and (41):
$$H(u)=\delta-\frac{1}{2}-\frac{u^2}{\mathrm{SNR}}+\underbrace{\sqrt{\frac{2}{\pi}}\,u\,e^{-2u^2}+(1-4u^2)Q(2u)}_{:=G(u)}.$$
This proves the first statement. Moreover, since $F(\tau)$ is strictly convex, we have that $F'(\tau)$ is strictly increasing, and equivalently that $H(u)$ is a decreasing function of $u$.

Next, we prove that
$$\tau_*^{-1}>\sqrt{(\delta-1/2)\,\mathrm{SNR}}=:\tau_0^{-1}.\tag{42}$$
From Lemma A.1(c) and (41), $G(u)>0$ for all $u>0$. Hence, $H(\tau_0^{-1})=G(\tau_0^{-1})>0$. But $H(u)$ is decreasing and $\tau_*^{-1}$ is its unique zero, from which (42) follows.

Finally, we show that
$$\tau_*^{-1}<\sqrt{\delta\cdot\mathrm{SNR}}=:\tau_1^{-1}.\tag{43}$$
Note that $H(\tau_1^{-1})=-\frac{1}{2}+G(\tau_1^{-1})$. Again, from Lemma A.1(c) and (41), it follows that $G(u)<1/2$.
Therefore, $H(\tau_1^{-1})<0$. Combine this with the fact that $H(u)$ is decreasing and $\tau_*^{-1}$ is its unique zero, to conclude with (43), as desired.

3) High-SNR regime: Theorem A.1 below formalizes and proves (6).

Theorem A.1 (High-SNR regime). As in the statement of Theorem II.1, fix $\delta\in(\frac{1}{2},\infty)$ and let SER denote the bit error probability of the detection scheme in (1) for some fixed but unknown BPSK signal $\mathbf{x}_0\in\{\pm 1\}^n$. For any $\epsilon>0$, there exists a constant $\mathrm{SNR}(\epsilon)$ such that for all values $\mathrm{SNR}>\mathrm{SNR}(\epsilon)$, it holds
$$\lim_{\substack{m,n\to\infty\\ m/n\to\delta}}\mathbb{P}\Big(\Big|\frac{\mathrm{SER}}{Q\big(\sqrt{(\delta-1/2)\,\mathrm{SNR}}\big)}-1\Big|>\epsilon\Big)=0.$$

Proof. Fix any $\epsilon>0$. Recall $\tau_*:=\tau_*(\mathrm{SNR})$, the minimizer of (4), and define for convenience:
$$\tau_0:=\tau_0(\mathrm{SNR})=\Big(\sqrt{(\delta-1/2)\,\mathrm{SNR}}\Big)^{-1}.\tag{44}$$
We will prove that there exists $\mathrm{SNR}(\epsilon)$ such that
$$\Big|\frac{Q(\tau_*^{-1})}{Q(\tau_0^{-1})}-1\Big|\le\frac{\epsilon}{2},\tag{45}$$
for all $\mathrm{SNR}\ge\mathrm{SNR}(\epsilon)$. This would suffice to complete the proof of the theorem. To see this, write
$$\Big|\frac{\mathrm{SER}}{Q(\tau_0^{-1})}-1\Big|=\Big|\frac{\mathrm{SER}-Q(\tau_*^{-1})}{Q(\tau_0^{-1})}+\frac{Q(\tau_*^{-1})}{Q(\tau_0^{-1})}-1\Big|\le\frac{|\mathrm{SER}-Q(\tau_*^{-1})|}{Q(\tau_0^{-1})}+\Big|\frac{Q(\tau_*^{-1})}{Q(\tau_0^{-1})}-1\Big|,$$
and observe the following. (a) The last term above is further upper bounded by $\epsilon/2$ using (45) for large enough $\mathrm{SNR}>\mathrm{SNR}(\epsilon)$. (b) From Theorem II.1, for all values of SNR, there exist large enough $m,n$ such that the numerator of the first term is upper bounded by $(\epsilon/2)\,Q(\tau_0^{-1})$ with probability approaching 1.

In what follows, we show (45), which is a deterministic statement about the minimizer $\tau_*:=\tau_*(\mathrm{SNR})$ of (4). We use Lemma A.2. From (35), we have that
$$\lim_{\mathrm{SNR}\to+\infty}\tau_*^{-1}=+\infty.\tag{46}$$
Also, recall from (44) that $(\delta-1/2)=\tau_0^{-2}/\mathrm{SNR}$. Substituting this in (39) we find that
$$0\le\tau_*^{-2}-\tau_0^{-2}=\mathrm{SNR}\cdot G(\tau_*^{-1}),\tag{47}$$
for $G$ as in (40) (also, recall (41)). The non-negativity above follows from the lower bound in (35). From Lemma A.1(c) and (41), $G$ is decreasing in $(0,\infty)$.
Using this, and applying the lower bound in (35) once more, (47) leads to the following:
$$0\le\tau_*^{-2}-\tau_0^{-2}\le\mathrm{SNR}\cdot G(\tau_0^{-1})=\mathrm{SNR}\cdot G\big(\sqrt{(\delta-1/2)\,\mathrm{SNR}}\big).\tag{48}$$
But, from Lemma A.1(c), the limit of the right-hand side as $\mathrm{SNR}\to+\infty$ is equal to 0. Combining,
$$\lim_{\mathrm{SNR}\to+\infty}\big(\tau_*^{-2}-\tau_0^{-2}\big)=0.\tag{49}$$
Next, write $\tau_*^{-2}-\tau_0^{-2}=\tau_*^{-2}\big(1-\frac{\tau_*^2}{\tau_0^2}\big)$ and combine (46) with (49) to further show that
$$\lim_{\mathrm{SNR}\to+\infty}\frac{\tau_*}{\tau_0}=1.\tag{50}$$
We are now ready to prove (45). For simplicity, we write $f(x)\sim g(x)$ instead of $\lim_{x\to+\infty}\frac{f(x)}{g(x)}=1$. It is well known that $Q(x)\sim p(x)/x$. Therefore,
$$\frac{Q(\tau_*^{-1})}{Q(\tau_0^{-1})}\sim\frac{p(\tau_*^{-1})}{p(\tau_0^{-1})}\cdot\frac{\tau_*}{\tau_0}=\frac{\tau_*}{\tau_0}\exp\Big(-\frac{\tau_*^{-2}-\tau_0^{-2}}{2}\Big)\sim 1,$$
where the last step follows from (49) and (50).

B. Supplementary proofs for Section IV

1) From Lipschitz to the indicator function:

Lemma A.3 (Approximating the indicator). Let $\mu$ be a continuous measure on the real line such that $c\in\mathbb{R}$ is a point of measure zero. Further let $\{\mu_n\}$ be a sequence of random measures indexed by $n$ such that as $n\to+\infty$,
$$\int\psi\,\mathrm{d}\mu_n\xrightarrow{P}\int\psi\,\mathrm{d}\mu,$$
for all Lipschitz functions $\psi:\mathbb{R}\to\mathbb{R}$. For the indicator function $\chi_c(\alpha):=\mathbf{1}_{\{\alpha\le c\}}$ it holds that
$$\int\chi_c\,\mathrm{d}\mu_n\xrightarrow{P}\int\chi_c\,\mathrm{d}\mu.$$

Proof. Fix any $\epsilon,\zeta>0$ and consider the random variable $X=\big|\int\chi_c\,\mathrm{d}\mu_n-\int\chi_c\,\mathrm{d}\mu\big|$. Note that $X$ is random since the measures $\mu_n$ are random. It will suffice to show that there exists $N_*$ such that for all $n>N_*$: $\mathbb{P}(X>\epsilon)\le\zeta$. Let $\eta>0$, the exact value of which is to be determined later, and consider the following functions parametrized by $\eta$:
$$\overline{\psi}_\eta(\alpha):=\begin{cases}1, & \alpha\le c,\\ 1-\frac{1}{\eta}(\alpha-c), & c\le\alpha\le c+\eta,\\ 0, & \alpha\ge c+\eta,\end{cases}\qquad\text{and}\qquad\underline{\psi}_\eta(\alpha):=\begin{cases}1, & \alpha\le c-\eta,\\ -\frac{1}{\eta}(\alpha-c), & c-\eta\le\alpha\le c,\\ 0, & \alpha\ge c.\end{cases}$$
These functions are both Lipschitz with Lipschitz constant $1/\eta$. Define the random variable $Y_\eta$ as
$$Y_\eta:=\max\Big\{\Big|\int\overline{\psi}_\eta\,\mathrm{d}\mu_n-\int\overline{\psi}_\eta\,\mathrm{d}\mu\Big|,\ \Big|\int\underline{\psi}_\eta\,\mathrm{d}\mu_n-\int\underline{\psi}_\eta\,\mathrm{d}\mu\Big|\Big\}.$$
From the assumption of the lemma there is $N(\epsilon,\zeta,\eta)$ such that for all $n\ge N(\epsilon,\zeta,\eta)$:
$$\mathbb{P}(Y_\eta>\epsilon/2)\le\zeta.\tag{51}$$
Moreover, $\underline{\psi}_\eta(\alpha)\le\chi_c(\alpha)\le\overline{\psi}_\eta(\alpha)$. Thus,
$$X\le Y_\eta+\int|\overline{\psi}_\eta-\underline{\psi}_\eta|\,\mathrm{d}\mu\le Y_\eta+\mu\{[c-\eta,c+\eta]\},\tag{52}$$
where for the second inequality we further used the fact that $|\overline{\psi}_\eta-\underline{\psi}_\eta|$ is upper bounded by 1 and has support $[c-\eta,c+\eta]$. Finally, from continuity of $\mu$ and the fact that $c$ has $\mu$-measure zero, we can choose $\eta=\eta_*(\epsilon)$ such that
$$\mu\{[c-\eta,c+\eta]\}\le\epsilon/2.\tag{53}$$
Combining (51)–(53), we conclude, as desired, that there is $N_*:=N(\epsilon,\zeta,\eta_*(\epsilon))$ such that for all $n>N_*$ it holds $\mathbb{P}(X>\epsilon)\le\mathbb{P}(Y_\eta>\epsilon/2)\le\zeta$.

2) Proof of Corollary IV.1: On the one hand, by Theorem IV.1(b), it holds for all $\ell=1,\ldots,k$ that
$$\overline{\psi}_\ell(\hat{\mathbf{w}}):=n^{-1}\sum_{i=1}^{n}\psi_\ell(\hat w_i)\xrightarrow{P}\mathbb{E}_{W_\ell}[\psi_\ell(W_\ell)].$$
On the other hand, for some constant $C>0$,
$$\Big|\prod_{\ell=1}^{k}\overline{\psi}_\ell(\hat{\mathbf{w}})-n^{-k}\sum_{1\le i_1,\ldots,i_k\le n}\psi_1(\hat w_{i_1})\cdots\psi_k(\hat w_{i_k})\Big|\le\frac{C}{n}.$$
To see this, expand the product term on the left-hand side and use the boundedness of the functions $\psi_\ell$. Combining the above proves the first statement of the corollary. The second statement follows with the exact same argument starting from Theorem II.1 and observing that $\mathbf{1}_{\{\hat w_{i_1}\le-1,\ldots,\hat w_{i_k}\le-1\}}=\prod_{\ell=1}^{k}\mathbf{1}_{\{\hat w_{i_\ell}\le-1\}}$.

3) Proof of Lemma IV.2: Denote $\overline{\psi}:=\mathbb{E}_W[\psi(W)]$. From Lemma IV.1, it holds w.p.a.1: $\big|\frac{1}{n}\sum_{i=1}^{n}\psi(\tilde w_i)-\overline{\psi}\big|\le\epsilon/2$. Hence, by definition of the set $\mathcal{S}$ and the triangle inequality, it holds w.p.a.1 that for all $\mathbf{w}\in\mathcal{S}^c$: $\big|\frac{1}{n}\sum_{i=1}^{n}\psi(w_i)-\frac{1}{n}\sum_{i=1}^{n}\psi(\tilde w_i)\big|\ge\epsilon/2$. Then, the Lipschitz property of $\psi$ guarantees that
$$\frac{\|\mathbf{w}-\tilde{\mathbf{w}}\|}{\sqrt{n}}\ge\frac{\epsilon}{2L}.\tag{54}$$
In what follows we show that $n\cdot f(\mathbf{w})$ is $C$-strongly convex for an appropriate constant $C>0$. In view of (54) and recalling $\phi(\mathbf{g},\mathbf{h})=f(\tilde{\mathbf{w}})$, this will suffice to complete the proof.
It can be checked that the Hessian $\nabla^2f(\mathbf{w})$ satisfies
$$n\nabla^2f(\mathbf{w})\succeq\frac{\|\mathbf{g}\|}{\sqrt{n}}\,\frac{\sigma^2}{\sqrt{\frac{\|\mathbf{w}\|^2}{n}+\sigma^2}}\,\mathbf{I}.$$
Further use the fact that $\|\mathbf{g}\|/\sqrt{n}\ge\sqrt{\delta}/2$ w.p.a.1 and $\|\mathbf{w}\|^2\le 4n$, to conclude that w.p.a.1 $f$ is $\frac{C}{n}$-strongly convex with $C:=\frac{\sigma^2\sqrt{\delta}}{2\sqrt{\sigma^2+4}}$, or
$$f(\mathbf{w})\ge f(\tilde{\mathbf{w}})+\frac{C}{2}\Big(\frac{\|\mathbf{w}-\tilde{\mathbf{w}}\|}{\sqrt{n}}\Big)^2.$$

C. Proof of Theorem III.1

The proof of the theorem requires repeating, mutatis mutandis, the line of arguments detailed in Section IV for the proof of Theorem II.1. We omit most of the details for brevity, and only show the necessary calculations that lead to the function $F_M$ in (13). The idea is the same as in Section IV: thanks to the CGMT, it suffices to analyze a corresponding Auxiliary Optimization (AO) instead of the original optimization in (11a). Repeating the steps in Section IV-E3, the corresponding (AO) becomes (compare to Eqn. (24)):
$$\min_{\tau\ge 0}\ \frac{\tau\|\mathbf{g}\|}{2\sqrt{n}}+\frac{\sigma^2\|\mathbf{g}\|}{2\tau\sqrt{n}}+\frac{1}{n}\sum_{i=1}^{n}\min_{x^-_{0,i}\le w_i\le x^+_{0,i}}\Big\{\frac{\|\mathbf{g}\|}{2\tau\sqrt{n}}w_i^2-h_iw_i\Big\},$$
where, as always, $\mathbf{w}=\mathbf{x}-\mathbf{x}_0$ denotes the "error vector" and we further defined $x^-_{0,i}:=-(M-1)-x_{0,i}$ and $x^+_{0,i}:=(M-1)-x_{0,i}$. For simplicity in notation, further denote $A=\frac{\|\mathbf{g}\|}{\tilde\tau\sqrt{n}}$. Then, the optimal $\tilde w_i:=\tilde w_i(\mathbf{g},\mathbf{h},\mathbf{x}_0)$ satisfies
$$\tilde w_i=\begin{cases}x^-_{0,i}, & \text{if } h_i<A\,x^-_{0,i},\\[2pt] \frac{1}{A}h_i, & \text{if } A\,x^-_{0,i}\le h_i\le A\,x^+_{0,i},\\[2pt] x^+_{0,i}, & \text{if } h_i>A\,x^+_{0,i},\end{cases}\tag{55}$$
where $\tilde\tau:=\tilde\tau(\mathbf{g},\mathbf{h},\mathbf{x}_0)$ is the solution to the following:
$$\min_{\tau>0}\ \Big(\frac{\tau\|\mathbf{g}\|}{2\sqrt{n}}+\frac{\sigma^2\|\mathbf{g}\|}{2\tau\sqrt{n}}+\frac{1}{n}\sum_{i=1}^{n}\upsilon_n\Big(\frac{\tau\sqrt{n}}{\|\mathbf{g}\|};h_i,x^-_{0,i},x^+_{0,i}\Big)\Big)_+,\tag{56}$$
with
$$\upsilon_n(\alpha;h,\ell,u):=\begin{cases}\frac{1}{2\alpha}\ell^2-h\ell, & \text{if } \alpha h<\ell,\\[2pt] -\frac{\alpha}{2}h^2, & \text{if } \ell\le\alpha h\le u,\\[2pt] \frac{1}{2\alpha}u^2-hu, & \text{if } \alpha h>u.\end{cases}$$
This is of course very similar to Equation (26). Next, we follow the same steps as in Section IV-E4 and study the convergence of the (AO) in (56). For the first two summands in (56), we use the fact that $\frac{\|\mathbf{g}\|}{\sqrt{n}}\xrightarrow{P}\sqrt{\delta}$.
For the third summand, recall that each $x_{0,i}$ takes values $\pm 1,\pm 3,\ldots,\pm(M-1)$ with equal probability $1/M$. Let $j=1,3,\ldots,M-1$ and denote
$$\ell_j:=(M-1)-j\quad\text{and}\quad u_j:=(M-1)+j.$$
Then, the pairs $(x^-_{0,i},x^+_{0,i})$ take values $(-u_j,\ell_j)$ and $(-\ell_j,u_j)$ with equal probability $1/M$ each. With these, $\frac{1}{n}\sum_{i=1}^{n}\upsilon_n\big(\frac{\tau\sqrt{n}}{\|\mathbf{g}\|};h_i,x^-_{0,i},x^+_{0,i}\big)\xrightarrow{P}Y\big(\frac{\tau}{\sqrt{\delta}}\big)$, where
$$Y(\alpha):=\frac{1}{M}\sum_{j=1,3,\ldots,M-1}\mathbb{E}_{h\sim\mathcal{N}(0,1)}[\upsilon_n(\alpha;h,-u_j,\ell_j)]+\frac{1}{M}\sum_{j=1,3,\ldots,M-1}\mathbb{E}_{h\sim\mathcal{N}(0,1)}[\upsilon_n(\alpha;h,-\ell_j,u_j)].\tag{57}$$
Simple calculations show that, for $\ell,u\ge 0$,
$$\mathbb{E}_{h\sim\mathcal{N}(0,1)}[\upsilon_n(\alpha;h,-\ell,u)]=-\frac{\alpha}{2}+\frac{\alpha}{2}\int_{\ell/\alpha}^{\infty}\Big(h-\frac{\ell}{\alpha}\Big)^2p(h)\,\mathrm{d}h+\frac{\alpha}{2}\int_{u/\alpha}^{\infty}\Big(h-\frac{u}{\alpha}\Big)^2p(h)\,\mathrm{d}h.$$
For convenience, define (see also Lemma A.1)
$$S(\alpha;\ell):=\alpha\int_{\ell/\alpha}^{\infty}\Big(h-\frac{\ell}{\alpha}\Big)^2p(h)\,\mathrm{d}h=\Big(\alpha+\frac{\ell^2}{\alpha}\Big)Q\Big(\frac{\ell}{\alpha}\Big)-\frac{1}{\sqrt{2\pi}}\,\ell\,e^{-\frac{\ell^2}{2\alpha^2}}.\tag{58}$$
Putting all these together with (57) and grouping terms, we find that
$$Y(\alpha)=\frac{1}{M}\sum_{j=1,3,\ldots,M-3}\big\{-\alpha+S(\alpha;\ell_j)+S(\alpha;u_j)\big\}+\frac{1}{M}\Big\{-\frac{\alpha}{2}+S(\alpha;u_{M-1})\Big\}=-\frac{\alpha}{2}\,\frac{M-1}{M}+\frac{1}{M}\sum_{j=1,3,\ldots,M-3}\big\{S(\alpha;\ell_j)+S(\alpha;u_j)\big\}+\frac{1}{M}S(\alpha;u_{M-1}).$$
Observe that $\frac{\alpha\delta}{2}+Y(\alpha)$ is nonnegative for $\alpha>0$ as long as $\delta>\frac{M-1}{M}$. Therefore, we can repeat the technical arguments of Section IV-E4 to conclude that the random optimization in (56) converges to the following deterministic optimization (where, for convenience, we have rescaled the optimization variable as $\tau:=\tau/\sqrt{\delta}$):
$$\min_{\tau>0}\ \frac{\tau\delta}{2}+\frac{\sigma^2}{2\tau}+Y(\tau).\tag{59}$$
The objective function in (59) can be identified with the function $F_M(\tau)$ in the statement of the theorem. From Lemma A.1(b) the second derivative of $F_M(\tau)$ is strictly positive for $\tau>0$; hence (59) has a unique minimizer, which we denote $\tau_*$. With the same arguments as at the end of Section IV-E4, we can show that $\delta^{-1/2}\,\tilde\tau(\mathbf{g},\mathbf{h},\mathbf{x}_0)\xrightarrow{P}\tau_*$.
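As a numerical companion to (57)–(59), the sketch below evaluates $Y(\alpha)$ through the closed form (58) and minimizes the objective of (59) with a golden-section search. It is a hedged illustration: the function names (`Y_M`, `objective_59`, `golden_min`) and the example values $M=4$, $\delta=1$, $\sigma^2=1$ are assumptions made here, not quantities taken from the paper.

```python
import math

def Q(x):
    """Gaussian tail probability Q(x) = P(N(0,1) > x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def S(alpha, ell):
    """Closed form (58) of alpha * int_{ell/alpha}^inf (h - ell/alpha)^2 p(h) dh."""
    c = ell / alpha
    return (alpha + ell * ell / alpha) * Q(c) - ell * math.exp(-0.5 * c * c) / math.sqrt(2.0 * math.pi)

def Y_M(alpha, M):
    """Y(alpha) for M-ary symbols, using l_j = (M-1)-j and u_j = (M-1)+j."""
    total = -0.5 * alpha * (M - 1) / M
    for j in range(1, M - 2, 2):              # j = 1, 3, ..., M-3
        total += (S(alpha, (M - 1) - j) + S(alpha, (M - 1) + j)) / M
    total += S(alpha, 2 * (M - 1)) / M        # term with u_{M-1} = 2(M-1)
    return total

def objective_59(tau, M, delta, sigma2):
    """Objective of the deterministic optimization (59)."""
    return tau * delta / 2.0 + sigma2 / (2.0 * tau) + Y_M(tau, M)

def golden_min(f, lo, hi, tol=1e-10):
    """Golden-section search for the minimizer of a unimodal function on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc < fd:
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = f(c)
        else:
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = f(d)
    return 0.5 * (a + b)

M, delta, sigma2 = 4, 1.0, 1.0                # hypothetical values with delta > (M-1)/M
tau_star_M = golden_min(lambda t: objective_59(t, M, delta, sigma2), 1e-6, 100.0)
ser_limit = 2.0 * (1.0 - 1.0 / M) * Q(1.0 / tau_star_M)
print(tau_star_M, ser_limit)
```

For $M=2$ the function `Y_M` reduces to $-\alpha/4+\tfrac{1}{2}S(\alpha;2)$, which is the BPSK expression (28).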
Finally, we sketch how all these lead to the desired result, namely:
$$\frac{1}{n}\sum_{i=1}^{n}\mathbf{1}_{\{x^*_i\ne x_{0,i}\}}\xrightarrow{P}2\Big(1-\frac{1}{M}\Big)Q(\tau_*^{-1}).$$
First, consider the case $x_{0,i}\in\{\pm 1,\pm 3,\ldots,\pm(M-3)\}$. Then, the thresholding rule (11b) implies that there is an error iff $|\tilde w_i|>1$. Equivalently, in view of (55), and noting that $x^+_{0,i}\ge 2$, it follows that an error occurs iff $|h_i|>A$. Next, consider the case(s) $x_{0,i}=M-1$ (or $x_{0,i}=-(M-1)$). Then the error event corresponds to $\tilde w_i<-1$ (or $\tilde w_i>1$), which in view of (55) translates to $h_i<-A$ (or $h_i>A$). Putting these together and conditioning on the high-probability events $\|\mathbf{g}\|/\sqrt{n}\xrightarrow{P}\sqrt{\delta}$ and $\delta^{-1/2}\tilde\tau\xrightarrow{P}\tau_*$, we find that
$$\frac{1}{n}\sum_{i=1}^{n}\mathbf{1}_{\{\arg\min_{s\in\mathcal{C}}|x_{0,i}+\tilde w_i-s|\ne x_{0,i}\}}\xrightarrow{P}\frac{2}{M}\Big\{(M-2)\,Q(\tau_*^{-1})+Q(\tau_*^{-1})\Big\}=2\Big(1-\frac{1}{M}\Big)Q(\tau_*^{-1}).$$
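The counting argument above can be checked with a small Monte Carlo experiment. The sketch below draws $x_{0,i}$ uniformly from the constellation and $h_i\sim\mathcal{N}(0,1)$, applies the clipping rule (55) with $A$ replaced by its limit $1/\tau_*$, decodes to the nearest constellation point, and compares the empirical error fraction with $2(1-1/M)Q(\tau_*^{-1})$. The value $\tau=0.5$, the sample size and the helper names are illustrative assumptions; the value of $\tau$ is not computed from (59) here.

```python
import math
import random

def Q(x):
    """Gaussian tail probability Q(x) = P(N(0,1) > x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def simulate_ser(M, tau, n, rng):
    """Empirical error fraction of the clipped (AO) solution (55) with A = 1/tau."""
    A = 1.0 / tau
    constellation = list(range(-(M - 1), M, 2))   # {-(M-1), ..., -1, 1, ..., M-1}
    errors = 0
    for _ in range(n):
        x0 = rng.choice(constellation)
        h = rng.gauss(0.0, 1.0)
        lo, hi = -(M - 1) - x0, (M - 1) - x0      # x^-_{0,i} and x^+_{0,i}
        w = min(hi, max(lo, h / A))               # rule (55)
        decoded = min(constellation, key=lambda s: abs(x0 + w - s))
        errors += decoded != x0
    return errors / n

M, tau = 4, 0.5                                   # hypothetical example values
rng = random.Random(0)
empirical = simulate_ser(M, tau, 100_000, rng)
predicted = 2.0 * (1.0 - 1.0 / M) * Q(1.0 / tau)
print(empirical, predicted)
```

The two printed numbers should agree up to Monte Carlo fluctuation, which shrinks as the sample size grows.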
