Speech Dereverberation with Context-aware Recurrent Neural Networks

In this paper, we propose a model to perform speech dereverberation by estimating its spectral magnitude from the reverberant counterpart. Our models are capable of extracting features that take into account both short and long-term dependencies in t…

Authors: Joao Felipe Santos, Tiago H. Falk

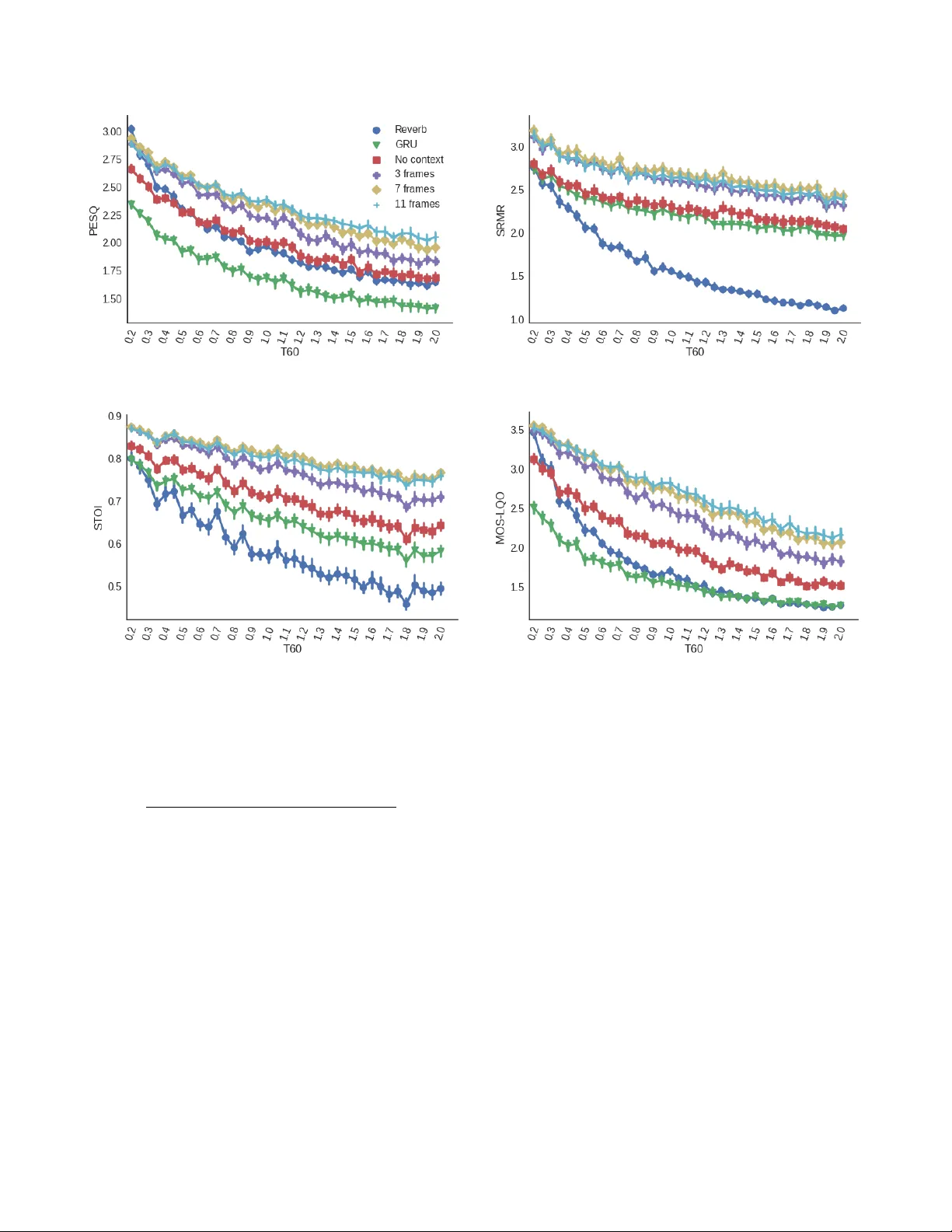

Abstract: In this paper, we propose a model to perform speech dereverberation by estimating the spectral magnitude of clean speech from its reverberant counterpart. Our models are capable of extracting features that take into account both short- and long-term dependencies in the signal through a convolutional encoder (which extracts features from a short, bounded context of frames) and a recurrent neural network for extracting long-term information. Our model outperforms a recently proposed model that uses different context information depending on the reverberation time, without requiring any sort of additional input, yielding improvements of up to 0.4 on PESQ, 0.3 on STOI, and 1.0 on POLQA relative to reverberant speech. We also show our model is able to generalize to real room impulse responses even when only trained with simulated room impulse responses, different speakers, and high reverberation times. Lastly, listening tests show the proposed method outperforming benchmark models in reduction of perceived reverberation.

Index Terms: Dereverberation, speech enhancement, deep learning, recurrent neural networks, reverberation.

I. INTRODUCTION

Reverberation plays an important role in the perceived quality of a sound signal produced in an enclosed environment. In highly reverberant environments, perceptual artifacts such as coloration and echoes are added to the direct sound signal, thus drastically reducing speech signal intelligibility, particularly for the hearing impaired [1]. Automatic speech recognition (ASR) performance is also severely affected, especially when reverberation is combined with additive noise [2].
To deal with the distortions in such environments, several types of speech enhancement systems have been proposed, ranging from single-channel systems based on simple spectral subtraction [3] to multistage systems which leverage signals from multiple microphones [4].

Deep neural networks (DNNs) are currently part of many large-scale ASR systems, both as separate acoustic and language models as well as in end-to-end systems. To make these systems robust to reverberation, different strategies have been explored, such as feature enhancement during a preprocessing stage [5] and DNN-based beamforming on raw multichannel speech signals for end-to-end solutions [6]. On the other hand, the application of DNNs to more general speech enhancement problems such as denoising [7], dereverberation [8], and source separation [9] is comparatively in its early stages.

Recently, several works have explored deep neural networks for speech enhancement through two main approaches: spectral estimation and spectral masking. In the first, the goal of the neural network is to predict the magnitude spectrum of the enhanced speech signal directly, while the latter aims at predicting some form of an ideal mask (either a binary or a ratio mask) to be applied to the distorted input signal. Most of the published work in the area uses an architecture similar to the one first presented in [8]: a relatively large feed-forward neural network with three hidden layers containing several hundred units (1600 in [8]), having as input a context window containing an arbitrary number of frames (11 in [8]) of the log-magnitude spectrum and as target the dry/clean center frame of that window. The output layer uses a sigmoid activation function, which is bounded between 0 and 1, and the targets are normalized between 0 and 1 using the minimum and maximum energies in the data.
Moreover, an iterative signal reconstruction scheme inspired by [10] is used to reduce the effect of using the reverberant phase for reconstruction of the enhanced signal.

In [11], the authors performed a study on target feature activation and normalization and their impact on the performance of DNN-based speech dereverberation systems. The authors compared the target activation/normalization scheme in [8] with a linear (unbounded) activation function and outputs normalized by their mean and variance. Their experiments showed the latter activation/normalization scheme leads to higher PESQ and frequency-weighted segmental SNR (fwSegSNR) scores than the sigmoid/min-max scheme.

In a follow-up study [12], the same authors proposed a reverberation-time-aware model for dereverberation that leverages knowledge of the fullband reverberation time (T60) in two different ways. First, the step size of the short-time Fourier transform (STFT) is adjusted depending on T60, varying from 2 ms up to 8 ms (the window size is fixed at 32 ms). Second, the frame context used at the input of the network is also adjusted, from 1 frame (no context) up to 11 frames (5 future and 5 past frames). Since the input of the network has a fixed size, the context length is adjusted by zeroing the unused frames. The model was trained using speech from the TIMIT dataset convolved with 10 room impulse responses (RIRs) generated for a room with fixed geometry (6 by 4 by 3 meters), with T60 ranging from 0.1 to 1.0 s. The full training data had about 40 hours of reverberant speech, but the authors also presented results on a smaller subset with only 4 hours. The model proposed was a feedforward neural network with 3 hidden layers with 2048 hidden units each, trained using all the different step sizes and frame context configurations in order to find the best configuration for each T60 value.
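The context-length adjustment described for the T60-aware model of [12] can be pictured with a short sketch. The Python below is illustrative only and is not taken from [12]: the function name `mask_context`, the list-of-lists frame representation, and the assumption that unused frames are zeroed symmetrically around the center frame are all hypothetical choices made here.

```python
def mask_context(window, context):
    """Zero the frames of a fixed-size window that fall outside the chosen context.

    window  : list of frames (each a list of floats); odd length, center = current frame
    context : odd number of frames to keep (1 keeps only the center frame)

    Assumption (not stated in the source): the kept frames are centered on the
    current frame, with the same number of past and future frames.
    """
    n = len(window)
    if n % 2 == 0 or context % 2 == 0 or not 1 <= context <= n:
        raise ValueError("window length and context must be odd, with 1 <= context <= len(window)")
    center = n // 2
    half = context // 2
    # Copy frames inside the context; replace the others by all-zero frames.
    return [
        list(frame) if abs(i - center) <= half else [0.0] * len(frame)
        for i, frame in enumerate(window)
    ]


# Usage: an 11-frame window of 3-bin frames, reduced to a 3-frame context.
window = [[float(i + 1)] * 3 for i in range(11)]
reduced = mask_context(window, 3)
```

The network input keeps its fixed size (11 frames in this toy case); only the content of the frames outside the selected context changes.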
The authors evaluated the model both with the true T60 value assumed to be known at test time (oracle T60) and with T60 estimated using the estimator proposed by Keshavarz et al. [13].

The other well-known approach for speech enhancement using deep neural networks is to predict arbitrary ideal masks instead of the magnitude spectrum [14]. The ideal binary mask (IBM) target transforms the speech enhancement problem into a classification problem, where the goal of the model is to predict which time-frequency cells from the input should be masked, and has been shown to improve intelligibility substantially. The ideal binary mask is defined quantitatively based on a local criterion threshold for the signal-to-noise ratio (SNR). Namely, if the SNR of a given time-frequency cell is lower than the threshold, that time-frequency cell is masked (set to zero). Alternatively, the ideal ratio mask is closely related to the frequency-domain Wiener filter with uncorrelated speech and noise. It is a soft masking technique where the mask value corresponds to the local ratio between the signal and the signal-plus-noise energies for each time-frequency cell. Recently, [15] has proposed the complex ideal ratio mask, which is applied to the real and imaginary components of the STFT instead of just the magnitude. Models based on mask prediction usually include several different features at the input (such as amplitude modulation spectrograms, RASTA-PLP, MFCC, and gammatone filterbank energies), instead of using just the magnitude spectrum from the STFT representation like the works previously described here. Masks are also often predicted in the gammatone filterbank domain.

In [16], the authors present a model that predicts the log-magnitude spectrum and its delta/delta-delta features, then performs enhancement by solving a least-squares problem with the predicted features, which aims at improving the smoothness of the enhanced magnitude spectrum.
The method was shown to improve cepstral distance (CD), SNR, and log-likelihood ratio (LLR), but caused slight degradation of the speech-to-reverberation modulation energy ratio (SRMR). They also reported that the DNN mapping causes distortion for high T60.

Very few studies use architectures other than feed-forward for dereverberation. In [17], the authors propose an architecture based on long short-term memory (LSTM) for dereverberation. However, they only report mean-squared error and word-error rates for a baseline ASR system (the REVERB Challenge evaluation system) and do not report its effect on objective metrics for speech quality and intelligibility. The method described in [9] also uses recurrent neural networks based on LSTMs for speech separation in the mel-filterbank energies domain. Although their system predicts soft masks, similar to the ideal ratio mask, they use a signal-approximation objective instead of predicting arbitrary masks (i.e., the targets are the mel-filterbank features of the clean signal, not an arbitrarily designed mask).

Most current models reported in the literature only explore one of two possible contexts from the reverberant signal. Feed-forward models with a fixed window of an arbitrary number of past and future frames only take into account the local context (e.g., [12]) and are unable to represent the long-term structure of the signal. Also, since feed-forward models do not have an internal state that is kept between frames, the model is not aware of the frames it has predicted previously, which can lead to artifacts due to spectral discontinuities. LSTM-based architectures, on the other hand, are able to learn both short- and long-term structure. However, learning either of these structures is not enforced by the training algorithm or the architecture, so one cannot control whether the internal state will represent short-term context, long-term context, or both.
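As a concrete illustration of the two mask targets reviewed earlier in this section (the ideal binary mask and the ideal ratio mask), the following is a minimal sketch that follows the verbal definitions given there. The function names, the nested-list representation of per-cell energies, and the default 0 dB threshold are assumptions introduced for illustration; they do not reproduce the implementation of any cited work. In a dereverberation setting, the "noise" energy would stand for whatever component is treated as interference.

```python
import math


def local_snr_db(speech, noise):
    """Local SNR in dB for one time-frequency cell, given non-negative energies."""
    if speech <= 0.0:
        return -math.inf
    if noise <= 0.0:
        return math.inf
    return 10.0 * math.log10(speech / noise)


def ideal_binary_mask(speech_energy, noise_energy, threshold_db=0.0):
    """1 keeps a cell, 0 masks it when its local SNR is below threshold_db."""
    return [
        [1 if local_snr_db(s, n) >= threshold_db else 0 for s, n in zip(s_row, n_row)]
        for s_row, n_row in zip(speech_energy, noise_energy)
    ]


def ideal_ratio_mask(speech_energy, noise_energy):
    """Soft mask: ratio of signal energy to signal-plus-noise energy per cell."""
    return [
        [s / (s + n) if (s + n) > 0.0 else 0.0 for s, n in zip(s_row, n_row)]
        for s_row, n_row in zip(speech_energy, noise_energy)
    ]


# Usage on a toy 2x2 grid of time-frequency cells.
speech = [[4.0, 1.0], [0.0, 9.0]]
noise = [[1.0, 4.0], [1.0, 0.0]]
ibm = ideal_binary_mask(speech, noise)
irm = ideal_ratio_mask(speech, noise)
```

The binary mask turns enhancement into a per-cell classification problem, whereas the ratio mask is a regression target bounded between 0 and 1.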
In this paper, we propose a novel architecture for speech dereverberation that leverages both short- and long-term context information. First, fixed local context information is generated directly from the input sequence by a convolutional context encoder. We train the network to learn how to use long-term context information by using recurrent layers and training it to enhance entire sentences at once, instead of a single frame at a time. Additionally, we leverage residual connections from the input to hidden layers and between hidden layers. We show that combining short- and long-term contexts, as well as including such residual connections, substantially improves the dereverberation performance across four different objective speech quality and intelligibility metrics (PESQ, SRMR, STOI, and POLQA), and also reduces the amount of perceived reverberation according to subjective tests.

II. PROPOSED MODEL

The architecture of the proposed model can be seen in Fig. 1. As discussed previously, our model combines both short- and long-term context by using a convolutional context encoder to create a representation of the short-term structure of the signal, and a recurrent decoder which is able to learn long-term structure from that representation. The decoder also benefits from residual connections, which allow each of its recurrent stages to have access both to a representation of the input signal and the state of the previous recurrent layer. Each of the blocks is further detailed in the sections to follow.

A. Context encoder

As shown in other studies, incorporating past and future frames can help on the task of estimating the current frame for dereverberation. Most works, however, use a fixed context window as the input to a fully-connected layer [8], [11]. In this work, we decided to extract local context features using 2D convolutional layers instead.
By using a 2D convolutional layer, these features encode local context both in the frequency and the time axis. Our context encoder is composed of a single 2D convolutional layer with 64 filters with kernel sizes of $(21, C)$, where 21 corresponds to the number of frequency bins covered by the kernel and $C$ to the number of frames covered by the kernel in the time axis. In our implementation, $C$ is always an odd number as we use an equal number of past and future frames in the context window (e.g., $C = 11$ means the current frame plus 5 past and 5 future frames). We report the performance of the model for different values of $C$ in Section IV-A. The convolution has a stride of 2 in the frequency axis and is not strided in the time axis.

Fig. 1. Architecture of the proposed model.

B. Decoder

Following the encoder, we have a stack of three gated recurrent unit (GRU) layers [18] with 256 units each. The input to the first layer is the output of the convolutional context encoder with all of the channels concatenated to yield an input of shape $(F, T)$, where

$$F = 64 \times \left\lfloor \frac{B - N_{\text{context}} + 1}{2} + 1 \right\rfloor,$$

64 is the number of filters in the convolutional layer, $T$ is the number of STFT frames in a given sentence, $B$ is the number of FFT bins (257 in our experiments), and $\lfloor \cdot \rfloor$ is the floor operation (rounds its argument down to an integer).

The inputs to the remaining GRU layers are a combination of affine projections of the input and the states of the previous GRU layers. Consider $x_{\text{enc}}(t)$ to be the encoded input at timestep $t$, and $h_1(t)$, $h_2(t)$, $h_3(t)$ the hidden states at timestep $t$ for the first, second, and third GRU layers, respectively. Then, the inputs $i_1(t)$, $i_2(t)$, $i_3(t)$ are as follows:

$$
\begin{aligned}
i_1(t) &= x_{\text{enc}}(t), \\
i_2(t) &= f_2(x(t)) + g_{1,2}(h_1(t)), \\
i_3(t) &= f_3(x(t)) + g_{1,3}(h_1(t)) + g_{2,3}(h_2(t)),
\end{aligned}
$$

where $f_i$, $g_{i,j}$ are affine projections from the input or previous hidden states.
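The encoder output size and the way the recurrent-layer inputs are assembled can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' PyTorch code: the helper names (`encoder_feature_size`, `affine`, `vec_sum`, `gru_inputs`) are hypothetical, $N_{\text{context}}$ is assumed here to be the 21-bin extent of the kernel along frequency, and the hidden states $h_1(t)$, $h_2(t)$ are passed in as given values rather than computed by actual GRU layers.

```python
import math


def encoder_feature_size(n_bins=257, n_context=21, n_filters=64):
    """F = n_filters * floor((B - N_context + 1) / 2 + 1).

    Assumption: N_context is read as the kernel extent along frequency (21 bins).
    """
    return n_filters * math.floor((n_bins - n_context + 1) / 2 + 1)


def affine(weights, bias, vec):
    """Affine projection W v + b on plain lists (stand-in for a learned layer)."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weights, bias)]


def vec_sum(*vecs):
    """Element-wise sum of equally sized vectors."""
    return [sum(values) for values in zip(*vecs)]


def gru_inputs(x, x_enc, h1, h2, f2, f3, g12, g13, g23):
    """Compose i1, i2, i3 for one timestep; each projection is a (weights, bias) pair."""
    i1 = x_enc
    i2 = vec_sum(affine(*f2, x), affine(*g12, h1))
    i3 = vec_sum(affine(*f3, x), affine(*g13, h1), affine(*g23, h2))
    return i1, i2, i3


# Usage with toy dimensions (2 input bins, 1 hidden unit).
f2, f3 = ([[1.0, 0.0]], [0.0]), ([[0.0, 1.0]], [0.0])
g12, g13, g23 = ([[2.0]], [1.0]), ([[1.0]], [0.0]), ([[1.0]], [0.5])
i1, i2, i3 = gru_inputs([1.0, 2.0], [0.5], [1.0], [2.0], f2, f3, g12, g13, g23)
```

With the default arguments, `encoder_feature_size()` evaluates to 64 × 119 = 7616 features per frame. Because every projection $f_i$ and $g_{i,j}$ maps to the same output dimension, their results can simply be added before entering the next recurrent layer.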
The parameters of those projections are learned during training, and all projections have an output dimension of 256 in order to match the input dimension of the GRUs when added together.

Similarly, the output layer following the stacked GRUs has as its input the sum of affine projections $o_i$ of $h_1$, $h_2$, $h_3$:

$$i_{\text{out}}(t) = o_1(h_1(t)) + o_2(h_2(t)) + o_3(h_3(t)).$$

The parameters of those projections are also learned during training. All of these projections have an output dimension of 256.

III. EXPERIMENTAL SETUP

A. Datasets

In order to assess the benefits of the proposed architecture for speech dereverberation, we ran a series of experiments with both the proposed model and two other models as baselines: the T60-aware model proposed in [12] and a similar model without T60 information that uses a fixed overlap of 16 ms and a fixed context of 11 frames (5 past and 5 future frames). For the T60-aware model, we extracted T60 values directly from the RIRs (oracle T60s) using a method similar to the one used for the ACE Challenge dataset [19], [20]. The fullband T60 we used was computed as the average of the estimates for the bands with center frequencies of 400 Hz, 500 Hz, 630 Hz, 800 Hz, 1000 Hz, and 1250 Hz.

For the single-speaker experiments, a recording of the IEEE dataset uttered by a single male speaker was used [21]. The dataset consists of 72 lists with 10 sentences each, recorded under anechoic and noise-free conditions. We used the first 67 lists for the training set and the remaining 5 lists for testing. Reverberant utterances were generated by convolving randomly selected subsets of the utterances in the training set with 740 RIRs generated using a fast implementation of the image-source method [22], with T60 ranging from 0.2 s to 2.0 s in 0.05 s steps. Twenty different RIRs (with different room geometry, source-microphone positioning, and absorption characteristics) were generated for each T60 value.
Fifty random utterances from the training set were convolved with each of these 740 RIRs, resulting in 37,000 files. A random subset of 5% of these files was selected as a validation set and used for model selection, and the remaining 35,150 files were used to train the models. The test set was generated in a similar way, but using a different set of 740 simulated RIRs, with 5 utterances (randomly selected from the test lists) convolved with each RIR.

For the multi-speaker experiments, we performed a similar procedure but using the TIMIT dataset [23] instead of the IEEE dataset. The default training set (without the “SA” utterances, since these utterances were recorded by all speakers) was used for generating the training and validation sets, and the test set (with the SA utterances removed as well) was used for generating the test set. The training and test sets had a total of 462 and 168 speakers, respectively. The utterances were convolved with the same RIRs used for the single-speaker experiments. A total of 3696 clean utterances were used for the training and validation set, and 1336 for the test set. As with the single-speaker dataset, 50 sentences were chosen at random from the training and validation sets (which include all of the utterances from all speakers) and convolved with each of the 740 simulated RIRs to generate the training/validation sets. The test set was generated following the same procedure as used for the single-speaker dataset.

Additionally, in order to explore the performance of the proposed and baseline models in realistic settings, we tested the same single-speaker models described above with sentences convolved with real RIRs from the ACE Challenge dataset [19]. We used the RIRs corresponding to channels 1 and 5 of the cruciform microphone array for all of the seven rooms and two microphone positions, leading to a total of 28 RIRs. The test sentences were the same as for the experiments with simulated RIRs.
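The dataset sizes quoted above follow from simple counting, which the sketch below reproduces. The function and key names are hypothetical. The test-file total is derived here from the stated "5 utterances per RIR" and is not a figure given explicitly in the text.

```python
def dataset_counts(t60_min=0.2, t60_max=2.0, t60_step=0.05,
                   rirs_per_t60=20, train_utts_per_rir=50,
                   test_utts_per_rir=5, val_percent=5):
    """Recompute the file counts of the simulated single-speaker dataset."""
    # Number of T60 values on the grid 0.2, 0.25, ..., 2.0 s (both ends included).
    n_t60 = round((t60_max - t60_min) / t60_step) + 1
    n_rirs = n_t60 * rirs_per_t60
    n_files = n_rirs * train_utts_per_rir
    n_val = n_files * val_percent // 100
    return {
        "t60_values": n_t60,
        "rirs": n_rirs,
        "train_plus_val_files": n_files,
        "val_files": n_val,
        "train_files": n_files - n_val,
        # Derived, not stated in the text: 740 test RIRs x 5 utterances each.
        "test_files": n_rirs * test_utts_per_rir,
    }


def ace_rir_count(rooms=7, mic_positions=2, channels=2):
    """Real RIRs used from the ACE Challenge data: rooms x positions x channels."""
    return rooms * mic_positions * channels


counts = dataset_counts()
```

Under these assumptions the grid has 37 T60 values, giving 37 × 20 = 740 RIRs, 37,000 training-plus-validation files, 1,850 validation files, and 35,150 training files, while `ace_rir_count()` gives the 28 real RIRs.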
The interested reader can refer to [19] for more details about the ACE Challenge RIRs.

B. Model Training

Both the proposed model and the baselines were implemented using the PyTorch library [24] (revision e1278d4) and trained using the Adam optimizer [25] with a learning rate of 0.001, $\beta_1 = 0.9$, and $\beta_2 = 0.999$. The models were trained for 100 epochs, and the parameters corresponding to the epoch with the lowest validation loss were used for evaluation. Since sequences in the dataset have different lengths, we padded sequences in each minibatch and used masking to compute the MSE loss only for valid timesteps.

C. Objective evaluation metrics

We compare the performance of the models using four different objective metrics. The Perceptual Evaluation of Speech Quality (PESQ) [26] and Perceptual Objective Listening Quality Assessment (POLQA) [27] are both ITU-T standards for intrusive speech quality measurement. The PESQ standard was designed for a very limited test scenario (automated assessment of band-limited speech quality by a user of a telephony system), and was superseded by POLQA. The two metrics work in a similar way, by computing and accumulating distortions, and then mapping them into a five-point mean opinion score (MOS) scale. Even though PESQ is not recommended as a metric for enhanced or reverberant speech, several works report PESQ scores for these types of processing and we report it here for the sake of completeness. POLQA is a more complete model and allows measurement in a broader set of conditions (e.g., super-wideband speech, combined additive noise and reverberation). The Short-Time Objective Intelligibility (STOI) [28] metric is an intrusive speech intelligibility metric based on the correlation of normalized filterbank envelopes in short-time (400 ms) frames of speech.
Finally, the speech-to-reverberation modulation energy ratio (SRMR) is a non-intrusive speech quality and intelligibility metric based on the modulation spectrum characteristics of clean and distorted speech, which has been shown to perform well for reverberant and dereverberated speech [29], [30].

D. Subjective listening tests

To further gauge the benefits of the proposed architecture for speech enhancement, we performed a small-scale subjective listening test to assess how effective different methods are in reducing the amount of perceived reverberation. In particular, comparisons with the baseline model Wu2016 were performed, as it resulted in improved performance with unmatched speakers relative to the benchmark Wu2017 model. The test protocol we used was the recently proposed MUSHRAR test [31]. For the reverberation perception tests, five random samples of the TIMIT test dataset were chosen for each of the simulated RIRs with T60s of 0.6, 0.9, 1.2, and 1.5 s, and participants were asked to compare the outputs of all models and the reverberant signal to a reference signal. In addition to the model outputs and the reverberant signal, a hidden reference and anchor were used for both experiments: the reference signal was the anechoic signal convolved with an RIR with a T60 of 0.2 s and the anchor was the anechoic signal convolved with an RIR with a T60 of 2.0 s. Users were asked to rate the amount of perceived reverberation on a 0-100 scale, with higher values corresponding to higher perceived reverberation. A total of nine participants took part in the test. Tests were performed using a web interface which is freely available online.¹

¹ Software available at https://github.com/jfsantos/mushra-ruby

TABLE I. Number of parameters in each model.

| Model | # of parameters |
|---|---|
| GRU | 4,458,753 |
| Wu2016 and Wu2017 | 14,711,041 |
| Proposed without context | 1,838,593 |
| Proposed, context = 3 frames | 7,429,121 |
| Proposed, context = 7 frames | 7,434,497 |
| Proposed, context = 11 frames | 7,439,873 |

IV. EXPERIMENTAL RESULTS

In this section, we present the results for four different experiments, namely:

- A. Evaluation of the effect of the context size (using the single-speaker, simulated RIR dataset).
- B. Comparison between the proposed architecture and baselines on matched conditions (same speaker, simulated RIRs for training and testing).
- C. Comparison between the proposed architecture and baselines on mismatched speakers (multi-speaker dataset with simulated RIRs).
- D. Comparison between the proposed architecture and baselines under realistic reverberation conditions (models trained on the single-speaker, simulated RIR dataset and tested on the single-speaker, real RIR dataset).

Since some of the models have a large difference in the number of learnable parameters, we list the number of parameters for each of the models used in our experiments in Table I.

A. Effect of context size

We first analyze the effect of the context size in the proposed model by comparing models with context sizes of 3, 7, and 11. We also included in these tests a baseline model based only on GRUs (without the context encoder and residual connections) and a variant of the proposed model with residual connections but without the context encoder. All the models were trained and tested with single-speaker data convolved with simulated RIRs. The results can be seen in Figure 2. For this and all of the subsequent experiments in this section, we have subplots for the four objective metrics listed in Section III-C: (a) PESQ, (b) SRMR, (c) STOI, and (d) POLQA, in this order. The GRU-only model (without the context encoder and residual connections) underperforms in all metrics and, in the cases of PESQ and POLQA, even leads to lower scores than the reverberant utterances.
Adding only the residual connections and no context at all (which allows the model to run in real time) significantly increases the performance and leads to improvements in all metrics, except for reverberation times under 400 ms (noticeable in both PESQ and POLQA). Adding the context encoder, even with a short context of one previous and one future frame (shown as “3 frames” in the plots), leads to additional improvements. Adding more frames (“7 frames” and “11 frames”) improves the performance even further. However, we see diminishing returns around 7-11 frames, as these two models have similar performances for SRMR and STOI and only a slight difference in PESQ and POLQA. Since the model with a context of 11 frames (5 past and 5 future frames) showed the best performance amongst all context sizes, in the next experiments we show only results with this context size for the proposed model.

B. Comparison with baseline models

Figure 3 shows a comparison between our best model, Wu2016 (fixed STFT window and hop sizes), and Wu2017 (STFT window and hop sizes dependent on the oracle T60 values). The interested reader is referred to this manuscript's supplementary material page to listen to audio samples generated by the proposed and benchmark algorithms.² A more complete audio demo can be found in [32]. It can be seen that our model outperforms these two feed-forward models in most scenarios and metrics, except for SRMR with T60 < 0.7 s, where the results are very similar. It should be noted, however, that the SRMR metric is less accurate for lower T60s [29]. Even though it uses oracle T60 information, the Wu2017 model does not show a large improvement in metrics when compared to Wu2016, especially in the STOI and POLQA metrics (which are more sensitive to the effects of reverberation than PESQ). Using T60 information to adapt the STFT representation seems to have a stronger effect for lower T60.
It should also be noted that these models lead to a reduction in scores for lower reverberation times in the PESQ and POLQA metrics, which is probably due to the introduction of artifacts. This is also observed for the proposed model, but only in the PESQ metric and only for T60 < 0.4 s. On the other hand, STOI indicates all models lead to an improvement in intelligibility.

² http://www.seaandsailor.com/demo/index.html

C. Unmatched vs. matched speakers

Although testing dereverberation models with single-speaker datasets allows assessing basic model functionality, in real-world conditions, training a model for a single speaker is not practical and has very limited applications. It is important to evaluate the generalization capabilities of such models with mismatched speakers, as this is a more likely scenario. Figure 4 shows the results for Wu2016, Wu2017, and the proposed model, as well as for the reverberant files in the test set. Between the baseline models, we can see that Wu2017 now underperforms Wu2016 in all metrics, and either reduces metric scores (as for PESQ and POLQA for low T60) or does not change them. However, the version of the model that does not depend on T60 leads to higher scores. Although we do not have a clear explanation for this behaviour, we believe the optimal values for the STFT hop size and context might depend both on T60 and speaker, but the Wu2017 model uses fixed values that depend only on T60. Since the model now has less data from each speaker, it was not able to exploit these adapted features properly. The proposed model, on the other hand, outperforms both baselines in 3 out of 4 metrics, only achieving similar scores in the SRMR metric. Compared to Wu2016, our model leads to improvements of around 0.4 in PESQ, 0.1 in STOI, and 0.5 in POLQA.

D. Real vs. simulated RIR

In our last experiment, we test the generalization capability of the models trained on simulated RIRs to real RIRs.
To that end, we used speech convolved with real RIRs from the ACE Challenge dataset, as specified in Section III-A. The results are reported in Figure 5. Note that the x-axis in that figure does not have linear spacing in time, as we are showing the results for each T60 as a single point uniformly spaced from its nearest neighbours in the data. Note also the larger variability in scores for reverberant files, which is due to the scores here not being averaged across many RIRs. In this test, the proposed method achieves the highest scores in all metrics. It is also the only method to improve PESQ and POLQA across all scenarios, while the baselines either decrease or do not improve such metrics. Although the baselines do improve STOI in most cases, in some scenarios they actually decrease STOI.

E. Subjective Listening Tests

The results of the MUSHRAR tests are summarized in Table II. The results for the original, unprocessed reverberant files are also reported. When rating how reverberant the enhanced stimuli were, participants rated the outputs of the proposed model as less reverberant than the baseline and reverberant signals for all T60 values, while the baseline model only achieved a lower reverberation perception for lower reverberation times.

TABLE II. Results of the MUSHRAR test for reverberation perception for different T60 values. Lower is better.

| Model | 0.6 s | 0.9 s | 1.2 s | 1.5 s |
|---|---|---|---|---|
| Reverberant | 42.62 | 60.06 | 71.73 | 82.24 |
| Wu2016 | 32.27 | 38.08 | 46.27 | 43.89 |
| Proposed | 24.44 | 23.89 | 29.93 | 31.64 |

Fig. 2. Effect of context size on scores: (a) PESQ, (b) SRMR, (c) STOI, and (d) POLQA.

V. DISCUSSION

A. Effect of the context size and residual connections

In this paper, we propose the addition of a few components to the architecture of deep neural networks for dereverberation. Using a context window for speech enhancement is not a new idea, and most studies using deep neural networks use similar context sizes. The novelty in our approach, however, is to use a convolutional layer as a local context encoder, which we believe helps the model to learn how to extract local features both in the time and the frequency axes in a more efficient way than just using a single context window as the input for a feedforward model. This local context, together with the residual input connections, is used as an input for the recurrent decoder, which is able to learn longer-term features. Similar architectures (save for the residual connections) have been successfully used for speech recognition tasks [33] but, to the best of our knowledge, this is the first work where such an architecture is used with the goal of estimating the clean speech magnitude spectrum.

Regarding the effect of the context size, our results agree with the intuition that including more past and future frames helps in predicting the current frame. However, we also show that there is a very small difference between using 7 vs. 11 frames as context. A window of 7 frames encompasses a total of 144 ms, vs. 208 ms for 11 frames. Both sizes are still much smaller than most of the reverberation times being used for our study, but they already start allowing the model to easily extract features related to amplitude modulations with lower frequencies, which are very important for speech intelligibility. We believe the combination of short- and long-term contexts, by having both the short-term context encoder and longer-term features through the recurrent layers, allows our architecture to benefit even from a shorter context window at the input.
Lower modulation frequencies can still be captured through the recurrent layers, although we cannot explicitly control or assess how long these contexts are since they are learned implicitly through training and might be input-dependent.

We also introduce the use of residual and skip connections in the context of speech enhancement. Residual connections have a very close corresponding method in speech enhancement, namely spectral subtraction. The output of a speech enhancement model is likely to be very similar to the input, save for a signal that has to be subtracted from it (e.g., in the case of additive noise). In our case, our model is not restricted to subtraction due to how our architecture was designed. Consider the architecture as shown in Figure 1. The input to each recurrent layer is the sum of projections of the corrupted input (via the linear layers $f_1$, $f_2$, and $f_3$) and the output of the previous layers (via the linear layers $g_1$, $g_2$, and $g_3$). One possible solution to the problem, given this architecture, is for each recurrent layer to perform a new stage of spectral estimation given the difference between what the previous stage has predicted and the input at a given time. The output layer uses skip connections to the output of each recurrent layer and combines their predictions, allowing each layer to specialize in removing different types of distortions or to successively improve the signal. Our architecture was inspired by the work on generative models by Alex Graves [34], which uses a similar scheme of connections between recurrent layers. The recently proposed WaveNet architecture [35], which is a generative model for audio signals able to synthesize high-quality speech, also makes use of residual connections and skip connections between each intermediate layer and the output layer.

Fig. 3. Single speaker, simulated RIR scores: (a) PESQ, (b) SRMR, (c) STOI, and (d) POLQA.

B. Comparison with baselines

As seen in the previous section, our model outperforms both baselines based on feed-forward neural networks. One clear advantage of our approach is that we do not need to predict T60, which is a hard problem in itself and adds another layer of complexity to the model, especially in the presence of other distortions such as background noise [19].

Regarding model size, as seen in Table I, it is important to note that despite having a significantly smaller number of parameters than the baseline models, our proposed model consistently outperforms them in most experiments. Our best model (shown in the last line of Table I) has approximately half the number of parameters but uses these parameters more efficiently because of its architectural characteristics.

Although the authors of [12] argue that their model leads to improvements in PESQ scores, the differences reported were not significant. Also, the authors used PESQ scores for selecting the best models; however, that might be an issue, especially because PESQ is not a recommended metric for reverberant/dereverberated speech. In our experiments we used the best validation loss (MSE) for model selection for all the models, including the baselines. Using a proper speech quality or intelligibility metric as a function for model selection would be a good but costlier solution, because one has to generate and evaluate samples using that metric for all epochs, while MSE can be computed directly from the output of the model.

Fig. 4. Scores for experiment with unmatched speakers: (a) PESQ, (b) SRMR, (c) STOI, and (d) POLQA.

C. Generalization capabilities

Another important aspect of our study, when compared to [12], is that we train and test the models under several different room impulse responses, both real and simulated.
The results we report for Wu2017 are much lower than those reported in [12] for similar T60 values; however, it must be noted we used random room geometries and random source and microphone locations for our RIRs, while in their experiments the authors used a fixed room geometry with different T60 values (corresponding to different absorption coefficients in the surfaces of the room) and a fixed location. Although they tried to show the model generalizes to different room sizes, they tested generalization by means of two tests: (a) a single different room size with the same source-microphone positioning and (b) a single different source-microphone positioning with fixed room geometry. We believe those experiments were not sufficient to show the generalization capabilities of the model, and experiments 1 and 3 in our work confirm that hypothesis. In our work, we tried to expose the model to several different room geometries and source-microphone positionings, since this is closer to real-world conditions and helps the model to better generalize to unseen rooms and setups.

D. Anecdotal comparison with mask-based methods

Although we did not compare our method to masking alternatives in this study, we would like to briefly mention the results reported in the most recent paper with a masking approach [36], which used a similar single-speaker dataset (IEEE sentences uttered by a male speaker) and both simulated and real RIRs for the dereverberation task. Although the range of T60s in our study and theirs is not similar, we can roughly compare the metrics of our model in the same range used in their study. The STOI improvements for our method are higher than those of the so-called cRM method proposed in that paper: our method has a STOI improvement of approximately 0.2 in all simulated T60s they report (0.3, 0.6, and 0.9 s), while their highest improvement is 0.06, for 0.9 s. The cRM method, however, slightly decreased STOI for a T60 of 0.3 s.
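Returning to the connection scheme discussed earlier in this section (each recurrent layer receives the sum of a projection of the corrupted input, f1 to f3, and a projection of the previous layer's output, g1 to g3, and the output layer reads from every recurrent layer through skip connections), the following NumPy sketch shows the data flow only. It is not the authors' implementation: the weights are random, a plain tanh recurrence stands in for the recurrent units, the encoder output is a random placeholder, and the layer sizes and the names `make_linear`, `make_rnn`, and `build_decoder` are hypothetical. Summing per-layer output projections is used here as one way to combine the skip connections; it is equivalent to a single linear layer applied to the concatenated layer outputs.

```python
import numpy as np


def make_linear(rng, n_in, n_out):
    """Random linear map standing in for a trained linear layer."""
    w = rng.standard_normal((n_in, n_out)) * 0.1
    return lambda x: x @ w


def make_rnn(rng, n_hidden):
    """Plain tanh recurrence; a placeholder for the paper's recurrent units."""
    w_h = rng.standard_normal((n_hidden, n_hidden)) * 0.1

    def run(x):  # x has shape (frames, n_hidden)
        h = np.zeros(n_hidden)
        states = []
        for x_t in x:
            h = np.tanh(x_t + h @ w_h)
            states.append(h)
        return np.stack(states)

    return run


def build_decoder(n_freq, n_enc, n_hidden, n_layers=3, seed=0):
    rng = np.random.default_rng(seed)
    # f[i]: projection of the corrupted input fed to recurrent layer i.
    f = [make_linear(rng, n_freq, n_hidden) for _ in range(n_layers)]
    # g[i]: projection of the previous layer's output (the encoder for i == 0).
    g = [make_linear(rng, n_enc if i == 0 else n_hidden, n_hidden)
         for i in range(n_layers)]
    rnn = [make_rnn(rng, n_hidden) for _ in range(n_layers)]
    # skip[i]: connection from recurrent layer i to the output layer.
    skip = [make_linear(rng, n_hidden, n_freq) for _ in range(n_layers)]

    def forward(reverb_mag, encoded):
        prev = encoded
        estimate = np.zeros_like(reverb_mag)
        for i in range(n_layers):
            layer_in = f[i](reverb_mag) + g[i](prev)  # residual input + previous output
            prev = rnn[i](layer_in)
            estimate = estimate + skip[i](prev)       # each layer contributes to the output
        return estimate

    return forward


# Toy usage with random data: 20 frames, 257 frequency bins, 64 encoder features.
rng = np.random.default_rng(1)
reverb_mag = np.abs(rng.standard_normal((20, 257)))
encoded = rng.standard_normal((20, 64))
decoder = build_decoder(n_freq=257, n_enc=64, n_hidden=32)
estimate = decoder(reverb_mag, encoded)
print(estimate.shape)
```

Because every recurrent layer sees the corrupted input again, each stage can in principle refine what the previous one produced rather than only subtracting from it, which is the behaviour the text describes as going beyond spectral subtraction.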
Fig. 5. Scores for experiment with real RIRs: (a) PESQ, (b) SRMR, (c) STOI, and (d) POLQA.

E. Study limitations

Although we report both PESQ and SRMR scores in this work, these results should be taken cautiously. PESQ has been shown not to correlate well with subjective ratings of reverberant and dereverberated speech. SRMR, on the other hand, is a non-intrusive metric, so it is affected by speech- and speaker-related variability [29]. It is also more sensitive to high reverberation times (0.8 s, as shown in [37]), so measurements in low reverberation times might not be accurate enough for drawing conclusions about the performance of a given model.

A characteristic common to all DNN-based models, and also to other algorithms based on spectral magnitude estimation, is a higher level of distortion due to reusing the reverberant phase, especially at higher T60. This is added to magnitude estimation errors, which are also higher at higher T60, since the input signal is very different from the target due to the combined effect of coloration and longer decay times. Although we do not try to tackle this issue here, there are recent developments in the field that could be applied jointly with our model, such as the method recently proposed in [38]. This exploration is left for future work.

VI. CONCLUSION

We proposed a novel deep neural network architecture for performing speech dereverberation through magnitude spectrum estimation. We showed that this architecture outperforms current state-of-the-art architectures and generalizes over different room geometries and T60s (including real RIRs), as well as to different speakers. Our architecture extracts features both in a local context (i.e., a few frames to the past/future of the frame being estimated) and in a long-term context.
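The phase-reuse limitation raised under study limitations can be illustrated numerically. In the sketch below, which uses random complex matrices as stand-ins for STFTs and a hypothetical helper `reuse_phase`, even a perfect magnitude estimate leaves a reconstruction error once it is paired with the reverberant phase. The matrix sizes are arbitrary and no real speech is involved, so the size of the error says nothing about actual dereverberation performance.

```python
import numpy as np


def reuse_phase(est_mag, reverb_stft):
    """Pair an estimated magnitude with the phase of the reverberant STFT."""
    phase = np.angle(reverb_stft)
    return est_mag * np.exp(1j * phase)


rng = np.random.default_rng(0)
shape = (4, 5)  # arbitrary (frames, bins) for illustration
clean = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)
reverb = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)

# Oracle case: the magnitude estimate is exactly the clean magnitude.
recon = reuse_phase(np.abs(clean), reverb)

# Relative error that remains purely because of the mismatched phase.
rel_err = np.linalg.norm(recon - clean) / np.linalg.norm(clean)
print(f"relative error with oracle magnitude: {rel_err:.2f}")
```

The magnitude of `recon` matches the clean magnitude exactly, so the remaining error is attributable to phase alone; with real signals this is the component that phase-aware methods such as those cited in [38] aim to reduce.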
As future work, we intend to explore improved cost functions (e.g., incorporating sparsity in the outputs [39]) as well as applying the architecture to signals distorted with both additive noise and reverberation. We also intend to propose a multichannel extension of the architecture in the future. Finally, we intend to explore a number of solutions to the issue of reconstructing the signal using the reverberant phase.

ACKNOWLEDGEMENT

The authors would like to acknowledge funding from NSERC, FQRNT, and Google, as well as NVIDIA for the donation of the Tesla K40 GPUs used for the experiments.

REFERENCES

[1] A. C. Neuman, M. Wroblewski, J. Hajicek, and A. Rubinstein, “Combined effects of noise and reverberation on speech recognition performance of normal-hearing children and adults,” Ear and Hearing, vol. 31, no. 3, pp. 336–344, Jun. 2010.
[2] T. Yoshioka, A. Sehr, M. Delcroix, K. Kinoshita, R. Maas, T. Nakatani, and W. Kellermann, “Making machines understand us in reverberant rooms: Robustness against reverberation for automatic speech recognition,” IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 114–126, Nov. 2012. [Online]. Available: http://ieeexplore.ieee.org/lpdocs/epic03/wrapper.htm?arnumber=6296524
[3] S. Boll, “A spectral subtraction algorithm for suppression of acoustic noise in speech,” in Acoustics, Speech, and Signal Processing, IEEE International Conference on ICASSP ’79., vol. 4, Apr. 1979, pp. 200–203.
[4] B. Cauchi, I. Kodrasi, R. Rehr, S. Gerlach, A. Jukić, T. Gerkmann, S. Doclo, and S. Goetze, “Combination of MVDR beamforming and single-channel spectral processing for enhancing noisy and reverberant speech,” EURASIP Journal on Advances in Signal Processing, vol. 2015, no. 1, pp. 1–12, 2015. [Online]. Available: http://link.springer.com/article/10.1186/s13634-015-0242-x
[5] M. Mimura, S. Sakai, and T. Kawahara, “Reverberant speech recognition combining deep neural networks and deep autoencoders augmented with a phone-class feature,” EURASIP Journal on Advances in Signal Processing, vol. 2015, no. 1, p. 62, Jul. 2015. [Online]. Available: http://asp.eurasipjournals.com/content/2015/1/62/abstract
[6] T. N. Sainath, R. J. Weiss, K. W. Wilson, A. Narayanan, and M. Bacchiani, “Factored spatial and spectral multichannel raw waveform CLDNNs,” in Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Conference on. IEEE, 2016, pp. 5075–5079. [Online]. Available: http://ieeexplore.ieee.org/abstract/document/7472644/
[7] Y. Xu, J. Du, L. R. Dai, and C. H. Lee, “A Regression Approach to Speech Enhancement Based on Deep Neural Networks,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 23, no. 1, pp. 7–19, Jan. 2015.
[8] K. Han, Y. Wang, D. Wang, W. S. Woods, I. Merks, and T. Zhang, “Learning spectral mapping for speech dereverberation and denoising,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 23, no. 6, pp. 982–992, 2015. [Online]. Available: http://dl.acm.org/citation.cfm?id=2817133
[9] F. Weninger, J. R. Hershey, J. Le Roux, and B. Schuller, “Discriminatively trained recurrent neural networks for single-channel speech separation,” in Signal and Information Processing (GlobalSIP), 2014 IEEE Global Conference on. IEEE, 2014, pp. 577–581. [Online]. Available: http://ieeexplore.ieee.org/abstract/document/7032183/
[10] D. Griffin and J. Lim, “Signal estimation from modified short-time Fourier transform,” IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 32, no. 2, pp. 236–243, Apr. 1984.
[11] B. Wu, K. Li, M. Yang, and C. H. Lee, “A study on target feature activation and normalization and their impacts on the performance of DNN based speech dereverberation systems,” in 2016 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA), Dec. 2016, pp. 1–4.
[12] B. Wu, K. Li, M. Yang, and C. H. Lee, “A Reverberation-Time-Aware Approach to Speech Dereverberation Based on Deep Neural Networks,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 25, no. 1, pp. 102–111, Jan. 2017.
[13] A. Keshavarz, S. Mosayyebpour, M. Biguesh, T. A. Gulliver, and M. Esmaeili, “Speech-Model Based Accurate Blind Reverberation Time Estimation Using an LPC Filter,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 6, pp. 1884–1893, Aug. 2012. [Online]. Available: http://ieeexplore.ieee.org/lpdocs/epic03/wrapper.htm?arnumber=6171837
[14] Y. Wang, A. Narayanan, and D. Wang, “On Training Targets for Supervised Speech Separation,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 22, no. 12, pp. 1849–1858, Dec. 2014.
[15] D. S. Williamson, Y. Wang, and D. Wang, “Complex ratio masking for joint enhancement of magnitude and phase,” in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Mar. 2016, pp. 5220–5224.
[16] X. Xiao, S. Zhao, D. H. H. Nguyen, X. Zhong, D. L. Jones, E. S. Chng, and H. Li, “Speech dereverberation for enhancement and recognition using dynamic features constrained deep neural networks and feature adaptation,” EURASIP Journal on Advances in Signal Processing, vol. 2016, no. 1, p. 4, 2016. [Online]. Available: http://link.springer.com/article/10.1186/s13634-015-0300-4
[17] M. M. S. S. T. Kawahara, “Speech dereverberation using long short-term memory,” 2015. [Online]. Available: https://pdfs.semanticscholar.org/b382/72d3b17c43452862943c40376e8fc2ad5eb5.pdf
[18] K. Cho, B. van Merrienboer, D. Bahdanau, and Y. Bengio, “On the properties of neural machine translation: Encoder-decoder approaches,” in Eighth Workshop on Syntax, Semantics and Structure in Statistical Translation, 2014. [Online]. Available: http://arxiv.org/abs/1409.1259
[19] J. Eaton, N. Gaubitch, A. Moore, and P. Naylor, “The ACE challenge - Corpus description and performance evaluation,” in 2015 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), Oct. 2015, pp. 1–5.
[20] M. Karjalainen, P. Ansalo, A. Mäkivirta, T. Peltonen, and V. Välimäki, “Estimation of modal decay parameters from noisy response measurements,” Journal of the Audio Engineering Society, vol. 50, no. 11, pp. 867–878, 2002. [Online]. Available: http://www.aes.org/e-lib/browse.cfm?elib=11059
[21] P. C. Loizou, “Speech enhancement based on perceptually motivated Bayesian estimators of the magnitude spectrum,” Speech and Audio Processing, IEEE Transactions on, vol. 13, no. 5, pp. 857–869, 2005. [Online]. Available: http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=1495469
[22] E. A. Lehmann and A. M. Johansson, “Diffuse reverberation model for efficient image-source simulation of room impulse responses,” Audio, Speech, and Language Processing, IEEE Transactions on, vol. 18, no. 6, pp. 1429–1439, 2010. [Online]. Available: http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=5299028
[23] J. S. Garofolo, L. F. Lamel, W. M. Fisher, J. G. Fiscus, D. S. Pallett, and N. L. Dahlgren, “TIMIT acoustic phonetic continuous speech corpus LDC93S1 (web download),” 1993.
[24] The PyTorch developers. PyTorch. http://pytorch.org.
[25] D. Kingma and J. Ba, “Adam: A method for stochastic optimization,” in International Conference for Learning Representations, 2015.
[26] ITU-T P.862, “Perceptual evaluation of speech quality: An objective method for end-to-end speech quality assessment of narrow-band telephone network and speech coders,” ITU Telecommunication Standardization Sector (ITU-T), Tech. Rep., 2001.
[27] ITU-T P.863, “Perceptual Objective Listening Quality Assessment (POLQA),” ITU Telecommunication Standardization Sector (ITU-T), Tech. Rep., 2011.
[28] C. H. Taal, R. C. Hendriks, R. Heusdens, and J. Jensen, “An algorithm for intelligibility prediction of time–frequency weighted noisy speech,” Audio, Speech, and Language Processing, IEEE Transactions on, vol. 19, no. 7, pp. 2125–2136, 2011.
[29] J. F. Santos, M. Senoussaoui, and T. H. Falk, “An updated objective intelligibility estimation metric for normal hearing listeners under noise and reverberation,” in International Workshop on Acoustic Signal Enhancement (IWAENC), September 2014, pp. 55–59.
[30] T. H. Falk, C. Zheng, and W.-Y. Chan, “A non-intrusive quality and intelligibility measure of reverberant and dereverberated speech,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 18, no. 7, pp. 1766–1774, Sep. 2010.
[31] B. Cauchi, H. Javed, T. Gerkmann, S. Doclo, S. Goetze, and P. Naylor, “Perceptual and instrumental evaluation of the perceived level of reverberation,” in 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), March 2016, pp. 629–633.
[32] J. F. Santos and T. H. Falk, “Supplementary materials for the paper “Speech dereverberation using context-aware recurrent neural networks”,” accessed on 24 July 2017. [Online]. Available: https://figshare.com/s/c639aed9049d5de00c1b
[33] A. Hannun, C. Case, J. Casper, B. Catanzaro, G. Diamos, E. Elsen, R. Prenger, S. Satheesh, S. Sengupta, A. Coates, and others, “DeepSpeech: Scaling up end-to-end speech recognition,” arXiv preprint arXiv:1412.5567, 2014. [Online]. Available: http://arxiv.org/abs/1412.5567
[34] A. Graves, “Generating sequences with recurrent neural networks,” arXiv preprint arXiv:1308.0850, 2013. [Online]. Available: http://arxiv.org/abs/1308.0850
[35] A. v. d. Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. Senior, and K. Kavukcuoglu, “WaveNet: A Generative Model for Raw Audio,” arXiv:1609.03499 [cs], Sep. 2016. [Online]. Available: http://arxiv.org/abs/1609.03499
[36] D. Williamson and D. Wang, “Time-Frequency Masking in the Complex Domain for Speech Dereverberation and Denoising,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. PP, no. 99, pp. 1–1, 2017.
[37] M. Senoussaoui, J. F. Santos, and T. H. Falk, “Speech temporal dynamics fusion approaches for noise-robust reverberation time estimation,” in 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Mar. 2017, pp. 5545–5549.
[38] F. Mayer, D. S. Williamson, P. Mowlaee, and D. Wang, “Impact of phase estimation on single-channel speech separation based on time-frequency masking,” The Journal of the Acoustical Society of America, vol. 141, no. 6, pp. 4668–4679, Jun. 2017. [Online]. Available: http://asa.scitation.org/doi/abs/10.1121/1.4986647
[39] A. Jukic, T. van Waterschoot, T. Gerkmann, and S. Doclo, “A general framework for incorporating time–frequency domain sparsity in multichannel speech dereverberation,” Journal of the Audio Engineering Society, vol. 65, no. 1/2, pp. 17–30, 2017.

João Felipe Santos received his bachelor's degree in electrical engineering from the Federal University of Santa Catarina (Brazil) in 2011 and an M.Sc. degree in telecommunications from the Institut National de la Recherche Scientifique (INRS) in 2014, where he entered the Dean’s honour list and was awarded the Best M.Sc. Thesis Award. He is currently a Ph.D. candidate in telecommunications at the same institute. His main research interest is in applications of deep learning to speech and audio signal processing (speech enhancement, speech synthesis, speech recognition, and audio scene classification).

Tiago H. Falk (SM’14) received the B.Sc. degree from the Federal University of Pernambuco, Brazil, in 2002, and the M.Sc. and Ph.D. degrees from Queen’s University, Canada, in 2005 and 2008, respectively, all in electrical engineering. In 2010, he joined INRS, Montreal, Canada, where he is currently an Associate Professor and heads the Multimedia/Multimodal Signal Analysis and Enhancement Laboratory. His research interests include multimedia/biomedical signal analysis and enhancement, pattern recognition, and their interplay in the development of biologically inspired technologies.
