Sound Event Detection in Synthetic Audio: Analysis of the DCASE 2016 Task Results


Authors: Gregoire Lafay (1), Emmanouil Benetos (2), Mathieu Lagrange (3) ((1) IRCCyN

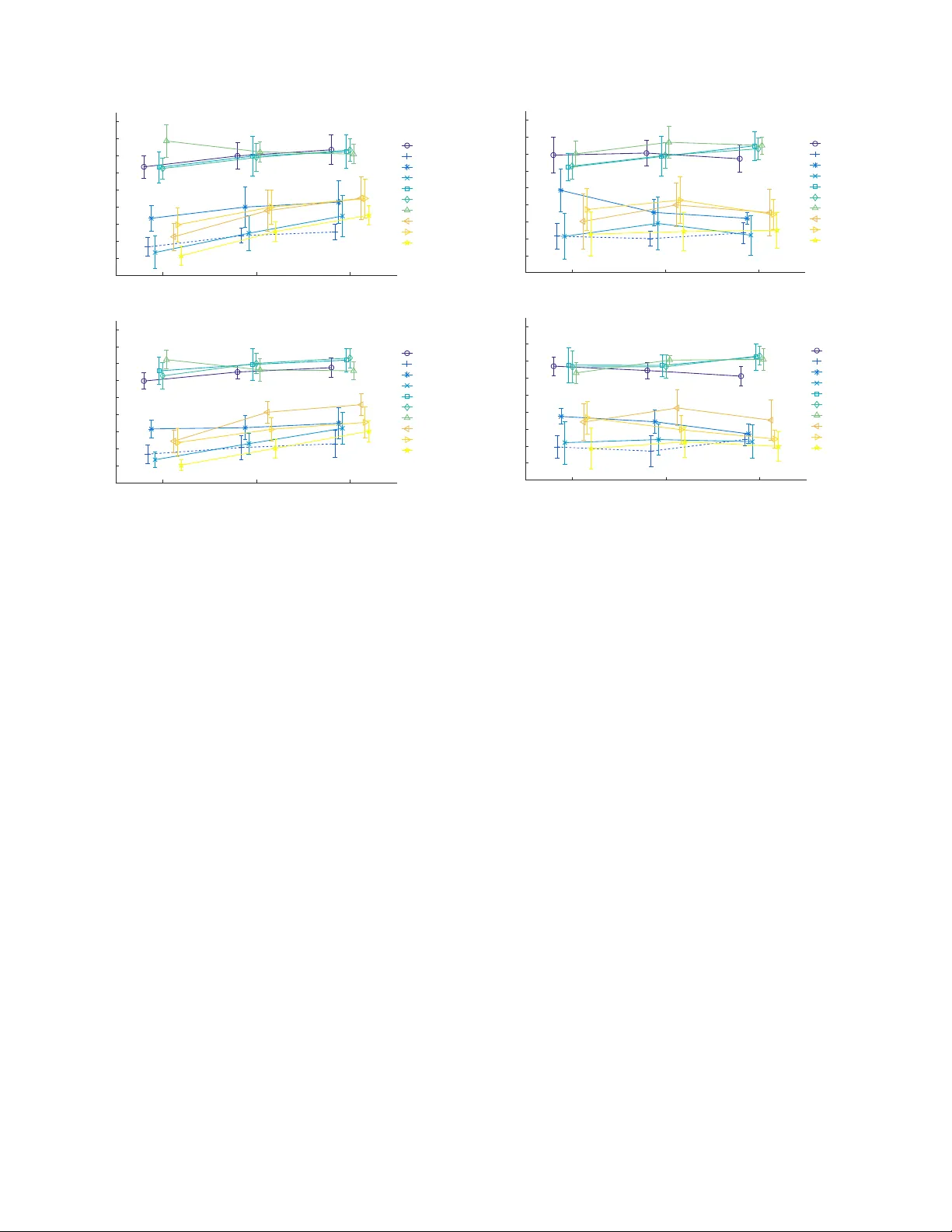

2017 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics, October 15-18, 2017, New Paltz, NY

Grégoire Lafay (1), Emmanouil Benetos (2) and Mathieu Lagrange (1)
(1) LS2N, CNRS, Ecole Centrale de Nantes, France
(2) School of EECS, Queen Mary University of London, UK

ABSTRACT

As part of the 2016 public evaluation challenge on Detection and Classification of Acoustic Scenes and Events (DCASE 2016), the second task focused on evaluating sound event detection systems using synthetic mixtures of office sounds. This task, which follows the ‘Event Detection - Office Synthetic’ task of DCASE 2013, studies the behaviour of tested algorithms when facing controlled levels of audio complexity with respect to background noise and polyphony/density, with the added benefit of a very accurate ground truth. This paper presents the task formulation, evaluation metrics and submitted systems, and provides a statistical analysis of the results achieved with respect to various aspects of the evaluation dataset.

Index Terms: Sound event detection, experimental validation, DCASE, acoustic scene analysis, sound scene analysis

1. INTRODUCTION

The emerging area of computational sound scene analysis (also called acoustic scene analysis) addresses the problem of automatically analysing environmental sounds, such as urban or nature sound environments [1]. Applications of sound scene analysis include smart homes/cities, security/surveillance, ambient assisted living, sound indexing, and acoustic ecology. A core problem in sound scene analysis is sound event detection (SED), which involves detecting and classifying the sounds of interest present in an audio recording, specified by start/end times and sound labels. A key challenge in SED is the detection of multiple overlapping sounds, also called polyphonic sound event detection.
To that end, the public evaluation challenge on Detection and Classification of Acoustic Scenes and Events (DCASE 2013) [1] ran tasks for both monophonic and polyphonic SED, attracting 7 and 3 submissions, respectively. The follow-up challenge, DCASE 2016 [2], attracted submissions from over 80 international teams across 4 different tasks.

Task 2 of DCASE 2016 [2], entitled “Sound event detection in synthetic audio”, follows the polyphonic “Event Detection - Office Synthetic” task of DCASE 2013. In the 2016 challenge, Task 2 attracted 10 submissions from 9 international teams, totalling 37 authors. The main aim of this task is to evaluate the performance of polyphonic sound event detection systems in office environments, using audio sequences generated by artificially concatenating isolated office sounds. The evaluation data was generated using varying levels of noise and polyphony. The benefits of this approach include a very accurate ground truth and the fact that scene complexity can be broken down into different types, allowing us to study finely the sensitivity of the evaluated algorithms to a given scene characteristic. Here, we evaluate differences between systems with respect to aspects of the dataset, including the event-to-background ratio (EBR), the scene type (monophonic vs. polyphonic scenes), and scene density. This paper presents the formulation of DCASE 2016 Task 2, including the generated datasets, evaluation metrics and submitted systems, as well as a detailed statistical analysis of the results achieved with respect to various aspects of the evaluation dataset.

EB is supported by a UK RAEng Research Fellowship (RF/128).

2. DATASET CREATION

2.1. Isolated Sounds

The isolated sound dataset used for simulating the scenes was recorded in the offices of LS2N (France). 11 sound classes were considered; they are presented in Table 1.
Compared to DCASE 2013, 5 classes are no longer considered: alert, printer, switch, mouse click and pen drop. The class alert was dropped because its definition is too broad and its sounds too diverse compared to the other classes. A detailed post-analysis of the previous challenge showed that the classes that had been poorly recognised and often confused were too short in duration. The class printer is no longer considered because its sounds are much longer than those of the other classes.

| Index | Name | Description |
|---|---|---|
| 1 | door knock | knocking a door |
| 2 | door slam | closing a door |
| 3 | speech | sentence of human speech |
| 4 | laugh | human laughter |
| 5 | throat | human clearing throat |
| 6 | cough | human coughing |
| 7 | drawer | open / close of a desk drawer |
| 8 | keyboard | computer keyboard typing |
| 9 | keys | dropping keys on a desk |
| 10 | phone | phone ringing |
| 11 | page turn | turning a page |

Table 1: Sound event classes used in DCASE 2016 - Task 2.

2.2. Simulation process

For simulating the recordings used for Task 2, the morphological sound scene model proposed in [3] is used. Two core parameters are considered to simulate the acoustic scenes:

• EBR: the event-to-background ratio, i.e. the average amplitude ratio between sound events and the background;
• nec: the number of events per class.

For each scene, the onset locations are randomly sampled from a uniform distribution. For monophonic scenes, a post-processing step that takes the duration of each event into account moves some onsets so as to satisfy the constraint that no two events overlap. Three levels are considered for each parameter: EBR: −6, 0 and +6 dB; nec: 1, 2 and 3 for monophonic scenes, and 3, 4 and 5 for polyphonic ones. Crossing the 3 EBR levels with the 3 nec levels for each of the 2 scene types yields 3 × 3 × 2 = 18 experimental conditions.

2.3. Task Datasets

The training dataset consists of 220 isolated sounds, 20 per event class (see Section 2.1). The scenes of the development dataset are built using the isolated sounds of the training dataset mixed with 1 background sample. One scene is built for each experimental condition, leading to 18 acoustic scenes. Only the EBR is an independent parameter, so for each combination of nec and monophony/polyphony, the chosen samples are different.

The scenes of the test dataset are built using the same rules from a set of 440 event samples (40 samples per class) and 3 background samples. For each of the 18 experimental conditions, the simulation is replicated 3 times, leading to a dataset of 54 acoustic scenes, each 2 minutes long. The onset locations differ between replications. The total durations of the development and test datasets are 34 and 108 minutes, respectively.

3. EVALUATION

3.1. Metrics

Among the four metrics considered in the DCASE 2016 challenge [4], the class-wise event-based F-measure (F_cweb) is used in this paper for the statistical analysis of the results. This metric, which was also used in DCASE 2013 [1] and for evaluating the scene synthesizer used in this work [3], considers a sound event correctly identified if its onset is within 200 ms of a ground-truth onset with the same class label. Results are computed per sound event class and then averaged over all classes.

3.2. Statistical Analysis

Contrary to the results published on the DCASE 2016 Challenge website [2], where performance is computed by concatenating all acoustic scenes along time and computing a single metric, here performance is computed separately for each acoustic scene of the evaluation corpus and averaged over specific groups of recordings, which allows us to perform statistical significance analyses.

The analysis is then done in 3 steps. First, results are considered globally, without considering the different experimental conditions individually. Differences between systems are analysed using a repeated measures ANOVA [5] with one factor, the system. Second, the performance difference between monophonic and polyphonic acoustic scenes is analysed using a repeated measures ANOVA with one within-subject factor (the system) and one between-subject factor (monophonic/polyphonic). Third, the impact of nec and EBR on system performance is evaluated separately for monophonic and polyphonic acoustic scenes. For nec, differences between systems are evaluated using a repeated measures ANOVA with one within-subject factor (the system) and one between-subject factor (nec). For the EBR factor, performance differences between systems are analysed using a repeated measures ANOVA with 2 within-subject factors, namely the system and the EBR.

| System | Features | Classifier | Background reduction / estimation |
|---|---|---|---|
| Komatsu [9] | VQT | NMF-MLD | x |
| Choi [10] | Mel | DNN | x (both) |
| Hayashi 1 [11] | Mel | BLSTM-PP | x |
| Hayashi 2 [11] | Mel | BLSTM-HMM | x |
| Phan [12] | GTCC | RF | x |
| Giannoulis [13] | Mel | CNMF | x |
| Pikrakis [14] | Bark | Template matching | x |
| Vu [15] | CQT | RNN | |
| Gutierrez [16] | MFCC | KNN | x |
| Kong [17] | Mel | DNN | |
| Baseline [18] | VQT | NMF | |

Table 2: DCASE 2016 - Task 2: description of submitted systems. For brevity, acronyms are defined in the respective citations.
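The per-scene F_cweb scores that feed these ANOVAs can be illustrated with a short sketch. It is not the official DCASE 2016 evaluation code. It assumes that events are given as (onset in seconds, label) pairs. Each estimated onset is matched greedily to the closest still-unmatched reference onset of the same class within the 200 ms collar of Section 3.1. Per-class F-measures are then averaged over every class that appears in either the reference or the estimate. The function name, the greedy matching and the handling of empty classes are all hypothetical choices; the official toolbox may differ on each of these points.

```python
from collections import defaultdict


def classwise_event_f_measure(reference, estimated, collar=0.2):
    """Class-wise event-based F-measure with an onset-only collar (in seconds).

    reference, estimated: lists of (onset_seconds, label) tuples for ONE scene.
    Hypothetical helper: greedy one-to-one matching within each class.
    """
    ref_by_class = defaultdict(list)
    est_by_class = defaultdict(list)
    for onset, label in reference:
        ref_by_class[label].append(onset)
    for onset, label in estimated:
        est_by_class[label].append(onset)

    scores = []
    for label in set(ref_by_class) | set(est_by_class):
        refs = ref_by_class[label]
        ests = sorted(est_by_class[label])
        matched = [False] * len(refs)
        tp = 0
        for est in ests:
            # Closest still-unmatched reference onset of the same class within the collar.
            candidates = [
                (abs(est - ref), j)
                for j, ref in enumerate(refs)
                if not matched[j] and abs(est - ref) <= collar
            ]
            if candidates:
                _, j = min(candidates)
                matched[j] = True
                tp += 1
        fp = len(ests) - tp  # estimated events with no matching reference
        fn = len(refs) - tp  # reference events that were never detected
        scores.append(2 * tp / (2 * tp + fp + fn))

    # Assumption: classes absent from both lists do not enter the average.
    return sum(scores) / len(scores) if scores else 0.0


reference = [(1.0, "cough"), (5.0, "cough"), (3.0, "laugh")]
estimated = [(1.15, "cough"), (3.5, "laugh"), (7.0, "keys")]
print(classwise_event_f_measure(reference, estimated))  # ≈ 0.222 (cough 2/3, laugh 0, keys 0)
```

Following Section 3.2, such a score would be computed once per test scene, giving 54 values per system, and then averaged within each group of recordings. Pooling all scenes before scoring would leave no per-scene variability for the ANOVA to work with.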
For the repeated measures ANOVA, sphericity is evaluated with a Mauchly test [6]. If sphericity is violated, the p-value is computed with a Greenhouse-Geisser correction [7]; in this case, the corrected p-value is denoted p_gg. Post hoc analysis follows the Tukey-Kramer procedure [8]. A significance threshold of α = 0.05 is chosen.

4. SYSTEMS

For Task 2, 10 systems were submitted by various international research laboratories, together with one baseline system provided by the authors. An outline of the evaluated systems is provided in Table 2. Most systems can be split into several successive processing blocks: a feature computation step, optionally preceded by a denoising step (either estimating the background level in a training stage or performing background reduction), and a classification step where one or several event labels may be triggered for each feature frame. For feature extraction, several design options were taken, summarised below:

• mel/bark: auditory-model-inspired time/frequency representations with a non-linear frequency axis
• VQT/CQT: variable- or constant-Q transforms
• MFCC: Mel-frequency cepstral coefficients
• GTCC: Gammatone filterbank cepstral coefficients

Several classifiers were considered to process these features, ranging from nearest-neighbour classifiers to spectrogram factorisation methods (such as NMF) to deep neural networks (such as RNNs). Classifiers are outlined in Table 2; more details can be found in the technical report of each submission [9]-[18].

5. RESULTS

5.1. Global Analysis

Results in terms of F_cweb are plotted in Fig. 1. An ANOVA on F_cweb shows a significant effect of the system: F[10, 530] = 466, p_gg < 0.01 (F denotes the F-statistic, and its arguments are the degrees of freedom for the systems and for the error, respectively).
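The degrees of freedom in brackets follow directly from the design. In the global analysis, 11 systems (10 submissions plus the baseline) are scored on the 54 test scenes, which act as subjects. The system effect therefore has 11 − 1 = 10 degrees of freedom and the error term has 10 × (54 − 1) = 530. The sketch below reproduces this bookkeeping. It also computes Box's estimate of the Greenhouse-Geisser epsilon from a subjects-by-conditions score matrix; both degrees of freedom are multiplied by epsilon before p_gg is read off the F distribution. This is an illustration, not the authors' analysis code, and the helper names are hypothetical. The epsilon function covers only a single within-subject factor without between-subject groups, and the final p-value lookup is omitted so that the example stays dependency-free.

```python
def within_df(n_levels, n_subjects, n_groups=1):
    """(effect, error) degrees of freedom for a within-subject factor.

    n_groups > 1 models an additional between-subject grouping factor (mixed design).
    """
    effect = n_levels - 1
    return effect, effect * (n_subjects - n_groups)


def between_df(n_groups, n_subjects):
    """(effect, error) degrees of freedom for a between-subject factor."""
    return n_groups - 1, n_subjects - n_groups


def greenhouse_geisser_epsilon(scores):
    """Box / Greenhouse-Geisser epsilon for one within-subject factor.

    scores: list of rows, one row per subject (scene), one column per condition (system).
    """
    n, k = len(scores), len(scores[0])
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Sample covariance matrix between conditions.
    cov = [
        [sum((row[a] - col_means[a]) * (row[b] - col_means[b]) for row in scores) / (n - 1)
         for b in range(k)]
        for a in range(k)
    ]
    # Double-centre the covariance (it is symmetric, so row means equal column means).
    row_means = [sum(r) / k for r in cov]
    grand_mean = sum(row_means) / k
    centred = [
        [cov[a][b] - row_means[a] - row_means[b] + grand_mean for b in range(k)]
        for a in range(k)
    ]
    trace = sum(centred[i][i] for i in range(k))
    trace_of_square = sum(x * x for r in centred for x in r)  # tr(C @ C) for symmetric C
    return trace ** 2 / ((k - 1) * trace_of_square)


print(within_df(11, 54))              # (10, 530): global analysis
print(within_df(10, 54, n_groups=2))  # (9, 468): systems, monophonic vs. polyphonic design
print(between_df(2, 54))              # (1, 52): scene-type factor
print(within_df(10, 9))               # (9, 72): assuming 9 subjects (3 nec x 3 replications) per EBR level
```

Epsilon always lies between 1/(k − 1) and 1, where k is the number of conditions. A value of 1 means that sphericity holds and the nominal degrees of freedom stand unchanged. Lower values shrink both degrees of freedom, which makes the test more conservative.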
Post hoc analysis clusters the systems into 4 groups, such that no pair of systems within a group differs significantly in performance:

1. Komatsu, Hayashi 1, Hayashi 2 and Choi: performance ranges from 67% (Choi) to 71% (Komatsu);
2. Phan, Giannoulis and Pikrakis: performance ranges from 34% (Pikrakis) to 36% (Phan);
3. Baseline, Vu and Gutierrez: performance ranges from 21% (Baseline) to 23% (Gutierrez);
4. Kong: performance is 2%.

Figure 1: Global performance of the systems submitted to DCASE 2016 - Task 2, in terms of F_cweb. Baseline performance is shown with a straight line.

Among the 10 systems, 7 significantly improve upon the Baseline. Systems of Group 2 improve on it by around 15 percentage points, while those of Group 1 reach an improvement close to 45 points. The impact of the chosen classifier is difficult to assess from these results, as the systems of Group 1 use a wide variety of architectures. Concerning features, though, 3 of the 4 use Mel spectrograms. Background estimation/reduction is important, as the 3 systems that do not explicitly handle it have the lowest performance.

The least performing system is Kong, as its results are systematically below the baseline. One possible explanation for this weak performance is poor management of the training phase of the DNN classifier [17]. Such architectures are known to require a large amount of data to be trained robustly, and without a data augmentation strategy such as the one used by Choi [10], the amount of data provided in the training dataset is not sufficient. The performance of Kong will therefore not be discussed further.

5.2. Monophonic vs. Polyphonic Scenes

Results by scene type (monophonic vs. polyphonic scenes) are plotted in Fig. 2. The ANOVA on F_cweb shows a significant effect of the system (F[9, 468] = 358, p_gg < 0.01), but no significant effect of polyphony (F[1, 52] = 3.5, p = 0.07). A significant interaction between scene type and system is nevertheless observed.

Thus, perhaps surprisingly, the scene type does not affect system performance: on average, the systems handle both scene types equivalently. Post hoc analysis on the scene type shows that among the 10 systems, 4 have reduced performance on polyphonic scenes: Choi, Giannoulis, Komatsu and Pikrakis. This probably explains the significant interaction between scene type and system shown by the ANOVA.

Post hoc analysis on the system, considering monophonic or polyphonic scenes, clusters the submitted systems into the same 3 groups as those identified with the global performance, with the Kong system removed. The only difference is the loss of the significant difference between systems Gutierrez and Pikrakis on polyphonic scenes.

Figure 2: Impact of polyphony on the performance of the systems submitted to DCASE 2016 - Task 2, in terms of F_cweb, for monophonic and polyphonic scenes. Baseline performance is shown with a straight line.

5.3. Background Level

Results for varying levels of background noise are plotted in Fig. 3a for monophonic scenes. The ANOVA shows a significant effect of the system (F[9, 72] = 80, p_gg < 0.01), of the EBR (F[2, 16] = 164, p_gg < 0.01), and of their interaction (F[18, 144] = 6.5, p_gg < 0.01).
This interaction reflects the fact that the higher the EBR, the better the system performs, which holds for all systems except one: Komatsu. In the post hoc analysis, we study whether the submitted systems perform significantly better than the baseline. To summarize the results for monophonic scenes, the Komatsu system achieves the best performance, particularly for high levels of background (EBR = −6 dB). It is the only system whose performance decreases as a function of the EBR, probably due to its noise estimation dictionary learned during the test phase. The systems of Choi, Hayashi 1 and Hayashi 2 systematically perform better than the others and match the Komatsu system for EBRs of 0 and +6 dB. For those 3 systems, raising the background level (EBR from +6 dB to −6 dB) leads to a performance drop of about 10%. The remaining systems outperform the baseline only for some EBR levels. These systems do not appear to handle the background robustly, as their performance drops notably as the background level increases, by 10 to 20% from an EBR of +6 dB to −6 dB. Only Gutierrez maintains its superiority over the baseline in all cases.

Considering polyphonic scenes, results are plotted in Fig. 3b. The ANOVA shows a significant effect of the system (F[9, 72] = 113, p_gg < 0.01), of the EBR (F[2, 16] = 127, p_gg < 0.01), and of their interaction (F[18, 144] = 15, p_gg < 0.01). As with monophonic scenes, the higher the EBR, the better the system performs, for all systems except Komatsu. Results for polyphonic scenes are thus similar to those for monophonic scenes, with two exceptions: 1) Vu, Pikrakis and Gutierrez perform equivalently to the baseline at all EBR levels, and 2) Phan performs above the baseline for EBRs of 0 and +6 dB.
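The EBR levels manipulated here, like the nec and scene-type factors, come from the simulation process of Section 2.2. The sketch below makes those parameters concrete, but several of its choices are assumptions rather than details taken from the paper or from the simulator of [3]. The "average amplitude" in the EBR definition is taken to be an RMS value converted to decibels with 20 log10. Onsets are drawn uniformly so that each event fits inside the scene. The monophonic post-processing is a hypothetical forward/backward pass that shifts onsets just enough to remove overlaps. All function names are hypothetical.

```python
import itertools
import math
import random

EBR_LEVELS_DB = (-6, 0, 6)
NEC_LEVELS = {"monophonic": (1, 2, 3), "polyphonic": (3, 4, 5)}


def experimental_conditions():
    """All (scene_type, nec, ebr_db) combinations: 2 x 3 x 3 = 18."""
    return [
        (scene_type, nec, ebr)
        for scene_type, levels in NEC_LEVELS.items()
        for nec, ebr in itertools.product(levels, EBR_LEVELS_DB)
    ]


def rms(samples):
    """Root-mean-square amplitude (assumed measure of 'average amplitude')."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def background_gain(event_rms, background_rms, ebr_db):
    """Gain g such that 20 * log10(event_rms / (g * background_rms)) == ebr_db."""
    return event_rms / (background_rms * 10 ** (ebr_db / 20))


def sample_onsets(durations, scene_length, monophonic, rng):
    """Uniform onsets; for monophonic scenes, shift onsets so that no two events overlap."""
    onsets = [rng.uniform(0.0, scene_length - d) for d in durations]
    if not monophonic:
        return onsets
    if sum(durations) > scene_length:
        raise ValueError("events cannot fit in a monophonic scene")
    order = sorted(range(len(durations)), key=lambda i: onsets[i])
    end = 0.0
    for i in order:  # forward pass: start each event after the previous one ends
        onsets[i] = max(onsets[i], end)
        end = onsets[i] + durations[i]
    limit = scene_length
    for i in reversed(order):  # backward pass: pull events back inside the scene
        onsets[i] = min(onsets[i], limit - durations[i])
        limit = onsets[i]
    return onsets


rng = random.Random(0)
print(len(experimental_conditions()))  # 18
# Moving from EBR = +6 dB to -6 dB multiplies the background amplitude by 10 ** 0.6, about 3.98.
print(background_gain(1.0, 1.0, -6) / background_gain(1.0, 1.0, 6))
durations = [rng.uniform(0.5, 3.0) for _ in range(11 * 2)]  # 11 classes, nec = 2 (hypothetical durations)
onsets = sample_onsets(durations, 120.0, monophonic=True, rng=rng)  # 2-minute scene
```

Under this reading, the 12 dB swing studied in this section corresponds to roughly a fourfold increase in background amplitude relative to the events. That helps explain why systems without explicit background handling degrade so markedly.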
Figure 3: Impact of the background level (EBR, in dB: −6, 0, 6) on the performance of the systems submitted to DCASE 2016 - Task 2, considering F_cweb for (a) monophonic and (b) polyphonic scenes.

5.4. Number of Events

Considering monophonic scenes, results are plotted in Fig. 4a. The ANOVA shows a significant effect of the system (F[9, 216] = 264, p_gg < 0.01), but not of the number of events nec (F[2, 24] = 0.5, p = 0.6). An interaction between the two can nevertheless be observed. Similar conclusions are obtained for polyphonic scenes (Fig. 4b): the ANOVA shows a significant effect of the system (F[9, 216] = 170, p_gg < 0.01), but not of nec (F[2, 24] = 0.1, p = 0.9), and some interaction between the two. Consequently, it is difficult to draw conclusions about the influence of nec on the differences between systems.

Some trends can nevertheless be observed. For monophonic scenes, increasing the number of events leads to a systematic performance improvement for 2 systems (Hayashi 1, Hayashi 2) and a decrease for 1 system (Giannoulis). For polyphonic scenes, an improvement is observed for 3 systems (Hayashi 1, Hayashi 2, Komatsu) and a decrease for 3 others (Giannoulis, Pikrakis, Choi). Unlike the background level, which on average reduces system performance, the number of events appears to have an impact that varies from system to system.
Hayashi 1 and Hayashi 2 react positively to an increase in nec, whereas the performance of Giannoulis systematically decreases, probably due to different miss detection / false alarm trade-offs.

6. DISCUSSION

Among the 3 experimental factors evaluated, only the EBR seems to have a significant influence on the performance of the algorithms. No significant impact is observed for polyphony or the number of events. These results clearly demonstrate the usefulness of carefully considering the background when designing a system. For all the EBRs considered, 4 systems (Hayashi 1, Hayashi 2, Komatsu and Choi) perform significantly better than the baseline. Among those, Komatsu is the only one that obtains significantly higher performance than the other systems, at an EBR of −6 dB. Thus, the Komatsu system can be considered the system with the best generalization capabilities, thanks to an efficient modelling of the background.

Figure 4: Impact of the number of events (nec) on the performance of the systems submitted to DCASE 2016 - Task 2, considering F_cweb for (a) monophonic (nec = 1, 2, 3) and (b) polyphonic (nec = 3, 4, 5) scenes.

In light of these results, we believe that considering simulated acoustic scenes is very useful for acquiring knowledge about the properties and behaviour of the evaluated systems in a wider variety of conditions than can be tested using recorded and annotated material. We acknowledge that simulated data alone cannot be used for a definitive ranking of systems.
However, relying only on recorded and manually annotated data, at today's scale, does not allow researchers to quantify precisely which aspect of scene complexity affects the relevant issues to tackle when designing recognition systems. Recorded data are indeed often scarce, as designing large datasets with a wide variety of recording conditions is complex and costly. Also, reaching a consensus between annotators can be hard.

Taking a more methodological view, such use of simulated data has recently received a lot of attention in machine learning research, inspired by experimental paradigms commonly used in experimental psychology and neuroscience, where special care is taken with the stimuli in order to probe a given property of the system under scrutiny. This principle has led to interesting outcomes, in a slightly different paradigm, for better understanding the inner behaviour of deep learning architectures [19, 20].

7. REFERENCES

[1] D. Stowell, D. Giannoulis, E. Benetos, M. Lagrange, and M. D. Plumbley, “Detection and classification of acoustic scenes and events,” IEEE Transactions on Multimedia, vol. 17, no. 10, pp. 1733–1746, October 2015.
[2] http://www.cs.tut.fi/sgn/arg/dcase2016/, 2016 challenge on Detection and Classification of Acoustic Scenes and Events (DCASE 2016).
[3] G. Lafay, M. Lagrange, M. Rossignol, E. Benetos, and A. Roebel, “A morphological model for simulating acoustic scenes and its application to sound event detection,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 10, pp. 1854–1864, October 2016.
[4] A. Mesaros, T. Heittola, and T. Virtanen, “Metrics for polyphonic sound event detection,” Applied Sciences, vol. 6, no. 6, 2016.
[5] J. Demšar, “Statistical comparisons of classifiers over multiple data sets,” Journal of Machine Learning Research, vol. 7, no. Jan, pp. 1–30, 2006.
[6] J. W. Mauchly, “Significance test for sphericity of a normal n-variate distribution,” Ann. Math. Statist., vol. 11, no. 2, pp. 204–209, June 1940.
[7] S. W. Greenhouse and S. Geisser, “On methods in the analysis of profile data,” Psychometrika, vol. 24, no. 2, pp. 95–112, 1959.
[8] J. W. Tukey, “Comparing individual means in the analysis of variance,” Biometrics, vol. 5, no. 2, pp. 99–114, 1949.
[9] T. Komatsu, T. Toizumi, R. Kondo, and Y. Senda, “Acoustic event detection method using semi-supervised non-negative matrix factorization with a mixture of local dictionaries,” DCASE2016 Challenge, Tech. Rep., September 2016.
[10] I. Choi, K. Kwon, S. H. Bae, and N. S. Kim, “DNN-based sound event detection with exemplar-based approach for noise reduction,” DCASE2016 Challenge, Tech. Rep., September 2016.
[11] T. Hayashi, S. Watanabe, T. Toda, T. Hori, J. L. Roux, and K. Takeda, “Bidirectional LSTM-HMM hybrid system for polyphonic sound event detection,” DCASE2016 Challenge, Tech. Rep., September 2016.
[12] H. Phan, L. Hertel, M. Maass, P. Koch, and A. Mertins, “Car-forest: Joint classification-regression decision forests for overlapping audio event detection,” DCASE2016 Challenge, Tech. Rep., September 2016.
[13] P. Giannoulis, G. Potamianos, P. Maragos, and A. Katsamanis, “Improved dictionary selection and detection schemes in sparse-cnmf-based overlapping acoustic event detection,” DCASE2016 Challenge, Tech. Rep., September 2016.
[14] A. Pikrakis and Y. Kopsinis, “Dictionary learning assisted template matching for audio event detection (legato),” DCASE2016 Challenge, Tech. Rep., September 2016.
[15] T. H. Vu and J.-C. Wang, “Acoustic scene and event recognition using recurrent neural networks,” DCASE2016 Challenge, Tech. Rep., September 2016.
[16] J. Gutierrez-Arriola, R. Fraile, A. Camacho, T. Durand, J. Jarrin, and S. Mendoza, “Synthetic sound event detection based on MFCC,” DCASE2016 Challenge, Tech. Rep., September 2016.
[17] Q. Kong, I. Sobieraj, W. Wang, and M. Plumbley, “Deep neural network baseline for DCASE challenge 2016,” DCASE2016 Challenge, Tech. Rep., September 2016.
[18] E. Benetos, G. Lafay, and M. Lagrange, “DCASE2016 task 2 baseline,” DCASE2016 Challenge, Tech. Rep., September 2016.
[19] I. J. Goodfellow, J. Shlens, and C. Szegedy, “Explaining and harnessing adversarial examples,” in International Conference on Learning Representations, May 2015.
[20] A. Nguyen, J. Yosinski, and J. Clune, “Deep neural networks are easily fooled: High confidence predictions for unrecognizable images,” in 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2015, pp. 427–436.
