Feature extraction using Latent Dirichlet Allocation and Neural Networks: A case study on movie synopses

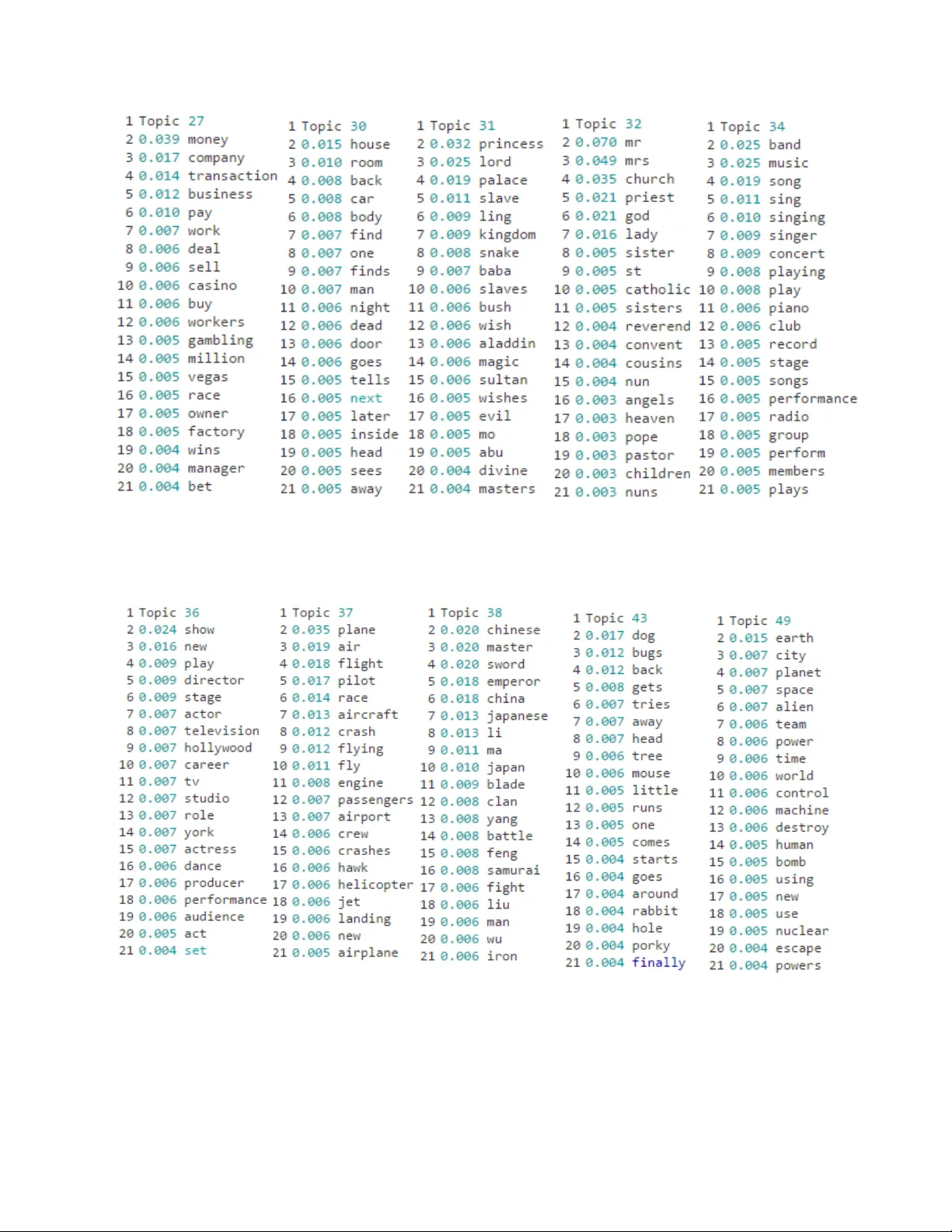

Feature extraction has gained increasing attention in machine learning, because detecting patterns, extracting information, or predicting future observations from big data all depend on informative features. The process of extrac…

Authors: Despoina Christou