Actively Learning to Attract Followers on Twitter

Twitter, a popular social network, presents great opportunities for on-line machine learning research. However, previous research has focused almost entirely on learning from passively collected data. We study the problem of learning to acquire follo…

Authors: Nir Levine, Timothy A. Mann, Shie Mannor

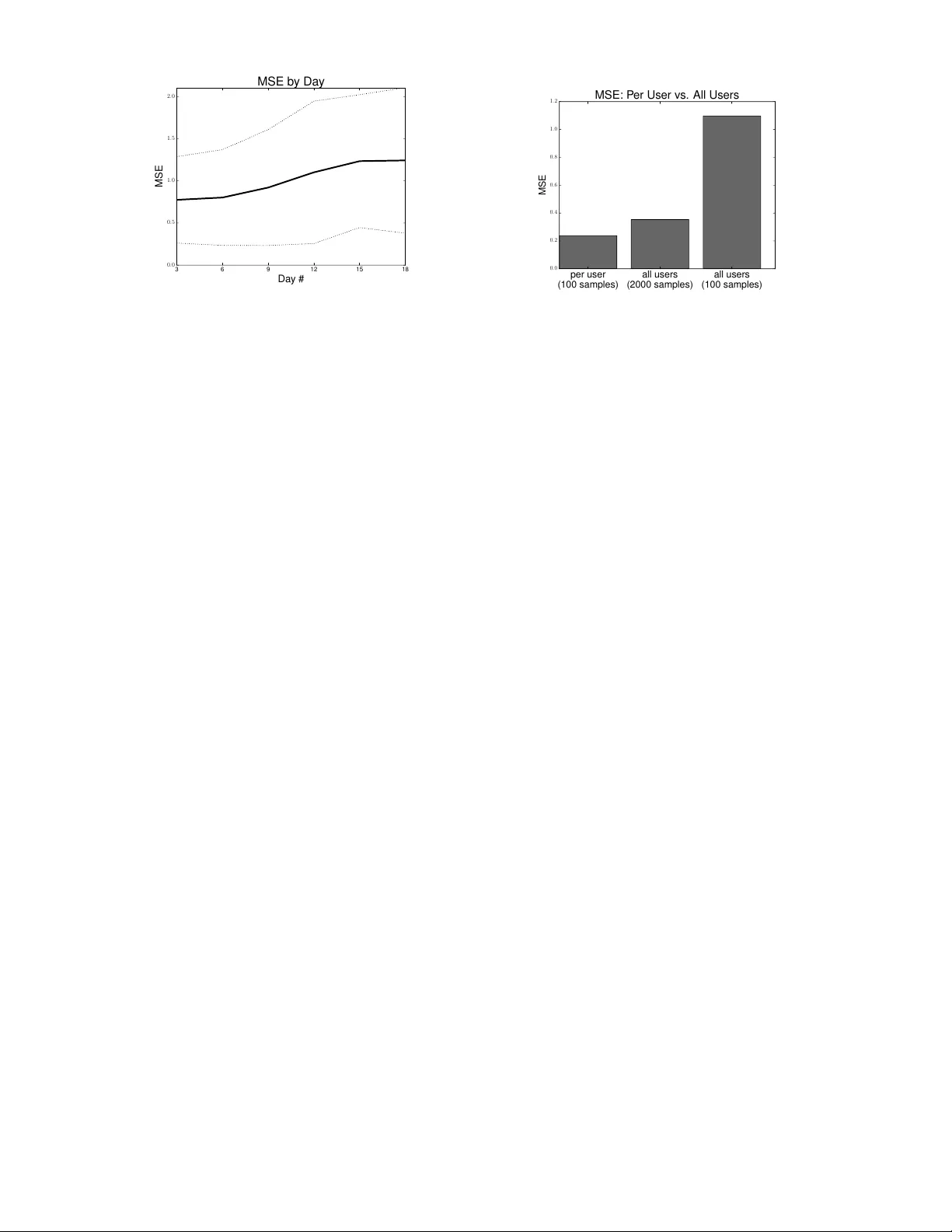

Nir Levine, Electrical Engineering, The Technion, Haifa, Israel, levinir@campus.technion.ac.il
Timothy A. Mann, Electrical Engineering, The Technion, Haifa, Israel, mann@ee.technion.ac.il
Shie Mannor, Electrical Engineering, The Technion, Haifa, Israel, shie@ee.technion.ac.il

Abstract

Twitter, a popular social network, presents great opportunities for on-line machine learning research. However, previous research has focused almost entirely on learning from passively collected data. We study the problem of learning to acquire followers through normative user behavior, as opposed to the mass following policies applied by many bots. We formalize the problem as a contextual bandit problem, in which we consider retweeting content to be the action chosen and each tweet (content) is accompanied by context. We design reward signals based on the change in followers. The results of our month-long experiment with 60 agents suggest that (1) aggregating experience across agents can adversely impact prediction accuracy and (2) the Twitter community's response to different actions is non-stationary. Our findings suggest that actively learning on-line can provide deeper insights about how to attract followers than machine learning over passively collected data alone.

Keywords: Reinforcement Learning, On-line Learning, Contextual Bandits, Twitter

Acknowledgements

The research leading to these results has received funding from the European Research Council under the European Union's Seventh Framework Programme (FP/2007-2013) / ERC Grant Agreement n.306638.

1 Introduction

Twitter is an on-line social network that allows people to post short messages (140 characters maximum), called status updates or tweets. It also allows users to read status updates posted by other users. Two additional actions are: (1) re-posting another user's status update, an action called retweeting, and (2) favoriting a tweet, i.e.
the tweet is marked as a favorite of the user. As one of the largest social networks, Twitter provides a great opportunity for evaluating machine learning algorithms on real-world data and evaluating them on-line.

We target the problem of attracting followers in a community on Twitter and argue that actively learning can provide deeper insights than learning over passively collected data alone. Without performing active experiments, it is difficult to determine whether factors turned up in the analysis are only correlated, but not causally related, with attracting followers. Moreover, these factors may change over time or vary depending on the history of an individual user. Therefore, running experiments where algorithms control an account (rather than simply observing its behavior) can provide useful insights into the development of on-line relationships.

One difficulty of creating agents with the goal of acquiring followers is that naive exploitative strategies, such as mass following, are quite successful. This kind of aggressive following policy is easily labeled as bot behavior and is treated accordingly by the Twitter community. Our objective is to learn strategies that attract followers but avoid violating behavioral norms. By doing so, the agents receive followers based on providing a valuable service rather than exploiting other users.

Twitter has been the subject of intense research. Most of the work so far applied machine learning to passively collected data, as opposed to learning from data collected on-line. However, collecting data on-line allows the agent to respond to the social network in real time and choose the actions most suitable to that time. This allows us to learn what causes users to follow (not just what is correlated).
Although the problem of acquiring followers seems to be a popular subject on the Internet, we are unaware of any academic research that has examined an on-line approach for learning to attract followers.

The main contributions of this work are:
(1) We formalized the problem of learning to attract followers as a contextual bandit problem [3]. Our formulation encourages normative (rather than exploitative) behaviors, because the action space focuses on retweeting content (not following users).
(2) We executed a month-long on-line experiment with 60 agents. Each agent interacted directly with the Twitter API and controlled a live Twitter account.
(3) We provide evidence for the advantages of active learning over learning from passively collected data: the reward signal, based on the change in followers, is non-stationary. Analyzing a data set collected in the past may result in poor performance, and more data is not always better. Although we had limited data for each individual agent, we found that aggregating data from multiple agents resulted in less accurate prediction. Therefore, learning should be applied to individual agents.

2 The Setting

One of the main contributions of this work is the development of a well-defined problem for actively learning in a social network. To our knowledge, no previous work has proposed a well-defined, active problem in social networks. We allow a learning system to control a single user account on the Twitter social network. We will refer to this learning system as an agent.

Our first intuition was to identify a multi-armed bandit problem [2], where the agent plays in a series of rounds. Let $\mathcal{A}$ be a set of $K \ge 2$ actions. At each round, the agent selects an action $a \in \mathcal{A}$ associated with an initially unknown probability distribution over rewards. Once an action is selected, a reward is sampled from its corresponding distribution. The goal of the agent is to maximize its expected reward.
To formalize a problem as a multi-armed bandit, we needed to answer a few questions: (1) What are the rewards? (2) What are the actions available to the agent? (3) What is a round?

2.0.1 Rewards

Our objective is to design an agent that learns how to acquire followers. However, gaining followers is a rare event. A reward based entirely on the change in followers is difficult to learn from because the signal is sparse. Thus, we constructed reward signals from both changes in the number of followers and other related events, as explained in Section 3.

2.0.2 Actions

The number of messages that can be expressed in 140 characters is too large to address directly. Additionally, users can perform other actions such as following other users, retweeting existing status updates, and favoriting tweets, thereby significantly increasing the number of possible actions. However, a traditional multi-armed bandit problem assumes that the number of actions $K$ is reasonably small.

In our experiment we used other users' content as actions rather than trying to invent new content. Furthermore, we restricted the set of tweets considered by the agent for retweeting to recent tweets containing the word "baseball". We chose the domain "baseball" because it has a large community but still represents a specialized domain where agents can learn to retweet valuable content.

Since in our setting it is very unlikely that a specific action is sampled more than once, we modeled the problem as a contextual bandit problem [3], which is a generalization of the multi-armed bandit problem. In each trial $t$, the algorithm observes a set $\mathcal{A}_t$ of possible actions sampled from a distribution $\rho$ with support $\mathcal{A}$, where $\mathcal{A}$ is the collection of all tweets. Each action $a \in \mathcal{A}_t$ corresponds to a status update that can be retweeted and is described by a feature vector $x_{t,a}$. This feature vector $x_{t,a}$ describes properties of the action and the state of the environment.
After choosing an action, the algorithm receives a reward $r_{t,a_t}$ from which it learns and improves for the next trial. The objective of the agent is to learn a decision rule $\mu$ that maximizes

$$r = \mathbb{E}_{\mathcal{A}_t \sim \rho}\left[ r_{t,a_t} \mid \mu \right], \qquad (1)$$

where $r$ is the expected reward. In our experiment we use decision rules of the form

$$\mu(\mathcal{A}_t) = \arg\max_{a \in \mathcal{A}_t} f(x_{t,a}), \qquad (2)$$

where $f$ is a learned function that predicts the expected reward. Due to the high dimension of the feature space $X = \{x_{t,a}\}$, we estimate rewards using function approximation/regression. The result of function approximation is a function $f : X \to \mathbb{R}$ that predicts the reward received for selecting the action $a \in \mathcal{A}_t$ corresponding to $x_{t,a}$.

2.0.3 Rounds

A round could potentially be any constant length of time. However, enforcing normative behavior while leaving enough time between actions for other users to respond led us to choose a time period of one hour between rounds.

A typical round in our setting is as follows. First, the agent requests and receives a collection of recent tweets from the Twitter API about a specialized domain (e.g., baseball). Next, the agent examines the tweets it received from the Twitter API and retweets one of them. Finally, the agent sleeps for an hour and requests information about what changed. The agent then uses this information to calculate a reward and updates its decision rule. This finishes the round and a new round begins immediately.

2.0.4 The Exploration-Exploitation Dilemma

Exploration, trying actions with uncertain reward, is a critical issue in multi-armed bandit problems. We want the agent to choose an action that will have a high probability of attracting followers (exploitation), but the agent also needs to try various actions to learn what attracts followers (exploration).
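Decision rule (2), combined with one simple way of trading exploitation against exploration, can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the linear form of the predictor, the function names `linear_predictor` and `select_action`, and the parameter `epsilon` (the probability of acting at random) are all assumptions introduced here.

```python
import random
from typing import Callable, Sequence

Features = Sequence[float]


def linear_predictor(weights: Sequence[float]) -> Callable[[Features], float]:
    """Return f(x) = w . x, a hypothetical stand-in for the learned predictor f."""
    def f(x: Features) -> float:
        return sum(w * xi for w, xi in zip(weights, x))
    return f


def select_action(
    candidates: Sequence[Features],
    f: Callable[[Features], float],
    epsilon: float,
    rng: random.Random,
) -> int:
    """Return the index of the candidate tweet to retweet.

    With probability epsilon a uniformly random candidate is chosen
    (exploration); otherwise the candidate with the highest predicted
    reward is chosen, as in decision rule (2) (exploitation).
    """
    if not candidates:
        raise ValueError("at least one candidate action is required")
    if rng.random() < epsilon:
        return rng.randrange(len(candidates))
    return max(range(len(candidates)), key=lambda i: f(candidates[i]))
```

Passing the random generator explicitly keeps the selection reproducible, which makes it easier to replay a sequence of rounds when analyzing an agent's history.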
For simplicity, we use the popular $\epsilon$-greedy exploration strategy, which selects a random action with probability $\epsilon$ and the action with the highest predicted reward with probability $(1 - \epsilon)$.

3 Experiment

We designed and executed an experiment to determine whether simple machine learning algorithms could learn to attract more followers on Twitter than a random baseline. We created 60 Twitter accounts, and each agent controlled one account. Every hour $t \ge 0$, each agent requested a collection of tweets $\mathcal{A}_t$ from Twitter. The agents selected a tweet $a_t \in \mathcal{A}_t$ (i.e., a status update) to be retweeted based on a list of features extracted from the tweets, $x_{t,a_t}$. One hour later, a reward signal for $a_t$ was computed by the agent. In line with our objective of maintaining normative behavior, agents only followed the user that posted $a_t$ (before the agent retweeted it) with probability $P(\text{follow}) = 0.5$. The entire experiment was performed using the Twitter API.¹

The reward signal used during the experiment was

$$r_{t,a_t} = \alpha_0 \Delta_{a,t} + \alpha_1 \Delta_{u,t} + \alpha_2 f_t + \alpha_3 w_t, \qquad (3)$$

where $\Delta_{a,t}$ is the change in the agent's number of followers, $\Delta_{u,t}$ is the change in the number of followers of the tweet's original poster, $f_t$ is the number of favorites the tweet received, and $w_t$ is the number of retweets made of this tweet. The coefficients were $\alpha_0 = 100$, $\alpha_1 = 10$, $\alpha_2 = 10$, and $\alpha_3 = 1$. In line with our objective, we gave significantly higher weight to the change in the agent's number of followers.

Our experiment focused on tweets containing the string "baseball", because there is a constant flow of status updates and the topic is specialized enough that an agent might learn useful knowledge about the domain. Status updates with offensive language were filtered before the agent made a selection.

We divided the agents into three groups of 20: (1) uniform random (UR), (2) a gradient-based estimator (GE), and (3) a batch-based estimator (BE).
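As a worked illustration of reward signal (3), the sketch below computes the reward from the four observed quantities using the coefficients stated above. The class name `RoundObservation`, its field names, and the function `reward` are hypothetical and introduced only for this example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RoundObservation:
    """Quantities observed one hour after a retweet (hypothetical container)."""
    agent_follower_change: int   # Delta_{a,t}: change in the agent's followers
    poster_follower_change: int  # Delta_{u,t}: change in the original poster's followers
    favorites: int               # f_t: favorites the tweet received
    retweets: int                # w_t: retweets made of the tweet


# Coefficients (alpha_0, alpha_1, alpha_2, alpha_3) as stated in the text.
ALPHA: Tuple[float, float, float, float] = (100.0, 10.0, 10.0, 1.0)


def reward(obs: RoundObservation, alpha: Tuple[float, float, float, float] = ALPHA) -> float:
    """Weighted sum of the four observed quantities, following equation (3)."""
    a0, a1, a2, a3 = alpha
    return (
        a0 * obs.agent_follower_change
        + a1 * obs.poster_follower_change
        + a2 * obs.favorites
        + a3 * obs.retweets
    )
```

For instance, gaining one follower while the poster gains two, with three favorites and four retweets, gives 100 + 20 + 30 + 4 = 154. Losing a follower contributes a negative term, so the reward can be negative.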
GE and BE estimated the reward signal for each status update from a collection of 87 features. We extracted features from the tweet and the user that posted it. We selected features based on prior work, such as [6, 5], along with others. To encourage exploration, GE and BE selected a status update according to the uniform random rule with probability $\epsilon = 0.05$.

¹ https://dev.twitter.com/docs/api/1.1

3.1 Uniform Random (UR) Agents

The baseline UR algorithm selected retweets according to a uniform random distribution over the set of status updates. Thus, UR does not do any learning.

3.2 Gradient Estimator (GE) Agents

The GE algorithm incrementally updated a linear function approximator. GE applied gradient descent to minimize Mean Squared Error (MSE) and used a constant learning rate ($\eta = 0.1$).

To better utilize the data, we introduced an adviser [4] to the GE algorithm. The adviser observes tweets not retweeted by the agent and, one hour later, at the same time the agent observes its reward, the adviser computes a modified reward signal

$$r'_{t,a_t} = \beta_0 \Delta_{a,u_t} + \beta_1 f_{a,t} + \beta_2 w_{a,t}, \qquad (4)$$

for the other tweets, where $\Delta_{a,u_t}$ is the change in the number of followers of the tweet's original poster, $f_{a,t}$ is the number of favorites the tweet received, $w_{a,t}$ is the number of retweets made of this tweet, and the coefficients are $\beta_0 = 10$, $\beta_1 = 10$, and $\beta_2 = 1$. Then, the adviser generates a hypothesis using a batch training approach. The hypothesis generated by the adviser is weighted with the hypothesis generated by the agent.

To reduce noise in the reward signal, GE only takes a learning step every 8 hours, averaging over all the hypotheses to generate a new hypothesis, as suggested by [1].

3.3 Batch-based Estimator (BE) Agents

The BE algorithm used Ordinary Least Squares (OLS) to train a linear function approximator after each round on all instances where the algorithm received the reward signal (3).
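For contrast with the batch approach, the incremental update of the GE agents (Section 3.2) and the adviser's modified reward (4) can be sketched as follows. This is a sketch under stated assumptions: the per-sample loss is taken to be half the squared error, so the gradient is the prediction error times the feature vector, and the function names `sgd_step` and `adviser_reward` are hypothetical. The 8-hour averaging of hypotheses and the weighting between agent and adviser are not reproduced here, since the text does not specify them in detail.

```python
from typing import List, Sequence


def sgd_step(
    weights: Sequence[float],
    x: Sequence[float],
    observed_reward: float,
    eta: float = 0.1,
) -> List[float]:
    """One gradient-descent step for a linear predictor f(x) = w . x.

    Assumes the per-sample loss 0.5 * (w . x - r)^2, whose gradient with
    respect to w is (w . x - r) * x. eta = 0.1 is the constant learning
    rate stated in the text.
    """
    prediction = sum(w * xi for w, xi in zip(weights, x))
    error = prediction - observed_reward
    return [w - eta * error * xi for w, xi in zip(weights, x)]


def adviser_reward(
    poster_follower_change: int,
    favorites: int,
    retweets: int,
    beta: Sequence[float] = (10.0, 10.0, 1.0),
) -> float:
    """Modified reward (4) for tweets the agent did not retweet."""
    b0, b1, b2 = beta
    return b0 * poster_follower_change + b1 * favorites + b2 * retweets
```

Note that (4) has no term for the agent's own follower change, because the agent did not retweet those tweets; only the tweet-level signals remain.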
All samples were weighted the same and the algorithm minimized MSE over the training samples. The BE algorithm used only samples it had selected to retweet (i.e., it did not use an adviser like the GE agents).

4 Experimental Results

We ran the experiment for about one month (May 9 to June 11, 2014), generating more than 600 status updates for each account. At the end of the experiment, the average number of followers for the different groups was 41.1 for UR, 41.6 for BE, and 44.95 for GE. Thus, GE acquired about 10% more followers on average than UR. A one-sided t-test on the UR and GE groups shows that the difference is statistically significant with $p = 0.0291$ (i.e., 97.09% confidence that the two groups are generated by distributions with different means). However, the difference between the UR and BE groups was not significant (see the next section for details).

Two weeks before the end of the experiment, the average number of followers was 23.6, 23.6, and 23.8 for BE, UR, and GE, respectively. However, over the last two weeks the difference between UR and GE grew 11-fold. This demonstrates that machine learning can attract more followers than a random strategy. It also raises the question: why did GE outperform UR, while BE did not? In the next section, we analyze our experimental data to gain insight into attracting followers.

5 Importance of Active Learning

For comparison, throughout the analysis we normalized the rewards to have zero mean and a standard deviation of one.

We noticed the poor performance of the BE algorithm. When we tested the performance off-line, we found that Ordinary Least Squares (OLS) resulted in divergence. Therefore, while analyzing the data we used different function approximation models: Ridge Regression, LASSO, Elastic Net, and Support Vector Regression (SVR).² These methods apply types of regularization and thus gave better results (in off-line training).
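The reward normalization mentioned at the start of this section (zero mean, unit standard deviation) can be sketched as below. The text does not say whether the population or the sample standard deviation was used; the population version is assumed here, and the function name `normalize_rewards` is hypothetical.

```python
import statistics
from typing import List, Sequence


def normalize_rewards(rewards: Sequence[float]) -> List[float]:
    """Rescale rewards to zero mean and unit standard deviation.

    Assumption: the population standard deviation is used. If every reward
    is identical, the spread is zero, so all normalized values are set to
    0.0 instead of dividing by zero.
    """
    mean = statistics.fmean(rewards)
    spread = statistics.pstdev(rewards)
    if spread == 0.0:
        return [0.0 for _ in rewards]
    return [(r - mean) / spread for r in rewards]
```

Normalizing this way puts MSE values from different agents on a common scale: a predictor that always outputs the mean reward has an MSE of about 1 on the normalized data it was fitted to.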
In this section we show the importance of actively learning from data as opposed to learning from passively collected data. We identify two main problems in learning from passively collected data: (1) the relationship between the features and the reward function appears to be non-stationary, and (2) generalizing between agents tends to degrade prediction accuracy.

5.1 Nonstationarity

By examining the data, we found considerable evidence that the reward signal is a non-stationary function of our features. Thus, training only on passively collected data is probably not satisfactory, because the best actions for acquiring followers seem to be time sensitive.

For each BE agent's data (sorted chronologically), we trained and evaluated an SVR model (the model that achieved the smallest MSE). We divided the samples into chunks containing 100 sequential instances. The chunk size was selected experimentally as the one yielding the smallest MSE. Each chunk was split into 75% training data and 25% testing data. Finally, we took the median MSE over all of the agents. The median MSE was 0.24, an improvement of 15% over training with all the data together. Thus, training with all data resulted in more error.

² The implementations used in our analysis are available at http://scikit-learn.org.

Figure 1 (plot titled "MSE by Day"; x-axis: Day #, y-axis: MSE): MSE of an SVR algorithm trained on the first 100 samples and tested on the remainder of the data. The MSE is plotted as a function of time (in days) after the 100 samples were collected. The error increases over time, indicating that the reward signal we are trying to predict is nonstationary.

Figure 2 (plot titled "MSE: Per User vs. All Users"; bars: per user (100 samples), all users (2000 samples), all users (100 samples); y-axis: MSE): Comparison of MSE per user with 100 samples, all users with 2000 samples, and all users with 100 samples (75% training, 25% test).
Combining data between users negatively impacts prediction accuracy.

Next, we looked at predicting the number of followers rather than the reward signal (3) used in our experiments. For each agent, we trained SVR on the first 100 samples (sorted chronologically) and then used the remaining samples as test data. Figure 1 shows that the MSE increases as the experiment progresses. This is consistent with our hypothesis that the best strategy for attracting followers changes over time.

These findings suggest that the reward signal is non-stationary; therefore, learning from on-line data may result in more accurate predictions than learning from passively collected data.

5.2 Generalizing Across Users

We examined the evolution of the weights learned by the GE agents. Specifically, we examined the median values and standard deviations of the weights across all the agents. The median values did not converge to a single point in weight space. On the contrary, the standard deviations increased over time, meaning the agents were learning different hypotheses.

Figure 2 shows the increase in error for BE agents when generalizing between agents compared to training on a single agent's data. We show the MSE for an SVR algorithm in three different cases: (1) per user with 100 samples, (2) combined data with 2000 samples, and (3) combined data with 100 samples (using a moving window over the data).

The MSE increases when generalizing over data from multiple users. When we used the same sample size (100), the MSE increased dramatically (around 4-fold). Even when we used a training set 20 times larger for the combined users' data (corresponding to 100 samples from each agent), the MSE still increased compared to the per-user setup. This demonstrates the importance of an agent learning from its own history. Simply generalizing over multiple agents' histories actually degrades performance, even with significantly more training data.
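The chunked evaluation protocol from Section 5.1 (sequential chunks, a 75%/25% split within each chunk, MSE on the held-out part) can be sketched as follows. Several details are assumptions, not taken from the text: the split inside each chunk is chronological, an incomplete trailing chunk is dropped, and a mean-reward baseline (`fit_mean_baseline`) stands in for SVR so that the example needs only the standard library. All function names are hypothetical.

```python
from statistics import fmean
from typing import Callable, List, Sequence, Tuple

Sample = Tuple[Sequence[float], float]        # (feature vector, normalized reward)
Model = Callable[[Sequence[float]], float]    # maps features to a predicted reward


def fit_mean_baseline(train: Sequence[Sample]) -> Model:
    """Hypothetical stand-in for SVR: always predict the mean training reward."""
    mean_reward = fmean(r for _, r in train)
    return lambda x: mean_reward


def chunked_mse(
    samples: Sequence[Sample],
    fit: Callable[[Sequence[Sample]], Model],
    chunk_size: int = 100,
    train_fraction: float = 0.75,
) -> List[float]:
    """Return the test MSE of each sequential chunk of one agent's data.

    samples must already be sorted chronologically. Each chunk is split
    into a leading training part and a trailing test part (assumption),
    and any incomplete trailing chunk is ignored (assumption).
    """
    scores: List[float] = []
    for start in range(0, len(samples) - chunk_size + 1, chunk_size):
        chunk = samples[start:start + chunk_size]
        split = int(chunk_size * train_fraction)
        train, test = chunk[:split], chunk[split:]
        model = fit(train)
        scores.append(fmean((model(x) - r) ** 2 for x, r in test))
    return scores
```

Running `chunked_mse` on one agent's data versus the pooled data of all agents gives the kind of per-user versus all-users comparison summarized in Figure 2, with any regressor that matches the `fit` signature substituted for the baseline.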
References

[1] Ofer Dekel and Yoram Singer. Data-driven online to batch conversions. In NIPS, volume 18, page 267, 2005.
[2] Tze Leung Lai and Herbert Robbins. Asymptotically efficient adaptive allocation rules. Advances in Applied Mathematics, 6(1):4–22, 1985.
[3] Lihong Li, Wei Chu, John Langford, and Robert E. Schapire. A contextual-bandit approach to personalized news article recommendation. In Proceedings of the 19th International Conference on World Wide Web, WWW '10, pages 661–670, New York, NY, USA, 2010. ACM.
[4] Vinay N. Papudesi and Manfred Huber. Learning from reinforcement and advice using composite reward functions. In FLAIRS Conference, pages 361–365, 2003.
[5] Bongwon Suh, Lichan Hong, Peter Pirolli, and Ed H. Chi. Want to be retweeted? Large scale analytics on factors impacting retweet in Twitter network. In Social Computing (SocialCom), 2010 IEEE Second International Conference on, pages 177–184. IEEE, 2010.
[6] Dan Zarrella. Science of retweets. Retrieved December, 15:2009, 2009.
