Self-informed neural network structure learning

We study the problem of large scale, multi-label visual recognition with a large number of possible classes. We propose a method for augmenting a trained neural network classifier with auxiliary capacity in a manner designed to significantly improve …

Authors: David Warde-Farley, Andrew Rabinovich, Dragomir Anguelov

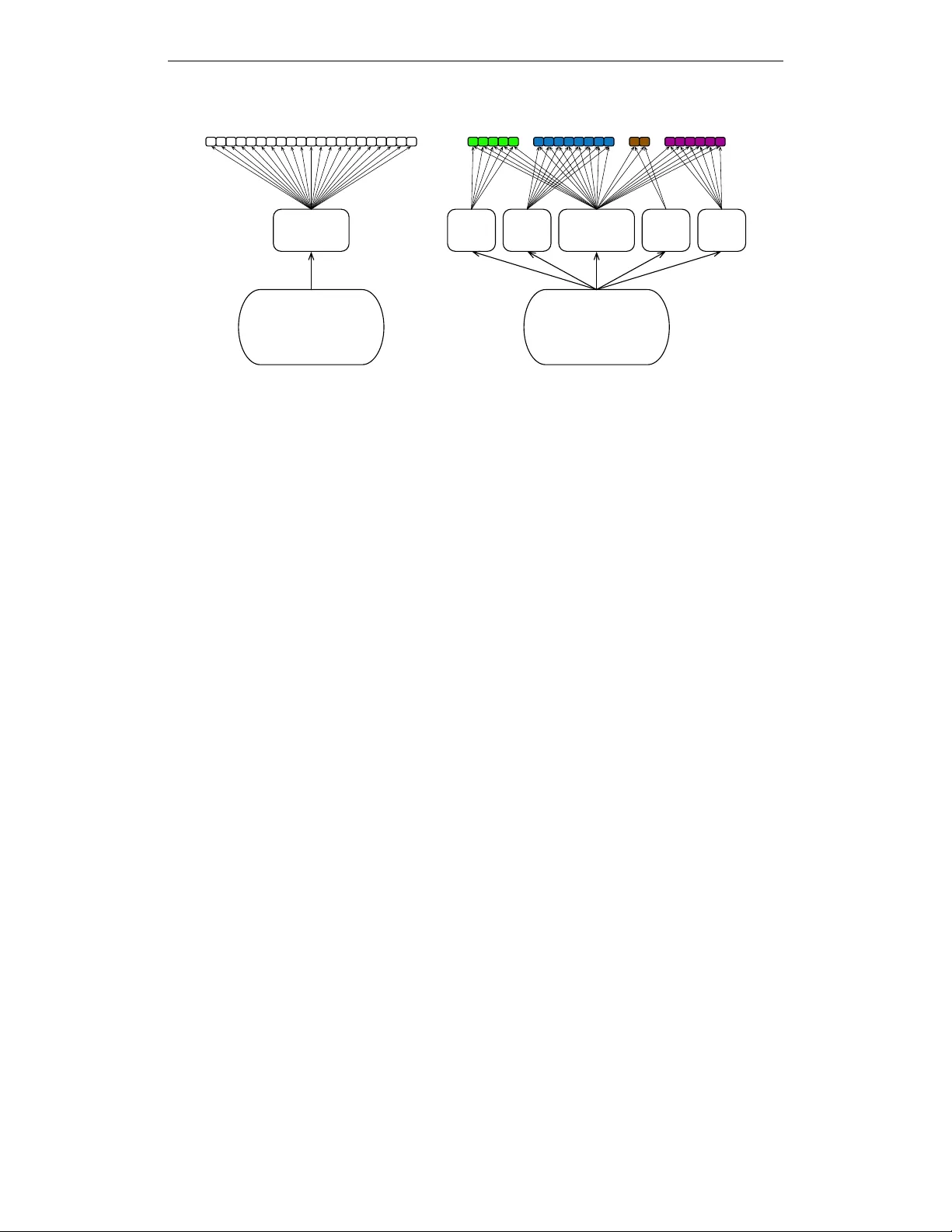

Accepted as a workshop contrib ution at ICLR 2015 S E L F - I N F O R M E D N E U R A L N E T W O R K S T R U C T U R E L E A R N I N G David W arde-Farley ∗ D ´ epartement d’Informatique et de Recherche Op ´ erationelle Univ ersit ´ e de Montr ´ eal Montreal, Quebec, Canada wardefar@iro.umontreal.ca Andrew Rabinovich & Dragomir Anguelov Google, Inc. Mountain V iew , CA 94043, USA { amrabino,dragomir } @google.com A B S T R AC T W e study the problem of large scale, multi-label visual recognition with a large number of possible classes. W e propose a method for augmenting a trained neural network classifier with auxiliary capacity in a manner designed to significantly improv e upon an already well-performing model, while minimally impacting its computational footprint. Using the predictions of the network itself as a descrip- tor for assessing visual similarity , we define a partitioning of the label space into groups of visually similar entities. W e then augment the network with auxilliary hidden layer pathways with connectivity only to these groups of label units. W e report a significant improvement in mean av erage precision on a large-scale ob- ject recognition task with the augmented model, while increasing the number of multiply-adds by less than 3%. 1 I N T RO D U C T I O N In the conte xt of lar ge scale visual recognition, it is not uncommon for state-of-the-art con volutional networks to be trained for days or weeks before conv ergence (Krizhevsk y et al., 2012; Sermanet et al., 2014; Szegedy et al., 2014). Performing exhausti ve architecture search is quite challenging and computationally expensiv e. Furthermore, once a satisfactory architecture has been discovered, it can be extremely dif ficult to improve upon; small changes to the architecture more often decrease performance than improve it. In architectures containing fully-connected layers, naively increasing the dimensionality of such layers increases the number of parameters between them quadratically , increasing both the computational workload and the tendency to wards overfitting. In settings where the domain of interest comprises thousands of classes, improving performance on specific subdomains can prove challenging, as the jointly learned features that succeed on the ov erall task on a verage may not be suf ficient for correctly identifying the “long tail” of classes, or for making fine-grained distinctions between very similar entities. Side information in the form of metadata – for example, from Freebase (Bollacker et al., 2008) – often only roughly corresponds to the kind of similarity that would make correct classification challenging. In the context of object classification, visually similar entities may belong to vastly different high-lev el categories (e.g. a sporting activity and the equipment used to perform it), whereas two entities in the same high-level semantic category may bear little resemblance to one another visually . A traditional approach to building increasingly accurate classifiers is to av erage the predictions of a large ensemble. In the case of neural netw orks, a common approach is to add more layers or making existing layers significantly larger , possibly with additional re gularization. These strategies present a significant problem in runtime-sensiti ve production en vironments, where a classifier must be rapidly ∗ W ork done while D W F was an intern at Google. 1 Accepted as a workshop contrib ution at ICLR 2015 Low-level features Generalist Low-level features Generalist Specialist Specialist Specialist Specialist Figure 1: A schematic of the augmentation process. Left: the original network. Right: the network after augmentation. ev aluated in a matter of milliseconds to comply with service-level agreements. It is therefore often desirable to increase a classifier’ s capacity in a way that significantly improv es performance while minimally impacting the computational resources required to ev aluate the classifier; howe ver , it is not immediately obvious ho w to satisfy these two competing objectives. W e present a method for judiciously adding capacity to a trained neural network using the network’ s own predictions on held-out data to inform the augmentation of the network’ s structure. W e demon- strate the ef ficacy of this method by using it to significantly improve upon the performance of a state-of-the-art industrial object recognition pipeline based on Sze gedy et al. (2014) with less than 3% extra computational o verhead. 2 M E T H O D S Giv en a trained network, we ev aluate the network on a held out dataset in order to compute a con- fusion matrix. W e then apply spectral clustering (Chung, 1997) to generate a partitioning of the possible labels. W e augment the trained network’ s structure by adding additional stacks of fully connected layers, connected in parallel with the pre-existing stack of fully-connected layers. The output of each “aux- iliary head” is connected by a weight matrix only to a subset of the output units, corresponding to the label clusters discov ered by spectral clustering. W e train the augmented network by initializing the pre-existing portions of the network (minus the classifier layer’ s weights and biases) to the parameters of the original network, and by randomly ini- tializing the remaining portions. W e train holding the pre-existing weights and biases fixed, learning only the hidden layer weights for the ne w portions and retraining the classifier layer’ s weights. This allows for training to focus on making good use of the auxiliary capacity rather than adapting the pre-initialized weights to compensate for the presence of the ne w hidden units. Note that it is also possible to fine-tune the whole network after training the augmented section, though we did not perform such fine-tuning in the experiments described belo w . 3 R E L A T E D W O R K Our method can be seen as similar in spirit to the mixture of experts approach of Jacobs et al. (1991). Rather than jointly learning a gating function as well as experts to be gated, we employ as a starting point a strong generalist network, whose outputs then inform decisions about which specialist networks to deploy for dif ferent subsets of classes. Our specialists also do not train with the original data as input b ut rather a higher-le vel feature representation output by the original network’ s con volutional layers. 2 Accepted as a workshop contrib ution at ICLR 2015 Recent work on distillation (Hinton et al., 2014), building on earlier work termed model compres- sion (Bucilu et al., 2006), emphasizes the idea that a great deal of valuable information can be gleaned from the non-maximal predictions of neural network classifiers. Distillation makes use of the av eraged ov erall predictions of sev eral expensi ve-to-e valuate neural networks as “soft targets” in order to train a single network to both predict the correct label and mimic the overall predictions of the ensemble as closely as possible. As in Hinton et al. (2014), we use the predictions of the model itself, howe ver we use this knowledge in the pursuit of carefully adding capacity to a single, already trained network, rather than mimicking the performance of many networks with one. Our approach is arguably complementary , and could conceivably be applied after distilling an ensemble into a single mimic network in order to further improv e fine-grained performance. 4 E X P E R I M E N T S Our base model consists of the same con v olutional Inception architecture employed in GoogLeNet (Szegedy et al., 2014), plus two fully connected hidden layers of 4,096 rectified lin- ear (ReLU) units each. Our output layer consists of logistic units, one per class. W e e valuated the trained network on 9 million images not used during training. Let g j ( x ) = 1 , if example x has ground truth annotation for class j 0 , otherwise (1) M i,K ( x ) = 1 , if model M ’ s top K predicted labels on example x includes class i 0 , otherwise (2) W e compute the follo wing matrix on the hold-out set S : A = [ a ij ]; a ij = E x ∈ S [ M i,K ( x ) · g j ( x )] (3) using K = 100 . W e use the seemingly lar ge value of K = 100 in order to recov er annotations for a large fraction of possible classes on at least one example in the hold-out set. W e term the detection of class i in the context of ground truth class j a confusion of i with j ; the ( i, j ) th entry of this matrix thus encodes the fraction of the time class i is “confused” with class j on the hold-out set. W e also e xperimented with the matrix A = [ a ij ]; a ij = E x ∈ S [ M i,K ( x ) · M j,K ( x )] (4) wherein we eschew the use of ground truth and only look at co-detections , again with K = 100 . W e symmetrize either matrix as B = A T A , and apply spectral clustering using B as our similarity matrix, following the formulation of Ng et al. (2002). In all of our experiments, our specialist sub- networks consisted of two layers of 512 ReLUs each. W e ev aluate our method on an expanded version of the JFT dataset described in Hinton et al. (2014), an internal Google dataset with a training set of approximately 100 million images spanning 17,000 classes. 5 R E S U L T S 5 . 1 L A B E L C L U S T E R S R E C O V E R E D In T able 1, we observe that spectral clustering on the matrix B was able to successfully recover clusters consisting of visually similar entities. 5 . 2 T E S T S E T P E R F O R M A N C E I M P RO V E M E N T S W e ev aluate on a balanced test set with the same number of classes per image. F or each of the confusion and co-detection cases, we compare against a network with identical capacity and topol- ogy (i.e. same number of labels per cluster) with labels randomly permuted, in order to assess the importance of the particular partitioning discovered while carefully controlling for the number of parameters being learned. 3 Accepted as a workshop contrib ution at ICLR 2015 Runway , Handshake, Douglas dc-3, T armac, Boeing, Air show , Interceptor, Hospital ship, Coast guard, Republic p-47 thunderbolt, Sikorsky sh-3 sea king, Boeing 737, Mcdonnell douglas dc-10, Air force, Boeing 757, Boeing 717, Hov ercraft, Lockheed ac-130, McDonnell Douglas, Tra vel, Aircraft engine, Flight, Y awl, Lockheed c-5 galaxy , Cockpit, Bomber, Lockheed p-3 orion, A vro lancaster, Jet aircraft. . . Pickled food, Grilled food, North african cuisine, V inegret, W oku, Lasagne, Lard, Meringue, Peanut butter and jelly sandwich, Sparkling wine, Salting, Raclette, Mussel, Galliformes, Chemical compound, Succotash, Cucurbita, Alcoholic beverage, Bento, Osechi, Ok onomiyaki, Nabemono, Miso soup, Dango, Onigiri, T empura, Mochi, Soba, Shiitake, Indian cuisine, Andhra food, Foie gras, Krill, Sour cream, Saumagen, Compote. . . Lingonberry , Rooibos, Persimmon, Rutabaga, Banana family , Ensete, Apple, V iola, Shamrock, W alnut, Beech, Poppy , Kimjongilia, Chicory , Bay leaf, Melon, Grain, Juniper, Spruce, Fir , Birch family , Hawthorn, Guav a, Gooseberry , Tick, Pouchong, Bonsai, Caraway , Fennel, Sea anemone, Maple sugar, Boysenberry , Mustard and cabbage family, Pond, Moss, Daik on, Wild ginger , Groundcover , Holly , V iburnum lentago, Ivy family , Mustard seed. . . T able 1: Examples of partial sets of labels grouped together by performing spectral clustering on the base network’ s confusions, based on the 100 top scoring predictions. The first row appears aviation-related, the second focusing on mainly food, and the third broadly concerned with plant-related entities. Description mAP @ top 50 # Multiply-Adds Extra Computation Base network 36.80% 1.52B 1.000 × Base + 6 heads, confusions 39.41% 1.56B 1.026 × Base + 6 heads, randomized 32.97% ” ” Base + 13 heads, co-detections 38.07% 1.60B 1.053 × Base + 13 heads, randomized 32.13% ” ” While both methods improv e upon the base network, the use of ground truth appears to provide a significant edge. Our best performing network, with 6 specialist heads, increases the number of multiply-adds required for ev aluation from 1.52 billion to 1.56 billion, a modest increase of 2.6%. W e also provide, in Figure 2, an e valuation of our best performing JFT network against the ImageNet 1,000-class test set, on the subset of JFT classes that can be mapped to classes from the ImageNet task (approximately 660 classes). These results are thus not directly comparable to results obtained on the ImageNet training set; a more direct comparison is left to follow-up w ork. Figure 2: A preliminary ev aluation of our trained network on the subset of classes in JFT that are mappable to the 1,000-class ImageNet classification task. 6 C O N C L U S I O N S & F U T U R E W O R K W e hav e presented a simple and general method for improving upon trained neural network classi- fiers by carefully adding capacity to groups of output classes that the trained model itself considers similar . While we demonstrate results on a computer vision task, this is not an assumption underly- ing the approach, and we plan to extend it to other domains in follo w-up work. In these e xperiments we hav e allocated a fixed extra capacity to each label group, regardless of the number of labels in that group. Further in vestig ation is needed into strategies for the allocation of 4 Accepted as a workshop contrib ution at ICLR 2015 capacity to each label group. Seemingly relev ant factors include both the cardinality of each group and the amount of training data av ailable for the labels contained therein; howe ver , the difficulty of the discrimination task does not necessarily scale with either of these. In the case of the particular con volutional network we ha ve described, it is not ob vious that the best place to connect these auxiliary stacks of hidden layers is follo wing the last con volutional layer . Most of the capacity , and therefore arguably most of the discriminati ve knowledge in the network, is contained in the fully connected layers, and appealing to this part of the network for augmentation purposes seems natural. Nonetheless, it is possible that one or more layers of group-specific con vo- lutional feature maps could be beneficial as well. Note that the augmentation procedure could also theoretically be applied more than once, and not necessarily in the same location. Each subsequent clustering and retraining step could potentially identify a complementary di vision of the label space, capturing new information. Finally , this can be seen as a small step towards the “conditional computation” en visioned by Ben- gio (2013), wherein rele v ant pathways of a large network are conditionally acti v ated based on task relev ance. Here we have focused on the relativ ely large gains to be had with computationally inex- pensiv e, targeted augmentations. Similar strate gies could pave the way towards networks with much higher capacity specialists that are only ev aluated when necessary . R E F E R E N C E S Bengio, Y oshua. Deep learning of representations: Looking forward. In Statistical Languag e and Speech Pr ocessing , pp. 1–37. Springer , 2013. Bollacker , K urt, Evans, Colin, Paritosh, Prav een, Sturge, Tim, and T aylor , Jamie. Freebase: a collaborativ ely created graph database for structuring human knowledge. In Pr oceedings of the 2008 A CM SIGMOD international confer ence on Manag ement of data , pp. 1247–1250. ACM, 2008. Bucilu, Cristian, Caruana, Rich, and Niculescu-Mizil, Alexandru. Model compression. In Pr o- ceedings of the 12th ACM SIGKDD international confer ence on Knowledge discovery and data mining , pp. 535–541. A CM, 2006. Chung, Fan RK. Spectral graph theory , volume 92. American Mathematical Soc., 1997. Hinton, Geoffrey E., V inyals, Oriol, and Dean, Jeff. Distilling the knowledge in a neural network. In NIPS 2014 Deep Learning W orkshop , 2014. Jacobs, Robert A, Jordan, Michael I, Nowlan, Ste ven J, and Hinton, Geoffre y E. Adaptive mixtures of local experts. Neural computation , 3(1):79–87, 1991. Krizhevsk y , Alex, Sutskev er , Ilya, and Hinton, Geoffrey E. Imagenet classification with deep conv o- lutional neural networks. In Advances in neural information pr ocessing systems , pp. 1097–1105, 2012. Ng, Andrew Y , Jordan, Michael I, W eiss, Y air , et al. On spectral clustering: Analysis and an algo- rithm. Advances in neural information pr ocessing systems , 2:849–856, 2002. Sermanet, Pierre, Eigen, David, Zhang, Xiang, Mathieu, Michael, Fergus, Rob, and LeCun, Y ann. Overfeat: Integrated recognition, localization and detection using conv olutional networks. In International Conference on Learning Representations (ICLR2014) . CBLS, April 2014. Szegedy, C., Liu, W ., Jia, Y ., Sermanet, P ., Reed, S., Anguelov, D., Erhan, D., V anhoucke, V ., and Rabinovich, A. Going Deeper with Con volutions. ArXiv e-prints , September 2014. 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment