Compressed Matrix Multiplication

Motivated by the problems of computing sample covariance matrices, and of transforming a collection of vectors to a basis where they are sparse, we present a simple algorithm that computes an approximation of the product of two n-by-n real matrices A…

Authors: Rasmus Pagh

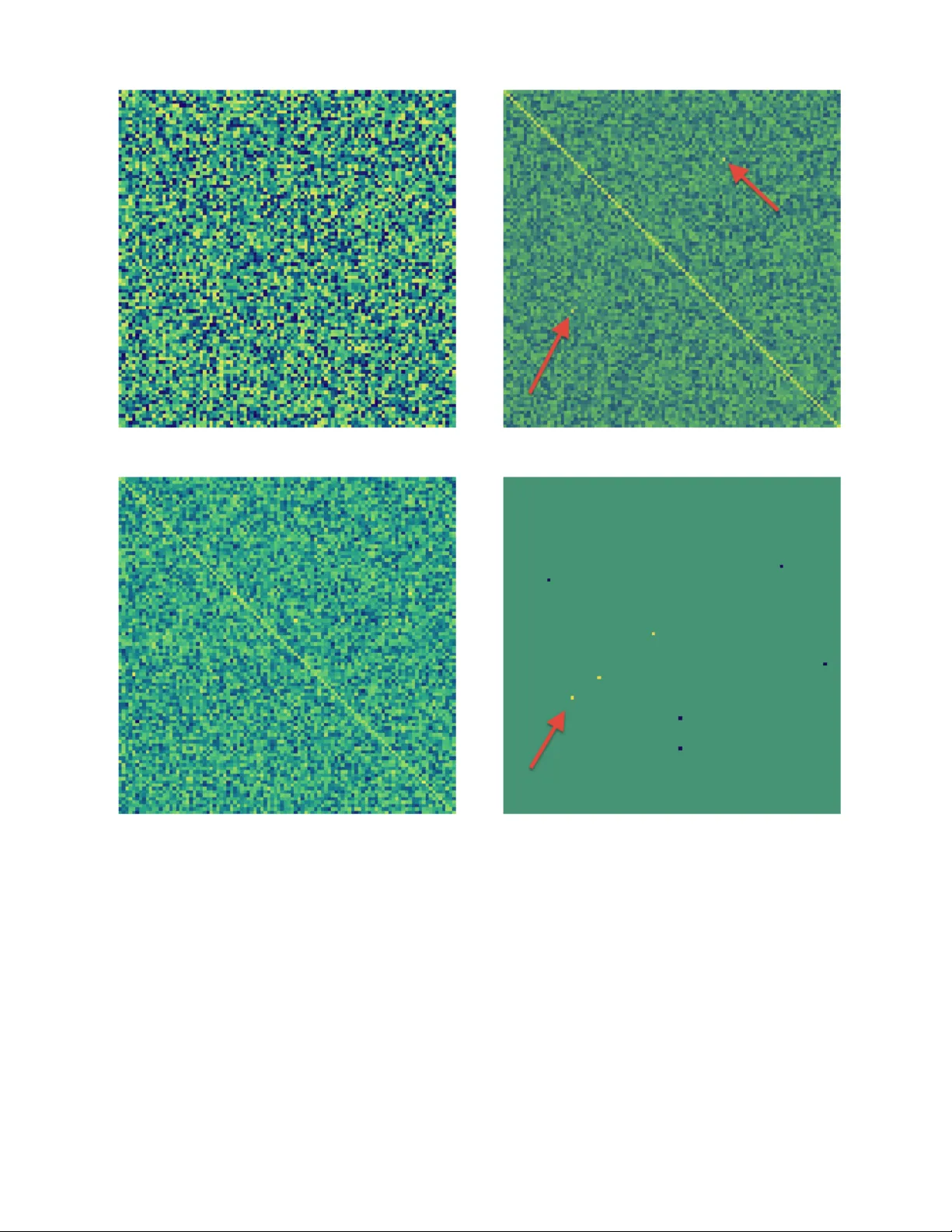

Compressed Matrix Multiplication ∗ Rasmus P agh IT University of Copenhagen pagh@itu.dk ABSTRA CT Motiv ated by the problems of computing sample cov ariance matrices, and of transforming a collection of ve ctors to a basis where they are sparse, we present a simple algorithm that computes an appro ximation of the pro duct of tw o n - b y- n real matrices A and B . Let || AB || F denote the F robe- nius norm of AB , and b b e a parameter determining the time/accuracy trade-off. Giv en 2-wise independent hash functions h 1 , h 2 : [ n ] → [ b ], and s 1 , s 2 : [ n ] → {− 1 , +1 } the algorithm works by first “compressing” the matrix pro duct in to the polynomial p ( x ) = n X k =1 n X i =1 A ik s 1 ( i ) x h 1 ( i ) ! n X j =1 B kj s 2 ( j ) x h 2 ( j ) ! . Using FFT for polynomial multiplication, we can compute c 0 , . . . , c b − 1 suc h that P i c i x i = ( p ( x ) mod x b )+( p ( x ) div x b ) in time ˜ O ( n 2 + nb ). An unbiased estimator of ( AB ) ij with v ariance at most || AB || 2 F /b can then be computed as: C ij = s 1 ( i ) s 2 ( j ) c ( h 1 ( i )+ h 2 ( j )) mo d b . Our approach also leads to an algorithm for computing AB exactly , whp., in time ˜ O ( N + nb ) in the case where A and B ha ve at most N nonzero en tries, and AB has at most b nonzero entries. Also, we use error-correcting co des in a no vel wa y to recov er significan t en tries of AB in near-linear time. Categories and Subject Descriptors F.2 [ ANAL YSIS OF ALGORITHMS AND PR OB- LEM COMPLEXITY ]: Numerical Algorithms and Prob- lems; G.3 [ PROBABILITY AND ST A TISTICS ]; G.4 [ MA THEMA TICAL SOFTW ARE ]; E.2 [ D A T A STOR- A GE REPRESENT A TIONS ]: Hash-table represent ations ∗ This w ork was done while visiting Carnegie Mellon Uni- v ersity . It is supported by the Danish National Research F oundation under the Sapere Aude program. . 1. INTR ODUCTION Sev eral computational problems can b e phrased in terms of matrix products where the normalized result is exp ected to consist mostly of numerically small entries: • Given m samples of a multiv ariate random v ariable ( X 1 , . . . , X n ), compute the sample c ovarianc e matrix whic h is used in statistical analyses. If most pairs of random v ariables are independent, the corresp onding en tries of the sample co v ariance matrix will be concen- trated around zero. • Linearly transform all col umn vectors in a matrix B to an orthogonal basis A T in which the columns of B are appro ximately sparse. Suc h batch transformations are common in lossy data compression algorithms suc h as JPEG, using prop erties of sp ecific orthogonal bases to facilitate fast computation. In b oth cases, an approximation of the matrix product may be as go od as an exact computation, since the main issue is to iden tify large entries in the result matrix. In this paper w e consider n -by- n matrices with real v alues, and devise a combinatorial algorithm for the sp ecial case of computing a matrix pro duct AB that is “compressible” . F or example, if AB is sparse it falls into our class of compressible products, and we are able to g ive an efficient algorithm. More generally , if the F robenius norm of AB is dominated b y a sparse subset of the entries, we are able to quickly compute a go o d appro ximation of the product AB . Our method can b e seen as a compressed sensing metho d for the matrix pro duct, with the nonstandard idea that the sketc h of AB is computed without explicitly constructing AB . The main technic al idea is to use FFT [11] to efficiently compute a linear sket ch of an outer product of tw o vectors. W e also mak e use of error-correcting co des in a nov el wa y to achiev e reco very of the entries of AB ha ving highest magnitude in near-linear time. Our main conceptual messages are: • It is p ossible to deriv e a fast and simple, “combinato- rial” algorithm for matrix mul tiplication in tw o cases: When the output matrix is sparse, and when an addi- tiv e error on each output entry is acceptable. • It is in teresting to co nsider the use of c ompressed sens- ing tec hniques for computational problems where re- sults (or important in termediate results) can b e rep- resen ted using sparse vectors. W e outline some such targets in the conclusion. • The interfac e betw een theoretical computer science and statistics is an area where there is high p oten tial for cross-fertilization (see also [21] for arguments in this direction). 1.1 Related work Matrix multiplication with sparse output. Lingas [20] considered the problem of computing a ma- trix pro duct AB with at most ¯ b en tries that are not trivial ly zero. A matrix entry is said to be trivially zero if every term in the corresp onding dot pro duct is zero. In general ¯ b can be muc h larger than the num b er b of nonzeros because zeros in the matrix pro duct ma y b e due to cancellations. Lingas sho wed, by a reduction to fast rectangular matrix m ultiplica- tion, that this is possible in time O ( n 2 ¯ b 0 . 188 ). Observ e that for ¯ b = n 2 this b ecomes identical to the O ( n 2 . 376 ) b ound by Coppersmith and Winograd [12]. Y uster and Zwic k [25] devised asymptotically fast algo- rithms for the case of sparse input matrices, using a matrix partitioning idea. Amossen and Pagh [4] extended this re- sult to be more efficient in the case where also the output matrix is sparse. In the dense input setting of Lingas, this leads to an improv ed time complexity of O ( n 1 . 724 ¯ b 0 . 408 ) for n ≤ ¯ b ≤ n 1 . 25 . Iw en and Spencer [19] sho wed ho w to use compressed sens- ing to compute a matrix pro duct AB in time O ( n 2+ ε ), for an y given constan t ε > 0, in the sp ecial case where e ach c olumn of AB contains at most n 0 . 29462 nonzero v alues. (Of course, by symmetry the same result holds when there is sparseness in each row.) All the results described ab o ve work b y reduction to fast rectangular matrix multiplication, so the algorithms are not “com binatorial.” How ever, Lingas [20] observ ed that a time complexit y of O ( n 2 + ¯ bn ) is achi eved b y the column-row method, a simple combinatoria l algorithm. Also, replac- ing the fast rectangular matrix multiplicat ion in the result of Iw en and Sp encer [19] by a na ¨ ıv e matrix multiplication algorithm, and making use of randomized sparse reco very methods (see [15]), leads to a combinatorial algorithm run- ning in time ˜ O ( n 2 + nb ) when eac h colu mn of AB has O ( b/n ) nonzero v alues. Appr oximate matrix multiplication. The result of [19] is not restricted to sparse matrix pro d- ucts: Their algorithm is show n to compute an approximate matrix product in time O ( n 2+ ε ) assuming that the result can b e appro ximated w ell b y a matrix with sparse column v ectors. The approximation pro duced is one with at most n 0 . 29462 nonzero v alues in each column, and is almost as goo d as the b est appro ximation of this form. Ho wev er, if some column of AB is dense, the appro ximation may differ significan tly from AB . Historically , Cohen and Lewis [10] w ere the first to con- sider randomized algorithms for approximate matrix mul- tiplication, with theoretical results restricted to the case where input matrices do not hav e negative en tries. Sup- pose A has column v ectors a 1 , . . . , a n and B has ro w vectors b 1 , . . . , b n . The pro duct of A and B can b e written as a sum of n outer pr o ducts : AB = n X k =1 a k b k . (1) The metho d of Cohen and Lewis can b e understoo d as sam- pling each outer pro duct according to the weigh t of its en- tries, and comb ining these samples to produce a matrix C where eac h entry is an un biased estimator for ( AB ) ij . If n 2 c samples are taken, for a parameter c ≥ 1, the difference betw een C and AB can b e b ounded, whp., in terms of the F rob enius norm 1 of AB , namely || AB − C || F = O ( || AB || F / √ c ) . (This is not shown in [10], but follows from the fact that eac h estimator has a scaled binomial distribution.) Drineas, Kannan, and Mahoney [14] show ed ho w a sim- pler sampling strategy can lead to a goo d approximation of the form C R , where matrices C and R consist of c columns and c rows of A and B , resp ectively . Their main error bound is in terms of the F rob enius norm of the difference: || AB − C R || F = O ( || A || F || B || F / √ c ). The time to compute C R using the classical algorithm is O ( n 2 c ) — asymptoti- cally faster results are p ossible b y fast rectangular matrix m ultiplication. Drineas et al. also give b ounds on the ele- men twise differences | ( AB − C R ) ij | , but the best su ch b ound obtained is of size Ω( M 2 n/ √ c ), where M is the magnitude of the l argest entry in A and B . This is a rather w eak b ound in general, since the largest p ossible magnitude of an en try in AB is M 2 n . Sarl´ os [23] show ed how to achiev e the same F rob enius norm error guarantee using c AMS sketc hes [2] on rows of A and columns of B . Again, if the classical matrix multiplica- tion algorithm is used to combine the sk etches, the time com- plexit y is O ( n 2 c ). This metho d giv es a stronger error b ound for each individ ual entry of the approximation matrix. If we write an entry of AB as a dot product, ( AB ) ij = ˜ a i · ˜ b j , the magnitude of the additive error is O ( || ˜ a i || 2 || ˜ b j || 2 / √ c ) with high probability (see [23, 1]). In con trast to the previous re- sults, this approximation can b e computed in a single pass o ver the input matrices. Clarkson and W oo druff [8] further refine the results of Sarl´ os, and show that the space usage is nearly optimal in a streaming setting. 1.2 New results In this pap er we improv e existing results in cases where the matrix product is “compressible” — in fact, w e produce a compressed representation of AB . Let N ≤ 2 n 2 denote the n umber of nonzero entries in A and B . W e obtain an appro ximation C by a combinatorial algorithm running in time ˜ O ( N + nb ), making a single pass o ver the input while using space O ( b lg n ), suc h that: • If AB has at most b nonzero en tries, C = AB whp. • If AB has F robenius norm q when removing its b largest en tries, the error of each entry is bounded, whp., b y | C ij − ( AB ) ij | < q / √ b . Compared to Cohen and Lewis [10] we av oid the restric- tion that input matrices cannot contain negativ e entries. Also, their method will pro duce only an approximate result 1 The F r ob enius norm of a matrix A is defined as || A || F = s X i,j A 2 ij . ev en when AB is sparse. Finally , their metho d inherently uses space Θ( n 2 ), and hence is not able to exploit compress- ibilit y to ac hieve smaller space usage. Our algorithm is faster than existing matrix mul tiplication algorithms for sparse outputs [4, 20] whenever b < n 6 / 5 , as w ell as in situations where a large num b er of cancellations mean that b ¯ b . As a more conceptual con tribution it is to our kno wledge the only “simple” algorithm to signif- ican tly improv e on Strassen’s algorithm for sparse outputs with man y en tries that are not trivially zero. The simple, combinatorial algorithms deriv ed from Drineas et al. [14] and Sarl´ os [23 ] yield error guara ntees that are gen- erally incomparable with those achiev ed here, when allow- ing same time b ound, i.e., c = Θ( b/n ). The F rob enius error bound we achiev e is: || AB − C || F ≤ || AB || F p n/c . Our result b ears some similarity to the result of Iwen and Spencer [19], since both results b e seen as compressed sens- ing of the product AB . One b asic difference is that Iwen and Spencer perform compressed sensing on eac h column of AB ( n sparse signals), while we treat the whole matrix AB as a single sparse signal. This means that we are robust tow ards sk ewed distribution of large v alues among the columns. 2. ALGORITHM AND ANAL YSIS W e will view the matrix AB as the set of pairs ( i, j ), where the weigh t of item ( i, j ) is ( AB ) ij . Our approach is to compute a linear sketc h p a k b k for each outer pro duct of (1), and then compute P k p a k b k to obtain a sketc h for AB . F or exp osition, we first describ e ho w to compute an AMS sk etch of this weigh ted set in time O ( n ), and then extend this to the more accurate Count Sketch . In the follo wing w e use [ n ] to denote the set { 1 , . . . , n } . 2.1 AMS sketches Alon, Matias, and Szegedy [2] describ ed and analyzed the follo wing approac h to sketc hing a data stream z 1 , z 2 , . . . , where item z i ∈ [ n ] has weigh t w i : T ake a 2-wise inde- pendent 2 function s : [ n ] → {− 1 , +1 } (whic h w e will call the sign function ), and compute the sum X = P i s ( z i ) w i (whic h we refer to as the AMS sketch ). W e will use a sign function on pairs ( i, j ) that is a pro duct of sign functions on the coordinates: s ( i, j ) = s 1 ( i ) s 2 ( j ). Indyk and McGre- gor [18], and Brav erman et al. [5] hav e previously analyzed momen ts of AMS sk etches with hash functions of this form. Ho wev er, for our purp oses it suffices to observe that s ( i, j ) is 2-wise indep enden t if s 1 and s 2 are 2-wise indep enden t. The AMS sk etch of an outer product uv , where b y definition ( uv ) ij = u i v j , is: X ( i,j ) ∈ [ n ] × [ n ] s ( i, j ) ( uv ) ij = n X i =1 s 1 ( i ) u i ! n X j =1 s 2 ( j ) v j ! . That is, the sketc h for the outer pro duct is simply the pro d- uct of the sketc hes of the tw o vect ors (using different hash functions). A single AMS sketc h has v ariance roughly || uv || 2 F , whic h is to o large for our purposes. T aking the a verage of 2 W e will use “ h is a a k -wise independent hash function” as a shorthand for “ h is c hosen uniformly at random from a k -wise indep enden t family of hash functions, indep enden tly of all other random choices made by the algorithm” . b such sketc hes to reduce the v ariance b y a factor √ b would increase the time to retriev e an estimator for an entry in the matrix pro duct by a factor of b . By using a different sketc h w e can a void this problem, and additionally get better esti- mates for compressible matrices. 2.2 Count sketches Our algorithm will use the Count Sketch of Charik ar, Chen and F arach-C olton [7], which has precision at least as goo d as the estimator obtained by taking the av erage of b AMS sk etches, but is muc h b etter for sk ewed distributions. The method maintains a sketc h of any desire d size b , using a 2-wise independent splitting function h : [ n ] → { 0 , . . . , b − 1 } to divide the items in to b groups. F or eac h group, an AMS sk etch is main tained using a 2-wise independent hash func- tion s : [ n ] → {− 1 , +1 } . That is, the sketc h is the vector ( c 0 , . . . , c b − 1 ) where c k = P i, h ( z i )= k s ( z i ) w i . An unbiased estimator for the total weigh t of an item z is c h ( z ) s ( z ). T o obtain stronger guarantees, one can tak e the median of sev- eral estimators constructed as ab o ve (with different sets of hash functions) — w e return to this in section 3.3. Sketc hing a matrix pr oduct naïvely. Since Count Sketch is linear we can compute the sk etc h for AB by sk etching eac h of the outer pro ducts in (1), and adding the sketc h vectors. Each outer pro duct has O ( n 2 ) terms, which means that a direct approach to computing its sk etch has time complexity O ( n 2 ), resulting in a total time complexit y of O ( n 3 ). Impr oving the comple xity W e now show how to improv e the complexity of the outer product sk etching from O ( n 2 ) to O ( n + b lg b ) b y c ho osing the hash functions used by CountSketch in a “decompos- able” wa y , and applying FFT. W e use the sign function s ( i, j ) defined in section 2.1, and similarly decomp ose the function h as follows: h ( i, j ) = h 1 ( i ) + h 2 ( j ) mod b , where h 1 and h 2 are chosen independently at random from a 3-wise indep en- den t family . It is well-kno wn that this also makes h 3-wise independent [6, 22]. Given a vector u ∈ R n and functions h t : [ n ] → { 0 , . . . , b − 1 } , s t : [ n ] → {− 1 , +1 } we define the follo wing p olynomial: p h t ,s t u ( x ) = n X i =1 s t ( i ) u i x h t ( i ) . The p olynomial can b e represented either in the standard basis as the vector of co efficien ts of monomials x 0 , . . . , x b − 1 , or in the discr ete F ourier b asis as the v ector ( p h t ,s t u ( ω 0 ) , p h t ,s t u ( ω 1 ) , . . . , p h t ,s t u ( ω b − 1 )) , where ω is a complex num b er such that ω b = 1. The ef- ficien t transformation to the latter representatio n is known as the fast F ourier transform (FFT), and can b e computed in time O ( b log b ) when b is a p o wer of 2 [11]. T aking component wise pro ducts of the vectors representing p h 1 ,s 1 u and p h 2 ,s 2 v in the F ourier basis we get a vector p ∗ where p ∗ t = p h 1 ,s 1 u ( ω t ) p h 2 ,s 2 v ( ω t ). Now consider the following p oly- nomial: p ∗ uv ( x ) = X k X i,j h ( i,j )= k s ( i, j ) ( uv ) ij x k Using ω t h ( i,j ) = ω t h 1 ( i )+ t h 2 ( j ) w e hav e that p ∗ uv ( ω t ) = X i,j s ( i, j ) ( uv ) ij ω h ( i,j ) t = X i s 1 ( i ) u i ω t h 1 ( i ) ! X j s 2 ( j ) v j ω t h 2 ( j ) ! = p ∗ t . That is, p ∗ is the representation, in the discrete F ourier basis, of p ∗ uv . No w observ e that the co efficien ts of p ∗ uv ( x ) are the entries of a Count Sketch for the outer pro d- uct uv using the sign function s ( i, j ) and splitting function h ( i, j ). Thus, applying the inv erse FFT to p ∗ w e compute the Count Sketch of uv . The pseudoco de of figure 1, called with parameter d = 1, summarizes the encoding and decoding functions discussed so far. F or simplicity the pseudo code assumes that the hash functions inv olved are fully random. A practical implemen- tation of the inv olved hash functions is character-based tab- ulation [22], but for the b est theoretical space b ounds we use polynomial hash functions [13]. T ime and space analysis. W e analyze each iteration of the outer lo op. Computing p uv ( x ) takes time O ( n + b lg b ), where the first term is the time to construct the polynomials, and the last term is the time to multiply the p olynomials, using FFT [11]. Comput- ing the sketc h for each outer pro duct and summing it up tak es time O ( n 2 + nb lg b ). Fin ally , in time O ( n 2 ) we can obtain the estimate for each entry in AB . The analysis can b e tightened when A and B are sparse or rectangular, and it suffices to compute the sketc h that allo ws random access to the estimate C . Suppose that A is n 1 -b y- n 2 , and B is n 2 -b y- n 3 , and they conta in N n 2 nonzero en tries. It is straigh tforward to see that eac h iteration runs in time O ( N + n 2 b lg b ), assuming that A and B are giv en in a form that allows the nonzero en tries of a column (ro w) to be retriev ed in linear time. The required hash functions, with constant ev aluation time, can b e stored in space O ( d ) using p olynomial hash func- tions [13]. The space required for the rest of the compu- tation is O ( db ), since we are handling O ( d ) polynomials of degree less than b . F urther, access to the input is restricted to a single pass if A is stored in column-ma jor order, and B is stored in ro w-ma jor order. Lemma 1. CompressedPr oduct ( A, B , b, d ) runs in time O ( d ( N + n 2 b lg b )) , and uses sp ace for O ( db ) re al numb ers, in addition to the input. W e note that the time b ound ma y b e m uch smaller than n 2 , whic h is the num ber of en tries in the approximate product C . This is b ecause C is not constructed explicitly . In section 4 w e address ho w to efficiently extract the b largest entries of C . 3. ERR OR AN AL YSIS W e can obtain t wo kinds of guaran tees on the approxi- mation: One in terms of the F rob enius norm (section 3.1), whic h applies even if w e use just a single set of hash func- tions ( d = 1), and stronger guarantees (section 3.3) that require the use of d = O (lg n ) hash functions. Section 3.2 function CompressedProduct ( A, B , b, d ) for t := 1 to d do s 1 [ t ] , s 2 [ t ] ∈ R Maps( { 1 , . . . , n } → {− 1 , +1 } ) h 1 [ t ] , h 2 [ t ] ∈ R Maps( { 1 , . . . , n } → { 0 , . . . , b − 1 } ) p [ t ] := 0 for k := 1 to n do ( pa, pb ) := ( 0 , 0 ) for i := 1 to n do pa [ h 1 ( i )] := pa [ h 1 ( i )] + s 1 [ t ]( i ) A ik end for for j := 1 to n do pa [ h 2 ( j )] := pb [ h 2 ( j )] + s 2 [ t ]( j ) B kj end for ( pa, pb ) := (FFT( pa ) , FFT( pb )) for z := 1 to b do p [ t ][ z ] := p [ t ][ z ] + pa [ z ] pb [ z ] end for end for end for end for for t := 1 to d do p [ t ] := FFT − 1 ( p [ t ]) end for return ( p, s 1 , s 2 , h 1 , h 2 ) end function Decompress ( i, j ) for t := 1 to d do X t := s 1 [ t ]( i ) s 2 [ t ]( j ) p [ t ][( h 1 ( i ) + h 2 ( j )) mod b ] return Median ( X 1 , . . . , X d ) end Figure 1: Metho d for enco ding an approximate represen tation of AB of size bd , and corresp onding metho d for deco ding its entries. Maps ( D → C ) de- notes the set of functions from D to C , and ∈ R de- notes indep endent, random choi ce. (Limited ran- domness is sufficient to obtain our guarantee s, but this is not reflected in the pseudo co de.) W e use 0 to denote a zero vector (or array) of suitable size that is able to hold complex num b ers. FFT( p ) de- notes the discrete fourier transform of vector p , and FFT − 1 its inv erse. considers the application of our result to cov ariance matrix estimation. 3.1 Frobenius norm guarantee Theorem 2. F or d = 1 and any ( i ∗ , j ∗ ) , the function c al l Decompress ( i ∗ , j ∗ ) c omputes an unbiase d estimator for ( AB ) i ∗ j ∗ with varianc e b ounde d by || AB || 2 F /b . Proof. F or i, j ∈ { 1 , . . . , n } let K i,j be the indicator v ariable for the ev ent h ( i, j ) = h ( i ∗ , j ∗ ). Since h is 3-wise independent these even ts are 2-wise indep enden t. W e can write X as: X = s ( i ∗ , j ∗ ) X i,j K i,j s ( i, j )( AB ) ij . Observ e that K ( i ∗ , j ∗ ) = 1, E [ s ( i ∗ , j ∗ ) s ( i, j )] = 0 whenever ( i, j ) 6 = ( i ∗ , j ∗ ), and E [ s ( i ∗ , j ∗ ) 2 ] = 1. This implies that E [ X ] = ( AB ) i ∗ j ∗ . T o bound the v ariance of X w e rewrite it as: X = ( AB ) i ∗ j ∗ + s ( i ∗ , j ∗ ) X ( i,j ) 6 =( i ∗ ,j ∗ ) K i,j s ( i, j )( AB ) ij (2) Since s ( i ∗ , j ∗ ) 2 = 1 and the v alues K i,j , i, j ∈ { 1 , . . . , n } , and s ( i, j ), i, j ∈ { 1 , . . . , n } are 2-wise indep enden t the terms ha ve cov ariance zero, so the v ariance is simply the sum X ( i,j ) 6 =( i ∗ ,j ∗ ) V ar ( K i,j s ( i, j )( AB ) ij ) . W e hav e that E [ K i,j s ( i, j )( AB ) ij ] = 0, so the v ariance of eac h term is equal to its second momen t: E [( K i,j s ( i, j )( AB ) ij ) 2 ] = ( AB ) 2 ij E [ K i,j ] = ( AB ) 2 ij /b . Summing o ver all terms w e get that V ar ( X ) ≤ || AB || 2 F /b . As a consequence of the lemma, w e get from Cheb ychev’s inequalit y that each estimate is accurate with probability 3 / 4 up to an additiv e error of 2 || AB || F / √ b . F or sufficiently large d = O (lg n ) this holds for all en tries with high proba- bilit y , by Chernoff b ounds. 3.2 Covariance matrix estimation W e no w consider the application of our result to cov ari- ance matrix estimation from a set of samples. The co v ari- ance matrix captures pairwise correlations among the com- ponents of a mu ltiv ariate random v ariable X = ( X 1 , . . . , X n ) T . It is defined as co v( X ) = E[( X − E[ X ])( X − E[ X ]) T ]. W e can arrange observ ations x 1 , . . . , x m of X as columns in an n -b y- m matrix A . Figure 2 illustrates ho w approxim ate matrix m ultiplication can b e used to find correlations among rows of A (corresp onding to components of X ). In the follo wing w e present a theoretical analysis of this approach. The sample me an of X is ¯ x = 1 m P m i =1 x i . The sample c ovarianc e matrix Q is an unbiased estimator of co v( X ), giv en by: Q = 1 m − 1 m X i =1 ( x i − ¯ x )( x i − ¯ x ) T . Let ¯ x 1 denote the n -by- m matrix that has all columns equal to ¯ x . Then we can write Q as a matrix pro duct: Q = 1 m − 1 ( A − ¯ x 1)( A − ¯ x 1) T . T o simplify calculations we consider computation of ˜ Q whic h is deriv ed from Q by setting ent ries on the diagonal to zero. Notice that a linear sketc h of Q can b e transformed easily in to a linear sk etch of ˜ Q , and that a sketc h of ˜ Q also allo ws us to quickly approximate Q . Entry ˜ Q ij , i 6 = j is a random v ariable that has exp ectation 0 if X i and X j are independent. It is computed as 1 m − 1 times the sum o ver m observ ations of ( X i − E[ X i ])( X j − E[ X j ]). Assuming inde- pendence and using the formula from [17], this means that its v ariance is m ( m − 1) 2 V ar( X i )V ar( X j ) < 4 m V ar( X i )V ar( X j ), for m ≥ 2. If cov( X ) is a diagonal matrix (i.e., every pair of v ariables is independent), the exp ected squared F robenius norm of ˜ Q is: E[ || ˜ Q || 2 F ] = 2 X i 7 / 8. Finally observ e that it is unlik ely that d/ 2 or more es tima- tors ha ve larger error. As in the pro of of Theorem 5 w e get that the probability that this occurs is o (2 − d/ 3 ) = o ( n − 2 ). Th us, the probability that the median estimator has larger error is o ( n − 2 ). 4. SUBLINEAR RESUL T EXTRA CTION In analogy with the sparse recov ery literature (see [15] for an ov erview) we now consider the task of extracting the most significan t co efficients of the appro ximation matrix C in time o ( n 2 ). In fact, if we allow the compression algorithm to use a factor O (lg n ) more time and space, the time complexity for decompression will b e O ( b lg 2 n ). Our main to ol is error- correcting co des, previously applied to the sparse recov ery problem by Gilb ert et al. [16]. Ho wev er, compared to [16] w e are able to pro ceed in a more direct wa y that av oids iterativ e deco ding. W e note that a similar result could b e obtained by a 2-dimensional dy adic decomp osition of [ n ] × [ n ], but it seems that this would result in time O ( b lg 3 n ) for decompression. F or ∆ ≥ 0 let S ∆ = { ( i, j ) | | ( AB ) ij | > ∆ } denote the set of entries in AB with magnitude larger than ∆, and let L denote the b/κ largest entries in AB , for some constan t κ . Our goal is to compute a set S of O ( b ) en tries that con- tains L ∩ S ∆ where ∆ = O ( q Err b/κ F ( AB ) /b ). In tuitively , with high probabilit y w e should output en tries in L if their magnitude is significan tly ab o ve the standard deviation of en tries of the approximation C . 4.1 A pproach The basic approac h is to compute a sequence of Count Sketches using the same set of hash functions to approxi- mate differen t submatrices of AB . The set of sk etches that con tain a particular entry will then reveal (with high prob- abilit y) the lo cation of the entry . The submatrices are con- structed using a goo d error-correcting co de E : [ n ] → { 0 , 1 } ` , where ` = O (lg n ). Let I E r denote the diagonal matrix where en try ( i, i ) equals E ( i ) r , bit n umber r of E ( i ). Then I E r A is the matrix that is d erived from A by c hanging entries to zero in those ro ws i for whic h E ( i ) r = 0. Similarly , w e can deriv e B I E r from B by c hanging en tries to zero in columns j where E ( j ) r = 0. The matrix sk etches that w e compute are: C r · = ( I E r A ) B , for r ∈ { 1 , . . . , ` } , and C · r = A ( B I E r − ` ) , for r ∈ { ` + 1 , . . . , 2 ` } (3) W e aim to show the follo wing result. Theorem 7. Assume d = O (lg n ) is sufficiently larg e. Ther e exists a c onstant κ such that if ∆ ≥ κ q Err b/κ F ( AB ) /b then FindSignificantEntries (∆) r eturns a set of O ( b ) p o- sitions that includes the p ositions of the b/κ entries in AB having the highest magnitudes, p ossibly omitting entries with magnitude b elow ∆ . The running time is O ( b lg 2 n ) , sp ac e usage is O ( b lg n ) , and the r esult is c orr e ct with pr ob ability 1 − 1 n . Com bining this with Theorem 5 and Lemma 1 we obtain: Corollar y 8. L et A b e an n 1 -by- n 2 matrix, and B an n 2 -by- n 3 matrix, with N nonzer o entries in total. F urther supp ose that AB is known to have at most b nonzer o entries. Then a sp arse r epr esentation of AB , c orr e ct with pr ob ability 1 − 1 n , c an b e c ompute d in time O ( N + n 2 b lg b + b lg 2 n ) , using sp ac e O ( b lg n ) in addition to the input. function FindSignificantEntries (∆) S := ∅ for t := 1 to d do for k := 1 to b do for r := 1 to 2 ` do X r := | p ( t,r ) [ k ] | end for s := for r := 1 to 2 ` do if X r > ∆ / 2 then s := s || 1 else s := s || 0 end for ( i, j ) := Decode ( s ) Inser t (( i, j ) , S ) end for end for for ( i, j ) ∈ S do if |{ ( i, j ) } ∩ S | < d/ 2 then Delete (( i, j ) , S ) end if end for return S end Figure 3: Metho d for computing the p ositions of O ( b ) significan t matrix en tries of magnitude ∆ or more. String concatenation is denoted || , and de- notes the empty string. Decode ( s ) deco des the (cor- rupted) codewords formed b y the bit string s (whic h m ust hav e length a multiple of ` ), returning an arbi- trary result if no co deword is found within distance δ ` . Inser t ( x, S ) inserts a copy of x into the multiset S , and Delete ( x, S ) deletes all copies of x from S . FindSignificantEntries can b e used in conjunction with Decompress to obtain a sparse approximation. 4.2 Details W e now fill in the details of the approach sketc hed in section 4.1. Consider the matrix sk etches of (3). W e use p ( t,r ) to denote p olynomial t in the sketc h num b er r , for r = 1 , . . . , 2 ` . F or concreteness we consider an expand er co de [24], which is able to efficien tly correct a fraction δ = Ω(1) errors. Given a string within Hamming distance δ ` from E ( x ), the input x can b e recov ered in time O ( ` ), if the decoding algorithm is given access to a (fixed) lo okup table of size O ( n ). (W e assume without loss of generality that δ ` is integer.) Pseudoco de for the algorithm computing the set of p o- sitions ( i, j ) can b e found in figure 3. F or each splitting function h ( t ) ( i, j ) = ( h ( t ) 1 ( i ) + h ( t ) 2 ( j )) mod b , and each hash v alue k we try to recov er any entry ( i, j ) ∈ L ∩ S ∆ with h ( t ) ( i, j ) = k . The recov ery will succeed with go o d proba- bilit y if there is a unique suc h en try . As argued in section 3.3 w e get uniqueness for all but a small fraction of the splitting functions with high probabilit y . The algorithm first reads the relev ant magnitude X r from eac h of the 2 ` sketc hes. It then pro duces a binary string s that enco des which sketc hes hav e low and high magnitude (below and abov e ∆ / 2, resp ectiv ely). This string is then decoded into a pair of co ordinates ( i, j ), that are inserted in a m ultiset S . A post-pro cessing step remov es “spurious” en tries from S that were not inserted for at least d/ 2 splitting functions, before the set is returned. 4.2.1 Pr oof of theor em 7 It is easy to see that FindSi gnificantEntries can be im- plemen ted to run in exp ected time O ( db` ), which is O ( b lg 2 n ), and space O ( db ), which is O ( b lg n ). The implemen tation uses the linear time algorithm for Decode [24], and a hash table to maintain the m ultiset S . Also, since we insert db positions into the multiset S , and output only those that hav e cardinality d/ 2 or more, the set returned clearly has at most 2 b distinct positions. It remains to see that eac h entry ( i, j ) ∈ L ∩ S ∆ is returned with high probabilit y . Notice that (( I E r A ) B ) ij = ( ( AB ) ij if E ( i ) r = 1 0 otherwise ( A ( B I E r )) ij = ( ( AB ) ij if E ( j ) r = 1 0 otherwise . Therefore, conditioned on h ( t ) ( i, j ) = k we hav e that the random v ariable X r = | p ( t,r ) [ k ] | has E [ X r ] ∈ { 0 , | ( AB ) ij |} , where the v alue is determined by the r th bit of the string ˆ s = E ( i ) || E ( j ). The algorithm correctly deco des the r th bit if X r ≤ ∆ / 2 for ˆ s r = 0, and X r > ∆ / 2 for ˆ s r = 1. In particular, the decoding of a bit is correct if ( AB ) ij ≥ ∆ and X r deviates b y at most ∆ / 2 from its expectation. F rom the pro of of Theorem 6 we see that the proba- bilit y that the error of a single estimator is greater than 12 q Err b/ 20 F ( AB ) /b is at most 1 / 8. If ∆ is at least t wice as large, this error bound implies correct deco ding of the bit deriv ed from the estimator, assuming ( AB ) i ∗ j ∗ > ∆. Ad- justing constan ts 12 and 20 to a larger v alue κ the error probabilit y can b e decreased to δ / 3. This means that the probabilit y that there are δ ` errors or more is at most 1 / 3. So with probability 2 / 3 Decode correctly identifies ( i ∗ , j ∗ ), and inserts it in to S . Repeating this for d different hash functions the exp ected n umber of copies of ( i ∗ , j ∗ ) in S is at least 2 d/ 3, and by Chernoff bounds the probability tha t there are less than d/ 2 copies is 2 − Ω( d ) . F or sufficiently large d = O (lg n ) the prob- abilit y that any entry of magnitude ∆ or more is missed is less than 1 /n . 5. ESTIMA TING COMPRESSIBILITY T o apply theorems 5 and 6 it is useful to b e able to com- pute bounds on compressibility of AB . In the follo wing sub- sections we consider, respectively , estimation of the n umber of nonzero entries, and of the Err F v alue. 5.1 Number of nonzero entries An constan t-factor estimate of ¯ b ≥ b can be computed in time O ( N lg N ) using Cohen’s metho d [9] or its refinement for matrix products [3]. Recall that ¯ b is an upper bound on the n umber of nonzeros, when not taking in to accoun t that there may b e zeros in AB that are due to cancellation of terms. W e next show how to tak e cancellation of terms into consideration, to get a truly output-sensitiv e algorithm. The idea is to p erform a doubling search that terminates (whp.) when we arrive at an upp er b ound on the num b er of nonzero entries that is within a factor O (1) from the true v alue. Initially , we mul tiply A and B b y random diagonal matrices (on left and righ t side, resp ectivel y). This will not c hange the n umber of nonzero entries, but by the Sch wartz- Zippel lemma it ensures that a linear combinati on of entries in AB is zero (whp.) only when all these en tries are zero. The doubling search creates sk etches for AB using b = 2 , 4 , 8 , 16 , . . . until, say , 4 5 b entries of the sketc h vector b e- come zero for all hash functions h (1) , . . . , h ( d ) . Since there is no cancellation (whp.), this means that the num b er of dis- tinct hash v alues (under h ( i, j )) of nonzero entries ( i, j ) is at most b/ 5. W e wish to b ound the probabilit y that this happ ens when the true num b er ˜ b of nonzero entries is larger than b . The expected num b er of hash collisions is ˜ b 2 /b . If the num b er of distinct hash v alues of nonzero en tries is at most b/ 5 the a verage num b er of collisions p er entry is ˜ b/ ( b/ 5) − 1. This means that, assuming ˜ b ≥ b , the observed num b er of colli- sions can b e low er b ounded as: ˜ b ˜ b b/ 5 − 1 / 2 ≥ ˜ b 2 b/ 5 4 5 / 2 ≥ 2 ˜ b ˜ b + 1 ˜ b 2 ! /b . Note that the observ ed v alue is a factor 2 ˜ b ˜ b +1 ≥ 4 / 3 larger than the exp ectation. So Mark ov’s inequality implies that this happens with probabilit y at most 3 / 4. If it happ ens for all d hash functions, we can conclude with probability 1 − (3 / 4) − d that b is an upper b ound on the num b er of nonzero en tries. Con versely , as so on as b/ 5 exceeds the num b er ˜ b of nonzero en tries we are guaran teed to finish the doubling search. This means w e get a 5 -approximation of the num b er of non-zeros. 5.2 Upper bounding Err b/κ F ( AB ) T o b e able to conclude that the result of FindSignificant- Entries is correct with high probability , using theorem 7, w e need an upper b ound on q Err b/κ F ( AB ) /b . W e will in fact sho w ho w to estimate the larger v alue q Err 0 F ( AB ) /b = || AB || F / √ b, so the allo wed v alue for ∆ found ma y not b e tight. W e leav e it as an op en problem to efficiently compute a tight upp er bound on q Err b/κ F ( AB ) /b . The idea is to make use of the AMS sketc h X of AB using the approach described in section 2.1 (summing the sk etches for the outer pro ducts). If we use 4-wise indep en- den t hash functions s 1 and s 2 , Indyk an d McGregor [18] (see also Bra verman et al. [5] for a slight correction of this result) ha ve shown that X 2 is an unbiased estimator for the sec- ond moment of the input, which in our case equals || AB || 2 F , with v ariance at most 8 E [ X 2 ] 2 . By Chebyc hev’s inequality this means that X 2 is a 32-appro ximation of || AB || 2 F with probabilit y 3 / 4. (Arbitrarily b etter approximation can b e ac hieved, if needed, by taking the mean of several estima- tors.) T o make sure that we get an upper b ound with high probabilit y we tak e the median of d = O (log n ) estimators, and mul tiply this v alue by 32. The total time for computing the upper bound is O ( dn 2 ). 6. CONCLUSION W e ha ve seen that matrix multiplication allo ws surpris- ingly simple and efficient approximation algorithms in cases where the result is sparse or, more generally , its F rob enius norm is dominated by a sparse subset of the entries. Of course, this can b e combined with kno wn reductions of ma- trix multiplic ation to (smaller) matrix products [14, 23] to yield further (multiplicativ e error) approximation results. Ac knowledgemen t. The author thanks the anonymous review ers for insightful commen ts, Andrea Campagna, Kon- stan tin Kutzko v, and Andrzej Lingas for suggestions impro v- ing the presentation, Petros Drineas for information on re- lated work, and Seth Pettie for p oin ting out the work of Iw en and Spencer. 7. REFERENCES [1] Noga Alon, Phillip B. Gibb ons, Y ossi Matias, and Mario Szegedy . T racking join and self-join sizes in limited storage. J. Comput. Syst. Sci , 64(3):719–747, 2002. [2] Noga Alon, Y ossi Matias, and Mario Szegedy . The space complexit y of approximating the frequency momen ts. J. Comput. Syst. Sci , 58(1):137–147, 1999. [3] Rasm us Resen Amossen, Andrea Campagna, and Rasm us Pagh. Better size estimation for sparse matrix products. In Pr o c e e dings of 13th International Workshop on Appr oximation, R andomization, and Combinatorial Optimization (APPRO X-RANDOM) , v olume 6302 of L e ctur e Notes in Computer Scienc e , pages 406–419. Springer, 2010. [4] Rasm us Resen Amossen and Rasm us Pagh. F aster join-pro jects and sparse matrix m ultiplications. In Pr o c e e dings of 12th International Confer enc e on Datab ase The ory (ICDT) , v olume 361 of ACM International Confer enc e Pr o c e e ding Series , pages 121–126. ACM, 2009. [5] Vladimir Bra verman, Kai-Min Ch ung, Zhenming Liu, Mic hael Mitzenmac her, and Rafail Ostrovsky . AMS without 4-wise indep endence on product domains. In 27th International Symp osium on The or etic al Asp e cts of Computer Scienc e (ST A CS) , volume 5 of L eibniz International Pr o c e e dings in Informatics (LIPIcs) , pages 119–130, 2010. [6] J. La wrence Carter and Mark N. W egman. Universal classes of hash functions. Journal of Computer and System Scienc es , 18(2):143–154, 1979. [7] Moses Charik ar, Kevin Chen, and Martin F arach-Colto n. Finding frequen t items in data streams. The or. Comput. Sci , 312(1):3–15, 2004. [8] Kenneth L. Clarkson and David P . W oo druff. Numerical linear algebra in the streaming model. In Pr o c e e dings of Symp osium on The ory of Computing (STOC) , pages 205–214. A CM Press, 2009. [9] Edith Cohen. Structure prediction and computation of sparse matrix pro ducts. J. Comb. Optim , 2(4):307–332, 1998. [10] Edith Cohen and Da vid D. Lewis. Approximating matrix m ultiplication for pattern recognition tasks. Journal of Algorithms , 30(2):211–252, 1999. [11] James W. Co oley and John W. T uk ey . An algorithm for the machine calculation of complex F ourier series. Mathematics of Computation , 19(90):297–301, 1965. [12] Don Coppersmith and Shmuel Winograd. Matrix m ultiplication via arithmetic progressions. Journal of Symb olic Computation , 9(3):251–280, 1990. [13] Martin Dietzfelbinger, Joseph Gil, Y ossi Matias, and Nic holas Pipp enger. Polynomial hash functions are reliable (extended abstract). In W erner Kuich, editor, Au tomata, L anguages and Pr o gr amming, 19th International Col lo quium , v olume 623 of L e ctur e Notes in Computer Scienc e , pages 235–246, Vienna, Austria, 13–17 July 1992. Springer-V erlag. [14] P etros Drineas, Ra vi Kannan, and Michael W. Mahoney . F ast Monte Carlo algorithms for matrices I: Appro ximating matrix m ultiplication. SIAM Journal on Computing , 36(1):132–157, 2006. [15] A. Gilbert and P . Indyk. Sparse reco very using sparse matrices. Pr o c e e dings of the IEEE , 98(6):937 –947, 2010. [16] Anna C. Gilb ert, Yi Li, Ely Porat, and Martin J. Strauss. Appro ximate sparse recov ery: optimizing time and measurements. In Pr o c e e dings of the 42nd AC M symp osium on The ory of c omputing , STOC ’10, pages 475–484, New Y ork, NY, USA, 2010. ACM. [17] Leo A. Go odman. On the exact v ariance of pro ducts. Journal of the Americ an Statistic al Asso ciation , 55(292):pp. 708–713, 1960. [18] Piotr Indyk and Andrew McGregor. Declaring independence via the sketc hing of sketc hes. In Pr o c e e dings of the 19th Annual A CM-SIAM Symp osium on Discr ete Algorithms (SODA ) , pages 737–745. SIAM, 2008. [19] Mark A. Iwen and Craig V. Sp encer. A note on compressed sensing and the complexity of matrix m ultiplication. Inf. Pr o c ess. L ett , 109(10):468–471, 2009. [20] Andrzej Lingas. A fast output-sensitive algorithm for bo olean matrix multiplication. In ESA , volume 5757 of L e ctur e Notes in Computer Scienc e , pages 408–419. Springer, 2009. [21] Mic hael W. Mahoney . Algorithmic and statistical persp ectives on large-scale data analysis, Octob er 08 2010. T o app ear in Combinatorial Scientific Computing, Chapman and Hall/CR C Press, 2011. [22] Mihai Pˇ atra¸ scu and Mikkel Thorup. The p ow er of simple tabulation hashing. In Pr o c e e dings of the 43r d annual ACM Symp osium on The ory of Computing (STOC) , pages 1–10. A CM, 2011. [23] T am´ as Sarl´ os. Improv ed appro ximation algorithms for large matrices via random pro jections. In IEEE Symp osium on F oundations of Computer Scienc e (FOCS ) , pages 143–152. IEEE Computer So ciety , 2006. [24] Mic hael Sipser and Daniel A. Spielman. Expander codes. IEEE T r ansactions on Information The ory , 42(6):1710–1722, 1996. [25] Raphael Y uster and Uri Zwic k. F ast sparse matrix m ultiplication. AC M T r ansactions on Algorithms , 1(1):2–13, 2005.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment