Clustering Partially Observed Graphs via Convex Optimization

This paper considers the problem of clustering a partially observed unweighted graph---i.e., one where for some node pairs we know there is an edge between them, for some others we know there is no edge, and for the remaining we do not know whether o…

Authors: Yudong Chen, Ali Jalali, Sujay Sanghavi, Huan Xu

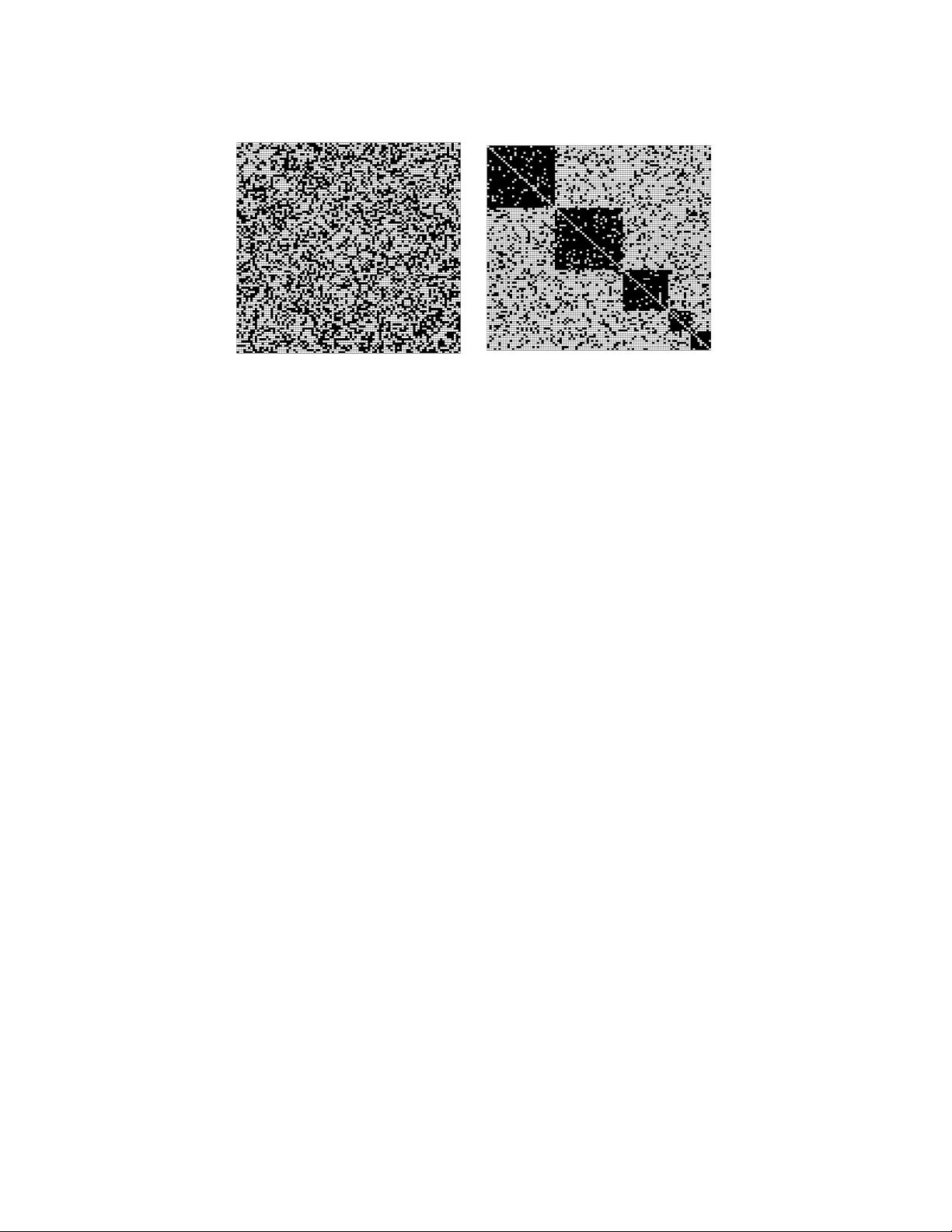

Journal of Machine Learning Research 15 (2014) 2213-2238. Submitted 12/11; Revised 2/13; Published 6/14

Yudong Chen (ydchen@utexas.edu), Ali Jalali (alij@mail.utexas.edu), Sujay Sanghavi (sanghavi@mail.utexas.edu)
Department of Electrical and Computer Engineering, The University of Texas at Austin, Austin, TX 78712, USA

Huan Xu (mpexuh@nus.edu.sg)
Department of Mechanical Engineering, National University of Singapore, Singapore 117575, Singapore

Editor: Marina Meila

Abstract

This paper considers the problem of clustering a partially observed unweighted graph, i.e., one where for some node pairs we know there is an edge between them, for some others we know there is no edge, and for the remaining we do not know whether or not there is an edge. We want to organize the nodes into disjoint clusters so that there is relatively dense (observed) connectivity within clusters, and sparse connectivity across clusters. We take a novel yet natural approach to this problem, by focusing on finding the clustering that minimizes the number of “disagreements”, i.e., the sum of the number of (observed) missing edges within clusters and (observed) present edges across clusters. Our algorithm uses convex optimization; its basis is a reduction of disagreement minimization to the problem of recovering an (unknown) low-rank matrix and an (unknown) sparse matrix from their partially observed sum. We evaluate the performance of our algorithm on the classical Planted Partition/Stochastic Block Model. Our main theorem provides sufficient conditions for the success of our algorithm as a function of the minimum cluster size, edge density and observation probability; in particular, the results characterize the tradeoff between the observation probability and the edge density gap.
When there are a constant number of clusters of equal size, our results are optimal up to logarithmic factors.

Keywords: graph clustering, convex optimization, sparse and low-rank decomposition

1. Introduction

This paper is about the following task: given partial observation of an undirected unweighted graph, partition the nodes into disjoint clusters so that there are dense connections within clusters and sparse connections across clusters. By partial observation, we mean that for some node pairs we know whether there is an edge or not, and for the other node pairs we do not know; these pairs are unobserved. This problem arises in several fields across science and engineering. For example, in sponsored search, each cluster is a submarket that represents a specific group of advertisers that do most of their spending on a group of query phrases; see, e.g., Yahoo!-Inc (2009) for such a project at Yahoo. In VLSI and design automation, it is useful in minimizing signaling between components (Kernighan and Lin, 1970). In social networks, clusters may represent groups of people with similar interest or background; finding clusters enables better recommendations and link prediction (Mishra et al., 2007). In the analysis of document databases, clustering the citation graph is often an essential and informative first step (Ester et al., 1995). In this paper, we will focus not on specific application domains, but rather on the basic graph clustering problem itself.

© 2014 Yudong Chen, Ali Jalali, Sujay Sanghavi and Huan Xu.

Partially observed graphs appear in many applications. For example, in online social networks like Facebook, we observe an edge/no edge between two users when they accept each other as a friend or explicitly decline a friendship suggestion. For the other user pairs, however, we simply have no friendship information, and these pairs are thus unobserved.
More generally, we have partial observations whenever obtaining similarity data is difficult or expensive (e.g., because it requires human participation). In these applications, it is often the case that most pairs are unobserved, which is the regime we are particularly interested in.

As with any clustering problem, we need a precise mathematical definition of the clustering criterion, potentially with a guaranteed performance. There are relatively few existing results with provable performance guarantees for graph clustering with partially observed node pairs. Many existing approaches to clustering fully observed graphs either require an additional input (e.g., the number of clusters $k$ required for spectral or $k$-means clustering methods), or do not guarantee the performance of the clustering. We review existing related work in Section 1.2.

1.1 Our Approach

We focus on a natural formulation, one that does not require any extraneous input besides the graph itself. It is based on minimizing disagreements, which we now define. Consider any candidate clustering; this will have (a) observed node pairs that are in different clusters, but have an edge between them, and (b) observed node pairs that are in the same cluster, but do not have an edge between them. The total number of node pairs of types (a) and (b) is the number of disagreements between the clustering and the given graph. We focus on the problem of finding the optimal clustering, i.e., one that minimizes the number of disagreements. Note that we do not pre-specify the number of clusters. For the special case of fully observed graphs, this formulation is exactly the same as the problem of correlation clustering, first proposed by Bansal et al. (2002). They show that exact minimization of the above objective is NP-hard in the worst case; we survey and compare with this and other related work in Section 1.2. As we will see, our approach and results are different.
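The disagreement count defined above can be sketched in a few lines. This is only an illustration: the dictionary representation of the observed pairs, the function name `count_disagreements`, and the toy graph are assumptions made for the example and do not come from the paper.

```python
def count_disagreements(observed, labels):
    """Count disagreements between a clustering and a partially observed graph.

    observed: dict mapping an unordered pair (i, j) with i < j to 1 (edge)
              or 0 (no edge); unobserved pairs are simply absent.
    labels:   labels[i] is the cluster of node i.
    """
    total = 0
    for (i, j), has_edge in observed.items():
        same_cluster = labels[i] == labels[j]
        if same_cluster and has_edge == 0:
            total += 1      # type (b): missing edge inside a cluster
        elif not same_cluster and has_edge == 1:
            total += 1      # type (a): edge across clusters
    return total


# Hypothetical 5-node graph; pairs not listed here are unobserved.
observed = {(0, 1): 1, (0, 2): 1, (1, 2): 0, (2, 3): 1, (3, 4): 1, (0, 4): 0}

print(count_disagreements(observed, [0, 0, 0, 1, 1]))  # 2
print(count_disagreements(observed, [0, 0, 1, 1, 1]))  # 1
print(count_disagreements(observed, [0, 1, 2, 3, 4]))  # 4
```

Note that the number of clusters is not an input: candidate clusterings with different numbers of clusters are compared through the same count.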
We aim to achieve the combinatorial disagreement minimization objective using matrix splitting via convex optimization. In particular, as we show in Section 2 below, one can represent the adjacency matrix of the given graph as the sum of an unknown low-rank matrix (corresponding to “ideal” clusters) and a sparse matrix (corresponding to disagreements from this “ideal” in the given graph). Our algorithm either returns a clustering, which is guaranteed to be disagreement minimizing, or returns a “failure”; it never returns a sub-optimal clustering. For our main analytical result, we evaluate our algorithm's performance on the natural and classical planted partition/stochastic block model with partial observations. Our analysis provides stronger guarantees than current results on general matrix splitting (Candès et al., 2011; Hsu et al., 2011; Li, 2013; Chen et al., 2013). The algorithm, model and results are given in Section 2. We prove our theoretical results in Section 3 and provide empirical results in Section 4.

1.2 Related Work

Our problem can be interpreted in the general clustering context as one in which the presence of an edge between two points indicates a “similarity”, and the lack of an edge means “no similarity”. The general field of clustering is of course vast, and a detailed survey of all methods therein is beyond our scope here. We focus instead on the three sets of papers most relevant to the problem here: the work on correlation clustering, on the planted partition/stochastic block model, and on graph clustering with partial observations.

1.2.1 Correlation Clustering

As mentioned, for a completely observed graph, our problem is mathematically precisely the same as correlation clustering formulated in Bansal et al.
(2002); in particular, a “+” in correlation clustering corresponds to an edge in the graph, a “-” to the lack of an edge, and disagreements are defined in the same way. Thus, this paper can equivalently be considered as an algorithm, and guarantees, for correlation clustering under partial observations.

Since correlation clustering is NP-hard, there has been much work on devising alternative approximation algorithms (Bansal et al., 2002; Emmanuel and Fiat, 2003). Approximations using convex optimization, including LP relaxation (Charikar et al., 2003; Demaine and Immorlica, 2003; Demaine et al., 2006) and SDP relaxation (Swamy, 2004; Mathieu and Schudy, 2010), possibly followed by rounding, have also been developed. We emphasize that we use a different convex relaxation, and we focus on understanding when our convex program yields an optimal clustering without further rounding.

We note that Mathieu and Schudy (2010) use a convex formulation with constraints enforcing positive semi-definiteness, triangle inequalities and fixed diagonal entries. For the fully observed case, their relaxation is at least as tight as ours, and since they add more constraints, it is possible that there are instances where their convex program works and ours does not. However, this seems hard to prove or disprove. Indeed, in the full observation setting they consider, their exact recovery guarantee is no better than ours. Moreover, as we argue in the next section, our guarantees are order-wise optimal in some important regimes and thus cannot be improved even with a tighter relaxation. Practically, our method is faster since, to the best of our knowledge, there is no low-complexity algorithm to deal with the $\Theta(n^3)$ triangle inequality constraints required by Mathieu and Schudy (2010). This means that our method can handle large graphs while their result is practically restricted to small ones ($\sim 100$ nodes).
In summary, their approach has higher computational complexity, and does not provide a significant and characterizable performance gain in terms of exact cluster recovery.

1.2.2 Planted Partition Model

The planted partition model, also known as the stochastic block model (Condon and Karp, 2001; Holland et al., 1983), assumes that the graph is generated with in-cluster edge probability $p$ and inter-cluster edge probability $q$ (where $p > q$) and is fully observed. The goal is to recover the latent cluster structure. A class of this model with $\tau \triangleq \max\{1-p, q\} < \frac{1}{2}$ is often used as a benchmark for average-case performance for correlation clustering (see, e.g., Mathieu and Schudy, 2010). Our theoretical results are applicable to this model and thus directly comparable with existing work in this area. A detailed comparison is provided in Table 1. For fully observed graphs, our result matches the previous best bounds in both the minimum cluster size and the difference between in-cluster/inter-cluster densities. We would like to point out that nuclear norm minimization has been used to solve the closely related planted clique problem (Alon et al., 1998; Ames and Vavasis, 2011).

| Paper | Cluster size $K$ | Density difference $(1-2\tau)$ |
|---|---|---|
| Boppana (1987) | $n/2$ | $\tilde{\Omega}\left(\frac{1}{\sqrt{n}}\right)$ |
| Jerrum and Sorkin (1998) | $n/2$ | $\tilde{\Omega}\left(\frac{1}{n^{1/6-\epsilon}}\right)$ |
| Condon and Karp (2001) | $\tilde{\Omega}(n)$ | $\tilde{\Omega}\left(\frac{1}{n^{1/2-\epsilon}}\right)$ |
| Carson and Impagliazzo (2001) | $n/2$ | $\tilde{\Omega}\left(\frac{1}{\sqrt{n}}\right)$ |
| Feige and Kilian (2001) | $n/2$ | $\tilde{\Omega}\left(\frac{1}{\sqrt{n}}\right)$ |
| McSherry (2001) | $\tilde{\Omega}(n^{2/3})$ | $\tilde{\Omega}\left(\sqrt{\frac{n^2}{K^3}}\right)$ |
| Bollobás and Scott (2004) | $\tilde{\Omega}(n)$ | $\tilde{\Omega}\left(\sqrt{\frac{1}{n}}\right)$ |
| Giesen and Mitsche (2005) | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}\left(\frac{\sqrt{n}}{K}\right)$ |
| Shamir and Tsur (2007) | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}\left(\frac{\sqrt{n}}{K}\right)$ |
| Mathieu and Schudy (2010) | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}(1)$ |
| Rohe et al. (2011) | $\tilde{\Omega}(n^{3/4})$ | $\tilde{\Omega}\left(\frac{n^{3/4}}{K}\right)$ |
| Oymak and Hassibi (2011) | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}\left(\frac{\sqrt{n}}{K}\right)$ |
| Chaudhuri et al. (2012) | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}\left(\frac{\sqrt{n}}{K}\right)$ |
| This paper | $\tilde{\Omega}(\sqrt{n})$ | $\tilde{\Omega}\left(\frac{\sqrt{n}}{K}\right)$ |

Table 1: Comparison with literature. This table shows the lower-bound requirements on the minimum cluster size $K$ and the density difference $p - q = 1 - 2\tau$ that existing literature needs for exact recovery of the planted partitions, when the graph is fully observed and $\tau \triangleq \max\{1-p, q\} = \Theta(1)$. Some of the results in the table only guarantee recovering the membership of most, instead of all, nodes. To compare with these results, we use the soft-$\Omega$ notation $\tilde{\Omega}(\cdot)$, which hides the logarithmic factors that are necessary for recovering all nodes, which is the goal of this paper.

1.2.3 Partially Observed Graphs

The previous work listed in Table 1, except Oymak and Hassibi (2011), does not handle partial observations directly. One natural way to proceed is to impute the missing observations with no-edge, or random edges with symmetric probabilities, and then apply any of the results in Table 1. This approach, however, leads to sub-optimal results. Indeed, this is done explicitly by Oymak and Hassibi (2011). They require the probability of observation $p_0$ to satisfy $p_0 \gtrsim \frac{\sqrt{n}}{K_{\min}}$, where $n$ is the number of nodes and $K_{\min}$ is the minimum cluster size; in contrast, our approach only needs $p_0 \gtrsim \frac{n}{K_{\min}^2}$ (both right hand sides have to be less than $1$, requiring $K_{\min} \gtrsim \sqrt{n}$, so the right hand side of our condition is order-wise smaller and thus less restrictive). Shamir and Tishby (2011) deal with partial observations directly and show that $p_0 \gtrsim \frac{1}{n}$ suffices for recovering two clusters of size $\Omega(n)$. Our result is applicable to much smaller clusters of size $\tilde{\Omega}(\sqrt{n})$.
In addition, a nice feature of our result is that it explicitly characterizes the tradeoffs between the three relevant parameters: $p_0$, $\tau$, and $K_{\min}$; a theoretical result like this is not available in previous work.

There exists other work that considers partial observations, but under rather different settings. For example, Balcan and Gupta (2010), Voevodski et al. (2010) and Krishnamurthy et al. (2012) consider the clustering problem where one samples the rows/columns of the adjacency matrix rather than its entries. Hunter and Strohmer (2010) consider partial observations in the features rather than in the similarity graph. Eriksson et al. (2011) show that $\tilde{\Omega}(n)$ actively selected pairwise similarities are sufficient for recovering a hierarchical clustering structure. Their results seem to rely on the hierarchical structure. When disagreements are present, the first split of the cluster tree can recover clusters of size $\Omega(n)$; our results allow smaller clusters. Moreover, they require active control over the observation process, while we assume random observations.

2. Main Results

Our setup for the graph clustering problem is as follows. We are given a partially observed graph of $n$ nodes, whose adjacency matrix is $\mathbf{A} \in \mathbb{R}^{n \times n}$, which has $a_{i,j} = 1$ if there is an edge between nodes $i$ and $j$, $a_{i,j} = 0$ if there is no edge, and $a_{i,j} = \text{“?”}$ if we do not know. (Here we follow the convention that $a_{i,i} = 0$ for all $i$.) Let $\Omega_{\text{obs}} \triangleq \{(i,j) : a_{i,j} \neq \text{?}\}$ be the set of observed node pairs. The goal is to find the optimal clustering, i.e., the one that has the minimum number of disagreements (defined in Section 1.1) in $\Omega_{\text{obs}}$.

In the rest of this section, we present our algorithm for the above task and analyze its performance under the planted partition model with partial observations.
We also study the optimality of the performance of our algorithm by deriving a necessary condition for any algorithm to succeed.

2.1 Algorithm

Our algorithm is based on convex optimization, and either (a) outputs a clustering that is guaranteed to be the one that minimizes the number of observed disagreements, or (b) declares “failure”. In particular, it never produces a suboptimal clustering (see footnote 1). We now briefly present the main idea and then describe the algorithm.

Consider first the fully observed case, i.e., every $a_{i,j} = 0$ or $1$. Suppose also that the graph is already ideally clustered, i.e., there is a partition of the nodes such that there is no edge between clusters, and each cluster is a clique. In this case, the matrix $\mathbf{A} + \mathbf{I}$ is a low-rank matrix, with the rank equal to the number of clusters. This can be seen by noticing that if we re-order the rows and columns so that clusters appear together, the result would be a block-diagonal matrix, with each block being an all-ones submatrix, and thus of rank one. Of course, this re-ordering does not change the rank of the matrix, and hence $\mathbf{A} + \mathbf{I}$ is exactly low-rank.

Consider now any given graph, still fully observed. In light of the above, we are looking for a decomposition of its $\mathbf{A} + \mathbf{I}$ into a low-rank part $\mathbf{K}^*$ (of block-diagonal all-ones, one block for each cluster) and a remaining $\mathbf{B}^*$ (the disagreements), such that the number of non-zero entries in $\mathbf{B}^*$ is as small as possible; i.e., $\mathbf{B}^*$ is sparse. Finally, the problem we look at is recovery of the best $\mathbf{K}^*$ when we do not observe all entries of $\mathbf{A} + \mathbf{I}$. The idea is depicted in Figure 1.

Footnote 1: In practice, one might be able to use the “failed” output with rounding as an approximate solution. In this paper, we focus on the performance of the unrounded algorithm.
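The low-rank observation above can be checked on a small example. The following sketch builds $\mathbf{A} + \mathbf{I}$ for an ideally clustered graph and computes its rank by exact Gaussian elimination; the helper names `cluster_matrix` and `matrix_rank` and the label vector are illustrative assumptions, not part of the paper.

```python
from fractions import Fraction


def cluster_matrix(labels):
    """Entry (i, j) is 1 if nodes i and j share a cluster, else 0."""
    n = len(labels)
    return [[1 if labels[i] == labels[j] else 0 for j in range(n)]
            for i in range(n)]


def matrix_rank(rows):
    """Rank via Gaussian elimination over exact rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows = len(m)
    n_cols = len(m[0]) if m else 0
    rank = 0
    for col in range(n_cols):
        # Find a pivot in this column among the rows not yet used.
        pivot = next((r for r in range(rank, n_rows) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(rank + 1, n_rows):
            if m[r][col] != 0:
                factor = m[r][col] / m[rank][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[rank])]
        rank += 1
        if rank == n_rows:
            break
    return rank


# Three clusters of sizes 3, 2 and 1; nodes are deliberately not sorted by cluster.
labels = [0, 1, 0, 2, 1, 0]
n = len(labels)
K = cluster_matrix(labels)
# Ideal graph: cliques inside clusters, no edges across, zero diagonal.
A = [[K[i][j] if i != j else 0 for j in range(n)] for i in range(n)]
A_plus_I = [[A[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]

print(matrix_rank(A_plus_I))  # 3, the number of clusters
print(matrix_rank(A))         # 5, so adding I is what makes the matrix low-rank
```

The node order never enters the computation, which reflects the remark that re-ordering rows and columns does not change the rank.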
Figure 1: The adjacency matrix of a graph drawn from the planted partition model before and after proper reordering (i.e., clustering) of the nodes. The figure on the right is indicative of the matrix as a superposition of a sparse matrix and a low-rank block-diagonal one.

We propose to perform the matrix splitting using convex optimization (Chandrasekaran et al., 2011; Candès et al., 2011). Our approach consists of dropping any additional structural requirements, and just looking for a decomposition of the given $\mathbf{A} + \mathbf{I}$ as the sum of a sparse matrix $\mathbf{B}$ and a low-rank matrix $\mathbf{K}$. Recall that $\Omega_{\text{obs}}$ is the set of observed entries, i.e., the set of elements of $\mathbf{A}$ that are known to be 0 or 1; we use the following convex program:

$$\min_{\mathbf{B}, \mathbf{K}} \ \lambda \|\mathbf{B}\|_1 + \|\mathbf{K}\|_* \quad \text{s.t.} \quad \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{B} + \mathbf{K}) = \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{A} + \mathbf{I}). \tag{1}$$

Here, for any matrix $\mathbf{M}$, the term $\mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{M})$ keeps all elements of $\mathbf{M}$ in $\Omega_{\text{obs}}$ unchanged, and sets all other elements to 0; the constraints thus state that the sparse and low-rank matrices should in sum be consistent with the observed entries of $\mathbf{A} + \mathbf{I}$. The term $\|\mathbf{B}\|_1 = \sum_{i,j} |b_{i,j}|$ is the $\ell_1$ norm of the entries of the matrix $\mathbf{B}$, which is well known to be a convex surrogate for the number of non-zero entries $\|\mathbf{B}\|_0$. The second term $\|\mathbf{K}\|_* = \sum_s \sigma_s(\mathbf{K})$ is the nuclear norm (also known as the trace norm), defined as the sum of the singular values of $\mathbf{K}$. This has been shown to be the tightest convex surrogate for the rank function for matrices with unit spectral norm (Fazel, 2002). Thus our objective function is a convex surrogate for the (natural) combinatorial objective $\lambda \|\mathbf{B}\|_0 + \operatorname{rank}(\mathbf{K})$. The optimization problem (1) is, in fact, a semidefinite program (SDP) (Chandrasekaran et al., 2011).

We remark on the above formulation. (a) This formulation does not require specifying the number of clusters; this parameter is effectively learned from the data.
The tradeoff parameter $\lambda$ is artificial and can be easily determined: since any desired $\mathbf{K}^*$ has trace exactly equal to $n$, we simply choose the smallest $\lambda$ such that the trace of the optimal solution is at least $n$. This can be done by, e.g., bisection, which is described below. (b) It is possible to obtain tighter convex relaxations by adding more constraints, such as the diagonal entry constraints $k_{i,i} = 1, \forall i$, the positive semi-definite constraint $\mathbf{K} \succeq 0$, or even the triangle inequalities $k_{i,j} + k_{j,k} - k_{i,k} \le 1$. Indeed, this is done by Mathieu and Schudy (2010). Note that the guarantees for our formulation (to be presented in the next subsection) automatically imply guarantees for any other tighter relaxations. We choose to focus on our (looser) formulation for two reasons. First, and most importantly, even with the extra constraints, Mathieu and Schudy (2010) do not deliver better exact recovery guarantees (cf. Table 1). In fact, we show in Section 2.3 that our results are near optimal in some important regimes, so tighter relaxations do not seem to provide additional benefits in exact recovery. Second, our formulation can be solved efficiently using existing Augmented Lagrangian Multiplier methods (Lin et al., 2009). This is no longer the case with the $\Theta(n^3)$ triangle inequality constraints enforced by Mathieu and Schudy (2010), and solving it as a standard SDP is only feasible for small graphs.

We are interested in the case when the convex program (1) produces an optimal solution $\mathbf{K}$ that is a block-diagonal matrix and corresponds to an ideal clustering.
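To make the constraint in (1) concrete for such a block-diagonal $\mathbf{K}$, the sketch below builds the matching sparse part $\mathbf{B} = \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{A} + \mathbf{I} - \mathbf{K})$ and evaluates the objective. It is a minimal illustration under stated assumptions: unobserved entries are encoded as `None`, the function names and the toy graph are made up for the example, and the nuclear norm is replaced by $n$, which is only correct when $\mathbf{K}$ encodes a clustering (positive semidefinite with unit diagonal, so that $\|\mathbf{K}\|_* = \operatorname{trace}(\mathbf{K}) = n$).

```python
def cluster_matrix(labels):
    """Block-diagonal all-ones matrix (up to re-ordering) for a clustering."""
    n = len(labels)
    return [[1 if labels[i] == labels[j] else 0 for j in range(n)]
            for i in range(n)]


def disagreement_matrix(A, K):
    """B = P_obs(A + I - K), with unobserved entries of A given as None."""
    n = len(A)
    B = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                B[i][j] = 1 - K[i][j]        # diagonal of A + I is 1, always observed
            elif A[i][j] is not None:
                B[i][j] = A[i][j] - K[i][j]  # +1: edge across, -1: missing edge inside
    return B


def l1_norm(M):
    return sum(abs(x) for row in M for x in row)


def objective_for_clustering(A, labels, lam):
    """lam * ||B||_1 + ||K||_*, using ||K||_* = n for a clustering matrix K."""
    K = cluster_matrix(labels)
    return lam * l1_norm(disagreement_matrix(A, K)) + len(A)


U = None  # marker for an unobserved pair (hypothetical encoding)
A = [
    [0, 1, 0, U],
    [1, 0, 1, U],
    [0, 1, 0, 1],
    [U, U, 1, 0],
]

print(l1_norm(disagreement_matrix(A, cluster_matrix([0, 0, 1, 1]))))  # 2
print(l1_norm(disagreement_matrix(A, cluster_matrix([0, 0, 0, 1]))))  # 4
print(objective_for_clustering(A, [0, 0, 1, 1], lam=0.5))             # 5.0
```

Because the matrices are symmetric, each disagreeing pair contributes twice to $\|\mathbf{B}\|_1$, so the two clusterings above have one and two disagreements respectively.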
Definition 1 (Validity) The convex program (1) is said to produce a valid output if the low-rank matrix part $\mathbf{K}$ of the optimal solution corresponds to a graph of disjoint cliques; i.e., its rows and columns can be re-ordered to yield a block-diagonal matrix with all ones for each block.

Validity of a given $\mathbf{K}$ can be easily checked via elementary re-ordering operations (see footnote 2). Our first simple but useful insight is that whenever the convex program (1) yields a valid solution, it is the disagreement minimizer.

Theorem 2 For any $\lambda > 0$, if the solution of (1) is valid, then it is the clustering that minimizes the number of observed disagreements.

Our complete clustering procedure is given as Algorithm 1. It takes the adjacency matrix of the graph $\mathbf{A}$ and outputs either the optimal clustering or declares failure. Setting the parameter $\lambda$ is done via binary search. The initial value of $\lambda$ is not crucial; we use $\lambda = \frac{1}{32\sqrt{\bar{p}_0 n}}$ based on our theoretical analysis in the next sub-section, where $\bar{p}_0$ is the empirical fraction of observed pairs. To solve the optimization problem (1), we use the fast algorithm developed by Lin et al. (2009), which is tailored for matrix splitting and takes advantage of the sparsity of the observations. By Theorem 2, whenever the algorithm results in a valid $\mathbf{K}$, we have found the optimal clustering.

Algorithm 1 Optimal-Cluster($\mathbf{A}$)

1. $\lambda \leftarrow \frac{1}{32\sqrt{\bar{p}_0 n}}$
2. while not terminated do
   1. Solve (1) to obtain the solution $(\mathbf{B}, \mathbf{K})$
   2. if $\mathbf{K}$ is valid then output the clustering in $\mathbf{K}$ and EXIT
   3. else if $\operatorname{trace}(\mathbf{K}) > n$ then $\lambda \leftarrow \lambda / 2$
   4. else if $\operatorname{trace}(\mathbf{K}) < n$ then $\lambda \leftarrow 2\lambda$
   5. end if
3. end while
4. Declare Failure.

Footnote 2: If we re-order a valid $\mathbf{K}$ such that identical rows and columns appear together, it will become block-diagonal.
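The validity check of Definition 1 and the loop of Algorithm 1 can be sketched as follows. This is an illustration under assumptions, not the authors' implementation: the solver for (1) is left as a caller-supplied function `solve(A, lam)` (a hypothetical interface), the numerical tolerance `tol` and the iteration cap `max_iters` are added for the example, and the case where the trace equals $n$ but $\mathbf{K}$ is invalid, for which Algorithm 1 states no update, is treated here as failure.

```python
def clusters_if_valid(K, tol=1e-6):
    """Return the clusters encoded by K if K is valid (Definition 1), else None."""
    n = len(K)
    rounded = []
    for row in K:
        bits = []
        for x in row:
            if abs(x) <= tol:
                bits.append(0)
            elif abs(x - 1) <= tol:
                bits.append(1)
            else:
                return None              # an entry that is neither 0 nor 1
        rounded.append(tuple(bits))
    # Nodes with identical rows are candidates for the same cluster.
    groups = {}
    for i, row in enumerate(rounded):
        groups.setdefault(row, []).append(i)
    # In a valid K, each row is the indicator vector of the node's own cluster.
    for row, members in groups.items():
        if [j for j in range(n) if row[j] == 1] != members:
            return None
    return sorted(groups.values())


def optimal_cluster(A, lam, solve, max_iters=50):
    """Loop of Algorithm 1; `solve(A, lam)` is assumed to return (B, K) for (1)."""
    n = len(A)
    for _ in range(max_iters):
        _, K = solve(A, lam)
        clusters = clusters_if_valid(K)
        if clusters is not None:
            return clusters              # guaranteed optimal by Theorem 2
        trace = sum(K[i][i] for i in range(n))
        if trace > n:
            lam /= 2
        elif trace < n:
            lam *= 2
        else:
            break                        # assumption: no update rule applies, give up
    return None                          # "Declare Failure"


valid_K = [[1, 0, 1], [0, 1, 0], [1, 0, 1]]
invalid_K = [[1, 1, 0], [1, 1, 1], [0, 1, 1]]
print(clusters_if_valid(valid_K))    # [[0, 2], [1]]
print(clusters_if_valid(invalid_K))  # None
```

Grouping identical rows mirrors footnote 2: once identical rows and columns are placed together, a valid $\mathbf{K}$ is block-diagonal with all-ones blocks.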
2.2 Performance Analysis

For the main analytical contribution of this paper, we provide conditions under which the above algorithm will find the clustering that minimizes the number of disagreements among the observed entries. In particular, we characterize its performance under the standard and classical planted partition/stochastic block model with partial observations, which we now describe.

Definition 3 (Planted Partition Model with Partial Observations) Suppose that $n$ nodes are partitioned into $r$ clusters, each of size at least $K_{\min}$. Let $\mathbf{K}^*$ be the low-rank matrix corresponding to this clustering (as described above). The adjacency matrix $\mathbf{A}$ of the graph is generated as follows: for each pair of nodes $(i,j)$ in the same cluster, $a_{i,j} = \text{?}$ with probability $1 - p_0$, $a_{i,j} = 1$ with probability $p_0 p$, or $a_{i,j} = 0$ otherwise, independent of all others; similarly, for $(i,j)$ in different clusters, $a_{i,j} = \text{?}$ with probability $1 - p_0$, $a_{i,j} = 1$ with probability $p_0 q$, or $a_{i,j} = 0$ otherwise.

Under the above model, the graph is observed at locations chosen at random with probability $p_0$. In expectation a fraction of $1 - p$ of the in-cluster observations are disagreements; similarly, the fraction of disagreements in the across-cluster observations is $q$. Let $\mathbf{B}^* = \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{A} + \mathbf{I} - \mathbf{K}^*)$ be the matrix of observed disagreements for the original clustering; note that the support of $\mathbf{B}^*$ is contained in $\Omega_{\text{obs}}$. The following theorem provides a sufficient condition for our algorithm to recover the original clustering $(\mathbf{B}^*, \mathbf{K}^*)$ with high probability. Combined with Theorem 2, it also shows that under the same condition, the original clustering is disagreement minimizing with high probability.

Theorem 4 Let $\tau = \max\{1 - p, q\}$.
There exist universal positive constants $c$ and $C$ such that, with probability at least $1 - cn^{-10}$, the original clustering $(\mathbf{B}^*, \mathbf{K}^*)$ is the unique optimal solution of (1) with $\lambda = \frac{1}{32\sqrt{n p_0}}$ provided that

$$p_0 (1 - 2\tau)^2 \ge C \frac{n \log^2 n}{K_{\min}^2}. \tag{2}$$

Note that the quantity $\tau$ is (an upper bound of) the probability of having a disagreement, and $1 - 2\tau$ is (a lower bound of) the density gap $p - q$. The sufficient condition in the theorem is given in terms of the three parameters that define the problem: the minimum cluster size $K_{\min}$, the density gap $1 - 2\tau$, and the observation probability $p_0$. We remark on these parameters.

• Minimum cluster size $K_{\min}$. Since the left hand side of the condition (2) in Theorem 4 is no more than $1$, it imposes a lower bound $K_{\min} = \tilde{\Omega}(\sqrt{n})$ on the cluster sizes. This means that our method can handle a growing number $\tilde{O}(\sqrt{n})$ of clusters. The lower bound on $K_{\min}$ is attained when $1 - 2\tau$ and $p_0$ are both $\Theta(1)$, i.e., not decreasing as $n$ grows. Note that all relevant works require a lower bound at least as strong as ours (cf. Table 1).

• Density gap $1 - 2\tau$. When $p_0 = \Theta(1)$, our result allows this gap to be vanishingly small, i.e., $\tilde{\Omega}\left(\frac{\sqrt{n}}{K_{\min}}\right)$, where a larger $K_{\min}$ allows for a smaller gap. As we mentioned in Section 1.2, this matches the best available results (cf. Table 1), including those in Mathieu and Schudy (2010) and Oymak and Hassibi (2011), which use tighter convex relaxations that are more computationally demanding. We note that directly applying existing results in the low-rank-plus-sparse literature (Candès et al., 2011; Li, 2013) leads to weaker results, where the gap must be bounded below by a constant.

• Observation probability $p_0$. When $1 - 2\tau = \Theta(1)$, our result only requires a vanishing fraction of observations, i.e., $p_0$ can be as small as $\tilde{\Theta}\left(\frac{n}{K_{\min}^2}\right)$; a larger $K_{\min}$ allows for a smaller $p_0$. As mentioned in Section 1.2, this scaling is better than prior results we know of.

• Tradeoffs. A novel aspect of our result is that it shows an explicit tradeoff between the observation probability $p_0$ and the density gap $1 - 2\tau$. The left hand side of (2) is linear in $p_0$ and quadratic in $1 - 2\tau$. This means that if the number of observations is four times larger, then we can handle a 50% smaller density gap. Moreover, $p_0$ can go to zero quadratically faster than $1 - 2\tau$. Consequently, treating missing observations as disagreements would lead to quadratically weaker results. This agrees with the intuition that handling missing entries with known locations is easier than correcting disagreements whose locations are unknown.

We would like to point out that our algorithm has the capability to handle outliers. Suppose there are some isolated nodes which do not belong to any cluster, and they connect to each other and to each node in the clusters with probability at most $\tau$, with $\tau$ obeying the condition (2) in Theorem 4. Our algorithm will classify all these edges as disagreements, and hence automatically reveal the identity of each outlier. In the output of our algorithm, the low-rank part $\mathbf{K}$ will have all zeros in the columns and rows corresponding to outliers; all their edges will appear in the disagreement matrix $\mathbf{B}$.

2.3 Lower Bounds

We now discuss the tightness of Theorem 4. Consider first the case where $K_{\min} = \Theta(n)$, which means there are a constant number of clusters. We establish a fundamental lower bound on the density gap $1 - 2\tau$ and the observation probability $p_0$ that are required for any algorithm to correctly recover the clusters.
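Before the lower bound is stated, it may help to see the sufficient condition (2) of Theorem 4 as a small calculation. The sketch below is illustrative only: the universal constant $C$ is not specified in the text, so it is set to 1 as a placeholder, the logarithm is assumed to be natural, and the function names are made up for the example.

```python
import math


def condition_lhs(p0, tau):
    """Left hand side of (2): linear in p0, quadratic in the gap 1 - 2*tau."""
    return p0 * (1 - 2 * tau) ** 2


def condition_rhs(n, k_min, C=1.0):
    """Right hand side of (2); C = 1 and the natural log are placeholders."""
    return C * n * math.log(n) ** 2 / k_min ** 2


def min_observation_probability(n, k_min, tau, C=1.0):
    """Smallest p0 allowed by (2); a value above 1 means (2) cannot hold."""
    return condition_rhs(n, k_min, C) / (1 - 2 * tau) ** 2


# Tradeoff: four times more observations compensate for half the density gap.
base = condition_lhs(p0=0.1, tau=0.1)    # gap 0.8
traded = condition_lhs(p0=0.4, tau=0.3)  # gap 0.4
print(math.isclose(base, traded))        # True

# Larger clusters tolerate fewer observations (n and tau held fixed).
print(min_observation_probability(10_000, 5_000, tau=0.25))
print(min_observation_probability(10_000, 2_500, tau=0.25))
```

Since the true constant is unknown here, only the scaling of these numbers is meaningful, not their absolute values.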
Theorem 5 Under the planted partition model with partial observations, suppose the true clustering is chosen uniformly at random from all possible clusterings with equal cluster size $K$. If $K = \Theta(n)$ and $\tau = 1 - p = q > 1/100$, then for any algorithm to correctly identify the clusters with probability at least $\frac{3}{4}$, we need

$$p_0 (1 - 2\tau)^2 \ge C \frac{1}{n},$$

where $C > 0$ is an absolute constant.

Theorem 5 generalizes a similar result in Chaudhuri et al. (2012), which does not consider partial observations. The theorem applies to any algorithm regardless of its computational complexity, and characterizes the fundamental tradeoff between $p_0$ and $1 - 2\tau$. It shows that when $K_{\min} = \Theta(n)$, the requirement for $1 - 2\tau$ and $p_0$ in Theorem 4 is optimal up to logarithmic factors, and cannot be significantly improved by using more complicated methods.

For the general case with $K_{\min} = O(n)$, only part of the picture is known. Using non-rigorous arguments, Decelle et al. (2011) show that $1 - 2\tau \gtrsim \frac{\sqrt{n}}{K_{\min}}$ is necessary when $\tau = \Theta(1)$ and the graph is fully observed; otherwise recovery is impossible or computationally hard. According to this lower bound, our requirement on the density gap $1 - 2\tau$ is probably tight (up to log factors) for all $K_{\min}$. However, a rigorous proof of this claim is still lacking, and seems to be a difficult problem. Similarly, the tightness of our condition on $p_0$ and the tradeoff between $p_0$ and $\tau$ is also unclear in this regime.

3. Proofs

In this section, we prove Theorems 2 and 4. The proof of Theorem 5 is deferred to Appendix B.

3.1 Proof of Theorem 2

We first prove Theorem 2, which says that if the optimization problem (1) produces a valid matrix, i.e., one that corresponds to a clustering of the nodes, then this is the disagreement minimizing clustering. Consider the following non-convex optimization problem

$$\min_{\mathbf{B}, \mathbf{K}} \ \lambda \|\mathbf{B}\|_1 + \|\mathbf{K}\|_* \quad \text{s.t.} \quad \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{B} + \mathbf{K}) = \mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{I} + \mathbf{A}), \quad \mathbf{K} \text{ is valid}, \tag{3}$$

and let $(\mathbf{B}, \mathbf{K})$ be any feasible solution. Since $\mathbf{K}$ represents a valid clustering, it is positive semidefinite and has all ones along its diagonal. Therefore, it obeys $\|\mathbf{K}\|_* = \operatorname{trace}(\mathbf{K}) = n$. On the other hand, because both $\mathbf{K} - \mathbf{I}$ and $\mathbf{A}$ are adjacency matrices, the entries of $\mathbf{B} = \mathbf{I} + \mathbf{A} - \mathbf{K}$ in $\Omega_{\text{obs}}$ must be equal to $-1$, $1$ or $0$ (i.e., it is a disagreement matrix). Clearly any optimal $\mathbf{B}$ must have zeros at the entries in $\Omega_{\text{obs}}^c$. Hence $\|\mathbf{B}\|_1 = \|\mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{B})\|_0$ when $\mathbf{K}$ is valid. We thus conclude that the above optimization problem (3) is equivalent to minimizing $\|\mathcal{P}_{\Omega_{\text{obs}}}(\mathbf{B})\|_0$ subject to the same constraints. This is exactly the minimization of the number of disagreements on the observed edges. Now notice that (1) is a relaxed version of (3). Therefore, if the optimal solution of (1) is valid and thus feasible to (3), then it is also optimal to (3), the disagreement minimization problem.

3.2 Proof of Theorem 4

We now turn to the proof of Theorem 4, which provides guarantees for when the convex program (1) recovers the true clustering $(\mathbf{B}^*, \mathbf{K}^*)$.

3.2.1 Proof Outline and Preliminaries

We overview the main steps in the proof of Theorem 4; details are provided in Sections 3.2.2 to 3.2.4 to follow. We would like to show that the pair $(\mathbf{B}^*, \mathbf{K}^*)$ corresponding to the true clustering is the unique optimal solution to our convex program (1). This involves the following three steps.

Step 1: We show that it suffices to consider an equivalent model for the observation and disagreements. This model is easier to handle, especially when the observation probability and density gap are vanishingly small, which is the regime of interest in this paper.

Step 2: We write down the sub-gradient based first-order sufficient conditions that need to be satisfied for $(\mathbf{B}^*, \mathbf{K}^*)$ to be the unique optimum of (1).
In our case, this involves showing the existence of a matrix $W$, the dual certificate, that satisfies certain properties. This step is technical, requiring us to deal with the intricacies of sub-gradients since our convex function is not smooth, but otherwise standard. Luckily for us, this has been done previously (Chandrasekaran et al., 2011; Candès et al., 2011; Li, 2013).

Step 3: Using the assumptions made on the true clustering $K^*$ and its disagreements $B^*$, we construct a candidate dual certificate $W$ that meets the requirements in Step 2, and thus certify $(B^*, K^*)$ as being the unique optimum.

The crucial Step 3 is where we go beyond the existing literature on matrix splitting (Chandrasekaran et al., 2011; Candès et al., 2011; Li, 2013). These results assume the observation probability and/or density gap is at least a constant, and hence do not apply to our setting. Here we provide a refined analysis, which leads to better performance guarantees than those that could be obtained via a direct application of existing sparse and low-rank matrix splitting results.

Next, we introduce some notation used in the rest of the proof of the theorem. The following definitions related to $K^*$ are standard. By symmetry, the SVD of $K^*$ has the form $U \Sigma U^\top$, where $U \in \mathbb{R}^{n \times r}$ contains the singular vectors of $K^*$. We define the subspace
$$T := \left\{ U X^\top + Y U^\top : X, Y \in \mathbb{R}^{n \times r} \right\},$$
which is spanned by all matrices that share either the same column space or the same row space as $K^*$. For any matrix $M \in \mathbb{R}^{n \times n}$, its orthogonal projection to the space $T$ is given by
$$\mathcal{P}_T(M) = U U^\top M + M U U^\top - U U^\top M U U^\top.$$
The projection onto $T^\perp$, the orthogonal complement of $T$, is given by $\mathcal{P}_{T^\perp}(M) = M - \mathcal{P}_T(M)$.

The following definitions are related to $B^*$ and partial observations.
Let $\Omega^* = \{(i, j) : b^*_{i,j} \neq 0\}$ be the set of matrix entries corresponding to the disagreements. Recall that $\Omega_{\mathrm{obs}}$ is the set of observed entries. For any matrix $M$ and entry set $\Omega_0$, we let $\mathcal{P}_{\Omega_0}(M) \in \mathbb{R}^{n \times n}$ be the matrix obtained from $M$ by setting all entries not in the set $\Omega_0$ to zero. We write $\Omega_0 \sim \mathrm{Ber}_0(p)$ if the entry set $\Omega_0$ does not contain the diagonal entries, and each pair $(i, j)$ and $(j, i)$ ($i \neq j$) is contained in $\Omega_0$ with probability $p$, independent of all others; $\Omega_0 \sim \mathrm{Ber}_1(p)$ is defined similarly except that $\Omega_0$ contains all the diagonal entries. Under our partially observed planted partition model, we have $\Omega_{\mathrm{obs}} \sim \mathrm{Ber}_1(p_0)$ and $\Omega^* \sim \mathrm{Ber}_0(\tau)$.

Several matrix norms are used. $\|M\|$ and $\|M\|_F$ represent the spectral and Frobenius norms of the matrix $M$, respectively, and $\|M\|_\infty := \max_{i,j} |m_{i,j}|$ is the matrix infinity norm.

3.2.2 Step 1: Equivalent Model for Observations and Disagreements

It is easy to show that increasing $p$ or decreasing $q$ can only make the probability of success higher, so without loss of generality we assume $1 - p = q = \tau$. Observe that the probability of success is completely determined by the distribution of $(\Omega_{\mathrm{obs}}, B^*)$ under the planted partition model with partial observations. The first step is to show that it suffices to consider an equivalent model for generating $(\Omega_{\mathrm{obs}}, B^*)$, which results in the same distribution but is easier to handle. This is in the same spirit as Candès et al. (2011, Theorems 2.2 and 2.3) and Li (2013, Section 4.1). In particular, we consider the following procedure:

1. Let $\Gamma \sim \mathrm{Ber}_1\big(p_0 (1 - 2\tau)\big)$, and $\Omega \sim \mathrm{Ber}_0\!\left(\frac{2 p_0 \tau}{1 - p_0 + 2 p_0 \tau}\right)$. Let $\Omega_{\mathrm{obs}} = \Gamma \cup \Omega$.
2. Let $S$ be a symmetric random matrix whose upper-triangular entries are independent and satisfy $\mathbb{P}(s_{i,j} = 1) = \mathbb{P}(s_{i,j} = -1) = \frac{1}{2}$.
3. Define $\Omega_0 \subseteq \Omega$ as $\Omega_0 = \left\{ (i, j) : (i, j) \in \Omega,\ s_{i,j} = 1 - 2 k^*_{i,j} \right\}$.
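In other words, $\Omega_0$ is the set of entries of $S$ whose signs are consistent with a disagreement matrix.
4. Define $\Omega^* = \Omega_0 \setminus \Gamma$, and $\tilde{\Gamma} = \Omega_{\mathrm{obs}} \setminus \Omega^*$.
5. Let $B^* = \mathcal{P}_{\Omega^*}(S)$.

It is easy to verify that $(\Omega_{\mathrm{obs}}, B^*)$ has the same distribution as in the original model. In particular, we have $\mathbb{P}[(i, j) \in \Omega_{\mathrm{obs}}] = p_0$, $\mathbb{P}[(i, j) \in \Omega^*, (i, j) \in \Omega_{\mathrm{obs}}] = p_0 \tau$ and $\mathbb{P}[(i, j) \in \Omega^*, (i, j) \notin \Omega_{\mathrm{obs}}] = 0$, and observe that given $K^*$, $B^*$ is completely determined by its support $\Omega^*$.

The advantage of the above model is that $\Gamma$ and $\Omega$ are independent of each other, and $S$ has random signed entries. This facilitates the construction of the dual certificate, especially in the regime of vanishing $p_0$ and $\frac{1}{2} - \tau$ considered in this paper. We use this equivalent model in the rest of the proof.

3.2.3 Step 2: Sufficient Conditions for Optimality

We state the first-order conditions that guarantee $(B^*, K^*)$ to be the unique optimum of (1) with high probability. Here and henceforth, by with high probability we mean with probability at least $1 - c n^{-10}$ for some universal constant $c > 0$. The following lemma follows from Theorem 4.4 in Li (2013) and the discussion thereafter.

Lemma 6 (Optimality Condition) Suppose $\left\| \frac{1}{(1 - 2\tau) p_0} \mathcal{P}_T \mathcal{P}_\Gamma \mathcal{P}_T - \mathcal{P}_T \right\| \le \frac{1}{2}$. Then $(B^*, K^*)$ is the unique optimal solution to (1) with high probability if there exists $W \in \mathbb{R}^{n \times n}$ such that

1. $\left\| \mathcal{P}_T\big(W + \lambda \mathcal{P}_\Omega(S) - U U^\top\big) \right\|_F \le \frac{\lambda}{n^2}$,
2. $\left\| \mathcal{P}_{T^\perp}\big(W + \lambda \mathcal{P}_\Omega(S)\big) \right\| \le \frac{1}{4}$,
3. $\mathcal{P}_{\Gamma^c}(W) = 0$,
4. $\left\| \mathcal{P}_\Gamma(W) \right\|_\infty \le \frac{\lambda}{4}$.

Lemma 9 in the appendix guarantees that the condition $\left\| \frac{1}{(1 - 2\tau) p_0} \mathcal{P}_T \mathcal{P}_\Gamma \mathcal{P}_T - \mathcal{P}_T \right\| \le \frac{1}{2}$ is satisfied with high probability under the assumption of Theorem 4. It remains to show the existence of a desired dual certificate $W$ which satisfies the four conditions in Lemma 6 with high probability.

3.2.4 Step 3: Dual Certificate Construction

We use a variant of the so-called golfing scheme (Candès et al., 2011; Gross, 2011) to construct $W$. Our application of the golfing scheme, as well as its analysis, is different from previous work and leads to stronger guarantees. In particular, we go beyond existing results by allowing the fraction of observed entries and the density gap to be vanishing.

By definition in Section 3.2.2, $\Gamma$ obeys $\Gamma \sim \mathrm{Ber}_1\big(p_0 (1 - 2\tau)\big)$. Observe that $\Gamma$ may be considered to be generated by $\Gamma = \bigcup_{1 \le k \le k_0} \Gamma_k$, where the sets $\Gamma_k \sim \mathrm{Ber}_1(t)$ are independent; here the parameter $t$ obeys $p_0 (1 - 2\tau) = 1 - (1 - t)^{k_0}$, and $k_0$ is chosen to be $\lceil 5 \log n \rceil$. This implies $t \ge p_0 (1 - 2\tau) / k_0 \ge C_0 \frac{n \log n}{K_{\min}^2}$ for some constant $C_0$, where the last inequality holds under the assumption of Theorem 4. For any random entry set $\Omega_0 \sim \mathrm{Ber}_1(\rho)$, define the operator $\mathcal{R}_{\Omega_0} : \mathbb{R}^{n \times n} \mapsto \mathbb{R}^{n \times n}$ by
$$\mathcal{R}_{\Omega_0}(M) = \sum_{i=1}^{n} m_{i,i}\, e_i e_i^\top + \rho^{-1} \sum_{1 \le i < j \le n} \delta_{ij}\, m_{i,j} \left( e_i e_j^\top + e_j e_i^\top \right),$$
where $\delta_{ij} = \mathbb{1}\{(i, j) \in \Omega_0\}$ and $e_i$ denotes the $i$-th standard basis vector. The dual certificate is $W := W_{k_0}$, built recursively from $W_0 = 0$ via
$$W_k = W_{k-1} + \mathcal{R}_{\Gamma_k} \Delta_{k-1}, \qquad \Delta_k := U U^\top - \lambda \mathcal{P}_T \mathcal{P}_\Omega(S) - \mathcal{P}_T(W_k), \qquad k = 1, \ldots, k_0.$$
Since each $\Delta_k$ lies in $T$, this construction gives
$$\Delta_k = \prod_{i=1}^{k} \left( \mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Gamma_i} \mathcal{P}_T \right) \left( U U^\top - \lambda \mathcal{P}_T \mathcal{P}_\Omega(S) \right), \tag{4}$$
$$W_{k_0} = \sum_{k=1}^{k_0} \mathcal{R}_{\Gamma_k} \Delta_{k-1}. \tag{5}$$
Because every $\mathcal{R}_{\Gamma_k}$ is supported on $\Gamma_k \subseteq \Gamma$, condition 3 of Lemma 6 holds by construction; it remains to verify the three inequalities.

Inequality 1: By the definition of $\Delta_{k_0}$ and (4), we have
$$\left\| \mathcal{P}_T\big(W + \lambda \mathcal{P}_\Omega(S) - U U^\top\big) \right\|_F = \left\| \Delta_{k_0} \right\|_F \le \left( \prod_{k=1}^{k_0} \left\| \mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Gamma_k} \mathcal{P}_T \right\| \right) \left\| U U^\top - \lambda \mathcal{P}_T \mathcal{P}_\Omega(S) \right\|_F$$
$$\overset{(a)}{\le} e^{-k_0} \left( \left\| U U^\top \right\|_F + \lambda \left\| \mathcal{P}_T \mathcal{P}_\Omega(S) \right\|_F \right) \overset{(b)}{\le} n^{-5} (n + \lambda \cdot n) \le (1 + \lambda) n^{-4} \overset{(c)}{\le} \frac{1}{2 n^2} \lambda.$$
Here, the inequality (a) follows from Lemma 9 with $\epsilon_1 = e^{-1}$, (b) follows from our choices of $\lambda$ and $k_0$ and the fact that $\|\mathcal{P}_T \mathcal{P}_\Omega(S)\|_F \le \|\mathcal{P}_\Omega(S)\|_F \le n$, and (c) holds under the assumption $\lambda \ge \frac{1}{32 \sqrt{n}}$ in the theorem.

Inequality 4: We have
$$\left\| \mathcal{P}_\Gamma(W) \right\|_\infty = \left\| \mathcal{P}_\Gamma(W_{k_0}) \right\|_\infty \le \sum_{k=1}^{k_0} \left\| \mathcal{R}_{\Gamma_k} \Delta_{k-1} \right\|_\infty \le t^{-1} \sum_{k=1}^{k_0} \left\| \Delta_{k-1} \right\|_\infty,$$
where the first inequality follows from (5) and the triangle inequality.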
We proceed to obtain
$$\sum_{k=1}^{k_0} \left\| \Delta_{k-1} \right\|_\infty \overset{(a)}{=} \sum_{k=1}^{k_0} \left\| \prod_{i=1}^{k-1} \left( \mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Gamma_i} \mathcal{P}_T \right) \left( U U^\top - \lambda \mathcal{P}_T \mathcal{P}_\Omega(S) \right) \right\|_\infty \overset{(b)}{\le} \sum_{k=1}^{k_0} \left( \frac{1}{2} \right)^{k} \left\| U U^\top - \lambda \mathcal{P}_T \mathcal{P}_\Omega(S) \right\|_\infty \overset{(c)}{\le} \frac{1}{K_{\min}} + \lambda \sqrt{\frac{p_0 n \log n}{K_{\min}^2}}, \tag{6}$$
where (a) follows from (4), (b) follows from Lemma 11 and (c) follows from Lemma 12. It follows that
$$\left\| \mathcal{P}_\Gamma(W) \right\|_\infty \le \frac{1}{t} \left( \frac{1}{K_{\min}} + \lambda \sqrt{\frac{p_0 n \log n}{K_{\min}^2}} \right) \le \frac{k_0}{p_0 (1 - 2\tau)} \left( \frac{1}{K_{\min}} + \lambda \sqrt{\frac{p_0 n \log n}{K_{\min}^2}} \right) \le \frac{1}{4} \lambda,$$
where the last inequality holds under the assumption of Theorem 4.

Inequality 2: Observe that by the triangle inequality, we have
$$\left\| \mathcal{P}_{T^\perp}\big(W + \lambda \mathcal{P}_\Omega(S)\big) \right\| \le \lambda \left\| \mathcal{P}_{T^\perp}\big(\mathcal{P}_\Omega(S)\big) \right\| + \left\| \mathcal{P}_{T^\perp}(W_{k_0}) \right\|.$$
For the first term, standard results on the norm of a matrix with i.i.d. entries (e.g., see Vershynin 2010) give
$$\lambda \left\| \mathcal{P}_{T^\perp}\big(\mathcal{P}_\Omega(S)\big) \right\| \le \lambda \left\| \mathcal{P}_\Omega(S) \right\| \le \frac{1}{32 \sqrt{p_0 n}} \cdot 4 \sqrt{\frac{2 p_0 \tau n}{1 - p_0 + 2 p_0 \tau}} \le \frac{1}{8}.$$
It remains to show that the second term is bounded by $\frac{1}{8}$. To this end, we observe that
$$\left\| \mathcal{P}_{T^\perp}(W_{k_0}) \right\| \overset{(a)}{=} \left\| \sum_{k=1}^{k_0} \mathcal{P}_{T^\perp}\left( \mathcal{R}_{\Gamma_k} \Delta_{k-1} - \Delta_{k-1} \right) \right\| \le \sum_{k=1}^{k_0} \left\| \left( \mathcal{R}_{\Gamma_k} - \mathcal{I} \right) \Delta_{k-1} \right\| \overset{(b)}{\le} C \sqrt{\frac{n \log n}{t}} \sum_{k=1}^{k_0} \left\| \Delta_{k-1} \right\|_\infty$$
$$\overset{(c)}{\le} C \sqrt{\frac{k_0 n \log n}{p_0 (1 - 2\tau)}} \left( \frac{1}{K_{\min}} + \lambda \sqrt{\frac{p_0 n \log n}{K_{\min}^2}} \right) \le \frac{1}{8},$$
where in (a) we use (5) and the fact that $\Delta_k \in T$, (b) follows from Lemma 10, and (c) follows from (6). This completes the proof of Theorem 4.

Figure 2: Simulation results verifying the performance of our algorithm as a function of the observation probability $p_0$ and the graph size $n$. The left pane shows the probability of successful recovery under different $p_0$ and $n$ with fixed $\tau = 0.2$ and $K_{\min} = n/4$; each point is an average over 5 trials.
After proper rescaling of the x-axis, the curves align as shown in the right pane, indicating a good match with our theoretical results.

4. Experimental Results

We explore via simulation the performance of our algorithm as a function of the values of the model parameters $(n, K_{\min}, p_0, \tau)$. We see that the performance matches well with the theory.

In the experiment, each test case is constructed by generating a graph with $n$ nodes divided into clusters of equal size $K_{\min}$, and then placing a disagreement on each pair of nodes with probability $\tau$ independently. Each node pair is then observed with probability $p_0$. We then run Algorithm 1, where the optimization problem (1) is solved using the fast algorithm in Lin et al. (2009). We check whether the algorithm outputs a solution that equals the underlying true clusters.

In the first set of experiments, we fix $\tau = 0.2$ and $K_{\min} = n/4$ and vary $p_0$ and $n$. For each $(p_0, n)$, we repeat the experiment 5 times and plot the probability of success in the left pane of Figure 2. One observes that our algorithm has better performance with larger $p_0$ and $n$, and the success probability exhibits a phase transition. Theorem 4 predicts that, with $\tau$ fixed and $K_{\min} = n/4$, the transition occurs at $p_0 \propto \frac{n \log^2 n}{K_{\min}^2} \propto \frac{\log^2 n}{n}$; in particular, if we plot the success probability against the control parameter $\frac{p_0 n}{\log^2 n}$, all curves should align with each other. Indeed, this is precisely what we see in the right pane of Figure 2, where we use $\frac{p_0 n}{\log n}$ as the control parameter. This shows that Theorem 4 gives the correct scaling between $p_0$ and $n$ up to an extra log factor.

In a similar fashion, we run another three sets of experiments with the following settings: (1) $n = 1000$ and $\tau = 0.2$ with varying $(p_0, K_{\min})$; (2) $K_{\min} = n/4$ and $p_0 = 0.2$ with varying $(\tau, n)$; (3) $n = 1000$ and $p_0 = 0.6$ with varying $(\tau, K_{\min})$.
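The test-case construction described in this section can be sketched in a few lines. The following is a minimal illustration using only the Python standard library; it is not the authors' simulation code, and the names `planted_partition` and `observed_disagreements` are hypothetical helpers introduced here. It assumes equal-size clusters and stores only the observed node pairs.

```python
import random
from itertools import combinations


def planted_partition(n, K, tau, p0, seed=0):
    """Sample a partially observed planted-partition graph (illustrative sketch).

    Nodes 0..n-1 are split into n/K clusters of size K. Each unordered pair is
    flipped into a disagreement with probability tau and is observed with
    probability p0. Returns (edges, labels), where edges maps each observed
    pair (i, j), i < j, to 1 (edge) or 0 (no edge).
    """
    if n % K != 0:
        raise ValueError("n must be a multiple of the cluster size K")
    rng = random.Random(seed)
    labels = [i // K for i in range(n)]
    edges = {}
    for i, j in combinations(range(n), 2):
        same_cluster = labels[i] == labels[j]
        disagree = rng.random() < tau   # flip the ideal edge / non-edge
        observed = rng.random() < p0    # unobserved pairs are simply omitted
        if observed:
            edges[(i, j)] = int(same_cluster != disagree)
    return edges, labels


def observed_disagreements(edges, labels):
    """Count observed missing edges within clusters plus observed edges across clusters."""
    return sum(
        1
        for (i, j), a in edges.items()
        if (a == 1) != (labels[i] == labels[j])
    )
```

For instance, `planted_partition(200, 50, 0.2, 0.5)` corresponds to one draw with $n = 200$, $K_{\min} = n/4$, $\tau = 0.2$ and $p_0 = 0.5$; passing the true labels to `observed_disagreements` gives the number of disagreements that the planted clustering incurs on the observed pairs.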
The results are shown in Figures 3, 4 and 5; note that each x-axis corresponds to a control parameter chosen according to the scaling predicted by Theorem 4. Again we observe that all the curves roughly align, indicating a good match with the theory. In particular, by comparing Figures 2 and 4 (or Figures 3 and 5), one verifies the quadratic tradeoff between observations and disagreements (i.e., $p_0$ vs. $1 - 2\tau$) as predicted by Theorem 4.

Finally, we compare the performance of our method with spectral clustering, a popular method for graph clustering. For spectral clustering, we first impute the missing entries of the adjacency matrix with either zeros or random 1/0's. We then compute the first $k$ principal components of the adjacency matrix, and run $k$-means clustering on the principal components (von Luxburg, 2007); here we set $k$ equal to the number of clusters. The adjacency matrix is generated in the same fashion as before using the parameters $n = 2000$, $K_{\min} = 200$ and $\tau = 0.1$. We vary the observation probability $p_0$ and plot the success probability in Figure 6. It can be observed that our method outperforms spectral clustering with both imputation schemes; in particular, it requires fewer observations.

Figure 3: Simulation results verifying the performance of our algorithm as a function of the observation probability $p_0$ and the cluster size $K_{\min}$, with $n = 1000$ and $\tau = 0.2$ fixed.

Figure 4: Simulation results verifying the performance of our algorithm as a function of the disagreement probability $\tau$ and the graph size $n$, with $p_0 = 0.2$ and $K_{\min} = n/4$ fixed.

Figure 5: Simulation results verifying the performance of our algorithm as a function of the disagreement probability $\tau$ and the cluster size $K_{\min}$, with $n = 1000$ and $p_0 = 0.6$ fixed.

Figure 6: Comparison of our method and spectral clustering under different observation probabilities $p_0$, with $n = 2000$, $K_{\min} = 200$ and $\tau = 0.1$. For spectral clustering, two imputation schemes are considered: (a) Spectral (Zero), where the missing entries are imputed with zeros, and (b) Spectral (Rand), where they are imputed with 0/1 random variables with symmetric probabilities. The result shows that our method recovers the underlying clusters with fewer observations.

5. Conclusion

We proposed a convex optimization formulation, based on a reduction to decomposing low-rank and sparse matrices, to address the problem of clustering partially observed graphs. We showed that under a wide range of parameters of the planted partition model with partial observations, our method is guaranteed to find the optimal (disagreement-minimizing) clustering. In particular, our method succeeds under higher levels of noise and/or missing observations than existing methods in this setting. The effectiveness of the proposed method and the scaling of the theoretical results are validated by simulation studies.

This work is motivated by graph clustering applications where obtaining similarity data is expensive and it is desirable to use as few observations as possible. As such, potential directions for future work include considering different sampling schemes such as active sampling, as well as dealing with sparse graphs with very few connections.
Acknowledgments

S. Sanghavi would like to acknowledge DTRA grant HDTRA1-13-1-0024 and NSF grants 1302435, 1320175 and 0954059. H. Xu is partially supported by the Ministry of Education of Singapore through AcRF Tier Two grant R-265-000-443-112. The authors are grateful to the anonymous reviewers for their thorough reviews of this work and valuable suggestions on improving the manuscript.

Appendix A. Technical Lemmas

In this section, we provide several auxiliary lemmas required in the proof of Theorem 4. We will make use of the non-commutative Bernstein inequality. The following version is given by Tropp (2012).

Lemma 7 (Tropp, 2012) Consider a finite sequence $\{M_i\}$ of independent, random $n \times n$ matrices that satisfy the assumption $\mathbb{E} M_i = 0$ and $\|M_i\| \le D$ almost surely. Let
$$\sigma^2 = \max\left\{ \left\| \sum_i \mathbb{E}\left[ M_i M_i^\top \right] \right\|,\ \left\| \sum_i \mathbb{E}\left[ M_i^\top M_i \right] \right\| \right\}.$$
Then for all $\theta > 0$ we have
$$\mathbb{P}\left[ \left\| \sum_i M_i \right\| \ge \theta \right] \le 2n \exp\left( -\frac{\theta^2}{2\sigma^2 + 2D\theta/3} \right) \le \begin{cases} 2n \exp\left( -\frac{3\theta^2}{8\sigma^2} \right), & \text{for } \theta \le \frac{\sigma^2}{D}; \\ 2n \exp\left( -\frac{3\theta}{8D} \right), & \text{for } \theta \ge \frac{\sigma^2}{D}. \end{cases} \tag{7}$$

Remark 8 When $n = 1$, this becomes the standard two-sided Bernstein inequality.

We will also make use of the following estimate, which follows from the structure of $U$:
$$\left\| \mathcal{P}_T(e_i e_j^\top) \right\|_F^2 = \left\| U U^\top e_i \right\|^2 + \left\| U U^\top e_j \right\|^2 - \left\| U U^\top e_i \right\|^2 \left\| U U^\top e_j \right\|^2 \le \frac{2n}{K_{\min}^2}, \quad \forall\, 1 \le i, j \le n.$$

The first auxiliary lemma controls the operator norm of certain random operators. A similar result was given in Candès et al. (2011, Theorem 4.1). Our proof is different from theirs.

Lemma 9 Suppose $\Omega_0$ is a set of entries obeying $\Omega_0 \sim \mathrm{Ber}_1(\rho)$. Consider the operator $\mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T$. For some constant $C_0 > 0$, we have
$$\left\| \mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T \right\| < \epsilon_1$$
with high probability provided that $\rho \ge C_0 \frac{n \log n}{\epsilon_1^2 K_{\min}^2}$ and $\epsilon_1 \le 1$.

Proof For each $(i, j)$, define the indicator random variable $\delta_{ij} = \mathbb{1}\{(i, j) \in \Omega_0\}$.
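We observe that for any matrix $M \in T$,
$$\left( \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T - \mathcal{P}_T \right) M = \sum_{1 \le i < j \le n} \mathcal{S}_{ij}(M) := \sum_{1 \le i < j \le n} \left( \rho^{-1} \delta_{ij} - 1 \right) \left\langle \mathcal{P}_T(e_i e_j^\top), M \right\rangle \mathcal{P}_T\left( e_i e_j^\top + e_j e_i^\top \right).$$
Here $\mathcal{S}_{ij} : \mathbb{R}^{n \times n} \mapsto \mathbb{R}^{n \times n}$ is a linear self-adjoint operator with $\mathbb{E}[\mathcal{S}_{ij}] = 0$. Using the fact that $\mathcal{P}_T(e_i e_j^\top) = \mathcal{P}_T(e_j e_i^\top)^\top$ and $M$ is symmetric, we obtain the bounds
$$\left\| \mathcal{S}_{ij} \right\| \le \rho^{-1} \left\| \mathcal{P}_T(e_i e_j^\top) \right\|_F \left\| \mathcal{P}_T(e_i e_j^\top + e_j e_i^\top) \right\|_F \le \rho^{-1} \cdot 2 \left\| \mathcal{P}_T(e_i e_j^\top) \right\|_F^2 \le \frac{4n}{K_{\min}^2 \rho},$$
and
$$\left\| \mathbb{E}\left[ \sum_{1 \le i < j \le n} \mathcal{S}_{ij}^2(M) \right] \right\|_F \;[\ldots]\; \le \left( \rho^{-1} - 1 \right) \frac{4n}{K_{\min}^2} \left\| \sum_{1 \le i < j \le n} m_{i,j} \left( e_i e_j^\top + e_j e_i^\top \right) \right\|_F = \left( \rho^{-1} - 1 \right) \frac{4n}{K_{\min}^2} \left\| M \right\|_F,$$
which means […]

Lemma 10 […] For some constant $C_0 > 0$, we have
$$\left\| \left( \mathcal{I} - \mathcal{R}_{\Omega_0} \right) M \right\| < \sqrt{C_0 \frac{n \log n}{\rho}}\, \left\| M \right\|_\infty,$$
with high probability provided that $\rho \ge C_0 \frac{\log n}{n}$.

Proof Define $\delta_{ij}$ as before. Notice that
$$\mathcal{R}_{\Omega_0}(M) - M = \sum_{i < j} S_{ij} := \sum_{i < j} \left( \rho^{-1} \delta_{ij} - 1 \right) m_{i,j} \left( e_i e_j^\top + e_j e_i^\top \right).$$
Here the symmetric matrix $S_{ij} \in \mathbb{R}^{n \times n}$ satisfies $\mathbb{E}[S_{ij}] = 0$, $\|S_{ij}\| \le 2 \rho^{-1} \|M\|_\infty$ and the bound
$$\left\| \mathbb{E}\left[ \sum_{i < j} S_{ij}^2 \right] \right\| = \left( \rho^{-1} - 1 \right) \left\| \sum_{i < j} m_{i,j}^2 \left( e_i e_i^\top + e_j e_j^\top \right) \right\| \le \left( \rho^{-1} - 1 \right) \left\| \mathrm{diag}\left( \sum_j m_{1,j}^2, \ldots, \sum_j m_{n,j}^2 \right) \right\| \le \left( \rho^{-1} - 1 \right) n \left\| M \right\|_\infty^2 \le 2 \rho^{-1} n \left\| M \right\|_\infty^2.$$
When $\rho \ge \frac{16 \beta \log n}{3n}$, we apply the first inequality in the Bernstein inequality (7) to obtain […]

Lemma 11 […] For some constant $C_0 > 0$, we have
$$\left\| \left( \mathcal{P}_T - \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T \right) M \right\|_\infty < \epsilon_3 \left\| M \right\|_\infty,$$
with high probability provided that $\rho \ge C_0 \frac{n \log n}{\epsilon_3^2 K_{\min}^2}$ and $\epsilon_3 \le 1$.

Proof Define $\delta_{ij}$ as before. Fix an entry index $(a, b)$. Notice that
$$\left( \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T M - \mathcal{P}_T M \right)_{a,b} = \sum_{i < j} \xi_{ij} := \sum_{i < j} \left( \rho^{-1} \delta_{ij} - 1 \right) m_{i,j} \left\langle \mathcal{P}_T\left( e_i e_j^\top + e_j e_i^\top \right), e_a e_b^\top \right\rangle.$$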
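The random variable $\xi_{ij}$ satisfies $\mathbb{E}[\xi_{ij}] = 0$ and obeys the bounds
$$\left| \xi_{ij} \right| \le 2 \rho^{-1} \left\| \mathcal{P}_T(e_i e_j^\top) \right\|_F \left\| \mathcal{P}_T(e_a e_b^\top) \right\|_F \left| m_{i,j} \right| \le \frac{4n}{K_{\min}^2 \rho} \left\| M \right\|_\infty$$
and
$$\mathbb{E}\left[ \sum_{i < j} \xi_{ij}^2 \right] = \left( \rho^{-1} - 1 \right) \sum_{i < j} m_{i,j}^2 \left\langle \mathcal{P}_T\left( e_i e_j^\top + e_j e_i^\top \right), e_a e_b^\top \right\rangle^2 \le \left( \rho^{-1} - 1 \right) \left\| M \right\|_\infty^2 \sum_{i < j} \left\langle e_i e_j^\top + e_j e_i^\top, \mathcal{P}_T(e_a e_b^\top) \right\rangle^2$$
$$\le 2 \left( \rho^{-1} - 1 \right) \left\| M \right\|_\infty^2 \left\| \mathcal{P}_T(e_a e_b^\top) \right\|_F^2 \le 2 \left( \rho^{-1} - 1 \right) \frac{2n}{K_{\min}^2} \left\| M \right\|_\infty^2 \le \frac{4n}{K_{\min}^2 \rho} \left\| M \right\|_\infty^2.$$
When $\rho \ge \frac{64 \beta n \log n}{3 K_{\min}^2 \epsilon_3^2}$ and $\epsilon_3 \le 1$, we apply the first inequality in the Bernstein inequality (7) with $n = 1$ to obtain
$$\mathbb{P}\left[ \left| \left( \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T M - \mathcal{P}_T M \right)_{a,b} \right| \ge \epsilon_3 \left\| M \right\|_\infty \right] \le 2 \exp\left( -\frac{3 \epsilon_3^2 \left\| M \right\|_\infty^2}{8 \cdot \frac{4n}{K_{\min}^2 \rho} \left\| M \right\|_\infty^2} \right) \le 2 n^{-2\beta}.$$
Applying the union bound then yields
$$\mathbb{P}\left[ \left\| \mathcal{P}_T \mathcal{R}_{\Omega_0} \mathcal{P}_T M - \mathcal{P}_T M \right\|_\infty \ge \epsilon_3 \left\| M \right\|_\infty \right] \le 2 n^{2 - 2\beta}.$$

The last lemma bounds the matrix infinity norm of $\mathcal{P}_T \mathcal{P}_\Omega(S)$ for a $\pm 1$ random matrix $S$.

Lemma 12 Suppose $\Omega \sim \mathrm{Ber}_0\!\left( \frac{2 p_0 \tau}{1 - p_0 + 2 p_0 \tau} \right)$ and $S \in \mathbb{R}^{n \times n}$ has i.i.d. symmetric $\pm 1$ entries. Under the assumption of Theorem 4, for some constant $C_0$, we have with high probability
$$\left\| \mathcal{P}_T \mathcal{P}_\Omega(S) \right\|_\infty \le C_0 \sqrt{\frac{p_0 n \log n}{K_{\min}^2}}.$$

Proof By the triangle inequality, we have
$$\left\| \mathcal{P}_T \mathcal{P}_\Omega(S) \right\|_\infty \le \left\| U U^\top \mathcal{P}_\Omega(S) \right\|_\infty + \left\| \mathcal{P}_\Omega(S) U U^\top \right\|_\infty + \left\| U U^\top \mathcal{P}_\Omega(S) U U^\top \right\|_\infty,$$
so it suffices to show that each of these three terms is bounded by $C \sqrt{\frac{p_0 n \log n}{K_{\min}^2}}$ w.h.p. for some constant $C$. Under the assumption on $\Omega$ and $S$ in the lemma statement, each pair of symmetric entries of $\mathcal{P}_\Omega(S)$ equals $+1$ and $-1$ each with probability $\rho := \frac{p_0 \tau}{1 - p_0 + 2 p_0 \tau}$, and $0$ otherwise; notice that $\rho \le \frac{p_0}{2}$ since $\tau \le \frac{1}{2}$. Let $s^{(i)\top}$ be the $i$-th row of $U U^\top$. From the structure of $U$, we know that for all $i$ and $j$,
$$\left| s^{(i)}_j \right| \le \frac{1}{K_{\min}},$$
and for all $i$,
$$\sum_{j=1}^{n} \left( s^{(i)}_j \right)^2 \le \frac{1}{K_{\min}}.$$
We now bound $\left\| U U^\top \mathcal{P}_\Omega(S) \right\|_\infty$. For simplicity, we focus on the $(1, 1)$ entry of $U U^\top \mathcal{P}_\Omega(S)$ and denote this random variable as $X$.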
We may write $X$ as $X = \sum_i s^{(1)}_i \left( \mathcal{P}_\Omega(S) \right)_{i,1}$, for which we have
$$\mathbb{E}\left[ s^{(1)}_i \left( \mathcal{P}_\Omega(S) \right)_{i,1} \right] = 0,$$
$$\left| s^{(1)}_i \left( \mathcal{P}_\Omega(S) \right)_{i,1} \right| \le \left| s^{(1)}_i \right| \le \frac{1}{K_{\min}}, \quad \text{a.s.}$$
$$\mathrm{Var}(X) = \sum_{i : (i, 1) \in \Omega} \left( s^{(1)}_i \right)^2 \cdot 2\rho \le \frac{p_0}{K_{\min}}.$$
Applying the standard Bernstein inequality then gives
$$\mathbb{P}\left[ |X| > C \sqrt{\frac{p_0 n \log n}{K_{\min}^2}} \right] \le 2 \exp\left( -\frac{C^2 \frac{p_0 n \log n}{K_{\min}^2}}{\frac{2 p_0}{K_{\min}} + \frac{2 C \sqrt{p_0 n \log n}}{3 K_{\min}^2}} \right).$$
Under the assumption of Theorem 4, the right hand side above is bounded by $2 n^{-12}$. It follows from the union bound that $\left\| U U^\top \mathcal{P}_\Omega(S) \right\|_\infty \le C \sqrt{\frac{p_0 n \log n}{K_{\min}^2}}$ w.h.p. Clearly, the same bound holds for $\left\| \mathcal{P}_\Omega(S) U U^\top \right\|_\infty$. Finally, let $K$ be the size of the cluster that node $j$ is in. Observe that due to the structure of $U U^\top$, we have
$$\left| \left( U U^\top \mathcal{P}_\Omega(S) U U^\top \right)_{i,j} \right| = \left| \sum_l \left( U U^\top \mathcal{P}_\Omega(S) \right)_{i,l} \left( U U^\top \right)_{l,j} \right| \le \frac{1}{K} \cdot K \cdot \left\| U U^\top \mathcal{P}_\Omega(S) \right\|_\infty,$$
which implies $\left\| U U^\top \mathcal{P}_\Omega(S) U U^\top \right\|_\infty \le \left\| U U^\top \mathcal{P}_\Omega(S) \right\|_\infty$. This completes the proof of the lemma.

Appendix B. Proof of Theorem 5

We use a standard information theoretical argument, which improves upon a related proof by Chaudhuri et al. (2012). Let $K$ be the size of the clusters (which are assumed to have equal size). For simplicity we assume $n/K$ is an integer. Let $\mathcal{F}$ be the set of all possible partitions of $n$ nodes into $n/K$ clusters of equal size $K$. Using Stirling's approximation, we have
$$M := |\mathcal{F}| = \frac{1}{(n/K)!} \binom{n}{K} \binom{n - K}{K} \cdots \binom{K}{K} \ge \left( \frac{n}{3K} \right)^{n \left( 1 - \frac{1}{K} \right)} \ge c_1^{\frac{1}{2} n},$$
which holds for $K = \Theta(n)$.

Suppose the clustering $Y$ is chosen uniformly at random from $\mathcal{F}$, and the graph $A$ is generated from $Y$ according to the planted partition model with partial observations, where we use $a_{ij} = ?$ for unobserved pairs. We use $\mathbb{P}_{A|Y}$ to denote the distribution of $A$ given $Y$. Let $\hat{Y}$ be any measurable function of the observation $A$.
A standard application of Fano's inequality and the convexity of the mutual information (Yang and Barron, 1999) gives
$$\sup_{Y \in \mathcal{F}} \mathbb{P}\left[ \hat{Y} \neq Y \mid Y \right] \ge 1 - \frac{M^{-2} \sum_{Y^{(1)}, Y^{(2)} \in \mathcal{F}} D\left( \mathbb{P}_{A|Y^{(1)}} \,\|\, \mathbb{P}_{A|Y^{(2)}} \right) + \log 2}{\log M}, \tag{8}$$
where $D(\cdot \| \cdot)$ denotes the KL-divergence. We now upper bound this divergence. Given $Y^{(l)}$, $l = 1, 2$, the $a_{i,j}$'s are independent of each other, so we have
$$D\left( \mathbb{P}_{A|Y^{(1)}} \,\|\, \mathbb{P}_{A|Y^{(2)}} \right) = \sum_{i,j} D\left( \mathbb{P}_{a_{i,j}|Y^{(1)}} \,\|\, \mathbb{P}_{a_{i,j}|Y^{(2)}} \right).$$
For each pair $(i, j)$, the KL-divergence is zero if $y^{(1)}_{i,j} = y^{(2)}_{i,j}$, and otherwise satisfies
$$D\left( \mathbb{P}_{a_{i,j}|Y^{(1)}} \,\|\, \mathbb{P}_{a_{i,j}|Y^{(2)}} \right) \le p_0 (1 - \tau) \log \frac{p_0 (1 - \tau)}{p_0 \tau} + p_0 \tau \log \frac{p_0 \tau}{p_0 (1 - \tau)} + (1 - p_0) \log \frac{1 - p_0}{1 - p_0}$$
$$= p_0 (1 - 2\tau) \log \frac{1 - \tau}{\tau} \le p_0 (1 - 2\tau) \left( \frac{1 - \tau}{\tau} - 1 \right) \le c_2\, p_0 (1 - 2\tau)^2,$$
where $c_2 > 0$ is a universal constant and the last inequality holds under the assumption $\tau > 1/100$. Let $N$ be the number of pairs $(i, j)$ such that $y^{(1)}_{i,j} \neq y^{(2)}_{i,j}$. When $K = \Theta(n)$, we have
$$N \le \left| \{ (i, j) : y^{(1)}_{i,j} = 1 \} \cup \{ (i, j) : y^{(2)}_{i,j} = 1 \} \right| \le n^2.$$
It follows that
$$D\left( \mathbb{P}_{A|Y^{(1)}} \,\|\, \mathbb{P}_{A|Y^{(2)}} \right) \le N \cdot c_2\, p_0 (1 - 2\tau)^2 \le c_2 n^2 p_0 (1 - 2\tau)^2.$$
Combining pieces, for the left hand side of (8) to be less than $1/4$, we must have $p_0 (1 - 2\tau)^2 \ge C \frac{1}{n}$.

References

N. Alon, M. Krivelevich, and B. Sudakov. Finding a large hidden clique in a random graph. In Proceedings of the 9th Annual ACM-SIAM Symposium on Discrete Algorithms, pages 457–466, 1998.

B. Ames and S. Vavasis. Nuclear norm minimization for the planted clique and biclique problems. Mathematical Programming, 129(1):69–89, 2011.

M. F. Balcan and P. Gupta. Robust hierarchical clustering. In Proceedings of the Conference on Learning Theory (COLT), 2010.

N. Bansal, A. Blum, and S. Chawla. Correlation clustering. In Proceedings of the 43rd Symposium on Foundations of Computer Science, 2002.

B. Bollobás and A. D. Scott. Max cut for random graphs with a planted partition. Combinatorics, Probability and Computing, 13(4-5):451–474, 2004.

R. B. Boppana. Eigenvalues and graph bisection: An average-case analysis. In Proceedings of the 28th Annual Symposium on Foundations of Computer Science, pages 280–285, 1987.

E. Candès and B. Recht. Exact matrix completion via convex optimization. Foundations of Computational Mathematics, 9(6):717–772, 2009.

E. Candès, X. Li, Y. Ma, and J. Wright. Robust principal component analysis? Journal of the ACM, 58(3):11, 2011.

T. Carson and R. Impagliazzo. Hill-climbing finds random planted bisections. In Proceedings of the 12th Annual ACM-SIAM Symposium on Discrete Algorithms, pages 903–909, 2001.

V. Chandrasekaran, S. Sanghavi, P. Parrilo, and A. Willsky. Rank-sparsity incoherence for matrix decomposition. SIAM Journal on Optimization, 21(2):572–596, 2011.

M. Charikar, V. Guruswami, and A. Wirth. Clustering with qualitative information. In Proceedings of the 44th Annual IEEE Symposium on Foundations of Computer Science, pages 524–533, 2003.

K. Chaudhuri, F. Chung, and A. Tsiatas. Spectral clustering of graphs with general degrees in the extended planted partition model. Journal of Machine Learning Research, 2012:1–23, 2012.

Y. Chen, A. Jalali, S. Sanghavi, and C. Caramanis. Low-rank matrix recovery from errors and erasures. IEEE Transactions on Information Theory, 59(7):4324–4337, 2013.

A. Condon and R. M. Karp. Algorithms for graph partitioning on the planted partition model. Random Structures and Algorithms, 18(2):116–140, 2001.

A. Decelle, F. Krzakala, C. Moore, and L. Zdeborová. Asymptotic analysis of the stochastic block model for modular networks and its algorithmic applications. Physical Review E, 84(6):066106, 2011.

E. D. Demaine and N. Immorlica. Correlation clustering with partial information. Approximation, Randomization, and Combinatorial Optimization: Algorithms and Techniques, pages 71–80, 2003.

E. D. Demaine, D. Emanuel, A. Fiat, and N. Immorlica. Correlation clustering in general weighted graphs. Theoretical Computer Science, 361(2):172–187, 2006.

D. Emmanuel and A. Fiat. Correlation clustering minimizing disagreements on arbitrary weighted graphs. In Proceedings of the 11th Annual European Symposium on Algorithms, pages 208–220, 2003.

B. Eriksson, G. Dasarathy, A. Singh, and R. Nowak. Active clustering: Robust and efficient hierarchical clustering using adaptively selected similarities. arXiv preprint arXiv:1102.3887, 2011.

M. Ester, H. Kriegel, and X. Xu. A database interface for clustering in large spatial databases. In Proceedings of the International Conference on Knowledge Discovery and Data Mining, pages 94–99, 1995.

M. Fazel. Matrix Rank Minimization with Applications. PhD thesis, Stanford University, 2002.

U. Feige and J. Kilian. Heuristics for semirandom graph problems. Journal of Computer and System Sciences, 63(4):639–671, 2001.

J. Giesen and D. Mitsche. Reconstructing many partitions using spectral techniques. In Fundamentals of Computation Theory, pages 433–444. Springer, 2005.

D. Gross. Recovering low-rank matrices from few coefficients in any basis. IEEE Transactions on Information Theory, 57(3):1548–1566, 2011.

P. W. Holland, K. B. Laskey, and S. Leinhardt. Stochastic blockmodels: Some first steps. Social Networks, 5(2):109–137, 1983.

D. Hsu, S. M. Kakade, and T. Zhang. Robust matrix decomposition with sparse corruptions. IEEE Transactions on Information Theory, 57(11):7221–7234, 2011.

B. Hunter and T. Strohmer. Spectral clustering with compressed, incomplete and inaccurate measurements. Available at https://www.math.ucdavis.edu/~strohmer/papers/2010/SpectralClustering.pdf, 2010.

M. Jerrum and G. B. Sorkin. The Metropolis algorithm for graph bisection. Discrete Applied Mathematics, 82(1-3):155–175, 1998.

B. W. Kernighan and S. Lin. An efficient heuristic procedure for partitioning graphs. Bell System Technical Journal, 49(2):291–307, 1970.

A. Krishnamurthy, S. Balakrishnan, M. Xu, and A. Singh. Efficient active algorithms for hierarchical clustering. In Proceedings of the 29th International Conference on Machine Learning, pages 887–894, 2012.

X. Li. Compressed sensing and matrix completion with constant proportion of corruptions. Constructive Approximation, 37(1):73–99, 2013.

Z. Lin, M. Chen, L. Wu, and Y. Ma. The Augmented Lagrange Multiplier Method for Exact Recovery of Corrupted Low-Rank Matrices. UIUC Technical Report UILU-ENG-09-2215, 2009.

C. Mathieu and W. Schudy. Correlation clustering with noisy input. In Proceedings of the 21st Annual ACM-SIAM Symposium on Discrete Algorithms, pages 712–728, 2010.

F. McSherry. Spectral partitioning of random graphs. In Proceedings of the 42nd IEEE Symposium on Foundations of Computer Science, pages 529–537, 2001.

N. Mishra, I. Stanton, R. Schreiber, and R. E. Tarjan. Clustering social networks. In Algorithms and Models for Web-Graph, pages 56–67. Springer, 2007.

S. Oymak and B. Hassibi. Finding dense clusters via low rank + sparse decomposition. Available on arXiv:1104.5186v1, 2011.

K. Rohe, S. Chatterjee, and B. Yu. Spectral clustering and the high-dimensional stochastic blockmodel. The Annals of Statistics, 39(4):1878–1915, 2011.

O. Shamir and N. Tishby. Spectral clustering on a budget. In Proceedings of the 14th International Conference on Artificial Intelligence and Statistics, pages 661–669, 2011.

R. Shamir and D. Tsur. Improved algorithms for the random cluster graph model. Random Structures & Algorithms, 31(4):418–449, 2007.

C. Swamy. Correlation clustering: maximizing agreements via semidefinite programming. In Proceedings of the 15th Annual ACM-SIAM Symposium on Discrete Algorithms, 2004.

J. A. Tropp. User-friendly tail bounds for sums of random matrices. Foundations of Computational Mathematics, 12(4):389–434, 2012.

R. Vershynin. Introduction to the non-asymptotic analysis of random matrices. arXiv preprint arXiv:1011.3027, 2010.

K. Voevodski, M. F. Balcan, H. Roglin, S. H. Teng, and Y. Xia. Efficient clustering with limited distance information. arXiv preprint arXiv:1009.5168, 2010.

U. von Luxburg. A tutorial on spectral clustering. Statistics and Computing, 17(4):395–416, 2007.

Yahoo!-Inc. Graph partitioning. Available at http://research.yahoo.com/project/2368, 2009.

Y. Yang and A. Barron. Information-theoretic determination of minimax rates of convergence. The Annals of Statistics, 27(5):1564–1599, 1999.
