Multiplicative random walk Metropolis-Hastings on the real line

In this article we propose multiplication based random walk Metropolis Hastings (MH) algorithm on the real line. We call it the random dive MH (RDMH) algorithm. This algorithm, even if simple to apply, was not studied earlier in Markov chain Monte Ca…

Authors: Somak Dutta

Multiplicativ e random w alk Metrop olis-Hastings on the 1 real line 2 Somak Dutta Univ ersity of Chicago, IL, USA. 3 Abstract 4 In this article w e prop ose m ultiplication based random walk Metropolis Hastings 5 (MH) algorithm on the real line. W e call it the random dive MH (RDMH) algorithm. 6 This algorithm, ev en if simple to apply , was not studied earlier in Marko v chain Mon te 7 Carlo literature. One should not confuse RDMH with R WMH. It is sho wn that they 8 are different, conceptually , mathematically and op erationally . The kernel asso ciated 9 with theRDMH algorithm is sho wn to ha ve standard prop erties lik e irreducibilit y , ap e- 10 rio dicit y and Harris recurrence under some mild assumptions. These ensure basic 11 con vergence (ergo dicit y) of the k ernel. F urther the kernel is sho wn to b e geometric 12 ergo dic for a large class of target densities on R . This class even con tains realistic 13 target densities for whic h random walk or Langevin MH are not geometrically ergo dic. 14 Three sim ulation studies are giv en to demonstrate the mixing prop ert y and sup eriorit y 15 of RDMH to standard MH algorithms on real line. A share-price return data is also 16 analyzed and the results are compared with those a v ailable in the literature. 17 Key words : Marko v chain Monte Carlo; Metrop olis-Hastings algorithm; Random walk 18 algorithm; Langevin algorithm; Multiplicativ e random walk; Geometric ergo dicit y; Thic k 19 tailed density; Share-price return. 20 AMS classification n umber Primary : 65C05, 65C40. Se c ondary : 60J10. 21 1 Random dive MH 2 1 In tro duction 22 Supp ose π is a density on a state-space X with resp ect to some dominating measure λ . Most 23 often the state-space is a subset of the Euclidean space and λ is the Leb esgue measure. In 24 this article w e will alwa ys assume λ to b e the Leb esgue measure. Statisticians’ main aim 25 is to study the c haracteristics of the densit y π . Sometimes (say , in Bay esian inference) π 26 ma y b e a complicated (p ossibly unnormalized) densit y whic h is not analytically tractable. 27 So, to study the characteristics of π , statisticians try to draw a sample from π . But then 28 also there ma y not exist an y effectiv e simulation pro cedure to simulate from π . Th us the 29 goal is shifted to draw an approximate sample from π . Marko v chain Monte Carlo (MCMC) 30 pro vides a metho d for doing this. The MCMC metho ds, quite famous for their effectiveness 31 in dra wing an approximate sample from a target densit y are widely used. One of the most 32 famous MCMC algorithms is the Metrop olis-Hastings (MH) algorithm ( Metrop olis et al. , 33 1953 ; Hastings , 1970 ). Giv en a curren t state x ∈ X the MH algorithm prop oses a new 34 state y from a pr op osal kernel density q ( x → y ) on X , and accepts it with the acceptance 35 probabilit y 36 α ( x → y ) = min π ( y ) q ( y → x ) π ( x ) q ( x → y ) , 1 (1.1) 37 The corresp onding MH k ernel has stationary distribution π ( · ). 38 The random w alk MH (R WMH) has the prop osal k ernel q ( x → y ) = q ( | x − y | ). W e 39 notice that generation a p oin t from suc h a density is same as generating an from q ( ) and 40 then setting y = x + . Other algorithms like Langevin MH (LMH), has prop osal kernel 41 N ( x + ( σ 2 / 2) ∇ log π ( x ) , σ 2 ) 42 All of the aforementioned algorithms hav e certain disadv antages. F or example, b oth the 43 R WMH and LMH ha ve slow mixing rates in certain cases. The acceptance rate for R WMH 44 is high if q ( · ) is concentrated around zero (i.e. smal l step size ) but then a long c hain is 45 required to explore a substan tial part of the state space. If a diffused proposal is used then 46 the acceptance rate drops. F or a m ulti–mo dal target (which is not kno wn a priori in most 47 cases) both the R WMH and the LMH chain may remain stuc k at one or few of the mo des and 48 ma y still pass con v ergence diagnostics. Obviously any inference based on suc h samples will 49 b e incorrect. Also, an imp ortant prop ert y like geometric ergo dicity which are sufficient for 50 CL T type results of ergo dic a verages are either not satisfied by these algorithms (see Rob erts , 51 1999 , for examples) or some strong assumption on π ( · ) is needed. F or example geometric 52 Random dive MH 3 ergo dicit y to hold for R WMH it is necessary (and sufficien t) that the target density is lo g- 53 c onc ave in the tail ( Mengersen and Tw eedie , 1996 ). A density π con tinuous and p ositive on 54 R is log-concav e in the tail if there exists an α > 0 and some x 1 > 0 such that 55 y ≥ x ≥ x 1 = ⇒ log π ( x ) − log π ( y ) ≥ α ( y − x ) y ≤ x ≤ − x 1 = ⇒ log π ( x ) − log π ( y ) ≥ α ( x − y ) . (1.2) 56 and similarly LMH is also geometrically ergo dic under a strong assumption giv en in Theorem 57 4.1 of Rob erts and Tweedie ( 1996 ). These conditions are not satisfied for a large class of 58 densities (e.g. the densities with thick-tails). 59 Th us a MH metho d whic h allo ws the proposed state to b e far a wa y from the curren t state 60 and y et has go o d acceptance rate will b e of muc h use in the statistical computing problems. 61 It is ev en b etter if the algorithm is geometrically ergo dic for a class of densities m uch larger 62 than the classes for whic h this property is enjo yed b y the standard algorithms. In this article 63 w e propose a new MH algorithm based on multiplying a random quantit y with the states. 64 Ev en if the algorithm app ears simple we found that it has excellent conv ergence and mixing 65 prop erties. It can explore the state space quite faster than the standard MCMC algorithms 66 and has geometric ergo dicit y prop erty for a huge class of target densities, for which the 67 standard algorithms fails to be geometric ergo dic. The main reason for this is b ecause the 68 div es can b e made large or small eac h with significan t probabilities. If the random multiplier 69 is close to one, then the prop osed p oint will b e close to the current state and con v ersely . In 70 R WMH, ho wev er, this prop osal cannot b e controlled easily . If the step size is c hosen large 71 then most of the prop osed points would b e far aw ay from the curren t states and if the step 72 size is chosen small then most of the prop osed p oints would b e very close to the current 73 states. 74 There is obviously one issue with this algorithm – the origin is an absorbing state. How- 75 ev er, this is not vital since in ma jor practical problems the v ariables are con tinuous and the 76 origin has no mass. Th us w e can safely remo ve it from the state space without disturbing 77 the conv ergence. W e emphasize that the RDMH algorithm exploits the multiplicativ e group 78 structure of R − { 0 } . The algorithm fails when the origin has p ositiv e probabilit y attac hed 79 to it (for example, when the state-space is the set of in tegers). The R WMH agorithm still 80 w ork in that case. In other problems suc h as Bay esian testing with point n ull hypothesis 81 and tw o-sided alternative, where the target distribution has a con tin uous part and also has 82 Random dive MH 4 a mass at zero, both RDMH and R WMH fails. 83 MCMC techniques b eing extremely p opular, the literature is ric h with algorithms – 84 sp ecialized or generic in nature. Man y of them are sp ecial cases of MH algorithms with 85 differen t forms of prop osal densities. W e refer to Liu ( 2008 ) and Robert and Casella ( 2004 ) 86 for b o ok length discussions. 87 The structure of the article is as follo ws. W e describ e the new algorithm in section 2 . As 88 claimed already , the algorithm is new in the sense that it is completely differen t in concept 89 and in structure from the a v ailable multiplicativ e random walk MH. This is discussed in 90 details in section 3 . W e discuss its con v ergence prop erties in section 4 . In section 5.1 we 91 compare RDMH with the standard algorithms. Sp ecifically we consider a bimo dal target 92 and see how RDMH explores the mo des while R WMH cannot. W e also consider an extreme 93 mixture example where one of the comp onents has very lo w disp ersion compared to other. 94 W e then consider a thic k tailed target for whic h R WMH is not geometric ergo dic while 95 RDMH is . In this example we see how asymptotic normalit y holds for the ergodic av erages 96 using RDMH while it fails to hold for the R WMH algorithm.In Section 5.3 we analyze a 97 share price return data. T ypically in such problems the p osterior of one or more parameters 98 are thick-tailed and asymmetric. The data and mo de we consider are analyzed in F ernandez 99 and Steel ( 1998 ) using Gibbs sampler. W e found that the Gibbs sampler failed to explore 100 the tail of the p osterior of the lo cation parameter while RDMH sampler did that with ease. 101 W e conclude this article with an outlo ok on further works in Section 6 . 102 2 Random div e MH 103 Supp ose that π is a target density function, probably unnormalized, on R . A t each iteration 104 the algorithm prop oses a state y from the current state x by multiplying a random quan tit y 105 with x (i.e. y = x ). The proposal is accepted with some probabilit y dep ending on x and y . 106 W e can classify the prop osals into tw o classes dep ending on whether | | ≤ 1 or > 1. W e call 107 the case where | | ≤ 1 an inner dive and the case where | | > 1 an outer dive . Notice that an 108 outer dive can also b e obtained by dividing the state x by an with | | < 1. Hence w e can 109 restrict the set from whic h the random m ultiplier is dra wn to the set Y = ( − 1 , 1) − { 0 } . 110 Ob viously the p oint zero is not considered so that the chain do es not get stuc k at zero. A t 111 Random dive MH 5 eac h iteration w e can take an inner dive or an outer dive at random. W e call the chain 112 symmetric if the probability for an inner div e is half and asymmetric otherwise. In this 113 article we shall only consider the symmetric RDMH only . So with a prop osal densit y g ( ) 114 for on Y , the algorithm is giv en in Algorithm 2.1 . 115 Algorithm 2.1. R andom dive MH on R 116 • Input: Initial value x 0 6 = 0 , and numb er of iterations N . 117 • Fo r t = 0 , . . . , N − 1 118 1. Generate ∼ g ( · ) and u ∼ U (0 , 1) indep endently 119 2. If 0 < u < 1 / 2 , set 120 x 0 = x t and α ( x t , ) = min π ( x 0 ) π ( x t ) | | , 1 121 3. Else set 122 x 0 = x t / and α ( x t , ) = min π ( x 0 ) π ( x t ) 1 | | , 1 123 4. Set 124 x t +1 = x 0 with probabilit y α ( x t , ) x t with probabilit y 1 − α ( x t , ) 125 • End for 126 Notice that RDMH is an MH algorithm with 127 q ( x → y ) = (1 / 2) g ( y /x ) 1 | x | I ( | y | < | x | ) + (1 / 2) g ( x/y ) | x | y 2 I ( | y | > | x | ) (2.1) 128 Hence it follows that π ( · ) is indeed stationary for the c hain. Ho wev er other properties do 129 not follow easily – the assumptions in general results discussed in Rob erts and Rosenthal 130 ( 2004 ) do not hold in this case. W e pro ve them separately in the following section. Notice 131 also that the terms | | or its inv erse in the acceptance ratios corresp ond to the Jacobian of 132 the transformations : x 7→ x and x 7→ x/ resp ectively . The acceptance ratios are free from 133 the prop osal density g as in R WMH. 134 F or each x 6 = 0 w e define the inner and outer acceptance regions resp ectively as, 135 a ( x ) = { ∈ Y : π ( x ) | | /π ( x ) ≥ 1 } 136 A ( x ) = { ∈ Y : π ( x/ ) / ( π ( x ) | | ) ≥ 1 } 137 Random dive MH 6 Let r ( x ) = Y − a ( x ) and R ( x ) = Y − A ( x ) b e the p otential inner and outer rejection regions 138 resp ectiv ely . W e see that for each x 6 = 0, the rejection probability is giv en by 139 ρ ( x ) = Z R ∗ (1 − α ( x → y )) q ( x → y ) dy 140 = 1 2 Z | y | < | x | (1 − α ( x → y )) 1 | x | g y x dy + 1 2 Z | y |≥| x | (1 − α ( x → y )) | x | y 2 g x y dy . 141 Substituting = y /x in the first integral and = x/y in the second we get, 142 ρ ( x ) = 1 2 Z | | < 1 (1 − α ( x → x )) g ( ) d + 1 2 Z | | < 1 (1 − α ( x → x/ )) g ( ) d 143 = 1 2 Z r ( x ) 1 − π ( x ) | | π ( x ) g ( ) d + 1 2 Z R ( x ) 1 − π ( x/ ) π ( x ) | | g ( ) d 144 Ob viously , in an y MH algorithm the prop osal density pla ys an imp ortan t role in terms of 145 con vergence. In this case also a go o d c hoice of g is needed for faster conv ergence. Ho wev er 146 as w e shall see in Theorem 3 that the c hain is geometric ergodic under an extremely weak 147 restriction on g . Hence the discussion on the c hoices of g is p ostp oned till the end of Section 148 4 . 149 It is quite straigh tforw ard to extend the algorithm to higher dimensions. The v ariables 150 ma y b e updated either sequen tially or join tly . While updating join tly at eac h iteration, outer 151 div es should b e applied to a random num b er of comp onen ts (whic h ma y b e zero) and inner 152 div es to the rest. The Jacobian terms in the acceptance ratios will then b e ratios of pro ducts 153 of ’s. The algorithm is given in Algorithm 6.1 of Section 6 . 154 3 Dissimilarit y from R WMH 155 It is already seen that the RDMH algorithm is a special case of MH class of algorithms. 156 Ho wev er, it is not in any case similar to the random walk t yp e algorithms. The term 157 multiplic ative r andom walk (also kno wn as the log-random w alk MH) is not new in the 158 statistics literature. How ev er, it has been developed only when the state space is the positive 159 half of the real line as X t +1 = X t exp( N t ) where N t are i.i.d following some distributions on 160 the real line (see, Dellap ortas and Rob erts, 2003, pp. 18 for details and Jasra et. al. , 161 2005, for application). Obviously this reduces to the simple R WMH on R by observing that 162 Random dive MH 7 log X t +1 = log X t + N t . It is useless for problems ha ving entire real line as support because 163 a part of the state space is nev er visited (i.e the c hain b ecomes r e ducible ), that is, p ositiv e 164 (negativ e) initial v alues restrict the chain to take only positive (resp ectiv ely negativ e) v alues. 165 The RDMH, how ever, is dev elop ed when the state sap ce is entire real line. The term 166 exp( N t ) ab ov e can not b e equal to t since t can tak e b oth p ositive and negativ e v alues. 167 Hence the RDMH algorithm cannot b e considered as a sp ecial case of log-random walk MH. 168 F or distributions with (0 , ∞ ) as supp ort the obvious wa y to emply RDMH is to reparametrize 169 b y taking logarithm so that the supp ort b ecomes R . 170 4 Con v ergence Prop erties 171 Let us denote the k ernel the of the RDMH c hain b y K ( x → y ). Imp ortan t properties lik e 172 irreducibilit y and ap erio dicity (see Roberts and Rosen thal , 2004 , for definitions) are satisfied 173 b y the RDMH under minor assumption: 174 Theorem 1. If for al l 0 < δ < 1 , inf δ < | | < 1 g ( ) > 0 and π ( · ) is b ounde d and p ositive on every 175 c omp act subset of R , then the chain is λ -irr e ducible (and henc e π -irr e ducible) and ap erio dic. 176 Pr o of. Supp ose x 6 = 0 and A ∈ B ( R ) has λ ( A ) > 0. Then there exists a compact set 177 C = { u : r ≤ | u | ≤ R } , such that λ ( A ∩ C ) > 0 where 0 < r < | x | < R < ∞ . Define, 178 I x = [ −| x | , | x | ] A ∗ = A ∩ C m = inf y ∈ C π ( y ) and M = sup y ∈ C π ( y ) 179 and a = inf r/R < | | < 1 g ( ). The kernel K of the chain satisfies, 180 K ( x, A ) ≥ Z A q ( y | x ) min π ( y ) q ( y → x ) π ( x ) q ( x → y ) , 1 dy 181 ≥ Z A ∗ ∩ I x 1 2 | x | g ( y /x ) min π ( y ) π ( x ) y x , 1 dy 182 + Z A ∗ ∩ I c x | x | 2 y 2 g ( x/y ) min π ( y ) π ( x ) y x , 1 dy 183 ≥ a 2 R min n mr M R , 1 o λ ( A ∗ ∩ I x ) + r a 2 R 2 min n mr M R , 1 o λ ( A ∗ ∩ I c x ) 184 ≥ c λ ( A ∩ C ) > 0 185 where c = min n a 2 R , r a 2 R 2 o × min n mr M R , 1 o . Random dive MH 8 Th us we see that, the c hain is λ - irr e ducible . F urther since each measurable set with p ositive 186 Leb esgue measure can be accessed in a single step implies the c hain is ap erio dic . 187 Th us the RDMH chain is er go dic and hence by Theorem 4 of Rob erts and Rosen thal 188 ( 2004 ), 189 || K n ( x, · ) − π ( · ) || T V → 0 as n → ∞ (4.1) 190 for π − almost ev ery x ∈ R . Where , || ν 1 − ν 2 || T V is the well-kno wn total variation distanc e 191 b et w een t w o probabilit y measures ν 1 and ν 2 , defined as 192 || ν 1 − ν 2 || T V = sup A | ν 1 ( A ) − ν 2 ( A ) | (4.2) 193 F or further prop erties of the total v ariation distance see Meyn and Tweedie ( 1993 ); Rob erts 194 and Rosenthal ( 2004 ); Rob ert and Casella ( 2004 ) or Liu ( 2008 ). A sufficient condition for 195 ( 4.1 ) to hold for all x rather than π –almost ev ery x is the Harris r e curr enc e of the chain. A 196 Mark ov chain ( X n ) is called Harris recurrent if for ev ery x in the state space and for ev ery 197 set B ∈ B ( R ) such that π ( B ) > 0, 198 P ( ∃ n : X n ∈ B | X 0 = x ] = 1 . 199 Ob viously the p oint zer o creates a problem in our RDMH algorithm. How ev er, if we remov e 200 the single p oint zero from the state space, then since the chain is already π -irreducible we 201 use Lemma 7.3 of Robert and Casella ( 2004 ) to conclude that 202 Corollary 1. Under the assumptions of The or em 1 , the RDMH chain is Harris r e curr ent 203 on R ∗ = R − { 0 } . 204 Th us ( 4.1 ) holds for an y nonzero x , i.e. an y nonzero starting v alue ensures conv ergence 205 of the chain. 206 A subset C of X is called smal l if there exists a p ositive integer n , a num b er δ > 0 and 207 a nontrivial measure ν such that 208 K n ( x, A ) ≥ δ ν ( A ) ∀ x ∈ C , ∀ A ∈ B ( X ) (4.3) 209 W e will c haracterize the small sets for RDMH. In most of the MH algorithms an y b ounded 210 subset of the state space is small. Ho wev er this is not the case with RDMH. W e first state 211 a result for RDMH k ernel useful in c haracterizing the small sets. 212 Random dive MH 9 Lemma 1. Supp ose ( x n ) is a se quenc e of p ositive (ne gative) numb ers de cr e asing (r esp. in- 213 cr e asing) to zer o, then K ( x n , · ) w − → δ 0 , wher e δ 0 is the distribution de gener ate d at zer o. 214 Pr o of. Without loss we assume ( x n ) ↓ 0. Supp ose y < 0. Then K ( x n , ( −∞ , y ]) ≤ 1 2 Z 0 x n /y g ( ) d → 0 . Also for y > 0, for sufficien tly large n , K ( x n , ( y , ∞ ) ≤ 1 2 Z x n /y 0 g ( ) d → 0 , so that K ( x n , ( −∞ , y ]) → 1. This completes the pro of. 215 Theorem 2. Supp ose the c onditions in The or em 1 holds. Then a set E is smal l if and only 216 if its closur e, ¯ E is a c omp act subset of R ∗ = R − { 0 } . 217 Pr o of. Supp ose first that ¯ E is compact subset of R ∗ . Then r := 1 2 × inf {| x | : x ∈ E } > 0 and R = 2 × sup {| x | : x ∈ E } < ∞ . So letting C = { u : r ≤ | u | ≤ R } , it is seen from the proof of theorem 1 that, 218 K ( x, A ) ≥ c λ ( A ∩ C ) , ∀ x ∈ E , ∀ A ∈ B ( R ) 219 Since λ C ( A ) := λ ( A ∩ C ) is a nonzero measure on ( R , B ( R )), this shows that E is small. 220 No w supp ose that E is small. Clearly ±∞ cannot b e limit p oin ts of E since for an y fixed 221 n and b ounded A , K n ( x, A ) → 0 as | x | → ∞ . W e shall also show that zero cannot b e a 222 limit p oint of E . This will show that ¯ E is compact subset of R ∗ . So supp ose on the contrary 223 that zero is a limit p oin t of E . Then there exists a sequence ( x n ) in E whic h monotonically 224 con verges to zero. Hence for any m ∈ N and any measurable set A , by Lemma 1 , 225 K m ( x n , A ) = Z R ∗ K m − 1 ( y , A ) K ( x n , dy ) − → I (0 ∈ A ) as n → ∞ . 226 So that ( 4.3 ) cannot hold for all x ∈ E and all A con tradicting the assumption that E is 227 small. 228 W e now turn to w ards geometric ergo dicity . An irreducible Marko v kernel K (irreducible 229 with resp ect to some σ − finite measure ν ) with in v ariant distribution π ( · ) is said to b e 230 geometric ergo dic if 231 sup A ∈B ( R ) | K n ( x, A ) − π ( A ) | ≤ M ( x ) ρ n , ∀ n ∈ N 232 Random dive MH 10 for some ρ < 1, where M ( x ) < ∞ , for π − a.e. x ∈ R . 233 Geometric ergodicit y is imp ortant in MCMC applications for the CL T of ergo dic a verages 234 ˆ h N = 1 N N X i =1 h ( X i ) , N ∈ N 235 of some function h ev aluated at eac h state of the Marko v c hain ( X i ). Corollary 2.1 of Roberts 236 and Rosenthal ( 1997 ) (based on w ork of Kipnis and V aradhan , 1986 ) states that if a Marko v 237 k ernel P is geometric ergo dic and reversible then for any function h on the state space such 238 that E π | h | 2 < ∞ 239 √ N ( ˆ h N − π ( h )) w − → N (0 , σ 2 h ) as N → ∞ 240 Suc h a CL T easily ma y not hold if the k ernel is not geometric ergo dic (see Rob erts , 1999 , 241 for examples) or Section 5.2 of this article. Geometric ergo dicity has multifarious usefulness 242 discussed in Jones and Hobert ( 2001 ) and Rob erts and Rosenthal ( 1998 ). 243 T o show that RDMH chain is geometrically ergo dic, w e put the following restriction on 244 π . 245 Assumption (A1). F or some 1 < p ≤ ∞ 246 lim | x |→∞ π ( x ) /π ( x ) = | | p (4.4) 247 where the notation | | ∞ should be interpreted as 0 for eac h ∈ Y . Notice that this is true 248 for most of the p osterior densities in Ba y esian literature where MCMC finds extremely high 249 applications. The condition (A1) giv en ab ov e is basically a regularly v arying t yp e restriction 250 on π . F urther discussion on regularly v arying functions can b e found in F eller ( 1971 , pp. 251 275–284). 252 Recall that for each x 6 = 0, the rejection probabilit y is 253 ρ ( x ) = 1 2 Z r ( x ) 1 − π ( x ) | | π ( x ) g ( ) d + 1 2 Z R ( x ) 1 − π ( x/ ) π ( x ) | | g ( ) d 254 W e now give a bound on ρ ( x ) in the follo wing lemma. 255 Lemma 2. Assume (A1). Then 256 ρ ( x ) → 1 2 Z Y (1 − | | p − 1 ) g ( ) d, as | x | → ∞ 257 ρ ( x ) → 1 2 Z Y (1 − | | ) g ( ) d, as x → 0 258 Random dive MH 11 Pr o of. Notice that as | x | → ∞ , r ( x ) → φ, R ( x ) → Y and as x → 0, R ( x ) → φ, r ( x ) → Y . 259 Hence the result follows from dominated conv ergence theorem. 260 No w w e state a helpful result without proof. 261 Lemma 3. Fix p > 1 . F or e ach ∈ Y and s ∈ (0 , 1) define 262 ϕ ( s, ) = | | s + | | 1 − s − | | 263 ψ p ( s, ) = | | ps + | | p − ps − 1 − | | p − 1 264 With ψ ∞ ( s, ) ≡ 0 . Then 265 (a) ϕ ( s, ) < 1 for al l ∈ Y and s ∈ (0 , 1) . 266 (b) ψ p ( s, ) < 1 for al l ∈ Y and 0 < s < 1 / 2 − 1 / (2 p ) . 267 As discussed in Theorem 15.0.1 of Meyn and Tw eedie ( 1993 ), the RDMH chain is geo- 268 metric ergodic if and only if for some small set E , some function V : R ∗ → [1 , ∞ ) whic h is 269 finite at least for one x , γ < 1 and some b E < ∞ , the ge ometric drift c ondition holds: 270 K V ( x ) ≤ γ V ( x ) + b E I ( x ∈ E ) , (4.5) 271 where K V ( x ) = R R ∗ K ( x → y ) V ( y ) dy . In our case, we know any compact set of R ∗ is small. 272 So if we can show for some contin uous V : R ∗ → [1 , ∞ ) which is b ounded on every compact 273 subset of R ∗ , the following conditions hold: 274 lim sup | x |→∞ K V ( x ) V ( x ) < 1 and lim sup x → 0 K V ( x ) V ( x ) < 1 (4.6) 275 then we can choose a num b er γ < 1 and a small set E = { x : r ≤ | x | ≤ R } for some 276 0 < r < R < ∞ , suc h that K V ( x ) < γ V ( x ) for all x / ∈ E . Also b E = sup x ∈ E K V ( x ) < ∞ 277 since V is b ounded on E . Hence w e see that ( 4.5 ) holds. 278 W e now state and prov e the most imp ortan t theorem of this section. 279 Theorem 3. Supp ose that c onditions in The or em 1 holds to gether with c ontinuity of π ( x ) 280 and (A1). F urther assume the fol lowing: for some s 0 ∈ (0 , 1) , 281 1 Z − 1 | | − s 0 g ( ) d < ∞ (4.7) 282 Then the chain is ge ometric al ly er go dic. 283 Random dive MH 12 Pr o of. In view of discussion preceding the statemen t of the theorem we only need to sho w 284 ( 4.6 ). Fix 0 < s < min { s 0 , 1 / 2 − 1 / (2 p ) } . Then b y ( 4.7 ) 285 1 Z − 1 | | − s g ( ) d < ∞ (4.8) 286 No w Notice that, for eac h x 6 = 0, and any function V : R ∗ → [1 , ∞ ) 287 K V ( x ) V ( x ) = 1 2 Z a ( x ) g ( ) V ( x ) V ( x ) d + 1 2 Z A ( x ) g ( ) V ( x/ ) V ( x ) d + 1 2 Z r ( x ) g ( ) π ( x ) π ( x ) | | V ( x ) V ( x ) d + 1 2 Z R ( x ) g ( ) π ( x/ ) π ( x ) | | V ( x/ ) V ( x ) d + ρ ( x ) (4.9) 288 Cho ose p ositive constants c 1 , c ∗ 2 and c ∗∗ 2 suc h that the function 289 V ( x ) = c 1 π ( x ) − s I ( | x | > 1) + c ∗ 2 x − s I (0 < x ≤ 1) + c ∗∗ 2 ( − x ) − s I ( − 1 ≤ x < 0) 290 is contin uous 1 and V ( x ) ≥ 1 for all x 6 = 0 and let c 2 = min { c ∗ 2 , c ∗∗ 2 } and C 2 = max { c ∗ 2 , c ∗∗ 2 } . 291 W e now work with this V to show ( 4.6 ) 292 Case I : Supp ose | x | → ∞ . Then assumption (A1) implies that A ( x ) → φ and r ( x ) → φ . 293 Notice in this case 294 V ( x/ ) V ( x ) = π ( x ) π ( x/ ) s ≤ | | − s ∀ ∈ A ( x ) 295 Hence by ( 4.8 ) the second in tegral in ( 4.9 ) conv erges to zero. Also, since ∀ ∈ r ( x ) 296 π ( x ) π ( x ) | | V ( x ) V ( x ) ≤ max ( π ( x ) π ( x ) 1 − s | | , π ( x ) π ( x ) 1 − s | || x | − s | | − s c 1 c 2 ) ≤ max | | s , M s c 1 c 2 , 297 where M = sup π ( x ), the third integral in ( 4.9 ) also conv erges to zero. F urther since 298 V ( x ) /V ( x ) → | | ps it follows from ( 4.9 ) and Lemma 2 that 299 lim sup | x |→∞ K V ( x ) V ( x ) ≤ (1 / 2) 1 Z − 1 | | ps g ( ) d + (1 / 2) 1 Z − 1 | | p − ps − 1 g ( ) d 300 +(1 / 2) 1 Z − 1 (1 − | | p − 1 ) g ( ) d 301 = 1 2 1 Z − 1 ψ p ( s, ) g ( ) d + 1 2 302 < 1 by Lemma 3 303 1 The follo wing c hoices do the job: sup | x | > 1 π ( x ) s < c 1 < ∞ , c ∗ 2 = c 1 π (1) − s and c ∗∗ 2 = c 1 π ( − 1) − s Random dive MH 13 Case II : Supp ose no w that x → 0. In this case a ( x ) → φ and R ( x ) → φ . Also 304 V ( x ) /V ( x ) ≤ C 2 c 2 | | − s for all | x | < 1 implies that the first in tegral in ( 4.9 ) con verges to 305 zero by ( 4.8 ). Also ∀ | x | < 1 and ∀ ∈ R ( x ) 306 π ( x/ ) π ( x ) | | V ( x/ ) V ( x ) ≤ max | | s , π ( x/ ) 1 − s π ( x ) | x | s | | s − 1 C 2 c 1 ≤ max | | s , m − s C 2 c 1 , 307 where m = inf | x | < 1 π ( x ) > 0, so that the fourth in tegral in ( 4.9 ) conv erges to zero. Hence b y 308 con tinuit y of π ( · ) at zero, 309 lim sup x → 0 K V ( x ) V ( x ) ≤ 1 2 1 Z − 1 | | s g ( ) d + 1 2 1 Z − 1 | | 1 − s g ( ) d + 1 2 1 Z − 1 (1 − | | ) g ( ) d 310 = 1 2 1 Z − 1 ϕ ( s, ) g ( ) d + 1 2 311 < 1 by Lemma 3 312 This completes the pro of. 313 Remark: It is conjectured in Atc had ´ e and Perron ( 2007 ) that an MH chain is ge- 314 ometric ergo dic if the rejection probabilit y is b ounded a wa y from 1. They ha ve pro ved 315 the result with an additional assumption that the con tinuous part of the MH kernel, i.e. 316 α ( x → y ) q ( x → y ) dy whic h is an op erator on L 2 ( π ), is compact. Unfortunately this extra 317 assumption do es not hold for RDMH (along with most of the MH algorithms). Had the 318 conjecture b een prov ed, we could ha ve claimed readily that RDMH is geometrically ergo dic 319 b y using Lemma 2 . This would not require the extra assumption ( 4.7 ) on g . 320 The class of densities satisfying (A1) together with the assumptions of Theorem 1 and 321 con tinuit y is quite large. This class obviously includes the following classes: 322 1. The class of thick-tailed densities π ( x ) ∼ 1 p ( x ) m as | x | → ∞ where p ( x ) is a p olynomial 323 satisfying p ( x ) > 0 for all sufficiently large | x | . F or example, the t -densities fall in this 324 class. 325 2. The class of densities which are equally log-concav e in the tw o tails, i.e., for some 326 M > 0 and some α > 0, 327 | y | > | x | > M = ⇒ log π ( x ) − log π ( y ) ≥ α ( | y | − | x | ) (4.10) 328 Random dive MH 14 This is a stronger v ersion of ( 1.2 ) for ( 4.10 ) implies ( 1.2 ). Notice that for these densities, 329 p = ∞ in (A1). Examples of suc h densities are the normal densities and their mixtures, 330 double exp onential density etc. 331 3. The class of densities of the form h ( x ) exp( − κ m p | x − θ | ) where m > 1. 332 In some problems, ho w ever, the target π ( x ) is log-concav e in the tails but the rates at 333 whic h π ( x ) con v erges to zero are not same for the tw o tails. One example of suc h densities 334 is f ( x ) = exp( x − exp( x )). Notice that if Y follows the standard exponential distribution 335 E xp (1) then X = log Y has density f ( x ). It can b e seen that f ( x ) /f ( x ) → 0 holds for each 336 > 0, as | x | → ∞ and also for each < 0 and as x → ∞ . But f ( x ) /f ( x ) → ∞ if < 0 and 337 x → −∞ . W e assume for these kind of densities exactly one tail dominates, i.e. exactly one 338 the following is true for eac h ∈ ( − 1 , 0). 339 lim x →−∞ π ( x ) /π ( x ) → ∞ and lim x →∞ π ( x ) /π ( x ) → 0 (4.11) 340 lim x →−∞ π ( x ) /π ( x ) → 0 and lim x →∞ π ( x ) /π ( x ) → ∞ (4.12) 341 Notice that it is sufficient to w ork with ( 4.11 ) b ecause if ( 4.12 ) holds for a target π , then 342 π ( − x ) satisfies ( 4.11 ). F or these kind of densities (A1) do es not hold. How ever, the next 343 theorem assures that the RDMH chain is still geometric ergo dic. The pro of is along the line 344 of Theorem 3 and so we just presen t a sketc h. 345 Theorem 4. Supp ose π is c ontinuous and the assumptions in The or em 1 holds. Supp ose 346 further that π ( x ) satisfies ( 4.11 ) and that g satisfies the r e gularity c ondition ( 4.7 ) . Then the 347 RDMH chain is ge ometric er go dic. 348 Pr o of. Notice that in this case we hav e the follo wing as x → ∞ w e still ha ve a ( x ) → Y and 349 r ( x ) → φ . But 350 A ( x ) ∩ (0 , 1) → φ A ( x ) ∩ ( − 1 , 0) → ( − 1 , 0) 351 and 352 R ( x ) ∩ (0 , 1) → (0 , 1) R ( x ) ∩ ( − 1 , 0) → φ. 353 Th us in 4.9 (with the same choice of V as in theorem 3 ) we can further split the in tegrals 354 on in tersections of the domains with ( − 1 , 0) and (0 , 1). On each such domain either the 355 Random dive MH 15 in tegrand conv erges to zero or it is b ounded and the domain of the integral conv erges to the 356 empt y set. Hence 357 lim sup x →∞ K V ( x ) V ( x ) ≤ lim sup x →∞ ρ ( x ) < 1 / 2 358 since, 359 2 ρ ( x ) = Z r ( x ) ∩ (0 , 1) 1 − π ( x ) | | π ( x ) g ( ) d + Z r ( x ) ∩ ( − 1 , 0) 1 − π ( x ) | | π ( x ) g ( ) d 360 + Z R ( x ) ∩ (0 , 1) 1 − π ( x/ ) π ( x ) | | g ( ) d + Z R ( x ) ∩ ( − 1 , 0) 1 − π ( x/ ) π ( x ) | | g ( ) d 361 → 1 Z 0 g ( ) d < 1 as x → ∞ 362 Also as x → −∞ , it can b e seen that A ( x ) → φ and R ( x ) → Y hold but 363 a ( x ) ∩ (0 , 1) → (0 , 1) , a ( x ) ∩ ( − 1 , 0) → φ 364 and 365 r ( x ) ∩ (0 , 1) → φ r ( x ) ∩ ( − 1 , 0) → ( − 1 , 0) 366 Hence similarly , 367 lim sup x →∞ K V ( x ) V ( x ) ≤ lim sup x →∞ ρ ( x ) < 1 368 since in this case 369 2 ρ ( x ) = Z r ( x ) ∩ (0 , 1) 1 − π ( x ) | | π ( x ) g ( ) d + Z r ( x ) ∩ ( − 1 , 0) 1 − π ( x ) | | π ( x ) g ( ) d 370 + Z R ( x ) ∩ (0 , 1) 1 − π ( x/ ) π ( x ) | | g ( ) d + Z R ( x ) ∩ ( − 1 , 0) 1 − π ( x/ ) π ( x ) | | g ( ) d 371 → 2 0 Z − 1 g ( ) d + 1 Z 0 g ( ) d < 2 as x → −∞ 372 This verifies the first condition of ( 4.6 ). V erification of the second condition of ( 4.6 ) is 373 already done in c ase II of Theorem 3 . 374 W e now return to the choices of g . Let B ( x ; a, b ) denote the densit y of a Beta( a, b ) random 375 v ariable. A general class of prop osal densities satisfying ( 4.7 ) is then given by 376 g ( ) = γ B ( − ; a 1 , b 1 ) I ( − 1 < < 0) + (1 − γ ) B ( ; a 2 , b 2 ) I (0 < < 1) . 377 Random dive MH 16 for some 0 < γ < 1 and some positive n umbers a 1 , a 2 , b 1 , b 2 . Notice that a straigh tforward 378 c hoice of g is uniform distribution o ver (-1,1) whic h corresp onds to the case γ = 1 / 2 and 379 a 1 = a 2 = b 1 = b 2 = 1. This indeed allo ws for large div es but the acceptance rate ma y 380 drop. F urther if the simulated is very close to zero and a outer dive is taken, then the 381 prop osed state will hav e large magnitude and result in numerical instability . Sp ecially when 382 the p osterior is highly steep then such large dives are not sensible. W e shall nevertheless use 383 this choic e of g in the next section and sho w it works well. If how ev er, the target density is 384 steep then a go o d idea would b e to generate ’s close to 1. This can b e ac hiv ed b y 385 1. making γ small (e.g. γ ≤ 0 . 2) and 386 2. making a 2 high and b 2 small (e.g. a 2 ≥ 2 and 0 < b 2 ≤ 1). 387 5 Application 388 In this section w e consider t wo sim ulation studies. W e first consider a bimodal target densit y 389 and show ho w the RDMH algorithm explores the modes but the R WMH chain either gets 390 stuc k at the mo de (if the prop osal v ariance is mo derate) or explores the mo des at high v alue 391 of prop osal v ariance but has very low acceptance rate. In the next example w e consider 392 another simulation study on a thick tailed target. The R WMH and the LMH algorithms 393 are not geometrically ergo dic for this target under any kind of prop osal (thick-tailed or thin 394 tailed) and this has a serious effect when we try to construct a confidence set based on 395 asymptotic normalit y of ergo dic av erages – for the latter do es not hold in this case. How ever 396 the RDMH is still geometric ergo dic and a CL T holds for the ergo dic a v erages. 397 5.1 Exploring a m ultimo dal target 398 Example 1. Consider the mixture distribution 399 π ( x ) = 0 . 5 φ ( x ; 0 , 0 . 25) + 0 . 5 φ ( x ; 10 , 0 . 25) 400 where φ ( x ; µ, σ ) = exp ( − 0 . 5( x − µ ) 2 /σ 2 )) / ( σ √ 2 π ) is the normal densit y with mean µ and 401 v ariance σ 2 . 402 Clearly this is a bimo dal distribution with t wo separated mo des at x = 0 and x = 10. 403 W e compare the RDMH with the R WMH here. W e choose g , as the uniform distribution on 404 Random dive MH 17 Figure 5.1: Histograms and T rue density(solid curv e) based on 30,000 sample v alues out of 50,000 sample v alues (burn-in = 20,000) for the bimo dal example in section 5.1 (a) R WMH: τ = 2, initial v alue: x 0 = 0 acceptance rate = 29.916%, (b) R WMH: τ = 2, initial v alue: x 0 = 10 acceptance rate = 29.656%, (c) R WMH: τ = 5, initial v alue: x 0 = 10 acceptance rate = 14.31%, (d) RDMH, initial v alue: x 0 = − 2, acceptance rate = 30.172% Y = ( − 1 , 1) − { 0 } i.e. 405 g ( ) = 1 / 2 − 1 < < 1 , 6 = 0 406 F or the R WMH, we c ho ose the prop osal q ( x 0 | x ) = φ ( x 0 ; x, τ 2 ) for different choices of 407 τ . Figure 5.1 (panels (a) and (b)) shows that R WMH remains stuck at one of the mo des 408 for an arbitrary but reasonable choice of τ , and with arbitrary initial v alue. This indicates 409 significan t non-robustness of R WMH with resp ect to the initial v alue and the c hoice of τ ev en 410 if it is geometric ergo dic in this case. Only when τ has b een appropriately c hosen, R WMH 411 p erforms adequately (Figure 5.1 , panel (c)). W e remark that suc h “righ t” choice is p ossible 412 only if bimodality of the target p osterior is anticipated b eforehand, whic h is unrealistic. Ev en 413 for the appropriate choice of τ w e notice that the acceptance rate of R WMH is rather small 414 (14.31%). In contrast RDMH adequately explored the en tire state space without requiring 415 kno wledge of the target density (Figure 5.1 , panel (d)), or tuning of the proposal. The 416 acceptance rate, which is 30.172%, m uc h encouraging compared to the R WMH algorithm. 417 Random dive MH 18 Example 2. No w we consider a more challenging case similar to one considered by Chen 418 and Kim ( 2006 , p. 1632–1634) in their Example 2. The target is a mixture of univ ariate 419 normals: 420 π ( x ) = 0 . 5 φ ( x ; 0 , 10 − 4 ) + 0 . 5 φ ( x ; 5 , 1) 421 Sev eral sp ecialized algorithms are av ailable for such ne e d le-in-haystack problem among whic h the equi-energy sampler b y Kou et. al. ( 2006 ) is w orth mentioning. The equi-energy sampler is extremely efficient once the tuning parameters are c hosen carefully . F or our purp ose we c hose few prop osals at random and study their p erformances. In particular, we run each c hain (of length 30,000 eac h after discarding first 20,000 burn-ins) 100 times and estimate ˆ P = 1 30000 30000 X i =1 I ( | X t | < 0 . 05) , where X t denotes the Mark ov chain. F rom T able 5.1 it can b e seen that all the proposals 422 w ork quite well. The third and fifth proposal results small M.S.E’s p erhaps due to the fact 423 that they put more w eight near zero (the multiplier is close to zero and hence so is the 424 prop osed state) and one of the mo de is at zero. How ev er, since in practice, it need not b e 425 the case it might result in p o or acceptance rates. So, a prop osal that generates random 426 m ultiplier close to 1 should b e preferred. 427 Prop osal E ( ˆ P ) s.d.( ˆ P ) MSE( ˆ P ) Avg. accep. rates U(-1,1) 0.5115 0.1361 0.0184 37.14% 0 . 15 B ( − ; 1 , 1) + 0 . 85 B ( ; 1 , 1) 0.4953 0.1218 0.0147 41.08% 0 . 15 B ( − ; 0 . 5 , 1) + 0 . 85 B ( ; 0 . 5 , 1) 0.4938 0.0470 0.0022 28.51% 0 . 15 B ( − ; 1 , 0 . 5) + 0 . 85 B ( ; 1 , 0 . 5) 0.4912 0.1767 0.0310 56.79% 0 . 5 B ( − ; 0 . 5 , 0 . 5) + 0 . 5 B ( ; 0 . 5 , 0 . 5) 0.5024 0.0520 0.0027 37.91% T able 5.1: Mean, standard deviation and mean squared errors of ˆ P for different prop osals. The av erage acceptance rates are also rep orted. Random dive MH 19 5.2 Exploring a thic k-tailed target 428 In this section w e consider a thick-tailed target density π for which R WMH and LMH are 429 not geometrically ergo dic but RDMH is. W e c hose 430 π ( x ) = 2 π 1 (1 + x 2 ) 2 (5.1) 431 It is easy to v erify that E π | X | 2 < ∞ . Any other thic k tailed densit y or a densit y whic h is 432 not log-concav e in the tail could hav e b een chosen in place of ( 5.1 ). 433 Notice that ∇ log π ( x ) → 0 as | x | → ∞ . So π cannot b e log-concav e in the tail and 434 hence the R WMH chain is not geometrically ergodic. Moreov er, Theorem 4.3 of Rob erts and 435 Tw eedie ( 1996 ) assures that the LMH is not geometrically ergodic either. T able 5.2: P-v alues of tests for normality p erformed on the samples of means. Acceptance P-v alue of Algorithm rate (%) s.e. AD CVM Lill RDMH 66.43 0.0074 0.8242 0.8241 0.5737 R WMH ( N (0 , 1 . 5 2 )) 46.99 0.0298 R WMH ( C (0 , 1)) 44.95 0.0182 LMH (scale = 2) 87.90 0.1767 0 LMH (scale = 3) 79.39 0.5460 LMH (scale = 4) 78.17 0.8631 436 Our parameter of interest w as E π X . W e compared the RDMH algorithm with the R WMH 437 and the LMH algorithms. F or RDMH prop osal g we again chose the uniform distribution 438 o ver ( − 1 , 1). F or R WMH, how ev er w e c hose t wo prop osals – one thin-tailed and one thic k- 439 tailed. The the thin-tailed proposal is a normal distribution with mean zero and v ariance 440 1 . 15 2 ( N (0 , 1 . 5 2 ) while thick-tailed prop osal is the standard Cauch y distribution ( C (0 , 1)). 441 The scale param ters for the LMH were chosen to b e 2, 3 and 4. F or each of the algorithms, 442 w e ran 1000 indep endent chains of lengths 50,000 eac h and obtained the means of last 443 40,000 v alues of eac h suc h c hain. Thus w e obtained six samples of estimates of E π X each 444 of which had size 1000. Three tests were p erformed on each of these three samples in order 445 to quantitativ ely assess the normality b ehavior. The three tests were the Anderson–Darling 446 (AD) test, Cramer–v on Mises (CVM) test and the Lilliefors (Lill) test for normality . The 447 Random dive MH 20 T able 5.3: Perf ormances of the algorithms in terms of Kolmogorov-Smirno v distances. Metho d: RDMH R WMH LMH N (0 , 1 . 5 2 ) C (0 , 1) Scale = 2 Scale = 3 Scale = 4 KS v alue 0.0202 0.0788 0.0647 0.3682 0.4665 0.5000 p-v alue 0.7997 0.0338 0.0365 0 0 0 descriptions of the tests can b e found in Tho de ( 2002 ). The tests were p erformed b y nortest 448 pac k age of R -statistical softw are. The p-v alues together with the av erage acceptance rate of 449 eac h of the 1000 chains and standard error of each of the six samples of empirical means are 450 rep orted in T able 5.2 . The QQ–plots are shown in Figure 5.2 and the auto-correlation plots 451 for a typical run of the samplers are shown in Figure 5.3 (no thinning). 452 It is seen both from the p-v alues and the QQ–plots that normalit y holds for the empirical 453 means obtained by RDMH algorithm while in the R WMH and the LMH algorithms they are 454 far from normality . 455 Next, to judge ho w fast RDMH con verges in this scenario we conducted a further study . 456 F or eac h of the algorithms we ran thousand indep endent chains of length 1000 each and 457 calculated d ( ˆ F , F 0 ) – the Kolmogoro v–Smirno v distance b etw een the empirical c.d.f ( ˆ F ) and 458 the true c.d.f. 459 F 0 ( x ) = 1 π arctan x + 1 2 + 1 2 π sin(2 arctan x ) 460 b y the formula 461 d ( ˆ F , F 0 ) = sup x ∈ R | ˆ F ( x ) − F 0 ( x ) | 462 and also obtained the p-v alues for testing H 0 : ˆ F = F 0 against the tw o sided alternative. 463 T able 5.3 rep orts the a v erages of the Kolmogorov-Smirno v distances and the p-v alues from 464 the 1000 independent chains. This sho ws that the RDMH chain conv erges m uch faster 465 compared to the R WMH and the LMH algorithms. 466 5.3 Share price return data 467 In this section we consider the daily price returns of Abb ey National share b etw een July 31 468 and Octob er 8, 1991. The data is presen ted in T able 1 of Buc kle ( 1995 ). W e consider the 469 simple lo cation-scale model proposed and analyzed in F ernandez and Steel ( 1998 ). Let p i , i = 470 Random dive MH 21 ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −0.02 −0.01 0.00 0.01 0.02 (1) Theoretical Quantiles Sample Quantiles ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −0.2 0.0 0.2 0.4 0.6 (2) Theoretical Quantiles Sample Quantiles ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −0.15 −0.10 −0.05 0.00 0.05 (3) Theoretical Quantiles Sample Quantiles ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −1 0 1 2 (4) Theoretical Quantiles Sample Quantiles ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −5 0 5 10 (5) Theoretical Quantiles Sample Quantiles ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● −3 −2 −1 0 1 2 3 −5 0 5 10 15 (6) Theoretical Quantiles Sample Quantiles Figure 5.2: QQ–plots of six samples of empirical means. (1) : RDMH; (2) : R WMH with N (0 , 1 . 5 2 ) prop osal; (3) R WMH with C (0 , 1) prop osal; (4) - (6) : LMH with scales 2, 3 and 4 resp ectively Figure 5.3: Auto-correlation plots of six samplers. (1) : RDMH; (2) : R WMH with N (0 , 1 . 5 2 ) prop osal; (3) R WMH with C (0 , 1) prop osal; (4) - (6) : LMH with scales 2, 3 and 4 resp ectiv ely Random dive MH 22 0 , . . . , 49 denote the price data in T able 1 of Buc kle ( 1995 ) and y i = ( p i − p i − 1 ) /p i − 1 , i = 471 1 , . . . , 49. F ernandez and Steel ( 1998 ) mo deled the data as follo ws: 472 p ( y 1 , . . . , y n | β , σ, ν , γ ) = " 2 γ + 1 γ × Γ(( ν + 1) / 2) Γ( ν / 2) √ π ν σ − 1 # n × n Y i =1 1 + ( y i − β ) 2 ν σ 2 1 γ 2 I ( y i > β ) + γ 2 I ( y i < β ) − ( ν +1) / 2 (5.2) 473 with indep endent priors on the parameters as follo ws: 474 p ( β ) = 1 ; p ( σ ) = 1 /σ 475 p ( ν ) = d exp( − dν ) ; p ( φ ) = b a Γ( a ) − 1 φ a − 1 exp( − bφ ) 476 where φ = γ 2 . The h yp er-parameters are giv en by F ernandez and Steel ( 1998 ) as d = 477 0 . 1 , a = 1 / 2 and b = 1 /π . W e log-transform all the parameters except β so that the state 478 space b ecomes R 4 . That is, w e re-parametrize: ˜ σ = log σ, ˜ ν = log ν and ˜ γ = log γ . W e 479 up dated the parameters ( β , ˜ σ , ˜ ν , ˜ γ ) sequentially with the following prop osal densities: 480 g β ( ) = 0 . 80 B ( ; 2 , 1) I (0 < < 1) + 0 . 20 B ( − ; 2 , 1) I ( − 1 < < 0) 481 g ˜ σ ( ) = 0 . 80 B ( ; 3 , 0 . 5) I (0 < < 1) + 0 . 20 B ( − ; 3 , 0 . 5) I ( − 1 < < 0) 482 g ˜ ν ( ) = 0 . 80 B ( ; 3 , 0 . 5) I (0 < < 1) + 0 . 20 B ( − ; 3 , 0 . 5) I ( − 1 < < 0) 483 g ˜ γ ( ) = 0 . 80 B ( ; 2 , 0 . 5) I (0 < < 1) + 0 . 20 B ( − ; 2 , 0 . 5) I ( − 1 < < 0) 484 where B ( ; a, b ) is the Beta densit y prop ortional to a − 1 (1 − ) b − 1 I (0 < < 1). W e tried 485 couple of other suc h mixtures to o and the results were very close. Using a proposal densit y 486 uniform on ( − 1 , 1) is not a goo d idea in this case as discussed before. 487 F ernandez and Steel ( 1998 ) used a Gibbs sampler approach with data-augmen tation. 488 They faced some n umerical difficulties and p erturb ed the y i ’s slightly to resolv e the n umerical 489 problems. The RDMH sampler, ho wev er, did not face an y n umerical problem. The results 490 obtained b y RDMH differs from the Gibbs sampler p erhaps due to this reason. Actually , the 491 p osteriors of σ, ν and γ were same whether w e used Gibbs sampler or not (see the pap er by 492 ( F ernandez and Steel , 1998 ) for the Gibbs sampler output). The p osterior of β were quite 493 dissimilar for the RDMH and Gibbs samplers. F or the Gibbs sampler the p osterior w as 494 mainly concentrated b et w een − 0 . 016 and 0 . 002 while it w as concentrated b et ween − 0 . 005 495 and 0 . 015 for the RDMH chain. Clearly , the Gibbs sampler fails to co v er the long tail of the 496 p osterior of β while the RDMH explores it quite easily . 497 Random dive MH 23 Figure 5.4: Densit y histograms of the posteriors in share price return data example. Figure 5.5: T raceplots of the the last thousand iterations of the RDMH chain for the share price return data example. Random dive MH 24 0 10 20 30 40 0.0 0.2 0.4 0.6 0.8 1.0 Lag ACF β 0 10 20 30 40 0.0 0.2 0.4 0.6 0.8 1.0 Lag ACF log ( σ ) 0 10 20 30 40 0.0 0.2 0.4 0.6 0.8 1.0 Lag ACF log ( ν ) 0 10 20 30 40 0.0 0.2 0.4 0.6 0.8 1.0 Lag ACF log ( γ ) Figure 5.6: Auto-correlation function of the RDMH sampler. P arameter RDMH Gibbs mean s.d. mean s.d. β 0.0066 0.0029 − 0 . 0068 0.0028 σ 0.0091 0.0018 0.0091 0.0018 T able 5.4: Posterior summaries for β and σ for the RDMH and Gibbs chains. The result for the Gibbs chain are taken from F ernandez and Steel ( 1998 ). P arameter mean(RDMH) s.d. (RDMH) ν 8.0119 7.0520 γ 0.6745 0.1408 T able 5.5: Posterio r summaries for ν and γ for the RDMH c hain. Random dive MH 25 T o ensure we also ran a random walk MH sampler and found that the results for the 498 R WMH sampler coincided with that of the RDMH sampler. The summaries of the RDMH 499 sampler is giv en in T able 5.4 and 5.5 and the histograms and traceplots of the same are 500 giv en in Figure 5.4 and 5.5 resp ectively . The auto correlation plots of the RDMH c hains are 501 giv en in Figure 5.6 . W e ran the sampler for 160,000 iterations and discarded the first 10,000 502 samples as burn-ins. W e then thinned the remaining 150,000 samples by 5. Conv ergence 503 w as ac hieved m uc h earlier though. W e also found that the mixing for the RDMH sampler 504 w as superior to that of the R WMH sampler. 505 6 F urther w orks 506 W e conclude this article with some purview of p ossible extension to higher dimension. Sup- 507 p ose π is a densit y supp orted on R k and g is density on Y k . Then the algorithm is given in 508 Algorithm 6.1 . 509 Algorithm 6.1. R andom dive MH on R k 510 • Input: Initial value x (0) with no comp onent equal to 0, and numb er of iterations N . 511 • Fo r t = 0 , . . . , N − 1 512 1. Generate ∼ g ( · ) and u i ∼ U (0 , 1) , i = 1 , . . . , k indep endently 513 2. Fo r each i if 0 < u i < 1 / 2 , set x 0 i = x ( t ) i i . else set x 0 i = x ( t ) i / i 514 3. Let I = { 1 ≤ i ≤ k : u i < 1 / 2 } and set 515 α ( x , ) = min ( π ( x 0 ) π ( x ) Q i ∈ I i Q j / ∈ I j , 1 ) 516 4. Set 517 x ( t +1) = x 0 with probabilit y α ( x ( t ) , ) x ( t ) with probabilit y 1 − α ( x ( t ) , ) 518 • End for 519 This algorithm is still irreducible and ap erio dic. It is also Harris recurren t on R ∗ k and 520 ev ery compact subset of R ∗ k is still small. The pro of is along the same line as Theorem 1 521 and 2 . Geometric ergo dicit y is, how ev er, a prop erty that requires a different approach. It is 522 Random dive MH 26 exp ected that geometric ergodicity of this algorithm still holds for a large class of densities 523 (esp ecially the thick-tailed ones) on higher dimensions. W e hop e that this article would 524 dra w atten tion of the researchers and the question regarding geometric ergo dicit y in higher 525 dimension situation would b e settled. 526 The prop osal density g ( ) on Y k can b e chosen to b e the pro duct of prop osal densities 527 on Y . In such a case, one should choose the univ ariate prop osals whic h generate ’s close 528 to 1 with high probabilities eac h (for example, the mixture prop osals in Section 5.3 ). This 529 will ensure that the prop osed states are not to o far aw ay from the curren t state (in R k ) to 530 reduce the acceptance rate significan tly . 531 Ac kno wledgemen t 532 Thanks are due to tw o anonymous referees whose critical reading of the manuscript and 533 constructiv e commen ts lead to ma jor improv ement of the paper. 534 References 535 A tchad ´ e, Y. F. and P erron, F. (2007). On the geometric ergo dicit y of Metropolis-Hastings 536 algorithms. Statistics 41 (1), 77–84. 537 Buc kle, D. J. (1995). Ba y esian inference for stable distributions. J. A mer. Statist. Asso c. , 538 90 (430), 605–613. 539 Chen, M.H. and Kim, S. (2006). Discussion of ”Equi-Energy Sampler“ by Kou, Zhou and 540 W ong. The A nnals of Statistics , 34 (4), 1629–1635. 541 Dellap ortas, P . and Rob erts, G. O. (2003) A n intr o duction to MCMC . In J. Møller (ed.), 542 Sp atial Statistics and Computational Metho ds, Lecture Notes in Statistics num b er 173 543 Springer-V erlag, New Y ork, pp. 1–41 544 F eller, W. (1971). A n intr o duction to pr ob ability and its applic ations, V ol. II . Wiley , New 545 Y ork. 546 F ernandez, C. and Steel, M. F. J. (1998) On Bay esian mo deling of fat tails and skewness. 547 J. A mer. Statist. Asso c. , 93 (441), 359–371. 548 Random dive MH 27 Hastings, W. K. (1970). Mon te Carlo sampling using Marko v chains and their applications. 549 Biometrika , 57 (1), 97–109. 550 Jasra, A., Holmes, C. C. and Stephens, D. A. (2005). Mark ov c hain Mon te Carlo metho ds 551 and the lab el switching problem in Bay esian mixture mo deling. Statistic al Scienc e 20 (1), 552 50–67. 553 Jones, G. J. and Hobert, J. P . (1996). Honest exploration of in tractable probability distri- 554 butions via Marko v Chain Monte Carlo. Statistic al Scienc e 16 (4), 312–334. 555 Kipnis, C. and V aradhan, S. R. S. (1986). Central limit theorem for additive function- 556 als of rev ersible Mark ov processes and applications to simple exclusions. Comm. Math. 557 Phys. 104 , 1–19. 558 Kou, S. C. and Zhou, Q. and W ong, W. H. (2006). Discussion pap er equi-energy sampler with 559 applications in statistical inference and statistical mechanics. A nnals of Statistics. 34 (4), 560 1581–1619. 561 Liu, J. S. (2008). Monte Carlo str ate gies in scientific c omputing . New Y ork: Springer V erlag. 562 Mengersen, K. L. and Tweedie, R. L. (1996). Rates of conv ergence of the Hastings and 563 Metrop olis algorithms. The A nnals of Statistics 24 (1), 101–121. 564 Metrop olis, N., Rosen bluth, A., Rosenbluth, R., T eller, A., and T eller, E. (1953). Equation 565 of state calculations b y fast computing machines. Journal of Chemic al Physics , 21 (6), 566 1087–1092. 567 Meyn, S. P . and Tw eedie, R. L. (1993). Markov chains and sto chastic stability . Springer- 568 V erlag, London, New Y ork. 569 Rob ert, C. P . and Casella, G. (2004). Monte Carlo statistic al metho ds . Springer V erlag. 570 Rob erts, G. O. (1999). A note on acceptance rate criteria for CL Ts for Metrop olis-Hastings 571 algorithms. Journal of Applie d Pr ob ability 36 (4), 1210–1217. 572 Rob erts, G. O. and Rosenthal, J. S. (1997). Geometric ergo dicity and h ybrid Mark ov c hains. 573 Ele ctr on. Comm. Pr ob ab 2 (2), 13–25. 574 Random dive MH 28 Rob erts, G. O. and Rosenthal, J. S. (1998). Marko v-c hain Mon te Carlo: some practical 575 implications of theoretical results. Canadian Journal of Statistics 26 (1), 5–20. 576 Rob erts, G. O. and Rosenthal, J. S. (2004). General state space Mark ov chains and MCMC 577 algorithms. Pr ob ability Surveys 1 , 20–71. 578 Rob erts, G. O. and Tweedie, R. L. (1996). Exp onential con v ergence of Langevin distributions 579 and their discrete approximations. Bernoul li 2 (4), 341–363. 580 Tho de, Jr., H. C. (2002). T esting for normality . Marcel Dekker, New Y ork. 581 A ddr ess for c orr esp ondenc e : 582 Departmen t of Statistics 583 Univ ersity of Chicago 584 5734 S. Universit y Aven ue 585 Chicago, IL 60637. USA 586 E-mail: sdutta@galton.uchicago.edu 587

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

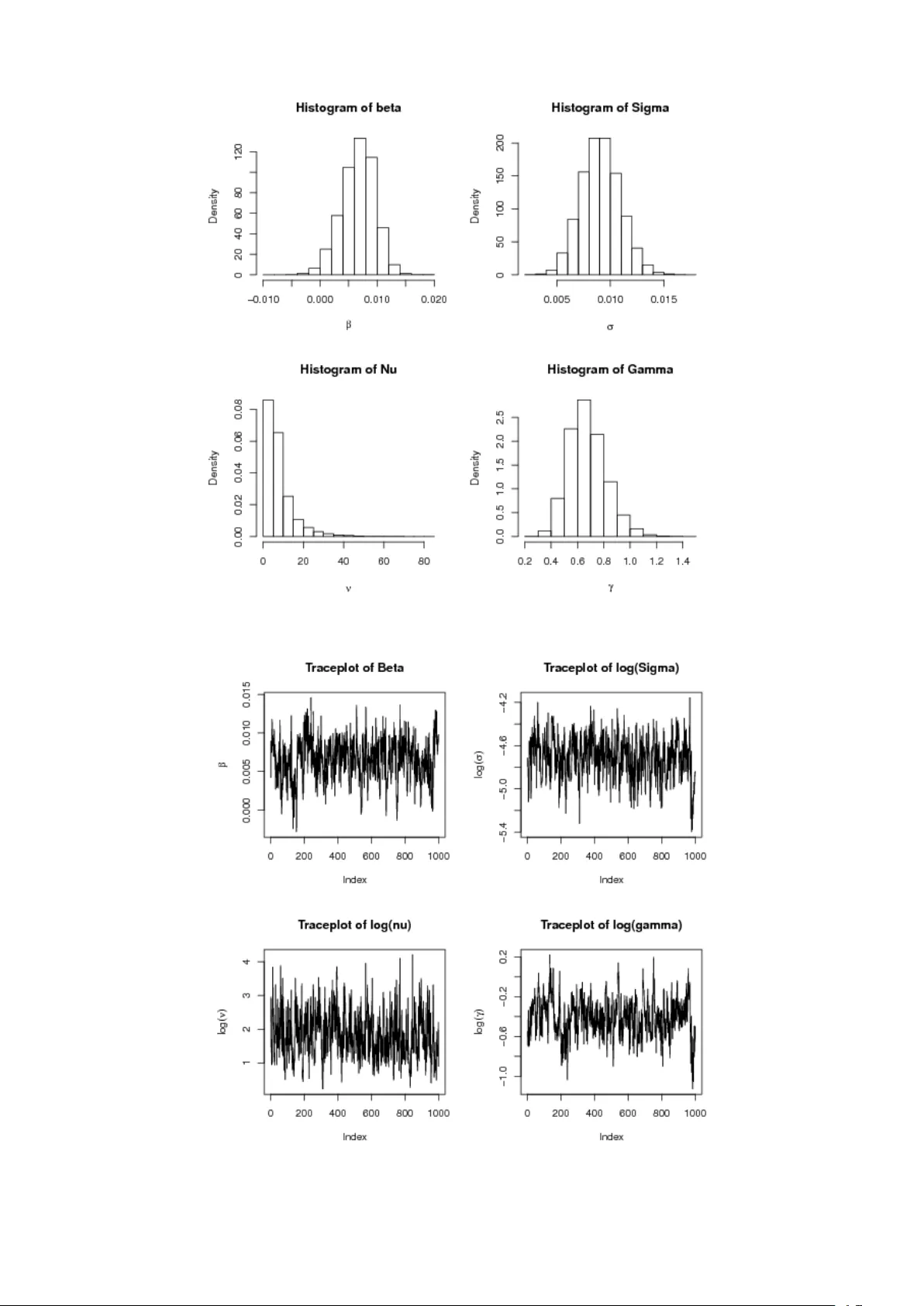

Leave a Comment