Accuracy of MAP segmentation with hidden Potts and Markov mesh prior models via Path Constrained Viterbi Training, Iterated Conditional Modes and Graph Cut based algorithms

In this paper, we study statistical classification accuracy of two different Markov field environments for pixelwise image segmentation, considering the labels of the image as hidden states and solving the estimation of such labels as a solution of t…

Authors: Ana Georgina Flesia, Josef Baumgartner, Javier Gimenez

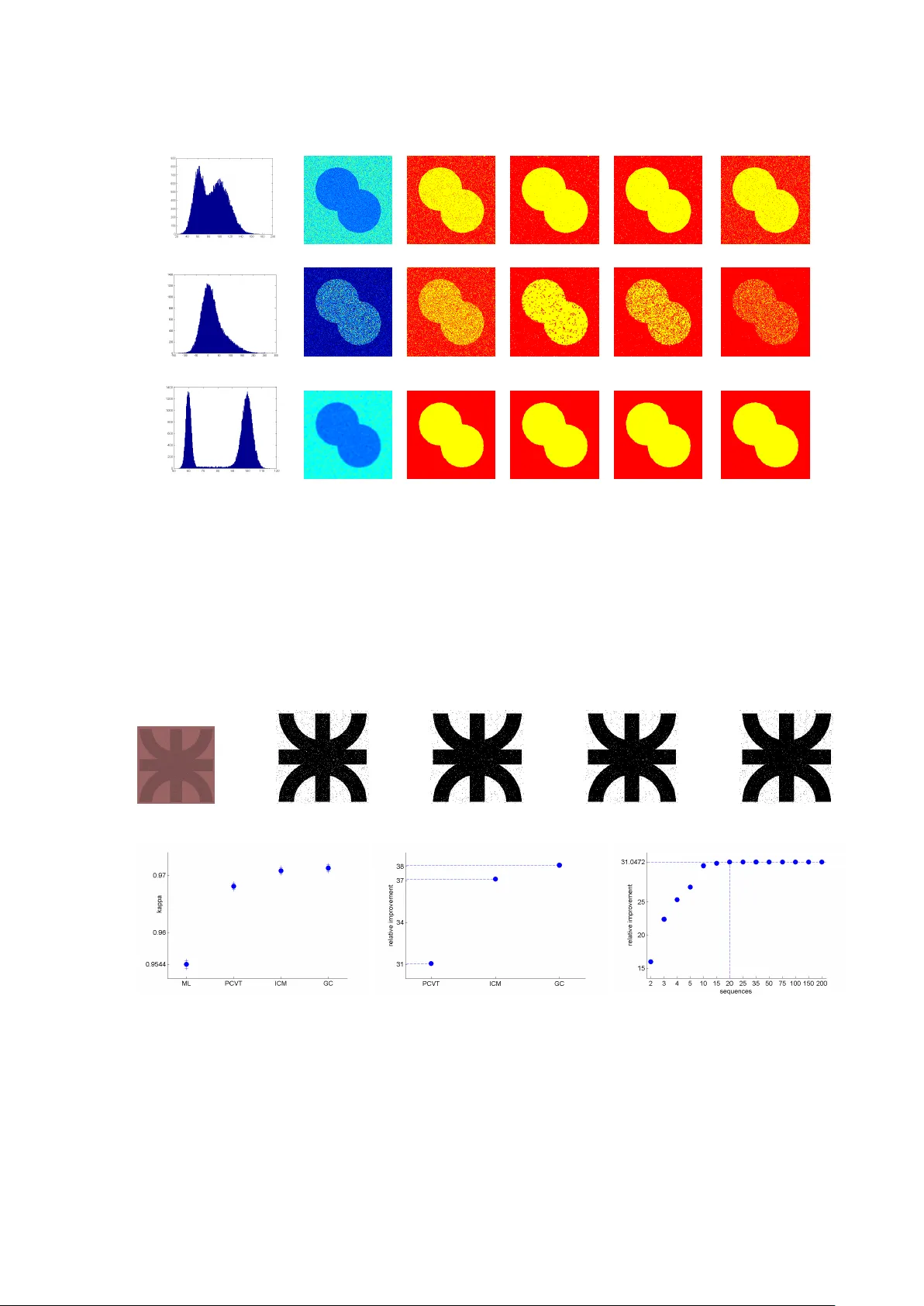

Accuracy of MAP se gmentation with hidden Potts and Marko v mesh prior models via Path Constrained V iterbi T raining, Iterated Conditional Modes and Graph Cut based algorithms. Ana Georgina Flesia a , Josef Baumgartner b , Javier Gimenez c , Jorge Martinez d a Conicet at UTN- Universidad T ecnol ´ ogica Nacional r egional C ´ or doba and F amaf- F acultad de Matem ´ atica Astr onom ´ ıa y F ´ ısica, Universidad Nacional de C ´ or doba. Medina Allende s/n. Ciudad Universitaria, 5000. C ´ or doba, Argentina. b F acultad de Ingenier ´ ıa, FCEFyN, Universidad Nacional de C ´ or doba, Argentina. Vlez Sarsfield 1611. Ciudad Universitaria, 5000. C ´ or doba. c F amaf - F acultad de Matem ´ atica Astr onom ´ ıa y F ´ ısica, Universidad Nacional de C ´ or doba. Medina Allende s/n. Ciudad Universitaria, 5000. C ´ or doba, Argentina. d Departamento de Matem ´ atica, Universidad Nacional del Sur , A venida Alem 1253. 2 Piso Bah ´ ıa Blanca, B8000CPB, Ar gentina. Abstract Pixelwise image segmentation using Markovian prior models depends on several hypothesis that determine the number of parameters and general complexity of the estimation and prediction algorithms. The Markovian neigh- borhood hypothesis, order and isotropy , are the most conspicuous properties to set. In this paper, we study statistical classification accuracy of two different Marko v field en vironments for pixel- wise image segmentation, considering the labels of the image as hidden states and solving the estimation of such labels as a solution of the MAP equation s ∗ = ar g max s p ( s | I , θ ) , where I is the observed image and θ the model. The emission distribution is assumed the same in all models, and the difference lays in the Markovian prior hypothesis made over the labeling random field. The a priori labeling knowledge will be modeled with a) a second order anisotropic Markov Mesh and b) a classical isotropic Potts model. Under such models, we will consider three dif ferent se gmentation procedures, 2D P ath Constrained V iterbi training for the Hidden Markov Mesh, a Graph Cut based segmentation for the first order isotropic Potts model, and ICM (Iterated Conditional Modes) for the second order isotropic Potts model. W e provide a unified view of all three methods, and in vestigate goodness of fit for classification, studying the influence of parameter estimation, computational gain, and extent of automation in the statistical measures Overall Accuracy , Relati ve Improvement and Kappa coef ficient, allo wing robust and accurate statistical analysis on synthetic and real-life e xperimental data coming from the field of Dental Diagnostic Radiograph y . All algorithms, using the learned parameters, generate good segmentations with little interaction when the images ha ve a clear multimodal histogram. Suboptimal learning proves to be frail in the case of non-distinctiv e modes, which limits the complexity of usable models, and hence the achie vable error rate as well. All Matlab code written is provided in a toolbox av ailable for do wnload from our website, following the Reproducible Research Paradigm. K eywor ds: Hidden Markov Models, Hidden Potts Models, EM for Gaussian mixtures, Image se gmentation, Relativ e improv ement, Confidence intervals for Kappa coef ficient of agreement 1. Introduction Bayesian approaches to image segmentation, classification and ev en compression have been devised since the early work on Causal Markov Mesh (CMM) of Abend, Harley and Kanal 1 in the sixties, and Hidden Markov random field (HMRF) models pioneered by Besag 2 in the seventies. Basically they consists of embedding the problem into a probabilistic framework by modeling each pixel as a random variable, with a giv en likelihood, embedding the knowledge of the hidden labels on a prior distrib ution. The key issue in using Markovian models for segmentation applications is parameter estimation, which is usually a computationally expensiv e task. In practice, often a trade off is accepted between accuracy of estimation and running time of the estimation algorithm, see Chen et. al (2012) 3 for an interesting revie w of the field. Pr eprint submitted to Elsevier J une 3, 2021 A common approach consists of alternatively restoring the unknown segmentation based on a maximum a posteriori (MAP) rule and then estimating the model parameters using the observations and the restored data. For Random Marko v Fields, there are av ailable iterative approximated solutions based on the use of the Gibbs distribution as a prior . This is the case, for instance, in the popular ICM algorithm of Besaj 4 , which makes use of pseudo-likelihood approximation. Complete Monte Carlo Markov Chain methods for Potts model and segmentation estimation were implemented on Pereyra et al. (2013) 5 . In Gimenez et al. (2013) 6 , a new pseudo- likelihood estimator of the smoothness parameter of a second order Potts model was proposed and included on a fast version of Besaj’ s Iterated Conditioned Modes (ICM) segmentation algorithm. Comparisons with other classical smoothness estimators, like the one introduced by Frery et al (2009) 7 , were also provided. Le vada et al (2010) 8 also discussed MAP-MRF approximations combining suboptimal solutions based on several different order isotropic Potts priors. Direct competitors of ICM are the methods discussed by Celeux et. al (2003) 9 based on mean field like approx- imations to the expectation-maximization (EM) algorithm for Potts models with first order neighborhoods, which consider conditional probabilities rather than restorations of the hidden data. The EM algorithm is widely used in the context of incomplete data and in particular to estimate independent mixture models due to its simplicity . Binary segmentation problems, as background-object problems, have been addressed successfully with graph theoretical methods. Greig et al.(1989) 10 showed that graph cuts can be used for binary image restoration as solutions of the MAP-MRF problem. Boyko v et al.(2001) 11 proposed a frame work that used s/t graph cuts to get a globally optimal object extraction method for N-dimensional images. Blake et al.(2004) 12 used a mixture of the Markov Gibss random field (MMGRR) to approximate the regional properties of segments and the spatial interaction between se gments. The standard s/t graph cut algorithm can find the exact optimal solution for a certain class of energy functionals ; howev er , in many cases the number of labels for assigning to graph nodes is more than two,and the minimization of energy functions becomes NP-hard. For approximate optimization, Boyko v et al.(2004) 13 dev eloped the α -expansion-mo ve and αβ swap move algorithms to deal with multi-labeling problems for more general energy functionals. An up to date survey of graph theoretical approaches to image segmentation is giv en in Peng et al (2013) 14 . Li et al.(2000) 15 introduced the first analytic solution to a true anisotropic 2-D hidden Marko v model (HMM). They studied a strictly-causal, nearest-neighbor , 2-D HMM, and show that exact decoding is not possible. For block-based causal modeling, they assumed that both training and testing images shared the same underlying Markovian model up to transition probabilities. The estimated the interblock transition probabilities with Path- Constrained 2-D V iterbi algorithms (PCV A) in training step. Pyun et al (2007) 16 introduced a ne w method for blockwise image se gmentation combining the mode approximation method as in Besaj 4 , with the simplest non- causal Markovian model, the bond-percolation model (BP) and its multistate extension (MBP). They did not as- sume homogeneity in spatial coherence among images, and estimated the hidden BP parameters in testing step using an stochastic EM procedure (mode field approximation) with computation time comparable to that using causal HMMs 15 for blockwise segmentation of the same aerial images. Ma et al. (2010) 17 proposed a pseudo noncausal HMM by splitting the noncausal model into multiple causal HMMs, solved in a distributed computing framew ork for pixel wise segmentations. Other decoding approximations, also made on testing step, are discussed in Perronin et al 2003 18 , Sargin et al. (2008) 19 and references therein. As we said before, the key issue in using Mark ovian prior models for segmentation applications is the complex- ity of parameter estimation. Methods considering isotropic neighborhoods (classical Potts and bond-percolation models) can smooth out important quasi linear structures in images, while methods that consider non-isotropic neighborhoods, like causal 2dHMM, and non-isotropic Potts, in v olve more parameters to estimate. Figure 1: Neighborhood systems: (a) 2D order Causal Markov Mesh , (b) second order Potts model, (c) first order Potts model. In this paper , we explore tw o specific Markov prior hypothesis for image segmentation, in the form of dif ferent neighborhood systems and probability relationships: 1. a diagonal six-pixel neighborhood for the anisotropic MRF 2 2. a four-pix el (first order) and eight-pixel (second order) neighborhood for the isotropic MRF which are depicted in Figure 1. The Markovian hypothesis assumed for each labeling Random Markov Field is different, in order to develop different estimation strategies. W e keep the same Multiv ariate Gaussian model for the emission distributions. For the first neighborhood system we ensure assumptions of a second order Causal Markov Mesh model, which introduced an extra assumption in the neighborhood probabilities related to the notion of “pixel’ s past”. In the second neighborhood system, we introduce a Gibbs distribution, in the form of the first and second order isotropic Potts model with smoothness parameter β . Under such models, we will consider three different segmentation procedures, 2D Path Constrained V iterbi testing (PCVT) for the Hidden Mark ov Mesh, a simple proposal of Graph Cut (GC) based segmentation for the first order isotropic Potts model, and Iterated Conditional Modes (ICM) for the second order isotropic Potts model. F or all methods, we propose a unified frame work consisting in three stages. The first stage is the image modeling, and initial labeling. The second stage is general parameter estimation and the third stage is computing the approximated MAP solution. Previous work on causal 2dHMM for blockwise and pixelwise segmentation assume that both training and test- ing images share the same underlying Markovian model up to transition probabilities, 15,20,17 . They estimate those probabilities in a training step and simply use them to test new images. Howe ver , this homogeneity assumption is arguable in many images. In our paper, we assume that the spatial coherence can v ary from image to image, thus we estimate parameters and transitions probabilities in testing step. W e also discuss specific choices for con- straining paths possibilities in V iterbi decoding, that produces computation time comparable to that using ICM and GC. In Sections 2, 3 and 4 we discuss in detail the equations of the Markov Mesh prior model and Potts prior model, and the approximation choices made in our implementations of PCVT , GC and ICM. The goal of this paper is to give a unified view of these approximations, theoretically and algorithmically , and compare their classification rates with synthetic and real data with a vailable ground truth. In Section 5 we introduce the design of our simulated experiments and the statistics used to ev aluate classification. The implementation issues and prospects are gi ven in Section 6. All Matlab code written is provided in a toolbox a vailable for do wnload from our website, following the Reproducible Research Paradigm. 2. MAP-MRF rules Many ef fecti ve computational tools for practical problems in image processing and computer vision ha ve been devised through Marko v Random Fields (MRF) modeling. One of these practical problems is to label an image domain pixelwise with giv en L discrete labels L = { ` 1 , ..., ` L } , with the help of a priori modeling hypothesis like the Potts model. It corresponds to a special case of such Markov Random Field (MRF) ov er the image graph, which computes the graph partition with the minimal total perimeter of the one-label (constant) regions. It does not fav or any particular order of the labels, in comparison to another similar model introduced by Ishikawa ?? , which partitions the image graph with multiple linearly ordered labels (ov erlapped to each other). The MRF-based energy function proposed by Potts model is the sum of the unary potential defined on each graph node and the pairwise potential giv en on each graph edge. 2.1. Markov Random F ields Markov random fields provide conv enient prior for modeling spatial interactions between pixels. Markov Random Fields were first introduced into vision by Geman and Geman 21 . Let P be a set of pixels in the image I of size n = z × w , L = { ` 1 , ` 2 , . . . , ` L } a set of L labels, and for each site ( i, j ) ∈ P is defined as a set ∂ ij ⊂ P called the neighborhood of ( i, j ) . The neighborhood system is a collection of sets ∂ = { ∂ ij : ( i, j ) ∈ P } which satisfies: (a) ( i, j ) / ∈ ∂ ij ,(b) ( i 0 , j 0 ) ∈ ∂ ij ⇒ ( i, j ) ∈ ∂ i 0 j 0 , (c) P = S ( i,j ) ∈P ∂ ij . The labeling problem is to assign a label from the label set L to each site in the set of sites P . Thus a labeling is a mapping from P to L . W e will denote a labeling by s = { s ij } . The set of all possible labeling L n is denoted by S . In this paper we will consider the final segmentation s = { s ij } as realizations of a Markov random field. This means that for each possible realization (called configuration) s ∈ S , it holds that • p ( s ) > 0 , • p s ij | s P −{ ( i,j ) } = p s ij | s ∂ ij . where P − { ( i, j ) } denotes set dif ference, and s ∂ ij denotes the labels of the sites in ∂ ij . 3 2.2. Maximum a P osteriori Estimation In general, the labeling field is not directly observable in the experiment. W e hav e to estimate its realized configuration s based on an observ ation I , which is related to s by means of the likelihood function p ( I | s, θ ) , where θ represents the set of all model’ s parameters. The most popular way to estimate an MRF is to maximize a posteriori (MAP) estimation. The MAP-MRF frame work was popularized in vision by Geman and Geman 21 . MAP estimation consists of maximizing the posterior probability p ( s | I , θ ) . From the point of vie w of Bayes estimation, the MAP estimate minimizes the risk under the zero-one cost function. Using Bayes rule, the MAP estimate is s ∗ = ar g max s ∈S p ( s | I , θ ) = ar g max s ∈S p ( I | s, θ ) p ( s | θ ) (1) 2.3. Gaussian observation field For the sake of completeness, we consider multispectral images where each pixel is a vector from R q . W e assume that the observ ed pix el intensities are Multi variate Gaussian Mixtures, and the emission probabilities giv en the state ` ∈ L are Multi variate Gaussian: p ( x | ` ) = 1 (2 π ) q / 2 | Σ ` | 1 / 2 exp − 1 2 ( x − µ ` ) T Σ − 1 ` ( x − µ ` ) (2) with mean µ ` and cov ariance matrix Σ ` . In this work, we compute the initial classification without considering any conte xtual prior , following Algorithm 1. Algorithm 1: Maximum Likelihood Classification 1) Supervised Maximum Likelihood (ML) segmentation of I : T o assign the label given by s ij = ar g max ` ∈L p ( I ij | ` ) to pixel ( i, j ) , with parameters chosen from the modes of a histogram, or selected pixel v alues. 2) Unsupervised Maximum Likelihood (EM-ML) segmentation of I : T o assign the label given by s ij = ar g max ` ∈L p ( I ij | ` ) to pixel ( i, j ) , with parameters giv en by the Expectation Maximization algorithm for Gaussian Mixtures, with random initializations. The labeling obtained by ML will be the benchmark classification. 3. Gibbs prior MRFs are generalizations of Markov processes that can be specified either by the joint distrib ution or by the local conditional distributions. Ho wever , local conditional distributions are subject to nontrivial consistency constraints, so the first approach is most commonly used. In this paper, we will consider two types of Markov random Fields as prior constraints for the labeling field, causal Markov Meshes, and Gibbs random fields. Before defining Gibbs random fields (GRF) we need to define a clique . A set of sites is called a clique if each member of the set is a neighbor of all the other members. A Gibbs random field can be specified by the joint Gibbs distribution: p ( s ) = Z − 1 exp − X C ∈C V C ( s ) ! , where C is the set of all cliques, Z is the normalizing constant, and { V C : C ∈ C } are real functions, called the clique potential functions. In this model, the conditional distribution of state label s ij ∈ L corresponding to pixel ( i, j ) ∈ P given the e vidence in the image is p ( s ij | s i 0 j 0 : ( i 0 , j 0 ) ∈ ∂ ij ) = p ( s ij | s i 0 j 0 : ( i 0 , j 0 ) 6 = ( i, j )) ∝ exp − X C ∈C :( i,j ) ∈ C V C ( s ) . (3) 4 This Markovian assumption guarantees the e xistence of the joint distribution of the process. 3.1. P otts model In this model, the potential functions V C of (3) are defined as follows: V C ( s ) = − β if s ij = s i 0 j 0 , C = { ( i, j ) , ( i 0 , j 0 ) } ∈ C , 0 in other case. (4) where C is the clique set corresponding to the neighborhood’ s system ∂ . Thus, the distrib ution on the neighborhood in the Potts model becomes p ( s ij | s i 0 j 0 : ( i 0 , j 0 ) ∈ ∂ ij ) ∝ exp { β U ij ( s ij ) } where U ij ( s ij ) := # { ( i 0 , j 0 ) ∈ ∂ ij : s i 0 j 0 = s ij } , and β is the smoothness parameter , sometimes called in verse temperature. Thus, the joint likelihood of the Mark ov random field is p ( s ) ∝ exp { β U s } , where U s = # { C ∈ C : C = { ( i, j ) , ( i 0 , j 0 ) } , s ij = s i 0 j 0 } . The observ ed process, which is supposed to be emitted by the hidden Markov Field, is considered Multi v ariate Gaussian as in (2) with mean µ l and cov ariance matrix Σ l which depends on the classes. Thus, gi ven the observed pixel intensities I , the a posteriori distrib ution of the map of classes is p ( s | I , θ ) ∝ exp { β U s + X ij p ( I ij | s ij ) } . (5) This distribution corresponds to a ne w Potts model in which the external field in a gi ven pix el ( i, j ) is p ( I ij | s ij ) . The optimum segmentation s ∗ is defined as a MAP solution (1), with θ = ( β , µ l , Σ l ) which is a hard problem to solve in general. There are many approximated solutions provided in the literature. In this paper , we will work with Iterated Conditional Modes and Graph Cut, algorithms that will be detailed in the following subsections. A classical proposal for β estimation consists in solving the maximum pseudolikelihood equation X ( i,j ) ∈P U ij ( s ij ) − X ( i,j ) ∈P P ` ∈ L U ij ( ` ) exp { b β U ij ( ` ) } P ` ∈ L exp { b β U ij ( ` ) } = 0 . (6) which inv olves an observed class map s , where the initial s is gi ven by maximum likelihood. Using this map, we calculate U ij ( ` ) with ∂ ij equal to the first or second order neighborhood, as depicted in Figure 1. This assumption reduces equation (6) to a smaller nonlinear equation in β , with coef ficients counting the number of patches with certain configurations; see 22 for details. This equation is solved in this paper using Brent’ s algorithm 23 . 3.2. Iterated conditional Modes Iterated Conditional Modes (ICM) is an iterative algorithm that rapidly conv erges to the local maximum of the function P ( s | I , θ ) closest to the initial segmentation provided by the user . Usually , the initial segmentation is provided by Maximum Likelihood. In each iteration ICM modifies the label of each pixel for the label that is most probable, giv en the neighborhood configuration. Giv en observ ations I , ICM finds a suboptimal solution of (1), with the algorithm 2. The first term of (7) is equi valent to the ones used by the ML classifier . The second term is the contextual component scaled by the parameter β . If β > 0 , ICM smooths out the initial se gmentation,if β < 0 , ICM reduces clusters coherence. When β = 0 the rule is reduced to the maximum likelihood, but when β → ∞ the effect is reversed, the data does not hav e any importance in the final segmentation. 3.3. Graph Cut based appr oximation to MAP-MRF rules Energy based segmentation methods attempt to minimize ener gy functions, which can be obtained from sev eral sources. The general form of an energy function (notation taken from work by Boyko v , V eksler and Zahib 11 ) is as follows E ( s ) = X ( i,j ) ∈P D ij ( s ij ) + X { ( i,j ) , ( i 0 ,j 0 ) }∈C V { ( i,j ) , ( i 0 ,j 0 ) } ( s ij , s i 0 j 0 ) (8) 5 Algorithm 2: Iterated Conditional Modes (ICM) 1) Maximum Likelihood segmentation of I (ML or EM-ML, see Algorithm 1). 2) Parameter estimation by pseudo-maximum likelihood with Brent’ s algorithm for the smoothness parameter of the second order isotropic Potts model. 3) Choose a pixel’ s visit scheme for the image. 4) For each pix el ( i, j ) , change the label gi ven in the pre vious iteration for the label ` ∈ L that maximizes g ( ` ) = ln p ( I ij | `, ˆ µ ` , ˆ Σ ` ) + ˆ β U ij ( ` ) (7) 5) Iterate until con ver gence. where D ij is called the data penalty function and indicates the compatibility of the labeling with the gi ven data. W e can transform the Potts model equation (5) into an energy based formulae p ( s | I , θ ) ∝ exp {− E ( s ) } considering the first expression D ij ( s ij ) as D ij ( ` ) = − ln p ( I ij | `, ˆ µ ` , ˆ Σ ` ) (9) and the second term, called the interaction potential or the smoothness function, as the density gi ven by the first order Potts model. The smoothness term incorporates the notion of a piece wise smooth world and penalizes assignments that label neighboring nodes differently . The potential functions V C are the ones giv en in (4). General energy functions (8) can be minimized by a graph cut algorithm 13 , 24 . The image can be considered as a weighted graph G = hV , E i , where the vertices’ s V are the pixels, and the edges E are the links between neighboring pixels. For a binary graph cut problem, two additional nodes known as source and sink terminals are added to the graph. The terminals correspond to the labels being assigned to the nodes, i.e., pix els of the image. A cut is a set of edges that separates the source and sink terminals such that no subsets of the edges themselves separate the two terminals. The sum of the weights of the edges in the cut is the capacity of the cut. In the implementation we used, the weights are the potential functions V C , in other graph cut implementations, weights are defined as submodular functions. The goal is to find the minimum cut, i.e., the cut for which the sum of the edge weights in the cut is minimum. Figure 2 is a representativ e diagram sho wing the process of partitioning the input image. In the multiple label case, the multiway cut should lea ve each pixel connected to one label. This ensures T (a) (b) (c) (d) (e) (c) S Figure 2: Example of flow netw ork used in 2D image segmentation. (a) Source/Object (b) Cut (c) T -links (d) N-links (e) Sink/background. that every multi-way cut which separates all terminals, must correspond to a valid labeling ie, a configuration of the associated MRF . T o solve the problem we used the α − expansion mo ve algorithm 11 . This algorithm minimizes an energy function with non binary variables by repeatedly minimizing an energy function with binary v ariables using Max-flo w/Min-cut method. It starts with an arbitrary labeling and performs iterati ve optimization cycles until the process con ver ges. Each cycle consists of iterating ov er the set of labels, running the α − expansion move once for e very label α . This in volv es finding a new labeling s 0 obtained by increasing the number of α labels, 6 which is better than the current labeling s , ie. E ( s 0 ) ≤ E ( s ) . The algorithm will con ver ge when in a particular cycle, no better s 0 can be found. Giv en observations I , Graph Cut finds a suboptimal solution, with the follo wing algorithm: Algorithm 3: Graph-Cut (GC) 1) Maximum Likelihood segmentation of I (ML or EM-ML, see Algorithm 1). 2) Parameter estimation by pseudo-maximum likelihood with Brent’ s algorithm, for the smoothness parameter of the first order isotropic Potts model. 3) Minimize (8) using an α − expansion mo ve algorithm, using the initial segmentation ML and the ˆ β estimated in step 2). 4. Causal prior For pixel ( i, j ) we define the following causal relationship that represents the “past”, ( i 0 , j 0 ) ≺ ( i, j ) if i 0 < i or i 0 = i and j 0 < j , and the set { s i,j − 1 , s i − 1 ,j } the neighborhood of ( i, j ) in the past. W e assume a Causal 2D order Markov Mesh model stating that, for state s ij : p ( s ij | s i 0 j 0 : ( i 0 , j 0 ) ≺ ( i, j )) = p ( s ij | s i,j − 1 , s i − 1 ,j ) . (10) The two pixels ( i, j − 1) and ( i − 1 , j ) can be understood as the “past” of pixel ( i, j ) . W e consider P ( s ij | s i,j − 1 , s i − 1 ,j ) to be independent of the current pixel so we can gather the transition probabilities in a matrix A where a ` 1 ,` 2 ,` 3 = p ( s ij = ` 1 | s i,j − 1 = ` 2 , s i − 1 ,j = ` 3 ) ∀ ` 1 , ` 2 , ` 3 ∈ L . The transition matrix A has one more dimension than the transition matrix of a 1d Markov Process due to two “past states” on the left and abov e the actual pixel. This yields at a new order of the image. Instead of lining up the pixels as we would hav e done in the one-dimensional case we are no w moving from the top-left pix el to the bottom-right pix el. Thus, the initial probabilities for the 2dMM depend only on the first state s 0 , 0 and we can write π ` = p ( s 0 , 0 = ` ) ∀ ` ∈ L . The word “hidden” that is usually added to the whole model (Gaussian observ ed process plus Markov Mesh label- ing random field) comes from the fact that this Markov Mesh can not be observed, so it is considered hidden. It can be proved that this causal relationship implies a general Markov Field hypothesis with the diagonal neighborhood stated in the introduction, that is, the probability of a label given the whole labeling specification depends only on the values in the pix els depicted in Figure 1 (a). (i,j) (i,j-1 ) (i- 1,j) T 0 T 1 T w-1 T w T w+z-2 Figure 3: T ransitions among states in a 2nd order Markov Mesh and Pixels on diagonals T 0 , . . . , T w + z − 2 If we enumerate each diagonal in the image, T 0 , . . . , T z + w − 2 , as one step in time, starting with the top-left pixel, see Figure 11, T 0 = ( s 0 , 0 ); T 1 = ( s 1 , 0 , s 0 , 1 ); T 2 = ( s 2 , 0 , s 1 , 1 , s 0 , 2 ); . . . ; T z + w − 2 = ( s z − 1 ,w − 1 ); 7 the Markov Mesh assumption (along with the particular definition of the past) implies that p ( s ) = p ( T 0 ) p ( T 1 | T 0 ) . . . p ( T z + w − 2 | T z + w − 3 , . . . , T 0 ) = p ( T 0 ) p ( T 1 | T 0 ) . . . p ( T z + w − 2 | T z + w − 3 ) . This means that each diagonal operates as an “isolating” element between neighboring diagonals, which suggest an extension of the 1d V iterbi algorithm to compute the most probable sequence of states giv en initial v alues. This is, to find the optimal combination of states s ∗ that solves (1) given the whole 2D hidden Marko v model, the labeling field and the observed Gaussian intensity process. Let T 0 = ( s 0 , 0 ); T 1 = ( s 1 , 0 , s 0 , 1 ); . . . be a path through the image where ev ery diagonal marks one step. Each diagonal consists of up to min( w , z ) states: T 0 ∈ L , T 1 ∈ L 2 , T 2 ∈ L 3 , . . . , T z + w − 2 ∈ L . This makes a total of L min( w,z ) possible state combinations only considering the main diagonal. Therefore, the exact decoding of our problem is an NP-hard problem. T o produce an approximated solution we will work constraining the set of possible state combinations. 4.1. P ath-Constrained V iterbi T raining The V iterbi training algorithm is an iterativ e algorithm that estimates all the parameters of a HMM, and finds the sequence of states that best explains the data, gi ven the estimated parameters. The procedure starts with the setting of initial parameters, which can be done using prior information, educated guess or a non-contextual estimation. Using this initial step the algorithm follows the ne xt steps until con ver gence Algorithm 4: Path-Constrained V iterbi Training (PCVT) • Initialize segmentation s (0) : Maximum Likelihood se gmentation of I (ML or EM-ML, see Algorithm 1). • Parameter estimation: Given sequence s ( n − 1) , estimation of a ( n ) ` 1 ,` 2 ,` 3 , µ ( n ) ` and Σ ( n ) ` • Decoding: Choosing the best N paths and V iterbi decoding using these paths. 4.1.1. F irst step V iterbi T raining: P arameter estimation Let’ s suppose we have the initial sequence s (0) obtained from Algorithm 1, or the sequence s ( n − 1) obtained from V iterbi in the pre vious step. Our empirical estimations of the transition probabilities and distributions param- eters are a ( n ) ` 1 ,` 2 ,` 3 = z − 1 X i =1 w − 1 X j =1 χ s ( n − 1) i − 1 ,j = ` 1 , s ( n − 1) i,j − 1 = ` 2 , s ( n − 1) ij = ` 3 z − 1 X i =1 w − 1 X j =1 χ s ( n − 1) i − 1 ,j = ` 1 , s ( n − 1) i,j − 1 = ` 2 (11) µ ( n ) ` = z − 1 X i =0 w − 1 X j =0 χ s ( n − 1) ij = ` I ij z − 1 X i =0 w − 1 X j =0 χ s ( n − 1) ij = ` (12) Σ ( n ) ` = z − 1 X i =0 w − 1 X j =0 χ s ( n − 1) ij = ` ( I ij − µ ` )( I ij − µ ` ) T z − 1 X i =0 w − 1 X j =0 χ s ( n − 1) ij = ` (13) where χ is the indicator function. 8 4.1.2. Second step V iterbi training: Decoding There are several dif ferent approximations in the literature for iterativ e decoding. Sargin et al (2008) 19 proposed an algorithm that iterativ ely updates the posterior distribution on ro ws and columns, i.e. determin- ing horizontal and vertical 1d forward-backward probabilities, combining them to approximate the values of p ( s ij | s i,j − 1 , s i − 1 ,j ) as product of horizontal and vertical probabilities. A more simplistic approach is to represent the dependency of the neighbors as the horizontal and vertical conditionals, a row and column wise constrained application of belief propag ation. Such models deviate us from the original Makovian assumptions, so in this paper we will follow the so called Path Constrained V iterbi T raining Algorithm, Li et al (2000) 15 , Ma et al (2010) 17 , which restricts the possibilities of diagonal strings of states to propose a labeling, and updates all parameters in a pseudo-Expectation Maximization way using such labeling until con ver gence. W e will describe no w the equations in volv ed in the process. T 0 T 1 T w+z-2 1 2 22 12 21 111 112 . . . 1 2 22 11 12 221 a) 1 2 22 11 12 21 1 2 111 112 122 . . . . . . . . . . . . T 0 T 1 T w-1 T w T w+z-2 T 0 T 1 T w+z-2 1 2 22 11 12 21 111 112 122 . . . . . . 1 2 22 11 12 21 1 2 22 12 21 111 112 . . . 2 22 11 12 T 0 T 1 T w+z-2 221 1 T 0 T 1 T w-1 T w T w+z-2 1 2 2 1 1 2 1 1 1 d) b) c) Figure 4: V iterbi Decoding for two possible states: a) V iterbi transformation. b) Path constrained to N = 3 . c) T racking back the optimal path of state sequences using the values sa ved in ϕ . d) Hidden state map. • Choosing the best N paths for decoding In this paper , we propose the following selection. W e firstly assume, as Li et al (2000) 15 , that we can ev aluate the lik elihood of a gi ven diagonal state sequence by simply multiplying the lik elihoods of each pix el without considering statistical dependencies between pixels, i.e. we compute ˆ p ( s ij = ` | I ij , ˆ θ ) ∝ p ( I ij | s ij = `, ˆ θ ) ˆ p ( s ij = ` | ˆ θ ) (14) where p ( I ij | s ij , ˆ θ ) is giv en by (2) and ˆ p ( s ij = ` | ˆ θ ) = z − 1 X i =0 w − 1 X j =0 χ ( s ij = ` ) z w ∀ ` ∈ L . Thus, the most likely state sequence s d, 1 is the one that has in each entry the most lik ely state for the pixel’ s observation on diagonal d ∈ { 0 , 1 , . . . , z + w − 2 } . In our particular implementation, we will obtain the next N − 1 sequences considering only the sequences that result from changing only one state of s d, 1 . Such chains are ordered using (14) and the N − 1 with the largest lik elihood are chosen. In our Discussion section we will comment the incidence of the selection of this bag of N sequences in the con ver gence of our implementation. • V iterbi decoding over the chosen N paths. In Figure 4 we show an schematic example of the decoding algorithm, which follo ws the idea of the original 1d V iterbi algorithm, using diagonals as piv otal points. W e call each diagonal state sequence s d,k where d is the index for the diagonal with d = 0 , 1 , . . . , z + w − 2 9 and k = 1 , 2 , . . . , N indicates the state sequence. Hence the initial state probabilities ˜ π k for pixel (0 , 0) are ˜ π k = p ( T 0 = s 0 ,k ) . W e denote δ d ( k ) as the maximum probability for sequence k on diagonal d . Gi ven the parameters of the PCVT we can write δ d ( k ) = max k 0 ,k 1 ,...,k d − 1 p ( s 0 ,k 0 , . . . , s d − 1 ,k d − 1 , s d,k , I 0 , . . . , I d | θ ) , with d = 0 , . . . , z + w − 2; k = 1 , . . . , N . Furthermore we collect the pixels on diagonal d in a v ariable ∆( d ) and define b s d,k ( I d ) = Y ( i,j ) ∈ ∆( d ) p ( I ij | s d,k ( i, j )) where I d = ( I ij : ( i, j ) ∈ ∆( d )) and b s d,k ( I d ) is the emission probability of sequence k on diagonal d under the assumption that each pixel is statistically independent from its neighbors. Finally , we can calculate the transition probability from sequence k on diagonal d to sequence l on diagonal d + 1 : ˜ a d,k,l = p ( T d +1 = s d +1 ,l | T d = s d,k , θ ) = Y ( i,j ) ∈ ∆( d +1) a s d,k ( i − 1 ,j ) , s d,k ( i,j − 1) , s d +1 ,l ( i,j ) d = 0 , . . . , z + w − 3; k , l = 1 , . . . , N . Now we are ready to initialize the V iterbi decoding Algorithm with the values δ 0 ( k ) = p ( T 0 = s 0 ,k ) , b s 0 ,k ( I 0 ) = ˜ π k b s 0 ,k ( I 0 , 0 ) ∀ k = 1 , 2 , . . . , N . Then we start the recursion δ d +1 ( l ) = max 1 ≤ k ≤ N δ d ( k )˜ a d,k,l b s d +1 ,l ( I d +1 ) ∀ d = 0 , 1 , . . . , z + w − 3 ∀ l = 1 , 2 , . . . , N . After each step, we sav e the index of the most probable sequence on diagonal d that leads to sequence l on diagonal d + 1 in a v ariable called ϕ : ϕ d +1 ( l ) = arg max 1 ≤ k ≤ N { δ d ( k )˜ a d,k,l } ∀ d = 0 , 1 , . . . , z + w − 3 ∀ l = 1 , 2 , . . . , N When the algorithm reaches the last diagonal, we use the values saved in ϕ to track back the most probable path through the image starting with the bottom-right pixel s ∗ z + w − 2 = arg max 1 ≤ k ≤ N δ z + w − 2 ( k ) s ∗ d = ϕ d +1 ( s ∗ d +1 ) ∀ d = z + w − 3 , z + w − 4 , . . . , 1 The final result s ∗ contains the optimal path through the N sequences at each diagonal. Note that this is equal to knowing the complete hidden state map for the whole image. 5. Experimental Results: Image Segmentation In this section we report some experiments on the three algorithms described in this article: Path Contrained V iterbi Training (PCVT , Algorithm 4), Iterated Conditional Modes (ICM, Algorithm 2), and Graph Cut (GC, Al- gorithm 3). For comparison purposes, we also provide the results when applying supervised (ML) or unsupervised (EM-ML) Maximum Likelihood Classification, with Algorithm 1. In the supervised case ML is initialized with the real means and v ariances, known from the simulation parameters, or taken from the GT in the case of real images. In the unsupervised experiment, ML selects the EM initial parameters with the modes of the histogram, and in the case of unimodal distributions, starting from random means. ML is the only algorithm among the tested ones that does not take into account spatial information. All the other algorithms were initialized with (ML) or (EM-ML) outputs, and run until con ver gence. 10 The PCV A code we made to carry out these experiments was designed from scratch, on a Matlab 2013a platform. W e used Matlab Statistical toolbox scripts for (EM-ML) and (ML). In the literature, initialization has also been made with non-parametric segmentation algorithms like k-means, while the means and v ariances for the Gaussian hypothesis on the observations were estimated over the labeled output. For the studied examples, we did not observe significant dif ferences using k-means as initialization method. W e implemented a v ersion of ICM where parameter β is estimated at each iteration by maximizing the current pseudo-likelihood with the Brent algorithm. The ICM visiting scheme consists in dividing the image support into 3 × 3 windows, and updating the labels of each cycle together as we can see in Figure 5. This visiting scheme minimizes conv ergence delays introduced by oscillations between sites. For the Graph Cut algorithm, the Figure 5: Algorithm for visiting sites adopted by ICM. All 9 cycles in one iteration are shown in different colors; the sites at each cycle are updated together . MAP solution is found as the minimum cut on a transportation network, using Boyko v and K olmogorov (2004) 13 av ailable C code, (MAXFLO W - software for computing mincut/maxflo w in a graph, V ersion 3.01). This is also a benchmark code, widely used for segmentation of multimodal images with few classes 25,26 . The β parameter is estimated initially by maximizing the current pseudo-likelihood with the Brent algorithm, particularized to the order of the neighborhood system. One of the goals of this paper is to quantify the gain in classification accuracy produced by imposing Marko vian hypothesis to the labeling field. The performance is e valuated with the following statistical measures: Ov erall Accuracy , Kappa and Relativ e Improv ement. The Kappa statistic is defined as b κ = P L i =1 p ii − P L i =1 p i + p + i 1 − P L i =1 p i + p + i . where p ij , are the proportion of pixels of the true class l j assigned to class l i in the segmented image, n is the total of pix els in the image and p +j are the proportion of pix els of class l j and p i+ are the proportion of pix els assigned to class l i . b κ is an estimator of the degree of matching in a classification scheme. It helps to determine if the scheme output is better than random allocation. Fleiss, Cohen and Everitt 27 hav e sho wn an asymptotic variance for this estimator , ˆ σ 2 ˆ κ = P L k =1 p kk [1 − ( p + k + p k + )(1 − ˆ κ )] 2 + (1 − ˆ κ ) 2 P j 6 = k p jk ( p + j + p k + ) 2 − [ ˆ κ − P L i =1 p i + p + i (1 − ˆ κ )] 2 n (1 − P L i =1 p i + p + i ) 2 , (15) that allows to define an asymptotic normal 100(1 − α )% confidence interval for Kappa, ˆ κ − z α/ 2 ˆ σ ˆ κ , ˆ κ + z α/ 2 ˆ σ ˆ κ . The Overall accurac y index is defined by O A = L X i =1 p ii . It computes the proportion of coincidences between the reference and reconstructed images (well classified pixels). A classification scheme is considered good if its Overall Accuracy is abo ve 85%. When appropriate, we also report the Relativ e Improv ement index related to the benchmark ML or EM-ML classification, defined as Rel ativ e I mpr ov ement = O A method − O A M L 100 − OA M L × 100 W e in vestig ated sev eral examples. W e first compared the performance of the algorithms in terms of the quality of the segmentations on simulated and real data with two classes, (Section5.1). Later on, we consider more complex scenarios studying scanned intra oral X-ray images with hand made Ground T ruth (Section 5.2). 11 5.1. T wo-class examples. Digitalized X-ray images have some le vel of noise introduced by the scanner, but their main characteristic is the smoothness of the joint gray le vel histogram. Classes that are quite distinguishable to the naked eye do not form a distinctive mode in the joint histogram, making segmentation difficult. Image subtraction, image enhancement and filtering are common image processing research areas when working with digitalized or digital X-ray imagery . Caution has been advised by dentists 28 about the ab use of enhancement algorithms in digital X-ray devises, that often introduce artifacts in the images by defect and by excess, leading to possible misdiagnosis. The image data analyzed in this paper has been kindly provided by Odontologist J.G. Flesia, from the Image Processing section of the Dentistry department at Univ ersity of C ´ ordoba, who also helped us to judge the discrimination capabilities of our algorithms. 5.1.1. T itanium scr ew Our first image is a scanned bite wing X-ray image that sho ws a typical implant, consisting of a titanium screw (resembling a tooth root) and background bone tissue. Bitewing X-rays are used to detect decay between teeth and changes in bone density caused by gum disease. They are also useful in determining the proper fit of a crown (or cast restoration) and the marginal inte grity of fillings. The particularity of this image, Figure 6 (a), is its bimodal histogram Figure 6 (b). The segmentations of the observed image after applying the dif ferent algorithms are giv en in Figure 6 (c)-(f). This case is interesting in that it illustrates that all algorithms can obtain good unsupervised segmentations on real images when the image has a bimodal histogram, without any need of pre-filtering or enhancement. PCVT slightly better delineates the right side of the implant that looks more fused to the bone. (a) (b) (c) (d) (e) (f) Figure 6: Inverse digitalized dental X-ray image which sho ws a titanium implant scre w surrounded by bone. (a)original image, (b) histogram, (c) EM-ML segmentation, (d) ICM segmentation, (e) GC se gmentation and (f) PCVT segmentation. 5.1.2. T wo-cir cles Here we discuss 40 experiments made with a synthetic 241 × 241 two color image to test the procedures ability to segment noisy images with unimodal and bimodal mixture distributions. Unimodal distributions are simulated by filling each class with Gaussian data with high variances; modes start to emerge when the v ariances are reduced. Examples of the segmentations obtained are sho wn in Figure 7. The corresponding parameters and performance statistics are giv en in T ables 3 and 4 as an indication of the algorithms ability to restore the truth in addition to visual assessment. These problems are difficult, so the algorithms using spatial information clearly outperform EM for independent mixture model in terms of restoration in both cases, supervised and unsupervised. Moreover , the hidden Potts algorithms, ICM and GC, produce notably lower error rates than PCVT . Confidence intervals for Kappa values mostly re vel that se gmentations are statistically different, and that a good choice for the initial means in the supervised unimodal problems are essential to guarantee con vergence in PCVT and GC. This case also illustrates that, when images generated from severely ov erlapping mixtures are considered, the PCVT performance decreases as the noise increases. This occurs to a lesser extent for ICM and GC, since in this particular situation the use of MAP classifications turns out to be an advantage. W e also provide results for same problems when the noisy image is smoothed out by filtering, allowing the modes to emerge. In this case, the performance is boosted in all algorithms, surpassing 95% accuracy and 0.9 Kappa value, Figure 7, third ro w . 5.1.3. Logo imag e Bidimensional Hidden Markov models are quite interesting models for the prior labeling field, with Gaussian mixture observations. Ne vertheless, the complexity of the final equations calls for reductions and approximations 12 Hist Original EM-ML EM-ML-ICM EM-ML-GC EM-ML-PCVT Hist Original ML ML-ICM ML-GC ML-PCVT Hist Filtered EM-ML EM-ML-ICM EM-ML-GC EM-ML-PCVT Figure 7: First ro w and second ro w: Noisy synthetic image, its histogram and se gmentations. Third row , Unimodal synthetic image filtered, its histogram and unsupervised segmentations. with frail final estimations. In this section we want to discuss the influence of the selection of the best N sequences for decoding in execution time, overall performance. Previous work with PCVT did not explicitly discuss these points, Ma et al (2010) 17 and Li et al (2000) 15 giv e no particulars about the level of complexity added to the algorithm by the decision on ho w to select the bag of possible sequences, and the influence in the segmentation output of such decision. Besides, the y reduced the number of computations estimating parameters and transition probabilities in the training stage, and using such information in testing ne w images. Our personal implementation (a) (b) (c) (d) (e) (f) (g) (h) Figure 8: (a) Noisy UTN logo, (b)EM-ML segmentation, (c)EM-ICM segmentation, (d)EM-GC segmentation, (e) EM-PCVT segmentation, (f) confidence interv als for kappa statistic, (g) Relativ e improvement of all methods related to Maximum Likelihood classification .(h) Relativ e improvement of PCVT related to ML vs number of sequences retained. allows the user to set the number of sequences N in volv ed in decoding, being 250 the preset v alue. W e worked out a small supervised study with the 2-color logo of the T echnological University degraded with gaussian noise. W e used Gaussian densities with class-dependent means being the true noise parameters ( µ 1 , σ 1) = (60 , 15) and ( µ 2 , σ 2) = (40 , 15) . W e made 16 experiments setting the number of path sequences allo wed for decoding in a 13 range from 1 to 250, as we can see in T able 1. W e also computed time until con vergence, number of iterations until con ver gence and relativ e improvement of classification accurac y related to ML segmentation. This study sho ws that allo wing the most probable 20 sequences has the same relati ve impro vement as w orking with the most probable 250, and the time of e xecution goes from 0.8 minutes to 59 minutes on an Intel I7 processor , 6Gb memory HP laptop(see T able 1). W e also show in this table that the number of iterations stabilizes when 50 or more sequences are allowed. This means that the algorithm uses the resources to search for a better minimum, but it w as not found in the 250 sequences. For the same image, the confidence intervals for Kappa also show that GC, PCVT and ICM are significantly different from ML, see panel (c) of Figure 8. The relativ e impro vement of ICM and GC over ML were 37.1096 and 38.0894 respectiv ely , see panel (d) of Figure 8. Number of Number of Relativ e Sequences Iterations T ime Improv ement 1 2 0.014729 0 2 10 0.13618 15.9829 3 16 0.23855 22.4127 4 23 0.3619 25.3521 5 22 0.35359 27.2505 10 25 0.46362 30.4960 15 28 0.60956 30.8634 20 30 0.80751 31.0472 25 30 1.0695 31.0472 35 30 1.777 31.0472 50 36 4.0193 31.0472 75 36 8.2936 31.0472 100 36 13.606 31.0472 150 36 28.7398 31.0472 200 36 46.1519 31.0472 250 36 59.2679 31.0472 T able 1: Relative impro vement ov er ML, number of sequences, number of iterations, and time to con vergence. Maximum number of iterations permitted, 100. 5.2. Multiclass images. 5.2.1. Multiclass high-quality bitewing X-r ay image. Our second scanned bitewing X-ray image shows a central molar tooth and partial views of its neighbor teeth, gums and background, Figure 9 (a). W e hav e set three classes in this image to account for the differences between tooth enamel and dentin. Enamel is the thin, hard material that co vers the dentin, or main body of the teeth, and protects it from harsh temperatures. W e initialized both algorithms with automatic EM and supervised classifica- tion. In Figure 9 we can observe from the segmentations that, in the supervised case, (c), (d), (e), the teeth are correctly segmented, dentine is clearly differentiated from tooth nerve and enamel. Initializing with automatic EM, Figure 9 ((f), (g), (h), gives only a binary division, background from teeth and gum. In this example, PCVT makes a slightly better job than ICM, since the background is better separated from the teeth. The joint histogram of the image, Figure 9 (b), does show at least three distinctive modes; in Figure 10, the histogram sho wn in panel (a)corresponding to the X-ray image of an incisive, is flat. Its corresponding segmentations, shown in panels (c)- (f), made with four classes, do not distinguish well tooth enamel, dentin and background. In the expert’ s opinion, PCVT is the only method that separates correctly enamel from background and dentin. 5.2.2. Multiclass low quality X-ray imag e: W instar r at jaw In this section we work with imagery obtained from the Image Processing section of the Dentistry department at Uni versity of C ´ ordoba. The rat ja w samples are part of a response of bone tissue study , from pups with mothers stressed with continuous light conditions 29 . Histological studies were conducted with the samples to confirm bone loss. Since these studies destroy the samples, film based conv entional dental X-ray images were carefully taken and digitalized with a flatbed scanner so as to document the study . The image used here was segmented by Odontologist J.G. Flesia in 8 different classes. Pups were three days old, so the image’ s gray scale histogram only shows tw o main classes, background and ja w , after filtering. In older 14 (a) (b) (c) (d) (e) (f) (g) (h) (i) (j) Figure 9: Segmentation of in verse digitalized dental X-ray image that has a central molar tooth and partial vie ws of its neighbor teeth, gums and background. First row , algorithms initialized with the modes of the histogram, fixed v ariance, second row , with automatic EM for Gaussian Mixtures. In both rows, from left to right, ML, ICM, GC and PCVT . (a) (b) (c) (d) (e) (f) Figure 10: Segmentation of in verse digitalized dental X-ray images. Panels (a) incisiv e image (b) histogram of pixel intensities. From left to right, ML, ICM, GC and PCVT . rats, bone loss is documented comparing the clear region (soft parts) shown in e xperiment (c) of Figure 11 with the same region of the Control group. In few days old rats, the early bone inserted in the soft part is undev eloped and very dif ficult to segment. Under the advice of the e xperts, we considered the follo wing e xperiments with this data: Experiment (a): T wo classes, complete jaw sample and background. Experiment (b): T wo classes, teeth and jawbone from the rest of the image. Experiment (c): Three classes, hard parts (teeth and jawbone), soft parts (flesh and cartilage) and back- ground. Experiment (d): Four classes, hard parts (teeth and ja wbone), flesh, cartilage and background. In T able 2 we report Kappa and Overall accuracy for the four experiments. The confidence intervals giv e dif- ferent conclusions for them. In experiment (b) and (c) Graph Cut has the highest Kappa v alue and it is statistically different from the others. In experiment (a), all results are statistically different, and ICM has the highest Kappa value, followed by Graph Cut. In experiment (d), PCVT has the highest Kappa value, follo wed by Graph cut and ICM, being all statistically different. PCVT does not have the best performance, but it reduces the background noise much better than the other methods, while maintaining ML accuracy . This is a striking feature detected also in the two-circles synthetic image. W ith PCVT , background was perfectly recovered, all errors were introduced in the object. The solution to 15 this problem could be to consider subclasses inside main regions. Segmentation Problems (a) to (c) are contained in Problem (d), thus PCVT rates can be compared merging the confusion matrix of problem (d) as needed. Doing so we can conclude that PCVT works much better when the number of classes is closer to reality . GC, instead starts, having conv ergence problems, and its performance is better when two classes are in volved. ICM is the only method that is not influenced by the number of classes considered. experiment (a) ML ICM GC PCVT O A 0,9633 0,9649 0,9631 0,9576 kappa 0,9031 ± 0.0004 0,9065 ± 0.0004 0,9016 ± 0.0004 0.8867 ± 0.0004 experiment (b) ML ICM GC PCVT O A 0,9699 0,9700 0,9710 0,9421 kappa 0,8912 ± 0.0005 0,8918 ± 0.0005 0,8945 ± 0.0006 0,8230 ± 0.0004 experiment (c) ML ICM GC PCVT O A 0,9231 0,9398 0,9442 0,9442 kappa 0,8300 ± 0.0005 0,8596 ± 0.0005 0,8672 ± 0.0005 0,8606 ± 0.0005 experiment (d) ML ICM GC PCVT O A 0,8815 0,8983 0,9153 0,9246 kappa 0,7573 ± 0.0005 0,7834 ± 0.0005 0,8089 ± 0.0005 0,8165 ± 0.0005 T able 2: Ov erall accuracy and Kappa values with standard errors for the X-ray image of a W istar rat’ s jaw . The se gmentation selected by Kappa as the best is shown in boldf ace. 6. Summary This paper deals with Marko v random field- model based image segmentation. W e discussed two different Markovian prior models and three of their most noticeable estimation algorithms, Path Constrained V iterbi T rain- ing, Iterated Conditional Modes and Graph Cut. Their presentation in a unified framew ork has the advantage to give better insight in their respectiv e theoretical properties. Graph cut was originally designed for two class images, extended later on to multimodal images. If the images do not have distinctiv e modes, the GC algorithm has a tendency to con ver ge to a reduced set of classes. ICM is more rob ust in that aspect, since it never fails to deliv er a segmentation, and it is usually better than ML. Smoothness parameter estimation is the ke y point in ICM implementations. Underestimation produces a dirty segmentation, so if images are known to contain a number of homogeneous patches, initialization of β should be high, re-estimating it at each iteration until con vergence. PCVT was the only algorithm that ga ve a segmentation which can not be considered a smoothed v ersion of ML. It has the capability of mo ving in the space of all possible segmentations away from the saddle point where ML lays. T o the knowledge of the authors, no comparison has ever been made between Hidden Potts models and Hidden Markov Mesh Models within the same computational study . The complexity of the algorithms is quite different. Pott’ s ICM and Graph Cut have only one parameter to estimate and PCVT has all transitions probabilities to estimate besides the Gaussian parameters. Nevertheless, ex ecution time has the same order in all algorithms, if PCVT is constrained to the most probable 20 sequences in all diagonals. Allowing more sequences giv es more degrees of freedom for choosing the best labeling; ho wev er , the values of the probabilities of the sequences in our studies are very low , so working with more than 60 sequences increases the complexity without allo wing very realistic combinations in the bag of possible sequences. Comparing contextual segmentations of synthetic and real images with the outputs of EM-ML and ML algorithms for inde- pendent mixture models, the three algorithms sho w significant improv ement in terms of se gmentation smoothness. It confirms the gain in dealing with spatial dependencies. Graph Cut segmentation is the only algorithm that was not completely written by the authors. W e provide a Matlab comprehensi ve toolbox 30 for PCVT and ICM algorithms, and a Graph Cut wrapper calling Boyko v and K olmogorov (2004) 13 C code, tested with several real and synthetic images . T o the author’ s knowledge, our PCVT implementation is also the only distributable code that is av ailable for 2D HMM. W e will continue enhancing our toolbox since we support the idea of a general benchmark framew ork for Markovian se gmentation methods. 16 7. References References [1] K. Abend, H. Harley , L. Kanal, Classification of binary random patterns., IEEE T ransactions on Information Theory (4) (1960) 538–544. [2] J. Besag, Spatial interaction and the statistical analysis of lattice systems, Journal of the Royal Statistical Society B B-36 (2) (1974) 192–236. [3] H. T . S. Y . Chen, C. Cattani, Markov models for image labeling,, Mathematical Problems in Engineering, Article ID 814356, 18 pages, vol 2012. [4] J. Besag, On the statistical analysis of dirty pictures, Journal of the Royal Statistical Society B B-48 (3) (1986) 259–302. [5] M. Pereyra, N. Dobigeon, H. Batatia, J. T ourneret, Estimating the granularity parameter of a Potts-Markov random field within an MCMC algorithm, IEEE T ransactions on Image Processing 22 (6) (2013) 2385–2397. [6] J. Gimenez, A. C. Frery , A. G. Flesia, Inference strategies for the smoothness parameter in the potts model., T o appear Procc. IGARSS 2013. [7] A. C. Frery , S. Ferrero, O. H. Bustos, The influence of training errors, context and number of bands in the accuracy of image classification, International Journal of Remote Sensing 30 (6) (2009) 1425–1440. [8] A. Lev ada, N. Mascarenhas, A. T annus., A nov el map-mrf approach for multispectral image contextual clas- sification using a combination of suboptimal iterative algorithms., Pattern Recognition Letters 31 (2010) 1795–1808. [9] G. Celeux, F . Forbes, N. Peyrard, Em procedures using mean field-like approximations for marko v model- based image segmentation., P attern recognition (4) (2003) 131–144. [10] D. Greig, B. Porteous, A. Scheult, Exact maximum a posteriori estimation for binary images., Journal of the Royal Statistical Society B (2) (1989) 271–279. [11] Y . Boyko v , O. V eksler , R. Zabih, F ast approximation energy minimization via graph cuts., IEEE Transactions on Pattern Analysis and Machine Intelligence 6 (6) (1984) 721–741. [12] A. Blake, C. Rother , M. B. an P . Perez, P . T orr ., Interactiv e image segmentation using an adaptive GMMRF model., Proc. Eur . Conf. on Computer V ision. [13] Y . Boykov , V . Kolmogoro v , An experimental comparison of min-cut/max-flow algorithms for energy min- imization in vision, IEEE T ransactions on Pattern Analysis and Machine Intelligence 26 (9) (2004) 1124– 1137. [14] B. Peng, L. Zhang, D. Zhang, A surve y of graph theoretical approaches to image segmentation. [15] J. Li, A. Najmi, R. M. Gray , Image classification by a two-dimensional hidden marko v model., IEEE T rans- actions on Signal Procesing (2) (2000) 517–533. [16] K. Pyun, J. Lim, C. S. W on, R. M. Gray , Image se gmentation using hidden markov gauss mixture models., IEEE T ransactions on Image Processing. 16 (7). [17] X. Ma, D. Schonfeld, A. Khokhar ., V ideo e vent classification and image segmentation based on noncausal multidimensional hidden markov models., P attern Recognition Letters 18 (6) (2010) 1304–1313. [18] J. D. F . Perronnin, K. Rose, Iterative decoding of two-dimensional hidden markov models., Proc. of the IEEE ICASSP . 3 (2003) 329–32. [19] M. E. Sargin, A. Altinok, K. Rose, B. S. Manjunath., Conditional iterative decoding of two dimensional hidden markov models., Proceedings ICIP 2008. (2008) 2552 – 2555. [20] C. S. W on, K. Pyun, R. M. Gray , Automatic object se gmentation in images with lo w depth of field., Int. Conf. Image Processing, Rochester , NY , Sep. 2002. 17 [21] S. Geman, D. Geman, Stochastic relaxation, Gibbs distributions, and the Bayesian restoration of images, IEEE T ransactions on Pattern Analysis and Machine Intelligence 6 (6) (1984) 721–741. [22] A. L. M. Lev ada, N. D. A. Mascarenhas, A. T ann ´ us, Pseudo-likelihood equations for Potts model on higher- order neighborhood systems: A quantitati ve approach for parameter estimation in image analysis, Brazilian Journal of Probability and Statistics 23 (2009) (2009) 120–140. [23] R. Brent, Algorithms for minimization without deriv ati ves., Prentice Hall, Ne w Y ork. [24] K. V ., R. Zabih, What energy function can be minimized via graph cuts?., IEEE Transactions on Pattern Analysis and Machine Intelligence 26 (2) (2004) 147–159. [25] A. M. Ali, A. A. Farag, Graph cut based segmentation of multimodal images., Proceedings of IEEE Interna- tional Symposium on Signal Processing and Information T echnology 72 (2007) 1036–1041. [26] A. M. Ali, A. A. Farag, G. L. Gimel farb, Analytical method for MGRF potts model parameter estimation., Proceedings of Pattern Recognition, 2008. ICPR 2008. 19th International Conference on (2008) 1–4. [27] J. Fleiss, J. Cohen, B. Everitt, Large sample standard errors of kappa and weighted kappa., Psychology Bulletin 72 (5) (1969) 323–327. [28] J. G. Flesia, A. G. Flesia, The influence of processing in the accuracy of measurements in indirect digitalized intra-oral radiographic imaging for forensic applications., The Forensic Oral Pathology Journal (4) (2011) 20–24. [29] P . Fontanetti, P . Mandalunis, N. V ermouth, Response of bone tissue associated with eruption of rat first mandibular molar of pups born from mothers subjected to constant light during pregnanc y ., Bone 49 (6) (2011) 1380–1385. [30] A. Flesia, J. Baumgartner , J. Gimenez, J. Martinez, Reproducible research toolbox., www .famaf.unc.edu.ar/ flesia/RR.html (2013). 18 Original Histogram Exp a) ML ML-ICM ML − GC ML-PCVT Exp b) ML ML − ICM ML-GC ML-PCVT Exp c) ML ML-ICM ML − GC ML-PCVT Exp d) ML ML-ICM ML-GC ML − PCVT Figure 11: Segmentation results of ML, ICM, GC and PCVT for experiments (a)-(d). The segmentation selected by kappa as the best is shown in boldface. Segmentations are supervised, the initial means of the classes are selected from a small sample in the image. 19 Bimodal mixture problem Unsupervised problem unfiltered filtered ( µ, σ ) = (60 , 5) ( µ, σ ) = (100 , 25) ( µ, σ ) = (60 , 5) ( µ, σ ) = (100 , 25) ML PCVT ICM GC ML PCVT ICM GC O A 0.9232 0.9262 0.9840 0.9902 0.9819 0.9819 0.9819 0.9819 kappa 0.8589 ± 0.001 0.8643 ± 0.001 0.9662 ± 0.0007 0.9789 ± 0.0006 0.9613 ± 0.0007 0.9627 ± 0.0007 0.9614 ± 0.0007 0.9611 ± 0.0006 ( µ, σ ) = (60 , 10) ( µ, σ ) = (100 , 20) ( µ, σ ) = (60 , 10) ( µ, σ ) = (100 , 20) ML PCVT ICM GC ML PCVT ICM GC O A 0.9041 0.9071 0.9758 0.9800 0.9916 0.9916 0.9916 0.9916 kappa 0.8289 ± 0.001 0.8346 ± 0.001 0.9501 ± 0.0008 0.9581 ± 0.0655 0.9821 ± 0.0005 0.9825 ± 0.0005 0.9820 ± 0.0005 0.9817 ± 0.0003 ( µ, σ ) = (60 , 15) ( µ, σ ) = (100 , 15) ( µ, σ ) = (60 , 15) ( µ, σ ) = (100 , 15) ML PCVT ICM GC ML PCVT ICM GC O A 0.9071 0.9116 0.9882 0.9871 0.9958 0.9958 0.9958 0.9958 kappa 0.8305 ± 0.001 0.8290 ± 0.0011 0.9747 ± 0.0006 0.9722 ± 0.0717 0.9905 ± 0.0004 0.9899 ± 0.0004 0.9906 ± 0.0004 0.9908 ± 0.0005 ( µ, σ ) = (60 , 20) ( µ, σ ) = (100 , 10) ( µ, σ ) = (60 , 20) ( µ, σ ) = (100 , 10) ML PCVT ICM GC ML PCVT ICM GC O A 0.9262 0.9256 0.9905 0.9807 0.9883 0.9883 0.9883 0.9883 kappa 0.8579 ± 0.001 0.8516 ± 0.0011 0.9795 ± 0.0006 0.9587 ± 0.0513 0.9745 ± 0.0006 0.9743 ± 0.0006 0.9745 ± 0.0006 0.9750 ± 0.0009 ( µ, σ ) = (60 , 25) ( µ, σ ) = (100 , 5) ( µ, σ ) = (60 , 25) ( µ, σ ) = (100 , 5) ML PCVT ICM GC ML PCVT ICM GC O A 0.9510 0.9541 0.9938 0.9818 0.9809 0.9809 0.9809 0.9809 kappa 0.9006 ± 0.001 0.9052 ± 0.001 0.9865 ± 0.0005 0.9609 ± 0.031 0.9599 ± 0.0007 0.9596 ± 0.0007 0.9598 ± 0.0007 0.96 ± 0.0008 Supervised Problem unfiltered filtered ( µ, σ ) = (60 , 5) ( µ, σ ) = (100 , 25) ( µ, σ ) = (60 , 5) ( µ, σ ) = (100 , 25) ML PCVT ICM GC ML PCVT ICM GC O A 0.9089 0.9262 0.9129 0.6522 0.9917 0.9917 0.9917 0.9917 kappa 0.8382 ± 0.001 0.8643 ± 0.001 0.8442 ± 0.001 0.3947 ± 0.0022 0.9825 ± 0.0005 0.9641 ± 0.0007 0.9824 ± 0.0005 0.9820 ± 0.0003 ( µ, σ ) = (60 , 10) ( µ, σ ) = (100 , 20) ( µ, σ ) = (60 , 10) ( µ, σ ) = (100 , 20) O A 0.9057 0.9072 0.9125 0.9125 0.9956 0.9956 0.9956 0.9956 kappa 0.8310 ± 0.001 0.8346 ± 0.001 0.8415 ± 0.001 0.8414 ± 0.001 0.9909 ± 0.0004 0.9827 ± 0.0005 0.9909 ± 0.0004 0.9904 ± 0.0001 ( µ, σ ) = (60 , 15) ( µ, σ ) = (100 , 15) ( µ, σ ) = (60 , 15) ( µ, σ ) = (100 , 15) ML PCVT ICM GC ML PCVT ICM GC O A 0.9087 0.9110 0.9177 0.9178 0.9980 0.9980 0.9980 0.9980 kappa 0.8325 ± 0.001 0.8278 ± 0.0011 0.8467 ± 0.001 0.8466 ± 0.001 0.9958 ± 0.0003 0.9914 ± 0.0004 0.9960 ± 0.0003 0.9956 ± 0.0002 ( µ, σ ) = (60 , 20) ( µ, σ ) = (100 , 10) ( µ, σ ) = (60 , 20) ( µ, σ ) = (100 , 10) O A 0.9274 0.9258 0.9355 0.9358 0.9964 0.9964 0.9964 0.9964 kappa 0.8596 ± 0.001 0.8521 ± 0.001 0.8735 ± 0.001 0.8738 ± 0.001 0.9915 ± 0.0004 0.9731 ± 0.0006 0.9916 ± 0.0004 0.9921 ± 0.0006 ( µ, σ ) = (60 , 25) ( µ, σ ) = (100 , 5) ( µ, σ ) = (60 , 25) ( µ, σ ) = (100 , 5) ML PCVT ICM GC ML PCVT ICM GC O A 0.9479 0.9542 0.9518 0.9510 0.9925 0.9925 0.9925 0.9925 kappa 0.8936 ± 0.001 0.9054 ± 0.001 0.9008 ± 0.001 0.8993 ± 0.001 0.9831 ± 0.0005 0.9601 ± 0.0007 0.9833 ± 0.0005 0.9839 ± 0.0009 T able 3: Segmentation results of ML, ICM, GC and PCVT for the two-circles e xperiment on Section 7. The statistics are Overall Accuracy (OA) and Kappa with standard error. The best se gmentation selected by Kappa is shown in boldf ace. Cases under study are filtered and unfiltered images, unsupervised and supervised segmentations. 20 Unimodal mixture problem Unsupervised Problem unfiltered filtered ( µ, σ ) = (0 , 65) ( µ, σ ) = (60 , 15) ( µ, σ ) = (0 , 65) ( µ, σ ) = (60 , 15) ML PCVT ICM GC ML PCVT ICM GC O A 0.8883 0.6522 0.9862 0.9392 0.9860 0.9860 0.9861 0.9863 kappa 0.7925 ± 0.0011 0.3947 ± 0.0022 0.9702 ± 0.0007 0.8766 ± 0.001 0.9704 ± 0.0007 0.9704 ± 0.0007 0.9706 ± 0.0007 0.9709 ± 0.0007 ( µ, σ ) = (0 , 55) ( µ, σ ) = (60 , 30) ( µ, σ ) = (0 , 55) ( µ, σ ) = (60 , 30) O A 0.8074 0.6522 0.9602 0.8757 0.9924 0.9924 0.9927 0.9930 kappa 0.6793 ± 0.001 0.3947 ± 0.0022 0.9182 ± 0.0009 0.7647 ± 0.001 0.9835 ± 0.0005 0.9836 ± 0.0005 0.9842 ± 0.0005 0.9848 ± 0.0005 ( µ, σ ) = (0 , 45) ( µ, σ ) = (60 , 45) ( µ, σ ) = (0 , 45) ( µ, σ ) = (60 , 45) ML PCVT ICM GC ML PCVT ICM GC O A 0.7623 0.6522 0.9249 0.8538 0.9956 0.9956 0.9960 0.9959 kappa 0.6297 ± 0.0011 0.3947 ± 0.0022 0.8543 ± 0.0011 0.7299 ± 0.001 0.9903 ± 0.0004 0.9903 ± 0.0004 0.9912 ± 0.0004 0.9910 ± 0.0004 ( µ, σ ) = (0 , 35) ( µ, σ ) = (60 , 60) ( µ, σ ) = (0 , 35) ( µ, σ ) = (60 , 60) O A 0.7168 0.6521 0.8644 0.7883 0.9935 0.9934 0.9939 0.9931 kappa 0.6040 ± 0.0011 0.3947 ± 0.0022 0.7768 ± 0.001 0.6886 ± 0.0009 0.9859 ± 0.0005 0.9857 ± 0.0005 0.9867 ± 0.0005 0.9849 ± 0.0005 ( µ, σ ) = (0 , 25) ( µ, σ ) = (60 , 75) ( µ, σ ) = (0 , 25) ( µ, σ ) = (60 , 75) ML PCVT ICM GC ML PCVT ICM GC O A 0.7621 0.6520 0.9038 0.8567 0.9883 0.9888 0.9890 0.9886 kappa 0.6549 ± 0.001 0.3945 ± 0.0022 0.8306 ± 0.001 0.7678 ± 0.001 0.9748 ± 0.0006 0.9757 ± 0.0006 0.9762 ± 0.0006 0.9754 ± 0.0006 Supervised Problem unfiltered filtered ( µ, σ ) = (0 , 65) ( µ, σ ) = (60 , 15) ( µ, σ ) = (0 , 65) ( µ, σ ) = (60 , 15) ML PCVT ICM GC ML PCVT ICM GC O A 0.7976 0.6523 0.7973 0.7946 0.9754 0.9860 0.9784 0.9767 kappa 0.6350 ± 0.0011 0.3950 ± 0.0022 0.6343 ± 0.0011 0.6299 ± 0.0011 0.9477 ± 0.0008 0.9704 ± 0.0007 0.9539 ± 0.0008 0.9504 ± 0.0008 ( µ, σ ) = (0 , 55) ( µ, σ ) = (60 , 30) ( µ, σ ) = (0 , 55) ( µ, σ ) = (60 , 30) O A 0.8065 0.6522 0.8127 0.8127 0.9934 0.9924 0.9941 0.9939 kappa 0.6777 ± 0.001 0.3947 ± 0.0022 0.6854 ± 0.001 0.6849 ± 0.001 0.9856 ± 0.0005 0.9837 ± 0.0005 0.9870 ± 0.0005 0.9867 ± 0.0005 ( µ, σ ) = (0 , 45) ( µ, σ ) = (60 , 45) ( µ, σ ) = (0 , 45) ( µ, σ ) = (60 , 45) ML PCVT ICM GC ML PCVT ICM GC O A 0.7479 0.6522 0.7521 0.7515 0.9955 0.9956 0.9958 0.9957 kappa 0.6214 ± 0.0011 0.3947 ± 0.0022 0.6263 ± 0.0011 0.6253 ± 0.0011 0.9903 ± 0.0004 0.9904 ± 0.0004 0.9909 ± 0.0004 0.9906 ± 0.0004 ( µ, σ ) = (0 , 35) ( µ, σ ) = (60 , 60) ( µ, σ ) = (0 , 35) ( µ, σ ) = (60 , 60) O A 0.7255 0.6522 0.7288 0.7281 0.9889 0.9934 0.9912 0.9918 kappa 0.6084 ± 0.0011 0.3947 ± 0.0022 0.6123 ± 0.0011 0.6115 ± 0.0011 0.9762 ± 0.0006 0.9857 ± 0.0005 0.9809 ± 0.0005 0.9823 ± 0.0005 ( µ, σ ) = (0 , 25) ( µ, σ ) = (60 , 75) ( µ, σ ) = (0 , 25) ( µ, σ ) = (60 , 75) ML PCVT ICM GC ML PCVT ICM GC O A 0.6369 0.6520 0.6389 0.6522 0.9572 0.6522 0.9627 0.9638 kappa 0.5354 ± 0.0013 0.3945 ± 0.0022 0.5380 ± 0.0013 0.3947 ± 0.0022 0.9157 ± 0.0009 0.3947 ± 0.0022 0.9255 ± 0.0009 0.9275 ± 0.0009 T able 4: Segmentation results of ML, ICM, GC and PCVT for the two-circles e xperiment on Section 7. The statistics are Overall Accuracy (OA) and Kappa with standard error. The best se gmentation selected by Kappa is shown in boldf ace. Cases under study are filtered and unfiltered images, unsupervised and supervised segmentations. 21

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment