Jensen divergence based on Fisher's information

The measure of Jensen-Fisher divergence between probability distributions is introduced and its theoretical grounds set up. This quantity, in contrast to the remaining Jensen divergences, is very sensitive to the fluctuations of the probability distr…

Authors: P. Sanchez-Moreno, A. Zarzo, J.S. Dehesa

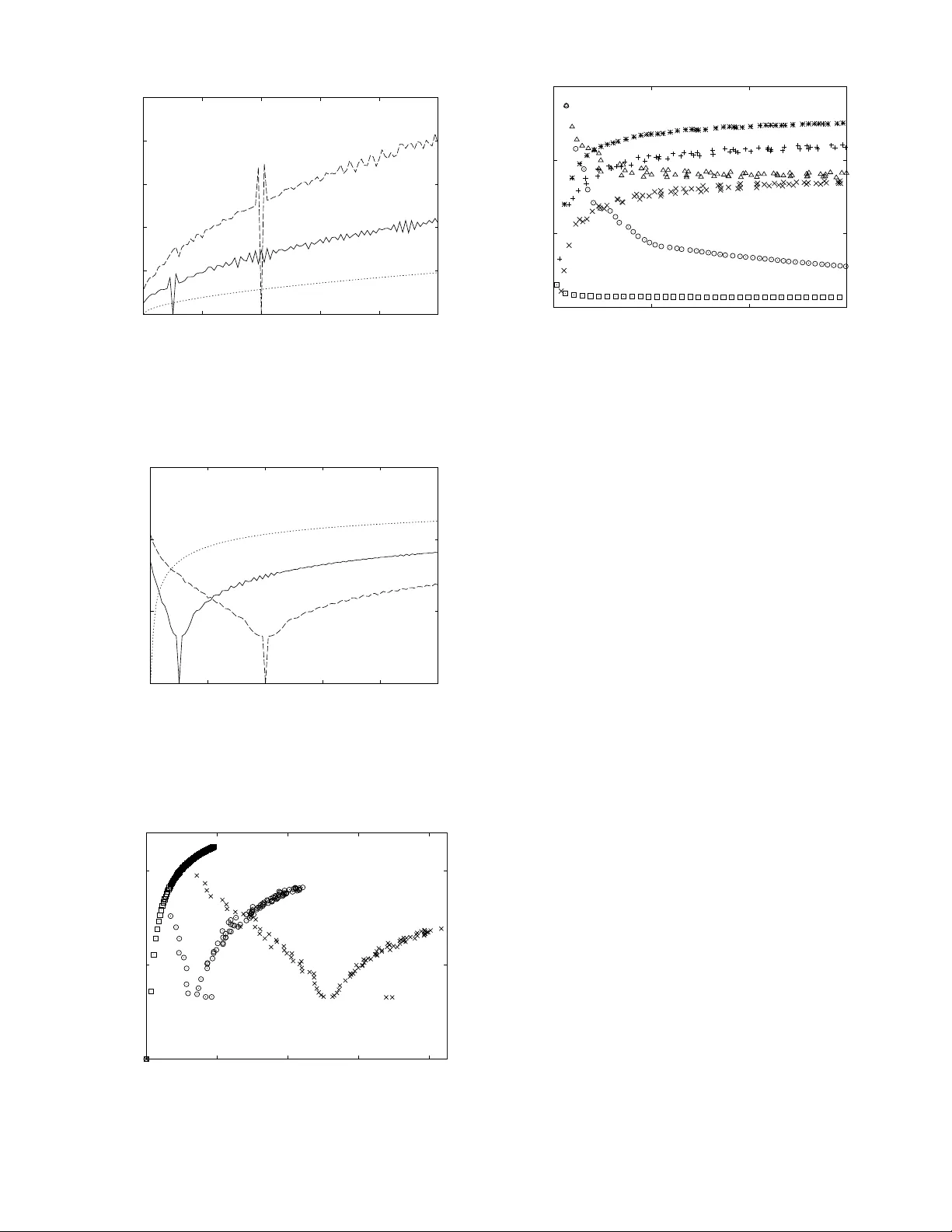

Pablo Sánchez-Moreno, Alejandro Zarzo and Jesús S. Dehesa

P. Sánchez-Moreno is with the Department of Applied Mathematics and the Institute Carlos I for Theoretical and Computational Physics, University of Granada, Granada, Spain. A. Zarzo is with the Department of Applied Mathematics, Polytechnic University of Madrid, Madrid, Spain, and the Institute Carlos I for Theoretical and Computational Physics, University of Granada, Granada, Spain. J.S. Dehesa is with the Department of Atomic, Molecular and Nuclear Physics and the Institute Carlos I for Theoretical and Computational Physics, University of Granada, Granada, Spain.

Abstract—The measure of Jensen-Fisher divergence between probability distributions is introduced and its theoretical grounds set up. This quantity, in contrast to the remaining Jensen divergences, is very sensitive to the fluctuations of the probability distributions because it is controlled by the (local) Fisher information, which is a gradient functional of the distribution. So, it is appropriate and informative when studying the similarity of distributions, mainly for those having oscillatory character. The new Jensen-Fisher divergence shares with the Jensen-Shannon divergence the following properties: non-negativity, additivity when applied to an arbitrary number of probability densities, symmetry under exchange of these densities, vanishing if and only if all the densities are equal, and definiteness even when these densities present non-common zeros. Moreover, the Jensen-Fisher divergence is shown to be expressed in terms of the relative Fisher information as the Jensen-Shannon divergence does in terms of the Kullback-Leibler or relative Shannon entropy. Finally, the Jensen-Shannon and Jensen-Fisher divergences are compared for the following three large, non-trivial and qualitatively different families of probability distributions: the sinusoidal, generalized gamma-like and Rakhmanov-Hermite distributions.

Index Terms—Jensen divergences, dissimilarity measures, discrimination information, Shannon entropy, Fisher information.

I. INTRODUCTION

The study of the measures of similarity between probability densities is a fundamental topic in probability theory and statistics, both per se and because of its numerous applications and usefulness in a wide variety of scientific fields, including statistical physics, quantum chemistry, sequence analysis, pattern recognition, diversity, homology, neural networks, computational linguistics, bioinformatics and genomics, atomic and molecular physics and quantum information. The most popular measure of similarity between two probability densities $\rho_1(x)$ and $\rho_2(x)$ is possibly the Jensen-Shannon divergence [1], [2], which is defined as

$$\mathrm{JSD}[\rho_1,\rho_2] = S\left[\frac{\rho_1+\rho_2}{2}\right] - \frac{S[\rho_1]+S[\rho_2]}{2}, \qquad (1)$$

where $S[\rho]$ denotes the Shannon entropy of the density $\rho(x)$, $x\in\Delta\subset\mathbb{R}$, given by

$$S[\rho] = -\int_\Delta \rho(x)\ln\rho(x)\,dx.$$

According to Eq. (1), the Jensen-Shannon divergence quantifies the Shannon entropy excess of a couple of distributions with respect to the mixture of their respective entropies.
It can also be expressed as

$$\mathrm{JSD}[\rho_1,\rho_2] = \frac{1}{2}\,\mathrm{KL}\left[\rho_1,\frac{\rho_1+\rho_2}{2}\right] + \frac{1}{2}\,\mathrm{KL}\left[\rho_2,\frac{\rho_1+\rho_2}{2}\right],$$

indicating that the Jensen-Shannon divergence is a symmetrized and smoothed version of the Kullback-Leibler divergence (KLD in short) or relative Shannon entropy (also called Kullback divergence), defined [3], [4] by

$$\mathrm{KL}[\rho_1,\rho_2] = \int_\Delta \rho_1(x)\ln\frac{\rho_1(x)}{\rho_2(x)}\,dx.$$

The factors $\frac{1}{2}$ are required for agreement with Eq. (1), as follows from inserting the definition of the KLD and collecting the entropy terms.

The Jensen-Shannon divergence as well as the KLD are non-negative and vanish if and only if the two densities are equal almost everywhere. Unlike the KLD, the Jensen-Shannon divergence has two additional important characteristics: it is always well defined (in the sense that it can be evaluated even when $\rho_1$ is not absolutely continuous with respect to $\rho_2$), and its square root satisfies the triangle inequality, so that the square root of $\mathrm{JSD}[\rho_1,\rho_2]$ is a true metric in the space of probability distributions [5]. Furthermore, it admits a generalization to several probability distributions [1] in the following sense: given a vector $\omega = (\omega_1,\omega_2,\ldots,\omega_N)$ and a set of $N$ probability densities $\{\rho_j(x)\}_{j=1}^{N}$, the Jensen-Shannon divergence among these probability densities is given by

$$\mathrm{JSD}_\omega[\rho_1,\ldots,\rho_N] = S[\omega_1\rho_1+\cdots+\omega_N\rho_N] - \omega_1 S[\rho_1] - \cdots - \omega_N S[\rho_N],$$

where the nonnegative numbers $\omega_i \ge 0$, for $i=1,\ldots,N$, such that $\sum_{i=1}^{N}\omega_i = 1$, are weights properly chosen to indicate the relative relevance of each density. This is very useful in applications such as bioinformatics, diversity and atomic physics, where it is often necessary to measure the overall differences among more than two probability distributions. Notice that for $N=2$ one has

$$\mathrm{JSD}_\omega[\rho_1,\rho_2] = S[\omega_1\rho_1+\omega_2\rho_2] - \omega_1 S[\rho_1] - \omega_2 S[\rho_2],$$

so that it simplifies to the expression (1) in the case $\omega_1=\omega_2=\frac{1}{2}$.
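As an illustration of the definitions above, the following minimal sketch evaluates the weighted Jensen-Shannon divergence for densities sampled on a common grid, using a simple midpoint rule. The function names (`shannon_entropy`, `weighted_jsd`, `kl`), the grid and the two test densities (a uniform density and the ramp $2x$ on $(0,1)$) are assumptions chosen for illustration only and do not come from the paper. The sketch also compares Eq. (1) with the Kullback-Leibler form, taking a weight $\frac{1}{2}$ on each Kullback-Leibler term.

```python
import math

def shannon_entropy(values, h):
    """Midpoint-rule estimate of S[rho] = -int rho ln rho dx, with 0 ln 0 := 0."""
    return -h * sum(v * math.log(v) for v in values if v > 0.0)

def weighted_jsd(densities, weights, h):
    """JSD_w = S[sum_i w_i rho_i] - sum_i w_i S[rho_i] for densities sampled on one grid."""
    if abs(sum(weights) - 1.0) > 1e-12 or min(weights) < 0.0:
        raise ValueError("weights must be nonnegative and sum to 1")
    mixture = [sum(w * rho[k] for w, rho in zip(weights, densities))
               for k in range(len(densities[0]))]
    return shannon_entropy(mixture, h) - sum(
        w * shannon_entropy(rho, h) for w, rho in zip(weights, densities))

def kl(p, q, h):
    """Midpoint-rule estimate of KL[p, q] = int p ln(p/q) dx."""
    return h * sum(a * math.log(a / b) for a, b in zip(p, q) if a > 0.0)

# Illustrative densities on (0, 1): uniform and the ramp 2x (hypothetical examples).
cells = 2000
h = 1.0 / cells
xs = [(k + 0.5) * h for k in range(cells)]
uniform = [1.0 for _ in xs]
ramp = [2.0 * x for x in xs]
mix = [0.5 * (u + r) for u, r in zip(uniform, ramp)]

d = weighted_jsd([uniform, ramp], [0.5, 0.5], h)
d_from_kl = 0.5 * kl(uniform, mix, h) + 0.5 * kl(ramp, mix, h)
print(d, d_from_kl)
```

On a shared grid the two expressions agree up to rounding, because the identity holds pointwise in the integrand; the quadrature only affects how well either value approximates the continuous integral.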
This divergence has been extensively applied in numerous literary, scientific and technological areas ranging from information theory [1], [6], [7], statistical and quantum mechanics [8] to bioinformatics and genomics [9], [10], atomic physics [11]–[14] and quantum information [15], [16]. Let us just mention that it has been used as a tool to study EEG records [17], to segment symbolic sequences [18], to measure the complexity of genomic sequences [9], [10], to analyze literary texts and musical scores [19], to quantify quantum phenomena such as entanglement and decoherence [15], [16] and to understand the complex organization and shell-filling patterns of the many-electron systems all over the periodic table of chemical elements [11]–[14].

Nevertheless, the Jensen-Shannon divergence is, at times, weakly informative or even uninformative, mainly because it depends on a quantity of global character (the Shannon entropy), so that it is hardly sensitive to the local fluctuations or irregularities of the probability densities. As a consequence, this divergence is poorly suited to comparing probability densities with highly oscillatory character. This situation is common in many fields, for instance in the quantum-mechanical description of natural phenomena. To illustrate it, let us consider the simple case of the motion of a particle-in-a-box (i.e., in the infinite well $V(x)=0$ for $0<x<1$, and $+\infty$ elsewhere) [20]. The stationary states of the particle are characterized by the sinusoidal probability densities

$$\rho_n(x) = 2\sin^2(\pi n x); \quad x\in(0,1), \qquad (2)$$

and $\rho_n(x)=0$ when $x\notin(0,1)$, where $n=1,2,\ldots$ indicates the energy level and labels the state. The divergence between the $n$-th quantum state $\rho_n(x)$ and the ground state $\rho_1(x)$ is studied in Figure 1 by means of the Jensen-Shannon measure $\mathrm{JSD}[\rho_n,\rho_1]$.
We observe that this divergence tends rapidly to a constant, so that it is not informative enough about the enormous differences between these two probability densities.

The case of a particle-in-a-box and other cases pointed out later show the necessity of defining a new divergence able to measure the similarity between two or more oscillating probability densities in a much more appropriate quantitative form. This is the purpose of our work: to introduce the Jensen-Fisher divergence, which depends on an information-theoretic quantity (the Fisher information [21], [22]) with a locality property: it is very sensitive to fluctuations of the density because it is a gradient functional of it. This is done in Section II, where the definition of the new divergence is given and its main properties are shown. Then, in Section III the Jensen-Shannon and Jensen-Fisher divergences are compared in the framework of an information-theoretic plane for various cases properly chosen to illustrate the relative advantages and disadvantages of these two quantities; namely, the sinusoidal, generalized gamma and Rakhmanov-Hermite probability distributions. Finally, some conclusions and open problems are given.

II. THE JENSEN-FISHER DIVERGENCE MEASURE

In this Section we define a new Jensen divergence between probability distributions based on the Fisher informations of these distributions, and we study its main properties. In doing so, we follow a line of research similar to that of Lin [1] to derive the Jensen-Shannon divergence. Let $X$ be a continuous random variable with probability density $\rho(x)$, $x\in\Delta\subset\mathbb{R}$.
The (translationally invariant) Fisher information of $\rho(x)$ is given [21], [22] by

$$F[\rho] = \int_\Delta \rho(x)\left(\frac{d}{dx}\ln\rho(x)\right)^2 dx, \qquad (3)$$

and the relative Fisher information between the probability densities $\rho_1(x)$ and $\rho_2(x)$ is defined [23] by the directed divergence

$$F_{\mathrm{rel}}[\rho_1,\rho_2] = \int_\Delta \rho_1(x)\left(\frac{d}{dx}\ln\frac{\rho_1(x)}{\rho_2(x)}\right)^2 dx.$$

It is known that this quantity is non-negative and additive but non-symmetric. The relative symmetric measure defined by

$$G[\rho_1,\rho_2] = F_{\mathrm{rel}}[\rho_1,\rho_2] + F_{\mathrm{rel}}[\rho_2,\rho_1] = \int_\Delta \left(\rho_1(x)+\rho_2(x)\right)\left(\frac{d}{dx}\ln\frac{\rho_1(x)}{\rho_2(x)}\right)^2 dx$$

is called the Fisher divergence [23], which has recently been used in some applications to study the complexity and shell organization of the atomic systems along the Periodic Table [12], [14]. As in the Shannon case, this divergence is nonnegative and it vanishes if and only if $\rho_1(x)=\rho_2(x)$ for any $x\in\Delta$, but it is undefined unless $\rho_1(x)$ and $\rho_2(x)$ are absolutely continuous with respect to each other.

To overcome these problems of the $F_{\mathrm{rel}}$ and $G$ divergences, we define a new directed divergence between the probability densities $\rho_1(x)$ and $\rho_2(x)$ as

$$\widetilde{F}_{\mathrm{rel}}[\rho_1,\rho_2] = \int_\Delta \rho_1(x)\left(\frac{d}{dx}\ln\frac{\rho_1(x)}{\frac{\rho_1(x)+\rho_2(x)}{2}}\right)^2 dx.$$

This quantity is nonnegative, it vanishes if and only if $\rho_1(x)=\rho_2(x)$ for any $x\in\Delta$, and it is well defined even when both densities have non-common zeros. In addition, it can be expressed in terms of the relative Fisher information as

$$\widetilde{F}_{\mathrm{rel}}[\rho_1,\rho_2] = F_{\mathrm{rel}}\left[\rho_1,\frac{\rho_1+\rho_2}{2}\right].$$

However, it is nonsymmetric. To avoid this problem we propose the following symmetrized form

$$\mathrm{JFD}[\rho_1,\rho_2] = \frac{1}{2}\left(\widetilde{F}_{\mathrm{rel}}[\rho_1,\rho_2] + \widetilde{F}_{\mathrm{rel}}[\rho_2,\rho_1]\right), \qquad (4)$$

as a new measure, which we call the Jensen-Fisher divergence between the probability densities $\rho_1(x)$ and $\rho_2(x)$. The prefactor $\frac{1}{2}$ is the normalization under which Eq. (4) reproduces Eq. (5) below. From Eqs. (4) and (3), we have that

$$\begin{aligned}
\mathrm{JFD}[\rho_1,\rho_2] &= \frac{1}{2}\left(F_{\mathrm{rel}}\left[\rho_1,\frac{\rho_1+\rho_2}{2}\right] + F_{\mathrm{rel}}\left[\rho_2,\frac{\rho_1+\rho_2}{2}\right]\right)\\
&= \frac{1}{2}\left(\int_{-\infty}^{\infty}\rho_1(x)\left(\frac{d}{dx}\ln\frac{\rho_1(x)}{\frac{\rho_1(x)+\rho_2(x)}{2}}\right)^2 dx + \int_{-\infty}^{\infty}\rho_2(x)\left(\frac{d}{dx}\ln\frac{\rho_2(x)}{\frac{\rho_1(x)+\rho_2(x)}{2}}\right)^2 dx\right)\\
&= \frac{1}{2}\left(\int_{-\infty}^{\infty}\rho_1(x)\left(\frac{\rho_1'(x)}{\rho_1(x)} - \frac{\rho_1'(x)+\rho_2'(x)}{\rho_1(x)+\rho_2(x)}\right)^2 dx + \int_{-\infty}^{\infty}\rho_2(x)\left(\frac{\rho_2'(x)}{\rho_2(x)} - \frac{\rho_1'(x)+\rho_2'(x)}{\rho_1(x)+\rho_2(x)}\right)^2 dx\right)\\
&= \frac{1}{2}\left(\int_{-\infty}^{\infty}\left[\frac{(\rho_1'(x))^2}{\rho_1(x)} - 2\rho_1'(x)\frac{\rho_1'(x)+\rho_2'(x)}{\rho_1(x)+\rho_2(x)} + \rho_1(x)\frac{(\rho_1'(x)+\rho_2'(x))^2}{(\rho_1(x)+\rho_2(x))^2}\right] dx\right.\\
&\qquad\left. + \int_{-\infty}^{\infty}\left[\frac{(\rho_2'(x))^2}{\rho_2(x)} - 2\rho_2'(x)\frac{\rho_1'(x)+\rho_2'(x)}{\rho_1(x)+\rho_2(x)} + \rho_2(x)\frac{(\rho_1'(x)+\rho_2'(x))^2}{(\rho_1(x)+\rho_2(x))^2}\right] dx\right)\\
&= \frac{1}{2}\int_{-\infty}^{\infty}\left[\frac{(\rho_1'(x))^2}{\rho_1(x)} + \frac{(\rho_2'(x))^2}{\rho_2(x)} - 2\frac{(\rho_1'(x)+\rho_2'(x))^2}{\rho_1(x)+\rho_2(x)} + \frac{(\rho_1'(x)+\rho_2'(x))^2}{\rho_1(x)+\rho_2(x)}\right] dx\\
&= \frac{1}{2}\int_{-\infty}^{\infty}\left[\frac{(\rho_1'(x))^2}{\rho_1(x)} + \frac{(\rho_2'(x))^2}{\rho_2(x)} - \frac{(\rho_1'(x)+\rho_2'(x))^2}{\rho_1(x)+\rho_2(x)}\right] dx,
\end{aligned}$$

so that the Jensen-Fisher divergence can be expressed in terms of the Fisher information as

$$\mathrm{JFD}[\rho_1,\rho_2] = \frac{F[\rho_1]+F[\rho_2]}{2} - F\left[\frac{\rho_1+\rho_2}{2}\right], \qquad (5)$$

which is similar to the expression (1) of the Jensen-Shannon divergence in terms of the Shannon entropy, save for a global minus sign.

It is important to remark that the Jensen-Fisher divergence we have just introduced shares the following properties with the Jensen-Shannon divergence. First, it is nonnegative because of Eq. (5) and the convexity of the Fisher information, which leads to

$$\frac{F[\rho_1]+F[\rho_2]}{2} \ge F\left[\frac{\rho_1+\rho_2}{2}\right].$$

Second, it vanishes if and only if the two involved densities are equal almost everywhere in the interval $\Delta$.
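This comes again from the fact that

$$\frac{F[\rho_1]+F[\rho_2]}{2} = F\left[\frac{\rho_1+\rho_2}{2}\right] \iff \rho_1=\rho_2,$$

by keeping in mind the convexity of the Fisher functional; so that

$$\mathrm{JFD}[\rho_1,\rho_2] = 0 \iff \rho_1=\rho_2.$$

Third, it is symmetric because one can straightforwardly prove that $\mathrm{JFD}[\rho_1,\rho_2] = \mathrm{JFD}[\rho_2,\rho_1]$. Fourth, it is well defined when $\rho_1(x)$ and $\rho_2(x)$ are not absolutely continuous with respect to each other (of course, as long as the Fisher information of each density is well defined) and so it can be used to compare probability distributions with non-common zeros.

In addition, the Jensen-Fisher and Jensen-Shannon divergences satisfy the following deBruijn-type expression

$$\left.\frac{d}{d\epsilon}\,\mathrm{JSD}\left[\rho_1+\sqrt{\epsilon}\,\rho_G,\ \rho_2+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} = -\frac{1}{2}\,\mathrm{JFD}[\rho_1,\rho_2], \qquad (6)$$

where $\rho_G$ is a normal distribution with zero mean and variance equal to one. This can be proved by considering the original deBruijn identity [24] between the Shannon entropy and the Fisher information:

$$\left.\frac{d}{d\epsilon}\,S\left[\rho+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} = \frac{1}{2}F[\rho]. \qquad (7)$$

Then,

$$\begin{aligned}
\left.\frac{d}{d\epsilon}\,\mathrm{JSD}\left[\rho_1+\sqrt{\epsilon}\,\rho_G,\ \rho_2+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0}
&= \left.\frac{d}{d\epsilon}\,S\left[\frac{\rho_1+\sqrt{\epsilon}\,\rho_G+\rho_2+\sqrt{\epsilon}\,\rho_G}{2}\right]\right|_{\epsilon=0} - \frac{1}{2}\left.\frac{d}{d\epsilon}\,S\left[\rho_1+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} - \frac{1}{2}\left.\frac{d}{d\epsilon}\,S\left[\rho_2+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0}\\
&= \left.\frac{d}{d\epsilon}\,S\left[\frac{\rho_1+\rho_2}{2}+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} - \frac{1}{2}\left.\frac{d}{d\epsilon}\,S\left[\rho_1+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} - \frac{1}{2}\left.\frac{d}{d\epsilon}\,S\left[\rho_2+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0}.
\end{aligned}$$

Taking into account the deBruijn identity (7), we obtain

$$\left.\frac{d}{d\epsilon}\,\mathrm{JSD}\left[\rho_1+\sqrt{\epsilon}\,\rho_G,\ \rho_2+\sqrt{\epsilon}\,\rho_G\right]\right|_{\epsilon=0} = \frac{1}{2}F\left[\frac{\rho_1+\rho_2}{2}\right] - \frac{1}{4}F[\rho_1] - \frac{1}{4}F[\rho_2] = -\frac{1}{2}\,\mathrm{JFD}[\rho_1,\rho_2],$$

and the identity (6) is proved.

Furthermore, like the Jensen-Shannon divergence, it admits a generalization to $N$ densities with different weights $\omega=(\omega_1,\omega_2,\ldots,\omega_N)$, where $\omega_i\ge 0$, $i=1,\ldots,N$, and $\sum_{i=1}^{N}\omega_i=1$:

$$\mathrm{JFD}_\omega[\rho_1,\ldots,\rho_N] = \omega_1 F[\rho_1] + \cdots + \omega_N F[\rho_N] - F[\omega_1\rho_1(x)+\cdots+\omega_N\rho_N(x)].$$

Finally, let us highlight that the Jensen-Fisher divergence is informative even in those cases where the Jensen-Shannon is not.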
This is illustrated in Figure 1 for the particle-in-a-box system, whose stationary quantum-mechanical states are described by the probability densities (2). Therein, we have depicted the Jensen-Fisher and Jensen-Shannon divergences between the $n$th-state density $\rho_n(x)$ and the ground state $\rho_1(x)$, given by $\mathrm{JFD}[\rho_n,\rho_1]$ and $\mathrm{JSD}[\rho_n,\rho_1]$ respectively, in terms of $n$ when $n$ goes from 1 to 50. It turns out that, as $n$ increases, the Jensen-Fisher divergence increases much more than the Jensen-Shannon, which remains practically constant. This clearly indicates that the former divergence is much more informative than the latter.

Fig. 1. Jensen-Shannon $\mathrm{JSD}[\rho_n,\rho_1]$ and Jensen-Fisher $\mathrm{JFD}[\rho_n,\rho_1]$ divergences between the sinusoidal densities $\rho_n(x)$ and $\rho_1(x)$ (see Eq. (2)) in terms of the quantum number $n$ (logarithmic vertical axis).

III. JENSEN-SHANNON AND JENSEN-FISHER DIVERGENCES: MUTUAL COMPARISON

In this Section we compare the Jensen-Fisher and the Jensen-Shannon divergences in the information-theoretic $\mathrm{JFD}$−$\mathrm{JSD}$ plane for the following three large, qualitatively different families of probability distributions: the sinusoidal distributions defined by Eq. (2), the generalized gamma-like distributions (see Eq. (8) below) and the Rakhmanov-Hermite distributions (see Eq. (9) below).

A. Sinusoidal densities

These probability densities, given by Eq. (2), have been used to describe various physical systems, such as the stationary quantum-mechanical states of a particle-in-a-box (i.e., in an infinite potential well) [20], as already mentioned. Indeed, they characterize the ground state $\rho_1(x)$ and the excited states $\rho_n(x)$, with $n=2,3,\ldots$, of this quantum-mechanical system.
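The behaviour described for the sinusoidal densities can be explored numerically. The sketch below is only an illustration under stated assumptions: it uses a midpoint rule on $(0,1)$, the analytic derivative $\rho_n'(x)=2\pi n\sin(2\pi n x)$ of Eq. (2), and Eqs. (1) and (5) for the two divergences. The helper names (`rho`, `drho`, `integrate`, `fisher`, `jsd`, `jfd`) and the number of grid cells are hypothetical choices, not taken from the paper. Inserting Eq. (2) into Eq. (3) gives the integrand $8\pi^2n^2\cos^2(\pi n x)$ and hence $F[\rho_n]=4\pi^2n^2$; this closed form is not stated in the text and serves here only as a check of the quadrature.

```python
import math

def rho(n, x):
    """Eq. (2): density of the n-th stationary state on (0, 1)."""
    return 2.0 * math.sin(math.pi * n * x) ** 2

def drho(n, x):
    """Analytic derivative of rho(n, x), i.e. 2*pi*n*sin(2*pi*n*x)."""
    return 2.0 * math.pi * n * math.sin(2.0 * math.pi * n * x)

def integrate(f, cells=20000):
    """Midpoint rule on (0, 1); the midpoints avoid the endpoint zeros."""
    h = 1.0 / cells
    return h * sum(f((i + 0.5) * h) for i in range(cells))

def entropy_term(r):
    """Integrand of the Shannon entropy, with 0 ln 0 := 0."""
    return -r * math.log(r) if r > 0.0 else 0.0

def fisher_term(r, dr):
    """Integrand (rho')^2 / rho of Eq. (3); skipped where rho vanishes exactly."""
    return dr * dr / r if r > 0.0 else 0.0

def fisher(n):
    return integrate(lambda x: fisher_term(rho(n, x), drho(n, x)))

def jsd(n, m=1):
    """Eq. (1) for the pair (rho_n, rho_m)."""
    s_mix = integrate(lambda x: entropy_term(0.5 * (rho(n, x) + rho(m, x))))
    s_n = integrate(lambda x: entropy_term(rho(n, x)))
    s_m = integrate(lambda x: entropy_term(rho(m, x)))
    return s_mix - 0.5 * (s_n + s_m)

def jfd(n, m=1):
    """Eq. (5) for the pair (rho_n, rho_m)."""
    f_mix = integrate(lambda x: fisher_term(0.5 * (rho(n, x) + rho(m, x)),
                                            0.5 * (drho(n, x) + drho(m, x))))
    return 0.5 * (fisher(n) + fisher(m)) - f_mix

for n in (2, 5, 20):
    print(n, jsd(n), jfd(n))
```

Since $\mathrm{JSD}$ is bounded above by $\ln 2$ while $F[\rho_n]$ grows like $n^2$, a run of this kind is expected to show the qualitative contrast discussed for Figure 1; the specific numbers depend on the quadrature settings assumed here.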
In Figure 1, previously discussed, we have shown that the excitation of the particle is described in a much better information-theoretical way by the $\mathrm{JFD}$ than by the $\mathrm{JSD}$, since the former divergence between the probability density of the $n$th excited state and the ground state increases when $n$ (and hence the energy of the particle) increases, while the $\mathrm{JSD}$ remains practically constant.

In Figure 2 we have depicted the Jensen-Shannon divergence $\mathrm{JSD}[\rho_n,\rho_{10}]$ between the excited states with quantum numbers $n$ and 10 against the corresponding Jensen-Fisher divergence $\mathrm{JFD}[\rho_n,\rho_{10}]$ for $n=1,\ldots,50$. The resulting values (points) obtained for increasing $n$ are joined by a line to guide the eye. It is observed that the $\mathrm{JSD}$ remains constant except for some points. They correspond to values of $n$ that are multiples or submultiples of 10, which is the quantum number of the reference state $\rho_{10}$. At these points, $\rho_n(x)$ and $\rho_{10}(x)$ share a number of zeros, so these densities become more similar to each other, and both $\mathrm{JSD}$ and $\mathrm{JFD}$ achieve a lower value. Less dramatic deviations are also observed for $n=15,25,35,\ldots$, where the density $\rho_n(x)$ has some of the zeros of $\rho_{10}(x)$. From a quantum-mechanical point of view, the particles in those states share some common forbidden regions (or also some common maximum-probability regions). The behaviours of the $\mathrm{JSD}$ and $\mathrm{JFD}$ measures on this plane show that, although both quantities are sensitive to the overlap of the zeros, the $\mathrm{JFD}$ highlights this phenomenon much better (note the different scaling of the axes of the figure).

Fig. 2. $\mathrm{JSD}[\rho_n,\rho_{10}]$−$\mathrm{JFD}[\rho_n,\rho_{10}]$ divergence plane of the sinusoidal densities $\rho_n(x)$ (see Eq. (2)) for $n=1,\ldots,50$. Labelled points: $n=5$, $15$, $20$ and $30$.
Moreover, the $\mathrm{JFD}$ presents larger absolute variations and has a much wider range of variation than the $\mathrm{JSD}$ along all the pairs of states considered.

B. Generalized gamma-like densities

In contrast with the previous case (where the densities have several zeros in a finite interval), here we consider a family of one-parameter densities having at most one zero and defined on the whole real line; namely, the gamma-like densities given by

$$\gamma_\beta(x) = \left[\sqrt{2}\,2^{\beta/2}\,\Gamma\left(\frac{1+\beta}{2}\right)\right]^{-1} |x|^\beta \exp\left(-\frac{x^2}{2}\right); \quad \beta>1. \qquad (8)$$

For $\beta=0$ the same expression gives the normal distribution:

$$\gamma(x)\equiv\gamma_0(x) = \frac{1}{\sqrt{2\pi}}\exp\left(-\frac{x^2}{2}\right).$$

In what follows we assume that $\beta>1$ because the Fisher information (3) is not defined for $0<\beta\le 1$, having a vertical asymptote at $\beta=1$.

We have done two different analyses. First, in Figure 3, the values of $\mathrm{JFD}[\gamma_\beta,\gamma]$ and $\mathrm{JSD}[\gamma_\beta,\gamma]$ are given as functions of $\beta$. It shows that the Jensen-Fisher divergence is much more sensitive to the multiplicity of the zero than the Jensen-Shannon divergence. While the former varies over a range of six orders of magnitude, the latter only varies over one order of magnitude.

Fig. 3. Jensen-Shannon $\mathrm{JSD}[\gamma_\beta,\gamma]$ (dashed line) and Jensen-Fisher $\mathrm{JFD}[\gamma_\beta,\gamma]$ (solid line) divergences between the generalized gamma densities $\gamma_\beta(x)$ and $\gamma(x)$ (see Eq. (8)) as functions of the multiplicity parameter $\beta$ (logarithmic axes).

Second, Figure 4 shows the $\mathrm{JSD}[\gamma,\gamma_\beta]$−$\mathrm{JFD}[\gamma,\gamma_\beta]$ plane between the probability densities $\gamma(x)$ and $\gamma_\beta(x)$ for all values of $\beta$ from 1 to 80. Notice that there are two regimes: one for $\beta\lesssim\frac{3}{2}$ and $\beta\gtrsim 14$, where the $\mathrm{JSD}$ remains almost constant and the $\mathrm{JFD}$ varies rapidly, and another for $\frac{3}{2}\lesssim\beta\lesssim 14$, where the $\mathrm{JFD}$ remains almost constant while the $\mathrm{JSD}$ varies. As in the previous example, the range of variation of the $\mathrm{JFD}$ is much wider than that of $\mathrm{JSD}[\gamma,\gamma_\beta]$.

Fig. 4. $\mathrm{JSD}[\gamma_\beta,\gamma]$−$\mathrm{JFD}[\gamma_\beta,\gamma]$ divergence plane of the generalized gamma densities $\gamma_\beta(x)$ and $\gamma(x)$ (see Eq. (8)) as functions of the multiplicity parameter $\beta$. Labelled points: $\beta=\frac{3}{2}$ and $\beta=14$.

C. Rakhmanov-Hermite densities

Let us now consider the class of Rakhmanov-Hermite probability densities defined by

$$\rho_n^{\mathrm{HO}}(x) = \frac{1}{2^n n!\sqrt{\pi}}\,e^{-x^2}H_n^2(x), \qquad (9)$$

where $H_n(x)$ is the orthogonal Hermite polynomial of degree $n$. As for the quantum infinite well previously discussed, the parameter $n=0,1,2,\ldots$ indicates the energy level and labels the corresponding state. These densities have been shown to correspond to the quantum-mechanical probability densities of the ground and excited stationary states of the isotropic harmonic oscillator (HO, in short); see e.g. [25], [26].

Here we have done three analyses. Firstly, we depict in Figures 5 and 6 the Jensen-Fisher and Jensen-Shannon divergences, respectively, between the $n$th density and each of the reference probability densities with $n_r=0$, $10$ and $40$; that is to say, the quantities $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_0^{\mathrm{HO}}]$ (dotted line), $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{10}^{\mathrm{HO}}]$ (solid line) and $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{40}^{\mathrm{HO}}]$ (dashed line) and the corresponding $\mathrm{JSD}$s. We observe from the comparison of the dotted lines of the two figures that both divergences between the $n$th-state density $\rho_n^{\mathrm{HO}}(x)$ and the ground-state density $\rho_0^{\mathrm{HO}}(x)$ have an increasing behaviour in terms of the quantum number $n$, as one should expect.
Moreover, from the comparison of the solid lines of the two figures, we find opposite behaviours in the two divergences between the $n$th-state density $\rho_n^{\mathrm{HO}}(x)$ and the 10th-state density $\rho_{10}^{\mathrm{HO}}(x)$ when the quantum number $n$ (which controls the number of zeros of the density) increases; namely, $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{10}^{\mathrm{HO}}]$ has an increasing sawtooth behaviour, while $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{10}^{\mathrm{HO}}]$ first decreases down to zero when $n$ goes from 0 to the reference number 10, and then increases when $n$ goes from 10 upwards. A similar trend is observed from the comparison of the dashed lines of the two figures for the $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{40}^{\mathrm{HO}}]$ and $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{40}^{\mathrm{HO}}]$ divergences, but now with respect to the reference number 40. Clearly, in the three cases $(n,n_r)=(n,0)$, $(n,10)$ and $(n,40)$ the Jensen-Fisher divergence always has higher variations than the Jensen-Shannon divergence, because the $\mathrm{JFD}$ has a stronger sensitivity than the $\mathrm{JSD}$ to the increasing oscillatory character of $\rho_n^{\mathrm{HO}}(x)$ when $n$ increases.

In addition, we observe that the Jensen-Fisher divergence presents two maxima around the reference value (maxima at 9 and 11 for $n_r=10$, and at 39 and 41 for $n_r=40$) that can be explained by taking into account the relative position of the zeros of the two involved densities. In those cases each zero of one of the densities is situated between two zeros of the other density, so none of the zeros of one of the densities is near the zeros of the other one. The opposite situation occurs for the local minima that appear in the graphics, where some zeros of one density are near the zeros of the other one. The Jensen-Shannon divergence also shows the latter feature, but with much less intensity. However, it does not show the local maxima around the reference value.
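A minimal numerical sketch of the Rakhmanov-Hermite case is given below, under assumptions that are not part of the paper. The normalized oscillator functions $\psi_n$ (with $\rho_n^{\mathrm{HO}}=\psi_n^2$) are generated by the three-term recurrence of the Hermite polynomials, their derivative is taken from $\psi_n'=\sqrt{2n}\,\psi_{n-1}-x\,\psi_n$, and Eq. (5) is evaluated with a midpoint rule on a truncated interval. All names (`psi_pair`, `rho_and_slope`, `integrate`, `fisher_of_mixture`, `jfd`) and the grid parameters are hypothetical. The standard closed form $F[\rho_n^{\mathrm{HO}}]=4n+2$, which is not stated in the text, is used only as a sanity check.

```python
import math

def psi_pair(n, x):
    """Return (psi_n(x), psi_n'(x)) for the normalised oscillator function."""
    prev, cur = 0.0, math.pi ** -0.25 * math.exp(-0.5 * x * x)
    for k in range(n):
        # psi_{k+1} = sqrt(2/(k+1)) x psi_k - sqrt(k/(k+1)) psi_{k-1}
        prev, cur = cur, (math.sqrt(2.0 / (k + 1)) * x * cur
                          - math.sqrt(k / (k + 1)) * prev)
    return cur, math.sqrt(2.0 * n) * prev - x * cur

def rho_and_slope(n, x):
    """Density of Eq. (9) and its derivative, as (psi^2, 2 psi psi')."""
    p, dp = psi_pair(n, x)
    return p * p, 2.0 * p * dp

def integrate(f, half_width=12.0, cells=4800):
    """Midpoint rule on (-half_width, half_width); x = 0 is never a node."""
    h = 2.0 * half_width / cells
    return h * sum(f(-half_width + (i + 0.5) * h) for i in range(cells))

def fisher_of_mixture(n, m):
    """Fisher information (3) of (rho_n + rho_m)/2; equals F[rho_n] when m == n."""
    def term(x):
        r1, d1 = rho_and_slope(n, x)
        r2, d2 = rho_and_slope(m, x)
        r, d = 0.5 * (r1 + r2), 0.5 * (d1 + d2)
        return d * d / r if r > 0.0 else 0.0
    return integrate(term)

def jfd(n, m):
    """Eq. (5) for the pair (rho_n, rho_m)."""
    return (0.5 * (fisher_of_mixture(n, n) + fisher_of_mixture(m, m))
            - fisher_of_mixture(n, m))

for n in (8, 9, 10, 11, 12):
    print(n, jfd(n, 10))
```

The truncation at $|x|=12$ is adequate only for moderate quantum numbers, since the densities spread roughly as $\sqrt{2n+1}$; larger $n$ would need a wider interval and a finer grid.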
Our second analysis is shown in Figure 7, where we compare the $\mathrm{JSD}$ and $\mathrm{JFD}$ divergences between the pairs of probability densities with quantum numbers $(n,0)$, $(n,10)$ and $(n,40)$ in the frame of the $\mathrm{JSD}$−$\mathrm{JFD}$ divergence plane. This figure combines the results contained in the two previous Figures 5 and 6, and shows again the overall increasing behaviour of the Jensen-Fisher divergence and its much higher values, in contrast to the Jensen-Shannon divergence. The most important feature that this figure shows is the separation of the clouds of points in the direction of increasing $\mathrm{JFD}$, while these clouds are not distinguishable from their $\mathrm{JSD}$ values. Let us mention that the two couples of points to the right of the vertices of the V-shaped structures correspond to the local maxima that appear in Figure 5.

Fig. 5. Jensen-Fisher divergences $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_0^{\mathrm{HO}}]$ (dotted line), $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{10}^{\mathrm{HO}}]$ (solid line) and $\mathrm{JFD}[\rho_n^{\mathrm{HO}},\rho_{40}^{\mathrm{HO}}]$ (dashed line) between the $n$th excited state and the ground state, 10th and 40th excited states of the isotropic harmonic oscillator, respectively, in terms of the quantum number $n$.

Fig. 6. Jensen-Shannon divergences $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_0^{\mathrm{HO}}]$ (dotted line), $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{10}^{\mathrm{HO}}]$ (solid line) and $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{40}^{\mathrm{HO}}]$ (dashed line) between the $n$th excited state and the ground state, 10th and 40th excited states of the isotropic harmonic oscillator, respectively, in terms of the quantum number $n$.

Fig. 7. $\mathrm{JSD}$−$\mathrm{JFD}$ divergence plane of the isotropic harmonic oscillator for the pairs of stationary states $(n,m)=(n,0)$, $(n,10)$ and $(n,40)$ ($\times$) when $n$ varies from 0 to 100.

Finally, in Figure 8 we use again the $\mathrm{JSD}$−$\mathrm{JFD}$ plane as a tool to simultaneously show the distance or divergence of the pairs of probability densities with quantum numbers $(n,n+1)$, $(n,n+10)$, $(n,2n)$, $(n,2n+10)$, $(n,3n)$ and $(n,4n)$. Here we notice that, as $n$ increases, the $\mathrm{JFD}$ tends to infinity in all the cases, but the $\mathrm{JSD}$ tends to a constant. We observe that $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{n+1}^{\mathrm{HO}}]$ tends to the same value as $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{n+10}^{\mathrm{HO}}]$, and $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{2n}^{\mathrm{HO}}]$ tends to the same value as $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{2n+10}^{\mathrm{HO}}]$, these two limiting values being different from each other. Then, we can conclude that this asymptotic value of the $\mathrm{JSD}$ depends on the relative spreading of the involved densities. When $n$ tends to infinity, the spreading of the density $\rho_n^{\mathrm{HO}}(x)$ converges to that of $\rho_{n+1}^{\mathrm{HO}}(x)$ or $\rho_{n+10}^{\mathrm{HO}}(x)$. However, $\rho_n^{\mathrm{HO}}(x)$ is less spread than $\rho_{2n}^{\mathrm{HO}}(x)$ or $\rho_{2n+10}^{\mathrm{HO}}(x)$. Thus, the $\mathrm{JSD}$ between those densities tends to a different value. This trend is confirmed by the asymptotic values of $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{3n}^{\mathrm{HO}}]$ and $\mathrm{JSD}[\rho_n^{\mathrm{HO}},\rho_{4n}^{\mathrm{HO}}]$.

Fig. 8. $\mathrm{JSD}$−$\mathrm{JFD}$ divergence plane of the isotropic harmonic oscillator for the pairs of stationary states $(n,m)=(n,n+1)$, $(n,n+10)$, $(n,2n)$ ($\times$), $(n,2n+10)$ ($\triangle$), $(n,3n)$ ($+$) and $(n,4n)$ ($\ast$), for several values of $n$ from $n=0$ up to a value of the $\mathrm{JFD}$ of 240.

As in previous analyses, Figure 8 shows that the $\mathrm{JFD}$ has a much wider range of variation than the $\mathrm{JSD}$, so that it allows us to discriminate between different values of $n$ in a better way. However, contrary to what happened in Figure 7, the $\mathrm{JFD}$ cannot distinguish between the different clouds of points of Figure 8. This is a clear illustration of the complementarity of the $\mathrm{JSD}$ and the $\mathrm{JFD}$ when analysing the similarity of probability distributions.

IV. CONCLUSIONS AND OPEN PROBLEMS

In this paper the Jensen-Fisher divergence measure is introduced and its theoretical grounds are shown. In summary, we find that the main properties (non-negativity, additivity, symmetry, vanishing, definiteness, deBruijn-like identity) of the Jensen-Shannon divergence are shared by the new divergence. Moreover, the Jensen-Fisher divergence is applied to three large families of representative probability distributions (sinusoidal, gamma-like and Rakhmanov-Hermite distributions) and compared with the Jensen-Shannon divergence. Our results illustrate that, although the $\mathrm{JSD}$ and $\mathrm{JFD}$ divergences are complementary in the sense that they are sensitive to different aspects of the probability distributions, the latter is more informative when studying the similarity of oscillating densities.

Finally, we should point out three open issues. First, the square root of the $\mathrm{JSD}$ is known to define a metric [5], [27]. Does the Jensen-Fisher divergence define another distance metric for probability distributions beyond the $\mathrm{JSD}$ [5]–[7], [27] and the variational distance [7]? This is still an open problem which deserves much attention per se and because of its many implications in numerous scientific and technological fields. Second, some generalizations of the $\mathrm{JSD}$ have been recently introduced, such as the Jensen-Rényi [28] and Jensen-Tsallis [18], [29], [30] divergences as well as the Jensen divergences of order $\alpha$ [31], paying the price of the loss of certain interesting properties but gaining more flexibility because they have a new degree of freedom provided by the parameter $q$ or $\alpha$, which is very useful in numerous applications (see e.g., [32]–[36]). Does the Jensen-Fisher divergence admit any generalization? The answer is yes, but this avenue is still to be paved.
Finally, does there exist a quantum version of the $\mathrm{JFD}$ based on the quantum Fisher information [37], [38], similarly to the quantum $\mathrm{JSD}$ based on the von Neumann entropy [27], [31], [39]–[42]?

ACKNOWLEDGEMENT

PSM and JSD are very grateful to Junta de Andalucía for the grants FQM-2445 and FQM-4643, and the Ministerio de Ciencia e Innovación for the grant FIS2008-02380. PSM and JSD belong to the research group FQM-207. AZ acknowledges partial financial support from Ministerio de Educación y Ciencia of Spain under grants MTM2006-07186 and MTM2009-14668-C02-02 and from Consejería de Innovación, Ciencia y Empresa de la Junta de Andalucía, Spain, under grant P09-TEP-5022. Also, AZ has been partially funded by UPM under some contracts. This work was finished during a stay of AZ at the Instituto Carlos I of the Granada University, partly funded by this Institute and also by the Departamento de Matemática Aplicada a la Ingeniería Industrial, ETSII, UPM.

REFERENCES

[1] J. Lin, "Divergence measures based on the Shannon entropy," IEEE Trans. Information Theory, vol. 37, pp. 145–151, 1991.
[2] C. R. Rao, "Differential Geometry in Statistical Inference," IMS-Lecture Notes, vol. 10, pp. 217–225, 1987.
[3] S. Kullback and A. Leibler, "On the information and sufficiency," Ann. Math. Statist., vol. 22, pp. 79–86, 1951.
[4] S. Kullback, Information Theory and Statistics. Dover Publications, New York, 1968.
[5] D. M. Endres and J. E. Schindelin, "A new metric for probability distributions," IEEE Trans. Information Theory, vol. 49, pp. 1858–1860, 2003.
[6] F. Topsøe, "Some inequalities for information divergence and related measures of discrimination," IEEE Trans. Information Theory, vol. 46, pp. 1602–1609, 2000.
[7] S. C. Tsai, W. G. Tzeng, and H. L. Wu, "On the Jensen-Shannon divergence and variational distance," IEEE Trans. Information Theory, vol. 51, pp. 3333–3336, 2005.
[8] P. W. Lamberti, A. P. Majtey, M. Madrid, and M. Pereyra, in Proceed. of the XV Conference on Non-equilibrium Statistical Mechanics and Nonlinear Physics, O. Descalzi, O. A. Rosso, and H. A. Larrondo, Eds. American Institute of Physics, New York, 2007, pp. 32–37.
[9] R. Román-Roldán, P. Bernaola-Galván, and J. Oliver, "Sequence compositional complexity of DNA through an entropic segmentation method," Phys. Rev. Lett., vol. 80, pp. 1344–1347, 1998.
[10] G. E. Sims, S. R. Jun, G. A. Wu, and S. H. Kim, "Alignment-free genome comparison with feature frequency profiles (FFP) and optimal resolutions," Proc. Natl. Acad. Sci. USA, vol. 106, pp. 2677–2682, 2009.
[11] J. C. Angulo, J. Antolín, S. López-Rosa, and R. O. Esquivel, "Jensen-Shannon divergence in conjugate spaces: The entropy excess of atomic systems and sets with respect to their constituents," Physica A, vol. 389, p. 899, 2010.
[12] J. Antolín, J. C. Angulo, and S. López-Rosa, "Fisher and Jensen-Shannon divergences: Quantitative comparisons among distributions. Application to position and momentum atomic densities," J. Chem. Phys., vol. 130, p. 074110, 2009.
[13] K. C. Chatzisavvas, C. C. Moustakidis, and C. Panos, "Information entropy, information distances, and complexity in atoms," J. Chem. Phys., vol. 123, p. 174111, 2005.
[14] S. López-Rosa, J. Antolín, J. C. Angulo, and R. O. Esquivel, "Divergence analysis of atomic ionization processes and isoelectronic series," Phys. Rev. A, vol. 80, p. 012505, 2009.
[15] A. Majtey, P. W. Lamberti, M. T. Martin, and A. Plastino, "Wootters' distance revisited: a new distinguishability criterium," Eur. Phys. J. D, vol. 32, pp. 413–419, 2005.
[16] A. P. Majtey, A. Borras, A. R. Plastino, M. Casas, and A. Plastino, "Some feature of the state-space trajectories followed by robust entangled four-qubit states during decoherence," Int. J. Quantum Inf., vol. 8, pp. 505–515, 2010.
[17] M. E. Pereyra, P. W. Lamberti, and O. Rosso, "Wavelet Jensen-Shannon divergence as a tool for studying the dynamics of frequency band components in EEG epileptic seizures," Physica A, vol. 379, pp. 122–132, 2007.
[18] P. W. Lamberti and A. P. Majtey, "Non-logarithmic Jensen-Shannon divergence," Physica A, vol. 329, pp. 81–86, 2003.
[19] D. H. Zanette, "Segmentation and context of literary and musical sequences," Complex Systems, vol. 17, pp. 279–293, 2007.
[20] A. Galindo and P. Pascual, Quantum Mechanics. Springer, Berlin, 1990.
[21] R. A. Fisher, "Theory of statistical estimation," Proc. Cambridge Phil. Soc., vol. 22, pp. 700–725, 1925, reprinted in Collected Papers of R.A. Fisher, edited by J.H. Bennett (University of Adelaide Press, South Australia), 1972, 15–40.
[22] B. R. Frieden, Science from Fisher Information. Cambridge University Press, Cambridge, 2004.
[23] P. Hammad, "Mesure d'ordre α de l'information au sens de Fisher," Revue de Statistique Appliquée, vol. 26, pp. 73–84, 1978.
[24] T. M. Cover and J. A. Thomas, Elements of Information Theory. Wiley, N.Y., 1991.
[25] A. F. Nikiforov and V. B. Uvarov, Special Functions in Mathematical Physics. Birkhäuser-Verlag, Basel, 1988.
[26] P. Sánchez-Moreno, J. S. Dehesa, D. Manzano, and R. J. Yáñez, "Spreading lengths of Hermite polynomials," J. Comput. Appl. Math., vol. 233, pp. 2136–2148, 2010.
[27] P. W. Lamberti, A. P. Majtey, A. Borras, M. Casas, and A. Plastino, "Metric character of the quantum Jensen-Shannon divergence," Phys. Rev. A, vol. 77, p. 052311, 2008.
[28] J. Burbea and C. R. Rao, "On the convexity of some divergence measures based on entropy functions," IEEE Trans. Information Theory, vol. 28, p. 489, 1982.
[29] A. B. Hamza, "Nonextensive information-theoretic measure for image edge detection," J. Electron. Imaging, vol. 15, p. 013011, 2006.
[30] A. P. Majtey, P. W. Lamberti, and A. Plastino, "A monoparametric family of metrics for statistical mechanics," Physica A, vol. 344, pp. 547–553, 2004.
[31] J. Briët and P. Harremoës, "Properties of classical and quantum Jensen-Shannon divergence," Phys. Rev. A, vol. 79, p. 052311, 2009.
[32] A. B. Hamza and H. Krim, "Jensen-Rényi divergence measure: theoretical and computational perspectives," in IEEE International Symposium on Information Theory ISIT, 2003, p. 257.
[33] Y. He, A. B. Hamza, and H. Krim, "A generalized divergence measure for robust image registration," IEEE Trans. Signal Proc., vol. 51, p. 1211, 2003.
[34] M. C. Chiang, R. A. Dutton, K. M. Hayashi, A. W. Toga, O. L. Lopez, H. J. Aizenstein, J. T. Becker, and P. M. Thompson, "Fluid registration of medical images using Jensen-Rényi divergence reveals 3D profile of brain atrophy in HIV/AIDS," in International Symposium on Biomedical Imaging, 2006, p. 193.
[35] F. Wang, T. Syeda-Mahmood, B. C. Vemuri, D. Beymer, and A. Rangarajan, "Closed-form Jensen-Renyi divergence for mixture of Gaussians and applications to group-wise shape registration," in Lecture Notes in Computer Science, Vol. 5761, 2009, pp. 648–655.
[36] J. Antolín, S. López-Rosa, J. C. Angulo, and R. O. Esquivel, "Jensen-Tsallis divergence and atomic dissimilarity for position and momentum space electron densities," J. Chem. Phys., vol. 132, p. 044105, 2010.
[37] S. Luo, "Quantum Fisher information and uncertainty relations," Lett. Math. Phys., vol. 53, pp. 243–251, 2000.
[38] P. Gibilisco, F. Hiai, and D. Petz, "Quantum covariance, quantum Fisher information, and the uncertainty relations," IEEE Trans. Information Theory, vol. 55, pp. 439–443, 2009.
[39] A. P. Majtey, P. W. Lamberti, and D. P. Prato, "Jensen-Shannon divergence as a measure of distinguishability between mixed quantum states," Phys. Rev. A, vol. 72, p. 052310, 2005.
[40] P. W. Lamberti, M. Portesi, and J.
Sparacino, “Natural metric for quantum information theory , ” 2009, [41] S. L. Braunstein and C. M. Caves, “Statistical distance and the geometry of quantum states, ” Phys. Rev . Lett. , vol. 72, p. 3439, 1994. [42] W . Roga, M. Fannes, and K. Zyczkowski, “Universal bounds fo the Holev o quantity , coherent information and the Jensen-Shannon diver - gence, ” Phys. Rev . Lett. , vol. 105, p. 040505, 2010.
