On the condensed density of the generalized eigenvalues of pencils of Hankel Gaussian random matrices and applications

Pencils of Hankel matrices whose elements have a joint Gaussian distribution with nonzero mean and not identical covariance are considered. An approximation to the distribution of the squared modulus of their determinant is computed which allows to g…

Authors: Piero Barone

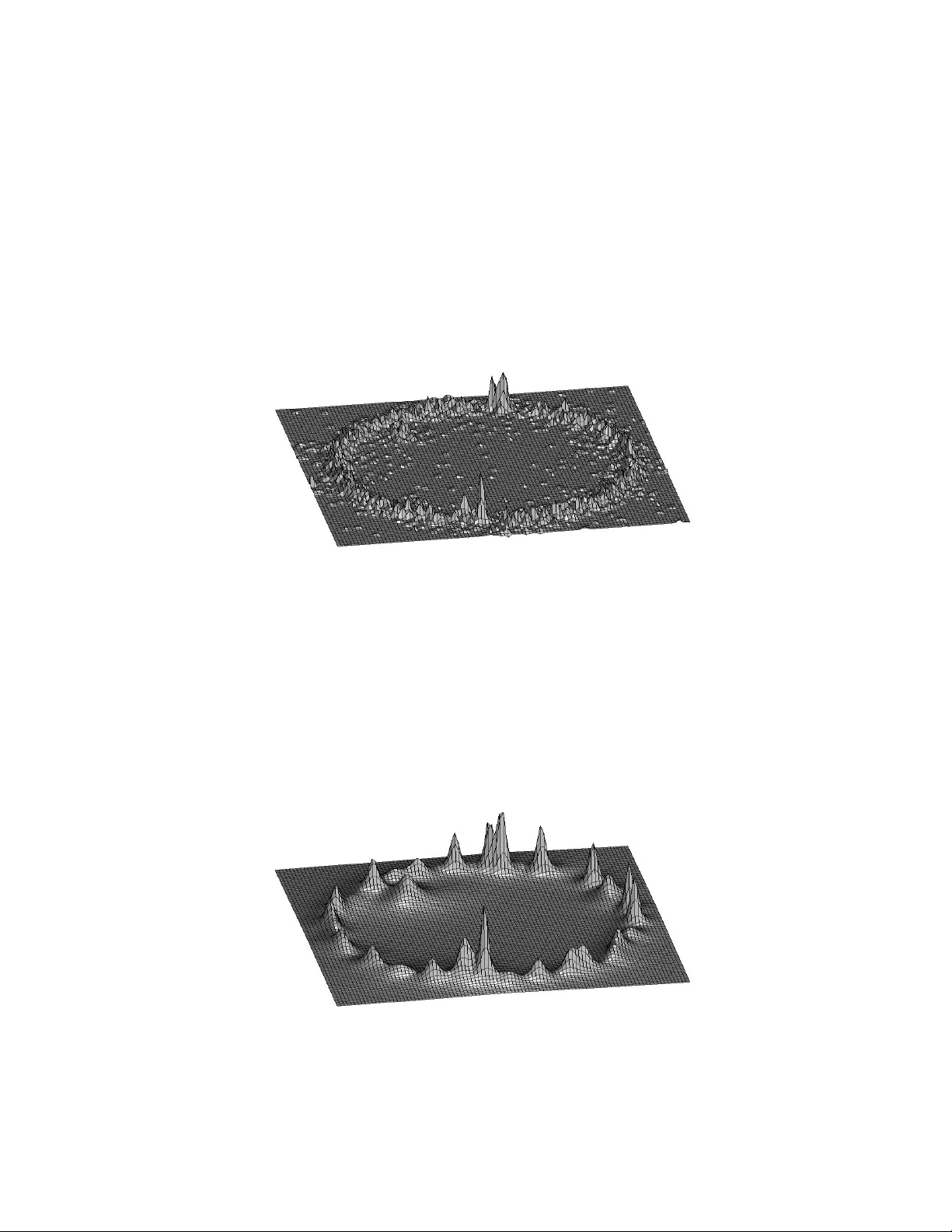

On the condensed densit y of the generalized eigen v alues of p encils of Gaussian random ma- trices and applications P . Barone Istituto p er le Applic azioni del Calc olo ”M. Pic one”, C.N.R., Via dei T aurini 19, 00185 R ome, Italy e-mail: pier o.b ar one@gmail.c om, p.b ar one@iac.cnr.it fax: 39-6-4404306 Abstract P encils of matrices whose elemen ts ha ve a join t noncen tral Gaus- sian distribution with nonidentical co v ariance are considered. An appro ximation to the distribution of the squared mo dulus of their determinan t is computed whic h allo ws to get a closed form ap- pro ximation of the condensed densit y of the generalized eigen v al- ues of the p encils. Implications of this result for solving sev eral momen ts problems are discussed and some n umerical examples are pro vided. Key wor ds: random determinan ts, complex exp onen tials, complex momen ts problem, logarithmic p oten tials 1 In tro duction Let us define the random Hankel matrices U 0 = a 0 a 1 . . . a p − 1 a 1 a 2 . . . a p . . . . . . a p − 1 a p . . . a n − 2 , U 1 = a 1 a 2 . . . a p a 2 a 3 . . . a p +1 . . . . . . a p a p +1 . . . a n − 1 (1) where n = 2 p, a k = s k + k , k = 0 , 1 , 2 , . . . , n − 1 , k is a complex Gaussian, zero mean, white noise, with v ariance σ 2 and s k ∈ I C . In the follo wing all random quan tities are denoted b y b old c haracters. Let us consider the generalized eigen v alues { ξ j , j = 1 , . . . , p } of ( U 1 , U 0 ) i.e. the ro ots of the p olynomial P ( z ) = det[( U 1 − z U 0 )], whic h form a set of exc hangeable ran- dom v ariables. Their marginal density h ( z ) also called condensed densit y [14] or normalized one-p oint correlation function [12], is the exp ected v alue of the (random) normalized coun ting measure on the zeros of P ( z ) i.e. h ( z ) = 1 p E p X j =1 δ ( z − ξ j ) or, equiv alen tly , for all Borel sets A ⊂ I C Z A h ( z ) dz = 1 p p X j =1 P r ob ( ξ j ∈ A ) . 2 It can b e pro v ed that (see e.g. [4]) h ( z ) = 1 4 π ∆ u ( z ) where ∆ denotes the Laplacian op erator with resp ect to x, y if z = x + iy and u ( z ) = 1 p E n log( | P ( z ) | 2 ) o is the corresp onding logarithmic p oten tial. The condensed densit y h ( z ) pla ys an imp ortant role for solving momen t problems suc h as the trigonometric, the complex, the Hausdorff ones. It w as sho wn in [2 − 10], [17,18] that all these problems can b e reduced to the complex exp onen tials approxi- mation problem (CEAP), whic h can b e stated as follo ws. Let us consider a uniformly sampled signal made up of a linear com bi- nation of complex exp onentials s k = p ∗ X j =1 c j ξ k j . (2) where c j , ξ j ∈ I C . Let us assume to kno w an ev en num b er n = 2 p, p ≥ p ∗ of noisy samples a k = s k + k , k = 0 , 1 , 2 , . . . , n − 1 where k is a complex Gaussian, zero mean, white noise, with finite kno wn v ariance σ 2 . W e w an t to estimate p ∗ , c j , ξ j , j = 1 , . . . , p ∗ , whic h is a well known ill-posed inv erse problem. W e notice that, in the noiseless case and when p = p ∗ , the parameters ξ j are the generalized eigen v alues of the p encil ( U 1 , U 0 ) where now U 0 and U 1 are built as in (1) but starting from { s k } . 3 F rom its definition it is evident that the condensed densit y pro- vides information ab out the lo cation in the complex plane of the generalized eigenv alues of ( U 1 , U 0 ) whose estimation is the most difficult part of CEAP . Unfortunately its computation is v ery dif- ficult in general. In [10] a method to solv e CEAP w as prop osed based on an appro ximation of the condensed densit y . An explicit expression of h ( z ) prop osed b y Hammersley [14] when the co ef- ficien ts of P ( z ) are jointly Gaussian distributed w as used. The second order statistics of these co efficien ts in the CEAP case w ere estimated b y computing man y P ade’ appro ximan ts of dif- feren t orders to the Z -transform of the data { a k } . This last step w as essen tial to realize the a v eraging that app ears in the defi- nition of h ( z ), which is the k ey feature to mak e the condensed densit y a useful to ol for applications. In fact in the noiseless case h ( z ) is a sum of Dirac δ distributions centered on the generalized eigen v alues while, when the signal is absen t ( s k = 0 ∀ k ), it w as pro ved in [4] that if z = r e iθ , the marginal condensed density h ( r ) ( r ) w.r. to r of the generalized eigen v alues is asymptotically in n a Dirac δ supp orted on the unit circle ∀ σ 2 . Moreo v er for finite n the marginal condensed densit y w.r. to θ is uniformly distributed on [ − π , π ]. Therefore if the signal-to-noise ratio (e.g. S N R = 1 σ min h =1 ,p ∗ | c h | ) is large enough h ( z ) has lo cal maxima in a neigh b or of eac h ξ j , j = 1 , . . . , p ∗ and this fact can b e ex- ploited to get go o d estimates of ξ j . Ho w ev er usually we ha v e only 4 one realization of the discrete pro cess { a k } , hence w e cannot esti- mate h ( z ) b y a veraging. In [3] a sto chastic p erturbation metho d is prop osed to o v ercome this problem. It is based on the compu- tation of the generalized eigen v alues of man y p encils obtained b y suitable p erturbations of the measured one. The computational burden is therefore relev an t. W e then lo ok for an appro xima- tion of h ( z ) whic h can b e w ell estimated b y a single realization of { a k } . It turns out that the prop osed appro ximation holds for p encils made up b y random matrices whose elemen ts hav e a join t Gaussian distribution. Ho w ever the sp ecific algebraic structure of CEAP , whic h giv es rise to pencils of Hank el random matrices, can b e tak en into account to further reduce the computational burden. Moreo v er it will b e sho wn that the noise con tribution to h ( z ) can b e smo othed out to some exten t simply acting on a parameter of the appro ximant. The pap er is organized as follows. In Section 1 some algebraic and statistical preliminaries are dev elop ed. In Section 2 the closed form approximation of h ( z ) is defined in the general case. In Sec- tion 3 it is sho wn ho w to get a smooth estimate of h ( z ) from the data b y exploiting its closed form appro ximation in the Han- k el case. In Section 4 computational issues are discussed in the Hank el case. Finally in Section 5 some n umerical examples are pro vided. 5 1 Preliminaries Let us consider the p × p complex random p encil G ( z ) = G 1 − z G 0 , z ∈ I C where the elemen ts of < G 0 , = G 0 , < G 1 , = G 1 ha ve a joint Gaussian distribution and < and = denotes the real and imaginary parts. Dropping the dep endence on z for simplifying the notations, let us define G = [ g 1 , . . . , g p ] , g = v ec ( G ) = [ g T 1 , g T 2 , . . . , g T p ] T Moreo ver ∀ z , let us define ˇ g k = [ < g k T , = g k T ] T and ˇ g = [ ˇ g T 1 , ˇ g T 2 , . . . , ˇ g T p ] T . Then ˇ g will ha v e a m ultiv ariate Gaussian distribution with mean µ = E [ ˇ g ] ∈ I R 2 p 2 and co v ariance Σ ∈ I R 2 p 2 × 2 p 2 . W e notice that no indep endence assumption neither b et w een elemen ts of G 0 and G 1 nor b etw een real and imaginary parts is made. Hence this is the most general hypothesis that can b e done ab out the Gaussian distribution of the complex random v ector g , (see [21] for a full discussion of this p oint). Let us consider the QR factorization of G where Q H Q = QQ H = I p where H denotes transposition plus conjugation, R is an upp er triangular matrix and I p is the iden tity matrix of order p . W e then 6 ha ve | det( G ) | 2 = | det( QR ) | 2 = | det( R ) | 2 = Y k =1 ,p | R k k | 2 . W e wan t to compute the condensed densit y of the generalized eigen v alues of the p encil G ( z ) whic h is giv en b y [14,4]: h ( z ) = 1 4 π p ∆ E n log( | det [ G ( z )] | 2 ) o = 1 4 π p ∆ p X k =1 E n log | R k k ( z ) | 2 o . W e are therefore interested on the distribution of | R k k | 2 , k = 1 , . . . , p in order to compute E [log | R k k | 2 ]. T o p erform the QR factorization of the random matrix G w e can use the Gram-Sc hmidt algorithm. If Q = [ q 1 , . . . , q p ] it is given in T able 1. F or k = 1 , . . . , p w k = g k if k > 1 then R ik = q H i g k , i = 1 , . . . , k − 1 w k = w k − P k − 1 i =1 R ik q i end R kk = q w H k w k q k = w k R kk end T able 1 The Gram-Sc hmidt algorithm 7 W e notice that | R k k | = R k k and R 2 k k = g H k g k , if k = 1 g H k I p − P k − 1 i =1 q i q H i g k , if k > 1 where q i are functions of g j , j = 1 , . . . , i . Therefore, denoting by ˜ g k = { ˇ g 1 , . . . , ˇ g k − 1 } w e ha v e that R 2 11 is a quadratic form in Gaussian v ariables R 2 k k , k > 1 , conditioned on ˜ g k , is a quadratic form in Gaussian v ariables . Moreo ver let us denote b y e k the k − th column of I p and let b e E k = e k ⊗ I 2 p then µ k = E T k µ, Σ k = E T k Σ E k are the mean v ector and co v ariance matrix of ˇ g k . Then we ha v e Lemma 1 F or k = 1 and for k > 1 , c onditione d on ˜ g k , R 2 k k is distribute d as P n r =1 λ ( k ) r χ 2 ν r ( δ r ) , n = 2 p , and χ 2 ν r ( δ r ) ar e indep en- dent, wher e 2( p − k + 1) = P n r =1 ν r , λ ( k ) r ar e the distinct eigen- values of Σ 1 / 2 k R ( A k )Σ 1 / 2 k with multiplicity ν r , u ( k ) i , i = 1 , . . . , n ar e the c orr esp onding eigenve ctors, δ r = P ( r ) ( u ( k ) i ) T Σ − 1 / 2 k µ k ) 2 , the summation b eing over al l eigenve ctors c orr esp onding to eigen- value λ ( k ) r , A k = ( I p − k − 1 X i =1 q i q H i ) 8 and R ( A k ) = < ( A k ) −= ( A k ) = ( A k ) < ( A k ) with −= ( A k ) = = ( A k ) T is the r e al isomorph of A k . pro of. A k = < ( A k ) + i = ( A k ) is hermitian and idemp oten t b e- cause q i are orthonormal v ectors. Therefore rank( A k ) = p − k + 1 and rank( R ( A k )) = 2( p − k + 1) b ecause the eigenv alues of R ( A k ) are those of A k with m ultiplicity 2. As A k dep ends only on q i , i = 1 , . . . , k − 1 which in turn dep end only on g i , i = 1 , . . . , k − 1 it follo ws that, conditioned on ˜ g k , R ( A k ) is a con- stan t matrix. Moreo ver g H k A k g k = ˇ g T k R ( A k ) ˇ g k as can b e easily c heck ed. Let us define the v ariables x = ( U ( k ) ) T Σ − 1 / 2 ˇ g k . W e ha v e ˇ g T k R ( A k ) ˇ g k = x T Λ ( k ) x where Λ ( k ) is the diagonal matrix of eigen- v alues of Σ 1 / 2 k R ( A k )Σ 1 / 2 k . Only m k = 2( p − k + 1) eigen v alues are not zero and w e can assume that they are the first m k . There- fore R 2 k k = P m k i =1 λ ( k ) i x 2 i is a quadratic form in Gaussian v ectors of dimension m k . The thesis follo ws e.g. b y [16, ch.29,sec.4]. 2 Corollary 1 If Σ = I 2 p 2 and µ = 0 , R 2 k k is distribute d as χ 2 2( p − k +1) pro of. As Σ = I 2 p 2 the eigenv alues of Σ 1 / 2 k R ( A k )Σ 1 / 2 k are those of R ( A k ) which are 1 with m ultiplicit y 2( p − k + 1) and 0 with m ultiplicity 2( k − 1). As µ = 0, δ i = 0. Remem b ering that the 9 χ 2 1 ( δ i ) app earing in the previous Lemma are indep endent, the thesis follo ws b y the additivity prop ert y of χ 2 distribution . 2 Remark. The Corollary follows also b y Bartlett’s decomposition of a i.i.d. zero mean Gaussian random matrix [11]. 2 Closed form approximation of h ( z ) Unfortunately w e cannot use the easy result stated in the Corol- lary b ecause in the case of interest the matrix G ( z ) has a mean differen t from zero and a co v ariance structure dep ending on z . By Lemma 1 w e kno w that R 2 11 is distributed as a linear com bination of non-cen tral χ 2 distributions. It is kno wn that this distribution admits an expansion L ( α , β , τ ) in series of generalized Laguerre p olynomials [16, c h.29,sec.6.3] and the series is uniformly con- v ergent in I R + . More sp ecifically let us denote the generalized Laguerre p olynomial of order m b y L m ( x, α ) = x − ( α − 1) e x m ! ∂ m ∂ x m ( x m + α − 1 e − x ) = m X h =0 c hm x h where c hm = ( − 1) h Γ( α + m ) h !( m − h )!Γ( α + h ) . Then, follo wing [22], we ha v e Lemma 2 The density function of R 2 11 is given by f 1 ( y ) = b 0 y α − 1 e − y /β β α Γ( α ) + y α − 1 e − y /β β α Γ( α ) ∞ X m =1 b m L m ( y /τ , α ) = L ( α, β , τ ) 10 wher e α and β ar e such that the first two moments of R 2 11 ar e the same of the first two moments of the gamma distribution r epr esenting the le ading term of the exp ansion. Mor e over b m ar e univo c al ly determine d by the moments and τ is a fr e e p ar ame- ter. If λ max denotes the maximum eigenvalue of Σ 1 , when τ − 1 > 2( β − 1 − (2 λ max ) − 1 ) the series L ( α, β , τ ) is uniformly c onver gent ∀ y ∈ I R + . If β > λ max then τ = β makes the series to c onver ge uniformly, b 0 = 1 and b m ar e determine d by the first m moments of R 2 11 . pro of. The pro of follo ws b y the results giv en in [22] for the distri- bution of quadratic forms in central normal v ariables which hold true also in the non-cen tral case as can b e easily c heck ed. 2 W e can compute E [log( R 2 11 )] b y Lemma 3 E [log ( R 2 11 )] = b 0 [log β + Ψ( α )] + ∞ X m =1 b m m X h =0 c hm Γ( α + h ) Γ( α ) β τ ! h [log β + Ψ( α + h )] pro of. By Lemma 2 the series L ( α, β , τ ) conv erges uniformly . Therefore term-b y-term in tegration can b e p erformed and the result follo ws b y noticing that, for h = 0 , 1 , . . . 1 β α ∞ Z 0 log( y ) y τ ! h y α − 1 e − y /β dy = Γ( α + h ) β τ ! h [log β + Ψ( α + h )] . 2 11 W e hav e then obtained a closed form expression for E [log( R 2 11 )] as a function of the moments of R 2 11 . By noticing that the same result holds true for the distribution of R 2 k k conditioned on ˜ g k , w e sho w no w ho w to get an appro ximation of E [log( R 2 k k )] , k > 1. Theorem 1 The density function f k ( y ) of R 2 k k c an b e exp ande d in a uniformly c onver gent series of L aguerr e functions f k ( y ) = b ( k ) 0 y α k − 1 e − y /β k β α k k Γ( α k ) + y α k − 1 e − y /β k β α k k Γ( α k ) ∞ X m =1 b ( k ) m L m ( y /τ k , α k ) . When the p ar ameter τ k , that c ontr ols the uniform c onver genc e of the series, c an b e chosen e qual to β k , then b ( k ) 0 = 1 and b ( k ) m , m = 0 , . . . , N dep ends on the first N + 1 moments of R 2 k k . Mor e over E [log ( R 2 k k )] = b ( k ) 0 [log β k + Ψ( α k )] + ∞ X m =1 b ( k ) m m X h =0 c hm Γ( α k + h ) Γ( α k ) β k τ k ! h [log β k + Ψ( α k + h )] . If the series is trunc ate d after N + 1 terms, the appr oximation err or η ( k ) N = E [log ( R 2 k k )] − b ( k ) 0 [log β k + Ψ( α k )] + N X m =1 b ( k ) m m X h =0 c hm Γ( α k + h ) Γ( α k ) β k τ k ! h [log β k + Ψ( α k + h )] is b ounde d by K 1 N +1 α 2 k β α k k Γ( α k ) 2 F 2 ( α k , α k ; 1 + α k , 1 + α k ; K 2 ) + G 3 , 0 0 , 2 − K 2 1 − α k , 1 − α k 0 , − α k , − α k !! 12 wher e K 1 > 0 , K 2 > 0 and 0 < < 1 ar e c onstants, 2 F 2 is a gener alize d hyp er ge ometric function and G 3 , 0 0 , 2 is a Meijer’s G- function. pro of. By Lemma 1, conditioned on ˜ g k , R 2 k k is distributed as a linear combination of non-cen tral χ 2 distributions. Therefore the results obtained for R 2 11 are true and the conditional densit y of R 2 k k giv en ˜ g k , denoted b y f k ( y | ˜ g k ), exists as a function of L 2 [ I R + ]. But denoting b y h ( ˜ g k ; ˜ µ k , ˜ Σ k ) the Gaussian densit y of ˜ g k , the join t densit y f k ( y , ˜ g k ) of R 2 k k and ˜ g k is uniquely sp ecified as f k ( y , ˜ g k ) = f k ( y | ˜ g k ) h ( ˜ g k ) and the marginal w.r. to R 2 k k is f k ( y ) = Z I R 2 p ( k − 1) f k ( y | ˜ g k ) h ( ˜ g k ) d ˜ g k . As the Laguerre functions form a complete system of L 2 ( I R + ) it m ust exist a con v ergent - in L 2 ( I R + ) - Laguerre series representing f k ( y ) whose co efficien t are univ o cally determined b y the momen ts of f k ( y ) whic h are finite [1, Th.4.1] and giv en b y γ m = Z I R 2 p ( k − 1) γ m ( ˜ g k ) h ( ˜ g k ) d ˜ g k , m = 1 , 2 , . . . where γ m ( ˜ g k ) are the momen ts of R 2 k k | ˜ g k . W e sho w no w that this Laguerre expansion is uniformly con vergen t. By Lemma 2 for all ˜ g k it exists a constant 0 < τ ( ˜ g k ) < ∞ suc h that the La- guerre expansion L α ( ˜ g k ) , β ( ˜ g k ) , τ ( ˜ g k ) of f k ( y | ˜ g k ) is uniformly 13 con vergen t in I R + . But then it exists τ k > 0 suc h that τ − 1 k = sup ˜ g k τ − 1 ( ˜ g k ) < ∞ and L α ( ˜ g k ) , β ( ˜ g k ) , τ k ) is also uniformly con vergen t in I R + ∀ ˜ g k . But then for [15, Th.2.3e] it follo ws that the Laguerre expansion L ( α k , β k , τ k ) of f k ( y ) is uniformly con- v ergent in I R + . W e can then in tegrate term-b y-term and w e get E [log ( R 2 k k )] = b ( k ) 0 log β k + Ψ( α k ) + N X m =1 b ( k ) m m X h =0 c hm Γ( α k + h ) Γ( α k ) β k τ k ! h [Ψ( α k + h ) + log β k ] + ∞ Z 0 log( y ) e N ( y ) dy where e N ( y ) = y α k − 1 e − y /β k β α k k Γ( α k ) ∞ X m = N +1 b ( k ) m L m ( y /τ k , α k ) . In [22, eq.(31)] the b ound | e N ( y ) | ≤ K 1 N +1 y α k − 1 e K 2 y β α k k Γ( α k ) , 0 < < 1 , K 2 = − β − 1 k + R τ k (1 + R ) , < R < 1 is giv en where K 1 > 0 , K 2 are constan ts. But then ∞ Z 0 log( y ) e N ( y ) dy ≤ ∞ Z 0 | log( y ) e N ( y ) | dy ≤ K 1 N +1 β α k k Γ( α k ) ∞ Z 0 | log( y ) | y α k − 1 e K 2 y dy = K 1 N +1 β α k k Γ( α k ) · ∞ Z 1 log( y ) y α k − 1 e K 2 y dy − 1 Z 0 log( y ) y α k − 1 e K 2 y dy = G 3 , 0 0 , 2 − K 2 1 − α k , 1 − α k 0 , − α k , − α k ! + 1 α 2 k 2 F 2 ( α k , α k ; 1 + α k , 1 + α k ; K 2 ) . 2 14 Remark. The b ound on the error giv en ab o ve is of little use in practice b ecause the computation of the constan ts K 1 , K 2 is quite in volv ed as they dep end on all momen ts. Ho w ever, by Corollary 1 w e kno w that when Σ = I 2 p 2 and µ = 0 the expansion terminates after the first term and b ( k ) 0 = 1. Moreo v er from Theorem 1 we kno w that it can happ en that the co efficients b ( k ) k , k = 0 , . . . , N are determined b y the first N + 1 momen ts only . Therefore by con tinuit y w e can conjecture that the first term of the expansion pro vides most of the information in the general case and therefore the truncation error should b e small. This conjecture is strongly supp orted by n umerical evidence as discussed in Section 5. W e can no w pro v e the main theorem Theorem 2 If Q ( z ) R ( z ) is the QR factorization of G ( z ) , u ( z ) = 1 p E { log ( | det[ G 1 − z G 0 ] | 2 ) } = 1 p p X k =1 E n log | R k k ( z ) | 2 o = 1 p p X k =1 b ( k ) 0 ( z )[log β k ( z ) + Ψ( α k ( z ))]+ ∞ X m =1 b ( k ) m ( z ) m X h =0 c hm Γ( α k ( z ) + h ) Γ( α k ( z )) β k ( z ) τ k ( z ) h [log β k ( z ) + Ψ( α k ( z ) + h )] . Mor e over u ( z ) ≈ ˜ u ( z ) = 1 p p X k =1 [log β k ( z ) + Ψ( α k ( z ))] and | u ( z ) − ˜ u ( z ) | ≤ 1 p p X k =1 η ( k ) 1 ( z ) . 15 pro of: By Theorem 1 w e can appro ximate the density function f k ( y ) of R 2 k k b y the first term divided by b ( k ) 0 of its Laguerre expansion i.e. by y α k − 1 e − y /β k β α k k Γ( α k ) . This is a consisten t approximation b ecause this normalized first term is a Γ densit y with parameters α k , β k . But then the cor- resp onding appro ximation of E [log ( R 2 k k )] is log β k + Ψ( α k ) and then w e ha v e ˜ u ( z ) = 1 p p X k =1 [log[ β k ( z )] + Ψ[ α k ( z )] . The other statemen ts are ob vious consequences of Theorem 1. 2 In order to compute the condensed densit y h ( z ) we hav e to tak e the Laplacian of u ( z ). As differentiation can b e a v ery unstable pro cess, when w e mak e use of the first order appro ximation of u ( z ), w e can expect that ev en a small appro ximation error in u ( z ) can produce a large error in h ( z ). Ho wev er, in practice w e ha ve to appro ximate the Laplacian b y finite differences b y defining a square grid ov er the region of I R 2 whic h the unkno wn complex n umbers ξ j are supp osed to b elong to. This pro vides an implicit regularization metho d if the grid size is prop erly c hosen as a function of the appro ximation error of u ( z ). W e hav e Theorem 3 If sup I C k u ( z ) − ˜ u ( z ) k ≤ ε and if z = x + iy and the L aplacian op er ator is appr oximate d by 16 ˆ ∆ u ( x, y ) = 1 δ 2 [ u ( x − δ, y ) + u ( x + δ, y ) + u ( x, y − δ ) + u ( x, y + δ ) − 4 u ( x, y )] on a squar e grid with mesh size δ wher e δ ( ε ) = C ε 1 / 4 , C c onstant, then k ˆ ∆ ˜ u − ∆ u k = O ( ε 1 / 4 ) and this is the b est p ossible appr oximation achievable. pro of. By T a ylor expansion of u ( x ± δ, y ± δ ) ab out ( x, y ) w e get | ˆ ∆ u ( z ) − ∆ u ( z ) | = O ( δ 2 ) and sup I C | ˆ ∆ u ( z ) − ∆ u ( z ) | = k ˆ ∆ u − ∆ u k = O ( δ 2 ) . But u ( z ) = ˜ u ( z ) + η ( z ) hence k ˆ ∆ ˜ u − ∆ u k = k ˆ ∆ u − ∆ u − ˆ ∆ η k ≤ O ( δ 2 ) + 5 ε δ 2 . F or fixed ε this error b ecomes unbounded as δ → 0. How ev er b y c ho osing δ ( ε ) such that δ ( ε ) → 0 and ε δ ( ε ) → 0 for ε → 0 w e get k ˆ ∆ ˜ u − ∆ u k → 0 as ε → 0 Lo oking for a mesh size of the form δ ( ε ) = C ε a suc h that the terms O ( δ 2 ) and 5 ε δ 2 are balanced, we get O ( C 2 ε 2 a ) = O 5 ε C 2 ε 2 a ! whic h implies a = 1 4 . In [13] it is pro v ed that this b ound is the b est p ossible for all approximation errors η ( z ) suc h that k η k ≤ ε . 2 17 As a final remark w e notice that for computing h ( z ) we could start from the real isomorph R ( G ) = V R − V I V I V R ∈ I R n × n . of G instead than from G . The follo wing prop osition holds ([14, Theorem 5.1]): Prop osition 1 If G = V R + i V I , V R , V I ∈ I R p × p , then | det( G ) | 2 = det( R ( G )) Let R ( G ) = ˇ Q ˇ R b e the QR factorization of R ( G ). Then ha ve | det( G ) | 2 = det R ( G ) = Y k =1 ,n ˇ R k k and h ( z ) = 1 4 π p ∆ E n log( | det [ G ( z )] | 2 ) o = 1 4 π p ∆ n X k =1 E n log ˇ R k k ( z ) o = 1 4 π n ∆ n X k =1 E n log ˇ R 2 k k ( z ) o . It will b e sho wn in Section 4 how ev er that this expression of h ( z ) is not conv enien t from the computational p oint of view. 18 3 Smo oth estimate of the condensed densit y in the Hank el case W e w an t to sho w no w that we can exploit the closed form ex- pression of the condensed density to smo oth out the noise con- tribution to h ( z ). This allows us to get a go o d estimate of p ∗ and ξ j , j = 1 , . . . , p ∗ , - whic h is the nonlinear most difficult part of CEAP - from a single realization of the measured pro cess { a k } . W e first notice that by appro ximating the densit y of R 2 k k b y a Γ density with parameters α k , β k , the mean and v ariance of R 2 k k are appro ximated resp ectiv ely b y α k β k and α k β 2 k and, if b ( k ) 0 = 1, w e ha ve exactly γ 1 = α k β k , γ 2 = α k β 2 k + ( α k β k ) 2 . Ho wev er w e kno w, b y the pro of of Theorem 1, that γ m = Z I R 2 p ( k − 1) γ m ( ˜ g k ) h ( ˜ g k ) d ˜ g k , m, 1 , 2 , . . . (3) where γ m ( ˜ g k ) are the momen ts of R 2 k k | ˜ g k . The first t w o of them are giv en b y ([19]) γ 1 ( ˜ g k ) = tr [(Σ k + 2 µ k µ T k ) R ( A k )] γ 2 ( ˜ g k ) = 2 tr [(Σ k + 2 µ k µ T k ) R ( A k )Σ k R ( A k )] + γ 2 1 ( ˜ g k ) where µ k = E [ ˇ g k ] and Σ k = cov ( ˇ g k ). When G = G 1 − z G 0 is an Hank el matrix and the elemen ts of G 0 and G 1 are normally distributed with v ariance σ 2 as stated in the In tro duction, it is 19 easy to pro ve that the cov ariance matrix of g k do es not dep end on k and it is a tridiagonal matrix Z with 1 + | z | 2 on the main diagonal and − z and z on the secondary ones. If z = x + iy it turns out that ∀ k the cov ariance matrix of ˇ g k is Σ k = σ 2 R ( Z ) where R ( Z ) is a 2 × 2 blo ck tridiagonal matrix giv en b y R ( Z ) = ( − x, 1 + | z | 2 , − x ) ( y , 0 , − y ) ( − y , 0 , y ) ( − x, 1 + | z | 2 , − x ) where ( a, b, c ) denotes a tridiagonal matrix with b on the main diagonal, a and c on the low er and upp er diagonals resp ectiv ely . But then we ha v e γ 1 ( ˜ g k ) = σ 2 tr [ R ( Z ) R ( A k )] + 2 tr [ µ k µ T k R ( A k )] γ 2 ( ˜ g k ) = 2 σ 4 tr [ R ( Z ) R ( A k ) R ( Z ) R ( A k )] + 4 σ 2 tr [( µ k µ T k ) R ( A k ) R ( Z ) R ( A k )] + γ 2 1 ( ˜ g k ) where the dep endence on ˜ g k is only in R ( A k ). By p erforming the in tegration in eq. (3) w e get γ 1 = σ 2 c + d, γ 2 = σ 4 a + σ 2 b + γ 2 1 where a = 2 Z I R 2 p ( k − 1) tr [ R ( Z ) R ( A k ) R ( Z ) R ( A k )] h ( ˜ g k ) d ˜ g k b = 4 Z I R 2 p ( k − 1) tr [( µ k µ T k ) R ( A k ) R ( Z ) R ( A k )] h ( ˜ g k ) d ˜ g k 20 c = Z I R 2 p ( k − 1) tr [ R ( Z ) R ( A k )] h ( ˜ g k ) d ˜ g k d = 2 Z I R 2 p ( k − 1) tr [ µ k µ T k R ( A k )] h ( ˜ g k ) d ˜ g k . But then we ha v e Theorem 4 If the density of R 2 k k is appr oximate d by a Γ den- sity with p ar ameters α k , β k such that the first two moments of R 2 k k c oincides with those of the appr oximant then β k is a nonde- cr e asing function of σ 2 and α k is a nonde cr e asing function of 1 σ 2 if k µ k k 2 2 σ 2 > E [ tr ( R ( Z ) R ( A k ))] 2 E [ ˜ µ T k R ( A k ) ˜ µ k ] wher e ˜ µ k = µ k k µ k k 2 and E denotes exp e c- tation w.r. to h ( ˜ g k ) . pro of. β k = γ 2 − γ 2 1 γ 1 = σ 2 σ 2 a + b σ 2 c + d , α k = γ 2 1 γ 2 − γ 2 1 = σ 2 c + d 2 σ 4 a + σ 2 b Deriving these expressions resp ectiv ely with resp ect to σ 2 and to ρ = d σ 2 where d is assumed fixed and σ 2 is v ariable, w e get ∂ β k ∂ σ 2 = bd + aσ 2 (2 d + cσ 2 ) ( d + cσ 2 ) 2 , ∂ α k ∂ ρ = 2 ad 2 ( ρ + c ) + bd ( ρ 2 − c 2 )) ( ad + bρ ) 2 . But Z and A k are positive semidefinite matrices. Therefore R ( Z ) and R ( A k ) are also p ositiv e semidefinite b ecause their eigen v al- ues are the same of those of Z and A k with m ultiplicity 2. Re- mem b ering that if X , Y are p ositiv e semidefinite matrices tr ( X Y ) n ≥ 0 , n = 1 , 2 , . . . , it follo ws that a ≥ 0 , b ≥ 0 , c ≥ 0 , d ≥ 0 because the exp ectation of a nonnegative quan tity is nonnegativ e. It fol- 21 lo ws that ∂ β k ∂ σ 2 ≥ 0 . Moreov er ∂ α k ∂ ρ ≥ 0 if ρ 2 − c 2 = 4 σ 4 E [ tr ( µ k µ T k R ( A k ))] 2 − c 2 = 4( µ T k µ k ) 2 σ 4 E [ ˜ µ T k R ( A k ) ˜ µ k ] 2 − c 2 > 0 . The thesis follo ws by noticing that pr ob { ˜ µ T k R ( A k ) ˜ µ k > 0 } = 1. In fact R ( A k ) is a random pro jector and the random quadratic form in the deterministic nonzero v ector ˜ µ k can b e zero only if ˜ µ k is orthogonal to the random eigen vectors v 2 k − 1 , . . . , v 2 p of R ( A k ) corresp onding to nonzero eigen v alues. As this ev en t has probabilit y zero, E [ ˜ µ T k R ( A k ) ˜ µ k ] > 0. 2 The idea is then to use the parameters β k as smo othing parame- ters and α k as signal-related parameters. By fixing β k = σ 2 β , ∀ k and taking α k = γ 1 k σ 2 β the v ariance of R 2 k k ( z ) is controlled b y β and h ( z ) can b e estimated by ˆ h ( z ) ∝ p X k =1 ˆ ∆ Ψ ˆ γ 1 k ( z ) σ 2 β (4) where ˆ ∆ is the discrete Laplacian and ˆ γ 1 k ( z ) is an estimate of γ 1 k ( z ). In the follo wing w e assume that the v alue ˆ R 2 k k ( z ) - ob- tained b y the QR factorization of a realization of G ( z ) corre- sp onding to a giv en set of observ ations { a k } - is an estimate of the mo de of R 2 k k ( z ) and therefore ˆ R 2 k k ( z ) = β k ( α k − 1) 22 (see e.g [16][ch.17]). Then w e get ˆ γ 1 k ( z ) σ 2 β = ˆ R 2 k k ( z ) σ 2 β + 1 . F rom a qualitative p oint of view, increasing β has the effect to make larger the supp ort of all mo des of h ( z ) and to low er their v alue b ecause h ( z ) is a probabilit y density . Hence the noise- related mo des are lik ely to b e smo othed out by a sufficien tly large β . Ho w ever a v alue of β to o large can result in a lo w resolution sp ectral estimate. 4 Computational issues in the Hank el case T o estimate h ( z ) on a lattice w e m ust compute the QR factoriza- tion of G ( z ) for all v alues z in the lattice. This requires O ( m 2 p 3 ) flops if the lattice is square of size m . How ev er we notice that G ( z ) = U 1 − z U 0 = U ( E 1 − z E 0 ) where U = a 0 a 1 . . . a p a 1 a 2 . . . a p +1 . . . . . . a p − 1 a p . . . a 2 p − 1 ∈ I C p × p +1 (5) 23 do es not dep end on z and E 0 = [ e 1 . . . e p ] ∈ I C p +1 × p , E 1 = [ e 2 . . . e p +1 ] ∈ I C p +1 × p and e k is the k − th column of the identit y matrix of order p + 1. If U = QR is the QR factorization of U where Q ∈ I C p × p is unitary and R ∈ I C p × p +1 is upp er trap ezoidal, then the QR factorization of G ( z ) can b e obtained simply b y reducing to upp er triangular form b y unitary transformations the Hessemberg matrix C ( z ) = R ( E 1 − z E 0 ) ∈ I C p × p . This is the only task that m ust be p erformed for eac h z . By using Giv ens rotations this can b e p erformed in O ( p ) flops. The total cost of the QR factorization of G ( z ) in the lattice reduces then to O ( m 2 p + p 3 ) flops. Finally we notice that if we start from R ( G ) = ˇ Q ˇ R , C ( z ) is a 2 × 2 blo c k matrix with Hessem b erg diagonal blo cks and triangular off-diagonal ones. Therefore it can not be transformed to triangular form in O (2 p ) flops. 5 Numerical results In this section some exp erimen tal evidence of the claims made in the previous sections is giv en. T o appreciate the goo dness of the appro ximation to the densit y 24 of R 2 k k pro vided b y the truncated Laguerre expansion, N = 4 · 10 6 indep enden t realizations a ( r ) k , k = 1 , . . . , n, r = 1 , . . . , N of the r.v. a k w ere generated from the complex exponentials mo del with p ∗ = 5 comp onen ts giv en b y ξ = h e − 0 . 1 − i 2 π 0 . 3 , e − 0 . 05 − i 2 π 0 . 28 , e − 0 . 0001+ i 2 π 0 . 2 , e − 0 . 0001+ i 2 π 0 . 21 , e − 0 . 3 − i 2 π 0 . 35 i c = [6 , 3 , 1 , 1 , 20] , n = 74 , p = 37 , σ = 0 . 5 . The matrices U ( r ) 0 , U ( r ) 1 based on a ( r ) k w ere computed. The matrix U ( r ) 1 − z U ( r ) 0 with z = cos(1) + i 0 . 8 w as formed, its QR decom- p osition and the first 10 empirical momen ts ˆ γ j w ere computed. Estimates of the first 10 co efficients of the Laguerre expansion w ere then computed by ([20]) ˆ α k = ˆ γ 2 1 ˆ γ 2 − ˆ γ 2 1 , ˆ β k = ˆ γ 2 − ˆ γ 2 1 ˆ γ 1 ˆ b ( k ) h = ( − 1) h Γ( ˆ α k ) h X j =0 ( − 1) j h j ˆ γ h − j Γ( ˆ α k + h − j ) , ˆ γ 0 = 1 , h = 1 , . . . , 10 The one term and ten terms approximations of the density w ere then computed and compared with the empirical density of R 2 k k for k = 1 , . . . , p . The results are giv en in fig.1. In the top left part the real part of the signal and of the data are plotted. In the top righ t part the L 2 norm of the difference b et w een the empirical densit y of R 2 k k , k = 1 , . . . , p computed b y Mon teCarlo simulation and its appro ximation obtained by truncating the series expan- sion of the densit y after the first term and after the first 10 terms is giv en. In the b ottom left part the densit y of R 2 k k , k = 36 , 25 appro ximated b y the first term of its series expansion and the empirical density are plotted. In the b ottom righ t part the den- sit y of R 2 k k , k = 36 , appro ximated b y the first 10 terms of its series expansion and the empirical densit y are plotted. It can b e noticed that the first order appro ximation is quite go o d ev en if it b ecome w orse for large k . The choice σ = 0 . 5 is justified b y the fact that this v alue is in the range of v alues used in the examples b elo w. Ho wev er the same kind of conclusions can b e dra wn for ev ery SNR . T o appreciate the adv antage of the closed form estimate ˆ h ( z ) with resp ect to an estimate of the condensed densit y obtained b y Mon teCarlo sim ulation an exp erimen t w as p erformed. N = 100 indep enden t realizations of the r.v. generated ab ov e w ere consid- ered. W e notice that the frequencies of the 3 r d and 4 th comp o- nen ts are closer than the Nyquist frequency (0 . 21 − 0 . 20 = 0 . 01 < 1 /n = 0 . 0135). Hence a sup er-resolution problem is in v olv ed in this case. Two v alues of the noise s.d. σ w ere used σ = 0 . 2 , 0 . 8 . An estimate of h ( z ) w as computed on a square lattice of dimen- sion m = 100 b y ˆ h ( z ) ∝ N X r =1 p X k =1 ˆ ∆ Ψ R ( r ) k k ( z ) 2 σ 2 β + 1 where R ( r ) ( z ) is obtained b y the QR factorization of the matrix 26 U ( r ) 1 − z U ( r ) 0 . In the top part of fig.2 the estimate of h ( z ) obtained b y Monte Carlo simulation is plotted. In the b ottom part the smo othed estimates ˆ h ( z ) for σ = 0 . 2 and β = 5 n based on a single realization was plotted. In fig.3 the results obtained with σ = 0 . 8 and β = 5 n are shown. W e notice that by the prop osed metho d w e get an improv ed qualitativ e information with resp ect to that obtained b y replicated measures. This is an imp ortan t feature for applications where usually only one data set is measured. W e also notice that when σ = 0 . 2 the probabilit y to find a ro ot of P ( z ) in a neigh b or of ξ j is larger than the probabilit y to find it elsewhere. This is no longer true when σ = 0 . 8 ev en if the signal-related complex exp onentials are well separated. In the following we will sa y that the complex exp onen tial mo del is identifiable if this last case o ccurs and it is strongly iden tifiable if the first case o ccurs. Therefore if the model is iden tifiable the signal-related complex exp onen tials are w ell separated but the relativ e imp ortance of some of them - measured b y the v alue of the lo cal maxima of h ( z ) - is not larger than the relativ e imp ortance of some noise-related complex exp onen tials. Therefore in this case w e need some a- priori information ab out the lo cation of the ξ j in order to separate signal-related comp onents from the noise-related ones. W e w an t no w to show b y means of a small sim ulation study the qualit y of the estimates of the parameters p ∗ , ξ and c whic h can b e obtained from ˆ h ( z ). T o this aim the follo wing estimation 27 pro cedure was used: • the lo cal maxima of ˆ h ( z ) are computed and sorted in decreasing magnitude • a clustering metho d is used to group the lo cal maxima into t wo groups. If the mo del is strongly iden tifiable the signal- related maxima are larger than the noise-related ones, therefore a simple thresholding is enough to separate the t w o groups. A go o d threshold is the one that pro duces an estimate of s k whic h b est fits the data a k in L 2 norm as the noise is assumed to b e Gaussian • the cardinalit y ˆ p of the class with largest av erage v alue is an estimate of p ∗ • the lo cal maxima ˆ ξ j , j = 1 , . . . , ˆ p of the class with largest a v er- age v alue are estimates of ξ j , j = 1 , . . . , p ∗ . Of course if ˆ p 6 = p ∗ some ξ j are not estimated or vicev ersa some spurious complex exp onen tials are found • c is estimated b y solving the linear least squares problem ˆ c = ar g min x k V x − a k 2 2 , a = [ a 0 , . . . , a n − 1 ] T where V ∈ I C n × ˆ p is the V andermonde matrix based on ˆ ξ j , j = 1 , . . . , ˆ p The bias, v ariance and mean squared error (MSE) of eac h pa- rameter separately w ere estimated. N = 500 indep enden t data sets a ( r ) of length n were generated b y using the mo del param- 28 eters giv en ab o ve and σ = 0 . 2. F or r = 1 , . . . , N the condensed densit y estimate ˆ h ( r ) ( z ) w as computed on a square lattice of di- mension m = 100. The estimation pro cedure is then applied to eac h of the ˆ h ( r ) ( z ) , r = 1 , . . . , N and the corresp onding estimates ˆ ξ ( r ) j , ˆ c ( r ) j , j = 1 , . . . , ˆ p ( r ) of the unkno wn parameters were obtained. If the estimate ˆ p ( r ) w as less than the true v alue p ∗ , the corresp ond- ing data set a ( r ) w as discarded. In T able 2 the bias, v ariance and MSE of eac h parameter includ- ing p ∗ is reported. They w ere computed b y c ho osing among the ˆ ξ ( r ) j , j = 1 , . . . , ˆ p ( r ) ≥ p ∗ the one closest to eac h ξ k , k = 1 , . . . , p ∗ and the corresp onding ˆ c ( r ) j . If more than one ξ k is estimated b y the same ˆ ξ ( r ) j the r − th data set a ( r ) w as discarded. In the case considered all the data sets were accepted. As a second example the reconstruction of a piecewise constan t function from noisy F ourier co efficien ts is considered. The prob- lem is stated as follows. Given a real interv al [ − π , π ] and N + 1 n umbers − π ≤ l 1 < l 2 . . . < l N +1 ≤ π , let F b e the class of functions defined as F ( t ) = N X j =1 w j χ j ( t ) , where χ j ( t ) = 1 if t ∈ [ l j , l j +1 ] 0 otherwise , 29 and the w j are real weigh ts. The problem consists in reconstruct- ing a function F ( t ) ∈ F from a finite n um b er of its noisy F ourier co efficien ts a k = 1 2 π Z − π F ( t ) e itk dt + k = s k + k , k = 0 , . . . , n − 1 , where k is a complex Gaussian, zero mean, white noise, with v ariance σ 2 . W e are lo oking for a solution which is not affected b y Gibbs artifact and can cop e, stably , with the noise. The basic observ ation is the following. The unp erturb ed momen ts s k are giv en b y s k = 1 2 π Z − π F ( t ) e itk dt = N X j =1 w j sin( β j k ) k exp( iλ j k ) , where β j = l j +1 − l j 2 , λ j = l j +1 + l j 2 . Then consider the Z -transform of the sequence { s k } s ( z ) = N X j =1 w j β j + 1 2 i ln z − e il j z − e il j +1 whic h conv erges if | z | > 1 and is defined b y analytic contin uation if | z | ≤ 1. W e notice that s ( z ) has a branc h p oin t at ξ j = e il j , j = 1 , . . . , N + 1 where l j are the discon tinuit y p oints of F ( t ). It w as prov ed in [17,18] that the c j are strong attractors of the p oles of the P ade’ approximan ts 30 [ q , r ] f ( z ) to the noisy Z -transform f ( z ) = ∞ X k =0 a k z − k when q , r → ∞ and q /r → 1. It is easy to sho w that the p oles of [ q , r ] f ( z ) are the generalized eigenv alues of the p encil ( U 1 , U 0 ) built from the data a k , k = 0 , . . . , n − 1 whose condensed densit y is h ( z ). Therefore, as sho wn in [17,18] the lo cal maxima of h ( z ) are concen trated along a set of arcs whic h ends in the branc h p oin ts ξ j and on a set of arcs close to the unit circle. As the branc h p oin ts are strong attractors for the P ade’ p oles, the probabilit y to find a p ole in a neigh b or of a branch p oin t is larger than elsewhere, therefore it can be exp ected that the branch p oints corresp ond to the largest lo cal maxima of h ( z ), as far as the SNR is sufficien tly large. In order to compute estimates ˆ l j of l j , it is sufficien t to compute the arguments of the main lo cal maxima of ˆ h ( z ). The w j are then estimated b y taking the median in eac h in terv al [ ˆ l j , ˆ l j +1 ] of the rough estimate of F ( t ) obtained by taking the discrete F ourier transform of a k , k = 0 , . . . , n − 1. The median is in fact robust with resp ect to errors affecting ˆ l j . The metho d was applied to an example considered in [18] where comparisons with other metho ds were also rep orted. In the top left part of fig.4 the original function F ( t ) is plotted. In the top righ t the rough estimate of F ( t ) when S N R = 7 is rep orted where the S N R is measured as the ratio of the standard deviations of 31 { s k } and { k } . In the b ottom parts the condensed densit y and the reconstructed function ˆ F ( t ) are plotted. Lo oking at the con- densed densit y we notice that the mo del is strongly identifiab le, therefore the estimation procedure outlined ab ov e w as applied. In fig.5 the same quan tities as abov e but with S N R = 1 are plotted. In this case the mo del is iden tifiable but not strongly therefore the clustering step does not work. The n um b er of complex exp o- nen tials used to get the reconstruction plotted in fig.5 is ˆ p = 20 and w as found by trial and errors. W e notice that when S N R = 7 w e get an almost p erfect recon- struction, b etter than that rep orted in [18]. When S N R = 1 the reconstruction quality is w orse as exp ected but still comparable with the one rep orted in [18]. References [1] Aba te J., Whitt, W. (1999) Infinite series representations of Laplace transforms of probabilit y density functions for numerical in v ersion, J.Op.R es.So c.Jap an , 42,3 268-285 [2] Barone, P. (2010). Estimation of a new sto chastic transform for solving the complex exp onentials appro ximation problem: computational aspects and applications, Digital Signal Pr o c ess. , 20,3 724-735 [3] Barone, P. (2008). A new transform for solving the noisy complex exp onentials appro ximation problem, J. Appr ox. The ory 155 127. [4] Barone, P. (2005). On the distribution of p oles of P ade’ appro ximants to the Z-transform of complex Gaussian white noise, J. Appr ox. The ory 132 224-240. 32 [5] Barone, P. (2003). Orthogonal p olynomials, random matrices and the n umerical inv ersion of Laplace transform of positive functions. J.Comp. Applie d Math. 155, 2 307-330. [6] Barone, P. (2003). Random matrices in Magnetic Resonance signal pro cessing. The 8-th SIAM Conference on Applied Linear Algebra [7] Barone, P., Ramponi, A., Sebastiani, G. (2001). On the numerical in version of the Laplace transform for Nuclear Magnetic Resonance relaxometry . Inverse Pr oblems 17 77-94. [8] Barone, P., March, R. (2001). A no v el class of Pad ´ e based metho d in sp ectral analysis. J. Comput. Metho ds Sci. Eng. 1 185-211. [9] Barone, P., March, R. (1998). Some prop erties of the asymptotic lo cation of p oles of Pad ´ e approximan ts to noisy rational functions, relev ant for modal analysis. IEEE T r ans. Signal Pr o c ess. 46 2448-2457. [10] Bar one, P., Ramponi, A. (2000). A new estimation method in mo dal analysis. IEEE T r ans. Signal Pr o c ess. 48 1002-1014. [11] Bar tlett, M.S. (1933). On the theory of statistical regression. Pr o c. R. So c. Edinb. 53 260-283. [12] Deift, P. (2000). Ortho gonal p olynomials and r andom matric es: a Riemann- Hilb ert appr o ach , American Mathematical So ciet y , Providence, RI. [13] Gr oetsch, C.W. (1991). Differentiation of approximately sp ecified functions. The A meric an Mathematic al Monthly 98,9 847-850. [14] Hammersley, J.M. (1956). The zeros of a random p olynomial, Pr o c. Berkely Symp. Math. Stat. Pr ob ability 3rd,2 89-111. [15] Henrici, P. (1977) . Applie d and c omputational c omplex analysis vol.I , John Wiley and Sons, New Y ork. [16] Johnson, N.L., Kotz, S. (1970). Continuous univariate distributions, vol.2 , John Wiley and Sons, New Y ork. 33 [17] Mar ch, R., Barone, P. (1998). Application of the Pad ´ e metho d to solve the noisy trigonometric moment problem: some initial results. SIAM J. Appl. Math. 58 324-343. [18] Mar ch, R., Barone, P. (2000). Reconstruction of a piecewise constan t function from noisy F ourier co efficien ts by Pad ´ e metho d. SIAM J. Appl. Math. 60 1137-1156. [19] Ma thai, A.M., Pro vost,B. (1977). Quadr atic forms in r andom variables , Marcel Dekk er, New Y ork. [20] Sanjel, D., Balakrishnan, N. (2008). A Laguerre p olynomial approximation for a go o dness-of-fit test for exp onen tial distribution based on progressiv ely censored data, J. Stat. Comput. Simul. 78 503-513. [21] v an den Bos, A. (1995). The multiv ariate complex normal distribution - A generalization, IEEE T r ans. Inf. The ory 41,2 537-539. [22] Tzirit as G.G. (1987). On the distribution of p ositiv e-definite Gaussian quadratic forms, IEEE T r ans. Inf. The ory 33,6 895-906. 34 Fig. 1. T op left: real part of the signal (solid) and data (dotted) with σ = 0 . 5; top righ t: L 2 norm of the difference betw een the empirical density of R 2 kk , k = 1 , . . . , 30 computed by Mon teCarlo simulation with 4 · 10 6 samples and its approximation ob- tained b y truncating the series expansion of the densit y after the first term (dotted) and after the first 10 terms (solid); b ottom left: densit y of R 2 kk , k = 36 , approxi- mated b y the first term of its series expansion (solid), empirical density (dotted); b ottom right: densit y of R 2 kk , k = 36 , appro ximated by the first 10 terms of its series expansion (solid), empirical densit y (dotted). 35 Fig. 2. T op: Monte Carlo estimate of the condensed density when σ = 0 . 2; b ottom: estimate of the condensed densit y b y the closed form appro ximation with β = 14 . 8. 36 Fig. 3. T op: Monte Carlo estimate of the condensed density when σ = 0 . 8; b ottom: estimate of the condensed density by the closed form appro ximation with β = 237. 37 p ∗ bias ( ˆ p ) s.d. ( ˆ p ) M S E ( ˆ p ) 5 0.0000 0.0000 0.0000 ξ j bias ( ˆ ξ j ) s.d. ˆ ξ j M S E ( ˆ ξ j ) j = 1 -0.2796 - 0.8606i -0.0008 + 0.0001i 0.0000 0.0000 j = 2 -0.1782 - 0.9344i 0.0036 - 0.0010i 0.0000 0.0000 j = 3 0.3090 + 0.9510i 0.0057 - 0.0064i 0.0031 0.0001 j = 4 0.2487 + 0.9685i -0.0058 + 0.0110 0.0019 0.0002 j = 5 -0.4354 + 0.5993i -0.0047 + 0.0054i 0.0108 0.0002 c j bias (ˆ c j ) s.d. (ˆ c j ) M S E ( ˆ c j ) j = 1 6.0000 0.0440 0.1238 0.0173 j = 2 3.0000 -0.0407 0.0688 0.0064 j = 3 1.0000 0.0441 0.0736 0.0074 j = 4 1.0000 -0.6767 0.0808 0.4644 j = 5 20.0000 -0.1007 0.2574 0.0764 T able 2 Statistics of the parameters ˆ p , ˆ ξ j , j = 1 , . . . , p ∗ and ˆ c j , j = 1 , . . . , p ∗ 38 Fig. 4. T op left: original function; top right: rough estimate of F ( t ) when the mo- men ts are affected by a Gaussian noise with S N R = 7. Bottom left: estimate of the condensed density by the closed form approximation; b ottom right: reconstruction of the original function. 39 Fig. 5. T op left: original function; top right: rough estimate of F ( t ) when the mo- men ts are affected by a Gaussian noise with S N R = 1. Bottom left: estimate of the condensed density by the closed form approximation; b ottom right: reconstruction of the original function. 40

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment