A Generalized Least Squares Matrix Decomposition

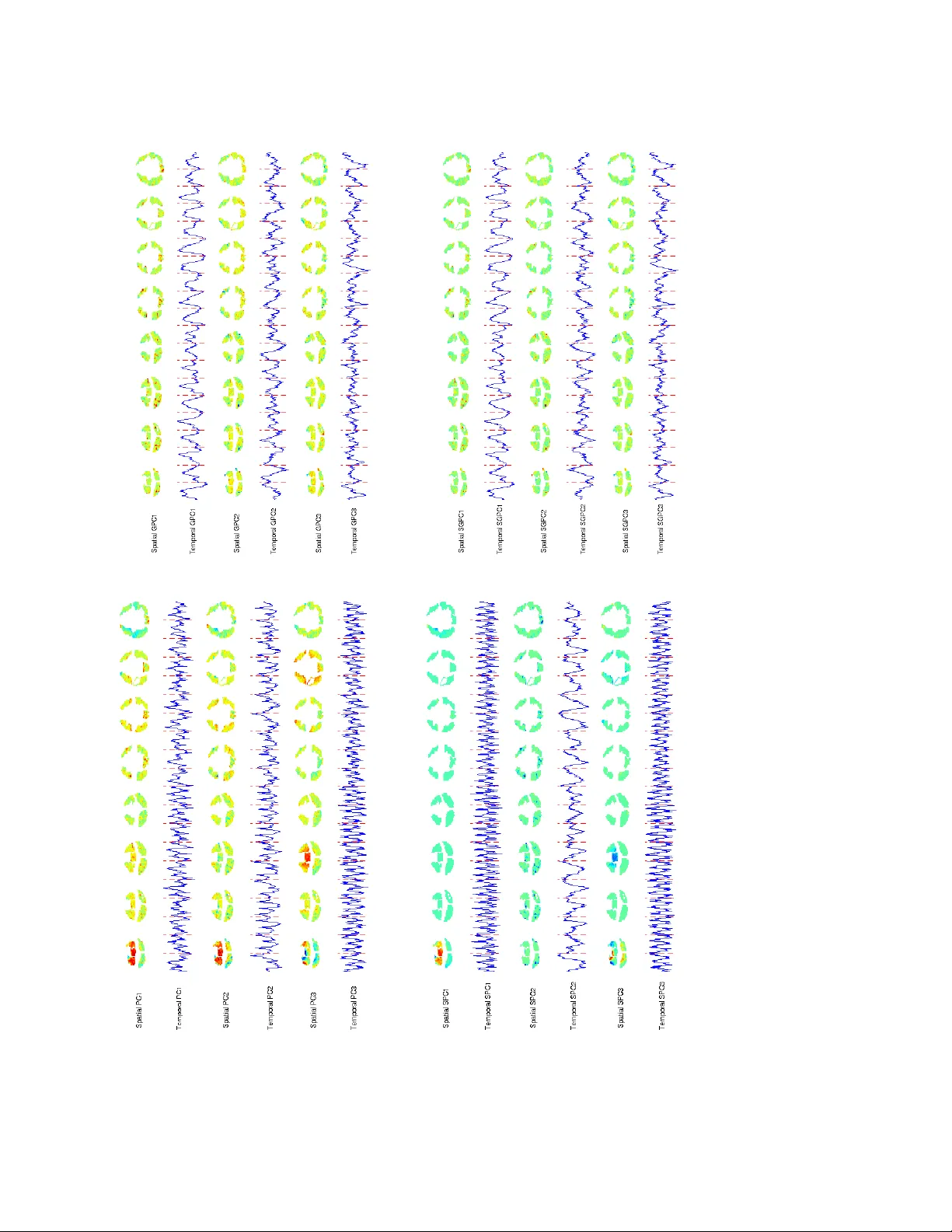

Variables in many massive high-dimensional data sets are structured, arising, for example, from measurements on a regular grid, as in imaging and time series, or from spatial-temporal measurements, as in climate studies. Classical multivariate techniques …

Authors: Genevera I. Allen, Logan Grosenick, Jonathan Taylor