On the trade-off between complexity and correlation decay in structural learning algorithms

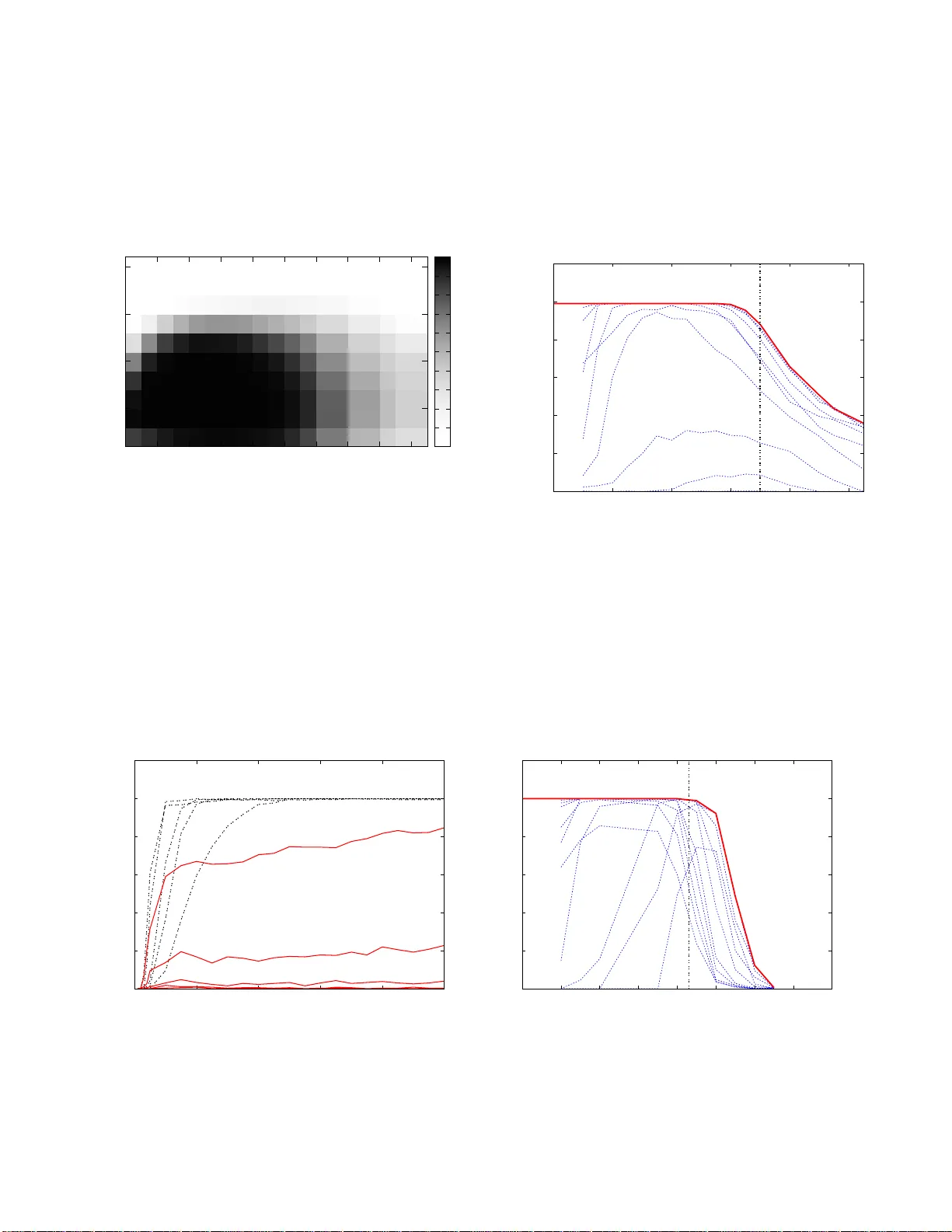

We consider the problem of learning the structure of Ising models (pairwise binary Markov random fields) from i.i.d. samples. While several methods have been proposed to accomplish this task, their relative merits and limitations remain somewhat obsc…

Authors: Jose Bento, Andrea Montanari