Sparse Signal Recovery with Temporally Correlated Source Vectors Using Sparse Bayesian Learning

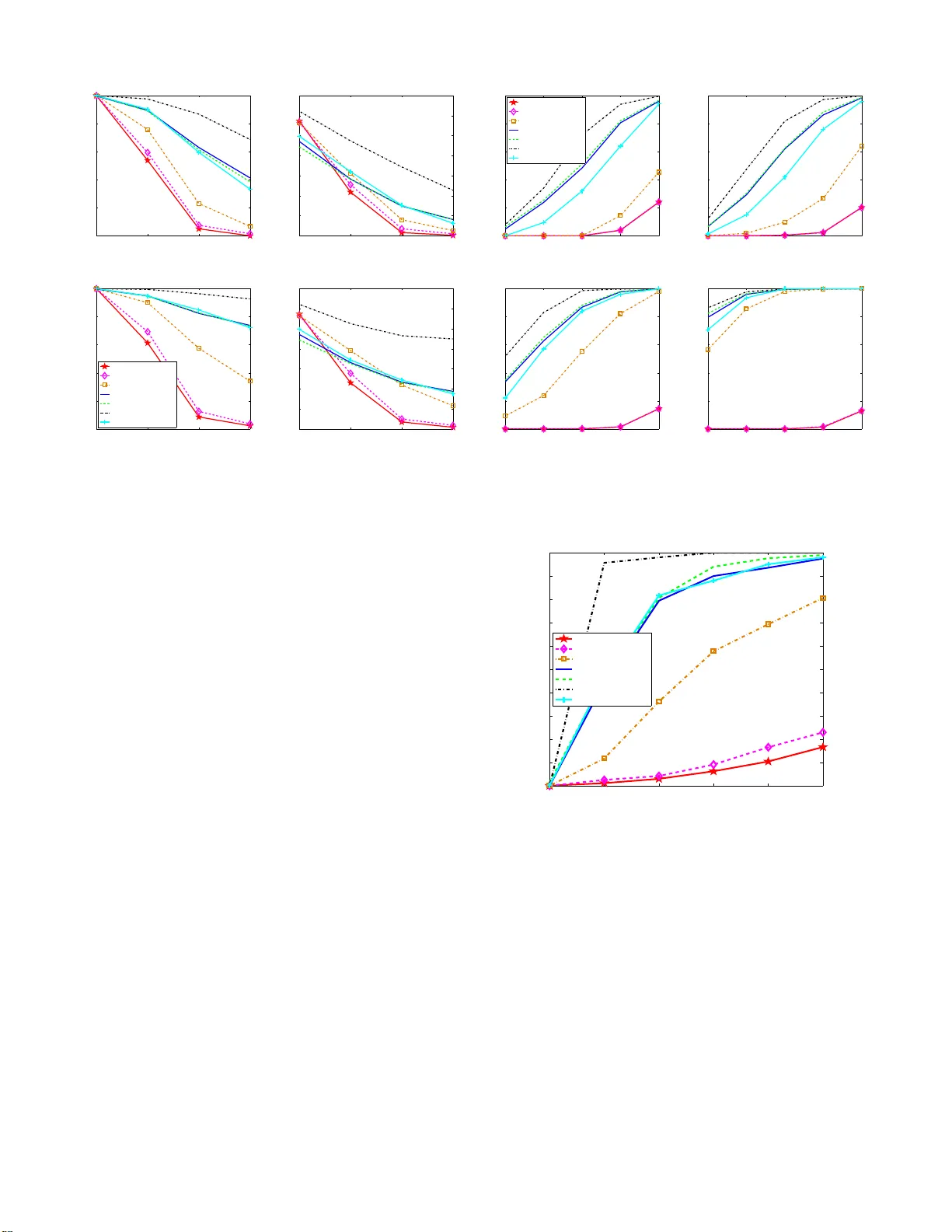

We address the sparse signal recovery problem in the context of multiple measurement vectors (MMV) when elements in each nonzero row of the solution matrix are temporally correlated. Existing algorithms do not consider such temporal correlations and …
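The abstract above is truncated, so it does not describe the authors' recovery algorithm. The sketch below only illustrates the measurement setting it names. It builds an MMV problem Y = ΦX in which X is row-sparse and each nonzero row is a temporally correlated sequence. Several choices here are assumptions and are not taken from the abstract: the noiseless model, the Gaussian Φ, and the use of a first-order autoregressive (AR(1)) process with coefficient `beta` as the correlation model. The helper names `ar1_row`, `make_mmv`, and `lag1_corr` are hypothetical.

```python
import math
import random


def ar1_row(length, beta, rng):
    """One nonzero row of X (assumed AR(1) model): x[t] = beta*x[t-1] + sqrt(1-beta^2)*w[t].

    The sqrt(1 - beta^2) scaling keeps each entry at unit variance, so beta is
    the lag-1 correlation between consecutive entries of the row.
    """
    row = [rng.gauss(0.0, 1.0)]
    scale = math.sqrt(1.0 - beta * beta)
    for _ in range(length - 1):
        row.append(beta * row[-1] + scale * rng.gauss(0.0, 1.0))
    return row


def make_mmv(n_meas, n_atoms, n_vectors, k, beta, seed=0):
    """Build Phi (M x N), a row-sparse X (N x L) with k nonzero rows, and Y = Phi X (noiseless)."""
    rng = random.Random(seed)
    # Hypothetical dictionary: i.i.d. Gaussian entries with variance 1/M.
    phi = [[rng.gauss(0.0, 1.0 / math.sqrt(n_meas)) for _ in range(n_atoms)]
           for _ in range(n_meas)]
    # All L measurement vectors share the same support (the MMV assumption).
    support = sorted(rng.sample(range(n_atoms), k))
    x = [[0.0] * n_vectors for _ in range(n_atoms)]
    for i in support:
        x[i] = ar1_row(n_vectors, beta, rng)
    # Y = Phi X, computed column by column of X.
    y = [[sum(phi[m][n] * x[n][t] for n in range(n_atoms)) for t in range(n_vectors)]
         for m in range(n_meas)]
    return phi, x, y, support


def lag1_corr(row):
    """Sample lag-1 autocorrelation of a sequence (a rough check of temporal correlation)."""
    mean = sum(row) / len(row)
    centered = [v - mean for v in row]
    num = sum(a * b for a, b in zip(centered[:-1], centered[1:]))
    den = sum(v * v for v in centered)
    return num / den


if __name__ == "__main__":
    phi, x, y, support = make_mmv(n_meas=20, n_atoms=50, n_vectors=4, k=5, beta=0.9)
    print("support:", support)
    long_row = ar1_row(5000, 0.9, random.Random(0))
    print("lag-1 correlation of a long row:", round(lag1_corr(long_row), 3))
```

In this setting, an algorithm that treats the L columns of Y independently, or that treats the entries within each nonzero row of X as uncorrelated, ignores the structure that `beta` controls. This is the gap the abstract points to.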

Authors: Zhilin Zhang, Bhaskar D. Rao