How the result of graph clustering methods depends on the construction of the graph

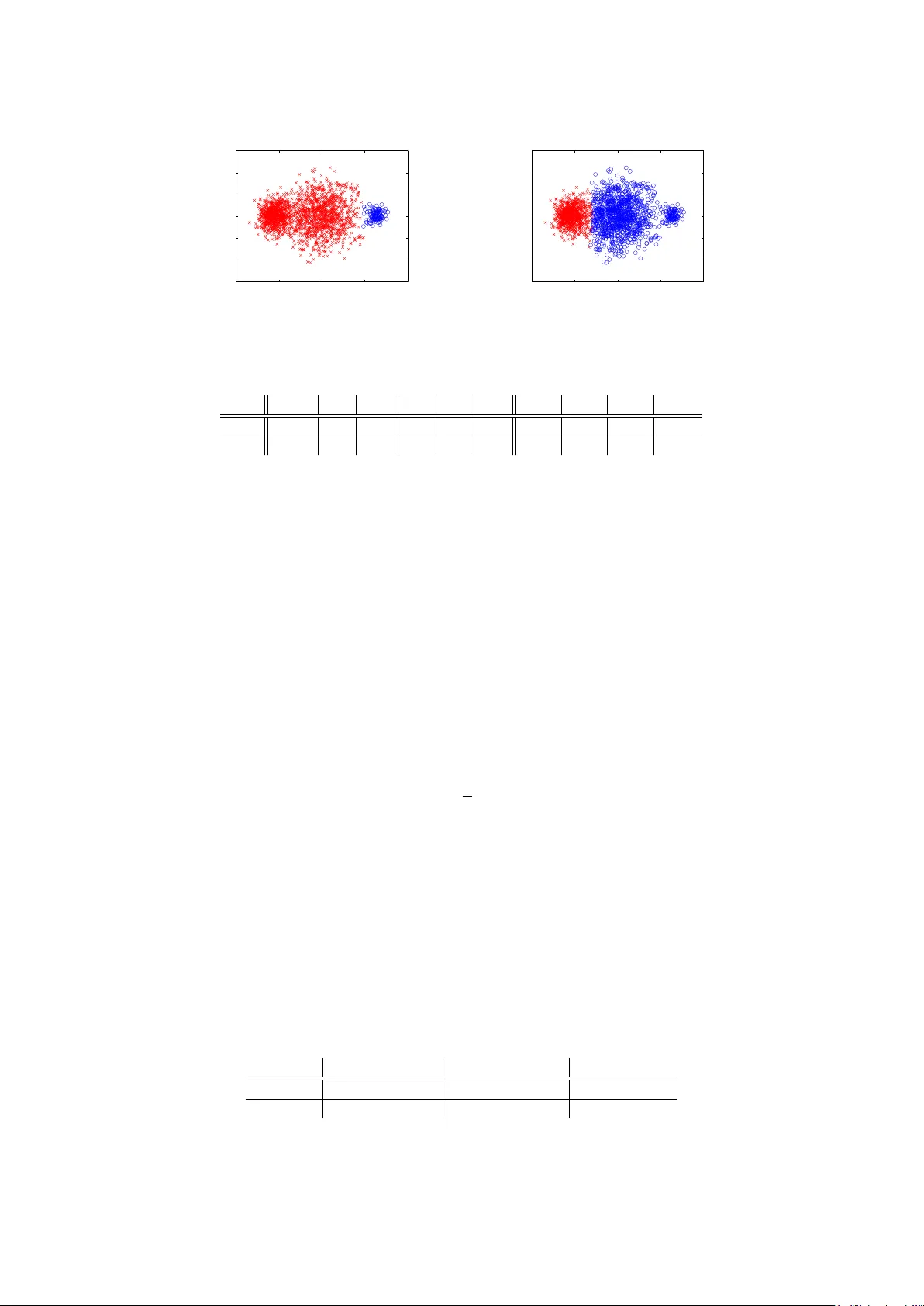

We study the scenario of graph-based clustering algorithms such as spectral clustering. Given a set of data points, one first has to construct a graph on the data points and then apply a graph clustering algorithm to find a suitable partition of the …

Authors: Markus Maier, Ulrike von Luxburg, Matthias Hein