The lowest-possible BER and FER for any discrete memoryless channel with given capacity

We investigate properties of a channel coding scheme leading to the minimum-possible frame error ratio when transmitting over a memoryless channel with rate R>C. The results are compared to the well-known properties of a channel coding scheme leading…

Authors: Johannes B. Huber, Thorsten Hehn

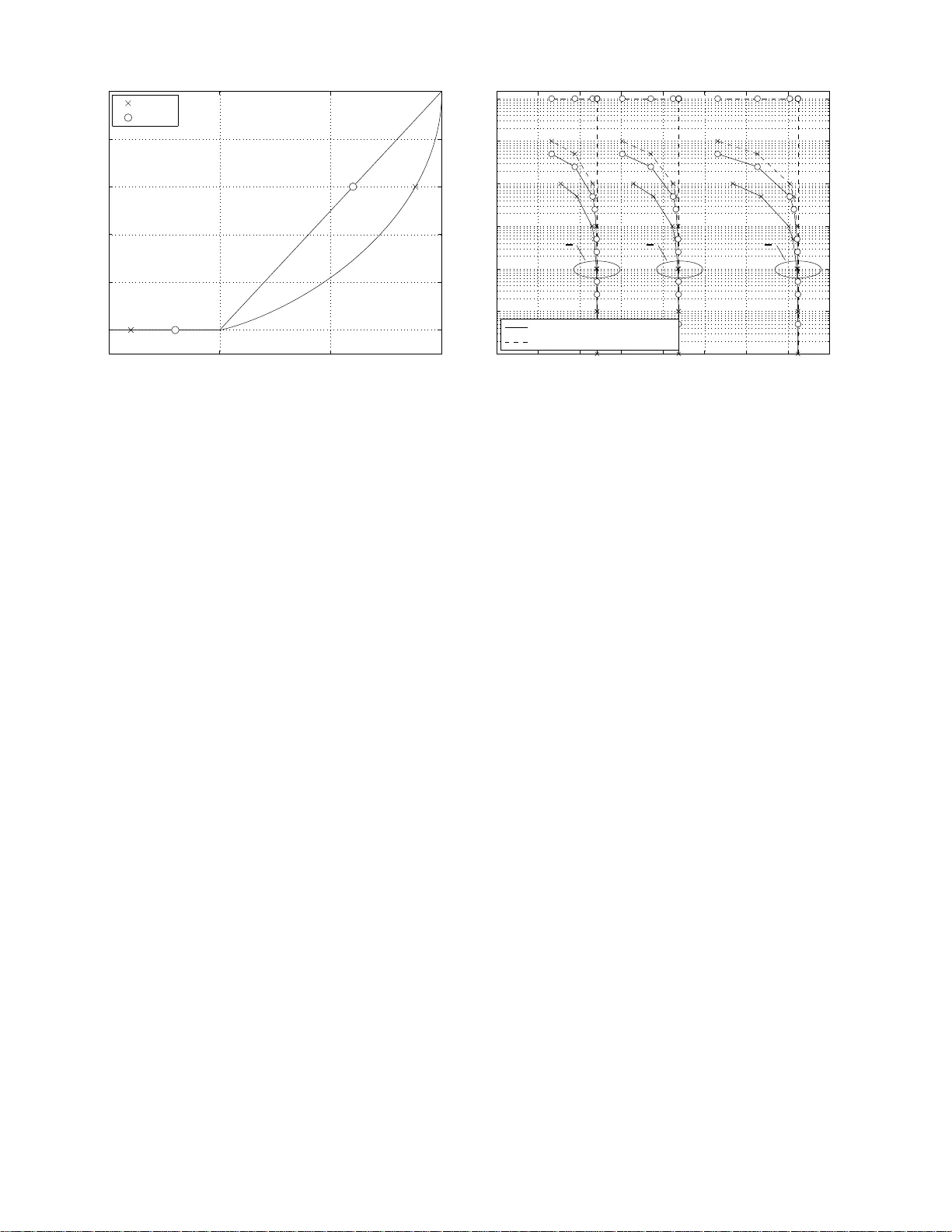

Johannes B. Huber and Thorsten Hehn
Institute for Information Transmission, University of Erlangen-Nuremberg, Germany

Abstract

We investigate properties of a channel coding scheme leading to the minimum-possible frame error ratio when transmitting over a memoryless channel with rate $R > C$. The results are compared to the well-known properties of a channel coding scheme leading to minimum bit error ratio. It is concluded that these two optimization requests are contradicting. A valuable application of the derived results is presented.

I. INTRODUCTION

We consider coded data transmission over a memoryless channel with given capacity $C$ for the case that the rate $R$ of the channel code exceeds the channel capacity. This is a typical situation for a component code in a concatenated coding scheme [1]. The properties of a channel coding scheme with minimum average bit error ratio (BER) have been discussed in several papers (e.g. [2] and references therein). In this paper, we focus on schemes with minimum average frame error ratio (FER).

The work presented in this paper is threefold. As a first contribution, we discuss a coding scheme optimal w.r.t. FER and use rate-distortion theory to derive the properties of the end-to-end channel. Second, we present a possible application of these findings. This is a lower bound on the rate when both the channel capacity and the tolerated average frame error ratio are specified. This leads to the most important and third contribution, the insight that minimum BER and minimum FER are contradicting targets which cannot be obtained by a single channel coding scheme. We show the consequences for the BER when the channel coding scheme is optimized w.r.t. the FER and vice versa.

The paper is organized as follows.
Section II provides necessary definitions and describes the transmission system. In Section III we repeat the converse to the channel coding theorem, which identifies a lower bound for reliable transmission. Section IV briefly repeats the properties of a channel coding scheme with minimum BER, which were derived in [2]. In Section V ideal channel coding w.r.t. FER is introduced. An application as well as performance results for the non-optimized error ratio are shown in Section VI.

II. TRANSMISSION SETUP AND DEFINITIONS

An information source delivers source symbols $u[\ell]$, $\ell = 1, \dots, k$, from a binary alphabet. These symbols are realizations of the random variables $U[\ell]$, which are assumed to be independent of each other and identically, uniformly distributed. In other words, $H(U[\ell]) = 1$ holds, where $H(\cdot)$ denotes the entropy of a random variable. In the following, we denote the $j$-th element of the set of possible source words by $u^{(j)}$, $j = 1, \dots, 2^k$, and $u[\ell]$, $\ell = 1, \dots, k$, denotes the $\ell$-th entry in a vector $u$ of length $k$.

An encoder of rate $R = k/n$ is used to transform binary source vectors $u$ of length $k$ into vectors $x$. These vectors contain channel symbols and are of length $n$. The vectors $x$ are transmitted over the channel and received as vectors $y$ of length $n$, cf. Figure 1. Note that we do not assume any special properties of the channel except for being discrete and memoryless (discrete memoryless channel, DMC) and having the capacity $C$. A corresponding channel decoder uses the received vector $y$ to generate soft-output estimates of $u$, denoted by $v$. Binary quantization of $v$ yields $\hat{u}$.

As stated above, we are interested in channel coding schemes that minimize the BER and FER, respectively, when measured over the end-to-end channel.
Here, the end-to-end channel corresponds to the channel transmitting $u$ to $v$ if soft-decision output is required and to $\hat{u}$ otherwise, cf. Figure 1.

Fig. 1. Transmission scenario for signaling over a DMC of capacity $C$. (Block diagram: binary source, encoder with $R = k/n$, DMC with capacity $C$, decoder, sink; the signals are $u$, $x$, $y$, $v$, and $\hat{u}$, and the end-to-end channel leads from $u$ to $v$ or $\hat{u}$.)

We define the average bit error ratio for a given position $\ell$ as

$$\mathrm{BER}_\ell = \Pr(\hat{U}[\ell] \neq U[\ell]), \quad \ell = 1, \dots, k,$$

and the average bit error ratio in a codeword as

$$\mathrm{BER} = \frac{1}{k} \sum_{\ell=1}^{k} \mathrm{BER}_\ell = \mathrm{E}_\ell\{\mathrm{BER}_\ell\}.$$

Additionally, a tolerated average bit error ratio $\mathrm{BER}_T$ is introduced. This ratio is defined in the same way as BER, as it is also measured between $u$ and $\hat{u}$, but $\mathrm{BER}_T$ is a user-defined threshold. Henceforth, we tacitly assume $\mathrm{BER}_T \le 0.5$. Similarly, we define the average frame error ratio as the probability that the received frame differs from the transmitted one, i.e.

$$\mathrm{FER} = \Pr(U \neq \hat{U}),$$

where two vectors are equal if all of their elements are equal. As with $\mathrm{BER}_T$, we consider the tolerated average frame error ratio $\mathrm{FER}_T$.

$I(U; V)$ denotes the total mutual information between the vectors (frames) $u$ and $v$, while $I(U[\ell]; V[\ell])$ is the mutual information between an individual pair of input and output symbols. Additionally, we define the average mutual information transmitted in a frame of $k$ symbols,

$$\bar{I}(U; V) = \frac{1}{k} \sum_{\ell=1}^{k} I(U[\ell]; V[\ell]).$$

III. CONVERSE TO THE CHANNEL CODING THEOREM

We repeat the converse to the channel coding theorem as stated in [3, Ch. 4]. This theorem marks the starting point for both our considerations on the lowest BER and FER. In [3, Ch. 4] the transmission of a sequence of source digits is discussed. Note that these digits can represent both single information symbols as well as complete source words.
We represent this distinction by different alphabets and, as a consequence, we denote the length of a sequence of source symbols by $L$. As each digit can be taken from an arbitrary alphabet, we denote the sequence of source digits by a sequence of vectors, $u_1^L = [u_1, \dots, u_L]$. The sequences of channel digits and received digits, both of length $N$, are denoted by $x_1^N = [x_1, \dots, x_N]$ and $y_1^N = [y_1, \dots, y_N]$, respectively. Please note that the cardinalities of the sets of channel input and output variables do not have to be specified; the mutual information $I(X_1^N; Y_1^N)$ is sufficient.

In the following, we consider the general error event that the source digit and the estimated digit do not coincide and denote its probability by $P_e$. Identifying the source digits with alphabets of size $M = 2$ and $M = 2^k$ allows us to deduce information on the average error ratios of interest, i.e. the BER and the FER, respectively.

We start from Equation (4.3.20) in [3] (Fano's inequality), which is a lower bound on the error probability $P_e$ and reads

$$e_M(P_e) \ge \frac{1}{L} H(U_1^L) - \frac{1}{L} I(X_1^N; Y_1^N). \tag{1}$$

Here, $e_M(\cdot)$ is the $M$-ary entropy function, $e_M(p) = e_2(p) + p \log_2(M - 1)$, and $e_2(\cdot)$ is the usual binary entropy function $e_2(p) = -p \log_2(p) - (1 - p) \log_2(1 - p)$. Further, $M$ denotes the size of the symbol alphabet.

Let us first assume that $u_1^L$ is a vector of binary symbols of length $L$, i.e. $L = k$ and $N = n$. In this case, $P_e$ coincides with the BER and $\frac{1}{k} H(U_1^k) = 1$ holds. Together with $I(X_1^n; Y_1^n) \le nC$, which holds because the channel is memoryless and the capacity is the maximum of the mutual information, the well-known lower bound on BER results:

$$e_2(\mathrm{BER}) \ge 1 - \frac{n}{k} C = 1 - \frac{C}{R}. \tag{2}$$

As mentioned above, for a lower bound on FER we regard the source sequence $u$ as one symbol out of a $2^k$-ary alphabet, and the variables $x$ and $y$ represent an entire codeword and received word, respectively. In block coding, each source sequence is encoded into one codeword and subsequent codewords are mutually independent. Therefore, $L = N$ holds and Equation (1) reads

$$e_{2^k}(\mathrm{FER}) \ge \frac{1}{L} H(U_1^L) - \frac{1}{L} I(X_1^L; Y_1^L) = H(U) - I(X; Y).$$

With $H(U) = k$ and $I(X; Y) \le nC$, we obtain the result corresponding to Equation (2) for FER,

$$e_{2^k}(\mathrm{FER}) \ge k - nC = k \left(1 - \frac{C}{R}\right). \tag{3}$$

It is worth mentioning that Equation (2) is a special case of Equation (3) for $k = 1$. In the following, we will show by means of rate-distortion theory that the lower bounds in Equation (2) and Equation (3) can be met with equality by optimized channel coding schemes.

We will denote the average frame error ratio measured in a system optimized w.r.t. minimum BER by $\mathrm{FER}'$. Likewise, the average bit error ratio measured in a system optimized w.r.t. minimum FER will be denoted by $\mathrm{BER}'$.

IV. OBTAINING THE LOWEST POSSIBLE BER FOR A MEMORYLESS CHANNEL WITH GIVEN CAPACITY

We investigate the transmission of data at a rate which exceeds the capacity, i.e. we consider the region where error-free transmission is not possible. Rate-distortion theory [4] states that if an end-to-end average bit error ratio $\mathrm{BER}_T$ is tolerated, a code with rate $R$ and an appropriate decoding rule exists and achieves an average bit error ratio $\mathrm{BER} \le \mathrm{BER}_T$ as long as

$$R \le \frac{C}{1 - e_2(\mathrm{BER}_T)} \tag{4}$$

and if $n \to \infty$. We define a coding scheme (i.e. code, encoder, and decoder) with rate $R = \frac{C}{1 - e_2(\mathrm{BER}_T)}$ to be ideal in terms of the average bit error ratio iff the average bit error ratio meets the tolerated one,

$$\mathrm{BER} = \mathrm{BER}_T = e_2^{-1}\left(1 - \frac{C}{R}\right).$$
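The bound of Equation (2) and the rate limit of Equation (4) are easy to evaluate numerically. The following is a minimal sketch, not part of the original paper: the function names are hypothetical, and the inverse of the binary entropy function on $[0, 0.5]$ is obtained by plain bisection, which is just one of several possible choices.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy function e_2(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def inverse_binary_entropy(h: float, tol: float = 1e-12) -> float:
    """Return p in [0, 0.5] with e_2(p) = h (bisection; e_2 is increasing there)."""
    if not 0.0 <= h <= 1.0:
        raise ValueError("h must lie in [0, 1]")
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if binary_entropy(mid) < h:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def min_ber(capacity: float, rate: float) -> float:
    """Lower bound on BER from Equation (2); zero whenever rate <= capacity."""
    if rate <= capacity:
        return 0.0
    return inverse_binary_entropy(1.0 - capacity / rate)


def max_rate(capacity: float, ber_tolerated: float) -> float:
    """Largest rate allowed by Equation (4) for a tolerated BER_T <= 0.5."""
    denominator = 1.0 - binary_entropy(ber_tolerated)
    return math.inf if denominator <= 0.0 else capacity / denominator


if __name__ == "__main__":
    # Example: signaling at twice the capacity (C/R = 0.5).
    print(min_ber(capacity=0.5, rate=1.0))
```

Under these assumptions, `max_rate(C, min_ber(C, R))` returns `R` again (up to the bisection tolerance), which mirrors the definition of a coding scheme that is ideal w.r.t. BER.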
It was shown in [2] that Equation (4) is met with equality when signaling over a memoryless binary symmetric channel (BSC). This leads to the conclusion that the use of a coding scheme ideal w.r.t. BER results in an end-to-end channel which is a memoryless BSC [2]. For completeness we add that in [2] it is also shown that for an ideal coding scheme w.r.t. BER,

$$\bar{I}(U; \hat{U}) \equiv \bar{I}(U; V) \equiv \frac{1}{k} I(U; V)$$

holds, i.e. soft output has no benefit over hard output and interleaving has no influence on the memoryless sequence of errors.

Fig. 2. Minimum FER for different block lengths and given $C/R$. (Plot of FER versus $k$, with $k$ on a logarithmic axis from $10^0$ to $10^2$; curves for $C/R = 0.1$ ($\circ$) to $C/R = 0.9$ ($\triangleleft$).)

V. OBTAINING THE LOWEST POSSIBLE FER FOR A MEMORYLESS CHANNEL WITH GIVEN CAPACITY

In this section we present the first contribution of this paper. We discuss a channel coding system leading to the end-to-end channel with the lowest possible average frame error ratio. The adaptation of the converse to the channel coding theorem to the FER as the error ratio is given by Equation (3). Figure 2 shows the corresponding lower bound on the FER over $k$ for different values of $C/R$. The lower bound on $C/R$ specified by Equation (3) becomes particularly interesting when $k$ approaches large values. Then,

$$\frac{C}{R} \ge \lim_{k \to \infty} \left[ 1 - \frac{e_2(\mathrm{FER})}{k} - \frac{\log_2(2^k - 1)}{k} \, \mathrm{FER} \right] = 1 - \mathrm{FER},$$

or equivalently,

$$\mathrm{FER} \ge 1 - \frac{C}{R}. \tag{5}$$

Again, we show by means of rate-distortion theory that this bound can be met with equality, which proves that a coding scheme meeting this FER bound must indeed exist [5]. To this end, let us first show that there exists a channel meeting inequality (5) with equality, namely the $M$-ary symmetric channel ($M$-SC) with $M = 2^k$. We assume that $u$ and $\hat{u}$ denote the input and output symbols of that channel, respectively.
The transition probabilities of this end-to-end channel are

$$\Pr(\hat{U} = u^{(j)} \mid U = u^{(j)}) = 1 - \mathrm{FER} \quad \forall j \in \{1, \dots, 2^k\}$$

and

$$\Pr(\hat{U} = u^{(j)} \mid U = u^{(i)}) = \frac{\mathrm{FER}}{2^k - 1} \quad \forall i, j \text{ with } j \neq i.$$

With the uniform input distribution $\Pr(U) = [2^{-k} \dots 2^{-k}]$, the mutual information per channel use is calculated by

$$I(U; \hat{U}) = H(U) - H(U \mid \hat{U}) = k + \sum_{i=1}^{2^k} \sum_{j=1}^{2^k} \Pr(\hat{U} = u^{(j)} \mid U = u^{(i)}) \Pr(U = u^{(i)}) \log_2 \frac{\Pr(\hat{U} = u^{(j)} \mid U = u^{(i)}) \Pr(U = u^{(i)})}{\sum_{i'} \Pr(\hat{U} = u^{(j)} \mid U = u^{(i')}) \Pr(U = u^{(i')})}, \tag{6}$$

which can be simplified to

$$I(U; \hat{U}) = k - e_{2^k}(\mathrm{FER}). \tag{7}$$

Equation (7) denotes the mutual information for the transmission of a whole vector. Normalized to one binary symbol it reads

$$\frac{I(U; \hat{U})}{k} = 1 + \frac{1 - \mathrm{FER}}{k} \log_2(1 - \mathrm{FER}) + \frac{\mathrm{FER}}{k} \log_2 \frac{\mathrm{FER}}{2^k - 1},$$

and thus for $k \to \infty$:

$$\frac{I(U; \hat{U})}{k} = 1 - \mathrm{FER}.$$

Considering the data processing theorem in the form $\frac{I(U; \hat{U})}{k} \le \frac{C}{R}$ and Equation (5) in the form $C/R \ge 1 - \mathrm{FER}$ allows us to conclude that

$$\frac{I(U; \hat{U})}{k} = \frac{C}{R} = 1 - \mathrm{FER} \quad \text{for } k \to \infty.$$

Again, by making use of rate-distortion theory and considering the fact that a distinct test channel exists [5], we conclude that the lower bound provided in Equation (5) can be met with equality.

In the following, we will review the properties of this channel. In the case of an error, the $2^k$-ary symmetric channel maps the input to all incorrect outputs with equal probability. We therefore conclude that if a frame error occurs, the average bit error ratio within these frames is 0.5. Hence, the resulting end-to-end channel corresponds to a fully bursty channel. More strictly speaking, a block-erasure channel with average erasure probability FER and infinite frame length meets the bound $C/R \ge 1 - \mathrm{FER}$ with equality.

This finding allows us to establish a relation between the capacity and the rate when an average frame error ratio $\mathrm{FER}_T$ is tolerated. This relation reads

$$R \le \frac{C}{1 - \mathrm{FER}_T}.$$
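The convergence of the normalized mutual information in Equation (7) towards $1 - \mathrm{FER}$, and the finite-$k$ lower bound on the FER implied by Equation (3), can be checked numerically. The sketch below is an illustration under our own assumptions rather than the authors' code: the function names are hypothetical, $\log_2(2^k - 1)$ is computed as $k + \log_2(1 - 2^{-k})$ to avoid very large integers, and Equation (3) is solved with equality by bisection on the interval where $e_{2^k}(\cdot)$ is increasing.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy function e_2(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def m_ary_entropy(p: float, k: int) -> float:
    """e_M(p) = e_2(p) + p*log2(M - 1) for M = 2**k with k >= 1, in bits."""
    # log2(2**k - 1) = k + log2(1 - 2**-k)
    log2_m_minus_1 = k + math.log1p(-(2.0 ** -k)) / math.log(2.0)
    return binary_entropy(p) + p * log2_m_minus_1


def normalized_mutual_information(fer: float, k: int) -> float:
    """I(U; U_hat)/k of the 2**k-ary symmetric channel, from Equation (7)."""
    return 1.0 - m_ary_entropy(fer, k) / k


def min_fer(c_over_r: float, k: int, tol: float = 1e-12) -> float:
    """Smallest FER compatible with Equation (3) for frame length k."""
    if c_over_r >= 1.0:
        return 0.0
    target = k * (1.0 - c_over_r)
    # e_M is increasing on [0, (M - 1)/M], where it reaches its maximum k.
    lo, hi = 0.0, 1.0 - 2.0 ** -k
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if m_ary_entropy(mid, k) < target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    for k in (1, 10, 100, 1000):
        print(k, min_fer(0.5, k), normalized_mutual_information(0.3, k))
```

For $k = 1$ the result of `min_fer` coincides with the BER bound of Equation (2), and for growing $k$ it approaches $1 - C/R$ from below, in line with Equation (5).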
We define a coding scheme (i.e. code, encoder, and decoder) with rate $R = \frac{C}{1 - \mathrm{FER}_T}$ to be ideal in terms of the average frame error ratio iff the average frame error ratio meets the tolerated frame error ratio $\mathrm{FER}_T$ with equality, $\mathrm{FER} = \mathrm{FER}_T$. We denote the obtained average bit error ratio of such a channel by $\mathrm{BER}'$. There exists a straightforward relation between $\mathrm{BER}'$ and the optimal average frame error ratio FER, which reads

$$\mathrm{BER}' = \frac{1}{2} \left(1 - \frac{C}{R}\right).$$

The capacity of the fully bursty binary (end-to-end) channel, where all errors are part of very long error bursts, can be written as $C = 1 - 2\,\mathrm{BER}'$. This is due to the fact that all errors are concentrated in bursts and within these bursts the average bit error ratio is 0.5. Reliable communication is accomplished by the simple rule of erasing the error bursts at the receiver side. For error detection, e.g. by means of a cyclic redundancy check (CRC), additional redundancy is necessary, but this cost vanishes for $k \to \infty$. As stated in Section IV for the BER case, we observe that soft information has no benefit over hard output if an optimal coding scheme w.r.t. minimum FER is used.

Examples of such fully bursty channels can simply be generated by renewal burst channel models, like the model of Fritchman with a single error state [6]. For given BER, the capacity of such a channel is maximized when the average burst length tends to infinity, and in this limit the capacity equals $1 - 2\,\mathrm{BER}$. This relation exactly corresponds to the situation of bit errors at the output of a coding scheme which is ideal w.r.t. minimum average frame error ratio.

Consider now an end-to-end channel with minimum BER. For $k \to \infty$, the obtained average frame error ratio, denoted by $\mathrm{FER}'$, is given by $\mathrm{FER}' = 0$ iff $\mathrm{BER} = 0$ and $\mathrm{FER}' = 1$ otherwise. When considering blocks of infinite length, every block is erroneous if bit errors are possible in general.
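The gap between the two optimization targets can be made concrete by evaluating both bit error ratios for the same value of $C/R$. The following sketch is only illustrative: the function names are hypothetical, and the finite-$k$ expression $1 - (1 - \mathrm{BER})^k$ for the frame error ratio over a memoryless BSC is added here as an assumption for illustration; the text above only states the limit $k \to \infty$.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy function e_2(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def inverse_binary_entropy(h: float, tol: float = 1e-12) -> float:
    """Return p in [0, 0.5] with e_2(p) = h, found by bisection."""
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if binary_entropy(mid) < h:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def ber_ideal(c_over_r: float) -> float:
    """Minimum BER, Equation (2) met with equality (scheme ideal w.r.t. BER)."""
    return inverse_binary_entropy(max(0.0, 1.0 - c_over_r))


def fer_ideal(c_over_r: float) -> float:
    """Minimum FER for k -> infinity, Equation (5) met with equality."""
    return max(0.0, 1.0 - c_over_r)


def ber_prime(c_over_r: float) -> float:
    """BER' of a scheme ideal w.r.t. FER: half of the bits in erroneous frames."""
    return 0.5 * fer_ideal(c_over_r)


def fer_prime_finite(ber: float, k: int) -> float:
    """Frame error ratio of k independent bit errors (memoryless BSC, finite k)."""
    return 1.0 - (1.0 - ber) ** k


if __name__ == "__main__":
    for c_over_r in (0.1, 0.5, 0.9):
        ber = ber_ideal(c_over_r)
        print(c_over_r, ber, ber_prime(c_over_r),
              fer_ideal(c_over_r), fer_prime_finite(ber, 1000))
```

Because the binary entropy function is concave, $e_2^{-1}(1 - C/R) \le \frac{1}{2}(1 - C/R)$ holds, so `ber_ideal` never exceeds `ber_prime`, while `fer_prime_finite` tends to one for any non-zero BER as `k` grows.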
With the results derived so far, it is straightforward to see that a single channel coding scheme working in the region $R > C$ cannot obtain both the minimum-possible BER and the minimum-possible FER, cf. Figure 3(a).

VI. POSSIBLE APPLICATION

A possible application of the presented results is introduced in this section. We assume binary antipodal signaling (BPSK) over the AWGN channel with a channel code of given rate $R$. A lower bound on the obtainable BER is given in Equation (2), which can be rewritten as

$$\mathrm{BER} \ge e_2^{-1}\left(1 - \frac{C}{R}\right).$$

This relation allows us to generate the curves depicting the optimum BER obtainable by codes of given rate and length approaching infinity. These curves are well known from numerous publications within the area of channel coding and visualize the fundamental limits for transmission at a given rate. In Figure 3(b) these curves are shown for the rates $R = 1/4$, $R = 1/2$, and $R = 3/4$, respectively, and are labeled by BER. Here, the capacity of the channel is specified by the signal-to-noise ratio expressed by $10 \log_{10}(E_b/N_0)$ as usual. In this context, $E_b$ denotes the energy per transmitted bit of information and $N_0$ represents the one-sided spectral noise-power density.

The findings presented in Section V allow us to extend these fundamental curves to scenarios where the FER is used as the performance measure. Here, Equation (5) states the lower bound on the FER which can be reached by code lengths approaching infinity. Figure 3(b) also depicts lower bounds on the frame error ratios for codes of rate $R = 1/4$, $R = 1/2$, and $R = 3/4$. These curves are labeled by FER.

Figure 3(b) also shows the average error ratios $\mathrm{BER}'$ and $\mathrm{FER}'$.
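Curves of this kind can be reproduced with a few lines of code. The sketch below is our own illustration and not the authors' implementation: it assumes unit-amplitude BPSK with equiprobable symbols, the usual conversion $E_s/N_0 = R \cdot E_b/N_0$, and a simple trapezoidal rule for the capacity integral of the binary-input AWGN channel; all function names and the integration settings are hypothetical.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy function e_2(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def inverse_binary_entropy(h: float, tol: float = 1e-12) -> float:
    """Return p in [0, 0.5] with e_2(p) = h, found by bisection."""
    lo, hi = 0.0, 0.5
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if binary_entropy(mid) < h:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def log2_one_plus_exp(x: float) -> float:
    """Numerically stable evaluation of log2(1 + exp(x))."""
    if x > 0.0:
        return (x + math.log1p(math.exp(-x))) / math.log(2.0)
    return math.log1p(math.exp(x)) / math.log(2.0)


def bpsk_awgn_capacity(ebn0_db: float, rate: float, steps: int = 4000) -> float:
    """Capacity (bit per channel use) of BPSK over AWGN at the given Eb/N0."""
    esn0 = rate * 10.0 ** (ebn0_db / 10.0)   # assumption: Es = R * Eb
    sigma2 = 1.0 / (2.0 * esn0)              # noise variance for amplitude 1
    sigma = math.sqrt(sigma2)
    lo, hi = 1.0 - 10.0 * sigma, 1.0 + 10.0 * sigma
    step = (hi - lo) / steps
    total = 0.0
    # C = 1 - E[log2(1 + exp(-2Y/sigma^2))] with Y ~ N(1, sigma^2)
    for i in range(steps + 1):
        y = lo + i * step
        pdf = math.exp(-(y - 1.0) ** 2 / (2.0 * sigma2)) / math.sqrt(2.0 * math.pi * sigma2)
        weight = 0.5 if i in (0, steps) else 1.0
        total += weight * pdf * log2_one_plus_exp(-2.0 * y / sigma2)
    return 1.0 - total * step


def error_ratio_bounds(ebn0_db: float, rate: float) -> dict:
    """Minimum BER and FER (Equations (2) and (5)) and BER' for one Eb/N0 value."""
    c_over_r = min(bpsk_awgn_capacity(ebn0_db, rate) / rate, 1.0)
    fer = 1.0 - c_over_r
    return {"BER": inverse_binary_entropy(fer), "FER": fer, "BER_prime": 0.5 * fer}


if __name__ == "__main__":
    for ebn0_db in (-2.0, -1.0, 0.0, 1.0, 2.0):
        print(ebn0_db, error_ratio_bounds(ebn0_db, rate=0.5))
```

All bounds drop to zero once the capacity reaches the code rate, which for $R = 1/2$ happens slightly above 0 dB under the assumptions stated here.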
The $\mathrm{BER}'$ and $\mathrm{FER}'$ curves illustrate the average bit error ratio for the case that the channel coding system of the end-to-end channel has been optimized for the FER, and the average frame error ratio for the case that the optimization was done for the BER, respectively. This confirms that channel coding schemes being ideal w.r.t. minimum BER and minimum FER have to be designed in quite different ways. In particular, an optimization w.r.t. the BER in situations where a low average frame error ratio is required as well leads to significant performance losses.

Fig. 3. Performance comparisons on BER, $\mathrm{BER}'$, FER and $\mathrm{FER}'$. (a) Visualization of the significant difference between BER and $\mathrm{BER}'$ for coding schemes optimal w.r.t. BER and FER, respectively (BER and $\mathrm{BER}'$ versus $1 - C/R$). (b) BER, $\mathrm{BER}'$, FER and $\mathrm{FER}'$ for coding schemes of given rate $R = 1/4$, $R = 1/2$, and $R = 3/4$ (error ratios on a logarithmic axis versus $10 \log_{10}(E_b/N_0)$; BER ($\times$) and $\mathrm{BER}'$ ($\circ$), FER ($\times$) and $\mathrm{FER}'$ ($\circ$)).

In all cases, the average mutual information of the end-to-end channel is given by $\min(C/R, 1)$. In order to obtain minimum BER, the end-to-end channel is a memoryless BSC, whereas for minimum FER, an end-to-end channel with memory (to be precise, a block-erasure channel) results.

VII. CONCLUSIONS

We considered transmission at rates exceeding the capacity of the underlying channel. Fundamental insight is given in a threefold manner. First, knowledge on a coding scheme leading to an end-to-end channel with minimum average frame error ratio is provided. It turns out that this channel is a block-erasure channel, transmitting frames either correctly or in such a way that no information is transmitted at all.
The average bit error ratio within a frame corresponding to a burst error equals 0.5. Second, it was shown that minimum BER and minimum FER are disparate requests to a channel coding scheme. The third contribution is an application. It is usual in the literature to compare the BER behavior of channel coding schemes to information theoretic bounds; this is now also possible with respect to the FER.

ACKNOWLEDGMENT

The authors want to thank the anonymous reviewers for their valuable comments.

REFERENCES

[1] S. ten Brink. Convergence behavior of iteratively decoded parallel concatenated codes. IEEE Transactions on Communications, 49(10):1727–1737, October 2001.
[2] S. Huettinger, J. B. Huber, R. F. H. Fischer, and R. Johannesson. Soft-output-decoding: Some aspects from information theory. In Proceedings of the International ITG Conference on Source and Channel Coding (SCC), pages 81–89, Berlin, Germany, January 2002.
[3] Robert G. Gallager. Information Theory and Reliable Communication. John Wiley and Sons, 1968.
[4] C. E. Shannon. Coding theorems for a discrete source with a fidelity criterion. IRE National Convention Record, 4:142–163, 1959.
[5] Toby Berger. Rate Distortion Theory: A Mathematical Basis for Data Compression. Prentice-Hall, N.J., January 1971.
[6] B. Fritchman. A binary channel characterization using partitioned Markov chains. IEEE Transactions on Information Theory, 13(2):221–227, April 1967.
