Subspace Pursuit for Compressive Sensing Signal Reconstruction

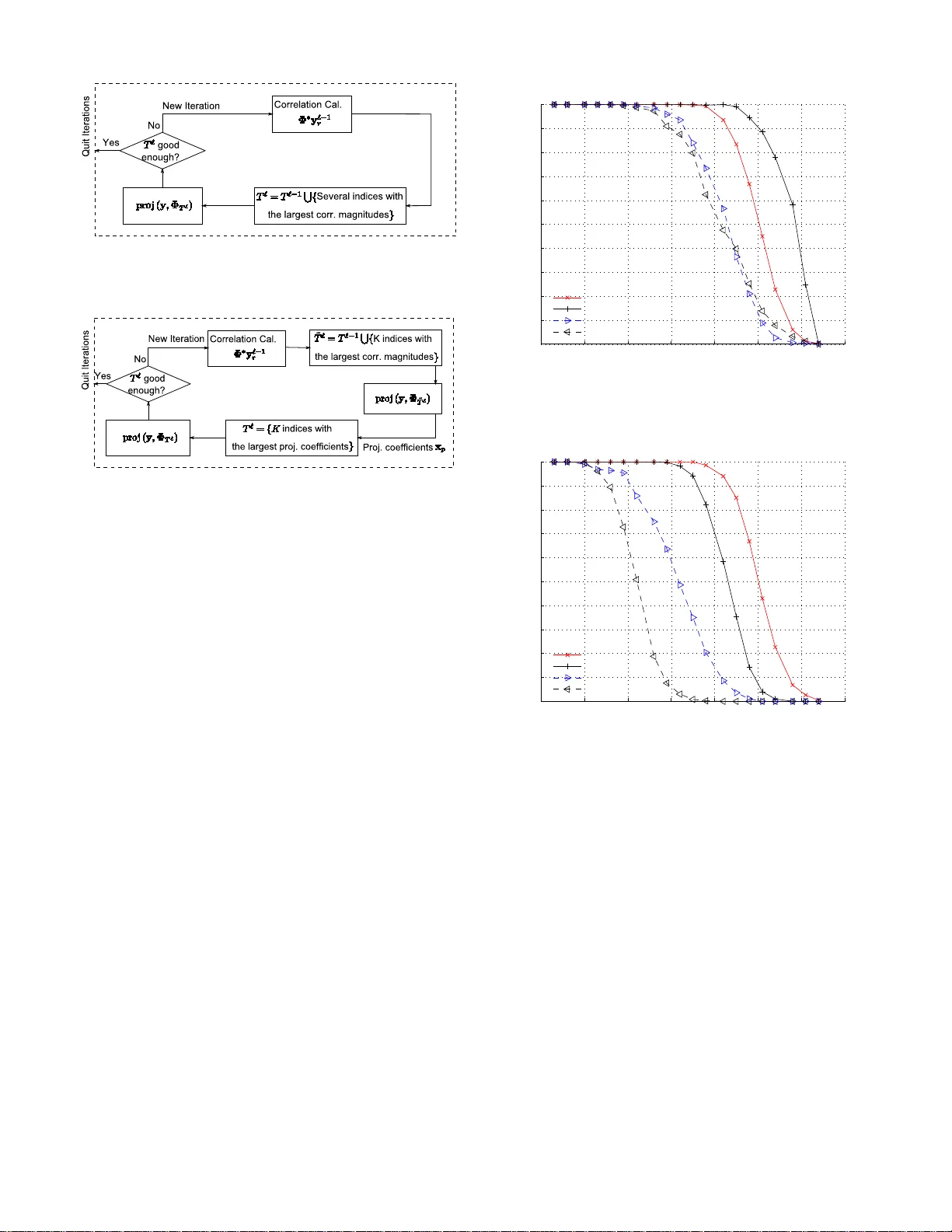

We propose a new method, termed the subspace pursuit algorithm, for reconstructing sparse signals with and without noisy perturbations. The algorithm has two important characteristics: low computational complexity, comparable to that of orthogonal …

Authors: Wei Dai, Olgica Milenkovic