On the inner and outer bounds of 3-receiver broadcast channels with 2-degraded message sets

We consider a broadcast channel with 3 receivers and 2 messages (M0, M1) where two of the three receivers need to decode messages (M0, M1) while the remaining one just needs to decode the message M0. We study the best known inner and outer bounds und…

Authors: Chandra Nair, Zizhou Vincent Wang

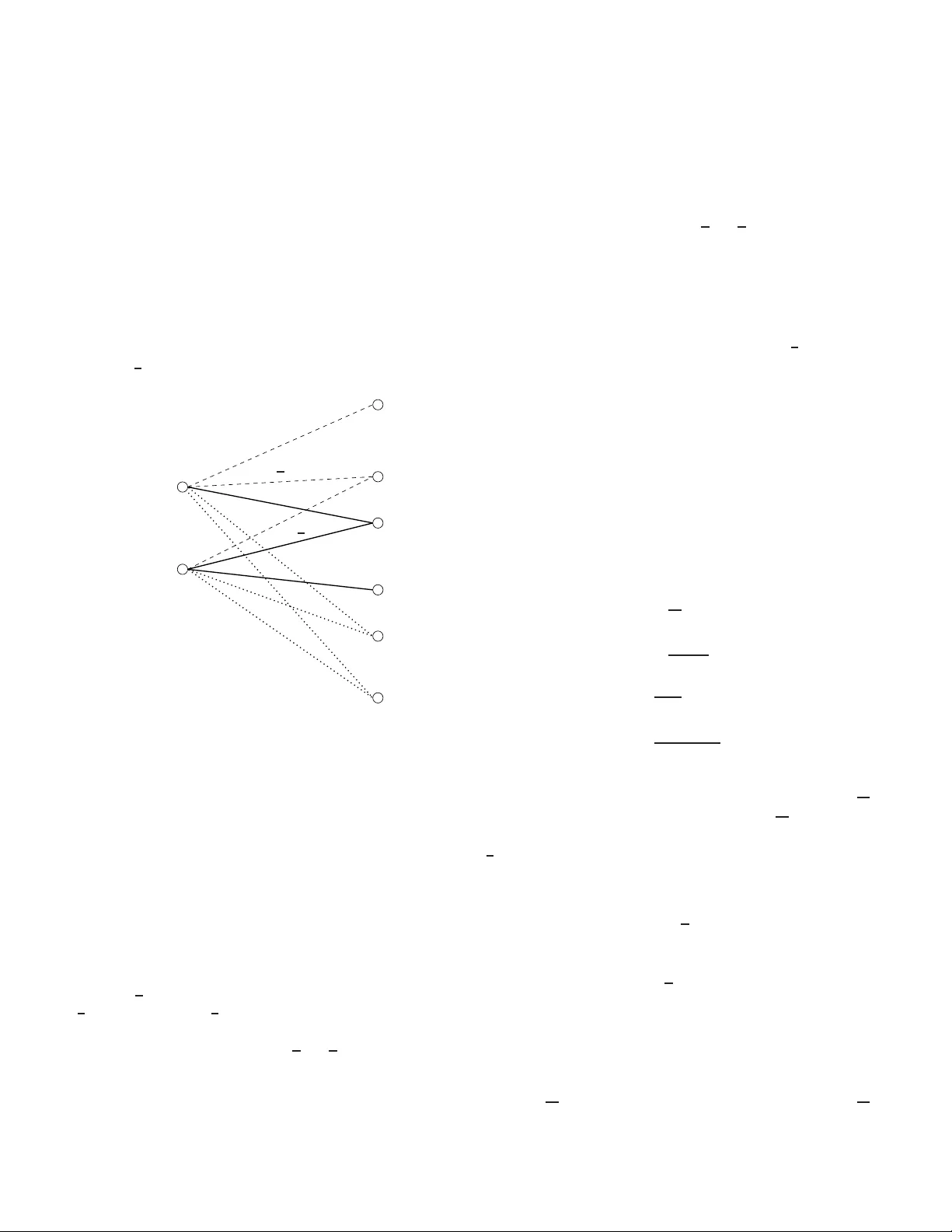

On 3-receiver broadcast channels with 2-degraded message sets

Chandra Nair, Department of Information Engineering, Chinese University of Hong Kong, Sha Tin, N.T., Hong Kong. Email: chandra@ie.cuhk.edu.hk

Zizhou Vincent Wang, Department of Information Engineering, Chinese University of Hong Kong, Sha Tin, N.T., Hong Kong. Email: zzwang6@ie.cuhk.edu.hk

Abstract: We consider a broadcast channel with 3 receivers and 2 messages $(M_0, M_1)$ where two of the three receivers need to decode messages $(M_0, M_1)$ while the remaining one just needs to decode the message $M_0$. We study the best known inner and outer bounds under this setting, in an attempt to find the deficiencies with the current techniques of establishing the bounds. We produce a simple example where we are able to explicitly evaluate the inner bound and show that it differs from the general outer bound. For a class of channels where the general inner and outer bounds differ, we use a new argument to show that the inner bound is tight.

I. INTRODUCTION

The broadcast channel with degraded message sets was initially studied by Körner and Marton [1] for two receivers and more recently in [2], [3], [4] for three and more receivers. Körner and Marton [1] established the capacity region for the degraded message sets with two receivers, and some capacity regions for three or more receivers were established [2], [3] by showing that the straightforward extension of the inner bound in [1] was optimal. In [4], an idea called indirect decoding was introduced and the authors showed that this could be used to enhance (in some cases strictly) the straightforward extension of the inner bound by Körner and Marton. Unfortunately, the new inner bounds [4] become quite messy and unwieldy due to the introduction of many auxiliary random variables.
However, there is still one class of broadcast channels with degraded message sets where the idea of indirect decoding does not yield any region better than the straightforward extension of the Körner and Marton inner bound, and this is the scenario of interest here.

Consider a 3-receiver broadcast channel with 2 messages $(M_0, M_1)$ with the following decoding requirement. Receivers $Y_1$ and $Y_2$ need to decode both messages $(M_0, M_1)$ while receiver $Y_3$ needs to decode only message $M_0$. The traditional inner and outer bounds presented below remain the best known inner and outer bounds for this class of broadcast channels.

In this paper we look at the general inner and outer bounds for this scenario in greater detail. We show that these bounds differ in general, and that there is a class of channels where the inner bound is tight and the outer bound is weak. There are two main contributions in this paper: the first one is the technique (in the same spirit as Mrs. Gerber's lemma [5]) used to evaluate the boundary of a particular inner bound; the second is the use of a $(\frac{1}{2} + \epsilon)$-codebook$^1$ rather than an $\epsilon$-codebook to establish the capacity region.

Bound 1: The union of the set of rate pairs $(R_0, R_1)$ satisfying

$$
\begin{aligned}
R_0 &\le I(U; Y_3) \\
R_1 &\le \min\{ I(X; Y_1 | U),\, I(X; Y_2 | U) \} \\
R_0 + R_1 &\le \min\{ I(X; Y_1),\, I(X; Y_2) \}
\end{aligned}
$$

over all pairs of random variables $(U, X)$ such that $U \to X \to (Y_1, Y_2, Y_3)$ forms a Markov chain constitutes an inner bound to the capacity region.
Bound 2: The union over the set of rate pairs $(R_0, R_1)$ satisfying

$$
\begin{aligned}
R_0 &\le \min\{ I(U_1; Y_3),\, I(U_2; Y_3) \} \\
R_0 + R_1 &\le \min\{ I(U_1; Y_3) + I(X; Y_1 | U_1),\, I(U_2; Y_3) + I(X; Y_2 | U_2) \} \\
R_0 + R_1 &\le \min\{ I(X; Y_1),\, I(X; Y_2) \}
\end{aligned}
$$

over all possible choices of random variables $(U_1, U_2, X)$ such that $(U_1, U_2) \to X \to (Y_1, Y_2, Y_3)$ forms a Markov chain constitutes an outer bound for this channel.

The above bounds are traditional, i.e. can be obtained using standard techniques. The inner bound is a straightforward extension of the achievability argument in [1] and the outer bound can be deduced by arguments in [6], [7], etc.

Remark 1: It is also possible to include the constraint $R_0 \le \min\{ I(U_1; Y_1),\, I(U_2; Y_2) \}$ into the outer bound. However, it is quite straightforward to show that the region obtained by adding this inequality is identical to the bound we presented.

These bounds are known to be tight in all of the following special cases:
• Receiver $Y_1$ is a less noisy receiver than $Y_3$ and $Y_2$ is a less noisy receiver than $Y_3$ [2], [4],
• $Y_3$ is a deterministic function of $X$,
• $Y_1$ is a more capable receiver than $Y_2$ (or vice-versa),
• $Y_3$ is a more capable receiver than $Y_2$ (or $Y_1$).

Footnote 1: An $\eta$-codebook is used to denote a codebook whose probability of error is bounded above by $\eta$.

The last two cases are very straightforward and the proof is omitted. When $Y_3$ is a deterministic function of $X$, it is not difficult to show that taking the convex closure of the regions obtained by setting (i) $U = Y_3$ and (ii) $U = \emptyset$ in the inner bound exhausts the following region,

$$
\begin{aligned}
R_0 &\le H(Y_3) \\
R_0 + R_1 &\le \min\{ I(X; Y_1),\, I(X; Y_2) \}
\end{aligned}
$$

and this clearly forms an outer bound to the capacity region.

One class of channels that does not fall into any of these cases is the channel shown in Figure 1 below.
The channel $X \to (Y_1, Y_2)$ represents a binary skew-symmetric (BSSC) broadcast channel [7], [8] and the channel $X \to Y_3$ represents a binary symmetric channel (BSC) with crossover probability $p$, with $0 \le p \le \frac{1}{2}$.

[Figure 1: channel diagram with binary input $X$ and binary outputs $Y_1$, $Y_2$, $Y_3$; transition labels $\frac{1}{2}$, $\frac{1}{2}$ and $p$.]
Fig. 1. 3-receiver broadcast channel

In the next section we evaluate Bound 1 for this channel. Based on the symmetry, it is very natural to believe that the auxiliary channel $U \to X$ must be a BSC with some crossover probability $s$. In the next section, we prove that this is indeed the case. This uses a technique similar in spirit to Wyner and Ziv's technique of using Mrs. Gerber's lemma [5]. We will also show that Bound 2 yields a strictly larger region for this channel. Finally, we will show that the region represented by Bound 1 constitutes the capacity region for this channel.

II. EVALUATION OF THE INNER BOUND

In the evaluation of the inner bound, we divide the range $0 \le p \le \frac{1}{2}$ into two regions, $0 \le p \le p_{max}$ and $p_{max} \le p \le \frac{1}{2}$, where $p_{max} \in [0, \frac{1}{2}]$ is the unique solution of $1 - h(p) = h(\frac{1}{4}) - \frac{1}{2}$; i.e. the value of $p$ at which the capacity of the BSC matches the term $\max_{p(x)} \min\{ I(X; Y_1),\, I(X; Y_2) \}$. The numerical value is $p_{max} \approx 0.184$.

A. Evaluation of the inner bound, $0 \le p \le p_{max}$

In the region $0 \le p \le p_{max}$ it is straightforward to see that the inner bound reduces to the following region (obtained via a time-division between the two auxiliary channels: (i) $U = \emptyset$ and (ii) $U = X$, and in each case, setting $\mathrm{P}(X = 0) = 0.5$),

$$
R_0 + R_1 \le h\left(\tfrac{1}{4}\right) - \tfrac{1}{2},
$$

which clearly matches the outer bound (Bound 2). Thus for $0 \le p \le p_{max} \approx 0.184$, the inner and outer bounds are tight and give the capacity region.

B. Evaluation of the inner bound, $p_{max} \le p \le \frac{1}{2}$

Let $\mathcal{U} = \{1, 2, ..., m\}$ and let $\mathrm{P}(U = i) = u_i$ and $\mathrm{P}(X = 0 | U = i) = s_i$.
Further, let $h(x) = -x \log_2 x - (1 - x) \log_2 (1 - x)$ denote the binary entropy function. Using these notations we have,

$$
\begin{aligned}
I(U; Y_3) &= h\Big( \sum_i u_i \big( s_i (1 - p) + (1 - s_i) p \big) \Big) - \sum_i u_i\, h\big( s_i (1 - p) + (1 - s_i) p \big) \\
I(X; Y_1 | U) &= \sum_i u_i\, h\Big( \frac{s_i}{2} \Big) - \sum_i u_i s_i \\
I(X; Y_2 | U) &= \sum_i u_i\, h\Big( \frac{1 - s_i}{2} \Big) - \sum_i u_i (1 - s_i) \\
I(X; Y_1) &= h\Big( \sum_i \frac{u_i s_i}{2} \Big) - \sum_i u_i s_i \\
I(X; Y_2) &= h\Big( \sum_i \frac{u_i (1 - s_i)}{2} \Big) - \sum_i u_i (1 - s_i).
\end{aligned}
$$

Define $\tilde{\mathcal{U}} = \{1, 2, ..., m\} \times \{1, 2\}$, $\mathrm{P}(\tilde{U} = (i, 1)) = \frac{u_i}{2}$, $\mathrm{P}(X = 0 | \tilde{U} = (i, 1)) = s_i$, $\mathrm{P}(\tilde{U} = (i, 2)) = \frac{u_i}{2}$, and $\mathrm{P}(X = 0 | \tilde{U} = (i, 2)) = 1 - s_i$. This induces an $\tilde{X}$ with $\mathrm{P}(\tilde{X} = 0) = \frac{1}{2}$. It is straightforward to see the following:

$$
\begin{aligned}
I(\tilde{U}; \tilde{Y}_3) &\ge I(U; Y_3) \\
I(\tilde{X}; \tilde{Y}_1 | \tilde{U}) = I(\tilde{X}; \tilde{Y}_2 | \tilde{U}) &= \tfrac{1}{2} \big( I(X; Y_1 | U) + I(X; Y_2 | U) \big) \ge \min\{ I(X; Y_1 | U),\, I(X; Y_2 | U) \} \\
I(\tilde{X}; \tilde{Y}_1) = I(\tilde{X}; \tilde{Y}_2) &\ge \tfrac{1}{2} \big( I(X; Y_1) + I(X; Y_2) \big)
\end{aligned}
$$

From this it follows that for every $U$, replacing $U$ by $\tilde{U}$ leads to a larger achievable region. Hence to evaluate Bound 1, it suffices to maximize over all auxiliary random variables of the form $U$ defined by: $\mathcal{U} = \{1, 2, ..., m\} \times \{1, 2\}$, $\mathrm{P}(U = (i, 1)) = \frac{u_i}{2}$, $\mathrm{P}(X = 0 | U = (i, 1)) = s_i$, $\mathrm{P}(U = (i, 2)) = \frac{u_i}{2}$, and $\mathrm{P}(X = 0 | U = (i, 2)) = 1 - s_i$.

Under this notation we have the following expression for the rate region given in Bound 1,

$$
\begin{aligned}
R_0 &\le I(U; Y_3) = h\left(\tfrac{1}{2}\right) - \sum_i u_i\, h\big( s_i (1 - p) + (1 - s_i) p \big), \\
R_1 &\le \min\{ I(X; Y_1 | U),\, I(X; Y_2 | U) \} = \sum_i \frac{u_i}{2} \Big( h\Big( \frac{s_i}{2} \Big) + h\Big( \frac{1 - s_i}{2} \Big) \Big) - \frac{1}{2}, \\
R_0 + R_1 &\le \min\{ I(X; Y_1),\, I(X; Y_2) \} = h\left(\tfrac{1}{4}\right) - \tfrac{1}{2}.
\end{aligned}
$$
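As a numerical sanity check of the symmetrization step above, the following minimal Python sketch evaluates the three right-hand sides of Bound 1 from the closed-form expressions and confirms, on randomly drawn $(u_i, s_i)$, that passing from $U$ to $\tilde{U}$ does not decrease any of them. It is an illustration under stated assumptions, not part of the original argument: the helper names `h`, `conv`, `bound1_terms`, `symmetrize` and `check_symmetrization` are hypothetical, and the choice $p = 0.25$ is arbitrary.

```python
import math
import random


def h(x):
    """Binary entropy in bits, with h(0) = h(1) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)


def conv(s, p):
    """s(1 - p) + (1 - s)p, i.e. P(Y3 = 0) when P(X = 0) = s."""
    return s * (1.0 - p) + (1.0 - s) * p


def bound1_terms(u, s, p):
    """Right-hand sides of Bound 1 for P(U=i) = u[i], P(X=0|U=i) = s[i].

    Returns (I(U;Y3), min of the two conditional terms, min of I(X;Y1), I(X;Y2)).
    """
    q = sum(ui * si for ui, si in zip(u, s))  # P(X = 0)
    i_u_y3 = h(conv(q, p)) - sum(ui * h(conv(si, p)) for ui, si in zip(u, s))
    i_x_y1_u = sum(ui * (h(si / 2) - si) for ui, si in zip(u, s))
    i_x_y2_u = sum(ui * (h((1 - si) / 2) - (1 - si)) for ui, si in zip(u, s))
    i_x_y1 = h(q / 2) - q
    i_x_y2 = h((1 - q) / 2) - (1 - q)
    return i_u_y3, min(i_x_y1_u, i_x_y2_u), min(i_x_y1, i_x_y2)


def symmetrize(u, s):
    """Split each atom i into (i,1) with s_i and (i,2) with 1 - s_i, each of mass u_i/2."""
    return [ui / 2 for ui in u] * 2, list(s) + [1 - si for si in s]


def check_symmetrization(trials=1000, p=0.25, seed=0):
    """True if symmetrizing never shrinks any of the three terms on random inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        m = rng.randint(1, 4)
        w = [rng.random() + 1e-9 for _ in range(m)]
        u = [wi / sum(w) for wi in w]
        s = [rng.random() for _ in range(m)]
        before = bound1_terms(u, s, p)
        after = bound1_terms(*symmetrize(u, s), p)
        if any(a < b - 1e-12 for a, b in zip(after, before)):
            return False
    return True
```

Calling `check_symmetrization()` exercises the three inequalities stated for $\tilde{U}$; after symmetrization the third term should equal $h(\frac{1}{4}) - \frac{1}{2}$ up to floating-point error, matching the sum-rate expression above.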
Using the symmetry of the function $h(x) = h(1 - x)$ we note that $h(s_i (1 - p) + (1 - s_i) p) = h((1 - s_i)(1 - p) + s_i p)$ and thus the above region is invariant under the transformation $s_i \to 1 - s_i$, implying we can restrict $s_i$ to take values only in $0 \le s_i \le \frac{1}{2}$. Before we proceed to determine the boundary of this region, we prove the following lemma.

C. An inequality for a class of functions

Lemma 1: Let $f(x)$ and $g(x)$ be two non-negative and strictly increasing functions that are differentiable in the region $x \in [x_1, x_2]$. Further assume that $\frac{f^{(1)}(x)}{g^{(1)}(x)}$ is a decreasing function, where $f^{(1)}(x)$ and $g^{(1)}(x)$ denote the derivatives of the functions. Given any $u$, $0 \le u \le 1$, let $x_{int}$ be uniquely defined according to $f(x_{int}) = u f(x_1) + (1 - u) f(x_2)$. Then the following holds,

$$
g(x_{int}) \le u\, g(x_1) + (1 - u)\, g(x_2).
$$

Proof: We have $u (f(x_{int}) - f(x_1)) = (1 - u)(f(x_2) - f(x_{int}))$, and we wish to show that $u (g(x_{int}) - g(x_1)) \le (1 - u)(g(x_2) - g(x_{int}))$. Since all the terms are positive, this reduces to showing

$$
\frac{f(x_{int}) - f(x_1)}{g(x_{int}) - g(x_1)} \ge \frac{f(x_2) - f(x_{int})}{g(x_2) - g(x_{int})}.
$$

However, this is immediate as shown below. From the fact that $\frac{f^{(1)}(x)}{g^{(1)}(x)}$ is a decreasing function, we have

$$
\frac{\int_{x_1}^{x_{int}} f^{(1)}(x)\, dx}{\int_{x_1}^{x_{int}} g^{(1)}(x)\, dx} \ge \frac{f^{(1)}(x_{int})}{g^{(1)}(x_{int})} \ge \frac{\int_{x_{int}}^{x_2} f^{(1)}(x)\, dx}{\int_{x_{int}}^{x_2} g^{(1)}(x)\, dx}.
$$

Repeated applications of Lemma 1 lead to the following corollary, potentially of independent interest.

Corollary 1: Let $f(x)$ and $g(x)$ be two non-negative and strictly increasing functions that are differentiable in the region $x \in [x_1, x_2]$. Further assume that $\frac{f^{(1)}(x)}{g^{(1)}(x)}$ is a decreasing function, where as before $f^{(1)}(x)$ and $g^{(1)}(x)$ denote the derivatives of the functions.
Given any $u_i \ge 0$, $\sum_i u_i = 1$, and $y_i \in [x_1, x_2]$, let $x_{int}$ be uniquely defined according to $f(x_{int}) = \sum_i u_i f(y_i)$. Then the following holds

$$
g(x_{int}) \le \sum_i u_i\, g(y_i).
$$

D. Determining the boundary rate pairs

We use Corollary 1 to determine the boundary of the region. We make the following identifications: let $f(x) = h(\frac{x}{2}) + h(\frac{1 - x}{2}) - 1$, and $g(x) = h(x (1 - p) + (1 - x) p)$. Observe that $f(x)$ and $g(x)$ are increasing differentiable functions in the region $[0, \frac{1}{2}]$.

Claim 1: For $\frac{1}{6} \le p \le \frac{1}{2}$, the ratio of the derivatives $\frac{f^{(1)}(x)}{g^{(1)}(x)}$ is a decreasing function.

The proof of this fact is found in the Appendix. (Numerical simulations indicate that this is true for $p_{min} \le p \le \frac{1}{2}$ for $p_{min} \approx 0.05$, but for the purposes of establishing the inner bound clearly this region of $p$ suffices, as $\frac{1}{6} \le p_{max} \approx 0.184$.)

Remark 2: By combining Claim 1 and Corollary 1 note that $h(p * f^{-1}(y))$ is convex in $y$, and this is very similar to Mrs. Gerber's Lemma [5].

Now let $s_{int}$ be defined according to

$$
h\Big( \frac{s_{int}}{2} \Big) + h\Big( \frac{1 - s_{int}}{2} \Big) = \sum_i u_i \Big( h\Big( \frac{s_i}{2} \Big) + h\Big( \frac{1 - s_i}{2} \Big) \Big).
$$

Then from Corollary 1, for $p_{min} \le p \le \frac{1}{2}$ we have

$$
h\left(\tfrac{1}{2}\right) - \sum_i u_i\, h\big( s_i (1 - p) + (1 - s_i) p \big) \le h\left(\tfrac{1}{2}\right) - h\big( s_{int} (1 - p) + (1 - s_{int}) p \big).
$$

This implies that the optimal auxiliary channel $U \to X$ is a BSC with a cross-over probability $s$ and $\mathrm{P}(U = 0) = \frac{1}{2}$. Thus for $p_{max} \le p \le \frac{1}{2}$, the boundary is characterized by the pair of points of the form,

$$
\begin{aligned}
R_0 &= 1 - h\big( s (1 - p) + (1 - s) p \big), \\
R_1 &= \min\Big\{ \tfrac{1}{2} \Big( h\Big( \frac{s}{2} \Big) + h\Big( \frac{1 - s}{2} \Big) - 1 \Big),\; h\left(\tfrac{1}{4}\right) - \tfrac{3}{2} + h\big( s (1 - p) + (1 - s) p \big) \Big\},
\end{aligned}
\tag{1}
$$

for $0 \le s \le \frac{1}{2}$. The second term in $R_1$ comes from taking into account the sum rate constraint, $R_0 + R_1 \le h(\frac{1}{4}) - \frac{1}{2}$. A simple calculation shows that for $p_o \le p \le \frac{1}{2}$ one can ignore the sum rate constraint, where $p_o = \frac{\sqrt{3} - 1}{2 \sqrt{3}} \approx 0.211$.
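The two thresholds quoted so far, $p_{max} \approx 0.184$ and $p_o \approx 0.211$, can be checked numerically. The sketch below is a minimal illustration rather than the authors' computation: it solves $1 - h(p) = h(\frac{1}{4}) - \frac{1}{2}$ by bisection, evaluates the closed form for $p_o$, and measures on a grid of $s$ how far the curve of equation (1) without the sum-rate term stays below the sum-rate cap. The names `bisect`, `SUM_RATE` and `sum_rate_slack`, the grid size and the test values of $p$ are assumptions introduced here.

```python
import math


def h(x):
    """Binary entropy in bits, with h(0) = h(1) = 0."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return -x * math.log2(x) - (1.0 - x) * math.log2(1.0 - x)


def bisect(fn, lo, hi, iters=100):
    """Root of fn on [lo, hi], assuming fn(lo) and fn(hi) have opposite signs."""
    f_lo_positive = fn(lo) > 0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if (fn(mid) > 0) == f_lo_positive:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


SUM_RATE = h(0.25) - 0.5  # the cap h(1/4) - 1/2 on R0 + R1

# p_max: the BSC capacity 1 - h(p) equals the sum-rate cap.
p_max = bisect(lambda p: (1.0 - h(p)) - SUM_RATE, 1e-9, 0.5)

# p_o: closed form quoted in the text.
p_o = (math.sqrt(3) - 1) / (2 * math.sqrt(3))


def sum_rate_slack(p, steps=2000):
    """Minimum over a grid of s in [0, 1/2] of SUM_RATE - (R0(s) + R1(s)),
    where R1 is the first term of the min in equation (1)."""
    worst = float("inf")
    for k in range(steps + 1):
        s = 0.5 * k / steps
        y = s * (1.0 - p) + (1.0 - s) * p
        r0 = 1.0 - h(y)
        r1 = 0.5 * (h(s / 2) + h((1.0 - s) / 2) - 1.0)
        worst = min(worst, SUM_RATE - (r0 + r1))
    return worst
```

A negative value of `sum_rate_slack(p)` means the sum-rate constraint cuts into the curve, which is what one would expect for $p$ between $p_{max}$ and $p_o$ (for example $p = 0.2$); a value that is non-negative up to rounding, as for $p = 0.25$, indicates the constraint can be ignored.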
This $p_o$ corresponds to the smallest value of $p$ where the convex region characterized by the pairs

$$
\begin{aligned}
R_0 &= 1 - h\big( s (1 - p) + (1 - s) p \big), \\
R_1 &= \tfrac{1}{2} \Big( h\Big( \frac{s}{2} \Big) + h\Big( \frac{1 - s}{2} \Big) - 1 \Big)
\end{aligned}
$$

has a slope of $-1$ at the point $(R_0, R_1) = \left( 0,\, h(\frac{1}{4}) - \frac{1}{2} \right)$.

Therefore the inner bound has three different expressions:
• $0 \le p \le p_{max}$: the inner bound reduces to $R_0 + R_1 \le h(\frac{1}{4}) - \frac{1}{2}$,
• $p_{max} \le p \le p_o$: the inner bound is given by equation (1) where all inequalities are necessary,
• $p_o \le p \le \frac{1}{2}$: the inner bound is characterized by pairs of points of the form $R_0 = 1 - h(s (1 - p) + (1 - s) p)$, $R_1 = \frac{1}{2} \left( h(\frac{s}{2}) + h(\frac{1 - s}{2}) - 1 \right)$.

E. Comparison with the outer bound

To show that the outer bound gives a larger region, we produce a particular choice of the triple $(U_1, U_2, X)$. Consider $U_1, U_2$ defined as follows,

$$
\begin{aligned}
\mathrm{P}(U_1 = 1) &= \mathrm{P}(U_2 = 1) = u, \\
\mathrm{P}(U_1 = 2) &= \mathrm{P}(U_2 = 2) = 1 - u, \\
\mathrm{P}(X = 0 | U_1 = 1) &= \mathrm{P}(X = 1 | U_2 = 1) = 1, \\
\mathrm{P}(X = 0 | U_1 = 2) &= \mathrm{P}(X = 1 | U_2 = 2) = s,
\end{aligned}
$$

where $s = \frac{0.5 - u}{1 - u}$ for $0 \le u \le 0.5$. Existence of the triple $(U_1, U_2, X)$ is guaranteed by the consistent distribution on $X$. Substituting this choice into Bound 2 we obtain Region A given by,

$$
\begin{aligned}
R_0 &\le 1 - (1 - u)\, h\big( s (1 - p) + (1 - s) p \big) - u\, h(p), \\
R_1 &\le (1 - u)\, h\Big( \frac{s}{2} \Big) - \frac{1}{2} + u, \\
R_0 + R_1 &\le h\left(\tfrac{1}{4}\right) - \tfrac{1}{2}.
\end{aligned}
$$

Figure 2 plots Region A and Bound 1 for $p = \frac{1}{4}$. Observe that Region A is larger than Bound 1, and hence Bounds 1 and 2 do not match for the 3-receiver channel shown in Figure 1. This implies the following corollary.

[Figure 2: plot in the $(R_0, R_1)$ plane with two curves, "Inner bound" and "Region A".]
Fig. 2. Comparing Bound 1 and Region A for $p = \frac{1}{4}$

Corollary 2: There exists a class of channels, given in Figure 1, for which the inner and outer bounds (i.e. Bounds 1 and 2) do not match.

III. REVISITING OUTER BOUND

We now show that the inner bound is tight for the channel shown in Figure 1. Let $\pi : \{0, 1\} \mapsto \{0, 1\}$; $\pi(0) = 1$, $\pi(1) = 0$. Consider an $\epsilon$-codebook

$$
\{ x^n_{m_0, m_1},\; 1 \le m_0 \le 2^{nR_0},\; 1 \le m_1 \le 2^{nR_1},\; A_{m_0, m_1} \subseteq \mathcal{Y}_1^n,\; B_{m_0, m_1} \subseteq \mathcal{Y}_2^n,\; C_{m_0} \subseteq \mathcal{Y}_3^n \},
$$

where the disjoint sets $A_{m_0, m_1}$, $B_{m_0, m_1}$, $C_{m_0}$ represent the decoding maps. From the skew symmetry of the channels $X \to (Y_1, Y_2)$ and the symmetry in channel $X \to Y_3$, it is clear that

$$
\{ \pi(x^n_{m_0, m_1}),\; 1 \le m_0 \le 2^{nR_0},\; 1 \le m_1 \le 2^{nR_1},\; \pi(B_{m_0, m_1}) \subseteq \mathcal{Y}_1^n,\; \pi(A_{m_0, m_1}) \subseteq \mathcal{Y}_2^n,\; \pi(C_{m_0}) \subseteq \mathcal{Y}_3^n \}
$$

represents a valid $\epsilon$-codebook as well.

From these two codes, construct a new codebook (with error bounded by $\frac{1}{2} + \epsilon$) of size $2^{nR_0} \times 2^{nR_1 + 1}$ as follows: The codewords are indexed by $x^n_{m_0, (m_1, b)}$ where $b = 0, 1$. When $b = 0$ the codeword $x^n_{m_0, (m_1, b = 0)} = x^n_{m_0, m_1}$ and when $b = 1$, we have $x^n_{m_0, (m_1, b = 1)} = \pi(x^n_{m_0, m_1})$. The decoding maps for this codebook are created as follows: If $y_1^n \in A_{m_0^1, m_1^1} \cap \pi(B_{m_0^2, m_1^2})$ then the receiver chooses one of the two message pairs $(m_0^1, m_1^1)$, $(m_0^2, m_1^2)$ with equal probability. Otherwise it picks the message pair corresponding to the unique set $A_{m_0^1, m_1^1}$ or $\pi(B_{m_0^2, m_1^2})$ that it belongs to. A similar decoding strategy applies for receivers $Y_2$ and $Y_3$ as well.

The key feature is the symmetry of the codebook. If $x^n \in \mathcal{C}$ then $\pi(x^n) \in \mathcal{C}$, and the two correspond to the same message $M_0$. Now observe that

$$
H(M_0, M_1 | Y_1^n) \le H(M_0, M_1, b | Y_1^n) \le 1 + H(M_0, M_1 | Y_1^n, b) = 1 + n (R_0 + R_1) \epsilon_n.
$$

Therefore we obtain the same outer bound (Bound 2) using Fano's inequality and identification of the auxiliary random variables as before.
In particular, the identifications of the auxiliary random variables remain the following: $U_{1i} = (M_0, Y_{31}^{i-1}, Y_{1\,i+1}^{n})$ and $U_{2i} = (M_0, Y_{31}^{i-1}, Y_{2\,i+1}^{n})$. Now for the skew-symmetric channels and a symmetric codebook observe that

$$
\begin{aligned}
&\mathrm{P}\big( M_0 = m_0,\, Y_{31}^{i-1} = y_{31}^{i-1},\, Y_{1\,i+1}^{n} = y_{1\,i+1}^{n},\, X_i = x_i \big) \\
&\quad = \sum_{x_1^n \setminus x_i} \mathrm{P}\big( M_0 = m_0,\, X_1^n = x_1^n,\, Y_{31}^{i-1} = y_{31}^{i-1},\, Y_{1\,i+1}^{n} = y_{1\,i+1}^{n} \big) \\
&\quad \stackrel{(a)}{=} \sum_{x_1^n \setminus x_i} \mathrm{P}\big( M_0 = m_0,\, X_1^n = x_1^n \big) \prod_{j=1}^{i-1} \mathrm{P}(Y_{3j} = y_{3j} | X_j = x_j) \times \prod_{k=i+1}^{n} \mathrm{P}(Y_{1k} = y_{1k} | X_k = x_k) \\
&\quad \stackrel{(b)}{=} \sum_{x_1^n \setminus x_i} \mathrm{P}\big( M_0 = m_0,\, X_1^n = \pi(x_1^n) \big) \prod_{j=1}^{i-1} \mathrm{P}(Y_{3j} = \pi(y_{3j}) | X_j = \pi(x_j)) \times \prod_{k=i+1}^{n} \mathrm{P}(Y_{2k} = \pi(y_{1k}) | X_k = \pi(x_k)) \\
&\quad = \sum_{x_1^n \setminus x_i} \mathrm{P}\big( M_0 = m_0,\, X_1^n = \pi(x_1^n),\, Y_{31}^{i-1} = \pi(y_{31}^{i-1}),\, Y_{2\,i+1}^{n} = \pi(y_{1\,i+1}^{n}) \big) \\
&\quad \stackrel{(c)}{=} \mathrm{P}\big( M_0 = m_0,\, Y_{31}^{i-1} = \pi(y_{31}^{i-1}),\, Y_{2\,i+1}^{n} = \pi(y_{1\,i+1}^{n}),\, X_i = \pi(x_i) \big).
\end{aligned}
$$

Here (a) follows from the discrete memoryless property of the channel; (b) follows from (i) symmetry of the code, (ii) symmetry of the channel $X \to Y_3$ with respect to $\pi(\cdot)$, and (iii) the skew symmetry between receivers $Y_1, Y_2$, i.e. $\mathrm{P}(Y_2 = \pi(y) | X = \pi(x)) = \mathrm{P}(Y_1 = y | X = x)$; and (c) is a consequence of $\pi(\cdot)$ being a bijection. Therefore the random variables $(U_1, X)$ and $(U_2, X)$ are identical up to re-labeling. Since the mutual information and entropy do not depend on the labeling, it follows that

$$
\begin{aligned}
I(U_1; Y_3) &= I(U_2; Y_3) \\
I(X; Y_2 | U_1) &= I(X; Y_2 | U_2).
\end{aligned}
$$

Remark 3: This technique can be extended to other skew-symmetric channels as well, i.e. ones for which such a $\pi(\cdot)$ exists.

Therefore we obtain the following revised outer bound.
Bound 3: The union over the set of rate pairs $(R_0, R_1)$ satisfying

$$
\begin{aligned}
R_0 &\le I(U_1; Y_3) \\
R_0 + R_1 &\le \min\{ I(U_1; Y_3) + I(X; Y_1 | U_1),\, I(U_1; Y_3) + I(X; Y_2 | U_1) \} \\
R_0 + R_1 &\le \min\{ I(X; Y_1),\, I(X; Y_2) \}
\end{aligned}
$$

over all possible choices of random variables $(U_1, X)$ such that $U_1 \to X \to (Y_1, Y_2, Y_3)$ forms a Markov chain constitutes an outer bound for this channel.

It is straightforward to see (using the boundary points) that Bound 3 matches the inner bound and forms the capacity region.

ACKNOWLEDGEMENTS

The authors wish to thank Arvind Ramachandran for valuable suggestions and feedback on the contents of the paper.

REFERENCES

[1] J. Körner and K. Marton, "General broadcast channels with degraded message sets," IEEE Trans. Info. Theory, vol. IT-23, pp. 60–64, Jan. 1977.
[2] S. Diggavi and D. Tse, "On opportunistic codes and broadcast codes with degraded message sets," Information Theory Workshop (ITW), 2006.
[3] V. Prabhakaran, S. Diggavi, and D. Tse, "Broadcasting with degraded message sets: A deterministic approach," Proceedings of the 45th Annual Allerton Conference on Communication, Control and Computing, 2007.
[4] C. Nair and A. El Gamal, "The capacity of a class of 3-receiver broadcast channels with degraded message sets," International Symposium on Information Theory, pp. 1706–1710, 2008.
[5] A. D. Wyner and J. Ziv, "A theorem on the entropy of certain binary sequences and applications: Part I," IEEE Trans. Info. Theory, vol. IT-19, pp. 769–772, November 1973.
[6] A. El Gamal, "The capacity of a class of broadcast channels," IEEE Trans. Info. Theory, vol. IT-25, pp. 166–169, March 1979.
[7] C. Nair and A. El Gamal, "An outer bound to the capacity region of the broadcast channel," IEEE Trans. Info. Theory, vol. IT-53, pp. 350–355, January 2007.
[8] B. Hajek and M. Pursley, "Evaluation of an achievable rate region for the broadcast channel," IEEE Trans. Info. Theory, vol. IT-25, pp. 36–46, January 1979.

APPENDIX

A. Proof of Claim 1

In this section we show that when $\frac{1}{6} \le p \le \frac{1}{2}$, the ratio $\frac{f^{(1)}(x)}{g^{(1)}(x)}$ is a decreasing function of $x$, $x \in [0, \frac{1}{2}]$. Recalling the definitions, $f(x) = h(\frac{x}{2}) + h(\frac{1 - x}{2}) - 1$, and $g(x) = h(x (1 - p) + (1 - x) p)$. As $f(x)$ and $g(x)$ are strictly increasing in $x \in [0, \frac{1}{2}]$, it suffices to show that

$$
\frac{f^{(2)}(x)}{f^{(1)}(x)} \le \frac{g^{(2)}(x)}{g^{(1)}(x)}, \tag{2}
$$

where $f^{(2)}(x)$, $g^{(2)}(x)$ denote the second derivatives of the functions. Let $J(x) = \log \frac{1 - x}{x}$, $U(x) = x (1 - x)$ and $x * p = x (1 - p) + p (1 - x)$. Using this notation and substituting for the derivatives, (2) reduces to showing

$$
\frac{J(x * p)\, U(x * p)}{1 - 2p} \ge \frac{2 \left( J\left( \frac{x}{2} \right) - J\left( \frac{1 - x}{2} \right) \right)}{\frac{1}{U\left( \frac{x}{2} \right)} + \frac{1}{U\left( \frac{1 - x}{2} \right)}}. \tag{3}
$$

Now observe that as $x \to \frac{1}{2}$ both $J(x * p)$ and $J(\frac{x}{2}) - J(\frac{1 - x}{2})$ tend to zero and all other terms remain positive. Thus we have an equality at $x = \frac{1}{2}$. To show the inequality for $x \in [0, \frac{1}{2}]$ it suffices to prove that the derivative of the left hand side (L.H.S.) of (3) is smaller than the derivative of the right hand side (R.H.S.) of (3). The derivative of the L.H.S. is given by

$$
\frac{d}{dx} \frac{J(x * p)\, U(x * p)}{1 - 2p} = -1 + J(x * p) \big( 1 - 2 (x * p) \big).
$$

Let us define $R(x)$ to be the derivative of the R.H.S., i.e.

$$
\frac{d}{dx} \frac{2 \left( J\left( \frac{x}{2} \right) - J\left( \frac{1 - x}{2} \right) \right)}{\frac{1}{U\left( \frac{x}{2} \right)} + \frac{1}{U\left( \frac{1 - x}{2} \right)}} = R(x).
$$

We wish to show that

$$
-1 + J(x * p) \big( 1 - 2 (x * p) \big) \le R(x), \tag{4}
$$

for all $\frac{1}{6} \le p \le \frac{1}{2}$ and $x \in [0, \frac{1}{2}]$. Given any $x \in [0, \frac{1}{2}]$, observe that $J(x * p)(1 - 2 (x * p))$ is a decreasing function of $p$ for $0 \le p \le \frac{1}{2}$. Thus establishing (4) for $p = \frac{1}{6}$ suffices. Let $S(x) = -1 + J(x * \frac{1}{6})(1 - 2 (x * \frac{1}{6}))$. Figure 3 plots $S(x)$ and $R(x)$.
[Figure 3: plot of $R(x)$ and $S(x)$ against $x$ for $0 \le x \le 0.5$.]
Fig. 3. Comparing $R(x)$ and $S(x)$

Thus we have $S(x) \le R(x)$ for $0 \le x \le \frac{1}{2}$. This completes the proof of Claim 1.
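The comparison of $S(x)$ and $R(x)$ rests on a plot, so a reader may want to reproduce it numerically. The following minimal Python sketch is one way to do so under stated assumptions: $J$ is taken with the natural logarithm (the base for which the derivative identity for the L.H.S. of (3) holds as written), $R(x)$ is approximated by a central difference of the R.H.S. of (3) rather than derived in closed form, and the grid is restricted to the interior of $(0, \frac{1}{2})$ because $J$ diverges at $0$. The names `star`, `rhs3` and `min_gap`, the step size and the grid are hypothetical choices made for this illustration.

```python
import math


def J(x):
    """J(x) = ln((1 - x) / x), natural logarithm."""
    return math.log((1.0 - x) / x)


def U(x):
    """U(x) = x(1 - x)."""
    return x * (1.0 - x)


def star(x, p):
    """x * p = x(1 - p) + p(1 - x)."""
    return x * (1.0 - p) + p * (1.0 - x)


def S(x, p=1.0 / 6.0):
    """Left-hand side of (4): -1 + J(x*p)(1 - 2(x*p))."""
    y = star(x, p)
    return -1.0 + J(y) * (1.0 - 2.0 * y)


def rhs3(x):
    """Right-hand side of inequality (3)."""
    a, b = x / 2.0, (1.0 - x) / 2.0
    return 2.0 * (J(a) - J(b)) / (1.0 / U(a) + 1.0 / U(b))


def R(x, step=1e-6):
    """Central-difference approximation of the derivative of rhs3 at x."""
    return (rhs3(x + step) - rhs3(x - step)) / (2.0 * step)


def min_gap(n=200, p=1.0 / 6.0):
    """Smallest value of R(x) - S(x) over a grid of x in [0.005, 0.495]."""
    xs = [0.005 + 0.49 * k / n for k in range(n + 1)]
    return min(R(x) - S(x, p) for x in xs)
```

A positive `min_gap()` is consistent with $S(x) \le R(x)$ on the sampled grid for $p = \frac{1}{6}$; both functions approach $-1$ as $x \to \frac{1}{2}$, so the gap is smallest near that endpoint. This is a finite-grid check and does not replace the argument in the text.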
