A General Theory of Computational Scalability Based on Rational Functions


Authors: **Neil J. Gunther**

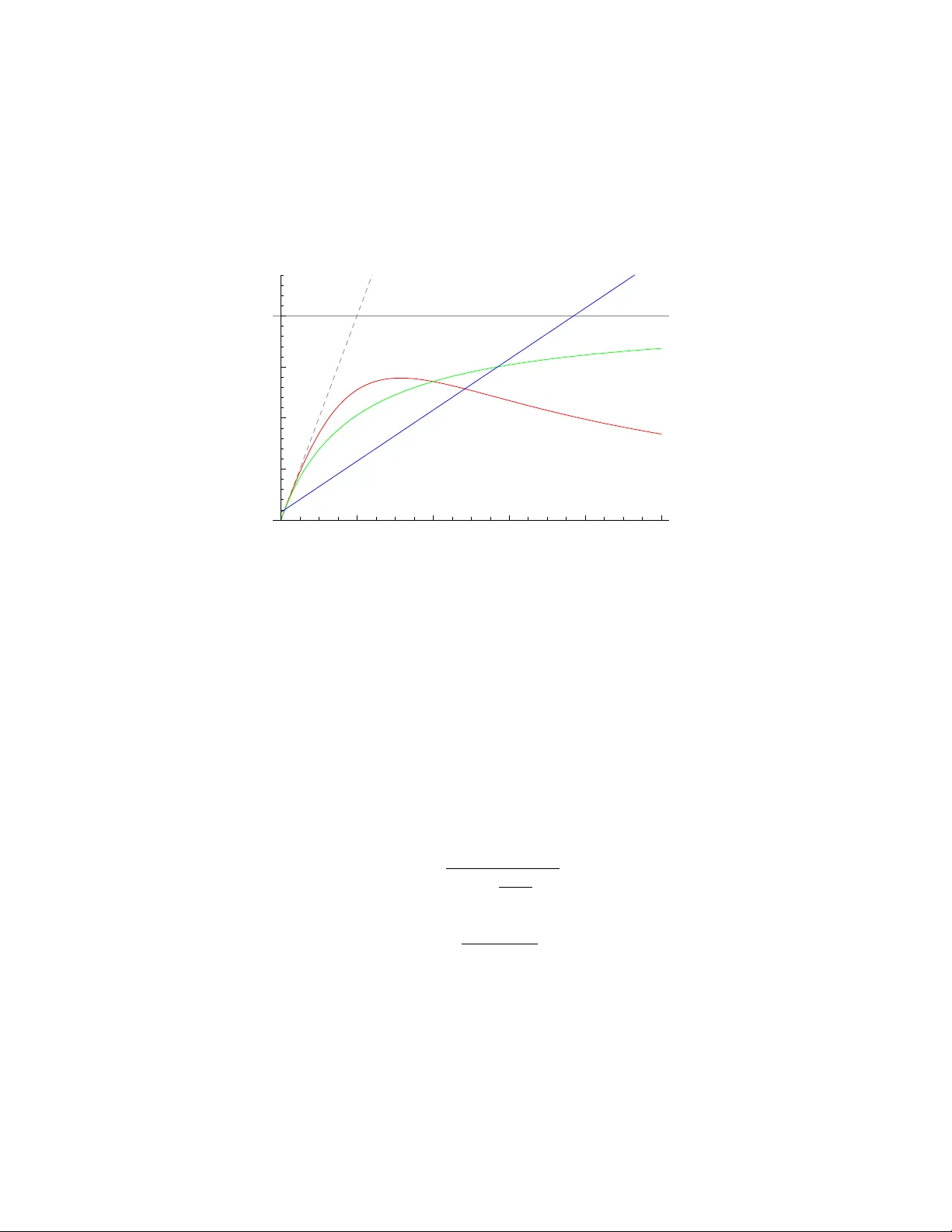

November 26, 2024

Abstract

The universal scalability law of computational capacity is a rational function $C_p = P(p)/Q(p)$, with $P(p)$ a linear polynomial and $Q(p)$ a second-degree polynomial in the number of physical processors $p$, that has long been used for statistical modeling and prediction of computer system performance. We prove that $C_p$ is equivalent to the synchronous throughput bound for a machine repairman with state-dependent service rate. Simpler rational functions, such as Amdahl's law and Gustafson's speedup, are corollaries of this queue-theoretic bound. $C_p$ is further shown to be both necessary and sufficient for modeling all practical characteristics of computational scalability.

1 Introduction

For several decades, a class of real functions called *rational functions* [1] has been used to represent throughput scalability as a function of physical processor configuration. In particular, Amdahl's law [2], its modification due to Gustafson [3], and the Universal Scalability Law (USL) [4] have found ubiquitous application. In this context, the relative computing capacity $C_p$ is a rational function of the number of physical processors $p$: the quotient of a polynomial $P(p)$ in the numerator and a polynomial $Q(p)$ in the denominator, i.e., $C_p = P(p)/Q(p)$. Each of the above-mentioned scalability models is distinguished by the number of coefficients, or fitting parameters, associated with the polynomials $P(p)$ and $Q(p)$. For example, Amdahl's law and Gustafson's modification are single-parameter models, whereas the USL contains two parameters. Despite their historical utility, these models have stood in isolation without any deeper physical interpretation. It has even been suggested that Amdahl's law is not fundamental [5].
More importantly, the lack of a unified physical interpretation has led to the use of certain flawed scalability models [6]. In this note, we demonstrate that the aforementioned class of rational functions corresponds to certain performance bounds belonging to a queue-theoretic model. The idea that Amdahl's law, which has most frequently been associated with the scalability of massively parallel systems, can be considered from a queue-theoretic standpoint is not entirely new [See e.g., 7, 8]. However, quite apart from having motivations entirely different from our own, those previous works employed *open* queueing models with an unbounded number of requests (see Appendix C), whereas we use a *closed* queueing model with a finite number of requests $p$ corresponding to the number of physical processors. The USL function is associated with a state-dependent generalization of the machine repairman [9].

The organization of this paper is as follows. We briefly review the scalability models of interest in Sect. 2. The appropriate queueing metrics associated with the standard machine repairman and its state-dependent extension are discussed in Sect. 3, where the performance characteristics associated with *synchronous* queueing are also presented. The main theorem (Theorem 2) is established in Sect. 4, and Amdahl's law and Gustafson's linear speedup are shown to be corollaries of it. Finally, in Sect. 5 we prove an earlier conjecture that a rational function with $Q(p)$ a second-degree polynomial is both necessary and sufficient to model all practical cases of computational scalability.

Affiliation: Performance Dynamics Company, 4061 East Castro Valley Blvd., Suite 110, Castro Valley, CA 94552, USA. Email: njgunther@perfdynamics.com

2 Parametric Models

Although, technically, we are discussing rational functions, we shall hereafter refer to them as *parametric models*, and to their coefficients as *parameters*, since the primary application of these models is nonlinear statistical regression of performance data [See e.g., 4, 10, 11, 12, and references therein].

Figure 1: Parametric models: USL (red), Amdahl (green), Gustafson (blue), with parameter values exaggerated to distinguish their typical characteristics relative to ideal linear scaling (dashed). The horizontal line is the Amdahl asymptote at $\sigma^{-1}$.

Definition 1 (Speedup). If an amount of work $N$ is completed in time $T_1$ on a uniprocessor, the same amount of work can be completed in time $T_p < T_1$ on a $p$-way multiprocessor. The speedup $S_p = T_1/T_p$ is one measure of scalability.

2.1 Amdahl's law

For a single task that takes time $T_1$ to execute on a uniprocessor ($p = 1$), Amdahl's law [2] states that if the task can be *equipartitioned* onto $p$ processors, but contains an irreducible fraction of sequential work $\sigma \in [0, 1]$, then only the remaining portion of the execution time, $(1 - \sigma)T_1$, can be executed as $p$ parallel subtasks on $p$ physical processors. The bound on the achievable equipartitioned speedup [13] is given by the ratio

$$S_p(\sigma) = \frac{T_1}{\sigma T_1 + \left(\frac{1 - \sigma}{p}\right) T_1} \tag{1}$$

which simplifies to

$$S_p(\sigma) = \frac{p}{1 + \sigma(p - 1)}, \tag{2}$$

a rational function with $P(p) = p$ and $Q(p)$ a first-degree polynomial. As the processor configuration is increased, i.e., $p \to \infty$, the number of concomitantly smaller subtasks also increases and the speedup (1) approaches an asymptote,

$$S_p(\sigma) \sim \sigma^{-1}, \tag{3}$$

shown as the horizontal line in Fig. 1.

2.2 Gustafson's speedup

Amdahl's law assumes the size of the work is fixed. Gustafson's modification is based on the idea of scaling up the size of the work to match $p$.
This assumption results in the theoretical recovery of linear speedup:

$$S^G_p(\sigma) = \sigma + (1 - \sigma)p. \tag{4}$$

Equation (4) is a rational function with $Q(p) = 1$ and $P(p)$ a first-degree polynomial in $p$. Although (4) has inspired various efforts to improve parallel processing efficiency, achieving truly linear speedup has turned out to be difficult in practice. Most recently, (4) has been proposed as a way to optimize the throughput of multicore processors [14].

Definition 2 (Relative Capacity). As an alternative to the speedup, scalability can also be defined as the relative capacity, $C_p = X(p)/X(1)$, where $X(p)$ is the throughput with $p$ processors and $X(1)$ is the throughput of a single processor.

2.3 Universal Scalability Law (USL)

The USL model [4, 10, 11] is a rational function with $P(p) = p$ and $Q(p)$ a second-degree polynomial:

$$C_p(\sigma, \kappa) = \frac{p}{1 + \sigma(p - 1) + \kappa p(p - 1)}, \tag{5}$$

where the coefficients of the denominator have been regrouped into three terms with two parameters $(\sigma, \kappa)$. These terms can be interpreted as representing:

1. Ideal concurrency associated with linear scalability ($\sigma = \kappa = 0$)
2. Contention-limited scalability due to serialization or queueing ($\sigma > 0$, $\kappa = 0$)
3. Coherency-limited scalability due to inconsistent copies of data ($\sigma, \kappa > 0$)

Table 1 summarizes how these parameter values can be used to classify the scalability of different types of applications. Equation (5) subsumes (2) and (4). In particular, (2) is identical to (5) with $\kappa = 0$. The key distinction is that, unlike (2), (5) possesses a maximum at

$$p^* = \sqrt{\frac{1 - \sigma}{\kappa}}, \tag{6}$$

which follows from setting $dC_p/dp = 0$, since the numerator of the derivative reduces to $1 - \sigma - \kappa p^2$. The location of $p^*$ is controlled by the parameter values according to:

(a) $p^* \to 0$ as $\kappa \to \infty$

(b) $p^* \to \infty$ as $\kappa \to 0$

(c) $p^* \to \kappa^{-1/2}$ as $\sigma \to 0$

(d) $p^* \to 0$ as $\sigma \to 1$

The important implication is that beyond $p^*$ the throughput becomes *retrograde*. See Fig. 1.
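The three parametric models can be evaluated side by side. The following is a minimal Python sketch, assuming only equations (2), (4), (5) and (6); the function names and the demo parameter values are hypothetical choices for illustration, not values from the paper. It checks the USL peak location (6) against a brute-force grid search and shows Amdahl's speedup approaching the asymptote $\sigma^{-1}$ of (3).

```python
import math


def amdahl(p: float, sigma: float) -> float:
    """Amdahl speedup, equation (2): p / (1 + sigma (p - 1))."""
    return p / (1.0 + sigma * (p - 1.0))


def gustafson(p: float, sigma: float) -> float:
    """Gustafson's linear speedup, equation (4): sigma + (1 - sigma) p."""
    return sigma + (1.0 - sigma) * p


def usl(p: float, sigma: float, kappa: float) -> float:
    """USL relative capacity, equation (5)."""
    return p / (1.0 + sigma * (p - 1.0) + kappa * p * (p - 1.0))


def usl_peak(sigma: float, kappa: float) -> float:
    """Location p* of the USL maximum, equation (6); needs kappa > 0."""
    if kappa <= 0.0:
        raise ValueError("the USL has no finite maximum when kappa == 0")
    return math.sqrt((1.0 - sigma) / kappa)


if __name__ == "__main__":
    sigma, kappa = 0.05, 0.001  # hypothetical, exaggerated parameter values
    p_star = usl_peak(sigma, kappa)
    # Brute-force argmax of C_p on the grid p = 0.01, 0.02, ..., 200.
    grid = [0.01 * i for i in range(1, 20001)]
    p_best = max(grid, key=lambda p: usl(p, sigma, kappa))
    print(f"p* from (6): {p_star:.2f}; grid argmax: {p_best:.2f}")
    # With kappa = 0 the USL collapses to Amdahl's law.
    print(math.isclose(usl(64, sigma, 0.0), amdahl(64, sigma)))
    # Amdahl's speedup creeps toward 1/sigma = 20 as p grows.
    for p in (10, 100, 1000, 10**6):
        print(p, round(amdahl(p, sigma), 3))
```

Because (5) reduces to (2) when $\kappa = 0$, the `usl` function also covers Amdahl's law; the grid search is only a sanity check on (6), not a regression fit.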
Retrograde throughput is commonly observed in applications that involve shared-writable data (Case D in Table 1). In the subsequent sections, we attempt to provide deeper insight into the physical significance of (5) by recognizing its association with queueing theory.

3 Queueing Models

The *machine repairman* (Fig. 2) is a well-known queueing model [15] which represents an assembly line comprising a finite number of machines $p$ that break down after a mean lifetime $Z$. A repairman takes a mean time $S$ to repair a broken machine, and if multiple machines fail, the additional machines must queue for service in FIFO order. The queue-theoretic notation $M/M/1//p$ denotes exponentially distributed lifetimes and service periods, with a finite population of $p$ requests and finite buffering.

Table 1: Application domains for the USL model

| Case | Regime | Parameters | Example applications |
|---|---|---|---|
| A | Ideal concurrency | $\sigma = \kappa = 0$ | Single-threaded tasks; parallel text search; read-only queries |
| B | Contention-limited | $\sigma > 0$, $\kappa = 0$ | Tasks requiring locking or sequencing; message-passing protocols; polling protocols (e.g., hypervisors) |
| C | Coherency-limited | $\sigma = 0$, $\kappa > 0$ | SMP cache pinging; incoherent application state between cluster nodes |
| D | Worst case | $\sigma > 0$, $\kappa > 0$ | Tasks acting on shared-writable data; online reservation systems; updating database records |

Figure 2: Conventional machine-repairman queueing model comprising $p$ machines with mean uptime $Z$ (top) and a repair queue (bottom) with mean service time $S$.

3.1 Queueing Metrics

The performance characteristics of interest for the subsequent discussion are the throughput $X(p)$ and the residence time $R(p)$.

Definition 3 (Throughput). The throughput, $X = N/T$, is the number of tasks $N$ completed in time $T$.

Definition 4 (Residence Time). The mean residence time $R = W + S$ is the sum of the time spent waiting for service, $W$, plus the actual repair time, $S$, when the repairman services the machine.
On average, the number of machines that are "up" is $ZX$, while $Q$ are "down" (for repairs), such that the total number of machines in either state is given by $p = Q + ZX$. Rearranging this expression produces $Q = p - ZX$, and applying Little's law [15], $Q = XR$, gives

$$XR = p - ZX,$$

so that

$$R(p) = \frac{p}{X(p)} - Z \tag{7}$$

for the mean residence time at the repair station. Rearranging (7) provides an expression for the mean throughput of the repairman as a function of $p$:

$$X(p) = \frac{p}{R(p) + Z}. \tag{8}$$

Definition 5 (Mean RTT). The denominator in (8), $R(p) + Z$, is the mean *round-trip time* (RTT) for $M/M/1//p$.

Table 2: Interpretation of the queueing metrics in Fig. 2

| Metric | Repairman | Multiprocessor | Time share |
|---|---|---|---|
| $p$ | machines | processors | users |
| $Z$ | uptime | execution period | think time |
| $S$ | service time | transmission time | CPU time |
| $R(p)$ | residence time | interconnect latency | run-queue time |
| $X(p)$ | failure rate | bandwidth | throughput |

Since $M/M/1//p$ is an abstraction, it can be applied to different computational contexts. In the subsequent sections, the $p$ machines are taken to represent physical processors, and the time spent at the repair station is taken to represent the interconnect latency between the processors [16, 17] (see Table 2). This choice merely conforms to the conventions most commonly used in discussions of parallel scalability [11]; the generic nature of the queueing model means that any conclusions also hold for software scalability [See e.g., 10, Chap. 6].

3.2 Synchronous Queueing

We consider the special case of *synchronous* queueing in $M/M/1//p$. The queue-theoretic performance metrics defined in Sect. 3.1 are for the steady-state case, and therefore each corresponds to the statistical mean of the respective random variable.
Moreover, (7) does not give an explicit expression for $R(p)$, since its value depends on the value of $X(p)$, which is also unknown in steady state.

Definition 6 (Synchronized Requests). If all the machines in $M/M/1//p$ break down simultaneously, the queue length at the repairman is maximized, such that the residence time in definition 4 becomes $R(p) = pS$. This situation corresponds to one machine in service and $(p - 1)$ waiting for service, and provides a lower bound on (8) [18, 19, 20]:

$$\frac{p}{pS + Z} \le X(p). \tag{9}$$

In the context of multiprocessor scalability (Table 2), it is tantamount to all $p$ processors simultaneously issuing requests across the interconnect.

Remark 1 (A Paradox). Consider the case where all $p$ processors have the same deterministic $Z$ period. At the end of the first $Z$ period, all $p$ requests will enqueue at the interconnect (lower portion of Fig. 2) simultaneously. By definition, however, the requests are serviced serially, so they will return to the parallel execution phase (top portion of Fig. 2) separately and thereafter will always return to the interconnect at different times. In other words, even if the queueing system starts with synchronized visits to the interconnect, that synchronization is immediately lost after the first tour, because it is destroyed by the serial queueing process. The resolution of this paradox is discussed in Appendix B.

Definition 7 (Synchronous RTT). In the presence of synchronous queueing, the mean RTT of definition 5 becomes $pS + Z$.

Lemma 1. The relative capacity $C_p$ and the speedup $S_p$ give identical values for the same processor configuration $p$.

Proof. Let the uniprocessor throughput be defined as $X_1 = N/T_1$ and the multiprocessor throughput as $X_p = N/T_p$. Then

$$C_p = \frac{X(p)}{X(1)} = \frac{N}{T_p}\,\frac{T_1}{N} \equiv S_p$$

follows from definition 1.

Lemma 2 (Serial Fraction).
The serial fraction of Sect. 2.1 can be expressed in terms of $M/M/1//p$ metrics by the identity [6, 10, 11]:

$$\sigma = \frac{S}{S + Z} \;\to\; \begin{cases} 0 & \text{as } S \to 0,\ Z = \text{const.}, \\ 1 & \text{as } Z \to 0,\ S = \text{const.} \end{cases} \tag{10}$$

If $\sigma = 0$, then there is no communication between processors and the interconnect latency therefore vanishes (maximal execution time). Conversely, if $\sigma = 1$, then the execution time vanishes (maximal communication latency).

Proof. The RTT for a single (unpartitioned) task in Fig. 2 is $T_1 = S + Z$. The RTT for $p$ equipartitioned subtasks is $T_p = p(S/p) + Z/p = S + Z/p$. From definition 1, the corresponding speedup is

$$S_p = \frac{S + Z}{S + Z/p}. \tag{11}$$

Equating (11) with (1), we find

$$S = \sigma T_1 \quad \text{and} \quad Z = (1 - \sigma) T_1. \tag{12}$$

Eliminating $T_1$ produces

$$S = \left(\frac{\sigma}{1 - \sigma}\right) Z, \tag{13}$$

which, upon solving for $\sigma$, produces (10). See Appendix B for another perspective.

Definition 8. The quantity

$$\frac{Z}{S} = \frac{1 - \sigma}{\sigma} \tag{14}$$

is the *service ratio* for the $M/M/1//p$ model.

Theorem 1 (Speedup Duality). Let $(\sigma, \pi)$ be a continuous dual-parameter pair, where $\sigma$ is the serial fraction (10) and $\pi = 1 - \sigma$. The Amdahl speedup (2) is invariant under scalings of $(\sigma, \pi)$ by $p$.

Proof. Using definition 8, theorem 1 can be represented diagrammatically as:

$$\begin{array}{ccc} & \pi/\sigma = Z/S & \\ \swarrow & & \searrow \\ Z \mapsto Z/p,\ \ S \mapsto S & & Z \mapsto Z,\ \ S \mapsto pS \\ \searrow & & \swarrow \\ & S_p(\sigma) & \end{array} \tag{15}$$

The path on the left-hand side of (15) corresponds to reducing the single-task execution time by $p$ (subtasks) while the interconnect service time remains unchanged. This follows from definition 6, $R = pS$, except that the service time for each subtask is also reduced to $S/p$; hence $R = p(S/p) = S$. Conversely, the right-hand path of (15) corresponds to $p$ tasks, each with unchanged execution time $Z$, but scaled service time $R = pS$. Both paths result in Amdahl's law (2), which can be seen by first rewriting (11) in terms of the service ratio $Z/S$ (definition 8):

$$S_p = \frac{1 + Z/S}{1 + \frac{1}{p}\left(\frac{Z}{S}\right)}. \tag{16}$$

(a) Interpreting the denominator in (16) as belonging to the left-hand path of (15) leads to the expansion

$$S_p = \frac{S + Z}{\frac{S + Z}{p} + S - \frac{S}{p}} = \frac{(S + Z)/S}{\frac{1}{p}\left(\frac{S + Z}{S}\right) + \frac{p - 1}{p}}.$$

Collecting terms and simplifying produces

$$S_p = \frac{p}{1 + \left(\frac{S}{S + Z}\right)(p - 1)}, \tag{17}$$

which is identical to (2) upon substituting (10).

(b) Following the right-hand path in (15) leads to the expansion

$$S_p = \frac{pS(1 + Z/S)}{pS + Z} = \frac{p}{p\left(\frac{S}{S + Z}\right) + \frac{Z}{S + Z} + \frac{S}{S + Z} - \frac{S}{S + Z}}.$$

Collecting terms in the denominator produces

$$S_p = \frac{p}{p\left(\frac{S}{S + Z}\right) + 1 - \frac{S}{S + Z}}, \tag{18}$$

which also yields (2) via (10).

Remark 2. Theorem 1 anticipates the interpretation of Gustafson's law as a consequence of scaling the work size $Z \mapsto pZ$ in $M/M/1//p$. See corollary 2.

3.3 State-Dependent Service

We now consider a generalization of this machine-repairman model in which the residence time $R(p)$ includes an additional time that is proportional to the load on the server, expressed as the number of enqueued requests. Since the queue length is a canonical measure of the state of the system, the repairman becomes a state-dependent server [9, 15], denoted $M/G/1//p$. Let the additional service time be $S'$ in the state-dependent progression:

$$\begin{aligned} p &= 1: & R(1) &= 1\,S \\ p &= 2: & R(2) &= 2\,(S + S') \\ p &= 3: & R(3) &= 3\,(S + 2S') \\ p &= 4: & R(4) &= 4\,(S + 3S') \\ &\ \ \vdots & &\ \ \vdots \\ p &= p: & R(p) &= p\,\big(S + (p - 1)S'\big) \end{aligned} \tag{19}$$

The extra time spent by each machine at the repair station increases linearly with the number of additional "down" machines. There is no stretching of the mean service time, $S$, when repairing a single machine.

Remark 3. In general, it is expected that $S' < S$. It could, however, be a multiple of $S$, but that is clearly undesirable.
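The progression (19) and the synchronous throughput it implies can be checked numerically. The sketch below is a minimal Python illustration, assuming $S' = cS$ as in the proof of theorem 2 below; all function names and demo values are hypothetical. It builds $R(p)$ from (19), forms the relative capacity $X(p)/X(1)$ with $X(p) = p/(R(p) + Z)$ from (8), and compares it with the USL (5) using $\sigma = S/(S + Z)$ and $\kappa = c\sigma$. As a separate check on the synchronous bound (9) for $c = 0$, it also runs exact mean value analysis (MVA) of $M/M/1//p$; MVA is a standard technique for closed product-form queues that the paper itself does not use.

```python
def residence_sync(p: int, s: float, s_prime: float) -> float:
    """Synchronous residence time from the progression (19): p (S + (p - 1) S')."""
    return p * (s + (p - 1) * s_prime)


def relative_capacity(p: int, s: float, z: float, s_prime: float) -> float:
    """C_p = X(p) / X(1), with X(p) = p / (R(p) + Z) as in (8)."""
    x_p = p / (residence_sync(p, s, s_prime) + z)
    x_1 = 1.0 / (residence_sync(1, s, s_prime) + z)
    return x_p / x_1


def usl(p: int, sigma: float, kappa: float) -> float:
    """USL relative capacity, equation (5)."""
    return p / (1.0 + sigma * (p - 1) + kappa * p * (p - 1))


def mva_throughput(p: int, s: float, z: float) -> float:
    """Exact MVA throughput of the M/M/1//p repairman (state-independent S)."""
    q = 0.0  # mean number at the repairman with one fewer machine
    x = 0.0
    for n in range(1, p + 1):
        r = s * (1.0 + q)  # arrival theorem: an arrival sees Q(n - 1)
        x = n / (r + z)    # equation (8)
        q = x * r          # Little's law at the repair station
    return x


if __name__ == "__main__":
    s, z, c = 0.2, 3.0, 0.5  # hypothetical S, Z and S'/S
    sigma = s / (s + z)      # equation (10)
    kappa = c * sigma        # kappa = c sigma, as in theorem 2
    for p in (1, 2, 8, 32, 128):
        print(p, relative_capacity(p, s, z, c * s), usl(p, sigma, kappa))
    # For c = 0, R(p) = pS and p / (pS + Z) is the synchronous bound (9).
    for p in (1, 4, 16, 64):
        print(p, p / (p * s + z), mva_throughput(p, s, z))
```

The two columns in the first loop should agree to rounding error; in the second loop the MVA throughput never falls below the bound and coincides with it at $p = 1$.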
Some example applications of the state-dependent $M/G/1//p$ model in a computational context include:

(a) Pairwise Exchange: Modeling the performance degradation due to combinatoric pairwise exchange of data between $p$ multiprocessor caches or cluster nodes. See Sect. 5.

(b) Broadcast Protocol: If any processor broadcasts a request for data, the other $(p - 1)$ processors must stop and respond before computation can continue [11].

(c) Virtual Memory: Each task is a program with its own working set of memory pages. Page replacement relies on a higher-latency device, such as a disk. As the number of programs $p$ increases, page-replacement latency causes the system to "thrash", such that the throughput becomes retrograde [9]. Cf. Fig. 2.

4 Parametric Models as Queueing Bounds

In this section, we show that the parametric scalability models in Section 2 correspond to certain throughput bounds on the queueing models in Section 3.

Theorem 2 (Main Result). The universal scalability law (5) is equivalent to synchronous relative throughput in $M/G/1//p$.

Proof. Let $S' = cS$ in (19), with $c$ a positive constant of proportionality. The residence time for state-dependent, synchronous requests becomes

$$R(p) = pS + c\,p(p - 1)S. \tag{20}$$

Substituting (20) into definition 2, via (8) with $X(1) = 1/(S + Z)$, gives

$$C_p = \frac{p(S + Z)}{pS + c\,p(p - 1)S + Z}, \tag{21}$$

which can be rewritten as

$$C_p = \frac{p(S + Z)}{(p - 1)S + (S + Z) + c\,p(p - 1)S} = \frac{p(S + Z)}{(S + Z)\left[1 + (p - 1)S(S + Z)^{-1} + c\,p(p - 1)S(S + Z)^{-1}\right]}.$$

Collecting terms and simplifying produces

$$C_p = \frac{p}{1 + \sigma(p - 1) + c\,\sigma p(p - 1)}, \tag{22}$$

where we have applied the identity for the serial fraction in lemma 2. Combining the coefficients of the third term in the denominator of (22) as $\kappa = c\sigma$ yields (5).

Remark 4. Since $c$ is an arbitrary positive constant, the parameter $\kappa = c\sigma$ in (5) can be unbounded, whereas $\sigma < 1$ always.

Remark 5.
The state-dependence of $R(p)$ in (20) does not change lemma 2, since $\sigma$ is determined by $S$ and $Z$ only, and both of those queueing metrics are constants.

Corollary 1 (Amdahl's law). Amdahl's law (2) is the synchronous bound on relative throughput in $M/M/1//p$.

Proof. Follows immediately from the proof of theorem 2 with $c = 0$ in (21).

Remark 6. Elsewhere [6, 10, 11], corollary 1 was proven as a separate theorem.

Corollary 2 (Gustafson's law). Gustafson's law (4) corresponds to the rescaling $Z \mapsto pZ$ in $M/M/1//p$.

Proof. Since Gustafson's result is a modification of Amdahl's law, we start with (21) and let $c = 0$. Under $Z \mapsto pZ$, the scalability function becomes

$$C_p = \frac{p(S + pZ)}{pS + pZ} = \frac{S + pZ}{S + Z} = \frac{S + p(Z + S - S)}{S + Z}.$$

Once again, after application of lemma 2, this simplifies to

$$C_p = \sigma + p - \sigma p, \tag{23}$$

which is identical to the linear speedup $S^G_p$ in (4).

Remark 7. Rewriting (23) as $C'_p \equiv C_p/(1 - \sigma) = p + \sigma/(1 - \sigma)$, we note that the additive constant $\sigma/(1 - \sigma) = S/Z$ is the inverse of the service ratio in definition 8. In the context of $M/M/1//p$, rescaling the execution time, $Z \mapsto pZ$, prior to partitioning, adds a *fixed* overhead ($pS/pZ$) to an otherwise linear function of $p$, whereas the overhead in Amdahl's law is an *increasing* function of $p$.

The results of this section have also been confirmed using event-based simulations [12].

5 Extrema and Universality

Ideal linear scalability ($C_p \sim p$, for $p > 0$) has a positive and constant derivative. More realistically, large processor configurations ($p \to \infty$) are expected to approach saturation, i.e., the asymptote $C_{\text{sat}} \sim \sigma^{-1}$.
If a physical computational system develops a scalability maximum at $p^*$ in the positive quadrant ($p > 0$), then $C_p$ must have a negative derivative for $p > p^*$, and relative computational capacity therefore falls below the saturation value, $C_p < C_{\text{sat}}$; i.e., throughput performance becomes *retrograde*. Since this behavior is undesirable, there is little virtue in characterizing the maximum beyond the ability to quantify its location ($p^*$) using a given scalability model. This observation leads to the following conjecture [See also 10, p. 65], which we now prove.

Conjecture 1 (Universality). For a rational function $C_p = P(p)/Q(p)$ with $P(p) = p$, a necessary and sufficient condition for $C_p$ to be a model of computational scalability is $Q(p) = 1 + a_1 p + a_2 p^2$ with coefficients $a_1, a_2 > 0$.

The simplest line of proof comes from considering latency rather than throughput. See Appendix D for further discussion.

Proof. Ideal latency reduction, $T_p = T_1/p$, is a hyperbolic function and therefore has no extrema. The additional latency due to pairwise interprocessor communication introduces the combinatoric term $\binom{p}{2} = p(p - 1)/2$, such that the total latency becomes

$$T_p(\kappa) = \frac{T_1}{p} + \frac{\kappa T_1}{2}(p - 1) \tag{24}$$

with constant $\kappa > 0$. Equation (24) has a unique minimum for $p > 0$ (Fig. 3). Substituting (24) into the speedup definition 1 produces

$$S_p(\kappa) = \frac{p}{1 + \kappa p(p - 1)}, \tag{25}$$

where we have absorbed the factor of 2 into $\kappa$. $S_p(\kappa)$ now possesses a unique maximum in the positive quadrant ($p > 0$). Thus, the quadratic term in the denominator of (25) is necessary for the existence of a maximum, but it is not sufficient, because $S_p(\kappa)$ does not exhibit the Amdahl asymptote $\sigma^{-1}$ when $\kappa = 0$. However, the two-parameter latency

$$T_p(\sigma, \kappa) = \frac{T_1}{p} + \sigma T_1\left(\frac{p - 1}{p}\right) + \frac{\kappa T_1}{2}(p - 1) \tag{26}$$

does introduce the required term into (25), and the resulting speedup is, by lemma 1, identical to (5).
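The latency route to (5) can also be checked numerically. This is a minimal Python sketch, assuming only (24)-(26) and definition 1; the function names and demo values are hypothetical. Setting $dT_p/dp = -T_1/p^2 + \kappa T_1/2 = 0$ in (24) places its minimum at $p = \sqrt{2/\kappa}$ (a one-line derivation added here, not stated in the text), and $T_1/T_p(\sigma, \kappa)$ reproduces (5) once the factor of 2 is absorbed, i.e., with $\kappa/2$ in place of $\kappa$.

```python
import math


def latency_pairwise(p: float, t1: float, kappa: float) -> float:
    """Equation (24): ideal reduction T1/p plus pairwise-exchange latency."""
    return t1 / p + kappa * t1 / 2.0 * (p - 1.0)


def latency_two_param(p: float, t1: float, sigma: float, kappa: float) -> float:
    """Equation (26): adds the synchronous (serial) term sigma T1 (p - 1)/p."""
    return t1 / p + sigma * t1 * (p - 1.0) / p + kappa * t1 / 2.0 * (p - 1.0)


def usl(p: float, sigma: float, kappa: float) -> float:
    """USL relative capacity, equation (5)."""
    return p / (1.0 + sigma * (p - 1.0) + kappa * p * (p - 1.0))


if __name__ == "__main__":
    t1, sigma, kappa = 1.0, 0.1, 0.5  # hypothetical values
    # Minimum of (24): dT/dp = -T1/p**2 + kappa T1 / 2 = 0, so p = sqrt(2 / kappa).
    p_min = math.sqrt(2.0 / kappa)
    grid = [0.001 * i for i in range(100, 5001)]  # p in [0.1, 5]
    p_grid = min(grid, key=lambda p: latency_pairwise(p, t1, kappa))
    print(f"analytic minimum {p_min:.3f}, grid minimum {p_grid:.3f}")
    # Speedup T1 / T_p from (26) equals the USL with kappa/2 (factor 2 absorbed).
    for p in (1, 2, 4, 8, 16):
        print(p, t1 / latency_two_param(p, t1, sigma, kappa), usl(p, sigma, kappa / 2.0))
```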
The second term in (26) can be interpreted as the fixed time it takes any one processor to broadcast a request for data and wait for the remaining fraction of processors, $(p - 1)/p$, to respond simultaneously. This is also another way to view synchronous queueing in $M/M/1//p$. It simply introduces a lower bound, $\sigma T_1$, on the latency reduction. The third term in (26) is analogous to Brooks's law [21], "Adding more manpower to a late software project makes it later," with $p$ interpreted as people rather than processors. Here, for example, it can be interpreted as the latency due to the pairwise exchange of data to maintain cache coherency in a multiprocessor.

6 Conclusion

Several ubiquitous scalability models, viz., Amdahl's law, Gustafson's law and the universal scalability law (USL), belong to a class of rational functions. Treated as parametric models, they are neither ad hoc nor unphysical. Rather, they correspond to certain bounds on the relative throughput of the machine-repairman queueing model. In the most general case, the main theorem 2 states that the USL model corresponds to the synchronous throughput bound of a load-dependent machine repairman. The USL subsumes both Amdahl's law and Gustafson's law as corollaries of theorem 2. As well as providing a more physical basis for these scalability models, the queue-theoretic interpretation has practical significance: it facilitates prediction of response-time scalability using (7) and provides deeper insight into potential performance-tuning opportunities.

Figure 3: A minimum occurs in the total latency $T(p)$ due to an increasing pairwise-exchange time being added to the initial latency reduction.

Appendices

A Why Universal?

The term "universal" is intended to convey the notion that the USL (defined in Sect. 2.3) can be applied to any computer architecture, from multi-core to multi-tier.
This follows from the fact that there is nothing in (5) that explicitly represents any particular system architecture or interconnect topology. That information is present, but it is encoded in the numeric values of the parameters $\sigma$ and $\kappa$. The same could be said for Amdahl's law, but the difference is that, being a rational function with linear $Q(p)$, Amdahl's law cannot predict the retrograde scalability commonly observed in performance evaluation measurements [4, 10]. As proven in Sect. 5, the USL is both necessary and sufficient to model all these effects. The USL does not exclude defining a more general or more complex scalability model to account for such details as heterogeneous processors or the functional form of degradation beyond $p^*$, but any such model must include the USL as a limiting case.

The best analogy might be to regard the USL as being akin to Newton's *universal law of gravitation*. Here, "universal" means generally applicable to any gravitating bodies. Newton's theory has been superseded by a more sophisticated theory of gravitation: Einstein's general theory of relativity. Einstein's theory, however, does not negate Newton's theory but rather contains it as a limiting case, when space-time is flat. Since space-time is flat in all practical applications, NASA uses Newton's equations to calculate the flight paths of all its missions.

B Synchronous Queueing

The proofs of theorem 2 (USL) and corollary 1 (Amdahl) employ mean value equations for metrics which characterize steady-state conditions. As noted in remark 1, synchronized queueing cannot be maintained in steady state. Synchronization and steady state are not compatible concepts, because the former is an *instantaneous* effect, whereas the analytic solutions we seek are only valid in long-run equilibrium.
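The paradox of remark 1 is easy to reproduce with a tiny discrete-event simulation. The sketch below is ours, not the simulation of [12]: it assumes a deterministic $Z$ (as in remark 1) and, for readability, also a deterministic $S$, a single FIFO repair station, and all $p$ requests arriving together at $t = 0$. After the first tour, every arrival at the repairman occurs at a distinct instant, because the single server releases requests one at a time.

```python
import heapq


def simulate_arrivals(p: int, s: float, z: float, visits: int) -> list[float]:
    """Return repair-station arrival times for the first `visits` arrivals.

    All p machines arrive at t = 0 (synchronized). Service is FIFO with
    deterministic time S; each machine returns after a deterministic Z.
    """
    # Heap of (arrival_time, machine_id); ties are broken by machine id.
    events = [(0.0, m) for m in range(p)]
    heapq.heapify(events)
    server_free = 0.0
    arrivals = []
    while len(arrivals) < visits:
        t_arrive, m = heapq.heappop(events)
        arrivals.append(t_arrive)
        start = max(t_arrive, server_free)  # wait if the repairman is busy
        server_free = start + s             # departure time of this request
        heapq.heappush(events, (server_free + z, m))
    return arrivals


if __name__ == "__main__":
    p, s, z = 4, 1.0, 10.0  # hypothetical values
    times = simulate_arrivals(p, s, z, visits=12)
    print("first tour:", times[:p])   # four simultaneous arrivals at t = 0.0
    print("later tours:", times[p:])  # staggered by the serial service
    print("all later arrivals distinct:", len(set(times[p:])) == len(times[p:]))
```

Because arrivals are popped in time order and the server processes them one at a time, departures are strictly increasing, so the synchronized start can never recur without an extra buffer of the kind described next.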
Elsewhere [10, 12], we have shown that a necessary requirement for maintaining synchronous queueing is to introduce another buffer in addition to the waiting line ($W_1$) at the repairman. If the extra buffer represents a *post*-repair collection point, such that each repaired machine (completed request) is held "off-line" until *all* $p$ machines are repaired, then synchronous queueing is maintained provided the $Z$ periods are i.i.d. deterministic. The extra buffer acts as a *barrier synchronizer*. Unfortunately, this is a $D/M/1//p$ queue, whereas the proof of corollary 1 is based on $M/M/1//p$. However, the repairman performance metrics are robust [22], so our results should also hold for $G/M/1//p$.

In more recent simulation experiments [12], we have shown that this restriction on $Z$ periods can be lifted by positioning the buffer as a *pre*-repair waiting room. Instead of requiring all $p$ machines to break down and enqueue simultaneously, we allow any number less than $p$ to fail, but with the added constraint that when any machine invokes service at the repairman, all other machines (or executing processors) must suspend operations as well, i.e., visit the suspension buffer. Under these conditions, $Z$ can be $G$-distributed. Because this *intermittent synchronization* occurs with much higher frequency and for much shorter average time periods than barrier synchronization, the potential impact of the $G$-distributed tails on the $Z$ periods is truncated.

Synchronization can be treated as a two-state Markov process, e.g., A: *parallel* and B: *serial*, where the B state includes those processes that are suspended as well as those waiting for service. If $\lambda_A$ is the transition rate for $A \to B$ and $\lambda_B$ for $B \to A$, then the probability of being in state B is given by

$$\Pr(B) = \frac{\lambda_A}{\lambda_A + \lambda_B}. \tag{27}$$

In the previous scenario, it takes only a single machine failure to suspend all other machines.
The failure rate is therefore $\lambda_A = 1/Z$ and the service rate is $\lambda_B = 1/S$. Substituting these into (27) produces an expression identical to the serial fraction $\sigma$ in (10). In state B, some number $p_1$ of machines are enqueued and the remainder, $p_2 = p - p_1$, are suspended. On average, any machine can expect to spend time $R = (p_1 + p_2)S$ to complete repairs. Hence, the total serial time is $R = pS$, which is the quantity that appears in the proofs.

C Queueing Models of Amdahl's Law

Others have also considered Amdahl's law from a queue-theoretic standpoint [See, e.g., 7, 8]. Of these, [8] is closest to our discussion, so we briefly summarize the differences.

First, the motivations are quite different. The author of [8], like many other authors, seeks clever ways to *defeat* Amdahl's law, in the sense of Gustafson (Sect. 2.2), whereas we are trying to understand Amdahl's law by providing it with a more fundamental physical interpretation. Ironically, both investigations invoke queue-theoretic models to gain more insight into the pertinent issues: an *open* ($M/M/m$) queue in [8] and a *closed* queue (Fig. 2) in this paper.

Second, [8] undertakes two steps to define an alternative speedup function:

1. An attempt to unify both the Amdahl and Gustafson equations into a single speedup function.
2. An extension of that unified speedup function to include waiting times.

The overarching goal is to find waiting-time optima for this unified speedup function. The unification step is achieved by purely algebraic manipulations and does not rest on any queue-theoretic arguments. The open queueing model provides an ad hoc means for incorporating waiting times as a function of queue length. The subsequent analysis is based entirely on simulation results and thereafter departs significantly from the analytic approach of this paper.
By virtue of our approach, we have shown that both the Amdahl and Gustafson scaling laws are unified by the same queueing model, viz., the machine-repairman model. Moreover, Corollary 1 is a lower bound on throughput, namely the synchronous throughput, and therefore represents worst-case scalability. With this physical interpretation, it follows immediately that Amdahl's law can be "defeated" more conveniently than proposed in [8], simply by requiring that all requests be issued asynchronously [12].

D Remarks on the Proof of Conjecture 1

The proof in Section 5 is simplified by using the additive properties of the latency function $T_p$ rather than inverting the rational function $f(p) = R(p)/Q(p)$ directly. Since $Q(p)$ is quadratic in $p$, the temptation is to consider the inverse $f^{-1}$ and use the fact that a general quadratic function has a minimum.

To see why this approach runs into difficulties, consider the simplified representation of (5):

$$ f(p) = \frac{p}{1 + p + p^2} \qquad (28) $$

with all unit coefficients. Equation (28) is not invertible in the formal sense because $f$ is not one-to-one, even in the positive quadrant, so $f^{-1}$ is multivalued. We can, however, consider the full inverse with its branches, as shown in Fig. 4. The principal branch is shown in light blue, with the other branches occurring at the extrema of $f^{-1}$. These correspond to the extrema of (28), which occur at $p = \pm 1$, where $f(1) = 1/3$ and $f(-1) = -1$. Hence, $f^{-1}$ is complex-valued ($f^{-1} \in \mathbb{C}$) for all arguments $p > 1/3$.

Figure 4: The complete rational function (28) (red) and its inverse (blue).

Alternatively, choosing the denominator to be a perfect square,

$$ \frac{p}{1 + 2p + p^2} \qquad (29) $$

the function can be expressed either as a product of linear factors:

$$ \frac{p}{1 + 2p + p^2} = \left( \frac{p}{1 + p} \right) \left( \frac{1}{1 + p} \right) $$

or as a partial fraction expansion:

$$ \frac{p}{1 + 2p + p^2} = \frac{1}{1 + p} - \frac{1}{(1 + p)^2} $$

Although such decompositions are suggestive of the need for two parameters (Fig. 5), they would seem to obscure the proof of Theorem 1 rather than illuminate it. Using the latency function $T_p$ and then "inverting" it to produce the corresponding throughput scaling via Lemma 1 avoids these problems.

E Acknowledgments

I thank Wen Chen for finding several typos in the original manuscript.

Figure 5 (plot of f(x) against x): Amdahl scaling (red), envelope function $(1 + p)^{-1}$ (green), their convolution (solid blue) and equation (28) (dashed blue). The small difference in the latter two curves arises from the factor of 2 in the denominator of equation (29).

References

[1] Wikipedia. Rational function. en.wikipedia.org/wiki/Rational_function, April 2008.
[2] G. Amdahl. Validity of the single processor approach to achieving large scale computing capabilities. Proc. AFIPS Conf., 30:483–485, Apr. 18–20, 1967.
[3] J. L. Gustafson. "Reevaluating Amdahl's law". Comm. ACM, 31(5):532–533, 1988.
[4] N. J. Gunther. "A simple capacity model for massively parallel transaction systems". In Proc. CMG Conf., pages 1035–1044, San Diego, California, December 1993.
[5] F. P. Preparata. Should Amdahl's law be repealed? In ISAAC '95: Proceedings of the 6th International Symposium on Algorithms and Computation, page 311, London, UK, Dec. 4–6, 1995. Springer-Verlag.
[6] N. J. Gunther. A new interpretation of Amdahl's law and Geometric scalability. abs/cs/0210017, Oct. 2002.
[7] L. Kleinrock and J.-H. Huang. On parallel processing systems: Amdahl's law generalized and some results on optimal design. IEEE Trans. Software Eng., 18(5):434–447, 1992.
[8] R. D. Nelson. Including queueing effects in Amdahl's law. Comm. ACM, 39(12es):231–238, 1996.
[9] N. J. Gunther. "Path integral methods for computer performance analysis". Information Processing Letters, 32(1):7–13, 1989.
[10] N. J. Gunther. Guerrilla Capacity Planning. Springer-Verlag, Heidelberg, Germany, 2007.
[11] N. J. Gunther. Unification of Amdahl's law, LogP and other performance models for message-passing architectures. In PDCS 2005, International Conference on Parallel and Distributed Computing Systems, pages 569–576, Phoenix, AZ, November 14–16, 2005.
[12] J. Holtman and N. J. Gunther. Getting in the zone for successful scalability. In Proc. CMG Conf., to appear, Las Vegas, NV, December 7–12, 2008.
[13] W. Ware. The ultimate computer. IEEE Spectrum, 9:89–91, March 1972.
[14] H. Sutter. Break Amdahl's law! www.ddj.com/hpc-high-performance-computing/205900309, Jan. 2008.
[15] D. Gross and C. Harris. Fundamentals of Queueing Theory. Wiley, 1998.
[16] D. A. Reed and R. M. Fujimoto. Multicomputer Networks: Message Based Parallel Processing. MIT Press, Boston, Mass., 1987.
[17] M. Ajmone-Marsan, G. Balbo, and G. Conte. Performance Models of Multiprocessor Systems. MIT Press, Boston, Mass., 1990.
[18] R. R. Muntz and J. W. Wong. Asymptotic properties of closed queueing network models. In Proc. Ann. Princeton Conf. on Inf. Sci. and Sys., pages 348–352, 1974.
[19] J. C. S. Lui and R. R. Muntz. Computing bounds on steady state availability of repairable computer systems. Journal of the ACM, 41(4):676–707, 1994.
[20] J. Zahorjan, K. C. Sevcik, D. L. Eager, and B. Galler. Balanced job bound analysis of queueing networks. Comm. ACM, 25(2):134–141, Feb. 1982.
[21] F. P. Brooks. The Mythical Man-Month. Addison-Wesley, Reading, MA, anniversary edition, 1995.
[22] B. D. Bunday and R. E. Scraton. The G/M/r machine interference model. European Journal of Operations Research, 4:399–402, 1980.
