Geometry-aware similarity metrics for neural representations on Riemannian and statistical manifolds

Similarity measures are widely used to interpret the representational geometries used by neural networks to solve tasks. Yet, because existing methods compare the extrinsic geometry of representations in state space, rather than their intrinsic geome…

Authors: N Alex Cayco Gajic, Arthur Pellegrino

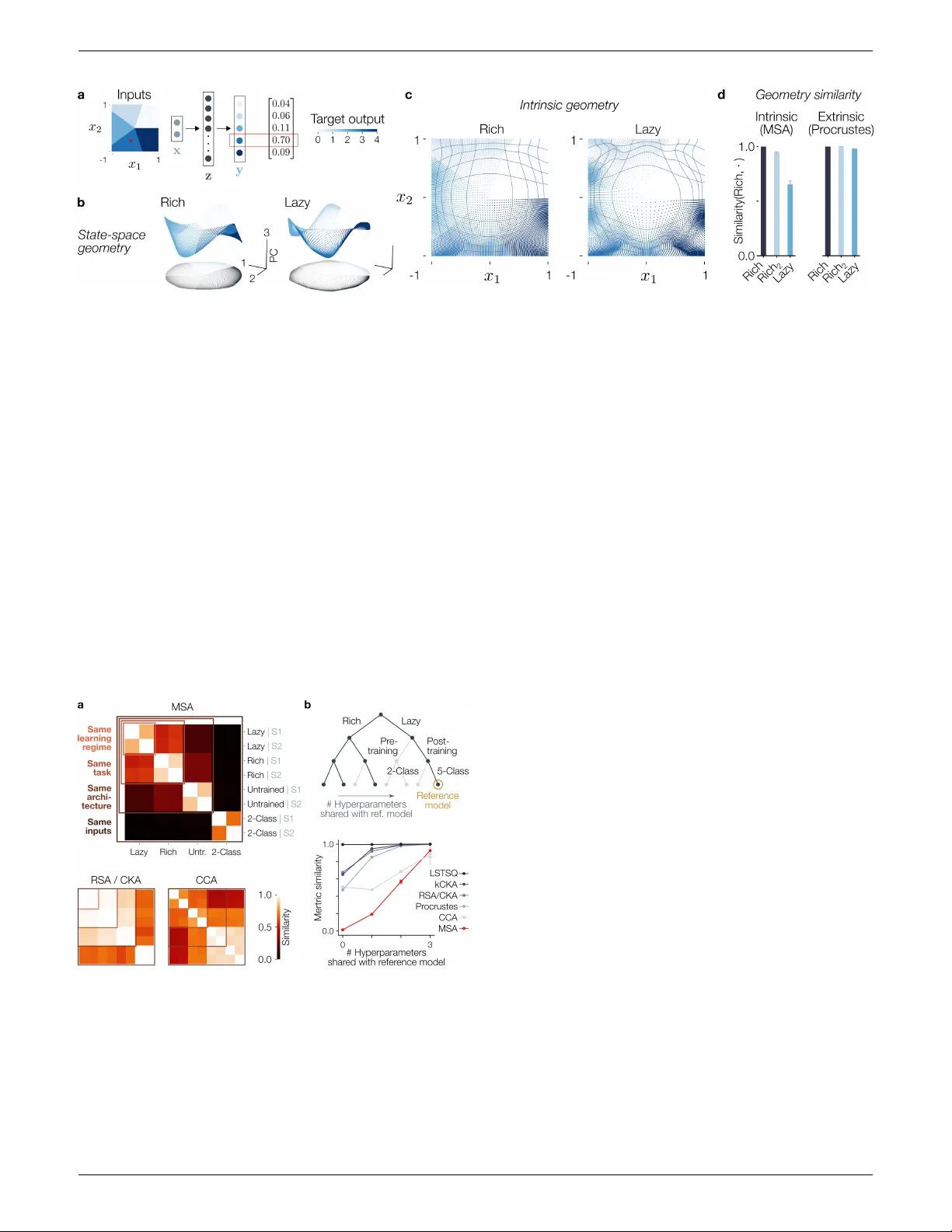

N Alex Cayco Gajic (1, †), Arthur Pellegrino (2, 3, †)

† Equal contribution. Author order determined randomly.

1. Département d'Etudes Cognitives, École Normale Supérieure – PSL
2. Gatsby Unit, University College London
3. Département d'Informatique, École Normale Supérieure – PSL

Correspondence to: arthur.pellegrino@ens.fr

Abstract

Similarity measures are widely used to interpret the representational geometries used by neural networks to solve tasks. Yet, because existing methods compare the extrinsic geometry of representations in state space, rather than their intrinsic geometry, they may fail to capture subtle yet crucial distinctions between fundamentally different neural network solutions. Here, we introduce metric similarity analysis (MSA), a novel method which leverages tools from Riemannian geometry to compare the intrinsic geometry of neural representations under the manifold hypothesis. We show that MSA can be used to i) disentangle features of neural computations in deep networks with different learning regimes, ii) compare nonlinear dynamics, and iii) investigate diffusion models. Hence, we introduce a mathematically grounded and broadly applicable framework to understand the mechanisms behind neural computations by comparing their intrinsic geometries.

1 Introduction

Neural networks have historically been seen as "black-box" systems whose internal computations are inherently opaque. However, recent progress in mechanistic interpretability has led to broad efforts to reverse-engineer neural networks by analysing their weights and activations. These findings have revealed that subtle variations in initialization (Y. Li et al., 2015; Wang et al., 2018), architecture (Maheswaranathan et al., 2019), or learning regime (Chizat, Oyallon, and Bach, 2019; Jacot, Gabriel, and Hongler, 2018; Paccolat et al., 2021; Saxe, McClelland, and Ganguli, 2019; Woodworth et al., 2020) can produce different learned representations in otherwise similarly performant models. Importantly, such representational geometry has been shown to determine generalization properties (Chou et al., 2025; Johnston and Fusi, 2023; Q. Li, Sorscher, and Sompolinsky, 2024; Shang, Kreiman, and Sompolinsky, 2025; Woodworth et al., 2020). Understanding the internal mechanisms underlying a particular neural computation thus requires a principled way to contrast networks with potentially different architectures.

Towards this end, a multitude of similarity measures have been proposed to compare the hidden layer activations of neural networks (Klabunde et al., 2025). Widely used methods include linear regression, Procrustes, centred kernel alignment (CKA), canonical correlation analysis (CCA), representational similarity analysis (RSA), and their variants (Boix-Adsera et al., 2022; Grave, Joulin, and Berthet, 2019; Harvey, Lipshutz, and Williams, 2024; Kornblith et al., 2019; Kriegeskorte, Mur, and Bandettini, 2008; Raghu et al., 2017; Williams et al., 2021). The core idea behind these methods is to align or correlate the representational geometries of hidden layer activations as they are embedded in state-space, to measure their similarity.

By focusing on the state-space geometries, these methods do not explicitly leverage the "manifold hypothesis": the widely accepted view that input data lie on an intrinsically low-dimensional manifold (Tenenbaum, Silva, and Langford, 2000). An alternate approach instead examines neural network computations in terms of how they transform this input manifold.
For example, successive layers in a deep network progressively warp the input manifold to extract task-relevant features. Such warping can be studied formally using tools from Riemannian geometry, both in neural networks (Brandon, Angus Chadwick, and Pellegrino, 2025; Hauser and Ray, 2017; Kaul and Lall, 2019; Poole et al., 2016), as well as in dynamical systems models (Pellegrino and Chadwick, 2025), where the input manifold is instead warped through time.

In this Riemannian view, the extrinsic geometry of a representation (e.g., its embedding) reflects less about internal computations than its intrinsic geometry, which characterises the pairwise relationships between points along the manifold. For example, a two-dimensional input may be embedded in ambient space as a flat plane or curved into a Swiss roll (Fig. 1). In this case, Procrustes or RSA will report high dissimilarity, despite all angles and distances along the manifolds being identical. Conversely, one can design embeddings that are highly similar extrinsically but whose intrinsic geometries mismatch. In the following sections, we will show that neural networks can likewise exhibit substantial discrepancies between their intrinsic and extrinsic geometry. This illustrative example reveals the need for a Riemannian approach that targets the similarity of representations' intrinsic geometries, not their embeddings.

Preprint: Metric similarity analysis

Fig. 1: Intrinsic vs. extrinsic geometric similarity.

To address this gap, we introduce metric similarity analysis (MSA), a new framework to compare the intrinsic geometry of neural network computations from the lens of Riemannian geometry. We highlight MSA as a mathematically grounded method that satisfies the conditions to be a distance function defining a topological metric space over Riemannian metrics of a manifold. Our theoretical contributions are:

1. A Riemannian similarity measure. MSA leverages the pullback metric to define a similarity between the exact intrinsic geometries of manifolds. We prove several mathematical results regarding MSA, including invariance to state-space rotations and choice of intrinsic coordinates.
2. A novel distance between symmetric positive definite (SPD) matrices. In order to compare Riemannian metrics arising from different representations, we propose the spectral ratio as a distance on the SPD cone that is well suited for similarity analysis.

We apply this theory to:

• Rich and lazy learning. We show that common methods report high similarity between rich and lazy deep networks, two learning regimes that are known to use different computational strategies. In contrast, MSA distinguishes clearly between rich vs. lazy representations.
• Dynamical systems. We train state-space models (SSMs) and recurrent neural networks (RNNs) on a sequence working memory task, and show that MSA uncovers differences in their internal computations.
• Diffusion model. In large-scale text-to-image diffusion models, we study how guidance affects latent diffusion dynamics by using MSA to compare the information geometries of their statistical manifolds.

Thus, we introduce a new framework anchored in Riemannian geometry, with which we show that identifying fundamentally distinct neural computations often requires uncovering minute differences in representational geometry.

Related work

Distances between representations. Similarity measures compare the hidden-layer activations of two networks $\phi_1 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_1}$ and $\phi_2 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_2}$ by defining a distance $d(\phi_1, \phi_2) \geq 0$ (or inversely, a similarity; see Klabunde et al. (2025) for a review).
Methods such as CKA, CCA, RSA and Procrustes first sample the activations of each network given a finite set of $k$ inputs: $M_1 = [\phi_1(x_1), \ldots, \phi_1(x_k)]$ and $M_2 = [\phi_2(x_1), \ldots, \phi_2(x_k)]$ for $x_i \in \mathbb{R}^{n_{\mathrm{in}}}$. The distance between $\phi_1$ and $\phi_2$ is treated as a distance between $M_1$ and $M_2$ as point clouds embedded in state space. For example, Procrustes finds the minimum distance over rotations of one point cloud to another: $d_{\mathrm{Proc}}(M_1, M_2) = \min_{Q \in O(n)} \| M_1 - M_2 Q \|_F^2$. In this work, we do not perform any such sampling, and instead define a distance between the functions implemented by the networks using the pullback metrics of $\phi_1$ and $\phi_2$. This enables an exact comparison of the intrinsic geometries of the manifolds, factoring out the embedding entirely.

Distances between nonlinear dynamics. A number of methods have recently been developed which go beyond static geometry to instead compare dynamical systems models, whether by learning diffeomorphic mappings between vector fields (Chen et al., 2024; Sagodi and I. M. Park, 2025), aligning Koopman modes in function space (Huang et al., 2025; Ostrow et al., 2023; Zhang et al., n.d.), or computing the Wasserstein distance between embeddings of local dynamics (Gosztolai et al., 2025). While MSA is not dynamics-specific, it can nonetheless be used to compare the geometry and dynamics of input-driven systems. Furthermore, its non-specificity enables comparison across model classes.

Riemannian geometry of neural networks.
Riemannian geometry has numerous applications in machine learning, including: metric learning (Gruffaz and Sassen, 2025), natural gradients (Amari, 1998), mechanistic interpretability (Brandon, Angus Chadwick, and Pellegrino, 2025; Hauser and Ray, 2017; Pellegrino and Chadwick, 2025), and characterizing the latent space of deep generative models (Arvanitidis, Hansen, and Hauberg, 2017; Y.-H. Park et al., 2023; Shao, Kumar, and Thomas Fletcher, 2018). One recent paper applied information geometry to compare probabilistic models in the space of their predictions, i.e., outputs (Mao et al., 2024). However, MSA is the first method to define a Riemannian similarity measure on hidden layer representations. To this end, we introduce the spectral ratio (SR), a bounded distance on the SPD cone for similarity analysis. Unlike the classical affine-invariant Riemannian metric (AIRM), the boundedness of the SR guarantees similarities in $[0, 1]$. Nevertheless, it would be straightforward to define an AIRM-based variant of MSA, as has been done for RSA when comparing representational covariance matrices (Shahbazi et al., 2021).

2 Methods

In this section we define metric similarity analysis (MSA), which provides an exact comparison of neural representations under the manifold hypothesis. We begin by introducing a neural network as a mapping from an input manifold to a representational manifold in activation space. We then review the pullback metric as an exact characterization of the intrinsic geometry of this mapping at a point on the manifold. To compare two networks, we introduce the spectral ratio (SR): a new pseudo distance between SPD matrices using generalised eigenvalue theory.
Finally, we provide a formal definition of MSA as the integrated SR over the input manifold, and demonstrate that it satisfies the conditions to induce a pseudometric space over Riemannian metrics.

Neural network model. We work in the general setting of a network mapping inputs on a manifold $\mathcal{M}$ to target outputs. Symbolically, such a network can be summarised as:

$$\mathcal{M} \xrightarrow{\ \psi\ } \mathbb{R}^{n_{\mathrm{in}}} \xrightarrow{\ \phi\ } \mathbb{R}^{n} \xrightarrow{\ \zeta\ } \mathbb{R}^{n_{\mathrm{out}}}$$

where $\psi$ is the embedding of the input manifold, $\phi$ the network up to the hidden layer whose representation we wish to study, and $\zeta$ the subsequent layers and decoder. Many architectures such as multi-layer perceptrons, convolutional networks, and transformers fall within this setting. In section 3 we will show how MSA can be extended to dynamical systems, including state-space and diffusion models.

The pullback metric. The intrinsic geometry of a manifold is determined by a choice of inner product over its tangent spaces. To characterise our neural network, we will choose a particular intrinsic geometry on $\mathcal{M}$ that captures how it is warped by $\phi \circ \psi$. This can be done using the pullback metric, which defines the inner product between the tangent vectors of $\mathcal{M}$ in terms of their corresponding dot product in $\mathbb{R}^n$ when mapped to the hidden layer (Hauser and Ray, 2017; Lee, 2003). Letting $(\cdot)_*$ denote the pushforward, the pullback metric is the following map:

$$g_p : T_p\mathcal{M} \times T_p\mathcal{M} \xrightarrow{\ \psi_*\ } \mathbb{R}^{n_{\mathrm{in}}} \times \mathbb{R}^{n_{\mathrm{in}}} \xrightarrow{\ \phi_*\ } \mathbb{R}^{n} \times \mathbb{R}^{n} \xrightarrow{\ \langle \cdot , \cdot \rangle\ } \mathbb{R}$$

where $T_p\mathcal{M}$ is the tangent space at point $p \in \mathcal{M}$. Intuitively, the pushforward maps tangent vectors of the abstract input manifold $\mathcal{M}$ to tangent vectors of the hidden layer manifold $(\phi \circ \psi)(\mathcal{M})$, where their inner product can be measured using the standard Euclidean dot product $\langle \cdot , \cdot \rangle$ (Fig. 2).
This value can be taken as the inner product between the original tangent vectors in $T_p\mathcal{M}$, in order to define the same intrinsic geometry on $\mathcal{M}$ as its image in the hidden layer activation.

In local coordinates, the pushforward at $p$ can be represented by the Jacobian $J(p)$ of $\phi \circ \psi$. Then the tangent vectors $v_i \in T_p\mathcal{M}$ are pushed forward to $J(p) v_i$ in the hidden layer, where their inner product is simply $(J(p) v_i) \cdot (J(p) v_j) = v_i^\top J(p)^\top J(p) v_j$. In this way, the pullback metric can be represented as $G(p) = J(p)^\top J(p) \in \mathbb{R}^{m \times m}$ where $m = \dim(\mathcal{M})$. In particular, if the pushforward is injective, meaning that $(\phi \circ \psi)(\mathcal{M})$ is an immersion, $G(p)$ is symmetric positive definite (SPD).

Fig. 2: The pullback metric of neural representations.

Several studies have shown that the geometry defined by $G(p)$ reflects the internal computations of deep networks (Brandon, Angus Chadwick, and Pellegrino, 2025; Hauser and Ray, 2017; Tennenholtz and Mannor, 2022). We may therefore expect that, for two different networks $\phi_1$ and $\phi_2$ receiving data from the same input manifold, comparing their respective pullback metrics $G_1(p)$ and $G_2(p)$ should provide a means of quantifying the similarity of their computations. This requires making a choice of distance function¹ between SPD matrices.

The spectral ratio of generalised eigenvalues. There exist distance functions on the space of SPD matrices (Förstner and Moonen, 2003; Lin, 2019). Such distance functions have for example been used to compare empirical covariance matrices, compute the KL divergence between Gaussian distributions, and define canonical correlations between data matrices. Here we introduce a novel distance function on SPD matrix space particularly suited for similarity analysis. Nevertheless, many of our mathematical results hold for general affine-invariant distance functions (App. A1).
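As a minimal sketch of the local-coordinate expression $G(p) = J(p)^\top J(p)$ described above, the following NumPy snippet computes the pullback metric of a single tanh layer. The layer, its random weights `W1`, the identity embedding $\psi$, and all function names are illustrative assumptions rather than the setup used in the paper.

```python
import numpy as np

def hidden_layer(W1, x):
    """Illustrative hidden layer phi(x) = tanh(W1 x); psi is taken as the identity."""
    return np.tanh(W1 @ x)

def jacobian(W1, x):
    """Analytic Jacobian of tanh(W1 x) with respect to x, shape (n, m)."""
    pre = W1 @ x
    return (1.0 - np.tanh(pre) ** 2)[:, None] * W1

def pullback_metric(W1, x):
    """Local-coordinate pullback metric G(p) = J(p)^T J(p), shape (m, m)."""
    J = jacobian(W1, x)
    return J.T @ J

rng = np.random.default_rng(0)
W1 = rng.normal(size=(16, 2))   # hypothetical weights: n = 16 hidden units, m = 2
p = np.array([0.3, -0.5])       # a point in local coordinates on a 2D input manifold
G = pullback_metric(W1, p)

# The dot product of two pushed-forward tangent vectors equals v_i^T G v_j.
v1, v2 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
J = jacobian(W1, p)
lhs = (J @ v1) @ (J @ v2)
rhs = v1 @ G @ v2
```

Here `G` is symmetric, and it is positive definite whenever the Jacobian has full column rank, which is the injectivity condition stated above.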
Definition 2.1 (Spectral ratio). Consider two SPD matrices $G, G' \in \mathbb{R}^{m \times m}$, and the generalised eigenequation $G v_i = \lambda_i G' v_i$. Here $\lambda_i \in \mathbb{R}^+$ and $v_i \in \mathbb{R}^m$ form the $i$th generalised eigenvalue-eigenvector pair of $(G', G)$ with $\lambda_{i+1} \leq \lambda_i$ for $i \in [m]$. We define the spectral ratio as:

$$d_{\mathrm{SR}}(G, G') = 1 - \sqrt{\frac{\lambda_m}{\lambda_1}} \in [0, 1]$$

Proposition 2.2 (The spectral ratio is a distance). The SR is a pseudo-distance function on SPD matrices. This means that it satisfies i) separation: $d_{\mathrm{SR}}(G, G) = 0$, ii) symmetry: $d_{\mathrm{SR}}(G, G') = d_{\mathrm{SR}}(G', G)$ and iii) the triangle inequality: $d_{\mathrm{SR}}(G, G'') \leq d_{\mathrm{SR}}(G, G') + d_{\mathrm{SR}}(G', G'')$. [Proof]

Furthermore, since the spectral ratio is bounded, it can be naturally used to define a similarity function:

$$1 - d_{\mathrm{SR}}(G, G') \in [0, 1]$$

To gain intuition as to what this quantity represents we can consider two extreme cases. First, $1 - d_{\mathrm{SR}}(G, G) = 1$. Second, if $\mathrm{rank}(G) \neq \mathrm{rank}(G')$, then $1 - d_{\mathrm{SR}}(G, G') = 0$. Figure 3 shows the behaviour of this similarity function by comparing pairs of $2 \times 2$ SPD matrices generated by continuously varying the angle or the relative magnitudes among the columns of each matrix.

Fig. 3: The spectral ratio is a distance over SPD matrices. a. The set of SPD matrices (here $2 \times 2$) is a solid cone in the Euclidean space of their entries. b. Slice of the SPD cone along matrices which are related by a scalar multiple. The slice is characterised by a variable $r$, defining the relative magnitude of the diagonal entries, and $\theta$, defining the magnitude of the off-diagonal entries. The spectral ratio defines a similarity between pairs of matrices on the SPD cone, with different relative $\theta$ (c) or $r$ (d).

¹ Distance functions are also called metrics, a concept that is related to – but not to be confused with – the Riemannian metric.

Metric Similarity Analysis (MSA).
We have argued for using the pullback metric to characterize how the intrinsic geometry around a particular point $p \in \mathcal{M}$ is transformed by a neural network. Recall that for a specific choice of coordinate system, the pullback metric can be represented as an SPD matrix. Thus, we can compare two networks globally by integrating their spectral ratio over $\mathcal{M}$:

Definition 2.3 (Metric similarity analysis). Let $G^{\phi_1}_p$ and $G^{\phi_2}_p$ be the local coordinate representations of the pullback metrics of the neural networks $\phi_1$ and $\phi_2$, at a point $p$ on an $m$-dimensional manifold $\mathcal{M}$. Then we define MSA as:

$$d_{\mathrm{MSA}}(\phi_1, \phi_2) = \frac{1}{\mathrm{Vol}_g(\mathcal{M})} \int_{\mathcal{M}} d_{\mathrm{SR}}\left(G^{\phi_1}_p, G^{\phi_2}_p\right) \mathrm{dvol}_g(p)$$

where the normalising factor $\mathrm{Vol}_g(\mathcal{M})$ and integration over the volume form $\mathrm{dvol}_g$ are dependent on the metric $g$ on the input data manifold. Just as the SR is a distance over SPD matrices, MSA defines a distance over Riemannian metrics.

Proposition 2.4 (MSA is a distance over pullback Riemannian metrics). MSA follows the separation, symmetry and triangle inequality identities. [Proof]

As for the SR, the MSA distance is bounded, and can be turned into a similarity function:

$$1 - d_{\mathrm{MSA}}(\phi_1, \phi_2) \in [0, 1]$$

We use "MSA" to refer to either the distance or similarity function, depending on the context.

MSA provides a principled means of comparing the intrinsic geometries of neural network representations (Fig. 4). We will return to the mathematical properties of MSA in Section 4, where we show that it is invariant to the choice of coordinates on the manifold and to rotations in the neural network state space. In the next section, we demonstrate the applicability and relevance of MSA for comparing the intrinsic geometries of neural networks in various settings.

Fig. 4: Metric similarity analysis enables comparison of the intrinsic geometry of neural representations. Two neural networks receiving inputs from the same manifold with different hidden-layer geometries, corresponding to distinct Riemannian metrics.

3 Numerical experiments

Here, we highlight three key applications of MSA: i) revealing structural differences in the intrinsic geometry of deep network representations, ii) disentangling the computational mechanisms of different nonlinear dynamics architectures, and iii) comparing statistical manifolds in diffusion models.

MSA distinguishes rich and lazy representations

To highlight the importance of characterizing the intrinsic geometry of neural representations, we start by performing MSA on models known to produce different solutions to the same task: rich and lazy deep networks.

We trained a one-hidden-layer neural network to map inputs on a two-dimensional manifold to discrete classes (Fig. 5a). Specifically, we considered $\mathcal{M} = [-1, 1]^2$, with:

$$x \in \mathcal{M}, \quad z = \tanh(W_1 x), \quad y = W_2 z$$

The network was trained using a cross entropy loss to map $x$ to a one-hot-encoded class vector:

$$\mathrm{onehot}(\theta, k) = \left[ \mathbb{1}_{\theta \in [0, 2\pi/k)}, \ldots, \mathbb{1}_{\theta \in [2\pi - 2\pi/k, 2\pi)} \right]$$

where $\theta = \mathrm{atan2}(x) \in [0, 2\pi)$ and $k$ is the number of classes.

Fig. 5: Rich and lazy network representations have different intrinsic geometries. a. Network trained to map a 2D manifold to discrete classes. b. PCA applied to the activation of the rich and lazy network, coloured according to the target class. c. Mesh grid of the intrinsic geometry of the representations. d. Similarity between the rich and lazy representation; rich 2 is a different seed.

Considerable prior work has shown that neural networks can perform such tasks via two fundamentally different computational mechanisms.
In the rich regime, networks learn latent features of their training data, leading to structured representations, whereas networks in the lazy regime overfit individual training data points (Woodworth et al., 2020). Small-variance weight initializations are known to produce rich learning, whereas large-variance initializations tend to lead to lazy learning (Saxe, McClelland, and Ganguli, 2013).

Fig. 6: MSA captures hierarchical structure across neural representations. Networks with different learning regimes, tasks, architectures, and seeds. a. MSA, RSA and CCA applied to pairs of these networks. Note that RSA and CKA are equivalent when mean-centered (). MSA captures the hierarchical structure across models while CCA and RSA do not. b. Instead of considering all combinations of such hyperparameters, we hierarchically vary them. All networks receive the same input on a 2-dimensional planar manifold. They have different learning regimes (rich vs. lazy), or different tasks (5 vs. 2 classes), or seeds (S1/S2, defining initial weights).

What representational geometries lie at the heart of these different computations? Simply visualizing the network activation in state space via principal component analysis (PCA) suggests a similar embedding geometry in each regime (Fig. 5b). In contrast, plotting the geodesic grid lines of the input manifold under the pullback metric hints at distinct intrinsic geometries, potentially supporting different network computations (Fig. 5c). To quantify this, we performed similarity analyses between the rich and lazy networks using both the intrinsic (via MSA) and extrinsic geometries (via Procrustes). Indeed, Procrustes reported near-perfect similarity between lazy and rich networks, whereas MSA reported low values compared to the similarity of the same regime across seeds (Fig. 5d).
Thus, characterizing the intrinsic geometry is necessary to disentangle fundamentally different computations in neural networks.

We next sought to exploit the fact that different hyperparameters generate divergent network solutions, due to different tasks (determined by the number of classes), learning regimes (by the variance of initial weights), or initializations (by the pseudo-random seed). To further test whether MSA can identify meaningful differences in network solutions, we varied these hyperparameters sequentially, forming a hierarchical structure (Fig. 6b). We then asked whether MSA identified similarities matching this hierarchy.

Indeed, MSA was able to capture this hierarchy, with similarities scaling evenly with the number of differing hyperparameters across models (Fig. 6a, b). In comparison, RSA was unable to distinguish between rich, lazy, and even untrained models. Importantly, both RSA and CCA reported intermediate similarity values for models with the fewest shared hyperparameters, with fundamentally different computations – for example when comparing trained vs. untrained models, or those trained on two vs. five classes. MSA, in contrast, found zero similarity in these cases.

These results demonstrate that MSA can disentangle finer-grained task-relevant structure than methods based purely on extrinsic geometry, enabling more meaningful comparisons between networks. In the next section, we will show that similar insights can be gained for nonlinear dynamics.

MSA enables the comparison of nonlinear dynamics

Here, we will show how MSA can be used to investigate dynamical systems models by examining how input manifolds are warped over time by nonlinear flows (Pellegrino and Chadwick, 2025).
Specifically, we asked whether MSA could provide insight into the internal computations of dynamical systems models performing a task in which an internal memory of the input manifold must be maintained.

We trained models to reproduce an input sequence defined by two angles $\theta_1, \theta_2 \in S^1$ following a variable delay (Fig. 7a). We considered two architectures commonly used in neuroscience applications: vanilla RNNs and structured SSMs (Durstewitz, Koppe, and Thurm, 2023; Ryoo et al., 2025). The dynamics of each model were governed by:

$$\text{RNN}: \quad \frac{d}{dt} x(t) = A \tanh(x(t)) + B u(t)$$

$$\text{SSM}: \quad \frac{d}{dt} x^{(i)}(t) = A^{(i)} \tanh(x^{(i)}(t)) + B^{(i)} u^{(i)}(t)$$

where $u(t) = u^{(0)}(t) \in \mathbb{R}^3$ encodes the input angles and delay for each model (Fig. 7a). The SSM consisted of two blocks of dynamics ($i \in \{0, 1\}$) chained through a nonlinearity: $u^{(1)}(t) = C^{(0)} \tanh(x^{(0)}(t))$. The outputs were decoded from the hidden state: $y(t) = C x(t)$ and $y(t) = C^{(1)} [x^{(0)}(t), x^{(1)}(t)]$ for the RNN and SSM, respectively. All $A$'s, $B$'s, and $C$'s were trainable parameters. The RNN weights were initialised to be Gaussian, while the SSM weights were initialised deterministically with HiPPO-LegS (Gu et al., 2022).

The input manifold for this task is a two-dimensional torus, $\mathcal{M} = T^2 = S^1 \times S^1$, parametrised by $\theta_1$ and $\theta_2$ (Fig. 7b). After receiving the inputs, the model must maintain a representation of both angles in its internal state during the delay period. Multiple computational mechanisms have been proposed for such working memory tasks, from continuous attractors of fixed points (Khona and Fiete, 2022) to dynamical memories supported by non-normal dynamics (Ganguli, Huh, and Sompolinsky, 2008; Goldman, 2009). Importantly, the architecture biased the implemented mechanism: e.g. the SSM's block structure favoured non-normal dynamics.
We thus asked whether the two models employ different computational strategies to perform the task.

We applied MSA to the full input-time manifolds produced by the RNN and the SSM. Specifically, we consider time as a separate coordinate, forming a 3-dimensional manifold $\mathcal{M}'$ on which the $3 \times 3$ pullback metrics could be defined, enabling the geometry and dynamics of the models to be simultaneously compared. MSA was able to cluster the same architectures over seeds, while also identifying differences between trained and untrained models (Fig. 7c). To visualise these results we applied a variant of multi-dimensional scaling (MDS) on the MSA distance matrix, observing a low-dimensional representation that reflected the structure of the hyperparameters (architecture and training, Fig. 7d).

We asked whether it was possible to observe these differences through comparisons of the geometry or dynamics separately, by either applying RSA or DSA (Fig. 7e). While RSA was able to separate between the two trained model architectures, it was not sensitive to training stage, reporting the same similarity between the trained RNN vs. untrained SSM as the trained RNN vs. trained SSM (yellow vs. orange bars). DSA was even less sensitive to differences between the models. In contrast, MSA identified similarities that scaled both with architecture and training stage.

To see traces of the dynamic geometry within the models, we next applied MSA to compare the representation within the same model between timepoints $t$ and $t'$ (Fig. 7f). Interestingly, the two models showed different changes in their geometries over time, with only the RNN maintaining high similarity throughout the delay. These results echo recent work showing that linear dynamics can converge to non-normal rotational solutions (Ritter and Angus Chadwick, 2025), which could skew geometries due to shearing.

Fig. 7: MSA enables comparing state-space models to recurrent neural networks. a. The network receives a sequence of angular inputs: $u_i(t) = f_i(\theta_1) \mathbb{1}_{t = t_1} + f_i(\theta_2) \mathbb{1}_{t = t_2}$ for $i = 1, 2$, with $f_1(x) = \cos(x)$ and $f_2(x) = \sin(x)$, which it must keep in memory for a variable delay period. At the Go cue (signalled by $u_3(t) = \mathbb{1}_{t = t_{\mathrm{go}}}$), it must output the same sequence. b. PCA applied to the state of the RNN. c. MSA applied to the full input-time manifolds of different models. d. Eigenvectors of the doubly-centred pairwise MSA similarity matrix (i.e. MDS with MSA distance). e. Similarity of the geometry (RSA), dynamics (DSA) and geometry+dynamics (MSA) between the trained RNN and all other models. f. MSA applied to compare the representations within a single model at two timepoints (after the second input).

Together these results demonstrate how MSA reveals structural differences in dynamical representations of models while providing insight into their internal computations.

MSA extends to statistical manifold analysis

We next demonstrate how MSA can be extended to a probabilistic setting. We start by reviewing the link between the pullback and Fisher-Rao metrics, before applying MSA to generative text-to-image diffusion models.

Consider a statistical manifold $\mathcal{M}$ whose points $\pi \in \mathcal{M}$ parametrise a probability density $p_\pi(x)$ over $x \in \mathbb{R}^n$. For a given sample $x$, the log-likelihood defines a map:

$$\mathcal{M} \xrightarrow{\ \log p_{(\cdot)}(x)\ } \mathbb{R}$$

whose pullback in local coordinates is $J_p(\pi)^\top J_p(\pi) \in \mathbb{R}^{m \times m}$, where $J_p(\pi) = (\nabla_\pi \log p_\pi(x))^\top$. Taking an expectation over $x$, we recover the Fisher information matrix:

$$F(\pi) = \mathbb{E}_{x \sim p_\pi} \left[ \nabla_\pi \log p_\pi(x) \otimes \nabla_\pi \log p_\pi(x) \right] \in \mathbb{R}^{m \times m}$$

This SPD matrix defines the local coordinate expression of the Fisher-Rao metric, encoding changes in $p_\pi(\cdot)$ over $\mathcal{M}$.
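To illustrate how the Fisher information arises as an expected outer product of scores, the sketch below estimates $F(\pi)$ by Monte Carlo for a toy family that is not used in the paper: isotropic Gaussians $p_\pi = \mathcal{N}(\pi, \sigma^2 I)$, for which the score is $(x - \pi)/\sigma^2$ and the exact answer is $I/\sigma^2$. The family, the sample size, and the function names are assumptions chosen only because the result can be checked in closed form.

```python
import numpy as np

def score(pi, x, sigma):
    """Score grad_pi log N(x; pi, sigma^2 I) = (x - pi) / sigma^2, one row per sample."""
    return (x - pi) / sigma**2

def fisher_information(pi, sigma, n_samples, rng):
    """Monte Carlo estimate of F(pi) = E_x[score (outer product) score]."""
    x = pi + sigma * rng.normal(size=(n_samples, pi.size))  # samples x ~ p_pi
    s = score(pi, x, sigma)
    return s.T @ s / n_samples

rng = np.random.default_rng(0)
pi = np.array([0.5, -1.0])   # hypothetical point on a 2D statistical manifold
sigma = 2.0
F = fisher_information(pi, sigma, 200_000, rng)
F_exact = np.eye(2) / sigma**2   # closed form for this toy family
```

For this family the estimate `F` should approach `F_exact` as the number of samples grows. For a diffusion model the score with respect to $\pi$ is not available in closed form and would have to be estimated by other means.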
We next show how MSA can compare the information geometries of diffusion models. A diffusion model is a backward-in-time stochastic differential equation (SDE):

$$dx = f(x, \pi, t)\,dt + \sigma(t)\,dB, \qquad x(1) \sim \mathcal{N}(0, I)$$

where $B$ is a Brownian motion and $\pi \in \mathcal{M}$ is a variable that deterministically controls the diffusion process (e.g. a text prompt for conditional image generation). The model can be summarised as:

$$\mathbb{R}^n \xrightarrow{\;\phi_\pi\;} \mathbb{R}^n, \qquad x(1) \mapsto x(0)$$

where $\phi_\pi$ is the flow of the system, and $x(0)$ the generated sample (e.g. an image). This SDE is associated with a corresponding probability flow:

$$\partial_t p_\pi(x, t) = -\nabla_x \cdot \big(f(x, \pi, t)\,p_\pi(x, t)\big) + \tfrac{1}{2}\sigma^2(t)\,\Delta_x p_\pi(x, t)$$

where $\nabla\cdot$ is the divergence, $\Delta$ the Laplacian, and $p_\pi(x(1), 1) = \mathcal{N}(0, I)$. Solving this flow down to $t = 0$ gives $p_\pi(x(0), 0)$, the marginal density of the learned generative distribution of the SDE at $x(0)$. Here we study the information geometry of this distribution over $\pi \in \mathcal{M}$.

Thanks to the scalability of MSA, we can apply it to StableDiffusionXL, a large text-to-image diffusion model capable of generating high quality images (Podell et al., 2023). The conditional variable $\pi$ is the text embedding of the prompt such that the latent diffusion dynamics are given by:

$$dx = \mathrm{UNet}(x, \mathrm{TextEmb}(\text{“a leaf”}), t)\,dt + \sigma(t)\,dB$$

We defined a manifold $\mathcal{M}$ of text embeddings via bilinear interpolation between four basis embeddings (Fig. 8b). This produces a statistical manifold with each $\pi \in \mathcal{M}$ corresponding to a different distribution of generated images. Using MSA we computed the similarity of the Fisher-Rao metric at pairs of time points during the diffusion process, revealing dynamic changes in the intrinsic geometry throughout the flow (Fig. 8d). Furthermore, applying MDS on the MSA distance matrix enabled the trajectory of the information geometry to be visualized in a low-dimensional embedding (Fig. 8e).

Fig. 8: The latent space of diffusion models collapses without guidance. a. Schematic of StableDiffusionXL: a backward diffusion process transports a Gaussian distribution to a latent generative distribution. Samples are then mapped to image space via a variational auto-encoder (VAE). b. The diffusion process is conditioned on a text embedding. We generated a manifold of text embeddings via bilinear interpolation: $z(\pi) = \pi_1 \pi_2 v_1 + (1 - \pi_1)\pi_2 v_2 + \pi_1(1 - \pi_2) v_3 + (1 - \pi_1)(1 - \pi_2) v_4$. This defines a statistical manifold of generative distributions. c. Samples across the manifold for a fixed $x(1)$. d. Similarity between the statistical manifold geometry at any pair of time points. e. Visualization of the information geometry using MDS on the MSA distance. f. MSA similarity between the statistical manifold at different guidance levels vs. no guidance ($\gamma = 1$).

Finally, MSA can be used to compare different diffusion dynamics, e.g., while varying hyperparameters. In particular, we studied how guidance affected the underlying information geometry. The guided diffusion dynamics are given by adding an estimation of the marginal score via an empty-string embedding:

$$dx = \big[\gamma\,\mathrm{UNet}(x, \mathrm{TextEmb}(\text{“a leaf”}), t) + (1 - \gamma)\,\mathrm{UNet}(x, \mathrm{TextEmb}(\text{“”}), t)\big]\,dt + \sigma(t)\,dB$$

Previous work has found empirically that the guidance parameter $\gamma$ controls a trade-off between image diversity and alignment-to-prompt (Ho and Salimans, 2022). To study this phenomenon at the level of the latent variable $x$ we computed the MSA similarity of models with different levels of guidance against the guidance-free model. We found that, past a certain $\gamma$, the geometry of the manifold became more similar to the guidance-free model, especially at times near 0.
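As an illustration of the two constructions used in this section, the sketch below implements the bilinear interpolation $z(\pi)$ of four basis embeddings and the guidance mixture of conditional and unconditional drifts. It is a toy under stated assumptions: short Python lists stand in for the text embeddings and for the UNet outputs, and the function names `bilinear_embedding` and `guided_drift` are hypothetical rather than part of StableDiffusionXL or of the authors' code.

```python
def bilinear_embedding(pi1, pi2, v1, v2, v3, v4):
    """z(pi) = pi1*pi2*v1 + (1-pi1)*pi2*v2 + pi1*(1-pi2)*v3 + (1-pi1)*(1-pi2)*v4."""
    w1 = pi1 * pi2
    w2 = (1.0 - pi1) * pi2
    w3 = pi1 * (1.0 - pi2)
    w4 = (1.0 - pi1) * (1.0 - pi2)
    return [w1 * a + w2 * b + w3 * c + w4 * d
            for a, b, c, d in zip(v1, v2, v3, v4)]


def guided_drift(drift_cond, drift_uncond, gamma):
    """gamma * conditional drift + (1 - gamma) * unconditional drift."""
    return [gamma * c + (1.0 - gamma) * u
            for c, u in zip(drift_cond, drift_uncond)]


# Placeholder 3-dimensional "embeddings" (real text embeddings are far larger).
v1, v2, v3, v4 = [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]

# The four corners of the unit square recover the four basis embeddings.
corner_11 = bilinear_embedding(1.0, 1.0, v1, v2, v3, v4)
corner_00 = bilinear_embedding(0.0, 0.0, v1, v2, v3, v4)
centre = bilinear_embedding(0.5, 0.5, v1, v2, v3, v4)

# gamma = 1 gives the purely conditional drift, i.e. the guidance-free case.
no_guidance = guided_drift([1.0, 2.0], [0.5, -1.0], gamma=1.0)
with_guidance = guided_drift([1.0, 2.0], [0.5, -1.0], gamma=3.0)
```

Sweeping `pi1` and `pi2` over a grid in $[0, 1]^2$ would give one point of the statistical manifold per grid node; the grid resolution used in the paper is not stated here.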
These results hint at an optimal level $\gamma$ where the information geometry of the learned generative distribution is most dissimilar to the guidance-free model, potentially aiding hyperparameter fine-tuning.

4 Mathematical properties

In this section we briefly introduce two mathematical properties of MSA which are essential for it to be a well-defined similarity metric. First, a fundamental desideratum of any similarity measure is invariance to rotations. This is the case for many methods based on extrinsic geometry, such as CCA and Procrustes. MSA also satisfies this property.

Proposition 4.1 (MSA is invariant to state-space rotations). Consider two networks $\phi_1 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_1}$ and $\phi_2 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_2}$. Then:

$$d_{\mathrm{MSA}}(Q_1 \circ \phi_1, Q_2 \circ \phi_2) = d_{\mathrm{MSA}}(\phi_1, \phi_2)$$

where $Q_i \in O(n_i)$ is an orthogonal matrix. [Proof]

This invariance to rotations can be understood intuitively: given two tangent vectors $v_1, v_2 \in T_p\mathcal{M}$, composing the neural network functions with an orthogonal matrix $Q$ (or any Euclidean isometry) will simply rotate the images of each $v_i$ under the pushforward, and thus will not change their dot product. Since the pullback metric is defined via this dot product, the intrinsic geometry remains the same.

A more subtle question, especially crucial for any method relying on Riemannian geometry, is whether MSA depends on the choice of local coordinates. In our numerical experiments we chose particular coordinates on the manifold aligned with the task variables. This in turn defined a coordinate representation of the metrics as matrices $G_{\phi_1}(p)$, $G_{\phi_2}(p)$ whose generalized eigenvalues we could then compute. However, changing the local coordinates will change these matrices, and therefore the solution to the generalised eigenvalue problem. Despite this, MSA itself remains invariant under such a change of local coordinates.
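The following sketch illustrates both invariances numerically on a toy example. It assumes a two-dimensional manifold ($m = 2$) so that the generalized eigenvalues can be obtained in closed form, uses hand-picked Jacobians in place of real networks, and takes the spectral ratio distance $d_{\mathrm{SR}} = 1 - \sqrt{\lambda_m / \lambda_1}$ from the appendix. All function names are hypothetical.

```python
import math


def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def pullback_metric(J):
    """G = J^T J for a Jacobian J with two columns (m = 2)."""
    return matmul(transpose(J), J)


def spectral_ratio_distance(G1, G2):
    """d_SR = 1 - sqrt(lambda_min / lambda_max) of the pencil (G1, G2); 2x2 SPD only."""
    det2 = G2[0][0] * G2[1][1] - G2[0][1] * G2[1][0]
    inv2 = [[G2[1][1] / det2, -G2[0][1] / det2],
            [-G2[1][0] / det2, G2[0][0] / det2]]
    M = matmul(inv2, G1)  # eigenvalues of G2^{-1} G1 are the generalized eigenvalues
    tr = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    disc = math.sqrt(max(tr * tr - 4.0 * det, 0.0))
    lam_max, lam_min = (tr + disc) / 2.0, (tr - disc) / 2.0
    return 1.0 - math.sqrt(lam_min / lam_max)


# Toy Jacobians of two "networks" with different hidden sizes (3 and 2).
J1 = [[1.0, 0.0], [0.5, 2.0], [0.0, 1.0]]
J2 = [[2.0, 1.0], [0.0, 1.0]]
G1, G2 = pullback_metric(J1), pullback_metric(J2)

# State-space rotation Q in O(3): the pullback metric itself is unchanged.
c, s = math.cos(0.7), math.sin(0.7)
Q = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
G1_rotated = pullback_metric(matmul(Q, J1))

# Change of local coordinates with invertible Jacobian A: the metrics change ...
A = [[1.5, 0.3], [-0.2, 0.8]]
G1_new = pullback_metric(matmul(J1, A))
G2_new = pullback_metric(matmul(J2, A))

# ... but the spectral ratio distance between them does not.
d_old = spectral_ratio_distance(G1, G2)
d_new = spectral_ratio_distance(G1_new, G2_new)
```

The design point is that the rotation acts on the left of each Jacobian and cancels inside $J^\top J$, whereas the coordinate change acts on the right of both Jacobians at once and cancels only in the generalized eigenvalues.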
Proposition 4.2 (MSA is invariant to changes in local coordinates). Consider two networks $\phi_1 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_1}$, $\phi_2 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_2}$. Let $(U_j, \varphi_j)$ and $(U_k, \varphi_k)$ be smooth charts on $\mathcal{M}$. Then:

$$d_{\mathrm{MSA}}(\phi_1 \circ \psi \circ f, \phi_2 \circ \psi \circ f) = d_{\mathrm{MSA}}(\phi_1, \phi_2)$$

where $\psi : \mathcal{M} \to \mathbb{R}^{n_{\mathrm{in}}}$ is the embedding of the input manifold and $f : \varphi_k(U_k) \to \varphi_j(U_j)$ is a diffeomorphism between the local coordinate chart domains. [Proof]

These two key properties ensure that MSA provides a meaningful distance between neural representations.

5 Discussion

We introduced a new similarity measure for comparing neural network solutions through their intrinsic geometries. At its most general, MSA defines a distance metric between Riemannian metrics over a manifold. In order to compare neural computations, we consider neural networks as functions that progressively warp an input manifold, whether from layer to layer or through time. Applying MSA to the pullback metrics of each network then provides a mathematically principled way to quantify the similarity of the networks’ internal computations, rather than their extrinsic embedding geometries. We demonstrated the effectiveness of our approach in several examples of varying architecture, for static, dynamical, and generative models.

MSA requires a characterisation of the input data manifold. While this is straightforward to implement for most models, such a characterization can be challenging for applications to data where the manifold is not explicitly provided, e.g. in neuroscience data (Barbosa et al., 2025). This can be remedied by learning the manifold with a parametric model before applying MSA, as long as the data is sufficiently densely sampled. On the other hand, sampling-based methods such as Procrustes analysis and CCA work well even for sparsely sampled data. To this end, a natural extension of MSA to the data-poor regime would be to introduce a probabilistic model on the data sampling process.
A second limitation of MSA is that it does not capture how representations are used downstream. By focusing on the intrinsic geometry of a single hidden layer, MSA is agnostic as to how that information is exploited (or not) in later layers. For example, when the decoder layer is rank-deficient or low-dimensional, it may ignore part of the representational geometry, depending on how it is aligned with the nullspace. In contrast, several shape metrics have known connections with linear decoding (Harvey, Lipshutz, and Williams, 2024). Therefore a more complete characterization of network computations will likely require additional considerations of decoding.

Finally, our work remains mostly correlational. Similarity metrics do not offer causal insights into neural networks, only a geometric lens through which their computations can be interpreted. Nevertheless, similarity analysis can provide a useful tool for refining and manipulating models, for example, to identify new solutions (Qian and Pehlevan, 2025). Future work could attempt to directly manipulate the geometry of neural networks based on the insights gained from MSA to design more performant or robust models, offering a promising avenue for mechanistic interpretability.

Acknowledgments

We thank the Gatsby Unit for their feedback on this work. In particular, we are grateful to David O’Neill, whose results obtained during his Master’s at UCL inspired the development of MSA. We also thank the members of the Centre Sciences des Données at ENS for helpful discussions, especially Alexandre Vérine for insightful exchanges on the diffusion model section. This work was supported by the Agence Nationale de la Recherche (ANR-23-IACL-0008, ANR-17-EURE-0017).

References

Amari, Shun-Ichi (1998). “Natural gradient works efficiently in learning”. In: Neural computation 10.2, pp. 251–276.
Arvanitidis, Georgios, Lars Kai Hansen, and Søren Hauberg (2017). “Latent space oddity: on the curvature of deep generative models”. In: arXiv preprint, posted online October 31.
Barbosa, Joao et al. (2025). “Quantifying Differences in Neural Population Activity With Shape Metrics”. In: bioRxiv, pp. 2025–01.
Boix-Adsera, Enric et al. (2022). “GULP: a prediction-based metric between representations”. In: Advances in Neural Information Processing Systems 35, pp. 7115–7127.
Brandon, Julian, Angus Chadwick, and Arthur Pellegrino (2025). “Emergent Riemannian geometry over learning discrete computations on continuous manifolds”. In: arXiv preprint arXiv:2512.00196.
Chen, Ruiqi et al. (2024). “Dform: Diffeomorphic vector field alignment for assessing dynamics across learned models”. In: arXiv preprint arXiv:2402.09735.
Chizat, Lenaic, Edouard Oyallon, and Francis Bach (2019). “On lazy training in differentiable programming”. In: Advances in neural information processing systems 32.
Chou, Chi-Ning et al. (2025). “Feature Learning beyond the Lazy-Rich Dichotomy: Insights from Representational Geometry”. In: Forty-second International Conference on Machine Learning.
Durstewitz, Daniel, Georgia Koppe, and Max Ingo Thurm (2023). “Reconstructing computational system dynamics from neural data with recurrent neural networks”. In: Nature Reviews Neuroscience 24.11, pp. 693–710.
Förstner, Wolfgang and Boudewijn Moonen (2003). “A metric for covariance matrices”. In: Geodesy-the Challenge of the 3rd Millennium. Springer, pp. 299–309.
Ganguli, Surya, Dongsung Huh, and Haim Sompolinsky (2008). “Memory traces in dynamical systems”. In: Proceedings of the national academy of sciences 105.48, pp. 18970–18975.
Goldman, Mark S (2009). “Memory without feedback in a neural network”. In: Neuron 61.4, pp. 621–634.
Gosztolai, Adam et al. (2025). “MARBLE: interpretable representations of neural population dynamics using geometric deep learning”. In: Nature Methods, pp. 1–9.
Grave, Edouard, Armand Joulin, and Quentin Berthet (2019). “Unsupervised alignment of embeddings with wasserstein procrustes”. In: The 22nd International Conference on Artificial Intelligence and Statistics. PMLR, pp. 1880–1890.
Gruffaz, Samuel and Josua Sassen (2025). “Riemannian metric learning: Closer to you than you imagine”. In: arXiv preprint arXiv:2503.05321.
Gu, Albert et al. (2022). “How to train your hippo: State space models with generalized orthogonal basis projections”. In: arXiv preprint arXiv:2206.12037.
Harvey, Sarah E, David Lipshutz, and Alex H Williams (2024). “What representational similarity measures imply about decodable information”. In: arXiv preprint arXiv:2411.08197.
Hauser, Michael and Asok Ray (2017). “Principles of Riemannian geometry in neural networks”. In: Advances in neural information processing systems 30.
Ho, Jonathan and Tim Salimans (2022). “Classifier-free diffusion guidance”. In: arXiv preprint arXiv:2207.12598.
Huang, Ann et al. (2025). “Inputdsa: Demixing then comparing recurrent and externally driven dynamics”. In: arXiv preprint arXiv:2510.25943.
Jacot, Arthur, Franck Gabriel, and Clément Hongler (2018). “Neural tangent kernel: Convergence and generalization in neural networks”. In: Advances in neural information processing systems 31.
Johnston, W Jeffrey and Stefano Fusi (2023). “Abstract representations emerge naturally in neural networks trained to perform multiple tasks”. In: Nature Communications 14.1, p. 1040.
Kaul, Piyush and Brejesh Lall (2019). “Riemannian curvature of deep neural networks”. In: IEEE transactions on neural networks and learning systems 31.4, pp. 1410–1416.
Khona, Mikail and Ila R Fiete (2022). “Attractor and integrator networks in the brain”. In: Nature Reviews Neuroscience 23.12, pp. 744–766.
Klabunde, Max et al. (2025). “Similarity of neural network models: A survey of functional and representational measures”. In: ACM Computing Surveys 57.9, pp. 1–52.
Kornblith, Simon et al. (2019). “Similarity of neural network representations revisited”. In: International conference on machine learning. PMLR, pp. 3519–3529.
Kriegeskorte, Nikolaus, Marieke Mur, and Peter A Bandettini (2008). “Representational similarity analysis-connecting the branches of systems neuroscience”. In: Frontiers in systems neuroscience 2, p. 249.
Lee, John M (2003). “Smooth manifolds”. In: Introduction to smooth manifolds. Springer, pp. 1–29.
Li, Qianyi, Ben Sorscher, and Haim Sompolinsky (2024). “Representations and generalization in artificial and brain neural networks”. In: Proceedings of the National Academy of Sciences 121.27, e2311805121.
Li, Yixuan et al. (2015). “Convergent learning: Do different neural networks learn the same representations?” In: arXiv preprint arXiv:1511.07543.
Lin, Zhenhua (2019). “Riemannian geometry of symmetric positive definite matrices via Cholesky decomposition”. In: SIAM Journal on Matrix Analysis and Applications 40.4, pp. 1353–1370.
Maheswaranathan, Niru et al. (2019). “Universality and individuality in neural dynamics across large populations of recurrent networks”. In: Advances in neural information processing systems 32.
Mao, Jialin et al. (2024). “The training process of many deep networks explores the same low-dimensional manifold”. In: Proceedings of the National Academy of Sciences 121.12, e2310002121.
Ostrow, Mitchell et al. (2023). “Beyond geometry: Comparing the temporal structure of computation in neural circuits with dynamical similarity analysis”. In: Advances in Neural Information Processing Systems 36, pp. 33824–33837.
Paccolat, Jonas et al. (2021). “Geometric compression of invariant manifolds in neural networks”. In: Journal of Statistical Mechanics: Theory and Experiment 2021.4, p. 044001.
Park, Yong-Hyun et al. (2023). “Understanding the latent space of diffusion models through the lens of riemannian geometry”. In: Advances in Neural Information Processing Systems 36, pp. 24129–24142.
Pellegrino, Arthur and A Chadwick (2025). “RNNs perform task computations by warping neural representations”. In: Advances in neural information processing systems 38.
Podell, Dustin et al. (2023). “Sdxl: Improving latent diffusion models for high-resolution image synthesis”. In: arXiv preprint arXiv:2307.01952.
Poole, Ben et al. (2016). “Exponential expressivity in deep neural networks through transient chaos”. In: Advances in neural information processing systems 29.
Qian, William and Cengiz Pehlevan (2025). “Discovering alternative solutions beyond the simplicity bias in recurrent neural networks”. In: arXiv preprint arXiv:2509.21504.
Raghu, Maithra et al. (2017). “Svcca: Singular vector canonical correlation analysis for deep learning dynamics and interpretability”. In: Advances in neural information processing systems 30.
Ritter, Laura and Angus Chadwick (2025). “Efficient Working Memory Maintenance via High-Dimensional Rotational Dynamics”. In: bioRxiv, pp. 2025–09.
Ryoo, Avery Hee-Woon et al. (2025). “Generalizable, real-time neural decoding with hybrid state-space models”. In: arXiv preprint arXiv:2506.05320.
Sagodi, Abel and Il Memming Park (2025). “Dynamical archetype analysis: Autonomous computation”. In: arXiv preprint arXiv:2507.05505.
Saxe, Andrew M, James L McClelland, and Surya Ganguli (2013). “Exact solutions to the nonlinear dynamics of learning in deep linear neural networks”. In: arXiv preprint arXiv:1312.6120.
Saxe, Andrew M, James L McClelland, and Surya Ganguli (2019). “A mathematical theory of semantic development in deep neural networks”. In: Proceedings of the National Academy of Sciences 116.23, pp. 11537–11546.
Shahbazi, Mahdiyar et al. (2021). “Using distance on the Riemannian manifold to compare representations in brain and in models”. In: NeuroImage 239, p. 118271.
Shang, Jiaqi, Gabriel Kreiman, and Haim Sompolinsky (2025). “Unraveling the geometry of visual relational reasoning”. In: ArXiv, arXiv–2502.
Shao, Hang, Abhishek Kumar, and P Thomas Fletcher (2018). “The riemannian geometry of deep generative models”. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, pp. 315–323.
Tenenbaum, Joshua B, Vin de Silva, and John C Langford (2000). “A global geometric framework for nonlinear dimensionality reduction”. In: science 290.5500, pp. 2319–2323.
Tennenholtz, Guy and Shie Mannor (2022). “Uncertainty estimation using riemannian model dynamics for offline reinforcement learning”. In: Advances in Neural Information Processing Systems 35, pp. 19008–19021.
Wang, Liwei et al. (2018). “Towards understanding learning representations: To what extent do different neural networks learn the same representation”. In: Advances in neural information processing systems 31.
Williams, Alex H (2024). “Equivalence between representational similarity analysis, centered kernel alignment, and canonical correlations analysis”. In: bioRxiv, pp. 2024–10.
Williams, Alex H et al. (2021). “Generalized shape metrics on neural representations”. In: Advances in neural information processing systems 34, pp. 4738–4750.
Woodworth, Blake et al. (2020). “Kernel and rich regimes in overparametrized models”. In: Conference on Learning Theory. PMLR, pp. 3635–3673.
Zhang, Shimin et al. (n.d.). “KoopSTD: Reliable Similarity Analysis between Dynamical Systems via Approximating Koopman Spectrum with Timescale Decoupling”. In: Forty-second International Conference on Machine Learning.
Appendix

A1 Metric similarity analysis

Pseudo distance function

Proof of proposition 2.2. Let $G, G', G'' \in \mathbb{R}^{m \times m}$ be positive definite matrices. We denote by $\lambda_i^{(G,G')}$ the $i$-th generalized eigenvalue of $G$ and $G'$ with corresponding eigenvector $v_i^{(G,G')}$, satisfying:

$$G\, v_i^{(G,G')} = \lambda_i^{(G,G')}\, G'\, v_i^{(G,G')}.$$

By convention we order the eigenvalues from largest to smallest:

$$\lambda_1^{(G,G')} \ge \cdots \ge \lambda_m^{(G,G')} > 0.$$

A useful fact will be that the generalized eigenvalues of $(G, G')$ and $(AG, AG')$ are equal for any nonsingular $A \in \mathbb{R}^{m \times m}$. This follows from multiplying the equation above by $A$, i.e.:

$$AG\, v_i^{(G,G')} = \lambda_i^{(G,G')}\, AG'\, v_i^{(G,G')},$$

therefore:

$$\lambda_i^{(AG,AG')} = \lambda_i^{(G,G')} \;\Rightarrow\; d_{\mathrm{SR}}(AG, AG') = d_{\mathrm{SR}}(G, G')$$

In particular, if we choose $A = (G')^{-1}$, we obtain the standard eigenvalue equation for the matrix $(G')^{-1}G$ with $\lambda_i^{(G,G')} = \lambda_i^{((G')^{-1}G,\, I)}$.

1. Separation: In this case, $\lambda_i^{(G,G)} = \lambda_i^{(G^{-1}G,\, I)} = \lambda_i^{(I,I)} = 1$. Therefore, $d_{\mathrm{SR}}(G, G) = 1 - \sqrt{1/1} = 0$.

2. Symmetry: Each generalized eigenvalue for $(G, G')$ corresponds to a generalized eigenvalue for $(G', G)$. Since the eigenvalues are nonzero:

$$G\, v_i^{(G,G')} = \lambda_i^{(G,G')}\, G'\, v_i^{(G,G')} \;\Rightarrow\; \frac{1}{\lambda_i^{(G,G')}}\, G\, v_i^{(G,G')} = G'\, v_i^{(G,G')}$$

Since we define the generalized eigenvalues in decreasing order of magnitude, this means $\lambda_i^{(G',G)} = 1 / \lambda_{m-i+1}^{(G,G')}$. Thus,

$$d_{\mathrm{SR}}(G', G) = 1 - \sqrt{\frac{\lambda_m^{(G',G)}}{\lambda_1^{(G',G)}}} = 1 - \sqrt{\frac{1/\lambda_1^{(G,G')}}{1/\lambda_m^{(G,G')}}} = 1 - \sqrt{\frac{\lambda_m^{(G,G')}}{\lambda_1^{(G,G')}}} = d_{\mathrm{SR}}(G, G').$$

3. Triangle inequality: We start by defining $\Delta d_{\mathrm{SR}} = d_{\mathrm{SR}}(G, G') + d_{\mathrm{SR}}(G', G'') - d_{\mathrm{SR}}(G, G'')$. It then suffices to prove that $\Delta d_{\mathrm{SR}} \ge 0$.
So far we have shown that the spectral ratio is symmetric and invariant under left-multiplication by invertible matrices. Using these properties, we can rewrite:

$$\Delta d_{\mathrm{SR}} = d_{\mathrm{SR}}(G, G') + d_{\mathrm{SR}}(G'', G') - d_{\mathrm{SR}}(G, G'') = d_{\mathrm{SR}}(\tilde G, I) + d_{\mathrm{SR}}(\tilde G'', I) - d_{\mathrm{SR}}(\tilde G, \tilde G''),$$

where $\tilde G := (G')^{-1}G$ and $\tilde G'' := (G')^{-1}G''$. To prove that $\Delta d_{\mathrm{SR}} \ge 0$ we will need to understand how the standard eigenvalues of $\tilde G$ and $\tilde G''$ relate to their generalized eigenvalues. For clarity we write $v_i = v_i^{(\tilde G, \tilde G'')}$ for the remainder of the proof. Then, we can write the generalized eigenvalues as:

$$\tilde G\, v_i = \lambda_i^{(\tilde G, \tilde G'')}\, \tilde G''\, v_i \;\Rightarrow\; \lambda_i^{(\tilde G, \tilde G'')} = \frac{v_i^\top \tilde G\, v_i}{v_i^\top \tilde G''\, v_i} = \frac{R(\tilde G, v_i)}{R(\tilde G'', v_i)}$$

where $R(A, v)$ is the Rayleigh quotient. Then, the spectral ratio distance can be written in terms of Rayleigh quotients:

$$d_{\mathrm{SR}}(\tilde G, \tilde G'') = 1 - \sqrt{\frac{R(\tilde G, v_m)}{R(\tilde G'', v_m)} \cdot \frac{R(\tilde G'', v_1)}{R(\tilde G, v_1)}}$$

Recall that the Rayleigh quotient is bounded above and below by the standard eigenvalues: $\lambda_m^{(A, I)} \le R(A, v) \le \lambda_1^{(A, I)}$ for any $v$. We can use this to bound the ratio of Rayleigh quotients:

$$\frac{R(\tilde G, v_m)}{R(\tilde G'', v_m)} \cdot \frac{R(\tilde G'', v_1)}{R(\tilde G, v_1)} \ge \frac{\lambda_m^{(\tilde G, I)}}{\lambda_1^{(\tilde G'', I)}} \cdot \frac{\lambda_m^{(\tilde G'', I)}}{\lambda_1^{(\tilde G, I)}} = \left(\frac{b}{a} \cdot \frac{b''}{a''}\right)^2$$

where we have defined the following quantities for clarity:

$$a = \sqrt{\lambda_1^{(\tilde G, I)}}, \quad b = \sqrt{\lambda_m^{(\tilde G, I)}}, \quad a'' = \sqrt{\lambda_1^{(\tilde G'', I)}}, \quad b'' = \sqrt{\lambda_m^{(\tilde G'', I)}}$$

Putting it all together, we can write (or bound) all three terms in $\Delta d_{\mathrm{SR}}$ as:

$$d_{\mathrm{SR}}(\tilde G, I) = 1 - \frac{b}{a}, \qquad d_{\mathrm{SR}}(\tilde G'', I) = 1 - \frac{b''}{a''}, \qquad d_{\mathrm{SR}}(\tilde G, \tilde G'') \le 1 - \frac{b}{a} \cdot \frac{b''}{a''}$$

Finally, since $b/a \le 1$ and $b''/a'' \le 1$,

$$0 \le \left(1 - \frac{b}{a}\right)\left(1 - \frac{b''}{a''}\right) = 1 - \frac{b}{a} - \frac{b''}{a''} + \frac{b}{a} \cdot \frac{b''}{a''} = \left(1 - \frac{b}{a}\right) + \left(1 - \frac{b''}{a''}\right) - \left(1 - \frac{b}{a} \cdot \frac{b''}{a''}\right) \le d_{\mathrm{SR}}(\tilde G, I) + d_{\mathrm{SR}}(\tilde G'', I) - d_{\mathrm{SR}}(\tilde G, \tilde G'') = \Delta d_{\mathrm{SR}},$$

which concludes the proof.

Proof of proposition 2.4. Separation and symmetry follow immediately from Proposition 2.2 and the linearity of integration over a manifold. As for the triangle inequality:

$$\Delta d_{\mathrm{MSA}} = d_{\mathrm{MSA}}(\phi_1, \phi_2) + d_{\mathrm{MSA}}(\phi_2, \phi_3) - d_{\mathrm{MSA}}(\phi_1, \phi_3) = \frac{1}{v} \int_{\mathcal{M}} \left[ d_{\mathrm{SR}}(G_{\phi_1}, G_{\phi_2}) + d_{\mathrm{SR}}(G_{\phi_2}, G_{\phi_3}) - d_{\mathrm{SR}}(G_{\phi_1}, G_{\phi_3}) \right] dV(p) = \frac{1}{v} \int_{\mathcal{M}} \Delta d_{\mathrm{SR}}(p)\, dV(p).$$

In the proof of Proposition 2.2 we showed that $\Delta d_{\mathrm{SR}} \ge 0$ pointwise, and the integral of a non-negative function is itself non-negative. Hence:

$$0 \le \Delta d_{\mathrm{MSA}} \iff d_{\mathrm{MSA}}(\phi_1, \phi_3) \le d_{\mathrm{MSA}}(\phi_1, \phi_2) + d_{\mathrm{MSA}}(\phi_2, \phi_3).$$

Invariance properties

Proof of proposition 4.1. It suffices to show that the metric itself is invariant to state-space rotation. We consider a network $\phi_i : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_i}$ with $m$-dimensional input manifold $\mathcal{M}$ embedded through the mapping $\psi : \mathcal{M} \to \mathbb{R}^{n_{\mathrm{in}}}$. Let $(U, \varphi)$ be a smooth chart on $\mathcal{M}$ where $U \subset \mathcal{M}$ is an open set with $\varphi : U \to \varphi(U) \subseteq \mathbb{R}^m$ a diffeomorphism.
The pullback metric at $p \in U$ can be expressed in local coordinates as the following product of Jacobians:

$$G_{\phi_i}(p) = J^\top_{\phi_i \circ \psi \circ \varphi^{-1}}\, J_{\phi_i \circ \psi \circ \varphi^{-1}} = J^\top_{\psi \circ \varphi^{-1}}\, J^\top_{\phi_i}\, J_{\phi_i}\, J_{\psi \circ \varphi^{-1}}$$

Let $\tilde G_{\phi_i}(p)$ be the corresponding metric after rotating in state space by $Q_i \in O(n_i)$. Then:

$$\tilde G_{\phi_i}(p) = J^\top_{Q_i \circ \phi_i \circ \psi \circ \varphi^{-1}}\, J_{Q_i \circ \phi_i \circ \psi \circ \varphi^{-1}} = J^\top_{\psi \circ \varphi^{-1}}\, J^\top_{\phi_i}\, Q_i^\top Q_i\, J_{\phi_i}\, J_{\psi \circ \varphi^{-1}} = J^\top_{\psi \circ \varphi^{-1}}\, J^\top_{\phi_i}\, J_{\phi_i}\, J_{\psi \circ \varphi^{-1}} = G_{\phi_i}(p)$$

where $J_f(p)$ is the Jacobian of the function $f$ evaluated at $p$. Thus:

$$d_{\mathrm{MSA}}(Q_1 \circ \phi_1, Q_2 \circ \phi_2) = \frac{1}{v} \int_{\mathcal{M}} d_{\mathrm{SR}}(\tilde G_{\phi_1}(p), \tilde G_{\phi_2}(p))\, dV(p) = \frac{1}{v} \int_{\mathcal{M}} d_{\mathrm{SR}}(G_{\phi_1}(p), G_{\phi_2}(p))\, dV(p) = d_{\mathrm{MSA}}(\phi_1, \phi_2)$$

Hence MSA is invariant to neural network hidden-layer state-space rotations.

This result can be viewed as a special case of a more general proposition: MSA is invariant to state-space transformations which are point-wise isometries with respect to the Euclidean dot product. The proof follows mutatis mutandis, with $\tilde G_{\phi_i}(p)$ the metric under the isometry. We note that these arguments do not rely on having networks with the same hidden layer sizes, as they can be independently rotated. We can get a stronger invariance if we assume that both neural networks are simultaneously acted upon by the same transformation.

So far we have considered invariances to transformations of the hidden-layer state-space, but MSA is also invariant to simultaneous transformations of the input manifold, and in particular changes of local coordinates.

Proof of proposition 4.2. We consider two networks $\phi_1 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_1}$, $\phi_2 : \mathbb{R}^{n_{\mathrm{in}}} \to \mathbb{R}^{n_2}$, with $m$-dimensional input manifold $\mathcal{M}$ embedded through the mapping $\psi : \mathcal{M} \to \mathbb{R}^{n_{\mathrm{in}}}$.
Let $(U_j, \varphi_j)$ and $(U_k, \varphi_k)$ be two smooth charts on $\mathcal{M}$ where $U_j, U_k \subset \mathcal{M}$ are open sets with $U_j \cap U_k \neq \emptyset$ and $\varphi_j : U_j \to \varphi_j(U_j) \subseteq \mathbb{R}^m$, $\varphi_k : U_k \to \varphi_k(U_k) \subseteq \mathbb{R}^m$ are diffeomorphisms onto their images. The pullback metric at $p \in U_j$ can be expressed in local coordinates as the following product of Jacobians:

$$G_{\phi_i}(p) = J^\top_{\phi_i \circ \psi \circ \varphi_j^{-1}}\, J_{\phi_i \circ \psi \circ \varphi_j^{-1}} = J^\top_{\psi \circ \varphi_j^{-1}}\, J^\top_{\phi_i}\, J_{\phi_i}\, J_{\psi \circ \varphi_j^{-1}}$$

Now suppose we wish to change the local coordinates to use chart $\varphi_k$ at $p \in U_j \cap U_k$ instead of $\varphi_j$. We define the following transition mapping:

$$\varphi_k(U_j \cap U_k) \xrightarrow{\;\varphi_k^{-1}\;} U_j \cap U_k \xrightarrow{\;\varphi_j\;} \varphi_j(U_j \cap U_k)$$

The transition function is $\varphi_j \circ \varphi_k^{-1}$, which maps between open subsets of $\mathbb{R}^m$, and we denote its Jacobian by $J_{kj}(p) \in \mathbb{R}^{m \times m}$. Here, unlike in the proof of proposition 4.1, the metric will change under this transformation. Denoting $\tilde G_{\phi_i}(p)$ the new metric in local coordinates (associated with $\varphi_k$) at $p$:

$$\tilde G_{\phi_i}(p) = J^\top_{\phi_i \circ \psi \circ \varphi_j^{-1} \circ \varphi_j \circ \varphi_k^{-1}}\, J_{\phi_i \circ \psi \circ \varphi_j^{-1} \circ \varphi_j \circ \varphi_k^{-1}} = J_{kj}^\top\, J^\top_{\psi \circ \varphi_j^{-1}}\, J^\top_{\phi_i}\, J_{\phi_i}\, J_{\psi \circ \varphi_j^{-1}}\, J_{kj}$$

which is not equal to $G_{\phi_i}(p)$ in general. For notational clarity we will write $J_i = J_{\phi_i} J_{\psi \circ \varphi_j^{-1}}$, so that

$$G_{\phi_i}(p) = J_i^\top J_i, \qquad \tilde G_{\phi_i}(p) = J_{kj}^\top J_i^\top J_i J_{kj}$$

Now, the generalised eigenvalue problem in the new local coordinates becomes:

$$\tilde G_{\phi_1}(p)\, v = \lambda\, \tilde G_{\phi_2}(p)\, v \;\Rightarrow\; J_{kj}^\top J_1^\top J_1 J_{kj}\, v = \lambda\, J_{kj}^\top J_2^\top J_2 J_{kj}\, v.$$

Since the transition function is invertible, we can left-multiply by $J_{kj}^{-\top}$ to get:

$$J_1^\top J_1\, \tilde v = \lambda\, J_2^\top J_2\, \tilde v$$

where we let $\tilde v = J_{kj} v$. Thus, $\tilde v / \lVert \tilde v \rVert$ is a generalized eigenvector for the original local coordinates, with generalized eigenvalue $\lambda$. This tells us that although the generalised eigenvectors may change under a change of local coordinates, the generalised eigenvalues do not. Since the spectral ratio depends only on the generalised eigenvalues, MSA is independent of local coordinate changes.
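As a numerical companion to the proofs in this appendix, the sketch below checks separation, symmetry and the triangle inequality of the spectral ratio distance on random $2 \times 2$ SPD matrices. It is an illustration, not a substitute for the proofs: it assumes $m = 2$ so that the generalized eigenvalues follow from the trace and determinant of $H^{-1}G$, and the names `d_sr` and `random_spd` are hypothetical.

```python
import math
import random


def d_sr(G, H):
    """Spectral ratio distance 1 - sqrt(lambda_min / lambda_max) for 2x2 SPD G, H."""
    det_g = G[0][0] * G[1][1] - G[0][1] * G[1][0]
    det_h = H[0][0] * H[1][1] - H[0][1] * H[1][0]
    # Trace and determinant of H^{-1} G, whose eigenvalues are the generalized ones.
    tr = (H[1][1] * G[0][0] - 2.0 * H[0][1] * G[0][1] + H[0][0] * G[1][1]) / det_h
    det = det_g / det_h
    disc = math.sqrt(max(tr * tr - 4.0 * det, 0.0))
    lam_max, lam_min = (tr + disc) / 2.0, (tr - disc) / 2.0
    return 1.0 - math.sqrt(lam_min / lam_max)


def random_spd(rng):
    """Random SPD matrix B^T B + 0.1 I with B = [[a, b], [c, d]]."""
    a, b, c, d = (rng.uniform(-1.0, 1.0) for _ in range(4))
    off = a * b + c * d
    return [[a * a + c * c + 0.1, off], [off, b * b + d * d + 0.1]]


rng = random.Random(0)
max_asymmetry = 0.0
max_triangle_violation = 0.0
for _ in range(1000):
    G, H, K = random_spd(rng), random_spd(rng), random_spd(rng)
    max_asymmetry = max(max_asymmetry, abs(d_sr(G, H) - d_sr(H, G)))
    # A positive value here would mean d(G, K) > d(G, H) + d(H, K).
    max_triangle_violation = max(max_triangle_violation,
                                 d_sr(G, K) - d_sr(G, H) - d_sr(H, K))

G0 = random_spd(rng)
self_distance = d_sr(G0, G0)
# Rescaling a metric rescales every generalized eigenvalue equally, so the
# ratio lambda_min / lambda_max, and hence d_SR, is unchanged.
G0_scaled = [[3.0 * x for x in row] for row in G0]
scaled_distance = d_sr(G0, G0_scaled)
```

Up to floating-point error, `max_asymmetry`, `self_distance` and `scaled_distance` should all be zero and `max_triangle_violation` should not be positive. The last check shows that two distinct metrics related by a constant factor are at distance zero.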
