On-the-fly Repulsion in the Contextual Space for Rich Diversity in Diffusion Transformers

Modern Text-to-Image (T2I) diffusion models have achieved remarkable semantic alignment, yet they often suffer from a significant lack of variety, converging on a narrow set of visual solutions for any given prompt. This typicality bias presents a ch…

Authors: Omer Dahary, Benaya Koren, Daniel Garibi, Daniel Cohen-Or

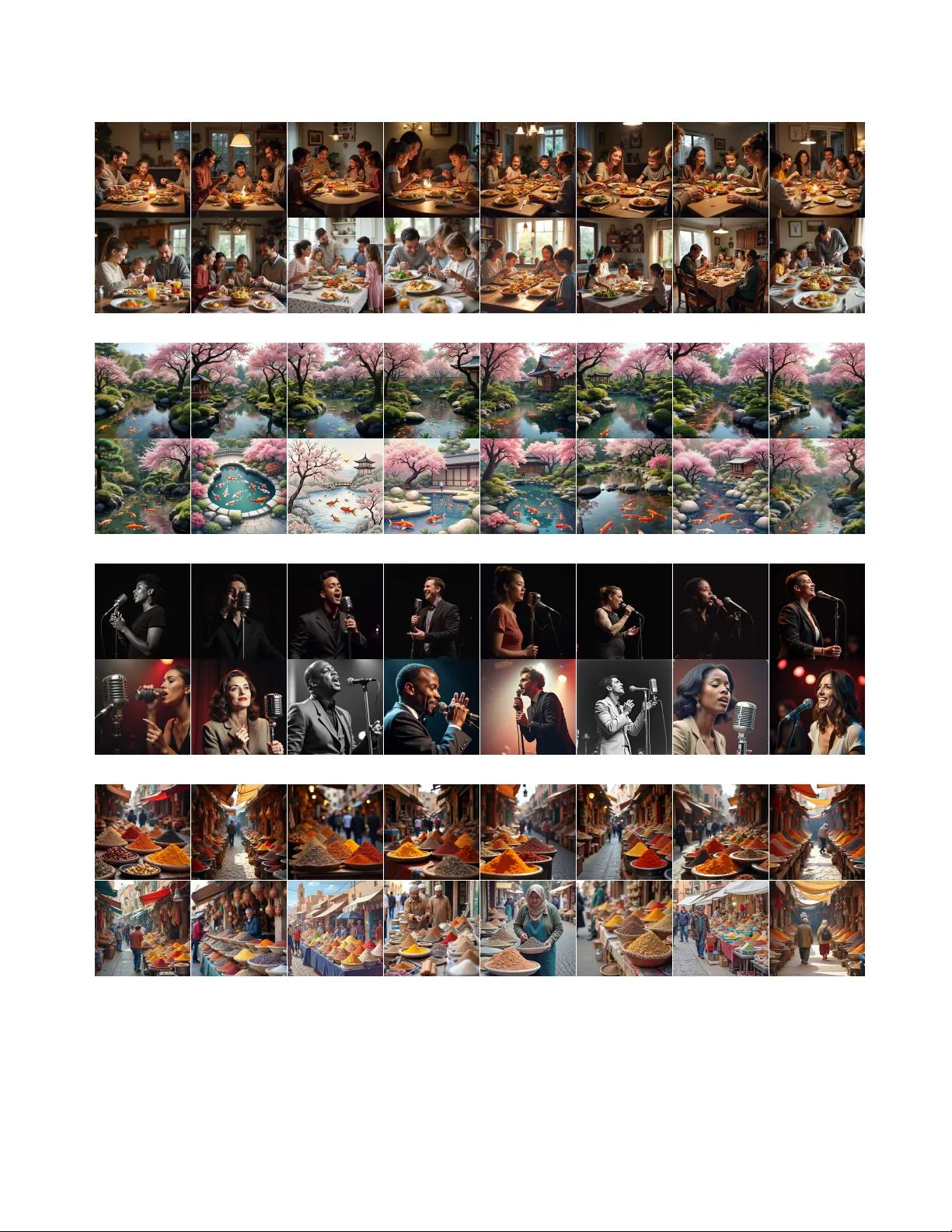

OMER DAHARY∗, Tel Aviv University, Israel and Snap Research, Israel
BENAYA KOREN∗, Tel Aviv University, Israel
DANIEL GARIBI, Tel Aviv University, Israel and Snap Research, Israel
DANIEL COHEN-OR, Tel Aviv University, Israel and Snap Research, Israel

Fig. 1. Example results of our Contextual Space repulsion framework using Flux-dev, for the prompt “People with 3D holograms”. The base model (top) typically converges on a narrow set of visual solutions. By applying semantic intervention within the internal multimodal attention channels, our approach (bottom) produces a diverse set of images with minimal computational overhead.

Modern Text-to-Image (T2I) diffusion models have achieved remarkable semantic alignment, yet they often suffer from a significant lack of variety, converging on a narrow set of visual solutions for any given prompt. This typicality bias presents a challenge for creative applications that require a wide range of generative outcomes. We identify a fundamental trade-off in current approaches to diversity: modifying model inputs requires costly optimization to incorporate feedback from the generative path. In contrast, acting on spatially-committed intermediate latents tends to disrupt the forming visual structure, leading to artifacts. In this work, we propose to apply repulsion in the Contextual Space as a novel framework for achieving rich diversity in Diffusion Transformers. By intervening in the multimodal attention channels, we apply on-the-fly repulsion during the transformer’s forward pass, injecting the intervention between blocks where text conditioning is enriched with emergent image structure. This allows for redirecting the guidance trajectory after it is structurally informed but before the composition is fixed.
Our results demonstrate that repulsion in the Contextual Space produces significantly richer diversity without sacrificing visual fidelity or semantic adherence. Furthermore, our method is uniquely efficient, imposing a small computational overhead while remaining effective even in modern “Turbo” and distilled models where traditional trajectory-based interventions typically fail. Project page: https://contextual-repulsion.github.io/.

∗ Denotes equal contribution.

1 Introduction

The rapid evolution of Text-to-Image (T2I) generative models has ushered in a new era of high-fidelity visual synthesis, where models now exhibit unprecedented alignment with complex textual prompts [Esser et al. 2024; Podell et al. 2023; Rombach et al. 2022]. However, this progress has come at a significant cost: the reduction of generative diversity. As advanced generative models are increasingly optimized for precision and human preference, they tend to converge on a narrow set of “typical” visual solutions, a phenomenon often described as typicality bias [Teotia et al. 2025]. Diversity is no longer a secondary metric; it has become a central research problem addressed by a growing body of work [Jalali et al. 2025; Morshed and Boddeti 2025; Um and Ye 2025]. This is because the utility of generative AI depends on its ability to act as a creative partner that explores the vast manifold of human imagination. It should function as a generative engine rather than merely a sophisticated retrieval mechanism.

The diversity problem is fundamentally difficult due to the structural tension between quality and variety. High-quality generation currently relies on strong conditioning signals, most notably Classifier-Free Guidance (CFG) [Ho and Salimans 2022], which effectively sharpens the probability distribution around a single mode by suppressing nearby semantically valid alternatives.
Consequently, restoring diversity requires an efficient mechanism to overcome this bias without degrading the structural integrity of the image or losing semantic adherence.

Fig. 2. Conceptual comparison of diversity strategies in dual-stream DiT architectures. Here $p^{(i)}$ denotes the prompt embedding for sample $i$, $z_t^{(i)}$ denotes the latent at timestep $t$ for sample $i$, and the red double-arrow icon indicates the point of diversity manipulation. (a) Upstream: Interventions on noise or prompt embeddings lack structural feedback from the emerging image. (b) Downstream: Repulsion in image latents acts on a fixed visual mode and can push samples off the data manifold, causing artifacts. (c) Ours: By applying on-the-fly repulsion within the Contextual Space (text-attention channels), we steer the model’s generative intent. This allows for a semantically driven intervention synchronized with the emergent visual structure.

Previous attempts to bridge the diversity-alignment gap can be categorized by their point of intervention within the denoising trajectory, as illustrated in Figure 2. Upstream methods (Figure 2a) attempt to solve the problem by altering initial conditions, such as noise seeds or prompt embeddings. However, these approaches are often decoupled from the actual generation process [Sadat et al. 2023]; to achieve semantic grounding, they must either rely on noisy intermediate estimates [Kim et al. 2025] or employ optimization that incurs significant computational overhead [Parmar et al. 2025; Um and Ye 2025]. Conversely, downstream methods (Figure 2b) enforce repulsion in the image latent space during denoising [Corso et al. 2023; Jalali et al. 2025]. While these can force variance, they often push samples outside the learned data manifold, resulting in catastrophic drops in visual fidelity and unnatural visual artifacts.

The core difficulty lies in an interventional trade-off: early interventions lack structural feedback, while late interventions face a committed visual mode. This is particularly acute in few-step “Turbo” models, where the generative path is decided almost instantly. Upstream methods require slow optimization to search for diversity-inducing initial conditions, while downstream repulsion arrives too late to steer the composition.

In this work, we present a novel approach that bypasses this trade-off by identifying and leveraging the Contextual Space (Figure 2c), which emerges inside the multimodal attention blocks of Diffusion Transformer (DiT) architectures [Esser et al. 2024; Labs 2024]. Unlike previous U-Net models where text conditioning remains a static external signal, these blocks facilitate a dynamic bidirectional exchange between text and image tokens, continuously updating the text representations in response to the evolving image. This interaction creates an “enriched” semantic representation that is both aware of the prompt and synchronized with emergent visual details [Helbling et al. 2025].

By leveraging these enriched textual representations, our approach steers the model’s generative intent to overcome the CFG mode collapse. By targeting these representations rather than raw pixels, we preserve samples within the learned data manifold, avoiding the artifacts common in downstream interventions. To achieve this, we apply repulsion to the tokens as they pass between multimodal attention blocks. This intervention is performed on-the-fly during the transformer’s forward pass, at a stage where the emergent representation is already structurally informed but the final composition is not yet fixed. Intervening while the representation is still flexible allows for steering that remains semantically driven yet image-aware. This enables the model to explore diverse paths while maintaining natural, high-quality results.

To demonstrate the efficacy of our approach, we conduct extensive experiments across multiple DiT-based architectures. We evaluate our results on the COCO benchmark using metrics for both visual quality and distributional variety. Our results show that repulsion in the Contextual Space consistently produces richer diversity without the mode collapse or semantic misalignment characteristic of prior work. Furthermore, we demonstrate that our method is uniquely efficient, requiring only a small computational overhead and no additional memory, making it compatible with the rapid inference requirements of modern distilled models.

2 Related Work

Diffusion transformers. While foundational diffusion models predominantly utilized UNet-based architectures [Podell et al. 2023; Ramesh et al. 2022; Razzhigaev et al. 2023; Rombach et al. 2022; Saharia et al. 2022], contemporary state-of-the-art text-to-image systems have largely shifted toward Diffusion Transformers (DiTs) as their backbone [Esser et al. 2024; Kong et al. 2025; Labs 2024; Labs et al. 2025]. A key distinction lies in the conditioning mechanism: whereas UNets typically incorporate text via cross-attention layers, DiTs process text and image tokens concurrently within the transformer. This architecture employs multimodal attention blocks to facilitate bidirectional interaction, ensuring a unified integration of visual and textual information throughout the generation process. A growing body of research has successfully employed this architecture across diverse downstream tasks [Avrahami et al. 2025; Dalva et al. 2024; Garibi et al. 2025; Kamenetsky et al. 2025; Labs et al. 2025; Tan et al. 2025; Zarei et al.
2025].

Research addressing the diversity-alignment gap in Text-to-Image (T2I) models generally falls into two categories based on the stage and level of intervention: upstream methods, which modify conditions prior to or in the earliest stages of the generative process, and downstream methods, which manipulate the image latents throughout the denoising trajectory.

Upstream Interventions. Upstream methods attempt to induce diversity by optimizing input conditions, namely the initial noise or text conditioning, before a stable image structure emerges. Purely decoupled interventions like CADS [Sadat et al. 2023] inject prompt-agnostic noise into text embeddings, which often leads to semantic drifting due to a lack of structural feedback. To bridge this, methods like CNO [Kim et al. 2025] utilize the very first timestep’s $\hat{x}_0$ prediction to force divergence, yet these estimates are frequently structurally unformed at high noise levels, providing an unstable signal for conceptual variety. Similarly, optimization-based regimes such as MinorityPrompt [Um and Ye 2025] and Scalable Group Inference (SGI) [Parmar et al. 2025] seek diversity-inducing initial conditions through iterative search; however, their heavy computational overhead makes them increasingly impractical for real-time applications or integration with fast-inference distilled models.

Downstream Interventions. Downstream methods manipulate the latent trajectory throughout the denoising process, either through interacting particle systems or modified guidance schedules. The former, pioneered by Particle Guidance (PG) [Corso et al. 2023], uses kernel-based repulsion in the image latent space to force variance between samples, with subsequent works focusing on improving repulsion loss objectives [Askari Hemmat et al. 2024; Jalali et al. 2025; Morshed and Boddeti 2025].
Despite these refinements, these methods operate on non-semantic representations, repelling low-level pixel-space features rather than semantic content. Importantly, semantic concepts in the image latent space are spatially entangled and not aligned across samples, so the same high-level attribute may correspond to different spatial locations and configurations in different generations. As a result, repulsion in this space often pushes samples outside the learned manifold, leading to unnatural artifacts. In addition, such approaches lack sufficient trajectory depth to remain effective in modern distilled “Turbo” models; since the generative path is decided almost instantly, the remaining denoising trajectory is insufficient for late-stage repulsion to steer the model toward diverse modes.

Alternatively, scheduling-based approaches like Interval Guidance [Kynkäänniemi et al. 2024] preserve variety by modulating the CFG scale during denoising. However, because these rescaling schedules are fixed and independent of the model’s internal state, they often reduce the prompt’s influence before the model has sufficiently established semantic alignment to the prompt.

A recurring limitation of these approaches is that their steering signals, whether derived from raw latents or external encoders, lack the semantic coherence necessary for meaningful control during the critical early stages of denoising. This forces an unfavorable trade-off: upstream intervention must incur significant computational overhead to find valid diversity-inducing paths, while downstream interventions occur on a committed visual mode where the composition is already fixed, often producing noise-level variance that pushes samples outside the learned manifold and results in unnatural artifacts. Our work departs from these by identifying a Contextual Space within Diffusion Transformers that is both semantically flexible and structurally informed.
This allows us to redirect the guidance trajectory once the bidirectional exchange between text and image tokens has established a stable semantic signal, but before the model has fully converged on a specific generative outcome.

3 Method: Repulsion in the Contextual Space

In this section, we formalize our approach to generative diversity by shifting the intervention focus to the Contextual Space. As identified in Section 2, the core difficulty of existing methods lies in the timing and location of the repulsion: upstream methods act on unformed noise, while downstream methods act on a rigid latent manifold. Our central insight is that the Contextual Space, inherent to multimodal transformer architectures such as DiTs, provides an effective environment for diversity interventions because it is structurally informed yet conceptually flexible.

3.1 Defining the Contextual Space

The Contextual Space is the high-dimensional manifold formed within the Multimodal Attention (MM-Attention) blocks of a DiT. Unlike the static text embeddings used in U-Net architectures, the DiT processing flow facilitates a bidirectional exchange between text features $f_T$ and image features $f_I$. In each transformer block $l$, the resulting tokens undergo a structural transformation:

$$\hat{f}_T^{(l)},\ \hat{f}_I^{(l)} = \text{MM-Attn}\left(f_T^{(l-1)},\ f_I^{(l-1)}\right). \tag{1}$$

In this interaction, the text features $f_T$ guide the image tokens toward the prompt’s semantic requirements. Simultaneously, the image features $f_I$ provide feedback regarding the spatial composition and emerging visual details, which the text features absorb to become uniquely tied to the specific image being formed. We therefore identify the resulting enriched text tokens $\hat{f}_T^{(l)}$ as the primary elements of the Contextual Space.

A key advantage of this space is its inherent token ordering.
Unlike the image latent space, where specific semantic content can shift spatially across different samples, the Contextual Space maintains a fixed semantic alignment across the sequence index. This facilitates a consistent representation where each token index generally represents the same conceptual component across the entire batch, largely independent of its realized placement in the emergent image structure.

3.2 The Mechanism of Contextual Repulsion

We illustrate the positioning of our intervention in Figure 2c. Our key insight is that applying repulsion within the Contextual Space allows for the manipulation of generative intent. By enforcing distance between batch samples in this space, we steer the model’s high-level planning before it commits to a specific visual mode.

To achieve this, we adopt the particle guidance framework [Corso et al. 2023], which treats a batch of $B$ samples as interacting particles. However, unlike prior work that applies guidance to the image latents $z_t$ (Figure 2b), we apply the repulsive forces directly to the Contextual Space tokens $\hat{f}_T$ (Figure 2c). Since the conditioning for each sample is initialized from the same unmodified prompt encoding at every timestep, the intervention mitigates the risk of permanent semantic drift. This common starting point promotes a state where contextual features remain closely aligned to the original prompt and directly comparable across the batch throughout the trajectory, allowing the repulsion to act as a force that differentiates how the same prompt is visually realized.

A critical advantage of our approach is that these forces are computed on-the-fly. Because we intervene directly on the internal activations, the method does not require backpropagating through the model layers, making it significantly more computationally efficient than optimization-based methods. Within each transformer block, we apply $M$ inner-block iterations to iteratively refine the token positions.
Following the gradient-based guidance formulation [Corso et al. 2023], the updated state of the contextual tokens for a sample $i \in \{1, \dots, B\}$ after each iteration is given by:

$$\hat{f}_{T,i}^{(l)\prime} = \hat{f}_{T,i}^{(l)} + \frac{\eta}{M}\, \nabla_{\hat{f}_{T,i}^{(l)}}\, \mathcal{L}_{div}\left(\{\hat{f}_{T,j}^{(l)}\}_{j=1}^{B}\right), \tag{2}$$

where $\eta$ is the overall repulsion scale and $\mathcal{L}_{div}$ is a diversity loss defined over the batch of $B$ samples. To maintain diversity throughout the trajectory, we apply this repulsion across all transformer MM-blocks. However, since the initial stages of the denoising trajectory are the most crucial for the eventual semantic meaning and global composition [Balaji et al. 2023; Cao et al. 2025; Dahary et al. 2025, 2024; Huberman et al. 2025; Patashnik et al. 2023; Yehezkel et al. 2025], and are also where strong guidance signals such as CFG most strongly bias the generative path, we restrict the intervention to a chosen interval of the first few timesteps.

3.3 Diversity Objective

The Contextual Space encodes global semantic intent shared across the batch, making diversity objectives based on batch-level similarity more appropriate than token-wise or local measures. While our framework is flexible and can adopt various diversity losses defined in prior work [Jalali et al. 2025; Morshed and Boddeti 2025], we specifically utilize the Vendi Score [Askari Hemmat et al. 2024; Friedman and Dieng 2022] as our primary objective. The Vendi Score provides a principled way to measure the effective number of distinct samples in a batch by considering the eigenvalues of a similarity matrix. Formally, it is defined as the exponential of the von Neumann entropy of that matrix.

For simplicity, we represent each sample $i$ at block $l$ as a single vector $\mathbf{c}_i^{(l)} \in \mathbb{R}^{ND}$ by flattening the sequence of $N$ contextual tokens, each of dimension $D$.
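The update in Eq. (2) can be illustrated with a minimal NumPy sketch, assuming the cosine-kernel objective that this subsection defines in Eqs. (3) and (4), i.e. ascending $-\sum_k \lambda_k \log \lambda_k$. The function names, the `eps` clipping, and the closed-form gradient are illustrative assumptions rather than the authors' implementation, which the text does not show; in a real pipeline the tokens would be the text-stream activations between MM-Attention blocks, and an autograd framework would typically replace the hand-written gradient.

```python
import numpy as np

def flatten_tokens(tokens):
    """(B, N, D) contextual tokens -> (B, N*D), one vector per sample."""
    return tokens.reshape(tokens.shape[0], -1)

def entropy_and_grad(c, eps=1e-12):
    """Entropy of the normalized cosine kernel and its gradient w.r.t. c.

    c has shape (B, N*D); the returned gradient has the same shape.
    """
    B = c.shape[0]
    norms = np.linalg.norm(c, axis=1, keepdims=True)
    u = c / norms                        # unit vectors, so u @ u.T is cosine similarity
    K_tilde = (u @ u.T) / B              # normalized kernel with unit trace
    lam, V = np.linalg.eigh(K_tilde)
    lam = np.clip(lam, eps, None)        # assumption: guard log(0) for rank-deficient batches
    entropy = -np.sum(lam * np.log(lam))
    # d/dX of -tr(X log X) is -(log X + I), evaluated in the eigenbasis
    G = -(V * (np.log(lam) + 1.0)) @ V.T
    g_u = (2.0 / B) * (G @ u)            # gradient w.r.t. the unit vectors
    # chain rule through the normalization u = c / ||c||
    grad = (g_u - np.sum(g_u * u, axis=1, keepdims=True) * u) / norms
    return entropy, grad

def vendi_score(c):
    """Effective number of distinct samples: exp of the kernel entropy."""
    return float(np.exp(entropy_and_grad(c)[0]))

def repulsion_step(tokens, eta, num_inner_iters):
    """Apply M = num_inner_iters ascent iterations, each of size eta / M."""
    shape = tokens.shape
    c = flatten_tokens(tokens).astype(np.float64)
    for _ in range(num_inner_iters):
        _, grad = entropy_and_grad(c)
        c = c + (eta / num_inner_iters) * grad
    return c.reshape(shape)
```

Because the gradient is taken only with respect to the token activations, no backpropagation through model layers is involved, which matches the on-the-fly property described in Section 3.2. The step size in this sketch is not calibrated to the repulsion scales reported in the paper.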
For a batch of size $B$ represented by these flattened contextual vectors $\{\mathbf{c}_i^{(l)}\}_{i=1}^{B}$, we first define a kernel matrix $\mathbf{K} \in \mathbb{R}^{B \times B}$, where each entry $K_{ij}$ represents the similarity between samples $i$ and $j$. In our work, we use the cosine similarity as our kernel:

$$K_{ij} = \frac{\langle \mathbf{c}_i^{(l)}, \mathbf{c}_j^{(l)} \rangle}{\|\mathbf{c}_i^{(l)}\|\, \|\mathbf{c}_j^{(l)}\|}. \tag{3}$$

To maximize diversity, we compute the eigenvalues $\{\lambda_k\}$ of the normalized kernel $\tilde{\mathbf{K}} = \frac{1}{B}\mathbf{K}$ and define our diversity loss $\mathcal{L}_{div}$ as the von Neumann entropy of $\tilde{\mathbf{K}}$ (the logarithm of the Vendi Score), which the gradient step in Eq. (2) increases:

$$\mathcal{L}_{div} = -\sum_{k=1}^{B} \lambda_k \log \lambda_k. \tag{4}$$

This objective effectively pushes the tokens in the Contextual Space to span a higher-dimensional manifold, preventing the semantic collapse typically induced by CFG.

4 The Contextual Space

In this section, we empirically examine the properties of the Contextual Space by analyzing how internal representations behave under controlled interpolation and extrapolation. We focus on how semantic structure is preserved or degraded when steering representations in two internal spaces of the DiT: the VAE latent space and the contextual (enriched text) token space. The goal is to characterize how each of these spaces reflects semantic variation when multiple samples are generated from the same prompt, and to assess their suitability for diversity control without introducing visual artifacts.

Fig. 3. Comparison of interpolation and extrapolation between the internal representations of two images, for the prompts “A mythical creature” and “A person with their pet” (columns: Target, Interpolation, Source, Extrapolation; rows: Contextual Space, Latent Space). Intermediate frames are generated by denoising the source image while linearly blending its internal features with those of the target; extrapolation extends this vector beyond the endpoints. While Latent Space interpolation leads to structural blurring and artifacts due to spatial misalignment, the Contextual Space maintains high visual fidelity.
This demonstrates that the Contextual Space enables smooth semantic transitions by decoupling generative intent from fixed spatial structures.

To examine this, we conduct an interpolation and extrapolation experiment across these two internal representation spaces. We consider two prompts, “a person with their pet” and “a mythical creature”. For each prompt, we generate two samples using different initial noise seeds, which we designate as a source image and a target image. Maintaining the initial noise of the source image, we intervene during the denoising process by replacing its internal representation with a linear combination of the source and target representations

$$\mathbf{h}_{interp} = \mathbf{h}_{source} + \alpha\left(\mathbf{h}_{target} - \mathbf{h}_{source}\right), \tag{5}$$

where $\mathbf{h}$ represents the representation in a given space, and $\alpha$ is the steering coefficient. We compare this behavior across two distinct spaces: the VAE Latent Space ($z_t$) and our proposed Contextual Space (enriched text tokens $\hat{f}_T$).

As illustrated in Figure 3, the results highlight a fundamental difference in how these spaces handle semantic information. In the VAE Latent Space, representations are tied to the specific spatial grid and pixel-level layout of the sample. Since the source and target images are spatially unaligned (exhibiting different poses and compositions), interpolating between them results in a structural blur. The model attempts to resolve two conflicting geometries simultaneously, leading to incoherent overlays and ghostly artifacts. More critically, extrapolating in the VAE Latent Space quickly pushes the latents outside the learned data manifold, resulting in severe artifacts.

In contrast, performing the same operation within the Contextual Space yields a smooth semantic transition.
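The blending rule in Eq. (5) is simple enough to sketch directly. The snippet below is a hypothetical helper, not the authors' code: `steer` and `steering_sweep` are illustrative names, and the arrays stand in for either VAE latents or enriched text tokens captured at matching blocks and timesteps.

```python
import numpy as np

def steer(h_source, h_target, alpha):
    """Eq. (5): move h_source along the direction (h_target - h_source).

    alpha = 0 returns the source, alpha = 1 returns the target,
    values in between interpolate, and values outside [0, 1] extrapolate
    (alpha < 0 moves away from the target).
    """
    h_source = np.asarray(h_source, dtype=np.float64)
    h_target = np.asarray(h_target, dtype=np.float64)
    return h_source + alpha * (h_target - h_source)

def steering_sweep(h_source, h_target, alphas):
    """One steered representation per steering coefficient."""
    return [steer(h_source, h_target, a) for a in alphas]
```

In the experiment described above, each steered representation would replace the source sample's representation during denoising while the source noise is kept fixed; that substitution step depends on the specific model and is not shown here.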
Rather than blending pixels or geometries, the model reallocates visual elements in a coherent manner, gradually modifying appearance and composition while maintaining a sharp, high-fidelity structure. For instance, as we move from the source image toward the target, we observe a meaningful evolution in high-level appearance attributes of the subject, such as facial features and overall visual style, which shift naturally from the source toward the target. In the bottom example, this transition applies coherently to each subject independently, with both the woman and the accompanying pet undergoing meaningful semantic changes (e.g., the pet gradually shifting from a dog-like to a cat-like appearance). Throughout this interpolation, the pretrained weights keep the generated images on-manifold, preserving structural integrity and visual plausibility.

Furthermore, the Contextual Space maintains its integrity during extrapolation, where the shifts remain semantically consistent with the direction of the steering vector ($\mathbf{h}_{target} - \mathbf{h}_{source}$). As shown in the right-most columns of Figure 3, applying extrapolation ($\alpha < 0$) relative to the target does not lead to manifold collapse. Instead, it generates a semantically meaningful extrapolation: in the top example, extrapolation progressively removes the creature’s horns and beast-like features, producing a plausible semantic evolution rather than noise or collapse. In the bottom example, the woman’s features evolve toward a darker tone, effectively moving away from the characteristics of the reference. Simultaneously, the pet’s appearance is modified in a logically consistent manner, such as deepening the coat color and shifting the ears to a more drooping shape.

These observations suggest that the Contextual Space encodes global semantic features independently of a fixed spatial grid.
Intervening in this space enables the modification of high-level attributes while the transformer’s attention mechanisms maintain the structural coherence of the output.

5 Experiments

To evaluate the generality of our approach, we conduct experiments across three state-of-the-art Diffusion Transformer (DiT) architectures that span distinct design choices and sampling regimes: Flux-dev [Labs 2024], a guidance-distilled model; SD3.5-Turbo, distilled for high-speed, few-step inference; and SD3.5-Large [Esser et al. 2024], a standard non-distilled model. Together, these models cover a broad spectrum of modern DiT variants, allowing us to demonstrate that Contextual Space repulsion is broadly applicable and not tied to a specific architecture, training regime, or sampling budget.

We compare our Contextual Space repulsion against representative diversity-enhancing baselines, including upstream methods that modify initial conditions such as CADS [Sadat et al. 2023] and SGI [Parmar et al. 2025], as well as downstream methods that intervene in the latent space, including Particle Guidance [Corso et al. 2023] and SPARKE [Jalali et al. 2025]. Full implementation details and hyperparameter settings are provided in Appendix A.

Fig. 4. Qualitative results, shown for the prompts “Kids with paper airplanes” and “A ballet dancer on stage”. For each prompt, we compare the base model results (top) to our results (bottom).

5.1 Qualitative Results

Flux-dev results. We compare our results with the base Flux-dev model in Figures 4 and 11; additional comparisons with Flux-dev, SD3.5-Large and SD3.5-Turbo are provided in Appendix B. Even when sampled with different random initial noises, the base model typically produces a very narrow and repetitive range of outputs for many prompts. As shown in Figure 11, our method alleviates typicality biases, such as the barely visible or harsh lighting seen in the “musician” and “scientist” examples.
Furthermore, it generates a diverse array of compositions, arrangements, and camera angles for the “painter” and “stadium” prompts.

Baseline comparisons. We present qualitative comparisons against the baselines in Figure 12. As illustrated, downstream methods like PG and SPARKE often introduce visual artifacts because they intervene directly in the VAE latent space. For instance, in the “red bus” example, PG fails to modify the image structure, while SPARKE succeeds in moving objects but leaves patterned “holes” in their original locations.

In contrast, upstream methods maintain higher image quality, though they face different trade-offs. CADS frequently leads to semantic drift, where diversity is achieved through weak prompt alignment (e.g., replacing “photographs” with people, or a “phoenix” with a bonfire). SGI, which filters a large set of initial noise candidates through optimization, achieves both high quality and prompt adherence by minimizing intervention. However, SGI often struggles to produce high variation for prompts where the base model lacks inherent diversity, resulting in repetitive subject appearances and compositions (e.g., the “red bus”).

Fig. 5. Integration with image editing models (panels: Input Image, Flux Kontext, Ours; text: “a person running a marathon”). We demonstrate that our method can be successfully integrated into Flux-Kontext to generate high-quality diverse results.

Our method achieves richer diversity even with challenging prompts, without sacrificing alignment or quality. Interestingly, the axes of variation adapt to each prompt: for the “phoenix,” the model alternates between artistic styles; for the “bus,” it varies weather and pose; and for the “camera with old photographs” and “wolf pack,” it generates unique compositions and object arrangements.

Example result on Flux-Kontext.
In Figure 5, we demonstrate that our method generalizes beyond text-to-image generation and can be applied out of the box to image editing models, specifically Flux Kontext [Labs et al. 2025]. Perhaps surprisingly, this requires no modification to the model or to our intervention strategy: we apply the exact same Contextual Space repulsion within the editing instruction stream. While the base editing model produces nearly identical edits across different random seeds, our approach yields diverse yet coherent edit realizations, all while preserving the intended edit semantics and maintaining the visual integrity of the original image. This result highlights that contextual repulsion operates at a level of abstraction that is compatible with both generation and editing paradigms, despite being developed specifically for text-to-image models.

5.2 Quantitative Results

Diversity-Quality trade-off. We evaluated our method using 1,000 prompts sampled from the MS-COCO 2017 validation set, generating four images per prompt for a total of 4,000 images per configuration. To provide a holistic view of the diversity-quality trade-off, we utilize the Vendi Inception Score [Friedman and Dieng 2022; Szegedy et al. 2017] to measure high-level semantic diversity alongside three primary quality and alignment axes: ImageReward [Xu et al. 2023] for human preference, VQAScore [Lin et al. 2024] for fine-grained prompt adherence, and Kernel Inception Distance (KID) [Bińkowski et al. 2018] for distributional fidelity. By plotting the Pareto frontier of the diversity score versus each of these metrics, we can analyze how effectively each method navigates the tension between generative variety and visual fidelity.

Fig. 6. Quantitative evaluation. Pareto frontiers comparing our method against baseline methods using Flux-dev.
We evaluate the trade-off between semantic diversity (Vendi Score) and three performance axes: (Left) Human Preference [ImageReward ↑], (Middle) Prompt Alignment [VQAScore ↑], and (Right) Distributional Fidelity [KID ↓]. Our method (red) achieves a superior frontier across all metrics.

Table 1. Runtime comparison for generating a group of four images. Our method provides a significant speedup over optimization-based diversity methods like SGI while maintaining a low overhead relative to the base model.

| Method | SD3.5-Large | SD3.5-Turbo | Flux-dev |
| --- | --- | --- | --- |
| Base Model | 13.83s | 4.18s | 10.34s |
| Ours (Contextual) | 18.12s | 5.52s | 12.80s |
| SGI, 8 Candidates | 66.79s | 13.15s | 47.47s |
| SGI, 16 Candidates | 76.79s | 23.73s | 56.32s |
| SGI, 32 Candidates | 101.44s | 46.15s | 75.39s |
| SGI, 64 Candidates | 145.14s | 91.30s | 113.99s |

To map the Pareto frontiers, we systematically vary the control hyperparameters for each baseline: the guidance scale for PG and SPARKE, the noise intensity for CADS, and the number of initial noise candidates for SGI. Specific hyperparameter configurations are provided in Appendix A. As shown in Figure 6, our method achieves a superior trade-off on Flux-dev. Notably, while our method exceeds the performance of SGI, the strongest baseline, it does so with drastically lower computational overhead (see the Runtime paragraph of Section 5.2). Results for additional models, including SD3.5-Turbo and SD3.5-Large, are provided in Appendix C.

Runtime. Many existing diversity methods rely on costly downstream signals, either through gradient-based optimization or by selecting from large pools of candidate latents. Both strategies impose substantial time overhead. By avoiding these mechanisms entirely, our approach provides a markedly more efficient solution, increasing runtime by roughly 24%–32% relative to the base model (Table 1).

User study. Standard quantitative metrics often fail to capture the nuances of generative diversity.
These evaluators are typically trained on datasets dominated by common visual patterns, leading them to favor “typical” or average cases as more aesthetically pleas- ing or prompt-adherent. Consequently , methods that successfully push for greater diversity and creative interpretation may b e un- fairly penalize d by these metrics, even when the resulting variations are highly desirable to human users. T o address this limitation and On-the-f ly Repulsion in the Contextual Space for Rich Diversity in Diusion Transformers • 7 Fig. 7. Overall user preference comparison. Distribution of user choices comparing our method with five competing approaches. Bars indicate the percentage of cases in which users preferred our results ( green), preferred competing methods (red), or rated both e qually (gray). provide a more meaningful assessment of our method, we conducted a user study . W e utilized ChatGPT to generate 40 diverse prompts across vari- ous categories. For each prompt, participants w ere presented with two batches of 8 images (16 images total): one batch generated by our method and the other by a competing method or the base model (Flux-dev). Participants were tasked with performing a side-by-side comparison to determine which batch: (i) Exhibited greater visual and semantic diversity; (ii) Maintained higher image quality; (iii) Demonstrated better prompt adherence; and (iv) W as preferred overall. W e colle cted 450 responses from 45 participants. Figure 7 reports the overall user preference results of this study , with the full prefer- ence table provided in Appendix C. O verall, our method achieves higher user preference than all competing approaches. The only ex- ception is SGI, where preferences are closely matched, with a slight advantage for our method. Importantly , these gains are achieved with minimal runtime overhead, as demonstrated in T able 1. 
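The two quantities behind the trade-off analysis in this section, the Vendi Score and the Pareto frontier, can be sketched as follows. This is a minimal illustration under stated assumptions, not our evaluation code: the function names `vendi_score` and `pareto_front` are hypothetical, the features are assumed to be precomputed image embeddings (e.g., Inception features), and the cosine-similarity kernel is one common choice rather than a confirmed detail of our setup. The (Vendi, ImageReward) pairs are copied from the Flux-dev rows of Table 2.

```python
import numpy as np


def vendi_score(features):
    """Vendi Score: exp of the Shannon entropy of the eigenvalues of K / n."""
    x = np.asarray(features, dtype=float)
    # Assumption: cosine-similarity kernel on L2-normalized embeddings.
    x = x / np.linalg.norm(x, axis=1, keepdims=True)
    kernel = x @ x.T
    eigvals = np.linalg.eigvalsh(kernel / len(x))
    eigvals = eigvals[eigvals > 1e-12]  # drop numerically zero eigenvalues
    return float(np.exp(-np.sum(eigvals * np.log(eigvals))))


def pareto_front(points):
    """Keep (diversity, quality) points not dominated when both are maximized."""
    front = []
    for i, (d_i, q_i) in enumerate(points):
        dominated = any(
            d_j >= d_i and q_j >= q_i and (d_j > d_i or q_j > q_i)
            for j, (d_j, q_j) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append((d_i, q_i))
    return sorted(front)


# (Vendi, ImageReward) pairs for Flux-dev, copied from Table 2.
ours = [(1.810, 1.102), (1.831, 1.092), (1.869, 1.075), (1.898, 1.070)]
sgi = [(1.778, 1.152), (1.829, 1.085), (1.860, 1.063), (1.916, 1.042)]
front = pareto_front(ours + sgi)
```

For a group of four images, the score ranges from 1 (all embeddings identical) to 4 (all embeddings mutually orthogonal). On the Table 2 values above, the two intermediate SGI settings (16 and 32 candidates) are dominated by our points, while the SGI endpoints remain on the combined frontier.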
5.3 Ablation Studies

We evaluate the impact of the repulsion scale and the specific representation space used for intervention below, with further hyperparameter analyses provided in Appendix D.

Repulsion scale ablation. In Figure 8, we ablate the effect of the repulsion scale $\eta$. The top row ($\eta = 0$) represents the base Flux-dev generations, which exhibit a narrow interpretation of the prompt; each image displays a similar-looking house in nearly identical environments. In each subsequent row, we show the results of our method with an increasing repulsion scale. As can be seen, higher values of $\eta$ generally yield greater diversity, introducing structural changes like adding a tower to the house, altering the landscape with a lake, or shifting the scene's season.

Fig. 8. Ablation of the repulsion scale $\eta$. We visualize the impact of the repulsion scale on our results. At $\eta = 0$ (top row), the base model exhibits low diversity, producing similar architectural styles and environments across multiple seeds. As $\eta$ increases, our Contextual Space repulsion introduces progressively larger variations, while maintaining high image quality and prompt alignment. Rows, top to bottom: $\eta$ = 0, 5e10, 1e11, 2.5e11, 4e11. Prompt: “A breathtaking view of a distant house in beautiful scenery”.

Repulsion space ablation. To isolate the efficacy of intervening in the Contextual Space ($\hat{f}_T$), we compare our framework against an identical repulsion mechanism applied instead to the image attention tokens ($\hat{f}_I$) within the multimodal blocks (i.e., the dual-stream blocks in Flux). As illustrated in Figure 9, repulsion in the Contextual Space produces a significantly more robust Pareto frontier, yielding superior human preference (ImageReward), distributional fidelity (KID), and prompt alignment (VQAScore). Notably, while the image-token baseline exhibits sharp performance degradation as diversity increases, our method maintains a shallower decline across all metrics. This suggests that the Contextual Space is better suited for navigating semantic diversity while strictly preserving the integrity of samples within the learned conditional manifold.

Figure 10 provides qualitative examples. As can be seen, applying repulsion in the image token space ($\hat{f}_I$) often results in stagnant layouts due to its spatial rigidity; this forces the repulsion to artificially promote diversity by modifying local textures, leading to artifacts such as the sea blending unnaturally into the road in the “street” example. In contrast, intervening in the Contextual Space ($\hat{f}_T$) tends to promote varied compositions while maintaining alignment and quality.

Fig. 9. Ablation of Repulsion Space. Pareto frontiers comparing repulsion applied to text attention tokens (Contextual Space, $\hat{f}_T$) versus image attention tokens ($\hat{f}_I$) within the Flux-dev architecture. We evaluate the trade-off between semantic diversity (Vendi Score) and three performance axes: (Left) Human Preference [ImageReward ↑], (Middle) Prompt Alignment [VQAScore ↑], and (Right) Distributional Fidelity [KID ↓]. Our method (red) achieves a superior frontier across all metrics.

Fig. 10. Qualitative Ablation of Repulsion Space. For each prompt, we compare repulsion applied in the image attention space (Image) versus our Contextual Space (Contextual). While image-space repulsion is limited by spatial rigidity, our method achieves more varied compositions. Prompts: “Two pieces of bread with a leafy green on top of it”; “A city street scene with a green bus coming up a street, with ocean”.

6 Conclusions

At a high level, this work highlights the Contextual Space in Diffusion Transformers as a particularly effective place to intervene when aiming for diversity. The Contextual Space sits between text and image: the representations already encode rich semantic intent shaped by the emerging image, yet they are not spatially locked in. Unlike image latents, this space is not tied to a spatial grid, so samples can be pushed apart semantically without tearing geometry or introducing visual artifacts. At the same time, unlike early text embeddings, it is structurally informed, meaning that interventions meaningfully influence what the model actually generates. Applying on-the-fly repulsion in this space allows diversity to be increased in a controlled way, without sacrificing visual quality or relying on heavy optimization with significant computational cost. More broadly, this points to the importance of intervening at the right representational level, where decisions are still flexible, but already grounded in the image being formed.

Limitations. Contextual repulsion increases diversity but does not provide direct control over which attributes will vary, and may sometimes favor coarse semantic changes over fine, user-specified ones. In addition, the intervention is focused on early to mid stages of generation; how to best coordinate it with later stages, or combine it with other control mechanisms, remains an open question.

Future directions. An interesting direction for future work is to investigate whether a user-provided textual cue, such as “color” or “size”, can be used to guide the repulsion along a specific semantic direction in the Contextual Space. Instead of encouraging diversity in an unconstrained manner, the idea would be to bias the repulsive forces so that samples spread primarily along attributes associated with the given word. This could enable a more controlled and interpretable form of diversity, where variation is focused on selected semantic aspects while other parts of the generation remain stable.

Acknowledgments

We would like to thank Or Patashnik, Yuval Alaluf, Nir Goren, Maya Vishnevsky, Sara Dorfman, Shelly Golan, Saar Huberman, and Jackson Wang for their early feedback and insightful discussions.
We also thank the anonymous reviewers for their thorough and constructive comments, which helped improve this work.

References

Reyhane Askari Hemmat, Melissa Hall, Alicia Sun, Candace Ross, Michal Drozdzal, and Adriana Romero-Soriano. 2024. Improving geo-diversity of generated images with contextualized vendi score guidance. In European Conference on Computer Vision. Springer, 213–229.
Omri Avrahami, Or Patashnik, Ohad Fried, Egor Nemchinov, Kfir Aberman, Dani Lischinski, and Daniel Cohen-Or. 2025. Stable Flow: Vital Layers for Training-Free Image Editing. In 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). IEEE, 7877–7888. doi:10.1109/cvpr52734.2025.00738
Yogesh Balaji, Seungjun Nah, Xun Huang, Arash Vahdat, Jiaming Song, Qinsheng Zhang, Karsten Kreis, Miika Aittala, Timo Aila, Samuli Laine, Bryan Catanzaro, Tero Karras, and Ming-Yu Liu. 2023. eDiff-I: Text-to-Image Diffusion Models with an Ensemble of Expert Denoisers. arXiv:2211.01324 [cs.CV] https://arxiv.org/abs/2211.01324
Mikołaj Bińkowski, Danica J Sutherland, Michael Arbel, and Arthur Gretton. 2018. Demystifying mmd gans. arXiv preprint arXiv:1801.01401 (2018).
Yu Cao, Zengqun Zhao, Ioannis Patras, and Shaogang Gong. 2025. Temporal Score Analysis for Understanding and Correcting Diffusion Artifacts. arXiv:2503.16218 [cs.CV] https://arxiv.org/abs/2503.16218
Gabriele Corso, Yilun Xu, Valentin De Bortoli, Regina Barzilay, and Tommi Jaakkola. 2023. Particle guidance: non-iid diverse sampling with diffusion models. arXiv preprint arXiv:2310.13102 (2023).
Omer Dahary, Yehonathan Cohen, Or Patashnik, Kfir Aberman, and Daniel Cohen-Or. 2025. Be Decisive: Noise-Induced Layouts for Multi-Subject Generation. In Proceedings of the Special Interest Group on Computer Graphics and Interactive Techniques Conference Conference Papers. 1–12.
Omer Dahary, Or Patashnik, Kfir Aberman, and Daniel Cohen-Or. 2024. Be yourself: Bounded attention for multi-subject text-to-image generation. In European Conference on Computer Vision. Springer, 432–448.
Yusuf Dalva, Kavana Venkatesh, and Pinar Yanardag. 2024. FluxSpace: Disentangled Semantic Editing in Rectified Flow Transformers. arXiv:2412.09611 [cs.CV] https://arxiv.org/abs/2412.09611
Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, et al. 2024. Scaling rectified flow transformers for high-resolution image synthesis. In Forty-first international conference on machine learning.
Dan Friedman and Adji Bousso Dieng. 2022. The vendi score: A diversity evaluation metric for machine learning. arXiv preprint arXiv:2210.02410 (2022).
Daniel Garibi, Shahar Yadin, Roni Paiss, Omer Tov, Shiran Zada, Ariel Ephrat, Tomer Michaeli, Inbar Mosseri, and Tali Dekel. 2025. TokenVerse: Versatile Multi-concept Personalization in Token Modulation Space. arXiv:2501.12224 [cs.CV] https://arxiv.org/abs/2501.12224
Alec Helbling, Tuna Han Salih Meral, Ben Hoover, Pinar Yanardag, and Duen Horng Chau. 2025. Conceptattention: Diffusion transformers learn highly interpretable features. arXiv preprint arXiv:2502.04320 (2025).
Jonathan Ho and Tim Salimans. 2022. Classifier-free diffusion guidance. arXiv preprint arXiv:2207.12598 (2022).
Saar Huberman, Or Patashnik, Omer Dahary, Ron Mokady, and Daniel Cohen-Or. 2025. Image Generation from Contextually-Contradictory Prompts. arXiv preprint arXiv:2506.01929 (2025).
Mohammad Jalali, LEI Haoyu, Amin Gohari, and Farzan Farnia. 2025. SPARKE: Scalable Prompt-Aware Diversity and Novelty Guidance in Diffusion Models via RKE Score. In The Thirty-ninth Annual Conference on Neural Information Processing Systems.
Ronen Kamenetsky, Sara Dorfman, Daniel Garibi, Roni Paiss, Or Patashnik, and Daniel Cohen-Or. 2025. SAEdit: Token-level control for continuous image editing via Sparse AutoEncoder. arXiv:2510.05081 [cs.GR] https://arxiv.org/abs/2510.05081
Byungjun Kim, Soobin Um, and Jong Chul Ye. 2025. Diverse Text-to-Image Generation via Contrastive Noise Optimization. arXiv preprint arXiv:2510.03813 (2025).
Weijie Kong, Qi Tian, Zijian Zhang, Rox Min, Zuozhuo Dai, Jin Zhou, Jiangfeng Xiong, Xin Li, Bo Wu, Jianwei Zhang, Kathrina Wu, Qin Lin, Junkun Yuan, Yanxin Long, Aladdin Wang, Andong Wang, Changlin Li, Duojun Huang, Fang Yang, Hao Tan, Hongmei Wang, Jacob Song, Jiawang Bai, Jianbing Wu, Jinbao Xue, Joey Wang, Kai Wang, Mengyang Liu, Pengyu Li, Shuai Li, Weiyan Wang, Wenqing Yu, Xinchi Deng, Yang Li, Yi Chen, Yutao Cui, Yuanbo Peng, Zhentao Yu, Zhiyu He, Zhiyong Xu, Zixiang Zhou, Zunnan Xu, Yangyu Tao, Qinglin Lu, Songtao Liu, Dax Zhou, Hongfa Wang, Yong Yang, Di Wang, Yuhong Liu, Jie Jiang, and Caesar Zhong. 2025. HunyuanVideo: A Systematic Framework For Large Video Generative Models. arXiv:2412.03603 [cs.CV]
Tuomas Kynkäänniemi, Miika Aittala, Tero Karras, Samuli Laine, Timo Aila, and Jaakko Lehtinen. 2024. Applying guidance in a limited interval improves sample and distribution quality in diffusion models. Advances in Neural Information Processing Systems 37 (2024), 122458–122483.
Black Forest Labs. 2024. FLUX. https://github.com/black-forest-labs/flux.
Black Forest Labs, Stephen Batifol, Andreas Blattmann, Frederic Boesel, Saksham Consul, Cyril Diagne, Tim Dockhorn, Jack English, Zion English, Patrick Esser, et al. 2025. FLUX.1 Kontext: Flow Matching for In-Context Image Generation and Editing in Latent Space. arXiv preprint arXiv:2506.15742 (2025).
Zhiqiu Lin, Deepak Pathak, Baiqi Li, Jiayao Li, Xide Xia, Graham Neubig, Pengchuan Zhang, and Deva Ramanan. 2024. Evaluating text-to-visual generation with image-to-text generation. In European Conference on Computer Vision. Springer, 366–384.
Mashrur M Morshed and Vishnu Boddeti. 2025. DiverseFlow: Sample-Efficient Diverse Mode Coverage in Flows. In Proceedings of the Computer Vision and Pattern Recognition Conference. 23303–23312.
Gaurav Parmar, Or Patashnik, Daniil Ostashev, Kuan-Chieh Wang, Kfir Aberman, Srinivasa Narasimhan, and Jun-Yan Zhu. 2025. Scaling Group Inference for Diverse and High-Quality Generation. arXiv preprint arXiv:2508.15773 (2025).
Or Patashnik, Daniel Garibi, Idan Azuri, Hadar Averbuch-Elor, and Daniel Cohen-Or. 2023. Localizing Object-level Shape Variations with Text-to-Image Diffusion Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV).
Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. 2023. Sdxl: Improving latent diffusion models for high-resolution image synthesis. arXiv preprint arXiv:2307.01952 (2023).
Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. 2022. Hierarchical Text-Conditional Image Generation with CLIP Latents. arXiv:2204.06125 [cs.CV]
Anton Razzhigaev, Arseniy Shakhmatov, Anastasia Maltseva, Vladimir Arkhipkin, Igor Pavlov, Ilya Ryabov, Angelina Kuts, Alexander Panchenko, Andrey Kuznetsov, and Denis Dimitrov. 2023. Kandinsky: an Improved Text-to-Image Synthesis with Image Prior and Latent Diffusion. arXiv:2310.03502 [cs.CV] https://arxiv.org/abs/2310.03502
Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. 2022. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 10684–10695.
Seyedmorteza Sadat, Jakob Buhmann, Derek Bradley, Otmar Hilliges, and Romann M Weber. 2023. CADS: Unleashing the diversity of diffusion models through condition-annealed sampling. arXiv preprint arXiv:2310.17347 (2023).
Chitwan Saharia, William Chan, Saurabh Saxena, Lala Li, Jay Whang, Emily Denton, Seyed Kamyar Seyed Ghasemipour, Burcu Karagol Ayan, S. Sara Mahdavi, Rapha Gontijo Lopes, Tim Salimans, Jonathan Ho, David J Fleet, and Mohammad Norouzi. 2022. Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding. arXiv:2205.11487 [cs.CV]
Christian Szegedy, Sergey Ioffe, Vincent Vanhoucke, and Alexander Alemi. 2017. Inception-v4, inception-resnet and the impact of residual connections on learning. In Proceedings of the AAAI conference on artificial intelligence, Vol. 31.
Zhenxiong Tan, Songhua Liu, Xingyi Yang, Qiaochu Xue, and Xinchao Wang. 2025. OminiControl: Minimal and Universal Control for Diffusion Transformer. arXiv:2411.15098 [cs.CV]
Revant Teotia, Candace Ross, Karen Ullrich, Sumit Chopra, Adriana Romero-Soriano, Melissa Hall, and Matthew Muckley. 2025. DIMCIM: A Quantitative Evaluation Framework for Default-mode Diversity and Generalization in Text-to-Image Generative Models. In Proceedings of the IEEE/CVF International Conference on Computer Vision. 16431–16440.
Soobin Um and Jong Chul Ye. 2025. Minority-Focused Text-to-Image Generation via Prompt Optimization. In Proceedings of the Computer Vision and Pattern Recognition Conference. 20926–20936.
Jiazheng Xu, Xiao Liu, Yuchen Wu, Yuxuan Tong, Qinkai Li, Ming Ding, Jie Tang, and Yuxiao Dong. 2023. Imagereward: Learning and evaluating human preferences for text-to-image generation. Advances in Neural Information Processing Systems 36 (2023), 15903–15935.
Shai Yehezkel, Omer Dahary, Andrey Voynov, and Daniel Cohen-Or. 2025. Navigating with Annealing Guidance Scale in Diffusion Space. arXiv preprint (2025).
Jiahui Yu, Yuanzhong Xu, Jing Yu Koh, Thang Luong, Gunjan Baid, Zirui Wang, Vijay Vasudevan, Alexander Ku, Yinfei Yang, Burcu Karagol Ayan, Ben Hutchinson, Wei Han, Zarana Parekh, Xin Li, Han Zhang, Jason Baldridge, and Yonghui Wu. 2022. Scaling Autoregressive Models for Content-Rich Text-to-Image Generation. arXiv:2206.10789 [cs.CV]
Arman Zarei, Samyadeep Basu, Mobina Pournemat, Sayan Nag, Ryan Rossi, and Soheil Feizi. 2025. SliderEdit: Continuous Image Editing with Fine-Grained Instruction Control. arXiv:2511.09715 [cs.CV]

Fig. 11. Qualitative results. For each prompt, we compare the base model results (top) to our results (bottom). Each batch of images was generated using the same random seed to ensure a fair comparison. Additional results are provided in Appendix B. Prompts: “A jazz musician playing saxophone in a dimly lit club”; “An artist painting a landscape in an outdoor studio”; “A scientist in a modern laboratory”; “A crowd cheering at a sports stadium”.

Fig. 12. Qualitative comparison of our Contextual Repulsion approach against baseline methods (Ours, SGI, CADS, SPARKE, PG). Each quadrant displays four generated samples per method for a given prompt. Prompts: “A wolf pack howling at the moon”; “A phoenix rising from ashes”; “A camera with old photographs”; “A red London double-decker bus”.

Appendix

A Implementation Details

All experiments were conducted on an NVIDIA A100 GPU. Quantitative metrics and runtime evaluations were performed by generating groups of 4 images. Diversity metrics were calculated within each 4-image group and subsequently averaged across all groups.

The number of denoising steps was chosen based on the model architecture: 4 steps for SD3.5-Turbo [Esser et al. 2024], 20 steps for Flux-dev [Labs et al. 2025], and 28 steps for SD3.5-Large [Esser et al. 2024].
The guidance scale was set to 3.5 for both Flux-dev and SD3.5-Large, and 0.0 for SD3.5-Turbo. For our proposed method, we employed $M = 100$ gradient steps for the Stable Diffusion models and $M = 50$ for Flux-dev.

For all models, we apply repulsion to the text tokens in the multimodal attention blocks (dual-stream in Flux). For SD3.5-Large, which is not distilled for classifier-free guidance, the repulsion is applied to both the conditional and unconditional branches. For Flux-dev and Flux-Kontext, we additionally apply it to all tokens in the later single-stream blocks, which are specific to these architectures.

The repulsion scale $\eta$ was used to balance the trade-off between diversity and fidelity, with the intervention disabled after a fixed number of timesteps, denoted by $\tau$. The range of $\eta$ was tuned per model: $\eta \in [2.5 \cdot 10^{7}, 5 \cdot 10^{8}]$ with $\tau = 4$ for SD3.5-Large; $\eta \in [5 \cdot 10^{6}, 1 \cdot 10^{8}]$ with $\tau = 1$ for SD3.5-Turbo; and $\eta \in [2.5 \cdot 10^{8}, 5 \cdot 10^{10}]$ with $\tau = 1$ for Flux-dev. For simplicity, $\eta$ remained constant throughout the intervention window.

We used official implementations for all baseline methods, where available. For baselines without compatible official implementations, we re-implemented them and tuned their hyperparameters to ensure competitive diversity levels. In addition to the shared guidance and step configurations, the following hyperparameters were used for the baselines:

• PG [Corso et al. 2023]: Repulsion scales were varied between 10 and 100.
• CADS [Sadat et al. 2023]: Scales were varied between 0.1 and 0.7, with $\tau_1 = 0.3$, $\tau_2 = 0.8$, and $\psi = 1$.
• SPARKE [Jalali et al. 2025]: Scales were selected between 0.02 and 0.14, depending on the model.
• SGI [Parmar et al. 2025]: Evaluated with initial candidate groups of $N \in \{8, 16, 32, 64\}$, using default hyperparameters from the official implementation. All qualitative comparisons and the user study results reported here were conducted with $N = 64$.
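For reference, the per-model settings listed in this appendix can be collected into a small configuration table. The sketch below is only an illustration: the names `RepulsionConfig`, `CONFIGS`, and `repulsion_active` are hypothetical and do not come from our code, and the gating rule (repulsion active for zero-based step indices below $\tau$) is one reading of "disabled after a fixed number of timesteps", stated here as an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RepulsionConfig:
    """Hypothetical container for the per-model settings in Appendix A."""
    denoising_steps: int
    guidance_scale: float
    gradient_steps: int   # M in the text
    eta_range: tuple      # (min, max) of the repulsion scale eta
    tau: int              # number of timesteps with repulsion enabled


# Values copied from Appendix A; the dictionary keys are illustrative labels.
CONFIGS = {
    "SD3.5-Large": RepulsionConfig(28, 3.5, 100, (2.5e7, 5e8), 4),
    "SD3.5-Turbo": RepulsionConfig(4, 0.0, 100, (5e6, 1e8), 1),
    "Flux-dev": RepulsionConfig(20, 3.5, 50, (2.5e8, 5e10), 1),
}


def repulsion_active(cfg, step):
    """Assumption: repulsion runs on zero-based steps 0 .. tau - 1, then stops."""
    return 0 <= step < cfg.tau


# Fraction of the denoising trajectory during which repulsion is enabled.
active_fraction = {name: cfg.tau / cfg.denoising_steps for name, cfg in CONFIGS.items()}
```

Under this reading, the intervention covers 4 of 28 steps for SD3.5-Large, 1 of 4 steps for SD3.5-Turbo, and 1 of 20 steps for Flux-dev.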
B Additional Qualitative Results

We present additional qualitative results of our method on SD3.5-Large (Figure 15), SD3.5-Turbo (Figure 16) and Flux-dev (Figures 17, 18, and 19).

C Additional Quantitative Results

Additional comparisons. We present additional quantitative comparisons on SD3.5-Large (Figure 13) and SD3.5-Turbo (Figure 14). Our method achieves competitive quality-diversity trade-offs at a fraction of the computational cost required by SGI. Detailed metrics across all evaluated models are provided in Tables 2, 3, and 4.

Fig. 13. Quantitative evaluation on SD3.5-Large.

Fig. 14. Quantitative evaluation on SD3.5-Turbo.

Table 2. Detailed metrics for the Flux-dev Pareto frontiers in Figure 6.

| Method | Setting | Vendi (↑) | IR (↑) | VQA (↑) | KID $\times 10^{-4}$ (↓) |
|---|---|---|---|---|---|
| Base Model | | 1.780 | 1.075 | 0.883 | |
| Ours | $\eta = 2.5 \cdot 10^{8}$ | 1.810 | 1.102 | 0.884 | 0.066 |
| Ours | $\eta = 5 \cdot 10^{8}$ | 1.831 | 1.092 | 0.883 | 0.103 |
| Ours | $\eta = 5 \cdot 10^{9}$ | 1.869 | 1.075 | 0.883 | 0.157 |
| Ours | $\eta = 2.5 \cdot 10^{10}$ | 1.898 | 1.070 | 0.880 | 0.172 |
| CADS | $s = 10^{-20}$ | 1.908 | 0.377 | 0.719 | 0.558 |
| CADS | $s = 10^{-18}$ | 1.908 | 0.377 | 0.719 | 0.558 |
| CADS | $s = 10^{-12}$ | 1.910 | 0.303 | 0.699 | 0.530 |
| CADS | $s = 10^{-11}$ | 1.923 | 0.208 | 0.674 | 0.588 |
| PG | $s = 1$ | 1.753 | 0.991 | 0.871 | 0.555 |
| PG | $s = 80$ | 1.759 | 1.018 | 0.864 | 0.675 |
| PG | $s = 150$ | 1.787 | 0.846 | 0.848 | 2.650 |
| SGI | 8 candidates | 1.778 | 1.152 | 0.875 | 0.440 |
| SGI | 16 candidates | 1.829 | 1.085 | 0.873 | 0.461 |
| SGI | 32 candidates | 1.860 | 1.063 | 0.872 | 0.289 |
| SGI | 64 candidates | 1.916 | 1.042 | 0.872 | 0.297 |
| SPARKE | $s = 0.01$ | 1.790 | 1.094 | 0.884 | 0.057 |
| SPARKE | $s = 0.02$ | 1.850 | 1.067 | 0.873 | 1.079 |

User study table. We provide the full results of our user study in Table 5.

Evaluation on detailed prompts. While diversity is typically easier to achieve when prompts leave significant room for interpretation, we evaluate our method on the 100 longest prompts from the “Complex” and “Fine-Grained Detail” categories of PartiPrompts [Yu et al. 2022] using Flux-dev. Even under these highly constrained conditions, our method increases diversity and human preference scores with a negligible impact on prompt alignment.
Specifically, we observe an increase in Vendi score (+0.08) and ImageReward (+0.05), while VQAScore remains nearly constant (−0.01). These results demonstrate that intervening in the Contextual Space effectively identifies and navigates remaining semantic degrees of freedom, even in the presence of extensive conditioning.

Fig. 15. Qualitative results on SD3.5-Large (base model vs. ours). Prompts: “An abandoned carnival”; “A couple stargazing”; “Elephants at a waterhole”; “A climber on a cliff”.

Fig. 16. Qualitative results on SD3.5-Turbo (base model vs. ours). Prompts: “A dragon guarding its treasure”; “A picnic under cherry blossoms”; “A french bakery at dawn”; “A snowy village at night”.

Fig. 17. Additional qualitative results on Flux-dev. Each batch of images was generated using the same random seed to ensure a fair comparison. Prompts: “A family enjoying a traditional meal together at home”; “A beautiful Japanese garden with a koi pond and cherry blossoms”; “A jazz singer performing on stage with a vintage microphone”; “A bustling street market in Morocco with colorful spices”.

Fig. 18. Additional qualitative results on Flux-dev. Each batch of images was generated using the same random seed to ensure a fair comparison. Prompts: “A group of students studying together in a university library”; “A futuristic warrior standing on the edge of a neon-lit cliff”; “A wedding couple sharing a romantic moment”; “A chef preparing a gourmet meal in a professional kitchen”.

Fig. 19. Additional qualitative results on Flux-dev. Each batch of images was generated using the same random seed to ensure a fair comparison. Prompts: “An astronaut exploring the terrain of an alien planet”; “An astronaut floating in space with Earth in the background”; “A classic bicycle leaned against an old brick wall”; “A delicious breakfast spread served on a wooden table”.

Table 3. Detailed metrics for the SD3.5-Large Pareto frontiers in Figure 13.

| Method | Setting | Vendi (↑) | IR (↑) | VQA (↑) | KID $\times 10^{-4}$ (↓) |
|---|---|---|---|---|---|
| Base Model | | 1.819 | 1.051 | 0.905 | |
| Ours | $\eta = 2.5 \cdot 10^{4}$ | 1.851 | 1.018 | 0.904 | 0.619 |
| Ours | $\eta = 2.5 \cdot 10^{6}$ | 1.878 | 1.012 | 0.904 | 0.627 |
| Ours | $\eta = 2.5 \cdot 10^{7}$ | 1.941 | 0.988 | 0.900 | 0.625 |
| Ours | $\eta = 2.5 \cdot 10^{8}$ | 1.980 | 0.940 | 0.890 | 0.445 |
| CADS | $s = 10^{-12}$ | 2.004 | 0.131 | 0.717 | 0.941 |
| CADS | $s = 10^{-10}$ | 2.025 | 0.051 | 0.692 | 0.953 |
| CADS | $s = 10^{-8}$ | 2.018 | 0.066 | 0.692 | 0.953 |
| PG | $s = 1$ | 1.900 | 0.783 | 0.878 | 1.521 |
| PG | $s = 60$ | 1.913 | 0.707 | 0.868 | 4.053 |
| PG | $s = 80$ | 1.924 | 0.632 | 0.861 | 5.930 |
| SGI | 8 candidates | 1.828 | 1.050 | 0.903 | 0.465 |
| SGI | 16 candidates | 1.862 | 1.025 | 0.902 | 0.455 |
| SGI | 32 candidates | 1.883 | 1.030 | 0.902 | 0.429 |
| SGI | 64 candidates | 1.915 | 1.004 | 0.901 | 0.421 |
| SPARKE | $s = 0.01$ | 1.860 | 1.027 | 0.902 | 0.362 |
| SPARKE | $s = 0.02$ | 1.887 | 0.999 | 0.901 | 0.770 |
| SPARKE | $s = 0.03$ | 1.912 | 0.925 | 0.899 | 1.393 |
| SPARKE | $s = 0.04$ | 1.989 | 0.735 | 0.882 | 2.918 |

Table 4. Detailed metrics for the SD3.5-Turbo Pareto frontiers in Figure 14.

| Method | Setting | Vendi (↑) | IR (↑) | VQA (↑) | KID $\times 10^{-4}$ (↓) |
|---|---|---|---|---|---|
| Base Model | | 1.724 | 0.978 | 0.891 | |
| Ours | $\eta = 5 \cdot 10^{6}$ | 1.819 | 0.914 | 0.887 | 1.796 |
| Ours | $\eta = 2.5 \cdot 10^{7}$ | 1.879 | 0.899 | 0.884 | 1.786 |
| Ours | $\eta = 5 \cdot 10^{7}$ | 1.914 | 0.864 | 0.876 | 1.897 |
| Ours | $\eta = 5 \cdot 10^{8}$ | 2.079 | 0.562 | 0.822 | 1.914 |
| CADS | $s = 0.1$ | 1.808 | 0.551 | 0.772 | 0.158 |
| CADS | $s = 0.5$ | 1.853 | 0.383 | 0.731 | 0.526 |
| CADS | $s = 0.8$ | 1.911 | 0.180 | 0.683 | 1.319 |
| CADS | $s = 0.9$ | 1.958 | 0.127 | 0.673 | 1.348 |
| PG | $s = 2$ | 1.765 | 0.915 | 0.884 | 0.881 |
| PG | $s = 10$ | 1.857 | 0.638 | 0.859 | 2.285 |
| PG | $s = 40$ | 1.926 | 0.221 | 0.821 | 14.128 |
| SGI | 4 candidates | 1.707 | 0.962 | 0.888 | 0.078 |
| SGI | 8 candidates | 1.775 | 0.944 | 0.889 | 0.079 |
| SGI | 16 candidates | 1.829 | 0.933 | 0.883 | 0.005 |
| SGI | 32 candidates | 1.853 | 0.923 | 0.884 | 0.028 |
| SGI | 64 candidates | 1.879 | 0.913 | 0.886 | 0.120 |
| SPARKE | $s = 0.04$ | 1.728 | 1.011 | 0.890 | 0.206 |
| SPARKE | $s = 0.08$ | 1.763 | 0.928 | 0.885 | 0.744 |
| SPARKE | $s = 0.1$ | 1.812 | 0.837 | 0.871 | 1.219 |
| SPARKE | $s = 0.12$ | 1.869 | 0.629 | 0.850 | 2.742 |
| SPARKE | $s = 0.14$ | 1.970 | 0.231 | 0.803 | 7.037 |

Table 5. User study results comparing our method against five competing approaches across four evaluation metrics. Values show the percentage of times users preferred our method (Ours), the competitor (Comp.), or rated both equally (Tie). Results are aggregated from 450 pairwise comparisons per metric.

| Metric | Choice | Base Model | CADS | SGI | PG | SPARKE | Average |
|---|---|---|---|---|---|---|---|
| Diversity | Ours | 71.6 | 52.2 | 56.7 | 80.0 | 34.4 | 61.1 |
| Diversity | Comp. | 12.9 | 30.0 | 11.1 | 14.4 | 53.1 | 22.0 |
| Diversity | Tie | 15.5 | 17.8 | 32.2 | 5.6 | 12.5 | 16.9 |
| Quality | Ours | 49.1 | 67.8 | 15.6 | 82.2 | 85.9 | 58.0 |
| Quality | Comp. | 6.9 | 11.1 | 31.1 | 12.2 | 3.1 | 13.1 |
| Quality | Tie | 44.0 | 21.1 | 53.3 | 5.6 | 10.9 | 28.9 |
| Adherence | Ours | 25.0 | 74.4 | 13.3 | 67.8 | 79.7 | 48.9 |
| Adherence | Comp. | 15.5 | 11.1 | 22.2 | 13.3 | 4.7 | 14.0 |
| Adherence | Tie | 59.5 | 14.4 | 64.4 | 18.9 | 15.6 | 37.1 |
| Overall | Ours | 57.8 | 74.4 | 31.1 | 83.3 | 87.5 | 65.1 |
| Overall | Comp. | 13.8 | 15.6 | 27.8 | 10.0 | 9.4 | 15.6 |
| Overall | Tie | 28.4 | 10.0 | 41.1 | 6.7 | 3.1 | 19.3 |
| All Metrics | Ours | 50.9 | 67.2 | 29.2 | 78.3 | 71.9 | 58.3 |
| All Metrics | Comp. | 12.3 | 16.9 | 23.1 | 12.5 | 17.6 | 16.2 |
| All Metrics | Tie | 36.9 | 15.8 | 47.8 | 9.2 | 10.5 | 25.6 |

D Additional Ablation Studies

Batch size ablation. We examine the scalability of our method by evaluating performance across varying batch sizes on SD3.5-Turbo. To ensure a fair comparison across different sample counts, we report the average Vendi score per pair, as the raw Vendi score is inherently bounded by the batch size. As shown in Table 6, our method exhibits a consistent positive trend across all evaluated metrics as the batch size increases. This suggests that the repulsion mechanism scales effectively and benefits from the denser representation of the conditional manifold provided by larger batches.

Table 6. Scalability across batch sizes. Quantitative results on SD3.5-Turbo for varying batch sizes. We report the average Vendi score per pair to normalize for batch size constraints.

| Batch size | Vendi | Vendi (avg. pair) | ImageReward |
|---|---|---|---|
| 4 | 1.819 | 1.393 | 0.914 |
| 8 | 2.295 | 1.401 | 0.923 |
| 16 | 2.768 | 1.404 | 0.928 |

Timestep ablation. We analyze the impact of the repulsion window across the diffusion trajectory by applying the intervention within specific timestep intervals while keeping all other hyperparameters constant. Table 7 summarizes these results. For both SD3.5-Large and SD3.5-Turbo, applying repulsion later in the trajectory typically improves ImageReward at the expense of diversity. Conversely, maintaining the intervention throughout the entire trajectory yields the highest diversity but results in a more pronounced decline in fidelity and alignment scores.

Table 7. Effect of the timestep interval on diversity and human preference. We evaluate different intervention windows during the diffusion trajectory for SD3.5-Large and SD3.5-Turbo.

| Model | Timestep interval | Vendi | ImageReward |
|---|---|---|---|
| SD3.5-Turbo | [0, 1/4] | 1.764 | 0.829 |
| SD3.5-Turbo | [1/4, 2/4] | 1.776 | 0.811 |
| SD3.5-Turbo | [2/4, 3/4] | 1.809 | 0.745 |
| SD3.5-Turbo | [3/4, 1] | 1.988 | 0.660 |
| SD3.5-Turbo | [0, 1] | 2.064 | 0.501 |
| SD3.5-Large | [0, 1/7] | 1.849 | 0.942 |
| SD3.5-Large | [1/7, 2/7] | 1.854 | 0.942 |
| SD3.5-Large | [2/7, 3/7] | 1.849 | 0.946 |
| SD3.5-Large | [3/7, 4/7] | 1.847 | 0.932 |
| SD3.5-Large | [4/7, 5/7] | 1.848 | 0.954 |
| SD3.5-Large | [5/7, 6/7] | 1.900 | 0.919 |
| SD3.5-Large | [6/7, 1] | 1.960 | 0.852 |
| SD3.5-Large | [0, 1] | 2.135 | 0.535 |

Transformer block ablation. We further investigate how the selection of transformer blocks influences performance by restricting the intervention to the first, middle, or last third of the architecture's blocks. As reported in Table 8, applying repulsion to the middle blocks yields the strongest diversity among the partitioned groups, while preserving high preference scores for both SD3.5-Large and SD3.5-Turbo.

Table 8. Performance across different transformer block groups. Results are reported for interventions applied to the first, middle, or last third of the blocks for SD3.5-Large and SD3.5-Turbo.

| Block group | SD3.5-Turbo Vendi | SD3.5-Turbo ImageReward | SD3.5-Large Vendi | SD3.5-Large ImageReward |
|---|---|---|---|---|
| First third | 1.878 | 0.774 | 1.887 | 0.895 |
| Middle third | 1.947 | 0.844 | 1.947 | 0.902 |
| Last third | 1.765 | 0.913 | 1.835 | 0.985 |
| All blocks | 1.764 | 0.829 | 1.960 | 0.852 |
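The block grouping used in the transformer block ablation can be illustrated with a short sketch. This is an assumption-laden example rather than our implementation: the helper name `block_thirds` is hypothetical, the block counts passed to it are placeholders, and the rounding rule used when the number of blocks is not divisible by three is one arbitrary choice among several.

```python
def block_thirds(num_blocks):
    """Split block indices 0 .. num_blocks - 1 into first, middle, and last thirds.

    Assumption: boundaries are placed at round(i * num_blocks / 3), so group
    sizes differ by at most one when num_blocks is not divisible by three.
    """
    bounds = [round(i * num_blocks / 3) for i in range(4)]
    names = ("first", "middle", "last")
    return {
        name: list(range(bounds[i], bounds[i + 1]))
        for i, name in enumerate(names)
    }


# Placeholder block count, chosen only for illustration.
groups = block_thirds(24)
middle_blocks = groups["middle"]  # indices where repulsion would be enabled
```

With a placeholder count of 24 blocks, the middle third covers indices 8 through 15; restricting the intervention to a group then amounts to enabling repulsion only when the current block index is in the selected list.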
