See it to Place it: Evolving Macro Placements with Vision-Language Models

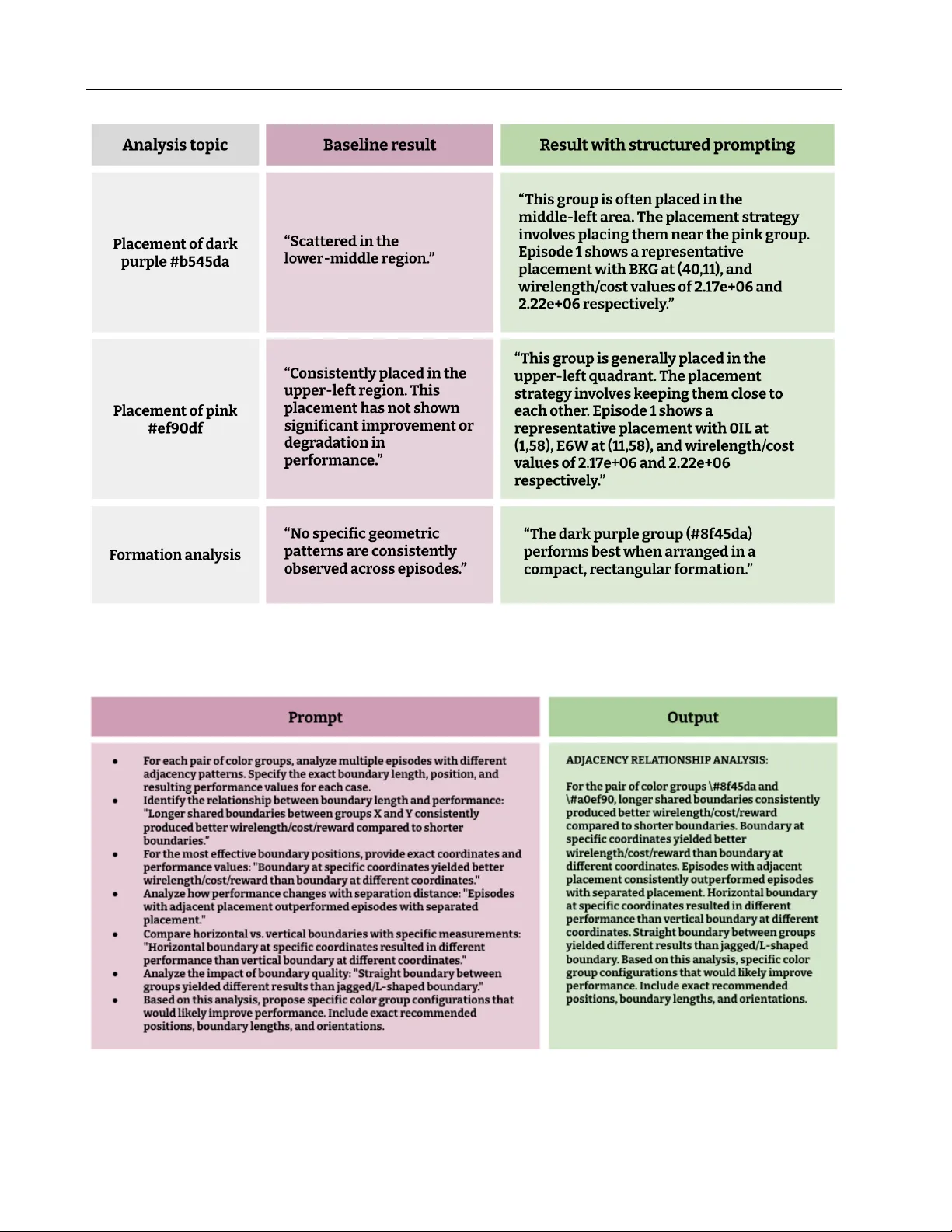

We propose using Vision-Language Models (VLMs) for macro placement in chip floorplanning, a complex optimization task that has recently seen promising advances from machine learning methods. Because human designers rely heavily on spatial rea…

Authors: Ikechukwu Uchendu, Swati Goel, Karly Hou