Constructing Composite Features for Interpretable Music-Tagging

Combining multiple audio features can improve the performance of music tagging, but common deep learning-based feature fusion methods often lack interpretability. To address this problem, we propose a Genetic Programming (GP) pipeline that automatica…

Authors: Chenhao Xue, Weitao Hu, Joyraj Chakraborty

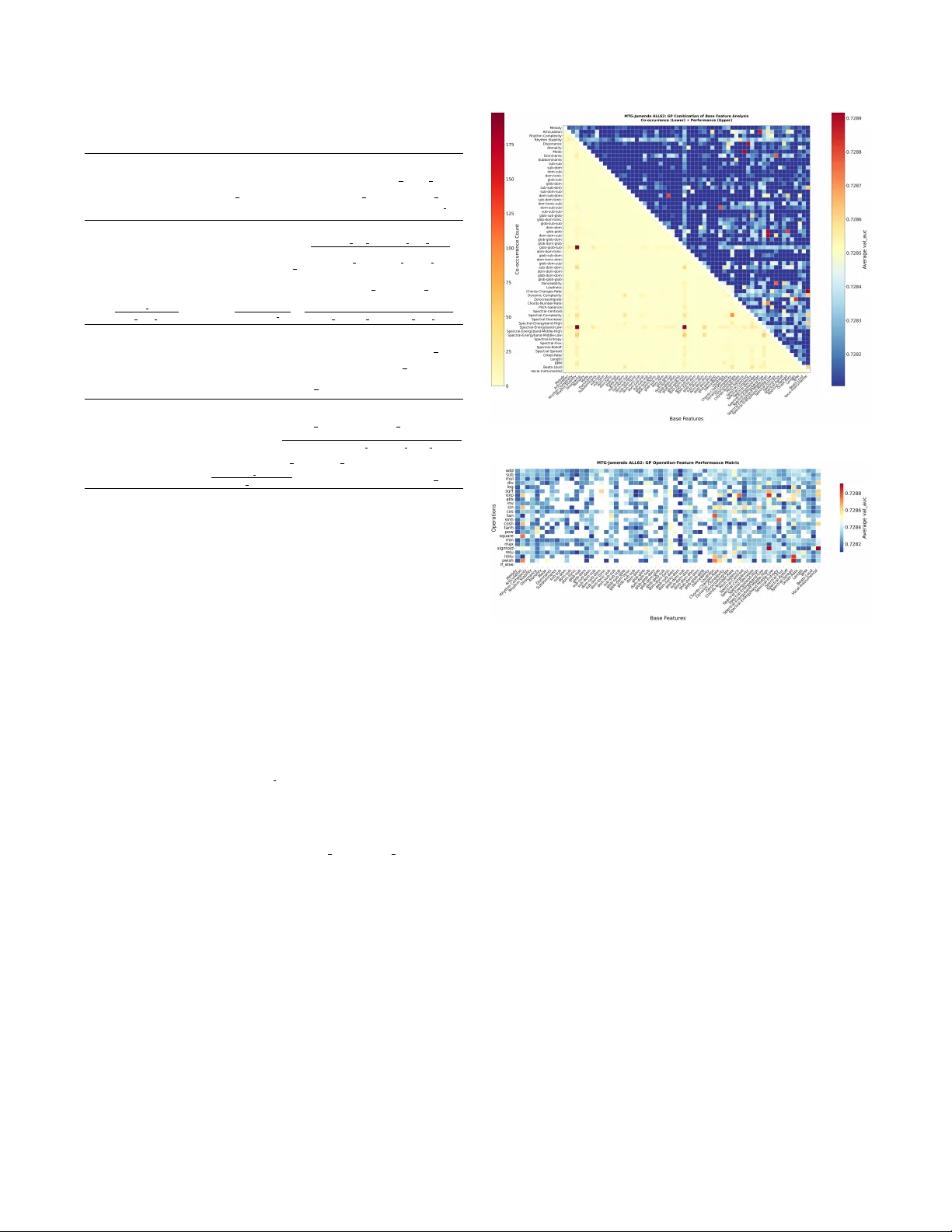

CONSTR UCTING COMPOSITE FEA TURES FOR INTERPRET ABLE MUSIC-T A GGING Chenhao Xue 1 W eitao Hu 2 J oyraj Chakr aborty 1 Zhijin Guo 1 Kang Li 1 T ianyu Shi 3 Martin Reed 4 Nikolaos Thomos 4 1 Uni versity of Oxford 2 Independent Researcher 3 Uni versity of T oronto 4 Uni versity of Esse x ABSTRA CT Combining multiple audio features can improv e the performance of music tagging, but common deep learning-based feature fusion methods often lack interpretability . T o address this problem, we propose a Genetic Programming (GP) pipeline that automatically ev olves composite features by mathematically combining base mu- sic features, thereby capturing synergistic interactions while preserv- ing interpretability . This approach provides representational benefits similar to deep feature fusion without sacrificing interpretability . Ex- periments on the MTG-Jamendo and GTZAN datasets demonstrate consistent improv ements compared to state-of-the-art systems across base feature sets at different abstraction lev els. It should be noted that most of the performance gains are noticed within the first fe w hundred GP ev aluations, indicating that ef fectiv e feature combina- tions can be identified under modest search b udgets. The top e volved expressions include linear , nonlinear , and conditional forms, with various low-comple xity solutions at top performance aligned with parsimony pressure to prefer simpler e xpressions. Analyzing these composite features further re veals which interactions and transfor - mations tend to be beneficial for tagging, offering insights that re- main opaque in black-box deep models. Index T erms — Music tagging; Feature construction; Genetic programming; Interpretability; Music information retriev al. 1. INTRODUCTION Music audio tagging concerns automatically assigning descriptive labels or “tags” (e.g., mood, theme, instrument) to music tracks based on their audio content. This is a fundamental problem in music information retrie val (MIR) because accurate tags enable the ef ficient organization and retrie val of lar ge music collections [1]. For decades, automatic tagging has been approached via handcrafted feature e xtraction follo wed by traditional machine-learning classi- fiers [2, 3, 4, 5]. These engineered features were designed to capture low- and mid-level acoustically and musically significant character- istics (e.g., MFCC for timbre or chroma features for tonality), which A CCEPTED A T THE 2026 IEEE INTERNA TIONAL CONFERENCE ON A COUSTICS, SPEECH, AND SIGN AL PR OCESSING (ICASSP 2026), B ARCELONA, SP AIN, MA Y 4–8, 2026. © 2026 IEEE. PERSON AL USE OF THIS MA TERIAL IS PER- MITTED. PERMISSION FR OM IEEE MUST BE OBT AINED FOR ALL O THER USES, IN ANY CURRENT OR FUTURE MEDIA, INCLUD- ING REPRINTING/REPUBLISHING THIS MA TERIAL FOR ADVER- TISING OR PROMO TIONAL PURPOSES, CREA TING NEW COLLEC- TIVE WORKS, FOR RESALE OR REDISTRIBUTION TO SER VERS OR LISTS, OR REUSE OF ANY COPYRIGHTED COMPONENT OF THIS WORK IN O THER WORKS. The authors thank Puyu W ang for providing music theory v alidation of the ev olved GP expressions. made them inherently interpretable as they correspond to perceptual aspects of music. In recent years, there has been a shift to wards minimal feature engineering and end-to-end deep learning models that learn features directly from audio [6, 7, 8]. These methods significantly enhance the accuracy of music tagging models with ov erwhelming parameters, vast labeled data, and synergy modelling of individual music features (closer to human perception [9, 10]) with explicit or implicit comple x feature fusion [1, 11, 12]. While deep-learning models achiev e state-of-the-art tagging per- formance, their opacity remains a concern, as they cannot easily ex- plain why a certain tag was assigned. This is exacerbated in tasks with subjectiv e or ambiguous ground truth [13]. The subjective na- ture of tags like “happy” or “aggressiv e” means that even ground truth annotations can vary between annotators [13, 14]. A black- box model could latch onto spurious correlations. Without inter - pretability , neither developers nor users can tell if the model is lever - aging unwarranted biases (e.g., associating “sadness” only with slow tempo) [15]. Indeed, a lack of transparency in the tagging decisions makes it difficult to detect dataset biases or flaws in model reason- ing. This is critical in music machine learning (ML) where models often train on limited genres or cultures, and hidden biases may lead to poor generalization or unfair outcomes [16]. These practical considerations call for interpretable music au- dio tagging methods that could approach some advantages of deep learning models, such as the modelling of synergetic effects of music features, which has recei ved limited study in music tasks [17, 18]. In this paper , we introduce a method to automatically construct inter - pretable composite features from individual base features for music tagging using Genetic Programming (GP), which is a form of sym- bolic regression [19, 20]. Our specific contributions are: • we propose a Genetic Programming pipeline that constructs interpretable composite features by combining base music features to improv e tagging and rev eal interaction insights; • we demonstrate that the GP-augmented feature sets consis- tently improve tagging accuracy across two datasets and dif- ferent types of base features, with gains achie ved under mod- est ev aluation budgets; • we analyze the resulting symbolic expressions to identify ef- fectiv e feature interactions and transformations, offering in- terpretability and insight not av ailable in black-box models. The GP-constructed composite features, e xpressions, and code for this paper are made av ailable on GitHub 1 . Fig. 1 . GP pipeline for Composite Feature and Model Construction. 2. METHODS Although deep learning-based music tagging methods achie ve state- of-the-art music-tagging performance, their feature fusion remains opaque, and hence, making it difficult to understand why a tag has been allocated to a piece of music. T raditional handcrafted ap- proaches provide interpretability through feature importance, but cannot systematically discov er complex feature combinations at scale. T o address this challenge, we propose a GP pipeline that ev olves interpretable mathematical combination expressions, pro- viding explicit symbolic representations of feature interactions while enabling automated discovery of effectiv e combinations. As shown in Figure 1, the pipeline consists of three stages: (1) Audio Feature Extraction, (2) GP-Based Composite Feature Construction, and (3) T agging Model T raining. 2.1. A udio Feature Extraction W e employ two feature sets at dif ferent abstraction le vels, follo wing established music information retrie val (MIR) practices that com- bine signal descriptors with perceptual knowledge [5]. Specifically , Signal-level Features (E23): W e extract 23-dimensional audio descriptors using the Essentia library’ s music feature extractor [21]. This base feature set captures signal-level characteristics (e.g., Loud- ness, BPM, Onset Rate, Zero Crossing Rate, Dynamic Complexity , Pitch Salience, and Spectral-Centroid); Low and Mid-Le vel Perceptual Featur es (ALL62): W e use a 62-dimensional feature set from L yberatos et al. ’s study [5]. This set includes: (a) the E23 features described above, (b) 32 ontology- grounded harmonic-function features computed using the Omnizart chord recognition Python library [22] mapped to Functional Har- mony Ontology classes with normalized n -gram frequencies queried via SP ARQL [23], and (c) 7 perceptual features estimated through multi-output regression using a VGG-style Conv olutional Neural Networks (CNN) processing Mel-Frequency Cepstral Coefficients (MFCC) segments deri ved from 15-sec clips. Before further processing, all features are scaled to have zero mean and unit variance. These base feature sets are input for GP- based composite feature construction. 2.2. GP-Based Composite Featur e Construction Let us define base features X = { x 1 , . . . , x n } and target labels y . W e first formulate the GP composite feature construction as an optimization problem: max f 1 ,...,f M ∈F P ( X ∪ { f 1 ( X ) , . . . , f M ( X ) } , y ) − λ M X i =1 ℓ ( f i ) where f i : R n → R are composite features from function space F defined by GP operations O . P ( · , y ) represents the tagging per- formance metric. ℓ ( f i ) is the expression size (number of nodes, i.e., operators and terminals) in the expression tree of f i (see Figure 2). Our GP system ev olves interpretable mathematical expressions 1 https://github.com/ChenHX111/GP- Music- Tagging Fig. 2 . GP crosso ver operation for composite feature construction that solve this optimization problem at a scale, generating human- readable expressions that reveal feature interactions. Once evolv ed, these expressions require only basic computations for ne w instances, concentrating computational cost at training time rather than deploy- ment. W e e volve up to M composite features in M iterations using base features as primitives. Each iteration consists of a full GP run. In our framework, each GP individual encodes a scalar compos- ite feature as a rooted expression tree, constructed from standard- ized base features together with ephemeral constants sampled from c ∼ U [ − 2 , 2] . The operation set O includes arithmetic and protected numeric operators (e.g., log , sqrt , div , inv ) for linear/nonlinear combination; trigonometric and hyperbolic functions for periodic patterns; min / max ; neural-style acti v ations (e.g., sigmoid , RELU , LRELU , swish ); and a ternary if then for piecewise dependen- cies, with closure ensuring real-valued outputs. Initialization uses ramped half-and-half (depth in [1,3]). Population e volution uses one-point subtree crossover (with rate 0.8, illustrated in Figure 2); uniform subtree mutation (rate 0.1) replacing a randomly selected subtree with a full tree of depth in [0,2]; tournament selection (size 3); and a static height limit of 6 to control bloat. Numerical robust- ness is enforced via protected primitives, fitness penalties for in valid values ( NaN / ∞ ), sanitization via nan to num , and standardization of each candidate feature before ev aluation. The fitness function e valuates candidates by augmenting the fea- ture set and measuring XGBoost [24] held-out validation set perfor - mance, thereby directly optimizing for predicti ve utility . Following parsimony pressure [25], we apply a complexity penalty ( λ = 0 . 01 per node found by experimentation) to promote interpretability by fa voring simpler e xpressions over comple x ones (e.g., f 3 ov er f 4 in Figure 2), preventing bloat and maintaining transparency . The ev olved expressions provide interpretability through two mecha- nisms: first, each expression explicitly shows how base features combine at scale re vealing v arious interactions una vailable in both black-box models [11, 12] and beyond systematic exploration capa- bilities of manual feature engineering [5, 26], and second, iterati ve single-feature addition directly assesses each composite feature’ s contribution. 2.3. T agging Model T raining W e employ XGBoost [24] as our tagging classifier due to its strong performance on tab ular data and robustness to mixed feature types. MTG-Jamendo mood/theme tagging is treated as multiple binary classification tasks (multilabel) [27], while GTZAN genre tagging uses multiclass classification [28]. W e ev aluate using R OC-A UC for MTG-Jamendo and accurac y for GTZAN, consistent with estab- lished interpretable [5, 26] and deep learning methods [29, 30]. 3. EXPERIMENT AL RESUL TS 3.1. Dataset and Setup 2 T able 1 . GP-Augmented vs. Baseline Performance for deployment models Method MTG-Jamendo (A UC) GTZAN (A CC) Score [95% CI] +∆ Score [95% CI] +∆ ALL62 [5, 26] 0.727 – 0.765 – ALL62 + GP100 0.729 [ 0.724–0.733 ] 0.002 0.800 [ 0.760–0.845 ] 0.035 ALL62 + GP500 0.730 [ 0.724–0.736 ] 0.003 0.805 [ 0.760–0.850 ] 0.040 E23 [5, 26] 0.719 – 0.740 – E23 + GP100 0.722 [ 0.716–0.728 ] 0.003 0.785 [ 0.730–0.830 ] 0.045 E23 + GP500 0.724 [ 0.717–0.731 ] 0.005 0.790 [ 0.735–0.840 ] 0.050 Fig. 3 . MTG-Jamendo A UC Dis- tribution of all augmented sets Fig. 4 . GTZAN Accuracy Distri- bution of all augmented sets Our GP implementation uses populations of 100 and 500 individ- uals via DEAP [31], with termination after 50 generations or early stopping (stagnation: if no improv ement for 15 generations; con- ver gence: if fitness variance < 0 . 0001 over 5 generations). W e ev aluated the effecti veness of our proposed pipeline against es- tablished approaches, which employ XGBoost [5, 26] without GP enhancement: music mood/theme-tagging on the MTG-Jamendo dataset [27] and genre-tagging on the GTZAN dataset [28]. The mood/theme subset of the MTG-Jamendo dataset contains 18486 songs with 56 tags. The GTZAN dataset is a collection of 1,000 audio tracks, each 30 sec long, with 100 tracks for each of its 10 featured genres. Baseline XGBoost models are trained using E23 and ALL62 feature sets, respecti vely , for both MTG-Jamendo mood/theme tag- ging and GTZAN genre tagging, following the same train/v al/test splits and other setups of the state-of-the-art interpretable methods on Github [5, 26], such as identical XGBoost hyperparameters: 70 estimators with max depth 3 and learning rate 0.1 for MTG-Jamendo multiple binary classification, and max depth 2 with learning rate 0.3 for GTZAN multiclass classification. 3.2. GP-A ugmented Performance Comparison Our reproduced baselines achieve 0.727 ROC-A UC (MTG-Jamendo) and 0.765 accuracy (GTZAN) using ALL62 features, closely match- ing but slightly below the original reported performance [5, 26] of 0.729 R OC-A UC and 0.79 accuracy , respecti vely . T able 1 presents the best-performing GP-augmented feature sets for deplo yment on the ne ver-seen test set with stratified bootstrap confidence intervals ( B = 2000 , 95% CI). These results demonstrate two key findings: first, GP consistently improves upon both base feature sets across datasets, with particularly substantial gains on GTZAN (4.0-5.0% accuracy improvement). This indicates that GP ef fectiv ely disco vers beneficial combinations, regardless of the le vel of information of the base feature set. Second, our method surpasses state-of-the-art inter- pretable approaches [5, 26]) while approaching state-of-the-art deep learning performance (0.781 R OC-A UC on MTG-Jamendo [29], 0.84 accuracy on GTZAN [30]) with only 5 GP iterations. Fig. 5 . MTG-Jamendo Impro vement T rajectory Fig. 6 . GTZAN Impro vement T rajectory T o validate that the performance improv ements of the best- performing GP-augmented feature sets (T able 1) reflect systematic effecti veness rather than outlier beha vior , Figures 3 and 4 present the complete performance distributions across all GP-augmented feature sets. The key finding is that median GP performance con- sistently e xceeds baselines across all configurations (population size 100 or 500), with narrow inter-quartile ranges indicating stable im- prov ement. While the median g ains are small, the findings indicate that most GP-evolv ed features are beneficial. Deployment models (i.e., those sho wn in T able 1) occupy the upper tail of an overall improv ed distribution, rather than being rare outliers. 3.3. GP Impro vement T rajectory T o assess computational cost-efficiency , Figures 5 and 6 present the anytime trajectories of GP feature construction, showing best-so-far and running-a verage scores versus ev aluation count for GP config- uration of 100 individuals in population. Across datasets, the best- so-far curv es rise steeply during the first few hundred ev aluations and then flatten, while the running av erages increase more gradu- ally toward a plateau. This improvement pattern remains consis- tent across population sizes, with GP500 showing similar trajec- tories (additional plots available in our GitHub repository in Sec- tion 1). This characteristic steep-then-plateau pattern reflects e vo- lutionary search dynamics in large combinatorial spaces: early gen- erations explore di verse feature combinations yielding rapid gains, while later refinement produces diminishing returns as the search ap- proaches local optima. Dataset complexity determines conv ergence rates. Specifically , in GTZAN’ s 10-class genre classification, our method achieves near-optimal performance within 300 e valuations, while in MTG-Jamendo’ s challenging 56-tag multilabel prediction, it requires 1000 e valuations for substantial gains, with the largest improv ement (E23-GP500) extending to 8000 e valuations. These improv ements are both computationally efficient (5.5 sec per ev alu- ation for MTG-Jamendo, 1.2 sec for GTZAN on R TX 3080T i) and practically significant, surpassing established interpretable methods while maintaining modest search budgets. 4. INTERPRET ABILITY ANAL YSIS ON GP FEA TURES The interpretability advantage of our GP approach is demonstrated by analysing the symbolic mathematical expressions of evolv ed 3 T able 2 . T op GP Individual Expressions Dataset: MTG-J amendo (Base: ALL62, Metric: A UC) A UC 0.730: max(0 , cos( glob glob sub )) − cos(max(0 , Rhythm Stability )) + Spectral Ener gyband Lo w A UC 0.729: Loudness − BPM dom dom Dataset: GTZAN (Base: ALL62, Metric: A CC) A CC 0.805: max( sub sub dom , glob dom dom ) Danceability | ( glob dom Spectral Energyband Middle Low ) 2 A CC 0.800: if if ( Mode > 0 , cosh( Chords Changes Rate ) , Spectral Entropy dom dom tonic ) > 0 , 1 1+ e − glob sub , 1 Chords Number Rate − glob dom tonic Dataset: MTG-J amendo (Base: E23, Metric: A UC) A UC 0.724: 2 · Length − 3 · Onset Rate − min( Danceability , Spectral Decrease ) A UC 0.724: tanh Length − 2 · Onset Rate − Zerocrossingrate Dataset: GTZAN (Base: E23, Metric: A CC) A CC 0.790: tan(max( Spectral Flux , Spectral Rolloff )) | 1 1+ e − (cosh( Spectral Energyband Middle Low )) 2 A CC 0.785: Chords Number Rate · Danceability | Onset Rate Dynamic Complexity − Danceability + Onset Rate composite features and their feature-feature and feature-operator co- occurrence patterns, extending beyond traditional importance-based methods [5, 15, 24] to rev eal how base features should be combined and transformed for optimal tagging performance. Note, base fea- ture names appear in typewriter font (e.g., Spectral-Spread ). 4.1. Best F eatures Expressions W e analyze composite features from the top-performing ev olved e x- pressions to understand base feature interaction mechanisms. T a- ble 2 shows that GP disco vers heterogeneous solutions ranging from linear and nonlinear combinations to comple x conditional forms, re- vealing multiple effecti ve pathways for feature combination rather than conv ergence on a single type. Sev eral low-complexity expres- sions achieve top performance alongside deeper constructs, consis- tent with parsimony pressure [25] that compact expressions curb bloat and are easier to interpret. For example, the MTG-Jamendo expression Loudness − BPM dom dom potentially captures percei ved energy through dynamics-tempo interaction modulated by harmonic tension. Moreover , conditional operators could suggest piece wise or categorical feature-label correlations, such as GTZAN’ s condi- tional expression using Mode and Chords Changes Rate could exploit genres’ characteristic differences in harmonic rhythm and major/minor tendencies. 4.2. Base F eatures and Operation Co-occurrence Co-occurrence analysis re veals task-specific feature syner gies with music-theoretic implications. Figure 7 shows pairwise co-occurrence (lower triangles) and mean performance conditioned on pair pres- ence (upper triangles) for top-500 e volv ed expressions. For MTG- Jamendo, Spectral-Spread paired with timbral features ap- pears at moderate frequency with high R OC-A UC, potentially cap- turing how spectral distribution affects perceiv ed brightness and warmth. Additionally , Spectral-Decrease with Beats-Loud shows mid-range frequency but strong performance, suggest- ing rhythmic and spectral characteristics jointly contribute to mood discrimination. GTZAN exhibits different synergies. It benefits from harmonic function pairs (e.g., dom-tonic-dom Fig. 7 . MTG-Jamendo ALL62 Co-occurrence Matrix Fig. 8 . MTG-Jamendo ALL62 Operator -Feature Performance with glob-dom-glob showing high mean accuracy), reflecting genre-distinctiv e chord progression patterns that GP discov ers as harmonically-informed combinations. The frequent co-occurrence of Spectral-Entropy with Spectral-Flux indicates that spectral irregularity measures together enhance genre classification. Operator-feature patterns sho wn in Figure 8 reveal perceptually- motiv ated transformations across tasks. For MTG-Jamendo, log a- rithmic operations on temporal features ( Danceability , Onset- Rate , Length ) appear frequently with high ROC-A UC, poten- tially reflecting rhythmic perception’ s nonlinear nature, consis- tent with logarithmic scaling observed in many perceptual do- mains. Sigmoid/swish operations with Vocal-Instrumental features at lower frequency but high performance suggest non- linear vocal-instrumental balance contributes to mood perception through threshold-lik e mechanisms. GTZAN shows dif ferent pat- terns. Sigmoid transforms on spectral features ( Spectral-Flux , Spectral-Rolloff ) achiev e near-best accuracy , possibly mod- eling threshold effects in timbre perception, while power operations with harmon y features ( glob-dom , glob-sub-dom ) further sup- port the genre-harmonic relationship. (Corresponding GTZAN plots are av ailable in our GitHub repository .) 5. CONCLUSION This paper proposes a GP pipeline to construct interpretable compos- ite features that augment base feature sets for music tagging. Our method mathematically combines base music features at scale to capture synergistic interactions, thereby achieving representational 4 benefits similar to deep learning–style feature fusion. Howe ver , un- like the latter methods, our GP pipeline preserv es interpretability . Experiments on MTG-Jamendo and GTZAN datasets show consis- tent performance gains across base features of v arying abstraction lev els, with improvements emerging within the first fe w hundred GP ev aluations, and hence with a relati vely modest search budget. The ev olved best-performing e xpressions range from linear and non- linear to conditional forms, including lo w-complexity solutions at top performance, showing the GP’ s parsimony pressure produces simpler , more interpretable expressions without sacrificing effecti ve- ness. Analysis of feature-feature and feature-operator co-occurrence patterns re veals that interpretable feature construction extends be- yond traditional importance-based methods. 6. REFERENCES [1] Minz W on, Keunwoo Choi, and Xavier Serra, “Semi- supervised music tagging transformer , ” arXiv preprint arXiv:2111.13457 , 2021. [2] George Tzanetakis and Perry Cook, “Musical genre classifica- tion of audio signals, ” IEEE T ransactions on speech and audio pr ocessing , vol. 10, no. 5, pp. 293–302, 2002. [3] Matthew Prockup, Andreas F Ehmann, Fabien Gouyon, Erik M Schmidt, and Y oungmoo E Kim, “Modeling musical rhyth- matscale with the music genome project, ” in W ASP AA , 2015. [4] Y udong Zhao, Gy ¨ orgy Fazekas, and Mark Sandler , “V iolinist identification using note-level timbre feature distributions, ” in ICASSP . IEEE, 2022, pp. 601–605. [5] V assilis L yberatos, Spyridon Kantarelis, Edmund Dervak os, and Giorgos Stamou, “Perceptual musical features for inter- pretable audio tagging, ” in ICASSPW . IEEE, 2024. [6] Jordi Pons Puig, Oriol Nieto Caballero, Matthe w Prockup, Erik M. Schmidt, Andreas F . Ehmann, and Xavier Serra, “End- to-end learning for music audio tagging at scale, ” in ISMIR , 2018. [7] Jordi Pons and Xavier Serra, “musicnn: Pre-trained conv olu- tional neural networks for music audio tagging, ” arXiv preprint arXiv:1909.06654 , 2019. [8] Qiuqiang K ong, Y in Cao, Turab Iqbal, Y uxuan W ang, W enwu W ang, and Mark D Plumbley , “P ANNs: Large-scale pre- trained audio neural networks for audio pattern recognition, ” IEEE/A CM T ASLP , vol. 28, pp. 2880–2894, 2020. [9] Peter Harrison and Marcus T Pearce, “Simultaneous conso- nance in music perception and composition., ” Psychological Revie w , vol. 127, no. 2, pp. 216, 2020. [10] Malinda J McPherson, Sophia E Dolan, Alex Durango, T omas Ossandon, Joaqu ´ ın V ald ´ es, Eduardo A Undurraga, Nori Ja- coby , Ricardo A Godoy , and Josh H McDermott, “Perceptual fusion of musical notes by native amazonians suggests univ er- sal representations of musical intervals, ” Nature communica- tions , vol. 11, no. 1, pp. 2786, 2020. [11] Pei-Chun Chang, Y ong-Sheng Chen, and Chang-Hsing Lee, “IIOF: Intra-and inter -feature orthogonal fusion of local and global features for music emotion recognition, ” P attern Recog- nition , vol. 148, pp. 110200, 2024. [12] Aizhen Liu, “Research on multi-feature fusion music emo- tion classification method under cognitiv e psychology , ” Inter- national Journal of High Speed Electronics and Systems , p. 2540339, 2025. [13] Morgan Buisson, Pablo Alonso-Jim ´ enez, and Dmitry Bog- danov , “ Ambiguity modelling with label distribution learning for music classification, ” in ICASSP , 2022. [14] Hendrik V incent K oops, W Bas De Haas, John Ashley Bur- goyne, Jeroen Bransen, Anna Kent-Muller , and Anja V olk, “ Annotator subjectivity in harmon y annotations of popular mu- sic, ” JNMR , v ol. 48, no. 3, pp. 232–252, 2019. [15] Saumitra Mishra, Bob L Sturm, and Simon Dixon, “Local interpretable model-agnostic explanations for music content analysis., ” in ISMIR , 2017, v ol. 53, pp. 537–543. 5 [16] Andre Holzapfel, Bob Sturm, and Mark Coeckelbergh, “Eth- ical dimensions of music information retrie val technology , ” TISMIR , vol. 1, no. 1, pp. 44–55, 2018. [17] Bin Cui, Jialie Shen, Gao Cong, Heng T ao Shen, and Cui Y u, “Exploring composite acoustic features for ef ficient music sim- ilarity query , ” in A CM MM , 2006. [18] T oni M ¨ akinen, Serkan Kiran yaz, Jenni Raitoharju, and Mon- cef Gabbouj, “ An evolutionary feature synthesis approach for content-based audio retriev al, ” EURASIP Journal on Audio, Speech, and Music Processing , v ol. 2012, no. 1, pp. 23, 2012. [19] Y i Mei, Qi Chen, Andrew Lensen, Bing Xue, and Mengjie Zhang, “Explainable artificial intelligence by genet ic program- ming: A surv ey , ” IEEE TEVC , vol. 27, no. 3, pp. 621–641, 2022. [20] Tingting Y ang, Chenhao Xue, and Jun Chen, “Design of driver stress prediction model with CNN-LSTM: Exploration of fea- ture space using genetic programming, ” in IJCNN . IEEE, 2024. [21] Dmitry Bogdanov , Nicolas W ack, Emilia G ´ omez Guti ´ errez, Sankalp Gulati, Perfecto Herrera Boyer , Oscar Mayor, Gerard Roma Trepat, Justin Salamon, Jos ´ e Ricardo Zapata Gonz ´ alez, and Xa vier Serra, “Essentia: An audio analysis library for mu- sic information retriev al, ” 2013. [22] Y u-T e W u, Y in-Jyun Luo, Tsung-Ping Chen, I W ei, Jui-Y ang Hsu, Y i-Chin Chuang, Li Su, et al., “Omnizart: A general toolbox for automatic music transcription, ” arXiv preprint arXiv:2106.00497 , 2021. [23] Andy Seaborne and Eric Prud’hommeaux, “SP ARQL query language for RDF, ” W3C Recommendation, W3C , 2008. [24] Tianqi Chen and Carlos Guestrin, “XGBoost: A scalable tree boosting system, ” in KDD , 2016, pp. 785–794. [25] Riccardo Poli and Nicholas Freitag McPhee, “Parsimon y pres- sure made easy , ” in GECCO , 2008. [26] V assilis L yberatos, Spyridon Kantarelis, Edmund Dervak os, and Giorgos Stamou, “Challenges and perspectives in inter - pretable music auto-tagging using perceptual features, ” IEEE Access , 2025. [27] Dmitry Bogdanov , Minz W on, Philip T ovstogan, Alastair Porter , and Xavier Serra, “The MTG-Jamendo dataset for au- tomatic music tagging, ” ICML, 2019. [28] Bob L Sturm, “The GTZAN dataset: Its contents, its faults, their effects on e v aluation, and its future use, ” arXiv pr eprint arXiv:1306.1461 , 2013. [29] Jaeyong Kang and Dorien Herremans, “T o wards unified music emotion recognition across dimensional and categorical mod- els, ” arXiv preprint , 2025. [30] Daisuke Niizumi, Daiki T akeuchi, Y asunori Ohishi, Noboru Harada, and Kunio Kashino, “Masked modeling duo: Learn- ing representations by encouraging both networks to model the input, ” in ICASSP , 2023, pp. 1–5. [31] F ´ elix-Antoine F ortin, Franc ¸ ois-Michel De Rainville, Marc- Andr ´ e Gardner Gardner, Marc Parizeau, and Christian Gagn ´ e, “Deap: Evolutionary algorithms made easy , ” JMLR , vol. 13, no. 1, pp. 2171–2175, 2012. 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment