Seeing with You: Perception-Reasoning Coevolution for Multimodal Reasoning

Reinforcement learning with verifiable rewards (RLVR) has substantially enhanced the reasoning capabilities of multimodal large language models (MLLMs). However, existing RLVR approaches typically rely on outcome-driven optimization that updates both…

Authors: Ziqi Miao, Haonan Jia, Lijun Li

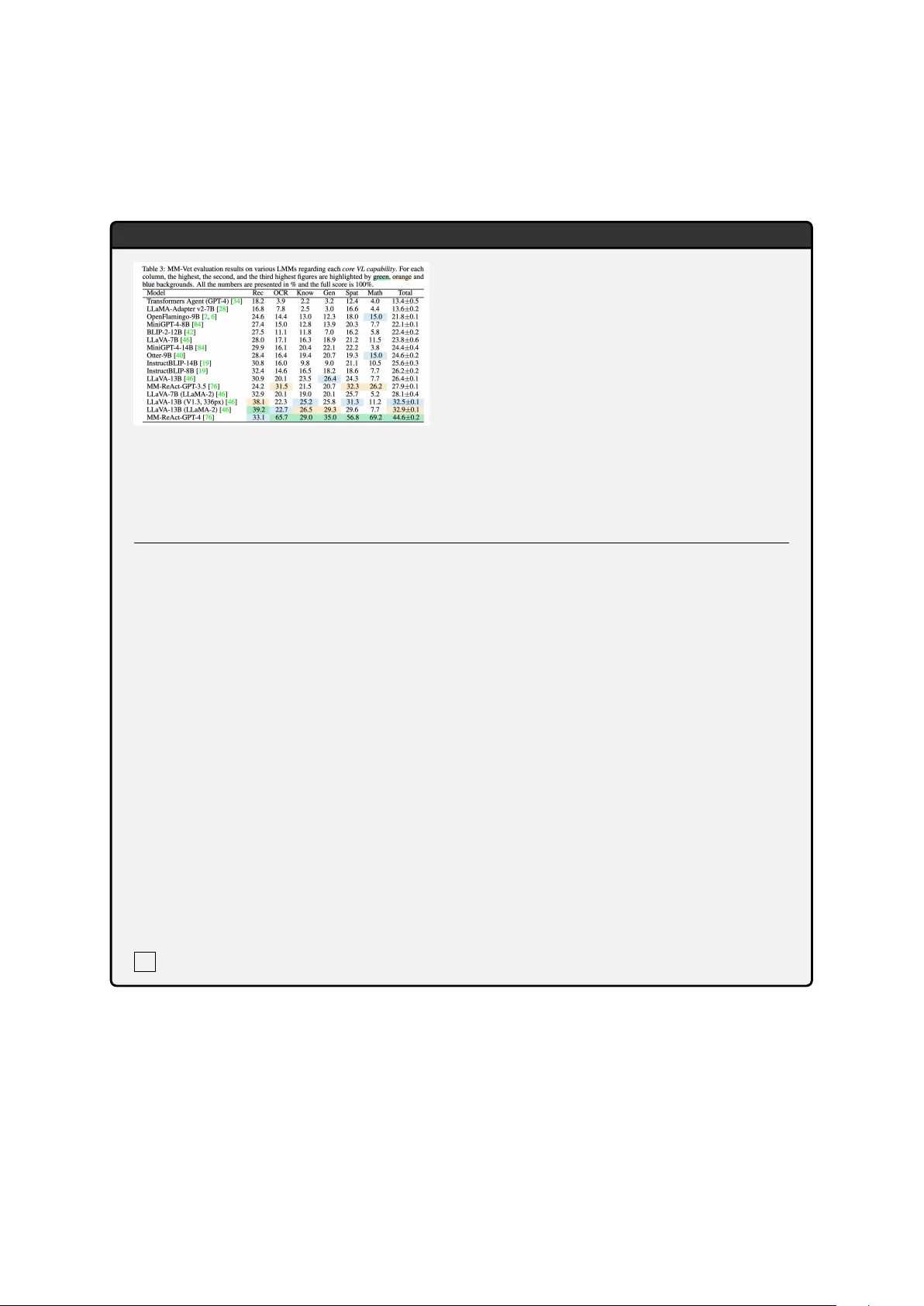

Ziqi Miao⋆, Haonan Jia⋆, Lijun Li⋆†, Chen Qian, Yuan Xiong, Wenting Yan, Jing Shao†

1 Shanghai Artificial Intelligence Laboratory
2 Gaoling School of Artificial Intelligence, Renmin University of China
3 Zhejiang University

Abstract

Reinforcement learning with verifiable rewards (RLVR) has substantially enhanced the reasoning capabilities of multimodal large language models (MLLMs). However, existing RLVR approaches typically rely on outcome-driven optimization that updates both perception and reasoning using a shared reward based solely on the final answer. This shared reward blurs credit assignment, frequently improving reasoning patterns while failing to reliably enhance the accuracy of upstream visual evidence extraction. To address this perception bottleneck, we introduce PRCO (Perception–Reasoning Coevolution), a dual-role RLVR framework with a shared policy. PRCO consists of two cooperative roles: an Observer that generates an evidence caption tailored to the question and a Solver that predicts the final answer based on this caption. Crucially, PRCO employs role-specific reward signals: the Solver is optimized using verifiable outcome rewards on the final answer, while the Observer receives a utility reward derived from the Solver's downstream success. Extensive experiments across eight challenging multimodal reasoning benchmarks demonstrate that PRCO yields consistent improvements across model scales by over 7 points on average accuracy compared to the base model, outperforming prior open-source RL-tuned baselines.

1 Introduction

Reinforcement learning with verifiable rewards (RLVR), particularly online algorithms such as Group Relative Policy Optimization (GRPO), has substantially enhanced the reasoning capabilities of Large Language Models (LLMs) in text-only problem domains (Guo et al., 2025; Team et al.
, 2025; Xu et al., 2026; Wang et al., 2026; Huang et al., 2026a; Zhou et al., 2026). Building on these advances, recent work extends RLVR to Multimodal Large Language Models (MLLMs) for challenging multimodal reasoning tasks (Huang et al., 2025b; Shen et al., 2025a; Su et al., 2025; Fan et al., 2025; Zeng et al., 2025b; Cao et al., 2025; Meng et al., 2025; Zeng et al., 2025a). Most prior multimodal RLVR work focuses on data-centric curation (Leng et al., 2025; Wu et al., 2025; Li et al., 2025a; Wang et al., 2025d) and reward-centric engineering (Liu et al., 2025c; Wan et al., 2025; Wang et al., 2025b,c). Effective reasoning relies on accurate perception, which provides the necessary grounding for logical deduction (Liu et al., 2025a; Yao et al., 2025b; Xiao et al., 2026). However, RLVR is often applied in an outcome-driven manner, verifying only the final textual answer while largely neglecting the accuracy of upstream visual perception (Wang et al., 2025e; Li et al., 2025c).

⋆ Equal contribution. † Corresponding authors. Code: https://github.com/Dtc7w3PQ/PRCO

[Figure 1. Left: error-reduction chart for GRPO (labels recovered from the figure: 24.3%, 7.6%). Right: image and question, "Below is a top-down view of a circular water surface. What is the value of OA·OB = ()?", with a GRPO response marked "GRPO: Perception Error": "To solve the problem, … Calculate the distances OA and OB: The distance OA is the distance from the origin (0, 0) to point A (0, 2). The distance OB is the distance from the origin (0, 0) to point B (2, 2). …"]

Figure 1: Diagnostic analysis of GRPO on WeMath (Qiao et al., 2025). Left: GRPO reduces reasoning errors much more than perception errors. Right: a representative failure case caused by incorrect perception.

To concretely examine this bottleneck, we conduct a diagnostic analysis using GRPO as a representative multimodal RLVR baseline.
We train Qwen2.5-VL-7B (Team, 2025) with GRPO and compare its failure modes against its initialization on WeMath (Qiao et al., 2025) using fine-grained error categorization. As shown in Fig. 1, training with GRPO substantially reduces reasoning errors, whereas perception errors improve only marginally over the base model. We attribute this bottleneck to outcome-only RLVR with a shared reward updating both perception and reasoning. This blurs credit assignment and improves reasoning patterns without reliably improving visual evidence extraction.

Recently, some works have recognized perception as critical and explored perception-centric RLVR for MLLMs. These efforts focus on three directions: introducing additional perception-oriented optimization objectives (Xiao et al., 2025; Wang et al., 2025e), using weighted token-level credit assignment for perception and reasoning tokens (Huang et al., 2025a), and requiring an explicit image-description step before reasoning (Xing et al., 2025; Gou et al., 2025). Although promising, these works still distribute outcome-based rewards to both perception and reasoning tokens. Consequently, the coupled training signal may improve reasoning patterns without reliably improving visual evidence extraction. These findings naturally prompt the question: Can we use reliable, separate learning signals for perception and reasoning to decouple them at the gradient level?

To this end, we introduce PRCO (Perception–Reasoning Coevolution), a dual-role RLVR framework that disentangles perception and reasoning. In this setup, a shared multimodal policy alternates between two roles: the Observer, which performs question-conditioned evidence extraction and writes an evidence caption tailored to the question; and the Solver, which produces the answer based on the question and the caption, using the image when available.
Crucially, they are trained with separate and reliable learning signals: the Solver is optimized with verifiable outcome rewards on the final answer, while the Observer is optimized with a utility reward defined by the Solver's verifier-validated success when conditioned on its caption. These role-specific learning signals decouple policy updates for perception and reasoning while still allowing joint optimization under a shared policy. As a result, PRCO drives coevolution, in which the Observer progressively improves question-grounded visual perception by extracting and articulating question-relevant evidence, while the Solver learns to reason more reliably under explicit evidence guidance.

To validate PRCO, we conduct extensive experiments on eight challenging multimodal reasoning benchmarks covering mathematics, geometry, logic, and multidisciplinary reasoning. PRCO yields consistent gains across model scales: our 7B model improves average accuracy by 7.18 points over the corresponding base model and outperforms prior open-source RL-tuned methods, while our 3B model improves average accuracy by 7.65 points. Moreover, on WeMath, PRCO reduces perception errors by 39.2% relative to the base model, whereas vanilla GRPO achieves only a 7.6% reduction.

To sum up, our main contributions are threefold:

• We propose PRCO, a dual-role RLVR framework that disentangles perception and reasoning with an Observer and a Solver under a shared policy.
• We demonstrate the effectiveness of PRCO on diverse and challenging multimodal reasoning benchmarks, showing consistent improvements over strong RLVR baselines.
• We provide extensive ablation and diagnostic analyses that validate PRCO's key design choices and characterize its effects on perception and reasoning.

2 Method

We propose a dual-role RLVR framework that trains a shared MLLM policy in two cooperative roles.
The Observer produces a question-conditioned evidence caption $c$, i.e., one tailored to the question, and the Solver answers based on the caption and the image when available. We first review GRPO (Sec. 2.1), then describe the dual-role interaction loop (Sec. 2.2), detail the Observer and Solver (Sec. 2.3–2.4), and finally present unified optimization with role-specific advantages (Sec. 2.5).

[Figure 2 diagram, "Dual-Role RLVR Framework": training data (image $I$, question $q$, ground truth $a$) feeds the shared policy $\pi_\theta$ in the Observer role with $(I, q)$, which produces captions $\{c_k\}_{k=1}^{G_O}$. After a leakage check, each caption $c_k$ is passed with $(I_S, q)$ to the shared policy $\pi_\theta$ in the Solver role, which produces solutions $\{\hat{a}_{k,g}\}_{g=1}^{G_S}$. A format check and the verifier $V(\hat{a}_{k,g}, a)$ give Solver rewards $r^S_{k,g}$ (e.g., 0, 1, …); the utility feedback $\mathbb{E}[V(\hat{a}_{k,g}, a)]$ gives Observer rewards $r^O_k$ (e.g., 0.25, 0.75, …). The Observer advantage $\hat{A}^O_k = r^O_k - \operatorname{mean}(\{r^O_k\}_{k=1}^{G_O})$ and the Solver advantage $\hat{A}^S_{k,g} = r^S_{k,g} - \operatorname{mean}(\{r^S_{k,g}\}_{g=1}^{G_S})$ are combined to update $\pi_\theta$. $I_S$: caption-first warmup.]

Figure 2: Overview of PRCO. A shared policy alternates between an Observer for question-conditioned evidence captioning and a Solver for evidence-conditioned reasoning. The two roles are jointly optimized with role-specific learning signals and group-relative advantages, enabling perception–reasoning coevolution under a shared policy.

2.1 Preliminary: Group Relative Policy Optimization

GRPO (Shao et al., 2024) is a reinforcement learning algorithm for fine-tuning a policy LLM without learning a separate value function. Its key idea is to compute relative learning signals by normalizing rewards against other responses sampled from the same prompt. For a given prompt $x$, the policy generates a group of $G$ complete responses $\{y_i\}_{i=1}^{G}$. Each response receives a scalar reward $r_i$. GRPO converts these rewards into response-level advantages via z-score normalization:

$$\hat{A}_i = \frac{r_i - \operatorname{mean}(\{r_j\}_{j=1}^{G})}{\operatorname{std}(\{r_j\}_{j=1}^{G}) + \epsilon_{\text{norm}}}, \tag{1}$$

where $\epsilon_{\text{norm}}$ is a small constant for numerical stability.

Policy update. GRPO updates the policy using a PPO-style clipped surrogate objective to improve stability. To prevent excessive policy drift, the objective is regularized with a KL-divergence penalty to the old policy:

$$\mathcal{L}_{\text{GRPO}}(\theta) = -\,\mathbb{E}_{i,t}\Big[\min\big(\rho_{i,t}(\theta)\,\hat{A}_i,\ \operatorname{clip}(\rho_{i,t}(\theta),\, 1-\epsilon,\, 1+\epsilon)\,\hat{A}_i\big)\Big] + \beta\,\mathbb{E}_{x}\Big[\mathrm{KL}\big(\pi_\theta(\cdot \mid x)\,\|\,\pi_{\theta_{\text{old}}}(\cdot \mid x)\big)\Big], \tag{2}$$

where $\rho_{i,t}(\theta)$ is the token-level importance ratio, $\epsilon$ is the clipping threshold, and $\beta$ controls the KL penalty. Optimizing this objective increases the likelihood of responses with positive relative advantages, while the KL term constrains divergence from $\pi_{\theta_{\text{old}}}$ for stable training.

2.2 Overview

RLVR setting. Following typical RLVR setups (Wang et al., 2025a; Yu et al., 2025), our training dataset $\mathcal{D}$ consists of tuples $(I, q, a)$, where $I$ is an image, $q$ is a question, and $a$ is a short ground-truth answer. We do not rely on any existing chain-of-thought data and initiate RL training directly without supervised fine-tuning. We use a lightweight rule-based verifier $V(\hat{a}, a) \in \{0, 1\}$ that checks whether the predicted answer $\hat{a}$ matches $a$, and a simple format checker $\mathrm{FormatScore}(\hat{a}) \in [0, 1]$ that evaluates whether the output satisfies the required format.

Two roles under one shared policy. We instantiate a single policy $\pi_\theta$ in two roles via role-specific prompting. We denote by $r_O$ and $r_S$ the prompts for the Observer and Solver, respectively. For each sample $(I, q, a)$, the Observer first generates an intermediate caption $c$ summarizing question-relevant visual evidence; then the Solver outputs the final answer conditioned on $c$ and optionally the image. We denote the Solver's visual input as $I_S \in \{\varnothing, I\}$.
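
As a concrete illustration of Eqs. (1) and (2), the sketch below computes group-relative advantages for one prompt and the clipped per-token term inside the expectation of Eq. (2). It is a minimal pure-Python sketch, not the authors' implementation: the function names, the use of the population standard deviation, and the default values `eps_norm=1e-6` and `eps_clip=0.2` are assumptions that the text does not specify.

```python
from statistics import mean, pstdev


def grpo_advantages(rewards, eps_norm=1e-6):
    """Eq. (1): z-score each reward against the other responses in its group.

    Assumption: population standard deviation; the text does not say which
    estimator is used, and eps_norm is an illustrative value.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps_norm) for r in rewards]


def clipped_term(ratio, advantage, eps_clip=0.2):
    """Per-token term inside the expectation of Eq. (2), before the minus sign.

    Assumption: eps_clip=0.2 is a placeholder clipping threshold.
    """
    clipped_ratio = min(max(ratio, 1.0 - eps_clip), 1.0 + eps_clip)
    return min(ratio * advantage, clipped_ratio * advantage)


# One prompt, G = 4 sampled responses with binary rewards.
group_rewards = [1.0, 0.0, 0.0, 1.0]
group_advantages = grpo_advantages(group_rewards)
```

With binary rewards, correct responses receive a positive advantage and incorrect ones a negative advantage of equal magnitude; the clipped term then caps how much a single token's probability ratio can contribute in the direction that increases the objective.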
For each training instance, we sample $G_O$ candidate captions $\{c_k\}_{k=1}^{G_O}$ under the Observer role; given a caption $c_k$, we sample $G_S$ candidate answers $\{\hat{a}_{k,g}\}_{g=1}^{G_S}$ under the Solver role. The Observer is encouraged to externalize visual evidence into captions, while the Solver is trained to leverage captions for evidence-conditioned reasoning.

2.3 Observer: Utility-Driven Evidence Captioning

The Observer converts high-dimensional visual input into a textual evidence signal by producing a question-conditioned evidence caption that summarizes the visual evidence most relevant to $q$ (e.g., entities, attributes, and relations). Formally, given $(I, q)$, the Observer samples $c \sim \pi_\theta(\cdot \mid I, q, r_O)$.

Utility reward with leakage suppression. Directly verifying the intrinsic quality of an evidence caption is difficult. We therefore train the Observer through the downstream utility that its caption provides to the Solver. A key failure mode is answer shortcutting, where the Observer directly places the final answer in the caption instead of extracting question-relevant visual evidence. To suppress this behavior, we use an auxiliary LLM-based leakage checker that takes the caption $c$ and question $q$ as input and outputs a binary leakage indicator. Let $\mathbb{I}_{\text{leak}}(q, c) \in \{0, 1\}$ be the indicator of answer leakage in $c$, where 1 indicates the presence of leakage. For a sampled caption $c_k$, we define the Observer reward as

$$r^O_k = \big(1 - \mathbb{I}_{\text{leak}}(q, c_k)\big)\, \mathbb{E}_{\hat{a} \sim \pi_\theta(\cdot \mid I_S, q, c_k, r_S)}\big[V(\hat{a}, a)\big]. \tag{3}$$

The expectation is approximated by the empirical mean of the verifier scores over $G_S$ sampled Solver rollouts conditioned on $(I_S, q, c_k, r_S)$. This reward favors captions that help downstream solving and suppresses captions judged as leaking the answer.
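
The Observer reward in Eq. (3) reduces to a short computation once the leakage flag and the Solver's verifier scores are available. The sketch below is a minimal illustration under stated assumptions: `observer_reward` is a hypothetical helper name, and both the leakage flag and the verifier scores are passed in as plain numbers rather than produced by a real leakage checker or verifier.

```python
def observer_reward(leak_flag, solver_verdicts):
    """Eq. (3): (1 - I_leak) times the empirical mean of V(a_hat, a).

    leak_flag: 0 or 1, the output of the leakage checker for this caption.
    solver_verdicts: verifier scores (0 or 1) for the G_S Solver rollouts
    that were conditioned on this caption.
    """
    if leak_flag not in (0, 1):
        raise ValueError("leak_flag must be 0 or 1")
    if not solver_verdicts:
        raise ValueError("need at least one Solver rollout")
    utility = sum(solver_verdicts) / len(solver_verdicts)
    return (1 - leak_flag) * utility


# A clean caption that lets 3 of 4 Solver rollouts succeed.
clean_reward = observer_reward(0, [1, 1, 1, 0])
# A caption flagged as leaking the answer gets zero reward,
# even though every Solver rollout succeeds.
leaky_reward = observer_reward(1, [1, 1, 1, 1])
```

Because the reward is an average over Solver rollouts, it takes fractional values between 0 and 1, which gives the Observer a graded signal even though each individual verifier score is binary.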
2.4 Solver: Evidence-Conditioned Reasoning

The Solver produces a short final answer by reasoning over the question and the Observer's caption, with the image provided when available. Conditioning on $c$ encourages explicit evidence-driven reasoning, while image input helps recover global structure or complex geometric relations that are difficult to fully convey in text. Formally, given a caption $c$, the Solver samples $\hat{a} \sim \pi_\theta(\cdot \mid I_S, q, c, r_S)$.

Solver reward. We define the correctness reward via the verifier as $r_{\text{acc}} = V(\hat{a}, a)$. In addition, $r_{\text{format}} \in [0, 1]$ measures whether the response strictly follows the required format. We compute it with a simple rule-based checker as $r_{\text{format}} = \mathrm{FormatScore}(\hat{a})$. The Solver is rewarded for both correctness and basic format compliance:

$$r^S = \lambda\, r_{\text{acc}} + (1 - \lambda)\, r_{\text{format}}, \tag{4}$$

where $\lambda$ balances accuracy and format compliance, with a default value of 0.9.

Caption-first warmup. In early training, we set $I_S = \varnothing$ so that the Solver must rely on $(q, c)$. We find that if the Solver receives both the image and the caption too early, it tends to ignore the caption and solve directly from the image, which can drown out the learning signal for improving captions. Therefore, we first warm up the Solver to solve using only captions; after a short warmup, we switch to $I_S = I$ to restore full multimodal grounding while retaining the benefits of caption conditioning.

2.5 Unified Policy Optimization with Role-Specific Advantages

The Observer and Solver share the same policy and are optimized jointly. However, their rollouts define different comparison groups for relative optimization. Observer captions are compared across samples from the same $(I, q)$ instance, whereas Solver answers are compared within caption-conditioned answer groups. We therefore compute relative advantages separately for the two roles and optimize the shared policy over the combined rollouts.
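
The Solver reward of Eq. (4) and the caption-first warmup of Sec. 2.4 can be sketched as two small functions. This is an illustrative sketch only: the function names are hypothetical, the image is represented by an arbitrary Python object, and counting steps from zero is an assumption. The default `lam=0.9` comes from the text above, and the 40-step warmup length is the value reported in the training details of Sec. 3.1.

```python
def solver_reward(r_acc, r_format, lam=0.9):
    """Eq. (4): convex combination of correctness and format compliance."""
    return lam * r_acc + (1.0 - lam) * r_format


def solver_visual_input(step, image, warmup_steps=40):
    """Caption-first warmup: return the Solver's visual input I_S.

    During the first `warmup_steps` optimization steps the Solver sees no
    image (None stands for the empty input); afterwards it sees the image.
    Assumption: steps are counted from 0.
    """
    return None if step < warmup_steps else image


# A correct answer in the wrong format still earns most of the reward.
correct_bad_format = solver_reward(r_acc=1.0, r_format=0.0)
# A well-formatted but wrong answer earns only the small format share.
wrong_good_format = solver_reward(r_acc=0.0, r_format=1.0)
```

With $\lambda = 0.9$ the format term acts as a small shaping bonus: it can separate two wrong answers but cannot outweigh correctness.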
Role-wise grouping and advantage computation. For each sample $(I, q, a)$, the Observer generates $G_O$ candidate captions $\{c_k\}_{k=1}^{G_O}$ under $\pi_\theta(\cdot \mid I, q, r_O)$. For each caption $c_k$, we generate $G_S$ Solver answers $\{\hat{a}_{k,g}\}_{g=1}^{G_S}$ under $(I_S, q, c_k, r_S)$ and compute rewards $\{r^S_{k,g}\}_{g=1}^{G_S}$ using Eq. (4). We then compute Observer rewards $\{r^O_k\}_{k=1}^{G_O}$ via Eq. (3). Following Eq. (1), we compute group-relative advantages separately for the two roles while omitting the standard deviation normalization term. Concretely, for a reward group $\{r_i\}_{i=1}^{G}$, we use $\hat{A}_i = r_i - \operatorname{mean}(\{r_j\}_{j=1}^{G})$. By centering rewards around the role-specific group mean without standard deviation normalization, we ensure that gradient updates are driven by within-group relative performance rather than cross-role variance differences. We compute caption advantages $\{\hat{A}^O_k\}_{k=1}^{G_O}$ from $\{r^O_k\}_{k=1}^{G_O}$ across the $G_O$ captions. For the Solver update, we reuse these evidence-conditioned answer rollouts. For each $(I, q, a)$, we preferentially sample one caption index $\tilde{k}$ uniformly from captions with $\operatorname{Var}(\{r^S_{k,g}\}_{g=1}^{G_S}) > 0$ to avoid degenerate relative signals, and compute Solver advantages $\{\hat{A}^S_{\tilde{k},g}\}_{g=1}^{G_S}$ from $\{r^S_{\tilde{k},g}\}_{g=1}^{G_S}$.

Unified policy update. We aggregate Observer caption trajectories associated with $\hat{A}^O$ and Solver answer trajectories associated with $\hat{A}^S$ into a combined rollout batch and optimize a unified GRPO-style objective:

$$\mathcal{L}_{\text{dual}}(\theta) = \mathcal{L}_{\text{GRPO}}\big(\theta; \hat{A}^S\big) + \mathcal{L}_{\text{GRPO}}\big(\theta; \hat{A}^O\big), \tag{5}$$

where $\mathcal{L}_{\text{GRPO}}(\theta; \hat{A})$ denotes Eq. (2) instantiated on the corresponding role trajectories with advantage $\hat{A}$. Following Yu et al. (2025), we set $\beta = 0$ to remove the KL penalty and encourage exploration. Under the shared policy, this unified update jointly improves perception and reasoning.
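
The role-wise grouping described above can be sketched for a single training sample as follows. This is a minimal sketch under assumptions, not the released code: `centered` and `role_advantages` are hypothetical names, rewards are supplied as nested lists, and the fallback to all captions when every Solver group has zero variance is an assumption (the text says only that informative captions are sampled "preferentially").

```python
import random
from statistics import mean, pvariance


def centered(rewards):
    """Mean-centered advantages: Eq. (1) without the std normalization."""
    mu = mean(rewards)
    return [r - mu for r in rewards]


def role_advantages(solver_rewards, observer_rewards, rng=random):
    """Compute advantages separately for the two roles of one sample.

    solver_rewards[k][g] is r^S_{k,g}; observer_rewards[k] is r^O_k.
    Returns (Observer advantages, chosen caption index, Solver advantages).
    """
    adv_observer = centered(observer_rewards)
    # Prefer captions whose Solver group carries a non-degenerate signal.
    informative = [k for k, group in enumerate(solver_rewards)
                   if pvariance(group) > 0]
    # Assumption: if every group is degenerate, fall back to all captions.
    pool = informative or list(range(len(solver_rewards)))
    k_sel = rng.choice(pool)
    adv_solver = centered(solver_rewards[k_sel])
    return adv_observer, k_sel, adv_solver


# Three captions (G_O = 3), two Solver rollouts each (G_S = 2).
solver_rewards = [[1.0, 1.0], [1.0, 0.0], [0.0, 0.0]]
observer_rewards = [1.0, 0.5, 0.0]
adv_obs, k_sel, adv_sol = role_advantages(solver_rewards, observer_rewards)
```

In this example only the second caption yields Solver rollouts that disagree, so it is the only group that can produce nonzero Solver advantages, while all three captions contribute to the Observer update.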
Column groups: Math-Related (MathVerse, MathVision, MathVista, WeMath, DynaMath); General Task (LogicVista, MMMU-Pro, MMStar); Overall (Avg.).

| Model | MathVerse | MathVision | MathVista | WeMath | DynaMath | LogicVista | MMMU-Pro | MMStar | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| Based on Qwen2.5-VL-3B | | | | | | | | | |
| Base Model | 34.13 | 22.50 | 65.00 | 23.52 | 12.37 | 38.70 | 26.76 | 56.06 | 34.88 |
| GRPO | 36.29 | 24.70 | 67.40 | 30.57 | 17.96 | 38.92 | 29.88 | 58.00 | 37.97 |
| DAPO | <u>40.98</u> | **27.40** | <u>70.20</u> | 35.14 | <u>20.35</u> | 43.62 | 31.73 | 60.40 | <u>41.23</u> |
| PAPO-G-3B | 37.56 | 23.61 | 67.60 | 31.05 | 17.96 | 40.26 | 29.01 | 58.86 | 38.24 |
| PAPO-D-3B | 40.48 | 26.61 | 69.30 | 32.29 | 19.36 | **47.20** | 31.27 | <u>60.66</u> | 40.90 |
| MMR1-3B-RL | 38.57 | 22.17 | 64.50 | <u>38.29</u> | 16.36 | 40.49 | 30.34 | 57.20 | 38.49 |
| Vision-SR1-3B | 36.29 | 25.65 | 64.90 | 34.48 | 18.16 | 41.16 | **33.12** | 56.73 | 38.81 |
| PRCO-3B | **42.51** | <u>27.27</u> | **70.30** | **40.00** | **22.36** | <u>44.97</u> | <u>31.85</u> | **61.00** | **42.53** |
| Based on Qwen2.5-VL-7B | | | | | | | | | |
| Base Model | 43.02 | 25.46 | 70.20 | 35.43 | 20.35 | 45.41 | 35.49 | 64.26 | 42.45 |
| GRPO | 44.28 | 28.28 | 75.40 | 41.43 | 25.14 | 46.08 | 39.01 | 64.06 | 45.46 |
| DAPO | <u>48.73</u> | 29.30 | 74.80 | 45.62 | 26.14 | 47.87 | 41.38 | 65.40 | 47.41 |
| PAPO-G-7B | 44.79 | 27.20 | 74.30 | 39.62 | 23.55 | 43.17 | 40.11 | 64.93 | 44.71 |
| PAPO-D-7B | 47.33 | 24.34 | <u>76.70</u> | 39.05 | 25.34 | 48.54 | <u>41.50</u> | 66.93 | 46.22 |
| R1-ShareVL-7B | 48.22 | 29.14 | 73.30 | 45.14 | 24.55 | 48.76 | 38.32 | 65.06 | 46.56 |
| Perception-R1-7B | 46.70 | 26.74 | 73.40 | 46.48 | 23.75 | 44.07 | 38.20 | 64.33 | 45.46 |
| NoisyRollout-7B | 46.44 | 27.50 | 72.30 | 46.10 | 23.15 | 48.32 | 36.30 | 63.93 | 45.51 |
| MMR1-7B-RL | 43.90 | 26.01 | 71.60 | <u>47.87</u> | 27.14 | <u>49.44</u> | 35.08 | 64.80 | 45.73 |
| VPPO-7B | 47.20 | <u>30.52</u> | 76.60 | 43.81 | <u>27.94</u> | 47.87 | 39.65 | <u>67.20</u> | <u>47.60</u> |
| Vision-Matters-7B | 47.08 | 27.23 | 72.30 | 41.71 | 24.75 | 48.09 | 37.10 | 62.20 | 45.06 |
| Vision-SR1-7B | 42.76 | 27.76 | 72.30 | 37.14 | 24.55 | 48.32 | 41.38 | 65.26 | 44.93 |
| PRCO-7B | **49.49** | **30.86** | **77.10** | **50.29** | **29.74** | **49.66** | **42.08** | **67.80** | **49.63** |

Table 1: Main results on eight multimodal reasoning benchmarks with Qwen2.5-VL-3B and Qwen2.5-VL-7B backbones. We report benchmark scores on math-related benchmarks, general-task benchmarks, and their overall average. The best and second-best results within each backbone are highlighted in bold and underlined, respectively.

3 Experiments

3.1 Experimental Setup

Models, Data, and Baselines.
We perform direct RL training on the Qwen2.5-VL-3B, Qwen2.5-VL-7B, and Qwen3-VL-8B-Instruct backbones using ViRL39K (Wang et al., 2025a). ViRL39K contains 39K verifiable multimodal reasoning questions across diverse visual formats, such as diagrams and charts. We benchmark our method against recent open-source reasoning MLLMs at the 3B and 7B scales, and further evaluate it on the stronger Qwen3-VL-8B-Instruct backbone. For the 3B setting, we compare with PAPO-G-3B and PAPO-D-3B (Wang et al., 2025e), MMR1-3B-RL (Leng et al., 2025), and Vision-SR1-3B (Li et al., 2025c). For the 7B setting, we include PAPO-G-7B and PAPO-D-7B (Wang et al., 2025e), R1-ShareVL-7B (Yao et al., 2025a), Perception-R1-7B (Xiao et al., 2025), Vision-Matters-7B (Li et al., 2025b), NoisyRollout-7B (Liu et al., 2025b), MMR1-7B-RL (Leng et al., 2025), VPPO-7B (Huang et al., 2025a), and Vision-SR1-7B (Li et al., 2025c). We also implement two strong RLVR baselines by fine-tuning the Qwen2.5-VL backbones and Qwen3-VL-8B-Instruct with GRPO (Shao et al., 2024) and DAPO (Yu et al., 2025). Appendix A.5 reports the Qwen3-VL-8B-Instruct results, and Appendix A.1 provides additional details.

Training Details. All experiments are implemented using the EasyR1 codebase (Zheng et al., 2025a) and optimized with AdamW (Loshchilov and Hutter, 2017), with a learning rate of $1 \times 10^{-6}$. Following prior work (Yao et al., 2025a; Liu et al., 2025b; Wang et al., 2025e; Huang et al., 2025a), we use a rollout batch size of 384 for 200 optimization steps. We set the Observer rollout group size to 4. For the Solver, we use a rollout group size of 8, in line with recent multimodal RL training practice (Huang et al., 2025a; Li et al., 2025c).
We adopt a caption-first warmup for the first 40 steps, during which the Solver is trained without image inputs to encourage effective caption conditioning before restoring full multimodal inputs. More training details are provided in Appendix A.2.

Evaluation Benchmarks. We evaluate on eight multimodal reasoning benchmarks, including math-related visual reasoning on MathVista (Lu et al., 2023), MathVerse (Zhang et al., 2024), MathVision (Wang et al., 2024), WeMath (Qiao et al., 2025), and DynaMath (Zou et al., 2024), and general tasks on LogicVista (Xiao et al., 2024), MMMU-Pro (Yue et al., 2025), and MMStar (Chen et al., 2024). We use VLMEvalKit (Duan et al., 2024) with greedy decoding for all benchmarks, setting temperature to 0 and top-p to 1.0. We report single-sample greedy results under each benchmark's official VLMEvalKit metric, denoted as accuracy for simplicity. All models are evaluated under a single fixed evaluation configuration to ensure fair comparison and reproducibility. See Appendix A.1 for additional evaluation details.

3.2 Main Results

PRCO yields consistent improvements across model scales and task categories. As shown in Table 1, PRCO improves upon the Qwen2.5-VL backbones at both scales, with average gains of 7.65 and 7.18 points in the 3B and 7B settings, respectively. Under identical training settings, PRCO outperforms GRPO and DAPO. PRCO-3B surpasses strong RLVR baselines and recent open-source reasoning MLLMs built on the same 3B backbone. PRCO-7B further achieves the best overall performance and the strongest results across all evaluated benchmarks, outperforming the strongest baseline, VPPO. Across task categories, PRCO yields steady gains on math-related benchmarks while also improving general multimodal reasoning, indicating broad cross-task generalization.
Overall, PRCO enables perception–reasoning coevolution through role-specific, reliable learning signals under a shared policy, setting a new performance bar among open-source MLLMs.

3.3 Ablation Study

To better understand the contribution of each component in PRCO, we conduct comprehensive ablations. We report math-related, general-task, and overall averages in Table 2. More ablation details are provided in Appendix A.4.

| Model | Math | ∆ | General | ∆ | Avg | ∆ |
|---|---|---|---|---|---|---|
| Qwen2.5-VL-3B | 31.50 | - | 40.51 | - | 34.88 | - |
| + PRCO w/o warmup | 39.45 | +7.95 | 45.88 | +5.37 | 41.86 | +6.98 |
| + PRCO w/o Observer | 39.48 | +7.98 | 45.02 | +4.51 | 41.56 | +6.68 |
| + PRCO w/o Solver | 33.46 | +1.96 | 41.55 | +1.04 | 36.49 | +1.61 |
| + PRCO | 40.49 | +8.99 | 45.94 | +5.43 | 42.53 | +7.65 |
| Qwen2.5-VL-7B | 38.89 | - | 48.39 | - | 42.45 | - |
| + PRCO w/o warmup | 44.52 | +5.63 | 51.57 | +3.18 | 47.16 | +4.71 |
| + PRCO w/o Observer | 46.22 | +7.33 | 51.47 | +3.08 | 48.19 | +5.74 |
| + PRCO w/o Solver | 41.73 | +2.84 | 49.16 | +0.77 | 44.52 | +2.07 |
| + PRCO | 47.50 | +8.61 | 53.18 | +4.79 | 49.63 | +7.18 |

Table 2: Ablation study of PRCO on Qwen2.5-VL-3B and Qwen2.5-VL-7B. We report average scores on math-related benchmarks, general-task benchmarks, and all benchmarks; ∆ denotes improvement over the corresponding base model.

[Figure 3 plots: training reward (y-axis) versus training steps (x-axis, 0 to 200) for PRCO, W/O Solver, and W/O Observer. (a) Training curves based on Qwen2.5-VL-3B. (b) Training curves based on Qwen2.5-VL-7B.]

Figure 3: Training reward curves of PRCO and its role-ablation variants with Qwen2.5-VL-3B and Qwen2.5-VL-7B as backbones.

Effect of role-wise updates. We isolate PRCO's role-specific learning signals by dropping one role's trajectories during policy updates while keeping the trajectory generation procedure unchanged. PRCO w/o Solver updates only from the Observer caption trajectories, whereas PRCO w/o Observer updates only from the Solver answer trajectories. As shown in Table 2, removing Solver updates substantially reduces the overall improvement. This is expected because the base model's reasoning is limited, and outcome-driven optimization on Solver trajectories is necessary for improving end-task accuracy. Notably, PRCO w/o Solver still outperforms the baseline, indicating that utility-driven caption learning alone can improve final-answer accuracy and suggesting that the perception side remains a key bottleneck. In contrast, removing Observer updates yields consistent drops across model scales and benchmarks. This confirms that utility-driven evidence extraction provides complementary benefits on top of outcome-optimized reasoning by strengthening the intermediate evidence signal available to the Solver. We also report the training curves in Fig. 3, which show that PRCO achieves higher rewards throughout training.

Effect of caption-first warmup. We further ablate the caption-first warmup, where the Solver is first trained without image inputs to encourage reliance on the Observer caption before restoring full multimodal inputs. As shown in Table 2, removing warmup degrades performance on both backbones, reducing the overall average on Qwen2.5-VL-7B from 49.63 to 47.16. This suggests that warmup is important for encouraging caption usage.
Without warmup, the Solver can rely on raw visual inputs too early, which weakens the learning signal for the Observer. To further diagnose this behavior, we analyze how the standard deviation of caption rewards evolves during training in Fig. 9. Without warmup, the standard deviation decreases rapidly. Solver outcomes then become largely insensitive to which caption is provided. Consequently, different captions induce similar downstream outcomes and yield low-contrast utility reward to the Observer, weakening credit assignment and making perception–reasoning decoupling less effective later in training.

3.4 More Results and Analysis

Pass@k Performance. Pass@k estimates the probability that a model can solve a question within $k$ attempts, and is commonly used as a proxy for the model's reasoning capability (Chen et al., 2021). We compare PRCO-7B with two competitive Qwen2.5-VL-7B baselines, DAPO-7B and VPPO-7B, by estimating pass@k with $k \in \{1, 2, 4, 8, 16, 32\}$ sampled solutions per question. We report the pass@k on WeMath and MMStar in Fig. 4. As $k$ increases, PRCO-7B exhibits larger gains over the baselines on both benchmarks. On WeMath, the gap over DAPO-7B grows from 3.53 at pass@1 to 7.33 at pass@32. On MMStar, PRCO-7B is comparable to VPPO-7B at pass@1, while the margin increases from 0.47 at pass@1 to 6.27 at pass@32. This trend suggests that PRCO-7B scales better with the sampling budget, indicating more robust reasoning capability.

[Figure 4 plots: Pass@k (y-axis) versus k, the number of samples (x-axis, $2^0$ to $2^5$), for PRCO-7B, VPPO-7B, and DAPO-7B. (a) Pass@k performance on WeMath. (b) Pass@k performance on MMStar.]

Figure 4: Pass@k comparison on WeMath and MMStar for PRCO-7B, DAPO-7B, and VPPO-7B under different inference-time sampling budgets.

Error category analysis.
We conduct an error-category analysis of Qwen2.5-VL-7B and PRCO-7B on WeMath and MathVista. Using the prompt in Fig. 11, we use OpenAI's GPT-5.1 model to categorize each incorrect prediction into three types: perception errors, reasoning errors, and other errors (including knowledge and extraction errors). Compared with Qwen2.5-VL-7B, PRCO reduces both perception and reasoning errors, as shown in Fig. 5. On WeMath, PRCO reduces perception errors by 39.2% and reasoning errors by 23.8%. Notably, PRCO achieves a larger reduction in perception errors than GRPO, consistent with the results in Fig. 1. A similar trend is observed on MathVista, where PRCO reduces both perception and reasoning errors. These results suggest that separate and reliable learning signals improve question-grounded visual perception and enable more robust reasoning under explicit evidence guidance.

[Figure 5 chart; reduction labels recovered from the figure: 23.8%, 39.2%, 19.7%, 31.4%.]

Figure 5: Error category analysis on WeMath and MathVista. Compared with Qwen2.5-VL-7B, PRCO-7B reduces both perception and reasoning errors. For presentation clarity, Knowledge and Extraction errors are grouped into the Other category.

Effect of rollout group size. Rollout group size is a key hyperparameter in online RL, as it controls both the number of within-prompt samples and the training-time rollout budget. We further study PRCO under different rollout budgets by first fixing the Observer group size and varying the Solver rollout group size $G_S$. As shown in Fig. 6, increasing $G_S$ consistently improves both PRCO and DAPO, with a larger gain from 4 to 8 than from 8 to 12. Notably, PRCO with only $G_S = 4$ already outperforms DAPO with $G = 12$, underscoring the effectiveness of PRCO even with a much smaller Solver-side rollout group.
This indicates that PRCO benefits from separate learning signals for perception and reasoning, which decouple the two roles at the gradient level and improve optimization efficiency under a fixed rollout budget. We also study the effect of the Observer rollout group size $G_O$ in Appendix A.4.

[Figure 6 plots: scores at G=4, G=8, and G=12 for Qwen2.5-VL-7B (Base), DAPO, and PRCO. Panels: (a) Math, (b) General, (c) Avg.]

Figure 6: Effect of Solver rollout group size $G_S$ on Qwen2.5-VL-7B. We vary $G_S$ among {4, 8, 12} and compare PRCO with DAPO on Math, General, and Avg. The dashed line denotes the base model performance.

3.5 Case Study

To better understand how PRCO improves question-grounded visual perception, Fig. 7 presents two representative qualitative cases, showing the Observer outputs and the corresponding attention overlays obtained by averaging attention to image tokens across all layers (Dang et al., 2024). In case (a), the Observer accurately extracts only the question-relevant visual evidence from the Rec column, rather than transcribing the full table, indicating that it preserves the evidence necessary for table-based option selection while avoiding unnecessary visual details. In case (b), the Observer localizes points, coordinates, and segment relations from the diagram, providing the full set of visual evidence required for solving the geometry question. In both cases, the attention overlays are concentrated on the corresponding question-relevant regions. More complete case studies are provided in Appendix A.6.

[Figure 7 content.
Question (a): Which model achieves the highest score in terms of Rec? Choices: (A) …
Observer output: The "Rec" column values for various models are as follows: Transformers Agent (GPT-4) = 18.2, LLaMA-Adapter v2-7B = 16.8, OpenFlamingo-9B = 24.6, MiniGPT-4-8B = 27.4, BLIP-2-12B = 27.5, […] (omit), LLaVA-7B (LLaMA-2) = 32.9, LLaVA-13B (V1.3, 336px) = 38.1, LLaVA-13B (LLaMA-2) = 39.2, MM-ReAct-GPT-4 = 33.1.
Question (b): Below is a top-down view of […] (omit). What are the lengths of OC and AB?
Observer output: 1. Point A is located at (0, 2), and point B is located at (2, 2). 2. Point O is (1, 1), which appears to be the center of the circle. 3. Line segment AB connects points A(0, 2) and B(2, 2), forming a horizontal line segment. 4. Line segment OC is a vertical line segment connecting O(1, 1) to the midpoint of AB.]

Figure 7: Qualitative analysis of PRCO-7B on two representative cases. For each case, we show the Observer output together with an attention overlay obtained by averaging attention to image tokens across all layers.

4 Related Work

RL with verifiable rewards for multimodal reasoning. Reinforcement learning with verifiable rewards (RLVR) defines rewards via automatic outcome verification. It is often optimized with group-based PPO variants such as GRPO and DAPO (Shao et al., 2024; Yu et al., 2025). Recent work has begun to explore RLVR for MLLMs, with improvements in data construction, curricula, and rollout strategies. Vision-R1 bootstraps multimodal chain-of-thought with staged RL schedules (Huang et al., 2025b), NoisyRollout perturbs images during rollouts to improve exploration and robustness (Liu et al., 2025b), and VL-Rethinker stabilizes training via selective replay and forced rethinking (Wang et al., 2025a). RLVR has also been paired with explicit visual operations, e.g., Active-O3 (Zhu et al., 2025), DeepEyes (Zheng et al., 2025b), Pixel Reasoner (Wang et al., 2025b), and OpenThinkIMG (Su et al., 2025).

Perception-aware RL for multimodal reasoning. Beyond outcome rewards, recent work incorporates perception-aware signals and objectives to improve visual perception in multimodal reasoning (Wang et al., 2025e; Xiao et al.
, 2025 ; Zhang et al. , 2025 ). Perception-R1 introduces an explicit perception re ward to score the fidelity of visual evidence in trajectories ( Xiao et al. , 2025 ). CapRL defines caption rew ards by their question-answering utility for a vision-free LLM ( Xing et al. , 2025 ), while SOPHIA adopts semi-off-polic y RL that propa- gates outcome rew ards from external slo w-thinking traces back to the model’ s visual understanding ( Shen et al. , 2025b ). Other caption-centric or con- sistency objecti v es similarly optimize descriptions for downstream solvability ( Gou et al. , 2025 ; T u et al. , 2025 ). P APO integrates perception signals into policy optimization via objectiv e-le v el regular - ization ( W ang et al. , 2025e ). Re ward designs based on v erifiable perception proxies or perception gates provide complementary supervision ( W ang et al. , 2025c ; Zhang et al. , 2025 ). Credit assignment is further refined by reweighting updates tow ard visually dependent tokens ( Huang et al. , 2025a , 2026b ). 5 Conclusion In this paper , we presented PRCO, a dual-role RL VR frame work for multimodal reasoning that disentangles perception and reasoning under a shared policy . By assigning separate and reli- able learning signals to an Observer for question- conditioned e vidence captioning and a Solver for e vidence-conditioned reasoning, PRCO enables perception–reasoning coe volution during RL VR training. Extensiv e experiments on eight chal- lenging benchmarks sho wed consistent gains ov er strong RL VR baselines across model scales, while ablation and diagnostic analyses further v alidated the ef fecti veness of its key design choices. Over- all, these results suggest that role-specific learning signals are a promising direction for improving 8 multimodal reasoning under verifiable re w ards. Limitations Our current study focuses on multimodal reasoning benchmarks with concise and verifiable answers. 
Further evaluation is needed to determine how well PRCO generalizes to more open-ended generation settings. Extending the framework to broader multimodal generation tasks is an important direction for future work, since reward signals in these settings are often less well defined. In addition, the Observer is trained with auxiliary supervision for leakage detection and answer verification. Although this auxiliary supervision is helpful in our setting, it may also introduce additional noise and computational overhead. Finally, representing visual evidence as short captions is inherently lossy. Important aspects of the input, such as global structure (e.g., layout and texture), fine-grained spatial relations, and geometric details that are difficult to compress faithfully into text, may be only partially preserved. Future work could therefore explore richer intermediate representations for visual inputs that cannot be adequately captured by captions.

Ethical Considerations

This work aims to improve multimodal reasoning by explicitly separating perception and reasoning during reinforcement learning. All experiments are conducted on publicly available datasets and benchmarks. As in prior work, these data sources may contain social biases, annotation artifacts, or other imperfections that can affect model behavior and evaluation outcomes. We do not identify additional ethical risks introduced specifically by our method beyond those already associated with multimodal model training and evaluation on existing public data. We encourage continued attention to data quality, transparent evaluation, and responsible reporting of model capabilities and limitations.

References

Meng Cao, Haoze Zhao, Can Zhang, Xiaojun Chang, Ian Reid, and Xiaodan Liang. 2025. Ground-r1: Incentivizing grounded visual reasoning via reinforcement learning. arXiv preprint arXiv:2505.20272.

Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Yuhang Zang, Zehui Chen, Haodong Duan, Jiaqi Wang, Yu Qiao, Dahua Lin, and 1 others. 2024. Are we on the right way for evaluating large vision-language models? Advances in Neural Information Processing Systems, 37:27056–27087.

Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde De Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, and 1 others. 2021. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374.

Yunkai Dang, Kaichen Huang, Jiahao Huo, Yibo Yan, Sirui Huang, Dongrui Liu, Mengxi Gao, Jie Zhang, Chen Qian, Kun Wang, and 1 others. 2024. Explainable and interpretable multimodal large language models: A comprehensive survey. arXiv preprint arXiv:2412.02104.

Haodong Duan, Junming Yang, Yuxuan Qiao, Xinyu Fang, Lin Chen, Yuan Liu, Xiaoyi Dong, Yuhang Zang, Pan Zhang, Jiaqi Wang, and 1 others. 2024. Vlmevalkit: An open-source toolkit for evaluating large multi-modality models. In Proceedings of the 32nd ACM international conference on multimedia, pages 11198–11201.

Yue Fan, Xuehai He, Diji Yang, Kaizhi Zheng, Ching-Chen Kuo, Yuting Zheng, Sravana Jyothi Narayanaraju, Xinze Guan, and Xin Eric Wang. 2025. Grit: Teaching mllms to think with images. arXiv preprint arXiv:2505.15879.

Yunhao Gou, Kai Chen, Zhili Liu, Lanqing Hong, Xin Jin, Zhenguo Li, James T Kwok, and Yu Zhang. 2025. Perceptual decoupling for scalable multi-modal reasoning via reward-optimized captioning. arXiv e-prints, pages arXiv–2506.

Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, and 1 others. 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948.

Ailin Huang, Ang Li, Aobo Kong, Bin Wang, Binxing Jiao, Bo Dong, Bojun Wang, Boyu Chen, Brian Li, Buyun Ma, and 1 others. 2026a.
Step 3.5 flash: Open frontier-level intelligence with 11b active parameters. arXiv preprint arXiv:2602.10604.

Muye Huang, Lingling Zhang, Yifei Li, Yaqiang Wu, and Jun Liu. 2026b. Sketchvl: Policy optimization via fine-grained credit assignment for chart understanding and more. arXiv preprint arXiv:2601.05688.

Siyuan Huang, Xiaoye Qu, Yafu Li, Yun Luo, Zefeng He, Daizong Liu, and Yu Cheng. 2025a. Spotlight on token perception for multimodal reinforcement learning. arXiv preprint arXiv:2510.09285.

Wenxuan Huang, Bohan Jia, Zijie Zhai, Shaosheng Cao, Zheyu Ye, Fei Zhao, Zhe Xu, Xu Tang, Yao Hu, and Shaohui Lin. 2025b. Vision-r1: Incentivizing reasoning capability in multimodal large language models. arXiv preprint arXiv:2503.06749.

Sicong Leng, Jing Wang, Jiaxi Li, Hao Zhang, Zhiqiang Hu, Boqiang Zhang, Yuming Jiang, Hang Zhang, Xin Li, Lidong Bing, and 1 others. 2025. Mmr1: Enhancing multimodal reasoning with variance-aware sampling and open resources. arXiv preprint arXiv:2509.21268.

Shenshen Li, Kaiyuan Deng, Lei Wang, Hao Yang, Chong Peng, Peng Yan, Fumin Shen, Heng Tao Shen, and Xing Xu. 2025a. Truth in the few: High-value data selection for efficient multi-modal reasoning. arXiv preprint arXiv:2506.04755.

Yuting Li, Lai Wei, Kaipeng Zheng, Jingyuan Huang, Guilin Li, Bo Wang, Linghe Kong, Lichao Sun, and Weiran Huang. 2025b. Revisiting visual understanding in multimodal reasoning through a lens of image perturbation. arXiv preprint arXiv:2506.09736.

Zongxia Li, Wenhao Yu, Chengsong Huang, Rui Liu, Zhenwen Liang, Fuxiao Liu, Jingxi Che, Dian Yu, Jordan Boyd-Graber, Haitao Mi, and 1 others. 2025c. Self-rewarding vision-language model via reasoning decomposition. arXiv preprint arXiv:2508.19652.

Chengzhi Liu, Zhongxing Xu, Qingyue Wei, Juncheng Wu, James Zou, Xin Eric Wang, Yuyin Zhou, and Sheng Liu. 2025a. More thinking, less seeing? assessing amplified hallucination in multimodal reasoning models.
arXiv preprint arXiv:2505.21523.

Xiangyan Liu, Jinjie Ni, Zijian Wu, Chao Du, Longxu Dou, Haonan Wang, Tianyu Pang, and Michael Qizhe Shieh. 2025b. Noisyrollout: Reinforcing visual reasoning with data augmentation. arXiv preprint arXiv:2504.13055.

Ziyu Liu, Zeyi Sun, Yuhang Zang, Xiaoyi Dong, Yuhang Cao, Haodong Duan, Dahua Lin, and Jiaqi Wang. 2025c. Visual-rft: Visual reinforcement fine-tuning. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 2034–2044.

Ilya Loshchilov and Frank Hutter. 2017. Decoupled weight decay regularization. arXiv preprint arXiv:1711.05101.

Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. 2023. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts. arXiv preprint arXiv:2310.02255.

Fanqing Meng, Lingxiao Du, Zongkai Liu, Zhixiang Zhou, Quanfeng Lu, Daocheng Fu, Botian Shi, Wenhai Wang, Junjun He, Kaipeng Zhang, and 1 others. 2025. Mm-eureka: Exploring visual aha moment with rule-based large-scale reinforcement learning. arXiv preprint arXiv:2503.07365.

Runqi Qiao, Qiuna Tan, Guanting Dong, MinhuiWu MinhuiWu, Chong Sun, Xiaoshuai Song, Jiapeng Wang, Zhuoma Gongque, Shanglin Lei, Yifan Zhang, and 1 others. 2025. We-math: Does your large multimodal model achieve human-like mathematical reasoning? In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 20023–20070.

Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, and 1 others. 2024. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300.

Haozhan Shen, Peng Liu, Jingcheng Li, Chunxin Fang, Yibo Ma, Jiajia Liao, Qiaoli Shen, Zilun Zhang, Kangjia Zhao, Qianqian Zhang, and 1 others. 2025a.
Vlm-r1: A stable and generalizable r1-style large vision-language model. arXiv preprint arXiv:2504.07615.

Junhao Shen, Haiteng Zhao, Yuzhe Gu, Songyang Gao, Kuikun Liu, Haian Huang, Jianfei Gao, Dahua Lin, Wenwei Zhang, and Kai Chen. 2025b. Semi-off-policy reinforcement learning for vision-language slow-thinking reasoning. arXiv preprint arXiv:2507.16814.

Zhaochen Su, Linjie Li, Mingyang Song, Yunzhuo Hao, Zhengyuan Yang, Jun Zhang, Guanjie Chen, Jiawei Gu, Juntao Li, Xiaoye Qu, and 1 others. 2025. Openthinkimg: Learning to think with images via visual tool reinforcement learning. arXiv preprint arXiv:2505.08617.

Kimi Team, Angang Du, Bofei Gao, Bowei Xing, Changjiu Jiang, Cheng Chen, Cheng Li, Chenjun Xiao, Chenzhuang Du, Chonghua Liao, and 1 others. 2025. Kimi k1.5: Scaling reinforcement learning with llms. arXiv preprint arXiv:2501.12599.

Qwen Team. 2025. Qwen2.5-vl.

Songjun Tu, Qichao Zhang, Jingbo Sun, Yuqian Fu, Linjing Li, Xiangyuan Lan, Dongmei Jiang, Yaowei Wang, and Dongbin Zhao. 2025. Perception-consistency multimodal large language models reasoning via caption-regularized policy optimization. arXiv preprint arXiv:2509.21854.

Zhongwei Wan, Zhihao Dou, Che Liu, Yu Zhang, Dongfei Cui, Qinjian Zhao, Hui Shen, Jing Xiong, Yi Xin, Yifan Jiang, and 1 others. 2025. Srpo: Enhancing multimodal llm reasoning via reflection-aware reinforcement learning. arXiv preprint arXiv:2506.01713.

Haozhe Wang, Chao Qu, Zuming Huang, Wei Chu, Fangzhen Lin, and Wenhu Chen. 2025a. Vl-rethinker: Incentivizing self-reflection of vision-language models with reinforcement learning. arXiv preprint arXiv:2504.08837.

Haozhe Wang, Alex Su, Weiming Ren, Fangzhen Lin, and Wenhu Chen. 2025b. Pixel reasoner: Incentivizing pixel-space reasoning with curiosity-driven reinforcement learning. arXiv preprint arXiv:2505.15966.

Ke Wang, Junting Pan, Weikang Shi, Zimu Lu, Houxing Ren, Aojun Zhou, Mingjie Zhan, and Hongsheng Li. 2024.
Measuring multimodal mathematical reasoning with math-vision dataset. Advances in Neural Information Processing Systems, 37:95095–95169.

Xiyao Wang, Zhengyuan Yang, Chao Feng, Yongyuan Liang, Yuhang Zhou, Xiaoyu Liu, Ziyi Zang, Ming Li, Chung-Ching Lin, Kevin Lin, and 1 others. 2025c. Vicrit: A verifiable reinforcement learning proxy task for visual perception in vlms. arXiv preprint arXiv:2506.10128.

Xiyao Wang, Zhengyuan Yang, Chao Feng, Hongjin Lu, Linjie Li, Chung-Ching Lin, Kevin Lin, Furong Huang, and Lijuan Wang. 2025d. Sota with less: Mcts-guided sample selection for data-efficient visual reasoning self-improvement. arXiv preprint arXiv:2504.07934.

Yongyao Wang, Ziqi Miao, Lu Yang, Haonan Jia, Wenting Yan, Chen Qian, and Lijun Li. 2026. Tabsieve: Explicit in-table evidence selection for tabular prediction. arXiv preprint arXiv:2602.11700.

Zhenhailong Wang, Xuehang Guo, Sofia Stoica, Haiyang Xu, Hongru Wang, Hyeonjeong Ha, Xiusi Chen, Yangyi Chen, Ming Yan, Fei Huang, and 1 others. 2025e. Perception-aware policy optimization for multimodal reasoning. arXiv preprint arXiv:2507.06448.

Zijian Wu, Jinjie Ni, Xiangyan Liu, Zichen Liu, Hang Yan, and Michael Qizhe Shieh. 2025. Synthrl: Scaling visual reasoning with verifiable data synthesis. arXiv preprint arXiv:2506.02096.

Canran Xiao, Tianxiang Xu, Yiyang Jiang, Haoyu Gao, Yuhan Wu, and 1 others. 2026. Reversible primitive–composition alignment for continual vision–language learning. In The Fourteenth International Conference on Learning Representations.

Tong Xiao, Xin Xu, Zhenya Huang, Hongyu Gao, Quan Liu, Qi Liu, and Enhong Chen. 2025. Perception-r1: Advancing multimodal reasoning capabilities of mllms via visual perception reward. arXiv preprint arXiv:2506.07218.

Yijia Xiao, Edward Sun, Tianyu Liu, and Wei Wang. 2024. Logicvista: Multimodal llm logical reasoning benchmark in visual contexts. arXiv preprint arXiv:2407.04973.

Long Xing, Xiaoyi Dong, Yuhang Zang, Yuhang Cao, Jianze Liang, Qidong Huang, Jiaqi Wang, Feng Wu, and Dahua Lin. 2025. Caprl: Stimulating dense image caption capabilities via reinforcement learning. arXiv preprint arXiv:2509.22647.

Zihang Xu, Haozhi Xie, Ziqi Miao, Wuxuan Gong, Chen Qian, and Lijun Li. 2026. Stable adaptive thinking via advantage shaping and length-aware gradient regulation. arXiv preprint.

An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, and 1 others. 2025. Qwen3 technical report. arXiv preprint arXiv:2505.09388.

Huanjin Yao, Qixiang Yin, Jingyi Zhang, Min Yang, Yibo Wang, Wenhao Wu, Fei Su, Li Shen, Minghui Qiu, Dacheng Tao, and 1 others. 2025a. R1-sharevl: Incentivizing reasoning capability of multimodal large language models via share-grpo. arXiv preprint arXiv:2505.16673.

Zijun Yao, Yantao Liu, Yanxu Chen, Jianhui Chen, Junfeng Fang, Lei Hou, Juanzi Li, and Tat-Seng Chua. 2025b. Are reasoning models more prone to hallucination? arXiv preprint.

Qiying Yu, Zheng Zhang, Ruofei Zhu, Yufeng Yuan, Xiaochen Zuo, Yu Yue, Weinan Dai, Tiantian Fan, Gaohong Liu, Lingjun Liu, and 1 others. 2025. Dapo: An open-source llm reinforcement learning system at scale. arXiv preprint arXiv:2503.14476.

Xiang Yue, Tianyu Zheng, Yuansheng Ni, Yubo Wang, Kai Zhang, Shengbang Tong, Yuxuan Sun, Botao Yu, Ge Zhang, Huan Sun, and 1 others. 2025. Mmmu-pro: A more robust multi-discipline multimodal understanding benchmark. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 15134–15186.

Shuang Zeng, Xinyuan Chang, Mengwei Xie, Xinran Liu, Yifan Bai, Zheng Pan, Mu Xu, Xing Wei, and Ning Guo. 2025a. Futuresightdrive: Thinking visually with spatio-temporal cot for autonomous driving. arXiv preprint arXiv:2505.17685.

Shuang Zeng, Dekang Qi, Xinyuan Chang, Feng Xiong, Shichao Xie, Xiaolong Wu, Shiyi Liang, Mu Xu, and Xing Wei. 2025b. Janusvln: Decoupling semantics and spatiality with dual implicit memory for vision-language navigation. arXiv preprint arXiv:2509.22548.

Bo Zhang, Jiaxuan Guo, Lijun Li, Dongrui Liu, Sujin Chen, Guanxu Chen, Zhijie Zheng, Qihao Lin, Lewen Yan, Chen Qian, and 1 others. 2026. Deepsight: An all-in-one lm safety toolkit. arXiv preprint arXiv:2602.12092.

Chi Zhang, Haibo Qiu, Qiming Zhang, Yufei Xu, Zhixiong Zeng, Siqi Yang, Peng Shi, Lin Ma, and Jing Zhang. 2025. Perceptual-evidence anchored reinforced learning for multimodal reasoning. arXiv preprint arXiv:2511.18437.

Renrui Zhang, Dongzhi Jiang, Yichi Zhang, Haokun Lin, Ziyu Guo, Pengshuo Qiu, Aojun Zhou, Pan Lu, Kai-Wei Chang, Yu Qiao, and 1 others. 2024. Mathverse: Does your multi-modal llm truly see the diagrams in visual math problems? In European Conference on Computer Vision, pages 169–186. Springer.

Yaowei Zheng, Junting Lu, Shenzhi Wang, Zhangchi Feng, Dongdong Kuang, and Yuwen Xiong. 2025a. Easyr1: An efficient, scalable, multi-modality rl training framework. arXiv preprint arXiv:2501.12345.

Ziwei Zheng, Michael Yang, Jack Hong, Chenxiao Zhao, Guohai Xu, Le Yang, Chao Shen, and Xing Yu. 2025b. Deepeyes: Incentivizing "thinking with images" via reinforcement learning. arXiv preprint arXiv:2505.14362.

Yixiao Zhou, Yang Li, Dongzhou Cheng, Hehe Fan, and Yu Cheng. 2026. Look inward to explore outward: Learning temperature policy from llm internal states via hierarchical rl. arXiv preprint arXiv:2602.13035.

Muzhi Zhu, Hao Zhong, Canyu Zhao, Zongze Du, Zheng Huang, Mingyu Liu, Hao Chen, Cheng Zou, Jingdong Chen, Ming Yang, and 1 others. 2025. Active-o3: Empowering multimodal large language models with active perception via grpo. arXiv preprint arXiv:2505.21457.

Chengke Zou, Xingang Guo, Rui Yang, Junyu Zhang, Bin Hu, and Huan Zhang.
2024. Dynamath: A dynamic visual benchmark for evaluating mathematical reasoning robustness of vision language models. arXiv preprint arXiv:2411.00836.

A Appendix

A.1 Evaluation Details

We evaluate our method on a diverse set of benchmarks spanning both math-related reasoning tasks and general multimodal tasks. Table 3 summarizes the benchmarks used in our evaluation, where the evaluation splits and reported metrics follow the settings in VLMEvalKit (Duan et al., 2024). During evaluation, we strictly use the official prompts for all open-source MLLM baselines to avoid potential evaluation discrepancies. For PRCO, we use role-specific prompts for the Observer and Solver during inference. The Observer is prompted to produce a question-conditioned evidence caption, while the Solver is prompted to answer the question based on the caption and image.

Math-Related Reasoning Tasks. This category evaluates mathematical reasoning abilities.

• MathVerse (Zhang et al., 2024) is a benchmark for multimodal mathematical reasoning that examines whether MLLMs truly understand diagrams. By presenting each problem in multiple versions with different distributions of textual and visual information, it enables fine-grained analysis of a model's reliance on visual versus textual cues.

• MathVision (Wang et al., 2024) focuses on competition-level multimodal math reasoning. Its problems are drawn from real mathematics competitions and cover multiple disciplines and difficulty levels, providing a challenging testbed for advanced reasoning over diagrams and symbolic content.

• MathVista (Lu et al., 2023) is a comprehensive benchmark for visual mathematical reasoning. It covers diverse task types such as geometry, charts, tables, and scientific figures, making it a broad benchmark for evaluating mathematical reasoning in visually grounded settings.

• WeMath (Qiao et al., 2025) introduces a diagnostic evaluation paradigm for multimodal math reasoning. By decomposing problems into sub-problems based on knowledge concepts, it supports fine-grained analysis of a model's strengths and weaknesses.

• DynaMath (Zou et al., 2024) is designed to evaluate the robustness and generalization of multimodal mathematical reasoning. It generates dynamic variations of seed problems, allowing evaluation of whether a model can maintain consistent reasoning under controlled changes.

General Multimodal Tasks. This category evaluates broader multimodal understanding abilities.

• LogicVista (Xiao et al., 2024) focuses on logical reasoning in visual contexts. Although not limited to mathematics, it is useful for evaluating whether models can perform structured reasoning grounded in diagrams and other visual inputs.

• MMMU-Pro (Yue et al., 2025) is an enhanced benchmark for multidisciplinary multimodal understanding and reasoning. It is designed to reduce shortcuts from textual clues and provide a more rigorous evaluation of genuine visual understanding across subjects.

• MMStar (Chen et al., 2024) is a curated benchmark for core multimodal reasoning abilities. Its samples are designed to require genuine visual understanding, making it a concise but challenging benchmark for multimodal reasoning evaluation.

Evaluation parameters. Unless otherwise specified, we use greedy decoding for single-sample evaluation, with temperature set to 0.0, top-p to 1.0, top-k to -1, and the maximum number of generated tokens to 2048. For pass@k evaluation, we instead use temperature 0.6, top-p 0.95, and top-k -1. This setting follows common evaluation practice (Duan et al., 2024; Zhang et al., 2026).

Implementation details of error analysis. For error categorization, we use OpenAI's GPT-5.1 with temperature set to 0.0.
For each incorrect prediction, the classifier receives the following inputs simultaneously: Image, Question, Model response, and Gold answer. The detailed classification prompt is shown in Fig. 11. We classify each error into one of five categories: Perception, Reasoning, Knowledge, Extraction, and Other. In practice, we find that the numbers of Knowledge, Extraction, and Other errors are relatively small. Therefore, for clearer visualization, we merge these three categories into a single Other category in Fig. 5.

A.2 Training Details

In this section, we describe the training details of the different methods. All training is conducted on 8 NVIDIA H200 GPUs.

For the RLVR baselines GRPO and DAPO, we follow the EasyR1 implementations (Zheng et al., 2025a) exactly. GRPO uses clipping factors $\epsilon_l = 0.2$ and $\epsilon_h = 0.3$ with a reference KL penalty coefficient $\beta = 0.01$, while DAPO uses $\epsilon_l = 0.2$ and $\epsilon_h = 0.28$, removes the reference KL term, enables token-level loss averaging, and adopts dynamic sampling with a maximum of 20 retries. Other training hyperparameters, including the number of training steps, rollout batch size, and maximum sequence length, are summarized in Table 4. More implementation details can be found in the EasyR1 codebase.

Our implementation of PRCO is based on the EasyR1 framework (Zheng et al., 2025a). We train all models on ViRL39K and use MMK12 (Meng et al., 2025) as the validation set. Following DAPO (Yu et al., 2025), we use dynamic sampling, clip-higher, and token-level policy gradient loss. The clipping factors are set to $\epsilon_l = 0.2$ and $\epsilon_h = 0.28$, respectively, and no KL-divergence penalty is applied. We also remove the standard-deviation normalization term when computing the grouped advantage in PRCO. We find this design more suitable for role-specific optimization, as it preserves the original relative reward differences within each role and leads to more faithful advantage updates for both the Observer and the Solver. Table 4 summarizes the main hyperparameters used in our experiments. For PRCO, the maximum rollout length is set to 1024 tokens for the Observer and 2048 tokens for the Solver. We use Qwen3-VL-8B-Instruct (Yang et al., 2025) as the auxiliary model for answer leakage checking. We also adopt a caption-first warmup for the first 40 training steps, during which the Solver is trained without image inputs to encourage caption conditioning before restoring full multimodal inputs.

A.3 Prompt Templates

In this section, we present the prompts used in our experiments. For the RLVR baselines, including GRPO and DAPO, we follow the prompt setting used in EasyR1 (Zheng et al., 2025a), where the model is asked to first reason through the problem and then provide the final answer in a boxed format. For PRCO, we use role-specific prompts for the Observer and Solver. The Observer is prompted to produce a question-conditioned evidence caption that captures the question-relevant visual evidence without revealing the final answer, while the Solver is prompted to answer the question based primarily on the caption and consult the image only when necessary. Fig. 10 shows the prompts used for PRCO and the RLVR baselines.

Beyond the main training and inference prompts, we also employ auxiliary prompts for both training and analysis. Specifically, we use a leakage-checking prompt to verify that the Observer caption does not directly reveal the answer, and an error-type classification prompt to categorize model failures in the error analysis. Fig. 11 shows these auxiliary prompts.
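
As a rough illustration of the grouped-advantage choice described in Appendix A.2, the sketch below contrasts a standardized group advantage with a mean-centered variant that drops the standard-deviation term. It is a minimal sketch under stated assumptions, not the EasyR1 or PRCO implementation: the function name `grouped_advantage`, the `normalize_std` flag, and the `eps` constant are hypothetical, and the example rewards are made up for illustration.

```python
from statistics import mean, pstdev
from typing import List


def grouped_advantage(rewards: List[float], normalize_std: bool = False,
                      eps: float = 1e-6) -> List[float]:
    """Advantage of each rollout relative to its own prompt group.

    normalize_std=True  -> (r - mean) / (std + eps), the standardized form.
    normalize_std=False -> r - mean, i.e. the std term is removed.
    All names here are illustrative assumptions, not the paper's code.
    """
    if not rewards:
        return []
    mu = mean(rewards)
    centered = [r - mu for r in rewards]
    if not normalize_std:
        return centered
    sigma = pstdev(rewards)  # population standard deviation over the group
    return [c / (sigma + eps) for c in centered]


# Two hypothetical groups of four rollouts with binary rewards.
mixed_group = [1.0, 0.0, 0.0, 0.0]
easy_group = [1.0, 1.0, 1.0, 0.0]

print(grouped_advantage(mixed_group))  # [0.75, -0.25, -0.25, -0.25]
print(grouped_advantage(easy_group))   # [0.25, 0.25, 0.25, -0.75]
print(grouped_advantage(mixed_group, normalize_std=True))
```

Under these assumptions, the mean-centered form keeps the raw reward gaps within a group, whereas standardization rescales them by the group's spread. In a dual-role setup the same function would presumably be applied separately to Observer groups and Solver groups.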
A.4 More Results and Analysis W e additionally ev aluate three PRCO-7B v ariants to study the roles of Solver -side visual ground- ing, leakage suppression, and coev olving utility feedback: ( i ) PRCO w/ I S = ∅ , which keeps the Solver image input empty throughout the RL stage; ( ii ) PRCO w/o Leakage Checker , which removes the leakage checker from the Observer utility re- ward; and ( iii ) PRCO w/ Fixed Utility Estimator , which replaces the co-ev olving Solver with a fix ed Qwen2.5-VL-7B for caption utility estimation. T able 5 shows that all three variants underper- form the full PRCO-7B, confirming that PRCO’ s gains arise from the combination of e vidence- conditioned reasoning, utility re ward with leakage checking, and Observer–Solv er coe volution. The largest drop is observed for PRCO w/ I S = ∅ , where the Solver ne ver re gains access to the image after the caption-first warmup. This suggests that while restricting the Solver to caption-based evi- dence is beneficial in early training, access to the image remains important in later RL optimization. 13 Benchmark Evaluation Split Num. of Samples Metric MathV erse ( Zhang et al. , 2024 ) MathV erse_MINI_V ision_Only 788 Overall MathV ista ( Lu et al. , 2023 ) MathV ista_MINI 1000 acc MathV ision ( W ang et al. , 2024 ) MathV ision 3040 acc W eMath ( Qiao et al. , 2025 ) W eMath 1740 Score (Strict) DynaMath ( Zou et al. , 2024 ) DynaMath 5010 Overall (W orst Case) LogicV ista ( Xiao et al. , 2024 ) LogicV ista 447 Overall MMMU-Pro ( Y ue et al. , 2025 ) MMMU_Pro_V 1730 Overall MMStar ( Chen et al. , 2024 ) MMStar 1500 Overall T able 3: Details of the benchmarks we e v aluate. The ev aluation splits and reported metrics follow the settings in VLMEvalKit ( Duan et al. , 2024 ). W e report single-sample greedy scores under each benchmark’ s official VLMEvalKit metric, which we denote as accurac y for simplicity . Method lr Max Len. Steps W armup Opt. Rollout BS Fr eeze VT T emp. 
top- p top- k GRPO 1e-6 2048 200 – AdamW 384 False 1 1.0 -1 D APO 1e-6 2048 200 – AdamW 384 False 1 1.0 -1 PRCO 1e-6 1024 / 2048 200 40 AdamW 384 False 1 1.0 -1 T able 4: T raining hyperparameters used in our experiments. For GRPO and D APO, Max Len. denotes the maximum rollout length of the single policy . For PRCO, it denotes Observ er / Solver maximum rollout lengths. In PRCO, the caption is the primary e vidence chan- nel, but restored image access still helps recover global structure, fine-grained spatial relations, and geometric details that are difficult to fully compress into text. Removing the leakage checker also de- grades the ov erall a v erage, indicating that suppress- ing answer leakage is important for learning useful intermediate e vidence. W ithout leakage checking, the Observer is more likely to e xploit answer short- cutting by placing the final answer directly in the caption, rather than extracting question-rele v ant vi- sual e vidence. This weakens the utility rew ard as a learning signal for evidence e xtraction and blurs the credit assignment between perception and rea- soning. PRCO w/ Fixed Utility Estimator further underperforms standard PRCO, suggesting that Ob- server learning benefits more from utility feedback that co-e volv es with the Solver and remains better aligned with its changing information needs. Observer rollout group size. W e further vary the observer rollout group size G O on Qwen2.5- VL-7B while keeping the Solver rollout group size fixed. As shown in Fig. 8 , the effect of G O is not monotonic: performance improves from G O = 2 to G O = 4 , b ut slightly declines at G O = 8 . A pos- sible e xplanation is that perception saturates earlier than reasoning, which is also consistent with Fig. 3 , where the v ariant without Solv er updates reaches its plateau relati vely early . 
Once the Observer already provides sufficiently informative captions, further increasing $G_O$ yields diminishing returns and may reduce the relative benefit of allocating more rollouts to the Solver. Under a fixed compute budget, allocating additional rollouts to the Solver appears more effective than further increasing the Observer rollout group size. This trend is also reflected in Fig. 8 and Fig. 6: the setting with $G_O = 8$, $G_S = 8$ performs worse than $G_O = 4$, $G_S = 12$, even though the former uses a larger Observer rollout group. We use $G_O = 4$, which provides a good trade-off between performance and training cost.

[Figure 8 panels: (a) Math, (b) General, (c) Avg. x-axis: Observer rollout group size (G=2, G=4, G=8); dashed line: Qwen2.5-VL-7B (Base).]

Figure 8: Ablation of the observer rollout group size $G_O$ in PRCO on Qwen2.5-VL-7B. Bars show different $G_O$ settings (2, 4, 8) on Math, General, and Avg, and the dashed line indicates the base model performance.

| Setting | MathVerse | MathVision | MathVista | WeMath | DynaMath | LogicVista | MMMU-Pro | MMStar | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| Qwen2.5-VL-7B (Base) | 43.02 | 25.46 | 70.20 | 35.43 | 20.35 | 45.41 | 35.49 | 64.26 | 42.45 |
| PRCO-7B (Ours) | 49.49 | 30.86 | 77.10 | 50.29 | 29.74 | 49.66 | 42.08 | 67.80 | 49.63 |
| PRCO w/ $I_S = \emptyset$ | 47.34 | 28.82 | 75.20 | 43.81 | 28.94 | 48.77 | 42.89 | 66.13 | 47.74 (↓ 1.89) |
| PRCO w/ Fixed Utility Estimator | 49.24 | 29.67 | 76.70 | 45.52 | 29.74 | 48.55 | 40.17 | 66.20 | 48.22 (↓ 1.41) |
| PRCO w/o Leakage Checker | 50.00 | 29.31 | 76.00 | 44.19 | 29.54 | 51.45 | 41.33 | 67.60 | 48.68 (↓ 0.95) |

Table 5: Additional ablations of PRCO-7B. We report benchmark scores on eight benchmarks. Red downward arrows in the Avg. column indicate the drop relative to PRCO-7B (Ours).

A.5 PRCO on Qwen3-VL-8B-Instruct

To further evaluate PRCO on a stronger vision-language backbone, we also train Qwen3-VL-8B-Instruct (Yang et al., 2025). The training details are exactly the same as those in Appendix A.2. On this backbone, we compare PRCO with two RLVR baselines, DAPO and GRPO. As shown in Table 6, PRCO attains the highest average score (63.05, versus 61.79 for DAPO and 59.75 for GRPO) and leads on seven of the eight benchmarks, with LogicVista the only exception, further demonstrating its effectiveness on stronger models.

| Setting | MathVerse (Vision Only) | MathVision | MathVista | WeMath | DynaMath | LogicVista | MMMU-Pro-V | MMStar | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| Qwen3-VL-8B-Instruct (Base) | 54.57 | 36.64 | 74.90 | 51.33 | 38.12 | 53.02 | 41.27 | 69.40 | 52.41 |
| GRPO | 64.59 | 46.94 | 78.30 | 59.62 | 41.12 | 61.75 | 52.72 | 73.00 | 59.75 |
| DAPO | 65.61 | 50.13 | 78.30 | 66.86 | 43.31 | 62.19 | 53.87 | 74.07 | 61.79 |
| PRCO (Ours) | 69.67 | 51.78 | 79.00 | 68.57 | 46.11 | 60.40 | 54.57 | 74.27 | 63.05 |

Table 6: Comparison of PRCO with GRPO and DAPO on Qwen3-VL-8B-Instruct. We report benchmark scores on eight benchmarks. The best and second-best results within each backbone are highlighted in bold and underlined.

[Figure 9 panels: (a) Caption reward std based on Qwen2.5-VL-3B (curves: PRCO-3B, W/O warmup-3B); (b) Caption reward std based on Qwen2.5-VL-7B (curves: PRCO-7B, W/O warmup-7B). x-axis: Training Steps (0 to 200); y-axis: Caption reward std.]

Figure 9: Training caption reward standard deviation curves of PRCO and its W/O warmup variant with Qwen2.5-VL-3B and Qwen2.5-VL-7B as backbones.

A.6 Case Study

For Fig. 7, we construct the attention heatmap by extracting attention weights from the Observer's generated output tokens to visual tokens, averaging them across all heads and transformer layers, and mapping the aggregated scores back to the 2D visual-token layout.

We further present four representative qualitative cases produced by PRCO trained on Qwen2.5-VL-7B in Figs. 12, 13, 14, and 15, covering synthetic object filtering, bar-chart reasoning, table-based option selection, and diagram-grounded geometry reasoning. These examples span several visual formats that frequently appear in our evaluation suite, including rendered scenes, charts, tables, and geometric diagrams.
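The heatmap construction described above for Fig. 7 can be illustrated with a minimal, dependency-free sketch. It is not the authors' implementation: the nested-list layout `attn[layer][head][generated_token][sequence_position]`, the names `attention_heatmap`, `visual_positions`, and `grid_hw`, the averaging over generated tokens, and the row-major grid order are all assumptions made for illustration.

```python
from typing import List, Sequence, Tuple

# Hypothetical layout: attn[layer][head][generated_token][sequence_position]
Attention = Sequence[Sequence[Sequence[Sequence[float]]]]


def attention_heatmap(
    attn: Attention,
    visual_positions: Sequence[int],
    grid_hw: Tuple[int, int],
) -> List[List[float]]:
    """Average attention from generated tokens to visual tokens, then reshape.

    Averaging over generated tokens and the row-major grid order are
    assumptions of this sketch, not details confirmed by the paper.
    """
    height, width = grid_hw
    if len(visual_positions) != height * width:
        raise ValueError("visual_positions must contain height * width entries")

    totals = [0.0] * len(visual_positions)
    count = 0
    for layer in attn:
        for head in layer:
            for token_row in head:
                # Keep only the attention mass that lands on visual tokens.
                for i, pos in enumerate(visual_positions):
                    totals[i] += token_row[pos]
                count += 1
    if count == 0:
        raise ValueError("attn contains no generated-token rows")

    means = [total / count for total in totals]
    # Map the flat visual-token scores back to the 2D visual-token layout.
    return [means[r * width:(r + 1) * width] for r in range(height)]


if __name__ == "__main__":
    # Two layers, one head, one generated token, five sequence positions;
    # positions 1..4 stand in for the visual tokens of a 2x2 grid.
    toy = [
        [[[1.0, 0.0, 0.5, 0.25, 0.25]]],
        [[[1.0, 0.5, 0.5, 0.75, 0.25]]],
    ]
    print(attention_heatmap(toy, [1, 2, 3, 4], (2, 2)))
```

In practice the attention weights would be tensors returned by the model, and the same mean-then-reshape step would typically be done with an array library; plain lists are used here only to keep the sketch self-contained.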
Across all cases, PRCO exhibits the intended division of labor between its two roles. The Observer first converts the image into a question-conditioned evidence caption that externalizes the entities, attributes, values, and relations most relevant to the question, while the Solver performs the downstream counting, comparison, or derivation over this intermediate evidence. Qualitatively, the Observer tends to preserve the attributes, numeric values, and spatial relations most relevant to the question, while the Solver performs the required filtering, comparison, counting, or geometric deduction on top of the extracted evidence. These examples complement the main quantitative results by showing that PRCO not only improves final-answer accuracy, but also yields cleaner and more task-aligned intermediate evidence.

Prompt for Observer

You are the Observer. You are given an image and a relevant question. Your task is to write a caption that extracts only the visually grounded details most useful for answering the question later.

Question: {question}

Caption guidelines:
- Focus on the question's target(s): describe relevant objects/regions/values with attributes and spatial relations (left/right/top/bottom, near/far, inside/overlapping), including counts and comparisons when relevant.
- Include all visually grounded details necessary and sufficient to answer the question later. You may omit visual clutter unrelated to the question.
- Write the caption with enough visual evidence that a later LLM can answer Question as if it had seen the image (using only the caption + the question).
- Don't provide the final answer. Only describe what is directly observable in the image, and avoid any additional reasoning or calculations.
- Write a thorough caption that preserves enough visual details to reconstruct the scene later; avoid overly short summaries.
- When the answer depends on specific labels, numbers, or option mappings, transcribe them explicitly rather than summarizing.

Please format the caption as a Markdown bullet list rather than a paragraph.

Prompt for Solver

You are the Solver. Use the caption as the PRIMARY and DEFAULT input and solve directly from it. Only check the image when the caption does not provide enough information to complete the task.

The detailed caption of the provided image:

Question: {question}

Now perform your reasoning inside ... , then output the final answer in \boxed{}.

Prompt for GRPO and DAPO

{Question}

You first think through the reasoning process as an internal monologue, enclosed within tags. Then, provide your final answer enclosed within \boxed{}.

Figure 10: Training and inference prompt templates of the PRCO Observer and Solver, GRPO, and DAPO.

Prompt for Leakage Checker

You are the Leakage Checker. You will be given:
- The question.
- The Observer caption describing an image.

Your task: decide whether the caption contains answer leakage.

Question: {question}

Observer caption:

Reminder: 1 = LEAK, 0 = SAFE.

[Leakage Evaluation Rules...]

Output format (MUST follow exactly):
Return a valid JSON object with exactly two keys:
- "label": 0 or 1
- "reason": a short reason (1–5 sentences)

Example:
{ "reason": "The caption does not explicitly state a final answer.", "label": 0 }

Now output the final answer as the JSON object (and nothing else).

Prompt for Error Type Classification

You will be given the following inputs:
- Image
- Question: {question}
- Model response: {model_response}
- Gold answer: {gold_answer}

Your task: Decide which error type best describes why the model response differs from the gold answer, and output exactly one primary label plus a 1–3 sentence rationale.
Choose exactly one PRIMARY type from this set:
- Perception: Perception error (misread options/tables, failed to extract info; if downstream mistakes are caused by misreading/missing visual info, label as Perception even if reasoning also fails).
- Reasoning: Reasoning error (missing/contradictory steps, wrong logical chain, arithmetic/calculation mistakes, algebraic manipulation errors, etc.).
- Knowledge: Factual / domain knowledge error.
- Extraction: Final-answer extraction/formatting error.
- Other: Other / cannot categorize.

Output requirements:
- Return a single minified JSON object only.
- Do not output markdown, code fences, or any extra explanation.
- Use exactly this format: {"rationale":"1-3 sentences why","category":"
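
The Leakage Checker prompt requires a JSON object with exactly two keys, "label" (0 or 1) and "reason". A consumer of that output might validate it along the lines of the sketch below. The function name `parse_leakage_verdict` and the choice to raise `ValueError` on malformed output are assumptions for illustration; the paper does not describe how checker responses are parsed or how invalid ones are handled.

```python
import json
from typing import Tuple


def parse_leakage_verdict(raw: str) -> Tuple[int, str]:
    """Validate a Leakage Checker response and return (label, reason).

    Hypothetical helper: 1 = LEAK, 0 = SAFE, as stated in the prompt.
    """
    try:
        obj = json.loads(raw.strip())
    except json.JSONDecodeError as exc:
        raise ValueError("response is not valid JSON") from exc

    # The prompt asks for exactly two keys, in any order.
    if not isinstance(obj, dict) or set(obj) != {"label", "reason"}:
        raise ValueError("expected exactly the keys 'label' and 'reason'")

    label, reason = obj["label"], obj["reason"]
    # type(...) is int rejects booleans and floats such as true or 1.0.
    if type(label) is not int or label not in (0, 1):
        raise ValueError("'label' must be the integer 0 or 1")
    if not isinstance(reason, str) or not reason.strip():
        raise ValueError("'reason' must be a non-empty string")
    return label, reason


if __name__ == "__main__":
    example = (
        '{ "reason": "The caption does not explicitly state a final answer.", '
        '"label": 0 }'
    )
    label, reason = parse_leakage_verdict(example)
    print("LEAK" if label == 1 else "SAFE", "-", reason)
```

Rejecting malformed responses outright, rather than guessing a label, keeps a parsing failure from being silently counted as either a leak or a safe caption; how such cases should feed into training is left open here.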
