Unsafe2Safe: Controllable Image Anonymization for Downstream Utility

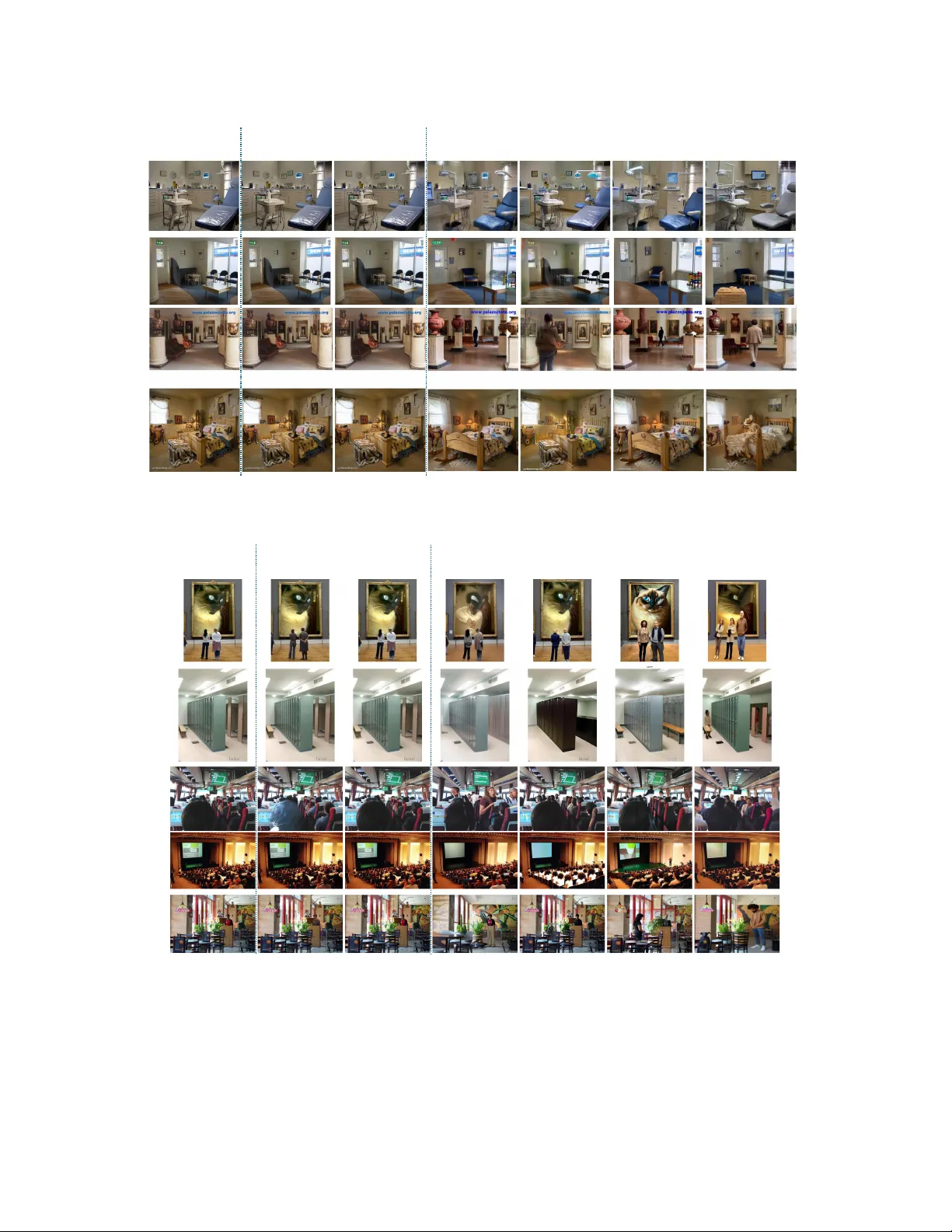

Large-scale image datasets frequently contain identifiable or sensitive content, raising privacy risks when training models that may memorize and leak such information. We present Unsafe2Safe, a fully automated pipeline that detects privacy-prone ima…

Authors: Minh Dinh, SouYoung Jin