Moving Beyond Review: Applying Language Models to Planning and Translation in Reflection

Reflective writing is known to support the development of students' metacognitive skills, yet learners often struggle to engage in deep reflection, limiting learning gains. Although large language models (LLMs) have been shown to improve writing skil…

Authors: Seyed Parsa Neshaei, Richard Lee Davis, Tanja Käser

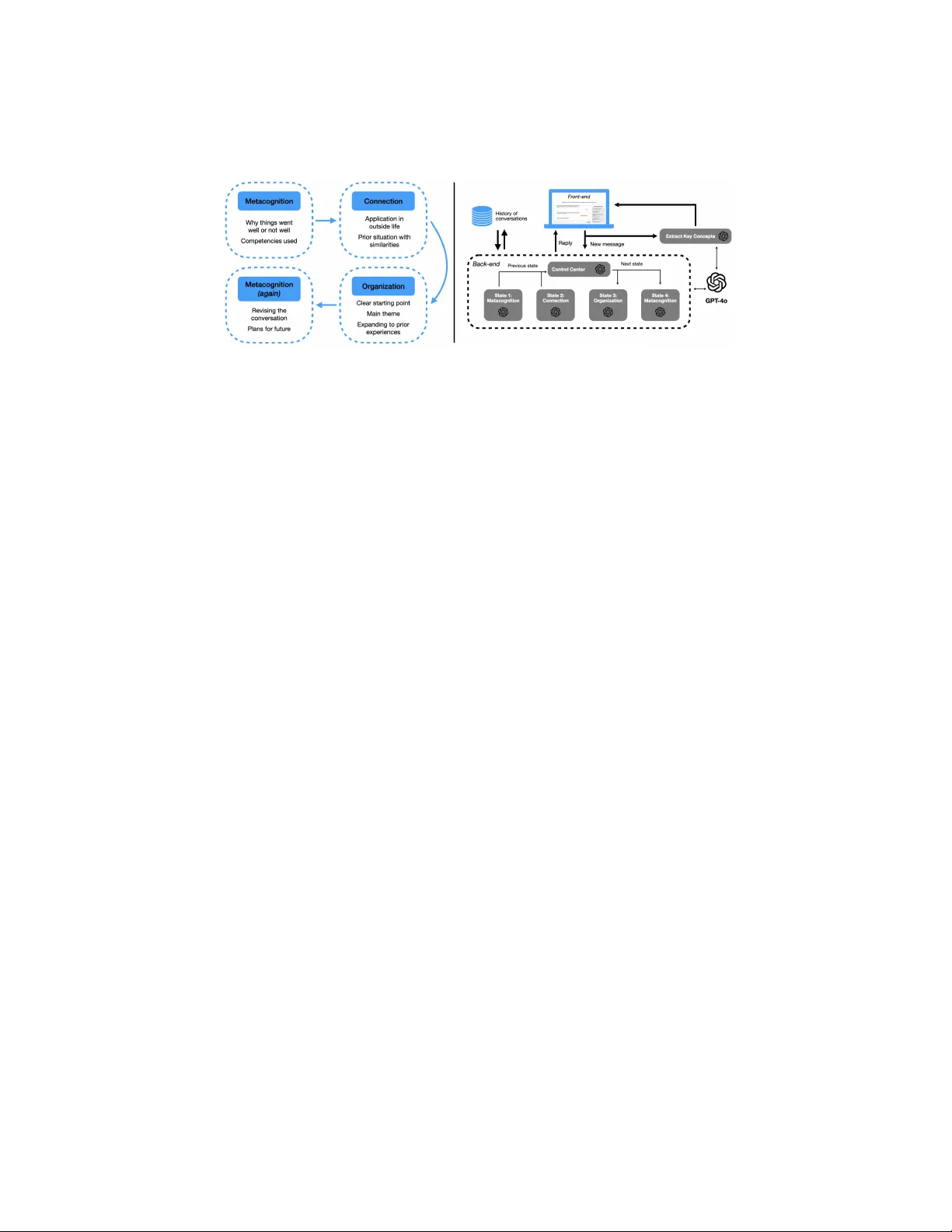

Seyed Parsa Neshaei¹ [0000-0002-4794-395X], Richard Lee Davis² [0000-0002-6175-9200], and Tanja Käser¹ [0000-0003-0672-0415]

¹ EPFL, Lausanne, Switzerland, {seyed.neshaei,tanja.kaeser}@epfl.ch
² KTH Royal Institute of Technology, Stockholm, Sweden, rldavis@kth.se

Abstract. Reflective writing is known to support the development of students' metacognitive skills, yet learners often struggle to engage in deep reflection, limiting learning gains. Although large language models (LLMs) have been shown to improve writing skills, their use as conversational agents for reflective writing has produced mixed results and has largely focused on providing feedback on reflective texts, rather than support during planning and organizing. In this paper, inspired by the Cognitive Process Theory of writing (CPT), we propose the first application of LLMs to the planning and translation steps of reflective writing. We introduce Pensée, a tool to explore the effects of explicit AI support during these stages by scaffolding structured reflection planning using a conversational agent, and supporting translation by automatically extracting key concepts. We evaluate Pensée in a controlled between-subjects experiment (N = 93), manipulating AI support across writing phases. Results show significantly greater reflection depth and structural quality when learners receive support during planning and translation stages of CPT, though these effects reduce in a delayed post-test. Analyses of learner behavior and perceptions further illustrate how CPT-aligned conversational support shapes reflection processes and learner experience, contributing empirical evidence for theory-driven uses of LLMs in AI-supported reflective writing.
Keywords: metacognition · reflective writing · cognitive process theory · large language models · intelligent and interactive writing assistants · AI support in writing · conversational agents

1 Introduction

Reflective writing plays a key role in fostering metacognitive awareness [27]. Developing learners' capacity to engage in reflective activities is therefore considered a valuable educational goal across a wide range of contexts, including vocational training [3, 19]. The task of reflective writing involves evaluating learning experiences and adapting them in the form of a written essay. Despite these benefits, learners often struggle to produce high-quality reflections. Meaningful reflection requires more than simple recounting of events; it entails analyzing causes, connecting experiences to prior knowledge, and planning future actions [20]. Novice learners often struggle with these higher-order processes; they reflect mainly descriptively and not sufficiently analytically [22], resulting in limited learning gains and necessitating reflection support.

Prior work has therefore explored a wide range of approaches to support learners' reflective writing processes. These include instructional scaffolds such as guiding questions [21, 16], sentence openers [13], visualizations of writing progress or text structure [16, 13], and using structural reflection frameworks [23, 25]. A substantial portion of this research has focused on analyzing and assessing learners' written reflections as a basis for providing adaptive feedback. Such approaches typically identify reflective elements or components within students' texts [28, 9] using traditional machine learning methods, including random forests [1, 14] and topic modeling techniques [4].
The recent rise of large language models (LLMs) has accelerated this feedback-oriented line of work by enabling more fluent and context-aware analysis of writings [17]. In particular, LLMs have been used to generate adaptive feedback based on rubrics [2] or combined with classifiers to personalize feedback on reflection structure [23]. Beyond text analysis, LLMs also enable conversational agents (CAs) that interact with learners in natural language. Early CAs for reflective writing relied on pre-scripted dialogues or predefined prompts to guide learners' reflection or to support text structuring [25]. More recent systems allow free-form interaction with LLM-based CAs. For example, Kumar et al. [15] show that access to an LLM supporting self-reflection can improve academic performance, while Kim et al. [12] employ a CA to support daily reflective journaling in mental health contexts. Other work uses CAs to guide learners through writing specific components of reflective texts [23].

Despite this growing body of work, relatively few studies have examined whether AI-based CAs lead to measurable improvements in reflective writing quality. Evidence regarding the effectiveness of CAs remains mixed: while some studies report positive effects on learners' perceptions (e.g., perceived usefulness), improvements in reflection depth and analytical quality are limited or inconsistent [23]. A key limitation of existing approaches is that reflection is commonly treated as a single writing-and-feedback activity, overlooking the cognitive processes involved in producing reflective texts. Writing theories, however, emphasize that writing is a complex, multi-stage process. The Cognitive Process Theory of writing (CPT) conceptualizes writing as an interplay of planning, translation, and reviewing [6].
Explicitly supporting these stages, which has been shown to be helpful in other writing tasks [7, 11], might enable deeper and more analytically rich reflective writing.

In this paper, we explore the use of LLMs to explicitly support the planning and translation stages of reflective writing. Grounded in the Cognitive Process Theory of writing (CPT), we design Pensée, a tool that provides targeted assistance aligned with the cognitive processes underlying reflective writing. Pensée uses an AI-enabled conversational agent that guides learners through structured planning activities to foster reflection depth, assists them in translating planned elements into coherent reflections through automatic key concept extraction, and supports reviewing by providing AI-based feedback on writing structure. We evaluate Pensée in a controlled user study with 93 vocational students, manipulating AI support in the planning and translation phases. This study addresses three research questions: the effects of providing support in the planning and translation steps of CPT on (RQ1) the depth and structure of learners' reflective texts, (RQ2) their system usage behavior, and (RQ3) their perceived experience. Results indicate that AI-supported planning with Pensée improves reflection depth and structural quality, though effects diminish in a delayed post-test. Analyses of learner behavior and perceptions shed light on how such support shapes reflection processes and learner experience.

Fig. 1. Interface of Pensée consisting of two screens. Left: A CA guides learners in the planning phase, with key concepts automatically being extracted to support translation. Right: Writing area where learners compose their text using key concepts and receive automated feedback on the text structure during the review stage.
Overall, this work contributes to AIED research by presenting the first CPT-oriented application to explicitly support planning and translation phases in reflective writing.

2 Pensée: Reflective Learning with CPT

To evaluate the effects of AI-based explicit CPT-oriented support on reflective writing, we developed the interactive environment Pensée (see Fig. 1) that separates reflective writing into the three stages aligned with CPT. Each stage is supported through dedicated interface components and respective AI functionality, enabling students to transform their experiences into coherent and structured reflections. We designed the system to be lightweight and usable in real-world classroom settings without requiring extensive prior training.

2.1 User Interface Design

To ground our design, we considered how a student engages in reflective writing according to CPT [6]. CPT describes writing as a process involving planning, translation, and reviewing. The learner typically begins by mentally revisiting a concrete experience through selecting certain moments and planning what to include. This step refers to generating and organizing ideas, setting goals, and deciding what to say. They then translate these ideas into written text, but might struggle in organizing their thoughts coherently. Finally, they review their draft to evaluate whether it fully captures the experience and includes deeper reflection (e.g., analysis of the event) rather than mere description. This step also includes detecting problems and revising content and structure. These three phases of planning, translation, and reviewing indicate the cognitive demands that our tool is designed to scaffold.

As shown in Figure 1 (left), learners are first presented with the CA interface when logging in to the tool.
They interact with the AI-powered agent that is designed to support the planning step of CPT through asking targeted questions, prompting learners to recall concrete experiences and articulate the learned lessons. This interface aims to aid students in externalizing and organizing their thoughts before they begin writing their reflection, and makes explicit the kind of idea generation that students would otherwise have to manage internally during their initial planning phase. To inform the learning design of our CA, we adapted the cycle proposed by Glogger et al. [10] (see Fig. 2, left) to our case. It consists of four main states to cover in order: 1) metacognition, on why the experience went well or not well, and which competencies the learners had to use in the event; 2) connection, on applying the concepts the students learned in the event outside of their professional life and/or whether they remember a prior situation with similar concepts or mistakes; 3) organization, on the importance of having a clear starting point in reflection, revolving around a main coherent theme, and expanding the text to include prior experiences; and 4) metacognition again, asking the learner to revise the conversation, communicate any points missed in their thought process, and plan for changes in their actions in the future.

As the learners progress through the conversation, the "key concepts" of their responses are automatically extracted and summarized in a sidebar, with a title and relevant quote from the conversation for each. This sidebar effectively serves as a dynamic planning board at this stage, similar to the notes students might otherwise try to keep mentally or jot down informally, but here persistently organized and visible.

After the end of the conversation session, students are presented with a dedicated writing page (Fig. 1, right), in which, corresponding to the translation step of CPT, the extracted key concepts remain visible, helping learners convert their planned ideas into a coherent reflective text without the need to rely solely on memory. Students can click on any of the key concepts to copy the corresponding quote to the clipboard, ready to paste in the writing area, or simply read them in a list. The writing page also includes guidance on how to structure a reflection according to the Gibbs reflective cycle, built after prior works on reflection support [23]; users can hover their mouse pointer over each component and read the definition of the component plus several examples underneath, which show what well-structured reflections look like beyond simple descriptive texts. In unsupported writing, this translation phase requires students to transform their loosely organized thoughts into a structured piece, but here, the persistent key concepts reduce that cognitive load and help students maintain alignment in their text with the original experience.

After the essay draft is completed and the learner clicks on the "Feedback" button below the typing area, the system supports the reviewing step of CPT by providing feedback on which components of the Gibbs reflective cycle are satisfied in the text, underlining the sentences in the text with the color corresponding to each component on the right panel. The checks and crosses on the right panel indicate which components are satisfied and missing in the text, respectively. Similar to how students reread their drafts to check if they include the necessary components, the tool makes this evaluative process explicit.
As a result, our design explicitly maps each CPT phase to concrete user interactions: the CA for planning, the sidebar and extracted key concepts for translation, and the writing feedback page for reviewing, aligned with how students would otherwise carry out these processes independently.

2.2 AI Models

In Pensée, each CPT stage is supported through different AI mechanisms.

Planning. The planning step is supported through an LLM-based CA, the architecture of which can be seen in Figure 2 (right). The CA is implemented as a state machine, progressing through the four main stages of metacognition, elaboration, organization, and again metacognition, as described above³. At each state, another LLM agent (built based on GPT-4o) decides whether all of the questions for the current state have been addressed in the conversation history. If so, the state machine moves to the next state. If not, the LLM will first acknowledge the answer provided by the user, and then continue with asking the remaining questions in the current state. At any point, users can ask follow-up questions, which are handled by the CA and do not trigger a state transition. Upon completion of the fourth state, the conversation ends, and the user is instructed to move to the writing and feedback interface. This model aims to support learners in "opening up" and discussing their experiences with probing questions, helping them plan their reflection before writing the final text.

Translation. The translation step is supported by an LLM-based agent (built based on GPT-4o) that processes the most recent question-answer pair and generates a corresponding key concept. This concept is displayed in the sidebar and stored in the back-end for later use alongside the writing interface.
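The state machine behind the planning agent can be illustrated with a minimal sketch. It is not the authors' implementation: the questions are placeholders, the `is_addressed` callback stands in for the GPT-4o judge, and treating any message that ends in a question mark as a follow-up question is a simplifying assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# State names follow the paper; "metacognition_review" is a hypothetical label
# for the second metacognition state.
STATES: List[str] = ["metacognition", "elaboration", "organization", "metacognition_review"]

# Placeholder questions (assumption); the real ones are in the authors' repository.
QUESTIONS: Dict[str, List[str]] = {
    "metacognition": [
        "Why did the experience go well or not so well?",
        "Which competencies did you have to use?",
    ],
    "elaboration": ["Where else could you apply what you learned?"],
    "organization": ["What is the main theme of your reflection?"],
    "metacognition_review": ["What would you change in the future?"],
}

DONE_MESSAGE = "The conversation is complete. Please continue to the writing page."


@dataclass
class PlanningAgent:
    # Stand-in for the LLM judge: has `question` been addressed in `history`?
    is_addressed: Callable[[str, List[str]], bool]
    state_index: int = 0
    history: List[str] = field(default_factory=list)

    @property
    def finished(self) -> bool:
        return self.state_index >= len(STATES)

    def step(self, learner_message: str) -> str:
        """Process one learner message and return the agent's next utterance."""
        if self.finished:
            return DONE_MESSAGE
        self.history.append(learner_message)
        # Follow-up questions are answered without a state transition.
        if learner_message.strip().endswith("?"):
            return "Good question. (A real agent would answer it here.)"
        pending = [
            q for q in QUESTIONS[STATES[self.state_index]]
            if not self.is_addressed(q, self.history)
        ]
        if pending:
            # Acknowledge the answer, then ask the next open question.
            return "Thank you for sharing. " + pending[0]
        # All questions of this state are covered: move to the next state.
        self.state_index += 1
        if self.finished:
            return DONE_MESSAGE
        return QUESTIONS[STATES[self.state_index]][0]
```

Keeping the judge as an injected callback separates the transition logic from the model call, so the flow can be exercised with a deterministic stub before connecting a real LLM.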
The translation agent is prompted to return an automatically extracted brief title, as well as the relevant quote from the conversation supporting the key concept, only when new information is provided in the learner message. The agent returns structured output as a JSON object containing the key concept title and quote. These key concepts serve as an intermediate representation between planning and writing, helping learners translate ideas surfaced during the conversational planning phase into coherent reflective texts.

³ The full list of questions per state, as well as the types and contents of the prompts used for the LLM, are available in https://github.com/epfl-ml4ed/cpt-reflection

Fig. 2. Left: the reflection cycle from Glogger et al. [10], starting from top-left, used in designing and steering the model behind our CA. Right: the architecture for the different LLM agents used in the planning and translation stages in our tool.

Reviewing. The reviewing step is supported by an LLM-based agent (GPT-4o) that classifies excerpts of the written reflection according to the six components of the Gibbs reflective cycle [8]: Description, Feelings, Evaluation, Analysis, Conclusion, and Action Plan. This structural framework has been used in multiple prior works on reflection (e.g., [5, 26]). The agent is prompted to return a structured output in the form of a JSON list of objects. Each object corresponds to an excerpt of text and contains two fields: 1) the classified component of the Gibbs reflective cycle, and 2) the corresponding text excerpt. The prompt includes a full example of an annotated reflection to guide the model. We evaluated the classifier on a set of 96 reflective texts from prior works [23] and achieved a mean balanced accuracy of 0.66 (Description 0.86, Feelings 0.72, Evaluation 0.50, Analysis 0.37, Conclusion 0.61, Action Plan 0.74), in line with values found in prior works.
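As an illustration of how such structured output could be consumed, the sketch below groups classified excerpts by Gibbs component and derives the satisfied/missing status for each. The field names `component` and `excerpt` are assumptions made for this example; the actual schema is defined by the authors' prompts.

```python
import json
from typing import Dict, List

GIBBS_COMPONENTS: List[str] = [
    "Description", "Feelings", "Evaluation", "Analysis", "Conclusion", "Action Plan",
]


def group_excerpts(raw_json: str) -> Dict[str, List[str]]:
    """Group classified excerpts by Gibbs component.

    Expects a JSON list of objects with the hypothetical fields
    "component" and "excerpt".
    """
    grouped: Dict[str, List[str]] = {name: [] for name in GIBBS_COMPONENTS}
    for item in json.loads(raw_json):
        label = item.get("component")
        if label in grouped:  # ignore labels outside the six components
            grouped[label].append(item.get("excerpt", ""))
    return grouped


def component_checklist(grouped: Dict[str, List[str]]) -> Dict[str, bool]:
    """True means the component is present (a check), False means missing (a cross)."""
    return {name: bool(texts) for name, texts in grouped.items()}
```

Dropping unknown labels instead of raising an error is a design choice of this sketch, made so that one malformed model response does not block the feedback view.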
In the interface, classification results are displayed both in a dashboard indicating the presence of reflective components (with green checkmarks and red crosses), and directly in the writing area, where text excerpts are highlighted by component. This feedback supports the reviewing process by making the structure of the reflection explicit, which has been shown to improve reflection quality [23].

3 Experimental User Study

To evaluate the effectiveness of CPT-oriented AI support for reflective writing, we conducted a controlled study in an authentic classroom setting.

Conditions. We used a fully randomized between-subjects design, comparing two versions of our tool: the main AI-enabled version as the treatment group (TG), and a version without AI support in the planning and translation steps as the control group (CG). The CG version of our tool enabled us to isolate the added value of AI support in the planning and translation steps of CPT. In the CG version, the CA in the planning step of CPT is replaced with static text boxes, asking the same reflection questions that the agent asks in the AI-based version, in the same order. For the translation step, we directly show the full questions and the students' answers to them. As a result, in this version of our tool, the interface still supports students in progressing through planning, translation, and reviewing stages, but without adaptive dialog or automatic extraction of key concepts. We did not isolate the reviewing step, and kept AI support in this step for both versions of our tool, building upon prior work that has already shown the benefits of AI support for this step [23], removing the necessity to isolate the effects for this stage of CPT.

Participants.
The study included N = 93 students (89 identified as female⁴) enrolled in a medical assistance vocational training program in Switzerland (a population comparable to the target groups in prior work [24, 23]), ranging in age from 15 to 19 years (mean = 18.47, SD = 3.11). Students were randomly assigned to either treatment (N = 45) or control (N = 48) groups. All participants consented to the collection of their data, and a parental opt-out procedure was applied for underage students. The study protocol was approved by the university ethics review board (Approval No. HREC 013-2021).

Procedure. Our experiment consisted of three sessions: a pre-intervention (week 1), a learning intervention (week 2), and a delayed (up to 7 days) post-intervention.

Pre-intervention Session. We started the experiment with a pre-survey, where we collected demographics data and then evaluated the effectiveness of the randomization across conditions using two different constructs, IT usage model (e.g., "Using an AI writing assistant to help craft elements of my writing is a good practice") and reflective writing knowledge (e.g., "I have written reflections before"), based on the literature [29, 23]. Each construct included questions with possible answers from Strongly Disagree to Strongly Agree on a 1-5 Likert scale. To check the randomization, we used the mean of the results for each question within the constructs. After the pre-survey, the participants reflected on a situation in the workplace when things did not go as planned. They had to write a minimum of 75 words. This session lasted around 25 minutes per student.

Learning Intervention Session. In the learning phase, students used the version of the tool corresponding to their group (treatment or control). They were instructed to use the tool to reflect on a workplace situation that they felt they handled very well.
After the intervention, they answered a questionnaire, containing questions on a 1-7 Likert scale from the Technology Acceptance Model [29] and used in prior work [24], averaged per construct similar to the pre-survey. The survey contained the constructs of excitement after interaction (e.g., "Interacting with the tool was exciting"), perceived usefulness (e.g., "Using the tool improves my deep reflective writing performance"), perceived ease of use (e.g., "It is easy for me to become skillful at using the tool"), technology acceptance (e.g., "Assuming the tool is available, the next time I want to write a reflection, I would use it again"), perceived long-term improvement (e.g., "I assume using the tool in the long run will help me improve my abilities to write deeper reflections"), and correctness (e.g., "Adaptive responses, suggestions, and feedback from the tool are correct"). This session lasted around 50 minutes per student.

Post-intervention Session. Our post-intervention session consisted of four main parts. First, we showed the reflection they had written in the prior session on a page where the students were asked to read it as a knowledge activation task. Second, as a delayed post-test, we asked a question similar to the question asked in the pre-intervention session (reflecting in a minimum of 75 words on a situation in the workplace when things did not go as planned). During this session, the learners did not receive any CPT-related support from the tool. This session lasted around 35 minutes per student.

⁴ This reflected the distribution of students in the program.

3.1 Measures and Analysis

We graded each of the three reflections per participant (in the pre-intervention, in the learning session, and in the post-intervention) using two dimensions [28]:

1) Depth.
We used the reflection strategy posed by [10], which was also used to inform the questions asked by the CA, as a basis for our rubric. We graded each reflection on three aspects. For metacognition, we granted one point if the reflection discussed the reasons behind why the experience went well, one point if the reflection discussed the reasons to the contrary (i.e., why it did not go as well), one point for competencies they had to use in the event, and one point for discussing how they would change their behavior or actions in the future. For connection, we granted one point if they discussed if and how they can apply the learned concepts outside of their professional life, and one point if they mentioned another situation with similar competencies or mistakes. Finally, for organization, we granted one point if the reflection had a clear starting point to the problem or the main idea, one point if it consistently revolved around a coherent main theme, and one point if it included proper expansion of the text to past experiences. For the annotation according to this rubric, two researchers labeled ten reflections independently and achieved a Cohen's Kappa of 0.8693, indicating near-perfect agreement. Afterwards, one of the two annotated the rest of the texts on their own.

2) Structure. While we were mainly interested in reflection depth, we also annotated all of the reflections per learner based on the six components of the Gibbs reflective cycle [8] as a measure for how well-structured a reflection is. Similar to how it has been done in prior work [23], one point was given for each class existing in the text (leading to a minimum of 0 and a maximum of 6 points per text). To annotate the texts, two researchers labeled the Gibbs components independently in five reflections (Cohen's Kappa = 0.9285, near-perfect agreement).
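The two scoring schemes above can be made concrete with a short sketch. The maximum points per aspect follow the rubric as described (four for metacognition, two for connection, three for organization). Normalizing by dividing by the maximum, and taking the overall depth as the unweighted mean of the three normalized aspects, are assumptions of this example, not a confirmed description of the authors' procedure.

```python
from statistics import mean
from typing import Dict, Iterable

# Maximum points per depth aspect, as listed in the rubric.
MAX_POINTS: Dict[str, int] = {"metacognition": 4, "connection": 2, "organization": 3}

GIBBS_COMPONENTS = {
    "Description", "Feelings", "Evaluation", "Analysis", "Conclusion", "Action Plan",
}


def normalized_depth(points: Dict[str, int]) -> Dict[str, float]:
    """Scale each aspect to [0, 1] and add an overall score.

    Assumption: normalization divides by the aspect maximum, and the overall
    score is the unweighted mean of the three normalized aspects.
    """
    scores: Dict[str, float] = {}
    for aspect, max_pts in MAX_POINTS.items():
        awarded = points.get(aspect, 0)
        if not 0 <= awarded <= max_pts:
            raise ValueError(f"{aspect}: expected 0..{max_pts}, got {awarded}")
        scores[aspect] = awarded / max_pts
    scores["overall"] = mean(scores.values())
    return scores


def structure_score(labels: Iterable[str]) -> int:
    """One point per distinct Gibbs component found in the text (0 to 6)."""
    return len(set(labels) & GIBBS_COMPONENTS)
```

For example, a reflection with two metacognition points, one connection point, and all three organization points would receive normalized scores of 0.5, 0.5, and 1.0 under these assumptions.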
Afterwards, one of the two researchers annotated the rest of the texts on their own.

Statistical analysis. To measure the improvements in reflective writing after providing explicit support, and to determine whether the AI-based (TG) and non-AI (CG) groups differed (RQ1), we conducted a series of linear mixed-effects analyses. We considered one reflective writing outcome variable for structure (the Gibbs score from 0 to 6) and four variables for depth (normalized values of metacognition, connection, organization, and the average of all). For each outcome variable, we fitted a mixed-effects model with stage (Pre, Tool, Post for the pre-, learning, and post-intervention sessions), study conditions (AI support in TG vs. no AI in CG), and their interaction as fixed effects, and a random intercept for participant. After the global tests of fixed effects, we computed estimated marginal means and conducted planned contrasts with Holm-corrected comparisons. Within each group, contrasts compared performance from Pre to Tool and to Post to evaluate improvement over time. Other contrasts compared TG and CG at each stage to determine whether the presence of AI support led to significant changes in reflective writings.

Fig. 3. Comparing the depth score of reflections across different stages and groups.

Behavioral Analysis. To answer RQ2, we focused on the part of the tool that differs between TG and CG (i.e., the CA versus the static questions). We computed the average number of words per answer to the bot (in TG) or response to the static questions (in CG) and conducted a statistical comparison with a t-test to see if there are any meaningful differences in the quantity of learner responses.
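For readers unfamiliar with the Holm correction applied to the planned contrasts in the statistical analysis above, the following is a minimal, generic sketch of the step-down adjustment. It is a textbook formulation using only the standard library, not the authors' analysis code, and any p-values passed to it here would be hypothetical.

```python
from typing import List, Sequence


def holm_adjust(p_values: Sequence[float]) -> List[float]:
    """Return Holm-adjusted p-values in the original order.

    The i-th smallest p-value (0-based rank i) is multiplied by (m - i),
    capped at 1, and forced to be non-decreasing along the sorted order.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        running_max = max(running_max, candidate)  # enforce monotonicity
        adjusted[idx] = running_max
    return adjusted
```

A contrast is then declared significant when its adjusted p-value falls below the chosen alpha level, which controls the family-wise error rate across the set of contrasts.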
We also computed the mean number of words for the first and last three messages (excluding the initial greeting messages) of the learners, and compared their changes over time using Benjamini-Hochberg corrected t-tests, to find trends in quantitative response behavior. We also analyzed all of the user and CA messages in TG to find evidence of aspects unique to the CA compared to the static interface in CG (that is, the ability of users to ask follow-up questions, and the ability of the CA to engage in free discussion without sticking to asking one question at a time).

User Experience and Perception Metrics. To investigate the learners' perceptions of the intervention and the learning experience using the system (RQ3), we compared responses on our six self-reported perception metrics (see above) between the two study conditions (TG vs. CG). We first calculated the mean of the responses to the items from each post-survey construct. Then, for each construct, we conducted independent samples Welch t-tests to evaluate the mean differences between the two study groups. We adjusted the p-values using the Benjamini-Hochberg correction. This allowed us to assess whether perceptions of the learning experience differed as a function of receiving AI support.

4 Results

We investigated the effect of CPT-oriented AI support on the depth and structure of learners' reflections (RQ1), how students interacted with a conversational AI-support system (RQ2), and how they perceived their experience (RQ3).

4.1 RQ1: Effects of Explicit CPT-oriented Support Across Stages

We analyzed the changes in depth and structure of reflections across stages (Pre, Tool, Post) and groups (TG, CG).

Depth. We observed a significant effect of stage (p < 2e-16). As can be seen in Fig. 3, the reflective writing depth score increased substantially from Pre to Tool (TG: 0.29 to 0.45; CG: 0.28 to 0.47; both contrasts p < .0001), indicating that providing explicit CPT-oriented scaffolding (interface support for planning and translation) improved learners' reflective writing while they used the writing phase of the system, after the end of interaction with the CA. While learners also tended to improve in the delayed post-intervention without support, the difference only showed a trend to significance for CG (TG: 0.29 to 0.30; p = .576; CG: 0.28 to 0.33; p = .067), suggesting limited transfer.

Metacognition scores increased strongly from Pre to Tool in both groups (TG: 0.20 to 0.58; CG: 0.21 to 0.59; both contrasts p < .0001), showing that CPT-oriented support led to deeper metacognitive content in the reflections. From Pre to Post, the improvement was smaller: while both groups had an increased mean, and the effect was significant in CG (from 0.21 to 0.32; p = .016), it was not significant in TG (from 0.20 to 0.22; p = .692).

Connection scores increased from Pre to Tool in both groups (TG: 0.00 to 0.12; p = .0005; CG: 0.01 to 0.14; p = .0003), but returned to near zero at Post (TG: 0.02; CG: 0.01). Neither group had a significant change from Pre to Post (TG: p = .969; CG: p = 1.000). This indicates that the intervention led to more connection-making when support was present, but the effect did not persist to the delayed post-test without support.

Organization scores increased from Pre to Tool and Post in CG (Pre: 0.63; Tool: 0.68; Post: 0.66), but slightly decreased from Pre to Tool and then returned to the Pre level at Post in TG (Pre: 0.66; Tool: 0.64; Post: 0.66). The global stage effect did not show any significant difference (p = .570).
We found no significant effect of condition in any of the statistical tests, neither in the overall average depth score nor in any of the rubrics (Overall: p = .325; Metacognition: p = .130; Connection: p = .852; Organization: p = .964). Similarly, the interaction effect of stage and condition was also not significant (Overall: p = .548; Metacognition: p = .163; Connection: p = .825; Organization: p = .238).

Structure. We observed a significant effect of stage (p < 2e-16) and an overall clear effect: the structure score increased substantially from Pre to Tool (TG: 2.13 to 4.38; CG: 2.10 to 4.71; both contrasts p < .0001), indicating that Pensée improved the writing structure while they used the system. However, while there was a positive gain to the third, post-intervention session, the difference was not significant (TG: 2.13 to 2.40; p = .409; CG: 2.10 to 2.42; p = .409), indicating a limited transfer. Moreover, the effects of condition (p = .558) and the interaction of stage and condition (p = .538) were not significant.

Overall, explicit CPT-oriented support improved reflective writing structure and depth during tool use, especially for metacognition and connection. However, transfer to a delayed post-test without tool support showed limited learning gains. The improvement progress was similar in both groups: they both benefited during tool use, but the additional AI adaptivity and automatic key concepts extraction did not lead to higher scores than static non-AI scaffolding.

4.2 RQ2: Response Patterns and Interaction Behavior

Next, we analyzed learners' behavior during the planning phase, which differed between TG and CG. We hypothesized that the CA in TG would encourage longer and deeper interaction compared to the worksheet-style CG interface.
We first examined learners’ response length. Students in TG responded to bot messages with a mean of 17.54 words (SD = 6.35), while CG students responded to static text boxes with a slightly higher mean of 18.65 words (SD = 13.24). However, this difference was not statistically significant (t = 0.505; p = .615). Over time, both groups showed a significant decrease in response length. In TG, responses decreased from 24.50 words on average (SD = 18.58) in the first three messages to 8.47 words (SD = 7.23) in the last three (t = 8.205; p < .0001). Similarly, in CG, responses decreased from 30.24 words (SD = 21.73) to 11.32 words (SD = 10.86) (t = 7.832; p < .0001).

A subsequent content analysis of TG responses further showed that only three users asked a single follow-up question each, all of which were clarification questions about terms mentioned by the bot (e.g., “what are competencies”). This suggests that most users (93%) interacted with the CA in a way similar to how CG students interacted with static worksheet-style prompts, indicating limited use of the CA’s interactive capabilities. Additionally, analysis of the CA messages showed that the CA consistently ended its responses with a question (except for the final congratulatory message), making the interaction structure closely resemble the static question approach used in CG.

Overall, we found a notable decrease in response length over time. We also found that students used the CA in TG in much the same way as students used the static questions in CG.

4.3 RQ3: User Experience and Perception Metrics

Finally, we explored the responses learners provided to our post-survey questions. As can be seen in Figure 4, TG reported higher scores than CG on all metrics, including ease of use (TG: 3.97; CG: 3.50), correctness (TG: 4.26; CG: 3.83), excitement (TG: 3.64; CG: 3.20), usefulness (TG: 3.99; CG: 3.61), perceived long-term improvement (TG: 3.84; CG: 3.48), and technology acceptance (TG: 3.60; CG: 3.31). However, none of these differences remained statistically significant after Benjamini-Hochberg correction of the p-values for multiple comparisons (p > .1). This indicates that although TG tended to rate the system more positively, the evidence did not show significant perception differences when adding AI support under our current sample size.

Overall, AI-supported planning and translation showed consistently more positive perception outcomes than non-AI CPT scaffolding, suggesting a promising advantage that may not have reached significance due to limited statistical power.

Fig. 4. Self-reported perception metrics by group (Mean ± SEM).

5 Discussion

This study investigated whether grounding support in CPT, particularly targeting the planning and translation stages, would improve learners’ reflections and experiences with the system. By explicitly supporting CPT in our tool, we shifted the focus of AI support for reflection from generic feedback on finished texts toward supporting the end-to-end cognitive processes that can also precede writing. Our findings demonstrate that explicit process-oriented scaffolding can meaningfully change reflective writing practice: both study groups showed substantial improvements in reflection depth and structure during tool use, with significant shifts in metacognition, connection-making, and structure.

However, two findings warrant careful consideration. First, the gains observed during tool use did not transfer robustly to the delayed post-test, where learners wrote without scaffolding. This suggests that short-term exposure, while boosting performance, may not produce lasting changes in reflective writing skills (a common challenge in writing interventions).
Second, the AI-supported condition showed no significant advantage over the static scaffolding condition. Both groups improved similarly, indicating that the primary driver was the explicit CPT support itself, rather than AI adaptivity or conversational interaction.

Implications and Next Steps. These findings carry implications for the design, study, and evaluation of LLM-based learning tools.

Design. Our behavioral analysis revealed that students in the AI condition largely treated the CA like a static worksheet. Response patterns were similar across conditions, response length declined sharply over turns, and only 7% of users ever asked a follow-up question. This implies that the CA’s design may have inadvertently replicated the static scaffolding experience. To realize the interactive potential of LLMs, future designs should explicitly scaffold richer interaction patterns (e.g., pre-defined buttons for asking follow-ups, giving examples, or challenging reasoning). Changes like these, together with careful evaluation of the LLM outputs, may be necessary to unlock the potential of CAs for learning.

Long-term impact. Although the differences were not statistically significant, the AI-supported group consistently rated the system higher on ease of use, excitement, usefulness, and willingness to reuse, consistent with prior work on CAs and writing support (e.g., [30]). If learners prefer AI-supported tools, impact may emerge through sustained engagement, with higher adoption leading to more practice over time. This suggests a shift in research focus from single-session outcomes toward understanding how perception advantages translate into long-term tool use and, ultimately, learning gains. Designing for repeated engagement rather than one-off interventions may be where AI support proves invaluable.

Evaluation. The lack of significant learning gains reflects a common issue in writing interventions: short exposure to support can boost performance, but that performance may not transfer once the scaffolds are removed. This points to a methodological gap the community should address: many reflection or LLM-writing studies evaluate same-session outcomes (e.g., [23, 18]), while delayed post-tests, which set a higher bar for performance, are less common. This gap may lead the field to overestimate intervention effects. Future work should adopt delayed post-tests as standard practice and design longitudinal studies that capture learning trajectories over time to distinguish between performance and long-term learning.

Conclusion. We presented Pensée, the first application of LLMs to explicitly support the planning and translation stages of reflective writing, grounded in CPT. Our results show that process-oriented scaffolding significantly improves reflection depth and structure during use. Yet the absence of significant differences between AI-supported and static conditions offers a grounding insight: LLMs are powerful tools, but they are not magic. Realizing their potential for learning requires careful, theory-driven design and rigorous evaluation. This work identifies concrete directions for the field: engineering interfaces that unlock genuine conversational engagement, investigating whether perception advantages compound into learning gains through sustained use, and adopting evaluation practices that measure lasting impact.

Acknowledgments. This project was substantially financed by the Swiss State Secretariat for Education, Research, and Innovation (SERI).
We thank the teachers who enabled us to run our study, as well as Dominik Glandorf, Ekaterina Shved, and Peter Bühlmann for their assistance with data collection, Fatma Betül Güres for proofreading the manuscript, and Roman Rietsche and Thiemo Wambsganss for their contributions to ideation and providing valuable feedback.

Disclosure of Interests. The authors have no competing interests to declare that are relevant to the content of this article.

References

1. Alrashidi, H., Almujally, N., Kadhum, M., Daniel Ullmann, T., Joy, M.: Evaluating an automated analysis using machine learning and natural language processing approaches to classify computer science students’ reflective writing. In: Pervasive computing and social networking: Proceedings of ICPCSN 2022, pp. 463–477. Springer (2022)
2. Awidi, I.T.: Comparing expert tutor evaluation of reflective essays with marking by generative artificial intelligence (AI) tool. Computers and Education: Artificial Intelligence 6, 100226 (2024)
3. Cattaneo, A.A., Motta, E.: “I reflect, therefore I am... a good professional”. On the relationship between reflection-on-action, reflection-in-action and professional performance in vocational education. Vocations and Learning 14(2), 185–204 (2021)
4. Chen, Y., Yu, B., Zhang, X., Yu, Y.: Topic modeling for evaluating students’ reflective writing: a case study of pre-service teachers’ journals. In: Proceedings of the sixth international conference on learning analytics & knowledge. pp. 1–5 (2016)
5. Ezezika, O., Johnston, N.: Development and implementation of a reflective writing assignment for undergraduate students in a large public health biology course. Pedagogy in Health Promotion 9(2), 101–115 (2023)
6. Flower, L., Hayes, J.R.: A cognitive process theory of writing. College Composition & Communication 32(4), 365–387 (1981)
7. Gero, K., Calderwood, A., Li, C., Chilton, L.: A design space for writing support tools using a cognitive process model of writing. In: Proceedings of the first workshop on intelligent and interactive writing assistants (In2Writing 2022). pp. 11–24 (2022)
8. Gibbs, G.: Learning by doing: A guide to teaching and learning methods. Further Education Unit (1988)
9. Gibson, A., De Vine, L., Canizares, M., Willis, J.: Reflexive expressions: Towards the analysis of reflexive capability from reflective text. In: International conference on artificial intelligence in education. pp. 353–364. Springer (2023)
10. Glogger, I., Schwonke, R., Holzäpfel, L., Nückles, M., Renkl, A.: Learning strategies assessed by journal writing: Prediction of learning outcomes by quantity, quality, and combinations of learning strategies. Journal of educational psychology 104(2), 452 (2012)
11. Göldi, A., Wambsganss, T., Neshaei, S.P., Rietsche, R.: Intelligent support engages writers through relevant cognitive processes. In: Proceedings of the CHI conference on human factors in computing systems. pp. 1–12 (2024)
12. Kim, T., Bae, S., Kim, H.A., Lee, S.w., Hong, H., Yang, C., Kim, Y.H.: MindfulDiary: Harnessing large language model to support psychiatric patients’ journaling. In: Proceedings of the CHI conference on human factors in computing systems. pp. 1–20 (2024)
13. Kingkaew, C., Theeramunkong, T., Supnithi, T., Chatpreecha, P., Morita, K., Tanaka, K., Ikeda, M.: A learning environment to promote awareness of the experiential learning processes with reflective writing support. Education Sciences 13(1), 64 (2023)
14. Kovanović, V., Joksimović, S., Mirriahi, N., Blaine, E., Gašević, D., Siemens, G., Dawson, S.: Understand students’ self-reflections through learning analytics. In: Proceedings of the 8th international conference on learning analytics and knowledge. pp. 389–398 (2018)
15. Kumar, H., Xiao, R., Lawson, B., Musabirov, I., Shi, J., Wang, X., Luo, H., Williams, J.J., Rafferty, A.N., Stamper, J., et al.: Supporting self-reflection at scale with large language models: Insights from randomized field experiments in classrooms. In: Proceedings of the eleventh ACM conference on learning@scale. pp. 86–97 (2024)
16. Lai, G., Calandra, B.: Examining the effects of computer-based scaffolds on novice teachers’ reflective journal writing. Educational Technology Research and Development 58(4), 421–437 (2010)
17. Lee, M., Gero, K.I., Chung, J.J.Y., Shum, S.B., Raheja, V., Shen, H., Venugopalan, S., Wambsganss, T., Zhou, D., Alghamdi, E.A., et al.: A design space for intelligent and interactive writing assistants. In: Proceedings of the 2024 CHI conference on human factors in computing systems. pp. 1–35 (2024)
18. Mejia-Domenzain, P., Frej, J., Neshaei, S.P., Mouchel, L., Nazaretsky, T., Wambsganss, T., Bosselut, A., Käser, T.: Enhancing procedural writing through personalized example retrieval: a case study on cooking recipes. International Journal of Artificial Intelligence in Education 35(1), 330–366 (2025)
19. Mejia-Domenzain, P., Marras, M., Giang, C., Cattaneo, A., Käser, T.: Evolutionary clustering of apprentices’ self-regulated learning behavior in learning journals. IEEE Transactions on Learning Technologies 15(5), 579–593 (2022)
20. Middleton, R.: Critical reflection: the struggle of a practice developer. International Practice Development Journal (2017)
21. Moussa-Inaty, J.: Reflective writing through the use of guiding questions. International Journal of Teaching and Learning in Higher Education 27(1), 104–113 (2015)
22. Nehyba, J., Štefánik, M.: Applications of deep language models for reflective writings. Education and Information Technologies 28(3), 2961–2999 (2023)
23. Neshaei, S.P., Mejia-Domenzain, P., Davis, R.L., Käser, T.: Metacognition meets AI: Empowering reflective writing with large language models. British Journal of Educational Technology (2025)
24. Neshaei, S.P., Tashkovska, M., Mejia-Domenzain, P., Wambsganss, T., Käser, T.: User-centric reflective writing assistance: Leveraging RAG for enhanced personalized support. In: Proceedings of the extended abstracts of the CHI conference on human factors in computing systems. pp. 1–8 (2025)
25. Neshaei, S.P., Wambsganss, T., El Bouchrifi, H., Käser, T.: MindMate: Exploring the effect of conversational agents on reflective writing. In: Proceedings of the extended abstracts of the CHI conference on human factors in computing systems. pp. 1–9 (2025)
26. Nurlatifah, L., Purnawarman, P., Sukyadi, D.: The implementation of reflective assessment using Gibbs’ reflective cycle in assessing students’ writing skill. In: AIP conference proceedings. vol. 2621, no. 1. AIP Publishing (2023)
27. Perry, J., Lundie, D., Golder, G.: Metacognition in schools: what does the literature suggest about the effectiveness of teaching metacognition in schools? Educational Review 71(4), 483–500 (2019)
28. Ullmann, T.D.: Automated analysis of reflection in writing: Validating machine learning approaches. International Journal of Artificial Intelligence in Education 29(2), 217–257 (2019)
29. Venkatesh, V., Bala, H.: Technology acceptance model 3 and a research agenda on interventions. Decision sciences 39(2), 273–315 (2008)
30. Wambsganss, T., Kueng, T., Soellner, M., Leimeister, J.M.: ArgueTutor: An adaptive dialog-based learning system for argumentation skills. In: Proceedings of the 2021 CHI conference on human factors in computing systems. pp. 1–13 (2021)
