Debt Behind the AI Boom: A Large-Scale Empirical Study of AI-Generated Code in the Wild

AI coding assistants are now widely used in software development. Software developers increasingly integrate AI-generated code into their codebases to improve productivity. Prior studies have shown that AI-generated code may contain code quality issu…

Authors: Yue Liu, Ratnadira Widyasari, Yanjie Zhao

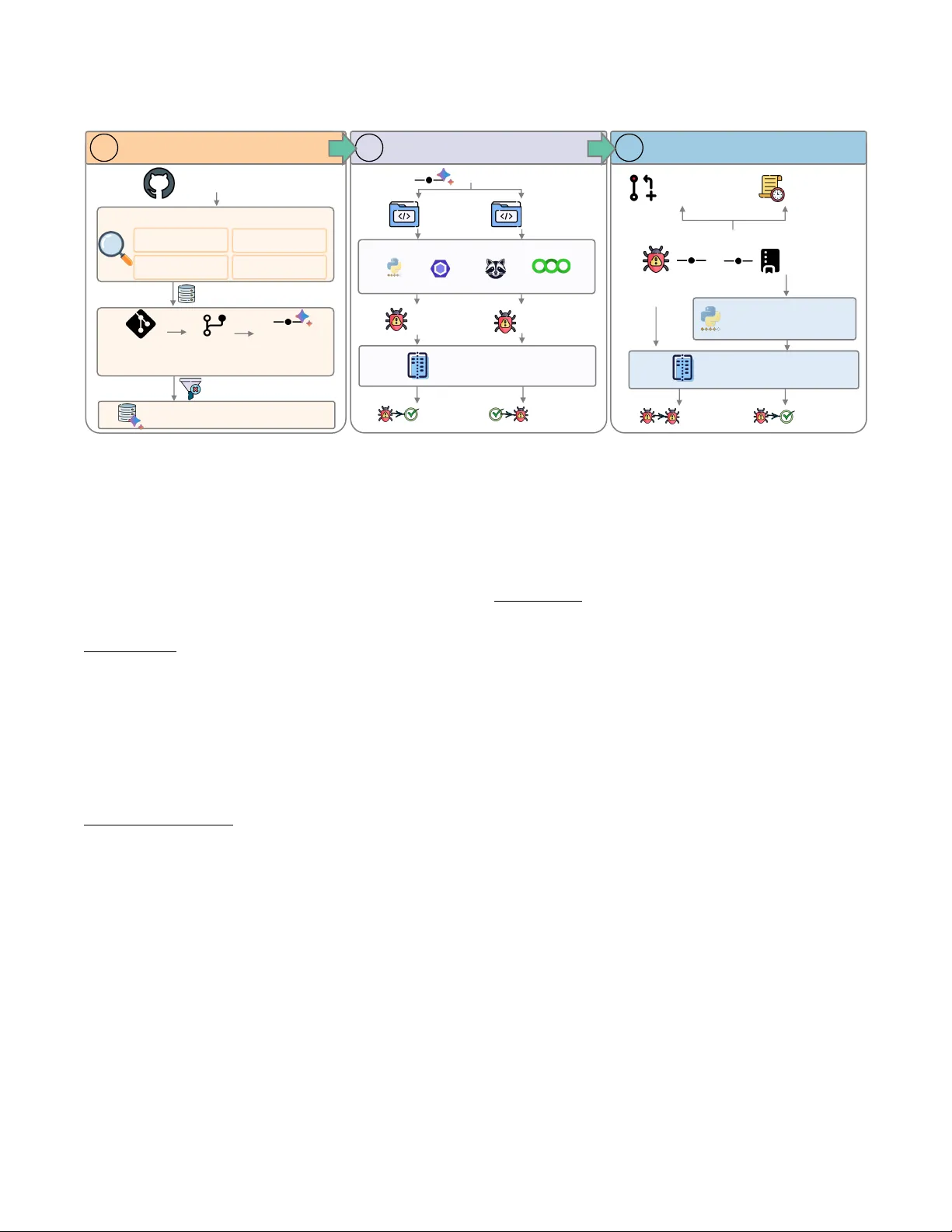

Yue Liu, Singapore Management University, Singapore, Singapore (liuyue@smu.edu.sg)
Ratnadira Widyasari, Singapore Management University, Singapore, Singapore (ratnadiraw@smu.edu.sg)
Yanjie Zhao, Huazhong University of Science and Technology, Wuhan, China (yanjie_zhao@hust.edu.cn)
Ivana Clairine IRSAN, Singapore Management University, Singapore, Singapore (ivanairsan@smu.edu.sg)
David Lo, Singapore Management University, Singapore, Singapore (davidlo@smu.edu.sg)

Abstract

AI coding assistants are now widely used in software development. Software developers increasingly integrate AI-generated code into their codebases to improve productivity. Prior studies have shown that AI-generated code may contain code quality issues under controlled settings. However, we still know little about the real-world impact of AI-generated code on software quality and maintenance after it is introduced into production repositories. In other words, it remains unclear whether such issues are quickly fixed or persist and accumulate over time as technical debt. In this paper, we conduct a large-scale empirical study on the technical debt introduced by AI coding assistants in the wild. To this end, we build a dataset of 304,362 verified AI-authored commits from 6,275 GitHub repositories, covering five widely used AI coding assistants. For each commit, we run static analysis before and after the change to precisely attribute which code smells, bugs, and security issues the AI introduced. We then track each introduced issue from the introducing commit to the latest repository revision to study its lifecycle. We identify 484,606 distinct issues; code smells are by far the most common type, accounting for 89.1% of all issues. We also find that more than 15% of commits from every AI coding assistant introduce at least one issue, although the rates vary across tools.
More importantly, 24.2% of tracked AI-introduced issues still survive at the latest revision of the repository. These findings show that AI-generated code can introduce long-term maintenance costs into real software projects and highlight the need for stronger quality assurance in AI-assisted development.

Keywords

AI coding assistants, technical debt, code quality, software maintenance, empirical study

ACM Reference Format:
Yue Liu, Ratnadira Widyasari, Yanjie Zhao, Ivana Clairine IRSAN, and David Lo. 2026. Debt Behind the AI Boom: A Large-Scale Empirical Study of AI-Generated Code in the Wild. In . ACM, New York, NY, USA, 11 pages. https://doi.org/10.1145/nnnnnnn.nnnnnnn

1 Introduction

Through AI coding assistants (e.g., Cursor, Claude Code), software developers can now describe what they want in natural language and get working code back in seconds. These tools significantly improve development productivity, and AI is becoming standard equipment for modern software developers [14]. According to the 2025 Stack Overflow Developer Survey, 84% of professional developers are using or are planning to use AI tools within their development processes [45]. AI-generated code is also widely used in real-world software projects. For example, both Google and Microsoft disclosed in 2025 that AI now writes over 20% of their new code [31, 38]. Similarly, GitHub reported that more than 1.1 million public repositories used AI coding tools between 2024 and 2025 [14]. Overall, AI-generated code has graduated from experiment to production reality, and it is everywhere.

Although AI coding assistants have proven effective at generating functional programs, many previous studies have revealed a range of quality concerns in AI-generated code. Recent studies have shown that AI-generated code suffers from functional bugs, runtime errors, and systemic maintainability issues [22, 44].
Also, the code produced by AI tools poses security risks [23, 33, 34]. Pearce et al. [33] found that about 40% of AI-generated code in security-sensitive contexts contains critical vulnerabilities. However, recent research [34, 39] has found that developers tend to place excessive trust in the quality of AI-generated code, blindly accepting the code without proper validation. As a result, these unverified code snippets are merged into production codebases. Over time, this can cause a considerable accumulation of technical debt, which can be costly and time-consuming to address [16, 28].

Recognizing these risks, recent empirical studies have started to investigate AI-generated code in real-world repositories. These studies cover a range of practical concerns, such as security weaknesses [13, 50], project-level development velocity [17], pull request acceptance rates [51], and code redundancy [18]. For example, He et al. [17] found that Cursor adoption in 807 GitHub repositories led to a transient velocity boost but persistent increases in code complexity. However, existing studies still have several limitations. First, most studies focus on a single tool or a narrow set of tools, which limits the generalizability of their findings. Second, many studies rely on proxy-based attribution (e.g., configuration files, self-disclosure signals, or classifiers), rather than directly tracking which code lines or files are AI-generated. Third, they mostly evaluate code at a single point in time, failing to capture the long-term lifecycle of the code. Thus, it remains unknown how AI-generated code actually ages in production. We still do not know whether the technical debt it introduces persists, gets refactored, or silently accumulates over time.

Conference'17, July 2017, Washington, DC, USA. Yue Liu, Ratnadira Widyasari, Yanjie Zhao, Ivana Clairine IRSAN, and David Lo

To bridge this gap, we conduct a large-scale empirical study to investigate the lifecycle of AI-introduced technical debt in the wild. Our approach consists of three steps. First, we build a large dataset of verified AI-authored commits. Instead of relying on classifiers or proxy signals, we use explicit Git metadata to identify commits generated by AI coding tools across thousands of GitHub repositories. Second, we perform a commit-level quality analysis. For each AI-authored commit, we run static analysis tools on the source code immediately before and after the change. This allows us to precisely identify which code smells, bugs, and security issues the AI introduced or fixed. Third, we conduct a debt lifecycle analysis. We track each introduced issue to the latest repository revision (HEAD) to determine whether it still survives or has been resolved. Through this design, our study provides the first comprehensive view of how AI-generated code ages in real-world software.

Contribution. To the best of our knowledge, this paper is the first to:

• Conduct a large-scale empirical study of AI-introduced technical debt across five major AI coding assistants (i.e., GitHub Copilot, Claude, Cursor, Gemini, and Devin) and over 6,000 real-world GitHub repositories.
• Perform commit-level differential analysis to precisely attribute code smells, bugs, and security issues to individual AI-authored commits.
• Track the lifecycle of AI-introduced technical debt from the moment of introduction to the latest repository revision, revealing how it persists, evolves, or gets resolved over time.

Open Science. To support the open science initiative, we publish the study's dataset and a replication package, which are publicly available on GitHub (footnote 1).

Paper Organization. Section 2 presents the background and motivation. Section 3 describes our approach. Section 4 presents the experimental setup. Section 5 presents the results.
Section 6 discusses the findings and their implications. Section 7 presents the related work. Section 8 discloses the threats to validity. Section 9 draws the conclusions.

2 Background

2.1 AI Coding Assistants

Modern AI coding assistants (e.g., Cursor, Claude Code, GitHub Copilot) are now deeply embedded in software development workflows. They help software developers write, modify, explain, test, or debug code using natural-language instructions and code context. Advanced agentic tools can now process entire functions, files, or even entire repositories to autonomously create pull requests with minimal human intervention. Driven by these improved capabilities, AI-generated code is entering production codebases at unprecedented speed and scale. According to GitHub, over 1.1 million public repositories adopted AI coding tools between 2024 and 2025 [14]. As shown in Figure 1, in Anthropic's claudes-c-compiler repository [4], Claude appears as the top contributor, with 3,957 commits and nearly 500K lines of code added within just a few weeks. At this volume and speed, it is unlikely that all AI-generated code receives a thorough human review. This makes it increasingly important to understand the long-term implications of AI-generated code on software quality and maintenance.

Figure 1: Contributor statistics for the Anthropic claudes-c-compiler repository.

```python
# seagullz4/hysteria2 [43], hysteria2.py:L77, e277daf
# issue: shell=True introduces command-injection risk
subprocess.run(
    "systemctl stop hysteria-iptables.service 2>/dev/null",
    shell=True
)

# ⇓

# seagullz4/hysteria2, hysteria2.py:L77, d9e392d
# fix: remove shell=True
subprocess.run(
    ["systemctl", "disable", "hysteria-iptables.service"],
    stderr=subprocess.DEVNULL
)
```

Figure 2: Command injection risk introduced by GitHub Copilot in hysteria2 (1.7K stars) and its subsequent fix.

Footnote 1: https://github.com/xxxx/xxxx

In controlled (lab) settings [22, 33], the provenance of code is usually clear, since researchers can generate the code directly. Real-world codebases are different: AI-generated code and human-written code are often mixed together. This makes it harder to observe the long-term impact of AI-generated code after it is merged into production codebases.

2.2 Technical Debt

Technical debt refers to design or implementation choices that prioritize short-term speed over long-term quality [7]. These shortcuts may help in the short term, but they increase the future cost of maintaining and evolving the software [5, 21]. The costs can accumulate over time if not addressed. This concern becomes more important as AI coding assistants are widely adopted. AI coding assistants help developers work faster and produce more code. Previous studies have shown that AI-generated code contains code smells, maintainability issues, and security vulnerabilities [13, 22, 33]. When such issues are accepted into production repositories, they can accumulate as technical debt in the codebase.

```python
# realsenseai/librealsense [36], test-fps-performance.py:L168, 5535b8a
# issue: undefined constant used before definition
time.sleep(DEVICE_INIT_SLEEP_SEC)

# ⇓

# realsenseai/librealsense, test-fps-performance.py:L37, 14026c8
# fix: add missing constant definition
DEVICE_INIT_SLEEP_SEC = 3
time.sleep(DEVICE_INIT_SLEEP_SEC)
```

Figure 3: Undefined variables introduced by GitHub Copilot in librealsense (8.6K stars), causing a runtime error. Fixed three weeks later.

2.3 Motivation

The technical debt introduced by AI coding assistants is not just a theoretical risk. Such issues are becoming increasingly common in real-world codebases. Figures 2 and 3 show two examples.
In Figure 2, a Copilot-authored commit introduced a shell=True subprocess call in hysteria2.py [42]. This pattern increases security risk by allowing command injection if user input is involved. A human developer later fixed it, noting in the commit message: "Improve code security by removing shell=True from subprocess calls" [41]. Figure 3 shows a bug from Intel's librealsense [36]. A Copilot-authored commit replaced a value with a named constant but never defined it [20]. The buggy code remained in the repository for over three weeks before the maintainer added the missing definition [19].

These two examples highlight the motivation of our study. AI coding assistants can generate functional code, but they may introduce quality issues into production codebases. Developers also tend to over-trust and accept AI suggestions without thorough review [34, 39]. These issues may be fixed later, or they may persist for a long time and create long-term maintenance challenges. Recent studies [13, 17, 22, 30, 44, 50] have examined AI-generated code, but they have several limitations. In particular, most prior studies focus on a single tool, a small set of tasks, or controlled settings. For example, Watanabe et al. [51] measured the initial acceptance rate of AI-authored code in a single repository. We still know little about the long-term implications of AI-generated code on software quality and maintenance. Thus, we study the technical debt introduced by AI coding assistants in the wild: how often it is introduced, what kinds of issues appear, and whether those issues still remain in the codebase over time.

3 Approach

Figure 4 provides an overview of our approach. We first collect AI-authored commits from GitHub repositories at scale (Section 3.1). We then analyze each AI-authored commit at the code level to determine which quality issues it introduced or fixed (Section 3.2).
Finally, we track the lifecycle of both the issues and the code itself to determine whether AI-introduced debt persists or gets resolved over time (Section 3.3).

3.1 Data Collection

This step aims to identify candidate GitHub repositories that contain AI-authored commits.

Repository Discovery. We use the GitHub Archive dataset, which records public events on GitHub (e.g., PushEvent), to identify repositories with potential AI-authored code. We scan all PushEvent records from January 2024 to October 2025, focusing on repositories with recent development activity. Using Google BigQuery, we extract four metadata fields from each event: actor login, author name, author email, and commit message. We then match these fields against our curated AI-attribution patterns. Only repositories with at least one matching event are retained. It is worth mentioning that GitHub changed the Events API on October 7, 2025, and removed commit-level summaries from PushEvent payloads [15]. Thus, for the post-October-2025 data, we supplement BigQuery-based discovery with GitHub API queries over top repositories and then apply the same attribution rules through full-history repository scanning (described under Full-History Commit Scanning). These steps help us ensure that active and popular repositories are not missed due to the API change.

AI Attribution Rules. We build attribution rules for widely adopted AI coding tools (e.g., Cursor, GitHub Copilot, Claude Code) identified in the 2025 Stack Overflow Developer Survey [45]. We identify AI-authored commits using explicit signals in Git metadata. Our approach therefore covers AI-authored commits only when the use of an AI coding tool leaves explicit traces in Git metadata. The rules are based on four sources of evidence: (1) actor logins (e.g., copilot-swe-agent[bot]), (2) author emails (e.g., noreply@anthropic.com), (3) author names (e.g., Cursor Agent), and (4) Co-authored-by trailers in commit messages.
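The four evidence sources can be checked in sequence against each commit's metadata. The following is a minimal sketch, not the study's implementation: the `CommitMeta` type, the `attribute` function, and the three one-entry rule tables are hypothetical, and the tool label attached to each example signal is an assumption. The actual rule list is in the authors' replication package.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CommitMeta:
    """Hypothetical container for the four metadata fields named in the text."""
    actor_login: str
    author_name: str
    author_email: str
    message: str


# Illustrative rules only: keys are the example signals quoted in the text,
# and the tool labels are assumptions made for this sketch.
ACTOR_LOGINS = {"copilot-swe-agent[bot]": "GitHub Copilot"}
AUTHOR_EMAILS = {"noreply@anthropic.com": "Claude"}
AUTHOR_NAMES = {"cursor agent": "Cursor"}

# Matches trailer lines such as "Co-authored-by: Name <email>".
TRAILER = re.compile(
    r"^Co-authored-by:\s*(?P<name>[^<\n]*?)\s*<(?P<email>[^>\n]+)>\s*$",
    re.IGNORECASE | re.MULTILINE,
)


def _lookup(name: str, email: str) -> Optional[str]:
    """Check an (author name, author email) pair against the rule tables."""
    return (AUTHOR_EMAILS.get(email.strip().lower())
            or AUTHOR_NAMES.get(name.strip().lower()))


def attribute(commit: CommitMeta) -> Optional[str]:
    """Return the matched tool label, or None if no explicit trace is found."""
    tool = ACTOR_LOGINS.get(commit.actor_login.strip().lower())
    if tool:
        return tool
    tool = _lookup(commit.author_name, commit.author_email)
    if tool:
        return tool
    # Co-authored-by trailers carry their own name/email pair.
    for match in TRAILER.finditer(commit.message):
        tool = _lookup(match.group("name"), match.group("email"))
        if tool:
            return tool
    return None
```

A commit with no matching signal returns `None`, which reflects the stated limitation: AI usage that leaves no trace in Git metadata is not counted.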
These signals identify whether and which AI coding tool was involved in a commit. In total, 29 AI coding tools left identifiable traces in the repositories we collected. The full list of tools and detection rules is included in our replication package.

Full-History Commit Scanning. The discovery stage captures only push-event metadata, which provides only partial evidence about AI-authored activity in a repository. To obtain a more complete set of AI-authored commits, we perform a bare clone of each candidate repository. We then scan the full commit history across all branches and apply the same attribution rules to every commit. For each commit, we extract the SHA, author and committer metadata, timestamp, and full commit message. This step allows us to identify AI-authored commits that are not directly visible during repository discovery (e.g., commits on non-default branches, commits outside the observation window).

Filtering. To focus on established open-source projects, we filter out repositories that do not meet our study criteria. We keep only repositories with at least 100 GitHub stars. We remove forks with fewer than 100 stars. We also require at least one confirmed AI-authored commit. Our downstream analysis is restricted to production Python, JavaScript, and TypeScript source files, excluding repositories or commits that do not contain analyzable source code in these languages. After filtering, our dataset contains 6,275 repositories with 304.4k total AI-authored commits.

3.2 Commit-Level Quality Analysis

To understand the impact of AI coding tools, we avoid evaluating a repository snapshot at a single point in time.
For each AI-authored commit $c$, we analyze the source code at its parent revision (before) and at the commit itself (after) to determine which quality issues it introduced or fixed. Our analysis focuses on production source files written in Python, JavaScript, and TypeScript. We exclude files that are unlikely to reflect production code quality (e.g., tests, documentation, configuration files, auto-built artifacts, and vendored dependencies). Files are classified based on their paths and naming patterns (e.g., files under test/ or __tests__/ directories, or matching *_test.py). The full classification rules are included in our replication package.

[Figure 4: Overview of our approach, in three stages: (1) Data Collection, (2) Commit-Level Quality Analysis, and (3) Debt Lifecycle Analysis.]

Static Analysis. For each AI-authored commit $c$ that modifies a source file $f$, we check out two versions of $f$: the version before $c$ (at its parent commit) and the version after $c$. We run the same static analysis toolchain on both versions to identify code quality and security issues. For Python, we use Pylint and Bandit. For JavaScript and TypeScript, we use ESLint and njsscan.
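Once both versions of a file have been analyzed, the two result lists can be compared. The sketch below illustrates the matching and classification rules of the Differential Attribution step in this section; it is an assumption-laden illustration, not the authors' code. The `Issue` type, the `classify` function, and the `tolerance` window of 3 lines are hypothetical, since the paper says only "the same or nearby line number".

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Issue:
    """One static-analysis finding (hypothetical record layout)."""
    rule: str
    message: str
    line: int


def classify(before: List[Issue], after: List[Issue],
             changed_lines: Set[int],
             tolerance: int = 3) -> Tuple[List[Issue], List[Issue]]:
    """Return (introduced, fixed) for one file touched by one commit.

    changed_lines holds post-commit line numbers touched by the diff.
    tolerance is an assumed window for "nearby" lines.
    """
    unmatched_before = list(before)
    introduced = []
    for issue in after:
        # Same rule and message at the same or a nearby line: treat as
        # a pre-existing issue whose line number merely shifted.
        candidates = [b for b in unmatched_before
                      if b.rule == issue.rule
                      and b.message == issue.message
                      and abs(b.line - issue.line) <= tolerance]
        if candidates:
            nearest = min(candidates, key=lambda b: abs(b.line - issue.line))
            unmatched_before.remove(nearest)
        elif issue.line in changed_lines:
            # New issue on a line the commit actually changed.
            introduced.append(issue)
    # Whatever is left from the "before" set no longer appears afterward.
    return introduced, unmatched_before
```

An unmatched post-commit issue that falls outside the changed lines is deliberately left unclassified, which mirrors the conservative rule that an issue is attributed to a commit only when it sits on a line the commit touched.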
For each detected issue, we record its rule identifier, line number, severity, and message. This produces two issue sets: the issue set before the commit, denoted by $I^-_f$, and the issue set after the commit, denoted by $I^+_f$.

Differential Attribution. To find out which issues commit $c$ introduced or fixed, we compare $I^-_f$ and $I^+_f$. We also use git diff to extract the set of changed lines, denoted by $\Delta_f$. However, not all differences between $I^-_f$ and $I^+_f$ (i.e., issues in $I^+_f \setminus I^-_f$ or $I^-_f \setminus I^+_f$) represent real changes. When a commit inserts or deletes lines, it can cause existing issues to shift line numbers. To address this, we first match issues across the two sets. An issue $i$ is considered matched if the same rule and message appear in both $I^-_f$ and $I^+_f$ at the same or a nearby line number. After matching, the remaining issues are classified as follows. An unmatched issue $i \in I^+_f$ is classified as introduced only if its line falls within $\Delta_f$. This means the issue exists only after the commit, on a line that the commit actually changed. An unmatched issue $i \in I^-_f$ is classified as fixed. This means the issue existed before the commit but is no longer present afterward.

3.3 Debt Lifecycle Analysis

Detecting technical debt at the time of introduction is only half the picture. An issue that is quickly resolved has a very different cost than one that lingers for months. We therefore track whether AI-introduced issues persist or get resolved over time.

Issue Survival. For each issue introduced by an AI-authored commit, we check whether it still exists at the repository's latest revision (i.e., HEAD). If the file has been renamed, we follow its history using git log --follow. We then run static analysis on the corresponding file at HEAD. Next, we look for the same issue in the analysis results. We do not rely on the line number alone, since the location of the issue may move as the file changes.
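One way to make this lookup robust to shifting line numbers is to key each issue on its rule identifier plus the text around the flagged line. The sketch below is illustrative only: `context_key`, `survives`, the one-line `radius`, and the exact-equality comparison of whitespace-normalized context are all assumptions, since the paper does not specify how much context is used or how it is compared.

```python
from typing import Iterable, List, Tuple


def context_key(lines: List[str], line_no: int, radius: int = 1) -> Tuple[str, ...]:
    """Whitespace-normalized text of the flagged line and its neighbors.

    line_no is 1-based, as reported by static analyzers.
    """
    lo = max(0, line_no - 1 - radius)
    hi = min(len(lines), line_no + radius)
    return tuple(" ".join(text.split()) for text in lines[lo:hi])


def survives(rule: str,
             intro_lines: List[str], intro_line_no: int,
             head_lines: List[str],
             head_issues: Iterable[Tuple[str, int]],
             radius: int = 1) -> bool:
    """True if HEAD still reports the same rule on the same code context.

    head_issues is an iterable of (rule, line_no) pairs found at HEAD.
    """
    wanted = context_key(intro_lines, intro_line_no, radius)
    return any(head_rule == rule
               and context_key(head_lines, head_line_no, radius) == wanted
               for head_rule, head_line_no in head_issues)
```

Exact context equality is a strict choice: it will report an issue as resolved if a neighboring line was edited even though the flagged line is unchanged. A fuzzier comparison would trade that false negative for possible false matches.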
Instead of relying on line numbers, we match issues using their rule identifier together with a small amount of surrounding code context. If a match is found, the issue is classified as surviving. Otherwise, it is classified as not surviving.

At the same time, we also record whether files touched by AI-authored commits are modified again before HEAD. We trace the subsequent commit history of each affected file to understand how actively it is maintained after the AI-authored change. This additional context helps us interpret the survival results and understand the maintenance patterns around AI-introduced debt.

4 Experimental Setup

In this section, we describe the experimental setup for our study.

4.1 Dataset Summary

We collected our dataset by mining GitHub and applying filtering criteria. In our study, we focus on repositories containing production source files in Python, JavaScript, or TypeScript. Also, each repository must have at least one AI-authored commit. After filtering, we found 6,699 GitHub repositories with AI-authored commits, covering 29 AI coding tools. However, some tools have few commits, which may not provide reliable data for comparison. Thus, we focus on the five assistants with more than 10,000 attributed commits: GitHub Copilot, Claude, Cursor, Gemini, and Devin. Our final dataset includes 6,275 public GitHub repositories with 304,362 verified AI-authored commits. Table 1 summarizes the distribution of commits across the five AI coding assistants in our dataset. Figure 5a shows the monthly growth of AI-authored commits, with a sharp increase starting from mid-2025. Figure 5b shows that our dataset covers repositories with a wide range of popularity levels.

Table 1: Summary of AI-authored commits by coding tool.

| AI Coding Tool | # AI Commits | # Repos | Avg. Commits/Repo |
|---|---|---|---|
| GitHub Copilot | 117,851 | 4,473 | 26.3 |
| Claude | 139,300 | 2,431 | 57.3 |
| Cursor | 19,791 | 717 | 27.6 |
| Gemini | 12,770 | 681 | 18.8 |
| Devin | 14,650 | 300 | 48.8 |
| Total | 304,362 | 6,275* | 48.5 |

* Repositories may use more than one tool; the total is deduplicated.

[Figure 5: Overview of our dataset: (a) growth of AI-authored commits over time (monthly number of commits, 2024-01 to 2026-01), and (b) distribution of repositories by GitHub star count as of March 2026 (100-500: 44.0%; 500-1K: 13.4%; 1K-5K: 21.1%; 5K-10K: 8.5%; 10K-50K: 11.3%; 50K+: 1.7%).]

4.2 Research Questions

Our study focuses on the following research questions:

RQ1: What kinds of technical debt are introduced by AI coding assistants? This question investigates the basic characteristics of AI-introduced technical debt. We study what types of debt the assistants introduce, including code smells, runtime bugs, and security issues. We also analyze how these issues are distributed across severity levels, languages, and rules.

RQ2: How does technical debt vary across AI coding assistants? This question compares different AI coding assistants at the commit level. We examine whether some assistants introduce more technical debt than others, and whether the kinds of issues they introduce differ. This helps us understand whether technical debt patterns are tool-specific.

RQ3: How does AI-introduced technical debt evolve over time? Introducing debt is not necessarily a problem if it gets fixed quickly. The real concern is debt that persists unnoticed. We study whether introduced issues remain in the latest version of the repository or disappear over time. We also examine whether the associated code and files are later modified, fixed, or refactored.

4.3 Evaluation Metrics

We use the following metrics to support our three research questions.

For issue introduction (RQ1, RQ2), we report the total number of issues introduced by AI-authored commits, the percentage of commits that introduce at least one issue, and the average number of issues per commit. We break these down by issue type, programming language, rule, and AI coding assistant.

For the debt lifecycle (RQ3), we use three metrics. First, we compute the net impact by comparing the number of issues introduced and fixed by AI-authored commits. Second, we measure the survival rate of introduced issues:

$$\text{Survival Rate} = \frac{\#\ \text{issues surviving at HEAD}}{\#\ \text{issues tracked}}$$

Third, to normalize for differences in commit volume across time periods, we report the number of surviving issues per 100 AI-authored commits.

5 Results

This section presents the empirical results of our study and answers the three research questions raised in Section 4.2.

5.1 RQ1: Types and Patterns of AI-Introduced Debt

Table 2: Overview of AI-introduced technical debt by issue type.

| Type | # Introduced | # Repos | # Commits | % of Total |
|---|---|---|---|---|
| Code Smells | 431,850 | 3,789 | 25,895 | 89.1% |
| Runtime Bugs | 28,149 | 663 | 1,646 | 5.8% |
| Security Issues | 24,607 | 1,038 | 4,158 | 5.1% |
| All Issues | 484,606 | 3,841 | 26,564 | - |

Overview. Table 2 presents a summary of the technical debt introduced by AI coding assistants in our dataset. In total, we identified 484,606 introduced issues across 3,841 repositories (61.2% of 6,275 repositories) and 26,564 commits (8.7% of 304,362 commits). This shows that a non-trivial portion of AI-authored commits introduce quality issues, and that these issues affect a large number of real-world repositories. Among all introduced issues, code smells, runtime bugs, and security issues are the three main categories.
Code smells are by far the most common, accounting for 89.1% of all introduced issues. Below, we discuss each type in detail with real-world examples.

Table 3: Top 5 most frequent rules violated by AI coding assistants for each issue type.

| Type | Rule | Count | Rate |
|---|---|---|---|
| Code Smells | Broad exception handling | 41,723 | 8.6% |
| Code Smells | Unused variables or parameters | 28,718 | 5.9% |
| Code Smells | Unused argument | 24,444 | 5.0% |
| Code Smells | Shadowed outer variable | 20,251 | 4.2% |
| Code Smells | Access to protected member | 19,835 | 4.1% |
| Runtime Bugs | Undefined variable or reference | 23,091 | 4.8% |
| Runtime Bugs | Redeclared symbol | 1,870 | 0.4% |
| Runtime Bugs | Possibly used before assignment | 1,584 | 0.3% |
| Runtime Bugs | Access member before definition | 893 | 0.2% |
| Runtime Bugs | Unsubscriptable object | 130 | 0.0% |
| Security Issues | Subprocess Without Shell Check | 4,334 | 0.9% |
| Security Issues | Try-Except-Pass | 4,040 | 0.8% |
| Security Issues | Partial Executable Path | 2,539 | 0.5% |
| Security Issues | Insecure Random Generator | 2,355 | 0.5% |
| Security Issues | Subprocess Import | 1,718 | 0.4% |

```python
# ArchiveBox/ArchiveBox [3], core/models.py:L594:598, d360798
# issue: broad exception handler silently swallows JSON-loading failures
try:
    with open(json_path) as f:
        data = json.load(f)
except:
    pass
```

Listing 1: Code smell: broad exception handling and missing file encoding in ArchiveBox (Claude Code).

Code Smells. Code smells are maintainability problems that make code harder to understand, debug, and evolve [12]. They increase long-term maintenance costs, even if they do not cause immediate failures. The dominance of code smells is consistent with prior work under controlled settings [22, 44], and our study confirms that the same pattern also appears in real-world repositories. Table 3 lists the top 5 most common code smell patterns (e.g., broad exception handling, unused variables or parameters). These issues are often small and easy to overlook during code review. Listing 1 shows an example from ArchiveBox [1] (> 27k stars).
In commit d36079829bed [3], Claude Code updated the metadata loading logic in ArchiveBox, but the new code introduces two code smells. First, the bare except: pass block silently catches all exceptions, which makes errors harder to detect and debug. Second, the open() call does not specify a file encoding. This can lead to inconsistent behavior across different platforms and locales, since the default encoding may vary [29]. These issues may not cause immediate failures, but they can lead to maintenance challenges and subtle bugs in the future.

Runtime Bugs. Runtime bugs are code defects that can cause the program to fail during execution. Compared with code smells, they are less frequent: Table 2 reports 28,149 runtime bugs, covering 663 repositories and 1,646 commits. However, their impact is more direct and severe than that of code smells. Table 3 shows the top 5 most common runtime bugs: undefined variable or reference, redeclared symbol, possibly used before assignment, access to member before definition, and unsubscriptable object. These patterns suggest that AI-generated code may look locally correct, but still fail to stay consistent with the surrounding context. Notably, undefined variable or reference alone accounts for 23,091 cases. Listing 2 presents one such case from firecrawl (> 98k stars). In commit fb99747ba978 [11], Devin added a call that passes cache=cache as an argument.
However, cache is never defined in the method, which leads to a NameError when that path is executed. The maintainer later fixed the bug by removing the undefined argument [10]. This example shows that AI-generated code can introduce real runtime errors. These errors require additional human effort to fix later.

```python
# firecrawl/firecrawl [11], python-sdk/firecrawl/firecrawl.py:L4004:4047, fb99747
# issue: undefined variable "cache" causes a runtime NameError
async def generate_llms_text(
        self,
        url: str,
        *,
        max_urls: Optional[int] = None,
        show_full_text: Optional[bool] = None,
        experimental_stream: Optional[bool] = None) -> GenerateLLMsTextStatusResponse:
    response = await self.async_generate_llms_text(
        url,
        max_urls=max_urls,
        show_full_text=show_full_text,
        cache=cache,
        experimental_stream=experimental_stream
    )
```

Listing 2: Runtime bug: undefined variable causing NameError in Firecrawl (Devin).

```python
# microsoft/data-formulator [26], /tables_routes.py:L881:889, d8549c0
# issue: user-controlled table name is interpolated directly into SQL
for source_name in source_table_names:
    if source_name == updated_table_name:
        df = pd.DataFrame(updated_table['rows'])
    else:
        with db_manager.connection(session['session_id']) as db:
            result = db.execute(f"SELECT * FROM {source_name}").fetchdf()
            df = result
```

Listing 3: Security issue: possible SQL injection in data-formulator (Copilot).

Security Issues. Security issues are another concern in AI-generated code. They pose a threat to data privacy and system safety. If not properly addressed, they can lead to data breaches or unauthorized access. Thus, it is important to identify and fix security issues early, before they enter production repositories. As shown in Table 2, potentially insecure code patterns are detected in 1,038 repositories and 4,158 commits.
Table 4: Top 5 most frequent rules by language. Rate indicates each rule's share of all issues in that language.

| Language | Rule | Count | Rate |
|---|---|---|---|
| Python | Broad exception handling | 41,721 | 14.1% |
| Python | Unused argument | 24,444 | 8.3% |
| Python | Undefined variable or reference | 23,091 | 7.8% |
| Python | Access to protected member | 19,835 | 6.7% |
| Python | Unused import | 17,435 | 5.9% |
| JavaScript/TypeScript | Unused variables or parameters | 28,718 | 15.2% |
| JavaScript/TypeScript | Shadowed outer variable | 18,016 | 9.6% |
| JavaScript/TypeScript | Block-scoped variable misuse | 11,255 | 6.0% |
| JavaScript/TypeScript | No sequences | 8,989 | 4.8% |
| JavaScript/TypeScript | No unused expressions | 7,673 | 4.1% |

Table 5: Average number of issues introduced per commit by type.

| Coding Tool | Issues | Smells | Bugs | Security |
|---|---|---|---|---|
| GitHub Copilot | 1.27 | 1.134 | 0.094 | 0.042 |
| Claude | 1.96 | 1.734 | 0.111 | 0.119 |
| Cursor | 1.56 | 1.455 | 0.03 | 0.079 |
| Gemini | 1.38 | 1.25 | 0.053 | 0.076 |
| Devin | 0.87 | 0.807 | 0.017 | 0.042 |

Table 3 shows that the most common security issues include subprocess without shell check, try-except-pass, insecure random generator, partial executable path, and unsafe HuggingFace Hub download. These patterns suggest that AI-generated code can introduce unsafe practices in process execution, error handling, and external resource usage. Figure 2 shows an example of the most common issue (i.e., subprocess without shell check) from hysteria2 (>1.5k stars). A Copilot-authored commit [42] introduced a shell=True subprocess call, which can enable command injection if user input reaches the call. A human developer later identified and removed the unsafe flag [41]. Beyond that, Listing 3 shows another example of a security issue (SQL injection) in data-formulator (>1.2k stars). This repository is developed by Microsoft and uses GitHub Copilot for code generation. In commit d8549c0 [26], Copilot added a backend endpoint that constructs a SQL query by directly interpolating a user-supplied table name.
This creates a potential SQL injection vector. If an attacker can control the source_name variable, they can inject malicious SQL code that may lead to data breaches or unauthorized access. This issue remained in the repository for several weeks before the maintainer refactored the code and removed the unsafe SQL construction [27].

Programming Language Differences. Table 4 compares the top 5 most frequent rules in Python and JavaScript/TypeScript. Some patterns are common to both languages. For example, both show issues related to unused code (i.e., unused arguments in Python, unused variables in JavaScript/TypeScript). At the same time, each language also has its own characteristic issues. Python's top rules are dominated by exception handling and dynamic typing problems, while JavaScript/TypeScript issues tend to involve scoping and variable declaration patterns. This observation is consistent with prior studies [22, 44], which also found that the types of issues in AI-generated code can vary depending on the programming language and the tools used. These results suggest that some debt patterns may be language-specific. However, the overall trend (e.g., code smells dominate) holds across both languages.

Answer to RQ1: AI-generated code introduces technical debt in the form of code smells (89.1%), runtime bugs (5.8%), and security issues (5.1%). Among them, code smells are by far the most common.

Figure 6: Percentage of commits with issues and total commit volume per AI coding assistant. (Axes: number of commits; percentage of commits with issues. Percentage labels in axis order: GitHub Copilot 17.3%, Claude 24.5%, Cursor 25.9%, Gemini 28.7%, Devin 23.7%.)
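The interpolated table name in Listing 3 is one instance of a general pattern: SQL identifiers cannot be passed as bound parameters, so they are often spliced into the query string. A minimal sketch of one common mitigation follows. It is not the fix the data-formulator maintainers applied; it uses Python's built-in sqlite3 module as a stand-in for the project's database layer, and fetch_table_rows is a hypothetical helper introduced only for illustration.

```python
import sqlite3


def fetch_table_rows(conn: sqlite3.Connection, table_name: str) -> list:
    """Return all rows of `table_name`, refusing names that are not real tables.

    Hypothetical helper for illustration; sqlite3 stands in for the
    database layer used in the original project.
    """
    # Identifiers cannot be bound as SQL parameters, so compare the requested
    # name against the tables that actually exist in this database.
    known = {
        row[0]
        for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
    }
    if table_name not in known:
        raise ValueError(f"unknown table: {table_name!r}")
    # Quote the vetted identifier, doubling any embedded double quotes.
    quoted = '"' + table_name.replace('"', '""') + '"'
    return conn.execute(f"SELECT * FROM {quoted}").fetchall()


# Small in-memory database used to exercise the helper.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (id INTEGER, amount REAL)")
conn.execute("INSERT INTO sales VALUES (1, 9.5)")
```

With this check in place, a request for an existing table such as "sales" succeeds, while a crafted name such as "sales; DROP TABLE sales" is rejected before any query text is built from it.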
Figure 7: Net impact of AI coding assistants: issues introduced vs. fixed by issue type. (Code smells: 431,850 introduced, 449,984 fixed, net -18,134. Bugs: 28,149 introduced, 23,326 fixed, net +4,823. Security: 24,607 introduced, 13,487 fixed, net +11,120.)

5.2 RQ2: Comparison Across AI Coding Assistants

In this RQ, we examine how technical debt patterns vary across AI coding assistants. Figure 6 shows the percentage of commits with issues for each of the five AI coding assistants. What stands out in this figure is that more than 15% of commits by each AI tool introduce at least one issue. The rates also vary across tools, ranging from 17.3% for GitHub Copilot to 28.7% for Gemini. However, technical debt does not disappear when using different or newer tools. This suggests that technical debt is a common and persistent problem in AI-generated code.

Table 5 further compares the average number of introduced issues per commit by type. All five tools share a common pattern, where the code smell rate is much higher than the bug and security issue rates. This is consistent with our findings in RQ1. At the same time, there are also differences across tools. Claude has the highest issue rate per commit (1.96), while Devin has the lowest (0.87). These differences may be due to differences in usage patterns and development context, rather than the tools alone. Still, the overall pattern of technical debt is consistent across all five tools.

Answer to RQ2: Technical debt patterns vary across AI coding assistants, but the overall trend is consistent. Across all five tools, code smells remain the dominant form of AI-introduced debt.
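The shared pattern in Table 5 can be made concrete with a small calculation. The sketch below copies the per-commit rates from Table 5 and computes, for each tool, the fraction of introduced issues that are code smells. The helper name smell_share is an illustrative assumption, and the share is taken over the sum of the three type columns rather than the rounded Issues column.

```python
# Per-commit issue rates copied from Table 5: (smells, bugs, security).
RATES = {
    "GitHub Copilot": (1.134, 0.094, 0.042),
    "Claude": (1.734, 0.111, 0.119),
    "Cursor": (1.455, 0.030, 0.079),
    "Gemini": (1.250, 0.053, 0.076),
    "Devin": (0.807, 0.017, 0.042),
}


def smell_share(rates):
    """Fraction of a tool's per-commit issues that are code smells."""
    smells, bugs, security = rates
    return smells / (smells + bugs + security)


# Compute the share for every tool and print them from lowest to highest.
shares = {tool: smell_share(r) for tool, r in RATES.items()}
for tool, share in sorted(shares.items(), key=lambda kv: kv[1]):
    print(f"{tool:15s} {share:.1%}")
```

Under this reading, code smells account for roughly 88% to 93% of the issues each tool introduces per commit, which matches the claim that the dominant debt type does not change from tool to tool even though the absolute rates do.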
Figure 8: Cumulative growth of AI-introduced issues over time, by issue type. (x-axis: issue introduction time, year-month, 2025-01 to 2026-02; y-axis: number of surviving issues at HEAD, 0 to 120,000; series: security issues, runtime bugs, code smells.)

Table 6: Issue survival by time since introduction. Values show survival rates and the number of surviving issues per 100 AI-authored commits.

| Time Since Introduction | Survival Rate | Overall (per 100 commits) | Code Smells (per 100 commits) | Bugs (per 100 commits) | Security (per 100 commits) |
|---|---|---|---|---|---|
| > 9 months | 19.20% | 22.20 | 19.58 | 0.95 | 1.67 |
| 6–9 months | 17.90% | 29.73 | 25.60 | 1.56 | 2.57 |
| 3–6 months | 29.30% | 41.23 | 35.62 | 2.62 | 2.99 |
| < 3 months | 24.80% | 39.92 | 33.03 | 3.10 | 3.79 |
| All Issues | 24.20% | 37.25 | 31.49 | 2.55 | 3.22 |

5.3 RQ3: Lifecycle of AI-Introduced Debt

Net Impact. In RQ1 and RQ2, we focus on the technical debt introduced by AI-authored commits. However, AI coding assistants can also remove existing issues during refactoring or code improvement. To better understand the overall lifecycle of AI-introduced debt, we compare the number of issues introduced and fixed by AI commits (see Figure 7). For code smells, AI-authored commits fix more issues than they introduce (449,984 vs. 431,850), resulting in a net reduction of 18,134 code smells. In contrast, for runtime bugs and security issues, AI commits introduce more issues than they fix. Notably, AI introduces nearly twice as many security issues as it fixes (24,607 vs. 13,487). These findings indicate that the net impact of AI coding assistants is mixed. AI coding assistants can help reduce maintainability issues, which tend to follow simple and repetitive patterns.
However, for bugs and security issues, which require a deeper understanding of and reasoning about program logic and context, AI coding assistants introduce more problems than they resolve.

Figure 9: A TypeScript lint issue introduced by a Claude-authored commit in Stirling-PDF was fixed one day later by removing the unused variable.

Issue Survival. The net impact analysis above provides an overview of what AI coding assistants add and remove. But it does not show what happens to the specific issues introduced by AI. To answer this question, we track each AI-introduced issue to the latest repository snapshot and check whether it still exists at HEAD. Figure 8 shows that the cumulative number of surviving issues keeps growing over time. The total volume of unresolved technical debt increases rapidly, climbing from just a few hundred issues in early 2025 to over 110,000 surviving issues by February 2026. This suggests that as the rapid adoption of AI coding assistants continues, the amount of AI-introduced debt in real-world repositories is also growing significantly.

Table 6 provides a normalized view of this surviving debt. Overall, 24.2% of tracked AI-introduced issues potentially still survive at HEAD, which corresponds to 37.25 surviving issues per 100 AI-authored commits. Survival rates also differ across issue types. Security issues are the most likely to remain at HEAD (41.1%), followed by runtime bugs (30.3%) and code smells (22.7%). When we group these issues by age, an interesting pattern emerges: issues introduced earlier tend to have fewer survivors per 100 commits than more recent ones. For issues older than 9 months, only 22.20 issues per 100 commits still survive, compared to 39.92 for those introduced in the last 3 months. A similar decrease can also be observed for all three issue types. This suggests that AI-introduced debt does get gradually resolved over time.
However, the resolution process is slow, and even after nine months, a substantial amount of debt remains in the codebase.

These aggregate results are also reflected in real-world repositories. For example, the broad exception handling issue in Listing 1 was fixed within hours after it was introduced [2, 3]. Similarly, in Stirling-PDF [48] (>75k stars), a Claude-authored commit introduced an unused variable filename in a TypeScript file [47]. As shown in Figure 9, the maintainer fixed it the next day with a commit titled "Fix TypeScript linting error" [46]. In contrast, the undefined variable bug in firecrawl (Listing 2) took 42 days before the maintainer fixed it [10]. Other issues survive for much longer or remain unresolved. For instance, a Devin-authored commit in brave_search_tool.py added a call to requests.get(...) without a timeout in December 2024 [6]. This is a known potential security issue, because requests made without a timeout may block indefinitely if the remote service does not respond [35]. However, the issue still remains in the latest repository revision.

Answer to RQ3: AI-introduced debt is not always removed quickly after introduction. Overall, 24.2% of tracked issues still survive at HEAD, and even issues older than 9 months leave a substantial surviving burden in the codebase.

6 Discussion

6.1 Implications

Our empirical study shows that AI coding assistants introduce technical debt into real software repositories. This is not a property of any single tool. Across all five tools we studied, more than 15% of commits introduce at least one detectable issue. These issues persist regardless of repository size or popularity. In this section, we discuss the implications of our findings for practitioners, researchers, and tool builders.
The main implication is not just that AI coding assistants may produce low-quality code. More importantly, AI-assisted software development changes how technical debt enters and remains in production systems. Prior studies have shown that developers tend to over-trust AI suggestions [39]. This over-trust can lead to a higher acceptance rate of AI-generated code, even when it contains issues, so many AI-introduced issues can accumulate in the codebase. Our results show that code smells are the most common type of AI-introduced debt. They often do not break the software system immediately, which makes them easy to accept during code review. But AI coding assistants allow developers to produce code at much higher speed and volume. As a result, these minor issues can accumulate into a substantial maintenance burden over time. Figure 8 shows that the cumulative number of surviving AI-introduced issues continues to rise over time, exceeding 110,000 by February 2026. The technical debt introduced by AI is therefore not necessarily a temporary side effect. It can become part of the long-term maintenance challenges of modern software systems.

At the same time, our findings suggest that although AI coding assistants introduce technical debt, they also fix existing issues in the codebase. We observe that AI co-authored commits fix a similar number of code smell issues as they introduce. This suggests that AI coding assistants are able to perform local cleanup and repetitive maintenance tasks effectively. They can recognize and address surface-level code quality problems (e.g., formatting, naming, or simple refactoring opportunities). What is concerning, however, is that AI coding assistants seem to be less effective at fixing bugs and security issues, and they even introduce more of these than they fix. Section 5.3 shows that the survival rate of AI-introduced runtime bugs and security issues is much higher than that of code smells.
This gap suggests that AI coding assistants may struggle with changes that require deeper understanding of program behavior, execution context, or security implications. Also, the practical impact of bugs and security issues is often more severe than that of code smells, making it more critical to address them effectively. Developers should not treat all AI-generated code as equally trustworthy, and they should pay particular attention to changes that may introduce bugs or security vulnerabilities.

Our cross-tool comparison shows that this problem cannot be solved simply by switching from one assistant to another. Figure 6 shows that all five tools introduce a similar pattern of issues. They all have a high rate of code smells and a non-trivial rate of bugs and security issues. This suggests that the quality risk is a systemic issue with the current mode of AI-assisted development. Thus, developers and teams should carefully review AI-generated code regardless of the tool used. Static analysis, tests, and security checks should be part of the normal workflow. Code review should also extend beyond the point of merge, because our study shows that 24.2% of tracked AI-introduced issues still survive at HEAD, and older issues are not fully cleaned up even after months. Merging AI-generated code is not the end of the story, since debt can persist and accumulate over time. This makes continuous monitoring and targeted debt repayment necessary for AI-touched code.

Overall, our results suggest that future research and tool design should not just focus on generating more acceptable code. We also need to ask whether that code remains maintainable, correct, and secure over time. Most existing research studies [22, 44] focus on short-term outcomes such as task completion, acceptance rate, or immediate correctness. However, these measures only capture what happens when the code is introduced, not what happens later in maintenance.
Future work needs to examine which factors make AI-introduced debt more likely to persist (e.g., repository maturity, review intensity, or task type). Our findings also suggest the need for better assistants. Future tools should apply stronger checks to security-sensitive changes, use repository context more effectively, and clearly show AI provenance so reviewers can better judge the risk of a change. In the end, the key question is not only whether AI can produce code at scale, but whether the software engineering ecosystem can manage that code well over time.

6.2 Limitations

Lack of human-written baseline. Our study does not compare AI-authored commits against a clean baseline of human-written commits. In the real world, developers may use AI coding assistants without leaving any trace of it in the Git metadata. Even when a commit is labeled as AI-authored, the final code may include contributions from the human developer (e.g., edits, additions). This makes it difficult to construct a reliable baseline of purely human-authored commits. Further, comparing our dataset with an unreliable baseline would produce biased results and misleading conclusions. For this reason, we focus on tracking the technical debt inside explicitly confirmed AI-authored or co-authored commits.

Scope of technical debt. In this study, we focus on code-level technical debt that can be detected by static analysis tools. We categorize issues into three types: code smells, bugs, and security issues. This categorization is based on the capabilities of the tools we use (e.g., Pylint, Bandit) and the common types of technical debt in software development. However, technical debt is a broader concept that includes architectural debt, design debt, documentation debt, and other forms of maintenance challenges.
Thus, our findings should be interpreted as reflecting the code-level technical debt introduced by AI coding assistants, rather than the full spectrum of technical debt that may arise in software projects.

Survival Tracking Limitations. Our issue survival analysis determines whether an issue still exists at HEAD by matching rule identifiers and surrounding code context. However, a file may be deleted or entirely rewritten between the introducing commit and HEAD. In such cases, the issue is classified as resolved, even though the resolution may not be a deliberate fix. We acknowledge this limitation and note that our survival rates may slightly underestimate the true persistence of AI-introduced debt.

7 Related Work

Code Quality of AI-Generated Code. Previous studies have examined the quality and security of AI-generated code. Based on controlled experiments, they showed that AI coding assistants (e.g., GitHub Copilot and ChatGPT) are able to produce functional code, but the code quality varies widely across languages, tasks, and prompts [22, 25, 30]. AI-generated code can also contain security weaknesses, and developers may over-trust it and fail to properly review it [33, 34, 40]. Recent studies have also begun examining AI-generated code in real-world production environments. They show that AI-generated code is being widely adopted on platforms such as GitHub and Stack Overflow, and that it can carry quality and security issues [13, 44, 50]. He et al. [17] studied the impact of Cursor adoption on 807 repositories and observed persistent increases in code complexity. Watanabe et al. [51] found that most Claude Code pull requests are merged, though many require human revisions.
However, these studies mainly focus on a single tool or a narrow set of quality issues, and they do not track how those issues evolve.

Technical Debt in Software Development. Technical debt has been a core topic in software engineering research for years. Previous work has introduced many automated methods to identify code smells and self-admitted technical debt in software repositories [32, 37, 52]. Beyond detection, many studies have also investigated the lifecycle of technical debt in human-written code. Tufano et al. [49] showed that code smells are often introduced during normal development. Once introduced, they can linger in the codebase for a long time before anyone removes them [49]. Digkas et al. [9] identified a similar pattern in large open-source ecosystems. Their results showed that technical debt mainly accumulates when new code is added [8, 9]. Other studies further suggest that debt is rarely removed in an intentional way, and that automated repayment is still difficult in practice [24, 53]. Together, these studies provide a strong foundation for understanding technical debt in human-written software.

8 Threats to Validity

Below, we discuss threats that may impact the results of our study.

External validity. Our study focuses on public GitHub repositories with at least 100 stars and production source files in Python, JavaScript, and TypeScript. Therefore, our findings may not generalize to private repositories, smaller projects, or software written in other languages. In addition, we only analyze AI-authored commits that leave explicit traces in Git metadata. Projects or tools that use AI without such traces are outside the scope of our dataset.

Internal validity. Our pipeline depends on the correctness of AI attribution, issue matching, and lifecycle tracking. Although we use explicit Git metadata rather than proxy signals, some commits may still include both AI and human contributions.
Similarly, matching issues across revisions is challenging because files evolve over time and issues may shift location. To reduce this risk, we compare code before and after each commit, restrict introduced issues to changed lines, and use rule identifiers, messages, and surrounding code context during matching. However, some attribution errors may still remain.

Construct validity. Technical debt is a broad concept with many possible forms. In this study, we operationalize technical debt mainly through code smells, correctness issues, and security issues detected by static analysis tools. This choice allows us to measure debt consistently at scale and track it over time at the commit level. However, static analysis tools can produce false positives, flagging code that is technically correct but matches a known risky pattern. Additionally, static analysis does not cover all forms of technical debt. Architectural debt, design erosion, documentation debt, and test adequacy issues are outside the scope of our tools. Our results should therefore be interpreted as evidence about code-level technical debt, not the full spectrum of quality challenges that AI-generated code may introduce.

9 Conclusion

AI coding assistants are rapidly becoming part of real-world software development, but their long-term impact on software quality remains unclear. In this paper, we presented a large-scale empirical study of the technical debt introduced by AI-generated code in the wild. By mining 304,362 AI-authored commits from 6,275 GitHub repositories, we designed a commit-level pipeline to identify introduced technical debt and track its later evolution in production repositories. Our study provides a longitudinal view of how AI-generated code ages after it is merged, including what kinds of debt are introduced, how debt varies across assistants, and whether introduced issues persist or are later addressed.
Overall, this work offers new evidence on the maintenance costs behind the rapid adoption of AI coding assistants and highlights the need for stronger quality assurance in AI-assisted software development.

References

[1] 2025. ArchiveBox. https://github.com/ArchiveBox/ArchiveBox
[2] 2025. Commit 762cddc: fix: address PR review comments from cubic-dev-ai. https://github.com/ArchiveBox/ArchiveBox/commit/762cddc8c5d42095c26dda0e193fab6794fd69d5
[3] 2025. Commit d360798: Replace index.json with index.jsonl flat JSONL format. https://github.com/ArchiveBox/ArchiveBox/commit/d36079829bed32d71b2a1a5e8e6019457d6a7ae7
[4] Anthropic. 2026. Claude Opus 4.6 wrote a dependency-free C compiler in Rust, with backends targeting x86 (64- and 32-bit), ARM, and RISC-V, capable of compiling a booting Linux kernel. https://github.com/anthropics/claudes-c-compiler
[5] Paris Avgeriou, Philippe Kruchten, Ipek Ozkaya, and Carolyn Seaman. 2016. Managing technical debt in software engineering (Dagstuhl seminar 16162). Dagstuhl Reports 6, 4 (2016), 110–138.
[6] crewAIInc. 2024. Commit 439cde1: style: apply final formatting changes. https://github.com/crewAIInc/crewAI-tools/commit/439cde180cd69791f46dedde192c41184ca1f96f
[7] Ward Cunningham. 1992. The WyCash portfolio management system. ACM SIGPLAN OOPS Messenger 4, 2 (1992), 29–30.
[8] George Digkas, Alexander Chatzigeorgiou, Apostolos Ampatzoglou, and Paris Avgeriou. 2020. Can clean new code reduce technical debt density? IEEE Transactions on Software Engineering 48, 5 (2020), 1705–1721.
[9] Georgios Digkas, Mircea Lungu, Paris Avgeriou, Alexander Chatzigeorgiou, and Apostolos Ampatzoglou. 2018. How do developers fix issues and pay back technical debt in the Apache ecosystem? In 2018 IEEE 25th International Conference on Software Analysis, Evolution and Reengineering (SANER). IEEE, 153–163.
[10] firecrawl. 2025. Commit a7aa0cb: Fix Pydantic field name shadowing issues causing import NameError. https://github.com/firecrawl/firecrawl/commit/a7aa0cb2f4496394a94b50f0013eb0328b408dc8
[11] firecrawl. 2025. Commit fb99747: fix: revert accidental cache=True changes to preserve original cache parameter handling. https://github.com/firecrawl/firecrawl/commit/fb99747ba9787683ac5722ba55c46f823461691a
[12] Martin Fowler. 2018. Refactoring: improving the design of existing code. Addison-Wesley Professional.
[13] Yujia Fu, Peng Liang, Amjed Tahir, Zengyang Li, Mojtaba Shahin, Jiaxin Yu, and Jinfu Chen. 2025. Security weaknesses of copilot-generated code in github projects: An empirical study. ACM Transactions on Software Engineering and Methodology 34, 8 (2025), 1–34.
[14] GitHub. 2024. Octoverse: A new developer joins GitHub every second as AI leads TypeScript to #1. https://github.blog/news-insights/octoverse/octoverse-a-new-developer-joins-github-every-second-as-ai-leads-typescript-to-1/
[15] GitHub. 2025. Upcoming changes to GitHub Events API payloads. https://github.blog/changelog/2025-08-08-upcoming-changes-to-github-events-api-payloads/
[16] William Harding and Matthew Kloster. 2024. Coding on copilot: 2023 data suggests downward pressure on code quality. https://www.gitclear.com/coding_on_copilot_data_shows_ais_downward_pressure_on_code_quality/ (2024).
[17] Hao He, Courtney Miller, Shyam Agarwal, Christian Kästner, and Bogdan Vasilescu. 2025. Does AI-Assisted Coding Deliver? A Difference-in-Differences Study of Cursor's Impact on Software Projects. arXiv e-prints (2025), arXiv–2511.
[18] Haoming Huang, Pongchai Jaisri, Shota Shimizu, Lingfeng Chen, Sota Nakashima, and Gema Rodríguez-Pérez. 2026. More Code, Less Reuse: Investigating Code Quality and Reviewer Sentiment towards AI-generated Pull Requests. arXiv preprint arXiv:2601.21276 (2026).
[19] Intel RealSense. 2025. Commit 14026c8: Add missing constants and fix 6fps bug. https://github.com/realsenseai/librealsense/commit/14026c898f790db79a0b588983c08a3108fa326e
[20] Intel RealSense. 2025. Commit 5535b8a: Refactor test script with named constants. https://github.com/realsenseai/librealsense/commit/5535b8a204bc759324ee89f864eb680362be5ece
[21] Zengyang Li, Paris Avgeriou, and Peng Liang. 2015. A systematic mapping study on technical debt and its management. Journal of Systems and Software 101 (2015), 193–220.
[22] Yue Liu, Thanh Le-Cong, Ratnadira Widyasari, Chakkrit Tantithamthavorn, Li Li, Xuan-Bach D Le, and David Lo. 2024. Refining chatgpt-generated code: Characterizing and mitigating code quality issues. ACM Transactions on Software Engineering and Methodology 33, 5 (2024), 1–26.
[23] Yue Liu, Zhenchang Xing, Shidong Pan, and Chakkrit Tantithamthavorn. 2025. When AI Takes the Wheel: Security Analysis of Framework-Constrained Program Generation. arXiv preprint arXiv:2510.16823 (2025).
[24] Antonio Mastropaolo, Massimiliano Di Penta, and Gabriele Bavota. 2023. Towards automatically addressing self-admitted technical debt: How far are we? In 2023 38th IEEE/ACM International Conference on Automated Software Engineering (ASE). IEEE, 585–597.
[25] Antonio Mastropaolo, Luca Pascarella, Emanuela Guglielmi, Matteo Ciniselli, Simone Scalabrino, Rocco Oliveto, and Gabriele Bavota. 2023. On the robustness of code generation techniques: An empirical study on github copilot. In 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE). IEEE, 2149–2160.
[26] Microsoft. 2025. Commit d8549c0: Add refresh data feature with backend endpoint and UI components. https://github.com/microsoft/data-formulator/commit/d8549c0c8c139531ee5bf266609f7e5352384c5f
[27] Microsoft. 2026. data-formulator. https://github.com/microsoft/data-formulator
[28] Sergio Moreschini, Elvira-Maria Arvanitou, Elisavet-Persefoni Kanidou, Nikolaos Nikolaidis, Ruoyu Su, Apostolos Ampatzoglou, Alexander Chatzigeorgiou, and Valentina Lenarduzzi. 2026. The Evolution of Technical Debt from DevOps to Generative AI: A multivocal literature review. Journal of Systems and Software 231 (2026), 112599.
[29] Inada Naoki. 2021. PEP 597 – Add optional EncodingWarning. https://peps.python.org/pep-0597/
[30] Nhan Nguyen and Sarah Nadi. 2022. An empirical evaluation of GitHub copilot's code suggestions. In Proceedings of the 19th International Conference on Mining Software Repositories. 1–5.
[31] Jordan Novet. 2025. Satya Nadella says as much as 30% of Microsoft code is written by AI. https://www.cnbc.com/2025/04/29/satya-nadella-says-as-much-as-30percent-of-microsoft-code-is-written-by-ai.html
[32] Fabio Palomba, Gabriele Bavota, Massimiliano Di Penta, Fausto Fasano, Rocco Oliveto, and Andrea De Lucia. 2018. On the diffuseness and the impact on maintainability of code smells: a large scale empirical investigation. In Proceedings of the 40th International Conference on Software Engineering. 482–482.
[33] Hammond Pearce, Baleegh Ahmad, Benjamin Tan, Brendan Dolan-Gavitt, and Ramesh Karri. 2025. Asleep at the keyboard? assessing the security of github copilot's code contributions. Commun. ACM 68, 2 (2025), 96–105.
[34] Neil Perry, Megha Srivastava, Deepak Kumar, and Dan Boneh. 2023. Do users write more insecure code with ai assistants? In Proceedings of the 2023 ACM SIGSAC Conference on Computer and Communications Security. 2785–2799.
[35] PyCQA. 2023. B113: Test for Missing Requests Timeout. https://bandit.readthedocs.io/en/latest/plugins/b113_request_without_timeout.html
[36] RealSense. 2025. librealsense. https://github.com/IntelRealSense/librealsense Accessed: 2026-01-15.
[37] Xiaoxue Ren, Zhenchang Xing, Xin Xia, David Lo, Xinyu Wang, and John Grundy. 2019. Neural network-based detection of self-admitted technical debt: From performance to explainability. ACM Transactions on Software Engineering and Methodology (TOSEM) 28, 3 (2019), 1–45.
[38] Kylie Robison. 2024. Google CEO Sundar Pichai says more than a quarter of the company's new code is created by AI. https://fortune.com/2024/10/30/googles-code-ai-sundar-pichai/
[39] Sadra Sabouri, Philipp Eibl, Xinyi Zhou, Morteza Ziyadi, Nenad Medvidovic, Lars Lindemann, and Souti Chattopadhyay. 2025. Trust dynamics in ai-assisted development: Definitions, factors, and implications. In 2025 IEEE/ACM 47th International Conference on Software Engineering (ICSE). IEEE Computer Society, 736–736.
[40] Gustavo Sandoval, Hammond Pearce, Teo Nys, Ramesh Karri, Siddharth Garg, and Brendan Dolan-Gavitt. 2023. Lost at c: A user study on the security implications of large language model code assistants. In 32nd USENIX Security Symposium (USENIX Security 23). 2205–2222.
[41] seagullz4. 2025. Commit d9e392d: Improve code security by removing shell=True. https://github.com/seagullz4/hysteria2/commit/d9e392d
[42] seagullz4. 2025. Commit e277daf: Introduce shell-based subprocess call. https://github.com/seagullz4/hysteria2/commit/e277daf540dad4b5a34822f0088e70617b689587
[43] seagullz4. 2025. hysteria2. https://github.com/seagullz4/hysteria2 Accessed: 2026-01-15.
[44] Mohammed Latif Siddiq, Lindsay Roney, Jiahao Zhang, and Joanna Cecilia Da Silva Santos. 2024. Quality assessment of chatgpt generated code and their use by developers. In Proceedings of the 21st International Conference on Mining Software Repositories. 152–156.
[45] Stack Overflow. 2025. 2025 Developer Survey. https://survey.stackoverflow.co/2025/
[46] Stirling Tools. 2025. Commit 00efc880: Fix TypeScript linting error in zipFileService. https://github.com/Stirling-Tools/Stirling-PDF/commit/00efc8802cd4be7bdf30c746dbd7a2cb1108a601
[47] Stirling Tools. 2025. Commit e7109bb: Convert extract-image-scans to React component. https://github.com/Stirling-Tools/Stirling-PDF/commit/e7109bb4e9fbeb1fed7f10f50e5831f48da870be
[48] Stirling Tools. 2026. Stirling-PDF. https://github.com/Stirling-Tools/Stirling-PDF
[49] Michele Tufano, Fabio Palomba, Gabriele Bavota, Rocco Oliveto, Massimiliano Di Penta, Andrea De Lucia, and Denys Poshyvanyk. 2017. When and why your code starts to smell bad (and whether the smells go away). IEEE Transactions on Software Engineering 43, 11 (2017), 1063–1088.
[50] Bin Wang, Wenjie Yu, Yilu Zhong, Hao Yu, Keke Lian, Chaohua Lu, Hongfang Zheng, Dong Zhang, and Hui Li. 2025. AI Code in the Wild: Measuring Security Risks and Ecosystem Shifts of AI-Generated Code in Modern Software. arXiv preprint arXiv:2512.18567 (2025).
[51] Miku Watanabe, Hao Li, Yutaro Kashiwa, Brittany Reid, Hajimu Iida, and Ahmed E Hassan. 2025. On the use of agentic coding: An empirical study of pull requests on github. arXiv preprint arXiv:2509.14745 (2025).
[52] Meng Yan, Xin Xia, Emad Shihab, David Lo, Jianwei Yin, and Xiaohu Yang. 2018. Automating change-level self-admitted technical debt determination. IEEE Transactions on Software Engineering 45, 12 (2018), 1211–1229.
[53] Fiorella Zampetti, Alexander Serebrenik, and Massimiliano Di Penta. 2018. Was self-admitted technical debt removal a real removal? an in-depth perspective. In Proceedings of the 15th International Conference on Mining Software Repositories. 526–536.
